M. Lautenschlager (M&D) / 11.02.03 / 1 ENES: The European Earth System GRID ENES – Alcatel WS...


M. Lautenschlager (M&D) / 11.02.03 / 1

ENES: The European Earth System GRID

ENES – Alcatel WS 11.+12.02.03, ANTWERPEN

Michael Lautenschlager

Model and Data Max-Planck Institute for Meteorology

Hamburg

Mail: [email protected] / Web: http://www.mad.zmaw.de/


ENES GRID

Successful modelling and prediction of the Earth System relies heavily on the availability of huge data sets for boundary and initial conditions from observations and other model studies, and on comparison with the output of other model studies.

Networking Aspects: NRN cooperation, bandwidth, latency, quality of service, middleware integration

Software Aspects: science supporting data processing and visualisation, data and metadata standards, authorising systems

Data Archives: standardisation of data models, primary data and meta data organization, long-term storage, data quality, data access

Organizational Aspects: load balancing, job scheduling, trans-national access, rights management, cost management

Model Computing GRID (PRISM)

+ distributed computing

Data Processing GRID

+ processing

[Diagram labels: CLIMSTER Data Network; CLIMSTER User Interface; CLIMSTER Data Catalogue; CLIMSTER Core Data; Remote Archive (Database System); Remote Archive (File-Based Storage); Geographically Distributed Data Archives; Internet Access]


ENES Partners

Traffic Matrix

Mass Storage Archives

DKRZ (G): Archive 3.5 Tbytes/day, Restore 1.5 Tbytes/day, Total 600 Tbytes

Hadley Centre (GB): Archive 250 Gbytes/day, Restore 400 Gbytes/day, Total 200 Tbytes

IPSL (F): Archive 170 Gbytes/day, Restore 330 Gbytes/day

Extrapolation of data rates depends on installed compute power and application profiles (compare Use Cases).
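To relate these daily archive volumes to line rates, the following sketch converts Tbytes/day into the average rate that would be needed if the volume were spread evenly over 24 hours. The even spread, the decimal unit convention (1 Tbyte = 10^12 bytes) and the function name are assumptions made for illustration; the slides do not state how the load is distributed over the day.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def sustained_mbit_per_s(tbytes_per_day: float) -> float:
    """Average rate in Mbit/s needed to move a daily volume (decimal Tbytes)."""
    bits_per_day = tbytes_per_day * 1e12 * 8
    return bits_per_day / SECONDS_PER_DAY / 1e6


# Daily volumes from the Traffic Matrix slide, expressed in Tbytes/day.
daily_tbytes = {
    "DKRZ archive": 3.5,
    "DKRZ restore": 1.5,
    "Hadley archive": 0.25,
    "Hadley restore": 0.4,
    "IPSL archive": 0.17,
    "IPSL restore": 0.33,
}

rates = {name: sustained_mbit_per_s(v) for name, v in daily_tbytes.items()}

for name, rate in rates.items():
    print(f"{name}: {rate:.0f} Mbit/s")
```

Under these assumptions the DKRZ archive stream alone corresponds to roughly a third of a Gbit/s sustained around the clock; real peaks would be higher.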

Traffic Matrix: Questions

What is the minimum, guaranteed bandwidth?

What is the average bandwidth?

What is the peak bandwidth?

What are the quality requirements, especially latency?

Traffic Matrix: Alcatel Questionnaire

Servers included
DKRZ: Sun Solaris and Linux (SuSE + Red Hat) for data, NEC SX6 for computing
Hadley: IBM Z-series for data, NEC + Cray T3E for computing, HP-UX server for web
IPSL: NEC SX5, IBM SP4, Fujitsu VPP, Compaq for computing, SGI for data

Protocols
DKRZ: TCP/IP
Hadley: TCP/IP
IPSL: TCP/IP

Traffic Matrix: Alcatel Questionnaire

Services
DKRZ, external: Apache, proftp, OpenSSH, SMTP, cvs, Oracle Appl. Server + DB Server, OPeNDAP
DKRZ, internal: LDAP, NIS, NFS, CUPS
Hadley: very similar
IPSL: very similar, OPeNDAP

Client Systems
DKRZ: Sun Solaris, Windows 2000, Linux, (Mac)
Hadley: Linux, HP-UX, Windows XP
IPSL: Sun Solaris, Windows 2000, Linux, Mac

Traffic Matrix: Alcatel Questionnaire

Applications for ENES
• Model development
• Run coupled climate models
• Process and analyse climate model data
• Process and analyse observations

Future
DKRZ: We plan for a European Climate Computing Facility because our analysis so far shows that distributed computing is not an option for single model components (needs further investigation).
Hadley: Future grid applications will include tools for finding, navigating and extracting subsets of model and observation datasets. Also possibly tools for model intercomparison and running ensembles of different models.

Future Development Path

Internet Access

DB Access

HSM Access


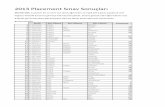

[Figure: DKRZ data archive (DKRZ Datenarchiv). x-axis: years, 2001 to 2006; y-axis: data in TByte, 0 to 11000. Curves: UNIX files f^3/4, CERA files f^3/4, UNIX files f^1, CERA files f^1. Annotations: (IV/2001); January 2003: 600 TB]


CERA-DB Access

CERA DB Access

Number of downloads in 2002: 2000 – 5000 / month (80% are external)
Total data volume: 100 – 400 GB / month
Data per download: 50 – 100 MB

Current database size: 12.8439 Terabyte
Number of experiments: 288
Number of datasets: 18199
Number of BLOBs within CERA at 28-JAN-03: 804327136

FTP Access to UniTree System

Up to 2002: Read : Write = 2 : 1 for climate datasets

Data rates January 2003: 1.5 TB/day read, 3.5 TB/day write

DKRZ-Internet: G-WiN

[Figure panels: Received by DKRZ; Sent by DKRZ]

Example WAN Data Transfer

ERA40 data statistics (Statistik ERA40 Daten)

load   size   |<----------- retrieval ----------->|   |<------- download/transfer ------->|
month  MB     start      end        diff   rate       start      end        diff  rate
9101   1724   22-13:07   22-22:15   09:08  188 MB/h   23-08:02   23-08:37   :35   2.9 GB/h
9102   1557   22-22:15   23-05:49   07:34  205 MB/h   23-10:35   23-11:05   :30   3.1 GB/h
9103   1724   23-05:49   23-15:30   09:41  178 MB/h   23-16:34   23-17:09   :35   2.9 GB/h
9104   1669   23-15:30   24-01:30   10:00  166 MB/h   24-11:00   24-11:31   :31   3.1 GB/h
9105   1724   24-01:30   24-09:16   07:46  221 MB/h   24-12:39   24-13:14   :35   2.9 GB/h
9106   1669   24-09:16   24-22:10   12:56  129 MB/h   24-23:22   24-23:54   :32   3.0 GB/h
9107   1724   24-22:10   25-09:15   11:05  155 MB/h   25-12:40   25-13:17   :37   2.8 GB/h
9108   1724   25-09:15   25-18:34   09:19  185 MB/h   25-23:48   26-00:24   :36   2.9 GB/h
9109   1669   25-18:34   26-01:49   07:15  230 MB/h   26-10:11   26-10:48   :37   2.8 GB/h
9110   1724   26-01:49   26-08:21   06:32  263 MB/h   26-10:12   26-10:51   :39   2.7 GB/h
9111   1669   26-08:21   26-14:31   06:10  270 MB/h   26-20:42   26-21:16   :34   3.0 GB/h
9112   1724   26-14:31   26-20:01   05:30  313 MB/h   26-20:43   26-21:19   :36   2.9 GB/h

Column callouts on the slide: File Size, Retrieval Rate, Transfer Rate
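The rate columns in the ERA40 statistics follow from file size divided by elapsed time. A minimal sketch for the first row (load month 9101), assuming the "diff" column is hh:mm with an empty hour field meaning zero hours, and assuming decimal units (1 GB = 1000 MB); the helper names are illustrative only:

```python
def duration_hours(diff: str) -> float:
    """Convert a 'hh:mm' or ':mm' duration string into hours."""
    hours, minutes = diff.split(":")
    return (int(hours) if hours else 0) + int(minutes) / 60


def rate_mb_per_hour(size_mb: float, diff: str) -> float:
    """Throughput in MB/h for a file of size_mb moved in the given duration."""
    return size_mb / duration_hours(diff)


# Row 9101: 1724 MB, retrieval took 09:08, download/transfer took :35.
retrieval = rate_mb_per_hour(1724, "09:08")
transfer = rate_mb_per_hour(1724, ":35")

print(f"retrieval: {retrieval:.1f} MB/h")
print(f"transfer:  {transfer / 1000:.2f} GB/h")
```

The computed values land slightly above the listed 188 MB/h and 2.9 GB/h, which suggests the table entries were truncated rather than rounded; that reading is an inference from the numbers, not something the slide states.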

ERA40 Data Transfer: ECMWF to DKRZ

Data extraction from ECMWF archive (retrieval): daily average 200 MB/h (single request); daytime 170 MB/h, night time and weekend 300 MB/h

FTP download (transfer): 1 GB in 20 minutes per transfer process; the rate stays at 1 GB / 20 min per transfer when two FTP transfers run in parallel
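For comparison with bandwidth figures quoted in Gbit/sec, the FTP rate of 1 GB in 20 minutes can be converted as in the sketch below. It assumes 1 GB = 8 Gbit (decimal units); the function name is illustrative.

```python
def gbit_per_s(gigabytes: float, minutes: float) -> float:
    """Average rate in Gbit/s for moving `gigabytes` in `minutes`."""
    return gigabytes * 8 / (minutes * 60)


ftp_rate = gbit_per_s(1, 20)
print(f"{ftp_rate:.4f} Gbit/s per FTP transfer process")
```

This gives about 0.007 Gbit/sec per transfer process, which is the ERA40 reference value quoted later in the deck.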

UC: Use Cases

• 2D / 3D time series
• Raw data transfer
• Blending of different data sources

Standard Model Resolution

Number of horizontal grid points for entire globe
T42: 64 x 128 points (present)
T63: 96 x 192 points (next)
T106: 160 x 320 points (future)


UC: Transfer Data Volume and Response Time Requirements

Basic application: time series access (TS)

2D and 3D TS from 500 model years as monthly means (MM), response time of 5 sec

2D and 3D TS from 100 model years with 6 hours storage interval (6H), response time of 10 sec

Raw data TS output from 50 model years, response time of 20 sec

Model configurations
T42-L19: 17.5 KB/global-field, 0.4 GB/model-month
T63-L31: 37.5 KB/global-field, 1.5 GB/model-month
T106-L31: 101.5 KB/global-field, 5.6 GB/model-month
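The quoted global-field sizes can be cross-checked against the horizontal grids (64 x 128, 96 x 192, 160 x 320). The sketch below assumes 2 bytes per grid point plus a fixed 1.5 KB of per-field overhead, with 1 KB = 1024 bytes. Both parameters are assumptions chosen because they reproduce the quoted figures; the slides do not state the storage format.

```python
# Horizontal grids from the "Standard Model Resolution" slide.
GRID = {"T42": (64, 128), "T63": (96, 192), "T106": (160, 320)}

# Field sizes quoted on the slide, in KB per global field.
QUOTED_KB = {"T42": 17.5, "T63": 37.5, "T106": 101.5}

BYTES_PER_POINT = 2   # assumption: 16-bit packed values
OVERHEAD_KB = 1.5     # assumption: fixed per-field header


def field_size_kb(nlat: int, nlon: int) -> float:
    """Estimated size of one global 2D field in KB (1 KB = 1024 bytes)."""
    return nlat * nlon * BYTES_PER_POINT / 1024 + OVERHEAD_KB


estimates = {name: field_size_kb(*shape) for name, shape in GRID.items()}

for name, size in estimates.items():
    print(f"{name}: estimated {size} KB, quoted {QUOTED_KB[name]} KB")
```

That the estimate matches all three resolutions is suggestive, but it remains a consistency check on the numbers rather than a description of the actual file format.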

DT: Time Series and Data Transfer Volume

TS        Length   T42-L19   T63-L31   T106-L31
2D (MM)   500 Y    0.1       0.2       0.6
3D (MM)   500 Y    2         7         18
2D (6H)   100 Y    3         5         14
3D (6H)   100 Y    46        160       430
Raw       50 Y     240       900       3400

Units: GB (Table entries are rounded.)

DT: Response Time and Net Bandwidth on Application Level

TS        Response Time   T42-L19   T63-L31   T106-L31
2D (MM)   5 sec           0.2       0.3       1
3D (MM)   5 sec           3         10        30
2D (6H)   10 sec          2         4         10
3D (6H)   10 sec          40        130       350
Raw       20 sec          100       400       1400

Units: Gbit/sec (Table entries are rounded.)
ERA40: 0.007 Gbit/sec
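The bandwidth figures follow from the transfer volumes and the required response times: bandwidth = volume x 8 / response time. The sketch below recomputes them from the rounded volumes in GB, assuming 1 GB = 8 Gbit; the data structure and names are illustrative.

```python
MODELS = ("T42-L19", "T63-L31", "T106-L31")

# Transfer volume in GB per time series type (rounded slide values).
VOLUME_GB = {
    "2D (MM)": (0.1, 0.2, 0.6),
    "3D (MM)": (2, 7, 18),
    "2D (6H)": (3, 5, 14),
    "3D (6H)": (46, 160, 430),
    "Raw": (240, 900, 3400),
}

# Required response time in seconds per time series type.
RESPONSE_S = {"2D (MM)": 5, "3D (MM)": 5, "2D (6H)": 10, "3D (6H)": 10, "Raw": 20}


def required_gbit_per_s(volume_gb: float, response_s: float) -> float:
    """Net bandwidth needed to deliver volume_gb within response_s seconds."""
    return volume_gb * 8 / response_s


bandwidth = {
    ts: {m: required_gbit_per_s(v, RESPONSE_S[ts]) for m, v in zip(MODELS, vols)}
    for ts, vols in VOLUME_GB.items()
}

for ts, row in bandwidth.items():
    print(ts, {m: round(b, 2) for m, b in row.items()})
```

Because the inputs are already rounded, the results differ slightly from the slide (for example 1360 versus 1400 Gbit/sec for raw T106-L31 data), but the order of magnitude is the same throughout.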

Traffic Matrix: Working Hypothesis

Dynamic infrastructure which takes into account changing distribution of "hot spots" and flexible adaptation to applications.

Layer 1: Hot Spots (peak = 1.4 Tbit/s)
ECMWF, Exeter, Hamburg, France, Poland?, Scandinavia?, Italy? (Users/Producers)

Layer 2: ENES Partners + e.g. Earth Simulator Community, Earth System Grid, .... (30% of peak)
MPI-M, NERSC, IPSL, DMI, KNMI, CGAM, Meteo-France, CERFACS, CSCS, SMHI, UCL, INGV, MPI-BGC, IfM Kiel, CRU-UEA, FZJ, ICMCM, Hamburg University, AWI, IfM-Berlin, ZIB

Layer 3: Rest of the World (5% of peak)

What is the minimum, guaranteed bandwidth? 10% of peak(1,2,3)

What is the average bandwidth? 50% of peak(1,2,3)

What is the peak bandwidth? Peak(1,2,3) for 10% of day
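The working hypothesis can be turned into concrete numbers per layer. The sketch below reads "x% of peak(1,2,3)" as a fraction of each layer's own peak, and takes the Layer 2 and Layer 3 peaks as 30% and 5% of the Layer 1 peak of 1.4 Tbit/s. That reading is an interpretation of the slide, not something it spells out.

```python
LAYER1_PEAK_GBIT = 1400  # Layer 1 peak: 1.4 Tbit/s

# Each layer's peak as a percentage of the Layer 1 peak.
LAYER_PEAK_PCT = {"Layer 1": 100, "Layer 2": 30, "Layer 3": 5}

MIN_PCT = 10  # minimum guaranteed bandwidth, percent of the layer peak
AVG_PCT = 50  # average bandwidth, percent of the layer peak


def layer_bandwidths(peak_pct: int) -> dict:
    """Peak, average and minimum bandwidth in Gbit/s for one layer."""
    peak = LAYER1_PEAK_GBIT * peak_pct / 100
    return {
        "peak": peak,
        "average": peak * AVG_PCT / 100,
        "minimum": peak * MIN_PCT / 100,
    }


hypothesis = {name: layer_bandwidths(pct) for name, pct in LAYER_PEAK_PCT.items()}

for name, values in hypothesis.items():
    print(name, values)
```

Under this reading Layer 1 needs 140 Gbit/s guaranteed and 700 Gbit/s on average, while Layer 3 peaks at 70 Gbit/s.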
