Supervised Learning I, Cont’d Reading: Bishop, Ch 14.4, 1.6, 1.5.

-

date post

22-Dec-2015 -

Category

Documents

-

view

213 -

download

0

Transcript of Supervised Learning I, Cont’d Reading: Bishop, Ch 14.4, 1.6, 1.5.

Administrivia I•Machine learning reading group

•Not part of/related to this class

•We read advanced (current research) papers in the ML field

•Might be of interest. All are welcome

•Meets Fri, 2:00-3:30, FEC325 conf room

•More info: http://www.cs.unm.edu/~terran/research/reading_group/

•Lecture notes online

Administrivia II•Microsoft on campus for talk/recruiting

•Feb 5

•Mock interviews

•Hiring both undergrad, grad, interns

•Office hours Wed

•9:00-10:00 and 11:00-noon

•Final exam time/day

• Incorrect in syllabus (last year’s. Oops.)

•Should be: Tues, May 8, 10:00-noon

Yesterday & today•Last time:

•Basic ML problem

•Definitions and such

•Statement of the supervised learning problem

•Today:

•HW 1 assigned

•Hypothesis spaces

• Intro to decision trees

Homework 1•Due: Tues, Jan 30

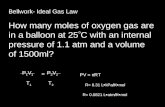

•Bishop, problems 14.10, 14.11, 1.36, 1.38

•Plus:

A)Show that entropy gain is concave (anti-convex)

B)Show that a binary, categorical decision tree, using information gain as a splitting criterion, always increases purity. That is, information gain is non-negative for all possible splits, and is 0 only when the split leaves the data distribution unchanged in both leaves.

•Feature (attribute):

• Instance (example):

•Label (class):

•Feature space:

•Training data:

Review of notation

Finally, goals•Now that we have and , we have a

(mostly) well defined job:

Find the function

that most closely approximates the “true” function

The supervised learning problem:

Goals?•Key Questions:

•What candidate functions do we consider?

•What does “most closely approximates” mean?

•How do you find the one you’re looking for?

•How do you know you’ve found the “right” one?

Hypothesis spaces•The “true” we want is usually called

the target concept (also true model, target function, etc.)

•The set of all possible we’ll consider is called the hypothesis space,

•NOTE! Target concept is not necessarily part of the hypothesis space!!!

•Example hypothesis spaces:

•All linear functions

•Quadratic & higher-order fns.

More hypothesis spacesRulesif (x.skin==”fur”) { if (x.liveBirth==”true”) { return “mammal”; } else { return “marsupial”; }} else if (x.skin==”scales”) { switch (x.color) { case (”yellow”) { return “coral snake”; } case (”black”) { return “mamba snake”; } case (”green”) { return “grass snake”; } }} else { ...}

Finding a good hypothesis•Our job is now: given an in some and

an , find the best we can by searching

Space of all functionson

Measuring goodness•What does it mean for a hypothesis to be

“as close as possible”?

•Could be a lot of things

•For the moment, we’ll think about accuracy

•(Or, with a higher sigma-shock factor...)

Aside: Risk & Loss funcs.•The quantity is called a risk function

•A.k.a., empirical loss function

•Approximation to true (expected) loss:

•(Sort of) measure of distance between “true” concept and approximation to it

All functionson

Constructing DT’s, intro•Hypothesis space:

•Set of all trees, w/ all possible node labelings and all possible leaf labelings

•How many are there?

•Proposed search procedure:

1.Propose a candidate tree, ti

• Evaluate accuracy of ti w.r.t. X and y

• Keep max accuracy ti

1.Go to 1

1.Will this work?

A more practical alg.

•Can’t really search all possible trees

• Instead, construct single tree

•Greedily

•Recursively

•At each step, pick decision that most improves the current tree

A more practical alg.DecisionTree buildDecisionTree(X,Y) {// Input: instance set X, label set Yif (Y.isPure()) {return new LeafNode(Y);

}else {Feature a=getBestSplitFeature(X,Y);DecisionNode n=new DecisionNode(a);[X0,...,Xk,Y0,...,Yk]=a.splitData(X,Y);for (i=0;i<=k;++i) {n.addChild(buildDecisionTree(Xi,Yi));

}return n;

}}

The geometry of DTs•Decision tree splits space w/ a series of axis

orthagonal decision surfaces

•A.k.a. axis parallel

•Equivalent to a series of half-spaces

• Intersection of all half-spaces yields a set of hyper-rectangles (rectangles in D>3 dimensional space)

• In each hyper-rectangle, DT assigns a constant label

•So a DT is a piecewise-constant approximator over a sequence of hyper-rectangular regions