Text Representation & Fixed-Size Ordinally-Forgetting Encoding Approach


Survey on Text Representation &

Shallow Sentence Embedding: Fixed-Size Ordinally-Forgetting Encoding Approach

Ahmed H. AlGhidani
MSc Student in Computer Science at Cairo University

Research and SDE at RDI Egypt

Agenda

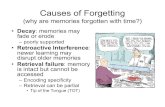

• Word Representation
  - 1-hot Encoding

• Word Embedding
  - Word2Vec
  - GloVe

• Sentence Representation
  - Bag of Words (BoW)

• Sentence Embedding
  - Doc2Vec
  - Fixed-Size Ordinally-Forgetting Encoding (FOFE)
  - Others

Word Representation

• Transform a word into a vector space model

• Each word has a unique vector that distinguishes it from all other words

• The vector dimension varies according to the method used for the transformation

Word Representation (Cont.)

• 1-hot Encoding
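A minimal sketch of 1-hot encoding in Python, assuming the vocabulary is built from a toy tokenized corpus in first-seen order; `one_hot_vocab` is a hypothetical helper name. Each vector has length |V| with a single 1 at the word's index, which is why memory grows with the vocabulary size.

```python
def one_hot_vocab(words):
    """Map each unique word to a 1-hot vector of length |V| (first-seen order)."""
    vocab = list(dict.fromkeys(words))  # unique words, original order preserved
    size = len(vocab)
    return {w: [1 if j == i else 0 for j in range(size)] for i, w in enumerate(vocab)}

vectors = one_hot_vocab(["the", "cat", "sat", "on", "the", "hat"])
print(vectors["cat"])  # [0, 1, 0, 0, 0]
```

Every pair of distinct vectors has a dot product of 0, so the encoding carries no notion of similarity between words.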

Word Representation (Cont.)

• Pros
  - Easy to understand and implement

• Cons
  - Depends on vocabulary size (memory issues)
  - Doesn't capture the semantics of words (all 1-hot vectors are equally distant from one another)

Word Embedding

• A vector space model

• Each word has a unique, fixed-size vector representation

• It captures semantic relations between words

Word Embedding (Cont.)

• Word2Vec (Mikolov, 2013)

• To represent a word, we need to use its context

• A group of models based on shallow neural networks

• We will talk about Skip-gram and Continuous Bag of Words (CBOW)

Word Embedding (Cont.)

• Skip-gram model

• A 2-layer multi-layer perceptron (MLP) neural network

• The input is a word and the output is the group of words likely to occur around it (its context)

• A softmax layer gives the probability of each output word
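The skip-gram setup above can be sketched in plain Python: build (center, context) training pairs from a window, then score every vocabulary word against the center word's vector and apply a softmax. This is a minimal forward-pass sketch under stated assumptions: the embedding size, random initialization, and the names `skipgram_pairs` / `skipgram_probs` are hypothetical, and training (backpropagation, negative sampling or hierarchical softmax) is omitted.

```python
import math
import random

def skipgram_pairs(tokens, window=2):
    """Build (center, context) training pairs: each word predicts its neighbours."""
    pairs = []
    for i, center in enumerate(tokens):
        for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
            if j != i:
                pairs.append((center, tokens[j]))
    return pairs

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def skipgram_probs(center, vocab, w_in, w_out):
    """P(word | center) for every word in vocab, via softmax of dot products."""
    h = w_in[center]  # hidden layer = the center word's input (embedding) vector
    scores = [sum(a * b for a, b in zip(h, w_out[w])) for w in vocab]
    return dict(zip(vocab, softmax(scores)))

tokens = "the cat sat on the hat".split()
vocab = sorted(set(tokens))
dim = 4  # hypothetical embedding size
rng = random.Random(0)
w_in = {w: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for w in vocab}
w_out = {w: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for w in vocab}

print(skipgram_pairs(tokens, window=1)[:3])  # [('the', 'cat'), ('cat', 'the'), ('cat', 'sat')]
probs = skipgram_probs("cat", vocab, w_in, w_out)  # weights are untrained, so not yet meaningful
```

After training, the rows of `w_in` are the word embeddings.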

Word Embedding (Cont.)

• Continuous Bag of Words (CBOW)

• A 2-layer MLP neural network

• The input is a word's context and the output is the word itself

• A softmax layer gives the probability of each output word
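A minimal CBOW forward-pass sketch in plain Python, assuming the hidden layer is the average of the context words' input vectors (as in the original CBOW description); the embedding size, random weights, and the name `cbow_probs` are hypothetical, and training is omitted.

```python
import math
import random

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cbow_probs(context, vocab, w_in, w_out):
    """P(center word | context): average the context input vectors, then softmax."""
    dim = len(next(iter(w_in.values())))
    h = [sum(w_in[c][k] for c in context) / len(context) for k in range(dim)]
    scores = [sum(a * b for a, b in zip(h, w_out[w])) for w in vocab]
    return dict(zip(vocab, softmax(scores)))

tokens = "the cat sat on the hat".split()
vocab = sorted(set(tokens))
dim = 4  # hypothetical embedding size
rng = random.Random(0)
w_in = {w: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for w in vocab}
w_out = {w: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for w in vocab}

# Context of "sat" with a window of 2 words on each side.
context = tokens[0:2] + tokens[3:5]  # ['the', 'cat', 'on', 'the']
probs = cbow_probs(context, vocab, w_in, w_out)
best = max(probs, key=probs.get)  # the training target would be "sat"
```

The only structural difference from skip-gram is the direction of prediction: several context vectors are averaged into one input, and a single word is predicted.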

Word Embedding (Cont.)

• Pros
  - Fast and efficient
  - Fixed-size dimension

• Cons
  - It is not well understood why it works so well (not even by Mikolov himself!)

Word Embedding (Cont.)

• Global Vectors (GloVe)

• We build a co-occurrence matrix for the whole corpus

• Factorize this matrix into word vectors and context vectors

Word Embedding (Cont.)

Corpus: A D C E A D F E B A C E D
Window size: 2 (two words on each side of the target word)

Co-occurrence matrix X (F omitted):

     A  B  C  D  E
A    0  1  3  2  3
B    1  0  1  0  1
C    3  1  0  2  2
D    2  0  2  0  4
E    3  1  2  4  0
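The table can be recomputed with a short sketch, assuming symmetric, unweighted counts of every pair of words at most 2 positions apart; this reading reproduces the numbers above (the GloVe paper itself additionally weights a pair that is d words apart by 1/d). `cooccurrence` is a hypothetical helper name.

```python
from collections import defaultdict

def cooccurrence(tokens, window=2):
    """Symmetric, unweighted co-occurrence counts within +/- `window` positions."""
    counts = defaultdict(int)
    for i, w in enumerate(tokens):
        for j in range(i + 1, min(len(tokens), i + window + 1)):
            counts[(w, tokens[j])] += 1
            counts[(tokens[j], w)] += 1
    return counts

tokens = "A D C E A D F E B A C E D".split()
X = cooccurrence(tokens, window=2)
labels = "ABCDE"  # F is left out, as in the slide's table
for r in labels:
    print(r, [X[(r, c)] for c in labels])
```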

Word Embedding (Cont.)

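We want to use the co-occurrence information to produce the word vectors, so we use this function for each pair of words i and j:

$$w_i^\top \tilde{w}_j + b_i + \tilde{b}_j = \log X_{ij}$$

Our target is to minimize this objective function over all pairs of words in the corpus:

$$J = \sum_{i,j=1}^{V} f(X_{ij}) \left( w_i^\top \tilde{w}_j + b_i + \tilde{b}_j - \log X_{ij} \right)^2$$

Word Embedding (Cont.)

Where,

$$f(x) = \begin{cases} (x / x_{\max})^{\alpha} & \text{if } x < x_{\max} \\ 1 & \text{otherwise} \end{cases}$$

• $w_i$ is the word vector and $\tilde{w}_j$ is the context vector; $b_i$ and $\tilde{b}_j$ are their biases

• $V$ is the vocabulary size and $X_{ij}$ is the co-occurrence count of words i and j

• The GloVe paper uses $x_{\max} = 100$ and $\alpha = 3/4$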
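A minimal sketch of the GloVe weighting function and weighted least-squares objective, evaluated on the A to E co-occurrence counts from the example; the embedding size, random initialization, and the names `glove_weight` / `glove_loss` are hypothetical. It only evaluates J; actual training would minimize J with a gradient method (the GloVe paper uses AdaGrad).

```python
import math
import random

def glove_weight(x, x_max=100.0, alpha=0.75):
    """Weighting f(x): grows as (x / x_max)^alpha below x_max, capped at 1 above it."""
    return (x / x_max) ** alpha if x < x_max else 1.0

def glove_loss(X, vocab, w, w_ctx, b, b_ctx):
    """Weighted least-squares GloVe objective J over all (i, j) pairs with X_ij > 0."""
    loss = 0.0
    for i in vocab:
        for j in vocab:
            x = X[(i, j)]
            if x == 0:  # f(0) = 0 and log(0) is undefined, so empty cells are skipped
                continue
            dot = sum(p * q for p, q in zip(w[i], w_ctx[j]))
            loss += glove_weight(x) * (dot + b[i] + b_ctx[j] - math.log(x)) ** 2
    return loss

# Co-occurrence counts from the example (A..E, F omitted).
labels = "ABCDE"
rows = {
    "A": [0, 1, 3, 2, 3],
    "B": [1, 0, 1, 0, 1],
    "C": [3, 1, 0, 2, 2],
    "D": [2, 0, 2, 0, 4],
    "E": [3, 1, 2, 4, 0],
}
X = {(r, c): rows[r][k] for r in labels for k, c in enumerate(labels)}

dim = 2  # hypothetical embedding size
rng = random.Random(0)
w = {v: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for v in labels}
w_ctx = {v: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for v in labels}
b = {v: 0.0 for v in labels}
b_ctx = {v: 0.0 for v in labels}
print(glove_loss(X, labels, w, w_ctx, b, b_ctx))  # the quantity training would minimize
```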

Word Embedding (Cont.)

• Pros
  - Statistical approach
  - Combines global co-occurrence statistics with the local context-window idea of the skip-gram model to produce the word vectors

Sentence Representation

• Given a context of words (e.g., a sentence or a document), the target is to map the whole context into a single vector in a vector space model

Sentence Representation (Cont.)

• Bag of Words Model (BoW)

• Based on the frequency of each term in the context

• The vector dimension equals the number of unique words in the corpus

Sentence Representation (Cont.)

Corpus:
Sentence 1: “The cat sat on the hat”
Sentence 2: “The dog ate the cat and the hat”

Unique words: {the, cat, sat, on, hat, dog, ate, and}

Sentence 1: [2, 1, 1, 1, 1, 0, 0, 0]
Sentence 2: [3, 1, 0, 0, 1, 1, 1, 1]
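The BoW vectors in the example can be reproduced with a short sketch, assuming lowercasing and whitespace tokenization (so "The" and "the" count as the same word) and a vocabulary in first-seen order; `bag_of_words` is a hypothetical helper name.

```python
from collections import Counter

def bag_of_words(sentences):
    """Return the vocabulary (first-seen order) and one term-frequency vector per sentence."""
    tokenized = [s.lower().split() for s in sentences]
    vocab = list(dict.fromkeys(w for tokens in tokenized for w in tokens))
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        vectors.append([counts[w] for w in vocab])
    return vocab, vectors

vocab, vectors = bag_of_words([
    "The cat sat on the hat",
    "The dog ate the cat and the hat",
])
print(vocab)    # ['the', 'cat', 'sat', 'on', 'hat', 'dog', 'ate', 'and']
print(vectors)  # [[2, 1, 1, 1, 1, 0, 0, 0], [3, 1, 0, 0, 1, 1, 1, 1]]
```

Note that "the cat ate the dog" and "the dog ate the cat" would get the same vector: BoW keeps counts but discards word order.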

Sentence Representation (Cont.)

• Pros
  - Easy to understand and implement

• Cons
  - Depends on vocabulary size (memory issues)
  - Ignores word order and doesn't capture the semantics of words

Sentence Embedding

• A vector space model

• Each document has a unique, fixed-size vector representation

• It captures semantic similarity between documents

Sentence Embedding (Cont.)

• Doc2Vec (Le and Mikolov, 2014)

• Learns a vector for each document by training it to predict the words in that document

• A group of models based on shallow neural networks

• We will talk about the PV-DM and PV-DBOW models

Sentence Embedding (Cont.)

• Distributed Memory Model of Paragraph Vector (PV-DM)

• A 2-layer multi-layer perceptron (MLP) neural network

• The input is the paragraph vector (a column of the paragraph matrix) together with the context word vectors; the output is the next word predicted from the paragraph and its context
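A minimal PV-DM forward-pass sketch, assuming the paragraph vector and the context word vectors are averaged (the original paper allows averaging or concatenation); the matrix names `D`, `W`, `U`, the embedding size, and `pv_dm_probs` are hypothetical, and training is omitted.

```python
import math
import random

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def pv_dm_probs(doc_id, context, vocab, D, W, U):
    """P(next word | paragraph, context): average D[doc_id] with the context word vectors."""
    inputs = [D[doc_id]] + [W[c] for c in context]
    dim = len(inputs[0])
    h = [sum(v[k] for v in inputs) / len(inputs) for k in range(dim)]
    scores = [sum(a * b for a, b in zip(h, U[w])) for w in vocab]
    return dict(zip(vocab, softmax(scores)))

docs = ["the cat sat on the hat".split(), "the dog ate the cat and the hat".split()]
vocab = sorted({w for doc in docs for w in doc})
dim = 4  # hypothetical embedding size
rng = random.Random(0)
D = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in docs]  # one paragraph vector per document
W = {w: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for w in vocab}  # word vectors shared by all documents
U = {w: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for w in vocab}  # softmax (output) weights

# Document 0, context "the cat sat": the training target would be the next word, "on".
probs = pv_dm_probs(0, ["the", "cat", "sat"], vocab, D, W, U)
```

During training, gradients flow into both `D[doc_id]` and the word vectors; after training, `D[doc_id]` is used as the document's embedding.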

Sentence Embedding (Cont.)

• Distributed Bag of Words of Paragraph Vector (PV-DBOW)

• A 2-layer multi-layer perceptron (MLP) neural network

• The input is the paragraph vector only, and the output is the group of words likely to occur in that paragraph (which can be read as extracting keywords)
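A minimal PV-DBOW sketch, assuming (as a simplification) that each training example pairs a document with one word sampled uniformly from that document, and that prediction uses the paragraph vector alone with no context words; `pv_dbow_pairs`, `pv_dbow_probs`, `D`, `U`, and the embedding size are hypothetical, and training is omitted.

```python
import math
import random

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def pv_dbow_pairs(docs, samples_per_doc, rng):
    """Training examples (doc_id, word): the word is sampled from that document."""
    return [(d, rng.choice(doc)) for d, doc in enumerate(docs) for _ in range(samples_per_doc)]

def pv_dbow_probs(doc_id, vocab, D, U):
    """P(word | paragraph) using only the paragraph vector as input."""
    h = D[doc_id]
    scores = [sum(a * b for a, b in zip(h, U[w])) for w in vocab]
    return dict(zip(vocab, softmax(scores)))

docs = ["the cat sat on the hat".split(), "the dog ate the cat and the hat".split()]
vocab = sorted({w for doc in docs for w in doc})
dim = 4  # hypothetical embedding size
rng = random.Random(0)
D = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in docs]  # paragraph vectors
U = {w: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for w in vocab}  # softmax (output) weights

pairs = pv_dbow_pairs(docs, samples_per_doc=3, rng=rng)
probs = pv_dbow_probs(1, vocab, D, U)
top3 = sorted(probs, key=probs.get, reverse=True)[:3]  # "keywords" once D and U are trained
```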

Sentence Embedding (Cont.)

• Fixed-Size Ordinally-Forgetting Encoding (FOFE) (2015)

• Produces a fixed-size vector whose dimension equals the vocabulary size

• The produced codes are (almost) unique: distinct sentences get distinct vectors whenever the forgetting factor is at most 0.5, which helps to tell them apart

• Used mainly for language modeling with feedforward neural networks

Vocabulary: {A, B, C}

Initially (1-hot vectors):
A = [1, 0, 0]
B = [0, 1, 0]
C = [0, 0, 1]

Sentence 1: {ABC}
Sentence 2: {ABCBC}

Target: get a fixed-size vector that represents each sentence

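Encoding Function

$$z_0 = \mathbf{0}, \qquad z_t = \alpha \, z_{t-1} + e_t \quad (1 \le t \le T)$$

where,

$e_t$ is the 1-hot vector of the t-th word, $\alpha$ ($0 < \alpha < 1$) is the forgetting factor, and $z_T$ is the code of the whole sentence.

A = [1, 0, 0], B = [0, 1, 0], C = [0, 0, 1]

Sentence 1: {ABC} → $z = [\alpha^2, \alpha, 1]$

Sentence 2: {ABCBC} → $z = [\alpha^4, \alpha + \alpha^3, 1 + \alpha^2]$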
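A minimal FOFE sketch in plain Python implementing $z_t = \alpha z_{t-1} + e_t$ from $z_0 = 0$; the forgetting factor $\alpha = 0.5$ is a hypothetical choice (the example leaves $\alpha$ symbolic), and `fofe` is a hypothetical helper name. With $\alpha = 0.5$ the codes for the two example sentences become exact binary fractions.

```python
def fofe(sentence, vocab, alpha=0.5):
    """z_t = alpha * z_{t-1} + e_t, starting from z_0 = 0; returns z_T."""
    index = {w: i for i, w in enumerate(vocab)}
    z = [0.0] * len(vocab)
    for w in sentence:
        z = [alpha * x for x in z]  # forget: scale down everything seen so far
        z[index[w]] += 1.0          # add the 1-hot vector of the current word
    return z

vocab = ["A", "B", "C"]
print(fofe("ABC", vocab))    # [0.25, 0.5, 1.0]    = [a^2, a, 1]
print(fofe("ABCBC", vocab))  # [0.0625, 0.625, 1.25] = [a^4, a + a^3, 1 + a^2]
```

Both sentences map to 3-dimensional vectors regardless of their length, and word order is preserved in the weights: `fofe("CBA", vocab)` gives `[1.0, 0.5, 0.25]`, different from the code for ABC.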

Sentence Embedding (Cont.)

• Deep Sentence Embedding Using Long Short-Term Memory Networks

References

• https://en.wikipedia.org/wiki/Vector_space_model

• https://en.wikipedia.org/wiki/One-hot

• https://arxiv.org/pdf/1301.3781v3.pdf

• http://www-personal.umich.edu/~ronxin/pdf/w2vexp.pdf

• https://www.tensorflow.org/extras/candidate_sampling.pdf

• http://www-nlp.stanford.edu/pubs/glove.pdf

References (Cont.)

• http://www.foldl.me/2014/glove-python/

• https://en.wikipedia.org/wiki/Bag-of-words_model

• https://iksinc.wordpress.com/2015/06/23/how-to-use-words-co-occurrence-statistics-to-map-words-to-vectors/

• https://arxiv.org/pdf/1502.06922.pdf

• http://cs.stanford.edu/~quocle/paragraph_vector.pdf

• http://www.aclweb.org/anthology/P15-2081