
Supervised and Interpretable Machine Learning in Medicine

Nataliya Sokolovska

Sorbonne University, Paris, France

Master 2 BIM, October 25, 2019

Outline

Medical Data and Some Applications

State-of-the-art Supervised ML Methods

Interpretable Models

Challenges in Interpretable Supervised Learning

Medical Data

- Small number of observations (N)

- Large number of parameters (p)

- Data are noisy

- Missing data

- Batch effects (possible)

- In a real medical study, data are usually heterogeneous

Heterogeneous Medical Data and Data Integration

I Clinical data, alimentary patterns, nutritional habits

I Drugs taken, treatementI “omics” data

I lipidomicsI transcriptomicsI metagenomicsI proteomics

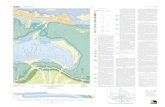

Machine Learning Applications in Medicine

from E. J. Topol. High-performance medicine: the convergence of human and artificial intelligence. Nature Medicine, 2019.

Multi-modal data inputs to provide individualized guidance

from E. J. Topol. High-performance medicine: the convergence of human and artificial intelligence. Nature Medicine, 2019.

Machine Learning Studies and Medical Routines

from E. J. Topol. High-performance medicine: the convergence of human and artificial intelligence. Nature Medicine, 2019.

State-of-the-art Supervised ML methods

- Logistic regression

- Support Vector Machines

- Random Forests

- Boosting and Gradient Boosting

- Deep Learning

Support Vector Machines in Medicine

- K. Anjani, M. Lhomme, N. Sokolovska, C. Poitou, J.-L. Bouillot, P. Lesnik, P. Bedossa, A. Kontush, K. Clement, I. Dugail, J. Tordjman. Circulating phospholipid profiling identifies portal contribution to NASH signature in obesity, Journal of Hepatology, 2015.

- Find the best predictors for NASH

- Apply a sparse SVM, which also performs feature selection

- Visualise the selected features with a Bayesian network

Random Forests in Medicine

Figure: Receiver operating characteristic example (False Positive Rate vs. True Positive Rate, five cross-validation folds). ROC fold 0 (AUC = 0.84), fold 1 (AUC = 0.72), fold 2 (AUC = 0.84), fold 3 (AUC = 0.81), fold 4 (AUC = 0.64); Mean ROC (AUC = 0.77 ± 0.08), shown with a ± 1 std. dev. band and the chance (“Luck”) diagonal.

Figure: Feature Importances (relative importance, 0.00 to 0.20) for [Ruminococcus] torques, Faecalibacterium prausnitzii, butyrate-producing bacterium SS3/4, Subdoligranulum variabile, IP10_pg_ml, RatioBiotinProdLax.BiotinTransporters.sums, WBCMONOCY, AGE, CRP_us_OGTT, Clostridium bolteae, butyrate-producing bacterium, WBCLEUCO, down_10000000, Escherichia coli, IL6HS_OGTT.

Black box models

- Black box vs. interpretable or explainable models

- Interpretability is not well defined

- Black box models are not interpretable by human experts

- Some models have some interpretable aspects

- A classical example of a black box model: (deep) neural networks. They typically involve non-linearities and interactions between inputs, which means that not only is there no simple mapping from inputs to outputs, but the effect of changing one input may depend critically on the values of other inputs. This makes it very hard to figure out mentally what is happening.

Explainable Decisions

- Fairness: Ensuring that predictions are unbiased and do not implicitly or explicitly discriminate against protected groups. An interpretable model can tell you why it has decided that a certain person should not get a loan, and it becomes easier for a human to judge whether the decision is based on a learned demographic (e.g. racial) bias.

- Privacy: Ensuring that sensitive information in the data is protected.

- Reliability or Robustness: Ensuring that small changes in the input do not lead to large changes in the prediction.

- Causality: Checking that only causal relationships are picked up.

- Trust: It is easier for humans to trust a system that explains its decisions than a black box.

Fairness in Machine Learning

A hot topic in Machine Learning:

- Unintended discrimination arises naturally and frequently in the use of machine learning and algorithmic decision making

- The focus is on understanding and mitigating discrimination based on sensitive characteristics, such as gender, race, religion, physical ability, and sexual orientation

Why does it happen?

- A learning algorithm is designed to pick up statistical patterns in training data

- If the training data reflect existing social biases against a minority, the algorithm is likely to incorporate these biases.

Interpretable Linear Regression

$$ y = \beta_0 + \beta_1 x_1 + \dots + \beta_d x_d + \varepsilon \tag{1} $$

- The $\beta_j$ are the learned feature weights

- $\beta_0$ is the intercept; $\varepsilon$ is the error the model makes (assumed to follow a Gaussian distribution)

- Numerical feature: increasing a numerical feature by one unit, with all other features fixed, changes the estimated outcome by its weight. An example of a numerical feature is the size of a house

- The interpretation assumes that there are no strongly correlated features

- In the medical field, it is important not only to predict the clinical outcome of a patient, but also to quantify the influence of a drug while taking sex, age, and other features into account in an interpretable way
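To make the one-unit interpretation concrete, here is a minimal sketch with a hypothetical fitted model (the intercept, weights and feature names are made up for illustration): increasing one feature by one unit while holding the others fixed changes the prediction by exactly that feature's weight.

```python
# Hypothetical fitted linear model; values and feature names are illustrative only.
intercept = 1.5
weights = {"age": 0.03, "bmi": 0.12, "drug_dose": -0.4}


def predict(x):
    """Return beta_0 + sum_j beta_j * x_j (the error term is not predicted)."""
    return intercept + sum(weights[name] * value for name, value in x.items())


patient = {"age": 50.0, "bmi": 30.0, "drug_dose": 2.0}
baseline = predict(patient)

# Increase one feature by one unit, keeping all other features fixed.
for name in weights:
    shifted = dict(patient)
    shifted[name] += 1.0
    delta = predict(shifted) - baseline
    print(f"{name}: +1 unit changes the prediction by {delta:+.2f}")
```

With correlated features this clean reading breaks down, because in real data one feature rarely moves while the others stay fixed.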

Interpretable Logistic Regression

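A linear regression model does not output probabilities: it treats the classes as numbers (0 and 1). Logistic regression instead models the log-odds as a linear function of the features:

$$ \log\left(\frac{P(Y = 1 \mid X)}{P(Y = 0 \mid X)}\right) = \beta_0 + \beta_1 x_1 + \dots + \beta_d x_d \tag{2} $$

- The outputs are probabilities

- Increasing feature $x_j$ by one unit multiplies the odds by a factor of $\exp(\beta_j)$ (i.e., the odds ratio is $\exp(\beta_j)$)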
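A quick numerical check of the odds-ratio statement, as a sketch with hypothetical coefficients: the odds after a one-unit increase of $x_1$, divided by the odds before it, equal $\exp(\beta_1)$ regardless of the starting point.

```python
import math

# Hypothetical logistic regression coefficients (illustrative only).
intercept = -3.0
weights = [0.8, -0.5]  # beta_1, beta_2


def prob(x):
    """P(Y = 1 | x) = 1 / (1 + exp(-(beta_0 + sum_j beta_j x_j)))."""
    z = intercept + sum(b * xi for b, xi in zip(weights, x))
    return 1.0 / (1.0 + math.exp(-z))


def odds(x):
    p = prob(x)
    return p / (1.0 - p)


x = [2.0, 1.0]
x_plus = [3.0, 1.0]  # x_1 increased by one unit, x_2 unchanged

ratio = odds(x_plus) / odds(x)
print(ratio, math.exp(weights[0]))  # the two numbers agree up to rounding
```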

Decision Trees

- Linear models fail if the relationship between classes and features is non-linear

- Tree-based models split the data multiple times according to certain cutoff values in the features

- Each instance falls into exactly one leaf node

- Feature importance: go through all the splits in which the feature was used and measure how much each split has reduced the variance or Gini index compared to the parent node (the sum of all importances is scaled to 100)

- Advantages: captures interactions between features, good visualisation

- Disadvantages: unstable; the number of terminal nodes increases quickly with depth (making deep trees difficult to interpret)
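The building block of the tree feature importance is the impurity decrease of a single split. The sketch below computes it for the Gini index on a toy, hypothetical feature; a full importance would sum these decreases over every split that uses the feature and then normalise (the exact weighting and scaling may differ between libraries).

```python
from collections import Counter


def gini(labels):
    """Gini index 1 - sum_k p_k^2 of a list of class labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def split_gain(values, labels, cutoff):
    """Decrease of the Gini index when splitting at `cutoff`
    (left child: value <= cutoff, right child: value > cutoff),
    with children weighted by their share of the observations."""
    left = [y for v, y in zip(values, labels) if v <= cutoff]
    right = [y for v, y in zip(values, labels) if v > cutoff]
    n = len(labels)
    children = len(left) / n * gini(left) + len(right) / n * gini(right)
    return gini(labels) - children


# Toy data (hypothetical): one feature, binary outcome.
values = [1, 2, 3, 4, 5, 6]
labels = [0, 0, 0, 1, 1, 1]
print(split_gain(values, labels, 3))  # a perfect split removes all impurity: 0.5
```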

Motivation and Goals

- Motivation: simple and interpretable models

- Goal: a scoring system, i.e., a sparse linear model that
  - is based on simple arithmetic operations
  - has few significant digits (ideally integers)
  - can be explained by human experts
  - is learned purely from data

Example: the DiaRem (Diabetes Remission) Score

Variable               Thresholds    Score
Age                    < 40          0
                       40–49         1
                       50–59         2
                       > 60          3
Glycated hemoglobin    < 6.5         0
                       6.5–6.9       2
                       7–8.9         4
                       > 9           6
Insulin                No            0
                       Yes           10
Other drugs            No            0
                       Yes           3

Classify as Remission if the sum of scores < 7
Classify as Non-remission if the sum of scores ≥ 7

C. D. Still et al. Preoperative prediction of type 2 diabetes remission after Roux-en-Y gastric bypass surgery: a retrospective cohort study, 2013.
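The table translates directly into a few lines of code. This is a minimal sketch; the table leaves some boundaries ambiguous (for example an age of exactly 60, or an HbA1c value between 6.9 and 7 or exactly 9), so the cut-offs used here (age ≥ 60 scores 3; HbA1c in [6.5, 7) scores 2, in [7, 9) scores 4, and ≥ 9 scores 6) are assumptions.

```python
def diarem_score(age, hba1c, insulin, other_drugs):
    """DiaRem score from the table above.
    Assumption: boundary values are assigned as described in the lead-in."""
    if age < 40:
        score = 0
    elif age < 50:
        score = 1
    elif age < 60:
        score = 2
    else:
        score = 3
    if hba1c < 6.5:
        score += 0
    elif hba1c < 7.0:
        score += 2
    elif hba1c < 9.0:
        score += 4
    else:
        score += 6
    score += 10 if insulin else 0
    score += 3 if other_drugs else 0
    return score


def classify(score):
    """Threshold rule: a total below 7 predicts remission."""
    return "Remission" if score < 7 else "Non-remission"


s = diarem_score(age=45, hba1c=6.8, insulin=False, other_drugs=True)
print(s, classify(s))  # 1 + 2 + 0 + 3 = 6 -> Remission
```

Note how the insulin weight (10) alone is enough to cross the threshold of 7: any insulin-treated patient is classified as Non-remission.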

The State-of-the-Art

Medical Scores (widely used)

- SAPS I, II, and III and APACHE I, II, and III to assess mortality risk in intensive care units

- CHADS2 to assess the risk of stroke

- TIMI to estimate the risk of death or ischemic events

None of the existing medical scores was learned directly from data without any human manipulation.

State-of-the-Art Cont’d

Machine Learning point of view:

- Problems are formulated and solved as integer linear programs
  - B. Ustun and C. Rudin. Supersparse linear integer models for optimized medical scoring systems. Machine Learning, 2015.

- Bayesian optimisation is used to fit a model
  - S. Ertekin and C. Rudin. A Bayesian approach to learning scoring systems. Big Data, 3(4), 2015.

- Linear methods (regressions) using gradient-based optimisation, with rounded coefficients
  - D. Golovin, D. Sculley, H. B. McMahan, and M. Young. Large-scale learning with less RAM via randomization. In ICML, 2013.

Automated Score Construction

1. Identification of relevant clinical variables: age, glycated hemoglobin, insulin, other drugs

2. Meaningful thresholds for the clinical variables:
   age: < 40, 40–49, 50–59, > 60; glycated hemoglobin: < 6.5, 6.5–6.9, 7–8.9, > 9; insulin: yes, no; other drugs: yes, no

3. Optimization of the weights for the sub-groups of the variables:
   age: 0, 1, 2, 3; glycated hemoglobin: 0, 2, 4, 6; insulin: yes 10, no 0; other drugs: yes 3, no 0

4. Find an optimal separator between the two classes:
   Classify as Remission if the sum of scores < 7; classify as Non-remission if the sum of scores ≥ 7

Our team worked on:

- Simultaneously performing binning (a supervised discretization) and learning the scores for the bins.

- The Fused Lasso (R. Tibshirani et al., 2005) penalizes differences between the coefficients of neighbouring (ordered) variables, shrinking similar coefficients towards each other and thereby creating bins.

- In our approach, the Fused Lasso creates the categories and estimates the corresponding weights.

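The Linear Formulation

We minimise the hinge loss $\ell(y, f) = \max(0, 1 - y f)$ penalized by the fused lasso:

$$ \sum_{i=1}^{N} \ell(y_i, \theta \cdot x_i + b) + \lambda \sum_{j=1}^{d-1} |\theta_j - \theta_{j+1}|. \tag{3} $$

If we rewrite the task as an optimisation problem with linear constraints, we obtain:

$$ \min \Big( \sum_{i=1}^{N} \xi_i + \lambda \sum_{j=1}^{d-1} \eta_j \Big) \quad \text{such that} \tag{4} $$

$$ y_i(\theta \cdot x_i + b) \ge 1 - \xi_i \quad \text{for all } i, \tag{5} $$

$$ -\eta_j \le \theta_j - \theta_{j+1} \le \eta_j \quad \text{for all } j, \tag{6} $$

$$ \xi_i \ge 0 \ \text{for all } i, \quad \theta_j \in \mathbb{Z} \ \text{for all } j, \tag{7} $$

and we get $d + 1 + N + (d - 1)$ variables $\theta_1, \dots, \theta_d, b, \xi_1, \dots, \xi_N, \eta_1, \dots, \eta_{d-1}$.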
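For a fixed integer weight vector, objective (3) can be evaluated directly. The helper below is a hypothetical sketch, not the authors' code: it assumes labels in {−1, +1} and one-hot encoded rows, and it only scores candidate solutions; actually minimising over integer θ requires an integer programming solver.

```python
def fused_hinge_objective(theta, b, X, y, lam):
    """Objective (3): sum_i max(0, 1 - y_i (theta . x_i + b))
    + lam * sum_j |theta_j - theta_{j+1}|, with labels y_i in {-1, +1}."""
    hinge = sum(
        max(0.0, 1.0 - yi * (sum(t * xij for t, xij in zip(theta, xi)) + b))
        for xi, yi in zip(X, y)
    )
    fused = sum(abs(theta[j] - theta[j + 1]) for j in range(len(theta) - 1))
    return hinge + lam * fused


# Hypothetical one-hot encoding of one ordered variable with 4 observed values.
X = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]
y = [-1, -1, 1, 1]

# Two contiguous bins {v1, v2} and {v3, v4}: one jump, zero hinge loss.
print(fused_hinge_objective([-1, -1, 1, 1], 0, X, y, lam=0.5))
# An extra intermediate step violates a margin and adds a jump.
print(fused_hinge_objective([-1, 0, 1, 1], 0, X, y, lam=0.5))
```

The fused penalty only counts jumps between neighbouring weights, which is why solutions with few distinct contiguous weight levels, i.e. few bins, are favoured.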

The Algorithm: a Linear SVM Penalized by Fused Lasso for Score Learning

Input: a continuous matrix X (N × d), a class vector Y
Output: weights associated with each (observed) value in X

for j ∈ {1, ..., d} do
    Reformulate the column X^j as a matrix X̃ using one-hot encoding
    Solve the discrete L1-SVM with integrality constraints on θ and the fused-lasso penalty, using X̃ and Y
    From the resulting θ, build a binning of the values of X^j such that two contiguous values associated with equal weights are in the same bin
end for
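The last step of the loop, turning the learned θ into bins, is a single pass over the sorted observed values. A minimal sketch with hypothetical values and weights:

```python
def bins_from_weights(values, theta):
    """Group sorted observed values into bins: contiguous values with equal
    weights end up in the same bin. Returns a list of (members, weight) pairs."""
    bins = []
    for v, w in zip(values, theta):
        if bins and bins[-1][1] == w:
            bins[-1][0].append(v)  # same weight as the previous value: extend bin
        else:
            bins.append(([v], w))  # weight changes: open a new bin
    return bins


# Hypothetical observed ages (sorted) and learned integer weights.
values = [35, 42, 47, 53, 58, 61, 66]
theta = [0, 1, 1, 2, 2, 3, 3]
for members, weight in bins_from_weights(values, theta):
    print(f"[{members[0]}, {members[-1]}] -> score {weight}")
```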

The Obtained Scores: Mushrooms and Breast Cancer Data Sets

Figure: score histograms. Left panel: Mushrooms data (classes: edible, poisonous; scores from −7 to 7). Right panel: Breast Cancer data (classes: class 1, class 2; scores from −14 to 28).

Distributions of the scores on the Mushrooms data (left) and on the Breast Cancer data (right). Horizontal axis: all possible scores in the data sets. Vertical axis: the number of observations with the corresponding score. The classes are quite well separated; the optimal separator value is 0.

Prediction of the Diabetes Remission

Figure: two histograms of patients by score (classes: remission, not remission; horizontal axis: Score).

Distributions of patients according to the diabetes remission scores. Left: scores obtained with the DiaRem score; right: distribution based on the learned scoring system.

FCB

We define the problem of learning scoring systems as follows. We have a set of training examples $\{Z_i, Y_i\}_{i=1}^{N}$, where $Z$ is the interval encoding of some matrix $X$, and $Y$ is a class label. A score function is defined as $\langle \theta, Z \rangle$, where $\theta$ is a coefficient vector and $\langle \cdot, \cdot \rangle$ is the scalar product. Given $Z$ and estimated weights $\theta$, the score $s_i$ of an observation $Z_i$ is equal to $\langle \theta, Z_i \rangle$. A class can be predicted according to the conditional probability

$$ p(y = 1 \mid Z) = \frac{1}{1 + \exp(-\langle \theta, Z \rangle)}. \tag{8} $$
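The path from a raw value to a probability can be sketched as follows. The encoding used here is an assumption standing in for FCB's interval encoding: each value is one-hot encoded by the interval ]c_k, c_{k+1}] it falls into. The cut-points and weights are hypothetical.

```python
import math


def interval_encode(value, cutpoints):
    """One-hot encoding of `value` by the interval ]c_k, c_{k+1}] it falls into.
    Assumption: stands in for FCB's interval encoding."""
    edges = [-math.inf] + list(cutpoints) + [math.inf]
    return [1 if lo < value <= hi else 0 for lo, hi in zip(edges, edges[1:])]


def score(theta, z):
    """Score <theta, Z>."""
    return sum(t * zk for t, zk in zip(theta, z))


def prob_positive(theta, z):
    """Equation (8): p(y = 1 | Z) = 1 / (1 + exp(-<theta, Z>))."""
    return 1.0 / (1.0 + math.exp(-score(theta, z)))


cutpoints = [40, 50, 60]  # hypothetical bins for age
theta = [-2, -1, 1, 2]    # hypothetical integer weight per bin
z = interval_encode(55, cutpoints)
print(z, score(theta, z), round(prob_positive(theta, z), 3))
```

With several variables, their encodings are simply concatenated, and the score remains a sum of one integer weight per variable.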

FCB cont’d

The problem is formulated as a feature selection task. At each iteration, the proposed algorithm finds an optimal model over all features added so far and adds a new feature, i.e., splits one of the existing bins into two bins, if this operation minimizes the empirical risk:

$$ (j, l, u, r) = \arg\max_{j,\ ]l, u],\ r \in ]l, u]} \max\big( |(\nabla R)_{jlr}|, |(\nabla R)_{jru}| \big), \tag{9} $$

$$ \theta = \big( \theta \cup \{\theta_{jlr}, \theta_{jru}\} \big) \setminus \{\theta_{jlu}\}. \tag{10} $$

In a replacement step of the algorithm, the least important feature

$$ (j, l, u, q) = \arg\min_{j,\ ]l, q],\ ]q, u],\ q \in ]l, u]} |\theta_{jlq} - \theta_{jqu}|, \tag{11} $$

$$ \theta = \big( \theta \cup \{\theta_{jlu}\} \big) \setminus \{\theta_{jlq}, \theta_{jqu}\}, \tag{12} $$

is removed from the model if this operation does not degrade the performance. In other words, one of the bins is merged with its neighbour.

SLIM

Supersparse Linear Classification Models
B. Ustun, S. Traca, C. Rudin. Supersparse Linear Integer Models for Interpretable Classification, 2014.

- The L1 norm is used to find a sparse solution

- The L0 norm is an “ideal” sparsity penalty

$$ \min_{\lambda} \frac{1}{N} \sum_{i=1}^{N} \mathbb{1}\{ y_i \lambda^{T} x_i \le 0 \} + C_0 \|\lambda\|_0 + \epsilon \|\lambda\|_1 \tag{13} $$

SLIM optimises accuracy and sparsity by minimising the 0−1 loss and the L0 norm.
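Objective (13) is easy to evaluate for a candidate integer coefficient vector, which makes the accuracy/sparsity trade-off tangible. This sketch only evaluates the objective; SLIM itself solves the minimisation as an integer program. Modelling the intercept as a constant first column of X, and the data and constants below, are assumptions for illustration.

```python
def slim_objective(lam, X, y, C0, eps):
    """Objective (13): 0-1 loss + C0 * ||lam||_0 + eps * ||lam||_1,
    with labels y_i in {-1, +1}; y_i * lam.x_i <= 0 counts as an error."""
    n = len(X)
    errors = sum(
        1 for xi, yi in zip(X, y)
        if yi * sum(l * xij for l, xij in zip(lam, xi)) <= 0
    )
    l0 = sum(1 for l in lam if l != 0)  # number of non-zero coefficients
    l1 = sum(abs(l) for l in lam)       # small tie-breaking term
    return errors / n + C0 * l0 + eps * l1


# Hypothetical data; the first column is a constant intercept feature.
X = [(1, 2, 0), (1, 0, 3), (1, 3, 1), (1, 0, 0)]
y = [1, -1, 1, -1]

print(slim_objective([-1, 1, 0], X, y, C0=0.01, eps=0.001))   # sparse, no errors
print(slim_objective([-1, 1, -1], X, y, C0=0.01, eps=0.001))  # no errors, less sparse
```

Both candidates classify the toy data perfectly, so the L0 term decides: the model with one fewer non-zero coefficient has the lower objective.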

SLIM cont’d

The task is formulated and solved as an integer programming problem, and we use the Matlab implementation [1] provided by the SLIM authors. The training procedure relies on the IBM ILOG CPLEX Optimization Studio [2], which efficiently performs the constrained optimization. In particular, integrality constraints are added to the optimisation problem to obtain integer solutions.

[1] https://github.com/ustunb/slim-matlab
[2] http://www-03.ibm.com/software

Local interpretable model-agnostic explanations (LIME)

Ribeiro, M. T., Singh, S. and Guestrin, C., 2016

- Surrogate models are trained to approximate the predictions of the underlying black box model

- Instead of training a global surrogate model, LIME focuses on training local surrogate models to explain why individual predictions were made.

The goal is to understand why the machine learning model made a certain prediction. LIME tests what happens to the predictions when you feed variations of your data into the machine learning model. LIME generates a new dataset consisting of perturbed samples and the corresponding predictions of the black box model. On this new dataset, LIME then trains an interpretable model, which is weighted by the proximity of the sampled instances to the instance of interest.

LIME cont’d

The recipe for training local surrogate models:

1. Select your instance of interest for which you want to have an explanation of its black box prediction.

2. Perturb your dataset and get the black box predictions for these new points.

3. Weight the new samples according to their proximity to the instance of interest.

4. Train a weighted, interpretable model on the dataset with the variations.

5. Explain the prediction by interpreting the local model.
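The five steps can be sketched in plain Python for tabular data. This is a simplified illustration of the recipe, not the `lime` package: the black box is a hypothetical function, perturbations are Gaussian noise around the instance, the proximity kernel is an exponential of the squared distance, and the interpretable model is a weighted linear regression. The noise scale, kernel width and sample size are arbitrary choices.

```python
import math
import random


def black_box(x):
    # Hypothetical non-linear model standing in for the black box.
    return x[0] ** 2 + 3.0 * x[1]


def solve(A, b):
    """Solve the linear system A w = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(A)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(n):
            if r != c:
                f = M[r][c] / M[c][c]
                M[r] = [a - f * m for a, m in zip(M[r], M[c])]
    return [M[i][n] / M[i][i] for i in range(n)]


def lime_explain(f, x0, n_samples=500, scale=0.1, width=0.25, seed=0):
    """Steps 2-5 of the recipe for the instance x0 (step 1 = choosing x0).
    Returns [local prediction at x0, local weight of feature 1, ...]."""
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        # Step 2: perturb the instance and query the black box.
        z = [xi + rng.gauss(0.0, scale) for xi in x0]
        targets.append(f(z))
        # Step 3: proximity weight, exponential kernel on the squared distance.
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, x0))
        weights.append(math.exp(-dist2 / width ** 2))
        # Features centred at x0 (leading 1 = intercept); slopes are unchanged.
        rows.append([1.0] + [zi - xi for zi, xi in zip(z, x0)])
    # Step 4: weighted least squares via the normal equations (Z^T W Z) w = Z^T W y.
    k = len(x0) + 1
    A = [[sum(w * r[i] * r[j] for w, r in zip(weights, rows)) for j in range(k)]
         for i in range(k)]
    b = [sum(w * r[i] * t for w, r, t in zip(weights, rows, targets)) for i in range(k)]
    # Step 5: the returned coefficients are the local explanation.
    return solve(A, b)


coef = lime_explain(black_box, [1.0, 2.0])
print([round(c, 2) for c in coef])
```

Near x0 = (1, 2) the black box behaves like a linear function with gradient (2, 3), so the local weights come out close to 2 and 3 even though the global model is non-linear.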

LIME cont’d

Learn an interpretable model (e.g., a linear model) in the vicinity of the given instance.

M. T. Ribeiro, S. Singh, and C. Guestrin. “Why should I trust you?”: Explaining the predictions of any classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 1135–1144. ACM, 2016.

Prototype learning

- A prototype is a data instance that is representative of all the data

- Prototypes can improve the interpretability of complex data distributions (but usually they cannot explain the data on their own)

- Any clustering algorithm that returns actual data points as cluster centers would qualify for selecting prototypes

- Data points in areas with high data density are good prototypes
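k-medoids is one such clustering algorithm: its centres are always actual data points. The sketch below is a minimal alternating version (assign points to the nearest medoid, then recompute each cluster's medoid), with hypothetical 2-D data and a user-supplied initialisation; practical implementations add restarts and smarter initialisation.

```python
def dist2(a, b):
    """Squared Euclidean distance."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def medoid(points):
    """The data point minimising the total squared distance to the others."""
    return min(points, key=lambda p: sum(dist2(p, q) for q in points))


def k_medoids(points, init, n_iter=10):
    """Alternate assignment and medoid update, starting from `init` medoids."""
    medoids = list(init)
    for _ in range(n_iter):
        clusters = [[] for _ in medoids]
        for p in points:
            k = min(range(len(medoids)), key=lambda j: dist2(p, medoids[j]))
            clusters[k].append(p)
        new = [medoid(c) if c else m for c, m in zip(clusters, medoids)]
        if new == medoids:  # converged: assignments no longer change the medoids
            break
        medoids = new
    return medoids


# Hypothetical data: two groups, each with one point in its dense centre.
points = [(0, 0), (0, 1), (1, 0), (0.2, 0.2), (5, 5), (5, 6), (6, 5), (5.2, 5.2)]
print(k_medoids(points, init=[(0, 0), (5, 5)]))  # the central points become prototypes
```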

Prototype learning cont’d

Oscar Li, Hao Liu, Chaofan Chen, Cynthia Rudin. Deep Learning for Case-Based Reasoning through Prototypes: A Neural Network that Explains Its Predictions, AAAI, 2018

Prototype learning cont’d

Two interpretable regularization terms, where $f$ is the encoder into the latent space and $D = \{x_1, \dots, x_n\}$ is the training set:

$$ R_1(p_1, \dots, p_m, D) = \frac{1}{m} \sum_{j=1}^{m} \min_{i \in [1, n]} \|p_j - f(x_i)\|_2^2, \tag{14} $$

$$ R_2(p_1, \dots, p_m, D) = \frac{1}{n} \sum_{i=1}^{n} \min_{j \in [1, m]} \|f(x_i) - p_j\|_2^2 \tag{15} $$

- Minimization of R1 requires each prototype vector to be as close as possible to at least one of the training examples (in the latent space)

- Minimization of R2 requires every (encoded) training example to be as close as possible to one of the prototypes

Oscar Li, Hao Liu, Chaofan Chen, Cynthia Rudin. Deep Learning for Case-Based Reasoning through Prototypes: A Neural Network that Explains Its Predictions, AAAI, 2018
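Following the two bullet descriptions above, both terms are averages of nearest-neighbour squared distances, taken in opposite directions. In this sketch the encoder f is not modelled: the latent codes f(x_i) and the prototypes are given directly as hypothetical 2-D vectors.

```python
def sq_dist(a, b):
    """Squared Euclidean distance ||a - b||_2^2."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def r1(prototypes, encoded):
    """Mean over prototypes of the distance to the closest encoded example."""
    return sum(min(sq_dist(p, fx) for fx in encoded) for p in prototypes) / len(prototypes)


def r2(prototypes, encoded):
    """Mean over encoded examples of the distance to the closest prototype."""
    return sum(min(sq_dist(fx, p) for p in prototypes) for fx in encoded) / len(encoded)


# Hypothetical latent codes f(x_i) and prototypes p_j.
encoded = [(0.0, 0.0), (0.0, 2.0), (4.0, 0.0)]
prototypes = [(0.0, 1.0), (4.0, 0.0)]
print(r1(prototypes, encoded), r2(prototypes, encoded))
```

A prototype lying between two examples (here (0, 1)) is penalised by R1 because it matches no actual example, while R2 only asks that each example has some nearby prototype.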

Sparse Models

$$ \text{Loss function} + \lambda \, \text{Penalty} \tag{16} $$

- The L2 penalty term is used to avoid overfitting

- The L1 penalty term induces sparsity

- Sparse (compact) models are considered to be more interpretable

- Penalty terms built on the L1 norm: group penalties, hierarchical penalties, etc.
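Why L1 induces sparsity while L2 does not can be seen from their proximal operators, the per-weight update used by proximal gradient methods. A minimal sketch: the L1 operator (soft-thresholding) sets small weights exactly to zero, whereas the L2 operator only rescales them.

```python
def soft_threshold(w, lam):
    """Proximal operator of lam * |w| (L1): shrink towards 0, exactly 0 if |w| <= lam."""
    if w > lam:
        return w - lam
    if w < -lam:
        return w + lam
    return 0.0


def ridge_shrink(w, lam):
    """Proximal operator of (lam / 2) * w**2 (L2): rescales, never exactly 0 for w != 0."""
    return w / (1.0 + lam)


weights = [2.0, 0.3, -0.1, -1.5]
print([soft_threshold(w, 0.5) for w in weights])  # [1.5, 0.0, 0.0, -1.0]
print([ridge_shrink(w, 0.5) for w in weights])
```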

Rounding Methods

Input: X, Y
Output: weights associated with each (observed) value in X

function Project(w) = max(−R, min(w, R))

function RandomizedRounding(w, ε):
    b = ε⌈w/ε⌉
    a = ε⌊w/ε⌋
    return b with probability (w − a)/ε, and a with probability 1 − (w − a)/ε
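The two functions above translate directly into Python. In this sketch the bound R and the random generator are passed as parameters (an assumption about how they are supplied). Rounding up with probability (w − a)/ε makes the rounding unbiased: the expected value is a + ε·(w − a)/ε = w.

```python
import math
import random


def project(w, R):
    """Clip w to the interval [-R, R]."""
    return max(-R, min(w, R))


def randomized_rounding(w, eps, rng=random):
    """Round w to a neighbouring multiple of eps:
    up (b) with probability (w - a) / eps, down (a) otherwise."""
    a = eps * math.floor(w / eps)
    b = eps * math.ceil(w / eps)
    return b if rng.random() < (w - a) / eps else a


rng = random.Random(0)
samples = [randomized_rounding(0.3, 1.0, rng) for _ in range(10000)]
print(sum(samples) / len(samples))  # close to 0.3: unbiased in expectation
```

When w is already a multiple of ε, a equals b and the probability of rounding up is 0, so w is returned unchanged.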

The Challenges
Constructing optimal logical models

- A model consisting of “or”, “and”, “if-then” statements, etc.

- Often called rule lists

- Expert systems (1970s)

- Optimisation problem:

$$ \min_{f \in \mathcal{F}} \left( \frac{1}{n} \sum_{i=1}^{n} \mathbb{1}\{\text{training observation } i \text{ is misclassified by } f\} + \lambda \times \text{size}(f) \right) \tag{17} $$

- The parameter λ is the classification error one would sacrifice in order to have one fewer term in the model; if λ is 0.01, we would sacrifice 1% of training accuracy in order to reduce the size of the model by one.

from C. Rudin. Please Stop Explaining Black Box Models for High-Stakes Decisions, 2019
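Objective (17) can be evaluated for any candidate rule list. In this sketch a rule list is a sequence of (condition, label) pairs with a default label, and size(f) is taken to be the number of rules; both choices, and the toy patients, are assumptions for illustration. Finding the optimal list is the hard combinatorial part and is not attempted here.

```python
def predict_rule_list(rules, default, x):
    """Return the label of the first rule whose condition holds, else `default`."""
    for condition, label in rules:
        if condition(x):
            return label
    return default


def rule_list_objective(rules, default, X, y, lam):
    """(17): training misclassification rate + lam * size(f), size = number of rules."""
    errors = sum(1 for xi, yi in zip(X, y) if predict_rule_list(rules, default, xi) != yi)
    return errors / len(X) + lam * len(rules)


# Hypothetical patients: (age, has_hypertension) and a binary outcome.
X = [(70, True), (45, False), (80, False), (30, True), (66, True)]
y = [1, 0, 1, 0, 1]

short = [(lambda x: x[0] >= 65, 1)]              # IF age >= 65 THEN 1 ELSE 0
longer = short + [(lambda x: x[1], 1)]           # ELSE IF hypertension THEN 1
print(rule_list_objective(short, 0, X, y, lam=0.01))
print(rule_list_objective(longer, 0, X, y, lam=0.01))
```

Here the extra rule both misclassifies a patient and costs λ, so the shorter list wins.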

The Challenges cont’d
Construct optimal sparse scoring systems
CHADS2 score to assess stroke risk:

- Often used in medicine (and criminology)

- Optimisation problem (with labels $y_i \in \{-1, +1\}$):

$$ \min_{b_1, b_2, \dots, b_p \in \{-10, -9, \dots, 9, 10\}} \frac{1}{n} \sum_{i=1}^{n} \log\left( 1 + \exp\left( -y_i \sum_{j=1}^{p} b_j x_{ij} \right) \right) + \lambda \sum_{j} \mathbb{1}\{b_j \neq 0\} \tag{18} $$

- The model size is the number of non-zero coefficients, and λ is the trade-off parameter

from C. Rudin. Please Stop Explaining Black Box Models for High-Stakes Decisions, 2018
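For a handful of features, the integer problem (18) can be solved exactly by exhaustive search over the 21^p coefficient vectors, which shows what the optimisation trades off. This is an illustration only, with hypothetical data and labels y_i in {−1, +1}; realistic sizes require integer programming or specialised solvers.

```python
import itertools
import math


def scoring_objective(b, X, y, lam):
    """(18): mean logistic loss with labels in {-1, +1} + lam * number of non-zero b_j."""
    loss = sum(
        math.log1p(math.exp(-yi * sum(bj * xij for bj, xij in zip(b, xi))))
        for xi, yi in zip(X, y)
    ) / len(X)
    return loss + lam * sum(1 for bj in b if bj != 0)


def brute_force(X, y, lam, coef_range=range(-10, 11)):
    """Exhaustive search over integer coefficients; only feasible for very small p."""
    p = len(X[0])
    return min(itertools.product(coef_range, repeat=p),
               key=lambda b: scoring_objective(b, X, y, lam))


# Hypothetical data: feature 1 determines the label, feature 2 is irrelevant.
X = [(1, 0), (1, 1), (-1, 0), (-1, 1)]
y = [1, 1, -1, -1]
print(brute_force(X, y, lam=0.1))
```

The irrelevant second feature gets a zero coefficient because any non-zero value would cost λ = 0.1 without reducing the loss.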

The Challenges cont’d
Define interpretability for specific domains, including computer vision, and create methods accordingly

From Chen et al., 2018: parts of the image are similar to prototypical parts of training examples.

from C. Rudin. Please Stop Explaining Black Box Models for High-Stakes Decisions, 2018