Digital Image Fundamentals

Biomedical Image Analysis

Prof. Dr. Philippe Cattin

MIAC, University of Basel

Feb 22nd, 2016

Feb 22nd, 2016, Biomedical Image Analysis

Contents

Abstract

1 Light and its Interaction with Matter

Light as an Electro-Magnetic Wave

Parameters to Fully Describe a Harmonic Wave

Equivalents in the Human Visual System

The Saharan Ant Cataglyphis

Interaction of Light and Matter

Absorption

Scattering

Refraction

Reflection

2 The Human Eye

2.1 Anatomy of the Human Eye

Anatomy of the Human Eye

Image Formation in the Eye

2.2 Colour Perception

The Electromagnetic Spectrum

The Human Trichromatic Vision

Colour Perception in the Human Eye

Luminosity Function of the Human Eye

Primary and Secondary Colours

Primary and Secondary Colours of Pigments

3 Digital Images

3.1 A Simple Image Model

A Simple Image Model

Basic Nature of f(x,y)Feb 22nd, 2016Biomedical Image Analysis

2 of 75 21.02.2016 22:33

28

29

30

31

32

33

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

52

53

54

56

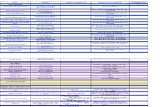

3.2 Sampling and Quantisation

Phenomenological View of Sampling & Quantisation

Sampling

Quantisation

Sampling+Quantisation

Number of Samples and Grey-Levels

Uniform vs. Non-uniform Sampling

3.3 Basic Relationships between Pixels

Motivation

Basic Relationships between Pixels

Neighbourhood

Connectivity

Paradox of the 4-Connectivity

Paradox of the 8-Connectivity

Adjacency

Ambiguity with 8-Adjacency

Summary of Connectivity

Connected Component

Distance Measures

Euclidean Distance

D4 Distance

D8 Distance

Dm Distance

4 Colour and Colour Models

4.1 Motivation

Why Colour?

Why Do We Need Different Colour Models?

Colour Models, Colour Spaces

4.2 RGB Colour Model

RGB Colour Model

RGB Colour Model (2)

4.3 HSV Colour Model

HSV Colour Model

Converting from RGB to HSV

Converting from HSV to RGB

4.4 HSI Colour Model

HSI Colour Model

Converting from RGB to HSI

Converting from HSI to RGB

4.5 YCbCr Colour Model

YCbCr Colour Model

4.6 CMY/CMYK Colour Model

CMY/CMYK Colour Model

4.7 CIE Absolute Colour Spaces

Absolute Colour Spaces

Conversion of Colour Spaces

CIE 1931 Colour Space

CIE xy Chromaticity Diagram

CIELAB Colour Model

5 Fundamental Steps in Image Processing

Fundamental Steps in Image Processing

5.1 Example: Aorta Segmentation

Example

Example: A Naive Approach

Example: Pre-Processing, Enhancement

Example: Basic Feature Extraction

Example: Grouping

Example: Detection of Ascending and Descending Aorta

Example: Detection of Ascending and Descending Aorta (2)


Example: Generation of the Rough Aortic Mesh

Example: Generation of the Rough Aortic Mesh (2)


Abstract

The purpose of this chapter is to introduce several concepts related to digital images and some of the notation used throughout the lecture. Furthermore, it briefly summarises the mechanics of the human visual system and introduces an image model based on the illumination-reflectance phenomenon.

Light and its Interaction with Matter

Light as an Electro-Magnetic Wave

The theory of light and its interaction with matter is known as optics. It can be analysed at three levels of detail:

1. Geometrical Optics: Useful to predict the paths of light that interacts with bodies much larger than its wavelength (no diffraction).

Fig 2.1: Pencil in a bowl of water

2. Physical Optics: Useful to predict the behaviour of light that interacts with bodies whose size is comparable to its wavelength (diffraction, interference, polarisation).

Fig 2.2: Linear grid polariser

3. Quantum-mechanical Optics: Required to explain aspects such as emission, absorption, and near-field scanning optical microscopy (NSOM), ...

Fig 2.3: Near-field scanning optical microscope

Parameters to Fully Describe a Harmonic Wave

Wavelength $\lambda$: The length of one period

Direction: It is perpendicular to both the electric field vector $\vec{E}$ and the magnetic field vector $\vec{B}$

Amplitude: The maximum of the magnitude $|\vec{E}|$; its square is proportional to the intensity of the wave, which is perceived as brightness

Phase $\varphi$

Polarisation: When the orientation of $\vec{E}$ remains fixed, the light is linearly polarised at a fixed angle.

Fig 2.4: Light wave

The amplitude of the magnetic field is not missing from this list; it is connected to the electric field by a simple relation that depends on the medium:

$|\vec{B}| = \sqrt{\mu\varepsilon}\,|\vec{E}|$

where $\mu$ is the magnetic permeability and $\varepsilon$ the electric permittivity of the medium.

Equivalents in the Human Visual System

Three of these parameters have a bearing on the way the wave is perceived by the human visual system:

Wavelength → related to the observed colour

Direction → determines the viewpoints from which the wave can be observed

Amplitude → brightness/intensity of the wave

Phase and direction of polarisation are not perceived by the human visual system. Yet many insects, fish, and amphibians are sensitive to polarisation and use it for orientation.

The Saharan Ant Cataglyphis

Many insects are capable of complex and robust navigation behaviour. The desert ant Cataglyphis, for example, explores the surroundings of its nest for up to a few hundred metres and returns to its nest precisely and on a straight line. This is an amazing task for an animal with such a small body and a brain of less than one cubic millimetre.

To achieve this, ants as well as bees use the varying degree of polarisation of the sky as their compass.

Fig. 2.6 shows the high degree of linear polarisation of the sky away from the sun.

Fig 2.5: Saharan Cataglyphis ant

Fig 2.6: Wide-angle image taken with a linear polariser.

Interaction of Light and Matter

Through its interaction with matter, light might change its direction, intensity, polarisation, and sometimes even its wavelength. Four basic types of interaction will be discussed:

1. Absorption
2. Scattering
3. Refraction
4. Reflection

Diffraction and fluorescence are not discussed in this lecture.

Absorption

Definition:

Absorption is the process by which the energy of a photon is taken up by another entity, for example, by an atom whose valence electrons make a transition between two electronic energy levels.

The absorbed photon is destroyed in the process

The absorbed energy may be re-emitted or converted into heat

The amount of absorption varies with the wavelength of the light → leading to colour in pigments and to spectral lines

The spectral lines are characteristic of the material

Scattering

Definition:

Scattering is a general physical process whereby some form of radiation, such as light, sound, or moving particles, is forced to deviate from a straight trajectory by one or more localised non-uniformities in the medium through which it passes.

Scattering of sunlight by the atmosphere is the reason why the sky is blue: short (blue) wavelengths are scattered much more strongly than long (red) ones (Rayleigh scattering, with scattered intensity $\propto \lambda^{-4}$; cf. the Tyndall effect).

Refraction

Definition:

Refraction is the change in direction of a wave due to a change in its velocity.

Refraction occurs when a wave travels from a medium with a given refractive index (speed of light) to a medium with a different refractive index (different speed of light)

Snell's Law (Willebrord Snell, 1580–1626): $n_1 \sin\theta_1 = n_2 \sin\theta_2$

If the light travels from the optically denser medium to the less dense medium, total internal reflection may occur

The following optical phenomena can be explained by refraction: rainbows, mirages, and the Fata Morgana

Fig 2.7: Refraction

Fig 2.8: Pencil in a bowl
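A minimal sketch of Snell's law $n_1 \sin\theta_1 = n_2 \sin\theta_2$, assuming angles in degrees measured from the surface normal. The function names `refraction_angle` and `critical_angle` are illustrative, and the refractive indices (air about 1.00, water about 1.33) are approximate textbook values. When $n_1 \sin\theta_1 / n_2$ exceeds 1, no refracted ray exists and the light is totally internally reflected; the limiting incidence angle is the critical angle $\theta_c = \arcsin(n_2/n_1)$.

```python
import math


def refraction_angle(theta1_deg, n1, n2):
    """Angle of the refracted ray in degrees (Snell's law).

    Returns None if no refracted ray exists (total internal reflection).
    """
    s = n1 * math.sin(math.radians(theta1_deg)) / n2
    if s > 1.0:
        return None  # n1 sin(theta1) / n2 > 1 has no real solution
    return math.degrees(math.asin(s))


def critical_angle(n1, n2):
    """Critical angle in degrees for light travelling from medium n1 into n2."""
    if n1 <= n2:
        raise ValueError("total internal reflection requires n1 > n2")
    return math.degrees(math.asin(n2 / n1))


# Approximate refractive indices: air ~1.00, water ~1.33
print(refraction_angle(45.0, 1.00, 1.33))  # bent towards the normal (< 45 degrees)
print(critical_angle(1.33, 1.00))          # roughly 48.8 degrees
print(refraction_angle(60.0, 1.33, 1.00))  # None: beyond the critical angle
```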


Reflection

Definition:

Reflection is the change in direction of a wave front at an interface between two dissimilar media so that the wave front returns into the medium from which it originated.

Specular (mirror-like) reflection

According to the law of reflection, the angle of incidence $\theta_i$ is equal to the angle of reflection $\theta_r$, thus $\theta_i = \theta_r$

Light is reflected whenever it hits the boundary of two media with different refractive indices. In fact, a certain fraction of the light is reflected and the remainder is refracted

Total internal reflection only happens if light travels from a denser medium to a less dense medium ($n_1 > n_2$) and the angle of incidence is above the critical angle $\theta_c = \arcsin(n_2/n_1)$

Fig 2.9: Total reflection

Fig 2.10: Diffuse reflection

The Human Eye

Anatomy of the Human Eye

Anatomy of the Human Eye

1. The vertebrate eye uses a convex lens and a layer of photoreceptors at the back of a spherically shaped cavity, see Fig 2.11.

2. The cornea, iris, lens, and retina are the major components:

Cornea: Protective transparent tissue

Iris: Diaphragm that regulates the amount of light entering the eye

Lens: Absorbs ultraviolet/infrared light and focuses the visible light

Retina: Layer of photoreceptors (rods, cones) that covers the inner surface at the back of the eye

Fig 2.11 The human eye

Image Formation in the Eye

The lens focuses distant and close objects onto the retinal surface by changing its shape

The iris regulates the amount of light that passes to the retina → this also influences the depth of field

Due to chromatic aberration, the human eye cannot properly focus on red and blue at the same time

The rods and cones in the photosensitive layer then translate the light into electrical impulses that are transmitted to the brain through the optic nerve.

Fig 2.12 Focusing mechanism

Fig 2.13 Two-eye stereo vision

Colour Perception

The Electromagnetic Spectrum

What is Colour?

The Human Trichromatic Vision

Each human eye has several million cones, most of them in the central portion of the retina, the fovea. There are three types of cones, each sensitive in a different wavelength band, see Fig 2.14:

blue (short wavelengths),

green (medium wavelengths),

red (long wavelengths)

In addition, a human eye has a much larger number of rods, distributed over the retina, that are most sensitive to blue-green light and are used for night vision.

As the irradiance threshold is much lower for the rods than for the cones, only the rods respond in moonlit scenes; since all rods share the same spectral sensitivity, we do not perceive colour in such scenes.

Fig 2.14 Spectral sensitivity of the cones and rods

Fig 2.15 Retina image with the optic nerve and fovea

Colour Perception in the Human Eye

Monochromatic (spectral) Colours

Every base colour causes a certain activity in all three types of cones

We see the spectral colour (wavelength) that would cause the same activity in the cones

Although men cannot see a difference, certain animals (and some women) with tetrachromacy can see it

Mixed Colours

Luminosity Function of the Human Eye

Fig 2.16 Luminosity function of the human eye

The eye is most sensitive in the green portion of the spectrum → green laser pointers appear brighter than red laser pointers of the same power

The average colour sensitivity of the female eye differs from that of the male eye.

Women can differentiate high-frequency colours (short wavelengths) better than men

Primary and Secondary Colours

Additive primaries - mixture of light

Fig 2.17 Additive colour mixing

The primary colours are red, green, and blue

The secondary colours are yellow, cyan, and magenta

Primary colours are not a physical but rather a biological concept

The primary colours are chosen such that they provide a wide gamut

The primary colours span a space of colours

Primary and Secondary Colours of Pigments

Subtractive primaries - mixture of pigments

Fig 2.18 Subtractive colour mixing

The subtractive primaries are the equivalent concept for mixtures of pigments, such as those used in printers

The primary colours of pigments are yellow, cyan, and magenta

The secondary colours of pigments are red, green, and blue

Digital Images

A Simple Image Model

A Simple Image Model

The term image refers to a 2D light-intensity function denoted by $f(x,y)$, where the value or amplitude of $f$ at spatial coordinates $(x,y)$ gives the intensity (brightness) of the image at that point.

Basic Nature of f(x,y)

The basic nature of $f(x,y)$ can be characterised by two components:

1. The amount of source light incident on the scene being viewed (illumination) is

$0 < i(x,y) < \infty$ (2.1)

2. The amount of light reflected by the objects in the scene (reflectance) is

$0 < r(x,y) < 1$ (2.2)

Total absorption ($r = 0$) and total reflectance ($r = 1$) are never achieved.

The functions $i(x,y)$ and $r(x,y)$ combine as a product

$f(x,y) = i(x,y)\, r(x,y)$ (2.3)

and since light is a form of energy

$0 < f(x,y) < \infty$ (2.4)

holds.
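The product model of Eq. (2.3), $f(x,y) = i(x,y)\,r(x,y)$, can be illustrated with a small synthetic example. This is a sketch with made-up values: the illumination is a hypothetical left-to-right gradient, and the reflectance a hypothetical bright object (0.8) on a dark background (0.1).

```python
import numpy as np

h, w = 4, 6
x = np.linspace(0.0, 1.0, w)

# Hypothetical illumination i(x, y): a smooth left-to-right gradient in [100, 1000]
illumination = np.tile(100.0 + 900.0 * x, (h, 1))

# Hypothetical reflectance r(x, y): dark background (0.1) with a bright object (0.8)
reflectance = np.full((h, w), 0.1)
reflectance[1:3, 2:4] = 0.8

# Eq. (2.3): the image is the pixel-wise product of illumination and reflectance
f = illumination * reflectance

# The bounds of Eqs. (2.1), (2.2) and (2.4) hold for this example
assert np.all(illumination > 0)
assert np.all((reflectance > 0) & (reflectance < 1))
assert np.all(f > 0)
print(f.round(1))
```

The same background reflectance yields grey values ten times larger on the brightly lit right side than on the left, which is why illumination effects often have to be compensated before objects can be compared by their grey values.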

Sampling and Quantisation

Phenomenological View of Sampling & Quantisation

In order for a computer to process an image, it has to be described as a series of numbers, each of finite precision. The digitisation of $f(x,y)$ is called:

1. Image sampling when it refers to the spatial coordinates $(x,y)$ and
2. Quantisation when it refers to the amplitude of $f$.

The images are thus only sampled at a discrete number of locations with a discrete set of brightness levels.

A more thorough account of sampling and quantisation will be given in a separate chapter.
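A minimal sketch of the two digitisation steps for a 1D profile, assuming NumPy: sampling evaluates the continuous function at `num_samples` equally spaced positions, and quantisation maps each amplitude linearly onto one of `num_levels` integer grey-levels. The function and parameter names are illustrative, and the sine profile merely stands in for a continuous signal such as a height profile.

```python
import numpy as np


def sample_and_quantise(func, length, num_samples, num_levels, f_min, f_max):
    """Sample a continuous 1D profile and quantise its amplitudes.

    func:        continuous profile f(x) on [0, length]
    num_samples: number of sampling positions (spatial digitisation)
    num_levels:  number of grey-levels (amplitude digitisation)
    f_min/f_max: amplitude range mapped onto levels 0 .. num_levels - 1
    """
    xs = np.linspace(0.0, length, num_samples)        # sampling
    values = func(xs)
    scaled = (values - f_min) / (f_max - f_min) * (num_levels - 1)
    levels = np.clip(np.round(scaled), 0, num_levels - 1).astype(int)  # quantisation
    return xs, levels


def profile(x):
    """Hypothetical smooth profile standing in for a continuous signal."""
    return np.sin(x)


xs, q = sample_and_quantise(profile, np.pi, 9, 4, -1.0, 1.0)
print(q)  # 9 integer grey-levels in the range 0..3
```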


Sampling

Fig 2.19 Height profile of Switzerland

Fig 2.20 16:1 subsampled height profile

Quantisation

Fig 2.21 Height profile of Switzerland

Fig 2.22 Height profile along the red line

Fig 2.23 Quantised height profile of the red line

Sampling+Quantisation

The digitisation process requires decisions about:

The size of the image array, i.e. the number of rows $N$ and columns $M$, and

The number of discrete grey-levels $G$ allowed for each pixel

$f(x,y) \approx \begin{bmatrix} f(0,0) & f(0,1) & \cdots & f(0,M-1) \\ f(1,0) & f(1,1) & \cdots & f(1,M-1) \\ \vdots & \vdots & & \vdots \\ f(N-1,0) & f(N-1,1) & \cdots & f(N-1,M-1) \end{bmatrix}$ (2.5)

In digital image processing, these quantities are usually powers of two, thus

$N = 2^n, \quad M = 2^k, \quad G = 2^m$ (2.6)

Number of Samples and Grey-Levels

How many samples and grey-levels are required for a good approximation?

The resolution (degree of discernible detail) of an image depends on the number of samples and grey-levels, i.e. the larger these parameters, the closer the digitised array approximates the original image

But the storage requirements and processing time increase rapidly
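The growth in storage can be made concrete with a small calculation. Assuming an uncompressed $N \times M$ image with $m$ bits per pixel (i.e. $2^m$ grey-levels), the number of bits required is $b = N \cdot M \cdot m$; the helper `storage_bits` below is a hypothetical illustration of that formula.

```python
def storage_bits(n_rows, n_cols, bits_per_pixel):
    """Bits b = N * M * m for an uncompressed N x M image with m bits per pixel."""
    return n_rows * n_cols * bits_per_pixel


for n in (128, 256, 512, 1024):
    for m in (1, 8):
        b = storage_bits(n, n, m)
        print(f"{n:4d} x {n:<4d} {2 ** m:3d} grey-levels: {b:>9d} bits = {b // 8:>7d} bytes")
```

Doubling the number of samples along both axes quadruples the storage, whereas doubling the number of grey-levels only adds one bit per pixel.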

Uniform vs. Non-uniform Sampling

Figure panels: Cartesian sampling, hexagonal sampling, non-uniform sampling

Non-uniform sampling can be advantageous if the sampling process is adapted to the image content, i.e. fine sampling close to sharp boundaries and coarse sampling in smooth regions

Problem: the picture elements (pixels) no longer have equal areas

Basic Relationships between Pixels

Motivation

Quantisation does not automatically imply a spatial structure → it has to be defined!

Basic Relationships between Pixels

Definitions

Digital image: $f(x,y)$

Pixels: $p, q, \dots$

Subset of pixels of the image: $S$

Quantisation alone does not imply a spatial structure → it must be defined

Important aspects

Topology

Metric (distances)

Neighbourhood ←→ Metric

Neighbourhood on the grid

2D → 4-, 8- or mixed-neighbourhood

3D → 6-, 18-, 26-neighbourhood


Neighbourhood

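4-Neighbours

A pixel $p$ at spatial position $(x,y)$ has 4 neighbours: $(x+1,y)$, $(x-1,y)$, $(x,y+1)$, $(x,y-1)$

This set of pixels is called the 4-neighbourhood of $p$: $N_4(p)$

Diagonal Neighbours

The four diagonal neighbours of $p$ are: $N_D(p) = \{(x+1,y+1), (x+1,y-1), (x-1,y+1), (x-1,y-1)\}$

8-Neighbourhood

$N_8(p) = N_4(p) \cup N_D(p)$

Figure panels: 4-neighbourhood, d-neighbourhood, 8-neighbourhood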
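The neighbourhood definitions translate directly into set operations. The sketch below assumes pixels given as integer coordinate pairs `(x, y)` and, for brevity, ignores the image border; in a real image, neighbours that fall outside the image must be discarded. The function names are illustrative.

```python
def n4(p):
    """4-neighbourhood N4(p) of the pixel p = (x, y)."""
    x, y = p
    return {(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)}


def nd(p):
    """Diagonal neighbours ND(p) of the pixel p = (x, y)."""
    x, y = p
    return {(x + 1, y + 1), (x + 1, y - 1), (x - 1, y + 1), (x - 1, y - 1)}


def n8(p):
    """8-neighbourhood N8(p) = N4(p) union ND(p)."""
    return n4(p) | nd(p)


p = (2, 2)
print(sorted(n4(p)))
print(sorted(nd(p)))
print(len(n8(p)))  # 8
```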


Connectivity

Connectivity between pixels is important to:

1. Establish boundaries around objects
2. Extract connected components in an image

Two pixels p, q are connected if

1. They are neighbours, e.g. $q \in N_4(p)$, and
2. Their grey values satisfy a specified criterion of similarity, e.g. in a binary image they have the same value of either 0 or 1

Fig 2.24: The pixels p, q are not connected under 4-connectivity, but they are under 8-connectivity

Let $V$ be the set of grey-level values used to define connectivity; for example $V = \{1\}$ in a binary image or a range of grey values in a grey-scale image. We can define two types of connectivity:

1. 4-connectivity: two pixels $p$ and $q$ with values from $V$ are 4-connected if $q$ is in $N_4(p)$
2. 8-connectivity: two pixels $p$ and $q$ with values from $V$ are 8-connected if $q$ is in $N_8(p)$
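A minimal sketch of the two connectivity tests, assuming a NumPy array indexed as `img[row, col]` (so pixel coordinates are `(row, col)` rather than `(x, y)`) and a set `V` of admissible grey values. The function names are illustrative; the test checks exactly the two conditions above, neighbourhood and similarity.

```python
import numpy as np


def neighbours(p, kind):
    """4- or 8-neighbours of the pixel p = (row, col)."""
    r, c = p
    n4 = {(r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)}
    if kind == 4:
        return n4
    nd = {(r + 1, c + 1), (r + 1, c - 1), (r - 1, c + 1), (r - 1, c - 1)}
    return n4 | nd


def connected(img, p, q, V, kind):
    """p and q are kind-connected if both values lie in V and q is a kind-neighbour of p."""
    return bool(img[p] in V and img[q] in V and q in neighbours(p, kind))


img = np.array([[1, 0],
                [0, 1]])
p, q = (0, 0), (1, 1)
print(connected(img, p, q, {1}, 4))  # False: q is only a diagonal neighbour
print(connected(img, p, q, {1}, 8))  # True
```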


Paradox of the 4-Connectivity

Problem

The black pixels on the diagonal in Fig 2.25 are not 4-connected. However, they perfectly separate the two sets of white pixels (which are not 4-connected to each other either).

This creates undesirable topological anomalies: a set of pixels that is not connected nevertheless divides the image into two parts.

Solution

Use the 8-connectivity

Fig 2.25: 4-connectivity paradox

Paradox of the 8-Connectivity

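Problem

However, a similar paradox exists with 8-connectivity: a closed curve of foreground pixels no longer separates its inside from its outside, because background pixels can also be connected diagonally across the curve.

Figure panels: (a) binary image, (b) 4-connectivity, (c) 8-connectivity, (d) 8-connectivity with background, (e) foreground with 8-, background with 4-connectivity

Solution

Jordan Theorem: a (discrete) simple closed curve separates the image into an inside and an outside if different connectivities are used for foreground and background, e.g.

Foreground 8-neighbourhood + Background 4-neighbourhood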
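The paradoxes can be reproduced by counting connected components. The sketch below (NumPy plus a breadth-first search; the helper name `count_components` is illustrative) uses a small diamond-shaped closed curve whose pixels touch only diagonally. With 8-connectivity for the foreground, the curve forms a single component; the background then splits into an inside and an outside only if it uses 4-connectivity.

```python
from collections import deque

import numpy as np


def count_components(mask, kind):
    """Count connected components of the True pixels in mask (4- or 8-connectivity)."""
    steps = [(1, 0), (-1, 0), (0, 1), (0, -1)]
    if kind == 8:
        steps += [(1, 1), (1, -1), (-1, 1), (-1, -1)]
    seen = np.zeros(mask.shape, dtype=bool)
    count = 0
    for start in zip(*np.nonzero(mask)):
        if seen[start]:
            continue
        count += 1                       # unvisited pixel: a new component starts here
        seen[start] = True
        queue = deque([start])
        while queue:
            r, c = queue.popleft()
            for dr, dc in steps:
                nr, nc = r + dr, c + dc
                if (0 <= nr < mask.shape[0] and 0 <= nc < mask.shape[1]
                        and mask[nr, nc] and not seen[nr, nc]):
                    seen[nr, nc] = True
                    queue.append((nr, nc))
    return count


# A diamond-shaped closed curve whose pixels touch only diagonally
img = np.array([[0, 0, 0, 0, 0],
                [0, 0, 1, 0, 0],
                [0, 1, 0, 1, 0],
                [0, 0, 1, 0, 0],
                [0, 0, 0, 0, 0]], dtype=bool)
fg, bg = img, ~img
print("foreground 8 / background 4:", count_components(fg, 8), count_components(bg, 4))
print("foreground 8 / background 8:", count_components(fg, 8), count_components(bg, 8))
```

With 8-connectivity for both, the inside leaks diagonally through the curve and the background forms a single component, which is the 8-connectivity paradox; with 4-connectivity for the foreground, the curve itself falls apart into four pieces.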


Adjacency

Similar to connectivity, we can define two types of adjacency that connect pixels over several hops:

4-adjacency

8-adjacency

A pixel $p$ is adjacent to a pixel $q$ if there exists a sequence (path/curve) $p = p_0, p_1, \dots, p_n = q$ of length $n$ where $p_i$ is connected to $p_{i-1}$ for all $1 \le i \le n$.

Fig 2.26: Given a binary image with the pixels p, q. The pixels p, q are adjacent under 8-adjacency but not under 4-adjacency

In other words, adjacency is the transitive extension of connectivity
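Adjacency in this sense can be tested with a breadth-first search that only steps between connected pixels. A minimal sketch, assuming a NumPy array indexed as `img[row, col]` and a set `V` of admissible values; `adjacent` is an illustrative name. The example image contains a staircase of foreground pixels that is linked only through diagonal steps.

```python
from collections import deque

import numpy as np


def adjacent(img, p, q, V, kind):
    """True if a path p = p0, ..., pn = q exists whose consecutive pixels are
    kind-connected (4 or 8) and all have values in V."""
    steps = [(1, 0), (-1, 0), (0, 1), (0, -1)]
    if kind == 8:
        steps += [(1, 1), (1, -1), (-1, 1), (-1, -1)]
    if img[p] not in V or img[q] not in V:
        return False
    seen = {p}
    queue = deque([p])
    while queue:
        cur = queue.popleft()
        if cur == q:
            return True
        for dr, dc in steps:
            nxt = (cur[0] + dr, cur[1] + dc)
            if (0 <= nxt[0] < img.shape[0] and 0 <= nxt[1] < img.shape[1]
                    and nxt not in seen and img[nxt] in V):
                seen.add(nxt)
                queue.append(nxt)
    return False


img = np.array([[1, 1, 0, 0],
                [0, 0, 1, 0],
                [0, 0, 0, 1]])
print(adjacent(img, (0, 0), (2, 3), {1}, 4))  # False
print(adjacent(img, (0, 0), (2, 3), {1}, 8))  # True
```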


Ambiguity with 8-Adjacency

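Example

Adjacency in a binary image with $V = \{1\}$ and 8-connectivity → ambiguity in the path connections: two pixels can be joined by more than one path

Solution

m-connectivity (mixed connectivity): Two pixels $p$ and $q$ with values from $V$ are m-connected if

$q$ is in $N_4(p)$, or

$q$ is in $N_D(p)$ and the set $N_4(p) \cap N_4(q)$ contains no pixels with values from $V$

Fig 2.27: 8-connectivity

Fig 2.28: m-connectivity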
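A direct transcription of the m-connectivity rule, assuming `img[row, col]` indexing and a set `V`; the helper names are illustrative. In the example image, 8-connectivity would offer two routes from the top-right pixel to the centre (directly, or via the top-middle pixel); m-connectivity keeps only the route through the 4-neighbour.

```python
import numpy as np


def in_image(img, p):
    """True if the coordinate pair p lies inside the image."""
    return 0 <= p[0] < img.shape[0] and 0 <= p[1] < img.shape[1]


def n4(p):
    r, c = p
    return {(r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)}


def nd(p):
    r, c = p
    return {(r + 1, c + 1), (r + 1, c - 1), (r - 1, c + 1), (r - 1, c - 1)}


def m_connected(img, p, q, V):
    """q in N4(p), or q in ND(p) and N4(p) & N4(q) contains no pixel with a value in V."""
    if img[p] not in V or img[q] not in V:
        return False
    if q in n4(p):
        return True
    if q in nd(p):
        shared = {s for s in n4(p) & n4(q) if in_image(img, s)}
        return all(img[s] not in V for s in shared)
    return False


img = np.array([[0, 1, 1],
                [0, 1, 0],
                [0, 0, 1]])
V = {1}
print(m_connected(img, (0, 1), (1, 1), V))  # True: 4-neighbours
print(m_connected(img, (0, 2), (1, 1), V))  # False: already linked via (0, 1)
print(m_connected(img, (1, 1), (2, 2), V))  # True: no shared 4-neighbour in V
```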


Summary of Connectivity

Three types of connectivity can thus be defined:

4-connectivity

8-connectivity

m-connectivity


Connected Component

These connectivity definitions allow us to extract connected components from an image, thus

For any pixel $p$ in $S$, the set of pixels in $S$ that are connected to $p$ by a path in $S$ is a connected component of $S$

If $S$ has only one connected component, then $S$ is called a connected set

The concept of assigning labels to disjoint connected components of an image is of fundamental importance in automated image analysis.

Fig 2.29: The sample binary image has two disjoint connected components
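Connected component labelling can be sketched as a flood fill: scan the image and, whenever an unlabelled foreground pixel is found, assign a new label to every pixel reachable from it. The sketch assumes a boolean NumPy array and either 4- or 8-connectivity; `label_components` is an illustrative name, not a library function.

```python
from collections import deque

import numpy as np


def label_components(binary, kind=8):
    """Label the connected components of the foreground (True) pixels.

    Returns (labels, count): labels is 0 for background and 1..count for the components.
    """
    steps = [(1, 0), (-1, 0), (0, 1), (0, -1)]
    if kind == 8:
        steps += [(1, 1), (1, -1), (-1, 1), (-1, -1)]
    rows, cols = binary.shape
    labels = np.zeros((rows, cols), dtype=int)
    count = 0
    for r in range(rows):
        for c in range(cols):
            if binary[r, c] and labels[r, c] == 0:
                count += 1                      # new component: flood-fill it
                labels[r, c] = count
                queue = deque([(r, c)])
                while queue:
                    cr, cc = queue.popleft()
                    for dr, dc in steps:
                        nr, nc = cr + dr, cc + dc
                        if (0 <= nr < rows and 0 <= nc < cols
                                and binary[nr, nc] and labels[nr, nc] == 0):
                            labels[nr, nc] = count
                            queue.append((nr, nc))
    return labels, count


img = np.array([[1, 1, 0, 0, 0],
                [0, 1, 0, 0, 1],
                [0, 0, 0, 1, 1],
                [0, 0, 1, 0, 0]], dtype=bool)
labels, n = label_components(img, kind=8)
print(n)  # 2
print(labels)
```

In practice, a library routine such as `scipy.ndimage.label` performs the same task; its default structuring element corresponds to 4-connectivity in 2D.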


Distance Measures

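For pixels $p$, $q$, and $z$, with coordinates $(x,y)$, $(s,t)$, and $(v,w)$ respectively, $D$ is a distance function or metric if

1. $D(p,q) \ge 0$ ($D(p,q) = 0$ if and only if $p = q$),
2. $D(p,q) = D(q,p)$, and
3. $D(p,z) \le D(p,q) + D(q,z)$

The following distance measures will be considered:

Euclidean distance

$D_4$ distance (city-block distance)

$D_8$ distance (chessboard distance)

$D_m$ distance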


Euclidean Distance

The Euclidean distance between $p$ and $q$ is defined as

$D_e(p,q) = \sqrt{(x-s)^2 + (y-t)^2}$ (2.7)

Pixels having a distance less than or equal to $r$ from $(x,y)$ are contained in a disc of radius $r$ centred at $(x,y)$.

Fig 2.30: Euclidean distance


D4 Distance

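The $D_4$ distance or city-block distance

$D_4(p,q) = |x-s| + |y-t|$

forms a diamond centred at $(x,y)$; the pixels with $D_4 = 1$ are the 4-neighbours of $(x,y)$

Fig 2.31: Pixels within a given $D_4$ distance from $(x,y)$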


D8 Distance

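The $D_8$ distance or chessboard distance

$D_8(p,q) = \max(|x-s|, |y-t|)$

forms a square centred at $(x,y)$

The pixels with $D_8 = 1$ are the 8-neighbours of $(x,y)$

Fig 2.32: Pixels within a given $D_8$ distance from $(x,y)$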
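The three coordinate-based distances can be written in a few lines. A sketch assuming pixels given as coordinate pairs, with $D_e = \sqrt{(x-s)^2+(y-t)^2}$, $D_4 = |x-s|+|y-t|$ and $D_8 = \max(|x-s|,|y-t|)$; the function names are illustrative.

```python
import math


def d_euclid(p, q):
    """Euclidean distance De between p = (x, y) and q = (s, t)."""
    (x, y), (s, t) = p, q
    return math.sqrt((x - s) ** 2 + (y - t) ** 2)


def d4(p, q):
    """City-block distance D4 = |x - s| + |y - t|."""
    (x, y), (s, t) = p, q
    return abs(x - s) + abs(y - t)


def d8(p, q):
    """Chessboard distance D8 = max(|x - s|, |y - t|)."""
    (x, y), (s, t) = p, q
    return max(abs(x - s), abs(y - t))


print(d_euclid((0, 0), (3, 4)), d4((0, 0), (3, 4)), d8((0, 0), (3, 4)))  # 5.0 7 4

# D4 to the centre of a 5x5 grid: the values <= 2 form a diamond
centre = (2, 2)
for y in range(5):
    print(" ".join(str(d4((x, y), centre)) for x in range(5)))
```

For any pair of pixels, $D_8 \le D_e \le D_4$ holds, so the disc of the Euclidean distance lies between the diamond of $D_4$ and the square of $D_8$.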


Dm Distance

The $D_m$ distance or mixed distance is derived from the m-connectivity (mixed connectivity): it is the length of the shortest m-path between the points

The distances $D_4$ and $D_8$ between the points are independent of any paths that might exist between these points, because these distances involve only the coordinates of the points

The mixed distance $D_m$, in contrast, depends on the values of the pixels along the path and those of their neighbours, as it relies on m-connectivity
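Unlike $D_4$ and $D_8$, the $D_m$ distance needs the pixel values, because it is the length of the shortest m-path. A minimal sketch using a breadth-first search over m-connected neighbours, assuming `img[row, col]` indexing and a set `V`; the function names are illustrative. The example places pixels $p$, $p_1$, $p_2$, $p_3$, $p_4$ in a 3×3 grid (a configuration used in several textbooks), with $p$, $p_2$, $p_4$ set to 1 and $p_1$, $p_3$ varied.

```python
from collections import deque

import numpy as np


def _n4(p):
    r, c = p
    return {(r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)}


def _nd(p):
    r, c = p
    return {(r + 1, c + 1), (r + 1, c - 1), (r - 1, c + 1), (r - 1, c - 1)}


def dm_distance(img, p, q, V):
    """Length of the shortest m-path from p to q, or None if no m-path exists."""
    rows, cols = img.shape

    def in_v(s):
        return 0 <= s[0] < rows and 0 <= s[1] < cols and img[s] in V

    def m_neighbours(a):
        for b in _n4(a):
            if in_v(b):
                yield b
        for b in _nd(a):
            # diagonal step only if no shared 4-neighbour has a value in V
            if in_v(b) and not any(in_v(s) for s in _n4(a) & _n4(b)):
                yield b

    if not (in_v(p) and in_v(q)):
        return None
    dist = {p: 0}
    queue = deque([p])
    while queue:
        a = queue.popleft()
        if a == q:
            return dist[a]
        for b in m_neighbours(a):
            if b not in dist:
                dist[b] = dist[a] + 1
                queue.append(b)
    return None


def example(p1, p3):
    """3x3 layout: 0 p3 p4 / p1 p2 0 / p 0 0, with p = p2 = p4 = 1."""
    return np.array([[0, p3, 1],
                     [p1, 1, 0],
                     [1, 0, 0]])


p, p4 = (2, 0), (0, 2)
for p1, p3 in [(0, 0), (1, 0), (1, 1)]:
    print(p1, p3, dm_distance(example(p1, p3), p, p4, {1}))  # 2, 3, 4
```

The $D_m$ distance between $p$ and $p_4$ grows from 2 to 3 to 4 as $p_1$ and $p_3$ are switched on, while $D_4 = 4$ and $D_8 = 2$ stay fixed.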

Example:

Colour and Colour Models

Motivation

Why Colour?

The use of colour in image processing is mainly motivated by two principal factors:

1. Colour is a powerful descriptor that often simplifies object identification and extraction from a scene
2. The human eye can discern thousands of colour shades and intensities, compared to only about two dozen shades of grey

Fig 2.33 (a) Original retina fundus image, (b) red component, (c) green component, and (d) blue component

Why Do We Need Different Colour Models?

The purpose of a colour model is to facilitate further processing. Depending on the application, different colour models are suitable. Figs 2.34 and 2.35 illustrate that the hue channel seems ideal for segmenting the flower.

Figure panels: original image, red channel, green channel, blue channel

Fig 2.34: Colour channels of the RGB colour model

Figure panels: hue channel, saturation channel, intensity channel

Fig 2.35: Channels of the HSI colour model

Colour Models, Colour Spaces

Relative Colour Models

RGB: Broadly used in digital cameras

HSV: Used by artists (GIMP, ...)

HSI: Similar to HSV

YIQ: Used in NTSC colour TV broadcasts

YCbCr: Digital video (MPEG, JPEG)

CMY & CMYK: Printers

Absolute Colour Models

CIE L*a*b*

sRGB

Adobe RGB

RGB Colour Model

RGB Colour Model

An RGB colour image is an array of colour pixels. Each pixel is a triplet corresponding to the red, green, and blue component.

The number of bits used to represent the pixel values of the component images determines the bit depth of an RGB image and is usually:

8 bits per component (24-bit image), resulting in a total of $2^{24} = 16\,777\,216$ colours

16 bits per component (48-bit image), resulting in a total of $2^{48}$ colours

If all component images are identical, the result is a grey-scale image.

The RGB colour space is usually shown graphically as a colour cube, see Fig 2.37.

Fig 2.36: RGB image

Fig 2.37: RGB colour cube

The vertices are the primary and secondary colours of light plus black and white.

RGB Colour Model (2)

The best example of the usefulness of the RGB model is in the processing of multispectral image data.

Images are obtained by sensors operating in different spectral ranges

The retina is often imaged at different wavelengths.

Each image plane has a physical meaning

Suppose, though, that the task is to enhance a human face partly hidden in shadow. As histogram equalisation applied to each component independently alters the three images differently, the resulting flesh tones will not appear natural. A colour model that separates intensity from colour, so that only the intensity is equalised, helps here.

HSV Colour Model

HSV Colour Model

The HSV (Hue, Saturation, Value) colour model, also known as HSB (Hue, Saturation, Brightness), is considerably closer than the RGB system to the way in which humans experience and describe colour sensations. It defines the colour space in terms of three components:

Hue: defines the pure colour and ranges from 0° to 360°

Saturation: is the vibrancy, or a measure of the degree to which a pure colour is diluted by white light. It ranges from 0 to 1

Value: the brightness of the colour in the range of 0 to 1

The HSV colour model is formulated by looking at the RGB colour cube along its grey axis, which results in a hexagonally shaped colour palette, Fig 2.38

Fig 2.38: HSV colour hexagon

Fig 2.39: HSV hexagonal cone

Converting from RGB to HSV

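Given the RGB values with $R$, $G$, and $B$ normalised to $[0,1]$, $MAX = \max(R,G,B)$, and $MIN = \min(R,G,B)$, the HSV values can be determined with the following rules:

1. $H = \begin{cases} 0 & \text{if } MAX = MIN \\ 60^\circ \cdot \left(0 + \frac{G-B}{MAX-MIN}\right) & \text{if } MAX = R \\ 60^\circ \cdot \left(2 + \frac{B-R}{MAX-MIN}\right) & \text{if } MAX = G \\ 60^\circ \cdot \left(4 + \frac{R-G}{MAX-MIN}\right) & \text{if } MAX = B \end{cases}$

2. If $H < 0^\circ$, then $H = H + 360^\circ$

3. $S = \begin{cases} 0 & \text{if } MAX = 0 \\ \frac{MAX-MIN}{MAX} & \text{otherwise} \end{cases}$

4. $V = MAX$

The resulting $H$ lies in the interval $[0^\circ, 360^\circ)$, and $S$, $V$ in the interval $[0,1]$.

Some special cases have to be observed when transforming RGB to HSV:

1. $H$ is undefined if $MAX = MIN$. All points with $R = G = B$ show a shade of grey, and thus no hue can be assigned; by convention $H = 0$ is used.

2. $S$ is undefined if $MAX = 0$. As $MAX = 0$ only for black, the saturation term is undefined there and generally set to 0. For white, $MIN = MAX = 1$, but no special care has to be taken for this case, as the equation correctly yields $S = 0$.

3. In computer graphics, it is typical to represent each channel as an 8-bit integer (0-255) instead of real numbers. When encoded in this way, every possible HSV colour has an RGB equivalent. However, the inverse is not true: certain RGB colours have no integer HSV representation, because many HSV codes map to the same RGB colour (e.g. all codes with $V = 0$ map to black). In fact, only a fraction of the RGB colours are available in HSV.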
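A minimal sketch of the RGB to HSV rules for scalar values in $[0,1]$: the hue follows from the sector of the largest component (wrapping negative hues into $[0^\circ, 360^\circ)$), $S = (MAX-MIN)/MAX$, and $V = MAX$. Greys and black receive $H = 0$ and $S = 0$ by convention. Python's standard-library `colorsys.rgb_to_hsv`, which returns the hue as a fraction of a full turn, serves as a cross-check; `rgb_to_hsv` itself is an illustrative name.

```python
import colorsys


def rgb_to_hsv(r, g, b):
    """Convert R, G, B in [0, 1] to (H in degrees [0, 360), S in [0, 1], V in [0, 1])."""
    mx, mn = max(r, g, b), min(r, g, b)
    # Rule 1: hue from the sector of the largest component (0 for greys)
    if mx == mn:
        h = 0.0
    elif mx == r:
        h = 60.0 * (0.0 + (g - b) / (mx - mn))
    elif mx == g:
        h = 60.0 * (2.0 + (b - r) / (mx - mn))
    else:
        h = 60.0 * (4.0 + (r - g) / (mx - mn))
    # Rule 2: wrap negative hues into [0, 360)
    if h < 0.0:
        h += 360.0
    # Rule 3: saturation (0 for black, where it is undefined)
    s = 0.0 if mx == 0.0 else (mx - mn) / mx
    # Rule 4: value
    v = mx
    return h, s, v


for rgb in [(1.0, 0.0, 0.0), (0.2, 0.6, 0.4), (0.5, 0.5, 0.5), (0.9, 0.1, 0.5)]:
    h, s, v = rgb_to_hsv(*rgb)
    hr, sr, vr = colorsys.rgb_to_hsv(*rgb)  # standard-library reference, hue in [0, 1)
    print(rgb, (round(h, 2), round(s, 3), round(v, 3)), round(hr * 360.0, 2))
```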


Converting from HSV to RGB

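Given the $(H,S,V)$ values of a colour, where $H \in [0^\circ, 360^\circ)$ and $S, V \in [0,1]$, the RGB values can be determined using the following rules:

1. If $S = 0$, the colour is a shade of grey and $R$, $G$, and $B$ are set to $V$.

2. If $S > 0$, the following rules are used:

$h_i = \lfloor H / 60^\circ \rfloor, \quad f = H / 60^\circ - h_i$

$p = V(1 - S), \quad q = V(1 - S f), \quad t = V(1 - S(1 - f))$

$(R,G,B) = \begin{cases} (V, t, p) & \text{if } h_i = 0 \\ (q, V, p) & \text{if } h_i = 1 \\ (p, V, t) & \text{if } h_i = 2 \\ (p, q, V) & \text{if } h_i = 3 \\ (t, p, V) & \text{if } h_i = 4 \\ (V, p, q) & \text{if } h_i = 5 \end{cases}$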
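The inverse conversion can be sketched with the usual sector scheme: the hue selects one of six 60° sectors, and three intermediate values $p$, $q$, $t$ interpolate within that sector. The sketch handles scalar inputs and is cross-checked against `colorsys.hsv_to_rgb`, which expects the hue as a fraction of a full turn; `hsv_to_rgb` here is an illustrative name.

```python
import colorsys
import math


def hsv_to_rgb(h, s, v):
    """Convert H in degrees and S, V in [0, 1] to R, G, B in [0, 1]."""
    if s == 0.0:
        return v, v, v                    # shade of grey
    h = h % 360.0
    hi = int(math.floor(h / 60.0))        # sector index 0..5
    f = h / 60.0 - hi                     # position inside the sector
    p = v * (1.0 - s)
    q = v * (1.0 - s * f)
    t = v * (1.0 - s * (1.0 - f))
    return [(v, t, p), (q, v, p), (p, v, t), (p, q, v), (t, p, v), (v, p, q)][hi]


for hsv in [(30.0, 0.5, 0.8), (150.0, 1.0, 0.6), (275.0, 0.25, 0.9)]:
    ours = hsv_to_rgb(*hsv)
    ref = colorsys.hsv_to_rgb(hsv[0] / 360.0, hsv[1], hsv[2])  # standard-library reference
    print(hsv, tuple(round(x, 3) for x in ours), tuple(round(x, 3) for x in ref))
```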

HSI Colour Model

HSI Colour Model

The HSI (Hue, Saturation, Intensity) colour model, closely related to HSL (Hue, Saturation, Luminosity/Luminance), is, in contrast to HSV, drawn as a colour cone, a colour hexcone, or a sphere. Both systems are non-linear deformations of the RGB colour cube. HSI spans the colour space in terms of three parameters:

Hue: defines the pure colour and ranges from 0° to 360°

Saturation: is a measure of the degree to which a pure colour is diluted by white light. It ranges from 0 to 1

I: intensity of the colour in the range of 0 to 1

HSI, similar to HSV, nicely decouples the intensity from the colour-carrying information (hue and saturation).

Fig 2.40: HSI colour model

Converting from RGB to HSI

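Given an image in RGB colour format, the HSI components can be obtained using the following equations:

$H = \begin{cases} \theta & \text{if } B \le G \\ 360^\circ - \theta & \text{if } B > G \end{cases}$

$S = 1 - \frac{3}{R+G+B}\min(R,G,B)$

$I = \frac{1}{3}(R+G+B)$

with

$\theta = \cos^{-1}\left\{ \frac{\frac{1}{2}\left[(R-G) + (R-B)\right]}{\left[(R-G)^2 + (R-B)(G-B)\right]^{1/2}} \right\}$

It is assumed that the RGB values have been normalised to the range $[0,1]$, and that the angle $\theta$ is measured with respect to the red axis of the HSI space. Hue can be normalised to the range $[0,1]$ by dividing by $360^\circ$.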
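A scalar sketch of the RGB to HSI conversion, assuming $R, G, B \in [0,1]$, with $\theta = \cos^{-1}\{\tfrac12[(R-G)+(R-B)] / [(R-G)^2+(R-B)(G-B)]^{1/2}\}$, $H = \theta$ if $B \le G$ and $360^\circ - \theta$ otherwise, $S = 1 - 3\min(R,G,B)/(R+G+B)$, and $I = (R+G+B)/3$. Three guards are added that the formulas leave implicit: for greys the denominator of $\theta$ vanishes (hue undefined, set to 0 here), black has $R+G+B = 0$ (saturation set to 0), and the cosine is clamped to $[-1,1]$ to absorb rounding errors. The function name is illustrative.

```python
import math


def rgb_to_hsi(r, g, b, eps=1e-12):
    """Convert R, G, B in [0, 1] to (H in degrees, S in [0, 1], I in [0, 1])."""
    num = 0.5 * ((r - g) + (r - b))
    den = math.sqrt(max(0.0, (r - g) ** 2 + (r - b) * (g - b)))
    if den < eps:
        h = 0.0  # grey: hue undefined, set to 0 by convention
    else:
        cos_theta = max(-1.0, min(1.0, num / den))  # clamp rounding errors
        theta = math.degrees(math.acos(cos_theta))
        h = theta if b <= g else 360.0 - theta
    total = r + g + b
    s = 0.0 if total < eps else 1.0 - 3.0 * min(r, g, b) / total
    i = total / 3.0
    return h, s, i


for rgb in [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0), (0.5, 0.5, 0.5)]:
    print(rgb, tuple(round(v, 3) for v in rgb_to_hsi(*rgb)))
```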


Converting from HSI to RGB

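Given the values of HSI in the interval $[0,1]$, the corresponding RGB values can be found using the equations below. The procedure starts by multiplying $H$ by $360^\circ$, which returns hue in its original range of $[0^\circ, 360^\circ]$.

If $0^\circ \le H < 120^\circ$ (RG sector):

$B = I(1-S)$, $R = I\left[1 + \frac{S\cos H}{\cos(60^\circ - H)}\right]$, $G = 3I - (R+B)$

elseif $120^\circ \le H < 240^\circ$ (GB sector, first set $H = H - 120^\circ$):

$R = I(1-S)$, $G = I\left[1 + \frac{S\cos H}{\cos(60^\circ - H)}\right]$, $B = 3I - (R+G)$

elseif $240^\circ \le H \le 360^\circ$ (BR sector, first set $H = H - 240^\circ$):

$G = I(1-S)$, $B = I\left[1 + \frac{S\cos H}{\cos(60^\circ - H)}\right]$, $R = 3I - (G+B)$

end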
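A scalar sketch of the three-sector HSI to RGB conversion, assuming $H$ in degrees and $S, I \in [0,1]$. In each 120° sector, one component equals $I(1-S)$, a second is $I[1 + S\cos H/\cos(60^\circ - H)]$ with $H$ taken relative to the sector start, and the third follows from $R+G+B = 3I$. The function name is illustrative.

```python
import math


def hsi_to_rgb(h, s, i):
    """Convert H in degrees and S, I in [0, 1] to R, G, B using three 120-degree sectors."""
    h = h % 360.0

    def boosted(angle):
        # I * (1 + S cos(H) / cos(60 deg - H)), H relative to the sector start
        return i * (1.0 + s * math.cos(math.radians(angle))
                    / math.cos(math.radians(60.0 - angle)))

    if h < 120.0:            # RG sector
        b = i * (1.0 - s)
        r = boosted(h)
        g = 3.0 * i - (r + b)
    elif h < 240.0:          # GB sector
        r = i * (1.0 - s)
        g = boosted(h - 120.0)
        b = 3.0 * i - (r + g)
    else:                    # BR sector
        g = i * (1.0 - s)
        b = boosted(h - 240.0)
        r = 3.0 * i - (g + b)
    return r, g, b


print(hsi_to_rgb(0.0, 1.0, 1.0 / 3.0))  # approximately (1, 0, 0)
print(hsi_to_rgb(60.0, 1.0, 0.5))       # approximately (0.75, 0.75, 0)
```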

58 of 75 21.02.2016 22:33

YCbCr Colour Model


The YCbCr colour model is widely used in digital video and image compression (e.g. MPEG, JPEG). In this format, the luminance information is represented by a single component, Y, and colour information is stored as two colour-difference components, Cb and Cr. This accounts for the fact that the human eye can discern thousands of colour shades but only about two dozen shades of grey.

Component Cb is the difference between the blue component and a reference value, and Cr is the difference between the red component and a reference value.

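The transformation used to convert from RGB (normalised to [0, 1]) to YCbCr is

$$\begin{bmatrix} Y \\ C_b \\ C_r \end{bmatrix} = \begin{bmatrix} 16 \\ 128 \\ 128 \end{bmatrix} + \begin{bmatrix} 65.481 & 128.553 & 24.966 \\ -37.797 & -74.203 & 112.000 \\ 112.000 & -93.786 & -18.214 \end{bmatrix} \begin{bmatrix} R \\ G \\ B \end{bmatrix} \qquad (2.8)$$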

In order to obtain the RGB values from a set of YCbCr values, one simply uses the inverse matrix operation.
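A NumPy sketch of the forward and inverse transformation follows. The coefficients are an assumption of this example: they are the ITU-R BT.601 "studio-swing" values for RGB normalised to [0, 1] (Y in [16, 235], Cb and Cr in [16, 240]); other standards, such as BT.709 or the full-range variant used in JPEG files, use different numbers.

```python
import numpy as np

# Assumed BT.601 studio-swing offset and matrix (RGB input in [0, 1])
OFFSET = np.array([16.0, 128.0, 128.0])
M = np.array([[ 65.481, 128.553,  24.966],
              [-37.797, -74.203, 112.000],
              [112.000, -93.786, -18.214]])


def rgb_to_ycbcr(rgb: np.ndarray) -> np.ndarray:
    """Map an (..., 3) array of RGB values in [0, 1] to YCbCr."""
    return OFFSET + rgb @ M.T


def ycbcr_to_rgb(ycbcr: np.ndarray) -> np.ndarray:
    """Inverse mapping: remove the offset, then apply the inverse matrix."""
    return (ycbcr - OFFSET) @ np.linalg.inv(M).T
```

Working on the last axis lets the same functions convert a single pixel or a whole H x W x 3 image. White maps to (235, 128, 128) and black to (16, 128, 128): both greys have zero colour difference, so Cb and Cr sit at the reference value 128.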


CMY/CMYK Colour Model


As mentioned previously, cyan (C), magenta (M) and yellow (Y) are the secondary colours of light or, alternatively, the primary colours of pigments. For example, a surface coated with cyan pigment and illuminated with white light reflects no red light: cyan subtracts red light from reflected white light.

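Most devices that deposit coloured pigments, such as printers and copiers, require the CMY colour model. The conversion from RGB to CMY is performed using the simple equation:

$$\begin{bmatrix} C \\ M \\ Y \end{bmatrix} = \begin{bmatrix} 1 \\ 1 \\ 1 \end{bmatrix} - \begin{bmatrix} R \\ G \\ B \end{bmatrix} \qquad (2.9)$$

where the assumption is that all colour values have been normalised to the range [0, 1].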

In theory, mixing equal amounts of the three primary pigments should produce black. In practice, it produces a muddy-looking black. In order to produce true black, a fourth colour, black (K), is added, giving rise to the CMYK colour model.
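Equation (2.9) is a one-liner; the sketch below adds a naive CMYK step for illustration. The rule K = min(C, M, Y) followed by rescaling the remaining inks is a common textbook simplification assumed here, not the method of any particular printer, which would rely on device-specific colour profiles instead. The function names are hypothetical.

```python
def rgb_to_cmy(r: float, g: float, b: float) -> tuple:
    """Equation (2.9): subtract normalised RGB values from 1."""
    return 1.0 - r, 1.0 - g, 1.0 - b


def rgb_to_cmyk_naive(r: float, g: float, b: float) -> tuple:
    """Naive CMYK: move the common grey part of C, M, Y into the K channel."""
    c, m, y = rgb_to_cmy(r, g, b)
    k = min(c, m, y)                      # amount of black shared by all three inks
    if k == 1.0:                          # pure black: avoid division by zero
        return 0.0, 0.0, 0.0, 1.0
    scale = 1.0 - k
    return (c - k) / scale, (m - k) / scale, (y - k) / scale, k
```

With this rule, any grey is printed with black ink only, e.g. mid grey (0.5, 0.5, 0.5) becomes (0, 0, 0, 0.5), which avoids the muddy black produced by mixing the three coloured inks.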


CIE Absolute Colour Spaces

Absolute Colour Spaces

An absolute colour space is a colour space in which colours are unambiguous and do not depend on any external factors. One example of an absolute colour space is CIE L*a*b*: a colour specified in it, if reproduced using accurate devices under the right conditions, looks exactly as intended.

A counter-example of a colour space that is not absolute is RGB. RGB colours are made by mixing red, green and blue primaries, but these primaries are not standardised. Two computer monitors will most likely display the same RGB image quite differently.

One way to think of this is that L*a*b* is a colour, whilst RGB is a recipe. The result of mixing RGB depends on the ingredients.

A non-absolute colour space can be made absolute by defining its ingredients more precisely.

A popular way to turn a colour space like RGB into an absolute space is to define an ICC profile, which contains these attributes. RGB colour spaces defined by widely accepted profiles include sRGB and Adobe RGB.
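To make the "recipe" idea concrete, the sketch below converts sRGB, an RGB space whose ingredients (primaries, D65 white point and transfer curve) are fixed by a standard, into absolute CIE XYZ. The matrix is the commonly published four-decimal version for IEC 61966-2-1 and is an assumption of this example; the function names are hypothetical.

```python
import numpy as np

# sRGB (linear) -> CIE XYZ for the D65 white point, four-decimal coefficients
SRGB_TO_XYZ = np.array([[0.4124, 0.3576, 0.1805],
                        [0.2126, 0.7152, 0.0722],
                        [0.0193, 0.1192, 0.9505]])


def srgb_to_linear(c) -> np.ndarray:
    """Undo the sRGB transfer curve for encoded values in [0, 1]."""
    c = np.asarray(c, dtype=float)
    return np.where(c <= 0.04045, c / 12.92, ((c + 0.055) / 1.055) ** 2.4)


def srgb_to_xyz(rgb) -> np.ndarray:
    """Map (..., 3) encoded sRGB values to XYZ, scaled so that white has Y = 1."""
    return srgb_to_linear(rgb) @ SRGB_TO_XYZ.T
```

Two steps are needed because stored sRGB values are gamma-encoded: the transfer curve must be undone before the linear primaries can be mixed. An encoded value of 0.5, for example, corresponds to only about 21 % of the linear intensity.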


Conversion of Colour Spaces

In general, a colour in one absolute colour space can be converted to another absolute colour space and back again. However, as each colour space has its own gamut, converting colours that lie outside the target gamut will not produce correct results.

Although there are formulae to convert between two non-absolute colour spaces, e.g. RGB to CMYK, or between an absolute and a non-absolute colour space, e.g. RGB to L*a*b*, the concept is meaningless and such conversions can give only roughly equivalent results.

Also note that part of the definition of an absolute colour is the viewing condition. The same colour looks different under different lighting conditions.
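Gamut limits can be checked numerically: map a colour from XYZ into linear sRGB and test whether every component lies in [0, 1]. In this sketch the sRGB matrix is the same four-decimal version commonly published for IEC 61966-2-1 (an assumption), and the spectral green near 520 nm is given by its approximate CIE 1931 chromaticity (0.0743, 0.8338). The function names are hypothetical.

```python
import numpy as np

# Assumed sRGB (D65) matrix; its inverse maps XYZ back to linear sRGB
SRGB_TO_XYZ = np.array([[0.4124, 0.3576, 0.1805],
                        [0.2126, 0.7152, 0.0722],
                        [0.0193, 0.1192, 0.9505]])
XYZ_TO_SRGB = np.linalg.inv(SRGB_TO_XYZ)


def xyY_to_XYZ(x: float, y: float, Y: float) -> np.ndarray:
    """Chromaticity (x, y) plus brightness Y -> tristimulus values XYZ."""
    return np.array([x / y * Y, Y, (1.0 - x - y) / y * Y])


def in_srgb_gamut(xyz, tol: float = 1e-6) -> bool:
    """True if the colour maps to linear sRGB components inside [0, 1]."""
    rgb = XYZ_TO_SRGB @ np.asarray(xyz, dtype=float)
    return bool(np.all(rgb >= -tol) and np.all(rgb <= 1.0 + tol))
```

Here `in_srgb_gamut(xyY_to_XYZ(0.0743, 0.8338, 0.3))` returns False because the red component comes out strongly negative. No monitor can emit "negative red", so any conversion must alter this colour, for example by clipping, which yields a different, less saturated green.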


CIE 1931 Colour Space

In the study of colour perception, the CIE 1931 colour space, also known as the CIE XYZ colour space, was created as the first mathematically defined colour space.

As the human eye has three types of colour sensors, a full plot of all visible colours is a 3D figure. However, colours can be split into two parts: brightness and chromaticity.

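By removing the brightness from the CIE XYZ colour space (in XYZ, the tristimulus value Y represents brightness), the chromaticity of a colour is specified by the two parameters x and y:

$$x = \frac{X}{X+Y+Z}, \qquad y = \frac{Y}{X+Y+Z} \qquad (2.10)$$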

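The X and Z tristimulus values can then be calculated back from the chromaticity values x and y and the brightness value Y:

$$X = \frac{Y}{y}\,x, \qquad Z = \frac{Y}{y}\,z \qquad \text{with} \quad z = 1 - x - y \qquad (2.11)$$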

Fig 2.41 shows the related chromaticity diagram representing all colours perceivable by the average human eye.

Fig 2.41: CIE xy chromaticity diagram
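Equations (2.10) and (2.11) translate directly into code. A minimal Python sketch with hypothetical function names; black (X = Y = Z = 0) has no defined chromaticity and is rejected.

```python
def xyz_to_xyY(X: float, Y: float, Z: float) -> tuple:
    """Split XYZ into chromaticity (x, y) and brightness Y, equation (2.10)."""
    total = X + Y + Z
    if total == 0.0:
        raise ValueError("chromaticity is undefined for black (X = Y = Z = 0)")
    return X / total, Y / total, Y


def xyY_to_xyz(x: float, y: float, Y: float) -> tuple:
    """Recover X and Z from chromaticity and brightness, equation (2.11)."""
    if y == 0.0:
        raise ValueError("y must be non-zero")
    z = 1.0 - x - y
    return Y / y * x, Y, Y / y * z
```

Scaling X, Y and Z by the same factor leaves (x, y) unchanged, which is exactly what "removing the brightness" means; the equal-energy point X = Y = Z maps to x = y = 1/3 regardless of its brightness.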


CIE xy Chromaticity Diagram

The diagram represents all the chromaticities visible to the average person.

This region is called the gamut of human vision.

The curved edge of the gamut is called the spectral locus and corresponds to monochromatic light.

The straight edge on the lower part of the gamut is called the purple line; the colours on it have no counterpart in monochromatic light.

Less saturated colours appear in the interior, with white at the centre.

If one chooses any two points in the diagram, all colours that can be formed by mixing these two colours lie on the connecting line.

All colours that can be mixed from three sources are found inside the triangle formed by them.

The mixture of two equally bright colours will not generally lie on the midpoint of that line segment.

As the gamut is not a triangle (its upper boundary is curved), no three real sources can cover the whole gamut of human vision.

Fig 2.42: CIE xy chromaticity diagram

Fig 2.43: CIE xy chromaticity diagram with the MacAdam ellipses (10x their actual size)
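That the mixture of two equally bright colours generally does not lie at the midpoint follows from the fact that light adds linearly in XYZ, not in xy: each colour enters the mix weighted by X + Y + Z = Y/y. The sketch below (hypothetical function name, colours given as (x, y, Y) triples) makes this explicit.

```python
def mix_chromaticity(c1: tuple, c2: tuple) -> tuple:
    """Additively mix two lights given as (x, y, Y); return the mix as (x, y, Y).

    Light adds linearly in XYZ, so convert both colours, sum them and
    convert the sum back to chromaticity coordinates.
    """
    X_sum = Y_sum = Z_sum = 0.0
    for x, y, Y in (c1, c2):
        X_sum += Y / y * x
        Y_sum += Y
        Z_sum += Y / y * (1.0 - x - y)
    total = X_sum + Y_sum + Z_sum
    return X_sum / total, Y_sum / total, Y_sum
```

Mixing two equally bright lights with chromaticities (0.64, 0.33) and (0.15, 0.06) gives approximately (0.225, 0.102): on the connecting line, but much closer to the bluish colour, whose small y gives it the larger weight.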


CIELAB Colour Model

Lab is the abbreviation for two different colour models: the best known is CIELAB (CIE 1976 L*a*b*), the other is Hunter Lab.

Both colour models are intended to be perceptually more linear. Perceptually linear means that a change of the same amount in a colour value should produce a change of similar visual importance.

The three parameters in the model represent:

L*: lightness of the colour (L* = 0 yields black, L* = 100 white)

a*: position between magenta and green (negative a* indicates green, positive a* magenta)

b*: position between yellow and blue (negative b* indicates blue, positive b* yellow)

CIE 1976 L*a*b* is based directly on the CIE 1931 XYZ colour space as an attempt to linearise the perceptibility of colour differences, using the colour difference metric described by the MacAdam ellipse.

Fig 2.44: Lab with luminance 25%

Fig 2.45: Lab with luminance 50%

Fig 2.46: Lab with luminance 75%
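The formulas behind CIELAB are not given above; the sketch below uses the standard CIE 1976 definition with a cube-root compression and a linear segment near black. The D65 reference white (Xn, Yn, Zn) = (0.95047, 1.0, 1.08883) is an assumption of this example, since L*a*b* values always depend on the chosen white.

```python
import numpy as np

WHITE_D65 = np.array([0.95047, 1.0, 1.08883])   # assumed reference white, Y scaled to 1
DELTA = 6.0 / 29.0


def _f(t) -> np.ndarray:
    """CIE 1976 compression: cube root, with a linear segment near zero."""
    t = np.asarray(t, dtype=float)
    return np.where(t > DELTA ** 3, np.cbrt(t), t / (3.0 * DELTA ** 2) + 4.0 / 29.0)


def xyz_to_lab(xyz, white=WHITE_D65) -> np.ndarray:
    """CIE 1976 L*a*b* from XYZ (same scale as `white`); works on (..., 3)."""
    f = _f(np.asarray(xyz, dtype=float) / white)
    fx, fy, fz = f[..., 0], f[..., 1], f[..., 2]
    L = 116.0 * fy - 16.0             # lightness: 0 (black) .. 100 (white)
    a = 500.0 * (fx - fy)             # green (-) .. magenta (+)
    b = 200.0 * (fy - fz)             # blue (-) .. yellow (+)
    return np.stack([L, a, b], axis=-1)
```

The cube root is what makes the scale roughly perceptual: a neutral grey reflecting 18 % of the light of the reference white lands near L* = 50, i.e. it appears about half as light as white.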


Fundamental Steps in Image Processing


Preprocessing: remove geometrical distortions, damp image noise, improve the contrast, ...

Basic Feature Extraction: search for points/lines/circles, homogeneous regions, sudden intensity changes, ...

Grouping: connect the previously found features to objects

Scene Analysis: analyse the objects that were found
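The four stages can be chained on a toy image as sketched below. Every choice here is an illustrative assumption, not the lecture's method: a 3x3 mean filter for preprocessing, a brightness threshold as the "feature", 4-connected component labelling for grouping, and object areas as the "scene analysis". All function names are hypothetical.

```python
from collections import deque

import numpy as np


def preprocess(img: np.ndarray) -> np.ndarray:
    """Damp noise with a 3x3 mean filter (borders padded by replication)."""
    p = np.pad(img, 1, mode="edge")
    h, w = img.shape
    return sum(p[dy:dy + h, dx:dx + w] for dy in range(3) for dx in range(3)) / 9.0


def extract_features(img: np.ndarray, thresh: float) -> np.ndarray:
    """Basic feature: mark pixels brighter than a threshold."""
    return img > thresh


def group(mask: np.ndarray) -> tuple:
    """Group marked pixels into 4-connected components (breadth-first labelling)."""
    labels = np.zeros(mask.shape, dtype=int)
    n = 0
    for start in zip(*np.nonzero(mask)):
        if labels[start]:
            continue                      # pixel already belongs to an object
        n += 1
        labels[start] = n
        queue = deque([start])
        while queue:
            y, x = queue.popleft()
            for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                if (0 <= ny < mask.shape[0] and 0 <= nx < mask.shape[1]
                        and mask[ny, nx] and not labels[ny, nx]):
                    labels[ny, nx] = n
                    queue.append((ny, nx))
    return labels, n


def analyse(labels: np.ndarray, n: int) -> list:
    """Scene analysis: report the pixel area of every object."""
    return [int((labels == k).sum()) for k in range(1, n + 1)]
```

On a 20 x 20 image with two bright 6 x 6 squares, `analyse(*group(extract_features(preprocess(img), 0.5)))` finds two objects of 32 pixels each: the mean filter rounds off the four corners of each square, a small example of how preprocessing choices propagate through the later stages.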


Example: Aorta Segmentation

Example

Example application of an aorta and dissection membrane segmentation approach.

Problem:

Manual segmentation is difficult & time consuming → automatic method

CT artefacts complicate automatic segmentation

Methods:

Hough Transformation

Model-based segmentation

Active deformable shapes

Filtering

Fig 2.47: CT of a dissected aorta


Example: A Naive Approach

Unfortunately, simple "naive" segmentation approaches such as region growing or watershed fail here.

Fig. 2.48: Streak artefacts disturb the watershed segmentation process and cause incomplete lumen segmentation

Fig. 2.49: The ascending aorta and the nearby vena cava are often merged due to acquisition noise and reconstruction artefacts

A better solution must be found.
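A toy reproduction of the failure shown in Fig. 2.49: naive region growing from a seed accepts every connected pixel of similar intensity, so a single bright one-pixel "bridge" (standing in for an artefact) is enough to merge two separate vessels. The synthetic image, the tolerance and the function name `region_grow` are hypothetical.

```python
from collections import deque

import numpy as np


def region_grow(img: np.ndarray, seed: tuple, tol: float) -> np.ndarray:
    """Naive region growing: add 4-neighbours within `tol` of the seed intensity."""
    h, w = img.shape
    ref = img[seed]
    region = np.zeros((h, w), dtype=bool)
    region[seed] = True
    queue = deque([seed])
    while queue:
        y, x = queue.popleft()
        for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
            if (0 <= ny < h and 0 <= nx < w and not region[ny, nx]
                    and abs(img[ny, nx] - ref) <= tol):
                region[ny, nx] = True
                queue.append((ny, nx))
    return region


# Synthetic slice: two bright discs of radius 8, e.g. "aorta" and "vein"
yy, xx = np.mgrid[0:40, 0:60]
img = np.zeros((40, 60))
img[(yy - 20) ** 2 + (xx - 15) ** 2 <= 64] = 1.0
img[(yy - 20) ** 2 + (xx - 45) ** 2 <= 64] = 1.0
separate = region_grow(img, (20, 15), tol=0.2)   # stays inside the left disc

img[20, 15:46] = 1.0                             # thin bright bridge between the discs
merged = region_grow(img, (20, 15), tol=0.2)     # now leaks into the right disc
```

The intensity criterion is purely local, so the method has no notion of shape: it cannot tell that the bridge is far too thin to be part of a vessel. This is why the approach below relies on shape knowledge (circles, a toroidal arch) instead.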


Example: Pre-Processing, Enhancement

As the images are already of good quality, no further pre-processing, e.g. noise filtering, is required.

Fig. 2.50: Original CT image of a dissected aorta


Example: Basic Feature Extraction

As the basic feature, we extract the edges of the image. Beware that, depending on the exact type of edge extraction, some filtering is done implicitly.

Fig. 2.51: Edge image
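As an example of implicit filtering, the Sobel operator combines a central difference with a [1, 2, 1] smoothing across the derivative direction. The lecture does not say which edge detector was used; Sobel is assumed here purely for illustration, and `sobel_magnitude` is a hypothetical name.

```python
import numpy as np


def sobel_magnitude(img) -> np.ndarray:
    """Gradient magnitude with 3x3 Sobel kernels (border pixels are set to 0).

    Each kernel is the outer product of a [1, 2, 1] smoothing filter and a
    [-1, 0, 1] central difference, so it smooths across the derivative.
    """
    img = np.asarray(img, dtype=float)
    smooth = np.array([1.0, 2.0, 1.0])
    diff = np.array([-1.0, 0.0, 1.0])
    kx = np.outer(smooth, diff)       # derivative along x, smoothing along y
    ky = kx.T                         # derivative along y, smoothing along x
    h, w = img.shape
    gx = np.zeros((h, w))
    gy = np.zeros((h, w))
    for dy in range(3):
        for dx in range(3):
            # shifted view of the image aligned with kernel entry (dy, dx)
            patch = img[dy:h - 2 + dy, dx:w - 2 + dx]
            gx[1:-1, 1:-1] += kx[dy, dx] * patch
            gy[1:-1, 1:-1] += ky[dy, dx] * patch
    return np.hypot(gx, gy)
```

On an ideal vertical step edge, the magnitude is 4 on the two columns adjacent to the step and 0 elsewhere: the smoothing makes the detector less sensitive to noise, at the price of spreading each edge over two pixels.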


Example: Grouping

As the aorta is almost circular in cross-section, a robust circle detection seems appropriate.

Fig. 2.52: Hough accumulator for circles

Fig. 2.53: Detected circles
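The circle Hough transform behind Fig. 2.52 can be sketched for a single known radius: every edge pixel votes for all possible centres at that distance, and peaks in the accumulator mark circle centres. This minimal version is an assumption for illustration; a practical aorta detector would scan a range of radii (a 3-D accumulator) and typically also exploit edge orientation. The function name is hypothetical.

```python
import numpy as np


def hough_circles(edges: np.ndarray, radius: int, n_angles: int = 90) -> np.ndarray:
    """Accumulate votes for centres of circles with one known radius."""
    h, w = edges.shape
    acc = np.zeros((h, w), dtype=int)
    angles = np.linspace(0.0, 2.0 * np.pi, n_angles, endpoint=False)
    dy = np.round(radius * np.sin(angles)).astype(int)
    dx = np.round(radius * np.cos(angles)).astype(int)
    # remove duplicate offsets so each edge pixel votes at most once per centre
    offsets = np.unique(np.stack([dy, dx], axis=1), axis=0)
    for y, x in zip(*np.nonzero(edges)):
        cy, cx = y - offsets[:, 0], x - offsets[:, 1]
        ok = (cy >= 0) & (cy < h) & (cx >= 0) & (cx < w)
        np.add.at(acc, (cy[ok], cx[ok]), 1)   # handles repeated indices correctly
    return acc
```

The centre of a complete circle collects one vote from each of its edge pixels, whereas any other cell only collects votes where two circles of equal radius intersect. This makes the peak robust to gaps in the edge map and to isolated noise edges.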


Example: Detection of Ascending and Descending Aorta

Key to many medical image analysis applications is the proper selection of an area of interest. This not only reduces the computational complexity but also makes the approach more robust.

Fig. 2.54: The grey area marks the region of interest in the ascending and descending aorta


Example: Detection of Ascending and Descending Aorta (2)

Using the circle detector, the ascending and descending aorta can easily be found. Clustering methods, e.g. K-means, then yield the ascending and descending aorta as well as the region of interest.

Fig. 2.55: (a) Circles are detected in several axial slices and (b) their positions accumulated.
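A minimal sketch of the clustering step: plain Lloyd's k-means with k = 2 applied to hypothetical 2-D circle centres accumulated over several slices, one cluster per aorta segment. The deterministic farthest-point initialisation is our own choice to keep the example reproducible, and the function name is hypothetical; a per-cluster bounding box with some margin could then serve as the region of interest.

```python
import numpy as np


def kmeans(points, k: int, n_iter: int = 50) -> tuple:
    """Lloyd's k-means on an (n, d) array; returns (centres, labels)."""
    points = np.asarray(points, dtype=float)
    # Deterministic farthest-point initialisation (chosen for reproducibility)
    centres = [points[0]]
    for _ in range(1, k):
        dist = np.min([np.linalg.norm(points - c, axis=1) for c in centres], axis=0)
        centres.append(points[np.argmax(dist)])
    centres = np.array(centres)
    labels = np.zeros(len(points), dtype=int)
    for _ in range(n_iter):
        # Assignment step: nearest centre for every point
        d = np.linalg.norm(points[:, None, :] - centres[None, :, :], axis=2)
        labels = np.argmin(d, axis=1)
        # Update step: each centre moves to the mean of its assigned points
        new = np.array([points[labels == j].mean(axis=0) if np.any(labels == j)
                        else centres[j] for j in range(k)])
        if np.allclose(new, centres):
            break
        centres = new
    return centres, labels
```

Because both aorta segments stay at a roughly constant in-plane position over many axial slices, their detected centres form two compact, well-separated clouds, which is exactly the situation in which k-means works reliably.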


Example: Generation of the Rough Aortic Mesh

In a first step, the top of the aortic arch is located in a slice reformatted perpendicular to the axial slices. Assuming a toroidal shape, the centre of the arch is found and further circles are detected on inclined reformatted slices. The same toroidal shape assumption makes it easy to detect outliers.

Fig. 2.56: Principle of aorta detection
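The text does not specify how outliers are rejected. One possible approach, assumed here purely for illustration, projects the detected circle centres onto the plane of the arch, fits a circle with the algebraic (Kåsa) least-squares method and iteratively drops centres that lie too far from it. All names and the tolerance are hypothetical.

```python
import numpy as np


def fit_circle(pts) -> tuple:
    """Algebraic (Kasa) least-squares circle fit: x^2 + y^2 + D x + E y + F = 0."""
    pts = np.asarray(pts, dtype=float)
    x, y = pts[:, 0], pts[:, 1]
    A = np.column_stack([x, y, np.ones_like(x)])
    rhs = -(x ** 2 + y ** 2)
    (D, E, F), *_ = np.linalg.lstsq(A, rhs, rcond=None)
    cx, cy = -D / 2.0, -E / 2.0
    r = np.sqrt(cx ** 2 + cy ** 2 - F)
    return cx, cy, r


def flag_outliers(pts, tol: float, n_rounds: int = 3) -> tuple:
    """Refit repeatedly, keeping only points within `tol` of the fitted circle."""
    pts = np.asarray(pts, dtype=float)
    keep = np.ones(len(pts), dtype=bool)
    for _ in range(n_rounds):
        cx, cy, r = fit_circle(pts[keep])
        resid = np.abs(np.hypot(pts[:, 0] - cx, pts[:, 1] - cy) - r)
        keep = resid <= tol
    return keep, (cx, cy, r)
```

The algebraic fit is linear and therefore fast, but it is not robust on its own: a few gross outliers can pull the first estimate, which is why the fit is repeated on the retained points. With very many outliers, a robust scheme such as RANSAC would be the safer choice.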


Example: Generation of the Rough Aortic Mesh (2)

Internal and external forces are used to further refine the initial rough mesh.

Fig. 2.57: (a) The initial mesh is further refined, (b) the resulting mesh
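The interplay of internal and external forces can be sketched in 2-D with a closed contour instead of a 3-D mesh. An internal force (the discrete Laplacian) keeps neighbouring vertices together and the contour smooth, while an external force pulls vertices toward image features. The external force `pull_to_circle` below is a hypothetical stand-in for a force derived from the image, and the weights are arbitrary.

```python
import numpy as np


def refine_contour(pts, external_force, alpha: float = 0.2, beta: float = 0.5,
                   n_iter: int = 200) -> np.ndarray:
    """Explicit deformable-contour update on a closed polygon of shape (n, 2).

    internal force: alpha * discrete Laplacian (smoothness)
    external force: beta * external_force(pts) (attraction to image features)
    """
    pts = np.asarray(pts, dtype=float).copy()
    for _ in range(n_iter):
        lap = np.roll(pts, 1, axis=0) + np.roll(pts, -1, axis=0) - 2.0 * pts
        pts += alpha * lap + beta * external_force(pts)
    return pts


def pull_to_circle(pts: np.ndarray, radius: float = 10.0) -> np.ndarray:
    """Hypothetical external force: radial pull toward a circle around the origin."""
    r = np.linalg.norm(pts, axis=1, keepdims=True)
    return (radius - r) * pts / r
```

Starting from a wavy 40-vertex contour of radius about 6, the iteration settles on a smooth circle of radius just under 10; the small deficit is the shrinking effect of the internal force balancing the external pull. The step sizes must stay small (here alpha ≤ 0.5) for this explicit update to remain stable.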
