Convolutional Neural Networks · cs194-26/sp20/Lectures/... · 2020. 3. 14. · The brain/neuron view...

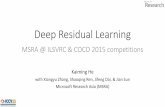

Convolutional Neural Networks

CS194: Image Manipulation, Comp. Vision, and Comp. Photo

Alexei Efros, UC Berkeley, Spring 2020

image X -> Convolutional Neural Network -> “Penguin” (label Y)

Neural Nets: a particularly useful Black Box

image X

“Penguin”

label Y

Classic Object Recognition

image X -> Feature extractors (Edges, Texture, Colors, Segments, Parts) -> Classifier (learned) -> “Penguin” (label Y)

Slide by Philip Isola

Learning Features

image X -> Feature extractors (Edges, Texture, Colors; learned) -> “Penguin” (label Y)

Neural Network: algorithm + feature + data!

Slide by Philip Isola

Vanilla (fully-connected) Neural Networks

Fully Connected Layer

Example: 200x200 image, 40K hidden units

~2B parameters!!!

- Spatial correlation is local
- Waste of resources + we do not have enough training samples anyway..

Ranzato

Locally Connected Layer

Example: 200x200 image, 40K hidden units, filter size: 10x10

4M parameters

Ranzato

Note: This parameterization is good when the input image is registered (e.g., face recognition).

STATIONARITY? Statistics are similar at different locations

Ranzato

Convolutional Layer

Share the same parameters across different locations (assuming input is stationary):

Convolutions with learned kernels

Ranzato

Convolutional Layer

Learn multiple filters.

E.g.: 200x200 image, 100 filters, filter size: 10x10

10K parameters

Ranzato
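
The three parameter counts quoted on the slides (fully connected, locally connected, convolutional) can be checked with a few lines of arithmetic. This is a minimal sketch that ignores biases, as the slides appear to do; the variable names are illustrative only.

```python
# Parameter counts for the 200x200 example (biases ignored).
image_pixels = 200 * 200      # 40,000 input values
hidden_units = 40_000         # 40K hidden units
filter_weights = 10 * 10      # weights in one 10x10 filter
num_filters = 100             # filters in the convolutional layer

# Fully connected: every hidden unit is connected to every pixel.
fully_connected = image_pixels * hidden_units        # 1.6 billion, i.e. "~2B"

# Locally connected: every hidden unit has its own private 10x10 filter.
locally_connected = hidden_units * filter_weights    # 4 million

# Convolutional: each filter's weights are shared across all locations.
convolutional = num_filters * filter_weights         # 10 thousand

print(fully_connected, locally_connected, convolutional)
```

Sharing weights across locations is what removes the dependence on the number of hidden units: the convolutional count depends only on the number and size of the filters.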

Fei-Fei Li & Andrej Karpathy, Lecture 7 - 21 Jan 2015

[Figure: "before" (input layer, hidden layer, output layer) vs. "now"]

Fei-Fei Li & Andrej Karpathy & Justin Johnson, Lecture 7 - 27 Jan 2016

Convolution Layer

32x32x3 image (width 32, height 32, depth 3)

Convolution Layer

32x32x3 image, 5x5x3 filter

Convolve the filter with the image, i.e. “slide over the image spatially, computing dot products”

Filters always extend the full depth of the input volume

1 number: the result of taking a dot product between the filter and a small 5x5x3 chunk of the image (i.e. 5*5*3 = 75-dimensional dot product + bias)

Convolve (slide) over all spatial locations => one 28x28x1 activation map

Consider a second, green filter => a second 28x28x1 activation map

For example, if we had 6 5x5 filters, we’ll get 6 separate activation maps. We stack these up to get a “new image” of size 28x28x6!

Convolving the first filter over the input gives the first depth slice of the output volume
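
The sliding dot product described above can be sketched in NumPy. This is an illustrative, deliberately slow loop version, not the lecture's code; the function name `conv_layer` and the random inputs are assumptions. As in most deep learning libraries, the filter is not flipped, so strictly speaking this is cross-correlation.

```python
import numpy as np

def conv_layer(image, filters, biases):
    """Stride-1 convolution layer without padding.

    image:   (H, W, C) array
    filters: (K, F, F, C) array; each filter spans the full input depth C
    biases:  (K,) array
    returns: (H - F + 1, W - F + 1, K) stack of K activation maps
    """
    H, W, C = image.shape
    K, F, _, _ = filters.shape
    out = np.zeros((H - F + 1, W - F + 1, K))
    for i in range(H - F + 1):
        for j in range(W - F + 1):
            chunk = image[i:i + F, j:j + F, :]  # F x F x C chunk of the image
            # One F*F*C-dimensional dot product per filter, plus its bias.
            out[i, j, :] = np.tensordot(filters, chunk, axes=3) + biases
    return out

rng = np.random.default_rng(0)
image = rng.standard_normal((32, 32, 3))      # 32x32x3 image
filters = rng.standard_normal((6, 5, 5, 3))   # six 5x5x3 filters
biases = np.zeros(6)
maps = conv_layer(image, filters, biases)
print(maps.shape)  # (28, 28, 6)
```

Each slice `maps[:, :, k]` is the activation map of filter `k`; stacking the six maps gives the 28x28x6 "new image".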

Preview: ConvNet is a sequence of Convolutional Layers, interspersed with activation functions

32x32x3 -> CONV, ReLU (e.g. 6 5x5x3 filters) -> 28x28x6 -> CONV, ReLU (e.g. 10 5x5x6 filters) -> 24x24x10 -> CONV, ReLU -> ….

A closer look at spatial dimensions:

32x32x3 image, 5x5x3 filter: convolve (slide) over all spatial locations => 28x28x1 activation map

7x7 input (spatially), assume 3x3 filter => 5x5 output

7x7 input (spatially), assume 3x3 filter applied with stride 2 => 3x3 output!

7x7 input (spatially), assume 3x3 filter applied with stride 3? Doesn’t fit! Cannot apply 3x3 filter on 7x7 input with stride 3.

Output size (NxN input, FxF filter):
(N - F) / stride + 1

e.g. N = 7, F = 3:
stride 1 => (7 - 3)/1 + 1 = 5
stride 2 => (7 - 3)/2 + 1 = 3
stride 3 => (7 - 3)/3 + 1 = 2.33 :\
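
The output-size rule above is easy to turn into a small helper. This is a sketch; the function name `conv_output_size` and the choice to return `None` when the filter does not fit are assumptions made for illustration.

```python
def conv_output_size(n, f, stride=1):
    """Return (N - F) / stride + 1, or None when the filter does not fit evenly."""
    if (n - f) % stride != 0:
        return None  # e.g. 3x3 filter on 7x7 input with stride 3
    return (n - f) // stride + 1

for stride in (1, 2, 3):
    print(stride, conv_output_size(7, 3, stride))
```

For N = 7 and F = 3 this reports 5 for stride 1, 3 for stride 2, and `None` for stride 3.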

In practice: Common to zero pad the border

e.g. input 7x7, 3x3 filter applied with stride 1, pad with 1 pixel border => what is the output?

(recall: (N - F) / stride + 1)

7x7 output! (The padded input is 9x9, so (9 - 3)/1 + 1 = 7.)

In general, common to see CONV layers with stride 1, filters of size FxF, and zero-padding with (F-1)/2 (will preserve size spatially).
e.g. F = 3 => zero pad with 1
F = 5 => zero pad with 2
F = 7 => zero pad with 3
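
The size-preserving padding rule can be checked by applying the same output-size formula to the padded input, whose side length is N + 2 * pad. A minimal sketch; `padded_output_size` is a hypothetical helper name.

```python
def padded_output_size(n, f, stride=1, pad=0):
    """Output size after zero-padding `pad` pixels on every side of an n x n input."""
    padded = n + 2 * pad                 # padding is added on both sides
    return (padded - f) // stride + 1

# With stride 1 and pad = (F - 1) / 2, the spatial size is preserved.
for f in (3, 5, 7):
    pad = (f - 1) // 2
    print(f, pad, padded_output_size(7, f, stride=1, pad=pad))
```

Each line prints an output size of 7, matching the 7x7 input.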

Remember back to… E.g. 32x32 input convolved repeatedly with 5x5 filters shrinks volumes spatially! (32 -> 28 -> 24 ...). Shrinking too fast is not good, doesn’t work well.

32x32x3 -> CONV, ReLU (e.g. 6 5x5x3 filters) -> 28x28x6 -> CONV, ReLU (e.g. 10 5x5x6 filters) -> 24x24x10 -> CONV, ReLU -> ….

(btw, 1x1 convolution layers make perfect sense)

56x56x64 input -> 1x1 CONV with 32 filters -> 56x56x32 output

(each filter has size 1x1x64, and performs a 64-dimensional dot product)
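
A 1x1 convolution is a per-pixel dot product across depth, so it can be written as a single matrix product. The sketch below uses NumPy with random values; the array names are illustrative and biases are omitted.

```python
import numpy as np

rng = np.random.default_rng(0)
volume = rng.standard_normal((56, 56, 64))   # 56x56x64 input volume
filters = rng.standard_normal((32, 64))      # 32 filters, each of size 1x1x64

# At every spatial location, take 32 dot products of length 64.
out = volume @ filters.T                     # (56, 56, 64) @ (64, 32)
print(out.shape)  # (56, 56, 32)
```

The spatial size is unchanged; only the depth goes from 64 to 32.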

The brain/neuron view of CONV Layer

32x32x3 image, 5x5x3 filter

1 number: the result of taking a dot product between the filter and this part of the image (i.e. 5*5*3 = 75-dimensional dot product)

It’s just a neuron with local connectivity...

An activation map is a 28x28 sheet of neuron outputs:
1. Each is connected to a small region in the input
2. All of them share parameters

“5x5 filter” -> “5x5 receptive field for each neuron”

E.g. with 5 filters, CONV layer consists of neurons arranged in a 3D grid (28x28x5)

There will be 5 different neurons all looking at the same region in the input volume

Pooling Layer

Let us assume filter is an “eye” detector.

Q.: how can we make the detection robust to the exact location of the eye?

By “pooling” (e.g., taking max) filter responses at different locations we gain robustness to the exact spatial location of features.

Ranzato

Pooling layer
- makes the representations smaller and more manageable
- operates over each activation map independently

MAX POOLING

Single depth slice (x, y):

1 1 2 4
5 6 7 8
3 2 1 0
1 2 3 4

max pool with 2x2 filters and stride 2:

6 8
3 4
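
The max pooling example above can be reproduced with a short function over nested lists. A minimal sketch; `max_pool` is an illustrative name, and the input is the single depth slice from the slide.

```python
def max_pool(slice_2d, size=2, stride=2):
    """Max pooling over one depth slice given as a list of rows."""
    rows, cols = len(slice_2d), len(slice_2d[0])
    return [
        [
            # Maximum over the size x size window whose top-left corner is (r, c).
            max(slice_2d[r + dr][c + dc] for dr in range(size) for dc in range(size))
            for c in range(0, cols - size + 1, stride)
        ]
        for r in range(0, rows - size + 1, stride)
    ]

depth_slice = [
    [1, 1, 2, 4],
    [5, 6, 7, 8],
    [3, 2, 1, 0],
    [1, 2, 3, 4],
]
pooled = max_pool(depth_slice)
print(pooled)  # [[6, 8], [3, 4]]
```

In a full pooling layer the same operation would be applied to every activation map independently.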

ConvNets: Typical Stage

One stage (zoom): Convol. -> Pooling

courtesy of K. Kavukcuoglu

Ranzato

Neural network

“clown fish”

Learned

Deep neural network

“clown fish”

Learned

Computation in a neural net

Input representation

Output representation

Rectified linear unit (ReLU)
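
The slide's formulas did not survive extraction, so the following is only a sketch under the usual assumption that a layer computes a linear map followed by a ReLU, which sets negative values to zero. The weights, bias and input are made-up numbers.

```python
def linear(weights, bias, x):
    """Linear map: one weighted sum of the inputs (plus bias) per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]

def relu(values):
    """Rectified linear unit: max(0, v) applied elementwise."""
    return [max(0.0, v) for v in values]

x = [1.0, -2.0]                   # input representation (hypothetical)
W = [[1.0, 1.0], [2.0, 0.5]]      # hypothetical weights
b = [0.0, 0.5]                    # hypothetical biases
hidden = relu(linear(W, b, x))    # output representation
print(hidden)  # [0.0, 1.5]
```

The first unit's pre-activation is -1.0 and is clipped to 0.0; the second is 1.5 and passes through unchanged.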

Computation in a neural net

Last layer

Network output: one score per class (…, dolphin, cat, grizzly bear, angel fish, chameleon, iguana, elephant, clown fish, …)

argmax -> “clown fish”

Learning with deep nets

“clown fish”

Learned

Learning with deep nets

“clown fish”

“grizzly bear”

“chameleon”

Train network to associate the right label with each image

Learning with deep nets

“clown fish”

Loss

Learned

Loss function

Compares the network output with the ground truth label (classes: …, dolphin, cat, grizzly bear, angel fish, chameleon, iguana, elephant, clown fish, …): Loss -> error

“clown fish” -> Loss small

“grizzly bear” -> Loss large

Loss function for classification

Network output vs. ground truth label (classes: …, dolphin, cat, grizzly bear, angel fish, chameleon, clown fish, iguana, elephant, …)

Probability of the observed data under the model

Results in learning a probability model!
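
The slide's formula is not in the transcript. A common choice that matches "probability of the observed data under the model" is a softmax output with a negative log-likelihood (cross-entropy) loss; the sketch below assumes that choice, and the scores are made-up numbers.

```python
import math

def softmax(scores):
    """Turn raw scores into a probability distribution over classes."""
    m = max(scores)                          # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(probs, true_index):
    """Negative log-probability assigned to the ground truth class."""
    return -math.log(probs[true_index])

classes = ["dolphin", "cat", "grizzly bear", "angel fish",
           "chameleon", "iguana", "elephant", "clown fish"]
scores = [0.1, 0.0, 0.5, 1.0, 0.2, 0.1, 0.0, 4.0]   # hypothetical network scores
probs = softmax(scores)

prediction = classes[probs.index(max(probs))]        # argmax over classes
loss_small = cross_entropy(probs, classes.index("clown fish"))
loss_large = cross_entropy(probs, classes.index("grizzly bear"))
print(prediction, loss_small, loss_large)
```

With these scores the prediction is "clown fish"; the loss is small when the ground truth is "clown fish" and large when it is "grizzly bear".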

Learning with deep nets

“clown fish”

Loss

Learned

Learning with deep nets

“grizzly bear”

Loss

Learned

Learning with deep nets

“chameleon”

Loss

Learned

Gradient descent

One iteration of gradient descent:

learning rate
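
The update equation itself is missing from the transcript. The standard form is: new parameters = old parameters minus learning rate times the gradient of the loss. The sketch below assumes that form and uses a toy one-parameter loss, (theta - 3)^2, chosen only for illustration.

```python
def gradient_descent_step(theta, grad_fn, learning_rate):
    """One iteration: move against the gradient, scaled by the learning rate."""
    return theta - learning_rate * grad_fn(theta)

def toy_gradient(theta):
    """Gradient of the toy loss (theta - 3)^2."""
    return 2.0 * (theta - 3.0)

theta = 0.0
for _ in range(100):
    theta = gradient_descent_step(theta, toy_gradient, learning_rate=0.1)
print(theta)
```

After 100 iterations theta is very close to 3, the minimizer of the toy loss. In a deep net, theta would be all weights and the gradient would come from backpropagation.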

Gradient descent

Texture synthesis by non-parametric sampling

Synthesizing a pixel p from the input image by non-parametric sampling

[Efros & Leung 1999]

Models

Input partial image

Predicted color of next pixel

Texture synthesis with a deep net

“white”

PixelCNN [van den Oord et al. 2016]

…

Sampling

Network output: probability p over colors (…, turquoise, blue, green, red, orange, gray, black, white, …)

Sampled color: “yellow”
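
Sampling the next pixel's color from the network output amounts to drawing from a categorical distribution. A minimal sketch using the standard library; the probabilities are made up, and only the colors listed on the slide are included.

```python
import random

def sample_color(colors, probs, rng):
    """Draw one color according to the predicted probabilities."""
    return rng.choices(colors, weights=probs, k=1)[0]

colors = ["turquoise", "blue", "green", "red", "orange", "gray", "black", "white"]
probs = [0.05, 0.10, 0.05, 0.05, 0.05, 0.10, 0.10, 0.50]   # hypothetical network output

rng = random.Random(0)   # seeded for repeatability
samples = [sample_color(colors, probs, rng) for _ in range(1000)]
print(samples[:5], samples.count("white"))
```

Unlike taking the argmax, sampling occasionally picks less likely colors, which is what gives synthesized textures their variety.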

…

Discriminative Deep Networks

“Rockfish”

Raw, Unlabeled Pixels

Generative Deep Networks

Raw, Unlabeled Pixels

Ansel Adams. Yosemite Valley Bridge.

Grayscale image: L channel. Color information: ab channels.

Concatenate (L, ab) channels

Zhang, Isola, Efros. Colorful Image Colorization. In ECCV, 2016.

Input | Output | Ground truth

Simple L2 regression doesn’t work

Better Loss Function

Colors in ab space (discrete)

• Regression with L2 loss inadequate
• Use per-pixel multinomial classification
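
One way to see why L2 regression is inadequate: when a pixel has several plausible colors, the prediction that minimizes the expected squared error is their average, which tends toward gray. The sketch below illustrates this with two made-up, equally likely ab values; it is a toy example, not the paper's experiment.

```python
def expected_l2(pred, modes):
    """Average squared error of one prediction against equally likely colors."""
    return sum((pred[0] - a) ** 2 + (pred[1] - b) ** 2 for a, b in modes) / len(modes)

# Two hypothetical, equally plausible ab colors for the same gray pixel.
modes = [(60.0, 40.0), (-60.0, -40.0)]

# The L2-optimal single prediction is the mean of the plausible colors.
mean = (sum(a for a, _ in modes) / len(modes), sum(b for _, b in modes) / len(modes))

print(mean, expected_l2(mean, modes), expected_l2(modes[0], modes))
```

The mean is (0.0, 0.0), i.e. no color at all, yet it has a lower L2 loss (5200.0) than either plausible color (10400.0). Predicting a distribution over discrete ab bins avoids this averaging.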

Color distribution cross-entropy loss with colorfulness enhancing term.

[Zhang, Isola, Efros, ECCV 2016]

Designing loss functions

Input | Ground truth
