Comparison of Discrimination Methods for the Classification of Tumors Using Gene Expression Data

description

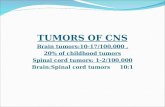

Transcript of Comparison of Discrimination Methods for the Classification of Tumors Using Gene Expression Data

1

Comparison of Discrimination Methods for the Classification of Tumors Using Gene Expression Data

Presented by: Tun-Hsiang Yang

2

purpose of this paper

Compare the performance of different discrimination methods

Nearest Neighbor classifier Linear discriminant analysis Classification tree Machine learning approaches: bagging, boosting

Investigate the use of prediction votes to assess the confidence of each prediction

3

Statistical problems:

The identification of new/unknown tumor classes using gene expression profiles

Clustering analysis/unsupervised learning

The classification of malignancies into known classes

Discriminant analysis/supervised learning

The identification of marker genes that identified different tumor classes

Variable (Gene) selection

4

Datasets

Gene expression data on p genes for n mRNA samples:

n x p matrix X={x ij},

where x ij denotes the expression level of gene (variable) j in ith mRNA sample(observation)

Response: k-dimensional vector Y={yi},

where yi denotes the class of observation i

Lymphoma dataset (p=4682, n=81,k=3) Leukemia dataset (p=3571, n=72, k=3 or 2) NCI 60 dataset (p=5244, n=61, k=8)

5

Data preprocessing

Imputation of missing data (KNN)

Standardization of data (Euclidean distance)

preliminary gene selection

Lymphoma dataset (p=4682 p=50, n=81,k=3) Leukemia dataset (p=3571p=40, n=72, k=3) NCI 60 dataset (p=5244p=30, n=61, k=8)

Microsoft Equation

3.0

i k

kjiji

i k

jkji

xxkyI

xxkyI

jWSS

jBSS

2

2.

))((

))((

)(

)(

6

Visual presentation of Leukemia dataset

Correlation matrix (72x72) ordered by class Black: 0 correlation / Red: positive correlation / Green: negative correlation

P=3571 p=40

7

Prediction Methods

Supervised Learning Methods

Machine learning approaches

8

Supervised Learning Methods Nearest Neighbor classifier(NN)

Fisher Linear Discriminant Analysis (LDA)

Weighted Gene Voting

Classification trees (CART)

9

Nearest Neighbor

The k-NN rule

Find the k closest observations in the learning set

Predict the class for each element in the test dataset by majority vote

K is chosen by minimizing cross-validation error rate

10

Linear Discirminantion Analysis

FLDA consists of

finding linear functions a’x of the gene expression levels x=(x1, …,xp) with large ratio of between groups to within groups sum of squares

Predicting the class of an observation by the class whose mean vector is closest to the discrimination

variables

11

Maximum likelihood discriminant rules

}||log)(){(minarg)(1 '

kk kkk xxxC

•Predicts the class of an observation x as C(x)=argmaxkpr(x|y=k)

12

Weighted Gene Voting

An observation x=(x1,…xp) is classified as 1 iff

Prediction strength as the margin of victory(p9)

0)2

)((

)(

1

21

^

2

^

1

21

p

j

jjj

jj

jj xxx

xx

)2,1min()2,1max(

)2,1min()2,1max(

vvvv

vvvvPS

13

Classification tree

Constructed by repeated splits of subsets (nodes)

Each terminal subset is assigned a class label

The size of the tree is determined by minimizing the cross validation error rate

Three aspects to tree construction

the selection of the splits

the stopping criteria

the assignment of each terminal node to a class

14

Aggregated Predictors

There are several ways to generate perturbed learning set:

Bagging

Boosting

Convex Pseudo data (CPD))),((maxarg kLxCIw b

bbk

15

Bagging

Predictors are built for each sub-sample and aggregated by

Majority voting with equal wb=1

Non-parametric bootstrap: drawing at random with replacement to form a

perturbed learning sets of the same size as the original learning set

By product: out of bag observations can be used to estimate misclassification rates of bagged predictors

A prediction for each observation (xi, yi) is obtained by aggregating the classifiers in which (xi,yi) is out-of-bag

16

Bagging (cont.)

Parametric bootstrap:

Perturbed learning sets are generated according to a mixture of MVN distributions

For each class k, the class sample mean and covariance matrix were taken as the estimates of distribution parameters

Make sure at least one observation sampled from each class

17

BoostingThe bth step of the boosting algorithm

Get another learning set Lb of the same size nL

Build a classifier based on Lb

Run the learning set L let di=1 if the ith case is classified incorrectly

di=0 otherwise

Define b=Pidi and Bbdi=(1- b)/ b

Update by pi=piBbdi/ piBb

di

Re-sampling probabilities are reset to equal if b>=1/2 or b=0

18

Prediction votes

For aggregated classifiers, prediction votes assessing the strength of

a prediction may be defined for each observation

The prediction vote (PV) for an observation x

bb

bb

bk

w

kLxCIwxPV

)),((max)(

19

Study Design

Randomly divide the dataset into a learning and test set (2:1 scheme)

For each of N=150 runs: Select a subset of p genes from the learning set

with the largest BSS/WSS Build the different predictors using the learning

sets with p genes Apply the predictors to the observations in the test

set to obtain test set error rates

20

Results

Test set error rates: apply classifier build based on learning set to test set. Summarized by box-plot over runs

Observation-wise error rates: for each observation, record the proportion of times it was classified incorrectly. Summarized by means of survival plots

Variable selection: compare the effect of increasing or decreasing number of genes (variables)

21

Leukemia data, two classes

22

Leukemia data, three classes

23

Lymphoma data

24

Conclusions In the main comparison, NN and DLDA had the smallest error

rates, while FLDA had the highest error rates

Aggregation improved the performance of CART classifiers, the largest gains being with boosting and bagging with CPD

For the lymphoma and leukemia datasets, increasing the number of variables to p=200 did not affect much the performance of the various classifiers. There was an improvement for the NCI 60 dataset.

A more carefully selection of a small number of genes (p=10) improved the performance of FLDA dramatically