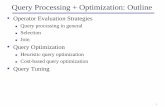

Algorithms and Data Structures (INF1) · people.cs.aau.dk/~luhua/courses/ad07/Lecture-3.pdf · 2007. 9....

Lecture 3/15
Hua Lu

Department of Computer Science
Aalborg University

Fall 2007

Algorithms and Data Structures (INF1)

This Lecture
• Complexity of algorithms
  Algorithm efficiency
  Time and space complexity
  Worst case, average case and best case
  Measuring the complexity of recursive and iterative algorithms
  Asymptotic complexity, O-notation

Efficiency of Algorithms
• Efficiency / Complexity
  Space used
  Running time (usually we are more concerned with running time)
• Why bother? Aren't computers today fast enough?
  Computer speed has increased greatly, but it is still not enough
  We have hard problems
    Factorizing large integers
    Searching for prime numbers
  People even want to do more challenging tasks fast
    High-resolution graphics and visualization
    Weather simulation and prediction
• Correct algorithms may still consume too much computation time, even on supercomputers!
  Efficient algorithms are thus needed

Example
• Given an array A[a..b]: Integer, the following algorithm finds the index of the minimum element in A
• min_index(a, b: Integer)
    result := a
    for i := a+1 to b do
      if A[i] < A[result] then result := i
    return result
• How long does min_index(1, n) take to execute?
• Two impact factors
  Particular implementation
    Language, compiler, and computer
  The size of the input
    n can be very large in the example
• Usually we ignore the implementation and focus on the input size

Time Complexity
• Time to execute the algorithm, the running time, as a function of the input size
  An exact function is usually not possible to give
  We make estimates instead
• Steps for an estimate
  Choose some characteristic operation that is performed repeatedly
  Define T(n) as the number of characteristic operations the algorithm performs on an input of size n
• Characteristic operation in min_index(a, b: Integer)
  #1: result := a? -> T(n) = 1
  #2: A[i] < A[result]? -> T(n) = n-1, the number of loop iterations
  #3: result := i? -> T(n) depends on the values in A, varying from 0 to n-1
  #1 is obviously not characteristic. Both #2 and #3 are possible choices

Different Cases
• min_index(a, b: Integer)
    result := a
    for i := a+1 to b do
      if A[i] < A[result] then result := i
    return result
• Characteristic operation result := i, T(n)?
  0, if A[a] is the minimum element
    n = 4, input instance: 1, 4, 2, 3
  n-1, if all elements in A are in descending order
    n = 4, input instance: 4, 3, 2, 1
  On average, T(n) lies somewhere in between: 0 ≤ T(n) ≤ n-1
• Best case, worst case, average case
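As a minimal sketch, the pseudocode can be transcribed into Python with counters for the two candidate characteristic operations. The function name `min_index_counted` and the 0-based indexing are assumptions made for illustration; they are not part of the slides.

```python
def min_index_counted(values):
    """Return (index of minimum, comparisons, assignments) for a non-empty list.

    Uses 0-based indices, unlike the 1-based pseudocode on the slide.
    """
    result = 0
    comparisons = 0   # characteristic operation #2: A[i] < A[result]
    assignments = 0   # characteristic operation #3: result := i
    for i in range(1, len(values)):
        comparisons += 1
        if values[i] < values[result]:
            result = i
            assignments += 1
    return result, comparisons, assignments


print(min_index_counted([1, 4, 2, 3]))  # best case for operation #3
print(min_index_counted([4, 3, 2, 1]))  # worst case for operation #3
```

On both instances the comparison count is n-1 = 3, while the assignment count is 0 for the first instance and n-1 = 3 for the second, which is why the choice of characteristic operation affects how much T(n) varies between inputs.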

Best/Worst/Average Case (1)
• For a specific input size n, investigate running times for different input instances
  Intuitive graphic illustration
    [Figure: running times, on an axis marked 1n to 6n, of input instances A, B, C, …, G of size n]
  Formal definitions

Best/Worst/Average Case (2)
• Suppose algorithm P accepts k different input instances of size n. Let $T_i(n)$ be the time complexity of P on the i-th input instance, for 1 ≤ i ≤ k, and let $p_i$ be the probability that this instance occurs.
• Best case time complexity: $B(n) = \min_{1 \le i \le k} T_i(n)$
  The minimum running time over all k inputs of size n
• Worst case time complexity: $W(n) = \max_{1 \le i \le k} T_i(n)$
  The maximum running time over all k inputs of size n
  It is the most interesting and important one!
• Average case time complexity: $A(n) = \sum_{1 \le i \le k} p_i T_i(n)$
  The average running time over all k inputs of size n
  It is often hard to determine, because $p_i$ is hard to know
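The three definitions can be illustrated with a small, hedged sketch for min_index, taking result := i as the characteristic operation. Two assumptions are made here that the slides do not state: the input instances are restricted to the n! orderings of n distinct values, and every ordering is equally likely ($p_i = 1/k$). The helper names are hypothetical.

```python
from fractions import Fraction
from itertools import permutations


def count_assignments(values):
    """Number of times `result := i` runs in min_index on `values`."""
    result = 0
    count = 0
    for i in range(1, len(values)):
        if values[i] < values[result]:
            result = i
            count += 1
    return count


def case_complexities(n):
    """Return (B(n), W(n), A(n)) over all orderings of n distinct values.

    Assumption: each of the k = n! orderings has probability p_i = 1/k.
    """
    times = [count_assignments(p) for p in permutations(range(n))]
    k = len(times)
    best = min(times)                              # B(n)
    worst = max(times)                             # W(n)
    average = sum(Fraction(t, k) for t in times)   # A(n) = sum of p_i * T_i(n)
    return best, worst, average


print(case_complexities(4))
```

For n = 4 this gives B(4) = 0, W(4) = 3 and A(4) = 13/12 under the uniform assumption; with a different distribution $p_i$ the average would change, which is the difficulty the slide points out.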

Best/Worst/Average Case (3)
[Figure: running time (axis marked 1n to 6n) plotted against input instance size (1, 2, 3, …, 12, …), with best-case, average-case and worst-case curves]
• For all input instance sizes
  We always have B(n) ≤ A(n) ≤ W(n)
  Sometimes we have B(n) = A(n) = W(n)

Best/Worst/Average Case (4)
• Worst case is usually used
  It is an upper bound
  In certain application domains (e.g., air traffic control, surgery), knowing the worst-case time complexity is of crucial importance
  For some algorithms the worst case occurs fairly often
• The average case is often as bad as the worst case
  Finding the average case can be very difficult
    Recall the probability $p_i$
    E.g., in the min_index example, you never know what values are in the input array

Complexity of Recursive Algorithms
• Towers of Hanoi
  Three pegs A, B, C, and n disks on A
  Goal: transfer all n disks from peg A to peg C
• Rules
  Move one disk at a time
  Never place a larger disk above a smaller one
• Recursive solution
  Transfer n − 1 disks from A to B
  Move the largest disk from A to C
  Transfer n − 1 disks from B to C

Towers of Hanoi (1)
• Recursive algorithm: Hanoi(n, from, to, spare)
    if (n > 0) then
      Hanoi(n-1, from, spare, to);
      Print "Move disk from " from " to " to;
      Hanoi(n-1, spare, to, from);
• Total number of moves
  T(n) = 2T(n − 1) + 1
• Running times of algorithms with recursive calls can be described using recurrences
• A recurrence is an equation or inequality that describes a function in terms of its value on smaller inputs
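The recursive algorithm can be sketched in Python as follows. The parameter names `source` and `target` replace `from` and `to` (since `from` is a Python keyword), and collecting the moves in a list instead of printing them is an assumption made so the number of moves can be counted.

```python
def hanoi(n, source, target, spare, moves):
    """Append to `moves` the steps that transfer n disks from source to target."""
    if n > 0:
        hanoi(n - 1, source, spare, target, moves)   # clear the way
        moves.append((source, target))               # move the largest disk
        hanoi(n - 1, spare, target, source, moves)   # put the rest back on top


for n in range(1, 6):
    moves = []
    hanoi(n, "A", "C", "B", moves)
    print(n, len(moves))
```

Each call makes two recursive calls on n − 1 disks plus one move, which is exactly the recurrence T(n) = 2T(n − 1) + 1; the printed counts for n = 1..5 are 1, 3, 7, 15, 31.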

Towers of Hanoi (2)
• Recurrence relation:
  $T(n) = 2T(n-1) + 1$
  $T(1) = 1$
  Solution by repeated substitution:
  $T(n) = 2(2T(n-2) + 1) + 1$
  $= 4T(n-2) + 2 + 1$
  $= 4(2T(n-3) + 1) + 2 + 1$
  $= 8T(n-3) + 4 + 2 + 1 = \dots$
  $= 2^i T(n-i) + 2^{i-1} + 2^{i-2} + \dots + 2^1 + 2^0$
  The expansion stops (n − i = 1) when i = n − 1
  $T(n) = 2^{n-1} + 2^{n-2} + 2^{n-3} + \dots + 2^1 + 2^0$
  This is a geometric series, so we have
  $T(n) = 2^n - 1$
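The closed form can also be checked by induction. The following is a short sketch of that argument, using only the recurrence and the base case stated above.

```latex
\begin{align*}
\text{Base case: } & T(1) = 1 = 2^1 - 1. \\
\text{Inductive step: } & \text{assume } T(n-1) = 2^{n-1} - 1 \text{ for some } n \ge 2. \text{ Then} \\
& T(n) = 2\,T(n-1) + 1 = 2\,(2^{n-1} - 1) + 1 = 2^n - 1.
\end{align*}
```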

Solving Recurrence Equations
• Recurrence equation
  $T(n_0) = a$
  $T(n) = \mathrm{func}(T(n-1))$
• Solve recurrence equations by repeated substitution
  $T(n) = \mathrm{func}(T(n-1)) = \mathrm{func}(\mathrm{func}(T(n-2))) = \dots = \mathrm{func}(\dots \mathrm{func}(T(n_0)))$
  Express T(n) in a form without func; T(n) usually becomes the sum of a series $s_i$
    $T(n) = \sum_{i=n_0}^{n} s_i$
  Work out a simplified closed form of the sum above
  Prove it by mathematical induction
• Repeated substitution does not always work
  F(0) = 0, F(1) = 1
  F(n) = F(n-1) + F(n-2)

Complexity of Iterative Algorithms
• Sort algorithm BubbleSort(A[1..n]: int)
    for i := n downto 1 do          // counting down
      for j := 2 to i do            // bubbling up
        if A[j-1] > A[j] then swap(A, j-1, j)
      end for
    end for
• Compare each element (except the last one) with its neighbor to the right
  If they are out of order, swap them
  This puts the largest element at the very end
  The last element is now in its correct and final place
• Compare each element (except the last two) with its neighbor to the right
  If they are out of order, swap them
  This puts the second largest element next to last
  The last two elements are now in their correct and final places
• Compare each element (except the last three) with its neighbor to the right
  Continue as above until you have no unsorted elements on the left

Complexity of BubbleSort
• Sort algorithm BubbleSort(A[1..n]: int)
    for i := n downto 1 do          // counting down
      for j := 2 to i do            // bubbling up
        if A[j-1] > A[j] then swap(A, j-1, j)
      end for
    end for
• The outer loop is executed n times
• The inner loop, number of comparisons per outer iteration
  n-1, n-2, n-3, …, 1, 0
• T(n) = (n-1) + (n-2) + (n-3) + … + 0 = n(n-1)/2
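The comparison count can be confirmed with a small Python sketch of the same two loops. The function name `bubble_sort_counted`, the 0-based indexing, and returning a sorted copy rather than sorting in place are assumptions made for illustration.

```python
def bubble_sort_counted(values):
    """Sort a copy of `values`; return (sorted list, number of comparisons)."""
    a = list(values)
    n = len(a)
    comparisons = 0
    for i in range(n, 0, -1):      # i = n downto 1
        for j in range(1, i):      # 0-based j; the slide's j runs from 2 to i
            comparisons += 1       # characteristic operation: A[j-1] > A[j]
            if a[j - 1] > a[j]:
                a[j - 1], a[j] = a[j], a[j - 1]
    return a, comparisons


print(bubble_sort_counted([5, 1, 4, 2, 8]))
```

For this 5-element input the count is 5·4/2 = 10. The number of comparisons depends only on n and not on the order of the input, so for this characteristic operation the best, average and worst cases coincide.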

Going Further
• Question
  How to compare the efficiency of algorithms?
    Linear search vs. binary search
• Answer
  Look at how fast T(n) grows as n grows
  Asymptotic complexity
[Figure: T(n) plotted against n for linear search and binary search]
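To make the comparison concrete, the sketch below counts how many array elements each search inspects. Both functions and their names are illustrative assumptions (the slides give no code for either search), and the input is assumed to be sorted in ascending order.

```python
def linear_search_steps(sorted_values, target):
    """Return (index or -1, number of elements inspected)."""
    steps = 0
    for index, value in enumerate(sorted_values):
        steps += 1
        if value == target:
            return index, steps
    return -1, steps


def binary_search_steps(sorted_values, target):
    """Return (index or -1, number of elements inspected)."""
    low, high = 0, len(sorted_values) - 1
    steps = 0
    while low <= high:
        mid = (low + high) // 2
        steps += 1
        if sorted_values[mid] == target:
            return mid, steps
        if sorted_values[mid] < target:
            low = mid + 1
        else:
            high = mid - 1
    return -1, steps


for n in (16, 1024, 65536):
    data = list(range(n))
    missing = n  # larger than every element, so both searches fail
    print(n, linear_search_steps(data, missing)[1],
          binary_search_steps(data, missing)[1])
```

For an unsuccessful search the linear version inspects all n elements, while the binary version inspects only about log2 n of them, so the gap widens quickly as n grows.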

Asymptotic Analysis
• Goal
  To simplify the analysis of running time by getting rid of "details", which may be affected by the specific implementation and hardware
    "Rounding" for numbers: 1,000,001 ≈ 1,000,000
    "Rounding" for functions: $3n^2 \approx n^2$
• Capturing the essence
  How the running time of an algorithm increases with the size of the input, in the limit
    Small-sized inputs are easy to deal with
  Asymptotically more efficient algorithms are best for all but small inputs
    Their advantage shows up when inputs become large enough

Asymptotic Notation (1)
[Figure: running time against input size, showing T(n), c·g(n) and the threshold n0]
• The "big-Oh" O-notation
  An asymptotic upper bound of the function T(n)
  T(n) = O(g(n)) if there exist fixed constants c and $n_0$ such that T(n) ≤ c g(n) for all n ≥ $n_0$
    c is a positive real number; n and $n_0$ are natural numbers
    T(n) and g(n) are non-negative functions
• T(n) grows asymptotically no faster than g(n)
• Used for worst-case analysis
• Abuse of notation
  T(n) = O(g(n)) actually means T(n) ∈ O(g(n))
  O(g(n)) denotes the set of functions satisfying the definition
  $2n^2 + 3n$ is in $O(n^2)$
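For the example $2n^2 + 3n \in O(n^2)$, one possible pair of witnesses is c = 5 and $n_0 = 1$, because $3n \le 3n^2$ for all n ≥ 1. The sketch below only checks the inequality over a finite range, so it is a sanity check rather than a proof; the helper name `bounded_from` and the chosen constants are assumptions for illustration.

```python
def bounded_from(t, g, c, n0, n_max):
    """Check T(n) <= c * g(n) for every integer n with n0 <= n <= n_max."""
    return all(t(n) <= c * g(n) for n in range(n0, n_max + 1))


def t(n):
    return 2 * n * n + 3 * n


def g(n):
    return n * n


print(bounded_from(t, g, c=5, n0=1, n_max=10_000))  # witnesses c = 5, n0 = 1
print(bounded_from(t, g, c=2, n0=1, n_max=10_000))  # c = 2 is too small
```

The first check succeeds and the second fails, which shows that the constant c matters: the definition only requires that some suitable pair (c, $n_0$) exists.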

Asymptotic Notation (2)
• Simple rule for O-notation
  Drop lower-order terms and constant factors
• Examples
  7n - 3 is O(n)
  50 n log n is O(n log n)
  $8n^2 \log n + 5n^2 + n$ is $O(n^2 \log n)$
  $3n^2 + 2n$ is $O(n^2)$
• Note: even though 50 n log n is $O(n^5)$, the bound is expected to be of as small an order as possible
  A tighter upper bound helps show the algorithm is good
• O(1) denotes a quantity that is no larger than some fixed constant, whose precise value is not stated or not of interest
  Algorithms with constant running time
  No loops, no subroutine calls

Asymptotic Notation (3)
• Assume $T(n) = O(\log_a n)$, a > 1. Prove that $T(n) = O(\log_b n)$ for any b > 1
• According to the O-notation definition, there exist fixed constants c and $n_0$ such that $T(n) \le c \log_a n$ for all $n \ge n_0$
• $T(n) \le c \log_a n = c\,(\log_b n / \log_b a)$ by change of base
• $T(n) \le (c / \log_b a) \log_b n$
• Let $c' = c / \log_b a$, which is positive because a, b > 1; then for all $n \ge n_0$ we have $T(n) \le c' \log_b n$
• According to the O-notation definition, $T(n) = O(\log_b n)$
• Usually no base of the logarithm is specified for O(log n), since it is irrelevant for the O-notation
• Various useful theorems about O-notation can be proved in this way: by finding appropriate c and $n_0$
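The change-of-base step can be checked numerically: the ratio $\log_a n / \log_b n$ equals $1 / \log_b a$ regardless of n. The bases a = 2 and b = 10 and the helper name `log_ratio` are arbitrary choices made for this sketch.

```python
import math


def log_ratio(n, a, b):
    """Return log_a(n) / log_b(n), which should not depend on n."""
    return math.log(n, a) / math.log(n, b)


a, b = 2, 10  # two example bases, both greater than 1
for n in (10, 1_000, 1_000_000):
    print(n, log_ratio(n, a, b), 1 / math.log(a, b))
```

Up to floating-point rounding, both printed columns agree for every n, which is the constant factor that the O-notation absorbs.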

Asymptotic Notation (4)
[Figure: running time against input size, showing T(n), c·g(n) and the threshold n0]
• The "big-Omega" Ω-notation
  An asymptotic lower bound
  T(n) = Ω(g(n)) if there exist fixed constants c and $n_0$ such that c g(n) ≤ T(n) for all n ≥ $n_0$
• Used to describe lower bounds of algorithmic problems
  Every algorithm for a problem needs at least a certain amount of time to execute
  E.g., the lower bound of searching in an unsorted array is Ω(n)
    This does not mean that all input instances take that time!
    Rather, among all instances, there must be one that takes that long!

Asymptotic Notation (5)
[Figure: running time against input size, showing T(n), $c_1 \cdot g(n)$, $c_2 \cdot g(n)$ and the threshold n0]
• The "big-Theta" Θ-notation
  An asymptotically tight bound
  T(n) = Θ(g(n)) if there exist fixed constants $c_1$, $c_2$, and $n_0$ such that $c_1 g(n) \le T(n) \le c_2 g(n)$ for all $n \ge n_0$
• T(n) = Θ(g(n)) if and only if T(n) = O(g(n)) and T(n) = Ω(g(n))
  Θ(g(n)) is more precise than O(g(n))
  Binary search is Θ(log n) in the worst case
• O(g(n)) is more widely used than the other two notations
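As a worked example of the definition, the BubbleSort comparison count $T(n) = n(n-1)/2$ from the earlier slide is $\Theta(n^2)$. The constants $c_1 = 1/4$, $c_2 = 1/2$ and $n_0 = 2$ below are one possible choice, not the only one.

```latex
\begin{align*}
\frac{n(n-1)}{2} - \frac{1}{4} n^2 &= \frac{n(n-2)}{4} \ge 0 \quad \text{for all } n \ge 2, \\
\frac{n(n-1)}{2} &\le \frac{1}{2} n^2 \quad \text{for all } n \ge 1, \\
\text{hence}\quad \frac{1}{4} n^2 \le \frac{n(n-1)}{2} &\le \frac{1}{2} n^2 \quad \text{for all } n \ge n_0 = 2 .
\end{align*}
```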

Common Time Complexities
Ordered from better (top) to worse (bottom):
• O(1) constant time
• O(log n) log time
• O(n) linear time
• O(n log n) log linear time
• $O(n^2)$ quadratic time
• $O(n^3)$ cubic time
• $O(2^n)$ exponential time
Notes:
  Polynomial time complexities $O(n^k)$ (k not too big): feasibility really depends on the input size
  Feasible algorithms. Very often, O(n log n) is good enough.
  Exponential time: infeasible!

Growth Functions
[Figure: log-scale plot of T(n), from 1.00E-01 to 1.00E+10, against n = 2, 4, 8, …, 1024, for the functions n, log n, sqrt n, n log n, 100n, n^2, n^3]
• We usually care more about very large problems
  Where it matters more

Growth Functions (Cont.)
[Figure: log-scale plot of T(n), from 1.00E-01 to 1.00E+155, against n = 2, 4, 8, …, 1024, for the functions n, log n, sqrt n, n log n, 100n, n^2, n^3, 2^n]
• Exponential time is BAD!
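The values behind such a plot can be approximated with a short sketch that prints the order of magnitude, log10 of T(n), for each function. The use of base-2 logarithms for "log n" is an assumption (the slides do not state the base), and the names `functions` and `log10_row` are hypothetical.

```python
import math

# (label, function) pairs for the growth functions named in the plots.
functions = [
    ("log n", lambda n: math.log2(n)),
    ("sqrt n", lambda n: math.sqrt(n)),
    ("n", lambda n: n),
    ("n log n", lambda n: n * math.log2(n)),
    ("100n", lambda n: 100 * n),
    ("n^2", lambda n: n ** 2),
    ("n^3", lambda n: n ** 3),
    ("2^n", lambda n: 2 ** n),
]


def log10_row(n):
    """Return log10(T(n)) for every growth function, in table order."""
    return [math.log10(f(n)) for _, f in functions]


print("n".rjust(6), *(name.rjust(8) for name, _ in functions))
for n in (2, 4, 8, 16, 32, 64, 128, 256, 512, 1024):
    print(str(n).rjust(6), *(f"{value:8.1f}" for value in log10_row(n)))
```

Printing log10 of each value mirrors the logarithmic axis of the plots and avoids overflow, since 2^1024 is too large for a floating-point number. At n = 1024 every polynomial entry stays below 10, while the 2^n entry is about 308.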

Next Lecture
• Data abstraction
  Object oriented programming (OOP)
  Abstract data types
  Examples
• Linear structures (I)
  Array
  List