Word Embeddings for the Analysis of Ideological Placement in Parliamentary Corpora

Ludovic Rheault† and Christopher Cochrane‡

[Pre-print version of forthcoming article in Political Analysis]

Abstract

Word embeddings, the coefficients from neural network models predicting the use of words in context, have now become inescapable in applications involving natural language processing. Despite a few studies in political science, the potential of this methodology for the analysis of political texts has yet to be fully uncovered. This paper introduces models of word embeddings augmented with political metadata and trained on large-scale parliamentary corpora from Britain, Canada, and the United States. We fit these models with indicator variables of the party affiliation of members of parliament, which we refer to as party embeddings. We illustrate how these embeddings can be used to produce scaling estimates of ideological placement and other quantities of interest for political research. To validate the methodology, we assess our results against indicators from the Comparative Manifestos Project, surveys of experts, and measures based on roll-call votes. Our findings suggest that party embeddings are successful at capturing latent concepts such as ideology, and the approach provides researchers with an integrated framework for studying political language.

†Assistant Professor, Department of Political Science and Munk School of Global Affairs and Public Policy, University of Toronto.

‡Associate Professor, Department of Political Science, University of Toronto.

Authors’ Note: We thank participants in the annual meeting of the Society for Political Methodology, the Canadian Political Science Association annual conference, the Advanced Computational Linguistics seminar at the University of Toronto, as well as anonymous reviewers for their helpful comments. Replication data available through the Political Analysis Dataverse (Rheault and Cochrane 2019).

1 Introduction

The representation of meaning is a fundamental objective in natural language processing. As a basic illustration, consider queries performed with a search engine. We ideally want computers to return documents that are relevant to the substantive meaning of a query, just like a human being would interpret it, rather than simply the records with an exact word match. To achieve such results, early methods such as latent semantic analysis relied on low-rank approximations of word frequencies to score the semantic similarity between texts and rank them by relevance (Deerwester et al. 1990; Manning, Raghavan, and Schutze 2009, Ch. 18). The new state of the art in meaning representation is word embeddings, or word vectors, the parameter estimates of artificial neural networks designed to predict the occurrence of a word by the surrounding words in a text sequence. Consistent with the use theory of meaning (Wittgenstein 2009), these embeddings have been shown to capture semantic properties of language, revealed by an ability to solve analogies and identify synonyms (Mikolov, Sutskever, Chen, Corrado, and Dean 2013; Pennington, Socher, and Manning 2014). Despite a broad appeal across disciplines, the use of word embeddings to analyze political texts remains a new field of research.¹ The aim of this paper is to examine the reliability of the methodology for the detection of a latent concept such as political ideology. In particular, we show that neural networks for word embeddings can be augmented with metadata available in parliamentary corpora. We illustrate the properties of this method using publicly available corpora from Britain, Canada and the United States, and assess its validity using external indicators.

The proposed methodology addresses at least three shortcomings associated with textual indicators of ideological placement currently available. First, as opposed to measures based on word frequencies, the estimates from our neural networks are trained to predict the use of language in context. Put another way, the method accounts for a party’s usage of words given the surrounding text. Second, our approach can easily accommodate control variables, factors that could otherwise confound the placement of parties or politicians. For example, we account for the government-opposition dynamics that have foiled ideology indicators applied to Westminster systems in the past, and filter out their influence to achieve more accurate estimates of party placement. Third, the methodology allows us to map political actors and language in a common vector space. This means that we can situate actors of interest based on their proximity to political concepts. Using a single model of embeddings, researchers can rank political actors relative to these concepts using a variety of metrics for vector arithmetic. We demonstrate such implementations in our empirical section.

¹ Examples of recent applications in political research include Rheault et al. (2016), Preotiuc-Pietro et al. (2017), and Glavas, Nanni, and Ponzetto (2017).

Our results suggest that word embeddings are a promising tool for expanding the possibilities of political research based on textual data. In particular, we find that scaling estimates of party placement derived from the embeddings for the metadata, which we call party embeddings, are strongly correlated with human-annotated and roll-call vote measures of left-right ideology. We compare the methodology with WordFish, which represents the most popular alternative for text-based scaling of political ideology. The two methods share similarities in that both can be estimated without the need for annotated documents. This comparison illustrates how embedding models are well suited to account for the evolution of language over time. In contrast to other text-based scaling methods, our methodology also allows us to map political actors in a multi-dimensional space. Finally, we demonstrate how the methodology can help to advance substantive research in comparative politics, by replicating the model in two Westminster-style democracies. For such countries in particular, the ability to include control variables proves useful to account for the effect of institutional characteristics.

We start by situating our methodology in the broader literature on textual analysis, in the next section. We then introduce the methodology concretely in Section 3. Section 4 discusses preprocessing steps and software implementation. Next, an empirical section illustrates concrete applications of the methodology: we demonstrate strategies for the retrieval of ideological placement, validate the results against external benchmarks, and compare the properties of the method against WordFish. We also illustrate an application at the legislator level and briefly address the question of uncertainty estimates for prospective users. Finally, we discuss intended uses and raise some warnings regarding interpretation.

2 Relations to Previous Work

Two of the most popular approaches in political science for the extraction of ideology from texts are WordScores (Laver, Benoit, and Garry 2003) and WordFish (Slapin and Proksch 2008).² The first relies on a sample of labeled documents, for instance party manifestos annotated by experts. The relative probabilities of word occurrences in the labeled documents serve to produce scores for each word, which can be viewed as indicators of their ideological load. Next, the scores can be applied to the words found in new documents, to estimate their ideological placement. In fact, this approach can be compared to methods of supervised machine learning (Bishop 2006), where a computer is trained to predict the class of a labeled set of documents based on their observed features (e.g. their words). WordFish, on the other hand, relies on party annotations only. The methodology consists of fitting a regression model where word counts are projected onto party-year parameters, using an expectation maximization algorithm (Slapin and Proksch 2008). This approach avoids the reliance on expert annotations, and amounts to estimating the specificity of word usage by party, at different points in time.

Neither of these approaches, however, takes into account the role of words in context. Put another way, they ignore semantics. Although theoretically both WordScores and WordFish could be expanded to include n-grams (sequences of more than one word), this comes at an increased computational cost. There are so many different combinations of words in the English language that it rapidly becomes inefficient to count them. This problem has been addressed recently in Gentzkow, Shapiro, and Taddy (2016), and presented as a curse of dimensionality. Using a large number of words may be inefficient when tracking down ideological slants from textual data, since a high feature-document ratio overstates the variance across the target corpora (Taddy 2013; Gentzkow, Shapiro, and Taddy 2016). But for a few exceptions (Sim et al. 2013; Iyyer et al. 2014), models of political language face a trade-off between ignoring the role of words in context and dealing with high-dimensional variables.³ Instead of relying on word frequencies, word embedding models aim to capture and represent relations between words using co-occurrences, which sidesteps the high feature-document ratio problems while allowing researchers to move beyond counts of words taken in isolation.

² See also Lauderdale and Herzog (2016) for an extension of the method to legislative speeches, and Kim, Londregan, and Ratkovic (2018) for an expanded model combining both text and votes.

³ Other examples of text-based methods for the detection of ideology based on word and phrase occurrences include Gentzkow and Shapiro (2010), Diermeier et al. (2012) and Jensen et al. (2012).

In the context of studies based on parliamentary debates, an additional concern is the ability to account for other institutional elements such as the difference in tone between the government and the opposition. A dangerous shortcut would consist of attributing any observed differences between party speeches to ideology. In Westminster-style parliaments, the cabinet will use a different vocabulary than opposition parties due to the nature of these legislative functions. For instance, opposition parties will invoke ministerial positions frequently when addressing their counterparts. Hirst et al. (2014) show that machine learning models used to classify texts by ideology have a tendency to be confounded with government-opposition language. As a result, temporal trends can also be obscured by procedural changes in the way government and opposition parties interact in parliament. A similar issue has been found to affect methods based on roll-call votes to infer ideology, where government-opposition dynamics can dominate the first dimension of the estimates (Spirling and McLean 2007; Hix and Noury 2016). Our proposed methodology can accommodate the inclusion of additional “control variables” to filter out the effect of these institutional factors.

We argue that neural network models of language represent the natural way for scholars to move forward when attempting to measure a concept such as political ideology. The patterns of ideas that constitute ideologies are not as straightforward as is often assumed (Freeden 1998; Cochrane 2015). Ideologies are emergent phenomena that are tangible but irreducible. Ideologies are tangible in that they genuinely structure political thinking, disagreement, and behavior; they are irreducible, however, in that no one actor or idea, or group of actors or ideas, constitutes the core from which an ideology emanates. Second-degree connections between words and phrases give rise to meaningful clusters that fade from view when we analyze ideas, actors, or subsets of these things in isolation from their broader context. People know ideology when they see it, but struggle to say precisely what it is, because they cannot resolve the irresolvable (Cochrane 2015). This property of ideology has bedeviled past analysis. Since neural network models are designed to capture complex interactions between the inputs (in our case, context words and indicator variables for political actors), they are well adapted for the study of concepts that should theoretically emerge from such interactions.

The neural network models used in this paper are derived from word embeddings (Mikolov, Chen, Corrado, and Dean 2013; Pennington, Socher, and Manning 2014; Levy, Goldberg, and Dagan 2015). Such models have gained a wide popularity in many disciplines, including, more recently, political science. For example, Preotiuc-Pietro et al. (2017) use word embeddings to generate lists of topic-specific words automatically, by exploiting the ability of embeddings to find semantic relations between words. Glavas, Nanni, and Ponzetto (2017) utilize a word embedding model expanded to multiple languages as their main input to classify sentences from party manifestos by topic, which they evaluate against data from the Comparative Manifestos Project. Rheault et al. (2016) rely on word embeddings to automatically adapt sentiment lexicons to the domain of politics, which avoids problems associated with off-the-shelf sentiment dictionaries that often attribute an emotional valence to politically relevant words such as “health”, “war” or “education”. In this study, we expand on traditional word embedding models to include political variables (for an illustration using political texts, see Nay 2016). We examine how such models can be used to study the ideological leanings of political actors across legislatures.

3 Methodology

Models for word embeddings have been explored thoroughly in the literature, but we need to introduce them summarily to facilitate the exposition of our approach. This section also adopts a notation familiar to social science scholars. Our implementation uses shallow neural networks, that is, statistical models containing one layer of latent variables (or hidden nodes) between the input and output data.⁴ The outcome variable $w_t$ is the word occurring at position $t$ in the corpus. The variable $w_t$ is multinomial with $V$ categories corresponding to the size of the vocabulary. The input variables in the model are the surrounding words appearing in a window $\Delta$ before and after the outcome word, which we denote as $w_\Delta = (w_{t-\Delta}, \dots, w_{t-1}, w_{t+1}, \dots, w_{t+\Delta})$. The window is symmetrical to the left and to the right, which is the specification we use for this study, although non-symmetrical windows are possible, for instance if one wishes to give more consideration to the previous words in a sequence than to the following ones. Simply put, word embedding models consist of predicting $w_t$ from $w_\Delta$.

⁴ For the purpose of our presentation, we follow the steps of the model that Mikolov, Chen, Corrado, and Dean (2013) call continuous bag-of-words (CBOW).
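To make the prediction task concrete, the following Python sketch builds (context window, target word) pairs from a tokenized speech with a symmetric window. It is an illustration only, not the authors' code: the function name `context_pairs` is hypothetical, and the toy tokens are borrowed from the Figure 1 schematic. The window is truncated at the start and end of the speech.

```python
def context_pairs(tokens, delta):
    """Yield (context, target) pairs using a symmetric window of `delta` words.

    The window is truncated at the start and end of the token list.
    """
    for t, target in enumerate(tokens):
        left = tokens[max(0, t - delta):t]
        right = tokens[t + 1:t + 1 + delta]
        yield left + right, target


# Toy speech built from the words shown in the Figure 1 schematic.
speech = ["overcoming", "barriers", "to", "work", "and", "tackling", "inequalities"]
pairs = list(context_pairs(speech, delta=3))
context, target = pairs[3]  # full window around "work"
```

Each pair corresponds to one training example: the model is asked to predict the target from its context.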

The neural network can be subdivided into two components. Let $z_m$ represent a hidden node, with $m = \{1, \dots, M\}$ and where $M$ is the dimension of the hidden layer. Each node can be expressed as a function of the inputs:

$$z_m = f(w_\Delta' \beta_m) \tag{1}$$

In machine learning, $f$ is called an activation function. In the case of word embedding models such as the one we rely upon, that function is simply the average value of $w_\Delta' \beta_m$ across all input words (see Mikolov, Chen, Corrado, and Dean 2013). Since each word in the vocabulary can be treated as an indicator variable, Eq. (1) can be expressed equivalently as

$$z_m = \frac{1}{2\Delta} \sum_{w_v \in w_\Delta} \beta_{v,m} \tag{2}$$

that is, a hidden node is the average of coefficients $\beta_{v,m}$ specific to a word $w_v$ if that word is present in the context of the target word $w_t$. In turn, the vector of hidden nodes $z = (z_1, \dots, z_M)$ is the average of the $M$-dimensional vectors of coefficients $\beta_v$, for all words $v$ occurring in $w_\Delta$:

$$z = \frac{1}{2\Delta} \sum_{w_v \in w_\Delta} \beta_v \tag{3}$$

Upon estimation, these vectors $\beta_v$ are the word embeddings of interest.
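As a minimal numerical sketch of Eq. (3), the hidden layer can be computed by averaging the embeddings of the context words. The dictionary `beta`, its toy values, and the function name are hypothetical; in a fitted model these coefficients would be learned during estimation, and the example assumes a full (untruncated) window.

```python
def hidden_layer(context, beta, delta):
    """Average the context-word embeddings over the 2 * delta window slots."""
    size = len(next(iter(beta.values())))  # M, the hidden-layer dimension
    z = [0.0] * size
    for word in context:
        for j, coefficient in enumerate(beta[word]):
            z[j] += coefficient
    return [total / (2 * delta) for total in z]


# Hypothetical two-dimensional embeddings (M = 2) for a window of delta = 1.
beta = {"reducing": [1.0, 0.0], "taxes": [0.0, 2.0]}
z = hidden_layer(["reducing", "taxes"], beta, delta=1)
```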

The remaining component of the model expresses the probability of the target word $w_t$ as a function of the hidden nodes. Similar to the multinomial logit regression framework, commonly used to model vote choice, a latent variable representing a specific word $i$ can be expressed as a linear function of the hidden nodes: $u^*_{it} = \alpha_i + z'\mu_i$. The probability $P(w_t = i)$ given the surrounding words corresponds to:

$$P(w_t = i \mid w_\Delta) = \frac{e^{\alpha_i + z'\mu_i}}{\sum_{v=1}^{V} e^{\alpha_v + z'\mu_v}} \tag{4}$$

The full model can be written compactly using nested functions and dropping some indices for simplicity:

$$P(w_t \mid w_\Delta) = g(\alpha, \mu, f(w_\Delta' \beta)) \tag{5}$$
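The outer function in Eq. (4) is a softmax over the vocabulary. The short sketch below shows how the probabilities could be computed for a toy vocabulary of three words; the parameter values and the names `alpha` and `mu` (as plain lists) are made up for illustration. Subtracting the largest score before exponentiating is a standard numerical safeguard that leaves the result unchanged. Note that the paper fits the model with negative sampling rather than evaluating the full sum.

```python
import math


def word_probabilities(z, alpha, mu):
    """Softmax over the vocabulary given hidden nodes z, as in Eq. (4)."""
    scores = [a + sum(zj * mj for zj, mj in zip(z, m)) for a, m in zip(alpha, mu)]
    top = max(scores)  # subtracting the maximum avoids overflow in exp()
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical parameters: V = 3 words, M = 2 hidden nodes.
z = [0.5, 1.0]
alpha = [0.0, 0.0, 0.0]
mu = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
probs = word_probabilities(z, alpha, mu)
```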

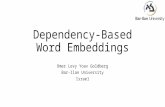

As can be seen with the visual depiction in Figure 1, the embeddings $\beta$ link each input word to the hidden nodes.⁵ The parameters of the model can be fitted by minimizing the cross-entropy using variants of stochastic gradient descent. We rely on negative sampling to fit the predicted probabilities in Eq. (4) (see Mikolov, Sutskever, Chen, Corrado, and Dean 2013). In an influential study, Pennington, Socher, and Manning (2014) have shown that a corresponding model can be represented as a log-bilinear Poisson regression using the word-word co-occurrence matrix of a corpus as data. However, the implementation we use here facilitates the inclusion of metadata by preserving individual words as units of analysis.

The basic model introduced above can be expanded to include additional input variables, which is our main interest in this paper. A common implementation uses indicator variables for documents or segments of text of interest, in addition to the context words (Le and Mikolov 2014).⁶ The approach was originally called paragraph vectors or document vectors. More generally, other types of metadata can be entered in Eq. (1) to account for properties of interest at the document level, which is the approach we adopt here. In our implementation, we focus primarily on indicator variables measuring the party affiliation of a member of parliament (MP) or congressperson uttering a speech. The inner component of the expanded model can be represented as:

$$z_m = f(w_\Delta' \beta_m + x' \zeta_m) \tag{6}$$

where $x$ is a vector of metadata, and the rest of the specification is similar to the one above. In addition to party affiliation, it is straightforward to account for attributes with the potential to affect the use of language and confound party-specific estimates. We mentioned the government status earlier (cabinet versus non-cabinet positions, or party in power versus opposition). For Canada, a country where federal politics is characterized by persistent regional divides, a relevant variable would be the province of the MP. Just like words have their embeddings, each variable entered in $x$ has a corresponding vector $\zeta$ of dimension $M$.

Figure 1: Example of Model with Word and Party Embeddings. [Schematic: the context words $w_\Delta$ ($w_{t-3}$: overcoming, $w_{t-2}$: barriers, $w_{t-1}$: to, $w_{t+1}$: and, $w_{t+2}$: tackling, $w_{t+3}$: inequalities) and the metadata input $x$: Labour 2005 connect to the hidden layer $z$ through $\beta$ and $\zeta$; the hidden layer connects to the output word $w_t$: work through $\mu$.] Schematic example of input and output data in a model with $M = 5$ and a window $\Delta = 3$. The model includes a variable indicating the party affiliation and parliament of the politician making the speech.

⁵ In fact, Mikolov, Chen, Corrado, and Dean (2013) proposed two approaches: one in which the word embeddings are the link coefficients between input words and the hidden nodes (CBOW), and another where the outcome and the inputs are switched (called skip-gram), in effect predicting surrounding context from the word, rather than the reverse.

⁶ The type of model described here is called “distributed memory” in the original article (Le and Mikolov 2014).

Observe that the resulting vectors $\zeta$ have commonalities with the WordFish estimator of party placement. In their WordFish model, Slapin and Proksch (2008) predict word counts with party-year indicator variables. The resulting parameters are interpreted as the ideological placement of parties. The model introduced in Eq. (6) achieves a similar goal. The key difference is that our model is estimated at the word level, while taking into account the context ($w_\Delta$) in which a word occurs. The hidden layer serves an important purpose by capturing interactions between the metadata and these context words. Moreover, the dimension of the hidden layer will determine the size of what we refer to as party embeddings in what follows, that is, the estimated parameters for each party. Rather than a single point estimate, we fit a vector of dimension $M$. A benefit is that these party embeddings can be compared against the rest of the corpus vocabulary in a common vector space, as we illustrate below.
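The inner component in Eq. (6) can be sketched in a few lines. This is a hedged illustration rather than the authors' implementation: it assumes that the averaging function is taken over all active inputs, that is, the context words plus the metadata indicators switched on for the speech. The names `hidden_layer_with_metadata`, `beta`, and `zeta`, and all numerical values, are hypothetical; the tag "Labour 2005" is taken from Figure 1.

```python
def hidden_layer_with_metadata(context, tags, beta, zeta):
    """Average the vectors of all active inputs: context words and metadata."""
    vectors = [beta[word] for word in context] + [zeta[tag] for tag in tags]
    size = len(vectors[0])  # M, the hidden-layer dimension
    return [sum(v[j] for v in vectors) / len(vectors) for j in range(size)]


# Hypothetical values with M = 2; "Labour 2005" is the tag shown in Figure 1.
beta = {"barriers": [1.0, 0.0], "inequalities": [0.0, 1.0]}
zeta = {"Labour 2005": [2.0, 2.0]}
z = hidden_layer_with_metadata(
    ["barriers", "inequalities"], ["Labour 2005"], beta, zeta
)
```

Because the party vector enters the same average as the word vectors, both live in one $M$-dimensional space, which is what makes comparisons between parties and vocabulary possible.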

Specifically, our implementation uses party-parliament pairs as indicator variables, for a number of reasons. First, fitting combinations of parties and time periods allows us to reproduce the nature of the WordFish model as closely as possible: each party within a given parliament or congress has a specific embedding. This approach has relevant benefits, by accounting for the possibility that the language and issues debated by each party may evolve from one parliament to the next. Parties are allowed to “move” over time in the vector space. We rely on parliaments/congresses, rather than years, to facilitate external validity tests against roll-call vote measures and annotations based on party manifestos, which are published at the election preceding the beginning of each parliament. Of course, the possible specifications are virtually endless and may differ in future applications. But we believe that the models we present are consistent with existing practice and provide a useful ground for a detailed assessment.

4 Data and Software Implementation

Models of word embeddings have been shown to perform best when fitted on large corpora that are adapted to the domain of interest (Lai, Liu, and Xu 2016). For the purpose of this study, we rely on three publicly available collections of digitized parliamentary debates overlapping a century of history in the United States, Britain and Canada. Replicating the results in three polities helps to demonstrate that the proposed methodology is general in application. The United States corpus is the version released by Gentzkow, Shapiro, and Taddy (2016). Our version of the British Hansard corpus is hosted on the Political Mashup website.⁷ Finally, the Canadian Hansard corpus is described in Beelen et al. (2017) and released as linked and open data on www.lipad.ca. Each resource is enriched with a similar set of metadata about speakers, such as party affiliations and functions. The first section of the online appendix describes each resource in more detail.

We considered speeches made by the major parties in each corpus. The United States corpus ranges from 1873 to 2016 (43rd to 114th Congress). We present results for the House of Representatives and the Senate separately, and restrict our attention to voting members affiliated with the Democratic and Republican parties. For the United Kingdom, the corpus covers the period from 1935 to 2014. We restrict our focus to the three major party labels: Labour, Liberal-Democrats, and Conservatives. For Canada, we use the entirety of the available corpus, which covers a period ranging between 1901 and 2018, from the 9th to the 42nd Parliament. The corpus represents over 3 million speeches after restricting our attention to five major parties (Conservatives, Liberals, New Democratic Party, Bloc Quebecois, and Reform Party/Canadian Alliance). We removed speeches from the Speaker of the House of Commons in Britain and Canada, whose role is non-partisan.

⁷ See http://search.politicalmashup.nl/.

4.1 Steps for Implementation

We fit the embedding models on each corpus using custom scripts based on Rehurek and Sojka (2010)’s library for Python, which builds upon the implementation of document vectors proposed by Le and Mikolov (2014). This model itself extends the original C implementation of the word embeddings model proposed by Mikolov, Sutskever, Chen, Corrado, and Dean (2013). The library uses asynchronous stochastic gradient descent and relies on the method of negative sampling to fit the softmax function at the output of the neural networks. Our scripts are released openly, and the source code for the aforementioned library is also available publicly.⁸ Fitting the models involves some decisions pertaining to text preprocessing and the choice of hyperparameters. We discuss each of these decision processes in turn.

4.1.1 Text Preprocessing

Preprocessing decisions when working with textual data may have non-trivial consequences (see Denny and Spirling 2018). Word embedding models are often implemented with little text preprocessing. One of the most popular implementations, however, that of Mikolov, Sutskever, Chen, Corrado, and Dean (2013), relies on subsampling, the random removal of frequent words from the context window during estimation. Subsampling essentially achieves a goal similar to stop word removal, by limiting the influence of overly frequent tokens. This strategy was shown to improve the accuracy of word embeddings for tasks involving semantic relations. In our case, we want the models to learn information about terms that are substantively meaningful for politics. Simply put, the co-occurrence of the terms “reducing” and “taxes” is more meaningful to learn political language than that of the terms “the” and “taxes”.

⁸ Our materials are available at Rheault and Cochrane (2019) and on a dedicated GitHub repository (https://github.com/lrheault/partyembed).

For this study, we preprocessed the text by removing digits and words with two letters or fewer, as well as a list of English stop words enriched to remove overly common procedural words such as “speaker” (or “chairwoman/chairman” in the United States), used in most speeches due to decorum. Even though our corpora comprise hundreds of millions of words, they are smaller than the corpora used in original implementations using word embeddings. The removal of these common words ensures that we observe many instances of substantively relevant words used in the same context during the training stage. We tested models with and without stop words removed. Although removing procedural words only has a marginal effect on our methodology, we find that the removal of English stop words does improve the accuracy of our models for tasks such as ideology detection. We also limit the vocabulary to tokens with a minimum count of 50 occurrences. This avoids fitting embeddings for words with few training examples.
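The preprocessing steps described above can be sketched as follows. This is an approximation under stated assumptions, not the released scripts: the stop word list is a tiny illustrative stand-in for the enriched list used in the paper, lowercasing and the regular-expression tokenizer are assumptions, and the function names are hypothetical.

```python
import re
from collections import Counter

# Illustrative stand-in for an English stop word list enriched with
# procedural words; the list actually used in the paper is much longer.
STOP_WORDS = {"the", "and", "for", "that", "speaker"}


def preprocess(speech, stop_words=STOP_WORDS):
    """Drop digits, words of two letters or fewer, and stop words."""
    # Runs of letters only: digits and punctuation are discarded here.
    tokens = re.findall(r"[^\W\d_]+", speech.lower())
    return [t for t in tokens if len(t) > 2 and t not in stop_words]


def limit_vocabulary(speeches, min_count=50):
    """Keep only tokens that occur at least `min_count` times overall."""
    counts = Counter(token for speech in speeches for token in speech)
    return [[t for t in speech if counts[t] >= min_count] for speech in speeches]


tokens = preprocess("Mr. Speaker, reducing taxes in 2015 is the priority.")
trimmed = limit_vocabulary([["taxes", "taxes", "rare"]], min_count=2)
```

The toy call uses `min_count=2` only so that the effect is visible on a three-token example; the paper's threshold is 50.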

Finally, our models include not only words, but also common phrases. We proceed with two passes of a collocation detection algorithm, which is applied to each corpus prior to estimation. Collocations (words used frequently together) are merged as single entities, which means that with two passes of the algorithm, we capture phrases of up to 4 words.⁹ Since phrases longer than four words are very sparse, we stop after two runs of collocation detection on each corpus. Although not entirely necessary for the methodology, we find that the inclusion of phrases facilitates interpretation for political research, where multi-word entities are frequent and common expressions may have specific meanings (e.g. “civil rights”). The appendix includes a table with the most frequent phrases for each corpus.

⁹ The algorithm used to detect phrases is based on the original implementation of word embeddings proposed in Mikolov, Sutskever, Chen, Corrado, and Dean (2013). Each pass combines pairs of words frequently used together as a single expression, for instance “united kingdom”. By applying a second pass, expressions of one word or two words can be merged, resulting in phrases of up to 4 words.
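To illustrate how two passes yield phrases of up to four words, here is a simplified sketch in the spirit of the phrase-scoring rule of Mikolov, Sutskever, Chen, Corrado, and Dean (2013), where a bigram is scored as (count(ab) − δ) / (count(a) × count(b)). The function name, the values of `delta` and `threshold`, and the underscore used to join words are all assumptions chosen for the toy data, not the settings used in the paper.

```python
from collections import Counter


def merge_collocations(sentences, delta=1, threshold=0.1):
    """Merge adjacent word pairs whose co-occurrence score exceeds a threshold."""
    unigrams = Counter(t for s in sentences for t in s)
    bigrams = Counter(pair for s in sentences for pair in zip(s, s[1:]))
    keep = {
        pair for pair, n in bigrams.items()
        if (n - delta) / (unigrams[pair[0]] * unigrams[pair[1]]) > threshold
    }
    merged = []
    for s in sentences:
        out, i = [], 0
        while i < len(s):
            if i + 1 < len(s) and (s[i], s[i + 1]) in keep:
                out.append(s[i] + "_" + s[i + 1])  # treat the pair as one token
                i += 2
            else:
                out.append(s[i])
                i += 1
        merged.append(out)
    return merged


sentences = [
    ["new", "democratic", "party", "members"],
    ["new", "democratic", "party", "policy"],
    ["new", "democratic", "party", "leader"],
]
first_pass = merge_collocations(sentences)
second_pass = merge_collocations(first_pass)
```

In this toy corpus the first pass produces "new_democratic" and the second pass joins it with "party", which shows how a second run can combine an already merged pair with a neighbouring token.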

4.1.2 Fitting the Model

For the most part, we implemented our models using hyperparameters set at default values in the original algorithms proposed by Mikolov, Sutskever, Chen, Corrado, and Dean (2013) and Le and Mikolov (2014). We fit each model with a learning rate of 0.025 and 5 epochs, that is, five passes over all speeches in each corpus. Previous work using word embeddings often relies on hidden layers of size 100, 200 or 300. The main text reports models with hidden layers of 200 nodes, which we find to be reliable for applied research. The appendix provides additional information on parameterization and its influence on the output. In essence, we find that modifications to these default hyperparameters do not provide substantial improvements to the results presented in this paper. Hence, choosing the values mentioned above appears to be a reasonable starting point, and avoids overfitting the parameters to the characteristics of a specific corpus in ways that may not generalize over time.

The only departure from default hyperparameters is the choice of a window size. We rely on a window $\Delta$ of ±20 words.¹⁰ In contrast, implementations in the field of computer science often have window sizes of 5 or 10 words (see, e.g., Levy, Goldberg, and Dagan 2015). This choice is based on our own examination of parliamentary corpora. We find that the discursive style of members of parliament is more verbose than the language typically found on the web. The chosen size roughly corresponds to the average length of a sentence in the British corpus (19.56 tokens per sentence on average) and the Canadian one (20.62 tokens per sentence). Moreover, the topics discussed in individual speeches tend to expand over several sentences. As a result, a window of 20 context words takes into account information from the previous and following sentences. We find this choice to be appropriate for our methodology. Our appendix also reports sensitivity tests regarding the window size.

¹⁰ Note that during estimation, we weigh words according to their position in the context window, with the $t$th word weighted by a factor of $1/t$. At the beginning or end of a speech, the context window is truncated.
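For convenience, the settings reported in this section can be gathered in a single configuration. The values below come from the text; the key names are an assumption, chosen to mirror the keyword arguments of the `Doc2Vec` class in recent releases of gensim (the Python library of Rehurek and Sojka). Settings not stated in the text, such as the number of negative samples, are deliberately left out, so this is a starting point rather than a full replication recipe.

```python
# Values reported in the text; key names assumed to match gensim's Doc2Vec.
HYPERPARAMS = {
    "dm": 1,             # "distributed memory" variant (Le and Mikolov 2014)
    "vector_size": 200,  # hidden layer of 200 nodes
    "window": 20,        # context of +/- 20 words
    "min_count": 50,     # minimum token frequency
    "alpha": 0.025,      # learning rate
    "epochs": 5,         # passes over all speeches
}

# Possible usage, not executed here because it requires gensim and a corpus
# of tagged speeches (hypothetical variable `tagged_speeches`):
#   from gensim.models.doc2vec import Doc2Vec
#   model = Doc2Vec(tagged_speeches, **HYPERPARAMS)
```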

5 Empirical Illustrations

Upon estimation of the models, the embeddings can be used to compute several quantities of interest. In this section, we emphasize an approach to ideological scaling based on low-dimensional projections from the party embeddings.¹¹ We introduce tools facilitating interpretation and discuss validity assessments against three external sources of data on party ideology. Next, we compare the method against WordFish to emphasize a desirable property of word embedding models: the ability to account for changes in word usage over time. Finally, we illustrate how the methodology can be applied to individual legislators and discuss the question of uncertainty measures.

5.1 Party Embeddings

We start by assessing the models fitted with party-specific indicator variables. The objective is to project the $M$-dimensional party embeddings into a substantively meaningful vector space. These party embeddings can be visualized in two dimensions using standard dimensionality reduction techniques such as principal component analysis (PCA), which we rely upon in this section.

In plain terms, PCA finds the one-dimensional component that maximizes the variance across the vectors of party embeddings (see e.g. Hastie, Tibshirani, and Friedman 2009, Ch. 14.5). The next component is calculated the same way, by imposing a constraint of zero covariance with the first component. Additional components could be computed but our analysis focuses on two-dimensional projections, to simplify visualizations. If the speeches made by members of different parties are primarily characterized by ideological conflicts, as is normally assumed in unsupervised techniques for ideology scaling, we can reasonably expect the first component to describe the ideological placement of parties. The second component will capture the next most important source of semantic variation across parties in a legislature. To facilitate the interpretation of these components, we can use the model’s word embeddings and examine the concepts most strongly associated with each dimension.

11 In the appendix, we demonstrate how users can create projections on a customized space for the study of political ideology, and compute other quantities of interest from the raw embeddings such as indicators of party polarization.
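The projection step described in this section can be sketched in a few lines. The example below is a minimal illustration, not the implementation used for the paper: it assumes NumPy, computes PCA through a singular value decomposition of the centered data, and runs on randomly generated toy vectors. The function names `pca_project` and `orient`, the party labels, and the data are all hypothetical.

```python
import numpy as np

def pca_project(embeddings, n_components=2):
    """Project row vectors onto their first principal components."""
    X = np.asarray(embeddings, dtype=float)
    mean = X.mean(axis=0)
    Xc = X - mean  # center each dimension
    # Rows of Vt are the principal axes, ordered by decreasing variance.
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    components = Vt[:n_components]
    return Xc @ components.T, components, mean

def orient(scores, labels, right_label, axis=0):
    """Multiply one axis by -1 if needed so `right_label` has a positive mean."""
    scores = scores.copy()
    mask = np.array([label == right_label for label in labels])
    if scores[mask, axis].mean() < 0:
        scores[:, axis] *= -1
    return scores

# Toy data (invented): six party-period embeddings with M = 5 dimensions.
rng = np.random.default_rng(0)
labels = ["Dem", "Dem", "Dem", "Rep", "Rep", "Rep"]
toy = rng.normal(size=(6, 5))
toy[3:] += 2.0  # shift one party so that a dominant axis separates the two
scores, components, mean = pca_project(toy)
scores = orient(scores, labels, right_label="Rep")
```

The sign of a principal component is arbitrary, which is why the orientation step is kept separate from the decomposition. Returning `components` and `mean` makes it possible to project other vectors, such as word embeddings, onto the same axes.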

Starting with the US corpus, Figure 2a plots the party embeddings in a two-dimensional space for the House of Representatives. We label some data points using an abbreviation of the party name and the beginning year of a Congress; for instance, the embedding ζDem 2011 means the Democratic party in the Congress starting in 2011 (the 112th Congress). The only adjustment that may be relevant to perform is orienting the scale in a manner intuitive for interpretation, for instance, by multiplying the values of a component by −1 such that conservative parties appear on the right. Each model includes party-congress indicator variables as well as separate dummy variables for Congresses, which account for temporal change in the discourse. To facilitate visualization of the results, panels b and c from Figure 2 plot the party embeddings as time-series, respectively for the first and second component.

Our methodology captures ideological shifts that occurred during the 20th Century. Whereas both major parties were originally close to the center of the first dimension, which we interpret as the left-right or liberal-conservative divide, they begin to drift apart around the New Deal era in the 1930s, a period usually associated with the fifth party system. The trend culminates with a period of marked polarization from the late 1990s to the most recent Congresses. The most spectacular shift is probably the one occurring on the second dimension, which we interpret as a South-North divide (we oriented the South to the bottom, and North to the top). The change reflects a well-documented realignment between Northern and Southern states that occurred since the New Deal and the civil rights eras (Shafer and Johnston 2009; Sundquist 2011). A similar trajectory is manifest using both the House and the Senate corpora (we report equivalent figures for the Senate in the online appendix). Whereas Republicans initially became associated with issues of Northern states, the opposite is true today: the two parties eventually switched sides completely. The recent era appears particularly polarized on both axes, which is consistent with a body of literature documenting party polarization. On the other hand, we do not find evidence of polarization on the principal component in the late 19th Century, contrary to indicators based on vote data (Poole and Rosenthal 2007) but consistent with Gentzkow, Shapiro, and Taddy (2016).

Figure 2: Party Placement in the US House (1873–2016)

[Figure omitted. Panels: (a) Two-Dimensional Projection (x-axis: Component 1; y-axis: Component 2), with points labelled by party and starting year of the Congress, from Dem 1873 and Rep 1873 to Dem 2011 and Rep 2011; (b) First Dimension (x-axis: Year; y-axis: Ideological Placement, First Principal Component); (c) Second Dimension (x-axis: Year; y-axis: South-North Axis, Second Principal Component). Legend: Democrats, Republicans.]

The figure shows a 2-dimensional projection of the two principal components of party embeddings for the US House of Representatives (a), and time-series plots for each of the two components separately in (b) and (c).

5.1.1 Interpreting Axes

The models have desirable properties for interpreting the principal components in substantive terms. Since both words and party indicators are used as inputs in the same neural networks, we can project the embeddings associated with each word from the corpus vocabulary onto the principal components just estimated. Next, we can rank the words based on their distance from specific coordinates. For instance, the point (10, 0) in Figure 2 is located on the right end of the first component. The words and phrases closest to that location can help researchers to interpret the meaning of that axis.12

To illustrate, Table 1 reports the 20 expressions with the shortest Euclidean distances to the four cardinal points of the two-dimensional space for the US House of Representatives.13 We use the minimum and maximum values of the party projections on each axis to determine the cardinal points. Expressions located closest to the left end of the first component comprise “civil rights”, “racism”, “decent housing” and “poorest”, indicating that these terms are semantically associated with this end of the ideological spectrum. These words refer to topics one would expect in the language of liberal (or left-wing) parties in the United States. Conversely, terms like “bureaucracy”, “free enterprise” and “red tape” are associated with the right. As for the second dimension, the keywords refer to Southern and Northern locations, or industries associated with each region, which supports our interpretation of that axis as a South-North divide.
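The ranking procedure behind Table 1 can be sketched as follows: project each word embedding onto the principal axes, then sort words by Euclidean distance to a chosen cardinal point. This is a minimal illustration using only the Python standard library. The helper names `project` and `closest_words`, the three-dimensional vectors, and the identity-like PCA basis are invented for the example and do not come from the fitted models.

```python
import math

def project(vector, mean, components):
    """Coordinates of `vector` on each principal axis, after centering."""
    centered = [v - m for v, m in zip(vector, mean)]
    return tuple(sum(c * x for c, x in zip(axis, centered)) for axis in components)

def closest_words(word_vectors, mean, components, point, k=3):
    """Return the k words whose projections lie nearest to `point`."""
    coords = {w: project(vec, mean, components) for w, vec in word_vectors.items()}
    return sorted(coords, key=lambda w: math.dist(coords[w], point))[:k]

# Invented embeddings and a made-up PCA basis (mean and two axes).
mean = [0.0, 0.0, 0.0]
components = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
word_vectors = {
    "bureaucracy": [9.0, 0.5, 1.0],
    "civil rights": [-8.0, 0.2, 0.3],
    "detroit": [0.5, 5.0, -1.0],
    "free enterprise": [7.5, -1.0, 2.0],
}
right_edge = (10.0, 0.0)  # stands in for the maximum party score on component 1
print(closest_words(word_vectors, mean, components, right_edge, k=2))
# prints ['bureaucracy', 'free enterprise']
```

In practice the mean and axes would be the ones estimated from the party embeddings, so that words and parties share the same low-dimensional space.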

We should emphasize that these lists may contain unexpected locutions, for instance the “Missouri River” among the terms closest to the right edge of the first component. As argued earlier, political ideology cannot be reduced easily to any single idea or core. Attempting to summarize concepts such as liberal or conservative ideologies with individual words entails losing the context-dependent nature of semantics, which our model is designed to capture. For instance, some words contained in the lists of Table 1 may reflect idiosyncratic speaking styles of some Members of Congress on each side of the spectrum. Therefore, the interpretation ultimately involves the domain knowledge of the researcher to detect an overarching pattern. In this case, we believe the word associations provide relatively straightforward clues that facilitate a substantive interpretation of each axis. Since there are several different ways to explore relations between words and political actors in this model, an objective for future research would be to develop robust techniques for interpretation.

12 We also present an alternative tool for interpretation in the appendix.

13 To help us focus on common expressions, we restrict our attention to the 50,000 most frequent words or phrases up to three words in the vocabulary.

Table 1: Interpreting PCA Axes

Component, Orientation: Words and Phrases Closest to Edges of the PCA Space

First, Positive (Right): bureaucracy, bureaucracies, bureaucratic, nebraska, regimentation, bureaucrats, missouri river, obamacare, centralized, redtape, kansas, hatch act, charter schools, captive nations, free enterprise, hoover commission, lenin, communist, feed grains, wheat

First, Negative (Left): congressional black caucus, wealthiest, decent housing, south african, slums, racism, poor elderly, africanamericans, latinos, africanamerican, segregated, civil rights, gun violence, apartheid, african, poorest, joint resolution res, tax breaks, brooklyn, richest

Second, Positive (North): detroit, buffalo, seattle, minneapolis, vermont, duluth, rochester, lake erie, debt gratitude, lake michigan, erie, cleveland, cleanup, retraining, toledo, maine, trade adjustment assistance, oregon, chicago, recycling

Second, Negative (South): brooks, usury, sam houston, parishes, rome, cotton acreage, poage, tobacco growers, sam, burley tobacco, tyrannical, south carolina, pink bollworm, southwest, athens, fertilizer, military installations, north carolina, andrew jackson, monopolies

5.1.2 Replication in Parliamentary Democracies

Next, we illustrate that the methodology is generalizable across polities by replicating the same steps using the British and Canadian parliamentary corpora. To begin, Figure 3a reports a visualization of party embeddings for Britain. In addition to party and parliament indicators, the model includes a variable measuring whether an MP is member of the cabinet or not. As can be seen, political parties are once again appropriately clustered together in the vector space: speeches made by members of the same group tend to resemble each other across parliaments. Moreover, the parties are correctly ordered relative to intuitive expectations about political ideology. Focusing on the first principal component (x-axis), the Labour party appears on one end of the spectrum, the Liberal-Democrats occupy the center, whereas the embeddings for Conservatives are clustered on the other side. In fact, without any intervention needed on our end, the model correctly captures well-accepted claims about ideological shifts within the British party system over time (see e.g. Clarke et al. 2004). For instance, the party embeddings for Conservatives during the Thatcher era (Cons 1979, 1983, and 1987) are ranked farther apart on the right end of the axis, whereas the Labour’s shift toward the center at the time of the “New Labour” era (Labour 1997, 2001, and 2005), under the leadership of Tony Blair, is also apparent. The second component captures dynamics opposing the party in power and opposition, with parties forming a government appearing at the top of the y-axis.

Finally, Figure 3b reports the results for Canada.14 Once more, the first principal component can be readily interpreted in terms of left-right ideological placement. The Conservatives appear closer to the right, whereas the left-wing New Democratic Party (NDP, which is merged with its predecessor, the Co-operative Commonwealth Federation) is correctly located on the other end of the spectrum. The Reform/Canadian Alliance split from the Conservatives, generally viewed as the most conservative political party in the Canadian system (see Cochrane 2010), appears at the extreme right of the first dimension, consistent with substantive expectations. In the case of Canada, the second principal component can be easily interpreted as a division between parties reflecting their views of the federation. The secessionist party, the Bloc Quebecois, appears clustered on one end of the y-axis, whereas federalist parties are grouped on the other side. Such a division also resurfaces in models based on vote data (see Godbout and Høyland 2013; Johnston 2017).

5.1.3 Validity Assessments

To assess the external validity of estimates derived from our models, we evaluate the predicted ideological placement against gold standards: ideology scores based on roll-call votes (for the US), surveys of experts, and measures based on the Comparative Manifestos Project (CMP) data. For each gold standard, we report the Pearson correlation coefficient with the first principal component of our party embeddings. We also report the pairwise accuracy, that is, the percentage of pairs of party placements that are consistently ordered relative to the gold standard. Pairwise accuracy accounts for all possible comparisons, within parties and across parties. Table 2 presents the results.

14 For that country, our model includes variables measuring whether the MP making the speech belongs to the cabinet or not, whether they belong to the party in power or the opposition, and their province of origin.

Figure 3: Party Placement in Britain (1935–2014) and Canada (1901–2017)

[Figure omitted. Panels: (a) Britain and (b) Canada, each plotting Component 1 (x-axis) against Component 2 (y-axis). Labelled points in (a): Labour 1987, Labour 2001, LibDems 1997, Cons 1987, Cons 2010; legend: Labour, Liberal-Democrat, Conservative. Labelled points in (b): NDP 1984, Bloc 1993, Liberal 2015, Cons 2015, RefAll 1993; legend: New Democratic, Liberal, Conservative, Bloc Quebecois, Reform/Canadian Alliance.]

The figure shows the two principal components of party embeddings for the British and Canadian parliaments.

Table 2: Accuracy of Party Placement against Gold Standards

Gold Standard                Metric             US House  US Senate  Canada   Britain
Voteview (1921-2016)         Correlation        0.918     0.919
                             Pairwise Accuracy  85.66%    83.93%
Experts Surveys (1984-2002)  Correlation        0.982     0.981      0.869    0.910
                             Pairwise Accuracy  86.67%    100.00%    87.88%   83.33%
rile (1945-2015)             Correlation        0.624     0.634      0.768    0.678
                             Pairwise Accuracy  72.61%    72.25%     77.03%   74.98%
vanilla (1945-2015)          Correlation        0.736     0.736      0.731    0.755
                             Pairwise Accuracy  75.62%    75.09%     76.30%   78.06%
legacy (1945-2015)           Correlation        0.898     0.907      0.855    0.876
                             Pairwise Accuracy  85.55%    85.37%     79.78%   82.67%

The first gold standard used for the US is the average DW-NOMINATE score (first dimension) from the Voteview project for House and Senate (Poole and Rosenthal 2007). Experts surveys are standardized measures of left-right party placement from three sources (Castles and Mair 1984; Huber and Inglehart 1995; Benoit and Laver 2006). The other three references are the rile measure of party placement based on the 2017 version of the Comparative Manifestos Project (CMP) dataset (Budge and Laver 1992; Budge et al. 2001), the Vanilla measure of left-right placement (Gabel and Huber 2000), and the legacy measure from Cochrane (2015). The pairwise accuracy metric counts the percentage of correct ideological orderings for all possible pairs of parties and parliaments.
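The two validation metrics can be written down compactly. The sketch below is an illustration under stated assumptions rather than the code used to produce Table 2: the function names and the four data points are invented, and pairs that are tied on either measure are counted as inconsistent, a choice the text does not specify.

```python
from itertools import combinations
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def pairwise_accuracy(estimates, gold):
    """Share of all pairs ordered the same way by both measures.

    Assumption: a pair tied on either measure counts as inconsistent.
    """
    pairs = list(combinations(range(len(gold)), 2))
    consistent = sum(
        (estimates[i] - estimates[j]) * (gold[i] - gold[j]) > 0 for i, j in pairs
    )
    return consistent / len(pairs)

# Invented placements for four party-period observations.
estimates = [-6.0, -4.5, 3.0, 5.5]      # e.g. first principal component
gold = [-0.40, -0.30, 0.35, 0.30]       # e.g. an external ideology score

r = pearson(estimates, gold)
acc = pairwise_accuracy(estimates, gold)  # 5 of the 6 pairs agree
```

Here the last two observations are ordered differently by the two measures, so one pair out of six is inconsistent even though the correlation remains high. This illustrates why pairwise accuracy can be the more demanding of the two metrics.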

Starting with the US corpus, we consider DW-NOMINATE estimates based on roll-call votes from the 67th to the 114th Congress and retrieved from the latest version of the Voteview project (Poole and Rosenthal 2007).15 We use the first dimension of the aggregate Voteview indicator, which measures the average placement of congress members by party over time. Our ideological placement is strongly correlated with the Voteview scores (r ≈ 0.92) and the pairwise accuracy, a more conservative metric, is around 85% for both the House and the Senate. These tests provide preliminary support to the conclusion that our model produces reliable estimates of ideological placement.

To further validate our methodology, we rely upon a second set of benchmarks based on human evaluations, namely experts surveys. Such surveys have been conducted sporadically in the discipline, asking country experts to locate national parties on a left-right scale. The average expert position is commonly used to examine party ideology at fixed points in time (see Benoit and Laver 2006). We retrieved experts surveys from three different sources covering the three countries under study in this paper (Castles and Mair 1984; Huber and Inglehart 1995; Benoit and Laver 2006). Two points on measurement should be emphasized. Firstly, since experts surveys come from different sources, they may vary in construction and measurement scales. As a result, we standardize the scores before combining data points from different sources. We compute z-scores for each of the three surveys by subtracting the mean for all parties across the three countries, then dividing by the standard deviation. Secondly, we should point out that experts surveys provide us with only a few data points, in contrast to the other benchmarks reported in Table 2. In the United States, we retrieved expert party placements from three sources, which means six data points (i.e. Democrats and Republicans at three different points in time).

15 Note that for Westminster systems, vote-based estimates are less reliable indicators of ideology, and we did not consider them as a benchmark.
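The standardization of expert surveys described above amounts to computing z-scores within each survey before pooling. A minimal sketch follows, with invented scores on two hypothetical scales. The function name `standardize` is illustrative, and the use of the population standard deviation (`pstdev`) is an assumption, since the text does not say which variant was used.

```python
from statistics import mean, pstdev

def standardize(scores):
    """Convert one survey's raw party placements to z-scores."""
    values = list(scores.values())
    mu, sd = mean(values), pstdev(values)
    return {party: (value - mu) / sd for party, value in scores.items()}

# Invented placements of the same three parties on two different scales.
survey_a = {"party_1": 2.0, "party_2": 5.0, "party_3": 8.0}     # 0-10 scale
survey_b = {"party_1": 6.0, "party_2": 11.0, "party_3": 16.0}   # 1-20 scale

z_a = standardize(survey_a)
z_b = standardize(survey_b)
```

After standardization the two surveys place each party at the same z-score, even though the raw scales differ, which is what makes the data points comparable once combined.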

The third and fourth rows of Table 2 report the Pearson correlation coefficient and pairwise accuracy of our ideological placement (again, the first principal component of the party embeddings) evaluated against experts surveys. For both the US House and Senate, the two goodness-of-fit scores suggest that our methodology produces ideology scores consistent with the views of experts. The correlation coefficients are very high (r ≈ 0.98), and the pairwise accuracy reaches 100% for the Senate. Despite the low number of comparison points, validating with a different source helps to give further credence to the interpretation of our unsupervised method of party placement. Experts surveys represent a more challenging test for the other two countries, which contain multiple parties, hence additional data points. The fit with experts surveys in Canada and Britain remains very strong, however, with correlation coefficients near or above 0.9.

Finally, we validate our ideological placement variables using data from the Comparative Manifestos Project (CMP) (Budge and Laver 1992; Budge et al. 2001). The CMP is based on party manifestos and relies on human annotations to score the orientation of political parties on a number of policy items, following a normalized scheme. We test whether three ideology indicators derived from the project’s data are consistent with our estimated placement of the same party in the parliament that immediately follows. The rile measure is the original left/right measure in the CMP. It is an additive index composed of 26 policy-related items, as described in Budge et al. (2001). The rile metric, however, excludes important dimensions of ideology of the CMP, such as equality and the environment. The Vanilla measure proposed by Gabel and Huber (2000) uses all 56 items in the CMP and weights them according to their loadings on the first unrotated dimension of a factor analysis. Finally, the Legacy measure is a weighted index based on a network analysis of party positions and a model that assigns exponentially decaying weights to party positions in prior elections (Cochrane 2015).

Overall, the CMP-based indicators are consistent with our ideology scores, in particular when considering the Vanilla and Legacy measures. For the US, the correlation is positive but very modest when considering the more basic “right minus left” measure (rile). The correlation reaches r ≈ 0.9, however, when considering the Legacy score.16 Looking at the British case, party placements appear positively correlated with the three external indicators, ranging from r ≈ 0.68 when considering the rile indicator, up to r ≈ 0.76 and r ≈ 0.88 using more robust ideology metrics based on the same data. As for pairwise accuracy, between 75 and 83% of comparisons against CMP-based measures are ordered consistently. Using the Canadian corpus, the fit with the CMP data is also strong across the three gold standards. Overall, these results provide strong evidence that our party embeddings are related to left-right ideology as coded by humans using party manifestos.

5.1.4 Comparison with WordFish

We now emphasize the properties of these embeddings using a comparison with estimates from the WordFish model.17 The proposed methodology differs from WordFish in at least two important ways. Our model fits embeddings by predicting words from political variables and a window of context words. Each word in the corpus represents a distinct unit of analysis. In contrast, WordFish relies on word frequencies tabulated within groupings of interest. The data required to fit the WordFish estimator can be contained in a term-document matrix (for full expositions of this model, see Slapin and Proksch 2008; Proksch and Slapin 2010; Lowe and Benoit 2013). This matrix representation loses the original sequence in which the terms were used in the document, and the information loss is where the two models fundamentally differ. A second difference is that WordFish produces a single estimate of party placement, whereas the embeddings contain a flexible number of dimensions, which can be projected onto lower dimensional spaces, as illustrated above.

16 Note that for the United States, the scores are only available for Presidential election years, whereas the corpus is divided into two-year Congresses. However, the CMP data are available from the election year 1920 in the US.

17 Grimmer and Stewart (2013) have previously illustrated a limitation to WordFish when applied to individual legislators in Congress, a case for which the model does not perform well at capturing the ideological distance expected between members of opposite political parties.
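The information loss in a term-document representation can be made concrete with a toy example. The sketch below uses only the Python standard library and two invented six-word "documents". The helper `context_pairs` is hypothetical and simply pairs each token with its immediate neighbours, truncating the window at the edges of the text.

```python
from collections import Counter

def context_pairs(tokens, size=1):
    """Pair each token with its neighbours; windows are truncated at the edges."""
    pairs = []
    for i, word in enumerate(tokens):
        left = tokens[max(0, i - size):i]
        right = tokens[i + 1:i + 1 + size]
        pairs.append((word, tuple(left + right)))
    return pairs

doc_a = "the government cut taxes not spending".split()
doc_b = "the government cut spending not taxes".split()

same_counts = Counter(doc_a) == Counter(doc_b)                # word frequencies only
same_contexts = context_pairs(doc_a) == context_pairs(doc_b)  # words in context
print(same_counts, same_contexts)
# prints True False
```

Both texts yield the same row in a term-document matrix although they say opposite things, whereas the word-and-context pairs that an embedding model is trained on differ between them.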

To illustrate these differences, we compute WordFish estimates on the entire US House corpus, restricting the vocabulary to the 50,000 most frequent terms.18 We combined speeches by members of the same party into single “documents” for each Congress, following the approach used in Proksch and Slapin (2010). Figure 4a plots the estimated party positions for the full time period, which can be contrasted against our placement in Figure 2b. When fitting the WordFish model on the full corpus, the estimator fails to capture the ideological distinctiveness of the parties. In fact, the dominant dimension appears to be the distinction between the language used in the late 19th Century and that of more recent Congresses. Put another way, the estimates for both parties appear confounded by the change in language. Both parties are located next to each other over time, and the estimates bear no resemblance to benchmarks from either the Voteview project or the results based on our party embeddings.

The sharp contrast reflects a central property of the proposed methodology, and of word embeddings more generally, namely the ability to place equivalent expressions at proximity in a vector space.19 Because the names and issues debated in early Congresses tend to be markedly different from those used in recent Congresses, the Democrats (or Republicans) of the past may seem disconnected from the Democrats (Republicans) of the present when considering word usage alone. In contrast, word usage across parties within a specific time period will appear very similar, since both parties are debating the same topics. By accounting for the fact that different words can express the same meaning, a model based on word embeddings can account for continuity in a party’s position even when word usage changes over time. For example, an expression related to an insurrection in the late 19th and early 20th Century, the term “Moros” (referring to the Moro Rebellion in the Philippines), has a word embedding similar to the term “ISIL” (the Islamic State of Iraq and the Levant).20 Even though ISIL was never mentioned in debates from the 19th Century, the embedding model is trained to recognize that both terms share a related meaning.

18 We use the implementation available in the quanteda package in R, which is based on Lowe and Benoit (2013).

19 We are indebted to an anonymous reviewer for their helpful suggestions regarding this discussion.

Figure 4: Comparison with WordFish Estimates

[Figure omitted. Panels: (a) US House, Full Corpus and (b) US House, Five Congresses, each plotting the WordFish Estimate (y-axis) by Year (x-axis). Legend: Democrats, Republicans.]

The figure reports WordFish estimates for Democrat and Republican parties in the US House of Representatives, fitted on the full corpus (a) or using five Congresses from 2007 to 2015 (b).
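Similarity between two word embeddings, as in the “Moros” and “ISIL” example, is commonly measured with the cosine of the angle between their vectors. The following sketch shows the computation on invented three-dimensional vectors. The variable names are placeholders and the values are not taken from the fitted model.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Invented vectors: two terms used in similar contexts, and one unrelated term.
term_a = [0.9, 0.1, 0.4]
term_b = [0.7, 0.3, 0.5]
unrelated = [-0.2, 0.9, -0.1]

sim_related = cosine_similarity(term_a, term_b)
sim_unrelated = cosine_similarity(term_a, unrelated)
```

Because the measure depends only on direction and not on vector length, two terms can be close even when one of them is much rarer in the corpus than the other.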

When fitting the WordFish estimator on a shorter time span (the five most recent Congresses in the corpus), the model then places the two parties farther apart, and estimates become more consistent with the ideological divide expected between Democrats and Republicans (see Figure 4b). We calculate the accuracy of both implementations of the WordFish estimator against the Voteview benchmarks in Table 3. Whereas WordFish does not perform well for studying long periods of time, a shorter model produces estimates that are closer to those achieved with the first principal component of the proposed methodology. Both methods have benefits and limitations, and we should point out that an advantage of WordFish is that the model can be estimated on smaller corpora, as opposed to embedding models, which require more training data.

20 The cosine similarity between the two terms’ embeddings is 0.48.


Table 3: Accuracy of Party Placement in the US House: WordFish and Party Embeddings

                   1921–2016               2007–2016
Metric             WordFish   Embeddings   WordFish   Embeddings
Correlation        –0.027     0.918        0.829      0.999
Pairwise Accuracy  44.36%     85.66%       75.56%     88.89%

Accuracy scores for WordFish estimates and party embeddings against the DW-NOMINATE scores (first dimension) from the Voteview project (Poole and Rosenthal 2007). The pairwise accuracy metric counts the percentage of correct ideological orderings for all possible pairs of parties and Congresses.

5.2 Legislator Embeddings

The methodology introduced in this paper can be adapted to the study of individual legislators, rather than parties. The specification is similar to that of the model used for political parties, with the difference that legislator-Congress indicator variables replace the party-Congress ones. We illustrate such an implementation by examining a model comprising embeddings fitted at the level of individual Senators in the US corpus. Since the number of speeches per legislator is lower than for a party as a whole, we compensate by increasing the number of epochs during training. The model discussed below is trained with 20 epochs.

Figure 5 reports the two principal components of the embeddings associated with individual Senators in the 114th Congress. The figure illustrates the expected division of Senators along party lines, although the clustering is nowhere near as tight as that typically obtained using scaling methods based on roll-call vote data. For instance, some Republican Senators appear mixed together with Democratic counterparts in the center of the spectrum. This can be explained in part by the fact that language reflects a much wider variance in positions than binary vote data, an issue that could be explored in future research. The centrist Republicans in Figure 5 include Robert Jones Portman, Lamar Alexander and Susan Collins, who were generally ranked as moderates in external sources of ideology ratings discussed below.

To assess whether the low-dimensional projection captures political ideology, we proceed once more with a validation against external measures. By focusing on a recent Congress, we are able to use a variety of measures of ideological placement for US Senators: the Voteview scores for individual Senators, ratings from the American Conservative Union (ACU) and GovTrack.us ideology scores.21 Table 4 reports Pearson correlation coefficients and pairwise accuracy scores using the first principal component of our model and each gold standard. Since our corpus is restricted to members affiliated with major party labels, this analysis excludes two independent Senators. For each gold standard considered, we obtain correlation coefficients of at least 0.85. The fit with the GovTrack ideology scores is particularly strong. Overall, these accuracy results support the conclusion that the first principal component extracted from our “Senator embeddings” is related to political ideology.

Figure 5: Ideological Placement of Senators (114th Congress)

[Figure omitted. Scatter plot of Component 1 (x-axis) against Component 2 (y-axis). Legend: Democrats, Republicans.]

21 The ACU ratings for 2016 were retrieved from http://acuratings.conservative.org/acu-federal-legislative-ratings/ on August 29, 2018. GovTrack report cards are also for 2016 and were retrieved the same day at https://www.govtrack.us/congress/members/report-cards/2016/senate/ideology.

Table 4: Accuracy of Senator Ideological Placement

Gold Standard  Pearson Correlation  Pairwise Accuracy
DW-NOMINATE    0.876                80.81%
Nokken-Poole   0.885                81.09%
ACU 2016       0.852                75.38%
ACU 2015       0.862                71.28%
ACU Life       0.884                80.43%
GovTrack       0.923                86.01%

The DW-NOMINATE score is the main indicator from the Voteview data, and the Nokken-Poole is an alternative implementation based on Nokken and Poole (2004). The ACU ratings are for the year indicated, or alternatively the life-long rating attributed to each Senator. The methodology used for the GovTrack ideology scores is described in the source website.

5.3 Uncertainty Estimates

A natural question regarding models based on word embeddings is whether they can be fitted with measures of uncertainty, such as standard errors and confidence intervals commonly reported in inference-based research. For instance, it would be convenient to express uncertainty around a prediction of a political party’s placement. Unfortunately, deriving measures of uncertainty with neural network models, even with a relatively modest degree of complexity, remains an area of research under development (see Gentzkow, Kelly, and Taddy 2017). Bootstrap methods would be impractical due to the size of the corpora and the computing time needed to fit each model. Machine learning aims at prediction rather than inference, and the preferred validation methods are designed to assess model specification using predictive accuracy metrics, as we did above, rather than measures of uncertainty (Mullainathan and Spiess 2017). However, there are solutions available for producing measures of uncertainty for quantities of interest, after accepting the raw embeddings as point estimates. For example, when computing cosine similarities using lists of words, researchers working with word embeddings have relied on techniques such as permutation t-tests (Caliskan, Bryson, and Narayanan 2017) or bootstrap estimators (Garg et al. 2018).
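As a rough illustration of the second option, the sketch below computes a percentile bootstrap interval for the mean of a list of cosine similarities, treating the embeddings as fixed point estimates. It is a generic textbook bootstrap written with the Python standard library, not the specific procedure of Garg et al. (2018). The similarity values, the function name `bootstrap_ci`, and the default settings are invented for the example.

```python
import random
from statistics import mean

def bootstrap_ci(values, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for the mean of `values`."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n = len(values)
    # Resample the list with replacement and record the mean each time.
    stats = sorted(mean(rng.choices(values, k=n)) for _ in range(n_boot))
    lower = stats[int((alpha / 2) * n_boot)]
    upper = stats[min(n_boot - 1, int((1 - alpha / 2) * n_boot))]
    return lower, upper

# Invented cosine similarities between a party embedding and a list of words.
similarities = [0.42, 0.51, 0.38, 0.47, 0.55, 0.33, 0.49, 0.44]
lower, upper = bootstrap_ci(similarities)
```

Resampling the word list is cheap because the model is never refitted. The resulting interval therefore reflects only the choice of words, not the uncertainty in the embeddings themselves.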

6 Intended Uses and Caveats

The proposed methodology can help to support research agendas in a number of subfields of political science. In comparative politics, the use of scaling estimates based on roll-call votes is often problematic when exported outside the United States, in particular for countries with high levels of party discipline or where the executive sits inside the parliament. The party embedding model introduced here affords comparative scholars new opportunities to study party ideology, whenever a collection of digitized parliamentary corpora is available. Since the model can be adapted to the study of individual legislators, the technique also provides an additional resource for research on intra-party conflict, a central focus of recent research involving textual analysis of parliamentary speeches (see e.g. Proksch and Slapin 2015; Back and Debus 2016; Lauderdale and Herzog 2016; Schwarz, Traber, and Benoit 2017; Proksch et al. 2018). Next, the model is well adapted for research on political representation (Powell 2004; Bird 2010). In particular, the party embeddings can allow researchers to go beyond descriptive representation and examine how the language of political actors relates to issues and groups of interest. The results presented earlier suggest a connection between parties and minorities in the US Congress, and the method could be adapted for studies on the representation of other groups of interest across legislatures. Finally, the model can serve as a basis to develop additional methodological tools for textual analysis going beyond word counts, with the goal of studying political semantics.

Some words of caution are warranted, however, when interpreting text-based indicators of

ideological placement. �ere are well-known limitations to scaling estimates based on roll-call

votes, and those are o�en relevant for applications based on textual data. For instance, strate-

gic agenda-se�ing decisions may limit opportunities to vote on some issues, and non-random

abstentions during votes could obscure the true ideal point of a legislator (Clinton 2012). In

a similar fashion, the distribution of issues in parliamentary corpora may be constrained by

who controls the agenda during a given legislature, and some legislators could strategically re-

frain from expressing their true opinions. �ese constraints on legislative debates may also vary

across polities. For instance, the daily order of business in the Canadian House of Commons is

dominated by the government, whereas other legislatures may o�er extended opportunities to

opposition parties. Researchers working with the proposed methodology should make sure to

gain familiarity with the traits characterizing a legislature, and the behavioral constraints that

may a�ect their conclusions.

7 Conclusion

Methods based on word embeddings have the potential to offer a useful, integrated framework for studying political texts. By relying on artificial neural networks that take into account interactions between words and political variables, the methodology proposed in this paper is naturally suited for research focusing on latent properties of political discourse such as ideology. This approach has the benefit of going beyond word counts, which improves the ability to account for changes in word usage over time, in contrast to existing methods. The present paper relied on a custom implementation of word embeddings trained on parliamentary corpora and augmented to include input variables measuring party affiliations. As illustrated in our empirical section, the resulting “party embeddings” can be utilized to characterize the content of parliamentary speeches and place political actors in a vector space representing ideological dimensions. Our validity assessments suggest that low-dimensional projections from the model are generally consistent with human-annotated indicators of ideology, expert surveys and, in the case of Congress, roll-call vote measures. Judging by the wide adoption of word embeddings in real-world applications based on natural language processing, we believe that the technique is bound to gain in popularity in the social sciences. In fact, our paper has probably only scratched the surface of the potential of word embeddings for political analysis.

Despite the promise of (augmented) word embeddings for political research, scholars should be wary of some limitations of the methodology. First, fitting the models requires a large corpus of text documents to fully benefit from their properties for semantic analysis. We find that corpora of digitized parliamentary debates appear reasonably large for this type of research, but smaller collections of texts may not achieve the same level of accuracy. Second, obtaining uncertainty estimates for the neural network models necessary to fit the embeddings would require further research. Although we have illustrated a variety of techniques for model validation, the methodology could eventually be implemented using frameworks like Bayesian neural networks and variational inference (see e.g. MacKay 1992; Tran et al. 2017). This would facilitate the estimation of confidence intervals, although at this stage the cost in terms of computational time remains prohibitive. Moreover, the quantities of interest to political scientists will likely be derivatives of the embeddings (for instance, a measure of similarity between words and parties), which implies compounding uncertainty estimates across the different steps involved. On the other hand, we have shown that the methodology can be used with a limited number of arbitrary decisions and produce reliable indicators of party placement, eliminating a source of variance associated with individual judgments.
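To make the point about derived quantities concrete, the sketch below computes one such quantity, the mean cosine similarity between a party vector and a set of words, together with a percentile bootstrap interval. It is only an illustration under stated assumptions: the vectors are invented toy values, the function name `bootstrap_mean_similarity` is hypothetical, and the interval reflects resampling of the word list alone. It treats the embeddings as fixed, so it does not capture the estimation uncertainty of the neural network model discussed above.

```python
import math
import random


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def bootstrap_mean_similarity(word_vecs, party_vec, n_boot=2000, alpha=0.05, seed=0):
    """Mean word-party similarity with a percentile bootstrap interval.

    Words are resampled with replacement; the embeddings are held fixed.
    Returns (point estimate, lower bound, upper bound).
    """
    rng = random.Random(seed)
    sims = [cosine(w, party_vec) for w in word_vecs]
    n = len(sims)
    point = sum(sims) / n
    draws = []
    for _ in range(n_boot):
        sample = [sims[rng.randrange(n)] for _ in range(n)]
        draws.append(sum(sample) / n)
    draws.sort()
    lower = draws[int((alpha / 2) * n_boot)]
    upper = draws[int((1 - alpha / 2) * n_boot) - 1]
    return point, lower, upper


# Toy vectors, invented for illustration only (assumption, not real estimates).
party = [0.6, 0.8, 0.1]
words = [
    [0.5, 0.9, 0.0],
    [0.7, 0.6, 0.2],
    [0.2, 0.9, 0.4],
    [0.9, 0.3, 0.1],
    [0.4, 0.7, 0.5],
]

point, lower, upper = bootstrap_mean_similarity(words, party)
print(f"mean similarity: {point:.3f} [{lower:.3f}, {upper:.3f}]")
```

A fuller treatment would also propagate the uncertainty from the first step, for example by refitting the embeddings on resampled corpora, which is where the computational cost noted above arises.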

References

Bäck, Hanna, and Marc Debus. 2016. Political Parties, Parliaments and Legislative Speechmaking. New York: Palgrave Macmillan.

Beelen, Kaspar, Timothy Alberdingk Thijm, Christopher Cochrane, Kees Halvemaan, Graeme Hirst, Michael Kimmins, Sander Lijbrink, Maarten Marx, Nona Naderi, Roman Polyanovsky, Ludovic Rheault, and Tanya Whyte. 2017. “Digitization of the Canadian Parliamentary Debates.” Canadian Journal of Political Science 50 (3): 849–864.

Benoit, Kenneth, and Michael Laver. 2006. Party Policy in Modern Democracies. New York: Routledge.

Bird, Karen. 2010. “Patterns of Substantive Representation Among Visible Minority MPs: Evidence from Canada’s House of Commons.” In The Political Representation of Immigrants and Minorities, ed. Karen Bird, Thomas Saalfeld, and Andreas M. Wüst. New York: Routledge.

Bishop, Christopher M. 2006. Pattern Recognition and Machine Learning. New York: Springer.

Budge, Ian, Hans-Dieter Klingemann, Andrea Volkens, Judith Bara, and Eric Tanenbaum. 2001. Mapping Policy Preferences: Estimates for Parties, Electors, and Governments (1945–1998). Oxford: Oxford University Press.

Budge, Ian, and Michael J. Laver, eds. 1992. Party Policy and Government Coalitions. London: Palgrave Macmillan UK.

Caliskan, Aylin, Joanna J. Bryson, and Arvind Narayanan. 2017. “Semantics Derived Automatically from Language Corpora Contain Human-Like Biases.” Science 356 (6334): 183–186.

Castles, Francis G., and Peter Mair. 1984. “Left-Right Political Scales: Some ‘Expert’ Judgments.” European Journal of Political Research 12 (1): 73–88.

Clarke, Harold D., David Sanders, Marianne C. Stewart, and Paul Whiteley. 2004. Political Choice in Britain. Oxford: Oxford University Press.

Clinton, Joshua D. 2012. “Using Roll Call Estimates to Test Models of Politics.” Annual Review of Political Science 15: 79–99.

Cochrane, Christopher. 2010. “Left/Right Ideology and Canadian Politics.” Canadian Journal of Political Science 45 (3): 583–605.

Cochrane, Christopher. 2015. Left and Right: The Small World of Political Ideas. Montreal, Kingston: McGill-Queen’s University Press.

Deerwester, Scott, Susan T. Dumais, George W. Furnas, Thomas K. Landauer, and Richard Harshman. 1990. “Indexing by Latent Semantic Analysis.” Journal of the American Society for Information Science 41 (6): 391–407.

Denny, Matthew J., and Arthur Spirling. 2018. “Text Preprocessing For Unsupervised Learning: Why It Matters, When It Misleads, And What To Do About It.” Political Analysis 26 (2): 168–189.

Diermeier, Daniel, Jean-François Godbout, Bei Yu, and Stefan Kaufmann. 2012. “Language and Ideology in Congress.” British Journal of Political Science 42 (1): 31–55.

Freeden, Michael. 1998. Ideology and Political Theory: A Conceptual Approach. Oxford: Oxford University Press.

Gabel, Matthew J., and John D. Huber. 2000. “Putting Parties in Their Place: Inferring Party Left-Right Ideological Positions from Party Manifestos Data.” American Journal of Political Science 44 (1): 94–103.

Garg, Nikhil, Londa Schiebinger, Dan Jurafsky, and James Zou. 2018. “Word Embeddings Quantify 100 Years of Gender and Ethnic Stereotypes.” Proceedings of the National Academy of Sciences 115 (16): E3635–E3644.

Gentzkow, Matthew, Bryan T. Kelly, and Matt Taddy. 2017. “Text as Data.” NBER Working Paper No. 23276.

Gentzkow, Matthew, and Jesse M. Shapiro. 2010. “What Drives Media Slant? Evidence from U.S. Daily Newspapers.” Econometrica 78 (1): 35–71.

Gentzkow, Matthew, Jesse M. Shapiro, and Matt Taddy. 2016. “Measuring Polarization in High-Dimensional Data: Method and Application to Congressional Speech.” NBER Working Paper No. 22423.

Glavaš, Goran, Federico Nanni, and Simone Paolo Ponzetto. 2017. “Cross-Lingual Classification of Topics in Political Texts.” In Proceedings of the 2017 ACL Workshop on Natural Language Processing and Computational Social Science. Association for Computational Linguistics, pp. 42–46.

Godbout, Jean-François, and Bjørn Høyland. 2013. “The Emergence of Parties in the Canadian House of Commons (1867–1908).” Canadian Journal of Political Science 46 (4): 773–797.

Grimmer, Justin, and Brandon M. Stewart. 2013. “Text as Data: The Promise and Pitfalls of Automatic Content Analysis Methods for Political Texts.” Political Analysis 21 (3): 267–297.

Hastie, Trevor, Robert Tibshirani, and Jerome Friedman. 2009. The Elements of Statistical Learning. Berlin: Springer.

Hirst, Graeme, Yaroslav Riabinin, Jory Graham, Magali Boizot-Roche, and Colin Morris. 2014. “Text to Ideology or Text to Party Status?” In From Text to Political Positions: Text Analysis across Disciplines, ed. Bertie Kaal, Isa Maks, and Annemarie van Elfrinkhof. John Benjamins Publishing Company.

Hix, Simon, and Abdul Noury. 2016. “Government-Opposition or Left-Right? The Institutional Determinants of Voting in Legislatures.” Political Science Research and Methods 4 (2): 249–273.

Huber, John, and Ronald Inglehart. 1995. “Expert Interpretations of Party Space and Party Locations in 42 Societies.” Party Politics 1 (1): 73–111.

Iyyer, Mohit, Peter Enns, Jordan Boyd-Graber, and Philip Resnik. 2014. “Political Ideology Detection Using Recursive Neural Networks.” In Proceedings of the 2014 Annual Meeting of the Association for Computational Linguistics (ACL 2014).

Jensen, Jacob, Ethan Kaplan, Suresh Naidu, and Laurence Wilse-Samson. 2012. “Political Polarization and the Dynamics of Political Language: Evidence from 130 Years of Partisan Speech.” Brookings Papers on Economic Activity Fall: 1–81.

Johnston, Richard. 2017. The Canadian Party System: An Analytic History. Vancouver: UBC Press.

Kim, In Song, John Londregan, and Marc Ratkovic. 2018. “Estimating Spatial Preferences from Votes and Text.” Political Analysis 26 (2): 210–229.

Lai, Siwei, Kang Liu, Liheng Xu, and Jun Zhao. 2016. “How to Generate Good Word Embedding?” IEEE Intelligent Systems 31 (6): 5–14.

Lauderdale, Benjamin E., and Alexander Herzog. 2016. “Measuring Political Positions from Legislative Speech.” Political Analysis 24 (3): 374–394.

Laver, Michael, Kenneth Benoit, and John Garry. 2003. “Extracting Policy Positions from Political Texts Using Words as Data.” American Political Science Review 97 (2): 311–331.

Le, Quoc, and Tomas Mikolov. 2014. “Distributed Representations of Sentences and Documents.” In Proceedings of the 31st International Conference on Machine Learning.

Levy, Omer, Yoav Goldberg, and Ido Dagan. 2015. “Improving Distributional Similarity with Lessons Learned from Word Embeddings.” Transactions of the Association for Computational Linguistics 3: 211–225.

Lowe, Will, and Kenneth Benoit. 2013. “Validating Estimates of Latent Traits from Textual Data Using Human Judgment as a Benchmark.” Political Analysis 21 (3): 298–313.

MacKay, David J. C. 1992. “A Practical Bayesian Framework for Backpropagation Networks.” Neural Computation 4 (3): 448–472.

Manning, Christopher D., Prabhakar Raghavan, and Hinrich Schütze. 2009. An Introduction to Information Retrieval. Cambridge: Cambridge University Press.

Mikolov, Tomas, Ilya Sutskever, Kai Chen, Greg Corrado, and Jeffrey Dean. 2013. “Distributed Representations of Words and Phrases and their Compositionality.” In Proceedings of the 26th International Conference on Neural Information Processing Systems, pp. 3111–3119.