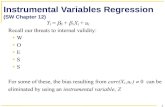

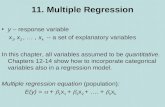

Multiple Regression Here we add more independent variables to the regression.

Week 4 Multiple regression analysis. More general regression model Consider one Y variable and n...

Date posted: 19-Dec-2015



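More general regression model

Consider one Y variable and several independent variables Xj, e.g. X1, X2, X3.

Data on n tuples (yi, xi1, xi2, xi3). Scatter plots show linear association between Y and the X-variables.

The observations on y can be assumed to satisfy the following model:

$$y_i = \beta_0 + \beta_1 x_{i1} + \beta_2 x_{i2} + \beta_3 x_{i3} + e_i \quad \text{for } i = 1, \dots, n$$

Here $y_i$ is the data, $\beta_0 + \beta_1 x_{i1} + \beta_2 x_{i2} + \beta_3 x_{i3}$ is the prediction, and $e_i$ is the error.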

Assumptions on the regression model

1. The relationship between the Y-variable and the X-variables is linear.

2. The error terms $e_i$ (measured by the residuals)

– have zero mean ($E(e_i) = 0$)

– have the same standard deviation $\sigma_e$ for each fixed x

– are approximately normally distributed (typically true for large samples!)

– are independent (true if the sample is an S.R.S.)

Such assumptions are necessary to derive the inferential methods for testing and prediction (to be seen later)!

WARNING: if the sample size is small (n<50) and the errors are not normal, the regression tests and intervals cannot be trusted!

Parameter estimates

Suppose we have a random sample of n observations on Y and on k independent X-variables.

How do we estimate the values of the coefficients β's in the regression model of Y versus the X-variables?

The parameter estimates are those values for the β's that minimize the sum of the squared errors:

$$\sum_{i=1}^{n} (y_i - \hat{y}_i)^2 = \sum_{i=1}^{n} \left[ y_i - (\beta_0 + \beta_1 x_{i1} + \dots + \beta_k x_{ik}) \right]^2$$

Thus the parameter estimates are those values for the β's that will make the model residuals as small as possible!

The fitted model to compute predictions for Y is

$$\hat{y} = \hat\beta_0 + \hat\beta_1 x_1 + \dots + \hat\beta_k x_k$$

Using Linear Algebra for model estimation (section 12.9)

Let $\boldsymbol\beta = (\beta_0, \beta_1, \dots, \beta_k)^T$ be the parameter vector for the regression model with k X-variables.

The parameter estimates for each beta can be efficiently found using linear algebra as

$$\hat{\boldsymbol\beta} = (X^T X)^{-1} X^T Y$$

where X is the data matrix for the X-variables (with a leading column of ones for the intercept) and Y is the data vector for the response variable. $X^T$ is the transpose of the matrix X, and the superscript $-1$ denotes a matrix inverse, here the inverse of $X^T X$.

Hard to compute by hand, so better use a computer!
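The matrix formula can be tried out on a toy data set. The following is a minimal Python sketch, not the course's SAS workflow: the helper names (`transpose`, `matmul`, `solve`, `ols`) and the five data rows are made up for illustration, and the code solves the equivalent system $(X^T X)\hat{\boldsymbol\beta} = X^T Y$ by elimination instead of forming the inverse explicitly.

```python
def transpose(m):
    """Return the transpose of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]


def solve(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    aug = [list(a[i]) + [b[i]] for i in range(n)]
    for col in range(n):
        # Bring the largest remaining entry of this column onto the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col:
                f = aug[r][col]
                aug[r] = [v - f * w for v, w in zip(aug[r], aug[col])]
    return [aug[i][n] for i in range(n)]


def ols(x_rows, y):
    """Least-squares estimates (intercept first) from the normal equations."""
    X = [[1.0] + list(row) for row in x_rows]  # leading column of ones
    Xt = transpose(X)
    XtX = matmul(Xt, X)
    XtY = [sum(a * b for a, b in zip(col, y)) for col in Xt]
    return solve(XtX, XtY)


# Hypothetical data generated exactly from y = 1 + 2*x1 + 3*x2 (no noise).
x_rows = [(0, 0), (1, 0), (0, 1), (1, 1), (2, 1)]
y = [1 + 2 * a + 3 * b for a, b in x_rows]
beta_hat = ols(x_rows, y)
print(beta_hat)
```

Because the toy data contain no error term, the estimates recover the generating coefficients up to floating-point rounding. With real data the same steps give the least-squares fit.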

EXAMPLE: CPU usage

A study was conducted to examine what factors affect the CPU usage. A set of 38 processes written in a programming language was considered. For each process, data were collected on:

Y = CPU usage (time) in seconds

X1 = the number of lines (linet), in thousands, generated by the process execution

X2 = number of programs (step) forming the process

X3 = number of mounted computer devices (device)

Problem: Estimate the regression model of Y on X1, X2 and X3

$$\hat{y} = \hat\beta_0 + \hat\beta_1\,\text{LINET} + \hat\beta_2\,\text{STEP} + \hat\beta_3\,\text{DEVICE}$$

I) Exploratory data step: Are the associations between Y and the x-variables linear? Draw the scatter plot for each pair (Y, Xi).

[Figure: three scatter plots of CPU time against lines executed in process, number of programs, and mounted devices.]

Do the plots show linearity?

PROC REG - SAS OUTPUT

The REG Procedure: Parameter Estimates

| Variable | Label | DF | Parameter Estimate | Standard Error | t Value | Pr > \|t\| |
|---|---|---|---|---|---|---|
| Intercept | --- | 1 | 0.00147 | 0.01071 | 0.14 | 0.8920 |
| Linet | --- | 1 | 0.02109 | 0.00271 | 7.79 | <.0001 |
| step | --- | 1 | 0.00924 | 0.00210 | 4.41 | <.0001 |
| device | --- | 1 | 0.01218 | 0.00288 | 4.23 | 0.0002 |

The fitted regression model is

$$\hat{y} = 0.0015 + 0.021\,\text{LINET} + 0.009\,\text{STEP} + 0.012\,\text{DEVICE}$$

Fitted model

The estimated values of the β's measure the changes in Y for changes in the X's.

For instance, for each increase of 1000 lines executed by the process (keeping the other variables fixed), the predicted CPU usage time increases by 0.021 seconds.

Fixing the other variables, what happens to the CPU time if I add another device?
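The effect of adding a device can be checked numerically. This is a small Python sketch using the coefficients from the PROC REG parameter estimates. The function name `predict_cpu_time` and the example process (2 thousand lines, 3 programs, 1 device) are assumptions made only for illustration.

```python
# Coefficients copied from the PROC REG parameter estimates table.
COEF = {"intercept": 0.00147, "linet": 0.02109, "step": 0.00924, "device": 0.01218}


def predict_cpu_time(linet, step, device):
    """Predicted CPU time in seconds from the fitted model."""
    return (COEF["intercept"] + COEF["linet"] * linet
            + COEF["step"] * step + COEF["device"] * device)


# Hypothetical process: 2 (thousand) lines, 3 programs, 1 mounted device.
base = predict_cpu_time(linet=2, step=3, device=1)
one_more_device = predict_cpu_time(linet=2, step=3, device=2)

# The difference does not depend on the values chosen for linet and step.
print(f"predicted change: {one_more_device - base:.5f} s")
```

Whatever values are held fixed for the other two variables, the predicted change equals the device coefficient, about 0.012 seconds per additional device.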

Interpretation of model parameters

In multiple regression, the coefficient value of an X-variable measures the predicted change in Y for a one-unit increase in that X-variable while the other independent variables stay constant.

For instance, in the model

$$y_i = \beta_0 + \beta_1 x_{i1} + \beta_2 x_{i2} + \beta_3 x_{i3} + e_i \quad \text{for } i = 1, \dots, n$$

$\beta_2$ measures the change in Y for a unit increase of the variable X2 if the other x-variables X1 and X3 are fixed.

Are the estimated values accurate?

Residual Standard Deviation (pg. 632)

Testing effects of individual variables (pg. 652- 655)

How do we measure the accuracy of the estimated parameter values? (page 632)

For a simple linear regression with one X, the standard deviations of the parameter estimates are defined as:

$$\sigma_{\hat\beta_1} = \frac{\sigma_e}{\sqrt{\sum_i (x_i - \bar{x})^2}}, \qquad \sigma_{\hat\beta_0} = \sigma_e \sqrt{\frac{1}{n} + \frac{\bar{x}^2}{\sum_i (x_i - \bar{x})^2}}$$

They are both functions of the error standard deviation $\sigma_e$, which can be regarded as the spread of the points around the line! $\sigma_e$ is estimated by the residual standard deviation $s_e$ (a.k.a. root mean square error):

$$s_e = \sqrt{\frac{\sum_i (y_i - \hat{y}_i)^2}{n - 2}}$$

The differences $y_i - \hat{y}_i$ are the residuals!

How do we interpret residual standard deviation?

Used as a coarse approximation of the prediction error for new y-values. Probable error in new predictions is +/- 2 $s_e$.

$s_e$ is also used in the formula of the standard errors of the parameter estimates:

$$s_{\hat\beta_0} = s_e \sqrt{\frac{1}{n} + \frac{\bar{x}^2}{\sum_i (x_i - \bar{x})^2}}, \qquad s_{\hat\beta_1} = \frac{s_e}{\sqrt{\sum_i (x_i - \bar{x})^2}}$$

They can be computed from the data and measure the noise in the parameter estimates.
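For the one-X case, these quantities are short enough to compute directly. Below is a minimal Python sketch, assuming a made-up five-point data set. The function name `simple_regression` and its dictionary keys are illustrative and not part of the course material.

```python
import math


def simple_regression(x, y):
    """Least-squares line, residual standard deviation and standard errors."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b1 = sxy / sxx                      # slope estimate
    b0 = y_bar - b1 * x_bar             # intercept estimate
    sse = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))
    s_e = math.sqrt(sse / (n - 2))      # residual standard deviation
    se_b1 = s_e / math.sqrt(sxx)        # standard error of the slope
    se_b0 = s_e * math.sqrt(1 / n + x_bar ** 2 / sxx)  # s.e. of the intercept
    return {"b0": b0, "b1": b1, "s_e": s_e, "se_b0": se_b0, "se_b1": se_b1}


# Hypothetical data, chosen only to illustrate the formulas.
x = [1, 2, 3, 4, 5]
y = [2.1, 3.9, 6.2, 7.8, 10.1]
fit = simple_regression(x, y)
for name, value in fit.items():
    print(f"{name}: {value:.4f}")
```

Both standard errors scale with `s_e`, so noisier data around the line directly translate into noisier parameter estimates.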

For general regression models with k x-variables

For k predictors, the standard errors of the parameter estimates have a complicated form… but they still depend on the error standard deviation $\sigma_e$!

The residual standard deviation or root mean square error is defined as

$$s_e = \sqrt{MS(\text{Residuals})} = \sqrt{\frac{SS(\text{Residual})}{n - (k+1)}} = \sqrt{\frac{\sum_i (y_i - \hat{y}_i)^2}{n - (k+1)}}$$

where k+1 = number of parameters β's.

This measures the precision of our predictions!

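The REG Procedure: Analysis of Variance

| Source | DF | Sum of Squares | Mean Square | F Value | Pr > F |
|---|---|---|---|---|---|
| Model | 3 | 0.59705 | 0.19902 | 166.38 | <.0001 |
| Error | 34 | 0.04067 | 0.00120 | | |
| Corrected Total | 37 | 0.63772 | | | |

| Statistic | Value |
|---|---|
| Root MSE | 0.03459 |
| Dependent Mean | 0.15710 |
| Coeff Var | 22.01536 |
| R-Square | 0.9362 |
| Adj R-Sq | 0.9306 |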

The root mean square error for the CPU usage regression model is computed above. That gives an estimate of the error standard deviation

se=0.03459
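The summary statistics in the SAS output can be reproduced from the sums of squares in the analysis of variance table. The Python sketch below uses the standard textbook formulas for R-square, adjusted R-square and the F value (these formulas are not spelled out on the slides), and the variable names are illustrative.

```python
import math

n, k = 38, 3                      # observations and x-variables
ss_model, ss_error, ss_total = 0.59705, 0.04067, 0.63772  # from the ANOVA table

df_error = n - (k + 1)            # degrees of freedom for the residuals
ms_error = ss_error / df_error    # MS(Residuals)
root_mse = math.sqrt(ms_error)    # residual standard deviation s_e

r_square = ss_model / ss_total
adj_r_square = 1 - (1 - r_square) * (n - 1) / df_error
f_value = (ss_model / k) / ms_error

# Coarse "probable error" for a new prediction: +/- 2 s_e.
rough_margin = 2 * root_mse

print(f"Root MSE  {root_mse:.5f}")
print(f"R-Square  {r_square:.4f}")
print(f"Adj R-Sq  {adj_r_square:.4f}")
print(f"F Value   {f_value:.2f}")
print(f"+/- 2 s_e {rough_margin:.3f} seconds")
```

The results agree with the printed SAS values up to the rounding of the sums of squares. The rough +/- 2 $s_e$ margin is about 0.07 seconds, which is large relative to the mean CPU time of 0.157 seconds.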

Inference about regression parameters!

Regression estimates are affected by random error.

The concepts of hypothesis testing and confidence intervals apply!

The t-distribution is used to construct significance tests and confidence intervals for the “true” parameters of the population.

Tests are often used to select those x-variables that have a significant effect on Y.

Tests on the slope for straight line regression

Consider the simple straight line case.

A common test on the slope is the test on the hypothesis “X has no effect on Y” or the slope is equal to zero!

Or in statistical terms:

$H_0: \beta_1 = 0$ vs $H_a$, which is one of

$\beta_1 < 0$ (X has a negative effect)

$\beta_1 \neq 0$ (X has a significant effect)

$\beta_1 > 0$ (X has a positive effect)

The test is given by the t-statistic

$$t = \frac{\hat\beta_1 - 0}{s.e.(\hat\beta_1)} = \frac{\hat\beta_1}{s_e / \sqrt{\sum_i (x_i - \bar{x})^2}}$$

with t-distribution with n-2 degrees of freedom!

Tests on the parameters in multiple regression

Significance tests on a parameter $\beta_j$ test the hypothesis “Xj has no effect on Y”, or in statistical terms:

$H_0: \beta_j = 0$ vs $H_a$, which is one of

$\beta_j < 0$ (Xj has a negative effect on Y)

$\beta_j \neq 0$ (Xj has a significant effect on Y)

$\beta_j > 0$ (Xj has a positive effect on Y)

The test is given by the t-statistic (computed by SAS)

$$t = \frac{\hat\beta_j}{s.e.(\hat\beta_j)}$$

with t-distribution with n-(k+1) degrees of freedom.

Assumptions on the data:

1. $e_1, e_2, \dots, e_n$ are independent of each other.

2. The $e_i$ are normally distributed with mean zero and have common variance $\sigma_e^2$.

Tests in SAS

The test p-values for regression coefficients are computed by PROC REG

SAS will produce the two-sided p-value.

If your alternative hypothesis is one-sided (either > or <) and the estimated coefficient has the sign stated in that alternative, then find the one-sided p-value by dividing the p-value computed by SAS by 2:

one-sided p-value = (two-sided p-value)/2
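The halving rule can be written as a small helper. This Python sketch is an illustration only: the function name `one_sided_p_value` and its arguments are hypothetical. It also covers the case the slide does not mention, where the estimate has the opposite sign to the alternative and the one-sided p-value is 1 minus half the two-sided value.

```python
def one_sided_p_value(two_sided_p, estimate, alternative):
    """Convert a two-sided t-test p-value into a one-sided one.

    alternative is "greater" (Ha: beta > 0) or "less" (Ha: beta < 0).
    """
    if alternative not in ("greater", "less"):
        raise ValueError("alternative must be 'greater' or 'less'")
    if alternative == "greater":
        sign_agrees = estimate > 0
    else:
        sign_agrees = estimate < 0
    # Halve the p-value only when the estimate points the way Ha claims.
    return two_sided_p / 2 if sign_agrees else 1 - two_sided_p / 2


# device coefficient from the PROC REG output: estimate 0.01218, p = 0.0002
p_positive_effect = one_sided_p_value(0.0002, 0.01218, "greater")
p_negative_effect = one_sided_p_value(0.0002, 0.01218, "less")
print(p_positive_effect, p_negative_effect)
```

For the device variable, the test of a positive effect gives half the SAS p-value, while the test of a negative effect gives a p-value close to 1, so there is no evidence of a negative effect.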

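SAS Output

The REG Procedure: Parameter Estimates

| Variable | Label | DF | Parameter Estimate | Standard Error | t Value | Pr > \|t\| |
|---|---|---|---|---|---|---|
| Intercept | --- | 1 | 0.00147 | 0.01071 | 0.14 | 0.8920 |
| Linet | --- | 1 | 0.02109 | 0.00271 | 7.79 | <.0001 |
| step | --- | 1 | 0.00924 | 0.00210 | 4.41 | <.0001 |
| device | --- | 1 | 0.01218 | 0.00288 | 4.23 | 0.0002 |

The "t Value" column gives the T-statistic value and the "Pr > |t|" column gives the P-value.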

T-tests on each parameter value show that all the x-variables in the model are significant at 5% level (p-values <0.05). The null hypothesis of no effect can be rejected, and we conclude that there is a significant association between Y and each x-variable.
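The t values in the output are the estimates divided by their standard errors. The Python sketch below recomputes them from the rounded numbers printed by SAS, so the results can differ from the SAS column in the second decimal. The dictionary layout is just an illustrative choice.

```python
# (estimate, standard error) pairs copied from the PROC REG output.
estimates = {
    "Intercept": (0.00147, 0.01071),
    "Linet": (0.02109, 0.00271),
    "step": (0.00924, 0.00210),
    "device": (0.01218, 0.00288),
}

n, k = 38, 3
df = n - (k + 1)  # degrees of freedom of the t-distribution

# t-statistic for H0: beta_j = 0 is estimate / standard error.
t_values = {name: est / se for name, (est, se) in estimates.items()}

print(f"degrees of freedom: {df}")
for name, t in t_values.items():
    print(f"{name:10s} t = {t:.2f}")
```

The degrees of freedom, 38 - 4 = 34, match the Error row of the analysis of variance table.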

Test on the intercept $\beta_0$


The test on the intercept says that the null hypothesis $\beta_0 = 0$ cannot be rejected. The test p-value is 0.8920.

This suggests that the model could be fitted without an intercept! This is not recommended, though, unless you know that Y=0 if all the x-variables are equal to zero.

What do we do if a model parameter is not significant?

If the t-test on a parameter $\beta_j$ shows that the parameter value is not significantly different from zero, we should refit the regression model without the x-variable corresponding to $\beta_j$.

SAS Code for the CPU usage data

Data cpu;
infile "C:\week5\cpudat.txt";
input time line step device;
linet=line/1000;
label time="CPU time in seconds"
      line="lines in program execution"
      step="number of computer programs"
      device="mounted devices"
      linet="lines in program (thousand)";

/* Exploratory data analysis */
/* computes correlation values between all variables in dataset */
proc corr data=cpu;
run;

/* creates scatterplots between time vs linet, time vs step and time vs device, respectively */
proc gplot data=cpu;
plot time*(linet step device);
run;