Units of Linguistic Representation in the Brain

Colin Phillips

University of Delaware

Main Collaborators

Alec Marantz (MIT)

David Poeppel (U. Md.)

Tim Roberts (UCSF)

Tom Pellathy (U. of Delaware)

Krishna Govindarajan (MIT)

Linguistic Computation

• Computation over discrete categories

• Computations integrate multiple units at multiple levels of representation over time

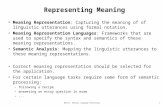

Objectives for Cognitive Neuroscience of Language

• How are discrete linguistic categories represented in the brain?

• How is sequential linguistic computation carried out in the brain?

Notice that…

• Localization is not an end in itself

• We will know when we have found an answer

• Strongly influenced by models in linguistics and psycholinguistics

Outline

• Biomagnetic measurements

• ‘Surprise’ measures: many ways of being shocked

• Measures of ‘normal’ processing in syntax and semantics

• Surprise measures and phonological categories

• Measures of ‘normal’ processing in extracting phonetic features

[Figure, panels a) to d): averaged waveforms; magnetic field contour map; MRI overlay of dipole source localization; whole-head reconstruction with dipole source overlay]

Stages in Magnetic Source Imaging

Paradigms in Electrophysiological Studies of Language

• Individual trial analysis

Activity of interest masked by irrelevant activity

Some recent attempts

• Averaged responses to non-disrupted processing

Data is much cleaner

Ability to interpret depends on highly explicit models of non-disrupted processing

• Averaged responses to disrupted processing

Disruption can be isolated by subtraction of data from control condition

By far the most productive paradigm to date
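The averaging and subtraction logic behind the last two paradigms can be sketched as follows. This is a minimal illustration, not the analysis pipeline used in any of the studies cited here: the function names, the toy response templates, and the noise level are all assumptions made for the example.

```python
import random
from statistics import fmean

def average_trials(trials):
    """Average equal-length single-trial epochs sample by sample."""
    return [fmean(samples) for samples in zip(*trials)]

def difference_wave(disrupted_trials, control_trials):
    """Averaged response to the disrupted condition minus the averaged control."""
    disrupted = average_trials(disrupted_trials)
    control = average_trials(control_trials)
    return [d - c for d, c in zip(disrupted, control)]

def simulate_trials(template, n_trials, noise_sd, rng):
    """Hypothetical single trials: a fixed response template plus Gaussian noise."""
    return [[s + rng.gauss(0.0, noise_sd) for s in template]
            for _ in range(n_trials)]

rng = random.Random(0)
control_template = [0.0, 1.0, 0.0, 0.0, 0.0]     # toy values, arbitrary units
disrupted_template = [0.0, 1.0, 0.0, -2.0, 0.0]  # extra deflection at sample 3
control = simulate_trials(control_template, 200, 5.0, rng)
disrupted = simulate_trials(disrupted_template, 200, 5.0, rng)

# Single trials are dominated by noise; the averaged difference wave is not.
print(difference_wave(disrupted, control))
```

Activity shared by both conditions cancels in the subtraction, so only the response to the disruption should remain, which is why this paradigm does not require an explicit model of the undisrupted response.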

Disruption of Semantic Processing

• N400

‘John spread the warm bread with butter/socks.’

‘When you go to bed, turn off the living room light/giraffe.’

‘I ordered a ham and cheese sandwich/scissors.’

(Kutas & Hillyard 1980)

• Elicited by semantic anomaly

• Possible generator in anterior medial temporal lobe (McCarthy et al. 1995)

Disruption of Syntactic Processing

• P600

‘The broker hoped to sell the stock was a crook.’

‘The broker persuaded to sell the stock was a crook.’

(Osterhout & Holcomb 1992; Hagoort et al. 1993)

• Elicited by syntactic anomaly

• Location of generator uncertain at present; ERP scalp distribution is broad

Additional Syntactic ‘Surprise’ Responses

• Left Anterior Negativity (~300ms)

What do you wonder who they caught __ at __ by accident?

The plane took we to paradise and back.

The doctor forced the patient was lying.

(Neville et al. 1991; Kluender & Kutas 1993; Coulson et al. 1998; Osterhout et al. 1994)

• ELAN (~150ms)

The scientist criticized Max’s of proof the theorem.

(Neville et al. 1991, Friederici 1995)

• Evidence bearing on correct linguistic analysis

The successful woman congratulated himself on the promotion. (P600 elicited)

(Osterhout & Mobley 1995)

• Time course of different anomaly responses constrains models of language processing (Friederici 1995)

Revised Understanding of N400

• All words elicit N400

• N400 strength varies with cloze probability in sentence contexts, is reduced by priming in word-lists

• N400 decreases across sentence, as constraints on continuations increase

• N400 may largely reflect normal process of integrating/updating semantic representations – averaging picks out synchronized semantic processing

• Syntactic anomaly responses may similarly reflect components of normal sentence processing – depends on a suitably explicit model of undisrupted sentence processing.

Objects of Phonological Perception

• Phonemes

e.g. d, t, b, p, a, i, u

• Collections of features

d = [voice] + [alveolar] + [stop]

t = [voiceless] + [alveolar] + [stop]

[voiced] b, d, g, dʒ, ð, z, ʒ, m, n, ŋ

[voiceless] p, t, k, f, θ, s, ʃ, tʃ

• Outcome of Perception is categories

A A A A A A B A A A A A A A A A A B A A A A A A A B …

Mismatch Response to Auditory Oddball (~180-250 msec)
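An oddball sequence like the A/B string above can be generated with a few lines of code. This is only a sketch: the deviant probability and the rule that two deviants never occur back to back are assumptions for illustration, not parameters reported in these slides.

```python
import random

def oddball_sequence(n_stimuli, deviant_prob, rng, standard="A", deviant="B"):
    """Build a many-to-one sequence of frequent standards and rare deviants."""
    seq = []
    for _ in range(n_stimuli):
        if seq and seq[-1] == deviant:
            # Assumption of this sketch: a deviant is always followed by a standard.
            seq.append(standard)
        else:
            seq.append(deviant if rng.random() < deviant_prob else standard)
    return seq

rng = random.Random(1)
print(" ".join(oddball_sequence(26, 0.15, rng)))
```

The mismatch response is then obtained by comparing averaged responses to the rare items with averaged responses to the frequent ones.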

Discontinuous acoustic sensitivity

[Figure, two plots: (1) proportion of /dæ/ responses (0 to 1) as a function of voice onset time (0 to 80 ms); (2) percentage of 'different' responses (0 to 100) for stimulus pairs from the /dæ/-/tæ/ VOT continuum (pairs 0-10, 10-20, 20-30, 30-40, 40-50, 50-60 ms)]

Results from behavioral identification and discrimination tasks with /dæ/-/tæ/ continuum
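The link between the two tasks can be made concrete with a toy labeling model. The sketch below assumes a logistic identification function and assumes that 'different' responses arise only when the two stimuli in a pair receive different labels; both are simplifying assumptions, and the slope value is hypothetical. The 28 ms boundary is the approximate d-t value given later in this talk.

```python
import math

def p_da(vot_ms, boundary_ms=28.0, slope=0.5):
    """Probability of labeling a stimulus /dæ/; falls as VOT increases."""
    return 1.0 / (1.0 + math.exp(slope * (vot_ms - boundary_ms)))

def p_labels_differ(vot_a, vot_b):
    """Probability that two independently labeled stimuli get different labels."""
    pa, pb = p_da(vot_a), p_da(vot_b)
    return pa * (1.0 - pb) + pb * (1.0 - pa)

# Equal 10 ms acoustic steps, very different predicted discriminability.
for lo in range(0, 60, 10):
    print(f"{lo}-{lo + 10} ms: {p_labels_differ(lo, lo + 10):.3f}")
```

Under these assumptions, discrimination peaks for the pair that straddles the boundary even though every pair is separated by the same acoustic distance.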

• Recent ERP/MEG studies showing effects of discontinuous sensitivity to speech continua

Näätänen et al. 1997: MMF amplitude reflects cross-linguistic vowel difference in Finnish and Estonian speakers

Dehaene-Lambertz 1997: MMN amplitude greater for across-category contrasts than within-category contrasts

‘Categorical Perception’ ≠ Category Labeling

• Discontinuous acoustic sensitivity often co-occurs with category labeling, and may be an important substrate for categorization, but they are not the same

• Discontinuous discrimination without phonological category labeling

Infant speech discrimination (Eimas et al. 1971)

Discontinuous discrimination of non-native sounds at short ISI (Werker & Logan 1985)

Discontinuous discrimination of non-speech sounds (Pisoni 1977)

Discontinuous discrimination of human speech sounds in non-human animals (Kuhl & Miller 1978)

• Phonological category labeling without discontinuous discrimination

Vowel perception (Fry et al. 1962, Pisoni 1973)

Perception of words and phonemes in sine-wave speech (Remez et al. 1981, 1994)

[Figure: voice onset time (0 to 60 ms) plotted against stimulus number (0 to 200), with the perceptual boundary marked]

Design for Phonological Mismatch Studies

[Figure: voice onset time (10 to 70 ms) plotted against stimulus number (0 to 200), with the perceptual boundary marked]

Design for phonological mismatch control
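The logic of the two designs can be illustrated with a short sketch. The slides do not list the stimulus values, so everything numeric here is hypothetical: the VOT values, the deviant probability, and the size of the uniform shift used to build a control block. The 28 ms boundary is the approximate d-t value given elsewhere in this talk, and treating the control as a uniform VOT shift is itself an assumption.

```python
import random
from collections import Counter

BOUNDARY_MS = 28.0               # approximate d-t boundary (assumed to apply here)
STANDARD_VOTS = [0, 8, 16, 24]   # hypothetical values, all below the boundary
DEVIANT_VOTS = [48, 56, 64]      # hypothetical values, all above the boundary

def voicing(vot_ms, boundary_ms=BOUNDARY_MS):
    """Category label for a stimulus, given the perceptual boundary."""
    return "voiced" if vot_ms < boundary_ms else "voiceless"

def make_block(n_stimuli, deviant_prob, rng):
    """VOT varies from trial to trial; only rare trials cross the boundary."""
    vots = []
    for _ in range(n_stimuli):
        pool = DEVIANT_VOTS if rng.random() < deviant_prob else STANDARD_VOTS
        vots.append(rng.choice(pool))
    return vots

def category_counts(vots):
    return Counter(voicing(v) for v in vots)

rng = random.Random(0)
experimental = make_block(200, 0.125, rng)
control = [v + 30 for v in experimental]  # hypothetical uniform shift

print(category_counts(experimental))  # many-to-one ratio at the category level
print(category_counts(control))       # same acoustic spread, a single category
```

Because no single VOT value is frequent enough to act as an acoustic standard, a many-to-one ratio exists in the experimental block only at the level of the phonological category, and it disappears once every stimulus falls on the same side of the boundary.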

Interim Conclusion

• Phonological mismatch paradigm only elicits MMF when many-to-one ratio matches phonological categories

• Auditory cortex has access to representations of phonological categories

However …

• Does not tell us whether categories are computed by any part of auditory cortex – information could be feedback from a higher area

• Does not automatically extend to additional aspects of discrete phonological representations

--phonological categories cued by more complex acoustic cues

--phonological features which categories are built out of

Towards Isolating Phonetic Features

[+voice] b, d, g

[-voice] p, t, k

• Acoustic cue is similar: voice onset time

• Perceptual boundary differs:

b-p ~20ms

d-t ~28ms

g-k ~35ms

• Phonological mismatch paradigm, with added within-category variation in place-of-articulation

ta, pa, ka, ka, pa, ta, da, pa, ka, pa, pa, ta, pa, ka, ba, ta, ta, ka, pa, ta, pa, ka, ka, ga, pa, ka …

(Phillips, Pellathy & Marantz, in progress)
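The point of the sequence above can be checked by counting it two ways, once by syllable and once by voicing feature. The sketch below uses the 26 syllables shown on the slide and the approximate boundaries listed above; the dictionary and function names are illustrative, and the rule that a VOT below the boundary counts as voiced is the standard assumption, not something stated on the slide.

```python
from collections import Counter

# The example sequence from the slide (before the trailing ellipsis).
SEQUENCE = ("ta pa ka ka pa ta da pa ka pa pa ta pa ka "
            "ba ta ta ka pa ta pa ka ka ga pa ka").split()

VOICING = {"b": "+voice", "d": "+voice", "g": "+voice",
           "p": "-voice", "t": "-voice", "k": "-voice"}

# Approximate perceptual boundaries from the slide, in ms of VOT.
BOUNDARY_MS = {"labial": 20, "alveolar": 28, "velar": 35}

def voicing_from_vot(place, vot_ms):
    """Label a stop as voiced if its VOT falls below the boundary for its place."""
    return "+voice" if vot_ms < BOUNDARY_MS[place] else "-voice"

syllable_counts = Counter(SEQUENCE)
feature_counts = Counter(VOICING[syllable[0]] for syllable in SEQUENCE)

print(syllable_counts)  # no single syllable accounts for most of the sequence
print(feature_counts)   # [-voice] dominates, [+voice] is rare
print(voicing_from_vot("labial", 25), voicing_from_vot("alveolar", 25))
```

In this excerpt 23 of the 26 syllables are [-voice] and 3 are [+voice], while the most frequent individual syllable occurs only 9 times. The many-to-one ratio therefore holds for the feature but not for any one phoneme, and the same 25 ms VOT is labeled differently for labials and alveolars because the boundary depends on place.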

Non-disrupted Speech Processing

• Relatively well-understood domain: perception of phonemes and phonological features

• Extremely well-controlled stimuli: synthetic speech can be perfectly controlled

Extraction of spectral information for vowel perception

[Figure: spectrogram of /u/ in ‘boot’, with the fundamental frequency (F0) and the formants F1 to F4 labeled]

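| Vowel (speaker) | F0 | F1 | F2 | F3 |
|---|---|---|---|---|
| a (m) | 100 | 710 | 1100 | 2540 |
| a (f) | 200 | 850 | 1220 | 2810 |
| i (m) | 100 | 280 | 2250 | 2890 |
| i (f) | 200 | 310 | 2790 | 3310 |
| u (m) | 100 | 310 | 870 | 2250 |
| u (f) | 200 | 370 | 950 | 2670 |

Vowel formants: spectral composition (in Hz)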

[Figure: M100 latency (100 to 130 ms) for i (male), i (female), a (male), a (female), u (male), u (female)]

Latency variation due to category (n=6)

Significant effect of vowel category on latency: F(2,5)=27.2, p<0.0001

Vowel category is best predictor of M100 latency (Poeppel, Phillips, Yellin, et al. 1997)

| | /a/ | /u/ |
|---|---|---|
| Male voice | F0: 100 Hz, F1: 720 Hz | F0: 100 Hz, F1: 300 Hz |
| Female voice | F0: 170 Hz, F1: 720 Hz | F0: 170 Hz, F1: 300 Hz |

Single-formant vowel stimuli

F1 determines M100 latency to single-formant vowels (n=9) (Phillips, Govindarajan, Poeppel, et al. 1998)

[Figure: M100 latency (130 to 145 ms) for a (male), a (female), u (male), u (female)]

[Figure: M100 latency (100 to 125 ms) for /a/ left, /u/ left, /a/ right, /u/ right]

Category and Hemisphere

Tone-complexes based on single-formant vowels

F1 affects M100 latency in the left hemisphere, but not in the right hemisphere

In contrast…

• Ragot & Lepaul-Ercole (1996): latency of ERP responses to speech-like sounds tracked F0 rather than formant-like energy bands.

• R&L stimuli contain energy bands which are formant-like, but with unusually high formant amplitude relative to amplitude of fundamental

• With increased formant amplitudes, evoked responses are less likely to track formant values

• Evoked responses tracking formant frequencies may depend on stimuli having overall spectral shape of speech

Conclusions and Prospects

• Objective is to track down components of linguistic computation

• Pre-requisites

Ability to track activity in space and time

Highly explicit models of real-time linguistic computation

• Surprise-based paradigms continue to yield useful findings; measures of normal processing are beginning to provide useful findings

• Further progress requires parallel efforts in behavioral experimentation, computational modeling and data analysis