Understanding important emerging design techniques...

Transcript of Understanding important emerging design techniques...

EECC722 - ShaabanEECC722 - Shaaban#1 Lec # 1 Fall 2004 9-6-2004

Advanced Computer ArchitectureAdvanced Computer ArchitectureCourse Goal:Understanding important emerging design techniques, machinestructures, technology factors, evaluation methods that willdetermine the form of high-performance programmable processors andcomputing systems in 21st Century.

Important Factors:• Driving Force: Applications with diverse and increased computational demands

even in mainstream computing (multimedia etc.)• Techniques must be developed to overcome the major limitations of current

computing systems to meet such demands:– ILP limitations, Memory latency, IO performance.– Increased branch penalty/other stalls in deeply pipelined CPUs.– General-purpose processors as only homogeneous system computing

resource.

• Enabling Technology for many possible solutions:– Increased density of VLSI logic (one billion transistors in 2005?)– Enables a high-level of system-level integration.

EECC722 - ShaabanEECC722 - Shaaban#2 Lec # 1 Fall 2004 9-6-2004

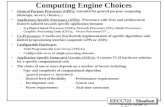

Course TopicsTopics we will cover include:

• Overcoming inherent ILP & clock scaling limitations by exploitingThread-level Parallelism (TLP):– Support for Simultaneous Multithreading (SMT).

• Alpha EV8. Intel P4 Xeon (aka Hyper-Threading), IBM Power5.

– Chip Multiprocessors (CMPs):• The Hydra Project. IBM Power4, 5 ….

• Instruction Fetch Bandwidth/Memory Latency Reduction:

– Conventional & Block-based Trace Cache (Intel P4).

• Advanced Branch Prediction Techniques.

• Towards micro heterogeneous computing systems:– Vector processing. Vector Intelligent RAM (VIRAM).– Digital Signal Processing (DSP) & Media Architectures &

Processors.– Re-Configurable Computing and Processors.

• Virtual Memory Implementation Issues.

• High Performance Storage: Redundant Arrays of Disks (RAID).

EECC722 - ShaabanEECC722 - Shaaban#3 Lec # 1 Fall 2004 9-6-2004

Computer System ComponentsComputer System Components

SDRAMPC100/PC133100-133MHZ64-128 bits wide2-way inteleaved~ 900 MBYTES/SEC

Double DateRate (DDR) SDRAMPC3200400MHZ (effective 200x2)64-128 bits wide4-way interleaved~3.2 GBYTES/SEC(second half 2002)

RAMbus DRAM (RDRAM)PC800, PC1060400-533MHZ (DDR)16-32 bits wide channel~ 1.6 - 3.2 GBYTES/SEC ( per channel)

CPU

CachesFront Side Bus (FSB)

I/O Devices:

MemoryControllers

adapters

DisksDisplaysKeyboards

Networks

NICs

I/O BusesMemoryController

Examples: Alpha, AMD K7: EV6, 400MHZ Intel PII, PIII: GTL+ 133MHZ Intel P4 800MHZ

Example: PCI-X 133MHZ PCI, 33-66MHZ 32-64 bits wide 133-1024 MBYTES/SEC

1000MHZ - 3.6 GHZ (a multiple of system bus speed)Pipelined ( 7 - 30 stages )Superscalar (max ~ 4 instructions/cycle) single-threadedDynamically-Scheduled or VLIWDynamic and static branch prediction

L1

L2 L3

Memory Bus

Support for one or more CPUs

Fast EthernetGigabit EthernetATM, Token Ring ..

NorthBridge

SouthBridge

Chipset

EECC722 - ShaabanEECC722 - Shaaban#4 Lec # 1 Fall 2004 9-6-2004

Computer System ComponentsComputer System Components

CPU

CachesFront Side Bus (FSB)

I/O Devices:

MemoryControllers

adapters

Disks (RAID)DisplaysKeyboards

Networks

NICs

I/O BusesMemoryController

L1

L2 L3

Memory Bus

Conventional & Block-based Trace Cache.

Integrate MemoryController & a portionof main memory with CPU: Intelligent RAM

Integrated memory Controller: AMD Opetron

IBM Power5

Memory Latency Reduction:

Enhanced CPU Performance & Capabilities:

• Support for Simultaneous Multithreading (SMT): Intel HT.• VLIW & intelligent compiler techniques: Intel/HP EPIC IA-64.• More Advanced Branch Prediction Techniques.• Chip Multiprocessors (CMPs): The Hydra Project. IBM Power 4,5• Vector processing capability: Vector Intelligent RAM (VIRAM). Or Multimedia ISA extension.• Digital Signal Processing (DSP) capability in system.• Re-Configurable Computing hardware capability in system.

SMTCMP

NorthBridge

SouthBridge

Chipset

Recent Trend:More system components integration(lowers cost, improves system performance)

EECC722 - ShaabanEECC722 - Shaaban#5 Lec # 1 Fall 2004 9-6-2004

EECC551 ReviewEECC551 Review•• Recent Trends in Computer Design.Recent Trends in Computer Design.

•• A Hierarchy of Computer Design.A Hierarchy of Computer Design.

•• Computer Architecture’s Changing Definition.Computer Architecture’s Changing Definition.

•• Computer Performance Measures.Computer Performance Measures.

•• Instruction Pipelining.Instruction Pipelining.

•• Branch Prediction.Branch Prediction.

•• Instruction-Level Parallelism (ILP).Instruction-Level Parallelism (ILP).

•• Loop-Level Parallelism (LLP).Loop-Level Parallelism (LLP).

•• Dynamic Pipeline Scheduling.Dynamic Pipeline Scheduling.

•• Multiple Instruction Issue (CPI < 1): Multiple Instruction Issue (CPI < 1): Superscalar vsSuperscalar vs. VLIW. VLIW

•• Dynamic Hardware-Based SpeculationDynamic Hardware-Based Speculation•• Cache Design & Performance.Cache Design & Performance.

EECC722 - ShaabanEECC722 - Shaaban#6 Lec # 1 Fall 2004 9-6-2004

Trends in Computer DesignTrends in Computer Design• The cost/performance ratio of computing systems have seen a

steady decline due to advances in:

– Integrated circuit technology: decreasing feature size, l• Clock rate improves roughly proportional to improvement in λλ• Number of transistors improves proportional to λλ22 (or faster).

– Architectural improvements in CPU design.

• Microprocessor systems directly reflect IC improvement in termsof a yearly 35 to 55% improvement in performance.

• Assembly language has been mostly eliminated and replaced byother alternatives such as C or C++

• Standard operating Systems (UNIX, NT) lowered the cost ofintroducing new architectures.

• Emergence of RISC architectures and RISC-core architectures.

• Adoption of quantitative approaches to computer design based onempirical performance observations.

EECC722 - ShaabanEECC722 - Shaaban#7 Lec # 1 Fall 2004 9-6-2004

Processor Performance TrendsProcessor Performance Trends

Microprocessors

Minicomputers

Mainframes

Supercomputers

Year

0.1

1

10

100

1000

1965 1970 1975 1980 1985 1990 1995 2000

Mass-produced microprocessors a cost-effective high-performancereplacement for custom-designed mainframe/minicomputer CPUs

EECC722 - ShaabanEECC722 - Shaaban#8 Lec # 1 Fall 2004 9-6-2004

Microprocessor PerformanceMicroprocessor Performance1987-971987-97

0

200

400

600

800

1000

1200

87 88 89 90 91 92 93 94 95 96 97

DEC Alpha 21264/600

DEC Alpha 5/500

DEC Alpha 5/300

DEC Alpha 4/266IBM POWER 100

DEC AXP/500

HP 9000/750

Sun-4/

260

IBMRS/6000

MIPS M/120

MIPS M

2000

Integer SPEC92 PerformanceInteger SPEC92 Performance

EECC722 - ShaabanEECC722 - Shaaban#9 Lec # 1 Fall 2004 9-6-2004

Microprocessor Architecture TrendsMicroprocessor Architecture TrendsCISC Machines

instructions take variable times to complete

RISC Machines (microcode)simple instructions, optimized for speed

RISC Machines (pipelined)same individual instruction latency

greater throughput through instruction "overlap"

Superscalar Processorsmultiple instructions executing simultaneously

Multithreaded Processorsadditional HW resources (regs, PC, SP)each context gets processor for x cycles

VLIW"Superinstructions" grouped togetherdecreased HW control complexity

Single Chip Multiprocessorsduplicate entire processors

(tech soon due to Moore's Law)

SIMULTANEOUS MULTITHREADINGmultiple HW contexts (regs, PC, SP)each cycle, any context may execute

CMPs

(SMT)

SMT/CMPs (e.g. IBM Power5 in 2004)

EECC722 - ShaabanEECC722 - Shaaban#10 Lec # 1 Fall 2004 9-6-2004

Microprocessor Frequency TrendMicroprocessor Frequency Trend

Result:Deeper PipelinesLonger stallsHigher CPI(lowers effective performance per cycle)

Ê Frequency doubles each generationË Number of gates/clock reduce by 25%Ì Leads to deeper pipelines with more stages (e.g Intel Pentium 4E has 30+ pipeline stages)

Realty Check:Clock frequency scalingis slowing down!(Did silicone finally hit the wall?)

386486

Pentium(R)

Pentium Pro(R)

Pentium(R) IIMPC750

604+604

601, 603

21264S

2126421164A

2116421064A

21066

10

100

1,000

10,000

1987

1989

1991

1993

1995

1997

1999

2001

2003

2005

Mh

z

1

10

100

Gat

e D

elay

s/ C

lock

Intel

IBM Power PC

DEC

Gate delays/clock

Processor freq scales by 2X per

generation

EECC722 - ShaabanEECC722 - Shaaban#11 Lec # 1 Fall 2004 9-6-2004

Microprocessor TransistorMicroprocessor TransistorCount Growth RateCount Growth Rate

Year

1000

10000

100000

1000000

10000000

100000000

1970 1975 1980 1985 1990 1995 2000

i80386

i4004

i8080

Pentium

i80486

i80286

i8086 Moore’sMoore’s Law: Law:2X transistors/ChipEvery 1.5 yearsStill valid possibly until 2010

Alpha 21264: 15 millionPentium Pro: 5.5 millionPowerPC 620: 6.9 millionAlpha 21164: 9.3 millionSparc Ultra: 5.2 million

Moore’s Law

One billion in 2005?

EECC722 - ShaabanEECC722 - Shaaban#12 Lec # 1 Fall 2004 9-6-2004

Tran

sist

ors

uuuuuuu

uu

u

uu

u

u

u uu

u

u

u

u

uu

uuu u

uu

u

u

u u

u

u

u

u

u

uu

u u

uuu

uuu u

uu uu u

u

uuu u

uuu

u

u uuu

1,000

10,000

100,000

1,000,000

10,000,000

100,000,000

1970 1975 1980 1985 1990 1995 2000 2005

Bit-level parallelism Instruction-level Thread-level (?)

i4004

i8008

i8080

i8086

i80286

i80386

R2000

Pentium

R10000

R3000

Parallelism in Microprocessor VLSI GenerationsParallelism in Microprocessor VLSI Generations

Simultaneous Multithreading SMT:e.g. Intel’s Hyper-threading

Chip-Multiprocessors (CMPs)e.g IBM Power 4

Multiple micro-operations per cycle

Chip-LevelParallelProcessing

(Superscalar)

EECC722 - ShaabanEECC722 - Shaaban#13 Lec # 1 Fall 2004 9-6-2004

Computer Technology Trends:Computer Technology Trends:Evolutionary but Rapid ChangeEvolutionary but Rapid Change

• Processor:– 2X in speed every 1.5 years; 100X performance in last decade.

• Memory:– DRAM capacity: > 2x every 1.5 years; 1000X size in last decade.– Cost per bit: Improves about 25% per year.

• Disk:– Capacity: > 2X in size every 1.5 years.– Cost per bit: Improves about 60% per year.– 200X size in last decade.– Only 10% performance improvement per year, due to mechanical

limitations.

• Expected State-of-the-art PC by end of year 2004:– Processor clock speed: > 3600 MegaHertz (3.6 GigaHertz)– Memory capacity: > 4000 MegaByte (2 GigaBytes)– Disk capacity: > 300 GigaBytes (0.3 TeraBytes)

EECC722 - ShaabanEECC722 - Shaaban#14 Lec # 1 Fall 2004 9-6-2004

Architectural ImprovementsArchitectural Improvements• Increased optimization, utilization and size of cache systems with

multiple levels (currently the most popular approach to utilize theincreased number of available transistors) .

• Memory-latency hiding techniques.

• Optimization of pipelined instruction execution.

• Dynamic hardware-based pipeline scheduling.

• Improved handling of pipeline hazards.

• Improved hardware branch prediction techniques.

• Exploiting Instruction-Level Parallelism (ILP) in terms of multiple-instruction issue and multiple hardware functional units.

• Inclusion of special instructions to handle multimedia applications.

• High-speed system and memory bus designs to improve data transferrates and reduce latency.

EECC722 - ShaabanEECC722 - Shaaban#15 Lec # 1 Fall 2004 9-6-2004

Current Computer Architecture TopicsCurrent Computer Architecture Topics

Instruction Set Architecture

Pipelining, Hazard Resolution, Superscalar,Reordering, Branch Prediction, Speculation,VLIW, Vector, DSP, ...

Multiprocessing,Simultaneous CPU Multi-threading

Addressing,Protection,Exception Handling

L1 Cache

L2 Cache

DRAM

Disks, WORM, Tape

Coherence,Bandwidth,Latency

Emerging TechnologiesInterleavingBus protocols

RAID

VLSI

Input/Output and Storage

MemoryHierarchy

Pipelining and Instruction Level Parallelism (ILP)

Thread Level Parallelism (TLB)

EECC722 - ShaabanEECC722 - Shaaban#16 Lec # 1 Fall 2004 9-6-2004

CPU Execution Time: The CPU EquationCPU Execution Time: The CPU Equation• A program is comprised of a number of instructions, I

– Measured in: instructions/program

• The average instruction takes a number of cycles per instruction(CPI) to be completed.– Measured in: cycles/instruction– IPC (Instructions Per Cycle) = 1/CPI

• CPU has a fixed clock cycle time C = 1/clock rate– Measured in: seconds/cycle

• CPU execution time is the product of the above threeparameters as follows:

CPU Time = I x CPI x C

CPU time = Seconds = Instructions x Cycles x Seconds Program Program Instruction Cycle

CPU time = Seconds = Instructions x Cycles x Seconds Program Program Instruction Cycle

EECC722 - ShaabanEECC722 - Shaaban#17 Lec # 1 Fall 2004 9-6-2004

Factors Affecting CPU PerformanceFactors Affecting CPU PerformanceCPU time = Seconds = Instructions x Cycles x Seconds

Program Program Instruction Cycle

CPU time = Seconds = Instructions x Cycles x Seconds Program Program Instruction Cycle

CPIIPC

Clock Cycle CInstruction Count I

Program

Compiler

Organization(Micro-Architecture)

Technology

Instruction SetArchitecture (ISA)

X

X

X

X

X

X

X X

X

EECC722 - ShaabanEECC722 - Shaaban#18 Lec # 1 Fall 2004 9-6-2004

Metrics of Computer PerformanceMetrics of Computer Performance

Compiler

Programming Language

Application

DatapathControl

Transistors Wires Pins

ISA

Function UnitsCycles per second (clock rate).

Megabytes per second.

Execution time: Target workload,SPEC95, SPEC2000, etc.

Each metric has a purpose, and each can be misused.

(millions) of Instructions per second – MIPS(millions) of (F.P.) operations per second – MFLOP/s

EECC722 - ShaabanEECC722 - Shaaban#19 Lec # 1 Fall 2004 9-6-2004

SPEC: System PerformanceSPEC: System PerformanceEvaluation CooperativeEvaluation Cooperative

The most popular and industry-standard set of CPU benchmarks.

• SPECmarks, 1989:– 10 programs yielding a single number (“SPECmarks”).

• SPEC92, 1992:– SPECInt92 (6 integer programs) and SPECfp92 (14 floating point programs).

• SPEC95, 1995:– SPECint95 (8 integer programs):

• go, m88ksim, gcc, compress, li, ijpeg, perl, vortex

– SPECfp95 (10 floating-point intensive programs):• tomcatv, swim, su2cor, hydro2d, mgrid, applu, turb3d, apsi, fppp, wave5

– Performance relative to a Sun SuperSpark I (50 MHz) which is given a scoreof SPECint95 = SPECfp95 = 1

• SPEC CPU2000, 1999:– CINT2000 (11 integer programs). CFP2000 (14 floating-point intensive programs)

– Performance relative to a Sun Ultra5_10 (300 MHz) which is given a score ofSPECint2000 = SPECfp2000 = 100

EECC722 - ShaabanEECC722 - Shaaban#20 Lec # 1 Fall 2004 9-6-2004

Top 20 SPEC CPU2000 Results (As of March 2002)

# MHz Processor int peak int base MHz Processor fp peak fp base

1 1300 POWER4 814 790 1300 POWER4 1169 1098

2 2200 Pentium 4 811 790 1000 Alpha 21264C 960 776

3 2200 Pentium 4 Xeon 810 788 1050 UltraSPARC-III Cu 827 7014 1667 Athlon XP 724 697 2200 Pentium 4 Xeon 802 779

5 1000 Alpha 21264C 679 621 2200 Pentium 4 801 779

6 1400 Pentium III 664 648 833 Alpha 21264B 784 6437 1050 UltraSPARC-III Cu 610 537 800 Itanium 701 701

8 1533 Athlon MP 609 587 833 Alpha 21264A 644 571

9 750 PA-RISC 8700 604 568 1667 Athlon XP 642 59610 833 Alpha 21264B 571 497 750 PA-RISC 8700 581 526

11 1400 Athlon 554 495 1533 Athlon MP 547 504

12 833 Alpha 21264A 533 511 600 MIPS R14000 529 49913 600 MIPS R14000 500 483 675 SPARC64 GP 509 371

14 675 SPARC64 GP 478 449 900 UltraSPARC-III 482 427

15 900 UltraSPARC-III 467 438 1400 Athlon 458 42616 552 PA-RISC 8600 441 417 1400 Pentium III 456 437

17 750 POWER RS64-IV 439 409 500 PA-RISC 8600 440 397

18 700 Pentium III Xeon 438 431 450 POWER3-II 433 42619 800 Itanium 365 358 500 Alpha 21264 422 383

20 400 MIPS R12000 353 328 400 MIPS R12000 407 382

Source: http://www.aceshardware.com/SPECmine/top.jsp

Top 20 SPECfp2000Top 20 SPECint2000

EECC722 - ShaabanEECC722 - Shaaban#21 Lec # 1 Fall 2004 9-6-2004

Performance Enhancement Calculations:Performance Enhancement Calculations: Amdahl's Law Amdahl's Law

• The performance enhancement possible due to a given designimprovement is limited by the amount that the improved feature is used

• Amdahl’s Law:

Performance improvement or speedup due to enhancement E:

Execution Time without E Performance with E Speedup(E) = -------------------------------------- = --------------------------------- Execution Time with E Performance without E

– Suppose that enhancement E accelerates a fraction F of theexecution time by a factor S and the remainder of the time isunaffected then:

Execution Time with E = ((1-F) + F/S) X Execution Time without EHence speedup is given by:

Execution Time without E 1Speedup(E) = --------------------------------------------------------- = -------------------- ((1 - F) + F/S) X Execution Time without E (1 - F) + F/S

EECC722 - ShaabanEECC722 - Shaaban#22 Lec # 1 Fall 2004 9-6-2004

Pictorial Depiction of Amdahl’s LawPictorial Depiction of Amdahl’s Law

Before: Execution Time without enhancement E:

Unaffected, fraction: (1- F)

After: Execution Time with enhancement E:

Enhancement E accelerates fraction F of execution time by a factor of S

Affected fraction: F

Unaffected, fraction: (1- F) F/S

Unchanged

Execution Time without enhancement E 1Speedup(E) = ------------------------------------------------------ = ------------------ Execution Time with enhancement E (1 - F) + F/S

EECC722 - ShaabanEECC722 - Shaaban#23 Lec # 1 Fall 2004 9-6-2004

Performance Enhancement ExamplePerformance Enhancement Example• For the RISC machine with the following instruction mix given earlier:

Op Freq Cycles CPI(i) % TimeALU 50% 1 .5 23%Load 20% 5 1.0 45%Store 10% 3 .3 14%

Branch 20% 2 .4 18%

• If a CPU design enhancement improves the CPI of load instructionsfrom 5 to 2, what is the resulting performance improvement from thisenhancement:

Fraction enhanced = F = 45% or .45

Unaffected fraction = 100% - 45% = 55% or .55

Factor of enhancement = 5/2 = 2.5

Using Amdahl’s Law: 1 1Speedup(E) = ------------------ = --------------------- = 1.37 (1 - F) + F/S .55 + .45/2.5

CPI = 2.2

EECC722 - ShaabanEECC722 - Shaaban#24 Lec # 1 Fall 2004 9-6-2004

Extending Amdahl's Law To Multiple EnhancementsExtending Amdahl's Law To Multiple Enhancements

• Suppose that enhancement Ei accelerates a fraction Fi of theexecution time by a factor Si and the remainder of the time isunaffected then:

∑ ∑+−=

i ii

ii

XSFF

SpeedupTime Execution Original)1

Time Execution Original

)((

∑ ∑+−=

i ii

ii S

FFSpeedup

)( )1

1

(

Note: All fractions refer to original execution time.

EECC722 - ShaabanEECC722 - Shaaban#25 Lec # 1 Fall 2004 9-6-2004

Amdahl's Law With Multiple Enhancements:Amdahl's Law With Multiple Enhancements:ExampleExample

• Three CPU or system performance enhancements are proposed with thefollowing speedups and percentage of the code execution time affected:

Speedup1 = S1 = 10 Percentage1 = F1 = 20%

Speedup2 = S2 = 15 Percentage1 = F2 = 15%

Speedup3 = S3 = 30 Percentage1 = F3 = 10%

• While all three enhancements are in place in the new design, eachenhancement affects a different portion of the code and only oneenhancement can be used at a time.

• What is the resulting overall speedup?

• Speedup = 1 / [(1 - .2 - .15 - .1) + .2/10 + .15/15 + .1/30)] = 1 / [ .55 + .0333 ] = 1 / .5833 = 1.71

∑ ∑+−=

i ii

ii S

FFSpeedup

)( )1

1

(

EECC722 - ShaabanEECC722 - Shaaban#26 Lec # 1 Fall 2004 9-6-2004

Pictorial Depiction of ExamplePictorial Depiction of ExampleBefore: Execution Time with no enhancements: 1

After: Execution Time with enhancements: .55 + .02 + .01 + .00333 = .5833

Speedup = 1 / .5833 = 1.71

Note: All fractions refer to original execution time.

Unaffected, fraction: .55

Unchanged

Unaffected, fraction: .55 F1 = .2 F2 = .15 F3 = .1

S1 = 10 S2 = 15 S3 = 30

/ 10 / 30/ 15

EECC722 - ShaabanEECC722 - Shaaban#27 Lec # 1 Fall 2004 9-6-2004

Evolution of Instruction SetsEvolution of Instruction SetsSingle Accumulator (EDSAC 1950)

Accumulator + Index Registers(Manchester Mark I, IBM 700 series 1953)

Separation of Programming Model from Implementation

High-level Language Based Concept of a Family(B5000 1963) (IBM 360 1964)

General Purpose Register Machines

Complex Instruction Sets Load/Store Architecture

RISC

(Vax, Intel 432 1977-80) (CDC 6600, Cray 1 1963-76)

(Mips,SPARC,HP-PA,IBM RS6000, . . .1987)

EECC722 - ShaabanEECC722 - Shaaban#28 Lec # 1 Fall 2004 9-6-2004

A "Typical" RISCA "Typical" RISC

• 32-bit fixed format instruction (3 formats I,R,J)

• 32 64-bit GPRs (R0 contains zero, DP take pair)

• 32 64-bit FPRs,

• 3-address, reg-reg arithmetic instruction

• Single address mode for load/store:base + displacement

– no indirection• Simple branch conditions (based on register values)

• Delayed branch

EECC722 - ShaabanEECC722 - Shaaban#29 Lec # 1 Fall 2004 9-6-2004

A RISC ISA Example: MIPSA RISC ISA Example: MIPS

Op

31 26 01516202125

rs rt immediate

Op

31 26 025

Op

31 26 01516202125

rs rt

target

rd sa funct

Register-Register

561011

Register-Immediate

Op

31 26 01516202125

rs rt displacement

Branch

Jump / Call

EECC722 - ShaabanEECC722 - Shaaban#30 Lec # 1 Fall 2004 9-6-2004

Instruction Pipelining ReviewInstruction Pipelining Review• Instruction pipelining is CPU implementation technique where multiple

operations on a number of instructions are overlapped.

• An instruction execution pipeline involves a number of steps, where each stepcompletes a part of an instruction. Each step is called a pipeline stage or a pipelinesegment.

• The stages or steps are connected in a linear fashion: one stage to the next toform the pipeline -- instructions enter at one end and progress through the stagesand exit at the other end.

• The time to move an instruction one step down the pipeline is is equal to themachine cycle and is determined by the stage with the longest processing delay.

• Pipelining increases the CPU instruction throughput: The number of instructionscompleted per cycle.

– Under ideal conditions (no stall cycles), instruction throughput is oneinstruction per machine cycle, or ideal CPI = 1

• Pipelining does not reduce the execution time of an individual instruction: Thetime needed to complete all processing steps of an instruction (also calledinstruction completion latency).

– Minimum instruction latency = n cycles, where n is the number of pipelinestages

EECC722 - ShaabanEECC722 - Shaaban#31 Lec # 1 Fall 2004 9-6-2004

MIPS In-Order Single-Issue Integer PipelineMIPS In-Order Single-Issue Integer PipelineIdeal OperationIdeal Operation

Clock Number Time in clock cycles →Instruction Number 1 2 3 4 5 6 7 8 9

Instruction I IF ID EX MEM WB

Instruction I+1 IF ID EX MEM WB

Instruction I+2 IF ID EX MEM WB

Instruction I+3 IF ID EX MEM WBInstruction I +4 IF ID EX MEM WB

Time to fill the pipeline

MIPS Pipeline Stages:

IF = Instruction Fetch

ID = Instruction Decode

EX = Execution

MEM = Memory Access

WB = Write Back

First instruction, ICompleted

Last instruction, I+4 completed

5 pipeline stages Ideal CPI =1

EECC722 - ShaabanEECC722 - Shaaban#32 Lec # 1 Fall 2004 9-6-2004

A Pipelined MIPS DatapathA Pipelined MIPS Datapath• Obtained from multi-cycle MIPS datapath by adding buffer registers between pipeline stages• Assume register writes occur in first half of cycle and register reads occur in second half.

EECC722 - ShaabanEECC722 - Shaaban#33 Lec # 1 Fall 2004 9-6-2004

Pipeline HazardsPipeline Hazards• Hazards are situations in pipelining which prevent the next

instruction in the instruction stream from executing duringthe designated clock cycle.

• Hazards reduce the ideal speedup gained from pipeliningand are classified into three classes:

– Structural hazards: Arise from hardware resourceconflicts when the available hardware cannot support allpossible combinations of instructions.

– Data hazards: Arise when an instruction depends onthe results of a previous instruction in a way that isexposed by the overlapping of instructions in thepipeline

– Control hazards: Arise from the pipelining of conditionalbranches and other instructions that change the PC

EECC722 - ShaabanEECC722 - Shaaban#34 Lec # 1 Fall 2004 9-6-2004

Performance of Pipelines with StallsPerformance of Pipelines with Stalls

• Hazards in pipelines may make it necessary to stall the pipelineby one or more cycles and thus degrading performance from theideal CPI of 1.

CPI pipelined = Ideal CPI + Pipeline stall clock cycles per instruction

• If pipelining overhead is ignored (no change in clock cycle) and weassume that the stages are perfectly balanced then:

Speedup = CPI un-pipelined / CPI pipelined

= CPI un-pipelined / (1 + Pipeline stall cycles per instruction)

EECC722 - ShaabanEECC722 - Shaaban#35 Lec # 1 Fall 2004 9-6-2004

MIPS with MemoryMIPS with MemoryUnit Structural HazardsUnit Structural Hazards

EECC722 - ShaabanEECC722 - Shaaban#36 Lec # 1 Fall 2004 9-6-2004

Resolving A StructuralResolving A StructuralHazard with StallingHazard with Stalling

EECC722 - ShaabanEECC722 - Shaaban#37 Lec # 1 Fall 2004 9-6-2004

Data HazardsData Hazards• Data hazards occur when the pipeline changes the order of

read/write accesses to instruction operands in such a way thatthe resulting access order differs from the original sequentialinstruction operand access order of the unpipelined machineresulting in incorrect execution.

• Data hazards usually require one or more instructions to bestalled to ensure correct execution.

• Example: DADD R1, R2, R3

DSUB R4, R1, R5

AND R6, R1, R7

OR R8,R1,R9

XOR R10, R1, R11

– All the instructions after DADD use the result of the DADD instruction

– DSUB, AND instructions need to be stalled for correct execution.

EECC722 - ShaabanEECC722 - Shaaban#38 Lec # 1 Fall 2004 9-6-2004

Figure A.6 The use of the result of the DADD instruction in the next three instructionscauses a hazard, since the register is not written until after those instructions read it.

Data Data Hazard ExampleHazard Example

EECC722 - ShaabanEECC722 - Shaaban#39 Lec # 1 Fall 2004 9-6-2004

Minimizing Data hazard Stalls by ForwardingMinimizing Data hazard Stalls by Forwarding• Forwarding is a hardware-based technique (also called register

bypassing or short-circuiting) used to eliminate or minimizedata hazard stalls.

• Using forwarding hardware, the result of an instruction is copieddirectly from where it is produced (ALU, memory read portetc.), to where subsequent instructions need it (ALU inputregister, memory write port etc.)

• For example, in the MIPS pipeline with forwarding:– The ALU result from the EX/MEM register may be forwarded or fed

back to the ALU input latches as needed instead of the registeroperand value read in the ID stage.

– Similarly, the Data Memory Unit result from the MEM/WB registermay be fed back to the ALU input latches as needed .

– If the forwarding hardware detects that a previous ALU operation is towrite the register corresponding to a source for the current ALUoperation, control logic selects the forwarded result as the ALU inputrather than the value read from the register file.

EECC722 - ShaabanEECC722 - Shaaban#40 Lec # 1 Fall 2004 9-6-2004

MIPS Pipeline MIPS Pipelinewith Forwardingwith Forwarding

A set of instructions that depend on the DADD result uses forwarding paths to avoid the data hazard

EECC722 - ShaabanEECC722 - Shaaban#41 Lec # 1 Fall 2004 9-6-2004

Data Hazard ClassificationData Hazard ClassificationI (Write)

Shared

Operand

J (Read)

Read after Write (RAW)

I (Read)

Shared

Operand

J (Write)

Write after Read (WAR)

I (Write)

Shared

Operand

J (Write)

Write after Write (WAW)

I (Read)

Shared

Operand

J (Read)

Read after Read (RAR) not a hazard

EECC722 - ShaabanEECC722 - Shaaban#42 Lec # 1 Fall 2004 9-6-2004

Control HazardsControl Hazards

Branch instruction IF ID EX MEM WBBranch successor IF stall stall IF ID EX MEM WBBranch successor + 1 IF ID EX MEM WB Branch successor + 2 IF ID EX MEMBranch successor + 3 IF ID EXBranch successor + 4 IF IDBranch successor + 5 IF

Assuming we stall on a branch instruction: Three clock cycles are wasted for every branch for current MIPS pipeline

• When a conditional branch is executed it may change the PCand, without any special measures, leads to stalling the pipelinefor a number of cycles until the branch condition is known.

• In current MIPS pipeline, the conditional branch is resolved inthe MEM stage resulting in three stall cycles as shown below:

EECC722 - ShaabanEECC722 - Shaaban#43 Lec # 1 Fall 2004 9-6-2004

Reducing Branch Stall CyclesReducing Branch Stall CyclesPipeline hardware measures to reduce branch stall cycles:

1- Find out whether a branch is taken earlier in the pipeline. 2- Compute the taken PC earlier in the pipeline.

In MIPS:

– In MIPS branch instructions BEQZ, BNE, test a registerfor equality to zero.

– This can be completed in the ID cycle by moving the zerotest into that cycle.

– Both PCs (taken and not taken) must be computed early.

– Requires an additional adder because the current ALU isnot useable until EX cycle.

– This results in just a single cycle stall on branches.

EECC722 - ShaabanEECC722 - Shaaban#44 Lec # 1 Fall 2004 9-6-2004

Modified MIPS Pipeline:Modified MIPS Pipeline: Conditional Branches Conditional Branches Completed in ID Stage Completed in ID Stage

EECC722 - ShaabanEECC722 - Shaaban#45 Lec # 1 Fall 2004 9-6-2004

Pipeline Performance ExamplePipeline Performance Example• Assume the following MIPS instruction mix:

• What is the resulting CPI for the pipelined MIPS withforwarding and branch address calculation in ID stagewhen using a branch not-taken scheme?

• CPI = Ideal CPI + Pipeline stall clock cycles per instruction

= 1 + stalls by loads + stalls by branches

= 1 + .3 x .25 x 1 + .2 x .45 x 1

= 1 + .075 + .09

= 1.165

Type FrequencyArith/Logic 40%Load 30% of which 25% are followed immediately by an instruction using the loaded valueStore 10%branch 20% of which 45% are taken

EECC722 - ShaabanEECC722 - Shaaban#46 Lec # 1 Fall 2004 9-6-2004

Pipelining and ExploitingPipelining and ExploitingInstruction-Level Parallelism (ILP)Instruction-Level Parallelism (ILP)

• Pipelining increases performance by overlapping the executionof independent instructions.

• The CPI of a real-life pipeline is given by (assuming idealmemory):

Pipeline CPI = Ideal Pipeline CPI + Structural Stalls + RAW Stalls

+ WAR Stalls + WAW Stalls + Control Stalls

• A basic instruction block is a straight-line code sequence with nobranches in, except at the entry point, and no branches outexcept at the exit point of the sequence .

• The amount of parallelism in a basic block is limited byinstruction dependence present and size of the basic block.

• In typical integer code, dynamic branch frequency is about 15%(average basic block size of 7 instructions).

EECC722 - ShaabanEECC722 - Shaaban#47 Lec # 1 Fall 2004 9-6-2004

Increasing Instruction-Level ParallelismIncreasing Instruction-Level Parallelism• A common way to increase parallelism among instructions

is to exploit parallelism among iterations of a loop– (i.e Loop Level Parallelism, LLP).

• This is accomplished by unrolling the loop either staticallyby the compiler, or dynamically by hardware, whichincreases the size of the basic block present.

• In this loop every iteration can overlap with any otheriteration. Overlap within each iteration is minimal.

for (i=1; i<=1000; i=i+1;)

x[i] = x[i] + y[i];

• In vector machines, utilizing vector instructions is animportant alternative to exploit loop-level parallelism,

• Vector instructions operate on a number of data items. Theabove loop would require just four such instructions.

EECC722 - ShaabanEECC722 - Shaaban#48 Lec # 1 Fall 2004 9-6-2004

MIPS Loop Unrolling ExampleMIPS Loop Unrolling Example• For the loop:

for (i=1000; i>0; i=i-1)

x[i] = x[i] + s;

The straightforward MIPS assembly code is given by:

Loop: L.D F0, 0 (R1) ;F0=array element

ADD.D F4, F0, F2 ;add scalar in F2

S.D F4, 0(R1) ;store result

DADDUI R1, R1, # -8 ;decrement pointer 8 bytes

BNE R1, R2,Loop ;branch R1!=R2

R1 is initially the address of the element with highest address.8(R2) is the address of the last element to operate on.

EECC722 - ShaabanEECC722 - Shaaban#49 Lec # 1 Fall 2004 9-6-2004

MIPS FP LatencyMIPS FP LatencyAssumptionsAssumptions

• All FP units assumed to be pipelined.

• The following FP operations latencies are used:

Instruction Producing Result

FP ALU Op

FP ALU Op

Load Double

Load Double

Instruction Using Result

Another FP ALU Op

Store Double

FP ALU Op

Store Double

Latency InClock Cycles

3

2

1

0

EECC722 - ShaabanEECC722 - Shaaban#50 Lec # 1 Fall 2004 9-6-2004

Loop Unrolling Example (continued)Loop Unrolling Example (continued)

• This loop code is executed on the MIPS pipeline as follows:

With delayed branch scheduling

Loop: L.D F0, 0(R1) DADDUI R1, R1, # -8 ADD.D F4, F0, F2 stall BNE R1,R2, Loop S.D F4,8(R1)

6 cycles per iteration

No scheduling Clock cycle

Loop: L.D F0, 0(R1) 1

stall 2

ADD.D F4, F0, F2 3

stall 4

stall 5

S.D F4, 0 (R1) 6

DADDUI R1, R1, # -8 7

stall 8

BNE R1,R2, Loop 9

stall 10

10 cycles per iteration10/6 = 1.7 times faster

EECC722 - ShaabanEECC722 - Shaaban#51 Lec # 1 Fall 2004 9-6-2004

Loop Unrolling Example (continued)Loop Unrolling Example (continued)• The resulting loop code when four copies of the loop body

are unrolled without reuse of registers:

No schedulingLoop: L.D F0, 0(R1) ADD.D F4, F0, F2 SD F4,0 (R1) ; drop DADDUI & BNE

LD F6, -8(R1) ADDD F8, F6, F2 SD F8, -8 (R1), ; drop DADDUI & BNE

LD F10, -16(R1) ADDD F12, F10, F2 SD F12, -16 (R1) ; drop DADDUI & BNE

LD F14, -24 (R1) ADDD F16, F14, F2 SD F16, -24(R1) DADDUI R1, R1, # -32 BNE R1, R2, Loop

Three branches and threedecrements of R1 are eliminated.

Load and store addresses arechanged to allow DADDUIinstructions to be merged.

The loop runs in 28 assuming eachL.D has 1 stall cycle, each ADD.Dhas 2 stall cycles, the DADDUI 1stall, the branch 1 stall cycles, or7 cycles for each of the fourelements.

EECC722 - ShaabanEECC722 - Shaaban#52 Lec # 1 Fall 2004 9-6-2004

Loop Unrolling Example (continued)Loop Unrolling Example (continued) When scheduled for pipeline

Loop: L.D F0, 0(R1) L.D F6,-8 (R1) L.D F10, -16(R1) L.D F14, -24(R1) ADD.D F4, F0, F2 ADD.D F8, F6, F2 ADD.D F12, F10, F2 ADD.D F16, F14, F2 S.D F4, 0(R1) S.D F8, -8(R1) DADDUI R1, R1,# -32 S.D F12, -16(R1),F12 BNE R1,R2, Loop S.D F16, 8(R1), F16 ;8-32 = -24

The execution time of the loophas dropped to 14 cycles, or 3.5 clock cycles per element

compared to 6.8 before schedulingand 6 when scheduled but unrolled.

Unrolling the loop exposed more computation that can be scheduled to minimize stalls.

EECC722 - ShaabanEECC722 - Shaaban#53 Lec # 1 Fall 2004 9-6-2004

Loop-Level Parallelism (LLP) AnalysisLoop-Level Parallelism (LLP) Analysis• Loop-Level Parallelism (LLP) analysis focuses on whether data

accesses in later iterations of a loop are data dependent on datavalues produced in earlier iterations.

e.g. in for (i=1; i<=1000; i++)

x[i] = x[i] + s;

the computation in each iteration is independent of the previousiterations and the loop is thus parallel. The use of X[i] twice is withina single iteration.

⇒Thus loop iterations are parallel (or independent from each other).

• Loop-carried Dependence: A data dependence between differentloop iterations (data produced in earlier iteration used in a later one).

• LLP analysis is normally done at the source code level or close to itsince assembly language and target machine code generationintroduces a loop-carried name dependence in the registers used foraddressing and incrementing.

• Instruction level parallelism (ILP) analysis, on the other hand, isusually done when instructions are generated by the compiler.

EECC722 - ShaabanEECC722 - Shaaban#54 Lec # 1 Fall 2004 9-6-2004

LLP Analysis Example 1LLP Analysis Example 1• In the loop:

for (i=1; i<=100; i=i+1) {

A[i+1] = A[i] + C[i]; /* S1 */

B[i+1] = B[i] + A[i+1];} /* S2 */

} (Where A, B, C are distinct non-overlapping arrays)

– S2 uses the value A[i+1], computed by S1 in the same iteration. Thisdata dependence is within the same iteration (not a loop-carrieddependence).

⇒ does not prevent loop iteration parallelism.

– S1 uses a value computed by S1 in an earlier iteration, since iteration icomputes A[i+1] read in iteration i+1 (loop-carried dependence,prevents parallelism). The same applies for S2 for B[i] and B[i+1]

⇒These two dependences are loop-carried spanning more than one iterationpreventing loop parallelism.

EECC722 - ShaabanEECC722 - Shaaban#55 Lec # 1 Fall 2004 9-6-2004

LLP Analysis Example 2LLP Analysis Example 2• In the loop:

for (i=1; i<=100; i=i+1) {

A[i] = A[i] + B[i]; /* S1 */

B[i+1] = C[i] + D[i]; /* S2 */

}

– S1 uses the value B[i] computed by S2 in the previous iteration (loop-carried dependence)

– This dependence is not circular:• S1 depends on S2 but S2 does not depend on S1.

– Can be made parallel by replacing the code with the following:

A[1] = A[1] + B[1];

for (i=1; i<=99; i=i+1) {

B[i+1] = C[i] + D[i];

A[i+1] = A[i+1] + B[i+1];

}

B[101] = C[100] + D[100];

Loop Start-up code

Loop Completion code

EECC722 - ShaabanEECC722 - Shaaban#56 Lec # 1 Fall 2004 9-6-2004

LLP Analysis Example 2LLP Analysis Example 2

Original Loop:

A[100] = A[100] + B[100];

B[101] = C[100] + D[100];

A[1] = A[1] + B[1];

B[2] = C[1] + D[1];

A[2] = A[2] + B[2];

B[3] = C[2] + D[2];

A[99] = A[99] + B[99];

B[100] = C[99] + D[99];

A[100] = A[100] + B[100];

B[101] = C[100] + D[100];

A[1] = A[1] + B[1];

B[2] = C[1] + D[1];

A[2] = A[2] + B[2];

B[3] = C[2] + D[2];

A[99] = A[99] + B[99];

B[100] = C[99] + D[99];

for (i=1; i<=100; i=i+1) { A[i] = A[i] + B[i]; /* S1 */ B[i+1] = C[i] + D[i]; /* S2 */ }

A[1] = A[1] + B[1]; for (i=1; i<=99; i=i+1) { B[i+1] = C[i] + D[i]; A[i+1] = A[i+1] + B[i+1]; } B[101] = C[100] + D[100];

Modified Parallel Loop:

Iteration 1 Iteration 2 Iteration 100Iteration 99

Loop-carried Dependence

Loop Start-up code

Loop Completion code

Iteration 1Iteration 98 Iteration 99

Not LoopCarried Dependence

. . . . . .

. . . . . .

. . . .

EECC722 - ShaabanEECC722 - Shaaban#57 Lec # 1 Fall 2004 9-6-2004

Reduction of Data Hazards StallsReduction of Data Hazards Stallswith Dynamic Schedulingwith Dynamic Scheduling

• So far we have dealt with data hazards in instruction pipelines by:

– Result forwarding and bypassing to reduce latency and hide orreduce the effect of true data dependence.

– Hazard detection hardware to stall the pipeline starting with theinstruction that uses the result.

– Compiler-based static pipeline scheduling to separate the dependentinstructions minimizing actual hazards and stalls in scheduled code.

• Dynamic scheduling:– Uses a hardware-based mechanism to rearrange instruction

execution order to reduce stalls at runtime.

– Enables handling some cases where dependencies are unknown atcompile time.

– Similar to the other pipeline optimizations above, a dynamicallyscheduled processor cannot remove true data dependencies, but triesto avoid or reduce stalling.

EECC722 - ShaabanEECC722 - Shaaban#58 Lec # 1 Fall 2004 9-6-2004

Dynamic Pipeline Scheduling:Dynamic Pipeline Scheduling: The ConceptThe Concept

• Dynamic pipeline scheduling overcomes the limitations of in-orderexecution by allowing out-of-order instruction execution.

• Instruction are allowed to start executing out-of-order as soon astheir operands are available.

Example:

• This implies allowing out-of-order instruction commit (completion).

• May lead to imprecise exceptions if an instruction issued earlierraises an exception.

• This is similar to pipelines with multi-cycle floating point units.

In the case of in-order execution SUBD must wait for DIVD to complete which stalled ADDD before starting executionIn out-of-order execution SUBD can start as soon as the values of its operands F8, F14 are available.

DIVD F0, F2, F4

ADDD F10, F0, F8

SUBD F12, F8, F14

EECC722 - ShaabanEECC722 - Shaaban#59 Lec # 1 Fall 2004 9-6-2004

Dynamic Scheduling:Dynamic Scheduling:The Tomasulo AlgorithmThe Tomasulo Algorithm

• Developed at IBM and first implemented in IBM’s 360/91mainframe in 1966, about 3 years after the debut of the scoreboardin the CDC 6600.

• Dynamically schedule the pipeline in hardware to reduce stalls.

• Differences between IBM 360 & CDC 6600 ISA.

– IBM has only 2 register specifiers/instr vs. 3 in CDC 6600.– IBM has 4 FP registers vs. 8 in CDC 6600.

• Current CPU architectures that can be considered descendants ofthe IBM 360/91 which implement and utilize a variation of theTomasulo Algorithm include:

RISC CPUs: Alpha 21264, HP 8600, MIPS R12000, PowerPC G4

RISC-core x86 CPUs: AMD Athlon, Pentium III, 4, Xeon ….

EECC722 - ShaabanEECC722 - Shaaban#60 Lec # 1 Fall 2004 9-6-2004

Dynamic Scheduling: The Tomasulo ApproachDynamic Scheduling: The Tomasulo Approach

The basic structure of a MIPS floating-point unit using Tomasulo’s algorithm

EECC722 - ShaabanEECC722 - Shaaban#61 Lec # 1 Fall 2004 9-6-2004

Reservation Station FieldsReservation Station Fields• Op Operation to perform in the unit (e.g., + or –)

• Vj, Vk Value of Source operands S1 and S2– Store buffers have a single V field indicating result to be

stored.

• Qj, Qk Reservation stations producing source registers.(value to be written).– No ready flags as in Scoreboard; Qj,Qk=0 => ready.– Store buffers only have Qi for RS producing result.

• A: Address information for loads or stores. Initially immediatefield of instruction then effective address when calculated.

• Busy: Indicates reservation station and FU are busy.

• Register result status: Qi Indicates which functional unit willwrite each register, if one exists.– Blank (or 0) when no pending instructions exist that will

write to that register.

EECC722 - ShaabanEECC722 - Shaaban#62 Lec # 1 Fall 2004 9-6-2004

Three Stages of Tomasulo AlgorithmThree Stages of Tomasulo Algorithm1 Issue: Get instruction from pending Instruction Queue.

– Instruction issued to a free reservation station (no structural hazard).– Selected RS is marked busy.– Control sends available instruction operands values (from ISA registers)

to assigned RS.– Operands not available yet are renamed to RSs that will produce the

operand (register renaming).

2 Execution (EX): Operate on operands.– When both operands are ready then start executing on assigned FU.– If all operands are not ready, watch Common Data Bus (CDB) for needed

result (forwarding done via CDB).

3 Write result (WB): Finish execution.– Write result on Common Data Bus to all awaiting units– Mark reservation station as available.

• Normal data bus: data + destination (“go to” bus).

• Common Data Bus (CDB): data + source (“come from” bus):– 64 bits for data + 4 bits for Functional Unit source address.– Write data to waiting RS if source matches expected RS (that produces result).– Does the result forwarding via broadcast to waiting RSs.

EECC722 - ShaabanEECC722 - Shaaban#63 Lec # 1 Fall 2004 9-6-2004

Dynamic Conditional Branch PredictionDynamic Conditional Branch Prediction• Dynamic branch prediction schemes are different from static

mechanisms because they use the run-time behavior of branches to makemore accurate predictions than possible using static prediction.

• Usually information about outcomes of previous occurrences of a givenbranch (branching history) is used to predict the outcome of the currentoccurrence. Some of the proposed dynamic branch predictionmechanisms include:

– One-level or Bimodal: Uses a Branch History Table (BHT), a tableof usually two-bit saturating counters which is indexed by a portionof the branch address (low bits of address).

– Two-Level Adaptive Branch Prediction.

– MCFarling’s Two-Level Prediction with index sharing (gshare).

– Hybrid or Tournament Predictors: Uses a combinations of two ormore (usually two) branch prediction mechanisms.

• To reduce the stall cycles resulting from correctly predicted takenbranches to zero cycles, a Branch Target Buffer (BTB) that includes theaddresses of conditional branches that were taken along with theirtargets is added to the fetch stage.

EECC722 - ShaabanEECC722 - Shaaban#64 Lec # 1 Fall 2004 9-6-2004

Branch Target Buffer (BTB)Branch Target Buffer (BTB)• Effective branch prediction requires the target of the branch at an early pipeline

stage.

• One can use additional adders to calculate the target, as soon as the branchinstruction is decoded. This would mean that one has to wait until the ID stagebefore the target of the branch can be fetched, taken branches would be fetchedwith a one-cycle penalty (this was done in the enhanced MIPS pipeline Fig A.24).

• To avoid this problem one can use a Branch Target Buffer (BTB). A typical BTBis an associative memory where the addresses of taken branch instructions arestored together with their target addresses.

• Some designs store n prediction bits as well, implementing a combined BTB andBHT.

• Instructions are fetched from the target stored in the BTB in case the branch ispredicted-taken and found in BTB. After the branch has been resolved the BTBis updated. If a branch is encountered for the first time a new entry is createdonce it is resolved.

• Branch Target Instruction Cache (BTIC): A variation of BTB which cachesalso the code of the branch target instruction in addition to its address. Thiseliminates the need to fetch the target instruction from the instruction cache orfrom memory.

EECC722 - ShaabanEECC722 - Shaaban#65 Lec # 1 Fall 2004 9-6-2004

Basic Branch Target Buffer (BTB)Basic Branch Target Buffer (BTB)

EECC722 - ShaabanEECC722 - Shaaban#66 Lec # 1 Fall 2004 9-6-2004

EECC722 - ShaabanEECC722 - Shaaban#67 Lec # 1 Fall 2004 9-6-2004

One-Level Bimodal Branch PredictorsOne-Level Bimodal Branch Predictors

• One-level or bimodal branch prediction uses only one level of branchhistory.

• These mechanisms usually employ a table which is indexed by lowerbits of the branch address.

• The table entry consists of n history bits, which form an n-bitautomaton or saturating counters.

• Smith proposed such a scheme, known as the Smith algorithm, thatuses a table of two-bit saturating counters.

• One rarely finds the use of more than 3 history bits in the literature.

• Two variations of this mechanism:– Decode History Table: Consists of directly mapped entries.

– Branch History Table (BHT): Stores the branch address as a tag. Itis associative and enables one to identify the branch instructionduring IF by comparing the address of an instruction with thestored branch addresses in the table (similar to BTB).

EECC722 - ShaabanEECC722 - Shaaban#68 Lec # 1 Fall 2004 9-6-2004

One-Level Bimodal Branch PredictorsOne-Level Bimodal Branch PredictorsDecode History Table (DHT)Decode History Table (DHT)

N Low Bits of

Table has 2N entries.

0 00 11 01 1

Not Taken

Taken

High bit determines branch prediction0 = Not Taken1 = Taken

Example:

For N =12Table has 2N = 212 entries = 4096 = 4k entries

Number of bits needed = 2 x 4k = 8k bits

EECC722 - ShaabanEECC722 - Shaaban#69 Lec # 1 Fall 2004 9-6-2004

Prediction AccuracyPrediction Accuracyof A 4096-Entry Basicof A 4096-Entry BasicDynamic Two-BitDynamic Two-BitBranch PredictorBranch Predictor

Integer average 11%FP average 4%

Integer

EECC722 - ShaabanEECC722 - Shaaban#70 Lec # 1 Fall 2004 9-6-2004

Correlating BranchesCorrelating BranchesRecent branches are possibly correlated: The behavior ofrecently executed branches affects prediction of currentbranch.

Example:

Branch B3 is correlated with branches B1, B2. If B1, B2 areboth not taken, then B3 will be taken. Using only the behaviorof one branch cannot detect this behavior.

B1 if (aa==2) aa=0;B2 if (bb==2)

bb=0;

B3 if (aa!==bb){

DSUBUI R3, R1, #2 BENZ R3, L1 ; b1 (aa!=2) DADD R1, R0, R0 ; aa==0L1: DSUBUI R3, R1, #2 BNEZ R3, L2 ; b2 (bb!=2) DADD R2, R0, R0 ; bb==0L2: DSUBUI R3, R1, R2 ; R3=aa-bb BEQZ R3, L3 ; b3 (aa==bb)

EECC722 - ShaabanEECC722 - Shaaban#71 Lec # 1 Fall 2004 9-6-2004

Correlating Two-Level Dynamic Correlating Two-Level Dynamic GApGAp Branch Predictors Branch Predictors• Improve branch prediction by looking not only at the history of the branch in

question but also at that of other branches using two levels of branch history.

• Uses two levels of branch history:

– First level (global):

• Record the global pattern or history of the m most recently executedbranches as taken or not taken. Usually an m-bit shift register.

– Second level (per branch address):

• 2m prediction tables, each table entry has n bit saturating counter.

• The branch history pattern from first level is used to select the properbranch prediction table in the second level.

• The low N bits of the branch address are used to select the correctprediction entry within a the selected table, thus each of the 2m tableshas 2N entries and each entry is 2 bits counter.

• Total number of bits needed for second level = 2m x n x 2N bits

• In general, the notation: (m,n) GAp predictor means:

– Record last m branches to select between 2m history tables.

– Each second level table uses n-bit counters (each table entry has n bits).

• Basic two-bit single-level Bimodal BHT is then a (0,2) predictor.

EECC722 - ShaabanEECC722 - Shaaban#72 Lec # 1 Fall 2004 9-6-2004

Organization of A Correlating Two-level GAp (2,2) Branch Predictor

First Level(2 bit shift register)

Second Level

m = # of branches tracked in first level = 2Thus 2m = 22 = 4 tables in second level

N = # of low bits of branch address used = 4Thus each table in 2nd level has 2N = 24 = 16entries

n = # number of bits of 2nd level table entry = 2

Number of bits for 2nd level = 2m x n x 2N

= 4 x 2 x 16 = 128 bits

High bit determines branch prediction0 = Not Taken1 = Taken

Low 4 bits of address

Selects correct table

Selects correct entry in table

GAp

Global(1st level) Adaptive

per address(2nd level)

EECC722 - ShaabanEECC722 - Shaaban#73 Lec # 1 Fall 2004 9-6-2004

Prediction AccuracyPrediction Accuracyof Two-Bit Dynamicof Two-Bit DynamicPredictors UnderPredictors UnderSPEC89SPEC89

BasicBasic BasicBasic Correlating Correlating Two-levelTwo-level

GAp

EECC722 - ShaabanEECC722 - Shaaban#74 Lec # 1 Fall 2004 9-6-2004

Multiple Instruction Issue: CPI < 1Multiple Instruction Issue: CPI < 1• To improve a pipeline’s CPI to be better [less] than one, and to utilize ILP

better, a number of independent instructions have to be issued in the samepipeline cycle.

• Multiple instruction issue processors are of two types:

– Superscalar: A number of instructions (2-8) is issued in the samecycle, scheduled statically by the compiler or dynamically(Tomasulo).

• PowerPC, Sun UltraSparc, Alpha, HP 8000 ...

– VLIW (Very Long Instruction Word): A fixed number of instructions (3-6) are formatted as one long

instruction word or packet (statically scheduled by the compiler).– Joint HP/Intel agreement (Itanium, Q4 2000).

– Intel Architecture-64 (IA-64) 64-bit address:

• Explicitly Parallel Instruction Computer (EPIC): Itanium.

• Limitations of the approaches:– Available ILP in the program (both).– Specific hardware implementation difficulties (superscalar).– VLIW optimal compiler design issues.

EECC722 - ShaabanEECC722 - Shaaban#75 Lec # 1 Fall 2004 9-6-2004

• Two instructions can be issued per cycle (two-issue superscalar).

• One of the instructions is integer (including load/store, branch). The other instructionis a floating-point operation.

– This restriction reduces the complexity of hazard checking.

• Hardware must fetch and decode two instructions per cycle.

• Then it determines whether zero (a stall), one or two instructions can be issued percycle.

Simple Statically ScheduledSimple Statically Scheduled Superscalar Superscalar Pipeline Pipeline

MEM

EX

EX

EX

ID

ID

IF

IF

EX

EX

ID

ID

IF

IF

WB

WB

EX

MEM

EX

EX

EX

WB

WB

EX

MEM

EX

WB

WB

EX

ID

ID

IF

IF

WB

EX

MEM

EX

EX

EX

ID

ID

IF

IF

Integer Instruction

Integer Instruction

Integer Instruction

Integer Instruction

FP Instruction

FP Instruction

FP Instruction

FP Instruction

1 2 3 4 5 6 7 8Instruction Type

Two-issue statically scheduled pipeline in operationTwo-issue statically scheduled pipeline in operationFP instructions assumed to be addsFP instructions assumed to be adds

EECC722 - ShaabanEECC722 - Shaaban#76 Lec # 1 Fall 2004 9-6-2004

Intel/HP VLIW “Explicitly ParallelIntel/HP VLIW “Explicitly ParallelInstruction Computing (EPIC)”Instruction Computing (EPIC)”

• Three instructions in 128 bit “Groups”; instruction templatefields determines if instructions are dependent or independent– Smaller code size than old VLIW, larger than x86/RISC– Groups can be linked to show dependencies of more than three

instructions.

• 128 integer registers + 128 floating point registers– No separate register files per functional unit as in old VLIW.

• Hardware checks dependencies (interlocks ⇒ binary compatibility over time)

• Predicated execution: An implementation of conditionalinstructions used to reduce the number of conditional branchesused in the generated code ⇒ larger basic block size

• IA-64 : Name given to instruction set architecture (ISA).• Itanium : Name of the first implementation (2001).

EECC722 - ShaabanEECC722 - Shaaban#77 Lec # 1 Fall 2004 9-6-2004

Intel/HP EPIC VLIW ApproachIntel/HP EPIC VLIW Approachoriginal sourceoriginal source

codecode

ExposeExposeInstructionInstructionParallelismParallelism

OptimizeOptimizeExploitExploit

Parallelism:Parallelism:GenerateGenerate

VLIWsVLIWs

compilercompiler

Instruction DependencyInstruction DependencyAnalysisAnalysis

Instruction 2Instruction 2 Instruction 1Instruction 1 Instruction 0Instruction 0 TemplateTemplate

128-bit bundle128-bit bundle

00127127

EECC722 - ShaabanEECC722 - Shaaban#78 Lec # 1 Fall 2004 9-6-2004

Unrolled Loop Example for Scalar PipelineUnrolled Loop Example for Scalar Pipeline

1 Loop: L.D F0,0(R1)2 L.D F6,-8(R1)3 L.D F10,-16(R1)4 L.D F14,-24(R1)5 ADD.D F4,F0,F26 ADD.D F8,F6,F27 ADD.D F12,F10,F28 ADD.D F16,F14,F29 S.D F4,0(R1)10 S.D F8,-8(R1)11 DADDUI R1,R1,#-3212 S.D F12,-16(R1)13 BNE R1,R2,LOOP14 S.D F16,8(R1) ; 8-32 = -24

14 clock cycles, or 3.5 per iteration

L.D to ADD.D: 1 CycleADD.D to S.D: 2 Cycles

EECC722 - ShaabanEECC722 - Shaaban#79 Lec # 1 Fall 2004 9-6-2004

Loop Unrolling in Superscalar Pipeline:Loop Unrolling in Superscalar Pipeline:(1 Integer, 1 FP/Cycle)(1 Integer, 1 FP/Cycle)

Integer instruction FP instruction Clock cycle

Loop: L.D F0,0(R1) 1

L.D F6,-8(R1) 2

L.D F10,-16(R1) ADD.D F4,F0,F2 3

L.D F14,-24(R1) ADD.D F8,F6,F2 4

L.D F18,-32(R1) ADD.D F12,F10,F2 5

S.D F4,0(R1) ADD.D F16,F14,F2 6

S.D F8,-8(R1) ADD.D F20,F18,F2 7

S.D F12,-16(R1) 8

DADDUI R1,R1,#-40 9

S.D F16,-24(R1) 10

BNE R1,R2,LOOP 11

SD -32(R1),F20 12

• Unrolled 5 times to avoid delays (+1 due to SS)• 12 clocks, or 2.4 clocks per iteration (1.5X)• 7 issue slots wasted

EECC722 - ShaabanEECC722 - Shaaban#80 Lec # 1 Fall 2004 9-6-2004

Loop Unrolling in VLIW PipelineLoop Unrolling in VLIW Pipeline(2 Memory, 2 FP, 1 Integer / Cycle)(2 Memory, 2 FP, 1 Integer / Cycle)

Memory Memory FP FP Int. op/ Clockreference 1 reference 2 operation 1 op. 2 branchL.D F0,0(R1) L.D F6,-8(R1) 1

L.D F10,-16(R1) L.D F14,-24(R1) 2

L.D F18,-32(R1) L.D F22,-40(R1) ADD.D F4,F0,F2 ADD.D F8,F6,F2 3

L.D F26,-48(R1) ADD.D F12,F10,F2 ADD.D F16,F14,F2 4

ADD.D F20,F18,F2 ADD.D F24,F22,F2 5

S.D F4,0(R1) S.D F8, -8(R1) ADD.D F28,F26,F2 6

S.D F12, -16(R1) S.D F16,-24(R1) DADDUI R1,R1,#-56 7

S.D F20, 24(R1) S.D F24,16(R1) 8

S.D F28, 8(R1) BNE R1,R2,LOOP 9

Unrolled 7 times to avoid delays 7 results in 9 clocks, or 1.3 clocks per iteration (1.8X) Average: 2.5 ops per clock, 50% efficiency Note: Needs more registers in VLIW (15 vs. 6 in Superscalar)

EECC722 - ShaabanEECC722 - Shaaban#81 Lec # 1 Fall 2004 9-6-2004

SuperscalarSuperscalar Dynamic Scheduling Dynamic Scheduling• How to issue two instructions and keep in-order instruction

issue for Tomasulo?

– Assume: 1 integer + 1 floating-point operations.

– 1 Tomasulo control for integer, 1 for floating point.

• Issue at 2X Clock Rate, so that issue remains in order.

• Only FP loads might cause a dependency between integer andFP issue:– Replace load reservation station with a load queue;

operands must be read in the order they are fetched.

– Load checks addresses in Store Queue to avoid RAWviolation

– Store checks addresses in Load Queue to avoid WAR,WAW.

• Called “Decoupled Architecture”

EECC722 - ShaabanEECC722 - Shaaban#82 Lec # 1 Fall 2004 9-6-2004

• Empty or wasted issue slots can be defined as either vertical waste orhorizontal waste:

– Vertical waste is introduced when the processor issues noinstructions in a cycle.

– Horizontal waste occurs when not all issue slots can be filledin a cycle.

SuperscalarSuperscalar Architectures: Architectures:Issue Slot Waste Classification

EECC722 - ShaabanEECC722 - Shaaban#83 Lec # 1 Fall 2004 9-6-2004

Hardware Support for Extracting More ParallelismHardware Support for Extracting More Parallelism• Compiler ILP techniques (loop-unrolling, software Pipelining etc.)

are not effective to uncover maximum ILP when branch behavioris not well known at compile time.

• Hardware ILP techniques:

– Conditional or Predicted Instructions: An extension to theinstruction set with instructions that turn into no-ops ifa condition is not valid at run time.

– Speculation: An instruction is executed before the processorknows that the instruction should execute to avoid controldependence stalls:

• Static Speculation by the compiler with hardware support:– The compiler labels an instruction as speculative and the hardware

helps by ignoring the outcome of incorrectly speculated instructions.

– Conditional instructions provide limited speculation.

• Dynamic Hardware-based Speculation:– Uses dynamic branch-prediction to guide the speculation process.

– Dynamic scheduling and execution continued passed a conditionalbranch in the predicted branch direction.

EECC722 - ShaabanEECC722 - Shaaban#84 Lec # 1 Fall 2004 9-6-2004

Conditional or Predicted InstructionsConditional or Predicted Instructions• Avoid branch prediction by turning branches into

conditionally-executed instructions:

if (x) then (A = B op C) else NOP– If false, then neither store result nor cause exception:

instruction is annulled (turned into NOP) .– Expanded ISA of Alpha, MIPS, PowerPC, SPARC

have conditional move.– HP PA-RISC can annul any following instruction.– IA-64: 64 1-bit condition fields selected so conditional execution of any instruction

(Predication).

• Drawbacks of conditional instructions– Still takes a clock cycle even if “annulled”.

– Must stall if condition is evaluated late.– Complex conditions reduce effectiveness;

condition becomes known late in pipeline.

x

A = B op C

EECC722 - ShaabanEECC722 - Shaaban#85 Lec # 1 Fall 2004 9-6-2004

Dynamic Hardware-Based SpeculationDynamic Hardware-Based Speculation•• Combines:Combines:

– Dynamic hardware-based branch prediction– Dynamic Scheduling: of multiple instructions to issue and

execute out of order.

• Continue to dynamically issue, and execute instructions passeda conditional branch in the dynamically predicted branchdirection, before control dependencies are resolved.– This overcomes the ILP limitations of the basic block size.– Creates dynamically speculated instructions at run-time with no

compiler support at all.– If a branch turns out as mispredicted all such dynamically

speculated instructions must be prevented from changing the state ofthe machine (registers, memory).

• Addition of commit (retire or re-ordering) stage and forcinginstructions to commit in their order in the code (i.e to writeresults to registers or memory).

• Precise exceptions are possible since instructions must commit inorder.

EECC722 - ShaabanEECC722 - Shaaban#86 Lec # 1 Fall 2004 9-6-2004

Hardware-BasedHardware-BasedSpeculationSpeculation

Speculative Execution +Speculative Execution + Tomasulo’s Algorithm Tomasulo’s Algorithm

EECC722 - ShaabanEECC722 - Shaaban#87 Lec # 1 Fall 2004 9-6-2004

Four Steps of Speculative Tomasulo AlgorithmFour Steps of Speculative Tomasulo Algorithm1. Issue — Get an instruction from FP Op Queue

If a reservation station and a reorder buffer slot are free, issue instruction& send operands & reorder buffer number for destination (this stage issometimes called “dispatch”)

2. Execution — Operate on operands (EX) When both operands are ready then execute; if not ready, watch CDB for

result; when both operands are in reservation station, execute; checksRAW (sometimes called “issue”)

3. Write result — Finish execution (WB) Write on Common Data Bus to all awaiting FUs & reorder buffer; mark

reservation station available.

4. Commit — Update registers, memory with reorder buffer result– When an instruction is at head of reorder buffer & the result is present,

update register with result (or store to memory) and remove instructionfrom reorder buffer.

– A mispredicted branch at the head of the reorder buffer flushes thereorder buffer (sometimes called “graduation”)

⇒ Instructions issue in order, execute (EX), write result (WB) out oforder, but must commit in order.

EECC722 - ShaabanEECC722 - Shaaban#88 Lec # 1 Fall 2004 9-6-2004

Memory Hierarchy: The motivationMemory Hierarchy: The motivation• The gap between CPU performance and main memory has been

widening with higher performance CPUs creating performancebottlenecks for memory access instructions.

• The memory hierarchy is organized into several levels of memory withthe smaller, more expensive, and faster memory levels closer to theCPU: registers, then primary Cache Level (L1), then additionalsecondary cache levels (L2, L3…), then main memory, then mass storage(virtual memory).

• Each level of the hierarchy is a subset of the level below: data found in alevel is also found in the level below but at lower speed.

• Each level maps addresses from a larger physical memory to a smallerlevel of physical memory.

• This concept is greatly aided by the principal of locality both temporaland spatial which indicates that programs tend to reuse data andinstructions that they have used recently or those stored in their vicinityleading to working set of a program.

EECC722 - ShaabanEECC722 - Shaaban#89 Lec # 1 Fall 2004 9-6-2004

Memory Hierarchy: MotivationMemory Hierarchy: MotivationProcessor-Memory (DRAM) Performance GapProcessor-Memory (DRAM) Performance Gap

µProc60%/yr.

DRAM7%/yr.

1

10

100

100019

8019

81

1983

1984

1985

1986

1987

1988

1989

1990

1991

1992

1993

1994

1995

1996

1997

1998

1999

2000

DRAM

CPU

1982

Processor-MemoryPerformance Gap:(grows 50% / year)

Per

form

ance

EECC722 - ShaabanEECC722 - Shaaban#90 Lec # 1 Fall 2004 9-6-2004

Cache Design & Operation IssuesCache Design & Operation Issues• Q1: Where can a block be placed cache?

(Block placement strategy & Cache organization)– Fully Associative, Set Associative, Direct Mapped.

• Q2: How is a block found if it is in cache?(Block identification)– Tag/Block.

• Q3: Which block should be replaced on a miss?(Block replacement)– Random, LRU.

• Q4: What happens on a write?(Cache write policy)– Write through, write back.

EECC722 - ShaabanEECC722 - Shaaban#91 Lec # 1 Fall 2004 9-6-2004

Cache Organization & Placement StrategiesCache Organization & Placement StrategiesPlacement strategies or mapping of a main memory data block onto

cache block frame addresses divide cache into three organizations:

1 Direct mapped cache: A block can be placed in one location only,given by:

(Block address) MOD (Number of blocks in cache)

2 Fully associative cache: A block can be placed anywhere incache.

3 Set associative cache: A block can be placed in a restricted set ofplaces, or cache block frames. A set is a group of block frames inthe cache. A block is first mapped onto the set and then it can beplaced anywhere within the set. The set in this case is chosen by:

(Block address) MOD (Number of sets in cache)

If there are n blocks in a set the cache placement is called n-wayset-associative.

EECC722 - ShaabanEECC722 - Shaaban#92 Lec # 1 Fall 2004 9-6-2004

Locating A Data Block in CacheLocating A Data Block in Cache• Each block frame in cache has an address tag.

• The tags of every cache block that might contain the required dataare checked in parallel.

• A valid bit is added to the tag to indicate whether this entry containsa valid address.

• The address from the CPU to cache is divided into:

– A block address, further divided into:

• An index field to choose a block set in cache.

(no index field when fully associative).

• A tag field to search and match addresses in the selected set.

– A block offset to select the data from the block.

Block Address BlockOffsetTag Index

EECC722 - ShaabanEECC722 - Shaaban#93 Lec # 1 Fall 2004 9-6-2004

Address Field SizesAddress Field Sizes

Block Address BlockOffsetTag Index

Block offset size = log2(block size)

Index size = log2(Total number of blocks/associativity)

Tag size = address size - index size - offset sizeTag size = address size - index size - offset size

Physical Address Generated by CPU

Mapping function:

Cache set or block frame number = Index = = (Block Address) MOD (Number of Sets)

Number of Sets

EECC722 - ShaabanEECC722 - Shaaban#94 Lec # 1 Fall 2004 9-6-2004

4K Four-Way Set Associative Cache:4K Four-Way Set Associative Cache:MIPS Implementation ExampleMIPS Implementation Example

A ddress

22 8

V TagIndex

0

1

2

253

254

255

Data V Tag D ata V Tag Data V Tag Data

3 22 2

4- to-1 m ultiplexo r

H it Da ta

123891011123 031 0

IndexField

TagField

1024 block framesEach block = one word4-way set associative256 sets

Can cache up to232 bytes = 4 GBof memory

Block Address = 30 bits

Tag = 22 bits Index = 8 bits Block offset

= 2 bits

Mapping Function: Cache Set Number = (Block address) MOD (256)

EECC722 - ShaabanEECC722 - Shaaban#95 Lec # 1 Fall 2004 9-6-2004

Cache Performance:Average Memory Access Time (AMAT), Memory Stall cycles

• The Average Memory Access Time (AMAT): The number ofcycles required to complete an average memory access requestby the CPU.

• Memory stall cycles per memory access: The number of stallcycles added to CPU execution cycles for one memory access.

• For ideal memory: AMAT = 1 cycle, this results in zeromemory stall cycles.

• Memory stall cycles per average memory access = (AMAT -1)

• Memory stall cycles per average instruction =

Memory stall cycles per average memory access

x Number of memory accesses per instruction

= (AMAT -1 ) x ( 1 + fraction of loads/stores)

Instruction Fetch

EECC722 - ShaabanEECC722 - Shaaban#96 Lec # 1 Fall 2004 9-6-2004

Cache PerformanceCache PerformanceSingle Level L1 Princeton (Unified) Memory ArchitectureSingle Level L1 Princeton (Unified) Memory Architecture

CPUtime = Instruction count x CPI x Clock cycle time

CPIexecution = CPI with ideal memory

CPI = CPIexecution + Mem Stall cycles per instruction

Mem Stall cycles per instruction = Mem accesses per instruction x Miss rate x Miss penalty

CPUtime = Instruction Count x (CPIexecution + Mem Stall cycles per instruction) x Clock cycle time

CPUtime = IC x (CPIexecution + Mem accesses per instruction x Miss rate x Miss penalty) x Clock cycle time

Misses per instruction = Memory accesses per instruction x Miss rate

CPUtime = IC x (CPIexecution + Misses per instruction x Miss penalty) x Clock cycle time

EECC722 - ShaabanEECC722 - Shaaban#97 Lec # 1 Fall 2004 9-6-2004

Miss Rates for Caches with Different Size,Miss Rates for Caches with Different Size,Associativity & Replacement AlgorithmAssociativity & Replacement Algorithm

Sample DataSample Data

Associativity: 2-way 4-way 8-way

Size LRU Random LRU Random LRU Random

16 KB 5.18% 5.69% 4.67% 5.29% 4.39% 4.96%

64 KB 1.88% 2.01% 1.54% 1.66% 1.39% 1.53%

256 KB 1.15% 1.17% 1.13% 1.13% 1.12% 1.12%

EECC722 - ShaabanEECC722 - Shaaban#98 Lec # 1 Fall 2004 9-6-2004

Cache Write StrategiesCache Write Strategies1 Write Though: Data is written to both the cache block and to

a block of main memory.

– The lower level always has the most updated data; an importantfeature for I/O and multiprocessing.

– Easier to implement than write back.

– A write buffer is often used to reduce CPU write stall while datais written to memory.

2 Write back: Data is written or updated only to the cacheblock. The modified or dirty cache block is written to mainmemory when it’s being replaced from cache.

– Writes occur at the speed of cache

– A status bit called a dirty or modified bit, is used to indicatewhether the block was modified while in cache; if not the block isnot written back to main memory when replaced.

– Uses less memory bandwidth than write through.

EECC722 - ShaabanEECC722 - Shaaban#99 Lec # 1 Fall 2004 9-6-2004

Cache Write Miss PolicyCache Write Miss Policy• Since data is usually not needed immediately on a write miss

two options exist on a cache write miss:

Write Allocate:

The cache block is loaded on a write miss followed by write hit actions.

No-Write Allocate:

The block is modified in the lower level (lower cache level, or main

memory) and not loaded into cache.

While any of the above two write miss policies can be used with either write back or write through:

• Write back caches always use write allocate to capture subsequent writes to the block in cache.

• Write through caches usually use no-write allocate since subsequent writes still have to go to memory.

EECC722 - ShaabanEECC722 - Shaaban#100 Lec # 1 Fall 2004 9-6-2004

Memory Access Tree, Unified L1Write Through, No Write Allocate, No Write Buffer

CPU Memory Access

L1

Read Write

L1 Write Miss:Access Time : M + 1Stalls per access: % write x (1 - H1 ) x M

L1 Write Hit:Access Time: M +1 Stalls Per access:% write x (H1 ) x M

L1 Read Hit:Access Time = 1Stalls = 0

L1 Read Miss:Access Time = M + 1Stalls Per access

% reads x (1 - H1 ) x M

Stall Cycles Per Memory Access = % reads x (1 - H1 ) x M + % write x M

AMAT = 1 + % reads x (1 - H1 ) x M + % write x M

EECC722 - ShaabanEECC722 - Shaaban#101 Lec # 1 Fall 2004 9-6-2004

Memory Access Tree Unified L1

Write Back, With Write AllocateCPU Memory Access

L1

Read Write

L1 Write Miss L1 Write Hit:% write x H1Access Time = 1Stalls = 0

L1 Hit:% read x H1Access Time = 1Stalls = 0

L1 Read Miss

Stall Cycles Per Memory Access = (1-H1) x ( M x % clean + 2M x % dirty )

AMAT = 1 + Stall Cycles Per Memory Access

CleanAccess Time = M +1Stall cycles = M x (1-H1 ) x % reads x % clean

DirtyAccess Time = 2M +1Stall cycles = 2M x (1-H1) x %read x % dirty

CleanAccess Time = M +1Stall cycles = M x (1 -H1) x % write x % clean

DirtyAccess Time = 2M +1Stall cycles = 2M x (1-H1) x %read x % dirty

EECC722 - ShaabanEECC722 - Shaaban#102 Lec # 1 Fall 2004 9-6-2004

Miss Rates For Multi-Level CachesMiss Rates For Multi-Level Caches• Local Miss Rate: This rate is the number of misses in a

cache level divided by the number of memory accesses tothis level. Local Hit Rate = 1 - Local Miss Rate

• Global Miss Rate: The number of misses in a cache leveldivided by the total number of memory accesses generatedby the CPU.

• Since level 1 receives all CPU memory accesses, for level 1: Local Miss Rate = Global Miss Rate = 1 - H1

• For level 2 since it only receives those accesses missed in 1: Local Miss Rate = Miss rateL2= 1- H2

Global Miss Rate = Miss rateL1 x Miss rateL2

= (1- H1) x (1 - H2)

EECC722 - ShaabanEECC722 - Shaaban#103 Lec # 1 Fall 2004 9-6-2004

Write Policy For 2-Level Cache• Write Policy For Level 1 Cache:

– Usually Write through to Level 2

– Write allocate is used to reduce level 1 miss reads.

– Use write buffer to reduce write stalls

• Write Policy For Level 2 Cache:

– Usually write back with write allocate is used.• To minimize memory bandwidth usage.

• The above 2-level cache write policy results in inclusive L2 cachesince the content of L1 is also in L2

• Common in the majority of all CPUs with 2-levels of cache

• As opposed to exclusive L1, L2 (e.g AMD Athlon)

EECC722 - ShaabanEECC722 - Shaaban#104 Lec # 1 Fall 2004 9-6-2004

2-Level (Both Unified) Memory Access Tree2-Level (Both Unified) Memory Access Tree

L1: Write Through to L2, Write Allocate, With Perfect Write BufferL1: Write Through to L2, Write Allocate, With Perfect Write BufferL2: Write Back with Write AllocateL2: Write Back with Write Allocate

CPU Memory Access

L1 Miss:L1 Hit:Stalls Per access = 0

L2 Hit:Stalls = (1-H1) x H2 x T2

(1-H1)(H1)

L2 Miss

(1-H1) x (1-H2)

CleanStall cycles = M x (1 -H1) x (1-H2) x % clean

L2

L1

DirtyStall cycles =2M x (1-H1) x (1-H2) x% dirty

Stall cycles per memory access = (1-H1) x H2 x T2 + M x (1 -H1) x (1-H2) x % clean + 2M x (1-H1) x (1-H2) x % dirty

= (1-H1) x H2 x T2 + (1 -H1) x (1-H2) x ( % clean x M + % dirty x 2M)

EECC722 - ShaabanEECC722 - Shaaban#105 Lec # 1 Fall 2004 9-6-2004

Cache Optimization SummaryCache Optimization SummaryTechnique MR MP HT Complexity