Time Series Dr. Sevtap Kestel Albert Ludwigs Universität ... Time... · 3.1 Scatterplot and...


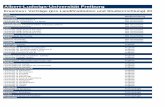

Time Series Analysis

Dr. Sevtap Kestel

Albert-Ludwigs-Universität Freiburg, Department of Economics

1. Introduction

Lecture 1


1.1.1 Descriptive Statistics

• Population and Sample
  – Finite and infinite population
• Data
  – Quantitative
  – Qualitative (nominal, ordinal)
• Variable
  – Discrete
  – Continuous
• Graphical representations
  – Bar graphs, pie charts, histograms, ogive
• Measures of location
  – Mean
  – Median
  – Mode
• Measures of dispersion
  – Range
  – Absolute mean deviation
  – Variance and standard deviation
  – The five-number summary and boxplots

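2.1 Estimation Techniques

2.1.1 Types of Estimation

Point Estimation

• Population mean:

$$\bar{X} = \frac{1}{n}\sum_{i=1}^{n} x_i$$

• Population variance:

$$s^2 = \frac{1}{n-1}\sum_{i=1}^{n} (x_i - \bar{X})^2$$

• MLE (Maximum Likelihood Estimator):

$$\max\Big\{\prod_{i=1}^{n} f(x_i)\Big\}$$

Interval Estimation

• Population mean:

$$P\Big(\bar{X} - z_{\alpha/2}\frac{\sigma}{\sqrt{n}} \le \mu \le \bar{X} + z_{\alpha/2}\frac{\sigma}{\sqrt{n}}\Big) = 1 - \alpha$$

• Population variance:

$$P\Big(\frac{(n-1)s^2}{\chi^2_{1-\alpha/2}} \le \sigma^2 \le \frac{(n-1)s^2}{\chi^2_{\alpha/2}}\Big) = 1 - \alpha$$

Here $\chi^2_{p}$ denotes the p-th quantile of the chi-square distribution with n-1 degrees of freedom, so the larger quantile sits in the left-hand denominator.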
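The point and interval estimates above can be sketched in a few lines of Python. This is a minimal illustration, not part of the lecture: the sample values and the assumed known standard deviation `sigma=2.0` are hypothetical, and the helper name `mean_confidence_interval` is introduced here only for the example.

```python
import math
from statistics import NormalDist, mean, variance

def mean_confidence_interval(sample, sigma, alpha=0.05):
    """z-interval for the population mean when sigma is assumed known."""
    n = len(sample)
    x_bar = mean(sample)
    z = NormalDist().inv_cdf(1 - alpha / 2)  # upper alpha/2 critical value
    half_width = z * sigma / math.sqrt(n)
    return x_bar - half_width, x_bar + half_width

# Hypothetical sample, for illustration only
sample = [2, 4, 4, 4, 5, 5, 7, 9]
x_bar = mean(sample)       # point estimate of the population mean
s2 = variance(sample)      # sample variance with the n-1 divisor
low, high = mean_confidence_interval(sample, sigma=2.0)
print(x_bar, round(s2, 4), round(low, 3), round(high, 3))
```

The interval for the variance follows the same pattern but needs chi-square quantiles, which the Python standard library does not provide.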

2.1.2 Properties of Estimation

• Unbiased: $E[\hat\theta] = \theta$

• Consistent: $\lim_{n\to\infty} Var[\hat\theta] = 0$

• Efficient: if $Var[\hat\theta_1] \le Var[\hat\theta_2]$, then $\hat\theta_1$ is efficient

2.2 Hypothesis Testing

2.2.1 Types of Hypothesis

• Simple tests
  H0: θ=θ0 vs HA: θ=θ1

• Composite tests
  H0: θ=θ0 vs HA: θ>θ0 (one-tailed test)
  H0: θ=θ0 vs HA: θ<θ0 (one-tailed test)
  H0: θ=θ0 vs HA: θ≠θ0 (two-tailed test)

• The test statistic is the measure, computed from the sample, on which the decision is based

Types of errors

• Type I error: reject H0 when it is true

• Type II error: fail to reject H0 when HA is true

• Power of the test: probability of rejecting H0 when θ=θ1

• P-value: probability, computed under H0, of a test statistic at least as extreme as the one observed

2.2.2 Testing Population Parameters

• Mean: test statistics
  – Large sample:

$$z = \frac{\bar{X} - \mu_0}{\sigma/\sqrt{n}}$$

  – Small sample:

$$t = \frac{\bar{X} - \mu_0}{s/\sqrt{n}}$$

• Variance:

$$\chi^2 = \frac{(n-1)s^2}{\sigma_0^2}$$

• Proportion:

$$z = \frac{\hat{p} - p_0}{\sqrt{p_0 q_0 / n}}$$

• Decision: reject H0 when the test statistic falls within the rejection region
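As a rough sketch, the four test statistics can be written as small Python functions. The function names and the numbers in the usage lines are hypothetical and serve only to show the arithmetic; the critical value 1.96 is the usual two-sided 5% normal cut-off.

```python
import math

def z_mean(x_bar, mu0, sigma, n):
    """Large-sample test statistic for the mean."""
    return (x_bar - mu0) / (sigma / math.sqrt(n))

def t_mean(x_bar, mu0, s, n):
    """Small-sample test statistic for the mean (n-1 degrees of freedom)."""
    return (x_bar - mu0) / (s / math.sqrt(n))

def chi2_variance(s2, sigma0_sq, n):
    """Test statistic for the variance."""
    return (n - 1) * s2 / sigma0_sq

def z_proportion(p_hat, p0, n):
    """Test statistic for a proportion, with q0 = 1 - p0."""
    q0 = 1 - p0
    return (p_hat - p0) / math.sqrt(p0 * q0 / n)

# Hypothetical numbers: sample mean 52, H0 mean 50, sigma 10, n = 100
z = z_mean(x_bar=52.0, mu0=50.0, sigma=10.0, n=100)
reject = abs(z) > 1.96  # two-tailed test at the 5% level
print(z, reject)
```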

3. „Linear Models“


3.1 Scatterplot and Correlation

Relation between two random variables: linear, quadratic, exponential, etc.

Covariance, Cov(X,Y), is the average amount of interaction between two variables.

The correlation coefficient, ρ, measures the linear dependence between two variables:

$$\rho = \frac{E[(X-\mu_X)(Y-\mu_Y)]}{\sigma_X \sigma_Y} = \frac{Cov(X,Y)}{\sqrt{Var(X)\,Var(Y)}}$$

$$-1 \le \rho \le 1$$

ρ = ±1: perfect correlation

ρ = 0: no correlation

[Figure: Scatterplot of weight (vertical axis, 40 to 100) against height (horizontal axis, 150 to 200)]

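3.1 Sample Correlation Coefficient

$$\rho = \frac{E[(X-\mu_X)(Y-\mu_Y)]}{\sqrt{Var(X)\,Var(Y)}} = \frac{E[XY] - E[X]E[Y]}{\sqrt{E[(X-\mu_X)^2]\,E[(Y-\mu_Y)^2]}}$$

$$r = \frac{\sum (X-\bar{X})(Y-\bar{Y})}{\sqrt{\sum (X-\bar{X})^2 \sum (Y-\bar{Y})^2}} = \frac{n\sum XY - \sum X \sum Y}{\sqrt{\big(n\sum X^2 - (\sum X)^2\big)\big(n\sum Y^2 - (\sum Y)^2\big)}}$$

$$r = \frac{1}{n-1}\sum \Big(\frac{X_i - \bar{X}}{s_x}\Big)\Big(\frac{Y_i - \bar{Y}}{s_y}\Big)$$

$$-1 \le r \le 1$$

r = ±1: perfect correlation

r = 0: no correlation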

Height Weight

161 55

164 58

166 63

168 66

169 70

172 74

173 76

174 79

176 80

176 82

177 84

178 83

180 84

182 85

183 87

185 89

188 90

189 91

191 93

$$r_{weight,\,height} = 0.965$$
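The sample correlation for the height and weight table can be reproduced with the computational formula for r. The sketch below uses only the tabulated data; the function name `sample_correlation` is introduced here for illustration.

```python
import math

height = [161, 164, 166, 168, 169, 172, 173, 174, 176, 176,
          177, 178, 180, 182, 183, 185, 188, 189, 191]
weight = [55, 58, 63, 66, 70, 74, 76, 79, 80, 82,
          84, 83, 84, 85, 87, 89, 90, 91, 93]

def sample_correlation(x, y):
    """r = (n*Sxy - Sx*Sy) / sqrt((n*Sxx - Sx^2) * (n*Syy - Sy^2))."""
    n = len(x)
    sx, sy = sum(x), sum(y)
    sxy = sum(a * b for a, b in zip(x, y))
    sxx = sum(a * a for a in x)
    syy = sum(b * b for b in y)
    numerator = n * sxy - sx * sy
    denominator = math.sqrt((n * sxx - sx ** 2) * (n * syy - sy ** 2))
    return numerator / denominator

r = sample_correlation(height, weight)
print(round(r, 3))
```

The result should agree with the value quoted above up to rounding in the last digit.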

3.2 Simple Linear Regression

• Aim: to fit a linear model to two or more variables

X: independent variable (explanatory)

Y: dependent variable (response)

• Simple Linear Regression

Y=a+bX+ random error

3.2 Regression Analysis

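Random error: $\varepsilon \sim N(0, \sigma^2)$

Parameters a, b (or $a_i$, i = 0, 1, ..., n) are estimated by the Least Squares Estimation technique (LSE).

LSE: $\min\{\sum error^2\}$

Residual: $\hat\varepsilon = Y - \hat{Y}$ = Actual - Estimate

$$\hat{a} = \bar{Y} - \hat{b}\bar{X}; \qquad \hat{b} = r\,\frac{s_Y}{s_X}$$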

Example: Suppose Mr. Bump observes the selling price and sales volume of milk gallons for 10 randomly selected weeks as follows:

| Week | Weekly sales level, Y* | Selling price, X (dollars) |
|---|---|---|
| 1 | 10 | 1.30 |
| 2 | 6 | 2.00 |
| 3 | 5 | 1.70 |
| 4 | 12 | 1.50 |
| 5 | 10 | 1.60 |
| 6 | 15 | 1.20 |
| 7 | 5 | 1.60 |
| 8 | 12 | 1.40 |
| 9 | 17 | 1.00 |
| 10 | 20 | 1.10 |

* Thousands of gallons

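| Week | x | y | x^2 | y^2 | xy |
|---|---|---|---|---|---|
| 1 | 1.3 | 10 | 1.69 | 100 | 13 |
| 2 | 2 | 6 | 4 | 36 | 12 |
| 3 | 1.7 | 5 | 2.89 | 25 | 8.5 |
| 4 | 1.5 | 12 | 2.25 | 144 | 18 |
| 5 | 1.6 | 10 | 2.56 | 100 | 16 |
| 6 | 1.2 | 15 | 1.44 | 225 | 18 |
| 7 | 1.6 | 5 | 2.56 | 25 | 8 |
| 8 | 1.4 | 12 | 1.96 | 144 | 16.8 |
| 9 | 1 | 17 | 1 | 289 | 17 |
| 10 | 1.1 | 20 | 1.21 | 400 | 22 |
| Sum | 14.4 | 112 | 21.56 | 1488 | 149.3 |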

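Normal equation:

$$\sum y_i = n\hat\beta_0 + \hat\beta_1 \sum x_i \quad\Rightarrow\quad 112 = (10)\hat\beta_0 + 14.4\,\hat\beta_1$$

$$S_{xx} = n\sum x^2 - \Big(\sum x\Big)^2 = (10)(21.56) - (14.4)^2 = 8.24$$

$$S_{yy} = n\sum y^2 - \Big(\sum y\Big)^2 = (10)(1488) - (112)^2 = 2336$$

$$S_{xy} = n\sum xy - \sum x \sum y = (10)(149.3) - (14.4)(112) = -119.8$$

With these n-scaled sums, $\sum (x_i - \bar{x})^2 = S_{xx}/n = 0.824$ and $\sum (y_i - \bar{y})^2 = S_{yy}/n = 233.6$.

$$\hat\beta_1 = \frac{S_{xy}}{S_{xx}} = \frac{(10)(149.3) - (14.4)(112)}{(10)(21.56) - (14.4)^2} = \frac{-119.8}{8.24} = -14.54$$

$$\hat\beta_0 = \frac{\sum y}{n} - \hat\beta_1 \frac{\sum x}{n} = \frac{112}{10} - (-14.54)\frac{14.4}{10} = 32.14$$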
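The least-squares arithmetic for the milk example can be checked with a short script. This is a sketch that uses only the ten tabulated weeks; the variable names are chosen here for illustration.

```python
# Selling price (x) and weekly sales in thousands of gallons (y)
x = [1.30, 2.00, 1.70, 1.50, 1.60, 1.20, 1.60, 1.40, 1.00, 1.10]
y = [10, 6, 5, 12, 10, 15, 5, 12, 17, 20]
n = len(x)

sum_x, sum_y = sum(x), sum(y)
sum_xx = sum(v * v for v in x)
sum_xy = sum(a * b for a, b in zip(x, y))

# n-scaled sums of squares and cross products
s_xx = n * sum_xx - sum_x ** 2
s_xy = n * sum_xy - sum_x * sum_y

b1 = s_xy / s_xx                   # slope estimate
b0 = sum_y / n - b1 * sum_x / n    # intercept estimate
print(round(b0, 2), round(b1, 2))
```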

Regression model:

$$\hat{y} = 32.14 - 14.54x$$

$$r = \frac{S_{xy}}{\sqrt{S_{xx}S_{yy}}} = \frac{(10)(149.3) - (14.4)(112)}{\sqrt{\big[(10)(21.56) - (14.4)^2\big]\big[(10)(1488) - (112)^2\big]}} = -0.86$$

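Standard error of estimate:

| Week | x | Actual y | Estimated y | Error | Error^2 |
|---|---|---|---|---|---|
| 1 | 1.3 | 10 | 13.238 | -3.238 | 10.48464 |
| 2 | 2 | 6 | 3.06 | 2.94 | 8.6436 |
| 3 | 1.7 | 5 | 7.422 | -2.422 | 5.866084 |
| 4 | 1.5 | 12 | 10.33 | 1.67 | 2.7889 |
| 5 | 1.6 | 10 | 8.876 | 1.124 | 1.263376 |
| 6 | 1.2 | 15 | 14.692 | 0.308 | 0.094864 |
| 7 | 1.6 | 5 | 8.876 | -3.876 | 15.02338 |
| 8 | 1.4 | 12 | 11.784 | 0.216 | 0.046656 |
| 9 | 1 | 17 | 17.6 | -0.6 | 0.36 |
| 10 | 1.1 | 20 | 16.146 | 3.854 | 14.85332 |
| Sum | 14.4 | 112 | 112.024 | ≈ 0 | 59.42482 |

$$\hat\sigma_e = \sqrt{\frac{\sum error^2}{n-2}} = \sqrt{\frac{\sum (y - \hat{y})^2}{n-2}} = \sqrt{\frac{59.42}{8}} = 2.72$$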
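The residual table and the standard error of estimate can be reproduced as follows. The sketch plugs in the rounded coefficients 32.14 and -14.54 from the regression model, which is what the tabulated fitted values appear to use.

```python
import math

x = [1.30, 2.00, 1.70, 1.50, 1.60, 1.20, 1.60, 1.40, 1.00, 1.10]
y = [10, 6, 5, 12, 10, 15, 5, 12, 17, 20]
n = len(x)
b0, b1 = 32.14, -14.54  # rounded coefficients of the fitted line

fitted = [b0 + b1 * xi for xi in x]
errors = [yi - fi for yi, fi in zip(y, fitted)]
sse = sum(e * e for e in errors)     # sum of squared errors
sigma_e = math.sqrt(sse / (n - 2))   # two estimated parameters

for week, (fi, ei) in enumerate(zip(fitted, errors), start=1):
    print(week, round(fi, 3), round(ei, 3))
print(round(sse, 4), round(sigma_e, 2))
```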

Predicting Y: Suppose Mr. Bump wishes to forecast the quantity of milk sold if the price were set at 1.63 dollars.

$$\hat{y} = E[Y \mid x = 1.63] = 32.14 - 14.54(1.63) = 8.44$$

The standard error of the forecast when x = 1.63 is

$$\hat\sigma_p = \hat\sigma_e\sqrt{1 + \frac{1}{n} + \frac{(x - \bar{x})^2}{\sum (x_i - \bar{x})^2}} = 2.72\sqrt{1 + \frac{1}{10} + \frac{(1.63 - 1.44)^2}{0.824}} = 2.90$$

Then the 95% prediction interval is 8.44 ± t(n-2, 0.025) × 2.90 => 8.44 ± 2.306(2.90) => (1.753, 15.127)
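A sketch of the forecast calculation is given below. It takes the rounded values 32.14, -14.54 and 2.72 and the critical value 2.306 from the text as given. Because the intermediate standard error is not rounded to 2.90 here, the interval end points differ from the hand calculation in the second decimal.

```python
import math

x = [1.30, 2.00, 1.70, 1.50, 1.60, 1.20, 1.60, 1.40, 1.00, 1.10]
n = len(x)
b0, b1 = 32.14, -14.54   # fitted coefficients from the text
sigma_e = 2.72           # standard error of estimate from the text
t_crit = 2.306           # t value with n-2 = 8 degrees of freedom, 2.5% upper tail
x_new = 1.63

x_bar = sum(x) / n
ss_x = sum((xi - x_bar) ** 2 for xi in x)   # sum of squared deviations of x

y_hat = b0 + b1 * x_new
sigma_p = sigma_e * math.sqrt(1 + 1 / n + (x_new - x_bar) ** 2 / ss_x)
lower = y_hat - t_crit * sigma_p
upper = y_hat + t_crit * sigma_p
print(round(y_hat, 2), round(sigma_p, 2), round(lower, 2), round(upper, 2))
```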

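Standard error of the estimator of β1:

$$\hat\sigma_{\hat\beta_1} = \frac{\hat\sigma_e}{\sqrt{\sum (x_i - \bar{x})^2}} = \frac{\hat\sigma_e}{\sqrt{S_{xx}/n}} = \frac{2.72}{\sqrt{0.824}} = 3.00$$

Hypothesis testing: H0: β1 = 0 vs HA: β1 ≠ 0

$$t = \frac{-14.54 - 0}{3.00} = -4.8 < -2.306 \quad\Rightarrow\quad \text{reject } H_0$$

Coefficient of determination:

$$r^2 = 1 - \frac{SSE}{\sum (y_i - \bar{y})^2} = 1 - \frac{\sum (y_i - \hat{y}_i)^2}{\sum (y_i - \bar{y})^2} = 1 - \frac{59.42}{233.6} = 0.746$$

Approximately 75% of the variance of Y is explained by X.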
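The slope test and the coefficient of determination can be recomputed from the raw data. This sketch again assumes the rounded coefficients 32.14 and -14.54 and the tabulated critical value 2.306.

```python
import math

x = [1.30, 2.00, 1.70, 1.50, 1.60, 1.20, 1.60, 1.40, 1.00, 1.10]
y = [10, 6, 5, 12, 10, 15, 5, 12, 17, 20]
n = len(x)
b0, b1 = 32.14, -14.54

x_bar, y_bar = sum(x) / n, sum(y) / n
ss_x = sum((xi - x_bar) ** 2 for xi in x)   # total variation in x
ss_y = sum((yi - y_bar) ** 2 for yi in y)   # total variation in y
sse = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))

sigma_e = math.sqrt(sse / (n - 2))
se_b1 = sigma_e / math.sqrt(ss_x)   # standard error of the slope
t_stat = (b1 - 0) / se_b1           # test of H0: slope = 0
reject = abs(t_stat) > 2.306
r2 = 1 - sse / ss_y                 # share of variance explained
print(round(se_b1, 2), round(t_stat, 2), reject, round(r2, 3))
```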

4. „Linear Models“


4. Outline

4.1 Multiple Linear Regression

4.2 Nonlinear Regression

4.3 Regression Techniques

4.1 Multiple Linear Regression

• Multiple Linear Regression

Y=a0+a1X1 +a2X2+..+anXn+ random error

• Use of matrix algebra

Example: Suppose Mr. Bump observes the selling price, sales volume of milk gallons and advertising expense for 10 randomly selected weeks as follows (* thousands of gallons; ** hundreds of dollars):

| Week | Weekly sales level, Y* | Selling price, X1 (dollars) | Advertising**, X2 |
|---|---|---|---|
| 1 | 10 | 1.30 | 9 |
| 2 | 6 | 2.00 | 7 |
| 3 | 5 | 1.70 | 5 |
| 4 | 12 | 1.50 | 14 |
| 5 | 10 | 1.60 | 15 |
| 6 | 15 | 1.20 | 12 |
| 7 | 5 | 1.60 | 6 |
| 8 | 12 | 1.40 | 10 |
| 9 | 17 | 1.00 | 15 |
| 10 | 20 | 1.10 | 21 |

Correlation Matrix

| r | sales | price | ads |
|---|---|---|---|
| sales | 1 | -0.86 | 0.89 |
| price | -0.86 | 1 | -0.65 |
| ads | 0.89 | -0.65 | 1 |
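The correlation matrix can be rebuilt from the three data columns. The sketch below uses the deviation form of the sample correlation; the helper name `corr` is introduced here for illustration.

```python
import math

sales = [10, 6, 5, 12, 10, 15, 5, 12, 17, 20]
price = [1.30, 2.00, 1.70, 1.50, 1.60, 1.20, 1.60, 1.40, 1.00, 1.10]
ads = [9, 7, 5, 14, 15, 12, 6, 10, 15, 21]

def corr(u, v):
    """Sample correlation computed from deviations about the means."""
    n = len(u)
    u_bar, v_bar = sum(u) / n, sum(v) / n
    s_uv = sum((a - u_bar) * (b - v_bar) for a, b in zip(u, v))
    s_uu = sum((a - u_bar) ** 2 for a in u)
    s_vv = sum((b - v_bar) ** 2 for b in v)
    return s_uv / math.sqrt(s_uu * s_vv)

columns = [sales, price, ads]
matrix = [[round(corr(a, b), 2) for b in columns] for a in columns]
for name, row in zip(["sales", "price", "ads"], matrix):
    print(name, row)
```

Sales and advertising are strongly positively correlated, while price is negatively correlated with both, which motivates adding advertising as a second explanatory variable.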

The model:

$$Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \varepsilon$$

[Figure: Scatter diagram of Y versus X1: sales (vertical axis, 0 to 25) against price (horizontal axis, 0.5 to 2.5)]

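| Week | y | X1 | X2 | y^2 | X1^2 | X2^2 | X1 y | X2 y | X1 X2 |
|---|---|---|---|---|---|---|---|---|---|
| 1 | 10 | 1.3 | 9 | 100 | 1.69 | 81 | 13 | 90 | 11.7 |
| 2 | 6 | 2 | 7 | 36 | 4 | 49 | 12 | 42 | 14.0 |
| 3 | 5 | 1.7 | 5 | 25 | 2.89 | 25 | 8.5 | 25 | 8.5 |
| 4 | 12 | 1.5 | 14 | 144 | 2.25 | 196 | 18 | 168 | 21.0 |
| 5 | 10 | 1.6 | 15 | 100 | 2.56 | 225 | 16 | 150 | 24.0 |
| 6 | 15 | 1.2 | 12 | 225 | 1.44 | 144 | 18 | 180 | 14.4 |
| 7 | 5 | 1.6 | 6 | 25 | 2.56 | 36 | 8 | 30 | 9.6 |
| 8 | 12 | 1.4 | 10 | 144 | 1.96 | 100 | 16.8 | 120 | 14.0 |
| 9 | 17 | 1 | 15 | 289 | 1 | 225 | 17 | 255 | 15.0 |
| 10 | 20 | 1.1 | 21 | 400 | 1.21 | 441 | 22 | 420 | 23.1 |
| Sum | 112 | 14.4 | 114 | 1488 | 21.56 | 1522.0 | 149.3 | 1480.0 | 155.3 |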

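Normal equations:

$$\sum y_i = n\hat\beta_0 + \hat\beta_1\sum x_{1i} + \hat\beta_2\sum x_{2i}$$

$$\sum x_{1i}y_i = \hat\beta_0\sum x_{1i} + \hat\beta_1\sum x_{1i}^2 + \hat\beta_2\sum x_{1i}x_{2i}$$

$$\sum x_{2i}y_i = \hat\beta_0\sum x_{2i} + \hat\beta_1\sum x_{1i}x_{2i} + \hat\beta_2\sum x_{2i}^2$$

With the column sums of the example:

$$112 = 10\hat\beta_0 + 14.4\hat\beta_1 + 114\hat\beta_2$$

$$149.3 = 14.4\hat\beta_0 + 21.56\hat\beta_1 + 155.3\hat\beta_2$$

$$1480 = 114\hat\beta_0 + 155.3\hat\beta_1 + 1522\hat\beta_2$$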

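In matrix form:

$$Y = \begin{bmatrix} 10 \\ 6 \\ \vdots \\ 20 \end{bmatrix}, \quad X = \begin{bmatrix} 1 & 1.30 & 9 \\ 1 & 2.00 & 7 \\ \vdots & \vdots & \vdots \\ 1 & 1.10 & 21 \end{bmatrix}, \quad X'X = \begin{bmatrix} 10 & 14.4 & 114 \\ 14.4 & 21.56 & 155.3 \\ 114 & 155.3 & 1522 \end{bmatrix}, \quad \hat\beta = \begin{bmatrix} \hat\beta_0 \\ \hat\beta_1 \\ \hat\beta_2 \end{bmatrix}$$

$$X'Y = (X'X)\hat\beta \quad\Rightarrow\quad \hat\beta = (X'X)^{-1}X'Y = \begin{bmatrix} 16.4064 \\ -8.2476 \\ 0.5851 \end{bmatrix}$$

$$\hat{y} = 16.41 - 8.25x_1 + 0.59x_2$$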
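The matrix solution can be checked without any external library. The sketch below builds X'X and X'Y from the data and solves the normal equations by Gaussian elimination with partial pivoting; `solve_linear_system` is a helper written for this example, and in practice a linear algebra library would normally be used instead.

```python
def solve_linear_system(a, b):
    """Solve a*x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]   # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):                   # back substitution
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x

y = [10, 6, 5, 12, 10, 15, 5, 12, 17, 20]
x1 = [1.30, 2.00, 1.70, 1.50, 1.60, 1.20, 1.60, 1.40, 1.00, 1.10]
x2 = [9, 7, 5, 14, 15, 12, 6, 10, 15, 21]

X = [[1.0, a, b] for a, b in zip(x1, x2)]            # design matrix with intercept
xtx = [[sum(row[i] * row[j] for row in X) for j in range(3)] for i in range(3)]
xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(3)]

beta = solve_linear_system(xtx, xty)
print([round(b, 4) for b in beta])
```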

$$\hat\sigma_e = \sqrt{\frac{\sum error^2}{n-k}} = \sqrt{\frac{\sum (y - \hat{y})^2}{n-k}} = \sqrt{\frac{15.9}{10-3}} = 1.51$$

| Variables used to explain variance of Y | r^2 | Σ(y - ŷ)^2 |
|---|---|---|
| None | 0 | 233.6 |
| Price | 0.75 | 59.4 |
| Price and advertisement | 0.93 | 15.9 |

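| Week | Price x1 | Advertising x2 | Actual y | Estimated y | Error | Error^2 |
|---|---|---|---|---|---|---|
| 1 | 1.3 | 9 | 10 | 10.95 | -0.95 | 0.9032 |
| 2 | 2 | 7 | 6 | 4.01 | 1.99 | 3.972 |
| 3 | 1.7 | 5 | 5 | 5.31 | -0.31 | 0.0967 |
| 4 | 1.5 | 14 | 12 | 12.22 | -0.22 | 0.0512 |
| 5 | 1.6 | 15 | 10 | 11.98 | -1.98 | 3.947 |
| 6 | 1.2 | 12 | 15 | 13.53 | 1.46 | 2.159 |
| 7 | 1.6 | 6 | 5 | 6.72 | -1.72 | 2.961 |
| 8 | 1.4 | 10 | 12 | 10.71 | 1.28 | 1.662 |
| 9 | 1 | 15 | 17 | 16.93 | 0.064 | 0.0042 |
| 10 | 1.1 | 21 | 20 | 19.62 | 0.379 | 0.14355 |
| Sum | | | | | 0 | 15.90 |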

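Corrected (adjusted) coefficient of determination:

$$r_c^2 = 1 - \frac{\hat\sigma_e^2}{\hat\sigma_y^2} = 1 - \frac{\sum (y - \hat{y})^2/(n-k)}{\sum (y - \bar{y})^2/(n-1)} = 1 - \frac{15.9/7}{233.6/9} = 0.91248$$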
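The fit statistics of the two-variable model can be reproduced from the data. The sketch below takes the coefficient estimates 16.4064, -8.2476 and 0.5851 from the matrix solution as given, with k = 3 estimated parameters.

```python
import math

y = [10, 6, 5, 12, 10, 15, 5, 12, 17, 20]
x1 = [1.30, 2.00, 1.70, 1.50, 1.60, 1.20, 1.60, 1.40, 1.00, 1.10]
x2 = [9, 7, 5, 14, 15, 12, 6, 10, 15, 21]
b0, b1, b2 = 16.4064, -8.2476, 0.5851   # estimates reported in the text
n, k = len(y), 3

fitted = [b0 + b1 * a + b2 * b for a, b in zip(x1, x2)]
sse = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
y_bar = sum(y) / n
sst = sum((yi - y_bar) ** 2 for yi in y)

sigma_e = math.sqrt(sse / (n - k))                  # standard error of estimate
r2 = 1 - sse / sst                                  # coefficient of determination
r2_adj = 1 - (sse / (n - k)) / (sst / (n - 1))      # corrected for degrees of freedom
print(round(sse, 2), round(sigma_e, 2), round(r2, 2), round(r2_adj, 4))
```

The corrected value penalises the extra parameter, so it is slightly below the unadjusted 0.93.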

4.2 Non‐Linear Regression

• Exponential equation of best fit: $Y = ab^{x}$, so that $\log Y = \log a + x\log b$

• Power equation of best fit: $Y = aX^{b}$, so that $\log Y = \log a + b\log X$

A start-up company has developed an improved electronic chip for use in laboratory equipment. The company needs to project the manufacturing cost, so it develops a spreadsheet model that takes into account the purchase of production equipment, overhead, raw materials, depreciation, maintenance and other business costs.

The spreadsheet estimates the cost of producing 10,000 to 200,000 chips per year.

[Figure: Scatterplot of Cost per chip vs Chips Produced (1000s)]

correlation = -0.823

| Prod | Cost | Fitted cost | Residual |
|---|---|---|---|
| 10 | 146.1 | 96.7941 | 49.3059 |
| 20 | 105.8 | 92.482 | 13.318 |
| 30 | 85.75 | 88.1699 | -2.4199 |
| 40 | 77.02 | 83.8578 | -6.8378 |
| 50 | 66.1 | 79.5457 | -13.4457 |
| 60 | 63.92 | 75.2336 | -11.3136 |
| 70 | 58.8 | 70.9215 | -12.1215 |
| 80 | 50.91 | 66.6094 | -15.6994 |
| 90 | 47.22 | 62.2973 | -15.0773 |
| 100 | 44.31 | 57.9852 | -13.6752 |
| 120 | 42.88 | 49.361 | -6.481 |
| 140 | 39.05 | 40.7368 | -1.6868 |
| 160 | 37.47 | 32.1126 | 5.3574 |
| 180 | 35.09 | 23.4884 | 11.6016 |
| 200 | 34.04 | 14.8642 | 19.1758 |
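The fitted values and residuals in this table come from a straight-line fit of cost on production. A minimal sketch of that fit is shown below; `fit_line` is a helper defined here for illustration.

```python
prod = [10, 20, 30, 40, 50, 60, 70, 80, 90, 100, 120, 140, 160, 180, 200]
cost = [146.1, 105.8, 85.75, 77.02, 66.1, 63.92, 58.8, 50.91,
        47.22, 44.31, 42.88, 39.05, 37.47, 35.09, 34.04]

def fit_line(x, y):
    """Least-squares intercept and slope for y = b0 + b1*x."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    s_xy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    s_xx = sum((a - x_bar) ** 2 for a in x)
    slope = s_xy / s_xx
    return y_bar - slope * x_bar, slope

b0, b1 = fit_line(prod, cost)
residuals = [c - (b0 + b1 * p) for p, c in zip(prod, cost)]
for p, e in zip(prod, residuals):
    print(p, round(e, 4))
```

The residuals are positive at both ends of the range and negative in the middle, a systematic pattern that indicates the straight line is not an adequate model for these data.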

[Figure: Residuals Versus the Fitted Values (response is Cost per chip)]

[Figure: Residuals Versus Chips Produced (1000s) (response is Cost per chip)]

[Figure: Scatterplot of cost vs logprod]

[Figure: Scatterplot of logcost vs prod.]

[Figure: Scatterplot of logcost vs logprod]

Correlations: logcost, logprod, prod., cost

| | logcost | logprod | prod |
|---|---|---|---|
| logprod | -0.997 | | |
| prod | -0.920 | 0.923 | |
| cost | 0.975 | -0.976 | -0.823 |

Regression Analysis: logcost versus logprod

The regression equation is

logcost = 6.16 - 0.502 logprod

| Predictor | Coef | SE Coef | T | P |
|---|---|---|---|---|
| Constant | 6.15922 | 0.04454 | 138.30 | 0.000 |
| logprod | -0.50162 | 0.01034 | -48.52 | 0.000 |

S = 0.0329768   R-Sq = 99.5%   R-Sq(adj) = 99.4%
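The log-log regression corresponds to fitting the power equation cost = a × prod^b. The sketch below assumes natural logarithms, which is consistent with the axis ranges of the logprod and logcost plots; `fit_line` is a helper defined here for illustration.

```python
import math

prod = [10, 20, 30, 40, 50, 60, 70, 80, 90, 100, 120, 140, 160, 180, 200]
cost = [146.1, 105.8, 85.75, 77.02, 66.1, 63.92, 58.8, 50.91,
        47.22, 44.31, 42.88, 39.05, 37.47, 35.09, 34.04]

def fit_line(x, y):
    """Least-squares intercept and slope for y = b0 + b1*x."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    s_xy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    s_xx = sum((a - x_bar) ** 2 for a in x)
    slope = s_xy / s_xx
    return y_bar - slope * x_bar, slope

log_prod = [math.log(p) for p in prod]   # natural logs (assumed)
log_cost = [math.log(c) for c in cost]

log_a, b = fit_line(log_prod, log_cost)  # log cost = log a + b * log prod
a = math.exp(log_a)                      # back-transform the intercept

def predict_cost(p):
    """Power-law prediction on the original scale."""
    return a * p ** b

print(round(log_a, 3), round(b, 3), round(predict_cost(100), 2))
```

An exponent close to -0.5 means the cost per chip falls roughly with the square root of the production volume.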

[Figure: Scatterplot of logcost vs logprod]

[Figure: Residuals Versus the Fitted Values (response is logcost); standardized residuals on the vertical axis]
