The SKA Project - The World's Largest Streaming Data Processor


Transcript of The SKA Project - The World's Largest Streaming Data Processor

ISC 2014

Dr Paul Calleja

Director Cambridge HPC Service

Overview

• Introduction to Cambridge HPCS

• Overview of the SKA project and the SKA SDP consortium

• Two SDP work packages

• SDP Open Architecture Lab

• SDP operations

Cambridge University

• The University of Cambridge is a world-leading teaching and research institution, consistently ranked within the top 3 universities worldwide

• Annual income of £1,200M, 40% of it research related: one of the largest R&D budgets in the UK HE sector

• 17,000 students, 9,000 staff

• Cambridge is a major technology centre
  – 1,535 technology companies in surrounding science parks
  – £12B annual revenue
  – 53,000 staff

• The HPCS has a mandate to provide HPC services to both the University and the wider technology company community

Four domains of activity

• Commodity HPC Centre of Excellence

• Promoting uptake of HPC by UK industry

• Driving discovery

• HPC R&D: advancing the development and application of HPC

Cambridge HPC vital statistics

• Over 1,000 registered users from 35 departments

• 856 Dell servers, 450 TF sustained double-precision (DP) performance
  – 128-node Westmere (1,536 cores, 16 TF)
  – 600-node (9,600-core) 2.6 GHz Sandy Bridge with full non-blocking Mellanox FDR InfiniBand (200 TF), one of the fastest Intel clusters in the UK
  – SKA GPU test bed: 128 nodes with 256 NVIDIA K20 GPU cards
    – Fastest GPU system in the UK, 250 TF
    – Designed for maximum I/O throughput and message rate
    – Full non-blocking dual-rail Mellanox FDR Connect-IB
    – Full GPUDirect functionality
    – Designed for maximum energy efficiency
    – No. 2 in the Green500 (Nov 2013)
    – Most efficient air-cooled supercomputer in the world

• 4 PB storage: Lustre parallel file system, 50 GB/s
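The node counts above are internally consistent. A quick check, using only the figures on this slide and reading the three node counts as partitions of the 856 servers (which their sum suggests), gives the implied cores and GPUs per node:

```python
# Partitions of the Cambridge HPC estate as listed on the slide: (nodes, cores).
# The slide gives no core count for the GPU test bed, so it is recorded as None.
partitions = {
    "Westmere": (128, 1536),
    "Sandy Bridge": (600, 9600),
    "SKA GPU test bed": (128, None),
}

total_nodes = sum(nodes for nodes, _ in partitions.values())
print(f"total nodes: {total_nodes}")  # 856, matching the server count

for name, (nodes, cores) in partitions.items():
    if cores is not None:
        print(f"{name}: {cores // nodes} cores per node")

gpus_per_node = 256 // 128  # 256 K20 cards spread across 128 nodes
print(f"GPU test bed: {gpus_per_node} GPUs per node")
```

This yields 12 cores per Westmere node, 16 per Sandy Bridge node and two GPUs per test-bed node.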


CORE – Industrial HPC service & consultancy

Dell | Cambridge HPC Solution Centre

• The Solution Centre is a jointly funded Dell and Cambridge HPC centre of excellence, providing leading-edge commodity open-source HPC solutions.


DiRAC national HPC service

NVIDIA CCOE

• Cambridge was the first European NVIDIA CUDA Centre of Excellence

• Cambridge has operated the UK's first large-scale GPU cluster for the last four years

• Key technology demonstrator for SKA

• Strong CUDA development skills within HPCS

• New large GPU system: the largest GPU system in the UK and one of the most energy-efficient supercomputers in the world, built to push the parallel scalability of GPU clusters by deploying the best network possible combined with GPUDirect

20K genome project

• The HPCS is providing all the data storage and computational resources for a major new genomics study

• The study involves gene sequencing of 20,000 disease patients from the UK

• This is a major data-throughput workload with high data-security requirements

• It requires designing and building an efficient data-throughput pipeline in terms of both hardware and software

JLR R&D

• 5-year research project with JLR (Jaguar Land Rover)

• Driving capability in simulation and data mining

• HPC design, implementation and operation best practice

SA CHPC collaboration

• HPCS has a long-term strategic partnership with CHPC

• HPCS has been working closely with CHPC for the last 6 years

• Technology strategy, system design and procurement

• HPC system stack development

• SKA platform development

ISC 2014

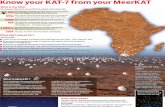

• Next generation radio telescope

• 100 x more sensitive• 1000000 X faster • 5 square km of dish over 3000 km

• The next big science project

• Currently the worlds most ambitious IT Project

• First real exascale ready application

• Largest global big-data challenge

Square Kilometre Array - SKA

SKA location

• Needs a radio-quiet site

• Very low population density

• Large amount of space

• Two sites:
  – Western Australia
  – Karoo Desert, RSA

A continental-sized radio telescope

SKA – Key scientific drivers

• Cradle of life

• Cosmic magnetism

• Evolution of galaxies

• Pulsar survey: gravity waves

• Exploring the dark ages

But…

Most importantly, the SKA will investigate phenomena we have not even imagined yet

SKA timeline

• 1995–2000: Preliminary ideas and R&D

• 2000–2007: Initial concepts stage

• 2008–2012: System design and refinement of specification

• 2012: Site selection

• 2012–2016: Pre-construction (€90M): 1 yr detailed design, PEP, 3 yr production readiness

• 2017–2022: 10% SKA construction, SKA1 (€650M)

• 2022: Operations SKA1 (10%)

• 2023–2027: Construction of full SKA, SKA2 (€2B)

• 2025: Operations SKA2 (100%)

SKA project structure

• SKA Board

• Director General

• Advisory Committees (Science, Engineering, Finance, Funding …)

• Project Office (OSKAO)

• Work Package Consortia 1 … n (locally funded)

Work package breakdown

UK (lead), AU (CSIRO…), NL (ASTRON…), South Africa SKA, Industry (Intel, IBM…)

1. System

2. Science

3. Maintenance and support /Operations Plan

4. Site preparation

5. Dishes

6. Aperture arrays

7. Signal transport

8. Data networks

9. Signal processing

10. Science Data Processor

11. Monitor and Control

12. Power

SPO

SDP = Streaming data processor challenge

• The SDP consortium is led by Paul Alexander, University of Cambridge

• The 3-year design phase started in November 2013

• Delivering the SKA ICT infrastructure needs a strong multi-disciplinary team:
  – Radio astronomy expertise
  – HPC expertise (scalable software implementations; management)
  – HPC hardware (heterogeneous processors; interconnects; storage)
  – Delivery of data to users (cloud; UI …)

• Building a broad global consortium:
  – 11 countries: UK, USA, AUS, NZ, Canada, NL, Germany, China, France, Spain, South Korea
  – Radio astronomy observatories; HPC centres; multi-national ICT companies; sub-contractors

SDP consortium members

| Management grouping | Workshare (%) |
|---|---|
| University of Cambridge (Astrophysics & HPCS) | 9.15 |
| Netherlands Institute for Radio Astronomy | 9.25 |
| International Centre for Radio Astronomy Research | 8.35 |
| SKA South Africa / CHPC | 8.15 |
| STFC Laboratories | 4.05 |
| Non-Imaging Processing Team (University of Manchester, Max-Planck-Institut für Radioastronomie, University of Oxford (Physics)) | 6.95 |
| University of Oxford (OeRC) | 4.85 |
| Chinese Universities Collaboration | 5.85 |
| New Zealand Universities Collaboration | 3.55 |
| Canadian Collaboration | 13.65 |
| Forschungszentrum Jülich | 2.95 |
| Centre for High Performance Computing South Africa | 3.95 |
| iVEC Australia (Pawsey) | 1.85 |
| Centro Nacional de Supercomputación | 2.25 |
| Fundación Centro de Supercomputación de Castilla y León | 1.85 |
| Instituto de Telecomunicações | 3.95 |
| University of Southampton | 2.35 |
| University College London | 2.35 |
| University of Melbourne | 1.85 |
| French Universities Collaboration | 1.85 |
| Universidad de Chile | 1.85 |

SDP – strong industrial partnership

• Discussions under way with:
  – Dell, NVIDIA, Intel, HP, IBM, SGI, ARM, Microsoft Research
  – Xyratex, Mellanox, Cray, DDN
  – NAG, Cambridge Consultants, Parallel Scientific
  – Amazon, Bull, AMD, Altera, Solarflare, Geomerics, Samsung, Cisco

• Apologies to those I've forgotten to list

SKA data rates

[Figure: SKA signal chain from the collectors to the Central Processing Facility (CPF), with data, time and control carried over optical data links]

• Collectors:
  – Sparse aperture arrays (AA): 70–450 MHz, wide field of view (FoV)
  – Dense AA: 0.4–1.4 GHz, wide FoV
  – 15 m dishes: 1.2–10 GHz, wideband single-pixel feeds

• AA stations perform tile and station processing; the CPF connects to 250 AA stations and 1200 dishes

• Processing elements shown: DSP; AA slices; dish and AA+dish correlation; processor buffers; data switch; imaging processors; science processors; data archive; time standard; control processors and user interface (user interface via the Internet)

• Stages labelled along the figure: aperture array station, correlator, UV processors, image formation, archive

• Data rates annotated in the figure: 16 Tb/s, 24 Tb/s, 1000 Tb/s, 4 Pb/s, 20 Gb/s, 10 Gb/s
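The rates on this slide are easier to compare with the storage figures elsewhere in the talk once they are converted to volumes. A minimal sketch, assuming decimal SI prefixes, 8 bits per byte, continuous operation and no protocol overhead (none of which the slide states):

```python
# Convert a sustained link rate into accumulated data volume.
# Assumptions: 1 Tb = 1e12 bits, 1 EB = 1e18 bytes, 8 bits per byte,
# 100% duty cycle and no protocol overhead.
SECONDS_PER_DAY = 86_400
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY  # Julian year


def volume_bytes(rate_bits_per_s: float, seconds: float) -> float:
    """Bytes accumulated by a stream running at rate_bits_per_s for `seconds`."""
    return rate_bits_per_s * seconds / 8


def fmt_eb(n_bytes: float) -> str:
    return f"{n_bytes / 1e18:.1f} EB"


for label, rate in [("16 Tb/s", 16e12), ("1000 Tb/s", 1000e12)]:
    per_day = volume_bytes(rate, SECONDS_PER_DAY)
    per_year = volume_bytes(rate, SECONDS_PER_YEAR)
    print(f"{label}: {fmt_eb(per_day)} per day, {fmt_eb(per_year)} per year")
```

At 1000 Tb/s a single day of continuous data already comes to about 10.8 EB, which illustrates why the data have to be reduced close to the source rather than archived raw.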

SKA conceptual data flow: tiered data delivery

• SDP core facilities in South Africa and Australia

• Data routing to regional centres, each holding a sub-set of the archive

• Cloud access for astronomers
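Tiered delivery means a request from an astronomer can be served from whichever tier holds the data. The sketch below models that idea only; the `Site` type, the product IDs and the "nearest tier first" policy are hypothetical illustrations, not part of the SKA design described in the talk:

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Site:
    """A data holder: an SDP core facility or a regional centre (hypothetical model)."""
    name: str
    holdings: Set[str] = field(default_factory=set)


def route_request(product_id: str, regional: List[Site], core: List[Site]) -> str:
    """Name the site that should serve product_id.

    Illustrative policy only: prefer a regional centre that holds a copy,
    otherwise fall back to the core facilities.
    """
    for site in regional + core:
        if product_id in site.holdings:
            return site.name
    raise KeyError(f"{product_id} is not held by any site")


# Hypothetical product IDs; regional centres hold only sub-sets of the archive.
core = [
    Site("SDP core facility (South Africa)", {"obs-001", "obs-002"}),
    Site("SDP core facility (Australia)", {"obs-003"}),
]
regional = [Site("Regional centre A", {"obs-002"})]

print(route_request("obs-002", regional, core))  # Regional centre A
print(route_request("obs-003", regional, core))  # SDP core facility (Australia)
```

Checking regional centres first keeps traffic off the long-haul links from the remote core sites whenever a local copy exists.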

Science data processor pipeline

[Figure: incoming data from the collectors pass through switches and buffer stores to the correlator/beamformer and UV processor, then through bulk stores to the image processor and HPC science processing; software complexity increases along the pipeline]

• Imaging: corner turning → coarse delays → fine F-step/correlation → visibility steering → observation buffer → gridding visibilities → imaging → image storage

• Non-imaging: corner turning → coarse delays → beamforming/de-dispersion → beam steering → observation buffer → time-series searching → search analysis → object/timing storage

• Scale annotations for SKA1 and SKA2: data rates of 10 Tb/s and 1000 Tb/s; compute of 10 Pflop, 100 Pflop, 200 Pflop, 1 Eflop and 10 Eflop; archive growth of 1 EB/y and 10 EB/y; storage of 50 PB and 2.5 EB
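Corner turning heads both the imaging and the non-imaging pipeline. In general terms it is a large transpose: data that arrive grouped by antenna and time must be regrouped by frequency channel, so that each downstream processor sees every antenna for its channels. A minimal single-process sketch, assuming an in-memory `[antenna][time][channel]` layout (the actual SDP data layout and sizes are not given in the talk):

```python
from typing import List

# samples[antenna][time][channel] -> turned[channel][antenna][time]
Cube = List[List[List[complex]]]


def corner_turn(samples: Cube) -> Cube:
    """Regroup antenna-major data into channel-major order.

    Each output channel block holds every antenna's time series for that
    channel, which is the grouping a per-channel correlator needs.
    """
    n_ant = len(samples)
    n_time = len(samples[0])
    n_chan = len(samples[0][0])
    return [
        [[samples[a][t][c] for t in range(n_time)] for a in range(n_ant)]
        for c in range(n_chan)
    ]


# Tiny example: 2 antennas, 3 time samples, 4 channels. Each value encodes
# its (antenna, time, channel) origin so the reordering is easy to check.
data = [[[complex(100 * a + 10 * t + c) for c in range(4)] for t in range(3)] for a in range(2)]
turned = corner_turn(data)
print(len(turned), len(turned[0]), len(turned[0][0]))  # 4 2 3
print(turned[3][1][2])  # (123+0j)
```

At SDP scale the same regrouping has to happen across many nodes, which is why it appears as a switch-level function (the corner-turner switches) in the feasibility model.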

SDP processing rack – feasibility model

• 42U rack with 20 processing blades and two 56 Gb/s leaf switches

• Processing blade specification:
  – Host processor: multi-core x86
  – Two many-core devices (GPGPU, MIC, …?), each >10 TFLOP/s, attached over the PCI bus
  – Four local disks, each ≥1 TB
  – 56 Gb/s link to the rack switches
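The blade figures translate directly into per-rack capacity. A sketch of that arithmetic, reading the diagram as two many-core devices and four disks per blade and treating the slide's ">10 TFLOP/s" and "≥1 TB" as lower bounds; the 100 PFLOP/s and 1 EFLOP/s targets are illustrative values taken from the pipeline annotations:

```python
import math

# Lower-bound figures read from the feasibility-model diagram
# (assumed interpretation: 2 many-core devices and 4 disks per blade).
BLADES_PER_RACK = 20
ACCELERATORS_PER_BLADE = 2
TFLOPS_PER_ACCELERATOR = 10  # slide says ">10 TFLOP/s"
DISKS_PER_BLADE = 4
TB_PER_DISK = 1              # slide says ">=1 TB"

rack_tflops = BLADES_PER_RACK * ACCELERATORS_PER_BLADE * TFLOPS_PER_ACCELERATOR
rack_disk_tb = BLADES_PER_RACK * DISKS_PER_BLADE * TB_PER_DISK


def racks_needed(target_pflops: float) -> int:
    """Upper bound on racks for a peak-compute target (per-blade figures are minima)."""
    return math.ceil(target_pflops * 1000 / rack_tflops)


print(f"per rack: >= {rack_tflops} TFLOP/s peak, >= {rack_disk_tb} TB local disk")
for target in (100, 1000):  # 100 PFLOP/s and 1 EFLOP/s, illustrative targets
    print(f"{target} PFLOP/s peak needs at most {racks_needed(target)} racks")
```

These are peak figures; sustained application performance on real radio-astronomy kernels would be lower, so the rack counts are optimistic in that respect.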

SKA feasibility model

[Figure: three AA-low data streams (Data 1–3, inputs numbered 1…280) and dish data (Data 4) feed corner-turner switches with 56 Gb/s ports; these feed the correlator/UV processor, further UV processors and the imaging processor, backed by HPC and a bulk store. Other input ranges labelled in the figure: 1…16, 1…N and 1…250]
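Each corner-turner port in the model runs at 56 Gb/s, so the number of links grows directly with the data rate to be turned. A sketch of the port arithmetic, using 16 Tb/s (one of the rates on the data-rates slide) as an illustrative aggregate; the `efficiency` factor for protocol overhead is an assumption, not a figure from the talk:

```python
import math


def links_required(aggregate_bps: float, link_bps: float = 56e9, efficiency: float = 1.0) -> int:
    """Minimum number of links needed to carry aggregate_bps.

    efficiency is the assumed usable fraction of the nominal line rate
    (1.0 = ideal; real protocols lose some bandwidth to encoding and headers).
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return math.ceil(aggregate_bps / (link_bps * efficiency))


print(links_required(16e12))                  # 286 ideal 56 Gb/s links
print(links_required(16e12, efficiency=0.8))  # 358 with an assumed 20% overhead
```

Because the link count scales linearly with both the data rate and the overhead, small changes in protocol efficiency translate into tens of extra switch ports at this scale.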

SKA conceptual software stack

[Figure: layered stack, from top to bottom: SKA subsystems and service components; high-level APIs and tools; core services; base tools; operating system]

Components shown in the stack:

• SKA Common Software Application Framework

• UIF Toolkit

• Access Control

• Monitoring Archiver

• Live Data Access

• Logging System

• Alarm Service

• Configuration Management

• Scheduling Block Service

• Communication Middleware

• Database Support

• Third-party tools and libraries

• Development tools

Open Architecture Lab function

• Create a critical mass of HPC and astronomy knowledge, combined with HPC equipment and lab staff, to produce a shared resource that drives SKA system development studies and SDP prototyping
  – Strong coordination of all activities with COMP

• Provide a coordinated engagement mechanism with industry to drive SKA platform development studies
  – Dedicated OAL project manager

• Benchmarking
  – Perform standardised benchmarking across a range of different vendor solutions
  – Undertake a consistent analysis of benchmark systems and report into COMP

• Manage a number of industry contracts driving system studies:
  – Low-level software RAID / Lustre performance testing
  – Large-scale archives
  – Software-defined networking
  – OpenStack in an HPC environment
  – Slurm as a telescope scheduler
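A "consistent analysis" of benchmarks mostly means reducing every system's results with the same statistic. A minimal sketch of one such reduction (median of repeated runs, speedup against a chosen baseline); the function, the system names and the timings are hypothetical placeholders, not OAL tooling or measurements:

```python
from statistics import median
from typing import Dict, List, Tuple


def summarise(runs: Dict[str, List[float]], baseline: str) -> Dict[str, Tuple[float, float]]:
    """Return {system: (median runtime, speedup vs baseline)}.

    Taking the median of repeated runs damps one-off outliers, so systems
    from different vendors are compared on the same statistic.
    """
    if baseline not in runs:
        raise KeyError(f"no results for baseline {baseline!r}")
    medians = {name: median(times) for name, times in runs.items()}
    base = medians[baseline]
    return {name: (m, base / m) for name, m in medians.items()}


# Placeholder timings in seconds, for illustration only (not measurements).
runs = {"system-A": [10.2, 9.8, 10.0], "system-B": [5.1, 4.9, 5.0, 12.0]}
for name, (med, speedup) in summarise(runs, baseline="system-A").items():
    print(f"{name}: median {med:.2f} s, speedup {speedup:.2f}x")
```

The outlier 12.0 s run on system-B barely moves its median, whereas it would noticeably inflate a mean.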

Open Architecture Lab function

• Build prototype systems under direction from COMP

• Undertake system studies directed by COMP to investigate particular system aspects
  – Dedicated HPC engineer being hired to drive studies

• Act as a managed lab for COMP and the wider SDP work packages: build systems, perform technical studies, make systems accessible
  – Dedicated lab engineer to service the lab

Open Architecture Lab function

• Emphasis on testing scalable components, in terms of both hardware and software

• Key considerations in architectural studies will be:
  – Energy-efficient architectures
  – Scalable, cost-effective storage
  – Interconnects
  – Scalable system software
  – Operations

Open Architecture Lab – Outputs

• Act as interface with industry, providing coordinated engagement with SDP
  – Benchmark study papers
  – Roadmap papers
  – Discussion digests

• Industrial system study contracts
  – Design papers
  – Benchmark papers

• Build target test platform
  – Design papers
  – Benchmark papers

• Managed lab providing a service to the SDP consortium
  – Service function is the output

Open Architecture Lab – Organisation

• Coordinated by Cambridge; jointly run by HPCS (Cambridge) and CHPC (SA)
  – Dedicated PM
  – Dedicated HPC engineer
  – Dedicated lab engineer

• Collaborate and coordinate with distributed labs:
  – Cambridge
  – CHPC
  – ASTRON
  – Jülich
  – Oxford

Cambridge test beds

• Large-scale systems
  – 600-node (9,600-core) 2.6 GHz Sandy Bridge with full non-blocking Mellanox FDR InfiniBand (200 TF), one of the fastest Intel clusters in the UK
  – SKA GPU test bed: 128 nodes with 256 NVIDIA K20 GPU cards. The test bed is built and extensive testing is underway, giving a good understanding of GPU-to-GPU RDMA functionality; a GPU-focused engineer is in place
  – Good understanding of current best practice in energy-efficient computing and data centre build and design
  – 4 PB storage: Lustre parallel file system, 50 GB/s

Cambridge test beds

• Small-scale CPU test beds
  – Phi: installed last week; larger cluster being designed
  – ARM: evaluating solutions
  – Atom: evaluating solutions
  – Agreed Intel Radio Astronomy IPCC: a headcount of 2 to be put in place looking at Phi

• Storage test beds
  – Lustre on commodity hardware, H/W RAID: installed
  – Lustre on commodity hardware, SW RAID: under test
  – Lustre on proprietary hardware: discussions with vendors
  – Lustre on ZFS: under test
  – Ceph: under test
  – Archive test bed: discussions with vendors
  – Flash-accelerated distributed file system: in design

• Strong storage test programme underway, with a headcount of 4 in place driving the programme

Cambridge test beds

• Networking
  – Use the current production system as a large-scale test bed
  – Dedicated equipment to be ordered
  – Topics: IB; Ethernet; software-defined networks; RDMA data transfers from co-processor to co-processor
  – Networking SOW being constructed; 1 FTE to be hired

• Slurm test bed at Cambridge and CHPC
  – Headcount at Cambridge and CHPC

• OpenStack test bed under construction
  – OpenStack development at CHPC/HPCS

• SDP operations: OAL to feed into the operations WP

• Data centre issues

SKA Exascale computing in the desert

• At the time of deployment, the SKA SDP compute facility will be one of the largest HPC systems in existence

• Operational management of large HPC systems is challenging even at the best of times, that is, when they are housed in well-established research centres with good IT logistics and experienced Linux HPC staff

• The SKA SDP could be housed in a desert location with little surrounding IT infrastructure, poor IT logistics and little prior HPC history at the site

• Potential SKA SDP exascale systems are likely to consist of 100,000 nodes, occupy 800 cabinets and consume 20 MW. This is very large: around 5 times the physical size of the Cray Titan system at Oak Ridge National Laboratory

• The SKA SDP HPC operations will be very challenging but tractable
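The headline figures imply an average budget per node and per cabinet. A quick check using only the slide's numbers; these are simple averages that ignore how power is split between compute, storage, networking and cooling:

```python
# Figures from the slide for a potential SDP exascale system.
NODES = 100_000
CABINETS = 800
POWER_W = 20e6  # 20 MW

nodes_per_cabinet = NODES / CABINETS       # average nodes per cabinet
watts_per_node = POWER_W / NODES           # average power per node
kw_per_cabinet = POWER_W / CABINETS / 1e3  # average power per cabinet

print(f"{nodes_per_cabinet:.0f} nodes/cabinet, {watts_per_node:.0f} W/node, "
      f"{kw_per_cabinet:.0f} kW/cabinet")
```

This gives 125 nodes, 200 W per node and 25 kW per cabinet. Repeating the calculation with the 30 MW IT load quoted on the machine room slide gives 37.5 kW per cabinet, close to that slide's ~40 kW per rack.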

SKA HPC operations – functional elements

• We can describe the operational aspects by functional element:
  – Machine room requirements
  – SDP data connectivity requirements
  – SDP workflow requirements
  – System service level requirements
  – System management software requirements
  – Commissioning and acceptance test procedures
  – System administration procedure
  – User access procedures
  – Security procedure
  – Maintenance and logistical procedures
  – Refresh procedure
  – System staffing and training procedures

Machine room requirements

• Machine room infrastructure for exascale HPC facilities is challenging
  – 800 racks, 1,600 m²
  – 30 MW IT load
  – ~40 kW of heat per rack

• Cooling efficiency and heat density management are vital

• Machine room infrastructure at this scale is both costly and time consuming

• The power cost alone, at today's prices, is tens of millions of pounds per year

• The desert location presents particular problems for the data centre:
  – Hot ambient temperature: difficult for compressorless cooling
  – Lack of water: difficult for compressorless cooling
  – Very dry air: difficult for humidification
  – Remote location: difficult for data centre maintenance
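The "tens of millions of pounds per year" follows directly from the 30 MW IT load. A sketch of the arithmetic, in which the £0.10/kWh tariff and the PUE of 1.2 are illustrative assumptions rather than figures from the talk:

```python
HOURS_PER_YEAR = 8_760


def annual_energy_cost(it_load_mw: float, price_per_kwh: float, pue: float = 1.0) -> float:
    """Yearly electricity cost for a constant IT load.

    pue (power usage effectiveness) scales the IT load up to total facility
    power; 1.0 means cooling and distribution overheads are ignored.
    """
    facility_kw = it_load_mw * 1_000 * pue
    return facility_kw * HOURS_PER_YEAR * price_per_kwh


# 30 MW IT load from the slide; tariff and PUE are assumptions.
cost = annual_energy_cost(30, price_per_kwh=0.10, pue=1.2)
print(f"~£{cost / 1e6:.1f}M per year")  # ~£31.5M per year
```

Even with the PUE set to 1.0 the same tariff gives about £26M a year, so the order of magnitude does not hinge on the cooling assumption; the desert climate issues listed above mainly push the PUE, and therefore the bill, upwards.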

System management software

• System management software is the vital element in HPC operations

• Today's system management software does not scale to exascale

• There is a worldwide coordinated effort in the HPC community to develop system management software for exascale

• We are very interested in leveraging OpenStack technologies from non-HPC communities

Maintenance logistics

• Current HPC hardware and system software MTBF results in failure rates of ~2 nodes per week on a cluster of ~600 nodes

• SKA exascale systems are expected to contain ~100,000 nodes

• Thus failure rates of ~300 nodes per week could be realistic

• During system commissioning this will be 3 to 4 times higher

• Fixing nodes quickly is vital, otherwise the system will soon degrade into a non-functional state

• The manual engineering processes for fault detection and diagnosis used on 600 nodes will not scale to 100,000 nodes; this needs to be automated by the system software layer

• Vendor hardware replacement logistics need to cope with high turnaround rates
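The ~300 nodes per week figure comes from scaling the observed per-node failure rate linearly with node count. A sketch of that scaling, assuming independent failures and the same per-node rate on both systems; the commissioning multiplier is the slide's 3 to 4 times:

```python
def scaled_failures_per_week(observed_failures: float, observed_nodes: int,
                             target_nodes: int, multiplier: float = 1.0) -> float:
    """Scale an observed weekly node-failure count to a larger system.

    Assumes failures are independent and the per-node failure rate is the
    same on both systems; `multiplier` models elevated early-life failure
    rates such as those seen during commissioning.
    """
    per_node_rate = observed_failures / observed_nodes
    return per_node_rate * target_nodes * multiplier


steady = scaled_failures_per_week(2, 600, 100_000)
print(f"steady state: ~{steady:.0f} nodes/week")  # ~333
for m in (3, 4):
    print(f"commissioning x{m}: ~{scaled_failures_per_week(2, 600, 100_000, m):.0f} nodes/week")

# Implied mean time between failures for a single node.
weeks_per_node_failure = 600 / 2
print(f"per-node MTBF ~{weeks_per_node_failure / 52:.1f} years")  # ~5.8
```

At ~333 failures a week, the steady state already means roughly 48 node repairs per day, rising to 1,000 to 1,333 a week during commissioning, which underlines the need for automated fault handling and fast vendor turnaround.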

Staffing levels and training

• Providing functional staffing levels and experience at a remote desert location will be challenging

• It's hard enough finding good HPC staff to run small-scale HPC systems in Cambridge; finding orders of magnitude more staff to run much more complicated systems in a remote desert location will be very challenging

• Operational procedures using a combination of remote system administration staff and data centre smart hands will be needed

• HPC training programmes need to be implemented well in advance to build up skills


Early Cambridge SKA solution - EDSAC 1

Maurice Wilkes