
Task Assessment of Fourth and Fifth Grade Teachers

Emily Butler, Argi Harianto, Christopher Peter Makris

Carnegie Mellon University

May 3, 2010

Abstract

In today’s society, urban schools often do not have academic programs as strong as non-urban

schools. A possible source of weakness may be the quality of teachers’ assignments. One way to

measure instructors’ teaching quality is by analyzing the assignments they give to their students;

however, we must decide on how many assignments need to be collected to assess each teacher’s

quality of instruction. Along with the students’ assignments, each teacher must also take time to

submit required paperwork. In order to receive optimal participation, it is important to collect

only the appropriate number of assignments from each teacher. Additionally, we must also

determine the number of raters needed to assess the assignments. Because the collection of

assignments and use of raters are both costly and time consuming, we would like to minimize the

number of assignments and raters needed while also maximizing the accuracy of our estimate of

the quality of assignments that teachers assign. To do so, we use generalizability theory on our

data to generate results that allow us to predict the reliability of any combination of assignments

and raters. By analyzing these results, we found that it would be optimal in future studies to

collect approximately seven assignments from each teacher while having only two raters. We

believe this to be the best model as it requires the fewest raters and assignments, saving

both time and money, while also allowing the researcher to attain accurate results.

Introduction

In a society that is putting greater emphasis on education, it is important to make sure that

the quality of education is sufficient. To gauge this level of sufficiency, one can look at the

performance of the students. In previous years, there has been a lot of emphasis placed on rating

schools through standardized tests, such as the Scholastic Aptitude Test (SAT). It is intuitive to

think that if student performance is high, then the educational standard is high, and if student

performance is low, then the educational standard is low; however, these scores alone may not

help us diagnose and fix what is wrong (Bryk, 1998, 7). Indeed there are a lot of factors that

affect a student’s education, such as the type of school they attend (private vs. public), the

location of the school (urban vs. rural), and the quality of the school’s teachers. For this research,

we are interested in looking at the quality of the teachers in public schools in urban areas.

Recently, a lot of work has been done developing measures of teaching quality using task

assessment (L. Matsumura, Personal Conversation, February 17, 2010). This study

is another piece in a line of research investigating this area on multiple levels. In essence, task

assessment involves collecting assignments that teachers give to their students and determining

the quality of the task. The belief is that the type of task a student engages in influences how they

learn (Doyle, 1999, 171-172). Students usually will not go beyond what the task demands of

them; therefore, the teacher defines what is considered acceptable and complete work. If more is

demanded from the student, they will be forced to push themselves farther and develop deeper

understandings of the material. Consequently, they will develop more complex skills. On the

other hand, if a teacher is only grading a student’s work based on mechanics (spelling,

punctuation, grammar, etc.), then the student will only pay attention to those requirements (Bryk,

2001, 2).

A student’s understanding of the demand of an assignment depends on three things: what

is said by the teacher, what is heard by the student, and what is listed in the task instructions.

Ideally, these would be the same, but this is not always the case (Matsumura, 2008, 269-270).

In our work, we focus on the written assignments that are given to the students in the area

of reading comprehension. In these assignments, there are three parts that are important. The first

is the quality of the textual basis of the assignment (this is important for assignments in English

or writing classes). If the text is a developed, more complex piece of literature, then the teacher

has the ability to assign more in-depth assignments. The second criterion is the difficulty of the

assignment. This focuses on how much the assignment requires from the student intellectually.

The final criterion looks at what the teacher considers to be “good work.” As stated above, if the

teacher expects much from a student, it is believed that the student will perform better.

In this paper, we begin by explaining the previous work that has been done in the field.

We will then explain the goals of our research and the theory we used. Included will be a

description of our data, how we dealt with missing observations using imputation, and the model

we ended up choosing. We will display our results and graphics, and explain what they mean in

context.

Previous Work

Three major institutions that have investigated the quality of teachers’ assignments are

the Consortium for Chicago School Reform, the Center for Research on Evaluation Standards &

Student Testing, and the Learning Research & Development Center at the University of

Pittsburgh. These organizations, as well as others, have made some general findings. First, they

found that high quality assignments are very rare. They also found that regardless of students’

achievement level, all students benefit equally from higher quality assignments (L. Matsumura,

Personal Conversation, February 17, 2010). This means that, regardless of a student’s academic

level, all students learn and develop more advanced cognitive skills if the assignment is more

demanding. Furthermore, there appears to be an association between high quality tasks and high

quality writing. This makes sense because a more advanced assignment will require more

advanced skills. Finally, there is a lot of pre-published learning material for the primary level

(grades 1 through 5). These materials include texts for the students to read as well as related

questions and tasks. Unfortunately, not all of these assignments are of sufficient quality to

mentally engage the students.

Goals

Our work focuses on data from a study conducted by Dr. Lindsay Clare Matsumura, PhD,

of the University of Pittsburgh. The major goal of our work is to find the optimal number of

assignments that need to be collected from each teacher to gain an accurate assessment of a

teacher’s assignment quality. We also would like to determine the optimal number of raters

needed to assess these assignments for Dr. Matsumura’s data. It is important to understand the

balance between the number of assignments and raters because it is not feasible to have many of

either. Because each rater is hired by the researcher, having more raters means the researcher

must spend more time and money. Also, for each task a teacher submits, they must spend about

45 minutes preparing the documentation for Dr. Matsumura. Although these teachers are being

modestly compensated, it may still be a cumbersome time commitment.

Theory

Generalizability theory is a statistical tool used to estimate the dependability of

measurements, which in our study corresponds to quality scores of assignments. The theory

postulates that there is some universal score or grand mean of scores for all subjects, on which

there exists variation that is explainable between subjects and along factors (also known as

“facets” in generalizability theory jargon). There are two aspects of generalizability theory

models: generalizability studies (G studies) and decision studies (D studies).

The G study essentially decomposes the overall variation in scores from the universal

score in terms of each component of variation. In other words, the G study breaks down the

variability in scores into three categories: the variability between subjects, the variability

between facets (and their interaction), and some residual term that corresponds to hidden,

unmeasured facets (noise or measurement errors). Generalizability theory distinguishes between

two different interpretations of the variability in scores between subjects: absolute and relative

(Shavelson and Webb, 1991). An academic test that is “curved” is an example of a score that is

interpreted relatively; an individual’s score on a curved test depends not only on

their own performance on the test but also on everyone else’s. A driver’s license test is a typical example

of an absolute interpretation of a score; a prospective driver’s score on the test does not depend

on others’ results. The output of interest from the G study is a coefficient measuring the

reliability of a measurement, called the G coefficient for relative interpretation, and a ф (phi)

coefficient for absolute interpretation.

The D study uses the information from the G study to estimate the measurement

reliability of scores that would be obtained using different facet levels. In an ideal world, the

experimenter would repeat this same study multiple times using the same subjects while using

different numbers of assignments and raters each time. They would then calculate the reliability

of score measurement in each study and determine the optimal number of assignments and raters.

Since the experimenter can only feasibly perform one study, we use the D study to estimate the

G coefficients across different levels of facets and to determine the optimal level of each facet.

To conduct our analysis of the data using generalizability theory, we will be using a

program developed by Christopher Mushquash and Brian P. O’Connor in conjunction with the

statistical software package SAS (Mushquash, 2006, 542-547).

Missingness

Before performing the generalizability theory analysis, we must first discuss the

missingness within our dataset. Upon initial consideration, one may believe that the missing

observations can simply be disregarded without consequence; however, this is not the case.

Important information may be contained within the missing values, so before an accurate

analysis can be done we must first assess the missingness of our dataset.

There are various kinds of missing values. Consider the case in which the

ratings that are missing within our dataset are simply a random subset of the entire sample of

assignments. This type of missing data is called Missing Completely At Random (MCAR). One

reason why our missing observations could be considered MCAR is if the missing ratings

were accidentally misplaced or lost. In this case, if we were to estimate the associations among

our variables using only the complete observations of our dataset, our results would be unbiased

(Donders, 2006, 1087-1091). This is because each observation would have an equal

chance of being missing. This type of missingness is easy to work around, yet rare.

Another type of missingness is called Missing Not At Random (MNAR). Consider the

case in which some teachers are already aware that their assignments are consistently of low

quality. They may choose to purposely not share their assignments because of this reason. If this

were to be the case, then the missing values within our dataset all have something in common:

the missing ratings would all be low. In this case, the missingness is not completely at random

because there is an unobserved underlying reason as to why the observations are not reported

(Donders, 2006, 1087-1091). Therefore, if data are MNAR, valuable information is inevitably lost.

Unfortunately, this type of missingness is tough to deal with, and there is no universal method to

account for the missing observations properly.

The third type of missingness is referred to as Missing At Random (MAR). Suppose that

for our study we had information about whether teachers taught in English or in Spanish.

Consider the case in which we know all ratings for those assignments from every teacher who

teaches in English, but are missing values for a random sample of teachers who teach in Spanish.

In this case, the probability that an observation is missing depends on information that we

actually do know, i.e., whether the teacher teaches in English or Spanish (Donders, 2006,

1087-1091). If data are MAR, we cannot simply use only complete observations since this would

induce selection bias in our data.
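The practical difference between these mechanisms can be illustrated with a small simulation. The sketch below is only an illustration under assumed values: the score distribution, the 30% MCAR loss rate, and the 50% withholding rate for the lowest scores are hypothetical and are not taken from the study's data. It compares the mean of the complete data with the mean of the observed ratings under MCAR and under MNAR.

```python
import random

def simulate(n=20000, seed=0):
    """Compare complete-case means under MCAR and MNAR missingness."""
    rng = random.Random(seed)
    # Hypothetical score distribution on the 1-4 scale (not the study's data).
    scores = rng.choices([1, 2, 3, 4], weights=[0.30, 0.60, 0.08, 0.02], k=n)

    # MCAR: every rating has the same 30% chance of being lost.
    mcar = [s for s in scores if rng.random() >= 0.30]

    # MNAR: only the lowest ratings tend to be withheld (50% of the 1s).
    mnar = [s for s in scores if not (s == 1 and rng.random() < 0.50)]

    def mean(xs):
        return sum(xs) / len(xs)

    return mean(scores), mean(mcar), mean(mnar)

full, mcar, mnar = simulate()
print(f"full={full:.3f}  MCAR observed={mcar:.3f}  MNAR observed={mnar:.3f}")
```

Under these assumptions the MCAR mean stays close to the full-data mean, while the MNAR mean is pulled upward because low ratings are disproportionately absent.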

Data

Our dataset comprises 107 teachers, each of whom submitted four assignments to be

assessed. The 107 teachers in the sample represent 29 elementary schools and 1,750 students.

Each assignment was rated on a scale of 1-4 on two different criteria: the cognitive demand the

assignment requires from the students, and the grading criteria used by the teacher. Each

assignment was rated by two independent raters based on a rubric provided in Appendix A. We

observed complete observations for the cognitive demand of assignments variable; however,

there were seven teachers with missing values in the grading criteria variable. An analysis of

how we dealt with missing data is provided in the Imputation section.

We performed exploratory data analysis to provide a better understanding of the data.

Table 1 displays summary measures for the cognitive demand and grading criteria of each

assignment. We also looked at the distributions of these two variables conditioned on raters to

gain an indication of how strongly related the raters are across different assignments,

summarized in Table 2 and Table 3:

Table 1: Summary Measures for Cognitive Demand and Grading Criteria

| Criteria | Mean | Median | Range | # Missing | Counts (score: n) |
|---|---|---|---|---|---|
| Cognitive Demand | 1.783 | 2 | 1-4 | 0 | 1: 261 (30.5%); 2: 523 (61.1%); 3: 69 (8.1%); 4: 3 (0.4%) |
| Grading Criteria | 1.724 | 2 | 1-4 | 30 | 1: 271 (32.8%); 2: 513 (62.1%); 3: 41 (5.0%); 4: 1 (0.1%) |

Table 2: Cognitive Demand Conditioned on Raters

| AR 3 | Mean | Counts (score: n) |
|---|---|---|
| Rater 1 | 1.761 | 1: 135 (31.5%); 2: 262 (61.2%); 3: 29 (6.8%); 4: 2 (0.5%) |
| Rater 2 | 1.804 | 1: 126 (29.4%); 2: 261 (61.0%); 3: 40 (9.3%); 4: 1 (0.2%) |

Table 3: Grading Criteria Conditioned on Raters

| AR 4 | Mean | Counts (score: n) |
|---|---|---|
| Rater 1 | 1.704 | 1: 140 (33.9%); 2: 255 (61.7%); 3: 18 (4.4%); 4: 0 (0%) |
| Rater 2 | 1.743 | 1: 131 (32.4%); 2: 258 (62.5%); 3: 23 (5.6%); 4: 1 (0.2%) |

Table 1 summarizes the score variables in our data. We notice that attaining the highest

possible score of 4 is extremely rare, which provides evidence that high quality assignments are

very uncommon. The conditional distributions of scores across raters in Table 2 show a slight

difference in the mean scores between raters; however, the distribution of levels of scores is fairly

similar across the two raters. We further examine the inter-rater relationship with two agreement

plots in Figure 1:

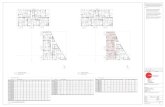

Figure 1: Rater Agreement Plots for Cognitive Demand and Grading Criteria Variables

Figure 1 shows agreement plots for the cognitive demand and grading criteria scores

across the two raters. The height and width of the boxes are proportional to how many times

Rater 1 and Rater 2 gave each score level respectively. The black boxes indicate perfect

agreement, the grey boxes indicate difference in score rating by one level, and the white shades

within the boxes indicate difference in score rating by more than one level. For both variables,

we see that the black shades dominate the boxes, meaning we have very good agreement in

scores across the two raters. For the cognitive demand variable we observe 85.6% rater

agreement – meaning that the two raters agreed upon the same score 85.6% of the time with a

Cohen’s kappa of 0.729 (a statistical measure of rater agreement), which corresponds to high

agreement (Cohen, 1960). For the grading criteria variable, there was similarly high rater

agreement at 85.7%, with a Cohen’s kappa of 0.649, which also corresponds to high agreement.

In terms of our model, this means we should expect to see little variation between raters due to

high inter-rater agreement.
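The two agreement statistics quoted above can be computed directly from paired ratings. The following is a minimal sketch of percent agreement and unweighted Cohen's kappa; the function names and the toy ratings are hypothetical, and the source does not state whether its kappa was weighted, so the unweighted form is an assumption.

```python
from collections import Counter

def percent_agreement(r1, r2):
    """Share of items on which the two raters gave the same score."""
    return sum(a == b for a, b in zip(r1, r2)) / len(r1)

def cohens_kappa(r1, r2):
    """Unweighted Cohen's kappa: agreement corrected for chance agreement."""
    n = len(r1)
    p_o = percent_agreement(r1, r2)
    c1, c2 = Counter(r1), Counter(r2)
    # Chance agreement: product of the raters' marginal proportions per score.
    p_e = sum(c1[k] * c2[k] for k in set(c1) | set(c2)) / (n * n)
    return (p_o - p_e) / (1 - p_e)

# Toy ratings for illustration only (not the study's data).
rater1 = [1, 1, 2, 2]
rater2 = [1, 2, 2, 2]
print(percent_agreement(rater1, rater2), cohens_kappa(rater1, rater2))
```

Because kappa subtracts the agreement expected by chance, it is always lower than the raw agreement rate when raters concentrate on a few score levels, as they do in this dataset.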

Model

The study used a two-facet partially-nested G study design. Each teacher (t) submitted

four assignments (a) which were rated by each of two raters (r). The model design nested

assignments within teachers, which we crossed with raters, denoted by (a : t) x r. Figure 2 shows

the relationships between the variance components for further clarification:

[Figure 2 diagram: assignments A1-A4 nested within each teacher (Teacher 1, Teacher 2, Teacher 3, ...), with every assignment rated by both Rater 1 and Rater 2]

Figure 2: Relationships Between Sources of Variation

The statistical parallel to the generalizability study model is the mixed-effects ANOVA.

At its core, generalizability theory is a means and variances model, where variations in the facets

account for the variability in scores along some universal mean. This translates directly to an

analysis of variance model with random effects along a universal mean (fixed intercept). In this

study, the equivalent ANOVA model would be:

$$Y_{a:t,r} = \mu + U_t + V_{a:t} + W_r + X_{r,t} + \varepsilon_{a:t,r}$$

where $Y_{a:t,r}$ is the score given by rater r to assignment a of teacher t, $\mu$ is the universal mean or model intercept, $U_t$ is the random effect for each teacher t, $V_{a:t}$ is the random effect for each assignment nested within teachers (a:t), $W_r$ is the random effect for each rater, $X_{r,t}$ is the random effect for each teacher crossed with rater, and $\varepsilon_{a:t,r}$ denotes the error term.

The model used in the study has one major assumption: the response variable of

assignment scores is a continuous variable to be used in an ANOVA model. Technically, the

assignment scores are an ordered categorical variable. The model also assumes that there is no

difference between any assignments that are given a particular rating score. However, having

raters rate assignment scores as a continuous variable is impractical. We also believe the score

level cutoffs provided by the rubric are concise and meaningful enough to provide informative

variation in teacher assignment scores.

Reliability & The G Coefficient

The estimated variance components and percent of variance explained by each element in

our model are summarized in Table 4 and Table 5. We notice that the models perform

approximately equally well within each component across both variables. This is an indication

that our model is stable:

Table 4: Cognitive Demand Estimated Variance Components and Percent of Variance Explained

| Component | Variance | % of Variance Explained |
|---|---|---|
| Teacher | 0.139 | 34.9 |
| Rater | 0.001 | 0.2 |
| Assignment : Teacher | 0.178 | 44.6 |
| Teacher * Rater | 0.004 | 1.0 |
| (Assignment : Teacher) * Rater | 0.077 | 19.4 |

Table 5: Grading Criteria Estimated Variance Components and Percent of Variance Explained

| Component | Variance | % of Variance Explained |
|---|---|---|
| Teacher | 0.109 | 32.0 |
| Rater | 0.000 | 0.1 |
| Assignment : Teacher | 0.138 | 40.5 |
| Teacher * Rater | 0.000 | 0.1 |
| (Assignment : Teacher) * Rater | 0.092 | 27.2 |

In both cases, the [Assignment : Teacher] component absorbed nearly half of the variance

among the given data. The [Teacher] component follows, having absorbed

approximately a third of the variance in both variables. Next is the [(Assignment : Teacher) *

Rater] component, which absorbed approximately a quarter of the variance in both variables.

These three terms alone account for nearly all of the variation among our data. As expected, the

[Rater] and [Teacher * Rater] components did not contribute much to our model because raters

did not significantly differ in their assessments of a given teacher’s assignments.

We now assess the reliability of our results, i.e., to what extent would our study produce

consistent results if we asked another set of teachers for assignments under the same conditions.

It is important that our results be reliable because as our results increase in reliability, they also

increase in statistical power (B. Junker, Personal Conversation, April 12, 2010). Also, without

reliability, our test cannot be considered valid. Essentially, without high reliability, our study

would be worthless. In generalizability theory, to assess the reliability of our results we look at

the G coefficient.

To understand the interpretation of the G coefficient, we first must consider running our

study twice on the same set of teachers. Every aspect of these two studies would be identical,

except for the natural random variation among the assignments and the rater scores between the

two studies. Now suppose that we would like to predict the average score for each teacher’s

assignments in one study by assessing the average score for each teacher’s assignments in the

other study. This is analogous to fitting a regression where the score for each teacher’s

assignments in one study is the explanatory variable, and the score for each teacher’s

assignments in the other study is the response variable (B. Junker, Personal Conversation, March

10, 2010). To measure the percentage of variance explained by this regression, we would

calculate the R² value. Although this value is important, for our particular study we are more

concerned with reliability and reproducibility, i.e., the G coefficient. It just so happens that the G

coefficient is the square root of the R² of such a regression. Table 6 summarizes the relationship

between these two values:

Table 6: The Relationship Between the G Coefficient and R² Values

| G Coefficient Value | R² Value | Typical Interpretation |
|---|---|---|
| .950 | .903 | Excellent |
| .900 | .810 | Good |
| .800 | .640 | Moderate |
| .700 | .490 | Minimal |
| .500 | .250 | Inadequate |

We desire our reliability to be as high as possible, so we would like our G

coefficient to be as high as possible. As a rule of thumb, we consider a G coefficient of .800 or

greater to be sufficient to deem a study reliable.
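For reference, the standard generalizability theory expressions for a design with assignments nested within teachers and crossed with raters can be written as below. The notation is ours rather than the source's: the subscripted σ² terms are the variance components of Table 4 and Table 5, n'_a and n'_r are the numbers of assignments and raters assumed in a D study, σ²_δ is the relative error variance, and σ²_Δ is the absolute error variance.

```latex
\begin{align*}
\sigma^2_{\delta} &= \frac{\sigma^2_{a:t}}{n'_a} + \frac{\sigma^2_{tr}}{n'_r}
                     + \frac{\sigma^2_{(a:t)r}}{n'_a\, n'_r},
& G &= \frac{\sigma^2_t}{\sigma^2_t + \sigma^2_{\delta}}, \\
\sigma^2_{\Delta} &= \sigma^2_{\delta} + \frac{\sigma^2_r}{n'_r},
& \Phi &= \frac{\sigma^2_t}{\sigma^2_t + \sigma^2_{\Delta}}.
\end{align*}
```

As a rough check using the rounded cognitive demand components in Table 4 with four assignments and two raters, σ²_δ ≈ 0.178/4 + 0.004/2 + 0.077/8 ≈ 0.056, which gives G ≈ 0.139/(0.139 + 0.056) ≈ 0.71.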

Imputation

In an effort to not lose valuable information because our dataset contains missing

observations, we choose to replace the missingness within the grading criteria variable with

artificial values based on a logical analysis. This replacement process is called imputation. For

our study, because we are not sure whether our missing observations are MCAR, MNAR, or

MAR, we must be careful when doing so. Our goal is to impute in such a way that we keep the

reliability of our study high. We also want our results to not depend on the values we impute. If

our results change each time we impute missing values, our study would be unstable. Therefore,

we would also like to keep the stability of our study high.

There are many ways to impute data. Each imputation method comes with both

advantages and disadvantages which arise from the various assumptions that must be made for

an imputation method to be accurate. Because of our uncertainty of the nature of the missing

values within our dataset, we chose to impute values using four different processes as described

by List 1 and Table 7:

List 1: Different Processes of Imputation

Type I

- Calculate an overall mean µo from all complete observations

- Calculate an overall variance σo² from all complete observations

- Impute missing values using a random draw from N(µo, σo²)

Type II

- Calculate a teacher mean µt for each teacher with missing values

- Calculate a teacher variance σt² for each teacher with missing values

- Impute missing values using a random draw from each teacher’s N(µt, σt²)

Type III

- Calculate a teacher mean µt for each teacher with missing values

- Calculate a common variance σcom² as follows:

- Impute missing values using a random draw from each teacher’s N(µt, σcom²)

Type IV

- Calculate a teacher mean µt for each teacher with missing values

- Calculate a teacher variance σt² for each teacher with missing values

- Impute missing values using a random draw from each teacher’s N(µt, σt²)

- If σt² = 0, impute missing values using a random draw from each teacher’s N(µt, σcom²)

Table 7: Imputation Assumptions*

| Imputation Method | Average Quality Varies From Teacher To Teacher | Average Range Of Quality Varies From Teacher To Teacher |
|---|---|---|
| Type I | No | No |
| Type II | Yes | Yes |
| Type III | Yes | No |
| Type IV | Yes | No |

*Note: Each of the above imputation methods also relies upon the assumption that the difference

between raters is negligible, as we have seen in previous analyses.
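The Type IV procedure in List 1 can be sketched in a few lines. This is a hypothetical illustration, not the authors' implementation: the function name is ours, the common variance is passed in by the caller because its formula is not reproduced here, a teacher with a single observed score is treated as having zero variance, and imputed values are left unrounded since the source does not say whether draws were rounded to the 1-4 scale.

```python
import math
import random
import statistics

def impute_type_iv(scores, common_var, rng):
    """Fill None entries in one teacher's scores with draws from N(mean, var)."""
    observed = [s for s in scores if s is not None]
    if not observed:
        raise ValueError("need at least one observed score per teacher")
    mu_t = statistics.mean(observed)
    # Sample variance needs two observations; assume zero variance otherwise.
    var_t = statistics.variance(observed) if len(observed) > 1 else 0.0
    if var_t == 0:
        var_t = common_var  # Type IV fallback to the common variance
    sigma = math.sqrt(var_t)
    return [rng.gauss(mu_t, sigma) if s is None else s for s in scores]

rng = random.Random(2010)  # fixed seed so a given imputation is repeatable
print(impute_type_iv([2, None, 1, 2], common_var=0.25, rng=rng))
print(impute_type_iv([2, 2, None, 2], common_var=0.25, rng=rng))
```

Dropping the fallback branch gives the Type II procedure, and always using `common_var` gives Type III.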

Within each of the four types of imputation, we constructed five separate datasets for a

total of twenty imputed grading criteria datasets. We then compared the G coefficients of each of

these datasets to assess the reliability and stability of each imputation procedure. Our findings

are summarized within Table 8:

Table 8: Imputation Results

| Imputation Method | Imputation #1 G Coef | Imputation #2 G Coef | Imputation #3 G Coef | Imputation #4 G Coef | Imputation #5 G Coef | Reliability | Stability |
|---|---|---|---|---|---|---|---|
| Type I | 0.669 | 0.681 | 0.687 | 0.664 | 0.676 | Min - Mod | No |
| Type II | 0.695 | 0.689 | 0.698 | 0.683 | 0.691 | Min - Mod | Yes |
| Type III | 0.696 | 0.699 | 0.698 | 0.696 | 0.697 | Min - Mod | Yes |
| Type IV | 0.694 | 0.690 | 0.695 | 0.668 | 0.693 | Min - Mod | No |

As mentioned before, we would like both reliable and stable results. From the analysis

above, we see that an imputation using method Type III gives us both moderately reliable and

stable results. We also note that the highest G coefficient was within the second imputed Type III

dataset. Therefore, we choose to use this imputed dataset to account for the missingness within

the grading criteria variable, and use this dataset for the remainder of our analysis.

Results

Having dealt with the missing observations, we can now analyze the D studies for both

the cognitive demand and grading criteria variables. Tabled versions of the D study G

coefficients for the cognitive demand and grading criteria variables are provided in Appendix B.

To better visualize the D Study output, we present graphical representations of estimated G

coefficient and associated relative error values. We also present graphical representations from

the D Study output of estimated Phi coefficient and associated absolute error values. The

corresponding graphs for the cognitive demand variable and the chosen imputed dataset for the

grading criteria variable¹ are presented in Figure 3 and Figure 4:

Figure 3: Graphical Representation of D Study for Cognitive Demand Variable

¹ The graphs for each imputed dataset are extremely similar, and thus are omitted from the body of this paper;

however, for comparison, the graphs of Type I dataset 2 (the most unstable imputation method and most

unreliable imputed dataset) are located within Appendix C. The interpretations are consistent with those of the graphs of

both the cognitive demand variable and the chosen imputed dataset for the grading criteria variable, and thus are also

omitted from the body of this paper.

Figure 4: Graphical Representation of D Study for Grading Criteria Variable

It is important to note that if we increase the number of measurements in each factor, the

error contributed by that factor in the study decreases. For example, if we increase the number of

raters who grade each assignment, we assume that the error or disagreement for an assignment’s

grade will decrease. Such a decrease in error is greatly desirable; however, having more raters

means we must spend more money, and one of our primary goals is to have a cost efficient

design. Likewise, we would like to maximize our G coefficient value, which is inversely related

to the error. Therefore, as we minimize our error, we maximize our G coefficient.

To balance maximizing our G coefficient, accuracy, and efficiency against minimizing

cost and error, we assess the D study. Within the graphs of Figure 3 and Figure 4,

the different lines represent a different number of raters, and the x-axis represents different

numbers of assignments. For the graphs on the left, the y-axis represents the reliability of our

estimate (G or Phi coefficient values). For the graphs on the right, the y-axis represents the

associated error (relative or absolute). Notice that as we increase either the number of raters or

assignments, the reliability of our estimate increases and our error decreases, as we would

expect.

Within the initial dataset comprised of measurements taken with two raters and four

assignments per teacher, the G coefficient values for the cognitive demand and grading criteria

variables are .713 and .699, respectively. We would like to increase these values to at least .800.

Notice that, while holding the number of assignments constant, the increase in the G coefficient

is very minimal after increasing the number of raters beyond two. This is again probably due to

the high agreement rate between raters. Therefore, it is probably not worth the cost to have any

more than two raters for future studies. Furthermore, notice that if we choose to have two raters,

to achieve a G coefficient of at least .800 we must increase the number of assignments collected

per teacher to seven. This will yield desirable G coefficients of .809 and .802 for the cognitive

demand and grading criteria variables, respectively.
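The D study reasoning above can be approximated from the rounded variance components in Table 4 and Table 5. The sketch below is not the SAS program used in the study; the function and key names are ours, and it assumes the standard relative and absolute error formulas for a design with assignments nested in teachers and crossed with raters. Because the inputs are rounded to three decimals, the output matches the reported coefficients only approximately.

```python
def d_study(var, n_a, n_r):
    """Return (G, relative error, Phi, absolute error) for an (a:t) x r design."""
    rel_err = var["a:t"] / n_a + var["t*r"] / n_r + var["(a:t)*r"] / (n_a * n_r)
    abs_err = rel_err + var["r"] / n_r
    g = var["t"] / (var["t"] + rel_err)
    phi = var["t"] / (var["t"] + abs_err)
    return g, rel_err, phi, abs_err

def min_assignments(var, n_r, target=0.800, max_a=20):
    """Smallest number of assignments per teacher whose G reaches the target."""
    for n_a in range(1, max_a + 1):
        if d_study(var, n_a, n_r)[0] >= target:
            return n_a
    return None

# Rounded variance components copied from Table 4 and Table 5.
cognitive = {"t": 0.139, "r": 0.001, "a:t": 0.178, "t*r": 0.004, "(a:t)*r": 0.077}
grading = {"t": 0.109, "r": 0.000, "a:t": 0.138, "t*r": 0.000, "(a:t)*r": 0.092}

for name, var in (("cognitive demand", cognitive), ("grading criteria", grading)):
    g = d_study(var, n_a=4, n_r=2)[0]
    print(f"{name}: G(4 assignments, 2 raters) = {g:.3f}; "
          f"assignments needed with 2 raters = {min_assignments(var, 2)}")
```

Under these assumptions, both variables first reach a G coefficient of .800 with two raters at seven assignments per teacher, in line with the recommendation above.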

Conclusion

It is important that all students receive a high quality of education. One way to assure that

students are receiving a good education is to make sure that they are actively synthesizing

material. Because there appears to be an association between the quality of task and a student’s

writing, it is beneficial for the student if teachers not only provide assignments that require

students to use their minds in complex ways, but also grade the assignments beyond simple

mechanics. To analyze the quality of various teachers’ assignments, we require the collection of

task examples and their grading criteria. We also need raters to assess the quality of each

assignment. Because it is impractical to collect many assignments and to have many raters, we

need to find the minimum number of each that maximizes the reliability and stability of our

results. Using generalizability theory, we found that to achieve a G coefficient of at least .800 we

must collect approximately seven assignments from each teacher and have two raters assess each

assignment.

Appendix A: Scoring Rubrics for Cognitive Demand and Grading Expectations of Assignments

Cognitive Demand of the Task

4 Task guides students to engage with the underlying meanings/nuances of a text

Students interpret/analyze a text AND use extensive and detailed evidence

Provides an opportunity to fully develop the students’ thinking

3 Task guides students to engage with some underlying meanings/nuances of a text

Students use limited evidence from the text to support their ideas

Provides some opportunity to develop students’ thinking

2 Task guides students to construct a literal summary of a text

Based on straightforward/surface-level information about the text

Students use little to no evidence from the text

1 Task guides students to recall isolated, straightforward facts of a text

Assessment Criteria for Grading Students

4 At least one of the teacher’s expectations focuses on analyzing the text, AND

At least one of the teacher’s expectations focuses on including evidence or examples

to support a position

3 At least one of the teacher’s expectations focuses on analyzing the text

2 Teacher’s expectations focus on building a basic understanding of the text

1 Teacher’s expectations do not focus on reading comprehension, OR

Teacher’s expectations do not focus on coherent understanding of the text

Appendix B: Extended D Study Tables

Cognitive Demand Variable

# Assignments   # Raters   G Coefficient   Relative Error   Phi Coefficient   Absolute Error
1               1          .350            .259             .349              .259
1               2          .389            .218             .389              .218
1               3          .405            .205             .404              .205
1               4          .413            .198             .413              .198
1               5          .418            .194             .418              .194
2               1          .514            .131             .513              .132
2               2          .558            .110             .558              .110
2               3          .575            .103             .574              .103
2               4          .583            .099             .583              .100
2               5          .588            .097             .588              .097
3               1          .610            .089             .608              .090
3               2          .653            .074             .652              .074
3               3          .668            .069             .667              .069
3               4          .676            .067             .676              .067
3               5          .681            .065             .681              .065
4               1          .673            .068             .671              .068
4               2          .713            .056             .712              .056
4               3          .727            .052             .726              .052
4               4          .735            .050             .734              .050
4               5          .739            .049             .739              .049
5               1          .717            .055             .714              .056
5               2          .755            .045             .753              .046
5               3          .768            .042             .767              .042
5               4          .775            .040             .774              .041
5               5          .779            .039             .779              .040
6               1          .750            .046             .747              .047
6               2          .785            .038             .784              .038
6               3          .798            .035             .797              .035
6               4          .804            .034             .804              .034
6               5          .808            .033             .808              .033
7               1          .775            .040             .772              .041
7               2          .809            .033             .807              .033
7               3          .821            .030             .820              .031
7               4          .827            .029             .826              .029
7               5          .831            .028             .830              .029
8               1          .795            .036             .792              .037
8               2          .827            .029             .826              .029
8               3          .839            .027             .838              .027
8               4          .845            .026             .844              .026
8               5          .848            .025             .847              .025
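
The G Coefficient and Relative Error columns above appear to be linked by the standard generalizability-theory definition, in which the G coefficient is the teacher (universe-score) variance divided by the sum of that variance and the relative error variance. The Python sketch below checks this reading against a few rows of the Cognitive Demand table. It assumes that the Relative Error column reports the relative error variance; the teacher variance is not listed in the table, so it is backed out from the first row rather than taken from our G study output. The function names are introduced here for illustration only.

```python
# Illustrative check of G = var_teacher / (var_teacher + relative_error).
# Assumption: the "Relative Error" column is the relative error variance.

def implied_teacher_variance(g, rel_error):
    """Solve G = v / (v + rel_error) for the teacher variance v."""
    return g * rel_error / (1.0 - g)

def g_coefficient(teacher_var, rel_error):
    """G coefficient for a given teacher variance and relative error variance."""
    return teacher_var / (teacher_var + rel_error)

# (assignments, raters) -> (reported G, reported relative error),
# copied from the Cognitive Demand table.
rows = {
    (1, 1): (.350, .259),
    (2, 2): (.558, .110),
    (7, 2): (.809, .033),
    (8, 5): (.848, .025),
}

# Back out the teacher variance from the single-assignment, single-rater row.
teacher_var = implied_teacher_variance(*rows[(1, 1)])

# Re-predict G for every row from its relative error alone.
predicted = {design: g_coefficient(teacher_var, rel)
             for design, (_, rel) in rows.items()}

for design, (g_reported, _) in rows.items():
    print(design, g_reported, round(predicted[design], 3))
```

For these rows the predicted and reported values agree to within the rounding of the table, which supports reading the Phi Coefficient and Absolute Error columns in the same way, with the absolute error variance in place of the relative error variance.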

Grading Criteria Variable

# Assignments   # Raters   G Coefficient   Relative Error   Phi Coefficient   Absolute Error
1               1          .318            .228             .318              .229
1               2          .367            .184             .367              .184
1               3          .387            .169             .387              .169
1               4          .397            .161             .397              .162
1               5          .404            .157             .404              .157
2               1          .483            .114             .482              .115
2               2          .573            .092             .563              .092
2               3          .558            .084             .557              .085
2               4          .569            .081             .568              .081
2               5          .576            .078             .575              .079
3               1          .583            .076             .582              .077
3               2          .635            .061             .634              .061
3               3          .654            .056             .654              .056
3               4          .664            .054             .664              .054
3               5          .671            .052             .670              .052
4               1          .651            .057             .649              .058
4               2          .699            .046             .697              .046
4               3          .716            .042             .715              .042
4               4          .725            .040             .725              .040
4               5          .731            .039             .730              .039
5               1          .700            .046             .698              .046
5               2          .743            .037             .742              .037
5               3          .759            .034             .758              .034
5               4          .767            .032             .767              .032
5               5          .772            .031             .772              .031
6               1          .737            .038             .734              .039
6               2          .777            .031             .775              .031
6               3          .791            .028             .790              .028
6               4          .798            .027             .798              .027
6               5          .803            .026             .802              .026
7               1          .766            .033             .763              .033
7               2          .802            .026             .801              .026
7               3          .815            .024             .814              .024
7               4          .822            .023             .821              .023
7               5          .826            .022             .825              .023
8               1          .789            .029             .786              .029
8               2          .823            .023             .821              .023
8               3          .835            .021             .833              .021
8               4          .841            .020             .840              .020
8               5          .844            .020             .844              .020

Appendix C: Graphical Representation of D Study for Grading Criteria Variable

References

Bryk, Anthony S., Newmann, Fred M., and Nagaoka, Jenny K. (2001) "Authentic Intellectual

Work and Standardized Tests: Conflict or Coexistence?" The Consortium on Chicago School

Research, 1-48.

Bryk, Anthony S., Lopez, Gudelia, and Newmann, Fred M. (1998) "The Quality of Intellectual

Work in Chicago Schools: A Baseline Report." The Consortium on Chicago School

Research, 1-59.

Cohen, Jacob (1960). A coefficient of agreement for nominal scales, Educational and

Psychological Measurement Vol.20, No.1, pp. 37–46.

Donders, A. Rogier T., Geert J.M.G. Van Der Heijden, Theo Stijnen, and Karel G.M. Moons.

"Review: A Gentle Introduction To Imputation Of Missing Values." Journal of Clinical

Epidemiology (2006): 1087-1091. Print.

Doyle, Walter. (1988) "Work in Mathematics Classes: The Context of Students' Thinking During

Instruction." Educational Psychologist 23.2: 167-180.

Graham, S., and Hebert, M. A. (2010). Writing to read: Evidence for how writing can improve

reading. A Carnegie Corporation Time to Act Report. Washington, DC: Alliance for

Excellent Education.

Matsumura, Lindsay Clare, Garnier, Helen E., Slater, Sharon Cadman and Boston, Melissa D.

(2008) 'Toward Measuring Instructional Interactions “At-Scale”', Educational

Assessment, 13:4, 267-300.

Mushquash, Christopher, and Brian P. O’Connor. "SPSS and SAS Programs for Generalizability

Theory Analyses." Behavior Research Methods (2006): 542-47. Web. 2 Mar. 2010.

<http://brm.psychonomic-journals.org/content/38/3/542.full.pdf>.

O'Connor, Brian P. "G Theory Programs." Web. 02 Mar. 2010.

<https://people.ok.ubc.ca/brioconn/gtheory/gtheory.html>.

Shavelson, Richard J., and Noreen M. Webb. Generalizability Theory: A Primer. Newbury Park,

Calif.: Sage Publications, 1991. Print.