Suppose hypothesis

Transcript of Suppose hypothesis

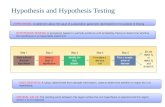

Hypothesis Testing

We will introduce the hypothesis testing framework as a decision problem in the Bayesian framework. Whether we are in the Bayesian framework or the classical framework, there are several definitions that are common to both frameworks.

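Suppose $\Omega = \Omega_H \cup \Omega_A$, where $\Omega_H \cap \Omega_A = \emptyset$. The statement that $\theta \in \Omega_H$ is called the hypothesis and labelled $H$. The statement that $\theta \in \Omega_A$ is called the alternative and is labelled $A$. So

$$H: \theta \in \Omega_H, \qquad A: \theta \in \Omega_A.$$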

A decision problem is called hypothesis testing if the action space is $\mathcal{A} = \{0, 1\}$ and the loss function satisfies

$$L(\theta, 1) > L(\theta, 0) \ \text{ if } \theta \in \Omega_H, \qquad L(\theta, 1) < L(\theta, 0) \ \text{ if } \theta \in \Omega_A.$$

The decision 0 corresponds to the decision that $H$ is true. The decision 1 corresponds to the decision that $A$ is true.

The decision 1 is often described as "rejecting the hypothesis". The decision 0 is often described as "accepting the hypothesis".

There are 2 types of error one can make.

If we reject $H$ but $\theta \in \Omega_H$ ("$H$ is true") this is called a Type I error.

If we accept $H$ but $\theta \in \Omega_A$ ("$H$ is false") this is called a Type II error.

A type I error is sometimes (more descriptively) called a false positive. A type II error is sometimes called a false negative.

A common practice in specifying the loss function is to

(i) set the loss to 0 if one makes a correct decision,

(ii) set the loss to a positive constant if one makes a type I error,

(iii) set the loss to a (possibly different) positive constant if one makes a type II error.

One can without loss of generality set one of these positive constants equal to 1. This gives what is called the 0-1-c loss function:

$$L(\theta, a) = \begin{cases} 0 & \text{if } \theta \in \Omega_H \text{ and } a = 0, \text{ or } \theta \in \Omega_A \text{ and } a = 1, \\ 1 & \text{if } \theta \in \Omega_A \text{ and } a = 0, \\ c & \text{if } \theta \in \Omega_H \text{ and } a = 1, \end{cases}$$

where $c > 0$. If $c = 1$ we call this a 0-1 loss function.
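As a minimal sketch, the 0-1-c loss can be written as a small Python function. The function name and the boolean argument `theta_in_H` (standing for "$\theta \in \Omega_H$") are illustrative choices, not notation from the notes.

```python
def zero_one_c_loss(theta_in_H: bool, action: int, c: float = 1.0) -> float:
    """0-1-c loss: 0 for a correct decision, c for a type I error,
    1 for a type II error. With c = 1 this is the 0-1 loss."""
    if action not in (0, 1):
        raise ValueError("action must be 0 (accept H) or 1 (reject H)")
    if c <= 0:
        raise ValueError("c must be positive")
    if theta_in_H:
        # H is true: rejecting (action 1) is a type I error with loss c.
        return c if action == 1 else 0.0
    # H is false: accepting (action 0) is a type II error with loss 1.
    return 1.0 if action == 0 else 0.0
```

For example, `zero_one_c_loss(True, 1, c=3.0)` is the loss of a type I error when $c = 3$.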

Let's consider the formal Bayes rule when the loss function is the 0-1-c loss function. There are 2 possible posterior risks:

$$r(0 \mid x) = E[L(\Theta, 0) \mid X = x] = P(\Theta \in \Omega_A \mid X = x),$$

$$r(1 \mid x) = E[L(\Theta, 1) \mid X = x] = c\,P(\Theta \in \Omega_H \mid X = x).$$

Then the formal Bayes rule is to choose $a = 1$ (reject $H$) if

$$c\,P(\Theta \in \Omega_H \mid X = x) < P(\Theta \in \Omega_A \mid X = x) = 1 - P(\Theta \in \Omega_H \mid X = x)$$

$$\iff P(\Theta \in \Omega_H \mid X = x) < \frac{1}{1 + c}.$$
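The comparison of the two posterior risks can be sketched in Python as follows. The argument `p_H` stands for the posterior probability $P(\Theta \in \Omega_H \mid X = x)$, which is assumed to have been computed elsewhere; the function names are hypothetical.

```python
def posterior_risks(p_H: float, c: float):
    """Return (r(0|x), r(1|x)) under the 0-1-c loss,
    where p_H = P(Theta in Omega_H | X = x)."""
    if not 0.0 <= p_H <= 1.0:
        raise ValueError("p_H must be a probability")
    r0 = 1.0 - p_H   # accepting H is wrong when Theta is in Omega_A
    r1 = c * p_H     # rejecting H is wrong when Theta is in Omega_H
    return r0, r1


def formal_bayes_action(p_H: float, c: float) -> int:
    """Choose the action with the smaller posterior risk; reject H
    (return 1) only when r(1|x) < r(0|x)."""
    r0, r1 = posterior_risks(p_H, c)
    return 1 if r1 < r0 else 0
```

With $c = 3$ the rejection threshold is $1/(1+c) = 0.25$, so `formal_bayes_action(0.2, 3.0)` rejects and `formal_bayes_action(0.3, 3.0)` accepts.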

Example. Suppose $P_\theta$ says that $X = (X_1, \ldots, X_n)$ are i.i.d. $N(\mu, \sigma^2)$, $\theta = (\mu, \sigma^2) \in \Omega = \mathbb{R} \times (0, \infty)$. Suppose

$$\Omega_H = \{(\mu, \sigma^2) \in \Omega : \mu \ge \mu_0\}$$

for some fixed $\mu_0 \in \mathbb{R}$. Let the loss function be the 0-1-c loss function. In this example we will derive the one-sided t-test that is usually introduced in an introductory statistics course, but in the Bayesian decision theoretic framework.

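Some distribution preliminaries:

① The t distribution with $p$ degrees of freedom ($p$ is a positive integer), denoted by $t_p$, has pdf

$$h(u \mid p) = \frac{\Gamma\!\left(\frac{p+1}{2}\right)}{\Gamma\!\left(\frac{p}{2}\right)\sqrt{p\pi}} \left(1 + \frac{u^2}{p}\right)^{-(p+1)/2}.$$

② Location-scale family: If $U$ has pdf $h(u)$ and $a$ and $b$ are constants with $a > 0$, then the pdf of $aU + b$ is

$$\frac{1}{a}\, h\!\left(\frac{u - b}{a}\right).$$

③ Inverse Gamma distribution: The Inverse Gamma distribution with parameters $\alpha > 0$ and $\beta > 0$ has pdf

$$g(u \mid \alpha, \beta) = \frac{\beta^\alpha}{\Gamma(\alpha)}\, u^{-(\alpha+1)} e^{-\beta/u}\, I_{(0,\infty)}(u).$$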
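The three preliminaries can be written out as a short Python sketch using only the standard library. The function names are illustrative; the formulas are the standard densities of the $t_p$ and Inverse Gamma distributions.

```python
import math


def t_pdf(u: float, p: float) -> float:
    """Density of the t distribution with p degrees of freedom."""
    log_const = (math.lgamma((p + 1) / 2) - math.lgamma(p / 2)
                 - 0.5 * math.log(p * math.pi))
    return math.exp(log_const - (p + 1) / 2 * math.log1p(u * u / p))


def location_scale_pdf(pdf, a: float, b: float):
    """Given the pdf of U, return the pdf of a*U + b (requires a > 0)."""
    if a <= 0:
        raise ValueError("scale a must be positive")
    return lambda u: pdf((u - b) / a) / a


def inverse_gamma_pdf(u: float, alpha: float, beta: float) -> float:
    """Density of the Inverse Gamma(alpha, beta) distribution."""
    if u <= 0:
        return 0.0  # indicator I_(0, inf)(u)
    log_density = (alpha * math.log(beta) - math.lgamma(alpha)
                   - (alpha + 1) * math.log(u) - beta / u)
    return math.exp(log_density)
```

As a quick sanity check, $t_1$ is the Cauchy distribution, so `t_pdf(0.0, 1)` should equal $1/\pi$.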

Going back to our problem, let $M$ and $\Sigma^2$ denote the parameters considered as random variables. We want to compute the posterior density of $(M, \Sigma^2)$ given $X = x$. We will use the prior

$$\pi(\theta) = \frac{1}{\sigma^2}\, I_{(0,\infty)}(\sigma^2).$$

This is what is called an improper prior in that it integrates to $\infty$. This can be viewed approximately as taking an Inverse Gamma prior on $\Sigma^2$ with $\alpha = \beta$ and $\alpha$ very small, and taking the prior density on $M$ to be Normal with a very large variance. Even though the prior is improper, the posterior density of $(M, \Sigma^2)$ given $X = x$ will be proper.

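The posterior is

$$\pi(\mu, \sigma^2 \mid x) \propto L(\theta)\,\pi(\theta) = (2\pi\sigma^2)^{-n/2} \exp\!\left\{-\frac{1}{2\sigma^2}\sum_{i=1}^n (x_i - \mu)^2\right\} \frac{1}{\sigma^2}\, I_{(0,\infty)}(\sigma^2)$$

$$\propto (\sigma^2)^{-(n/2 + 1)} \exp\!\left\{-\frac{1}{2\sigma^2}\sum_{i=1}^n (x_i - \mu)^2\right\} I_{(0,\infty)}(\sigma^2),$$

where $L(\theta)$ here denotes the likelihood.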

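We want to integrate out $\sigma^2$ to get the marginal posterior of $M$ given $X = x$. For any fixed $\mu$, $\pi(\mu, \sigma^2 \mid x)$, as a function of $\sigma^2$, is proportional to an Inverse Gamma density with parameters $\frac{n}{2}$ and $\frac{1}{2}\sum_{i=1}^n (x_i - \mu)^2$.

Then doing the integral one obtains that the marginal posterior density of $M$ given $X = x$ satisfies

$$\pi(\mu \mid x) \propto \frac{\Gamma\!\left(\frac{n}{2}\right)}{\left(\frac{1}{2}\sum_{i=1}^n (x_i - \mu)^2\right)^{n/2}} \propto \left(\sum_{i=1}^n (x_i - \mu)^2\right)^{-n/2}.$$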

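This is proportional to a location-scale transformation of a t density. To see this we write

$$\pi(\mu \mid x) \propto \left(\sum_{i=1}^n (x_i - \bar{x})^2 + n(\mu - \bar{x})^2\right)^{-n/2}, \quad \text{where } \bar{x} = \frac{1}{n}\sum_{i=1}^n x_i,$$

$$\propto \left(1 + \frac{n(\mu - \bar{x})^2}{\sum_{i=1}^n (x_i - \bar{x})^2}\right)^{-n/2} = \left(1 + \frac{1}{n-1}\left(\frac{\mu - \bar{x}}{s/\sqrt{n}}\right)^2\right)^{-n/2},$$

where $s^2 = \frac{1}{n-1}\sum_{i=1}^n (x_i - \bar{x})^2$.

We can recognize this as a location-scale transformation of a t density with $n - 1$ degrees of freedom, with location $\bar{x}$ and scale $s/\sqrt{n}$. Thus, the marginal posterior distribution of $M$ given $X = x$ is the distribution of $\frac{s}{\sqrt{n}}\,U + \bar{x}$, where $U \sim t_{n-1}$.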
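The algebra behind this step can be checked numerically. The sketch below compares the unnormalized marginal posterior $\left(\sum_i (x_i - \mu)^2\right)^{-n/2}$ with the kernel of the location-scale $t_{n-1}$ density at several values of $\mu$; if the two are proportional, their ratio does not depend on $\mu$. The data values and function names are made up for illustration.

```python
import math
from statistics import mean, stdev


def unnormalized_posterior(mu: float, x) -> float:
    """(sum_i (x_i - mu)^2)^(-n/2), the marginal posterior up to a constant."""
    n = len(x)
    return sum((xi - mu) ** 2 for xi in x) ** (-n / 2)


def t_kernel(mu: float, x) -> float:
    """Kernel of the t_{n-1} density at u = (mu - xbar) / (s / sqrt(n))."""
    n = len(x)
    xbar = mean(x)
    scale = stdev(x) / math.sqrt(n)  # stdev uses the n - 1 denominator
    u = (mu - xbar) / scale
    return (1.0 + u * u / (n - 1)) ** (-n / 2)


x = [4.1, 5.3, 3.8, 6.0, 5.1]  # made-up sample
ratios = [unnormalized_posterior(m, x) / t_kernel(m, x)
          for m in (3.0, 4.5, 5.0, 7.0)]
# Each ratio should equal ((n - 1) * s^2)^(-n/2), whatever mu is.
```

The constant ratio is the factor $\left((n-1)s^2\right)^{-n/2}$ that is absorbed into the proportionality sign.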

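Then we get

$$P(\Theta \in \Omega_H \mid X = x) = P(M \ge \mu_0 \mid X = x) = P\!\left(\frac{s}{\sqrt{n}}\,U + \bar{x} \ge \mu_0\right) = P\!\left(U \ge \frac{\mu_0 - \bar{x}}{s/\sqrt{n}}\right),$$

where $U \sim t_{n-1}$.

The formal Bayes rule is to reject $H$ if $P(\Theta \in \Omega_H \mid X = x) < \frac{1}{1+c}$. This is

$$P\!\left(U \ge \frac{\mu_0 - \bar{x}}{s/\sqrt{n}}\right) < \frac{1}{1+c} \iff \frac{\mu_0 - \bar{x}}{s/\sqrt{n}} > t_{\frac{1}{1+c},\,n-1} \iff \frac{\bar{x} - \mu_0}{s/\sqrt{n}} < -t_{\frac{1}{1+c},\,n-1},$$

where $t_{\alpha,\,n-1}$ denotes the value such that the area to the right of this value is $\alpha$ under the $t_{n-1}$ density.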

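The formal Bayes rule is

$$\delta(x) = \begin{cases} 1 & \text{if } \dfrac{\bar{x} - \mu_0}{s/\sqrt{n}} < -t_{\frac{1}{1+c},\,n-1}, \\[2ex] 0 & \text{if } \dfrac{\bar{x} - \mu_0}{s/\sqrt{n}} \ge -t_{\frac{1}{1+c},\,n-1}. \end{cases}$$

This is the usual one-sided t-test that one sees in an introductory statistics course.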
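A minimal Python sketch of this rule follows. Rather than looking up the quantile $t_{\frac{1}{1+c},\,n-1}$, it computes the posterior probability $P(U \ge (\mu_0 - \bar{x})/(s/\sqrt{n}))$ directly and compares it with $1/(1+c)$, which is the equivalent form of the rule. The tail probability is obtained by Simpson's rule, a numerical shortcut chosen here to avoid external libraries; all function names and the data are illustrative.

```python
import math
from statistics import mean, stdev


def t_pdf(u: float, p: float) -> float:
    """Density of the t distribution with p degrees of freedom."""
    log_const = (math.lgamma((p + 1) / 2) - math.lgamma(p / 2)
                 - 0.5 * math.log(p * math.pi))
    return math.exp(log_const - (p + 1) / 2 * math.log1p(u * u / p))


def t_upper_tail(t0: float, p: float, steps: int = 2000) -> float:
    """P(U >= t0) for U ~ t_p, using Simpson's rule on [0, |t0|]
    and the symmetry of the t density (steps must be even)."""
    a = abs(t0)
    h = a / steps
    total = t_pdf(0.0, p) + t_pdf(a, p)
    for k in range(1, steps):
        total += (4 if k % 2 else 2) * t_pdf(k * h, p)
    central = total * h / 3  # approximates P(0 <= U <= |t0|)
    return 0.5 - central if t0 >= 0 else 0.5 + central


def bayes_t_test(x, mu0: float, c: float):
    """Return (action, p_H): action is 1 (reject H: mu >= mu0) when
    p_H = P(M >= mu0 | x) is below 1 / (1 + c), and 0 otherwise."""
    n = len(x)
    t_stat = (mean(x) - mu0) / (stdev(x) / math.sqrt(n))
    p_H = t_upper_tail(-t_stat, n - 1)  # -t_stat = (mu0 - xbar)/(s/sqrt(n))
    return (1 if p_H < 1.0 / (1.0 + c) else 0), p_H
```

For instance, with $c = 19$ the threshold is $1/(1+c) = 0.05$, so `bayes_t_test(x, mu0, 19.0)` rejects $H$ exactly when the posterior probability of $\mu \ge \mu_0$ falls below 5%.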

A couple of concluding remarks:

① The statistic $T(X) = \dfrac{\bar{X} - \mu_0}{S/\sqrt{n}}$ is, in the classical framework, usually called a t test statistic. To see this note that given $\Theta = (\mu_0, \sigma^2)$ for any $\sigma^2 > 0$, the distribution of $T(X)$ is $t_{n-1}$. For such $\theta$,

$$\frac{\bar{X} - \mu_0}{\sigma/\sqrt{n}} \sim N(0, 1) \quad \text{and} \quad \frac{(n-1)S^2}{\sigma^2} \sim \chi^2_{n-1},$$

and these 2 random variables are independent. Then

$$\frac{\dfrac{\bar{X} - \mu_0}{\sigma/\sqrt{n}}}{\sqrt{\dfrac{(n-1)S^2}{\sigma^2} \Big/ (n-1)}} = T(X) \sim t_{n-1}.$$

In the classical framework, this t test is usually derived using the likelihood ratio rule as a likelihood ratio test, which we shall be discussing in a couple of weeks.

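② In this example a conjugate family of priors is the Inverted Gamma / Normal family:

$$\pi(\mu, \sigma^2) = \frac{\beta^\alpha}{\Gamma(\alpha)}\, (\sigma^2)^{-(\alpha+1)} e^{-\beta/\sigma^2}\, \frac{1}{\sqrt{2\pi}\,\sigma}\, e^{-\frac{1}{2\sigma^2}(\mu - \mu')^2}\, I_{(0,\infty)}(\sigma^2)$$

for parameters $\alpha > 0$, $\beta > 0$, $\mu' \in \mathbb{R}$. If you go ahead and compute the posterior marginal density of $M$ given $X = x$, you would find that it is proportional to

$$\left(1 + \frac{1}{p_1}\left(\frac{\mu - a}{b}\right)^2\right)^{-(p_2+1)/2},$$

but $a$ and $b$ will depend on $\alpha$, $\beta$, $\mu'$, and $p_1$ will not in general equal $p_2$, and $p_1$ and $p_2$ will not in general be integers.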

In hypothesis testing we will go back to using the notation $\phi$ to denote the decision rule, and we will also be referring to $\phi$ as the test function. So

$$\phi(x) = \begin{cases} 1 & \text{if we reject } H \ (a = 1), \\ 0 & \text{if we accept } H \ (a = 0). \end{cases}$$

Note on how tests are usually described: usually we describe a test by the specification of a test statistic, say $T(X)$, and a critical (or rejection) region, say $C$, such that the test rejects $H$ if $T(x) \in C$. That is,

$$\phi(x) = \begin{cases} 1 & \text{if } T(x) \in C, \\ 0 & \text{if } T(x) \notin C. \end{cases}$$
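The "test statistic plus critical region" description translates directly into code. The sketch below builds a test function from any statistic and any membership check for the critical region; `make_test`, `t_statistic`, and the data are hypothetical names and values, and the cutoff 2.132 is the approximate table value of $t_{0.05,\,4}$.

```python
import math
from statistics import mean, stdev


def make_test(statistic, in_critical_region):
    """Return a test function phi with phi(x) = 1 if T(x) is in C, else 0."""
    def phi(x) -> int:
        return 1 if in_critical_region(statistic(x)) else 0
    return phi


def t_statistic(mu0: float):
    """One-sample t statistic T(x) = (xbar - mu0) / (s / sqrt(n))."""
    def T(x) -> float:
        return (mean(x) - mu0) / (stdev(x) / math.sqrt(len(x)))
    return T


# One-sided t-test of H: mu >= 6 with n = 5 observations; the critical
# region C = (-inf, -2.132) uses the approximate table value t_{0.05, 4}.
phi = make_test(t_statistic(6.0), lambda t: t < -2.132)
decision = phi([4.1, 5.3, 3.8, 6.0, 5.1])
```

Separating the statistic from the region makes it easy to reuse the same statistic with different critical regions.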

Classical Framework for Hypothesis Testing

The classical framework is based on the risk function.

Related to the risk function is what is called the power function of a test $\phi$. This is denoted by $\beta_\phi(\theta)$ and given by

$$\beta_\phi(\theta) = E_\theta[\phi(X)] = P(\phi(X) = 1 \mid \Theta = \theta).$$

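Under the 0-1-c loss function, the risk function of a given test $\phi$ is

$$R(\theta, \phi) = E_\theta[L(\theta, \phi(X))] = \begin{cases} c\,P(\phi(X) = 1 \mid \Theta = \theta) & \text{if } \theta \in \Omega_H, \\ P(\phi(X) = 0 \mid \Theta = \theta) & \text{if } \theta \in \Omega_A, \end{cases}$$

$$= \begin{cases} c\,\beta_\phi(\theta) & \text{if } \theta \in \Omega_H, \\ 1 - \beta_\phi(\theta) & \text{if } \theta \in \Omega_A. \end{cases}$$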
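To make the relation between power and risk concrete, here is a sketch for a simplified case that is not the t-test of the notes: the variance $\sigma^2$ is assumed known, $H: \mu \ge \mu_0$, and the test rejects when $\sqrt{n}(\bar{x} - \mu_0)/\sigma < -z$. In that setting the power function has the closed form $\Phi(-z - \sqrt{n}(\mu - \mu_0)/\sigma)$. The function names and numeric values are illustrative.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal, used for Phi and its inverse


def power_z_test(mu: float, mu0: float, sigma: float, n: int,
                 z_crit: float) -> float:
    """Power beta(mu) = P(reject H | mu) for the known-sigma one-sided test
    that rejects H: mu >= mu0 when sqrt(n) * (xbar - mu0) / sigma < -z_crit."""
    return STD_NORMAL.cdf(-z_crit - math.sqrt(n) * (mu - mu0) / sigma)


def risk_01c(mu: float, mu0: float, sigma: float, n: int,
             z_crit: float, c: float) -> float:
    """Risk under the 0-1-c loss: c * beta(mu) on Omega_H (mu >= mu0),
    and 1 - beta(mu) on Omega_A (mu < mu0)."""
    beta = power_z_test(mu, mu0, sigma, n, z_crit)
    return c * beta if mu >= mu0 else 1.0 - beta


z = STD_NORMAL.inv_cdf(0.95)  # cutoff giving type I error 0.05 at mu = mu0
risk_at_boundary = risk_01c(5.0, 5.0, 2.0, 16, z, c=3.0)
```

At the boundary $\mu = \mu_0$ the power equals 0.05, so the risk there is $c \times 0.05$.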

In the classical framework, the most common approach to defining what is meant by an optimal test is to constrain the power function (or the risk function) to be below a given threshold on $\Omega_H$, and then among all tests that satisfy this constraint an optimal test will be one that has uniformly greatest power (i.e., lowest risk) on $\Omega_A$, if one exists.

Def. The size of a test $\phi$ is

$$\sup_{\theta \in \Omega_H} \beta_\phi(\theta)$$

(i.e., the size is the supremum of type I error probabilities over $\theta \in \Omega_H$).

Def. A test is said to be level $\alpha$ if its size is less than or equal to $\alpha$.
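The two definitions can be illustrated with a short sketch that approximates the size by maximizing a power function over a finite grid of parameter values in $\Omega_H$. A grid only approximates the supremum, and the power function used here is an assumed example (a one-sided test of $H: \mu \ge \mu_0$ with known $\sigma$), not the t-test derived earlier; all names and numbers are hypothetical.

```python
import math
from statistics import NormalDist


def approximate_size(power, thetas_in_H) -> float:
    """Approximate sup over Omega_H of the power function by a grid maximum."""
    return max(power(theta) for theta in thetas_in_H)


def is_level(size: float, alpha: float) -> bool:
    """A test is level alpha if its size is at most alpha."""
    return size <= alpha


# Assumed example: known sigma, reject H: mu >= mu0 when
# sqrt(n) * (xbar - mu0) / sigma < -z_crit.
mu0, sigma, n = 5.0, 2.0, 16
z_crit = NormalDist().inv_cdf(0.95)


def power(mu: float) -> float:
    return NormalDist().cdf(-z_crit - math.sqrt(n) * (mu - mu0) / sigma)


grid = [mu0 + 0.01 * k for k in range(1001)]  # points of Omega_H in [5, 15]
size = approximate_size(power, grid)
```

Because this power function decreases in $\mu$, the maximum over $\Omega_H$ is attained at the boundary point $\mu_0$, which is why the grid starts there.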