Seed Working Group, Swiss National Grid Association (SwiNG)


May 20, 2008

CCGrid 2008, Lyon, France

Initializing a National Grid Infrastructure: Lessons Learned from the Swiss National Grid Association Seed Project

Seed Working Group
Swiss National Grid Association (SwiNG)


Members of the Seed Working Group

Nabil Abdennadher, Haute École Spécialisée de Suisse Occidentale (HES-SO)

Peter Engel, University of Bern (UniBE)

Derek Feichtinger, Paul Scherrer Institute (PSI)

Dean Flanders, Friedrich Miescher Institute (FMI)

Placi Flury, SWITCH

Sigve Haug, University of Bern (UniBE)

Pascal Jermini, École Polytechnique Fédérale de Lausanne (EPFL)

Sergio Maffioletti, Swiss National Supercomputing Centre (CSCS)

Cesare Pautasso, University of Lugano (USI)

Heinz Stockinger, Swiss Institute of Bioinformatics (SIB)

Wibke Sudholt, University of Zurich (UZH) – Chair

Michela Thiemard, École Polytechnique Fédérale de Lausanne (EPFL)

Nadya Williams, University of Zurich (UZH)

Christoph Witzig, SWITCH


Outline

Background
• Grid projects
• SwiNG

Seed Project
• Introduction
• Middleware
• Applications

Conclusions and outlook


Grid Projects and Infrastructures

International Grid projects
• EGEE (Enabling Grids for E-sciencE): 91 partners, PRAGMA (Pacific Rim Applications and Grid Middleware Assembly): 29 partners, etc.

National Grid projects
• Open Science Grid (USA), ChinaGrid, NAREGI (Japan), e-Science Programme (UK), D-Grid (Germany), Austrian Grid, etc.

Domain-specific Grid projects
• LCG, Chemomentum, GRIDCHEM, Swiss Bio Grid, EMBRACE, DEGREE, etc.

Local Grid projects
• XtremWeb-CH, JOpera, etc.

Homogeneous Grid middleware
• gLite, UNICORE, Globus, ARC, etc.


Situation in Europe

Funding for Grid projects by the EU
• Within FP5 / FP6 / FP7
• Collaboration projects

National Grid Initiatives (NGIs)
• In most European countries
• Some with considerable funding

European Grid Initiative (EGI)
• http://web.eu-egi.eu/
• Design study under way
• Following the model of the National Research Networks (NRENs)


National Grid Initiative (NGI)

Must
• Have a mandate to represent researchers and institutions in Grid-related matters towards
— International bodies (e.g., EU)
— Funding agencies
— Federal government (SBF, BBT)
• Be the only NGI in its country

May
• Involve only coordination
• Develop and operate national Grid infrastructure(s)
• Be a legal entity on its own
• Be limited to academic or research institutions
• Also involve participation by the industry

“Coordinating body” for Grid activities within a nation


Grid in Switzerland before SwiNG

Various, somewhat isolated efforts in the Swiss higher education sector
• Some projects within individual research groups
• Some projects between a limited number of Swiss partners
• Participation in EU-sponsored projects by some institutions
• Participation in international projects by some institutions

No national coordination
No dedicated funding
No homogeneous Grid middleware or infrastructure


Swiss National Grid Association (SwiNG)

Mission
• Ensure competitiveness of Swiss science, education and industry by creating value through resource sharing.
• Establish and coordinate a sustainable Swiss Grid infrastructure, which is a dynamic network of resources across different locations and administrative domains.
• Provide a platform for interdisciplinary collaboration to leverage the Swiss Grid activities, supporting end-users, researchers, industry, education centres, and resource providers.
• Represent the interests of the national Grid community towards other national and international bodies.

History
• Initialized in September 2006
• Founded as association in May 2007
• Operational since January 2008


Organisational Structure


Institutional Members

ETH domain
• École Polytechnique Fédérale de Lausanne (EPFL)
• Eidgenössische Technische Hochschule Zürich (ETHZ)
• ETH Research Institutions (EAWAG, EMPA, PSI, WSL)
• Swiss National Supercomputing Centre (CSCS)

Cantonal universities
• Universität Basel (UniBas)
• Universität Bern (UniBE)
• Université de Genève (UniGE)
• Université de Neuchâtel (UniNE)
• Université de Lausanne (UNIL)
• Università della Svizzera Italiana (USI)
• Universität Zürich (UZH)

Universities of Applied Sciences
• Berner Fachhochschule (BFH)
• Fachhochschule Nordwestschweiz (FHNW)
• Haute École Spécialisée de Suisse Occidentale (HES-SO)
• Hochschule Luzern (HSLU)
• Scuola Universitaria Professionale della Svizzera Italiana (SUPSI)

Specialized institutions
• Friedrich Miescher Institute (FMI)
• Swiss Institute of Bioinformatics (SIB)
• Swiss Academic and Research Network (SWITCH)


Working Groups

Initial WGs
• Mandate Letter
• Seed Project
— Founded in November 2006
— Finished in November 2007

Currently active WGs
• ATLAS: High energy physics
• Proteomics: Bioinformatics
• Infrastructure & Basic Grid Services
— Grid Architecture Team (GAT)
— Grid Operations Team (GOT)
— Data Management Team (DMT)
• Education & Training

WGs in planning
• AAA/SWITCH projects
• Grid Workflows
• Industry Relations


Seed Project Working Group Goals

1. Identify which resources (people, hardware, middleware, applications, ideas) are readily available and represent strong interest among the current SwiNG partners.

2. Based on available resources, propose one or more Seed Projects that will help to initialize, test, and demonstrate the SwiNG collaboration. The Seed Project should be realizable in a fast, easy and inexpensive manner (“low hanging fruit”).

3. Help with the coordination and realization of the defined Seed Project.


Seed Project Survey

Informal inventory of resources available for the Seed Project
• Member groups
• Available personnel
• Computer hardware
• Lower-level grid middleware
• Higher-level grid middleware
• Scientific application software and data
• Seed project ideas

12 answers in December 2006

Results
• There is a lot of interest and expertise.
• There is enough hardware available, but no direct funding for people.
• Middleware and applications are diverse, but some are more common.
• Main interest is in specific tools and Grid interoperability.

Build a cross-product/matrix infrastructure of selected Grid middleware and applications by gridifying each application on each middleware pool in a non-intrusive manner

Avoid “chicken-and-egg” dilemma in bootstrapping a Grid infrastructure by using known tools and addressing early adopters
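The cross-product idea can be pictured as a small status matrix with one cell per (application, middleware) pair. The following is only a sketch: the middleware and application names are the ones the deck selects as initial focus, while the function names and the status strings are assumptions made for illustration.

```python
from itertools import product

# Names from the Seed Project's initial focus; status strings are
# illustrative placeholders, not reported results.
MIDDLEWARE = ["gLite", "ARC", "XtremWeb-CH", "Condor"]
APPLICATIONS = ["Cones", "GAMESS", "Huygens", "PHYLIP"]


def build_matrix(applications, middleware, default="not started"):
    """Return {(application, middleware): status} for every combination."""
    return {pair: default for pair in product(applications, middleware)}


def render(matrix, applications, middleware):
    """Format the matrix as plain-text rows, one application per row."""
    lines = []
    for app in applications:
        cells = ", ".join(f"{mw}: {matrix[(app, mw)]}" for mw in middleware)
        lines.append(f"{app} -> {cells}")
    return "\n".join(lines)


matrix = build_matrix(APPLICATIONS, MIDDLEWARE)
matrix[("Cones", "ARC")] = "in production"  # example update of one cell
print(render(matrix, APPLICATIONS, MIDDLEWARE))
```

Keeping every pair in the matrix, even the ones nobody has started, makes gaps in coverage visible at a glance.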


Selection Process

Middleware
• Criteria
— Already deployed at partner sites
— Sufficient expertise and manpower
— Supported within existing larger Grid efforts
— Not too complex requirements
— Must be diverse and provide sufficient set of capabilities
• Initial focus
— EGEE gLite (deployed at CSCS, PSI, SIB, SWITCH, UniBas)
— NorduGrid ARC (deployed at CSCS, SIB, UniBas, UniBE, UZH)
— XtremWeb-CH (developed and deployed at HES-SO)
— Condor (deployed at EPFL)

Applications
• Criteria
— Need from the Swiss scientific user community
— Computational demand warrants Grid execution
— Sufficient expertise and manpower
— Not too complex requirements
— Simple gridification, without changing the source code if possible
— Should be diverse and cover sufficient set of requirements
— Reuse of existing Grid-enabled applications
• Initial focus
— Cones (mathematical crystallography, individual code)
— GAMESS (quantum chemistry, standard free open source code)
— Huygens (remote deconvolution for imaging, standard commercial code)
— PHYLIP (bioinformatics, standard free open source code)


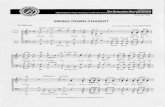

Seed Project Definition

[Figure: three-layer diagram of the Seed Project, with a “First focus” marker in each layer.
• Scientific application software: GAMESS, PHYLIP, Mascot, Cones, Huygens, Swiss BioGrid, Physics Production, …
• Meta-middleware and grid interoperability: Standards, Security, Implementation, Requirements, Management, Testing
• Lower-level middleware systems: gLite (Globus 2), Condor, ARC, XtremWeb-CH, United Devices, Globus 4 (WSRF), UNICORE, …]


Grid Security: SWITCHslcs

SWITCH Short Lived Credential Service (SLCS)
• http://www.switch.ch/grid/slcs/
• Ad-hoc generated X.509 certificates
• Based on SWITCHaai (Authentication and Authorization Infrastructure)
• EUGridPMA accredited
• Valid 1’000’000 seconds (ca. 11.6 days)
• Java-based client software

Advantages
• Users do not have to keep track of where they copied their certificates between hosts.
• Users only need their SWITCHaai federation account to obtain a certificate, so they have one credential fewer to maintain.
• Users do not have to take care of certificate expiration or renewal; they simply request a new certificate.
• Identity management becomes simpler since the central Certification Authority (CA) is not required to keep a separate master user database.
• As the SLCS is accredited by the International Grid Trust Federation (IGTF), the certificate is recognized by all Grid resources where the IGTF certificate bundle is installed.

Achievements in Seed Project
• Testing on gLite, ARC and Condor pools
• MyProxy server for automatic renewal of expiring proxy certificates to bridge long-running jobs
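The validity figure can be checked with a few lines of arithmetic, and the same numbers show when a long-running job would outlive its credential. This is a sketch only: the issue time and job runtimes are hypothetical, and the helper functions are not part of the SLCS client or of MyProxy.

```python
from datetime import datetime, timedelta, timezone

SLCS_LIFETIME = timedelta(seconds=1_000_000)  # value stated on the slide


def lifetime_in_days(lifetime=SLCS_LIFETIME):
    """Convert the certificate lifetime to days."""
    return lifetime.total_seconds() / 86_400


def needs_renewal(issued_at, job_runtime, now, lifetime=SLCS_LIFETIME):
    """True if a job started at `now` would still run when the certificate expires."""
    expires_at = issued_at + lifetime
    return now + job_runtime > expires_at


# Hypothetical issue time, chosen only for the example.
issued = datetime(2008, 5, 20, 9, 0, tzinfo=timezone.utc)
print(round(lifetime_in_days(), 2))
print(needs_renewal(issued, timedelta(days=3), issued + timedelta(days=10)))
```

A three-day job submitted ten days after issuance crosses the expiry date, which is the situation the renewal service is meant to bridge.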


Middleware: EGEE gLite

Middleware of the world’s largest Grid infrastructure
• http://www.glite.org/
• Grid middleware developed and deployed in the EGEE project, installed in most European countries, and used for CERN’s LCG project
• Based on Globus, adding VO support and extending data management functionalities
• Computing Elements (CEs) interfacing worker nodes to LRMS, Storage Elements (SEs) providing standardized data access and transfer services, information system and resource management, User Interface (UI) client
• Offers many Data Grid components (e.g., file catalogues, storage management)
• Security based on GSI with VOMS (VO Membership Service) support, including hierarchical subgroups
• Several different flavours, running on Scientific Linux

Deployment status in Switzerland
• In production in LCG and DILIGENT projects since 2002
• SWITCH (Zurich): Resource Broker, VOMS, CE/UI locally behind firewall
• CSCS (Manno): CE, SE, UI
• SIB (Lausanne): UI
• UZH (Zurich): UI

Achievements in Seed Project
• Small test-bed with all essential services, but no big computer resources
• Creation of new Virtual Organization (VO)
• Working with SWITCHslcs
• Testing with Cones application
• Access to EGEE resources possible

Disadvantages
• Complex due to rich functionality, many vendors, and partly competing implementations
• Installing and running the UI is straightforward, but large efforts and manpower required for installing and running service components
• Comparatively intrusive on resources (e.g., requires Scientific Linux, workers on compute nodes)


Middleware: NorduGrid ARC

Advanced Resource Connector
• http://www.nordugrid.org/middleware/
• Grid middleware developed and deployed in the NorduGrid project of the Nordic countries
• Enables production-quality grids, including information services, resource, job and data management
• Uses replacements and extensions of Globus pre-WS services (e.g., GridFTP)
• Cluster-of-clusters model, Computing and Storage Elements (CEs and SEs), application Runtime Environments (REs)
• Security based on GSI with VOMS support
• Open source under GPL license, supports up to 22 different Unix distributions

Deployment status in Switzerland
• Originally deployed as part of the LHC and Swiss Bio Grid projects
• CSCS (Manno): GIIS, CE, SE
• UZH (Zurich): CE (10 node cluster)
• EPFL (Lausanne): CE (Condor pool)
• SIB (Lausanne): CE

Achievements in Seed Project
• Configuration of resources at CSCS and UZH, interfacing of Condor pool at EPFL
• Working with SWITCHslcs
• Deployment and testing of Cones and GAMESS applications, running of Cones in production by scientific user

Most successful middleware pool, non-intrusive solution

Disadvantages
• Only limited support for complex data management (e.g., no notion of data proximity)
• Compute nodes usually expected to have shared file system
• Information service limited and not very scalable
• Coordination among sites necessary for stable configuration, application REs, and error tracking


Middleware: XtremWeb-CH

High-performance Desktop Grid / volunteer computing / P2P middleware
• http://www.xtremwebch.net/
• Developed by Nabil Abdennadher et al. at HES-SO
• For deployment and execution on public, non-dedicated platforms via user participation
• Symmetric model of providers and consumers
• Supports direct communication of jobs between compute nodes, also across firewalls
• Can fix the granularity of the application according to the state of the platform

Functionalities
• Four modules: Coordinator, worker, warehouse, and broker
• Volatility of workers
• Automatic execution of parallel and distributed applications
• Direct communication between workers, pull model
• Load balancing

Deployment status in Switzerland
• Ca. 200 workers (mainly Windows, few Linux platforms)
• Sites: EIG (Geneva), HEIG-VD (Yverdon)

Achievements in Seed Project
• Test installation at UZH
• PHYLIP application deployed previously
• Integration of GAMESS application

Disadvantages
• Security limited and based on central user database, not compatible with GSI, VOs, and SWITCHslcs
• Porting of applications needs some effort
• No special data management features


Middleware: Condor

High-throughput computing environment
• http://www.cs.wisc.edu/condor/
• Provides infrastructure for volatile Desktop Grid resources, also cross-institutional
• Several authentication and authorisation mechanisms (e.g., GSI, Kerberos)
• Job queue and resource management for fair and optimized assignment and sharing
• Shared file system or input/output file transfer to/from the compute nodes
• Multi-platform (Linux, Windows, Mac OS X, some other Unix variants), open source
• Can be interfaced with other middleware (e.g., UNICORE, Globus, ARC) as LRMS

Deployment status in Switzerland
• Existing production pool at EPFL: http://greedy.epfl.ch/
• Ca. 200 desktop CPUs (60% Windows, 40% Linux or Mac OS X machines), behind firewall
• Computing power available only during nights and weekends, machine owner has priority
• One submit server and one central manager
• No access to compute nodes for third-party software installation (Condor installed by node owners, not Grid managers), thus built-in file transfer protocol required to transport application binaries along with input data
• Due to desktop nature relatively short jobs advised (6 h max., not enforced)

Achievements in Seed Project
• Interfacing to ARC pool
• Working with SWITCHslcs
• Testing of Cones and GAMESS applications


Middleware Interoperability

Despite existing OGF standards such as JSDL (Job Submission Description Language), most middleware systems have their own mechanisms for resource and data management, information representation, or job submission.

Solutions for interoperability
1. Meta-middleware: Complex due to different interfaces and missing standards, thus out of scope for the Seed Project
2. One-to-one wrappers: Some middleware as entry point and bridge, transforming one format to another

Achievements in Seed Project
• Integration of Condor pool in ARC pool based on existing wrapper
• ARC installed on gateway machine
• Modified to allow transparent appending of required binaries
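The one-to-one wrapper idea, transforming one job format into another, can be illustrated with a neutral job description rendered in two target syntaxes. This is a hedged sketch and not the wrapper used in the Seed Project: the dictionary layout, the function names, and the example job are assumptions, and only a small subset of the ARC xRSL and Condor submit-file attributes is covered, so real descriptions should be checked against each middleware's manual.

```python
def to_xrsl(job):
    """Render a neutral job dictionary as an ARC xRSL string (small subset)."""
    parts = [f'(executable="{job["executable"]}")']
    if job.get("arguments"):
        args = " ".join(f'"{a}"' for a in job["arguments"])
        parts.append(f"(arguments={args})")
    if job.get("input_files"):
        # An empty source string is used here to mean "upload from the submit host".
        files = "".join(f'("{name}" "")' for name in job["input_files"])
        parts.append(f"(inputFiles={files})")
    parts.append(f'(stdout="{job["stdout"]}")')
    return "&" + "".join(parts)


def to_condor_submit(job):
    """Render the same dictionary as a Condor submit description (small subset)."""
    lines = ["universe = vanilla", f"executable = {job['executable']}"]
    if job.get("arguments"):
        lines.append("arguments = " + " ".join(job["arguments"]))
    if job.get("input_files"):
        lines.append("should_transfer_files = YES")
        lines.append("when_to_transfer_output = ON_EXIT")
        lines.append("transfer_input_files = " + ", ".join(job["input_files"]))
    lines.append(f"output = {job['stdout']}")
    lines.append("queue")
    return "\n".join(lines)


# Hypothetical job; file names are invented for the example.
job = {
    "executable": "cones",
    "arguments": ["input.txt"],
    "input_files": ["input.txt"],
    "stdout": "cones.out",
}
print(to_xrsl(job))
print(to_condor_submit(job))
```

Each additional middleware needs its own renderer, which is why pairwise wrappers scale poorly compared with a shared standard such as JSDL.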



Application: Cones

Mathematical crystallography program
• For given representative quadratic form, calculates its subcone of equivalent combinatorial types of parallelohedra
• For dimension d = 6, number expected to be greater than 200’000’000 (currently 161’299’100)

Code properties
• Single-threaded C program
• Developed by Peter Engel, UniBE
• Several text input files, one execution command, several text output files

Possibilities for Grid distribution
• Running of several jobs off the same input file
• Cutting of input file into pieces

Achievements in Seed Project
• Refactoring of source code
• Creation of configure and make files
• Testing on gLite, ARC and Condor pools
• Running in production on ARC pool with first scientific user
• Ca. 50’000 new combinatorial types of primitive parallelohedra identified
• Still a lot of room for improvement regarding ease and efficiency of use
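Cutting an input file into pieces amounts to partitioning its records into near-equal chunks, one per Grid job. The sketch below assumes a line-oriented input in which each record can be processed independently; the actual Cones input format is not described in the slides, so the record names are invented.

```python
def split_records(records, n_pieces):
    """Split records into at most n_pieces contiguous chunks of near-equal size."""
    if n_pieces < 1:
        raise ValueError("n_pieces must be at least 1")
    # Never create more chunks than there are records (but always at least one).
    n_pieces = min(n_pieces, len(records)) or 1
    base, extra = divmod(len(records), n_pieces)
    chunks, start = [], 0
    for i in range(n_pieces):
        size = base + (1 if i < extra else 0)  # first `extra` chunks get one more
        chunks.append(records[start:start + size])
        start += size
    return chunks


# Hypothetical records standing in for lines of an input file.
records = [f"form-{i}" for i in range(10)]
for index, chunk in enumerate(split_records(records, 3)):
    print(index, len(chunk))
```

Contiguous chunks keep the original record order, so the outputs of the individual jobs can be concatenated in job order afterwards.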


Application: GAMESS

General Atomic and Molecular Electronic Structure System
• http://www.msg.chem.iastate.edu/gamess/
• Program package for ab initio molecular quantum chemistry
• Computing of molecular systems and reactions in gas phase and solution (properties, energies, structures, spectra, etc.)
• Wide range of methods for approximate solutions of the Schrödinger equation from quantum mechanics
• Standard free open source code developed and used by many groups (e.g., at UZH)

Code properties
• Mainly Fortran 77 and C code and shell scripts
• Available for large variety of hardware architectures and operating systems
• Usually one keyword-driven text input file, one execution command, several text output files
• Well parallelized by its own implementation, called Distributed Data Interface (DDI)
• Comes with more than 40 functional test cases

Possibilities for Grid distribution
• External: Embarrassingly parallel parameter scans in input file
• Internal: Component distribution based on current DDI parallelization implementation

Achievements in Seed Project
• Deployment and testing on ARC and Condor pools at CSCS, EPFL, and UZH
• Integration into XtremWeb-CH
• Simple corannulene DFT functional scan test case provided by Laura Zoppi, UZH
• 223 small molecule MP2 calculations test case provided by Kim Baldridge, UZH
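An external parameter scan of the kind listed above can be generated by filling one input template per parameter value, so that each resulting file becomes an independent Grid job. The sketch below is illustrative only: the template is loosely modeled on a keyword-driven input and is not a validated GAMESS input, and the functional names and job-naming scheme are assumptions.

```python
from string import Template

# Illustrative template only; a real input needs a complete, validated set of
# keyword groups and a molecular geometry. "$$" yields a literal "$".
INPUT_TEMPLATE = Template(
    " $$CONTRL SCFTYP=RHF RUNTYP=ENERGY DFTTYP=$functional $$END\n"
    " $$DATA\n"
    "$title\n"
    "... geometry omitted ...\n"
    " $$END\n"
)


def make_scan_inputs(template, functionals, title="scan"):
    """Return {job_name: input_text}, one independent job per functional."""
    return {
        f"{title}_{name.lower()}": template.substitute(functional=name, title=title)
        for name in functionals
    }


# Hypothetical choice of functionals for the scan.
inputs = make_scan_inputs(INPUT_TEMPLATE, ["B3LYP", "PBE", "BLYP"], title="corannulene")
for job_name in sorted(inputs):
    print(job_name)
```

Because the jobs share no state, they can be submitted to any pool in any order, which is what makes this form of distribution embarrassingly parallel.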


Application: PHYLIP

PHYLogeny Inference Package
• http://evolution.genetics.washington.edu/phylip.html
• Used to generate “life trees” (evolutionary trees, inferring phylogenies)
• Most widely distributed phylogeny package, in development since the 1980s, 15’000 users
• Tree composed of several branches, subbranches, and leaves (sequences), which are complex and CPU-intensive to construct, compare, and select

[Figure: “Life tree”]

Code properties
• Package of ca. 34 program modules
• C source code and executables (Windows, Mac OS X, Linux) available
• Input data read from text files, data processed, output data written to text files
• Data types: DNA sequences, protein sequences, etc.
• Methods available: Parsimony, distance matrix, likelihood methods, bootstrapping, and consensus trees

Possibilities for Grid distribution
• Workflows constructed by users
• Distribution following Single Program Multiple Data (SPMD) model

Achievements in Seed Project
• Integration and deployment on XtremWeb-CH as independent project (Seqboot, Dnadist, Fitch-Margoliash, Neighbor-Joining and Consensus modules)
• Parallel version of Fitch module
• Execution of HIV sequences-related test case on XtremWeb-CH pool
• Web service for dynamic configuration of application platform and parameters
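The SPMD model mentioned above can be sketched as one program applied to many data sets in parallel. In this toy example, bootstrap replicates of an alignment are created by resampling columns (the idea behind Seqboot) and the same distance routine runs on each replicate. It is a sketch under assumptions: the alignment is invented, the simple p-distance stands in for the model-based distances of Dnadist, and local threads stand in for Grid workers.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def bootstrap_columns(alignment, seed):
    """Resample alignment columns with replacement to form one replicate."""
    rng = random.Random(seed)
    length = len(next(iter(alignment.values())))
    columns = [rng.randrange(length) for _ in range(length)]
    return {name: "".join(seq[c] for c in columns) for name, seq in alignment.items()}


def p_distance(a, b):
    """Fraction of positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b)) / len(a)


def distance_matrix(alignment):
    """The single program run on every data set: pairwise p-distances."""
    names = sorted(alignment)
    return {
        (m, n): p_distance(alignment[m], alignment[n])
        for m in names
        for n in names
        if m < n
    }


# Toy alignment, invented for illustration.
ALIGNMENT = {"A": "ACGTACGT", "B": "ACGTTCGT", "C": "TCGTACGA"}
replicates = [bootstrap_columns(ALIGNMENT, seed) for seed in range(4)]

# Multiple data: each replicate is processed independently by the same function.
with ThreadPoolExecutor(max_workers=4) as pool:
    matrices = list(pool.map(distance_matrix, replicates))

print(len(matrices), sorted(matrices[0]))
```

In a full workflow the per-replicate results would then feed a tree-building step and a final consensus step, which is where the user-constructed workflows come in.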


Overview of Achievements

| | EGEE gLite middleware | NorduGrid ARC middleware | XtremWeb-CH middleware | Condor middleware |
|---|---|---|---|---|
| Pool established | CSCS, SIB, and SWITCH, VO created, UI at UZH | CSCS, SIB, and UZH, from Swiss Bio Grid | HES-SO and UZH | EPFL, coupling with ARC |
| SLCS security | Tested at CSCS, SWITCH, and SIB | Tested at UniBE and UZH | Needs changes in or interface to middleware | Tested at EPFL, via ARC |
| Cones application | Tested at SIB | Scientific usage from UniBE | Not started yet | Tested at EPFL |
| GAMESS application | Not started yet | Test usage from UZH | Work in progress at HES-SO | Tested at EPFL |
| Huygens application | No personnel or license | No personnel or license | No personnel or license | No personnel or license |
| PHYLIP application | Not started yet | Not started yet | Preexisting at HES-SO | Work in progress at EPFL |


Lessons Learned

Project
• Approach based on heterogeneous set of Grid middleware and applications turned out to be useful to initialize technical collaboration.
• There is considerable interest and expertise in Grid resource and knowledge sharing in Switzerland, which previously has been directed mostly towards external projects.
• Dedicated partners, clear responsibilities, continuous communication, detailed documentation, and active project management are required.
• Funding, in particular for people, has to be properly secured for production setup.

Middleware
• Middleware is still demanding to install, maintain, and use, mainly due to its complexity and insufficient documentation.
• Middleware architectures and interfaces differ considerably, and require efforts in interoperability.
• There is strong need for simplification and standardization, both technically and regarding procedures (e.g., for policy, development, deployment, and execution mechanisms).

Applications
• Applications are diverse and can be put onto the Grid in different ways, and therefore need direct cooperation among scientific developers and Grid experts, that is, interdisciplinary work.
• Scientists are mainly interested in the implementation of new methods, thus standards for software development and packaging are often ignored, leading to poor installation, documentation, and sometimes performance.
• To run applications on the Grid, applications need to provide well documented and packaged distributions including standardized installation, configuration, testing and use procedures.


Outlook

Future topics
• Setup of a production infrastructure
• Inclusion of additional resources
• Extension to new applications
• Evaluation of further middleware
• Expansion to data management
• Connection to other Grid infrastructures

Continuation of work in Switzerland
• SwiNG Working Groups
• SwiNG-related, funded projects
• Securing of dedicated funding for SwiNG

General focus
• Standardisation and interoperation of middleware
• Professionalisation and standardisation of applications


Thank you!

Questions?