Retrofitted Parallelism Considered Grossly Sub-Optimal

© 2011 IBM Corporation

Retrofitted Parallelism Considered Grossly Sub-Optimal

HotPar '12: 4th USENIX Workshop on Hot Topics in Parallelism

Paul E. McKenney, IBM Distinguished Engineer, Linux Technology Center

June 8, 2012

HotPar'12: Retrofitted Parallelism Considered Grossly Suboptimal

Labirinto do Outeiro do Cribo, A Armenteira, Meis, Pontevedra, Galicia, Spain. Possibly dating from as early as the Bronze Age (though rock carvings are notoriously difficult to date with certainty). 10 October 2006, Froaringus

But Now We Use Computers To Solve Mazes

Goals

Goals (Why Was I Messing With Mazes???)

An example of near-perfect partitioning for “Is Parallel Programming Hard, And If So, What Can You Do About It?”

Use case for RCU-protected union-find data structure

But First, A Sequential Maze Solver

Sequential Maze Solving (SEQ)

[Figure: maze with Start and End cells marked]
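The slides do not show the sequential solver's code, so the following is only a minimal sketch of what such a solver could look like, assuming a breadth-first flood fill over a character grid. The function name `solve_seq`, the `#`-for-wall encoding, and the sample maze are all illustrative assumptions rather than anything taken from the talk.

```python
from collections import deque

# Hypothetical 5x5 maze: '#' is a wall, '.' is an open cell.
MAZE = [
    ".....",
    ".###.",
    "...#.",
    "##.#.",
    "...#.",
]


def solve_seq(maze, start, end):
    """Breadth-first search from start.

    Returns (steps, cells_visited); steps is None if end is unreachable.
    """
    rows, cols = len(maze), len(maze[0])
    steps = {start: 0}  # cell -> distance from start; doubles as the visited set
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        if (r, c) == end:
            return steps[end], len(steps)
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if (0 <= nr < rows and 0 <= nc < cols
                    and maze[nr][nc] != "#" and (nr, nc) not in steps):
                steps[(nr, nc)] = steps[(r, c)] + 1
                queue.append((nr, nc))
    return None, len(steps)


if __name__ == "__main__":
    print(solve_seq(MAZE, (0, 0), (4, 4)))  # prints (8, 17)
```

On this small maze the end is 8 steps away, but the search visits all 17 open cells before it gets there, because it has to flood the dead-end branch as well as the solution path.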

Parallel Maze Solving: Work-Queue Approach

Parallel Work Queue (PWQ)

[Figure: maze with Start and End cells marked]

Parallel Work Queue: Saved An Iteration!!!

[Figure: maze with Start and End cells marked]

But can you see the weak point?

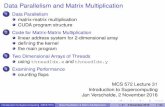

Performance Comparison: PWQ vs. SEQ

Performance Comparison: PWQ vs. SEQ (Two Threads)

Everything I Need to Know, I Learned in Kindergarten

In this case, when solving a maze, start at both ends!!!

Partitioned Parallel Solution (PART)

[Figure: maze with Start and End cells marked]

Performance Comparison: SEQ vs. PWQ vs. PART

Performance Comparison: SEQ vs. PWQ vs. PART: Two Threads

Lots of overlap – are these really different???

Performance Comparison: SEQ vs. PWQ vs. PART

The CDFs assume independence

This is not true: data is highly correlated

– Test script generates a maze, then runs all solvers on that same maze

– CDFs lose the relationship between those solutions

Preserve this relationship by taking CDF of ratios

– SEQ/PWQ and SEQ/PART
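The ratio approach can be sketched roughly as follows, assuming the per-maze solution times are kept in index-aligned lists (entry i of each list comes from the same maze). The function name `ratio_cdf` and the sample timings are hypothetical; the numbers are made up to exercise the code and are not measurements from the talk.

```python
from statistics import median


def ratio_cdf(seq_times, other_times):
    """Empirical CDF of per-maze ratios seq/other.

    Both lists must be index-aligned: entry i of each list is the same maze.
    Returns a list of (ratio, cumulative_fraction) pairs sorted by ratio.
    """
    if len(seq_times) != len(other_times):
        raise ValueError("need one time per maze for each solver")
    ratios = sorted(s / o for s, o in zip(seq_times, other_times))
    n = len(ratios)
    return [(ratio, (i + 1) / n) for i, ratio in enumerate(ratios)]


if __name__ == "__main__":
    # Made-up timings purely for illustration.
    seq = [4.0, 9.0, 2.0, 8.0]
    part = [2.0, 3.0, 2.0, 1.0]
    cdf = ratio_cdf(seq, part)
    for ratio, fraction in cdf:
        print(f"{ratio:5.2f}  {fraction:4.2f}")
    print("median ratio:", median(r for r, _ in cdf))
```

Dividing before sorting is the point of the exercise: each ratio compares two solvers on the same maze, so the pairing survives, whereas sorting each solver's times separately would discard it.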

Performance Comparison: SEQ/PWQ vs. SEQ/PART: Two Threads

Anything odd about this graph?

What is Going on Here???

Median speedup of 4x on only two threads!!!

Individual data points show speedups of up to 40x!!!

This is not merely embarrassingly parallel

– Embarrassingly parallel: Adding threads does not significantly increase the aggregate amount of work, resulting in linear scaling

This is humiliatingly parallel

– Humiliatingly parallel: Adding threads significantly decreases the aggregate amount of work, resulting in superlinear scaling

Yeah, yeah, it is great to have a definition, but how is this happening???
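One rough way to put the two definitions into symbols, assuming that aggregate work on $n$ threads can be approximated as $W(n) = n\,T(n)$, with $T(n)$ the elapsed solution time and $T(1)$ the sequential baseline (this accounting is an assumption for illustration, not something stated on the slides):

$$
S(n) = \frac{T(1)}{T(n)}, \qquad W(n) = n\,T(n) = \frac{n}{S(n)}\,W(1)
$$

Under that assumption, embarrassingly parallel means $W(n) \approx W(1)$ and hence $S(n) \approx n$, while humiliatingly parallel means $W(n) < W(1)$ and hence $S(n) > n$. Taking the median figure at face value, $S(2) = 4$ gives $W(2) = \tfrac{2}{4}\,W(1) = \tfrac{1}{2}\,W(1)$: the two threads together do about half the work of the sequential solver.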

What is Going on Here???

First assumption: there is a bug in either the solver or the data-reduction scripts

–There probably still is, but the solutions and times checked out

The solver also prints the fraction of cells visited

– SEQ and PWQ never visited fewer than 9% for 500x500 maze

– But PART sometimes visited fewer than 2%!!!

Visit Fraction vs. Solution Time Correlation

But correlation is not causation, nor is it “why”...

Partitioned Parallel Solution

[Figure: maze with Start and End cells marked]

The threads get in each other's way!
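The effect can be illustrated with a small sketch. It assumes that each searcher claims the cells it visits, never enters a cell that is already claimed, and declares the maze solved as soon as it touches a cell claimed by the other searcher. To keep it short, the two searchers take turns on a single thread instead of running concurrently, so no atomic operations are needed. The name `solve_part`, the maze encoding, and the sample maze are hypothetical, and the real solver's details are not shown on the slides.

```python
from collections import deque

# Hypothetical 5x5 maze: '#' is a wall, '.' is an open cell (17 open cells).
MAZE = [
    ".....",
    ".###.",
    "...#.",
    "##.#.",
    "...#.",
]


def solve_part(maze, start, end):
    """Two interleaved searchers, one from each end.

    Returns (solved, cells_visited).
    """
    if start == end:
        return True, 1
    rows, cols = len(maze), len(maze[0])
    owner = {start: 0, end: 1}  # cell -> id of the searcher that claimed it
    queues = [deque([start]), deque([end])]
    turn = 0
    while queues[0] or queues[1]:
        me = turn % 2  # searchers alternate, one cell per turn
        turn += 1
        if not queues[me]:
            continue
        r, c = queues[me].popleft()
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if not (0 <= nr < rows and 0 <= nc < cols) or maze[nr][nc] == "#":
                continue
            claimed_by = owner.get((nr, nc))
            if claimed_by is None:
                owner[(nr, nc)] = me
                queues[me].append((nr, nc))
            elif claimed_by != me:
                return True, len(owner)  # the two searches have met
    return False, len(owner)


if __name__ == "__main__":
    print(solve_part(MAZE, (0, 0), (4, 4)))  # prints (True, 12)
```

On this maze the searchers meet after claiming 12 of the 17 open cells, whereas a one-ended breadth-first search from the same start has to visit all 17. The searcher from the end walks a corridor with no side branches, so the searcher from the start is cut off before it finishes exploring its dead end.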

But Why The Separation Between PWQ and PART?

PWQ Has Many Potential Contention Points: Contention is Expensive

[Figure: maze with Start and End cells marked]

Does PART Always Achieve Humiliating Parallelism?

[Figure: maze with Start and End cells marked]

Partitioning is a Powerful Parallelization Tool

But Let's Not Forget Sequential Optimizations!!!

-O3 much better than PWQ, almost as good as PART!

Compiler Optimizations Beat PWQ!!!

Yes, PART is even better, but if all you need is a 2x improvement (rather than optimality), compiler optimization is an extremely attractive option

These results indicate that parallel-programming research making use of high-level/overhead languages is vulnerable to invalidation given improvements in optimization

And The Threads Will Get In Each Other's Way Even If They Are Running on One CPU... (Coroutines!!!)

Effect Of Maze Size

Back to merely modest speedups!

Effect Of Increasing Numbers of Threads

Larger, older, less tightly integrated HW: Smaller speedups

Summary and Conclusions

How Did I Do Against My Goals?

An example of near-perfect partitioning for “Is Parallel Programming Hard, And If So, What Can You Do About It?”

– Not so good!

– From modestly scalable to humiliatingly parallel and back again

Use case for RCU-protected union-find data structure

– Not so good!

– No need for RCU in this problem

On the other hand, this problem turned out to be interesting in its own unexpected way!

– And a nice change of pace from the Linux kernel's RCU implementation

Open Questions

Can other human-maze-solver techniques be applied?

– Follow walls to exclude portions of maze

– Choosing internal starting points based on traversal

Do these results apply to unsolvable or cyclic mazes?

Do other problems exhibit humiliating parallelism?

Does humiliating parallelism always lead to a more-efficient sequential solution? (No, it does not.)

How much current parallel-programming research can stand up to improved optimization?

Conjecture

Conjecture (Due to Jon Walpole):

– Thinking from a parallel perspective leads to a much more efficient search strategy.

– It is not the parallelism of the implementation that is important, but rather the parallelism of the strategy.

Parting Words of Advice

Apply parallelism as a first-class optimization technique

– Apply at as high a level as possible, to full application

– Often simplifies solution

– Usually reduces synchronization overhead, thereby improving both performance and scalability

In contrast, retrofitted parallelism is likely to be grossly suboptimal

– Especially when applied as a low-level after-the-fact optimization

– Might be OK in some situations, but we can do much better

Legal Statement

This work represents the view of the author and does not necessarily represent the view of IBM.

IBM and IBM (logo) are trademarks or registered trademarks of International Business Machines Corporation in the United States and/or other countries.

Linux is a registered trademark of Linus Torvalds.

Other company, product, and service names may be trademarks or service marks of others.

Questions?