Query Operations


Query Models

IR Systems usually adopt index terms to process queries;

Index term: A keyword or a group of selected words; Any word (more general);

Stemming may be used: connect: connecting, connection, connections

An inverted file is built for the selected index terms.

Boolean queries

Query: terms, AND, OR, NOT, (, );

Result: documents that “satisfy” the query;

Implementation by inverted lists (secondary index);

Use of special data structures: B-trees, trie tables; digital trees ("trie"); hashing; Pat-trees ...
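A minimal sketch of Boolean retrieval over inverted lists, assuming whitespace tokenization and a plain dictionary of sets in place of the B-tree or trie structures named above. The function names and the three toy documents are illustrative and do not come from the slides.

```python
from collections import defaultdict


def build_inverted_index(docs):
    """Map each term to the set of ids of the documents that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in text.lower().split():
            index[term].add(doc_id)
    return index


def boolean_and(index, a, b):
    """Documents containing both terms: intersection of the two lists."""
    return index.get(a, set()) & index.get(b, set())


def boolean_or(index, a, b):
    """Documents containing at least one of the terms: union of the lists."""
    return index.get(a, set()) | index.get(b, set())


def boolean_not(index, a, all_ids):
    """Documents that do not contain the term: complement of its list."""
    return set(all_ids) - index.get(a, set())


# Hypothetical toy collection.
docs = {
    1: "information retrieval systems",
    2: "information science",
    3: "retrieval of information",
}
index = build_inverted_index(docs)
print(boolean_and(index, "information", "retrieval"))
```

Each Boolean operator maps to one set operation on the posting lists, which is why the match is exact: a document is either in the resulting set or not.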

Boolean queries: problems

It is difficult to elaborate the query;

The match must be exact;

A mismatch between the term and the concept can occur;

It is not adapted to browsing.

Boolean queries: extensions

Information about term positions:

- Adjacent to;

- In the same sentence (ex: "informatics" in the same sentence as "knowledge");

- In the same paragraph;

- In a window of n words ...

Boolean queries: extensions

Implementation:

- To analyze the entire text;

- To create an inverted list relating positions and terms;

- ex: for each term, #paragraph, #sentence, #term (STAIRS, from IBM).

Increases precision.
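The positional extension can be sketched as an inverted list that stores word positions, which is enough to answer "in a window of n words" queries. This is an illustrative sketch only: it records word offsets rather than the paragraph and sentence numbers mentioned above, and the names and sample texts are assumptions.

```python
from collections import defaultdict


def build_positional_index(docs):
    """term -> {doc_id: [word positions]}; positions count words from 0."""
    index = defaultdict(lambda: defaultdict(list))
    for doc_id, text in docs.items():
        for pos, term in enumerate(text.lower().split()):
            index[term][doc_id].append(pos)
    return index


def within_window(index, a, b, n):
    """Ids of documents where a and b occur at most n words apart."""
    hits = set()
    postings_a = index.get(a, {})
    postings_b = index.get(b, {})
    # Only documents that contain both terms can satisfy the constraint.
    for doc_id in postings_a.keys() & postings_b.keys():
        if any(abs(pa - pb) <= n
               for pa in postings_a[doc_id]
               for pb in postings_b[doc_id]):
            hits.add(doc_id)
    return hits


# Hypothetical toy collection.
docs = {
    1: "informatics is the science of knowledge processing",
    2: "knowledge about music and nothing else here informatics",
}
index = build_positional_index(docs)
print(within_window(index, "informatics", "knowledge", 5))
```

Setting n to 1 gives the "adjacent to" operator; sentence or paragraph constraints would need the corresponding counters stored alongside each position.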

Boolean queries: extensions

Term weighting : increases precision.

Synonymy: increases recall.

Stemming: increases recall.

Queries

• Direct matching with index terms is very imprecise;

• Users are frequently frustrated;

• Most users don't have query elaboration abilities;

• The relevance decision is a critical point in IR systems, especially the order in which the documents are shown.

Queries

• The ranking of selected documents must indicate relevance, according to the user query;

• A ranking is based on fundamental premises, such as:

• common sets of index terms;

• weighted terms sharing;

• relevance.

• Each set of premises indicates a different IR model.

Changing the query by feedback

[Figure: block diagram with the components Query (information need), Treatment, Formal Queries, Documents, Indexing, Indexed Documents, Similarity Calculus, Extracted documents, Feedback.]

Relevance Feedback

After initial retrieval results are presented, allow the user to provide feedback on the relevance of one or more of the retrieved documents;

Use this feedback information to reformulate the query;

Produce new results based on reformulated query;

Allows more interactive, multi-pass process.

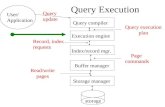

Relevance Feedback Architecture

[Figure: the query string goes to the IR system, which ranks the document corpus (1. Doc1, 2. Doc2, 3. Doc3, ...); feedback on the ranked documents drives query reformulation; the revised query produces re-ranked documents (1. Doc2, 2. Doc4, 3. Doc5, ...).]

Query Reformulation

Revise query to account for feedback:

- Query Expansion: Add new terms to query from relevant documents.

- Term Reweighting: Increase weight of terms in relevant documents and decrease weight of terms in irrelevant documents.

Several algorithms for query reformulation.

Query Reformulation for Vector Space Retrieval

Change query vector using vector algebra;

Add the vectors for the relevant documents to the query vector;

Subtract the vectors for the irrelevant docs from the query vector;

This adds both positively and negatively weighted terms to the query, as well as reweighting the initial terms.

Optimal Query

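Assume that the set of relevant documents Cr is known.

Then the best query that ranks all and only the relevant docs. at the top is:

$$\vec{q}_{opt} = \frac{1}{|C_r|} \sum_{\vec{d}_j \in C_r} \vec{d}_j \; - \; \frac{1}{N - |C_r|} \sum_{\vec{d}_j \notin C_r} \vec{d}_j$$

Where N is the total number of documents.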

Standard Rocchio Method

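Since the full set of relevant documents is unknown, just use the known relevant (Dr) and irrelevant (Dn) sets of documents and include the initial query q.

$$\vec{q}_m = \alpha\,\vec{q} \; + \; \frac{\beta}{|D_r|} \sum_{\vec{d}_j \in D_r} \vec{d}_j \; - \; \frac{\gamma}{|D_n|} \sum_{\vec{d}_j \in D_n} \vec{d}_j$$

α: Tunable weight for initial query;

β: Tunable weight for relevant documents;

γ: Tunable weight for irrelevant documents.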
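A minimal sketch of the standard Rocchio update, assuming queries and documents are dense lists of term weights of equal length. The function name and the default constants of 1 are illustrative choices; the usage lines reuse the two-term vectors from the worked example in these slides (Q0 = (0.7, 0.3), D1 = (0.2, 0.8)).

```python
def rocchio(q, relevant, nonrelevant, alpha=1.0, beta=1.0, gamma=1.0):
    """Standard Rocchio: alpha*q + beta*mean(relevant) - gamma*mean(nonrelevant)."""
    dims = len(q)

    def centroid(vectors):
        # Mean vector of a set of documents; the zero vector if the set is empty.
        if not vectors:
            return [0.0] * dims
        return [sum(v[i] for v in vectors) / len(vectors) for i in range(dims)]

    rel_c = centroid(relevant)
    non_c = centroid(nonrelevant)
    return [alpha * q[i] + beta * rel_c[i] - gamma * non_c[i] for i in range(dims)]


# One relevant document, no irrelevant ones, both weights set to 1/2.
q0 = [0.7, 0.3]
d1 = [0.2, 0.8]
q_prime = rocchio(q0, [d1], [], alpha=0.5, beta=0.5)
print([round(w, 2) for w in q_prime])
```

Dividing by the size of each feedback set is what distinguishes this variant from the Ide methods, which sum the document vectors without normalizing.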

Rocchio method

The Rocchio method automatically:

- Recalculates the weights of the terms;

- Adds new terms (from the relevant documents).

Careful use of negative terms;

Rocchio's is not a Machine Learning algorithm;

Most of the methods have similar performance;

Results depend strongly on the collection;

ML methods must present better results than Rocchio's.

Ide Regular Method

Since more feedback should perhaps increase the degree of reformulation, do not normalize for amount of feedback:

$$\vec{q}_m = \alpha\,\vec{q} \; + \; \beta \sum_{\vec{d}_j \in D_r} \vec{d}_j \; - \; \gamma \sum_{\vec{d}_j \in D_n} \vec{d}_j$$

α: Tunable weight for initial query;

β: Tunable weight for relevant documents;

γ: Tunable weight for irrelevant documents.

Ide “Dec Hi” Method

Bias towards rejecting just the highest ranked of the irrelevant documents:

$$\vec{q}_m = \alpha\,\vec{q} \; + \; \beta \sum_{\vec{d}_j \in D_r} \vec{d}_j \; - \; \gamma \, \max_{non\text{-}relevant}(\vec{d}_j)$$

α: Tunable weight for initial query;

β: Tunable weight for relevant documents;

γ: Tunable weight for irrelevant document.
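The two Ide variants can be sketched as follows, assuming dense weight vectors and that the irrelevant documents are passed in rank order so that the first one is the highest ranked. The function and argument names are illustrative, not from the slides.

```python
def ide_regular(q, relevant, nonrelevant, alpha=1.0, beta=1.0, gamma=1.0):
    """Ide Regular: sum the feedback vectors without dividing by set size."""
    new_q = [alpha * w for w in q]
    for d in relevant:
        new_q = [x + beta * w for x, w in zip(new_q, d)]
    for d in nonrelevant:
        new_q = [x - gamma * w for x, w in zip(new_q, d)]
    return new_q


def ide_dec_hi(q, relevant, ranked_nonrelevant, alpha=1.0, beta=1.0, gamma=1.0):
    """Ide Dec Hi: subtract only the highest-ranked irrelevant document.

    ranked_nonrelevant must be ordered by rank (assumption of this sketch).
    """
    top = ranked_nonrelevant[:1]
    return ide_regular(q, relevant, top, alpha, beta, gamma)


# Hypothetical three-term vectors.
q = [1, 0, 1]
relevant = [[1, 1, 0], [0, 1, 0]]
nonrelevant = [[0, 0, 1], [1, 0, 0]]
print(ide_regular(q, relevant, nonrelevant))
print(ide_dec_hi(q, relevant, nonrelevant))
```

With all constants at 1, the only difference between the two results comes from the second irrelevant document, which Dec Hi ignores.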

Comparison of Methods

Overall, experimental results indicate no clear preference for any one of the specific methods;

All methods generally improve retrieval performance (recall & precision) with feedback;

Generally just let tunable constants equal 1.

Evaluating Relevance Feedback

By construction, reformulated query will rank explicitly-marked relevant documents higher and explicitly-marked irrelevant documents lower.

Method should not get credit for improvement on these documents, since it was told their relevance.

In machine learning, this error is called “testing on the training data”.

Evaluation should focus on generalizing to other un-rated documents.

Fair Evaluation of Relevance Feedback

Remove from the corpus any documents for which feedback was provided.

Measure recall/precision performance on the remaining residual collection.

Compared to complete corpus, specific recall/precision numbers may decrease since relevant documents were removed.

However, relative performance on the residual collection provides fair data on the effectiveness of relevance feedback.
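A sketch of the residual-collection idea, assuming the ranking is a list of document ids, relevance judgments are a set, and precision and recall are measured at a cutoff k. The function name, the cutoff and the sample ids are assumptions made for illustration.

```python
def residual_precision_recall(ranking, relevant, judged, k):
    """Precision and recall at k after removing the documents used for feedback.

    ranking:  doc ids in ranked order (produced by the reformulated query)
    relevant: set of all relevant doc ids
    judged:   doc ids the user gave feedback on (removed before scoring)
    """
    judged = set(judged)
    residual_ranking = [d for d in ranking if d not in judged]
    residual_relevant = set(relevant) - judged
    top = residual_ranking[:k]
    hits = sum(1 for d in top if d in residual_relevant)
    precision = hits / len(top) if top else 0.0
    recall = hits / len(residual_relevant) if residual_relevant else 0.0
    return precision, recall


# Hypothetical run: d2 (relevant) and d1 (irrelevant) were marked by the user.
ranking = ["d2", "d4", "d5", "d1", "d3", "d6"]
relevant = {"d2", "d4", "d6"}
print(residual_precision_recall(ranking, relevant, judged={"d2", "d1"}, k=2))
```

Scoring the original and the reformulated query with this same function keeps the comparison fair, since neither gets credit for documents whose relevance was given.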

Rocchio method

[Figure: two-dimensional term space with axes "Retrieval" and "Information" (0 to 1.0), showing D1, D2, Q0, Q’ and Q”.]

Q0 = retrieval of information = (0.7, 0.3)

D1 = information science = (0.2, 0.8)

D2 = retrieval systems = (0.9, 0.1)

Q’ = ½*Q0 + ½*D1 = (0.45, 0.55)

Q” = ½*Q0 + ½*D2 = (0.80, 0.20)

Rocchio method

Original query: Q0 = (.030, 0.00, 0.00, .025, .025, .050, 0.00, 0.00, .120)

Relevant docs.: R1 = (.020, .009, .020, .002, .050, .025, .100, .100, .120); R2 = (.030, .010, .020, 0.00, .005, .025, 0.00, .020, 0.00)

Non-rel. docs.: S1 = (0.00, 0.00, 0.00, 0.00, .500, 0.00, .450, 0.00, .950)

Constants: 1, 0.75, 0.25

Rocchio computation: Qnew is computed from Q0, R1, R2 and S1 [formula not recoverable from the transcript]

New query: Qnew = (0.011, 0.000875, 0.002, 0.01, 0.527, 0.022, 0.488, 0.033, 1.04)

Why is Feedback Not Widely Used

Users sometimes reluctant to provide explicit feedback;

Results in long queries that require more computation to retrieve, and search engines process lots of queries and allow little time for each one;

Makes it harder to understand why a particular document was retrieved.

Pseudo Feedback

Use relevance feedback methods without explicit user input.

Just assume the top m retrieved documents are relevant, and use them to reformulate the query.

Allows for query expansion that includes terms that are correlated with the query terms.
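A minimal sketch of pseudo feedback, assuming cosine ranking over dense term vectors and a Rocchio-style update with no negative component. The function names, the three-term vocabulary (apple, computer, laptop) and the toy vectors are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length weight vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank(query, doc_vectors):
    """Document ids sorted by decreasing similarity to the query."""
    return sorted(doc_vectors, key=lambda d: cosine(query, doc_vectors[d]),
                  reverse=True)


def pseudo_feedback(query, doc_vectors, m, alpha=1.0, beta=1.0):
    """Assume the top m documents are relevant and add their centroid."""
    top = rank(query, doc_vectors)[:m]
    if not top:
        return list(query)
    dims = len(query)
    centroid = [sum(doc_vectors[d][i] for d in top) / len(top)
                for i in range(dims)]
    return [alpha * query[i] + beta * centroid[i] for i in range(dims)]


# Term order: apple, computer, laptop.
doc_vectors = {"d1": [1, 1, 1], "d2": [1, 1, 0], "d3": [1, 0, 0]}
query = [1, 1, 0]
print(pseudo_feedback(query, doc_vectors, m=2))
```

The third weight becomes non-zero even though "laptop" was not in the query: it co-occurs with the query terms in a top-ranked document, which is the correlation effect described above.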

Pseudo Feedback Architecture

[Figure: same loop as the relevance feedback architecture, with user feedback replaced by pseudo feedback: the top-ranked documents (1. Doc1, 2. Doc2, 3. Doc3, ...) feed query reformulation, and the revised query produces re-ranked documents (1. Doc2, 2. Doc4, 3. Doc5, ...).]

Pseudo-Feedback Results

Found to improve performance on TREC competition ad-hoc retrieval task.

Works even better if top documents must also satisfy additional Boolean constraints in order to be used in feedback.

Thesaurus

A thesaurus provides information on synonyms and semantically related words and phrases.

Example:

physician

syn: croaker, doc, doctor, MD, medical, mediciner, medico, sawbones

rel: medic, general practitioner, surgeon,…

Thesaurus-based Query Expansion

For each term, t, in a query, expand the query with synonyms and related words of t from the thesaurus.

May weight added terms less than original query terms.

Generally increases recall.

May significantly decrease precision, particularly with ambiguous terms.

“interest rate” → “interest rate fascinate evaluate”

Query expansion with a thesaurus

Three steps:

1 Query representation as index terms;

2 Based on the similarity thesaurus, compute the similarity sim(q,kv) between the query and each term kv correlated with the query terms;

3 Query expansion using the n best terms, according to the sim(q,kv) ranking.

Query expansion – step 1

• A vector q is associated with the query:

$$\vec{q} = \sum_{k_i \in q} w_{i,q}\,\vec{k}_i$$

• Where wi,q is the weight associated with the index-query pair [ki,q]

Query expansion – step 2

• sim(q,kv) computation between each term kv and the user query q:

$$sim(q, k_v) = \vec{q} \cdot \vec{k}_v = \sum_{k_u \in q} w_{u,q}\, c_{u,v}$$

• Where cu,v is the correlation factor.

Query expansion – step 3

• Addition to the original query q of the r best terms according to the ranking sim(q,kv), resulting in the expanded query q’;

• For each term kv in the expanded query q’ a weight wv,q’ is associated:

$$w_{v,q'} = \frac{sim(q, k_v)}{\sum_{k_u \in q} w_{u,q}}$$

• The expanded query q’ is then used to retrieve new documents for the user.

Query expansion - example

Doc1 = D, D, A, B, C, A, B, C

Doc2 = E, C, E, A, A, D

Doc3 = D, C, B, B, D, A, B, C, A

Doc4 = A

c(A,A) = 10.991; c(A,C) = 10.781; c(A,D) = 10.781; ... c(D,E) = 10.398; c(B,E) = 10.396; c(E,E) = 10.224

Query expansion – example

Query: q = A E E

sim(q,A) = 24.298; sim(q,C) = 23.833; sim(q,D) = 23.833; sim(q,B) = 23.830; sim(q,E) = 23.435

New query: q’ = A C D E E

w(A,q') = 6.88; w(C,q') = 6.75; w(D,q') = 6.75; w(E,q') = 6.64
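The three expansion steps can be sketched as below, assuming the similarity thesaurus is given as a dictionary of pairwise correlation factors and the query as a dictionary of term weights. The function name and the toy correlation values are hypothetical and are not the numbers from the example slides; following that example, the original query terms are kept and reweighted together with the added ones.

```python
def expand_query(query_weights, correlation, r):
    """Thesaurus-based expansion.

    query_weights: {term: w_uq}  (step 1: the query as weighted index terms)
    correlation:   {(u, v): c_uv}, treated as symmetric
    r:             number of top-ranked terms to consider for the expansion
    Returns (sims, expanded) where expanded maps each term of q' to w_vq'.
    """
    def c(u, v):
        return correlation.get((u, v), correlation.get((v, u), 0.0))

    # Step 2: sim(q, k_v) = sum over query terms of w_uq * c_uv.
    candidates = {term for pair in correlation for term in pair}
    sims = {v: sum(w * c(u, v) for u, w in query_weights.items())
            for v in candidates | set(query_weights)}

    # Step 3: keep the query terms plus the r best-ranked terms and reweight.
    best = sorted(sims, key=sims.get, reverse=True)[:r]
    total = sum(query_weights.values())
    expanded = {v: sims[v] / total for v in set(query_weights) | set(best)}
    return sims, expanded


# Hypothetical weights and correlation factors.
query_weights = {"a": 1.0, "e": 2.0}
correlation = {
    ("a", "a"): 1.0, ("e", "e"): 1.0, ("a", "e"): 0.2,
    ("a", "c"): 0.5, ("e", "c"): 0.5, ("a", "b"): 0.1,
}
sims, expanded = expand_query(query_weights, correlation, r=2)
print(sorted(expanded))
```

Here "c" is added because it correlates with both query terms, while "b", which relates only weakly to "a", stays out.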

WordNet

A more detailed database of semantic relationships between English words;

Developed by famous cognitive psychologist George Miller and a team at Princeton University;

About 144,000 English words;

Nouns, adjectives, verbs, and adverbs grouped into about 109,000 synonym sets called synsets.

WordNet Synset Relationships

Antonym: front → back

Attribute: benevolence → good (noun to adjective)

Pertainym: alphabetical → alphabet (adjective to noun)

Similar: unquestioning → absolute

Cause: kill → die

Entailment: breathe → inhale

Holonym: chapter → text (part-of)

Meronym: computer → cpu (whole-of)

Hyponym: tree → plant (specialization)

Hypernym: fruit → apple (generalization)

WordNet Query Expansion

Add synonyms in the same synset;

Add hyponyms to add specialized terms;

Add hypernyms to generalize a query;

Add other related terms to expand query.

Statistical Thesaurus

Existing human-developed thesauri are not easily available in all languages.

Human thesauri are limited in the type and range of synonymy and semantic relations they represent.

Semantically related terms can be discovered from statistical analysis of corpora.

Automatic Global Analysis

Determine term similarity through a pre-computed statistical analysis of the complete corpus.

Compute association matrices which quantify term correlations in terms of how frequently they co-occur.

Expand queries with statistically most similar terms.

Association Matrix

        w1    w2    w3   ...   wn

w1     c11   c12   c13   ...   c1n

w2     c21

w3     c31

.       .

wn     cn1

cij: Correlation factor between term i and term j

$$c_{ij} = \sum_{d_k \in D} f_{ik} \times f_{jk}$$

fik : Frequency of term i in document k

Correlation analysis

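If terms t1 and t2 are independent:

[Equation garbled in extraction; surviving symbols: m, n, n1, n2.]

If t1 and t2 are correlated:

[Equation garbled in extraction; surviving symbols: m, n, n1, n2.]

Measure of the correlation degree.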

Normalized Association Matrix

Frequency based correlation factor favors more frequent terms.

Normalize association scores:

$$s_{ij} = \frac{c_{ij}}{c_{ii} + c_{jj} - c_{ij}}$$

Normalized score is 1 if two terms have the same frequency in all documents.

Metric Correlation Matrix

Association correlation does not account for the proximity of terms in documents, just co-occurrence frequencies within documents.

Metric correlations account for term proximity.

$$c_{ij} = \sum_{k_u \in V_i} \sum_{k_v \in V_j} \frac{1}{r(k_u, k_v)}$$

Vi: Set of all occurrences of term i in any document.

r(ku,kv): Distance in words between word occurrences ku and kv (∞ if ku and kv are occurrences in different documents).

Normalized Metric Correlation Matrix

Normalize scores to account for term frequencies:

$$s_{ij} = \frac{c_{ij}}{|V_i| \times |V_j|}$$
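A sketch of the metric correlation and its normalized form, assuming each term's occurrences are stored as word positions per document. Pairs of occurrences in different documents have infinite distance and therefore contribute nothing, so the loops only pair positions within the same document. The names and sample positions are hypothetical.

```python
def metric_correlation(positions_i, positions_j):
    """Sum of 1/distance over all pairs of occurrences in the same document.

    positions_x: {doc_id: [word positions of term x in that document]}
    """
    total = 0.0
    for doc_id, pos_i in positions_i.items():
        for pu in pos_i:
            for pv in positions_j.get(doc_id, []):
                if pu != pv:  # skip an occurrence paired with itself
                    total += 1.0 / abs(pu - pv)
    return total


def normalized_metric_correlation(positions_i, positions_j):
    """Divide by |V_i| * |V_j|, the numbers of occurrences of each term."""
    size_i = sum(len(p) for p in positions_i.values())
    size_j = sum(len(p) for p in positions_j.values())
    return metric_correlation(positions_i, positions_j) / (size_i * size_j)


# Hypothetical occurrences: "apple" at words 0 and 4 of doc 1 and word 3 of doc 2,
# "pie" at word 1 of doc 1.
apple = {1: [0, 4], 2: [3]}
pie = {1: [1]}
print(metric_correlation(apple, pie))
print(normalized_metric_correlation(apple, pie))
```

The occurrence of "apple" in document 2 adds nothing to the raw score but still counts in the normalization, which is how the normalized form penalizes frequent terms.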

Query Expansion with Correlation Matrix

For each term i in query, expand query with the n terms, j, with the highest value of cij (sij).

This adds semantically related terms in the “neighborhood” of the query terms.

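Example

Term frequencies per document:

      d1  d2  d3  d4  d5  d6  d7

K1     2   1   0   2   1   1   0

K2     0   0   1   0   2   2   5

K3     1   0   3   0   4   0   0

Correlation matrix:

      K1  K2  K3

K1    11   4   6

K2     4  34  11

K3     6  11  26

Normalized correlation matrix:

      K1     K2     K3

K1    1.0    0.097  0.193

K2    0.097  1.0    0.224

K3    0.193  0.224  1.0

$$s_{12} = \frac{c_{12}}{c_{11} + c_{22} - c_{12}} = \frac{4}{11 + 34 - 4} \approx 0.097$$

The 1st association cluster for K2 is K3.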
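The example's two matrices can be recomputed with a short sketch. The term frequencies are the ones given for K1, K2 and K3 over d1 to d7; the function names are illustrative. Note that the slide values appear to be truncated to three decimals, so rounding gives a slightly different last digit for some entries.

```python
def association_matrix(freq):
    """c[i][j] = sum over documents k of freq[i][k] * freq[j][k]."""
    n = len(freq)
    return [[sum(a * b for a, b in zip(freq[i], freq[j])) for j in range(n)]
            for i in range(n)]


def normalize(c):
    """s[i][j] = c[i][j] / (c[i][i] + c[j][j] - c[i][j])."""
    n = len(c)
    return [[c[i][j] / (c[i][i] + c[j][j] - c[i][j]) for j in range(n)]
            for i in range(n)]


freq = [
    [2, 1, 0, 2, 1, 1, 0],  # K1
    [0, 0, 1, 0, 2, 2, 5],  # K2
    [1, 0, 3, 0, 4, 0, 0],  # K3
]
c = association_matrix(freq)
s = normalize(c)
print(c)
print([[round(x, 4) for x in row] for row in s])
```

For K2 (row index 1), the largest off-diagonal normalized value is the one for K3, which matches the cluster stated in the example.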

Example

Term frequencies per document:

      d1  d2  d3  d4  d5  d6  d7

K1     2   1   0   2   1   1   0

K2     0   0   1   0   2   2   5

K3     1   0   3   0   4   0   0

Normalized correlation matrix:

      K1     K2     K3

K1    1.0    0.097  0.193

K2    0.097  1.0    0.224

K3    0.193  0.224  1.0

USER(43): (neighborhood normatrix)

0: (COSINE-METRIC (1.0 0.09756097 0.19354838) (1.0 0.09756097 0.19354838))

0: returned 1.0

0: (COSINE-METRIC (1.0 0.09756097 0.19354838) (0.09756097 1.0 0.2244898))

0: returned 0.22647195

0: (COSINE-METRIC (1.0 0.09756097 0.19354838) (0.19354838 0.2244898 1.0))

0: returned 0.38323623

0: (COSINE-METRIC (0.09756097 1.0 0.2244898) (1.0 0.09756097 0.19354838))

0: returned 0.22647195

0: (COSINE-METRIC (0.09756097 1.0 0.2244898) (0.09756097 1.0 0.2244898))

0: returned 1.0

0: (COSINE-METRIC (0.09756097 1.0 0.2244898) (0.19354838 0.2244898 1.0))

0: returned 0.43570948

0: (COSINE-METRIC (0.19354838 0.2244898 1.0) (1.0 0.09756097 0.19354838))

0: returned 0.38323623

0: (COSINE-METRIC (0.19354838 0.2244898 1.0) (0.09756097 1.0 0.2244898))

0: returned 0.43570948

0: (COSINE-METRIC (0.19354838 0.2244898 1.0) (0.19354838 0.2244898 1.0))

0: returned 1.0

Scalar cluster matrix:

      K1     K2     K3

K1    1.0    0.226  0.383

K2    0.226  1.0    0.435

K3    0.383  0.435  1.0

The 1st scalar cluster for K2 is K3.
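The trace above applies a cosine measure to every pair of rows of the normalized correlation matrix. A minimal Python sketch of the same computation follows; the function names are illustrative and the input rows are copied from the trace.

```python
import math


def cosine(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def scalar_matrix(s):
    """Entry (i, j) compares the correlation rows (neighborhoods) of terms i and j."""
    return [[cosine(ri, rj) for rj in s] for ri in s]


s = [
    [1.0, 0.09756097, 0.19354838],  # K1
    [0.09756097, 1.0, 0.2244898],   # K2
    [0.19354838, 0.2244898, 1.0],   # K3
]
m = scalar_matrix(s)
print([[round(x, 4) for x in row] for row in m])
```

Two terms score high here when they correlate with the same other terms, even if they rarely co-occur themselves, which is the point of the scalar cluster.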

Problems with Global Analysis

Term ambiguity may introduce irrelevant statistically correlated terms.

“Apple computer” → “Apple red fruit computer”

Since terms are highly correlated anyway, expansion may not retrieve many additional documents.

Automatic Local Analysis

At query time, dynamically determine similar terms based on analysis of top-ranked retrieved documents.

Base correlation analysis on only the “local” set of retrieved documents for a specific query.

Avoids ambiguity by determining similar (correlated) terms only within relevant documents.

“Apple computer” → “Apple computer Powerbook laptop”

Global vs. Local Analysis

Global analysis requires intensive term correlation computation only once at system development time.

Local analysis requires intensive term correlation computation for every query at run time (although number of terms and documents is less than in global analysis).

But local analysis gives better results.

Global Analysis Refinements

Only expand query with terms that are similar to all terms in the query.

“fruit” not added to “Apple computer” since it is far from “computer.”

“fruit” added to “apple pie” since “fruit” close to both “apple” and “pie.”

Use more sophisticated term weights (instead of just frequency) when computing term correlations.

Similarity between a candidate term kj and the whole query Q:

$$sim(k_j, Q) = \sum_{k_i \in Q} c_{ij}$$
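A sketch of the first refinement, assuming a precomputed correlation matrix indexed by term number: each candidate is scored by the sum of its correlations with every query term, so a term close to only one query term ranks below a term close to all of them. The function names are illustrative; the matrix reuses the K1, K2, K3 correlation values from the earlier example.

```python
def query_term_similarity(c, query_terms, candidate):
    """sim(k_j, Q): sum of c[i][j] over the terms i of the query."""
    return sum(c[i][candidate] for i in query_terms)


def expand_with_whole_query(c, query_terms, n):
    """Add the n terms outside the query with the highest sim(k_j, Q)."""
    candidates = [j for j in range(len(c)) if j not in query_terms]
    ranked = sorted(candidates,
                    key=lambda j: query_term_similarity(c, query_terms, j),
                    reverse=True)
    return list(query_terms) + ranked[:n]


# Correlation matrix for K1, K2, K3 (indices 0, 1, 2).
c = [[11, 4, 6], [4, 34, 11], [6, 11, 26]]
print(query_term_similarity(c, [0, 1], 2))
print(expand_with_whole_query(c, [0], n=1))
```

More sophisticated term weights, the second refinement, would change how the matrix c is built rather than this scoring step.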