Productionizing dl from the ground up

-

Upload

adam-gibson -

Category

Data & Analytics

-

view

1.695 -

download

0

Transcript of Productionizing dl from the ground up

Open DataSciCon May 2015

Productionizing Deep Learning

From the Ground Up

Overview

● What is Deep Learning?● Why is it hard?● Problems to think about● Conclusions

What is Deep Learning?Pattern recognition on unlabeled & unstructured data.

What is Deep Learning?

● Deep Neural Networks >= 3 Layers● For media/unstructured data● Automatic Feature Engineering● Benefits From Complex Architectures● Computationally Intensive● Accelerates With Special Hardware

Get why it’s hard yet?

Deep Networks >= 3 Layers

● Backpropagation and Old School ANNs = 3

Deep Networks

● Neural Networks themselves as hidden Layers

● Different Types of Layers can be Interchanged/stacked

● Multiple Layer Types, each with own Hyperparameters and Loss Functions

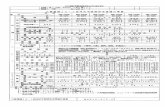

What Are Common Layer Types?

Feedforward

1.MLPs2.AutoEncoders3.RBMs

Recurrent

1.MultiModal2.LSTMs3.Stateful

Convolutional

Lenet: Mixes convolutional & subsampling layers

Recursive/Tree

Uses a parser to form a tree structure

Other kinds

● Memory Networks● Deep Reinforcement Learning● Adversarial Architectures● New recursive ConvNet variant to

come in 2016?● Over 9,000 Layers? (22 is already

pretty common)

Automatic Feature Engineering

Automatic Feature Engineering (TSNE)Visualizations are crucial:Use TSNE to render different kinds of data:http://lvdmaaten.github.io/tsne/

deeplearning4j.org

presentation@

Google, Nov. 17 2014

“TWO PIZZAS SITTING ON A STOVETOP”

Benefits from Complex Architectures

Google’s result combined:● LSTMs (learning captions) ● Word Embeddings ● Convolutional features from images

(aligned to be same size as embeddings)

Computationally Intensive

● One iteration of ImageNet (1k label dataset and over 1MM examples) takes 7 hours on GPUs

● Project Adam● Google Brain

Special Hardware required

Unlike most solutions, multiple GPUs are used today (Not common in Java-based stacks!)

Software Engineering Concerns

● Pipelines to deal with messy data, not canned problems...(Real life is not Kaggle, people.)

● Scale/Maintenance (Clusters of GPUs aren’t done well today.)

● Different kinds of parallelism (model and data)

Model vs Data Parallelism

● Model is sharding model across servers

(HPC style)● Data is mini batch

Vectorizing unstructured data

● Data is stored in different databases● Different kinds of files (raw)● Deep Learning works well on mixed

signal

Parallelism

● Model (HPC)● Data (Mini batch param averaging)

Production Stacks today

● Hadoop/Spark not enough● GPUs not friendly to average

programmer● Cluster management of GPUs as a

resource not typically done● Many frameworks don’t work well in a

distributed env (getting better, though)

Problems With Neural Nets● Loss functions● Scaling data● Mixing different neural nets● Hyperparameter tuning

Loss Functions

● Classification● Regression● Reconstruction

Scaling Data

● Zero mean and unit variance● Zero to 1● Other forms of preprocessing relative

to distribution of data● Processing can also be columnwise

(categorical?)

Mixing and Matching Neural Networks

● Video: ConvNet + Recurrent● Convolutional RBMs?● Convolutional -> Subsampling -> Fully

Connected● DBNs: Different hidden and visible

units for each layer

Hyperparameter tuning

● Underfit● Overfit● Overdescribe (your hidden layers)● Layerwise interactions● What activation function? (Competing?

Relu? Good ol’ Sigmoid?)

Hyperparameter Tuning (2)

● Grid search for neural nets (Don’t do it!)

● Bayesian (Getting better. There are at least priors here.)

● Gradient-based approaches (Your hyper- parameters are a neural net, so there are neural nets optimizing your neural nets...)

Questions?

Twitter: @agibsonccc Github: agibsoncccLinkedIn: /in/agibsoncccEmail: [email protected] (combo breaker!)Web: deeplearning4j.org