
Performance of Multithreaded Chip Multiprocessors and Implications for Operating System Design

Hikmet Aras, 2006720612

Main Papers

Performance of Multithreaded Chip Multiprocessors and Implications for Operating System Design, 2004

Throughput-Oriented Scheduling on Chip Multithreading Systems, 2004

Authors:

– Alexandra Fedorova, Harvard University and Sun Microsystems

– Margo Seltzer, Harvard University

– Christopher Small, Sun Microsystems

– Daniel Nussbaum, Sun Microsystems

Agenda

– Introduction

– Performance Impacts of Shared Resources on CMTs

– Implementation

– Related Work

– Conclusion

Part I - Introduction

What industry needs...

Modern applications (application servers, OLTP systems) cannot fully utilize the processor pipeline.

– Multiple threads of control, executing short integer operations.

– Frequent dynamic branches.

– Poor cache locality and branch prediction accuracy.

SPEC CPU reported the utilization could be as low as 19% for some configurations.

CMPs and MTs are designed to solve this problem.

CMP and MT

CMP: Chip Multiprocessing

– Multiple processor cores on a single chip, allowing more than one thread to be active at a time and improving utilization of the chip.

MT: Hardware Multithreading

– Has multiple sets of registers and interleaves the execution of threads, either by switching between them on each cycle or by executing multiple threads simultaneously (using different functional units).

Examples

CMPs

– IBM’s Power4

– Sun’s UltraSPARC IV

MTs

– Intel’s hyper-threaded Pentium IV

– IBM’s RS64 IV

What is CMT?

CMT (Multithreaded Chip Multiprocessor) is a new generation of processors that exploit thread-level parallelism to mask memory latency in modern workloads.

CMT = CMP + MT

Studies have demonstrated the performance benefits of CMTs, and vendors are planning to ship their CMTs in 2005.

So it is important to understand how to best take advantage of CMTs.

CMTs share resources...

A CMT may be equipped with many simultaneously active thread contexts. So, competition for shared resources is intense.

It is important to understand the conditions leading to performance bottlenecks on shared resources, and avoid performance degradation.

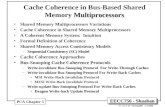

CMT Simulation

CMT systems do not exist yet, so we work with a CMT system simulator toolkit (similar to Simics).

The simulated CPU core has a simple RISC pipeline, with one set of functional units.

Each core has a TLB, L1 data and instruction caches.

L2 cache is shared by all CPU cores in the chip.

CMT Simulation

A schematic view of the simulated CMT Processor

CMT Simulation

The simulator accurately models:

– Pipeline contention

– L1 and L2 caches

– Bandwidth limits on crossbar connections between L1 and L2 caches

– Bandwidth limits on the path between L2 cache and memory

Simulated configurations: 1 to 4 cores, each including 4 hardware contexts, 8KB-16KB L1 data and instruction caches, and an L2 cache.

Part II - Performance Impacts of Shared Resources on CMTs

Shared Resources on CMTs

We will analyze the potential performance bottlenecks on:

– Processor Pipeline

– L1 Data Cache

– L2 Cache

Processor Pipeline

Threads differ in how they use the pipeline:

– Compute-intensive vs. memory-intensive threads.

Processor Pipeline

Co-scheduling compute-intensive threads.

Although each thread could issue an instruction on every cycle, it cannot do so because the processor has to switch among all the threads.

Processor Pipeline

Co-scheduling memory-intensive threads

The pipeline is under-utilized.

Processor Pipeline

Co-scheduling compute-intensive and memory-intensive threads

The scheduler can identify the thread type by measuring the single-threaded CPI:

– Low CPI -> high pipeline utilization -> compute-intensive

– High CPI -> low pipeline utilization -> memory-intensive

Processor Pipeline

A simple example of the performance gain from such scheduling:

– A machine with 4 processor cores, each with 4 thread contexts.

– Schedule 16 threads with CPIs of 1, 6, 11, and 16, with 4 threads in each group.
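The example above can be made concrete with a rough back-of-the-envelope model. The sketch below is not the simulator used in the study: it assumes that a thread with single-threaded CPI c wants to issue 1/c instructions per cycle and that each core issues at most one instruction per cycle. The names `core_ipc` and `machine_ipc` are hypothetical.

```python
def core_ipc(cpis):
    """Estimated IPC of one core running the given threads.

    Assumption (not from the slides): a thread with single-threaded CPI c
    demands 1/c instructions per cycle, and the core's single pipeline
    issues at most one instruction per cycle.
    """
    return min(1.0, sum(1.0 / c for c in cpis))


def machine_ipc(schedule):
    """Total estimated IPC summed over all cores of a schedule."""
    return sum(core_ipc(core) for core in schedule)


# Each core gets one thread from every CPI group.
mixed = [[1, 6, 11, 16]] * 4
# Each core gets four threads that share the same CPI.
segregated = [[1] * 4, [6] * 4, [11] * 4, [16] * 4]

print(f"mixed:      {machine_ipc(mixed):.2f}")       # 4.00
print(f"segregated: {machine_ipc(segregated):.2f}")  # 2.28
```

Under this simplified model, mixing compute-intensive and memory-intensive threads on every core keeps all four pipelines busy, while grouping equal-CPI threads leaves three of the four cores under-utilized.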

Processor Pipeline

• Throughput of 4 different schedules

• Throughput can be improved with a smart scheduling algorithm.

Processor Pipeline

CPI-based scheduling works if the threads have varying single-threaded CPIs, which is not the case in real environments.

Processor Pipeline

Performance gains from scheduling based on pipeline usage are negligible in this system.

It may be more advantageous on SMTs with multiple functional units, running threads with varying CPIs.

L1 Data Cache

The L1 data cache is small. What happens to hit rates in an MT configuration?

L1 data cache hit rates for the baseline single-threaded and 4-way multithreaded configurations. Data cache size is 8KB.

What happens with different cache sizes?

L1 Data Cache

Pipeline utilization and L1 cache miss rates as a function of Cache Size.

L1 Data Cache

Pipeline utilization as a function of Cache Size for different applications.

L1 Data Cache

Increasing the size of the L1 data cache does not improve performance on MTs. Even though it decreases cache misses, the extra cost is not justified.

L1 instruction cache is also small, but hitrates are always high (above 97%), so no need to consider.

L2 Cache

L2 cache is more likely to be a potential bottleneck when the hit rate decreases.

– Latency (on hyper-threaded Pentium IV): a trip from L1 to L2 takes 18 cycles; a trip from L2 to memory takes 360 cycles.

– Bandwidth: the bandwidth between L1 and L2 is typically greater than the bandwidth between L2 and main memory.
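To see why the L2 hit rate matters so much with these latencies, here is a small worked calculation. It assumes the standard average-penalty model (every L1 miss pays the L2 trip, and a fraction of them also pay the memory trip), which is not stated on the slides; the function name is hypothetical.

```python
L1_TO_L2_CYCLES = 18       # latency quoted above
L2_TO_MEMORY_CYCLES = 360  # latency quoted above


def avg_l1_miss_penalty(l2_miss_rate):
    """Average cycles paid per L1 miss.

    Assumption: every L1 miss costs the L1-to-L2 trip, and the fraction
    that also misses in L2 additionally costs the L2-to-memory trip.
    """
    return L1_TO_L2_CYCLES + l2_miss_rate * L2_TO_MEMORY_CYCLES


for rate in (0.01, 0.05, 0.20):
    print(f"L2 miss rate {rate:.0%}: {avg_l1_miss_penalty(rate):.1f} cycles per L1 miss")
```

Even a modest rise in the L2 miss rate multiplies the average penalty, because the memory trip is 20 times longer than the L2 trip.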

L2 Cache

A simple experiment:

– Single-core MT machine with 4 contexts, 8KB L1 cache, and varying L2 cache size.

– A workload of 8 applications with different cache behaviours.

– On a simulated Solaris operating system.

L2 Cache

Performance is strongly correlated with the L2 cache miss ratio, so our scheduling algorithm should aim to decrease L2 cache misses.

Part III - Implementation

A new Scheduling Algorithm

Balance set scheduling (Denning, 1968)

Basic idea:

– To avoid thrashing, schedule a group of threads whose working set fits in the cache.

– The working set is the amount of data that a thread touches in its lifetime.

Problem with Working-Set Model

Denning’s assumption:

– The working set is accessed uniformly.

– Small working sets have good locality, large ones have poor locality.

Does it apply to modern workloads?

Problem with Working-Set Model

A simple experiment to test it:

– Footprint for gzip: 200K

– Footprint for crafty: 40K

– According to Denning’s assumption, crafty should have better cache hit rates than gzip.

– The experiment showed that this assumption does not hold for modern workloads, so we cannot use the working-set model.

Problem with Working-Set Model

L1 data cache hit rates for crafty and gzip.

A better metric of Locality

Hypothesis: Even though gzip has a larger working set, it has better cache hit rates than crafty, so it is probably accessing its data in smaller chunks.

Reuse distance: the amount of time that passes between consecutive references to the same memory location.
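A minimal sketch of how a reuse distance histogram could be built from an address trace. It assumes that "time" is measured as the number of references since the previous access to the same address, and it counts first touches under the key `None`; both choices and the function name are illustrative assumptions, not the authors' tool.

```python
from collections import Counter


def reuse_distance_histogram(trace):
    """Map reuse distance -> number of references with that distance.

    Assumption: distance is the number of references issued since the
    previous access to the same address. First touches have no previous
    access and are counted under the key None.
    """
    last_seen = {}
    histogram = Counter()
    for now, address in enumerate(trace):
        if address in last_seen:
            histogram[now - last_seen[address]] += 1
        else:
            histogram[None] += 1
        last_seen[address] = now
    return histogram


trace = ["a", "b", "a", "c", "b", "a"]
hist = reuse_distance_histogram(trace)
print(dict(hist))  # {None: 3, 2: 1, 3: 2}
```

A thread whose histogram is concentrated at small distances reuses data quickly, regardless of how large its total footprint is.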

A better metric of Locality

Reuse distance distributions for crafty and gzip.

The degree of locality is better represented by the reuse distance model than by the working set model.

Reuse Distance Model

A cache model based on reuse distance distributions. The smaller the reuse distance, the greater the probability of cache hit.

The model needs a reuse distance histogram as input. (Cost?)

There are two methods to adapt the model to multithreaded environments.

Method #1 : COMB

Combines the reuse distance histograms of several threads:

– Sum the number of references in each bucket.

– Multiply the value of each reuse distance by N.

Accurate but may be too expensive (consider a machine with 32 contexts and 100 threads).
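The two COMB steps can be sketched as follows. This assumes N is the number of threads being combined and that a histogram is a mapping from reuse distance to reference count; the function name `comb` and the sample numbers are made up for illustration.

```python
from collections import Counter


def comb(histograms):
    """Combine per-thread reuse distance histograms (COMB sketch).

    Assumption: N is the number of histograms being combined. Counts that
    fall in the same bucket are summed, and every reuse distance is
    multiplied by N to reflect interleaving with the other threads.
    """
    n = len(histograms)
    combined = Counter()
    for histogram in histograms:
        for distance, count in histogram.items():
            combined[distance * n] += count
    return combined


# Hypothetical histograms: reuse distance -> number of references.
thread_a = {1: 50, 4: 10}
thread_b = {1: 30, 2: 20}
combined = comb([thread_a, thread_b])
print(dict(combined))  # {2: 80, 8: 10, 4: 20}
```

The combined histogram must be rebuilt for every candidate group of threads, which is where the cost grows with the number of contexts and threads.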

Method #2 : AVG

Predict the miss rate of each individual thread as if it ran with a fraction of the total cache:

– Cache = TotalCache / #Threads

Take the average of the miss rates.

Preferable method: less expensive, same accuracy.
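A minimal sketch of AVG. The per-thread predictor below is a deliberately crude stand-in (a reference misses when its reuse distance exceeds the cache size, both in the same abstract unit); the actual model is probabilistic, so treat `predicted_miss_rate`, `avg`, and the sample histograms as hypothetical.

```python
def predicted_miss_rate(histogram, cache_size):
    """Toy single-thread predictor (an assumption, not the slides' model):
    a reference misses when its reuse distance exceeds the cache size."""
    total = sum(histogram.values())
    misses = sum(count for distance, count in histogram.items()
                 if distance > cache_size)
    return misses / total


def avg(histograms, total_cache):
    """AVG sketch: give each thread an equal cache share, predict its miss
    rate in isolation, and average the per-thread predictions."""
    share = total_cache / len(histograms)
    rates = [predicted_miss_rate(h, share) for h in histograms]
    return sum(rates) / len(rates)


# Hypothetical histograms: reuse distance -> number of references.
thread_a = {1: 50, 4: 10, 64: 40}
thread_b = {1: 30, 2: 20, 16: 50}
print(avg([thread_a, thread_b], total_cache=32))  # 0.2
print(avg([thread_a, thread_b], total_cache=16))  # 0.45
```

Because each thread's prediction depends only on its own histogram and the share size, the per-thread results can be cached and reused across candidate groups.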

Reuse Distance Model

Actual vs. Predicted miss rates

Balance-Set Scheduling

The balance-set principle is adapted to work with the reuse distance model instead of the working set.

When a scheduling decision is to be made:

– Predict the miss rates of all possible groups of threads.

– Schedule the groups whose predicted miss rates are below a threshold.
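The decision step can be sketched as an enumeration over thread groups. The predictor is passed in as a function; the placeholder used here simply averages made-up per-thread miss rates, so `candidate_groups`, `predict`, the thread names, and the threshold value are all illustrative assumptions.

```python
from itertools import combinations


def candidate_groups(threads, group_size, predict, threshold):
    """Return (group, predicted miss rate) pairs whose prediction is below
    the threshold, sorted from lowest to highest predicted miss rate."""
    scored = [(group, predict(group))
              for group in combinations(threads, group_size)]
    eligible = [(group, rate) for group, rate in scored if rate < threshold]
    return sorted(eligible, key=lambda item: item[1])


# Made-up per-thread miss rates; the placeholder predictor averages them.
solo_miss_rate = {"t0": 0.02, "t1": 0.10, "t2": 0.30, "t3": 0.20}


def predict(group):
    return sum(solo_miss_rate[t] for t in group) / len(group)


groups = candidate_groups(sorted(solo_miss_rate), 2, predict, threshold=0.14)
for group, rate in groups:
    print(group, round(rate, 2))
```

The number of groups grows combinatorially with the number of threads, which is why a cheap per-group prediction matters.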

Balance-Set Scheduling

What is the threshold to be used?

Balance-Set Scheduling

Two policies for selecting the group to schedule:

– PERF: Select the groups with the lowest miss rate (making sure no workload is starved).

– FAIR: Each workload receives an equal share of the processor. Keep track of how many times each workload is selected. In each selection, favor groups that contain the least frequently selected workloads.
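A minimal sketch of the FAIR bookkeeping, assuming the candidate groups have already passed the miss-rate threshold. Scoring a group by the summed selection counts of its members is one possible reading of "favor the least frequently selected workloads"; `pick_fair` and the workload names are hypothetical.

```python
from collections import Counter


def pick_fair(groups, selections):
    """Pick the group whose members have been selected least often so far,
    then record the choice in the selection counts.

    Assumption: a group's score is the sum of its members' counts; ties
    go to the group listed first.
    """
    best = min(groups, key=lambda group: sum(selections[w] for w in group))
    selections.update(best)
    return best


selections = Counter()
groups = [("w0", "w1"), ("w0", "w2"), ("w2", "w3")]
order = [pick_fair(groups, selections) for _ in range(3)]
print(order)  # [('w0', 'w1'), ('w2', 'w3'), ('w0', 'w1')]
```

PERF would instead order the same candidate list by predicted miss rate, with an extra check that no workload is left out indefinitely.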

Balance-Set Scheduling

IPC achieved with default, PERF and FAIR schedulers

- Lowest performance gain: 16%, FAIR when L2 = 384KB

- Highest performance gain: 32%, PERF when L2 = 48KB

Balance-Set Scheduling

• L2 cache miss rates are reduced by 20-40%

• Minimum gain is 12% with the FAIR scheduler at L2 = 384KB, comparable to what a 4 times larger L2 cache would achieve.

Fairness

By optimizing for cache hit rate, we favor the well-behaved workloads. Is that fair?

All workloads should get an equal share of CPU in a fair environment.

A metric for fairness:

– Standard deviation from the average CPU share.
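The fairness metric can be computed directly from per-workload CPU time. This sketch uses the population standard deviation of the shares; the function name and the sample numbers are made up for illustration.

```python
from statistics import pstdev


def share_stddev(cpu_time):
    """Standard deviation of per-workload CPU shares around the average
    share. 0.0 means every workload received exactly the same share."""
    total = sum(cpu_time.values())
    shares = [t / total for t in cpu_time.values()]
    return pstdev(shares)


# Hypothetical CPU time per workload, in arbitrary units.
even = {"w0": 25, "w1": 25, "w2": 25, "w3": 25}
skewed = {"w0": 70, "w1": 10, "w2": 10, "w3": 10}
print(share_stddev(even))              # 0.0
print(round(share_stddev(skewed), 4))  # 0.2598
```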

Fairness

Processor share of 8 workloads in PERF and FAIR

• FAIR is better, but the standard deviation is still high.

• Each thread is represented in the diagram, so no one is starved.

Implementation Cost

We talked about the potential benefits, but a useful approach must be practical to implement.

The cost of predicting the miss rates from reuse distance histograms was discussed previously.

– To adapt the model to an MT environment, we must combine the histogram information.

– The cost is small with the AVG method and higher with the COMB method.

Implementation Cost

Cost of collecting the data required for building reuse distance histograms:

– Monitor memory locations and record their reuse distances.

– A user-level watching tool was implemented, with 20% overhead.

– Overhead can be reduced by using multiple watchpoints (possible on UltraSPARC) and by running at kernel level instead of user level.

Implementation Cost

The data to be stored for each thread is small.

The size of the reuse distance histogram can be fixed.

Reuse distance histograms can be compressed:

– Reuse distances are aggregated into buckets.

– Results stayed accurate even with only a few buckets.
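One way to picture the compression is to fold exact reuse distances into a small, fixed set of buckets. The bucket boundaries, the overflow bucket, and the name `compress` below are illustrative assumptions; the slides do not say how the buckets were chosen.

```python
from collections import Counter


def compress(histogram, bounds):
    """Aggregate exact reuse distances into a fixed number of buckets.

    bounds lists ascending upper limits. Each distance goes to the first
    bucket whose limit is not below it; anything larger goes to an
    overflow bucket labelled with infinity.
    """
    buckets = Counter()
    for distance, count in histogram.items():
        label = next((b for b in bounds if distance <= b), float("inf"))
        buckets[label] += count
    return buckets


# Hypothetical exact histogram: reuse distance -> number of references.
exact = {1: 50, 3: 10, 12: 25, 200: 5, 5000: 10}
small = compress(exact, bounds=(4, 64, 1024))
print(dict(small))  # {4: 60, 64: 25, 1024: 5, inf: 10}
```

With fixed bounds, the per-thread storage is constant no matter how long the thread runs.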

Part IV – Related Work

SMT Scheduling [3][4][5]

Samples the space of possible schedules, identifies the best grouping of threads, and attempts to co-schedule them.

Easy to implement, with trivial hardware support and no overhead.

Improves response time by 17%.

Not preferred when the sample space becomes very large.

Cohort Scheduling [6]

A scheduling infrastructure for server applications.

Similar operations from different server requests are executed together, which improves data locality.

Reduces L2 misses by 50%, improves IPC by 30%.

Limited applicability, requires application code change.

Capriccio Thread Package [7]

Resource-aware scheduling by monitoring thread’s behaviour.

Better scheduling decisions. Optimizes resource utilization, but requires very detailed monitoring of the program state.

Part V – Conclusion

Conclusion

We investigated the performance effects of shared resources in a CMT system, and found L2 cache has the greatest effect on performance.

Using Balance-Set Scheduling, we reduced L2 cache miss rates by 20-40% and improved performance by 16-32% (the same improvement as multiplying the L2 cache size by 4).

Conclusion

We adapted the reuse-distance cache miss rate model to work with MT and CMP processors.

The next step is to repeat the experiments on larger machine configurations under commercial workloads and to evaluate implementation costs on real systems.

References

[1] A. Fedorova, M. Seltzer, C. Small, D. Nussbaum, “Performance of Multithreaded Chip Multiprocessors and Implications for Operating System Design”, 2004.

[2] A. Fedorova, M. Seltzer, C. Small, D. Nussbaum, “Throughput-Oriented Scheduling on Chip Multithreading Systems”, 2004.

[3] A. Snavely, D. Tullsen, “Symbiotic Job Scheduling for a Simultaneous Multithreading Machine”, 2000.

[4] A. Snavely, D. Tullsen, G. Voelker, “Symbiotic Job Scheduling with Priorities for a Simultaneous Multithreading Processor”, 2002.

[5] S. Parekh, S. Eggers, H. Levy, J. Lo, “Thread-Sensitive Scheduling for SMT Processors”.

[6] J. Larus, M. Parkes, “Using Cohort Scheduling to Enhance Server Performance”, 2002.

[7] R. von Behren, J. Condit, F. Zhou, G. Necula, E. Brewer, “Capriccio: Scalable Threads for Internet Services”, 2003.

THANKS...

QUESTIONS ?