Author: National center of Incident readiness and Strategy for Cybersecurity (NISC), Created Date: 9/27/2016 2:06:16 PM

NISC 2D Processor Arrays for … (VLSI Academy)


• Motivation and Problem Statement

A need is rising for a high-performance reconfigurable computing platform that performs heavy scientific computations with high accuracy and at low cost, so that it can serve as a reasonable replacement for supercomputers in some applications.

• Statically scheduled problems

• General-purpose processors

• Massively parallel GPUs

• FPGAs as hardware accelerators

• 2D array of reconfigurable NISC processing elements

• Research Goals

The objective of this project is to implement the architecture described in “NOA’S-Arc: NISC based, Optimized Array Scalable Architecture” in 65 nm CMOS technology. The secondary goals achieved in the project are:

1. Design a high-speed double-precision floating-point unit.

2. Design a scalable NoC router.

3. Design a microprogrammed NISC control unit.

Outline

Introduction, Background, Related Work, Overall Design, FMA, Divider, Control Unit, NoC, Memory, Realization, Verification, Future Work & Conclusion

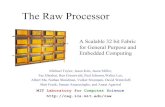

1. Raw Processor: The Birth of Tile Processors

- The Raw architecture was first introduced in 1997 by a group from MIT, one of whom, Professor Anant Agarwal, is the co-founder and CTO of Tilera Corporation.

What’s a Raw Processor?

• Identical tiles.

• Interconnection NoC.

• Distributed Memory.

• Control Unit.

2. Tile Processor: The Raw Processors Delivered Commercially

• Processors based on the highly scalable tile processor architecture.

• Each tile is powerful enough to run an entire operating system.

• Additional instructions accelerate DSP applications.

3. NOA’S-Arc: NISC based, Optimized Array Scalable Architecture

A new architecture consisting of an array of reconfigurable NISC processing elements (PEs), connected by a reconfigurable NoC, that targets statically scheduled scientific computing problems.

• A single PE contains two floating-point units (a divider and an FMA), a reconfigurable control unit and a data cache unit.

• Reconfigurable control unit.

• The architecture was simulated using a tile of 64 PEs running the LU decomposition algorithm on a dense matrix, and the results show a performance of 177 GFLOP/s.
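LU decomposition of a dense matrix is a typical statically scheduled problem: the order of operations depends only on the matrix size, never on the data values. The sketch below is a plain Doolittle factorization without pivoting (an assumption made for brevity; the source does not say how pivoting is handled on the 64-PE tile). It only illustrates the operation mix: one division per eliminated row, which would map to the divider, and a multiply-subtract in the inner loop, which matches the FMA's C − A×B operation.

```python
def lu_decompose(a):
    """Doolittle LU without pivoting: returns (L, U) with A = L @ U.

    Assumes a square matrix whose leading principal minors are non-zero."""
    n = len(a)
    u = [row[:] for row in a]                        # working copy, becomes U
    l = [[float(i == j) for j in range(n)] for i in range(n)]
    for k in range(n):                               # pivot column
        for i in range(k + 1, n):                    # rows below the pivot
            factor = u[i][k] / u[k][k]               # one division per row (divider)
            l[i][k] = factor
            for j in range(k, n):
                # multiply-subtract u[i][j] - factor * u[k][j]; on the PE this
                # would be one fused FMA operation (Python rounds twice here)
                u[i][j] -= factor * u[k][j]
    return l, u


def matmul(x, y):
    """Plain matrix product, used only to check the factorization."""
    return [[sum(x[i][k] * y[k][j] for k in range(len(y)))
             for j in range(len(y[0]))] for i in range(len(x))]


A = [[4.0, 3.0, 2.0], [2.0, 1.0, 3.0], [3.0, 2.0, 1.0]]
L, U = lu_decompose(A)
print(matmul(L, U))  # reproduces A up to rounding
```

Because the loop bounds depend only on `n`, the complete sequence of divisions and multiply-subtracts can be generated ahead of time, which is what makes the problem statically schedulable.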

4. An Example of a Multi-core-Based Implementation for Solving Statically Scheduled Problems

Three parallel strategies:

1. Row block.

2. Row cyclic.

3. Proposed algorithm.
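The slide names the row-block and row-cyclic strategies without defining them. Using their usual textbook meaning (contiguous bands of rows per core versus rows dealt out round-robin), the sketch below counts how many rows each core still has to update at a given LU elimination step. Matrix size, core count and function names are hypothetical, and the "proposed algorithm" is not modeled.

```python
def row_block_owner(row, n_rows, n_cores):
    """Row-block: core c owns a contiguous band of ceil(n_rows / n_cores) rows."""
    block = -(-n_rows // n_cores)  # ceiling division
    return row // block


def row_cyclic_owner(row, n_rows, n_cores):
    """Row-cyclic: row r is assigned to core r mod n_cores."""
    return row % n_cores


def work_per_core(k, n_rows, n_cores, owner):
    """Rows still updated at elimination step k (rows k+1 .. n-1), per core."""
    counts = [0] * n_cores
    for r in range(k + 1, n_rows):
        counts[owner(r, n_rows, n_cores)] += 1
    return counts


print(work_per_core(4, 8, 4, row_block_owner))   # [0, 0, 1, 2]
print(work_per_core(4, 8, 4, row_cyclic_owner))  # [0, 1, 1, 1]
```

As elimination proceeds, the row-block mapping leaves the first cores idle, while the cyclic mapping keeps the remaining work spread across cores.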

5. An Example of a GPU-Based Implementation for Solving Statically Scheduled Problems

• Drawbacks:

− Dedicated to graphics.

− Needs large memory.

6. An Example of an FPGA-Accelerated Implementation for Solving Statically Scheduled Problems

• Drawbacks:

− Single-precision FPU.

− Shared memory.

• Overall architecture of a single core

- Control Unit

- FMA (Fused Multiply Add)

- Divider

- Memory

- Router

Introduction

Related Work:

1. Fused multiply-add floating-point and integer datapath:

- Supports floating-point and integer logic and arithmetic operations.

- The frequency of this FMA is 1.2 GHz in 65 nm technology.

2. Similar Design:

- Operates at 1.81 GHz in 45 nm technology.

- These results are scaled to 65 nm.

3. Other Processors.

Design Choices

1. Number representation.

2. Supported operations:

| Instruction | Inputs | Data type | Operation |
|---|---|---|---|
| Multiply-Add | A, B, C | Floating-point (signed) | C + A×B |
| Multiply-Subtract | A, B, C | Floating-point (signed) | C − A×B |
| Multiply | A, B | Floating-point (signed) | A×B |
| Add | A, C | Floating-point (signed) | C + A |
| Subtract | A, C | Floating-point (signed) | C − A |
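One way every row of this table can run on a single fused datapath is to fix one operand: a multiply is A×B + 0, and an add or subtract uses B = 1 (an assumption; the slides do not say how the operand muxing is done). The defining property of a fused multiply-add is that C + A×B is rounded only once. The sketch below models this with exact rational arithmetic from Python's standard `fractions` module; the helper names are hypothetical, and it rounds to nearest-even rather than toward zero as this design does (the demonstration result is exact, so the mode does not matter there).

```python
from fractions import Fraction


def fused(a, b, c, negate_product=False):
    """Compute c + a*b (or c - a*b) exactly, then round once to a double."""
    product = Fraction(a) * Fraction(b)
    exact = Fraction(c) + (-product if negate_product else product)
    return float(exact)  # single rounding (nearest-even in this model)


# Hypothetical mapping of each table row onto the one fused datapath.
INSTRUCTIONS = {
    "multiply_add":      lambda a, b, c: fused(a, b, c),
    "multiply_subtract": lambda a, b, c: fused(a, b, c, negate_product=True),
    "multiply":          lambda a, b, c: fused(a, b, 0.0),   # C forced to 0
    "add":               lambda a, b, c: fused(a, 1.0, c),   # B forced to 1
    "subtract":          lambda a, b, c: fused(a, 1.0, c, negate_product=True),
}

# Fused vs. unfused: the exact product 1 + 2**-29 + 2**-60 is not representable.
a = b = 1 + 2**-30
c = -(1 + 2**-29)
print(INSTRUCTIONS["multiply_add"](a, b, c))  # equals 2**-60: kept by the single rounding
print(a * b + c)                              # 0.0: the low product bits were rounded away
```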

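Equations of alignment, where $A_e$, $B_e$ and $C_e$ are the biased exponents of the operands, $W$ is the significand width, and the datapath adds 3 guard bits and 2 multiply-compensation bits:

• Shift count: $\text{Shift} = A_e + B_e - C_e - \text{Bias} + (W + 3 + 2)$

• Exponent: $E = A_e + B_e - \text{Bias} + (W + 3 + 2)$

• For double precision, $\text{Bias} = 1023$ and $W + 3 + 2 = 57$, so $E = A_e + B_e - 1023 + 57 = A_e + B_e - 966$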
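The alignment equations can be evaluated directly on the biased exponent fields of IEEE 754 doubles. In this sketch the significand width W = 52 is inferred from the constant 57 = W + 3 + 2; clamping of negative or oversized shift counts and special values (zeros, subnormals, infinities, NaNs) are not handled, since the slides do not describe them.

```python
import struct

BIAS = 1023                          # IEEE 754 double-precision exponent bias
W = 52                               # significand width implied by 57 - 3 - 2
GUARD_BITS = 3
MULT_COMP = 2                        # multiply-compensation bits
OFFSET = W + GUARD_BITS + MULT_COMP  # = 57


def biased_exponent(x):
    """Extract the 11-bit biased exponent field (bits 62..52) of a double."""
    bits = struct.unpack(">Q", struct.pack(">d", x))[0]
    return (bits >> 52) & 0x7FF


def alignment(a, b, c):
    """Return (shift count for C, exponent of the aligned result)."""
    ae, be, ce = biased_exponent(a), biased_exponent(b), biased_exponent(c)
    shift = ae + be - ce - BIAS + OFFSET
    exponent = ae + be - BIAS + OFFSET  # = ae + be - 966
    return shift, exponent


print(alignment(1.0, 1.0, 1.0))  # (57, 1080)
```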

3. Booth encoding algorithms:

1. Radix-2 (53 partial products).

2. Radix-4 (27 partial products), the best choice for delay and area.

3. Radix-8 (18 partial products).
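Radix-4 Booth recoding scans the multiplier in overlapping 3-bit groups and turns each group into one digit in {−2, −1, 0, 1, 2}, so each digit selects one partial product (0, ±M or ±2M). For an unsigned 53-bit significand this gives ⌊53/2⌋ + 1 = 27 digits. The sketch below is a behavioral model of the standard recoding, not the RTL of this FMA.

```python
def booth_radix4_digits(x, n_bits=53):
    """Radix-4 Booth recoding of an unsigned n_bits multiplier.

    Returns digits d_i in {-2, -1, 0, 1, 2} with x == sum(d_i * 4**i)."""
    assert 0 <= x < (1 << n_bits)
    k = n_bits // 2 + 1   # 27 digits (partial products) for 53 bits
    y = x << 1            # append the implicit bit x[-1] = 0
    digits = []
    for i in range(k):
        # group (x[2i+1], x[2i], x[2i-1]) sits at bits 2i+2, 2i+1, 2i of y
        b0 = (y >> (2 * i)) & 1
        b1 = (y >> (2 * i + 1)) & 1
        b2 = (y >> (2 * i + 2)) & 1
        digits.append(-2 * b2 + b1 + b0)
    return digits


d = booth_radix4_digits(2**53 - 1)
print(len(d), d[0], d[-1])  # 27 -1 2
```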

4. Rounding:

Round toward zero (truncation) is used.

5. Pipelining:

The critical path is divided into 7 pipeline stages.
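With sign-magnitude significands, truncating the magnitude is exactly round toward zero, so the rounding step needs no increment adder. The sketch contrasts it with IEEE round-to-nearest-even on an integer significand that carries a few extra low-order bits; the bit widths are illustrative only.

```python
def round_toward_zero(sig, extra_bits):
    """Truncation: drop the extra low-order bits of a magnitude (no adder)."""
    return sig >> extra_bits


def round_nearest_even(sig, extra_bits):
    """IEEE default mode, for comparison: may need a +1 increment."""
    kept = sig >> extra_bits
    remainder = sig & ((1 << extra_bits) - 1)
    half = 1 << (extra_bits - 1)
    if remainder > half or (remainder == half and kept & 1):
        kept += 1  # may carry out and require renormalization in hardware
    return kept


# Significand with 2 extra bits: 0b10.11 in units of the kept LSB.
print(bin(round_toward_zero(0b1011, 2)))   # 0b10
print(bin(round_nearest_even(0b1011, 2)))  # 0b11
```

The price of truncation is a systematic bias toward zero of up to one unit in the last place.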

Results

| Metric | Value |
|---|---|
| Critical path length | 0.6 ns |
| Maximum frequency | 1.66 GHz |
| Latency | 7 cycles |
| Cell count | 16,200 cells |
| Total cell area | 0.05 mm² |
| Total dynamic power | 132 mW |
| Number of nets | 16,605 |

Scaling the results presented in S. Galal and M. Horowitz, “Energy-Efficient Floating-Point Unit Design,” IEEE Transactions on Computers, vol. 60, no. 7, pp. 913–922, July 2011, to 65 nm gives a maximum frequency of 1.26 GHz, a total dynamic power of 146 mW and an area of 0.083 mm². Despite its extra pipeline stage, our FMA therefore achieves a higher frequency (1.66 GHz), lower power (132 mW) and a smaller area (0.05 mm²).
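The text does not say which scaling rule produced the 65 nm figures. Under the common first-order assumption that gate delay scales linearly with feature size and area with its square, the 1.81 GHz at 45 nm quoted for the similar design in Related Work (assumed here to be the same design) maps to about 1.25 GHz, close to but not exactly the quoted 1.26 GHz, so the authors' method probably differs in detail. Power scaling depends on voltage and capacitance assumptions and is left out.

```python
def scale_frequency(f_ghz, from_nm, to_nm):
    """First-order scaling: gate delay proportional to feature size."""
    return f_ghz * from_nm / to_nm


def scale_area(area_mm2, from_nm, to_nm):
    """First-order scaling: area proportional to feature size squared."""
    return area_mm2 * (to_nm / from_nm) ** 2


print(round(scale_frequency(1.81, 45, 65), 2))  # 1.25
print(round(scale_area(1.0, 45, 65), 2))        # 2.09 (area growth factor)
```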

• Floating-point division is the most complex of the four basic arithmetic operations and the hardest to speed up.

• It is expensive and slow.

Types of Divider

1. Combinational (Array) Dividers.

2. Convergence Dividers.

3. Sequential Dividers.

Design Choices

• Paper-and-pen.

• SRT radix-2.

• SRT radix-4:

– Minimally redundant.

– Maximally redundant.

• Intel Pentium.
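The slides list the SRT variants without showing the recurrence. Below is a minimal behavioral sketch of textbook radix-2 SRT division, not the modified radix-4 divider of this work: the divisor is normalized to [1/2, 1), each step selects a quotient digit from the redundant set {−1, 0, 1} by comparing the doubled partial remainder with ±1/2, and the remainder stays within [−d, d]. Exact `Fraction` arithmetic stands in for the carry-save remainder and the few-bit comparison used in hardware.

```python
from fractions import Fraction


def srt_radix2_divide(x, d, n_digits):
    """Radix-2 SRT division of x by d, producing n_digits quotient digits.

    Preconditions (textbook form): 1/2 <= d < 1 and |x| <= d.
    Returns (quotient, digits) with |x/d - quotient| <= 2**-n_digits."""
    half = Fraction(1, 2)
    assert half <= d < 1 and abs(x) <= d
    r = Fraction(x)   # partial remainder r_0
    q = Fraction(0)
    digits = []
    for j in range(1, n_digits + 1):
        two_r = 2 * r
        # Redundancy lets the digit be chosen from a coarse comparison.
        if two_r >= half:
            qj = 1
        elif two_r < -half:
            qj = -1
        else:
            qj = 0
        r = two_r - qj * d   # r_j = 2 r_{j-1} - q_j d, stays in [-d, d]
        q += Fraction(qj, 2**j)
        digits.append(qj)
    return q, digits


q, digits = srt_radix2_divide(Fraction(1, 2), Fraction(3, 4), 20)
print(float(q))  # close to 2/3
```

Converting the signed digits into a conventional binary quotient is omitted from this sketch.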

3. Look-up table:

[Tables: traditional look-up table (columns: partial remainder range, Q) and our proposed look-up table (columns: partial remainder range, Q, Q+, Q−)]

4. Normalization.

5. Sign.

• Contributions.

• Results.

| Metric | Value |
|---|---|
| Critical path length | 0.78 ns |
| Maximum frequency | 1.28 GHz |
| Latency | 30 cycles |
| Cell count | 4,307 cells |
| Total cell area | 0.01 mm² |
| Total dynamic power | 13.8099 mW |
| Number of nets | 3,782 |

Advantages of this architecture

• Simple.

• Faster due to the use of CAM instead of RAM.

• Reconfigurable.
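The slide credits the CAM with the speed advantage but does not describe what the control unit stores in it. The behavioral model below only shows the general difference: a RAM is indexed by address, whereas a CAM is searched by content and returns the index of the matching entry, with every entry compared in parallel in hardware. The class, entry count and stored words are hypothetical.

```python
class CAM:
    """Behavioral model of a binary content-addressable memory."""

    def __init__(self, n_entries):
        self.entries = [None] * n_entries

    def write(self, index, word):
        self.entries[index] = word

    def search(self, key):
        # Hardware: one comparator per entry, all evaluated in the same cycle.
        matches = [i for i, word in enumerate(self.entries) if word == key]
        # A priority encoder picks the lowest matching index.
        return matches[0] if matches else None


cam = CAM(4)
cam.write(2, 0b1010)
cam.write(3, 0b0110)
print(cam.search(0b0110))  # 3
print(cam.search(0b1111))  # None
```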

Introduction

• MPSoCs.

• Busses.

• A NoC solution provides:

- High bandwidth.

- Low latency communication.

- Minimum cost and energy.

- Better scalability.

- Efficient link utilization.

2. Switching

• There are several methods for switching:

1. Store and Forward.

2. Wormhole.

3. Virtual Cut-through.
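The difference between the switching methods shows up in the textbook zero-load latency (no contention): store-and-forward pays the full packet serialization at every hop, while wormhole and virtual cut-through forward the head as soon as it arrives and pay serialization once. Wormhole and virtual cut-through share this zero-load latency; they differ when the head blocks, since virtual cut-through buffers the whole packet at the blocked router while wormhole buffers only flits and keeps holding the upstream channels. The numbers below are hypothetical.

```python
def store_and_forward_latency(hops, packet_bits, link_bits_per_cycle, router_cycles):
    """Each router receives the whole packet before forwarding it."""
    serialization = packet_bits / link_bits_per_cycle
    return hops * (router_cycles + serialization)


def cut_through_latency(hops, packet_bits, link_bits_per_cycle, router_cycles):
    """Wormhole / virtual cut-through: serialization is paid only once."""
    serialization = packet_bits / link_bits_per_cycle
    return hops * router_cycles + serialization


# Hypothetical: 4 hops, 512-bit packet, 64-bit links, 2-cycle routers.
print(store_and_forward_latency(4, 512, 64, 2))  # 40.0 cycles
print(cut_through_latency(4, 512, 64, 2))        # 16.0 cycles
```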

3. Flow Control

• Determines how to allocate network resources to messages transmitted through the network.

• Network resources:

- Channel bandwidth.

- Buffer capacity.

- Control state.

• Packet size.
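The slide lists the resources that flow control manages but does not name the scheme used. Credit-based flow control is one common way to manage buffer capacity: the sender holds one credit per free slot in the downstream buffer, spends a credit per flit sent, and stalls at zero credits until the downstream router frees a slot and returns a credit. The sketch assumes that scheme and, for simplicity, returns credits with zero delay.

```python
from collections import deque


class CreditLink:
    """Credit-based flow control between an upstream and a downstream router."""

    def __init__(self, buffer_depth):
        self.depth = buffer_depth
        self.credits = buffer_depth   # free downstream slots, as seen by the sender
        self.buffer = deque()         # downstream input buffer

    def can_send(self):
        return self.credits > 0

    def send(self, flit):
        if not self.can_send():
            raise RuntimeError("no credits left: the sender must stall")
        self.credits -= 1             # this flit occupies one downstream slot
        self.buffer.append(flit)

    def forward(self):
        """Downstream forwards one flit and returns one credit upstream."""
        flit = self.buffer.popleft()
        self.credits += 1
        return flit


link = CreditLink(buffer_depth=2)
link.send("head")
link.send("body")
print(link.can_send())  # False: the downstream buffer is full
print(link.forward())   # head
print(link.can_send())  # True: one credit came back
```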

Router Micro-architecture

• Components of a router

• Types of router blocks

− Data path.

− Control plane.

• Buffers organization

− Single fixed-length queue.

− Multiple fixed-length queues.

− Multiple variable-length queues.

• Crossbar switch

• Allocators and arbiters

− Round Robin arbiter.

− Matrix arbiter.

− Separable allocator.

− Wavefront allocator.

• Conclusion
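The router slides name the round-robin and matrix arbiters without describing them. The sketch below gives textbook behavioral models of both, not the router's RTL: the round-robin arbiter rotates a priority pointer past each winner, and the matrix arbiter keeps a priority bit for every pair of requesters and makes each winner the lowest-priority requester.

```python
class RoundRobinArbiter:
    """Grants one of n requesters; the winner gets the lowest priority next."""

    def __init__(self, n):
        self.n = n
        self.pointer = 0  # requester currently holding the highest priority

    def arbitrate(self, requests):
        for offset in range(self.n):
            i = (self.pointer + offset) % self.n
            if requests[i]:
                self.pointer = (i + 1) % self.n  # rotate priority past the winner
                return i
        return None  # no requests this cycle


class MatrixArbiter:
    """w[i][j] is True when requester i has priority over requester j."""

    def __init__(self, n):
        self.n = n
        self.w = [[i < j for j in range(n)] for i in range(n)]  # start: 0 > 1 > ...

    def arbitrate(self, requests):
        for i in range(self.n):
            blocked = any(requests[j] and self.w[j][i]
                          for j in range(self.n) if j != i)
            if requests[i] and not blocked:
                for j in range(self.n):
                    if j != i:
                        self.w[i][j] = False  # winner loses priority over everyone
                        self.w[j][i] = True   # everyone gains priority over winner
                return i
        return None


rr = RoundRobinArbiter(4)
print([rr.arbitrate([True, False, True, True]) for _ in range(4)])  # [0, 2, 3, 0]
m = MatrixArbiter(3)
print([m.arbitrate([True, True, True]) for _ in range(4)])  # [0, 1, 2, 0]
```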

Results

| Metric | NoC router | New NoC router |
|---|---|---|
| Critical path length | 0.96 ns | 0.5 ns |
| Maximum frequency | 1.04 GHz | 2 GHz |
| Cell count | 24,179 | 8,105 |
| Total cell area | 0.085 mm² | 0.04 mm² |
| Total dynamic power | 114.3113 mW | 78 mW |
| Number of nets | 22,404 | 7,765 |

Synthesis results of the NoC router and of the new NoC router

Overview

• Design Constraints.

• Different Memory Architectures.

• Memory Peripherals:

– Row Decoder.

– Column Decoder.

– Sense Amplifier.

• Multi-Port SRAM.

• Approach.

Different Memory Architectures

• The One-Block Architecture.

• Optimized Fan-in Architecture.

• The Unity-Aspect-Ratio Architecture.
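A common way to approach a unity aspect ratio is column multiplexing: several words share one physical row, which shortens the bit lines and splits the address between the row decoder and the column decoder. The sketch picks the power-of-two mux factor that makes the cell array most nearly square; the memory size used (1024 words × 64 bits) is hypothetical, not the design's data memory.

```python
def unity_aspect_ratio(words, word_bits):
    """Choose a column-mux factor so the cell array is as square as possible."""
    assert words > 0 and words & (words - 1) == 0, "words must be a power of two"
    best = None
    mux = 1
    while mux <= words:
        rows, cols = words // mux, word_bits * mux
        ratio = max(rows, cols) / min(rows, cols)
        if best is None or ratio < best[0]:
            best = (ratio, mux, rows, cols)
        mux *= 2
    _, mux, rows, cols = best
    return {
        "column_mux": mux,
        "rows": rows,
        "columns": cols,
        "row_address_bits": rows.bit_length() - 1,    # row decoder inputs
        "column_address_bits": mux.bit_length() - 1,  # column decoder inputs
    }


print(unity_aspect_ratio(1024, 64))
# {'column_mux': 4, 'rows': 256, 'columns': 256, 'row_address_bits': 8, 'column_address_bits': 2}
```

A one-block organization corresponds to `column_mux = 1`, which for this size would give a tall 1024 × 64 array.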

Digital design flow:

Specification & Architecture

RTL code (VHDL or Verilog)

Simulation (ModelSim)

Logic synthesis (Design Compiler)

Static timing analysis

Place & route (IC Compiler)

Milkyway (.gds)

Physical verification (Calibre)

Static timing analysis

*Because of the size of the design, we took the bottom-up approach for its speed: each block is synthesized separately.

Synthesizing Memory using Milkyway

• A memory compiler generates the .lib file.

• The .lib file is converted to a .db file so that the memory can be synthesized using the memory standard cell and the reference standard cell library.


• Memory_Milkyway is created in the following steps:

1. Create a new Milkyway library with the default technology files and the synthesized netlist.

2. Add the .vclef file generated by the memory compiler, which contains the FRAM size and structure.

3. Combine both to obtain the FRAM size and structure.

Placement and Routing {PnR}

1. Setup

- We create a new Milkyway library for the design's PnR that includes two libraries: the reference mw_lib and the memory mw_lib.

- We set the target and link libraries to both technology files.

- We add the TLUPlus parasitic files for the max and min delay corners.

• set link_library [list ./tcbn65lplvttc_ccst_pg.db]

• set target_library [list ./tcbn65lplvttc_ccst_pg.db]

• create_mw_lib -technology ./techfiles/tsmcn65_9lmT2.tf -mw_reference_library {./libs/tcbn65lplvt} -bus_naming_style {[%d]} -open ./PnR_primetime_2

• set_tlu_plus_files -max_tluplus ./libs/TECH/techfiles/tluplus/cln65lp_1p09m+alrdl_top2_rcworst.tluplus -min_tluplus ./libs/TECH/techfiles/tluplus/cln65lp_1p09m+alrdl_top2_rcbest.tluplus -tech2itf_map /home/elmoghany/opt/milkyway/PR_0409_TSMC65/libs/TECH/tech.map

• import_designs -format verilog -top router_3 -cel router_3 {/home/elmoghany/work/may8/jun22/netlists/noc.v}

Placement and Routing {PnR}: Initialize Floorplan, Rings, Power Straps

• initialize_floorplan -start_first_row -flip_first_row -left_io2core 1.0 -bottom_io2core 1.0 -right_io2core 1.0 -top_io2core 1.0

• derive_pg_connection -power_net VDD -power_pin VDD -ground_net VSS -ground_pin VSS

• create_rectangular_rings -nets {VDD} -left_segment_layer M4 -right_segment_layer M4 -bottom_segment_layer M5 -top_segment_layer M5

• create_rectangular_rings -nets {VSS} -left_offset 0.2 -left_segment_layer M4 -right_offset 0.2 -right_segment_layer M4 -bottom_offset 0.2 -bottom_segment_layer M5 -top_offset 0.2 -top_segment_layer M5

• create_power_straps -direction horizontal -num_placement_strap 20 -increment_x_or_y 15 -nets {VDD} -layer M5

• create_power_straps -direction vertical -num_placement_strap 20 -increment_x_or_y 15 -nets {VSS} -layer M4

5. Pre-route

• preroute_standard_cells -connect horizontal -port_filter_mode off -cell_master_filter_mode off -cell_instance_filter_mode off -voltage_area_filter_mode off -route_type {P/G Std. Cell Pin Conn}

Placement and Routing {PnR}: Chip Finishing, LVS, DRC Fixing

• insert_metal_filler -out self

• signoff_drc

Placement and Routing {PnR}: GDSII

• write_parasitics -format sbpf -output {Top.sbpf}

• write_parasitics

• save_design

• save_mw_cell

• write_stream -format gds Top.gds

• Verification goals.

• Our verification related work:

- Arithmetic Blocks.

- Router.

- Single Tile.

- Single Core.
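The slides do not describe how the arithmetic blocks were checked. One hedged illustration is a bit-accurate golden reference model: for the FMA, compute C + A×B exactly, round once toward zero (the mode this design uses), and compare simulation outputs against it. The model assumes finite inputs and results (no overflow, infinity or NaN handling), and `rne_dut` is a hypothetical stand-in for a device under test that wrongly rounds to nearest.

```python
import math
from fractions import Fraction


def fma_rtz_reference(a, b, c):
    """Golden model: c + a*b computed exactly, then rounded toward zero once."""
    exact = Fraction(c) + Fraction(a) * Fraction(b)
    nearest = float(exact)                   # correctly rounded, nearest-even
    if abs(Fraction(nearest)) > abs(exact):  # rounded away from zero: step back
        nearest = math.nextafter(nearest, 0.0)
    return nearest


def check(dut, vectors):
    """Return the test vectors on which the device under test disagrees."""
    mismatches = []
    for a, b, c in vectors:
        expected = fma_rtz_reference(a, b, c)
        got = dut(a, b, c)
        if got != expected:  # note: == treats +0.0 and -0.0 as equal
            mismatches.append((a, b, c, got, expected))
    return mismatches


def rne_dut(a, b, c):
    """Hypothetical faulty DUT: rounds to nearest instead of toward zero."""
    return float(Fraction(c) + Fraction(a) * Fraction(b))


print(check(rne_dut, [(1.0, 1.0, 3 * 2**-54), (2.0, 3.0, 1.0)]))
```

Only the first vector is reported: its exact result lies closer to 1 + 2⁻⁵² than to 1.0, so the two rounding modes disagree.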

| Block | Area |
|---|---|
| Overall (after placement and routing) | 0.77 mm² |
| FMA | 0.06 mm² |
| Divider | 0.01 mm² |
| Control unit | 0.08 mm² |
| Memory | 0.2 mm² |
| Router | 0.085 mm² |
| Scratch-pad memory | 0.01 mm² |
| Fetch unit | 0.01 mm² |

Area Results

| Block | Total dynamic power |
|---|---|
| FMA | 132 mW |
| FDIV | 13 mW |
| Memory | 22 mW |
| Router | 114 mW |
| Control unit | 6 mW |
| Scratch pad | 7 mW |
| Others | 0.5 mW |
| Overall power consumption | 294.5 mW |

Power Results

Timing Results

| Configuration | Critical path | Limiting block | Maximum frequency |
|---|---|---|---|
| With old router | 0.96 ns | Router | 1.04 GHz |
| With new router | 0.84 ns | Data memory / scratch-pad memory | 1.19 GHz |
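Because all blocks in a tile share one clock, the tile runs at the speed of its slowest path, and f_max = 1 / t_critical (1/ns = GHz). The sketch recomputes both timing results from block delays reported elsewhere in this document. The control unit's delay is not reported and is left out, so this is a consistency check rather than a timing analysis.

```python
# Critical-path delays in ns, as reported in this document.
BLOCK_DELAYS_NS = {
    "FMA": 0.6,
    "Divider": 0.78,
    "Data/scratch-pad memory": 0.84,
}


def core_fmax(delays_ns):
    """Return (limiting block, maximum frequency in GHz)."""
    block, t_ns = max(delays_ns.items(), key=lambda kv: kv[1])
    return block, 1.0 / t_ns


old = dict(BLOCK_DELAYS_NS, Router=0.96)
new = dict(BLOCK_DELAYS_NS, Router=0.5)
print(core_fmax(old))  # limited by the router, about 1.04 GHz
print(core_fmax(new))  # limited by the memory, about 1.19 GHz
```

Speeding up the router alone therefore gains only about 15%, because the memory path becomes the new bottleneck.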

• Scalable tile-based NISC 2D processor arrays.

• 65 nm CMOS technology.

• Goals.

• One tile of the array was successfully implemented with an operating frequency higher than 1 GHz; with further work, this frequency can be raised to 1.5 GHz.

Contributions

• A reconfigurable microprogrammed NISC control unit providing a high level of parallelism.

• A fast double-precision floating-point fused multiply-add pipeline.

• A modified hardware SRT radix-4 divider design, and a fast floating-point divider based on these modifications.

• A NoC router characterized by high speed, scalability and ease of interfacing, with an operating frequency higher than 2 GHz thanks to optimizations made to its crossbar switch.

• An optimized core organization for matrix operations.

Limitations

• Low performance of the data memory.

• The lack of a suitable CAM technology for the control unit.

Future Work

1. NoC Router Improvement.

2. Floating Point Unit.

3. Data Memory.

4. Micro Instruction Memory.

5. ASIC Implementation.

6. Verification.

7. Compiler Design.

8. Chip Implementation.