New Developments in Integral Reinforcement Learning ... talks 2017/2017 11 new RL... · Nonlinear...


New Developments in Integral Reinforcement Learning: Continuous-time Optimal Control and Games

F.L. Lewis, National Academy of Inventors

Moncrief-O’Donnell Chair, UTA Research Institute (UTARI), The University of Texas at Arlington, USA

and

Qian Ren Consulting Professor, State Key Laboratory of Synthetical Automation for Process Industries, Northeastern University, Shenyang, China

Talk available online at http://www.UTA.edu/UTARI/acs

Supported by: ONR, NSF

Supported by: China NNSF, China Project 111

Thanks to Dongbin Zhao, S. Jagannathan

Optimal Control is Effective for:
• Aircraft Autopilots
• Vehicle engine control
• Aerospace Vehicles
• Ship Control
• Industrial Process Control

Multi-player Games Occur in:
• Networked Systems Bandwidth Assignment
• Economics
• Control Theory disturbance rejection
• Team games
• International politics
• Sports strategy

But, optimal control and game solutions are found by:
• Offline solution of Matrix Design equations
• A full dynamical model of the system is needed

Optimality and Games

Derivation of Linear Quadratic Regulator

System: $\dot x = Ax + Bu$

Cost: $V(x(t)) = \int_t^\infty (x^TQx + u^TRu)\,d\tau = x^T(t)Px(t)$

Differentiate using Leibniz’ formula: $\dot V = -(x^TQx + u^TRu)$ (scalar equation)

Differential equivalent is the Bellman equation:

$0 = H(x, \frac{\partial V}{\partial x}, u) = \dot V + x^TQx + u^TRu = \left(\frac{\partial V}{\partial x}\right)^T\dot x + x^TQx + u^TRu = 2x^TP(Ax+Bu) + x^TQx + u^TRu$

Stationarity condition: $0 = \frac{\partial H}{\partial u} = 2Ru + 2B^TPx$

Optimal Control is $u = -R^{-1}B^TPx = -Kx$

HJB equation: $0 = 2x^TP(Ax - BR^{-1}B^TPx) + x^TQx + x^TPBR^{-1}B^TPx$, that is, $0 = x^TPAx + x^TA^TPx + x^TQx - x^TPBR^{-1}B^TPx$

Riccati equation (Rudolf Kalman 1960): $0 = PA + A^TP + Q - PBR^{-1}B^TP$

Given any stabilizing FB policy $u = -Kx$, the Bellman equation is a Lyapunov equation:

$0 = H(x, \frac{\partial V}{\partial x}, u) = x^T\left[(A-BK)^TP + P(A-BK) + Q + K^TRK\right]x$

Full system dynamics must be known. Off-line solution.
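As a quick sanity check of the Riccati equation, the scalar case can be solved by hand. The symbols $a$, $b$, $q$, $r$, $p$ below are illustrative and do not appear in the talk; this is a sketch assuming $q > 0$, $r > 0$, $b \neq 0$.

```latex
\begin{aligned}
&\dot x = a x + b u, \qquad
V(x(t)) = \int_t^\infty (q x^2 + r u^2)\, d\tau = p\, x^2(t), \\
&0 = 2ap + q - \frac{b^2 p^2}{r}
\;\;\Longrightarrow\;\;
p = \frac{r}{b^2}\left(a + \sqrt{a^2 + \frac{b^2 q}{r}}\right) > 0, \\
&K = \frac{b\,p}{r} = \frac{1}{b}\left(a + \sqrt{a^2 + \frac{b^2 q}{r}}\right),
\qquad
a - bK = -\sqrt{a^2 + \frac{b^2 q}{r}} < 0 .
\end{aligned}
```

The closed-loop pole $a - bK$ is negative for any open-loop $a$, so the optimal gain stabilizes even an unstable scalar plant, but computing it requires knowing both $a$ and $b$.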

Optimal Control - The Linear Quadratic Regulator (LQR)

User prescribed optimization criterion $(Q, R)$: $V(x(t)) = \int_t^\infty (x^TQx + u^TRu)\,d\tau$

Off-line Design Loop Using ARE: $0 = PA + A^TP + Q - PBR^{-1}B^TP$, $K = R^{-1}B^TP$

On-line real-time Control Loop: System $\dot x = Ax + Bu$, Control $u = -Kx$

An Offline Design Procedure that requires Knowledge of system dynamics model (A,B)

System modeling is expensive, time consuming, and inaccurate

Adaptive Control is online and works for unknown systems. Generally not Optimal.

Optimal Control is off-line, and needs to know the system dynamics to solve design eqs.

Bring together Optimal Control and Adaptive Control

We want to find optimal control solutions:
• Online in real-time
• Using adaptive control techniques
• Without knowing the full dynamics
• For nonlinear systems and general performance indices

Reinforcement Learning turns out to be the key to this!

Books

D. Vrabie, K. Vamvoudakis, and F.L. Lewis, Optimal Adaptive Control and Differential Games by Reinforcement Learning Principles, IET Press, 2012.

F.L. Lewis, D. Vrabie, and V. Syrmos, Optimal Control, third edition, John Wiley and Sons, New York, 2012. New Chapters on: Reinforcement Learning, Differential Games.

F.L. Lewis and D. Vrabie, “Reinforcement learning and adaptive dynamic programming for feedback control,” IEEE Circuits & Systems Magazine, Invited Feature Article, pp. 32-50, Third Quarter 2009.

F. Lewis, D. Vrabie, and K. Vamvoudakis, “Reinforcement learning and feedback Control,” IEEE Control Systems Magazine, Dec. 2012.

Multi-player Game Solutions, IEEE Control Systems Magazine, Dec 2017.

How can one do Policy Iteration for Unknown Continuous-Time Systems? What is Value Iteration for Continuous-Time systems? How can one do ADP for CT Systems?

RL ADP has been developed for Discrete-Time Systems $x_{k+1} = f(x_k, u_k)$

Discrete-Time System Hamiltonian Function:

$H(x_k, \nabla V(x_k), h) = r(x_k, h(x_k)) + V_h(x_{k+1}) - V_h(x_k)$

• Directly leads to temporal difference techniques
• System dynamics does not occur
• Two occurrences of value allow APPROXIMATE DYNAMIC PROGRAMMING methods

Continuous-Time System Hamiltonian Function:

$H(x, \frac{\partial V}{\partial x}, u) = \dot V + r(x,u) = \left(\frac{\partial V}{\partial x}\right)^T\dot x + r(x,u) = \left(\frac{\partial V}{\partial x}\right)^Tf(x,u) + r(x,u)$

• How to define temporal difference?
• System dynamics DOES occur
• Only ONE occurrence of value gradient

Leads to off-line solutions if system dynamics is known. Hard to do on-line learning.

Discrete-Time Systems: Adaptive (Approximate) Dynamic Programming

Four ADP Methods proposed by Paul Werbos

Critic NN to approximate:
• Value $V(x_k)$: Heuristic dynamic programming
• Gradient $\frac{\partial V}{\partial x}$: Dual heuristic programming
• Q function $Q(x_k, u_k)$: AD (action-dependent) Heuristic dynamic programming (Watkins Q Learning)
• Gradients $\frac{\partial Q}{\partial x}$, $\frac{\partial Q}{\partial u}$: AD Dual heuristic programming

Action NN to approximate the Control

Bertsekas - Neurodynamic Programming

Barto & Bradtke - Q-learning proof (Imposed a settling time)

Value Iteration

• Integral Reinforcement Learning for CT systems
• Synchronous IRL
• Multi-player Non-zero Sum Games
• Graphical Games on Networks
• Zero-Sum Games
• Q Learning for CT Systems
• Experience Replay
• Off-Policy IRL

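CT Systems - Derivation of Nonlinear Optimal Regulator

Nonlinear System dynamics: $\dot x = f(x,u) = f(x) + g(x)u$

Cost/value: $V(x(t)) = \int_t^\infty r(x,u)\,d\tau = \int_t^\infty (Q(x) + u^TRu)\,d\tau$

Leibniz gives Differential equivalent: the Bellman Equation, in terms of the Hamiltonian function,

$0 = H(x, \frac{\partial V}{\partial x}, u) = \dot V + r(x,u) = \left(\frac{\partial V}{\partial x}\right)^T\dot x + r(x,u) = \left(\frac{\partial V}{\partial x}\right)^T(f(x) + g(x)u) + r(x,u)$, $V(0) = 0$

Stationarity condition: $\frac{\partial H}{\partial u} = 0$

Stationary Control Policy: $u = h(x) = -\frac12R^{-1}g^T(x)\frac{\partial V}{\partial x}$

HJB equation: $0 = \left(\frac{dV^*}{dx}\right)^Tf + Q(x) - \frac14\left(\frac{dV^*}{dx}\right)^TgR^{-1}g^T\frac{dV^*}{dx}$, $V(0) = 0$

Off-line solution. HJB hard to solve. May not have smooth solution. Dynamics must be known.

To find online methods for optimal control, focus on these two equations: the Bellman equation and the stationary control policy.

Problem - System dynamics shows up in Hamiltonian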

CT Policy Iteration – a Reinforcement Learning Technique

Utility: $r(x,u) = Q(x) + u^TRu$

Given any admissible policy $u(x) = h(x)$, the cost is given by solving the CT Bellman equation (a scalar equation):

$0 = \left(\frac{\partial V}{\partial x}\right)^Tf(x,u) + r(x,u) \equiv H(x, \frac{\partial V}{\partial x}, u)$

Policy Iteration Solution

Pick stabilizing initial control policy $h_0(x)$

Policy Evaluation - Find cost, Bellman eq.: $0 = \left(\frac{\partial V_j}{\partial x}\right)^Tf(x, h_j(x)) + r(x, h_j(x))$, $V_j(0) = 0$

Policy improvement - Update control: $h_{j+1}(x) = -\frac12R^{-1}g^T(x)\frac{\partial V_j}{\partial x}$

Converges to solution of HJB: $0 = \left(\frac{dV^*}{dx}\right)^Tf + Q(x) - \frac14\left(\frac{dV^*}{dx}\right)^TgR^{-1}g^T\frac{dV^*}{dx}$

• Convergence proved by Leake and Liu 1967, Saridis 1979 if Lyapunov eq. solved exactly
• Beard & Saridis used Galerkin Integrals to solve Lyapunov eq.
• Abu-Khalaf & Lewis used NN to approx. V for nonlinear systems and proved convergence

Full system dynamics must be known. Off-line solution.

M. Abu-Khalaf, F.L. Lewis, and J. Huang, “Policy iterations on the Hamilton-Jacobi-Isaacs equation for H-infinity state feedback control with input saturation,” IEEE Trans. Automatic Control, vol. 51, no. 12, pp. 1989-1995, Dec. 2006.

Policy Iterations for the Linear Quadratic Regulator

System: $\dot x = Ax + Bu$

Cost: $V(x(t)) = \int_t^\infty (x^TQx + u^TRu)\,d\tau = x^T(t)Px(t)$

Differential equivalent is the Bellman equation:

$0 = H(x, \frac{\partial V}{\partial x}, u) = \dot V + x^TQx + u^TRu = \left(\frac{\partial V}{\partial x}\right)^T\dot x + x^TQx + u^TRu = 2x^TP(Ax+Bu) + x^TQx + u^TRu$

Given any stabilizing FB policy $u = -Kx$, the cost value is found by solving Lyapunov equation = Bellman equation:

$0 = (A-BK)^TP + P(A-BK) + Q + K^TRK$

Optimal Control is $u = -R^{-1}B^TPx = -Kx$

Algebraic Riccati equation: $0 = PA + A^TP + Q - PBR^{-1}B^TP$

Full system dynamics must be known. Off-line solution.

LQR Policy iteration = Kleinman algorithm (Kleinman 1968)

1. For a given control policy $u = -K_jx$ solve for the cost (Bellman eq. = Lyapunov eq., a matrix equation):

$0 = A_j^TP_j + P_jA_j + Q + K_j^TRK_j$, where $A_j = A - BK_j$

2. Improve policy: $K_{j+1} = R^{-1}B^TP_j$

If started with a stabilizing control policy $K_0$, the matrix $P_j$ monotonically converges to the unique positive definite solution of the Riccati equation.

Every iteration step will return a stabilizing controller. The system has to be known.

OFF-LINE DESIGN. MUST SOLVE LYAPUNOV EQUATION AT EACH STEP.
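The two Kleinman steps can be tried on a scalar plant, where the Lyapunov equation reduces to one line of arithmetic. This is a minimal sketch, not code from the talk: the function names and the numbers `a = b = q = r = 1`, `k0 = 3` are illustrative assumptions.

```python
import math


def kleinman_scalar(a, b, q, r, k0, iterations=20):
    """Kleinman policy iteration for the scalar plant x' = a*x + b*u, u = -k*x.

    Hypothetical helper for illustration; note that it needs both a and b.
    """
    if a - b * k0 >= 0:
        raise ValueError("initial gain k0 must be stabilizing (a - b*k0 < 0)")
    k = k0
    history = []
    for _ in range(iterations):
        a_c = a - b * k  # closed-loop pole A_j = A - B*K_j
        # Step 1, policy evaluation: scalar Lyapunov eq. 0 = 2*a_c*p + q + r*k^2
        p = (q + r * k * k) / (-2.0 * a_c)
        # Step 2, policy improvement: K_{j+1} = R^{-1} B^T P_j
        k = b * p / r
        history.append(p)
    return p, k, history


def are_scalar(a, b, q, r):
    """Positive root of the scalar ARE 0 = 2*a*p + q - (b*p)^2 / r."""
    return r * (a + math.sqrt(a * a + b * b * q / r)) / (b * b)


p, k, history = kleinman_scalar(a=1.0, b=1.0, q=1.0, r=1.0, k0=3.0)
print(history[:4], are_scalar(1.0, 1.0, 1.0, 1.0))
```

In this example the sequence of `p` values decreases monotonically toward the ARE root, and every intermediate gain is stabilizing, matching the two properties stated on the slide.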

Integral Reinforcement Learning

Work of Draguna Vrabie

System: $\dot x = f(x) + g(x)u$

Policy iteration requires repeated solution of the CT Bellman equation

$0 = \dot V + r(x,u(x)) = \left(\frac{\partial V}{\partial x}\right)^T\dot x + r(x,u(x)) = \left(\frac{\partial V}{\partial x}\right)^Tf(x,u(x)) + Q(x) + u^TRu \equiv H(x, \frac{\partial V}{\partial x}, u(x))$

This can be done online without knowing f(x), using measurements of x(t), u(t) along the system trajectories.

Can avoid knowledge of drift term f(x)

D. Vrabie, O. Pastravanu, M. Abu-Khalaf, and F. L. Lewis, “Adaptive optimal control for continuous-time linear systems based on policy iteration,” Automatica, vol. 45, pp. 477-484, 2009.

Work of Draguna Vrabie 2009: Integral Reinforcement Learning

Lemma 1 – Draguna Vrabie

The integral reinforcement form (IRL) of the CT Bellman eq. (Good Bellman Equation)

$V(x(t)) = \int_t^{t+T}r(x,u)\,d\tau + V(x(t+T))$, $V(0) = 0$

is equivalent to (Bad Bellman Equation)

$0 = \left(\frac{\partial V}{\partial x}\right)^Tf(x,u) + r(x,u) \equiv H(x, \frac{\partial V}{\partial x}, u)$, $V(0) = 0$

Solves Bellman equation without knowing f(x,u)

Key Idea: $V(x(t)) = \int_t^\infty r(x,u)\,d\tau = \int_t^{t+T}r(x,u)\,d\tau + \int_{t+T}^\infty r(x,u)\,d\tau$, where the last integral is the value $V(x(t+T))$

Allows definition of temporal difference error for CT systems:

$e(t) = -V(x(t)) + \int_t^{t+T}r(x,u)\,d\tau + V(x(t+T))$

Lemma 1 - D. Vrabie - LQR case

Integral Reinforcement Learning form:

$x^T(t)Px(t) = \int_t^{t+T}x^T(Q + L^TRL)x\,d\tau + x^T(t+T)Px(t+T)$

is equivalent to the Lyapunov equation

$A_c^TP + PA_c + L^TRL + Q = 0$, $A_c = A - BL$

Proof:

$\frac{d(x^TPx)}{dt} = x^T(A_c^TP + PA_c)x = -x^T(L^TRL + Q)x$

$\int_t^{t+T}x^T(Q + L^TRL)x\,d\tau = -\int_t^{t+T}d(x^TPx) = x^T(t)Px(t) - x^T(t+T)Px(t+T)$

Solves Lyapunov equation without knowing A or B

Integral Reinforcement Learning (IRL) - Draguna Vrabie

IRL Bellman equation: $V(x(t)) = \int_t^{t+T}r(x,u)\,d\tau + V(x(t+T))$, $V(0) = 0$

LQR Case: Value function $V(x(t)) = x^T(t)Px(t)$

IRL Policy iteration

Initial stabilizing control is needed

Policy evaluation - IRL Bellman Equation (cost update):

$V_k(x(t)) = \int_t^{t+T}r(x,u_k)\,d\tau + V_k(x(t+T))$

f(x) and g(x) do not appear. Equivalent to the CT Bellman eq.

$0 = \left(\frac{\partial V}{\partial x}\right)^Tf(x,u) + r(x,u) \equiv H(x, \frac{\partial V}{\partial x}, u)$

Solves Bellman eq. (nonlinear Lyapunov eq.) without knowing system dynamics

Policy improvement (control gain update):

$u_{k+1} = h_{k+1}(x) = -\frac12R^{-1}g^T(x)\frac{\partial V_k}{\partial x}$

g(x) needed for control update

Converges to solution to HJB eq.: $0 = \left(\frac{dV^*}{dx}\right)^Tf + Q(x) - \frac14\left(\frac{dV^*}{dx}\right)^TgR^{-1}g^T\frac{dV^*}{dx}$

D. Vrabie proved convergence to the optimal value and control. Automatica 2009, Neural Networks 2009

Nonlinear Case - Approximate Dynamic Programming

Direct Optimal Adaptive Control for Partially Unknown CT Systems

$V_k(x(t)) = \int_t^{t+T}(Q(x) + u_k^TRu_k)\,d\tau + V_k(x(t+T))$

Value Function Approximation (VFA) to Solve Bellman Equation – Paul Werbos (ADP), Dimitri Bertsekas (NDP)

Approximate value by Weierstrass Approximator Network $V = W^T\phi(x)$:

$W_k^T\phi(x(t)) = \int_t^{t+T}(Q(x) + u_k^TRu_k)\,d\tau + W_k^T\phi(x(t+T))$

$W_k^T\left[\phi(x(t)) - \phi(x(t+T))\right] = \int_t^{t+T}(Q(x) + u_k^TRu_k)\,d\tau$

The bracketed term on the left is the regression vector; the right-hand side is the reinforcement on time interval [t, t+T].

Scalar equation with vector unknowns. Same form as standard System ID problems in Adaptive Control.

Now use RLS along the trajectory to get new weights $W_k$

Then find updated FB:

$u_{k+1} = h_{k+1}(x) = -\frac12R^{-1}g^T(x)\frac{\partial V_k}{\partial x} = -\frac12R^{-1}g^T(x)\left[\frac{\partial\phi(x(t))}{\partial x(t)}\right]^TW_k$

Optimal Control and Adaptive Control come together on this slide, because of RL.

Solving the IRL Bellman Equation

LQR case: $V(x(t)) = x^T(t)Px(t)$, with $P = \begin{bmatrix}p_{11} & p_{12}\\ p_{12} & p_{22}\end{bmatrix}$ and $W^T = [p_{11}\ \ p_{12}\ \ p_{22}]$

Solve for value function parameters. Need data from 3 time intervals to get 3 equations to solve for 3 unknowns:

$W_k^T\Delta\phi(x(t)) \equiv W_k^T\left[\phi(x(t)) - \phi(x(t+T))\right] = \int_t^{t+T}(Q(x) + u_k^TRu_k)\,d\tau \equiv \rho(t)$

$W_k^T\Delta\phi(x(t+T)) \equiv W_k^T\left[\phi(x(t+T)) - \phi(x(t+2T))\right] = \int_{t+T}^{t+2T}(Q(x) + u_k^TRu_k)\,d\tau \equiv \rho(t+T)$

$W_k^T\Delta\phi(x(t+2T)) \equiv W_k^T\left[\phi(x(t+2T)) - \phi(x(t+3T))\right] = \int_{t+2T}^{t+3T}(Q(x) + u_k^TRu_k)\,d\tau \equiv \rho(t+2T)$

Put together:

$W_k^T\left[\Delta\phi(x(t))\ \ \Delta\phi(x(t+T))\ \ \Delta\phi(x(t+2T))\right] = \left[\rho(t)\ \ \rho(t+T)\ \ \rho(t+2T)\right]$

Now solve by Batch least-squares

Or can use Recursive Least-Squares (RLS)
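The three-equations-for-three-unknowns step can be sketched for a second-order LQR problem. Everything concrete here is an illustrative assumption rather than material from the talk: the plant `A = [[0, 1], [-2, -3]]`, `B = [0, 1]`, the policy gain `K = [1, 1]`, `Q = I`, `R = 1`, and all function names. The plant is used only to generate data with an RK4 simulation; the solve itself sees only `x(t)`, `x(t+T)` and the measured cost integral.

```python
def rk4_step(f, s, h):
    """One classical Runge-Kutta step for s' = f(s)."""
    k1 = f(s)
    k2 = f([si + 0.5 * h * ki for si, ki in zip(s, k1)])
    k3 = f([si + 0.5 * h * ki for si, ki in zip(s, k2)])
    k4 = f([si + h * ki for si, ki in zip(s, k3)])
    return [si + h / 6.0 * (a + 2 * b + 2 * c + d)
            for si, a, b, c, d in zip(s, k1, k2, k3, k4)]


def closed_loop(s):
    """Augmented dynamics [x1, x2, rho] of the hypothetical plant under u = -K x."""
    x1, x2, _ = s
    u = -(1.0 * x1 + 1.0 * x2)         # current policy, K = [1, 1]
    dx1 = x2                            # plant row 1 of A = [[0, 1], [-2, -3]]
    dx2 = -2.0 * x1 - 3.0 * x2 + u      # plant row 2, B = [0, 1]
    drho = x1 * x1 + x2 * x2 + u * u    # x'Qx + u'Ru with Q = I, R = 1
    return [dx1, dx2, drho]


def phi(x1, x2):
    """Quadratic basis chosen so that W = [p11, p12, p22] gives W'phi = x'Px."""
    return [x1 * x1, 2.0 * x1 * x2, x2 * x2]


def solve_linear(M, y):
    """Gaussian elimination with partial pivoting for a small square system."""
    n = len(y)
    aug = [row[:] + [yi] for row, yi in zip(M, y)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - tail) / aug[i][i]
    return x


def evaluate_policy_from_data(x0=(1.0, 0.0), T=0.5, dt=5e-4, intervals=3):
    """Collect (delta-phi, rho) on consecutive intervals and solve for W."""
    steps = int(round(T / dt))
    state = [x0[0], x0[1], 0.0]
    rows, rhos = [], []
    for _ in range(intervals):
        phi_start = phi(state[0], state[1])
        state[2] = 0.0                  # reset the integral reinforcement
        for _ in range(steps):
            state = rk4_step(closed_loop, state, dt)
        phi_end = phi(state[0], state[1])
        rows.append([a - b for a, b in zip(phi_start, phi_end)])
        rhos.append(state[2])
    return solve_linear(rows, rhos)     # W = [p11, p12, p22]


print(evaluate_policy_from_data())
```

For this assumed plant and gain, the Lyapunov equation can be solved by hand and gives `p11 = 4/3`, `p12 = p22 = 1/3`, so the printed weights should be close to those values. With exactly three intervals the system is square; with more intervals the same rows would feed a least-squares solve instead.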

Integral Reinforcement Learning (IRL)

Data set at time [t,t+T): $x(t),\ \rho(t,t+T),\ x(t+T)$

• Interval (t, t+T): observe x(t), apply $u_k = L_kx$, observe cost integral $\rho(t,t+T)$, update P
• Interval (t+T, t+2T): observe x(t+T), apply $u_k = L_kx$, observe cost integral $\rho(t+T,t+2T)$, update P
• Interval (t+2T, t+3T): observe x(t+2T), apply $u_k = L_kx$, observe cost integral $\rho(t+2T,t+3T)$, update P

Do RLS until convergence to $P_k$, or use batch least-squares

Then update control gain: $K_{k+1} = R^{-1}B^TP_k$

Solve Bellman Equation - Solves Lyapunov eq. without knowing dynamics:

$W_k^T\left[\phi(x(t)) - \phi(x(t+T))\right] = \int_t^{t+T}x^T(\tau)(Q + K_k^TRK_k)x(\tau)\,d\tau = \rho(t,t+T)$

This is a data-based approach that uses measurements of x(t), u(t) instead of the plant dynamical model.
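The observe/apply/update cycle above can be sketched end to end for a scalar plant. This is a hedged illustration, not the speaker's implementation: `A_TRUE`, `B`, `Q`, `R`, `run_interval` and `irl_policy_iteration` are made-up names and numbers. The function `run_interval` stands in for the physical plant plus the cost-integral measurement; the learner never reads `A_TRUE` and uses only `B`, as on the slides.

```python
import math

A_TRUE, B = 1.0, 1.0   # hypothetical plant x' = a*x + b*u; a is hidden from the learner
Q, R = 1.0, 1.0        # user-chosen cost weights


def run_interval(x_start, k, T):
    """Stand-in for the real plant: apply u = -k*x on [t, t+T].

    Returns the measured x(t+T) and the observed cost integral rho(t, t+T).
    Uses the closed-form scalar solution; assumes the gain k is stabilizing.
    """
    a_c = A_TRUE - B * k
    x_end = x_start * math.exp(a_c * T)
    rho = (Q + R * k * k) * x_start ** 2 * math.expm1(2.0 * a_c * T) / (2.0 * a_c)
    return x_end, rho


def irl_policy_iteration(k0, x0=1.0, T=0.1, iterations=10):
    """IRL policy iteration using only measured data and the input gain B."""
    k, x = k0, x0
    p = None
    for _ in range(iterations):
        x_next, rho = run_interval(x, k, T)
        # IRL Bellman equation p*x(t)^2 = rho + p*x(t+T)^2 has one unknown, p
        p = rho / (x * x - x_next * x_next)
        k = B * p / R          # gain update K_{k+1} = R^{-1} B^T P_k
        x = x_next
    return p, k


p, k = irl_policy_iteration(k0=3.0)
print(p, 1.0 + math.sqrt(2.0))
```

With one unknown, a single interval per policy is enough here; for an n-state system, n(n+1)/2 intervals (or more, with least squares) would be needed before each gain update. For these assumed numbers the ARE root is `1 + sqrt(2)`, which the learned `p` should approach.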

A is not needed anywhere

Continuous-time control with discrete gain updates: $u_k(t) = -K_kx(t)$

[Figure: gain $K_k$ (policy) versus time t, piecewise constant, updated at k = 0, 1, 2, 3, 4, 5; the control is applied continuously between gain updates; interval T can vary]

Reinforcement Intervals T need not be the same. They can be selected on-line in real time.

Persistence of Excitation

Regression vector must be PE

Relates to choice of reinforcement interval T

Implementation

Policy evaluation. Need to solve online:

$W_k^T\left[\phi(x(t)) - \phi(x(t+T))\right] = \int_t^{t+T}x^T(\tau)(Q + K_k^TRK_k)x(\tau)\,d\tau = \rho(t,t+T)$

Add a new state = Integral Reinforcement: $\dot\rho = x^TQx + u^TRu$

This is the controller dynamics or memory

Direct Optimal Adaptive Controller (Draguna Vrabie)

A hybrid continuous/discrete dynamic controller whose internal state is the observed cost over the interval

CT Actor-Critic Structure

[Figure: System $\dot x = Ax + Bu$ with state x and input u; Dynamic Control System w/ MEMORY $\dot\rho = x^TQx + u^TRu$, sampled through a ZOH with period T; Critic producing V; Actor gain K]

Critic: Run RLS or use batch L.S. to identify value of current control

Actor: Update FB gain after Critic has converged

Reinforcement interval T can be selected on line on the fly – can change

Solves Riccati Equation Online without knowing A matrix
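The "controller memory" idea can be sketched as a small object that integrates the cost rate between samples and is read out and reset every reinforcement interval. This is an illustrative scalar sketch, not the controller from the talk: the class name, the trapezoidal integration rule and the test signal `x(t) = exp(-t)`, `u = 0` are all assumptions.

```python
import math


class IntegralReinforcement:
    """Extra controller state rho with rho' = q*x^2 + r*u^2 (scalar sketch)."""

    def __init__(self, q, r):
        self.q, self.r = q, r
        self.rho = 0.0
        self._last_rate = None

    def update(self, x, u, dt):
        """Feed one measurement; integrates the cost rate by the trapezoidal rule."""
        rate = self.q * x * x + self.r * u * u
        if self._last_rate is not None:
            self.rho += 0.5 * (rate + self._last_rate) * dt
        self._last_rate = rate

    def sample_and_reset(self):
        """Read rho at the end of a reinforcement interval and restart at zero."""
        value, self.rho = self.rho, 0.0
        return value


# Usage on a known signal: x(t) = exp(-t), u = 0, q = 1 over [0, 1].
memory = IntegralReinforcement(q=1.0, r=1.0)
dt, n = 1e-3, 1000
for i in range(n + 1):
    memory.update(math.exp(-i * dt), 0.0, dt)
rho = memory.sample_and_reset()
print(rho, (1.0 - math.exp(-2.0)) / 2.0)
```

The readout plays the role of $\rho(t,t+T)$ in the policy evaluation equation; because the object is reset at each sample, the interval length can change from one readout to the next.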

Optimal Adaptive IRL for CT systems (D. Vrabie, 2009)

A new structure of adaptive controllers

Actor / Critic structure for CT Systems

Critic - Reinforcement learning, theta waves 4-8 Hz: $V_k(x(t)) = \int_t^{t+T}r(x,u_k)\,d\tau + V_k(x(t+T))$

Actor - Motor control 200 Hz: $u_{k+1} = h_{k+1}(x) = -\frac12R^{-1}g^T(x)\frac{\partial V_k}{\partial x}$

The Bottom Line about Integral Reinforcement Learning

The Optimal Control Solution: $u = -R^{-1}B^TPx = -Kx$

Off-line ARE Solution

Algebraic Riccati equation: $0 = PA + A^TP + Q - PBR^{-1}B^TP$

Full system dynamics must be known. Off-line solution.

Data-driven On-line ARE Solution

ARE solution can be found online in real-time by using the IRL Algorithm without knowing A matrix

Iterate for k = 0, 1, 2, ...

CT Bellman eq.: $x^T(t)P_kx(t) = \int_t^{t+T}x^T(\tau)(Q + K_k^TRK_k)x(\tau)\,d\tau + x^T(t+T)P_kx(t+T)$

$K_{k+1} = R^{-1}B^TP_k$ (Only B is needed)

$u_{k+1}(t) = -K_{k+1}x(t)$

On line solution in real time. Uses data measurements along system trajectory.

Data set at time [t,t+T): $x(t),\ \rho(t,t+T),\ x(t+T)$

Optimal Control - The Linear Quadratic Regulator (LQR)

User prescribed optimization criterion $J(Q,R)$

Off-line Design Loop: $0 = PA + A^TP + Q - PBR^{-1}B^TP$, $K = R^{-1}B^TP$

On-line Control Loop: System $\dot x = Ax + Bu$, Control $u = -Kx$

An Offline design Procedure that requires Knowledge of system dynamics model (A,B)

System modeling is expensive, time consuming, and inaccurate

Data-driven Online Adaptive Optimal Control (DDO)

User prescribed optimization criterion $J(Q,R)$

On-line Performance Loop, in place of the off-line design equations $0 = PA + A^TP + Q - PBR^{-1}B^TP$, $K = R^{-1}B^TP$:

$x^T(t)P_kx(t) = \int_t^{t+T}x^T(\tau)(Q + K_k^TRK_k)x(\tau)\,d\tau + x^T(t+T)P_kx(t+T)$, $K_{k+1} = R^{-1}B^TP_k$

Data set at time [t,t+T): $x(t),\ \rho(t,t+T),\ x(t+T)$

On-line Control Loop: System $\dot x = Ax + Bu$, Control $u = -Kx$

An Online Supervisory Control Procedure that requires no Knowledge of system dynamics model A

Automatically tunes the control gains in real time to optimize a user given cost function. Uses measured data (u(t),x(t)) along system trajectories.

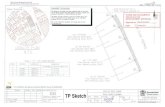

Simulation 1 - F-16 aircraft pitch rate controller (Stevens and Lewis 2003)

$\dot x = \begin{bmatrix}-1.01887 & 0.90506 & -0.00215\\ 0.82225 & -1.07741 & -0.17555\\ 0 & 0 & -1\end{bmatrix}x + \begin{bmatrix}0\\ 0\\ 1\end{bmatrix}u$, $x = [\alpha\ \ q\ \ \delta_e]^T$

$Q = I,\ R = I$

Select quadratic NN basis set for VFA

Exact solution of the ARE $0 = PA + A^TP + Q - PBR^{-1}B^TP$:

$W_1^{*T} = [p_{11}\ \ 2p_{12}\ \ 2p_{13}\ \ p_{22}\ \ 2p_{23}\ \ p_{33}] = [1.4245\ \ 1.1682\ \ -0.1352\ \ 1.4349\ \ -0.1501\ \ 0.4329]$

Simulations on: F-16 autopilot

[Figure: control signal, controller parameters, and system states versus time (s)]

[Figure: critic parameters P(1,1), P(1,2), P(2,2) versus time (s), plotted against their optimal values]

A matrix not needed

Converge to SS Riccati equation soln

Solves ARE online without knowing A

Simulation 2: Load Frequency Control of Electric Power system

A. Al-Tamimi, D. Vrabie, Youyi Wang

$\dot x = Ax + Bu$, $x(t) = [\Delta f(t)\ \ \Delta P_g(t)\ \ \Delta X_g(t)\ \ \Delta E(t)]^T$: Frequency, Generator output, Governor position, Integral control

$A = \begin{bmatrix}-1/T_p & K_p/T_p & 0 & 0\\ 0 & -1/T_T & 1/T_T & 0\\ -1/(RT_G) & 0 & -1/T_G & -1/T_G\\ K_E & 0 & 0 & 0\end{bmatrix}$, $B = \begin{bmatrix}0\\ 0\\ 1/T_G\\ 0\end{bmatrix}$

ARE solution using full dynamics model (A,B), $0 = PA + A^TP + Q - PBR^{-1}B^TP$:

$P_{ARE} = \begin{bmatrix}0.4750 & 0.4766 & 0.0601 & 0.4751\\ 0.4766 & 0.7831 & 0.1237 & 0.3829\\ 0.0601 & 0.1237 & 0.0513 & 0.0298\\ 0.4751 & 0.3829 & 0.0298 & 2.3370\end{bmatrix}$

IRL period of T = 0.1 s. Fifteen data points $(x(t),\ x(t+T),\ \rho(t:t+T))$. Hence, the value estimate was updated every 1.5 s.

Solves ARE online without knowing A:

$P_{critic\ NN} = \begin{bmatrix}0.4802 & 0.4768 & 0.0603 & 0.4754\\ 0.4768 & 0.7887 & 0.1239 & 0.3834\\ 0.0603 & 0.1239 & 0.0567 & 0.0300\\ 0.4754 & 0.3843 & 0.0300 & 2.3433\end{bmatrix}$

[Figure: P matrix parameters P(1,1), P(1,3), P(2,4), P(4,4) versus time (s)]

[Figure: system states versus time (s)]

Optimal Control Design Allows a Lot of Design Freedom

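Optimal Control for Constrained Input Systems (Murad Abu-Khalaf)

Control constrained by saturation function tanh(p)

[Figure: tanh(p) versus p, saturating at 1 and -1]

Encode constraint into Value function:

$J = \int_0^\infty\left(Q(x) + 2\int_0^u(\tanh^{-1}(\mu))^TR\,d\mu\right)dt$

The control penalty is a quasi-norm: $\|u\|_q^2 = 2\int_0^u(\tanh^{-1}(\mu))^TR\,d\mu$

Weaker than a norm – homogeneity property is replaced by the weaker symmetry property $\|x\|_q = \|-x\|_q$

(Used by Lyshevsky for H2 control)

Then $u = -\tanh\left(\frac12R^{-1}g^T(x)\frac{\partial V}{\partial x}\right)$ is BOUNDED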
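The effect of the nonquadratic penalty can be checked numerically in the scalar case. The sketch below assumes a unit saturation level and a scalar weight `r`; the function names and the closed form used for the integral are illustrative and not taken from the talk. Setting the derivative of the Hamiltonian with respect to `u` to zero gives `2*r*atanh(u) + g*dV/dx = 0`, hence a control that cannot leave the interval [-1, 1].

```python
import math


def control_penalty(u, r=1.0):
    """Closed form of 2*r * integral_0^u atanh(v) dv, valid for |u| < 1."""
    return 2.0 * r * (u * math.atanh(u) + 0.5 * math.log(1.0 - u * u))


def control_penalty_numeric(u, r=1.0, n=20000):
    """Midpoint-rule approximation of the same integral, as a cross-check."""
    h = u / n
    return 2.0 * r * sum(math.atanh((i + 0.5) * h) for i in range(n)) * h


def bounded_control(g, dV_dx, r=1.0):
    """Stationarity 2*r*atanh(u) + g*dV_dx = 0 gives u = -tanh(g*dV_dx / (2*r))."""
    return -math.tanh(g * dV_dx / (2.0 * r))


print(control_penalty(0.9), control_penalty_numeric(0.9))
print([bounded_control(2.0, dv) for dv in (-1e3, -1.0, 0.0, 1.0, 1e3)])
```

The penalty grows without bound as `|u|` approaches 1, which is what keeps the minimizing control inside the saturation limits however large the value gradient becomes.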

Near Minimum-Time Control (Murad Abu-Khalaf)

Encode into Value Function:

$V = \int_0^\infty\left[\tanh(x^TQx) + 2\int_0^u(\tanh^{-1}(\mu))^TR\,d\mu\right]dt$

Do not need to find switching surface

[Figure: state evolution for both controllers in the (x1, x2) plane; switching surface method versus nonquadratic functionals method]

Issues with Nonlinear ADP: Selection of NN Training Set

LS local smooth solution for Critic NN update

Batch LS: integral over a region of state-space, approximated using a set of points

Recursive Least-Squares (RLS): take sample points along a single trajectory

[Figure: set of points over a region vs. points along a trajectory, each shown in (x1, x2) against time]

PE Versus Exploration

For Nonlinear systems, persistence of excitation is needed to solve for the weights, but EXPLORATION is needed to identify the complete value function

For Linear systems, these are the same

IRL Value Iteration - Draguna Vrabie

IRL Policy iteration: Initial stabilizing control is needed

Policy evaluation - IRL Bellman Equation (cost update), CT PI Bellman eq. = Lyapunov eq.:

$V_k(x(t)) = \int_t^{t+T}r(x,u_k)\,d\tau + V_k(x(t+T))$

Policy improvement (control gain update):

$u_{k+1} = h_{k+1}(x) = -\frac12R^{-1}g^T(x)\frac{\partial V_k}{\partial x}$

IRL Value iteration: Initial stabilizing control is NOT needed

Value evaluation - IRL Bellman Equation (cost update), CT VI Bellman eq.:

$V_{k+1}(x(t)) = \int_t^{t+T}r(x,u_k)\,d\tau + V_k(x(t+T))$

Policy improvement (control gain update):

$u_{k+1} = h_{k+1}(x) = -\frac12R^{-1}g^T(x)\frac{\partial V_{k+1}}{\partial x}$

Converges to solution to HJB eq.: $0 = \left(\frac{dV^*}{dx}\right)^Tf + Q(x) - \frac14\left(\frac{dV^*}{dx}\right)^TgR^{-1}g^T\frac{dV^*}{dx}$

Converges if T is small enough
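The difference between policy iteration and value iteration shows up clearly on an open-loop unstable scalar plant, where the zero policy is not stabilizing. The sketch below is an assumption-laden illustration, not the speaker's code: the plant numbers, the names `run_interval` and `irl_value_iteration`, and the choice to restart every interval from the same probe state are all made up for the example.

```python
import math

A_TRUE, B, Q, R = 1.0, 1.0, 1.0, 1.0   # hypothetical open-loop unstable plant


def run_interval(x_start, k, T):
    """Stand-in for the plant: apply u = -k*x on [t, t+T], return x(t+T) and rho."""
    a_c = A_TRUE - B * k
    x_end = x_start * math.exp(a_c * T)
    if a_c == 0.0:
        integral = T
    else:
        integral = math.expm1(2.0 * a_c * T) / (2.0 * a_c)
    rho = (Q + R * k * k) * x_start ** 2 * integral
    return x_end, rho


def irl_value_iteration(T=0.1, iterations=300, x_probe=1.0):
    """IRL value iteration with V(x) = p*x^2, started from V_0 = 0."""
    p = 0.0                                 # no stabilizing policy required
    for _ in range(iterations):
        k = B * p / R                       # policy obtained from the current value
        x_end, rho = run_interval(x_probe, k, T)
        # V_{k+1}(x(t)) = rho + V_k(x(t+T)): the old value appears on the right
        p = (rho + p * x_end ** 2) / x_probe ** 2
    return p


p = irl_value_iteration()
print(p, 1.0 + math.sqrt(2.0))
```

Unlike policy iteration, each step here is a simple assignment rather than an equation to solve, and the first policy (`k = 0`) leaves the plant unstable over its interval. For these assumed numbers the iterates increase toward the ARE root `1 + sqrt(2)`.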

Kung Tz (Confucius, 孔子), 500 BC

Archery, Chariot driving, Music, Rites and Rituals, Poetry, Mathematics

Man’s relations to: Family, Friends, Society, Nation, Emperor, Ancestors

Tian xia da tong: Harmony under heaven

Pythagoras, 500 BC: Natural Philosophy, Ethics, and Mathematics

Numbers and the harmony of the spheres

Music, Mathematics, Gymnastics, Astronomy, Medicine

Translate music to mathematical equations. Ratios in music.

Fire, air, water, earth

The School of Pythagoras: esoterikoi and exoterikoi

Mathematikoi - learners. Akoustiki - listeners.

Patterns in Nature. Serenity and Self-Possession.


Oscillation is a fundamental property of neural tissue

Brain has multiple adaptive clocks with different timescales

theta rhythm, Hippocampus, Thalamus, 4-10 Hz: sensory processing, memory and voluntary control of movement
• consolidation of memory
• spatial mapping of the environment – place cells

gamma rhythms 30-100 Hz, hippocampus and neocortex: high cognitive activity

The high frequency processing is due to the large amounts of sensorial data to be processed

[Figure: Limbic system, theta rhythms 4-10 Hz, deliberative evaluation; Spinal cord, motor control 200 Hz, control]

Doya, Kimura, Kawato 2001

D. Vrabie and F.L. Lewis, “Neural network approach to continuous-time direct adaptive optimal control for partially-unknown nonlinear systems,” Neural Networks, vol. 22, no. 3, pp. 237-246, Apr. 2009.

D. Vrabie, F.L. Lewis, D. Levine, “Neural Network-Based Adaptive Optimal Controller - A Continuous-Time Formulation,” Proc. Int. Conf. Intelligent Control, Shanghai, Sept. 2008.

Synchronous Real‐time Data‐driven Optimal Control


Synchronous Online Solution of Optimal Control for Nonlinear Systems (Kyriakos Vamvoudakis)

Policy Iteration gives the structure needed for online optimal solution

Take VFA as $V(x) = W_1^T\phi_1(x) + \varepsilon(x)$

Critic Network: $\hat V(x) = \hat W_1^T\phi_1(x)$

Action Network for Control Approximation: $u(x) = -\frac12R^{-1}g^T(x)\nabla\phi_1^T\hat W_2$

Then IRL Bellman eq

$V_k(x(t-T)) = \int_{t-T}^t(Q(x) + u_k^TRu_k)\,d\tau + V_k(x(t))$

becomes

$\hat W_1^T\phi_1(x(t-T)) = \int_{t-T}^t(Q(x) + u_k^TRu_k)\,d\tau + \hat W_1^T\phi_1(x(t))$

Define $\Delta\phi_1(x(t)) = \phi_1(x(t)) - \phi_1(x(t-T))$

Bellman eq becomes

$\Delta\phi_1(x(t))^T\hat W_1 + \int_{t-T}^t\left(Q(x) + \frac14\hat W_2^T\bar D_1\hat W_2\right)d\tau = 0$, with $\bar D_1 = \nabla\phi_1\,g\,R^{-1}g^T\nabla\phi_1^T$

Theorem (Vamvoudakis & Vrabie) - Online Learning of Nonlinear Optimal Control

Data-driven Online Synchronous Policy Iteration using IRL. Does not need to know f(x).

Data set at time [t,t+T): $x(t),\ \rho(t-T,t),\ x(t-T)$

Let $\Delta\phi_1(x(t)) = \phi_1(x(t)) - \phi_1(x(t-T))$ be PE.

Tune critic NN weights as (learning the value)

$\dot{\hat W}_1 = -a_1\frac{\Delta\phi_1(x(t))}{\left(1 + \Delta\phi_1(x(t))^T\Delta\phi_1(x(t))\right)^2}\left(\Delta\phi_1(x(t))^T\hat W_1 + \int_{t-T}^t\left(Q(x) + \frac14\hat W_2^T\bar D_1\hat W_2\right)d\tau\right)$

Tune actor NN weights as (learning the control policy)

$\dot{\hat W}_2 = -a_2\left(F_2\hat W_2 - F_1\Delta\phi_1(x(t))^T\hat W_1\right) + \frac14a_2\bar D_1(x)\hat W_2\frac{\Delta\phi_1(x(t))^T}{\left(1 + \Delta\phi_1(x(t))^T\Delta\phi_1(x(t))\right)^2}\hat W_1$

Then there exists an $N_0$ such that, for the number of hidden layer units $N > N_0$, the closed-loop system state, the critic NN error $\tilde W_1 = W_1 - \hat W_1$, and the actor NN error $\tilde W_2 = W_1 - \hat W_2$ are UUB (uniformly ultimately bounded).

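Lyapunov energy-based Proof (Vamvoudakis & Vrabie):

$L(t) = V(x) + \frac12\mathrm{tr}(\tilde W_1^Ta_1^{-1}\tilde W_1) + \frac12\mathrm{tr}(\tilde W_2^Ta_2^{-1}\tilde W_2)$

V(x) = Unknown solution to HJB eq.

$0 = \left(\frac{dV}{dx}\right)^Tf + Q(x) - \frac14\left(\frac{dV}{dx}\right)^TgR^{-1}g^T\frac{dV}{dx}$

$\tilde W_1 = W_1 - \hat W_1$, $\tilde W_2 = W_1 - \hat W_2$

W1 = Unknown LS solution to Bellman equation for given N

$H(x, W_1, u) = W_1^T\nabla\phi_1(f + gu) + Q(x) + u^TRu = \varepsilon_H$

Guarantees stability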

Synchronous Online Solution of Optimal Control for Nonlinear Systems

A new form of Adaptive Control with TWO tunable networks. A new structure of adaptive controllers.

Adaptive Critic structure, Reinforcement learning: Two Learning Networks, Tune them Simultaneously

$\dot{\hat W}_1 = -a_1\frac{\Delta\phi_1(x(t))}{\left(1 + \Delta\phi_1(x(t))^T\Delta\phi_1(x(t))\right)^2}\left(\Delta\phi_1(x(t))^T\hat W_1 + \int_{t-T}^t\left(Q(x) + \frac14\hat W_2^T\bar D_1\hat W_2\right)d\tau\right)$

$\dot{\hat W}_2 = -a_2\left(F_2\hat W_2 - F_1\Delta\phi_1(x(t))^T\hat W_1\right) + \frac14a_2\bar D_1(x)\hat W_2\frac{\Delta\phi_1(x(t))^T}{\left(1 + \Delta\phi_1(x(t))^T\Delta\phi_1(x(t))\right)^2}\hat W_1$

K.G. Vamvoudakis and F.L. Lewis, “Online actor-critic algorithm to solve the continuous-time infinite horizon optimal control problem,” Automatica, vol. 46, no. 5, pp. 878-888, May 2010.

The Adaptive Critic Architecture

[Figure: System, Action network, and Policy Evaluation (Critic network) fed by the cost]

Adaptive Critics:
• Value update - solve Bellman eq.: $V(x) = W_1^T\phi_1(x)$
• Control policy update: $u(x) = -\frac12R^{-1}g^T(x)\nabla\phi_1^T\hat W_2$

Critic and Actor tuned simultaneously. Leads to ONLINE FORWARD-IN-TIME implementation of optimal control.

Optimal Adaptive Control

A New Class of Adaptive Control: A New Adaptive Control Structure with Multiple Tuned Loops

[Figure: Plant with control input and output, surrounded by three tuned loops]
• Identify the Controller - Direct Adaptive
• Identify the system model - Indirect Adaptive
• Identify the performance value - Optimal Adaptive, $V(x) = W^T\phi(x)$

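Simulation 1 - F-16 aircraft pitch rate controller (Stevens and Lewis 2003)

$\dot x = \begin{bmatrix}-1.01887 & 0.90506 & -0.00215\\ 0.82225 & -1.07741 & -0.17555\\ 0 & 0 & -1\end{bmatrix}x + \begin{bmatrix}0\\ 0\\ 1\end{bmatrix}u$, $x = [\alpha\ \ q\ \ \delta_e]^T$

$Q = I,\ R = I$

Select quadratic NN basis set for VFA

Exact solution of the ARE $0 = PA + A^TP + Q - PBR^{-1}B^TP$:

$W_1^{*T} = [p_{11}\ \ 2p_{12}\ \ 2p_{13}\ \ p_{22}\ \ 2p_{23}\ \ p_{33}] = [1.4245\ \ 1.1682\ \ -0.1352\ \ 1.4349\ \ -0.1501\ \ 0.4329]$

Must add probing noise to get PE: $u(x) = -\frac12R^{-1}g^T(x)\nabla\phi_1^T\hat W_2 + n(t)$ (exponentially decay n(t))

Algorithm converges to

$\hat W_1(t_f) = [1.4279\ \ 1.1612\ \ -0.1366\ \ 1.4462\ \ -0.1480\ \ 0.4317]^T$

$\hat W_2(t_f) = [1.4279\ \ 1.1612\ \ -0.1366\ \ 1.4462\ \ -0.1480\ \ 0.4317]^T$

$\hat u_2(x) = -\frac12R^{-1}\begin{bmatrix}0\\ 0\\ 1\end{bmatrix}^T\begin{bmatrix}2x_1 & 0 & 0\\ x_2 & x_1 & 0\\ x_3 & 0 & x_1\\ 0 & 2x_2 & 0\\ 0 & x_3 & x_2\\ 0 & 0 & 2x_3\end{bmatrix}^T\begin{bmatrix}1.4279\\ 1.1612\\ -0.1366\\ 1.4462\\ -0.1480\\ 0.4317\end{bmatrix}$, which has the form $-R^{-1}B^TPx$

Solves ARE online

[Figure: critic NN parameters, which converge to the ARE solution]

[Figure: system states]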

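Simulation 2 - Nonlinear System

Converse optimal example, Nevistic V. and Primbs J. A. (1996)

$\dot x = f(x) + g(x)u,\ x \in R^2$

$f(x) = \begin{bmatrix}-x_1 + x_2\\ -0.5x_1 - 0.5x_2\left(1 - (\cos(2x_1) + 2)^2\right)\end{bmatrix}$, $g(x) = \begin{bmatrix}0\\ \cos(2x_1) + 2\end{bmatrix}$

$Q = I,\ R = I$

Optimal Value: $V^*(x) = \frac12x_1^2 + x_2^2$

Optimal control: $u^*(x) = -(\cos(2x_1) + 2)x_2$

Select VFA basis set: $\phi_1(x) = [x_1^2\ \ x_1x_2\ \ x_2^2]^T$

Algorithm converges to

$\hat W_1(t_f) = [0.5017\ \ -0.0020\ \ 1.0008]^T$

$\hat W_2(t_f) = [0.5017\ \ -0.0020\ \ 1.0008]^T$

$\hat u_2(x) = -\frac12R^{-1}\begin{bmatrix}0\\ \cos(2x_1) + 2\end{bmatrix}^T\begin{bmatrix}2x_1 & 0\\ x_2 & x_1\\ 0 & 2x_2\end{bmatrix}^T\begin{bmatrix}0.5017\\ -0.0020\\ 1.0008\end{bmatrix}$

Solves HJB equation online: $0 = \left(\frac{dV^*}{dx}\right)^Tf + Q(x) - \frac14\left(\frac{dV^*}{dx}\right)^TgR^{-1}g^T\frac{dV^*}{dx}$

[Figures: critic NN parameters; states; optimal value fn.; value fn. approx. error; control approx. error]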
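The stated optimal value for this example can be checked directly by substituting it into the HJB equation. The following is a small numerical sketch, with function names chosen for illustration only; it evaluates the HJB residual for $V^*(x) = \frac12x_1^2 + x_2^2$ at random points, using the f(x), g(x), Q = I and R = 1 given for Simulation 2.

```python
import math
import random


def f(x1, x2):
    """Drift term of the Simulation 2 system."""
    c = math.cos(2.0 * x1) + 2.0
    return (-x1 + x2, -0.5 * x1 - 0.5 * x2 * (1.0 - c * c))


def g(x1, x2):
    """Input gain of the Simulation 2 system."""
    return (0.0, math.cos(2.0 * x1) + 2.0)


def grad_v_star(x1, x2):
    """Gradient of V*(x) = 0.5*x1^2 + x2^2."""
    return (x1, 2.0 * x2)


def hjb_residual(x1, x2):
    """dV'f + Q(x) - 0.25 * (dV'g)^2 with Q(x) = x'x and R = 1."""
    dv, fx, gx = grad_v_star(x1, x2), f(x1, x2), g(x1, x2)
    dv_f = dv[0] * fx[0] + dv[1] * fx[1]
    dv_g = dv[0] * gx[0] + dv[1] * gx[1]
    return dv_f + (x1 * x1 + x2 * x2) - 0.25 * dv_g * dv_g


def u_star(x1, x2):
    """Stationary policy u = -0.5 * R^{-1} g' dV/dx."""
    dv, gx = grad_v_star(x1, x2), g(x1, x2)
    return -0.5 * (gx[0] * dv[0] + gx[1] * dv[1])


random.seed(0)
points = [(random.uniform(-2, 2), random.uniform(-2, 2)) for _ in range(1000)]
print(max(abs(hjb_residual(x1, x2)) for x1, x2 in points))
```

The residual should vanish up to floating-point rounding, and `u_star` reduces to $-(\cos(2x_1) + 2)x_2$, which agrees with the optimal control listed above. The learned weights `[0.5017, -0.0020, 1.0008]` are close to the exact coefficients `[0.5, 0, 1]` of $V^*$ in the chosen basis.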

New RL Structures Inspired by Experimental Neurocognitive Psychology

New actor-critic Reinforcement Learning loop

[Figure labels:
• Cerebral Cortex: OFC, ACC, DLPFC
• Limbic System: Amygdala (emotion, intuitive); Hippocampus (spatial maps & task context)
• Thalamus; Basal ganglia (dopamine neurons): Reinforcement Learning
• Cerebellum (direct and inverse models); inf. olive
• Brainstem; Spinal cord
• Interoceptive receptors; Exteroceptive receptors
• Muscle contraction and movement
• Timing pulses and reset: RL at theta rhythms 4-10 Hz; gamma rhythms 30-100 Hz; motor control 200 Hz]

There Appear to be Multiple Reinforcement Learning Loops in the Brain

Known work by Doya: Multiple Time scales

Work by Dan Levine and others: Fast skilled response using gist

Amygdala and OFC: Intuition and Limited data

1. Amygdala processes environmental cues using fast heuristics, emotional triggers, and past experience

2. OFC classifies data quickly into pre-learned categories of responses

3. Fast skilled response using gist and SATISFICING

ACC and DLPFC:

4. ACC brings in slower deliberative feedback if there is risk
• If there is mismatch or dissonance, ACC sends reset to OFC to form new category
• If there is risk or stress, ACC recruits DLPFC for deliberative decision & control based on real-time data. Perhaps optimality?

Hippocampus (spatial maps & task context) is invoked for detailed task knowledge

Thalamus, Basal ganglia (dopamine neurons): Basal ganglia select actual actions taken

[Figure labels: Long-term Memory (LTM), Short-term Memory (STM), Longer-term Memory, DLPFC Deliberative Decision]

Amygdala and OFC: fast skilled response based on stored memories, using gist and SATISFICING; intuition and limited data

ACC and DLPFC: deliberative decision & control based on more real-time data

The human brain operates with MULTIPLE REINFORCEMENT LOOPS

Dan Levine, Neural Dynamics of affect, gist, probability, and choice, Cognitive Sys Research, 2012

New Fast Decision Structure Using Shunting Inhibition and Multiple Actor-Critic Learning

New Value Function Approximation (VFA) Structures Can Encode Risk, Gist, and Emotional Salience

Standard Value Function approximation is based only on excitatory NN synapses: $\hat V(x) = \hat W^T\phi(x) = \sum_i\hat w_i\phi_i(x)$

Shunting Inhibition NN: $s_j = \dfrac{g_h(w_j^Tx + w_{j0}) + b_j}{a_j + f_h(c_j^Tx + c_{j0})}$

We use shunting inhibition - it is faster

No System Dynamics Model needed. No Model Identification needed.

Ruizhuo Song, F.L. Lewis, Qinglai Wei, and Huaguang Zhang, “Multiple Actor-Critic Structures for Continuous-Time Optimal Control Using Input-Output Data,” IEEE Transactions on Neural Networks and Learning Systems, vol. 26, no. 4, pp. 851-865, April 2015.

New Fast Decision Structure Using Adaptive Self-organizing Map and Multiple Actor-Critic Learning

New Value Function Approximation (VFA) Structures Can Encode Risk, Gist, and Emotional Salience

1. Adaptive Self-Organizing Map (ASOM)

2. New Actor-Critic ADP structure

Standard NN approximation is based on excitatory NN synapses: $\hat V(x) = \hat W^T\phi(x) = \sum_i\hat w_i\phi_i(x)$

Our New VFA structure is $V(X(k)) = \sum_{j=1}^JI_j(k)\sum_{i=1}^Lw_{ij}\phi_{ij}(X(k))$

Indicator function $I_j(k)$ depends on ASOM Classification

No System Dynamics Model needed. No Model Identification needed.

B. Kiumarsi, F.L. Lewis, and D. Levine, “Optimal Control of Nonlinear Discrete Time-varying Systems Using a New Neural Network Approximation Structure,” Neurocomputing, vol. 156, pp. 157-165, May 2015.

Data-driven Online Solution of Differential Games

Synchronous Solution of Multi-player Non Zero-sum Games

Sun Tz bin fa (孙子兵法, The Art of War), 500 BC

Multi-player Differential Games

Manufacturing as the Interactions of Multiple Agents
• Each machine has its own dynamics and cost function
• Neighboring machines influence each other most strongly
• There are local optimization requirements as well as global necessities

Each process has its own dynamics:

$\dot\delta_i = A\delta_i + (d_i + g_i)B_iu_i - \sum_{j\in N_i}e_{ij}B_ju_j$

And cost function:

$J_i(\delta_i(0), u_i, u_{-i}) = \frac12\int_0^\infty\left(\delta_i^TQ_{ii}\delta_i + u_i^TR_{ii}u_i + \sum_{j\in N_i}u_j^TR_{ij}u_j\right)dt$

Each process helps other processes achieve optimality and efficiency

Real-Time Solution of Multi-Player NZS Games

Kyriakos Vamvoudakis, Automatica 2011

Multi-Player Nonlinear Systems. Continuous-time, N players:

$\dot x = f(x) + \sum_{j=1}^Ng_j(x)u_j$

Optimal control:

$V_i^*(x(0), \mu_1, \mu_2, \ldots, \mu_N) = \min_{\mu_i}\int_0^\infty\left(Q_i(x) + \sum_{j=1}^N\mu_j^TR_{ij}\mu_j\right)dt;\ i \in N$

Nash equilibrium:

$V_i^* \triangleq V_i(\mu_1^*, \mu_2^*, \ldots, \mu_i^*, \ldots, \mu_N^*) \le V_i(\mu_1^*, \mu_2^*, \ldots, \mu_i, \ldots, \mu_N^*),\ i \in N$

Control policies:

$\mu_i(x) = -\frac12R_{ii}^{-1}g_i^T(x)\nabla V_i,\ i \in N$

Requires Offline solution of coupled Hamilton-Jacobi-Bellman eqs.

$0 = (\nabla V_i)^T\left(f(x) - \frac12\sum_{j=1}^Ng_j(x)R_{jj}^{-1}g_j^T(x)\nabla V_j\right) + Q_i(x) + \frac14\sum_{j=1}^N\nabla V_j^Tg_j(x)R_{jj}^{-T}R_{ij}R_{jj}^{-1}g_j^T(x)\nabla V_j,\ V_i(0) = 0$

Linear Quadratic Regulator Case - coupled AREs

$0 = P_iA_c + A_c^TP_i + Q_i + \sum_{j=1}^NP_jB_jR_{jj}^{-T}R_{ij}R_{jj}^{-1}B_j^TP_j,\ i \in N$, where $A_c = A - \sum_{j=1}^NB_jR_{jj}^{-1}B_j^TP_j$

These are hard to solve. In the nonlinear case, HJB generally cannot be solved.

Real‐Time Solution of Multi‐Player Games

Non‐Zero Sum Games – Synchronous Policy Iteration (Kyriakos Vamvoudakis)

Value functions

$$V_i(x(0), \mu_1, \ldots, \mu_N) = \int_0^\infty \Big( Q_i(x) + \sum_{j=1}^{N} \mu_j^T R_{ij}\, \mu_j \Big)\, dt; \quad i \in N$$

Leibniz gives the differential equivalent, the coupled Bellman eqs.

$$0 = Q_i(x) + \sum_{j=1}^{N} u_j^T R_{ij} u_j + (\nabla V_i)^T \Big( f(x) + \sum_{j=1}^{N} g_j(x) u_j \Big) \equiv H_i(x, \nabla V_i, u_1, \ldots, u_N), \quad i \in N$$

Policy Iteration Solution:

Solve Bellman eq.

$$0 = r_i(x, \mu_1^k, \ldots, \mu_N^k) + (\nabla V_i^k)^T \Big( f(x) + \sum_{j=1}^{N} g_j(x) \mu_j^k \Big), \quad V_i^k(0) = 0, \quad i \in N$$

Policy Update

$$\mu_i^{k+1}(x) = -\frac{1}{2} R_{ii}^{-1} g_i^T(x) \nabla V_i^k, \quad i \in N$$

Convergence has not been proven.
The Hamiltonian equation is hard to solve.
But this gives the structure we need for the online Synchronous PI Solution.
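The policy iteration above can be sketched for the linear quadratic case, where $V_i = x^T P_i x$ and each policy is $\mu_i = -K_i x$ with $K_i = R_{ii}^{-1} B_i^T P_i$. This is only an illustrative sketch: the function names `lyap` and `nzs_policy_iteration`, the scalar two‐player example, and the zero initial gains (which assume a stable `A`) are assumptions, not part of the talk. As the slide notes, convergence is not guaranteed in general; it happens to converge for this example.

```python
import numpy as np

def lyap(Ac, M):
    """Solve Ac^T P + P Ac + M = 0 by vectorization (column-major vec)."""
    n = Ac.shape[0]
    I = np.eye(n)
    lhs = np.kron(I, Ac.T) + np.kron(Ac.T, I)
    vecP = np.linalg.solve(lhs, -M.reshape(-1, order="F"))
    P = vecP.reshape(n, n, order="F")
    return 0.5 * (P + P.T)

def nzs_policy_iteration(A, B, Q, R, iters=50):
    """Policy iteration for an N-player LQ non-zero-sum game (hypothetical helper)."""
    N, n = len(B), A.shape[0]
    K = [np.zeros((Bj.shape[1], n)) for Bj in B]   # zero gains: assumes A is stable
    for _ in range(iters):
        Ac = A - sum(B[j] @ K[j] for j in range(N))
        # Policy evaluation: one Lyapunov (Bellman) equation per player
        P = [lyap(Ac, Q[i] + sum(K[j].T @ R[i][j] @ K[j] for j in range(N)))
             for i in range(N)]
        # Policy update: K_i = R_ii^{-1} B_i^T P_i
        K = [np.linalg.solve(R[i][i], B[i].T @ P[i]) for i in range(N)]
    return P, K

# Scalar two-player example (made-up numbers)
one = np.eye(1)
A = -one
B = [one, one]
Q = [one, one]
R = [[one, 0.5 * one], [0.5 * one, one]]
P, K = nzs_policy_iteration(A, B, Q, R)
```

For this symmetric scalar example the coupled AREs reduce to $2.5p^2 + 2p - 1 = 0$, so both `P[0]` and `P[1]` should approach $(\sqrt{14} - 2)/5$.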

Real‐Time Solution of Multi‐Player Games

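N Critic Neural Networks for VFA

$$\hat{V}_1(x) = \hat{W}_1^T \phi_1(x), \qquad \hat{V}_2(x) = \hat{W}_2^T \phi_2(x)$$

$$\dot{\hat{W}}_1 = -a_1 \frac{\sigma_1}{(\sigma_1^T \sigma_1 + 1)^2} \left[ \sigma_1^T \hat{W}_1 + Q_1(x) + u_1^T R_{11} u_1 + u_2^T R_{12} u_2 \right]$$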

13 3 2 3 1 3 1 1 11 21 11 1 3 2 2 1 3 1 1

1 1ˆ ˆ ˆ ˆ ˆ ˆ ˆ{( ) ( ) ( ) ( ) }4 4

T T T T T TW F W F W g x R R R g x W m W D x W m W

N Actor Neural Networks

On‐Line Learning – for Player 1:

$$u_1(x) = -\frac{1}{2} R_{11}^{-1} g_1^T(x) \nabla\phi_1^T \hat{W}_3$$

Online Synchronous PI Solution for Multi‐Player Games

Learns Bellman eq. solution

Kyriakos Vamvoudakis

$$u_2(x) = -\frac{1}{2} R_{22}^{-1} g_2^T(x) \nabla\phi_2^T \hat{W}_4$$

2-player case

Each player needs 2 NN – a Critic and an Actor

Learns control policy

Player 1 Player 2

Convergence is proven using Lyapunov functions

K.G. Vamvoudakis and F.L. Lewis, “Multi-Player Non-Zero Sum Games: Online Adaptive Learning Solution of Coupled Hamilton-Jacobi Equations,” Automatica, vol. 47, pp. 1556-1569, 2011.

$$L(t) = V(x) + \frac{1}{2} \mathrm{tr}\big( \tilde{W}_1^T a_1^{-1} \tilde{W}_1 \big) + \frac{1}{2} \mathrm{tr}\big( \tilde{W}_2^T a_2^{-1} \tilde{W}_2 \big)$$

Lyapunov energy‐based Proof:

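$$0 = \Big( \frac{dV}{dx} \Big)^T f + Q(x) - \frac{1}{4} \Big( \frac{dV}{dx} \Big)^T g R^{-1} g^T \frac{dV}{dx}$$

V(x) = unknown solution to the HJB eq.

$$\tilde{W}_1 = W_1 - \hat{W}_1, \qquad \tilde{W}_2 = W_1 - \hat{W}_2$$

$$H(x, W_1, u) = W_1^T \nabla\phi_1 (f + g u) + Q(x) + u^T R u = \varepsilon_H$$

W1 = unknown LS solution to the Bellman equation for given N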

Guarantees stability

Multi‐Agent Learning Guaranteed Convergence Proof

Process i: cost function optimization
Process j1: control update
Process i: control update
Process j2: control update

Optimal performance of each process depends on the control of its neighbor processes.

Control policy of each process depends on the performance of its neighbor processes.

Process i: cost function optimization
Process i: control update
Process j2: cost function optimization
Process j1: cost function optimization

Multi-player Games for Multi-Process Optimal Control
Data-Driven Optimization (DDO)

Sun Tzu, The Art of War (Sunzi Bingfa, 孙子兵法)

Games on Communication Graphs

500 BC

F.L. Lewis, H. Zhang, A. Das, K. Hengster-Movric, Cooperative Control of Multi-Agent Systems: Optimal Design and Adaptive Control, Springer-Verlag, 2013

Key Point

Lyapunov functions and performance indices must depend on graph topology.

Hongwei Zhang, F.L. Lewis, and Abhijit Das, “Optimal design for synchronization of cooperative systems: state feedback, observer and output feedback,” IEEE Trans. Automatic Control, vol. 56, no. 8, pp. 1948-1952, August 2011.

H. Zhang, F.L. Lewis, and Z. Qu, "Lyapunov, Adaptive, and Optimal Design Techniques for Cooperative Systems on Directed Communication Graphs," IEEE Trans. Industrial Electronics, vol. 59, no. 7, pp. 3026‐3041, July 2012.

Graphical Games
Synchronization: Cooperative Tracker Problem

Node dynamics

$$\dot{x}_i = A x_i + B_i u_i, \qquad x_i(t) \in \mathbb{R}^n, \quad u_i(t) \in \mathbb{R}^{m_i}$$

Target generator dynamics

$$\dot{x}_0 = A x_0$$

Synchronization problem

$$x_i(t) \to x_0(t), \quad \forall i$$

Local neighborhood tracking error (Lihua Xie)

$$\delta_i = \sum_{j \in N_i} e_{ij} (x_i - x_j) + g_i (x_i - x_0)$$

Local nbhd. tracking error dynamics (local agent dynamics driven by neighbors’ controls)

$$\dot{\delta}_i = A\delta_i + (d_i + g_i) B_i u_i - \sum_{j \in N_i} e_{ij} B_j u_j$$

Define local nbhd. performance index (values driven by neighbors’ controls)

$$J_i(\delta_i(0), u_i, u_{-i}) = \frac{1}{2} \int_0^\infty \Big( \delta_i^T Q_{ii} \delta_i + u_i^T R_{ii} u_i + \sum_{j \in N_i} u_j^T R_{ij} u_j \Big)\, dt \equiv \frac{1}{2} \int_0^\infty L_i(\delta_i(t), u_i(t), u_{-i}(t))\, dt$$
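As a small illustration of the local neighborhood tracking error, the sketch below evaluates $\delta_i = \sum_{j \in N_i} e_{ij}(x_i - x_j) + g_i(x_i - x_0)$ node by node and checks it against the stacked form $(L + G)(x - \mathbf{1} x_0^T)$, with $L$ the graph Laplacian and $G$ the diagonal matrix of pinning gains. The function names and the three‐node chain graph are made up for the example.

```python
import numpy as np

def local_tracking_errors(E, g, x, x0):
    """delta_i = sum_j e_ij (x_i - x_j) + g_i (x_i - x0), one row per node."""
    N = x.shape[0]
    delta = np.zeros_like(x, dtype=float)
    for i in range(N):
        for j in range(N):
            delta[i] += E[i, j] * (x[i] - x[j])
        delta[i] += g[i] * (x[i] - x0)
    return delta

def global_tracking_errors(E, g, x, x0):
    """Same quantity in stacked form: (L + G)(x - 1 x0^T)."""
    D = np.diag(E.sum(axis=1))   # in-degrees d_i = sum_j e_ij
    L = D - E                    # graph Laplacian
    G = np.diag(g)               # pinning gains
    return (L + G) @ (x - x0)

# Chain graph: leader -> node 1 -> node 2 -> node 3 (illustrative numbers)
E = np.array([[0.0, 0.0, 0.0],
              [1.0, 0.0, 0.0],
              [0.0, 1.0, 0.0]])
g = np.array([1.0, 0.0, 0.0])           # only node 1 sees the leader
x = np.array([[1.0, 0.0], [2.0, 1.0], [4.0, -1.0]])
x0 = np.array([0.5, 0.5])

delta_local = local_tracking_errors(E, g, x, x0)
delta_global = global_tracking_errors(E, g, x, x0)
```

Each row of `delta_local` uses only information from that node's graph neighbors, which is what makes the game "graphical".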

K.G. Vamvoudakis, F.L. Lewis, and G.R. Hudas, “Multi-Agent Differential Graphical Games: online adaptive learning solution for synchronization with optimality,” Automatica, vol. 48, no. 8, pp. 1598-1611, Aug. 2012.

M. Abouheaf, K. Vamvoudakis, F.L. Lewis, S. Haesaert, and R. Babuska, “Multi-Agent Discrete-Time Graphical Games and Reinforcement Learning Solutions,” Automatica, vol. 50, no. 12, pp. 3038-3053, 2014.

New Differential Graphical Game

$u_1, u_2, \ldots, u_i$: control action of player i

State dynamics of agent i (local dynamics)

$$\dot{\delta}_i = A\delta_i + (d_i + g_i) B_i u_i - \sum_{j \in N_i} e_{ij} B_j u_j$$

Value function of player i (local value function)

$$J_i(\delta_i(0), u_i, u_{-i}) = \frac{1}{2} \int_0^\infty \Big( \delta_i^T Q_{ii} \delta_i + u_i^T R_{ii} u_i + \sum_{j \in N_i} u_j^T R_{ij} u_j \Big)\, dt$$

Only depends on graph neighbors.

Standard Multi-Agent Differential Game

$u_1, u_2, \ldots, u_i$: control action of player i

Central dynamics

$$\dot{z} = A z + \sum_{i=1}^{N} B_i u_i$$

Value function of player i

$$J_i(z(0), u_i, u_{-i}) = \frac{1}{2} \int_0^\infty \Big( z^T Q z + \sum_{j=1}^{N} u_j^T R_{ij} u_j \Big)\, dt$$

Central dynamics; the local value function depends on ALL other control actions.

Def. Local best response. $u_i^*$ is said to be agent i’s local best response to fixed policies $u_{-i}$ of its neighbors if

$$J_i(u_i^*, u_{-i}) \le J_i(u_i, u_{-i}), \quad \forall u_i$$

New Definition of Nash Equilibrium for Graphical Games

Def: Interactive Nash equilibrium. $u_1^*, u_2^*, \ldots, u_N^*$ are in Interactive Nash equilibrium if

1. $J_i^* \triangleq J_i(u_i^*, u_{G-i}^*) \le J_i(u_i, u_{G-i}^*), \ \forall i \in N$, i.e. they are in Nash equilibrium.

2. There exists a policy $u_j$ such that $J_i(u_j^*, u_{G-j}^*) \ne J_i(u_j, u_{G-j}^*), \ \forall i, j \in N$. That is, every player can find a policy that changes the value of every other player.

This is a restriction on what sorts of performance indices can be selected in multi‐player graph games, and a condition on the reaction curves (Basar and Olsder) of the agents.

This rules out the disconnected counterexample.

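Graphical Game Solution Equations

Value function

$$V_i(\delta_i(t)) = \frac{1}{2} \int_t^\infty \Big( \delta_i^T Q_{ii} \delta_i + u_i^T R_{ii} u_i + \sum_{j \in N_i} u_j^T R_{ij} u_j \Big)\, dt$$

Differential equivalent (Leibniz formula) is Bellman’s Equation

$$H_i\Big( \delta_i, \frac{\partial V_i}{\partial \delta_i}, u_i, u_{-i} \Big) \equiv \frac{\partial V_i}{\partial \delta_i}^T \Big( A\delta_i + (d_i + g_i) B_i u_i - \sum_{j \in N_i} e_{ij} B_j u_j \Big) + \frac{1}{2} \delta_i^T Q_{ii} \delta_i + \frac{1}{2} u_i^T R_{ii} u_i + \frac{1}{2} \sum_{j \in N_i} u_j^T R_{ij} u_j = 0$$

Stationarity Condition

$$0 = \frac{\partial H_i}{\partial u_i} \ \Rightarrow \ u_i = -(d_i + g_i) R_{ii}^{-1} B_i^T \frac{\partial V_i}{\partial \delta_i}$$

1. Coupled HJ equations

$$H_i\Big( \delta_i, \frac{\partial V_i}{\partial \delta_i}, u_i^*, u_{-i}^* \Big) = 0$$

$$\frac{\partial V_i}{\partial \delta_i}^T A_i^c + \frac{1}{2} \delta_i^T Q_{ii} \delta_i + \frac{1}{2} (d_i + g_i)^2 \frac{\partial V_i}{\partial \delta_i}^T B_i R_{ii}^{-1} B_i^T \frac{\partial V_i}{\partial \delta_i} + \frac{1}{2} \sum_{j \in N_i} (d_j + g_j)^2 \frac{\partial V_j}{\partial \delta_j}^T B_j R_{jj}^{-1} R_{ij} R_{jj}^{-1} B_j^T \frac{\partial V_j}{\partial \delta_j} = 0, \quad i \in N$$

where

$$A_i^c = A\delta_i - (d_i + g_i)^2 B_i R_{ii}^{-1} B_i^T \frac{\partial V_i}{\partial \delta_i} + \sum_{j \in N_i} e_{ij} (d_j + g_j) B_j R_{jj}^{-1} B_j^T \frac{\partial V_j}{\partial \delta_j}, \quad i \in N$$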

Now use Synchronous PI to learn optimal Nash policies online in real‐time as players interact

Online Solution of Graphical Games

Use Reinforcement Learning Convergence Results

POLICY ITERATION

Kyriakos Vamvoudakis

Multi‐agent Learning

Data‐driven Online Solution of Differential Games
Zero‐sum 2‐Player Games and H‐infinity Control

H‐Infinity Control Using Neural Networks

Zero‐sum differential game: nature as the opposing player.

L2 Gain Problem

System

$$\dot{x} = f(x) + g(x) u + k(x) d, \qquad y = h(x), \qquad z = [\, y^T \ \ u^T \,]^T$$

with control $u = l(x)$, disturbance $d$, and performance output $z$.

Find control u(t) so that

$$\frac{\int_0^\infty \| z(t) \|^2 dt}{\int_0^\infty \| d(t) \|^2 dt} = \frac{\int_0^\infty \big( h^T h + \| u \|^2 \big) dt}{\int_0^\infty \| d(t) \|^2 dt} \le \gamma^2$$

for all L2 disturbances and a prescribed gain $\gamma$.

Disturbance Rejection

Define 2‐player zero‐sum game as

$$V^*(x(0)) = \min_u \max_d V(x(0), u, d) = \min_u \max_d \int_0^\infty \big( h^T(x) h(x) + u^T R u - \gamma^2 \| d \|^2 \big)\, dt$$

The game has a unique value (saddle‐point solution) iff the Nash condition holds

$$\min_u \max_d V(x(0), u, d) = \max_d \min_u V(x(0), u, d)$$

A necessary condition for this is the Isaacs Condition

$$\min_u \max_d H(x, \nabla V, u, d) = \max_d \min_u H(x, \nabla V, u, d)$$

Stationarity Conditions

$$0 = \frac{\partial H}{\partial u}, \qquad 0 = \frac{\partial H}{\partial d}$$

Online Zero‐Sum Differential Games

H-infinity Control, 2 players

System

$$\dot{x} = f(x, u) = f(x) + g(x) u + k(x) d, \qquad y = h(x)$$

Cost

$$V(x(t), u, d) = \int_t^\infty \big( h^T h + u^T R u - \gamma^2 \| d \|^2 \big)\, dt \equiv \int_t^\infty r(x, u, d)\, dt$$

Leibniz gives the differential equivalent, the ZS game BELLMAN EQUATION

$$H\Big( x, \frac{\partial V}{\partial x}, u, d \Big) = h^T h + u^T R u - \gamma^2 \| d \|^2 + \nabla V^T \big( f(x) + g(x) u + k(x) d \big) = 0$$

Optimal control/dist. policies found by stationarity conditions

$$0 = \frac{\partial H}{\partial u}, \qquad 0 = \frac{\partial H}{\partial d}$$

$$u = -\frac{1}{2} R^{-1} g^T(x) \nabla V, \qquad d = \frac{1}{2\gamma^2} k^T(x) \nabla V$$

Game saddle point solution found from the Hamiltonian: HJI equation

$$0 = H(x, \nabla V, u^*, d^*) = h^T h + \nabla V^T(x) f(x) - \frac{1}{4} \nabla V^T(x) g(x) R^{-1} g^T(x) \nabla V(x) + \frac{1}{4\gamma^2} \nabla V^T(x) k k^T \nabla V(x)$$

K.G. Vamvoudakis and F.L. Lewis, “Online solution of nonlinear two-player zero-sum games using synchronous policy iteration,” Int. J. Robust and Nonlinear Control, vol. 22, pp. 1460-1483, 2012.

D. Vrabie and F.L. Lewis, “Adaptive dynamic programming for online solution of a zero-sum differential game,” J Control Theory App., vol. 9, no. 3, pp. 353–360, 2011

Double Policy Iteration Algorithm to Solve HJI

Start with stabilizing initial policy $u_0(x)$

1. For a given control policy $u_j(x)$ solve for the value $V_{j+1}(x(t))$

2. Add inner loop to solve for available storage. Set $d^0 = 0$. For i=0,1,… solve for $V_j^i(x(t))$, $d^{i+1}$ from the ZS game Bellman eq.

$$0 = h^T h + (\nabla V_j^i)^T(x) \big( f + g u_j + k d^i \big) + u_j^T R u_j - \gamma^2 \| d^i \|^2$$

$$d^{i+1} = \frac{1}{2\gamma^2} k^T(x) \nabla V_j^i$$

On convergence set $V_{j+1}(x) = V_j^i(x)$

3. Improve policy:

$$u_{j+1}(x) = -\frac{1}{2} R^{-1} g^T(x) \nabla V_{j+1}$$

• Convergence proved by Van der Schaft if the nonlinear Lyapunov equation can be solved exactly

• Abu-Khalaf & Lewis used NN to approximate V for nonlinear systems and proved convergence

ONLINE SOLUTION: use IRL to solve the ZS Bellman eq. and update the actors at each step

Murad Abu-Khalaf, Draguna Vrabie
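For a linear system $\dot{x} = Ax + B_u u + B_d d$ with $h(x) = Cx$ and $V = x^T P x$, the double policy iteration reduces to nested Lyapunov solves: the inner loop updates the disturbance gain $d = \gamma^{-2} B_d^T P x$, the outer loop updates the control gain $u = -R^{-1} B_u^T P x$. The sketch below is a model‐based illustration under these assumptions (the talk's online version replaces the Lyapunov solves with IRL on measured data). The function names, the zero initial gain (which assumes a stable `A`), and the scalar example are hypothetical.

```python
import numpy as np

def lyap(Ac, M):
    """Solve Ac^T P + P Ac + M = 0 by vectorization (column-major vec)."""
    n = Ac.shape[0]
    I = np.eye(n)
    lhs = np.kron(I, Ac.T) + np.kron(Ac.T, I)
    vecP = np.linalg.solve(lhs, -M.reshape(-1, order="F"))
    P = vecP.reshape(n, n, order="F")
    return 0.5 * (P + P.T)

def double_policy_iteration(A, Bu, Bd, C, R, gamma, outer=20, inner=20):
    n = A.shape[0]
    Ku = np.zeros((Bu.shape[1], n))        # u = -Ku x; zero start assumes A stable
    for _ in range(outer):
        Ld = np.zeros((Bd.shape[1], n))    # d = Ld x; inner loop starts from d = 0
        for _ in range(inner):
            Acl = A - Bu @ Ku + Bd @ Ld
            M = C.T @ C + Ku.T @ R @ Ku - gamma**2 * Ld.T @ Ld
            P = lyap(Acl, M)               # ZS game Bellman equation, LQ case
            Ld = Bd.T @ P / gamma**2       # disturbance update
        Ku = np.linalg.solve(R, Bu.T @ P)  # control policy improvement
    return P, Ku, Ld

# Scalar example (made-up numbers)
one = np.eye(1)
P, Ku, Ld = double_policy_iteration(A=-one, Bu=one, Bd=one, C=one, R=one, gamma=2.0)
```

For this example the game algebraic Riccati equation is $0.75p^2 + 2p - 1 = 0$, whose positive root is $(\sqrt{7} - 2)/1.5$.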

Actor‐Critic structure ‐ three time scales

[Block diagram: System $\dot{x} = Ax + B_2 u + B_1 w$ with initial state $x_0$; Controller/Player 1 (control $u$); Disturbance/Player 2 (disturbance $w$); Critic with learning procedure that updates the kernel matrix $P_u^i$ from measurements of $\dot{V}$.]


New Developments in IRL for CT Systems
Q Learning for CT Systems
Experience Replay
Off-Policy IRL

Q learning for CT Systems
J.Y. Lee, J.B. Park, Y.H. Choi, Automatica 2012
Biao Luo, Derong Liu, T. Huang, 2014
M. Palanisamy, H. Modares, F.L. Lewis, M. Aurangzeb, IEEE T Cybern 2015

CT Q function is quadratic in x, u, and the derivatives of u ‐

( )( ), [ : ) ( )t T

t T T T

t

Q x t u t t T e x S x R d e V x t T

( )1 1 1 1( ) ( ) ( ) ( ) ( ) ( ) ( )

t Tt T T T T

k k k k k k kt

e x S x u R u d Z t M S M R Z t E t T

$$Z_k(t) = \begin{bmatrix} x(t) \\ u(t) \\ \dot{u}(t) \\ \vdots \\ u^{(k)}(t) \end{bmatrix}$$

IRL with Experience Replay
Humans use memories of past experiences to tune current policies

Modares and Lewis, Automatica 2014
Girish Chowdhary: concurrent learning
Sutton and Barto book

System

$$\dot{x}(t) = f(x(t)) + g(x(t))\, u(t)$$

Value

$$V(x(t - T)) = \int_{t-T}^{\infty} \Big( Q(x) + 2 \int_0^u \big( \lambda \tanh^{-1}(v / \lambda) \big)^T R\, dv \Big)\, d\tau$$

IRL Bellman equation

$$V(x(t - T)) = p(t) + V(x(t))$$

$$p(t) = \int_{t-T}^{t} \Big( Q(x) + 2 \int_0^u \big( \lambda \tanh^{-1}(v / \lambda) \big)^T R\, dv \Big)\, d\tau$$

VFA

$$\hat{V}(x) = \hat{W}_1^T \phi(x)$$

$$\Delta\phi(x(t)) = \phi(x(t)) - \phi(x(t - T))$$

$$\hat{W}_1^T \big[ \phi(x(t)) - \phi(x(t - T)) \big] + p(t) = 0$$

NN weight tuning uses past samples

$$\dot{\hat{W}}_1(t) = -\alpha_1 \frac{\Delta\phi(t)}{\big( 1 + \Delta\phi(t)^T \Delta\phi(t) \big)^2} \big( p(t) + \Delta\phi(t)^T \hat{W}_1(t) \big) - \alpha_1 \sum_{j=1}^{l} \frac{\Delta\phi_j}{\big( 1 + \Delta\phi_j^T \Delta\phi_j \big)^2} \big( p_j + \Delta\phi_j^T \hat{W}_1(t) \big)$$
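A minimal sketch of the experience‐replay tuning idea, assuming synthetic data: an Euler step of the critic weight law is driven by the current sample plus a stored stack of past samples $(\Delta\phi_j, p_j)$. The data are generated from a made‐up weight vector `W_true` so that each sample satisfies $p + \Delta\phi^T W = 0$ exactly; the function name `replay_update`, the basis dimension, gains, and step size are all illustrative choices rather than values from the talk.

```python
import numpy as np

def replay_update(W_hat, dphi_now, p_now, memory, alpha, dt):
    """One Euler step of the critic tuning law with experience replay."""
    def term(dphi, p):
        e = p + dphi @ W_hat                        # Bellman error for this sample
        return dphi * e / (1.0 + dphi @ dphi) ** 2  # normalized gradient term
    grad = term(dphi_now, p_now)                    # current sample
    for dphi_j, p_j in memory:                      # stored past samples
        grad = grad + term(dphi_j, p_j)
    return W_hat - alpha * dt * grad

# Synthetic, noise-free data consistent with W_true (hypothetical values)
W_true = np.array([1.0, -2.0, 0.5])
memory = [(e, -e @ W_true) for e in np.eye(3)]      # stored samples span R^3
W_hat = np.zeros(3)
alpha, dt = 4.0, 0.01
for k in range(2000):
    t = k * dt
    dphi = np.array([np.sin(t), np.cos(t), 1.0])    # current regressor
    W_hat = replay_update(W_hat, dphi, -dphi @ W_true, memory, alpha, dt)
```

Because the stored samples span the whole weight space, the estimate converges even if the current regressor alone were not persistently exciting, which is the practical appeal of replaying past data.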

On‐policy RL


Target and behavior policy

System
Ref.

Target policy: the policy that we are learning about.
Behavior policy: the policy that generates actions and behavior.

Target policy and behavior policy are the same

Sutton and Barto Book

Off‐Policy Reinforcement Learning

Humans can learn optimal policies while actually playing suboptimal policies

Off‐policy RL


Behavior Policy System

Target policy

Ref.

Target policy and behavior policy are different

Humans can learn optimal policies while actually applying suboptimal policies

H. Modares, F.L. Lewis, and Z.-P. Jiang, “H-infinity Tracking Control of Completely-unknown Continuous-time Systems via Off-policy Reinforcement Learning,” IEEE Transactions on Neural Networks and Learning Systems, vol. 26, no. 10, pp. 2550-2562, Oct. 2015.

Ruizhuo Song, F.L. Lewis, Qinglai Wei, “Off-Policy Integral Reinforcement Learning Method to Solve Nonlinear Continuous-Time Multi-Player Non-Zero-Sum Games,” IEEE Transactions on Neural Networks and Learning Systems, vol. 28, no. 3, pp. 704-713, 2017.

Bahare Kiumarsi, Frank L. Lewis, Zhong-Ping Jiang, “H-infinity Control of Linear Discrete-time Systems: Off-policy Reinforcement Learning,” Automatica, vol. 78, pp. 144-152, 2017.

Off-policy IRL
Humans can learn optimal policies while actually applying suboptimal policies

Yu Jiang & Zhong-Ping Jiang, Automatica 2012

System

$$\dot{x} = f(x) + g(x) u$$

Value

$$J(x) = \int_t^\infty r(x, u)\, d\tau$$

On-policy IRL

$$J^{[i]}(x(t)) - J^{[i]}(x(t - T)) = -\int_{t-T}^{t} Q(x)\, d\tau - \int_{t-T}^{t} u^{[i]T} R\, u^{[i]}\, d\tau$$

$$u^{[i+1]} = -\frac{1}{2} R^{-1} g^T J_x^{[i]}$$

Must know g(x)

Off-policy IRL

$$\dot{x} = f + g u^{[i]} + g (u - u^{[i]})$$

$$J^{[i]}(x(t)) - J^{[i]}(x(t - T)) = -\int_{t-T}^{t} Q(x)\, d\tau - \int_{t-T}^{t} u^{[i]T} R\, u^{[i]}\, d\tau - 2 \int_{t-T}^{t} u^{[i+1]T} R\, (u - u^{[i]})\, d\tau$$

This is a linear equation for $J^{[i]}$ and $u^{[i+1]}$. They can be found simultaneously online from measured data using the Kronecker product and VFA.

1. Completely unknown system dynamics
2. Can use applied u(t) for:
disturbance rejection – Z.P. Jiang; R. Song and Lewis, 2015
robust control – Y. Jiang & Z.P. Jiang, IEEE TCS 2012
exploring probing noise, without bias! – J.Y. Lee, J.B. Park, Y.H. Choi 2012

DDO
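The off‐policy Bellman equation can be illustrated on a scalar linear system $\dot{x} = ax + bu$ with $J = p x^2$ and policies $u^{[i]} = -k_i x$. Each data interval then gives one linear equation in the two unknowns $(p_i, k_{i+1})$: $p_i\,[x^2]_{t-T}^{t} - 2 r k_{i+1} \int x (u + k_i x)\, d\tau = -(q + r k_i^2) \int x^2\, d\tau$. In the sketch below the plant parameters appear only in the simulator that generates data; the learner uses just the recorded integrals. All names, the sinusoidal behavior input, and the numbers are assumptions for illustration.

```python
import numpy as np

a, b, q, r = -1.0, 1.0, 1.0, 1.0   # plant and cost; a, b are used only to simulate

def behavior(t):
    """Exploratory behavior input applied to the plant (independent of the target policy)."""
    return 0.5 * np.sin(3.0 * t) + 0.5 * np.sin(7.0 * t) + 0.3 * np.cos(11.0 * t)

def deriv(t, s):
    """Augmented state s = [x, integral of x^2, integral of x*u]."""
    x, u = s[0], behavior(t)
    return np.array([a * x + b * u, x * x, x * u])

def rk4_step(t, s, h):
    k1 = deriv(t, s)
    k2 = deriv(t + h / 2, s + h / 2 * k1)
    k3 = deriv(t + h / 2, s + h / 2 * k2)
    k4 = deriv(t + h, s + h * k3)
    return s + h / 6 * (k1 + 2 * k2 + 2 * k3 + k4)

def collect(x0=1.0, T=0.1, n_intervals=50, h=1e-3):
    """Record (x(t+T)^2 - x(t)^2, int x^2, int x u) over consecutive intervals."""
    rows, t, x = [], 0.0, x0
    for _ in range(n_intervals):
        s = np.array([x, 0.0, 0.0])
        for _ in range(int(round(T / h))):
            s = rk4_step(t, s, h)
            t += h
        rows.append((s[0] ** 2 - x ** 2, s[1], s[2]))
        x = s[0]
    return np.array(rows)

def off_policy_irl(data, k0=0.0, iters=10):
    """Iterate the off-policy Bellman equation by least squares on fixed data."""
    dxx, Ixx, Ixu = data.T
    k = k0                                         # k0 = 0 assumes a stable plant
    for _ in range(iters):
        Phi = np.column_stack([dxx, -2.0 * r * (Ixu + k * Ixx)])
        rhs = -(q + r * k * k) * Ixx
        p, k = np.linalg.lstsq(Phi, rhs, rcond=None)[0]
    return p, k

p_hat, k_hat = off_policy_irl(collect())
```

The same data set is reused at every iteration, and the exploratory input enters the equation explicitly through $u - u^{[i]}$, which is why probing noise introduces no bias. For these numbers the Riccati solution is $p^* = \sqrt{2} - 1$ with optimal gain $k^* = p^*$.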

Off‐policy for Multi‐player NZS Games

Angela Song and Lewis

On‐policy

$$\dot{x} = f(x) + \sum_{j=1}^{N} g(x) u_j$$

$$V_i(x(t)) = \int_t^\infty r_i(x, u_1, u_2, \ldots, u_N)\, d\tau = \int_t^\infty \Big( Q_i(x) + \sum_{j=1}^{N} u_j^T R_{ij} u_j \Big)\, d\tau$$

Off‐policy

$$\dot{x} = f(x) + \sum_{j=1}^{N} g(x) u_j^{[k]} + \sum_{j=1}^{N} g(x) \big( u_j - u_j^{[k]} \big)$$

$$V_i^{[k]}(x(t + T)) - V_i^{[k]}(x(t)) = -\int_t^{t+T} Q_i(x)\, d\tau - \int_t^{t+T} \sum_{j=1}^{N} u_j^{[k]T} R_{ij} u_j^{[k]}\, d\tau - 2 \int_t^{t+T} u_i^{[k+1]T} R_{ii} \sum_{j=1}^{N} \big( u_j - u_j^{[k]} \big)\, d\tau$$

1. Solve online using measured data for $V_i^{[k]}, u_i^{[k+1]}$
2. Completely unknown dynamics
3. Add exploring noise with no bias

DDO

Off‐policy IRL for ZS Games – H‐infinity Control

Biao Luo, H.N. Wu, T. Huang, IEEE T. Cybern.
Modares, Lewis, Z.P. Jiang, TNNLS 2015
H. Li, Derong Liu, D. Wang, IEEE TASE 2015

Optimal tracker

Extended state, including ref. traj. dynamics

$$X(t) = [\, e_d(t)^T \ \ r(t)^T \,]^T \in \mathbb{R}^{2n}$$

On‐policy

$$\dot{X}(t) = F(X(t)) + G(X(t))\, u(t) + K(X(t))\, d(t)$$

$$V(u, d) = \int_t^\infty e^{-\alpha(\tau - t)} \big( X^T Q_T X + u^T R u - \gamma^2 d^T d \big)\, d\tau$$

Off‐policy

$$\dot{X} = F + G u_i + K d_i + G (u - u_i) + K (d - d_i)$$

$$e^{-\alpha T} V_i(X(t + T)) - V_i(X(t)) = \int_t^{t+T} e^{-\alpha(\tau - t)} \big( -X^T Q_T X - u_i^T R u_i + \gamma^2 d_i^T d_i \big)\, d\tau + \int_t^{t+T} e^{-\alpha(\tau - t)} \big( -2 u_{i+1}^T R (u - u_i) + 2\gamma^2 d_{i+1}^T (d - d_i) \big)\, d\tau$$

1. Solve online using data for $V_i, u_{i+1}, d_{i+1}$
2. Completely unknown dynamics
3. Disturbance does not need to be specified
4. Add exploring noise with no bias

DDO


Output Synchronization of Heterogeneous MAS


Heterogeneous Multi‐Agents

Agents

$$\dot{x}_i = A_i x_i + B_i u_i, \qquad y_i = C_i x_i$$

Leader (leader node x0)

$$\dot{\zeta}_0 = S \zeta_0, \qquad y_0 = R \zeta_0$$

Output regulation error

$$\varepsilon_i(t) = y_i(t) - y_0(t) \to 0$$

Output regulator equations

$$A_i \Pi_i + B_i \Gamma_i = \Pi_i S, \qquad C_i \Pi_i = R$$

Dynamics are different; state dimensions can be different.
The o/p regulator equations capture the common core of all the agents' dynamics and define a synchronization manifold.

MAS

$$\dot{x}_i = A_i x_i + B_i u_i, \qquad y_i = C_i x_i$$

Leader

$$\dot{\zeta}_0 = S \zeta_0, \qquad y_0 = R \zeta_0$$

Output regulator equations (pioneered by Jie Huang)

$$A_i \Pi_i + B_i \Gamma_i = \Pi_i S, \qquad C_i \Pi_i = R$$

A standard o/p regulation controller

$$\dot{\zeta}_i = S \zeta_i + c \Big[ \sum_{j=1}^{N} a_{ij} (\zeta_j - \zeta_i) + g_i (\zeta_0 - \zeta_i) \Big]$$

$$u_i = K_{1i} (x_i - \Pi_i \zeta_i) + \Gamma_i \zeta_i = K_{1i} x_i + (\Gamma_i - K_{1i} \Pi_i) \zeta_i \equiv K_{1i} x_i + K_{2i} \zeta_i$$

o/p regulation

$$y_i(t) - y_0(t) \to 0, \quad \forall i$$

Must know the leader’s dynamics (S, R) and solve the o/p regulator equations.
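When the leader's dynamics (S, R) and the agent model are known, the output regulator equations are linear in $(\Pi_i, \Gamma_i)$ and can be solved by vectorization. The sketch below shows this model‐based route; the function name and the example (a double‐integrator agent following a harmonic‐oscillator leader) are made up for illustration.

```python
import numpy as np

def solve_regulator_equations(A, B, C, S, R):
    """Solve A Pi + B Gamma = Pi S and C Pi = R for (Pi, Gamma) via Kronecker products."""
    n, m = B.shape
    p = S.shape[0]
    q = C.shape[0]
    In, Ip = np.eye(n), np.eye(p)
    # vec(A Pi) = (Ip kron A) vec(Pi), vec(Pi S) = (S^T kron In) vec(Pi),
    # vec(B Gamma) = (Ip kron B) vec(Gamma), vec(C Pi) = (Ip kron C) vec(Pi)
    top = np.hstack([np.kron(Ip, A) - np.kron(S.T, In), np.kron(Ip, B)])
    bottom = np.hstack([np.kron(Ip, C), np.zeros((q * p, m * p))])
    lhs = np.vstack([top, bottom])
    rhs = np.concatenate([np.zeros(n * p), R.reshape(-1, order="F")])
    sol = np.linalg.lstsq(lhs, rhs, rcond=None)[0]
    Pi = sol[: n * p].reshape(n, p, order="F")
    Gamma = sol[n * p:].reshape(m, p, order="F")
    return Pi, Gamma

# Agent: double integrator with position output. Leader: harmonic oscillator.
A = np.array([[0.0, 1.0], [0.0, 0.0]])
B = np.array([[0.0], [1.0]])
C = np.array([[1.0, 0.0]])
S = np.array([[0.0, 1.0], [-1.0, 0.0]])
R = np.array([[1.0, 0.0]])
Pi, Gamma = solve_regulator_equations(A, B, C, S, R)
```

Here the solution is $\Pi = I$ and $\Gamma = [-1 \ \ 0]$: on the synchronization manifold the agent state equals the leader state, and the feedforward input supplies the restoring acceleration that the double integrator lacks.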

Optimal Output Synchronization of Heterogeneous MAS Using Off-policy IRL
Nageshrao, Modares, Lopes, Babuska, Lewis

MAS

$$\dot{x}_i = A_i x_i + B_i u_i, \qquad y_i = C_i x_i$$

Leader

$$\dot{\zeta}_0 = S \zeta_0, \qquad y_0 = R \zeta_0$$

Optimal Tracker Problem

Augmented Systems

$$X_i(t) = [\, x_i(t)^T \ \ \zeta_0^T \,]^T \in \mathbb{R}^{n_i + p}$$

$$\dot{X}_i = T_i X_i + B_{1i} u_i, \qquad T_i = \begin{bmatrix} A_i & 0 \\ 0 & S \end{bmatrix}, \qquad B_{1i} = \begin{bmatrix} B_i \\ 0 \end{bmatrix}$$

Performance index

$$V_i(X_i(t)) = \int_t^\infty e^{-\gamma_i(\tau - t)} X_i^T \big( C_{1i}^T Q_i C_{1i} + K_i^T W_i K_i \big) X_i\, d\tau = X_i(t)^T P_i X_i(t)$$

Control

$$u_i = K_{1i} x_i + K_{2i} \zeta_0 = K_i X_i$$


Optimal Tracker Solution by Reinforcement Learning

$$K_i = [K_{1i}, K_{2i}] = -W_i^{-1} B_{1i}^T P_i$$

Tracker ARE

$$T_i^T P_i + P_i T_i - \gamma_i P_i + C_{1i}^T Q_i C_{1i} - P_i B_{1i} W_i^{-1} B_{1i}^T P_i = 0$$

Algorithm 1. On-policy IRL State-feedback algorithm

Policy Evaluation: solve the IRL Bellman equation for $P_i^\kappa$

$$e^{-\gamma_i \delta t} X_i(t + \delta t)^T P_i^\kappa X_i(t + \delta t) - X_i(t)^T P_i^\kappa X_i(t) = -\int_t^{t+\delta t} e^{-\gamma_i(\tau - t)} \big[ (y_i - y_0)^T Q_i (y_i - y_0) + u_i^T W_i u_i \big]\, d\tau$$

Policy Update

$$K_i^{\kappa+1} = [K_{1i}^{\kappa+1}, K_{2i}^{\kappa+1}] = -W_i^{-1} B_{1i}^T P_i^\kappa$$

Must know $B_{1i}$

The Bellman equation is solved using RLS or batch LS.
It requires a Persistence of Excitation (PE) condition that may be hard to satisfy.

Theorem: Algorithm 1 converges to the solution to the ARE


Off‐Policy RL

Tracker dynamics

$$\dot{X}_i = T_i X_i + B_{1i} u_i$$

Rewrite as

$$\dot{X}_i = (T_i + B_{1i} K_i^\kappa) X_i + B_{1i} (u_i - K_i^\kappa X_i) \equiv T_i^\kappa X_i + B_{1i} (u_i - K_i^\kappa X_i)$$

Now the Bellman equation becomes

$$e^{-\gamma_i \delta t} X_i(t + \delta t)^T P_i^\kappa X_i(t + \delta t) - X_i(t)^T P_i^\kappa X_i(t) = -\int_t^{t+\delta t} e^{-\gamma_i(\tau - t)} \big[ (y_i - y_0)^T Q_i (y_i - y_0) + X_i^T K_i^{\kappa T} W_i K_i^\kappa X_i \big]\, d\tau - 2 \int_t^{t+\delta t} e^{-\gamma_i(\tau - t)} (u_i - K_i^\kappa X_i)^T W_i K_i^{\kappa+1} X_i\, d\tau$$

Extra term containing $K_i^{\kappa+1}$

Algorithm 2. Off-policy IRL Data-based algorithm

Iterate on this equation and solve for $P_i^\kappa, K_i^{\kappa+1}$ simultaneously at each step

Note about probing noise: if $u_i = K_i^\kappa X_i + e$ then $(u_i - K_i^\kappa X_i) = e$

Do not have to know any dynamics, agent or leader:

$$\dot{x}_i = A_i x_i + B_i u_i, \qquad y_i = C_i x_i$$

$$\dot{\zeta}_0 = S \zeta_0, \qquad y_0 = R \zeta_0$$


$$T_i^T P_i + P_i T_i - \gamma_i P_i + C_{1i}^T Q_i C_{1i} - P_i B_{1i} W_i^{-1} B_{1i}^T P_i = 0$$

Theorem: Off‐policy Algorithm 2 converges to the solution to the ARE

Theorem: o/p reg eq solution

Let

$$P_i = \begin{bmatrix} P_{11}^i & P_{12}^i \\ P_{21}^i & P_{22}^i \end{bmatrix}$$

Then the solution to the output regulator equations

$$A_i \Pi_i + B_i \Gamma_i = \Pi_i S, \qquad C_i \Pi_i = R$$

is given by

$$\Pi_i = -(P_{11}^i)^{-1} P_{12}^i$$

$$\Gamma_i = K_{2i} - K_{1i} (P_{11}^i)^{-1} P_{12}^i$$

Do not have to know the agent dynamics or the leader’s dynamics (S,R)

Applications of Reinforcement Learning

Human‐Robot Interactive Learning
Industrial process control: mineral grinding in Gansu, China
Resilient Control to Cyber‐Attacks in Networked Multi‐agent Systems
Decision & Control for Heterogeneous MAS (different dynamics)

Intelligent Operational Control for Complex Industrial Processes

Professor Chai Tianyou

State Key Laboratory of Synthetical Automation for Process Industries

Northeastern University
May 20, 2013

Jinliang Ding

1. Jinliang Ding, H. Modares, Tianyou Chai, and F.L. Lewis, "Data-based Multi-objective Plant-wide Performance Optimization of Industrial Processes under Dynamic Environments,” IEEE Trans. Industrial Informatics, vol. 12, no. 2, pp. 454-465, April 2016.

2. Xinglong Lu, B. Kiumarsi, Tianyou Chai, and F.L. Lewis, “Data-driven Optimal Control of Operational Indices for a Class of Industrial Processes,” IET Control Theory & Applications, vol. 10, no. 12, pp. 1348-1356, 2016.

Manufacturing as the Interactions of Multiple Agents
Each machine has its own dynamics and cost function.
Neighboring machines influence each other most strongly.
There are local optimization requirements as well as global necessities.

Production line for mineral processing plant

Mineral Processing Plant in Gansu China

Existing Manual Control for Plant production indices, unit operational indices, and unit process control for a production line

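[Figure: control hierarchy for the production line. Overall production indices $Q_k$ (measured $Q_k(mT)$, estimated $\hat{Q}_k(t)$, targets $Q_k^*$ with bounds $Q_{k\min}$, $Q_{k\max}$) are linked to unit operational indices $r = \{ r_{i,j} \}$, $i = 1, \ldots, n$, $j = 1, 2, 3$ (measured $r_{i,j}(mT)$, estimated $\hat{r}(t)$, setpoints $r_{i,j}^*$).]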

Automated online reinforcement learning for determining operational indices

Implemented by Jinliang Ding and Chai Tianyou’s group in the biggest mineral processing factory for hematite iron ore in China, in Gansu Province.

Savings of 30.75 million RMB per year were realized by implementing this automated optimization procedure instead of the standard industry practice of human operator selection of process operational indices.

2 RL loops and Value Function Approximation

RL for Human-Robot Interaction (HRI)

1. H. Modares, I. Ranatunga, F.L. Lewis, and D.O. Popa, “Optimized Assistive Human-robot Interaction using Reinforcement Learning,” IEEE Transactions on Cybernetics, vol. 46, no. 3, pp. 655-667, 2016.

2. I. Ranatunga, F.L. Lewis, D.O. Popa, and S.M. Tousif, "Adaptive Admittance Control for Human-Robot Interaction Using Model Reference Design and Adaptive Inverse Filtering," IEEE Transactions on Control Systems Technology, vol. 25, no. 1, pp. 278-285, Jan. 2017.

3. B. AlQaudi, H. Modares, I. Ranatunga, S.M. Tousif, F.L. Lewis, and D.O. Popa, “Model reference adaptive impedance control for physical human robot interaction,” Control Theory and Technology, vol. 14, no. 1, pp. 1-15, Feb. 2016.

PR2 meets Isura

Robot dynamics

Prescribed Error system

Control torque depends on impedance model parameters

Impedance Control

Human task learning has 2 components:
1. Human learns a robot dynamics model to compensate for robot nonlinearities
2. Human learns a task model to properly perform a task

Inner robot‐specific control loop: INDEPENDENT OF TASK

Outer task‐specific control loop: INDEPENDENT OF ROBOT DETAILS

Human Performance Factors Studies

H. Modares, I. Ranatunga, F.L. Lewis, and D.O. Popa, “Optimized Assistive Human‐robot Interaction using Reinforcement Learning,” IEEE Transactions on Cybernetics, vol. 46, no. 3, pp. 655-667, 2016.

RL for Human‐Robot Interactions

[Block diagram: Human, Feedforward Control, Prescribed Impedance Model, Torque Design, Robot Manipulator, and Performance blocks. The robot‐specific inner control loop (torque design) makes the manipulator follow the prescribed impedance model; the task‐specific outer‐loop control design uses IRL optimal tracking.]

Inspired by work of Sam S. Ge. Implemented on PR2 robot.