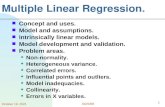

Multiple Linear Regression Review

description

Transcript of Multiple Linear Regression Review

Multiple Linear RegressionReview

Outline Outline Outline

• Simple Linear Regression Simple Linear Regression

• Multiple Regression Multiple Regression

• Understanding the Regression Output Understanding the Regression Output

• Coefficient of Determination R Coefficient of Determination R2

• Validating the Regression Model

Linear Regression: An Example

Firs

t Y

ear

Sal

es (

$Mill

ions

)Advertising Expenditures ($Millions)

A pplegloFirst-YearAdvertisingExpenditures($ m illions)

First-YearSales

($ millions )Reg ion

MaineNew HampshireVermontMassachusettsConnecticutRhode IslandNew YorkNew JerseyPennsylvaniaDelawareMarylandWest VirginiaVirginiaOhio

Questions: a) How to relate advertising expenditure to sales? b) What is expected first-year sales if advertising expenditure is $2.2 million? c) How confident is your estimate? How good is the “fit?”

The Basic Model: Simple Linear Regression

Data: (x1, y1), (x2, y2), . . . , (xn, yn)Model of the population : Yi = β0 + β1 xi + εi

ε1, ε2, . . . , εn are are i.i.d. random variables, random variables, N(0, σ ) This is the true relation between Y and x, but we do not know not know β0 and β1 and have to estimate them based on the data.

Comments:• E (Yi | xi ) = β0 + β1xi

• SD(Yi | xi) = σ• Relationship is linear Relationship is linear – described by a “line” • β0 = “baseline” value of Y (i.e., value of Y if x is 0) • β1 = “slope” of line (average change in Y per unit change in x)

How do we choose the line that “best” fits the data?

Best choices:bo = 13.82 b1 = 48.60

Advertising Expenditures ($M)

Firs

t Y

ear

Sal

es (

$M)

Regression coefficients : b0 and b1 are are estimates of β0 and β1

Regression estimate for Y at xi : (prediction)

Residual (error):The “best” regression line is the one that chooses b0 and b1 to minimize the total errors (residual sum of squares):

Example: Sales of Nature-Bar ($ million)

region sales advertising promotions competitor’s sales

SelkirkSusquehannaKitteryActon Finger LakesBerkshireCentralProvidenceNashuaDunsterEndicottFive-TownsWaldeboroJacksonStowe

Multiple Regression

• In general, there are many factors in addition to advertising expenditures that affect sales • Multiple regression allows more than one x variables.Independent variables: x1, x2, . . . , xk (k of them)Data: (y1, x11, x21, . . . , xk1), . . . , (yn, x1n, x2n, . . . , xkn),Population Model: Yi = β0 + β1x1i + . . . + βkxki + εi

ε1, ε2, . . . , εn are are iid random variables, ~ N(0, σ)

Regression coefficients : b0, b1,…, bk are estimates of β0, β1,…,βk .Regression Estimate of yi :Goal: Choose b0, b , b1, ... , , ... , bk to minimize the residual sum of squares. I.e., minimize:

Regression Output (from Excel)Regression Statistics

Multiple R R Square Adjusted R Square Standard Error Observations

Analysis ofVariance

df Sum ofSquares

MeanSquare

F SignificanceF

Regression ResidualTotal

Coefficients StandardError

tStatistic

P-value

Lower95%

Upper95%

Intercept Advertising Promotions Competitor’sSales

Understanding Regression Output

1) Regression coefficients : b0, b1, . . . , bk are estimates are estimates of β0, β1, . . . , βk based on sample data. Fact: E[ Fact: E[bj ] = βj .

Example: b0 = 65.705 (its interpretation is context dependent .

b1 = 48.979 (an additional $1 million in advertising is expected to result in an additional $49 million in sales)

b2 = 59.654 (an additional $1 million in promotions is expected to result in an additional $60 million in sales)b3 = -1.838 (an increase of $1 million in competitor sales is expected to decrease sales by $1.8 million)

Understanding Regression Output, Continued

2) Standard errors : an estimate of σ, the SD of each the SD of each εi. It is a measure of the amount of “noise” in the model. Example: s = 17.60

3) Degrees of freedom : #cases - #parameters, relates to over relates to over-fitting phenomenon

4) Standard errors of the coefficients: sb0 , sb1, . . . , sbk

They are just the standard deviations of the estimates b0, b1, . . . , bk.

They are useful in assessing the quality of the coefficient estimates and validating the model.

R2 takes values between 0 and 1 (it is a percentage).

R2 = 0; x values account for none variation in the Y values

R2 = 1; x values account for all variation in the Y values

R2 = 0.833 in ourAppleglo Example

Understanding Regression Output, Continued

5) Coefficient of determination: R2

• It is a measure of the overall quality of the regression. • Specifically, it is the percentage of total variation exhibited in the yi data that is accounted for by the sample regression line. The sample mean of Y:

Total variation in Y Total variation in Y =

Residual (unaccounted) variation in Y

total variation

variation accounted for by x variables

total variation

variation not accounted for by x variables

Coefficient of Determination: R2

• A high R2 means that most of the variation we observe in the yi data can be attributed to their corresponding x values −− a desired property.

• In simple regression, the R2 is higher if the data points are is better aligned along a line. But outliers better aligned– Anscombe example.• How high a R2 is “good” enough depends on the situation (for example, the intended use of the regression, and complexity of the problem).

• Users of regression tend to be fixated on R2, but it’s not the , whole story. It is important that the regression model is “valid.”

Coefficient of Determination: R2

• One should not include x variables unrelated to Y in the model, just to make the R2 fictitiously high. (With more x variables fictitiously high. there will be more freedom in choosing the bi’s ’s to make the residual variation closer to 0).

• Multiple R is just the square root of R Multiple R is just the square root

Validating the Regression Model

Assumptions about the population: about the population: Yi = β0 + β1x1i + . . . + βkxki + εi (i = 1, . . . , n) ε1, ε2, . . . , εn are are iid random variables, ~ N(0, σ)

1) Linearity • If k = 1 (simple regression), one can check visually from scatter plot.

• “Sanity check:” the sign of the coefficients, reason for non-linearity?

2) Normality of Normality of εi

• Plot a histogram of the residuals• Usually, results are fairly robust with respect to this assumption.

3) Heteroscedasticity• Do error terms have constant Std. Dev.? (i.e., SD(εi) = σ for all i?) • Check scatter plot of residuals vs. Y and x variables.

Residuals

Advertising Expenditures

Residuals

Advertising

Residu

No evidence of heteroscedasticity Evidence of heteroscedasticity

• May be fixed by introducing a transformation • May be fixed by introducing or eliminating some independent variables

4) Autocorrelation : Are error terms independent? Plot residuals in order and check for patterns

Time Plot Time Plot

Res

idua

l

Res

idua

l

No evidence of autocorrelation Evidence of autocorrelation

• Autocorrelation may be present if observations have a naturalsequential order (for example, time).• May be fixed by introducing a variable or transforming a variable.

Pitfalls and Issues1) Overspecification • Including too many x variables to make R2 fictitiously high. • Rule of thumb: we should maintain that Rule of thumb: n >= 5(k+2).

2) Extrapolating beyond the range of data

Advertising

Validating the Regression Model

3) Multicollinearity • Occurs when two of the x variable are strongly correlated. • Can give very wrong estimates for βi’s.• Tell-tale signs: - Regression coefficients (bi’s) have the “wrong” sign. - Addition/deletion of an independent variable results in large changes of regression coefficients - Regression coefficients (bi’s ) not significantly different from 0 • May be fixed by deleting one or more independent variables

ExampleStudent Graduate CollegeNumber GPA GPA GMAT

Regression Output

What happened?

College GPA and GMAT are highly correlated!

Eliminate GMAT

R SquareStandard ErrorObservations

InterceptCollege GPA GMAT

Coefficients Standard Error

Graduate College GMAT

GraduateCollege GMAT

R Square Standard Error Observations

InterceptCollege GPA

Coefficients Standard Error

Regression Models

• In linear regression, we choose the “best” coefficients b0, b1, ... , bk as the estimates for β0, β1,…, βk .

• We know on average each bj hits the right target βj .

• However, we also want to know how confident we are aboutour estimates

Back to Regression Output

Intercept Advertising Promotions Compet.Sales

Upper95%

Lower95%

P-value

tStatistic

StandardError

Coefficients

Regression ResidualTotal

dfSum ofSquares

MeanSquare

Regression Statistics

Multiple R R Square Adjusted R Square Standard Error Observations Analysis of Variance

Regression Output Analysis

1) Degrees of freedom (dof) • Residual dof = n = n - (k+1) (We used up (k + 1) degrees of freedom in forming (k+1) sample estimates b0, b1, . . . , bk .)

2) Standard errors of the coefficients : sb0 , sb1, . . . , sbk

• They are just the SDs of estimates of b0, b1, . . . , bk .

• Fact: Before we observe bj and sbj, obeys a t-distribution with dof = (n - k - 1), the same dof as the residual.

• We will use this fact to assess the quality of our estimates bj . • What is a 95% confidence interval for βj? • Does the interval contain 0? Why do we care about this?

3) t-Statistic:

• A measure of the statistical significance of each individual xj

in accounting for the variability in Y.

• Let c be that number for which

where T obeys a t-distribution distribution with dof = (n - k - 1).

• If > c, then the c, then the α% C.I. for βj does not contain zero

• In this case, we are α% confident that confident that βj different from zero.

Example: Executive Compensation

Pay Years in Change in Change inNumber ($1,000) position Stock Price (%) Sales (%) MBA?

variables: Dummy

• Often, some of the explanatory variables in a regression are categorical rather than numeric.

• If we think whether an executive has an MBA or not affects his/her pay, We create a dummy variable and let it be 1 if the executive has an MBA and 0 otherwise.

• If we think season of the year is an important factor to determinesales, how do we create dummy variables? How many?

• What is the problem with creating 4 dummy variables?

• In general, if there are m categories an x variable can belong to,

then we need to create m-1 dummy variables for it.

OILPLUS data

August, 1989September, 1989October, 1989November, 1989December, 1989January, 1990February, 1990March, 1990April, 1990May, 1990June, 1990July, 1990August, 1990September, 1990October, 1990November, 1990December, 1990

Month heating oil temperature

Hea

ting

Oil

Con

sum

ptio

n(1

,000

gal

lons

)

Average Temperature (degrees Fahrenheit)

oil c

onsu

mpt

ion

inverse temperature

heating oil temperature inverse temperature

The Practice of Regression

• Choose which independent variables to include in the model, based on common sense and context specific knowledge.

• Collect data (create dummy variables in necessary).

• Run regression Run regression −− −− the easy part.

• Analyze the output and make changes in the model −− this is where the action is.

• Test the regression result on “out-of of-sample” data

The Post-Regression Checklist1) Statistics checklist: Calculate the correlation between pairs of x variables −− watch for evidence of multicollinearity Check signs of coefficients – do they make sense? Check 95% C.I. (use t-statistics as quick scan) – are coefficients significantly different from zero? R2 :overall quality of the regression, but not the only measure

2) Residual checklist: Normality – look at histogram of residuals Heteroscedasticity – plot residuals with each x variable Autocorrelation – if data has a natural order, plot residuals in order and check for a pattern

The Grand Checklist• Linearity: scatter plot, common sense, and knowing your problem, transform including interactions if useful• t-statistics: are the coefficients significantly different from zero? Look at width of confidence intervals • F-tests for subsets, equality of coefficients tests for subsets, • R2: is it reasonably high in the context? • Influential observations, outliers in predictor space, dependent variable space• Normality: plot histogram of the residuals•Studentized residuals• Heteroscedasticity : plot residuals with each x variable, transform if necessary, Box-Cox transformations • Autocorrelation:”time series plot” • Multicollinearity : compute correlations of the x variables, do signs of coefficients agree with intuition?• Principal Components • Missing Values