
Mining the Maintenance History of a Legacy Software System

Jelber Sayyad-Shirabad

SITE, University of Ottawa, Canada

2004/10/6 J. Sayyad Shirabad 2

Premise of our work

Maintenance is a very knowledge-rich activity

While looking at a piece of code, a maintainer will often ask: “What other modules might be relevant to the task at hand?”

• E.g. Files that also need changing

AI techniques, such as machine learning, can help answer this by extracting latent knowledge

• E.g. hidden in source code, bug-tracking systems etc.

Relevance Relation

A Relevance Relation (RR) is a predictor that maps two or more software entities to a value r quantifying how relevant, i.e. connected or related, the entities are to each other. In other words, r shows the strength of relevance among the entities. Therefore, "relevant" here is embedded in, and dependent on, the definition of the relation.

If r is a number between 0 and 1, the relevance relation is continuous. If r can take one of k discrete values, the relevance relation is discrete.

2004/10/6 J. Sayyad Shirabad 4

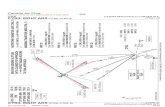

System Relevance GraphSystem Relevance Graph

A System Relevance Graph for R, or SRG_R, is a hypergraph where the set of vertices is a subset of the set of software entities in the system. Each hyperedge in the graph connects vertices that are relevant to each other according to a relevance relation R. A System Relevance Subgraph SRG_{R,r} is a subgraph of SRG_R where software entities on each hyperedge are mapped to a relevance value r by relevance relation R.
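One possible reading of these definitions can be sketched in Python. This is a minimal illustration, not the representation used in the study: the function names, the toy relation `same_extension`, and the file names are all hypothetical, and hyperedges are restricted to a fixed number of entities for simplicity.

```python
from itertools import combinations

def build_srg(entities, relation, arity=2):
    """Return {hyperedge: r}, where each hyperedge is a frozenset of entities."""
    return {
        frozenset(group): relation(*group)
        for group in combinations(entities, arity)
    }

def srg_subgraph(srg, r):
    """Keep only the hyperedges whose entities are mapped to the value r."""
    return {edge for edge, value in srg.items() if value == r}

# Toy discrete relation (hypothetical): two files are Relevant
# when they share a file extension.
def same_extension(f1, f2):
    ext1, ext2 = f1.rsplit(".", 1)[-1], f2.rsplit(".", 1)[-1]
    return "Relevant" if ext1 == ext2 else "Not-Relevant"

files = ["f1.pas", "f2.pas", "f3.asm"]
srg = build_srg(files, same_extension)
relevant_edges = srg_subgraph(srg, "Relevant")
```

Swapping in a different relation over the same files yields a different graph, which is the point of the two-graph illustration on the next slide.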

System Relevance Graph (Continued)

[Figure: two system relevance graphs over the same files f1 to f9, one labeled "SRG based on Relevance Relation I" and the other "SRG based on Relevance Relation J".]

Learning the co-update relation

We learn a relation that exists between files from the information stored in the maintenance history of the system.

We call this relation, which holds between a pair of files, the co-update relation. The co-update relation is true for a pair of files if a change in one file may require a change in the other file.

We refer to relations such as co-update, which are learned in the context of software maintenance activity, as Maintenance Relevance Relations.

We will learn a decision tree classifier that models the co-update relation.

This classifier will classify a pair of files as either Relevant or Not-Relevant.

Case Study

Based on a large legacy telephone switching system.

• Originally created in 1983 by Mitel Networks.

• Multiple programming languages

• A major source of revenue for the company

The company has a large source code management and bug tracking system with extensive data we can study

Data we use

To learn the decision tree classifier first we extract two kinds of file pairs from the source management system

• Relevant: Pairs of files that have been changed together (in the same update)

• Not Relevant: Other file pairs (not updated together) i.e. Closed world assumption

For each file pair we create an example, i.e. describe it, by calculating the values of a predefined set of features. The example will be labeled as either Relevant or Not-Relevant
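The labeling step can be sketched as follows. This is a minimal illustration that assumes each update record is simply a list of the files changed together; the function name and the sample data are hypothetical, and feature calculation is left out.

```python
from itertools import combinations

def label_file_pairs(updates, all_files):
    """Label every unordered file pair as Relevant or Not-Relevant."""
    relevant = set()
    for changed_files in updates:
        # Every pair of files changed in the same update is Relevant.
        for pair in combinations(sorted(set(changed_files)), 2):
            relevant.add(pair)
    labeled = {}
    for pair in combinations(sorted(all_files), 2):
        # Closed world assumption: pairs never updated together
        # are treated as Not-Relevant.
        labeled[pair] = "Relevant" if pair in relevant else "Not-Relevant"
    return labeled

# Hypothetical update history: each inner list is one update.
updates = [["a", "b"], ["b", "c", "a"]]
labels = label_file_pairs(updates, ["a", "b", "c", "d"])
```

Because all pairs are enumerated, the Not-Relevant class grows quadratically with the number of files, which is where the heavy class imbalance on the next slide comes from.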

Training and Testing Sets

| | #Relevant | #Not Relevant | #Relevant/#Not Relevant |
|---|---|---|---|
| All | 4,547 | 1,226,827 | 0.00371 |
| Training | 3,031 | 817,884 | 0.00371 |
| Testing | 1,516 | 408,943 | 0.00371 |

File pairs are split into 2/3 training set and 1/3 testing set

The 0.00371 values mean the data is skewed

• Skewness ratio = 1/0.00371 ≈ 270

To avoid high false-negative rates, we learn from less skewed, stratified, training sets with skewness ranging up to 50

We test on the full test set with original skewness of 270
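The skewness arithmetic, and one way to build a less skewed training set, can be sketched as below. The slides do not say how the reduced training sets were drawn, so random under-sampling of the Not-Relevant class is an assumption here, and the function names are hypothetical.

```python
import random

def skewness(n_relevant, n_not_relevant):
    """Number of Not-Relevant examples per Relevant example."""
    return n_not_relevant / n_relevant

def downsample(relevant, not_relevant, target_ratio, seed=0):
    """Keep all Relevant examples and randomly keep at most
    target_ratio Not-Relevant examples per Relevant one (assumed method)."""
    rng = random.Random(seed)
    keep = min(len(not_relevant), int(target_ratio * len(relevant)))
    return list(relevant), rng.sample(list(not_relevant), keep)

# Counts taken from the table above.
overall = skewness(4547, 1226827)

# Toy data: 10 Relevant and 1000 Not-Relevant examples, reduced to ratio 50.
rel, not_rel = downsample(range(10), range(1000), target_ratio=50)
```

Only the training set is reduced in this way; the test set keeps its original skewness so that reported results reflect the real class distribution.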

Feature Sets

File pairs are described in terms of the following feature sets:

• Syntactic features extracted from the source code
  E.g. routines called, files included, etc.

• Textual features
  Source code comments
  Problem report words

These features are represented as vectors

Syntactic Attributes

| Attribute Name | Attribute Type | Attribute Name | Attribute Type |
|---|---|---|---|
| Number of shared files included * | Integer | Number of Defined/Directly Referred routines * | Integer |
| Directly include you * | Boolean | Number of Defined/FSRRG Referred routines * | Integer |
| Directly include me * | Boolean | Number of Shared routines directly referred * | Integer |
| Transitively include you | Boolean | Number of Shared routines among All routines referred * | Integer |
| Transitively include me | Boolean | Number of nodes shared by FSRRGs * | Integer |
| Number of files including us | Integer | Common prefix length * | Integer |
| Number of shared directly referred Types * | Integer | Same File Name * | Boolean |
| Number of shared directly referred non type data items * | Integer | ISU File Extension * | Text |
| Number of Directly Referred/Defined routines * | Integer | OSU File Extension * | Text |
| Number of FSRRG Referred/Defined routines * | Integer | Same extension * | Boolean |

Text based features

Associate a bag of words and subsequently a feature vector to each file

Create a file pair bag of words by finding the intersection of feature vectors (commonality between feature vectors)

If a feature value is unknown, the intersection can be interpreted as either false or, should the learning method support features with unknown values, unknown

Creating Bag of Words Vectors

[Figure: the bag of words of each file (W_f1, W_f2, W_f3) is matched against the global word list G to produce one feature vector per file (V_f1, V_f2, V_f3).]

f1: w1 w4 w6 w4

f2: w2 w4 w3 wN

f3: (no words shown)

G:    w1 w2 w3 w4 w5 w6 … wN

V_f1: t f f t f t … f

V_f2: f f t t f f … t

V_f3: ? ? ? ? ? ? … ?

Problem report bag of words

[Figure: each file is linked to its associated problem reports, and the bags of words of those reports are combined into the bag of words of the file.]

f3: P1, P3

f5: P1, P5, P10

W_P1: wf wu …

W_P3: wz we wd wt …

W_P5: wi wj …

W_P10: wj wd wz …

Combine: W_f3 = wz we wd wt wf wu …

Combine: W_f5 = wi wj wf wu wd wz …

File pair BOW feature Vector

We find the intersection of the two BOW feature vectors corresponding to the files in a pair to create a new BOW feature vector for the file pair.

Possible feature values in the result are true, false, or unknown
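The intersection with unknown values can be sketched as below. This is an illustration under stated assumptions, not the implementation from the study: `None` stands for unknown, a file with no bag of words gets an all-unknown vector, and any unknown operand makes the result unknown (or false when `unknown_as_false` is set). The function names and the sample vocabulary are hypothetical.

```python
def file_vector(words, vocabulary):
    """One true/false entry per vocabulary word; all unknown (None)
    when the file has no bag of words at all."""
    if words is None:
        return [None] * len(vocabulary)
    present = set(words)
    return [w in present for w in vocabulary]

def pair_vector(v1, v2, unknown_as_false=False):
    """Intersect two file vectors feature by feature."""
    result = []
    for a, b in zip(v1, v2):
        if a is None or b is None:
            # Assumed rule: an unknown operand yields unknown,
            # or false if the learner cannot handle unknown values.
            result.append(False if unknown_as_false else None)
        else:
            result.append(a and b)
    return result

vocabulary = ["w1", "w2", "w3", "w4", "w5", "w6"]
v_f1 = file_vector(["w1", "w4", "w6", "w4"], vocabulary)
v_f2 = file_vector(["w2", "w3", "w4"], vocabulary)
v_f3 = file_vector(None, vocabulary)

pair_12 = pair_vector(v_f1, v_f2)
pair_13 = pair_vector(v_f1, v_f3)
pair_13_false = pair_vector(v_f1, v_f3, unknown_as_false=True)
```

Here only w4 is shared by f1 and f2, so it is the single true feature of that pair, while every feature of the pair involving f3 is unknown.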

ROC (Receiver Operating Characteristic) Curves

[Figure: ROC space with FP Rate (0 to 1) on the horizontal axis and TP Rate (0 to 1) on the vertical axis, showing two classifier points, A and B.]

ROC curves allow one to compare the performance of classifiers.

The closer a point (corresponding to a classifier) is to the point (0,1), marked * in the plot, the better.

A point to the north west of another point is said to dominate the second point.
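Both comparisons can be written down directly. In this sketch a classifier is a hypothetical `(fp_rate, tp_rate)` pair on a 0 to 1 scale, "north west" is read as no higher false positive rate and no lower true positive rate, and the coordinates of `a` and `b` are made up for illustration.

```python
import math

def dominates(p, q):
    """True if point p lies to the north west of point q in ROC space."""
    return p[0] <= q[0] and p[1] >= q[1] and p != q

def distance_to_ideal(p):
    """Euclidean distance from p to the ideal point (0, 1)."""
    return math.hypot(p[0] - 0.0, p[1] - 1.0)

# Hypothetical classifiers as (fp_rate, tp_rate).
a = (0.1, 0.8)
b = (0.3, 0.6)

a_dominates_b = dominates(a, b)
closer = min([a, b], key=distance_to_ideal)
```

Dominance is only a partial order: a classifier with both a higher true positive rate and a higher false positive rate than another neither dominates it nor is dominated by it.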

Contingency Table

| | Classified R | Classified NR | |
|---|---|---|---|
| Actually R | a | b | a+b |
| Actually NR | c | d | c+d |
| | a+c | | |

Precision_R: a/(a+c)

Recall_R: a/(a+b)

True positive rate_R: Recall_R

False positive rate_R: c/(c+d)
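These four measures follow directly from the cell counts. The sketch below uses the cell names a, b, c, d from the table; the function name and the sample counts are made up for illustration and are not results from the study.

```python
def relevant_class_metrics(a, b, c, d):
    """Measures for the Relevant class from the contingency table cells:
    a = R classified R, b = R classified NR,
    c = NR classified R, d = NR classified NR."""
    recall = a / (a + b)
    return {
        "precision": a / (a + c),
        "recall": recall,
        "tp_rate": recall,           # same quantity as recall
        "fp_rate": c / (c + d),
    }

# Made-up counts for illustration only.
m = relevant_class_metrics(a=8, b=2, c=4, d=86)
```

With heavily skewed data d is very large, so the false positive rate can look small even when precision is modest, which is why both ROC plots and precision/recall plots appear in the following slides.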

ROC Curves of Syntactic (bottom) and Text-Based Classifiers

[Figure: ROC plot of true positive rate (axis 35 to 95) against false positive rate (axis 0 to 12), with curves for Syntactic, Source Comments, and Problem Reports.]

Results Derived from the Last Slide

Classifiers created from text based features outperform classifiers created from syntactic features

Classifiers created from problem report features generate the best results

Next slides: Using problem report features, we can achieve a precision of 62% and a recall of 86%

Comparing the Precision of Syntactic and Text-Based Classifiers

[Figure: precision (axis 0 to 70) against Not-Relevant/Relevant ratio (axis 0 to 50), with curves for Syntactic, Source Comments, and Problem Reports.]

Comparing the Recall of Syntactic and Text-Based Classifiers

[Figure: recall (axis 30 to 100) against Not-Relevant/Relevant ratio (axis 0 to 50), with curves for Syntactic, Source Comments, and Problem Reports.]

Effect of Unknown Values on Comment Based Classifiers

Effect of Unknown Values on Problem Report Based Classifiers

Combining Feature Sets

We have also performed experiments by combining:

• Syntactic feature set with each text based feature set

• The two text based feature sets

We only used text based features that appeared in the decision trees generated by text based feature sets in the previous experiments

Combining the two text based feature sets did not improve already existing results and is not reported here

Combining Syntactic and Source File Comment Features

[Figure: ROC plot of true positive rate (axis 30 to 90) against false positive rate (axis 0 to 12), with curves for Syntactic, Source Comments, and Jux. Syntact+ Source Comments.]

Combining Syntactic and Problem Report Features

[Figure: ROC plot of true positive rate (axis 84 to 98) against false positive rate (axis 0 to 7), with curves for Problem Report and Jux. Syntactic + Used Problem Report.]

Results of Combining Feature Sets

Classifiers generated from concatenation of syntactic and text based features generally outperform classifiers generated from the individual feature sets.

The improvement is more pronounced in the case of combining comment word and syntactic feature sets.

• But since problem report feature sets are fairly good on their own, improvements will be more difficult to achieve.

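Top features in problem report decision trees

| Level | Frequency | Feature | Level | Frequency | Feature |
|---|---|---|---|---|---|
| 0 | 9 | hogger | 1 | 10 | Same File Name |
| 0 | 3 | rad | 1 | 4 | acd |
| 0 | 2 | play | 1 | 4 | ce..ss |
| 0 | 2 | trunk | 1 | 4 | e&m |
| 0 | 1 | fd | 1 | 2 | group |
| 0 | 1 | ver | 1 | 1 | appear_show |
| | | | 1 | 1 | call |
| | | | 1 | 1 | display |
| | | | 1 | 1 | extension |
| | | | 1 | 1 | internal |
| | | | 1 | 1 | mitel |
| | | | 1 | 1 | ms2008 |
| | | | 1 | 1 | per |
| | | | 1 | 1 | set |
| | | | 1 | 1 | single |
| | | | 1 | 1 | ss430 |
| | | | 1 | 1 | tapi |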

Combining Text Based Features (Juxt. used attr.)

Combining Text Based features (union)

Combining Text Based features (union used attr.)

Results of Combining Text Based Feature Sets

Juxtaposing used source file comment features with problem report features creates classifiers that always dominate the file comment feature models. In the case of problem report features, the degradation at higher imbalance ratios offsets the improvements at lower ratios. The size of a

The union of source file comment features and problem report features creates classifiers that always dominate the file comment feature models but perform worse than the problem report feature models. This is true both in the case of the union of all features and the union of used features.

Most accurate paths in training ratio 50 problem report based classifiers

| Path | # Examples |
|---|---|
| hogger & ce..ss | 935 |
| ~hogger & Same File Name & fd & ~fac | 153/2 |
| ~hogger & ~Same File Name & alt & corrupt | 103/2 |
| ~hogger & ~Same File Name & ~alt & zone & command | 99/3 |
| ~hogger & ~Same File Name & ~alt & ~zone & msx & debug | 76/1 |

Learning with cost associated with misclassifying Relevant as Not Relevant

[Figure: ROC plot of True Positive (axis 86 to 94) against False Positive (axis 0 to 1.2), with one curve each for Cost 1, Cost 2, Cost 5, Cost 10, Cost 15, and Cost 20.]

Learning with cost associated with misclassifying Relevant as Not Relevant (Continued)

[Figure: precision (axis 20 to 65) against Not-Relevant/Relevant ratio (axis 30 to 50), with one curve each for Cost 1, Cost 2, Cost 5, Cost 10, Cost 15, and Cost 20.]

Learning with cost associated with misclassifying Relevant as Not Relevant (Continued)

[Figure: recall (axis 86 to 94) against Not-Relevant/Relevant ratio (axis 30 to 50), with one curve each for Cost 1, Cost 2, Cost 5, Cost 10, Cost 15, and Cost 20.]

Summary of the above experiments

True positive rate improves at the expense of false positive rate

Recall of Relevant improves at the expense of the precision

Cost factors above 5 will deteriorate the results in most cases

This approach is good if you care to find as many Relevant files as possible and can afford sifting through extra Not Relevant files

Learning with cost associated with misclassifying Not Relevant as Relevant

[Figure: ROC plot of True Positive Rate (axis 0 to 90) against False Positive Rate (axis 0 to 0.35), with one curve each for Cost 1, Cost 2, Cost 5, Cost 10, Cost 15, and Cost 20.]

Learning with cost associated with misclassifying Not Relevant as Relevant (Continued)

[Figure: precision (axis 0 to 100) against Not-Relevant/Relevant ratio (axis 30 to 50), with one curve each for Cost 1, Cost 2, Cost 5, Cost 10, Cost 15, and Cost 20.]

Learning with cost associated with misclassifying Not Relevant as Relevant (Continued)

[Figure: recall (axis 0 to 90) against Not-Relevant/Relevant ratio (axis 30 to 50), with one curve each for Cost 1, Cost 2, Cost 5, Cost 10, Cost 15, and Cost 20.]

Summary of the above experiments

False positive rate improves at the expense of true positive rate

Precision of Relevant improves at the expense of the recall

Cost factors above 5 will deteriorate the results in most cases

This approach is good if you care to be correct as much as possible when you suggest a file is Relevant and can afford missing some of the Relevant files

Cost sensitive error

| | Classified R | Classified NR | |
|---|---|---|---|
| Actually R | a | C_{R->NR}*b | a+b |
| Actually NR | C_{NR->R}*c | d | c+d |
| | a+c | | |

C_{R->NR} = False Negative Cost

C_{NR->R} = False Positive Cost

Error = (C_{R->NR}*b + C_{NR->R}*c)/(a + C_{R->NR}*b + C_{NR->R}*c + d)
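The error formula can be checked numerically with a few lines of Python. The function and parameter names are hypothetical (`fn_cost` stands for the false negative cost and `fp_cost` for the false positive cost), and the counts are made up for illustration.

```python
def cost_sensitive_error(a, b, c, d, fn_cost=1.0, fp_cost=1.0):
    """Weighted error: misclassified examples are multiplied by their
    cost in both the numerator and the denominator."""
    weighted_errors = fn_cost * b + fp_cost * c
    return weighted_errors / (a + weighted_errors + d)

# Made-up counts for illustration only.
plain = cost_sensitive_error(a=8, b=2, c=4, d=86)                 # both costs 1
fn_heavy = cost_sensitive_error(a=8, b=2, c=4, d=86, fn_cost=5)   # misses cost 5x
```

With both costs equal to 1 the measure reduces to the ordinary error rate (b + c)/(a + b + c + d); raising one cost makes the corresponding kind of mistake weigh more heavily in the comparison.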

Cost sensitive error comparison

Cost sensitive error comparison (rotated)

Cost sensitive error comparison (High cost factors)

Conclusions

From past software update records we learned a relation that could be used to predict whether a change in one source file may require a change in another source file.

Textual feature sets show the most promise

• especially problem report feature sets.

The precision and recall values of the problem report based classifier (skewness ratio 50) suggest this classifier could be deployed in the field.

We can further improve some of the results by combining syntactic and textual feature sets.

Depending on user needs one can generate models that are more sensitive to certain misclassification errors

Future Work

Experimenting with

• Other feature sets

• Alternative learning methodologies

Using the learned co-update relation in other applications, such as subsystem clustering.

Further cost-benefit analysis

Thank You!

Questions?

Suggestions?