Massive Data Sets and Information Theory

Ziv Bar-Yossef

Department of Electrical Engineering, Technion

What are Massive Data Sets?

Technology

• The World-Wide Web

• IP packet flows

• Phone call logs

Science

• Astronomical sky surveys

• Weather data

Business

• Credit card transactions

• Billing records

• Supermarket sales

Nontraditional Challenges

Traditionally

Cope with the complexity of the problem

Massive Data Sets

Cope with the complexity of the data

New challenges

• Restricted access to the data

• Not enough time to read the whole data

• Tiny fraction of the data can be held in main memory

Computing over Massive Data Sets

Data x (n is very large) → Computer Program → Approximation of f(x)

• Approximation of f(x) is sufficient

• Program can be randomized

Examples

• Mean

• Parity

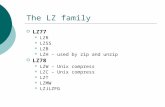

Models for Computing over Massive Data Sets

• Sampling

• Data Streams

• Sketching

Sampling

Query a few data items

Data x (n is very large) → Computer Program → Approximation of f(x)

Examples

• Mean: O(1) queries

• Parity: n queries
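The sampling model can be illustrated with a minimal Python sketch. It assumes the data items lie in [0, 1] and that an additive approximation of the mean is acceptable; under those assumptions the number of queries depends on the desired accuracy but not on n. The function name `estimate_mean` and the test data are hypothetical, chosen only for illustration.

```python
import random

def estimate_mean(data, num_queries, rng):
    """Estimate the mean of `data` from `num_queries` uniformly random queries."""
    n = len(data)
    total = 0.0
    for _ in range(num_queries):
        i = rng.randrange(n)   # one query: read a single data item
        total += data[i]
    return total / num_queries

rng = random.Random(0)
data = [rng.random() for _ in range(100_000)]   # assumed: values in [0, 1]
true_mean = sum(data) / len(data)
approx = estimate_mean(data, 2_000, rng)        # 2,000 queries instead of 100,000 reads
```

Parity behaves differently: flipping any single unqueried bit flips the answer, which is why the slide lists n queries for it.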

Data Streams

Stream through the data; use limited memory

Data x (n is very large) → Computer Program → Approximation of f(x)

Examples

• Mean: O(1) memory

• Parity: 1 bit of memory
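As a rough illustration of the data stream model, the following Python sketch makes one pass over a stream of bits while keeping only a running count, a running sum, and a single parity bit. The function name is hypothetical, and "O(1) memory" is read here as a constant number of counters.

```python
def stream_mean_and_parity(stream):
    """One pass over a stream of 0/1 items using a constant number of variables."""
    count = 0         # number of items seen so far
    running_sum = 0   # sum of the items seen so far
    parity = 0        # a single bit: XOR of the items seen so far
    for x in stream:
        count += 1
        running_sum += x
        parity ^= x & 1
    mean = running_sum / count if count else 0.0
    return mean, parity

mean, parity = stream_mean_and_parity(iter([1, 0, 1, 1]))
```

The stream is consumed through an iterator, so the program never holds more than one item at a time.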

Sketching

Data1, Data2 (n is very large)

Compress each data segment into a small “sketch”: Data1 → Sketch1, Data2 → Sketch2

Compute over the sketches: Sketch1, Sketch2 → Approximation of f(Data1, Data2)

Examples

• Equality: O(1) size sketch

• Hamming distance: O(1) size sketch

• Lp distance (p > 2): $\Omega(n^{1-2/p})$ size sketch
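One standard way to obtain a constant-size sketch for Equality is sketched below in Python; it is not necessarily the construction the slides have in mind. It assumes both parties share a random seed (public randomness) and hold bit vectors of the same length. Each sketch bit is the inner product mod 2 of the data with a shared random vector, and the name `equality_sketch` and the parameter `sketch_bits` are hypothetical.

```python
import random

def equality_sketch(bits, seed, sketch_bits=32):
    """Sketch = inner products (mod 2) of `bits` with shared random 0/1 vectors."""
    rng = random.Random(seed)   # assumed shared randomness: same seed on both sides
    sketch = []
    for _ in range(sketch_bits):
        r = [rng.getrandbits(1) for _ in bits]
        sketch.append(sum(b & s for b, s in zip(bits, r)) % 2)
    return tuple(sketch)

data1 = [1, 0, 1, 1, 0, 0, 1, 0]
data2 = list(data1)
data3 = [1, 0, 1, 1, 0, 1, 1, 0]   # differs from data1 in one position

same = equality_sketch(data1, seed=7) == equality_sketch(data2, seed=7)
different = equality_sketch(data1, seed=7) == equality_sketch(data3, seed=7)
```

Equal inputs always produce equal sketches. Unequal inputs agree on each sketch bit with probability 1/2, so they collide on all `sketch_bits` bits with probability 2^(-sketch_bits), independent of n.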

Algorithms for Massive Data Sets

Sampling

• Mean and other moments

• Median and other quantiles

• Volume estimations

• Histograms

• Graph problems

• Low-rank matrix approximations

Data Streams

• Frequency moments

• Distinct elements

• Functional approximations

• Geometric problems

• Graph problems

• Database problems

Sketching

• Equality

• Hamming distance

• Edit distance

• Lp distance

Our goal

Study the limits of computing over massive data sets

• Query complexity lower bounds

• Data stream memory lower bounds

• Sketch size lower bounds

Main Tools: Communication complexity, information theory, statistics

Communication Complexity [Yao 79]

[Figure: Alice, Bob, and a Referee; messages m1, m2, m3, m4]

$\Pi(a,b)$: “transcript”

$\mathrm{cost}(\Pi) = \sum_i |m_i|$

$CC(f) = \min_{\Pi \text{ computes } f} \mathrm{cost}(\Pi)$

Communication Complexity View of Sampling

[Figure: Alice, Bob, and a Referee; messages i1, X[i1], i2, X[i2]; output: approximation of f(x)]

$\Pi(x)$: “transcript”

$\mathrm{cost}(\Pi)$ = # of queries

$QC(f) = \min_{\Pi \text{ computes } f} \mathrm{cost}(\Pi)$

Information Complexity [Chakrabarti, Shi, Wirth, Yao 01]

$\mu$: distribution on inputs to f

X: random variable with distribution $\mu$

$\mathrm{icost}(\Pi) = I(X; \Pi(X))$

$IC(f) = \min_{\Pi \text{ computes } f} \mathrm{icost}(\Pi)$

Information Complexity: Minimum amount of information a protocol that computes f has to reveal about its inputs

Note: For some functions, any protocol must reveal much more information about X than just f(X).

CC Lower Bounds via IC Lower Bounds

Useful properties of information complexity:

• Lower bounds communication complexity

• Amenable to “direct sum” decompositions

Framework for bounding CC via IC

1. Find an appropriate “hard input distribution” $\mu$.

2. Prove a lower bound on IC(f):

   a. Decomposition: decompose IC(f) into “simple” information quantities.

   b. Basis: prove a lower bound on the simple quantities.

Applications of Information Complexity

• Data streams [Bar-Yossef, Jayram, Kumar, Sivakumar 02] [Chakrabarti, Khot, Sun 03]

• Sampling [Bar-Yossef 03]

• Communication complexity and decision tree complexity [Jayram, Kumar, Sivakumar 03]

• Quantum communication complexity [Jain, Radhakrishnan, Sen 03]

• Cell probe [Sen 03] [Chakrabarti, Regev 04]

• Simultaneous messages [Chakrabarti, Shi, Wirth, Yao 01]

The “Election Problem”

• Input: a sequence x of n votes to k parties

Vote distribution f(x): 7/18, 4/18, 3/18, 2/18, 1/18, 1/18 (n = 18, k = 6)

• Want to get D s.t. $\|D - f(x)\| < \varepsilon$.

Theorem: $QC(f) = \Omega(k/\varepsilon^2)$
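For intuition about the problem itself (not the lower bound), here is a minimal Python sketch of the natural sampling algorithm: query random votes and output the empirical distribution. It uses the slide's example with n = 18 and k = 6. The choice of the L1 norm for ||·|| is an assumption, since the slide does not name the norm, and all function names are hypothetical.

```python
import random
from collections import Counter

def estimate_vote_distribution(votes, k, num_queries, rng):
    """Empirical vote distribution from `num_queries` uniformly random queries."""
    counts = Counter(votes[rng.randrange(len(votes))] for _ in range(num_queries))
    return [counts[party] / num_queries for party in range(k)]

def l1_distance(p, q):
    """L1 distance between two distributions (assumed norm)."""
    return sum(abs(a - b) for a, b in zip(p, q))

# The slide's example: parties 0..5 receive 7, 4, 3, 2, 1, 1 of the 18 votes.
votes = [0] * 7 + [1] * 4 + [2] * 3 + [3] * 2 + [4] * 1 + [5] * 1
k = 6
true_dist = [votes.count(party) / len(votes) for party in range(k)]

rng = random.Random(1)
estimate = estimate_vote_distribution(votes, k, 20_000, rng)
error = l1_distance(estimate, true_dist)
```

The theorem says that no sampling algorithm can do substantially better than on the order of $k/\varepsilon^2$ queries.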

Sampling Lower Bound

Lemma 1 (Normal form lemma):

WLOG, in any protocol that computes the election problem, the queries are uniformly distributed and independent.

$\Pi(x)$: transcript of a full protocol

$\pi(x)$: transcript of a single random query “protocol”

If $\mathrm{cost}(\Pi) = q$, then $\Pi(x) = (\pi(x), \ldots, \pi(x))$ (q times)

Sampling Lower Bound (cont.)

Lemma 2 (Decomposition lemma):

For any X, $I(X; \Pi(X)) \le q \cdot I(X; \pi(X))$.

$I(X; \Pi(X))$: information cost of $\Pi$ w.r.t. X

$I(X; \pi(X))$: information cost of $\pi$ w.r.t. X

Therefore, $q \ge I(X; \Pi(X)) / I(X; \pi(X))$.

Combinatorial Designs

A family of subsets B1,…,Bm of a universe U s.t.

1. Each of them constitutes half of U.

2. The intersection of each two of them is relatively small.

[Figure: subsets B1, B2, B3 inside the universe U]

Fact: There exist designs of size exponential in |U|.

(Constant rate, constant relative minimum distance binary error-correcting codes).
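The two design properties can be checked on a small concrete family. The Python sketch below builds one from Hadamard-style parity sets: each subset is half of U, and every two subsets share exactly a quarter of U. This is only an illustration. It has |U| - 1 subsets, not the exponential size the Fact refers to, and reading "relatively small" as a quarter of U is an assumption. The function name `hadamard_design` is hypothetical.

```python
from itertools import combinations

def hadamard_design(r):
    """Subsets B_a = {u : <a, u> = 1 mod 2} of U = {0, ..., 2^r - 1}, for a != 0."""
    size = 2 ** r
    family = []
    for a in range(1, size):
        # bin(a & u).count("1") % 2 is the inner product of a and u over GF(2)
        family.append(frozenset(u for u in range(size)
                                if bin(a & u).count("1") % 2 == 1))
    return family

family = hadamard_design(4)   # |U| = 16
subset_sizes = {len(b) for b in family}
intersection_sizes = {len(a & b) for a, b in combinations(family, 2)}
```

Here `subset_sizes` contains only |U|/2 and `intersection_sizes` contains only |U|/4.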

Hard Input Distribution for the Election Problem

Let B1,…,Bm be a family of subsets of {1,…,k} that form a design of size $m = 2^{\Omega(k)}$.

X is uniformly chosen among x1,…,xm, where in xi:

[Figure: the parties partitioned into $B_i$ and $B_i^c$]

• $\frac{1}{2} + \varepsilon$ of the votes are split among parties in $B_i$.

• $\frac{1}{2} - \varepsilon$ of the votes are split among parties in $B_i^c$.

1. Unique decoding: For every $i \ne j$, $\|f(x_i) - f(x_j)\| > 2\varepsilon$. Therefore, $I(X; \Pi(X)) \ge H(X) = \Omega(k)$.

2. Low diameter: $I(X; \pi(X)) = O(\varepsilon^2)$.
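Combining the two properties with the decomposition lemma gives the sampling lower bound. The chain below is a sketch of that final step, writing $\Pi$ for the full q-query protocol and $\pi$ for the single random query protocol (this notation is assumed here):

```latex
q \;\ge\; \frac{I(X;\Pi(X))}{I(X;\pi(X))}
  \;\ge\; \frac{\Omega(k)}{O(\varepsilon^{2})}
  \;=\; \Omega\!\left(\frac{k}{\varepsilon^{2}}\right)
```

The numerator is bounded below by unique decoding and the denominator is bounded above by low diameter.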

Conclusions

Information theory plays an increasingly major role in complexity theory.

• More applications of information complexity?

• Can we use deeper information theory in complexity theory?

Thank You