M-Machine and Grids Parallel Computer Architectures

Navendu Jain

Readings

- The M-Machine multicomputer. Marco Fillo et al., MICRO 1995
- Exploiting fine-grain thread level parallelism on the MIT multi-ALU processor. Keckler et al., MICRO 1998
- A design space evaluation of grid processor architectures. Nagarajan et al., MICRO 2001

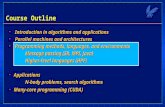

Outline

- The M-Machine Multicomputer
- Thread Level Parallelism on M-Machine
- Grid Processor Architectures
- Review and Discussion

The M-Machine Multicomputer

Design Motivation

- Achieve higher throughput of memory resources
- Increase chip area devoted to processors
- Arithmetic to bandwidth ratio of 12 operations/word
- Minimize global communication (local sync.)
- Faster execution of fixed size problems
- Easier programmability of parallel computers
- Incremental approach

Architecture

- A bi-directional 3-D mesh network of multi-threaded processing nodes
- Each chip comprises a multi-ALU processor (MAP) and 128 KB of on-chip sync. DRAM
- A user-accessible message passing system (SEND)
- Single global virtual address space
- Target CLK 100 MHz (control logic 40 MHz)
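To make the 3-D mesh topology concrete, here is a minimal sketch of how a node's neighbors and the minimum hop count between two nodes could be computed. It assumes a mesh without wraparound links and integer `(x, y, z)` node coordinates; the names `neighbors`, `hop_count`, and `dims` are hypothetical and do not come from the M-Machine papers.

```python
from typing import Iterator, Tuple

Coord = Tuple[int, int, int]


def neighbors(node: Coord, dims: Coord) -> Iterator[Coord]:
    """Yield the mesh neighbors of `node`.

    Interior nodes have six neighbors (one per direction on each axis);
    nodes on a face, edge, or corner have fewer because this sketch
    assumes no wraparound links.
    """
    for axis in range(3):
        for step in (-1, 1):
            nxt = list(node)
            nxt[axis] += step
            if 0 <= nxt[axis] < dims[axis]:
                yield (nxt[0], nxt[1], nxt[2])


def hop_count(src: Coord, dst: Coord) -> int:
    """Minimum number of links between two nodes (Manhattan distance)."""
    return sum(abs(a - b) for a, b in zip(src, dst))
```

For example, in a hypothetical 4 x 4 x 4 machine, `hop_count((0, 0, 0), (3, 2, 1))` is the sum of the per-axis distances, which is why keeping communicating threads on nearby nodes reduces global traffic.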

Multi-ALU processor (MAP)

A MAP chip comprises:

- Three 64-bit 3-issue clusters
- 2-way interleaved on-chip cache
- A memory switch
- A cluster switch
- External memory interface
- On-chip network interfaces and routers

A MAP Cluster

- 64-bit three-issue pipelined processor
- 2 integer ALUs
- 1 floating-point ALU
- Register files
- 4 KB instruction cache
- A MAP instruction has 1, 2, or 3 operations

MAP Chip Die (18 mm side, 5M transistors)

Exploiting Parallelism on M-Machine

Threads

- Exploit ILP both within and across the clusters
- Horizontal Threads (H-Threads)
  - Instruction level parallelism
  - Executes on a single MAP cluster
  - 3-wide instruction stream
  - Communication/synchronization through messages/registers/memory
  - Max. 6 H-Threads can be interleaved dynamically on a cycle-by-cycle basis
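The cycle-by-cycle interleaving of H-Threads can be illustrated with a small simulation. This is only a sketch: the slide says the interleaving is dynamic but does not state the selection policy, so the round-robin choice below is an assumption, and `issue_trace` and its `ready` input are hypothetical names.

```python
from typing import List, Optional

MAX_SLOTS = 6  # from the slide: at most 6 H-Threads are interleaved


def issue_trace(ready: List[List[bool]]) -> List[Optional[int]]:
    """Pick one H-Thread slot to issue on each cycle.

    ready[c][s] is True when slot s has an instruction whose operands
    are available on cycle c. The policy is round-robin starting after
    the slot that issued most recently (an assumed policy). A cycle in
    which no slot is ready yields None (a pipeline bubble).
    """
    trace: List[Optional[int]] = []
    last = -1
    for cycle_ready in ready:
        n = len(cycle_ready)
        if n > MAX_SLOTS:
            raise ValueError("more thread slots than the cluster supports")
        chosen = None
        for offset in range(1, n + 1):
            slot = (last + offset) % n
            if cycle_ready[slot]:
                chosen = slot
                break
        if chosen is not None:
            last = chosen
        trace.append(chosen)
    return trace
```

The point of the sketch is that a stalled thread (for example, one waiting on memory) simply is not selected, so other resident threads fill the issue slots instead of leaving the cluster idle.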

Threads (contd.)

- Vertical Threads (V-Threads)
  - Thread level parallelism (a standard process)
  - Contains up to 4 H-Threads (one per cluster)
  - Flexibility of scheduling (compiler/run-time)
  - Communication/synchronization through registers
  - At most 6 resident V-Threads
    - 4 user slots, 1 event slot, 1 exception slot

Concurrency Model

Three Levels of Parallelism

- Instruction Level Parallelism (1 instruction)
  - VLIW, superscalar processors
  - Issues: control flow, data dependency, scalability
- Thread Level Parallelism (~1000 instructions)
  - Chip multiprocessors
  - Issues: limited coarse TLP, inner cores non-optimal
- Fine-grain Parallelism (~50 to 1000 instructions)

Mapping

| Granularity | Program | Architecture |
| --- | --- | --- |
| ILP | Sub-expressions | Cluster |
| Fine-grain | Inner loops, sub-routines | MAP chip |
| TLP | Outer loops, co-routines | Nodes |

Fine-grain overheads

- Thread creation (11 cycles, hfork)
- Communication
  - Register-register reads/writes
  - Message passing/on-chip cache
- Synchronization
  - Blocking on a register (full/empty bit)
  - Barrier instruction (cbar)
  - Memory (sync bit)
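Blocking on a register's full/empty bit can be modeled in software as follows. This is a minimal sketch, not the hardware mechanism: it uses Python's `threading.Condition` to stand in for the scoreboard bit, and the class name `FullEmptyRegister` and its methods are hypothetical. The assumed semantics are that a read of an empty register blocks, a write marks the register full, and the register can be explicitly marked empty again.

```python
import threading


class FullEmptyRegister:
    """Software model of a register guarded by a full/empty bit."""

    def __init__(self):
        self._cond = threading.Condition()
        self._full = False
        self._value = None

    def write(self, value):
        """Store a value, mark the register full, and wake blocked readers."""
        with self._cond:
            self._value = value
            self._full = True
            self._cond.notify_all()

    def read(self):
        """Block until the register is full, then return its value."""
        with self._cond:
            while not self._full:
                self._cond.wait()
            return self._value

    def empty(self):
        """Mark the register empty so later reads block again."""
        with self._cond:
            self._full = False


def demo():
    """A consumer thread blocks on the register until the producer writes."""
    reg = FullEmptyRegister()
    results = []
    consumer = threading.Thread(target=lambda: results.append(reg.read() + 1))
    consumer.start()
    reg.write(41)
    consumer.join()
    return results
```

Here the consumer needs no separate lock or flag: the data transfer and the synchronization happen in the same operation, which is what keeps the overhead low for fine-grain threads.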

Grid Processor Architecture

Design Motivation

- Continued scaling of the clock rate
- Scalability of the processing core
- Higher ILP: instruction throughput (IPC)
- Mitigate global wire and delay overheads
- Closer coupling of architecture and compiler

Architecture

- An interconnected 2-D network of ALU arrays
- Each node has an instruction buffer (IB) and an execution unit
- A single control thread maps instructions to nodes
- Block-Atomic Execution Model
  - Mapping blocks of statically scheduled instructions
  - Dynamic execution in data-flow order
  - Forwarding temp. values to the consumer ALUs
  - Critical path scheduled along shortest physical path
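The idea of executing a statically mapped block in data-flow order can be sketched as below. This is an illustrative model only: it ignores physical placement and routing delays, and the function `run_block`, the `OPS` table, and the instruction encoding are hypothetical, not taken from the grid processor paper. Each instruction fires as soon as all of its operands have arrived, and its result is forwarded directly to its consumers.

```python
import operator
from collections import deque

# Hypothetical operation set for the sketch.
OPS = {"add": operator.add, "sub": operator.sub, "mul": operator.mul}


def run_block(instrs, inputs):
    """Execute one block in data-flow order.

    instrs maps an instruction name to (op, [source names]); a source is
    either a block input or the name of another instruction in the block.
    Returns (values, order), where order is the sequence in which the
    instructions fired.
    """
    values = dict(inputs)
    consumers = {}  # producer name -> instructions waiting on it
    pending = {}    # instruction name -> operands not yet arrived
    for name, (_, srcs) in instrs.items():
        missing = [s for s in srcs if s not in values]
        pending[name] = len(missing)
        for s in missing:
            consumers.setdefault(s, []).append(name)

    ready = deque(n for n, k in pending.items() if k == 0)
    order = []
    while ready:
        name = ready.popleft()
        op, srcs = instrs[name]
        values[name] = OPS[op](*(values[s] for s in srcs))
        order.append(name)
        # Forward the result to every consumer; a consumer becomes
        # ready once its last operand has arrived.
        for c in consumers.get(name, []):
            pending[c] -= 1
            if pending[c] == 0:
                ready.append(c)
    return values, order
```

For `(a + b) * (a - b)`, listing the multiply first in `instrs` does not matter: the add and subtract fire first because their operands are block inputs, and the multiply fires only after both results reach it.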

GPA Architecture

Example: Block-Atomic Mapping

Implementation

- Instruction fetch and map
  - Predicated hyper-block, move instructions
- Execution: control logic
  - Operand routing: max 3 destinations, split instructions
- Hyper-block control
  - Predication (execute-all approach), cmove instructions
  - Block-commit
  - Block-stitching
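The execute-all approach to predication mentioned above can be sketched in a few lines. This is a generic illustration of if-conversion with a conditional move, not the grid processor's actual instruction encoding; `cmove`, `abs_diff_branchy`, and `abs_diff_predicated` are hypothetical names.

```python
def cmove(pred, if_true, if_false):
    """Conditional move: select one of two already-computed values."""
    return if_true if pred else if_false


def abs_diff_branchy(a, b):
    """Original control flow: only one arm of the branch executes."""
    if a > b:
        return a - b
    return b - a


def abs_diff_predicated(a, b):
    """Execute-all form: both arms run, then cmove picks the result."""
    p = a > b        # predicate
    t_then = a - b   # executed regardless of p
    t_else = b - a   # executed regardless of p
    return cmove(p, t_then, t_else)
```

Both functions return the same value for every input. The predicated version trades extra arithmetic for the absence of a branch, which lets both arms be mapped into a single hyper-block.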

Review and Discussion

Key Ideas: Convergence

- Microprocessor: no. of superscalar processors
- Comm./sync. via registers: low overheads
- Exploiting ILP to TLP granularities
- Dependency mapped to a grid of ALUs
- Replication reduces design/verification effort
- Point-to-point communication
- Exposing architecture partitioning and flow of operations to the compiler
- Avoid wire, routing delays, memory wall problems

Ideas: Divergence

- M-Machine
  - On-chip cache
  - Register based mech. [Delays]
  - Broadcasting and point-to-point communication
- GPA
  - Register set
  - Grid: chaining [Scalability]
  - Point-to-point communication
- TERA
  - Fine-grain threads: memory comm/sync (full/empty)
  - No support for single threaded code

Drawbacks (Unresolved Issues)

- M-Machine
  - Scalability
  - Clock speeds
  - Memory synchronization (use hfork)
- Grid Processor Arch.
  - Data caches far from ALUs
  - Incur delays between dependent operations due to network router and wires
  - Complex frame-management and block-stitching
  - Explicit compiler dependence

Challenges/Future Directions

- Architectural support to extract TLP
- Parallelizing compiler technology
- How many cores/threads
  - No. of threads: memory latency, wire delays [Flynn]
  - Inter-thread communication
  - Height of grid == 8 (IPC 5-6) [GPA, Peter]
  - Optimization: f(comm., delays, memory costs)

Challenges (contd.)

- On-the-fly data-dependence detection (RAW/WAR)
- TLP/ILP balance: M Multi-Computer

Thanks