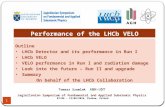

Status of the LHCb Experiment LHCb RRB at CERN 16 April 2008

LHCb Computing Model and Grid Status Glenn Patrick GRIDPP13, Durham – 5 July 2005.



LHCb – June 2005

[Photo of the detector, 03 June 2005. Labelled components: HCAL, ECAL, LHCb magnet, muon filters MF1-MF3 and MF4.]

Computing completes TDRs

[Figure: TDRs, Jan 2000 to June 2005.]

Online System

[Diagram: online system. Front-end electronics (FE), 7.1 GB/s, feed a multiplexing layer and the readout network (switches). Level-1 traffic: 1000 kHz, 5.5 GB/s. HLT traffic: 40 kHz, 1.6 GB/s. Other labelled components: TRM, Sorter, L1-Decision, TFC System, ECS, Storage System. 94 SFCs, each with a switch and CPUs, form a CPU farm of ~1600 CPUs. ~250 MB/s total to Tier 0.]

Scalable in depth: more CPUs (<2200).
Scalable in width: more detectors in Level-1.

Trigger chain: 40 MHz → Level-0 (hardware) → 1 MHz → Level-1 (software) → 40 kHz → HLT (software) → 2 kHz → Tier 0.

Raw data: 2 kHz, 50 MB/s (Tier 0, with six Tier 1 boxes shown).
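The rates on this slide imply a rejection factor at each trigger level and a mean raw event size. The following is a minimal sketch of that arithmetic, not part of the original talk; it assumes 1 MB = 10^6 bytes, and the variable names are illustrative.

```python
# Rates quoted on the slide, in Hz, in the order the data passes through them.
stages = [
    ("bunch crossing", 40e6),
    ("Level-0 (hardware)", 1e6),
    ("Level-1 (software)", 40e3),
    ("HLT (software)", 2e3),
]


def reduction_factors(stages):
    """Return (level name, input rate / output rate) for each trigger level."""
    return [
        (name, prev_rate / rate)
        for (_, prev_rate), (name, rate) in zip(stages, stages[1:])
    ]


factors = reduction_factors(stages)
total_reduction = stages[0][1] / stages[-1][1]

# Mean raw event size implied by 50 MB/s at 2 kHz (assuming 1 MB = 1e6 bytes).
raw_event_size_kb = 50e6 / 2e3 / 1e3

for name, factor in factors:
    print(f"{name}: rate reduced by a factor of {factor:.0f}")
print(f"overall reduction: {total_reduction:.0f}")
print(f"implied raw event size: {raw_event_size_kb:.0f} kB")
```

Under these assumptions the three levels reject by factors of 40, 25 and 20, and the implied raw event size is 25 kB.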

HLT Output

| Stream | b-exclusive | dimuon | D* | b-inclusive | Total |
|---|---|---|---|---|---|
| Trigger rate (Hz) | 200 | 600 | 300 | 900 | 2000 |
| Fraction | 10% | 30% | 15% | 45% | 100% |
| Events/year (10^9) | 2 | 6 | 3 | 9 | 20 |

200 Hz hot stream: will be fully reconstructed on the online farm in real time. “Hot stream” (RAW + rDST) written to Tier 0.

2 kHz RAW data written to Tier 0 for reconstruction at CERN and Tier 1s.

Dimuon (J/ψ X): calibration for proper-time resolution.
D*: clean peak allows PID calibration.
b-inclusive: understand bias on other B selections.
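The fractions and yearly event counts in the HLT output table follow from the trigger rates. The sketch below reproduces them; the figure of 10^7 seconds of data taking per year is an assumption inferred from the table (200 Hz giving 2 × 10^9 events), not a number stated on the slide.

```python
# Assumption inferred from the table, not stated on the slide.
SECONDS_PER_YEAR = 1e7

# HLT output rates per stream, in Hz, as quoted in the table.
hlt_rates_hz = {"b-exclusive": 200, "dimuon": 600, "D*": 300, "b-inclusive": 900}

total_rate = sum(hlt_rates_hz.values())

summary = {
    stream: {
        "fraction": rate / total_rate,
        "events_per_year": rate * SECONDS_PER_YEAR,
    }
    for stream, rate in hlt_rates_hz.items()
}

for stream, row in summary.items():
    print(f"{stream}: {row['fraction']:.0%}, {row['events_per_year']:.1e} events/year")
print(f"total: {total_rate} Hz, {total_rate * SECONDS_PER_YEAR:.1e} events/year")
```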

Data Flow

[Diagram: applications built on the Gaudi framework. Simulation (Gauss) → Digitisation (Boole) → Reconstruction (Brunel) → Analysis (DaVinci). Data products: MC truth, raw data, DST, stripped DST, analysis objects. Common components: detector description, conditions database, event model / physics event model.]

LHCb Computing Model

[Diagram of the computing model; one label reads "14 candidates".]

CERN Tier 1 essential for accessing the “hot stream” for:
1. First alignment & calibration.
2. First high-level analysis.

Distributed Data

RAW DATA: 500 TB
CERN = master copy.
2nd copy distributed over six Tier 1s.

STRIPPING: 140 TB/pass/copy
Pass 1: During data taking at CERN and Tier 1s (7 months).
Pass 2: After data taking at CERN and Tier 1s (1 month).

RECONSTRUCTION: 500 TB/pass
Pass 1: During data taking at CERN and Tier 1s (7 months).
Pass 2: During winter shutdown at CERN, Tier 1s and online farm (2 months).
Pass 3: During shutdown at CERN, Tier 1s and online farm.
Pass 4: Before next year data taking at CERN and Tier 1s (1 month).

Stripping Job - 2005

[Flowchart. Steps shown: read INPUTDATA and stage the files in one go (usage of SRM); check file status (staged / not yet staged); staged files handled as group1, group2, ... groupN; for each group, check file integrity, run DaVinci stripping on good files and send bad file info; merging process for DST and ETC; send file info. A Prod DB box is also shown.]

Stripping runs on reduced DSTs (rDST).

Pre-selection algorithms categorise events into streams.

Events that pass are fully reconstructed and full DSTs written.

CERN, CNAF, PIC used so far – sites based on CASTOR.
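The control flow of the stripping job can be sketched as below. This is an illustration of the steps named in the flowchart, not the production script: `run_stripping_job` and its callback parameters (`is_staged`, `is_intact`, `strip`) are hypothetical names, and the real job talks to SRM, the production database and DaVinci rather than to simple Python callables.

```python
def run_stripping_job(input_files, is_staged, is_intact, strip, group_size=2):
    """Sketch of the flowchart: status check, grouping, integrity check, stripping.

    All callbacks are hypothetical stand-ins for the real services.
    """
    staged, not_staged = [], []
    for name in input_files:
        (staged if is_staged(name) else not_staged).append(name)

    # Staged files are processed group by group (group1, group2, ... groupN).
    groups = [staged[i:i + group_size] for i in range(0, len(staged), group_size)]

    stripped, bad = [], []
    for group in groups:
        for name in group:
            if is_intact(name):
                stripped.append(strip(name))   # good file: run the stripping step
            else:
                bad.append(name)               # bad file: report it, do not process

    # The real job would now merge the DST and ETC outputs and send file info.
    return {"stripped": stripped, "bad": bad, "not_staged": not_staged}


result = run_stripping_job(
    ["a.rdst", "b.rdst", "c.rdst", "d.rdst"],
    is_staged=lambda name: name != "d.rdst",
    is_intact=lambda name: name != "b.rdst",
    strip=lambda name: name.replace(".rdst", ".dst"),
)
print(result)
```

Passing the services in as callables keeps the sketch testable without any Grid middleware.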

Resource Profile

CPU (MSI2k.yr)

| | 2006 | 2007 | 2008 | 2009 | 2010 |
|---|---|---|---|---|---|
| CERN | 0.27 | 0.54 | 0.90 | 1.25 | 1.88 |
| Tier-1s | 1.33 | 2.65 | 4.42 | 5.55 | 8.35 |
| Tier-2s | 2.29 | 4.59 | 7.65 | 7.65 | 7.65 |
| TOTAL | 3.89 | 7.78 | 12.97 | 14.45 | 17.88 |

DISK (TB)

| | 2006 | 2007 | 2008 | 2009 | 2010 |
|---|---|---|---|---|---|
| CERN | 248 | 496 | 826 | 1095 | 1363 |
| Tier-1s | 730 | 1459 | 2432 | 2897 | 3363 |
| Tier-2s | 7 | 14 | 23 | 23 | 23 |
| TOTAL | 984 | 1969 | 3281 | 4015 | 4749 |

MSS (TB)

| | 2006 | 2007 | 2008 | 2009 | 2010 |
|---|---|---|---|---|---|
| CERN | 408 | 825 | 1359 | 2857 | 4566 |
| Tier-1s | 622 | 1244 | 2074 | 4285 | 7066 |
| TOTAL | 1030 | 2069 | 3433 | 7144 | 11632 |
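A quick consistency check of the resource profile sums the per-site rows and compares them with the quoted TOTAL rows. The sketch below uses only the numbers from the tables; the data layout and function name are illustrative.

```python
YEARS = [2006, 2007, 2008, 2009, 2010]

# Values copied from the three resource tables.
PROFILE = {
    "CPU (MSI2k.yr)": {
        "rows": {
            "CERN": [0.27, 0.54, 0.90, 1.25, 1.88],
            "Tier-1s": [1.33, 2.65, 4.42, 5.55, 8.35],
            "Tier-2s": [2.29, 4.59, 7.65, 7.65, 7.65],
        },
        "total": [3.89, 7.78, 12.97, 14.45, 17.88],
    },
    "DISK (TB)": {
        "rows": {
            "CERN": [248, 496, 826, 1095, 1363],
            "Tier-1s": [730, 1459, 2432, 2897, 3363],
            "Tier-2s": [7, 14, 23, 23, 23],
        },
        "total": [984, 1969, 3281, 4015, 4749],
    },
    "MSS (TB)": {
        "rows": {
            "CERN": [408, 825, 1359, 2857, 4566],
            "Tier-1s": [622, 1244, 2074, 4285, 7066],
        },
        "total": [1030, 2069, 3433, 7144, 11632],
    },
}


def total_discrepancies(table):
    """Return (sum of site rows) minus (quoted TOTAL) per year, rounded to 2 d.p."""
    sums = [sum(column) for column in zip(*table["rows"].values())]
    return [round(s - quoted, 2) for s, quoted in zip(sums, table["total"])]


discrepancies = {name: total_discrepancies(t) for name, t in PROFILE.items()}
for name, diffs in discrepancies.items():
    print(name, dict(zip(YEARS, diffs)))
```

The CPU totals agree exactly. The disk total for 2006 and the MSS total for 2009 differ from the row sums by 1 TB and 2 TB respectively, which is probably rounding in the source figures.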

Comparisons - CPU

Tier 1 CPU – integrated (Nick Brook)

[Chart: Tier 1 CPU in MSI2k (axis 0 to 160) by month, Jan 2008 to Dec 2010, with one series each for LHCb, CMS, ATLAS and ALICE; the LHCb series is labelled.]

Comparisons - Disk

LCG TDR – LHCC, 29.6.2005 (Jurgen Knobloch)

[Chart: disk in PB (axis 0 to 160) by year, 2007 to 2010, for LHCb, CMS, ATLAS and ALICE at CERN, Tier-1 and Tier-2. Annotation: 54% pledged.]

Comparisons - Tape

LCG TDR – LHCC, 29.6.2005 (Jurgen Knobloch)

[Chart: tape in PB (axis 0 to 160) by year, 2007 to 2010, for LHCb, CMS, ATLAS and ALICE at CERN and Tier-1. Annotation: 75% pledged.]

DIRAC Architecture

Services Oriented Architecture

[Diagram in three layers.
User interfaces: Production manager, GANGA UI, User CLI, Job monitor, BK query webpage, FileCatalog browser.
DIRAC services: DIRAC Job Management Service, JobMonitorSvc, JobAccountingSvc (with AccountingDB), InformationSvc, FileCatalogSvc, MonitoringSvc, BookkeepingSvc.
DIRAC resources: DIRAC Sites (Agents, DIRAC CEs); LCG (Resource Broker, CE 1, CE 2, CE 3); DIRAC Storage (DiskFile; gridftp, bbftp, rfio).]

Data Challenge 2004

[Chart: LHCb DC'04, events produced (M, axis 0 to 200) in total and per month (May, June, July, August), split into LCG and DIRAC. Annotations, in order: DIRAC alone; LCG in action; 1.8 × 10^6/day; LCG paused; Phase 1 completed; 3-5 × 10^6/day; LCG restarted; 187 M produced events.]

20 DIRAC sites + 43 LCG sites were used.

Data written to Tier 1s.

• Overall, 50% of events produced using LCG.

• At end, 75% produced by LCG.

UK second largest producer (25%) after CERN.

RTTC - 2005

Real Time Trigger Challenge – May/June 2005

150M minimum bias events to feed the online farm and test the software trigger chain.

Completed in 20 days (169M events) on 65 different sites.
95% produced with LCG sites.
5% produced with “native” DIRAC sites.

Average of 10M events/day.
Average of 4,000 CPUs.

| Country | Events produced |
|---|---|
| UK | 60 M |
| Italy | 42 M |
| Switzerland | 23 M |
| France | 11 M |
| Netherlands | 10 M |
| Spain | 8 M |
| Russia | 3 M |
| Greece | 2.5 M |
| Canada | 2 M |
| Germany | 0.3 M |
| Belgium | 0.2 M |
| Sweden | 0.2 M |
| Romania, Hungary, Brazil, USA | 0.8 M |

[Chart annotation: 37% (UK share).]
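The 37% annotation can be checked against the per-country table. The sketch below sums the table rows and computes the UK fraction; note that the rows add up to 163 M, slightly below the 169M quoted for the whole challenge, and the 37% figure matches the UK share of the table sum.

```python
# Events produced per country in millions, copied from the RTTC table.
events_m = {
    "UK": 60, "Italy": 42, "Switzerland": 23, "France": 11, "Netherlands": 10,
    "Spain": 8, "Russia": 3, "Greece": 2.5, "Canada": 2, "Germany": 0.3,
    "Belgium": 0.2, "Sweden": 0.2, "Romania, Hungary, Brazil, USA": 0.8,
}

table_total_m = sum(events_m.values())
uk_share = events_m["UK"] / table_total_m

print(f"sum of table rows: {table_total_m:.1f} M events")
print(f"UK share of table sum: {uk_share:.1%}")
```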

Looking Forward

[Timeline, 2005 to 2008: SC3, SC4, LHC service operation; cosmics, first beams, first physics, full physics run.]

Next challenge, SC3: Sept. 2005
Analysis at Tier 1s: Nov. 2005
Start DC06 processing phase: May 2006
Alignment/calibration challenge: October 2006
Ready for data taking: April 2007

LHCb and SC3

Phase 1 (Sept. 2005):

a) Movement of 8TB of digitised data from CERN/Tier 0 to LHCb Tier 1 centres in parallel over a 2-week period (~10k files). Demonstrate automatic tools for data movement and bookkeeping.

b) Removal of replicas (via LFN) from all Tier 1 centres.

c) Redistribution of 4TB of data from each Tier 1 centre to Tier 0 and other Tier 1 centres over a 2-week period. Demonstrate that data can be redistributed in real time to meet stripping demands.

d) Moving of stripped DST data (~1TB, 190k files) from CERN to all Tier 1 centres.


Phase 2 (Oct. 2005):

a) MC production in Tier 2 centres with DST data collected in Tier 1 centres in real time, followed by stripping in Tier 1 centres (2 months). Data stripped as it becomes available.

b) Analysis of stripped data in Tier 1 centres.

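The Phase 1 volumes translate into modest sustained transfer rates. The sketch below works them out from the numbers quoted for steps a), c) and d); it assumes 1 TB = 10^12 bytes and 1 MB = 10^6 bytes, and treats "2 weeks" as exactly 14 days of continuous transfer.

```python
TB = 1e12            # bytes, assuming decimal units
MB = 1e6             # bytes
SECONDS_PER_DAY = 86400


def mean_rate_mb_per_s(volume_tb, days):
    """Mean sustained rate needed to move volume_tb in the given number of days."""
    return volume_tb * TB / (days * SECONDS_PER_DAY) / MB


def mean_file_size_mb(volume_tb, n_files):
    """Mean file size implied by a total volume and a file count."""
    return volume_tb * TB / n_files / MB


rate_a = mean_rate_mb_per_s(8, 14)          # a) 8 TB out of CERN in 2 weeks
rate_c = mean_rate_mb_per_s(4, 14)          # c) 4 TB out of each Tier 1 in 2 weeks
size_a = mean_file_size_mb(8, 10_000)       # a) ~10k files
size_d = mean_file_size_mb(1, 190_000)      # d) ~1 TB in 190k files

print(f"a) {rate_a:.1f} MB/s aggregate, {size_a:.0f} MB per file")
print(f"c) {rate_c:.1f} MB/s per Tier 1")
print(f"d) {size_d:.1f} MB per stripped DST file")
```

Under these assumptions, step a) needs about 6.6 MB/s aggregate out of CERN, and the stripped DST files of step d) average about 5 MB each, so step d) stresses file counts more than bandwidth.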

SC3 Requirements

Tier 1
• Read-only LCG file catalogue (LFC) for > 1 Tier 1.
• SRM version 1.1 interface to MSS.
• GRIDFTP server for MSS.
• File Transfer Service (FTS) and LFC client/tools.
• gLite CE.
• Hosting CE for agents (with managed, backed-up file system).

Tier 2
• SRM interface to SE.
• GRIDFTP access.
• FTS and LFC tools.

[Diagram labels: Job Agent, WN, Job, CE, Hosting CE, Local SE, Software repository, Monitor Agent, Transfer Agent, Request DB.]

SC3 Resources

Phase 1
• Temporary MSS access to ~10TB of data at each Tier 1 (with SRM).
• Permanent access to 1.5TB on disk at each Tier 1 with SRM interface.

Phase 2
• LCG version 2.5.0 in production for whole of LCG.

CPU
• MC production: ~250 (2.4GHz) WN over 2 months (non Tier 1).
• Stripping: ~2 (2.4GHz) WN per Tier 1 for duration of this phase.

Storage (permanent)
• 10TB of storage across all Tier 1s and CERN for MC output.
• 350 GB disk with SRM interface at each Tier 1 and CERN for stripping output.

Data Production on the Grid

[Diagram labels: Production manager, Production DB, DIRAC Job Management Service, DIRAC Job Monitoring Service, Pilot Job Agent, LCG Resource Broker, CE 1, CE 2, CE 3, Agents, DIRAC Sites, DIRAC CEs, WN, Job, Local SE, Remote SE, File Catalog (LFC).]

UK: Workflow Control

Production Desktop: Gennady Kuznetsov (RAL)

[Diagram: a workflow built from steps and modules. Steps: Sim (Gauss B, Gauss MB ×3), Digi (Boole B, Boole MB ×3), Reco (Brunel B, Brunel MB), labelled "primary event" and "spill-over event". Modules: software installation, Gauss execution, check logfile, dir listing, bookkeeping report.]

UK: LHCb Metadata and ARDA

Carmine Cioffi (Oxford)

[Diagram labels: Web Browser, Tomcat Servlet, ARDA Client API, TCP/IP Streaming, ARDA Server, Bookkeeping, GANGA application.]

UK: Ganga

See next talk!

Karl Harrison (Cambridge), Alexander Soroko (Oxford), Alvin Tan (Birmingham), Ulrik Egede (Imperial), Andrew Maier (CERN), Kuba Moscicki (CERN)

[Diagram: Ganga4 job handling. Applications: scripts, Gaudi, Athena. Backends: AtlasPROD, DIAL, DIRAC, LCG2, gLite, localhost, LSF. Operations: prepare, configure; store & retrieve job definition; submit, kill; get output, update status; + split, merge, monitor, dataset selection.]

UK: Conditions Database

[Diagram: data sources (VELO alignment, HCAL calibration, RICH pressure, ECAL temperature) plotted against version and time, with time marks t1 to t11 and a lookup at Time = T.]

Production version:
VELO: v3 for T<t3, v2 for t3<T<t5, v3 for t5<T<t9, v1 for T>t9
HCAL: v1 for T<t2, v2 for t2<T<t8, v1 for T>t8
RICH: v1 everywhere
ECAL: v1 everywhere

LCG COOL project providing underlying structure for conditions database.

User interface: Nicolas Gilardi (Edinburgh)
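The "production version" rules amount to an interval lookup: for each data source, the time T selects one version. Below is a minimal sketch of that lookup using Python's standard `bisect` module. It is not the COOL API; the integers 1 to 11 stand in for the symbolic time marks t1 to t11, and the behaviour exactly at a boundary (which the slide leaves undefined) is an assumption: here a boundary time belongs to the later interval.

```python
from bisect import bisect_right

# For each data source: (boundary times, versions valid between boundaries).
# Integers 3, 5, 9 ... stand in for the symbolic marks t3, t5, t9 on the slide.
PRODUCTION = {
    "VELO": ([3, 5, 9], ["v3", "v2", "v3", "v1"]),
    "HCAL": ([2, 8], ["v1", "v2", "v1"]),
    "RICH": ([], ["v1"]),
    "ECAL": ([], ["v1"]),
}


def production_version(source, t):
    """Return the production version of a data source valid at time t.

    Assumption: a time equal to a boundary falls in the later interval.
    """
    boundaries, versions = PRODUCTION[source]
    return versions[bisect_right(boundaries, t)]


for t in (1, 4, 7, 10):
    print(t, {source: production_version(source, t) for source in PRODUCTION})
```

Storing one sorted boundary list per source keeps each lookup logarithmic in the number of intervals.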

UK: Analysis with DIRAC

Software Installation + Analysis via DIRAC WMS

Stuart Paterson (Glasgow)

[Diagram labels: Data as LFN; check for all SEs which have data; if no data specified; Task-Queue; Matching; Agent; DIRAC Job; job executes on WN; installs software; closest SE.]

DIRAC API for analysis job submission

[
    Requirements = other.Site == "DVtest.in2p3.fr";
    Arguments = "jobDescription.xml";
    JobName = "DaVinci_1";
    OutputData = { "/lhcb/test/DaVinci_user/v1r0/LOG/DaVinci_v12r11.alog" };
    parameters = [ STEPS = "1"; STEP_1_NAME = "0_0_1" ];
    SoftwarePackages = { "DaVinci.v12r11" };
    JobType = "user";
    Executable = "$LHCBPRODROOT/DIRAC/scripts/jobexec";
    StdOutput = "std.out";
    Owner = "paterson";
    OutputSandbox = { "std.out", "std.err", "DVNtuples.root", "DaVinci_v12r11.alog", "DVHistos.root" };
    StdError = "std.err";
    ProductionId = "00000000";
    InputSandbox = { "lib.tar.gz", "jobDescription.xml", "jobOptions.opts" };
    JobId = ID
]
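The job description above is a nested attribute list: quoted strings, `{ ... }` lists, `[ ... ]` sub-records and a few unquoted expressions. The sketch below shows how such text could be rendered from a Python dictionary. It is plain string formatting for illustration only and is not the DIRAC API; the names `Expr` and `render` are hypothetical.

```python
class Expr(str):
    """Marks a value to be emitted verbatim (an expression) rather than quoted."""


def render(value):
    """Render a Python value in the attribute-list syntax shown in the job description."""
    if isinstance(value, Expr):            # must be tested before plain strings
        return str(value)
    if isinstance(value, dict):            # record: [ key = value; key = value ]
        body = "; ".join(f"{key} = {render(item)}" for key, item in value.items())
        return f"[ {body} ]"
    if isinstance(value, (list, tuple)):   # list: { "a", "b" }
        return "{ " + ", ".join(render(item) for item in value) + " }"
    return f'"{value}"'                    # everything else: quoted string


job = {
    "Requirements": Expr('other.Site == "DVtest.in2p3.fr"'),
    "JobName": "DaVinci_1",
    "parameters": {"STEPS": "1", "STEP_1_NAME": "0_0_1"},
    "SoftwarePackages": ["DaVinci.v12r11"],
    "OutputSandbox": ["std.out", "std.err"],
    "JobId": Expr("ID"),
}

jdl_text = render(job)
print(jdl_text)
```

Only a subset of the attributes is included in `job` to keep the example short; the remaining ones would be added as further dictionary entries.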

PACMAN, DIRAC installation tools

Conclusion

Half way there! But the climb gets steeper.

[Figure: milestones on the climb: DC03, DC04, 2005 Monte-Carlo production on the Grid, data stripping, distributed analysis, distributed reconstruction, 2007 data taking.]