Ising Model - physics.mcgill.ca

Transcript of Ising Model - physics.mcgill.ca

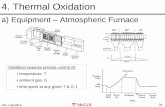

Chapter 14

Ising Model

There are many phenomena where a large number of constituents interact to give some global behavior. A magnet, for example, is composed of many interacting dipoles. A liquid is made up of many atoms. A computer program has many interacting commands. The economy, the psychology of road rage, plate tectonics, and many other things all involve phenomena where the global behavior is due to interactions between a large number of entities. One way or another, almost all such systems end up being posed as an "Ising model" of some kind. It is so common that it is almost a joke.

The Ising model has a large number of spins which are individually in microscopic states +1 or −1. The value of a spin at a site is determined by the spin's (usually short–range) interaction with the other spins in the model. Since I have explained it so vaguely, the first, and major, import of the Ising model is evident: it is the simplest nontrivial model of an interacting system. (The only simpler model would have only one possible microscopic state for each spin. I leave it as an exercise for the reader to find the exact solution of this trivial model.) Because of this, whenever one strips away the complexities of some interesting problem down to the bone, what is left is usually an Ising model of some form.

In condensed–matter physics, a second reason has been found to use the simplified–to–the–bone Ising model. For many problems where one is interested in large length scale phenomena, such as continuous phase transitions, the additional complexities can be shown to be unimportant. This is called universality. This idea has been so successful in the study of critical phenomena that it has been tried (or simply assumed to be true) in many other contexts.

For now, we will think of the Ising model as applied to anisotropic magnets. The magnet is made up of individual dipoles which can orient up, corresponding to spin +1, or down, corresponding to spin −1. We neglect tilting of the spins, such as ↖, or ↗, or ↘, and so on.

Figure 14.1: Arrows denoting spin S = +1 and spin S = −1.

For concreteness, consider dimension d = 2, putting all the spins on a square lattice, where there are i = 1, 2, 3, ..., N spins. In the partition function, the sum over states is

$$\sum_{\{\text{States}\}} = \sum_{s_1=-1}^{+1}\ \sum_{s_2=-1}^{+1} \cdots \sum_{s_N=-1}^{+1} = \prod_{i=1}^{N}\ \sum_{s_i=-1}^{+1}$$

Figure 14.2: Possible configurations for an N = 4² = 16 system.

where each sum has only two terms, of course. The energy in the Boltzmann factor $\exp(-E_{\text{State}}/k_BT)$ must now be prescribed. Since we want to model a magnet, where, by and large, all the spins are +1 or −1, we make the spin at site i want to be oriented the same as its neighbors. That is, for site i

$$\text{Energy of site } i = \sum_{\text{neighbors of } i} (S_i - S_{\text{neigh.}})^2 = \sum_{\text{neighbors of } i} \left( S_i^2 - 2 S_i S_{\text{neigh.}} + S_{\text{neigh.}}^2 \right)$$

But $S_i^2 = 1$ always, so up to a constant (additive and multiplicative)

$$\text{Energy of site } i = -\sum_{\text{neighbors of } i} S_i S_{\text{neigh.}}$$

Figure 14.3: Four nearest neighbors on a square lattice. The middle spin is i; its 4 neighbors are to the North, South, East, and West.

On a square lattice, the neighbors of "i" are the spins surrounding it (fig. 14.3). As said before, the idea is that the microscopic constituents (the spins) don't know how big the system is, so they interact locally. The choice of the 4 spins above, below and to the right and left as "nearest neighbors" is a convention. The spins on the diagonals (NW, NE, SE, SW) are called "next–nearest neighbors". It doesn't actually matter how many are included, so long as the interaction is local. The total energy for all spins is

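$$E_{\text{State}} = -J \sum_{\langle ij \rangle} S_i S_j$$

where J is a positive interaction constant, and $\sum_{\langle ij \rangle}$ is a sum over all i and j = 1, 2, ..., N, provided i and j are nearest neighbors, with no double counting. Note

$$\sum_{\langle ij \rangle} = \underbrace{\sum_{i=1}^{N} \sum_{j=1}^{N}}_{\text{neighbors, no double counting}} = N \times \frac{4}{2}$$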

If there are "q" nearest neighbors, corresponding to a different interaction, or a different lattice (say hexagonal), or another dimension of space,

$$\sum_{\langle ij \rangle} = \frac{q}{2} N$$

So the largest (negative) energy is $E = -\frac{qJ}{2} N$. If there is a constant external magnetic field H, which tends to align spins, the total energy is

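$$E_{\text{State}} = -J \sum_{\langle ij \rangle} S_i S_j - H \sum_i S_i$$

and the partition function is

$$Z = \prod_{i=1}^{N}\ \sum_{S_i=-1}^{+1} \exp\left[ \frac{J}{k_BT} \sum_{\langle jk \rangle} S_j S_k + \frac{H}{k_BT} \sum_{j} S_j \right] = Z\left(N, \frac{J}{k_BT}, \frac{H}{k_BT}\right)$$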

As described above, the number of nearest neighbors is q = 4 for a two–dimensional square lattice. For three dimensions, q = 6 on a simple cubic lattice. The one–dimensional Ising model has q = 2.

The one–dimensional model was solved by Ising for his doctoral thesis (we will solve it below). The two–dimensional model was solved by Onsager, for H = 0, in one of the premier works of mathematical physics. We will not give that solution. You can read it in Landau and Lifshitz's "Statistical Physics". They give a readable, physical, but still difficult treatment. The three–dimensional Ising model has not been solved.

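The most interesting result of the Ising model is that it exhibits broken symmetry for H = 0. In the absence of a field, the energy of a state, and the partition function, have an exact symmetry

$$S_i \leftrightarrow -S_i$$

(The group is $Z_2$.) Hence (?)

$$\langle S_i \rangle \leftrightarrow -\langle S_i \rangle$$

and

$$\langle S_i \rangle \stackrel{?}{=} 0$$

In fact, for dimension d = 2 and 3 this is not true. The exact result in d = 2 is surprisingly simple: the magnetization per spin is

$$m = \langle S_i \rangle = \begin{cases} \left(1 - \operatorname{cosech}^4 \dfrac{2J}{k_BT}\right)^{1/8}, & T < T_c \\ 0, & T > T_c \end{cases}$$

The critical temperature $T_c \approx 2.269\, J/k_B$ is where $\left(1 - \operatorname{cosech}^4 \frac{2J}{k_BT}\right)^{1/8}$ vanishes, that is, where $\sinh(2J/k_BT_c) = 1$.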
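The exact d = 2 result is easy to evaluate numerically. The following is a minimal sketch, assuming the standard form $m = (1 - \sinh^{-4}(2J/k_BT))^{1/8}$ and units in which J and $k_B$ default to 1; the function names `onsager_tc` and `onsager_magnetization` are illustrative, not from the notes.

```python
import math

def onsager_tc(J=1.0, kB=1.0):
    """Critical temperature from the condition sinh(2J / (kB*Tc)) = 1."""
    return 2.0 * J / (kB * math.log(1.0 + math.sqrt(2.0)))

def onsager_magnetization(T, J=1.0, kB=1.0):
    """Spontaneous magnetization per spin, 2-d square lattice, H = 0."""
    if T <= 0.0:
        return 1.0
    if T >= onsager_tc(J, kB):
        return 0.0
    x = 2.0 * J / (kB * T)
    if x > 20.0:
        # sinh(x)**-4 is far below machine precision here; avoid overflow.
        return 1.0
    s = math.sinh(x)
    # max(...) guards against tiny negative values from rounding near Tc.
    return max(0.0, 1.0 - s ** -4) ** 0.125

if __name__ == "__main__":
    print(f"Tc = {onsager_tc():.4f} J/kB")
    for T in (1.0, 1.5, 2.0, 2.2, 2.26, 2.3):
        print(f"T = {T:5.2f}  m = {onsager_magnetization(T):.4f}")
```

Printing m for temperatures approaching $T_c$ from below shows how abruptly the magnetization drops, which is the β = 1/8 behaviour discussed later.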

A rough and ready understanding of this transition is easy to get. Consider the Helmholtz free energy

$$F = E - TS$$

where S is now the entropy. At low temperatures, F is minimized by minimizing E. The largest negative value E has is $-\frac{q}{2}JN$, when all spins are aligned +1 or −1. So at low temperatures (and rigorously at T = 0), $\langle S_i \rangle \to +1$ or $\langle S_i \rangle \to -1$. At high temperatures, entropy maximization minimizes F. Clearly entropy is maximized by having the spins helter–skelter random: $\langle S_i \rangle = 0$.

Figure 14.4: Two–dimensional square lattice Ising model magnetization per spin, $m = \langle S_i \rangle$ versus T.

The transition temperature between $\langle S_i \rangle = 1$ and $\langle S_i \rangle = 0$ should be when the temperature is comparable to the energy per spin, $k_BT = O(\frac{q}{2}J)$, which is in the right ball park. There is no hand–waving explanation for the abrupt increase of the magnetization per spin $m = \langle S_i \rangle$ at $T_c$. Note it is not like a paramagnet, which shows a gradual decrease in magnetization with increasing temperature (in the presence of an external field H). One can think of it (or at least remember it) this way: At $T_c$, one breaks a fundamental symmetry of the system. In some sense, one breaks a symmetry of nature. One can't break such a symmetry in a tentative wishy–washy way. It requires a firm, determined hand. That is, an abrupt change.

Figure 14.5: Paramagnet, $m = \langle S_i \rangle$ versus T for H > 0 (no broken symmetry).

The phase diagram for the Ising model (or indeed for any magnet) looks like fig. 14.6. It is very convenient that H is so intimately related with the broken symmetry of the Ising model. Hence it is very easy to understand that broken symmetry is for H = 0, not H ≠ 0. Things are more subtle in the lattice–gas version of the Ising model.

Figure 14.6: Phase diagram in the H–T plane (paramagnet above $T_c$; only on the shaded line is there spontaneous magnetization and coexisting phases), together with m versus T for H = 0 (with a forbidden region for a single phase), H > 0, and H < 0.

14.1 Lattice–Gas Model

In the lattice–gas model, one still has a lattice labeled i = 1, 2, ..., N, but now there is a concentration variable $c_i = 0$ or 1 at each site. The simplest model of interaction is the lattice–gas energy

$$E_{LG} = -\vartheta \sum_{\langle ij \rangle} c_i c_j - \mu \sum_i c_i$$

where $\vartheta$ is an interaction constant and $\mu$ is the constant chemical potential. One can think of this as a model of a binary alloy (where $c_i$ is the local concentration of one of the two phases), or a liquid–vapour system (where $c_i$ is the local density).

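This is very similar to the Ising model

$$E_{\text{Ising}} = -J \sum_{\langle ij \rangle} S_i S_j - H \sum_i S_i$$

To show they are exactly the same, let

$$S_i = 2c_i - 1$$

then

$$\begin{aligned}
E_{\text{Ising}} &= -J \sum_{\langle ij \rangle} (2c_i - 1)(2c_j - 1) - H \sum_i (2c_i - 1) \\
&= -4J \sum_{\langle ij \rangle} c_i c_j + 2J \sum_{\langle ij \rangle} (c_i + c_j) + \text{Const.} - 2H \sum_i c_i + \text{Const.} \\
&= -4J \sum_{\langle ij \rangle} c_i c_j + 4J \frac{q}{2} \sum_i c_i - 2H \sum_i c_i + \text{Const.}
\end{aligned}$$

where the last step uses the fact that each site belongs to q bonds. And

$$E_{\text{Ising}} = -4J \sum_{\langle ij \rangle} c_i c_j - 2(H - Jq) \sum_i c_i + \text{Const.}$$

Since the additive constant is unimportant, this is the same as $E_{LG}$, provided

$$\vartheta = 4J$$

and

$$\mu = 2(H - Jq)$$

Note that the phase transition here is not at some convenient point µ = 0; it is at H = 0, which is the awkward point µ = −2Jq. This is one reason why the liquid–gas transition is sometimes said, incorrectly, to involve no change of symmetry, unlike the Ising model with its $Z_2$ group. But the external field must have a carefully tuned value to get that possibility.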
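The mapping can be checked numerically: for any configuration, the Ising and lattice–gas energies should differ only by a configuration–independent constant. The sketch below assumes a one–dimensional ring (q = 2) purely for convenience, and takes $\vartheta = 4J$ and $\mu = 2(H - Jq)$ from the derivation; all function names are illustrative.

```python
import random

def ring_bonds(n):
    """Nearest-neighbour bonds of a 1-d ring (q = 2), no double counting."""
    return [(i, (i + 1) % n) for i in range(n)]

def e_ising(spins, bonds, J, H):
    """E = -J sum_<ij> S_i S_j - H sum_i S_i."""
    return -J * sum(spins[i] * spins[j] for i, j in bonds) - H * sum(spins)

def e_lattice_gas(c, bonds, theta, mu):
    """E = -theta sum_<ij> c_i c_j - mu sum_i c_i."""
    return -theta * sum(c[i] * c[j] for i, j in bonds) - mu * sum(c)

def mapping_offsets(n=8, J=1.0, H=0.3, q=2, trials=5, seed=0):
    """E_Ising - E_LG for random configurations; should all be equal."""
    rng = random.Random(seed)
    bonds = ring_bonds(n)
    theta, mu = 4.0 * J, 2.0 * (H - J * q)
    offsets = []
    for _ in range(trials):
        c = [rng.randint(0, 1) for _ in range(n)]
        s = [2 * ci - 1 for ci in c]          # S_i = 2 c_i - 1
        offsets.append(e_ising(s, bonds, J, H) - e_lattice_gas(c, bonds, theta, mu))
    return offsets

if __name__ == "__main__":
    print(mapping_offsets())
```

Every entry of the returned list should equal the same number, $-JqN/2 + HN$, which is the dropped additive constant.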

Figure 14.7: Lattice–gas transition (µ–T plane with $\langle c \rangle \approx 1$ and $\langle c \rangle \approx 0$ regions, and $\langle c \rangle$ versus T) compared with the real liquid–gas transition (P–T plane, and density versus T at $P = P_c$).

14.2 One–dimensional Ising Model

It is easy to solve the one–dimensional Ising model. Of course, we already know that it cannot exhibit phase coexistence, from the theorem we proved earlier. Hence we expect

$$\langle S_i \rangle = m = 0$$

for all T > 0 at H = 0, where m is the magnetization per spin. The result and the method are quite useful, though.

$$Z = \prod_{i=1}^{N}\ \sum_{S_i=-1}^{+1} \exp\left[ \frac{J}{k_BT} \sum_{j=1}^{N} S_j S_{j+1} + \frac{H}{k_BT} \sum_{j=1}^{N} S_j \right]$$

Note we have simplified the $\sum_{\langle ij \rangle}$ for one dimension. We need, however, a prescription for the spin $S_{N+1}$. We will use periodic boundary conditions so that $S_{N+1} \equiv S_1$. This is called the Ising ring.

Figure 14.8: Ising ring with periodic boundary conditions (sites 1, 2, 3, ..., N − 1, N arranged on a ring).

You can also solve it, with a little more work, with other boundary conditions. Let the coupling constant

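$$k \equiv J/k_BT$$

and

$$h = H/k_BT$$

be the external field. Then

$$Z(k, h, N) = \prod_{i=1}^{N}\ \sum_{S_i=-1}^{+1} \exp\left[ \sum_{j=1}^{N} \left( k S_j S_{j+1} + h S_j \right) \right]$$

or, more symmetrically,

$$\begin{aligned}
Z &= \prod_{i=1}^{N}\ \sum_{S_i=-1}^{+1} \exp\left[ \sum_{j} \left( k S_j S_{j+1} + \tfrac{1}{2} h (S_j + S_{j+1}) \right) \right] \\
&= \prod_{i=1}^{N}\ \sum_{S_i=-1}^{+1}\ \prod_{j=1}^{N} e^{k S_j S_{j+1} + \frac{1}{2} h (S_j + S_{j+1})}
\end{aligned}$$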

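Let matrix elements of P be defined by

$$\langle S_j | P | S_{j+1} \rangle \equiv e^{k S_j S_{j+1} + \frac{1}{2} h (S_j + S_{j+1})}$$

There are only four elements

$$\langle +1 | P | +1 \rangle = e^{k+h}, \quad \langle -1 | P | -1 \rangle = e^{k-h}, \quad \langle +1 | P | -1 \rangle = e^{-k}, \quad \langle -1 | P | +1 \rangle = e^{-k}$$

Or, in matrix form,

$$P = \begin{bmatrix} e^{k+h} & e^{-k} \\ e^{-k} & e^{k-h} \end{bmatrix}$$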

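The partition function is

$$\begin{aligned}
Z &= \prod_{i=1}^{N}\ \sum_{S_i=-1}^{+1} \langle S_1 | P | S_2 \rangle \langle S_2 | P | S_3 \rangle \cdots \langle S_N | P | S_1 \rangle \\
&= \sum_{S_1=-1}^{+1} \langle S_1 | P \left( \sum_{S_2=-1}^{+1} | S_2 \rangle \langle S_2 | \right) P \left( \sum_{S_3=-1}^{+1} | S_3 \rangle \langle S_3 | \right) \cdots P | S_1 \rangle
\end{aligned}$$

But, clearly, the identity operator is

$$1 \equiv \sum_{S} | S \rangle \langle S |$$

so,

$$Z = \sum_{S_1=-1}^{+1} \langle S_1 | P^N | S_1 \rangle = \operatorname{Trace}(P^N)$$

Say the eigenvalues of P are $\lambda_+$ and $\lambda_-$. Then,

$$Z = \lambda_+^N + \lambda_-^N$$

Finally, say $\lambda_+ > \lambda_-$. Hence as N → ∞

$$Z = e^{-F/k_BT} = \lambda_+^N$$

and

$$F = -N k_B T \ln \lambda_+$$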

It remains only to find the eigenvalues of P. We get the eigenvalues from

$$\det(\lambda - P) = 0$$

This gives

$$(\lambda - e^{k+h})(\lambda - e^{k-h}) - e^{-2k} = 0$$

or,

$$\lambda^2 - 2\lambda \underbrace{\left( \frac{e^{k+h} + e^{k-h}}{2} \right)}_{e^k \cosh h} + e^{2k} - e^{-2k} = 0$$

The solutions are

$$\lambda_\pm = \frac{2 e^k \cosh h \pm \sqrt{4 e^{2k} \cosh^2 h - 4 e^{2k} + 4 e^{-2k}}}{2} = e^k \left[ \cosh h \pm \sqrt{\cosh^2 h - 1 + e^{-4k}} \right]$$

So,

$$\lambda_+ = e^k \left( \cosh h + \sqrt{\sinh^2 h + e^{-4k}} \right)$$

is the larger eigenvalue. The free energy is

$$F = -N k_B T \left[ k + \ln \left( \cosh h + \sqrt{\sinh^2 h + e^{-4k}} \right) \right]$$

where $k = J/k_BT$, and $h = H/k_BT$. The magnetization per spin is

$$m = -\frac{1}{N} \frac{\partial F}{\partial H} = -\frac{1}{N k_B T} \frac{\partial F}{\partial h}$$

Taking the derivative and simplifying, one obtains

$$m(h, k) = \frac{\sinh h}{\sqrt{\sinh^2 h + e^{-4k}}}$$

As anticipated, m(h = 0, k) = 0. There is magnetization for h ≠ 0, although there is no broken symmetry of course. There is a phase transition at T = 0.
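The transfer–matrix result can be checked against a brute–force sum over all $2^N$ states of a small Ising ring. This is a minimal sketch; the function names are illustrative, and the brute–force sum is only practical for small N.

```python
import itertools
import math

def z_brute_force(N, k, h):
    """Sum exp[sum_j (k S_j S_{j+1} + h S_j)] over all 2^N ring states."""
    total = 0.0
    for s in itertools.product((-1, 1), repeat=N):
        expo = sum(k * s[j] * s[(j + 1) % N] + h * s[j] for j in range(N))
        total += math.exp(expo)
    return total

def eigenvalues(k, h):
    """(lambda_+, lambda_-) of the 2x2 transfer matrix P."""
    root = math.sqrt(math.sinh(h) ** 2 + math.exp(-4.0 * k))
    return (math.exp(k) * (math.cosh(h) + root),
            math.exp(k) * (math.cosh(h) - root))

def z_transfer(N, k, h):
    """Z = Trace(P^N) = lambda_+^N + lambda_-^N."""
    lam_p, lam_m = eigenvalues(k, h)
    return lam_p ** N + lam_m ** N

def magnetization(k, h):
    """Magnetization per spin in the limit N -> infinity."""
    return math.sinh(h) / math.sqrt(math.sinh(h) ** 2 + math.exp(-4.0 * k))

if __name__ == "__main__":
    print(z_brute_force(6, 0.7, 0.2), z_transfer(6, 0.7, 0.2))
    for h in (0.0, 0.01, 0.1, 1.0):
        print(h, magnetization(2.0, h))
```

At large k (low T) the printed magnetization saturates for very small h, which is the sense in which the transition sits at T = 0.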

Figure 14.9: Magnetization of 1-d Ising model (m versus H at low T and at high T).

14.3 Mean–Field Theory

There is a very useful approximate treatment of the Ising model. We will now work out the simplest such "mean–field" theory, due in this case to Bragg and Williams.

The idea is that each spin is influenced by the local magnetization, or equivalently local field, of its surrounding neighbors. In a magnetized, or demagnetized, state, that local field does not fluctuate very much. Hence, the idea is to replace the local field, determined by a local magnetization, with an average field, determined by the average magnetization. "Mean" field means average field, not nasty field.

It is easy to solve the partition function if there is only a field, and no interactions. This is a (very simple) paramagnet. Its energy is

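$$E_{\text{State}} = -H \sum_i S_i$$

and,

$$\begin{aligned}
Z &= \prod_i \sum_{S_i=-1}^{+1} e^{\frac{H}{k_BT} \sum_j S_j} \\
&= \left( \sum_{S_1=\pm 1} e^{\frac{H}{k_BT} S_1} \right) \cdots \left( \sum_{S_N=\pm 1} e^{\frac{H}{k_BT} S_N} \right) \\
&= \left( \sum_{S=\pm 1} e^{\frac{H}{k_BT} S} \right)^N
\end{aligned}$$

so,

$$Z = \left( 2 \cosh \frac{H}{k_BT} \right)^N$$

and

$$F = -N k_B T \ln \left( 2 \cosh \frac{H}{k_BT} \right)$$

so since,

$$m = -\frac{1}{N} \frac{\partial F}{\partial H}$$

then

$$m = \tanh \frac{H}{k_BT}$$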

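Figure 14.10: Noninteracting paramagnet solution (m versus H, and m versus T for H > 0).

For the interacting Ising model we need to find the effective local field $H_i$ at site i. The Ising model is

$$E_{\text{State}} = -J \sum_{\langle ij \rangle} S_i S_j - H \sum_i S_i$$

We want to replace this, in the partition function weighting, with

$$E_{\text{State}} = -\sum_i H_i S_i$$

Clearly

$$-\frac{\partial E}{\partial S_i} = H_i$$

Thus, from the Ising model

$$\begin{aligned}
-\frac{\partial E}{\partial S_i} &= J \sum_{\langle jk \rangle} \left( S_j \delta_{k,i} + \delta_{j,i} S_k \right) + H \\
&= J \cdot 2 \cdot \sum_{\substack{j \text{ neighbors of } i \\ \text{(no double counting)}}} S_j + H
\end{aligned}$$

Now let's get rid of the 2 and the double–counting restriction. Then

$$H_i = J \sum_{j \text{ neighbors of } i} S_j + H$$

is the effective local field. To do mean–field theory we let

$$H_i \approx \langle H_i \rangle$$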

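where

$$\langle H_i \rangle = J \sum_{j \text{ neighbors}} \langle S_j \rangle + H$$

but all spins are the same on average, so

$$\langle H_i \rangle = qJ \langle S \rangle + H$$

and the weighting of states is given by

$$E_{\text{State}} = -\underbrace{\left( qJ \langle S \rangle + H \right)}_{\text{mean field, } \langle H \rangle} \sum_i S_i$$

For example, the magnetization is

$$m = \langle S \rangle = \frac{\sum_{S=\pm 1} S\, e^{\frac{\langle H \rangle}{k_BT} S}}{\sum_{S=\pm 1} e^{\frac{\langle H \rangle}{k_BT} S}}$$

where $\langle S \rangle = \langle S_1 \rangle$, and contributions due to $S_2, S_3, \ldots$ cancel from top and bottom. So

$$m = \tanh \frac{\langle H \rangle}{k_BT}$$

or

$$m = \tanh \frac{qJm + H}{k_BT}$$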

This gives a critical temperature

$$k_B T_c = qJ$$

as we will now see. Consider H = 0:

$$m = \tanh \frac{m}{T/T_c}$$
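The self–consistency condition $m = \tanh[(qJm + H)/k_BT]$ has no closed–form solution, but it can be solved by simple iteration. The following is a rough sketch, assuming fixed–point iteration starting from m = 1 (other root finders would work as well); the name `mean_field_m` and its default parameters are illustrative.

```python
import math

def mean_field_m(T, H=0.0, q=4, J=1.0, kB=1.0, m0=1.0,
                 tol=1e-12, max_iter=100000):
    """Iterate m <- tanh((q*J*m + H) / (kB*T)) until it stops changing."""
    m = m0
    for _ in range(max_iter):
        m_new = math.tanh((q * J * m + H) / (kB * T))
        if abs(m_new - m) < tol:
            return m_new
        m = m_new
    return m  # not converged within max_iter (slow very close to Tc)

if __name__ == "__main__":
    Tc = 4.0  # kB*Tc = q*J with q = 4, J = kB = 1
    for T in (1.0, 2.0, 3.0, 3.9, 4.5, 6.0):
        print(f"T/Tc = {T / Tc:.3f}  m = {mean_field_m(T):.6f}")
```

For T below $qJ/k_B$ the iteration settles on a nonzero m, and above it the iterate decays to zero, which is the mean–field transition discussed in the text.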

Close to $T_c$, m is close to zero, so we can expand the tanh function:

$$m = \frac{m}{T/T_c} - \frac{1}{3} \frac{m^3}{(T/T_c)^3} + \ldots$$

One solution is m = 0. This is stable above $T_c$, but unstable for $T < T_c$. Consider $T < T_c$, where m is small, but nonzero. Dividing by m gives

$$1 = \frac{1}{T/T_c} - \frac{1}{3} \frac{m^2}{(T/T_c)^3} + \ldots$$

Rearranging

$$m^2 = 3 \left( \frac{T}{T_c} \right)^2 \left( 1 - \frac{T}{T_c} \right) + \ldots$$

Or,

$$m = \sqrt{3}\, \frac{T}{T_c} \left( 1 - \frac{T}{T_c} \right)^{1/2}$$

for small m, $T < T_c$. Note that this solution does not exist for $T > T_c$. For $T < T_c$, but close to $T_c$, we have

$$m \propto \left( 1 - \frac{T}{T_c} \right)^{\beta}$$

where the critical exponent β = 1/2. All this is really saying is that m is a parabola near $T_c$, as drawn in fig. 14.12 (within mean field theory). This is interesting, and useful, and easy to generalize, but only qualitatively correct.

Figure 14.11: m versus T at H = 0. Close to $T_c$, mean field gives $m \propto (1 - T/T_c)^{1/2}$.

Figure 14.12: Parabola near m = 0, $T = T_c$, H = 0. The "classical" exponent β = 1/2 is the same as a parabola.

Note

$$k_B T_c = qJ = \begin{cases} 2J, & d = 1 \\ 4J, & d = 2 \\ 6J, & d = 3 \end{cases}$$

The result for d = 1 is wildly wrong, since $T_c = 0$. In d = 2, $k_BT_c \approx 2.27J$, so the estimate is poor. Also, if we take the exact result from Onsager's solution, $m \propto (1 - T/T_c)^{1/8}$, so β = 1/8 in d = 2. In three dimensions $k_BT_c \sim 4.5J$ and estimates are β = 0.313.... Strangely enough, for d ≥ 4, β = 1/2 (although there are logarithmic corrections in d = 4)!

For $T = T_c$, but H ≠ 0, we have

$$m = \tanh \left( m + \frac{H}{k_BT_c} \right)$$

or

$$H = k_BT_c \left( \tanh^{-1} m - m \right)$$

Expanding gives

$$H = k_BT_c \left( m + \frac{1}{3} m^3 + \ldots - m \right)$$

so

$$H = \frac{k_BT_c}{3} m^3$$

for m close to zero, at $T = T_c$. This defines another critical exponent

$$H \propto m^{\delta}$$

for $T = T_c$, where δ = 3 in mean–field theory.

Figure 14.13: Shape of the critical isotherm (m versus H at $T = T_c$) is a cubic, $H \propto m^3$, in classical theory.

The susceptibility is

$$\chi_T \equiv \left( \frac{\partial m}{\partial H} \right)_T$$

This is like the compressibility in liquid–gas systems

$$\kappa_T = -\frac{1}{V} \left( \frac{\partial V}{\partial P} \right)_T$$

Note the correspondence between density and magnetization per spin, and between pressure and field. The susceptibility "asks the question": how easy is it to magnetize something with some small field? A large $\chi_T$ means the material is very "susceptible" to being magnetized.

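Also, as an aside, the "sum rule" for liquids

$$\int d\mathbf{r}\, \langle \Delta n(\mathbf{r}) \Delta n(0) \rangle = n^2 k_B T \kappa_T$$

has an analog for magnets

$$\int d\mathbf{r}\, \langle \Delta m(\mathbf{r}) \Delta m(0) \rangle \propto \chi_T$$

We do not need the proportionality constant. From above, we have

$$m = \tanh \frac{k_BT_c\, m + H}{k_BT}$$

or,

$$H = k_BT \tanh^{-1} m - k_BT_c\, m$$

and

$$\left( \frac{\partial H}{\partial m} \right)_T = k_BT \frac{1}{1 - m^2} - k_BT_c$$

so, for $T > T_c$ where m = 0 at H = 0,

$$\chi_T = \frac{1}{k_B (T - T_c)}$$

close to $T_c$. Or

$$\chi_T \propto |T - T_c|^{-\gamma}$$

where γ = 1. This is true for $T \to T_c^+$, or $T \to T_c^-$.

Figure 14.14: $\chi_T$, the susceptibility, diverges near $T_c$.

To get the energy, and so the heat capacity, consider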

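$$\langle E \rangle = \left\langle -J \sum_{\langle ij \rangle} S_i S_j - H \sum_i S_i \right\rangle$$

All the spins are independent, in mean–field theory, so

$$\langle S_i S_j \rangle = \langle S_i \rangle \langle S_j \rangle$$

Hence

$$\langle E \rangle = -\frac{Jq}{2} N m^2 - H N m$$

The specific heat is

$$c = \frac{1}{N} \left( \frac{\partial E}{\partial T} \right)_N$$

Near $T_c$, and at H = 0,

$$m^2 \propto \begin{cases} (T_c - T), & T < T_c \\ 0, & T > T_c \end{cases}$$

So,

$$\langle E \rangle = \begin{cases} -(\text{Const.})(T_c - T), & T < T_c \\ 0, & T > T_c \end{cases}$$

and

$$c = \begin{cases} \text{Const.}, & T < T_c \\ 0, & T > T_c \end{cases}$$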

If we include temperature dependence away from $T_c$, the specific heat looks more like a saw tooth, as drawn in fig. 14.15. This discontinuity in c, a second derivative of the free energy, is one reason continuous phase transitions were called "second order". Now it is known that this is an artifact of mean field theory.

Figure 14.15: Discontinuity in C in mean field theory.

It is written as

$$c \propto |T - T_c|^{-\alpha}$$

where the critical exponent

$$\alpha = 0 \ (\text{disc.})$$

To summarize, near the critical point,

$$m \sim (T_c - T)^{\beta}, \quad T < T_c,\ H = 0$$

$$H \sim m^{\delta}, \quad T = T_c$$

$$\chi_T \sim |T - T_c|^{-\gamma}, \quad H = 0$$

and

$$c \sim |T - T_c|^{-\alpha}, \quad H = 0$$

The exponents are:

| | mean field | d = 2 (exact) | d = 3 (num) | d ≥ 4 |
|---|---|---|---|---|
| α | 0 (disc.) | 0 (log) | 0.125... | 0 (disc.) |
| β | 1/2 | 1/8 | 0.313... | 1/2 |
| γ | 1 | 7/4 | 1.25... | 1 |
| δ | 3 | 15 | 5.0... | 3 |

Something is happening at d = 4. We will see later that mean–field theory works for d ≥ 4. For now we will leave the Ising model behind.

Figure 14.16: Variation of critical exponent β with spatial dimension d (no transition at d = 1, nontrivial exponents for d = 2 and 3, mean field theory works for d ≥ 4).

Chapter 5

Molecular Dynamics

At this point, one might say, computers are so big and fierce, why not simply solve the microscopic problem completely! This is the approach of molecular dynamics. It is a very important numerical technique, which is very very conservative. It is used by physicists, chemists, and biologists.

Imagine a classical solid, liquid or gas composed of many atoms or molecules. Each atom has a position $\mathbf{r}_i$ and a velocity $\mathbf{v}_i$ (or momentum $m\mathbf{v}_i$). The Hamiltonian is (where m is the atom's mass)

$$H = \sum_{i=1}^{N} \frac{m v_i^2}{2} + V(\mathbf{r}_1, \ldots, \mathbf{r}_N)$$

Figure 5.1: Particles i and j at positions $\mathbf{r}_i$, $\mathbf{r}_j$ (measured from the origin o), with velocities $\mathbf{v}_i$, $\mathbf{v}_j$ and separation $|\mathbf{r}_i - \mathbf{r}_j|$.

Usually, the total potential can be well approximated as the sum of pairwise forces, i.e.

$$V(\mathbf{r}_1, \ldots, \mathbf{r}_N) = \sum_{\substack{i=1,\ j=1 \\ (i > j)}}^{N} V(|\mathbf{r}_i - \mathbf{r}_j|)$$

which only depends on the distance between atoms. This potential usually has a form like that drawn in fig. 5.2, where the depth of the well must be

$$\epsilon \sim (100\text{'s K})\, k_B$$

and

$$r_o \sim (\text{a few Å})$$

Figure 5.2: Pair potential V versus r, with a well of depth ϵ at $r = r_o$.

This is because, in a vapor phase, the density is small and the well is unimportant; when the well becomes important, the atoms condense into a liquid phase. Hence ϵ ∼ boiling point of a liquid, and $r_o$ is the separation of atoms in a liquid. Of course, say the mass of an atom is

$$m \sim 10 \times 10^{-27}\ \text{kg}$$

and

$$r_o \sim 2\ \text{Å}$$

then the liquid density is

$$\rho \sim \frac{m}{r_o^3} = \frac{10 \times 10^{-27}\ \text{kg}}{10\ \text{Å}^3} = \frac{10^{-27} \times (10^3\ \text{gm})}{(10^{-8}\ \text{cm})^3}$$

$$\rho \sim 1\ \text{gm/cm}^3$$

of course. To convince oneself that quantum effects are unimportant, simply estimate the de Broglie wavelength

$$\lambda \sim \frac{h}{\sqrt{m k_B T}} \sim 1\ \text{Å} / \sqrt{T}$$

where T is measured in Kelvin. So this effect is unimportant for most liquids. The counter example is Helium. In any case, quantum effects are usually marginal, and lead to no quantitative differences of importance. This is simply because the potentials are usually parameterized fits such as the Lennard-Jones potential

$$V(r) = 4\epsilon \left[ \left( \frac{\sigma}{r} \right)^{12} - \left( \frac{\sigma}{r} \right)^{6} \right]$$

where

$$r_o = 2^{1/6} \sigma \approx 1.1 \sigma$$

and

$$V(r_o) = -\epsilon$$

by construction. For Argon, a good "fit" is

$$\sigma = 3.4\ \text{Å}, \qquad \epsilon / k_B = 120\ \text{K}$$

Numerically it is convenient that $|V(2r_o)| \ll \epsilon$, and is practically zero. Hence one usually considers

$$V(r) = \begin{cases} \text{Lennard-Jones}, & r < 2r_o \\ 0, & r > 2r_o \end{cases}$$

where $r_o = 2^{1/6} \sigma$. Lennard-Jones has a run–of–the–mill phase diagram which is representative of Argon or Neon, as well as most simple run–of–the–mill pure substances. Simpler potentials like the square well also have the same properties.
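As a small illustration, the truncated potential can be written in a few lines. This is a sketch in reduced units (ϵ = σ = 1 by default); the function names are illustrative, and the cutoff at $2r_o$ simply follows the text (production codes often also shift the potential so it is continuous at the cutoff, which is not done here).

```python
def lennard_jones(r, epsilon=1.0, sigma=1.0):
    """V(r) = 4*eps*[(sigma/r)^12 - (sigma/r)^6]."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

def truncated_lj(r, epsilon=1.0, sigma=1.0):
    """Lennard-Jones for r < 2*r_o, zero beyond, with r_o = 2^(1/6)*sigma."""
    r_o = 2.0 ** (1.0 / 6.0) * sigma
    return lennard_jones(r, epsilon, sigma) if r < 2.0 * r_o else 0.0

if __name__ == "__main__":
    r_o = 2.0 ** (1.0 / 6.0)
    print("V(r_o)   =", lennard_jones(r_o))        # depth of the well
    print("V(2 r_o) =", lennard_jones(2.0 * r_o))  # size of the neglected tail
```

The second printed value shows how small the discarded tail is compared with the well depth.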

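Figure 5.3: Lennard–Jonesium phase diagrams: P versus T, and T versus ρ, with solid (s), liquid (l) and vapor (v) phases and coexistence regions. (The solid phase is fcc as I recall.)

Figure 5.4: Square–well potential,

$$V = \begin{cases} \infty, & r < \sigma \\ -\epsilon, & \sigma < r < \sigma' \\ 0, & r > \sigma' \end{cases}$$

But the hard sphere potential has a more simple–minded phase diagram. This is because of the lack of an attractive interaction.

Figure 5.5: Hard sphere phase diagrams (P versus T, and T versus ρ, with solid and liquid phases and their coexistence), where

$$V = \begin{cases} \infty, & r < \sigma \\ 0, & r > \sigma \end{cases}$$

Finally, let's get to the molecular dynamics method. Newton's equations (or Hamilton's) are,

$$\frac{d\mathbf{r}_i}{dt} = \mathbf{v}_i$$

and, with $\mathbf{a}_i = \mathbf{F}_i / m$,

$$\frac{d\mathbf{v}_i}{dt} = -\frac{1}{m} \sum_{\substack{j=1 \\ (j \neq i)}}^{N} \frac{\partial}{\partial \mathbf{r}_i} V(|\mathbf{r}_i - \mathbf{r}_j|)$$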

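Clearly, we can simply solve these numerically given all the positions and velocities initially, i.e. $\{\mathbf{r}_i(t=0), \mathbf{v}_i(t=0)\}$. A very simple (in fact too simple) way to do this is by

$$\frac{d\mathbf{r}_i}{dt} = \frac{\mathbf{r}_i(t + \Delta t) - \mathbf{r}_i(t)}{\Delta t}$$

for small Δt. This gives

$$\mathbf{r}_i(t + \Delta t) = \mathbf{r}_i(t) + \Delta t\, \mathbf{v}_i(t)$$

and

$$\mathbf{v}_i(t + \Delta t) = \mathbf{v}_i(t) + \Delta t\, \frac{1}{m} \mathbf{F}_i(t)$$

where $\mathbf{F}_i(t)$ is determined by $\{\mathbf{r}_i(t)\}$ only. A better (but still simple) method is due to Verlet. It should be remembered that the discretization method has a little bit of black magic in it.

Verlet notes that,

$$\mathbf{r}_i(t \pm \Delta t) = \mathbf{r}_i(t) \pm \Delta t\, \mathbf{v}_i(t) + \frac{(\Delta t)^2}{2} \mathbf{a}_i(t) \pm \ldots$$

where $\mathbf{a}_i = \mathbf{F}_i / m = d^2 \mathbf{r}_i / dt^2$. Adding the + and − expansions, the odd terms cancel:

$$\mathbf{r}_i(t + \Delta t) = 2\mathbf{r}_i(t) - \mathbf{r}_i(t - \Delta t) + (\Delta t)^2 \frac{1}{m} \mathbf{F}_i(t) + O(\Delta t^4)$$

And use the simple central–difference rule for the velocity

$$\mathbf{v}_i(t) = \frac{\mathbf{r}_i(t + \Delta t) - \mathbf{r}_i(t - \Delta t)}{2 \Delta t}$$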
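The Verlet recursion is short enough to try directly. Below is a minimal one–particle, one–dimensional sketch, assuming a user–supplied force function and a Taylor–expansion bootstrap for the position at $t = -\Delta t$ (the recursion needs two past positions); the harmonic oscillator in the usage part is only a test case, not part of the notes.

```python
import math

def verlet(force, x0, v0, dt, n_steps, m=1.0):
    """Position-Verlet integration of m x'' = force(x).

    Returns a list of (x(t), v(t)) pairs for t = 0, dt, ..., (n_steps-1)*dt,
    with v(t) from the central difference of neighbouring positions.
    """
    # Bootstrap x(-dt) from a Taylor expansion about t = 0.
    x_prev = x0 - dt * v0 + 0.5 * dt * dt * force(x0) / m
    x = x0
    trajectory = []
    for _ in range(n_steps):
        x_next = 2.0 * x - x_prev + dt * dt * force(x) / m
        v = (x_next - x_prev) / (2.0 * dt)  # velocity at the current time
        trajectory.append((x, v))
        x_prev, x = x, x_next
    return trajectory

if __name__ == "__main__":
    # Harmonic oscillator, F = -x, exact solution x(t) = cos(t).
    traj = verlet(lambda x: -x, x0=1.0, v0=0.0, dt=0.01, n_steps=1000)
    energies = [0.5 * v * v + 0.5 * x * x for x, v in traj]
    print("energy drift:", max(energies) - min(energies))
    print("x(9.99) =", traj[-1][0], " exact:", math.cos(9.99))
```

The printed energy drift stays small and bounded, which is the practical reason this scheme is preferred over the simple forward step.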

That's all. You have to keep one extra set of $\mathbf{r}_i$'s, but it is the common method, and works well. To do statistical mechanics, you do time averages, after the system equilibrates. Or ensemble averages over different initial conditions. The ensemble is microcanonical since energy is fixed. Usually one solves it for a fixed volume, using periodic boundary conditions.

Figure 5.6: Periodic Boundary Conditions

A common practice is to do a "canonical" ensemble by fixing temperature through the sum rule (for each velocity component)

$$\frac{1}{2} m \langle v^2 \rangle = \frac{1}{2} k_B T$$

or,

$$T = \frac{m \langle v^2 \rangle}{k_B}$$

This is done by constantly rescaling the magnitude of the velocities to be

$$|v| \equiv \langle v^2 \rangle^{1/2} \equiv \sqrt{\frac{k_B T}{m}}$$

What about microscopic reversibility and the arrow of time?

Recall the original equations

$$\frac{\partial \mathbf{r}_i}{\partial t} = \mathbf{v}_i$$

$$\frac{\partial \mathbf{v}_i}{\partial t} = -\frac{1}{m} \sum_{j\, (j \neq i)} \frac{\partial}{\partial \mathbf{r}_i} V(|\mathbf{r}_i - \mathbf{r}_j|)$$

The equations are invariant under time reversal t → −t if

$$\mathbf{r} \to \mathbf{r}$$

$$\mathbf{v} \to -\mathbf{v}$$

How then can entropy increase? This is a deep and uninteresting question. Entropy does increase; it's a law of physics. The real question is how to calculate how fast entropy increases; e.g. calculate a viscosity or a diffusion constant, which set the time scale over which entropy increases.

Nevertheless, here's a neat way to fool around with this a bit. Take a gas of N atoms in a box of size $L^d$ where energy is fixed such that the average equilibrium separation between atoms

$$s_{eq} = O(3 \text{ or } 4)\, r_o$$

where $r_o$ is a parameter giving the rough size of the atoms. Now initialize the atoms in a corner of the box with

$$s(t = 0) = O(r_o)$$

or smaller (see fig. 5.7). Now, at time t = later, let all v → −v. By microscopic reversibility you should get fig. 5.8. Try it! You get fig. 5.9. It is round off error, but try to improve your precision. You will always get the same thing. Think about it. The second law is a law, not someone's opinion.

Figure 5.7: Snapshots at t = 0, t = later, and t = equilibrium, with "entropy" versus t.

Figure 5.8: Snapshots at t = 0, t = later, and t = 2 later, with "entropy" versus t.

Figure 5.9: "Entropy" versus t.

Chapter 6

Monte Carlo and the Master Equation

An important description for nonequilibrium statistical mechanics is provided by the master equation. This equation also provides the motivation for the Monte Carlo method, the most important numerical method in many–body physics.

The master equation, despite the pretentious name, is phenomenology following from a few reasonable assumptions. Say a system is defined by a state {S}. This state might, for example, be all the spins ±1 that an Ising model has at each site. That is, {S} are all the states appearing in the partition function

$$Z = \sum_{\{S\}} e^{-E_{\{S\}}/k_BT} \tag{6.1}$$

above. We wish to know the probability of being in some state {S} at a time t,

$$p(\{S\}, t) = ? \tag{6.2}$$

from knowledge of probabilities of states at earlier times. In the canonical ensemble (fixed T, fixed number of particles)

$$P_{eq}(\{S\}) \propto e^{-E_{\{S\}}/k_BT} \tag{6.3}$$

of course. For simplicity, let's drop the { } brackets and let

$$\{S\} \equiv S \tag{6.4}$$

As time goes on, the probability of being in state S increases because one makes transitions into this state from other (presumably nearby in some sense) states S′. Say the probability of going from S′ → S, in a unit time, is

$$W(S, S') \underset{\text{(read as)}}{\equiv} W(S \leftarrow S') \tag{6.5}$$

This is a transition rate as shown in fig. 6.1. Hence

$$W(S, S') P(S') \tag{6.6}$$

are contributions increasing P(S).

Figure 6.1: Transitions between states S′ and S, with rates W(S, S′) and W(S′, S).

However, P(S) decreases because one makes transitions out of S to the states S′ with probability

$$W(S', S) \underset{\text{(read as)}}{\equiv} W(S' \leftarrow S) \tag{6.7}$$

So that

$$W(S', S) P(S) \tag{6.8}$$

are contributions decreasing P(S) as time goes on. The master equation incorporates both these processes as

$$\frac{\partial P(S, t)}{\partial t} = \sum_{S'} \left[ W(S, S') P(S') - W(S', S) P(S) \right] \tag{6.9}$$

which can be written as

$$\frac{\partial P(S, t)}{\partial t} = \sum_{S'} L(S, S') P(S') \tag{6.10}$$

where the inappropriately named Liouvillian is

$$L(S, S') = W(S, S') - \left( \sum_{S''} W(S'', S) \right) \delta_{S, S'} \tag{6.11}$$

Note that the master equation

1. has no memory of events further back in time than the differential element "dt". This is called a Markov process.

2. is linear.

3. is not time reversal invariant as t → −t (since it is first order in time).

In equilibrium,

$$\frac{\partial P_{eq}(S)}{\partial t} = 0 \tag{6.12}$$

since we know this to be the definition of equilibrium. Hence in Eq. 6.9,

$$W(S, S') P_{eq}(S') = W(S', S) P_{eq}(S) \tag{6.13}$$

which is called detailed balance of transitions or fluctuations. For the canonical ensemble therefore,

$$\frac{W(S, S')}{W(S', S)} = e^{-(E(S) - E(S'))/k_BT} \tag{6.14}$$

This gives a surprisingly simple result for the transitions W: it is necessary (but not sufficient) that they satisfy Eq. 6.14. In fact, if we are only concerned with equilibrium properties, any W which satisfies detailed balance is allowable. We need only pick a convenient W!

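The two most common W's used in numerical work are the Metropolis rule

$$W_{\text{Metropolis}}(S, S') = \begin{cases} e^{-\Delta E/k_BT}, & \Delta E > 0 \\ 1, & \Delta E \leq 0 \end{cases} \tag{6.15}$$

and the Glauber rule

$$W_{\text{Glauber}}(S, S') = \frac{1}{2} \left( 1 - \tanh \frac{\Delta E}{2 k_B T} \right) \tag{6.16}$$

where

$$\Delta E = E(S) - E(S') \tag{6.17}$$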
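Both rules can be tried on a toy system. The sketch below is an illustration under stated assumptions: a hypothetical three–state system with arbitrary energies, every state connected to every other, and a crude forward–Euler integration of the master equation (6.9). It checks the ratio in Eq. 6.14 and lets P(S, t) relax towards the Boltzmann weights; all names are illustrative.

```python
import math

def metropolis(dE, kT):
    """Metropolis rate, Eq. 6.15."""
    return math.exp(-dE / kT) if dE > 0 else 1.0

def glauber(dE, kT):
    """Glauber rate, Eq. 6.16."""
    return 0.5 * (1.0 - math.tanh(dE / (2.0 * kT)))

def rate_matrix(energies, rule, kT):
    """W[s][sp] is the rate for sp -> s, with dE = E(s) - E(sp)."""
    n = len(energies)
    return [[0.0 if s == sp else rule(energies[s] - energies[sp], kT)
             for sp in range(n)] for s in range(n)]

def evolve(P, W, dt, n_steps):
    """Euler steps of dP(S)/dt = sum_S' [W(S,S')P(S') - W(S',S)P(S)]."""
    n = len(P)
    for _ in range(n_steps):
        dP = [sum(W[s][sp] * P[sp] - W[sp][s] * P[s] for sp in range(n))
              for s in range(n)]
        P = [P[s] + dt * dP[s] for s in range(n)]
    return P

def boltzmann(energies, kT):
    """Normalized equilibrium probabilities."""
    w = [math.exp(-e / kT) for e in energies]
    z = sum(w)
    return [x / z for x in w]

if __name__ == "__main__":
    E, kT = [0.0, 1.0, 2.5], 1.0   # hypothetical energies, in units of kT
    for rule in (metropolis, glauber):
        W = rate_matrix(E, rule, kT)
        P = evolve([1.0, 0.0, 0.0], W, dt=0.01, n_steps=5000)
        print(rule.__name__, [round(p, 6) for p in P])
    print("boltzmann ", [round(p, 6) for p in boltzmann(E, kT)])
```

Starting from all the probability in one state, both rules should end up at the same equilibrium distribution, although they get there at different speeds.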

Let us quickly check that $W_{\text{Metropolis}}$ satisfies detailed balance. If E(S) > E(S′)

$$\frac{W(S, S')}{W(S', S)} = \frac{e^{-(E(S) - E(S'))/k_BT}}{1} = e^{-\Delta E/k_BT}$$

Now if E(S) < E(S′)

$$\frac{W(S, S')}{W(S', S)} = \frac{1}{e^{-(E(S') - E(S))/k_BT}} = e^{-\Delta E/k_BT}$$

where ∆E = E(S) − E(S′) as above. So detailed balance works. You can check yourself that the Glauber rule works. The form of the rule is shown in figures 6.2 and 6.3. To do numerical work via Monte Carlo, we simply make transitions from state {S′} → {S} using the probability W({S}, {S′}). A last bit of black magic is that one usually wants the states {S′} and {S} to be "close". For something like the Ising model, a state {S} is close to {S′} if the two states differ by no more than one spin flip, as drawn in fig. 6.4. Let us do this explicitly for the 2 dimensional Ising model in zero field, the same thing that Onsager solved!

$$E_{\text{STATE}} = -J \sum_{\langle ij \rangle} S_i S_j \tag{6.18}$$

where $S_i = \pm 1$ are the spins on sites i = 1, 2, ..., N (not to be confused with our notation for a state {S}!), and ⟨ij⟩ means only nearest neighbours are summed over. The positive constant J is the coupling interaction. If only one spin can possibly flip, note there are only five cases to consider as shown in fig. 6.5.

Figure 6.2: Metropolis rule, W versus ∆E at low T, moderate T, and high T.

Figure 6.3: Glauber rule, W versus ∆E at low T, moderate T, and high T.

Figure 6.4: Source state {S′} and target state {S}, differing by one spin flip. The outcome occurs with probability W({S}, {S′}).

Figure 6.5: The five single–spin–flip cases, with flip probabilities $e^{-8J/k_BT}$ (Case 1), $e^{-4J/k_BT}$ (Case 2), and 1 (Cases 3, 4 and 5).

To get the probabilities (Metropolis):

For Case 1: $E_{\text{Before}} = -4J$, $E_{\text{After}} = +4J$, $\Delta E = 8J$, so $W(S, S') = \text{Prob. of flip} = e^{-8J/k_BT}$

For Case 2: $E_{\text{Before}} = -2J$, $E_{\text{After}} = +2J$, $\Delta E = 4J$, so $W(S, S') = \text{Prob. of flip} = e^{-4J/k_BT}$

For Case 3: $E_{\text{Before}} = 0$, $E_{\text{After}} = 0$, $\Delta E = 0$, so $W(S, S') = \text{Prob. of flip} = 1$

For Case 4: $E_{\text{Before}} = +2J$, $E_{\text{After}} = -2J$, $\Delta E = -4J$, so $W(S, S') = \text{Prob. of flip} = 1$

For Case 5: $E_{\text{Before}} = +4J$, $E_{\text{After}} = -4J$, $\Delta E = -8J$, so $W(S, S') = \text{Prob. of flip} = 1$

This is easy if W = 1. If $W = e^{-4J/k_BT}$, then you have to know the temperature in units of $J/k_B$. Let's say, for example,

$$W = 0.1$$

You know the spin flips 10% of the time. You can enforce this by comparing W = 0.1 to a random number drawn uniformly between 0 and 1. If the random number is less than or equal to W, then you flip the spin.

Here is an outline of a computer code (fig. 6.6).

Initialize System

- How many spins, how long to average?
- How hot (give T = (...) $J/k_B$)?
- How much field H?
- Give all N spins some initial value (such as $S_i = 1$); use periodic boundary conditions.

Do time = 0 to maximum time

- Do i = 1 to N:
  - Pick a spin at random
  - Check if it flips
  - Update the system
- Doing this N times (so each spin gets one flip attempt on average) is one time step, 1 mcs.

Output

- Calculate magnetization per spin, $\langle M \rangle = \frac{1}{N} \langle \sum_i S_i \rangle$
- Calculate energy per spin, $\langle E/J \rangle = -\frac{1}{N} \langle \sum_{\langle ij \rangle} S_i S_j \rangle$
- Average over time (after throwing away some early time).

Figure 6.6: Flow chart of the code outline (time t = 0; grinding, where any 1 mcs time step is N updates; output).

Figure 6.7: M versus t at high $T > T_c$. Here t is in Monte Carlo steps; 1 unit of time corresponds to attempting N spin flips.

Figure 6.8: M versus t at low $T < T_c$. After a transient, time average to get ⟨M⟩.

Figure 6.9: ⟨M⟩ versus T, with $M \sim |T - T_c|^{\beta}$, β = 1/8, and $T_c \approx 2.269\, J/k_B$.

Figure 6.10: ⟨E/J⟩ versus T.

If you start at a large temperature and initially $S_i = 1$, the magnetization quickly relaxes to zero (see fig. 6.7). Below $T_c$, the magnetization quickly relaxes to its equilibrium value (see fig. 6.8). Doing this for many temperatures, for a large enough lattice, gives fig. 6.9. Doing this for the energy per spin gives fig. 6.10, which is pretty boring until one plots the heat capacity per spin

$$C \equiv \frac{1}{J} \frac{\partial \langle E \rangle}{\partial T}$$

Figure 6.11: C versus $\ln |T - T_c|$, with $T_c \approx 2.269\, J/k_B$.

Try it. You'll get very close to Onsager's results with fairly small systems, N = 10 × 10 or 100 × 100, over a thousand or so time steps, and you'll do much better than the mean field theory we did in class.
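For readers who want to try it, here is a minimal pure–Python sketch of the single–spin–flip Metropolis simulation at H = 0. It follows the outline in the text, with a few choices that are assumptions of this sketch rather than of the notes: T is measured in units of $J/k_B$, energies in units of J, and the time average is taken of |M| (on a finite lattice M can flip sign below $T_c$). The name `metropolis_ising` and its parameters are illustrative.

```python
import math
import random

def metropolis_ising(L=10, T=2.0, sweeps=1000, discard=200, seed=0):
    """Return (<|M|>, <E/J>) per spin for an L x L periodic lattice."""
    rng = random.Random(seed)
    N = L * L
    spins = [[1] * L for _ in range(L)]           # all spins start at +1
    # Only dE = 4J and 8J need a Boltzmann factor (Cases 2 and 1).
    boltz = {dE: math.exp(-dE / T) for dE in (4, 8)}
    m_sum = e_sum = 0.0
    n_meas = 0
    for sweep in range(sweeps):
        for _ in range(N):                        # one sweep = N attempts
            i, j = rng.randrange(L), rng.randrange(L)
            nb = (spins[(i + 1) % L][j] + spins[(i - 1) % L][j]
                  + spins[i][(j + 1) % L] + spins[i][(j - 1) % L])
            dE = 2 * spins[i][j] * nb             # energy change in units of J
            if dE <= 0 or rng.random() <= boltz[dE]:
                spins[i][j] = -spins[i][j]
        if sweep >= discard:                      # throw away early time
            m = sum(map(sum, spins)) / N
            # Count each bond once: right and down neighbours only.
            e = -sum(spins[a][b] * (spins[(a + 1) % L][b] + spins[a][(b + 1) % L])
                     for a in range(L) for b in range(L)) / N
            m_sum += abs(m)
            e_sum += e
            n_meas += 1
    return m_sum / n_meas, e_sum / n_meas

if __name__ == "__main__":
    for T in (1.5, 2.0, 2.27, 2.5, 3.5):
        m, e = metropolis_ising(L=10, T=T, sweeps=600, discard=200)
        print(f"T = {T:4.2f}  <|M|> = {m:.3f}  <E/J> = {e:.3f}")
```

Scanning T and differencing the energy column gives a rough heat capacity; larger lattices and longer runs sharpen the peak near $T_c$.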

As an exercise, think how you would do this for the solid–on–solid model

$$Z = \sum_{\{\text{States}\}} e^{-E_{\text{State}}/k_BT}$$

where

$$E_{\text{State}} = J \sum_{i=1}^{N} |h_i - h_{i+1}|$$

where $h_i$ are heights at sites i.

Chapter 5

Nonequilibrium Theory Primer

How to go from microscopic to macroscopic? What is the most useful (mesoscopic) description?

- Microscopic, $t < 10^{-12}$ s: Newton's laws
- Mesoscopic, µs > t > $10^{-12}$ s: Langevin, Fokker–Planck
- Macroscopic, t > µs: Hydrodynamics, Diffusion

The steps between levels are labeled Mori–Zwanzig (microscopic to mesoscopic) and neglect thermal noise (mesoscopic to macroscopic).

5.1 Brownian Motion and L-R Circuits

Figure 5.1: 1 µm particles (balls) in fluid execute a random looking path.

Just looking in one dimension, d = 1

$$\langle x^2 \rangle \propto t \tag{5.1}$$

From which one can experimentally extract a diffusion constant

$$D \equiv \frac{\langle x^2 \rangle}{2t} \tag{5.2}$$
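The definition of D can be illustrated with the simplest possible model of a random looking path. The sketch below assumes an unbiased lattice random walk with steps of ±`step` every `dt` (an idealization, not the Langevin dynamics developed next), for which $\langle x^2 \rangle = (\text{step}^2/dt)\, t$ and so D = step²/(2 dt); the function name is illustrative.

```python
import random

def estimate_diffusion(n_walkers=2000, n_steps=400, step=1.0, dt=1.0, seed=0):
    """Estimate D = <x^2> / (2 t) from independent 1-d random walks."""
    rng = random.Random(seed)
    x2_sum = 0.0
    for _ in range(n_walkers):
        x = 0.0
        for _ in range(n_steps):
            x += step if rng.random() < 0.5 else -step
        x2_sum += x * x
    mean_x2 = x2_sum / n_walkers
    return mean_x2 / (2.0 * n_steps * dt)

if __name__ == "__main__":
    print("estimated D:", estimate_diffusion())
    print("expected  D:", 1.0 ** 2 / (2.0 * 1.0))
```

The estimate fluctuates by a few percent from run to run with these sample sizes, since it is an average over a finite number of walkers.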

57

58 CHAPTER 5. NONEQUILIBRIUM THEORY PRIMER

What is the equation of motion for a ball in a fluid?

$$ m\frac{dv}{dt} = \text{Force} \tag{5.3} $$

Newton’s law. And the force is a drag due to the fluid viscosity

$$ \text{Force} = -\alpha v \tag{5.4} $$

where

$$ \alpha = 6\pi\eta a \tag{5.5} $$

η is the viscosity, a the ball radius. Hence

$$ \frac{dv}{dt} = -\alpha v \tag{5.6} $$

(where I will let m = 1 to simplify writing). This can be interpreted as a “hydrodynamic–like” equation for transport. In fact it is derived from the hydrodynamic equations, but I mean that it is a macroscopic description of a nonequilibrium (that is, a transport) process.

It is unlike most dynamical equations that one sees for mechanics or in microphysics because it breaks time–reversal. Remember that v → −v under t → −t, so Eq. 5.6 is not invariant under time reversal. Its solution is

$$ v(t) = v_o e^{-\alpha t} \tag{5.7} $$

for some initial condition v_o. Note that any initial bump in v just decays away as shown in Fig. 5.2. This has something to do with entropy increasing, but that’s another story.

Figure 5.2:

So how does time reversal get broken in Eq. 5.6 (or equivalently in hydrodynamics)? There are a very large number of independent variables in the ball and fluid, ∼ 10^23. Most of these are of no consequence to the motion of a ball, and they have very fast characteristic relaxation times ∼ 10^−10 seconds, which is the time for a sound wave to get scattered. In an experiment (or if one just watches) over the course of a second or a fraction of a second, one averages over something like 10^10 of these very fast times. In theory, one ensemble averages the effects of “fast” variables to obtain the result on a “slow” variable like v. There is a large literature on this but it’s very involved.

Instead, let us simply look at the most simple correction to Eq. 5.6, in the spirit of the theory of thermodynamic fluctuations. Recall that there one finds that the corrections to thermodynamics satisfy relations like

$$ \langle (\Delta T)^2\rangle = \frac{k_B T^2}{C_v} \tag{5.8} $$


so that Cv, which is not calculated in thermodynamics, is given in terms of the temperature correlation function, for which one needs statistical mechanics. This is called a correlation–response relation or fluctuation–dissipation relation of the first kind. Following Langevin we say that the ball in the fluid is knocked around by the fluid particles (the other 10^23 variables). Hence, instead of the macroscopic Eq. 5.6 we have the microscopic (or sometimes called mesoscopic) equation of motion

$$ \frac{dv}{dt} = -\alpha v + f(t) \tag{5.9} $$

The properties of f(t), a random force, must be

$$ \langle f(t)\rangle = 0 \tag{5.10} $$

and only the second moment is nontrivial (by the central–limit theorem, all higher–order moments are Gaussian, such as

$$ \langle f(t_1)f(t_2)f(t_3)f(t_4)\rangle = \langle f(t_1)f(t_2)\rangle\langle f(t_3)f(t_4)\rangle + \langle f(t_1)f(t_3)\rangle\langle f(t_2)f(t_4)\rangle + \langle f(t_1)f(t_4)\rangle\langle f(t_2)f(t_3)\rangle $$

or essentially ⟨f^4⟩ = 3⟨f^2⟩^2).

Since correlations of the f degrees of freedom decay in 10^−10 seconds, we make the approximation, which is like taking the thermodynamic limit in equilibrium, that they decay instantaneously. That is

$$ \langle f(t)f(t')\rangle = 2k_BT\,\Gamma\,\delta(t-t') \tag{5.11} $$

where Γ gives the strength of the noise (the 2kBT is for convenience). (Note Γ > 0.)

That is all there is to it; now we can solve the whole thing, relate Γ to α, and obtain a fluctuation–dissipation relation of the second kind. The solution of Eq. 5.9 (if v_o = 0 for convenience) is

$$ v(t) = e^{-\alpha t}\int_0^t dt'\, e^{\alpha t'} f(t') \tag{5.12} $$

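So if f is Gaussian, so is v. First we have

$$ \langle v(t)\rangle = 0 \tag{5.13} $$

Now consider

$$ \begin{aligned} \langle v(t)^2\rangle &= e^{-2\alpha t}\int_0^t dt'\int_0^t dt''\, e^{\alpha(t'+t'')}\langle f(t')f(t'')\rangle \\ &= 2k_BT\,\Gamma\, e^{-2\alpha t}\int_0^t dt'\, e^{2\alpha t'} \end{aligned} $$

thanks to the physicist’s friend, the Dirac delta function. Hence

$$ \langle v^2(t)\rangle = k_BT\left(\frac{\Gamma}{\alpha}\right)\left(1 - e^{-2\alpha t}\right) \tag{5.14} $$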


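But equipartition says that in equilibrium (presumably reached as t → ∞),

$$ \frac{1}{2}m\langle v^2\rangle = \frac{1}{2}k_BT $$

so (with m = 1)

$$ \langle v^2(t=\infty)\rangle = k_BT \tag{5.15} $$

and we have the Einstein relation (implying α > 0)

$$ \underbrace{\alpha}_{\text{macroscopic}} = \underbrace{\Gamma}_{\text{microscopic}} \tag{5.16} $$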

and the second fluctuation–dissipation relation:

⟨f(t)f(t′)⟩ = 2kBTαδ(t− t′) (5.17)
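The relation between noise strength and drag can be checked numerically. The sketch below integrates Eq. 5.9 with a simple Euler–Maruyama step, using the noise strength of Eq. 5.17, and returns the ensemble average of v^2 at the final time; by Eq. 5.14 with Γ = α this should approach kBT(1 − e^{−2αt}). The integration scheme, the function name `simulate_velocity_variance` and all parameter values are illustrative assumptions, not part of the notes.

```python
import math
import random

def simulate_velocity_variance(alpha, kBT, dt, n_steps, n_paths, seed=0):
    """Euler-Maruyama for dv/dt = -alpha*v + f with <f f'> = 2 kBT alpha delta (m = 1).

    Each path starts from v_o = 0, as in Eq. 5.12. Returns <v^2> at time
    n_steps * dt, averaged over n_paths independent noise histories.
    """
    rng = random.Random(seed)
    kick = math.sqrt(2.0 * kBT * alpha * dt)   # std. dev. of the noise impulse per step
    total = 0.0
    for _ in range(n_paths):
        v = 0.0
        for _ in range(n_steps):
            v += -alpha * v * dt + kick * rng.gauss(0.0, 1.0)
        total += v * v
    return total / n_paths
```

For example, `simulate_velocity_variance(1.0, 1.0, 0.02, 250, 4000)` runs to t = 5 and should land close to kBT = 1, up to statistical error and a small time-step bias.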

Compare this to Eq. 5.8 and think. It is easy to calculate other things, and here is one useful one:

⟨v(t′)v(t′ + t)⟩ = ⟨v(0)v(t)⟩ = kBTe−α|t| (5.18)

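For example, let’s say one wants ⟨x^2(t)⟩; note that

$$ x(t) = \int_0^t dt'\, v(t') $$

so that

$$ \begin{aligned} \langle x^2(t)\rangle &= \int_0^t dt'\int_0^t dt''\,\langle v(t')v(t'')\rangle \\ &= \int_0^t dt'\int_0^t dt''\,\langle v(0)v(t''-t')\rangle \end{aligned} \tag{5.19} $$

This takes a little fiddling. Let

$$ \tau = t'' - t', \qquad \bar t = \frac{1}{2}(t' + t'') $$

so that the Jacobian is unity and

$$ t' = \bar t - \frac{\tau}{2}, \qquad t'' = \bar t + \frac{\tau}{2} $$

The domain of integration is the box sketched in Fig. 5.3, so

$$ \langle x^2(t)\rangle = \left[\int_{-t}^{0} d\tau \int_{t''=0}^{t'=t} d\bar t + \int_{0}^{t} d\tau \int_{t'=0}^{t''=t} d\bar t\,\right]\langle v(0)v(\tau)\rangle $$

or,

$$ \langle x^2(t)\rangle = \left[\int_{-t}^{0} d\tau \int_{-\tau/2}^{t+\tau/2} d\bar t + \int_{0}^{t} d\tau \int_{\tau/2}^{t-\tau/2} d\bar t\,\right]\langle v(0)v(\tau)\rangle $$

The integrals are equal, and the $\bar t$ integral can be done to give

$$ \langle x^2(t)\rangle = 2\int_0^t (t - t')\,\langle v(0)v(t')\rangle\, dt' \tag{5.20} $$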


Figure 5.3: Domain of integration: the box 0 ≤ t′, t′′ ≤ t in the (t′, t′′) plane, with the lines τ = −t, τ = 0 and τ = t marked.

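From Eq. 5.18 one has

$$ \begin{aligned} \langle x^2(t)\rangle &= 2k_BT\, t\int_0^t\left(1 - \frac{t'}{t}\right)e^{-\alpha t'}\,dt' \\ &\approx 2\left(\frac{k_BT}{\alpha}\right)t + \dots \quad (\text{for late } t) \end{aligned} \tag{5.21} $$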

(Do it more carefully if you want. Try ⟨x(t)x(t′)⟩ too, if you want.) This is the form for one–dimensional diffusion, with the diffusion constant

$$ D = \frac{k_BT}{\alpha} \tag{5.22} $$
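For readers who do want the integral of Eq. 5.21 done more carefully, here is a short worked version. It assumes only Eq. 5.18 and Eq. 5.20 (with m = 1), so the velocity is taken to be in equilibrium from the start:

$$ \langle x^2(t)\rangle = 2k_BT\int_0^t (t-t')\,e^{-\alpha t'}\,dt' = \frac{2k_BT}{\alpha}\left[\,t - \frac{1 - e^{-\alpha t}}{\alpha}\,\right] $$

For αt ≫ 1 this is 2(kBT/α)t minus the constant 2kBT/α^2, which is the diffusive form with D = kBT/α. For αt ≪ 1 it reduces to kBT t^2, the ballistic motion of a particle with ⟨v^2⟩ = kBT.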

This was used in the early 20th century to estimate kB, and hence Avogadro’s number from the gas constant R:

$$ \frac{R}{k_B} = 6.02\times 10^{23} $$

The content is the same as Eqs. 5.16 and 5.17, but it’s more physical. This helped establish that liquids were composed of many particles at that time.

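The same approach can be used for noise in electrical circuits, called Johnson noise. If the current changes with time at a rate di/dt, the voltage across the resistor, V = iR, resists it, as in

$$ L\frac{di}{dt} = -Ri \tag{5.23} $$

Figure 5.4: Circuit with voltage V, inductance L and resistance R.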


where L ≡ 1 is inductance, R is resistance and i is current. Using unit inductance, and adding a random voltage:

$$ \frac{di}{dt} = -Ri + f(t) \tag{5.24} $$

where R plays the role of transport coefficient, ⟨f⟩ = 0, and

$$ \langle f(t)f(t')\rangle = 2k_BT\,\Gamma\,\delta(t-t') \tag{5.25} $$

as before. As above

$$ \langle i^2(t)\rangle = k_BT\left(\frac{\Gamma}{R}\right)\left(1 - e^{-2Rt}\right) \tag{5.26} $$

But the energy in an inductor is

$$ \text{Energy} = \frac{1}{2}Li^2 \equiv \frac{1}{2}i^2 $$

So by equipartition

$$ \langle i^2\rangle = k_BT $$

in equilibrium as t → ∞, and hence

$$ \Gamma = R \tag{5.27} $$

the Nyquist theorem for the intensity of Johnson noise. Again, R > 0 is implied.

5.2 Fokker–Planck Equation

These are both special (linear) cases. Now we will do a more general case. Say we have the Langevin equation

$$ \frac{\partial m(t)}{\partial t} = v(m) + f(t) \tag{5.28} $$

where

$$ \langle f(t)f(t')\rangle = 2k_BT\,\Gamma\,\delta(t-t') $$

(It is straightforward to generalize this to m_i(t) or m(r, t), where ⟨f_i f_j⟩ ∼ δ_ij δ(t − t′), and ⟨f(r)f(r′)⟩ ∼ δ(r − r′)δ(t − t′).)

We shall turn this into an equation for the probability distribution, called the Fokker–Planck equation, and derive a more sophisticated version of the fluctuation–dissipation theorem (namely, v(m) = −Γ ∂F/∂m, where F is a free energy).

Say M is the solution of the Langevin equation for some particular history of m being kicked around by the random force f. Clearly, the normalized probability distribution is the microcanonical

$$ \rho(M,t) = \delta(M - m(t)) \tag{5.29} $$

Now let’s be tricky by taking a time derivative

$$ \frac{\partial\rho}{\partial t} = \dot\rho = -\dot m\,\frac{\partial}{\partial M}\delta(M - m(t)) $$

or,

$$ \dot\rho = -\frac{\partial}{\partial M}\left(\dot m\big|_{m=M}\,\rho(M,t)\right) $$


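The formal solution of this is

$$ \rho(M,t) = \left[\exp\left(-\int_0^t dt'\,\frac{\partial}{\partial M}\,\dot m(t')\big|_{m=M}\right)\right]\rho(M,0) \tag{5.30} $$

Now let us get the complete distribution by averaging over all histories of random forcings:

$$ P(M,t) = \langle\rho(M,t)\rangle \tag{5.31} $$

and of course P(M, 0) = ρ(M, 0). Hence,

$$ P(M,t) = \left\langle \exp\left(-\int_0^t dt'\,\frac{\partial}{\partial M}\,\dot m(t')\big|_{m=M}\right)\right\rangle P(M,0) $$

or, recalling the Langevin equation 5.28:

$$ P(M,t) = \exp\left(-\int_0^t dt'\,\frac{\partial}{\partial M}\,v(M)\right)\left\langle \exp\left(-\int_0^t dt'\,\frac{\partial}{\partial M}\,f(t')\right)\right\rangle P(M,0) $$

But if a variable u is Gaussian, with ⟨u^2⟩ = σ^2, then ⟨e^u⟩ = e^{σ^2/2}, and here we have

$$ \langle f(t)f(t')\rangle = 2k_BT\,\Gamma\,\delta(t-t') $$

so

$$ \left\langle \exp\left(-\int_0^t dt'\,\frac{\partial}{\partial M}\,f(t')\right)\right\rangle = \exp\left(k_BT\,\Gamma\, t\,\frac{\partial^2}{\partial M^2}\right) $$

and

$$ P(M,t) = \exp\left(t\,\frac{\partial}{\partial M}\left[-v + k_BT\,\Gamma\frac{\partial}{\partial M}\right]\right)P(M,0) $$

or,

$$ \frac{\partial P}{\partial t} = \frac{\partial}{\partial M}\left(-v(M) + k_BT\,\Gamma\frac{\partial}{\partial M}\right)P(M,t) \tag{5.32} $$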

which is almost the Fokker–Planck equation. Note that the equilibrium solution, when ∂Peq/∂t = 0, is

$$ v(M) = k_BT\,\Gamma\,\frac{\partial}{\partial M}\ln P_{eq}(M) \tag{5.33} $$

But

$$ P_{eq}(M) = e^{-F(M)/k_BT} \tag{5.34} $$

Hence we must have

$$ v(M) = -\Gamma\frac{\partial F}{\partial M} \tag{5.35} $$

which is, again, the fluctuation–dissipation relation. In summary, we have shown the equivalence of the Langevin equation

$$ \frac{\partial m}{\partial t} = -\Gamma\frac{\partial F}{\partial m} + f \tag{5.36} $$

where f is a Gaussian random noise with

$$ \langle f(t)f(t')\rangle = 2k_BT\,\Gamma\,\delta(t-t') \tag{5.37} $$

and the Fokker–Planck equation

$$ \frac{\partial P}{\partial t} = \Gamma\frac{\partial}{\partial M}\left(\frac{\partial F}{\partial M} + k_BT\frac{\partial}{\partial M}\right)P(M,t) \tag{5.38} $$

with the equilibrium distribution

$$ P_{eq} \propto e^{-F(M)/k_BT} \tag{5.39} $$

Next stop will be to show the limit in which the Master equation is equivalent to either the Fokker–Planck equation, or the Langevin equation.
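The equivalence summarized in Eqs. 5.36 to 5.39 can be illustrated numerically for a nonlinear case. The sketch below integrates the Langevin equation 5.36 for the quartic free energy F(m) = m^4/4 (chosen here purely as an example) and compares the time average of m^2 with the value implied by the equilibrium distribution of Eq. 5.39, which for this F reduces to a ratio of Gamma functions. The Euler–Maruyama step, the choice of F, and the function names are assumptions made for illustration.

```python
import math
import random

def time_averaged_m2(gamma, kBT, dt, n_steps, n_discard, seed=0):
    """Euler-Maruyama for dm/dt = -gamma * dF/dm + f, with F(m) = m**4 / 4.

    The noise obeys <f f'> = 2 kBT gamma delta(t - t'), Eq. 5.37.
    Returns the time average of m^2 after discarding n_discard steps.
    """
    rng = random.Random(seed)
    kick = math.sqrt(2.0 * kBT * gamma * dt)   # std. dev. of the noise impulse per step
    m = 0.0
    acc = 0.0
    for step in range(n_steps):
        m += -gamma * m ** 3 * dt + kick * rng.gauss(0.0, 1.0)
        if step >= n_discard:
            acc += m * m
    return acc / (n_steps - n_discard)

def boltzmann_m2(kBT):
    """<m^2> for P_eq proportional to exp(-m^4 / (4 kBT)), via Gamma functions."""
    return math.sqrt(4.0 * kBT) * math.gamma(0.75) / math.gamma(0.25)
```

With, say, `time_averaged_m2(1.0, 1.0, 0.01, 400000, 10000)` the two numbers should agree to within statistical error, independent of the value chosen for Γ, which only sets the time scale.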


5.3 Appendix 1

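For Brownian motion

$$ m\frac{\partial v}{\partial t} = -\alpha v + f $$

$$ \langle f(t)f(t')\rangle = 2k_BT\,m\,\alpha\,\delta(t-t') $$

with

$$ \alpha = 6\pi\eta a = 2\times 10^{-2}\ \text{gm/sec} $$

where

- η = 10^−2 poise (gm/(cm·sec)), for water
- a = 10^−1 cm
- m = 1 gm/cm^3 × (4π/3)(10^−1 cm)^3 = 4 × 10^−3 g

so that

$$ \frac{\alpha}{m} = \frac{5}{\text{sec}} = \frac{1}{\tau_{micro}}; \qquad \tau_{micro}\sim 0.2\ \text{sec} $$

which is macroscopic. Forcing a length by

$$ l \equiv \frac{m}{\alpha}\sqrt{\frac{k_BT}{m}} \sim 200\ \text{Å} $$

which is almost microscopic.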

What are the properties of a random noise?

$$ \frac{dx}{dt} = \underbrace{F}_{\text{deterministic}} + \underbrace{f}_{\text{random}} $$

Do time averages over an interval t ≤ t_macro. Say noise is only correlated for times ∼ t_micro, where t_macro ∼ seconds and t_micro ∼ 10^−10 seconds. Or consider the hydrodynamic limit, or Markov process, where

$$ \frac{t_{macro}}{t_{micro}} \to \infty \tag{5.40} $$

which is the analog of the thermodynamic limit. Here we take it by t_micro → 0. It is by this limit that time reversal symmetry is broken and entropy increases. Call

$$ N = \frac{t_{macro}}{t_{micro}} \tag{5.41} $$

the number of independent parts.

Figure 5.5: Cartoon of F versus t, with fluctuations of size ⟨f^2⟩^{1/2}; F should be a single-valued function of t.


Figure 5.6: Cartoon of F versus t, with the interval t_macro divided into boxes i = 1, 2, 3, ... of width t_micro and the average ⟨F⟩ marked; F should be a single-valued function of t.

Hence

$$ \langle F\rangle = \frac{1}{N}\sum_{i=1}^{N}\langle F_i\rangle = O(1) \tag{5.42} $$

since the boxes are independent, and ⟨F_i⟩ knows nothing about the boxes. Similarly, the total for the random noise (over the t_macro time interval) is

$$ f^2 = \sum_i f_i \sum_j f_j $$

So its average is

$$ \begin{aligned} \langle f^2\rangle &= \frac{1}{N^2}\sum_{i,j}\langle f_i f_j\rangle \\ &= \frac{1}{N^2}\sum_i \langle f_i^2\rangle + \text{uncorrelated} \\ &= O\!\left(\frac{1}{N}\right) \end{aligned} $$

If we consider 1/N the result from averaging over some macroscopically short time interval, then

$$ \langle f(t)f(t')\rangle = (\text{Const.})\,\delta(t-t') $$

gives the appropriate result for any macroscopically short time interval, regardless of how short (i.e. as t_micro → 0). For convenience this gives Γ as

$$ \langle f(t)f(t')\rangle = 2k_BT\,\Gamma\,\delta(t-t') $$

All higher moments are Gaussian by similar arguments.

5.4 Master Equation and Fokker–Planck Equation

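Master Equation:

$$ \frac{\partial P(s)}{\partial t} = \sum_{s'}\Big[\underbrace{w(s,s')P(s')}_{\substack{s'\to s \\ \text{fluctuations from other states to } s}} - \underbrace{w(s',s)P(s)}_{\substack{s\to s' \\ \text{fluctuations out of } s}}\Big] \tag{5.43} $$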


In equilibrium

$$ \frac{\partial P_{eq}}{\partial t} = 0 \tag{5.44} $$

where

$$ P_{eq}(s) = e^{-E(s)} \tag{5.45} $$

(in units of temperature), so

$$ w(s,s')\,e^{-E(s')} = w(s',s)\,e^{-E(s)} \tag{5.46} $$

which is the condition of detailed balance of fluctuations. One choice which satisfies this is due to Metropolis

$$ W_{Metropolis}(s,s') = \begin{cases} e^{-\Delta E(s,s')}, & \Delta E > 0 \\ 1, & \Delta E \le 0 \end{cases} \tag{5.47} $$

where ∆E(s, s′) = E(s) − E(s′). But the choice is somewhat arbitrary. For example, if we let

$$ w(s,s') = D(s,s')\,e^{\frac{1}{2}(E(s')-E(s))} \tag{5.48} $$

then detailed balance is satisfied provided

$$ D(s,s') = D(s',s) \tag{5.49} $$

With this transformation the particular Metropolis choice (Eq. 5.47) becomes:

$$ D_{Metropolis}(s,s') = e^{-\frac{1}{2}|\Delta E(s,s')|} \tag{5.50} $$

Note that all the equilibrium statistical mechanics is satisfied by Eq. 5.49 however. Hence, Eq. 5.43 becomes:

$$ \frac{\partial P(s)}{\partial t} = \sum_{s'} D(s,s')\left[e^{\frac{1}{2}(E(s')-E(s))}P(s') - e^{-\frac{1}{2}(E(s')-E(s))}P(s)\right] \tag{5.51} $$

where D is only due to transport. Now we will derive the Fokker–Planck equation. Most fluctuations involve nearby states s and s′. So we can expand the transport matrix as (Kramers–Moyal expansion)

$$ D(s,s') = D_o\,\delta_{s,s'} + D_2\frac{\partial^2}{\partial(s-s')^2}\delta_{s,s'} + \dots \tag{5.52} $$

Odd terms cannot appear since D(s, s′) = D(s′, s) and we are evidently writing D(s, s′) = f(s − s′). The first term, D_o δ_{s,s′}, gives no contribution to Eq. 5.51. So we consider the first nontrivial term

$$ D(s,s') = D_2\frac{\partial^2}{\partial s^2}\delta_{s,s'} \tag{5.53} $$

Note ∂^2/∂(s − s′)^2 has the same action on δ_{s,s′} as ∂^2/∂s^2. Then, with ∆E = E(s) − E(s′),

$$ \frac{\partial P(s)}{\partial t} = D_2\sum_{s'}\left(\frac{\partial^2}{\partial s^2}\delta_{s,s'}\right)\left[e^{-\frac{1}{2}\Delta E}P(s') - e^{\frac{1}{2}\Delta E}P(s)\right] \tag{5.54} $$


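or, on simplifying

$$ \frac{\partial P(s)}{\partial t} = D_2\, e^{-\frac{E(s)}{2}}\frac{\partial^2}{\partial s^2}\sum_{s'}\delta_{s,s'}\,e^{\frac{E(s')}{2}}P(s') - D_2\, e^{\frac{E(s)}{2}}P(s)\frac{\partial^2}{\partial s^2}\sum_{s'}\delta_{s,s'}\,e^{-\frac{E(s')}{2}} $$

or,

$$ \frac{\partial P}{\partial t} = D_2\, e^{-\frac{E(s)}{2}}\frac{\partial^2}{\partial s^2}\left(e^{\frac{E(s)}{2}}P(s)\right) - D_2\, e^{\frac{E(s)}{2}}P(s)\frac{\partial^2}{\partial s^2}\left(e^{-\frac{E(s)}{2}}\right) \tag{5.55} $$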

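Now consider

$$ \frac{\partial}{\partial s}\left(e^{\frac{E(s)}{2}}P(s)\right) = \frac{1}{2}\frac{\partial E}{\partial s}e^{\frac{E}{2}}P + e^{\frac{E}{2}}\frac{\partial P}{\partial s} $$

and

$$ \frac{\partial}{\partial s}e^{-\frac{E}{2}} = -\frac{1}{2}\frac{\partial E}{\partial s}e^{-\frac{E}{2}} \tag{5.56} $$

so

$$ \frac{\partial^2}{\partial s^2}\left(e^{\frac{E}{2}}P\right) = \frac{1}{2}\frac{\partial^2E}{\partial s^2}e^{\frac{E}{2}}P + \frac{1}{4}\left(\frac{\partial E}{\partial s}\right)^2 e^{\frac{E}{2}}P + \frac{2}{2}\frac{\partial E}{\partial s}e^{\frac{E}{2}}\frac{\partial P}{\partial s} + e^{\frac{E}{2}}\frac{\partial^2P}{\partial s^2} \tag{5.57} $$

or

$$ e^{-\frac{E}{2}}\frac{\partial^2}{\partial s^2}\left(e^{\frac{E}{2}}P\right) = \frac{1}{2}\frac{\partial^2E}{\partial s^2}P + \frac{1}{4}\left(\frac{\partial E}{\partial s}\right)^2P + \frac{\partial E}{\partial s}\frac{\partial P}{\partial s} + \frac{\partial^2P}{\partial s^2} \tag{5.58} $$

and from Eq. 5.56

$$ \frac{\partial^2}{\partial s^2}e^{-E/2} = -\frac{1}{2}\frac{\partial^2E}{\partial s^2}e^{-\frac{E}{2}} + \frac{1}{4}\left(\frac{\partial E}{\partial s}\right)^2e^{-\frac{E}{2}} \tag{5.59} $$

or,

$$ e^{E/2}P\,\frac{\partial^2}{\partial s^2}e^{-E/2} = -\frac{1}{2}\frac{\partial^2E}{\partial s^2}P + \frac{1}{4}\left(\frac{\partial E}{\partial s}\right)^2P \tag{5.60} $$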

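Using Eq. 5.58 and Eq. 5.60 in Eq. 5.55 (just subtract them):

$$ \frac{\partial P}{\partial t} = D_2\left[\frac{\partial^2E}{\partial s^2}P + \frac{\partial E}{\partial s}\frac{\partial P}{\partial s} + \frac{\partial^2P}{\partial s^2}\right] $$

Note how the 1/2 factor disappears, or,

$$ \frac{\partial P}{\partial t} = D_2\frac{\partial}{\partial s}\left[\frac{\partial E}{\partial s} + \frac{\partial}{\partial s}\right]P \tag{5.61} $$

called the Fokker–Planck equation. To check its consistency with equilibrium, note

$$ \frac{\partial P_{eq}}{\partial t} = 0 $$

or

$$ \left[\frac{\partial E}{\partial s} + \frac{\partial}{\partial s}\right]P_{eq} = 0 $$

$$ \frac{\partial E}{\partial s} = -\frac{\partial \ln P_{eq}}{\partial s} $$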


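or

$$ P_{eq} \propto e^{-E} \tag{5.62} $$

as it should. Evidently the Fokker–Planck equation can be rewritten as

$$ \frac{\partial P(s)}{\partial t} = \sum_{s'} L_{FP}(s,s')P(s') \tag{5.63} $$

where

$$ L_{FP}(s,s') = D_2\frac{\partial}{\partial s}\left[\frac{\partial E}{\partial s} + \frac{\partial}{\partial s}\right]\delta_{s,s'} \tag{5.64} $$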

We’ll now return to the Master equation to give a similar form. Consider Eq. 5.51. The second piece on the right–hand side is

$$ \text{Piece} = \sum_{s'} D(s,s')\left[-e^{-\frac{1}{2}(E(s')-E(s))}P(s)\right] \tag{5.65} $$

The awkwardness is that P(s) is not summed out. First let the dummy index s′ be s′′, so

$$ \text{Piece} = \sum_{s''} D(s,s'')\left[-e^{-\frac{1}{2}(E(s'')-E(s))}P(s)\right] $$

Now replace P(s) by $\sum_{s'}\delta_{s,s'}P(s')$, giving

$$ \text{Piece} = \sum_{s'}\Bigg[-\Bigg(\underbrace{\sum_{s''}D(s,s'')\,e^{-\frac{1}{2}(E(s'')-E(s))}}_{\text{some function of } s}\Bigg)\delta_{s,s'}P(s')\Bigg] \tag{5.66} $$

Now put this back into Eq. 5.51 to get

$$ \frac{\partial P(s)}{\partial t} = \sum_{s'}\left\{D(s,s')\,e^{\frac{1}{2}(E(s')-E(s))}P(s') - \left(\sum_{s''}D(s,s'')\,e^{-\frac{1}{2}(E(s'')-E(s))}\right)\delta_{s,s'}P(s')\right\} \tag{5.67} $$

or,

$$ \frac{\partial P(s)}{\partial t} = \sum_{s'} L_{ME}(s,s')P(s') \tag{5.68} $$

where

$$ L_{ME} = D(s,s')\,e^{\frac{1}{2}(E(s')-E(s))} - \left(\sum_{s''}D(s,s'')\,e^{-\frac{1}{2}(E(s'')-E(s))}\right)\delta_{s,s'} \tag{5.69} $$

Making the Kramers–Moyal approximation

$$ D(s,s') \to D_2\frac{\partial^2}{\partial s^2}\delta_{s,s'} $$


should recover

$$ L_{ME}(s,s') \to L_{FP}(s,s') $$

Note there is still freedom to choose the symmetric matrix D(s, s′) in Eq. 5.69. In either the Fokker–Planck equation (Eq. 5.63) or the Master equation (Eq. 5.68), we can introduce a transformation which makes L(s, s′) symmetric. Let

$$ \Lambda \equiv e^{\frac{1}{2}E(s)}\,L(s,s')\,e^{-\frac{1}{2}E(s')} \tag{5.70} $$

Then in Eq. 5.69 we have

$$ \Lambda_{ME}(s,s') = D(s,s') - \left[\sum_{s''}D(s,s'')\,e^{-\frac{1}{2}(E(s'')-E(s))}\right]\delta_{s,s'} \tag{5.71} $$

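which is symmetric, since D is symmetric. For the Fokker–Planck equation (Eq. 5.64)

$$ \begin{aligned} \Lambda_{FP} &= D_2\, e^{\frac{E(s)}{2}}\frac{\partial}{\partial s}\left[\frac{\partial E}{\partial s} + \frac{\partial}{\partial s}\right]\delta_{s,s'}\,e^{-E(s)/2} \\ &= D_2\left[\frac{\partial^2E}{\partial s^2}\delta_{s,s'} + \frac{\partial E}{\partial s}e^{E(s)/2}\frac{\partial}{\partial s}\left(e^{-E(s)/2}\delta_{s,s'}\right) + e^{E(s)/2}\frac{\partial^2}{\partial s^2}\left(\delta_{s,s'}\,e^{-E(s)/2}\right)\right] \end{aligned} \tag{5.72} $$

Now consider

$$ \frac{\partial}{\partial s}\left(\delta_{s,s'}\,e^{-E(s)/2}\right) = -\frac{1}{2}\frac{\partial E}{\partial s}e^{-E(s)/2}\delta_{s,s'} + e^{-E(s)/2}\frac{\partial}{\partial s}\delta_{s,s'} \tag{5.73} $$

Taking one more derivative gives

$$ \frac{\partial^2}{\partial s^2}\left(\delta_{s,s'}\,e^{-E/2}\right) = -\frac{1}{2}\frac{\partial^2E}{\partial s^2}e^{-E/2}\delta_{s,s'} + \frac{1}{4}\left(\frac{\partial E}{\partial s}\right)^2e^{-E/2}\delta_{s,s'} - \frac{\partial E}{\partial s}e^{-E/2}\frac{\partial}{\partial s}\delta_{s,s'} + e^{-E/2}\frac{\partial^2}{\partial s^2}\delta_{s,s'} \tag{5.74} $$

Using Eq. 5.73 and Eq. 5.74 in Eq. 5.72 (the terms in ∂δ_{s,s′}/∂s cancel) gives

$$ \Lambda_{FP}(s,s') = D_2\left[\frac{1}{2}\frac{\partial^2E}{\partial s^2}\delta_{s,s'} - \frac{1}{4}\left(\frac{\partial E}{\partial s}\right)^2\delta_{s,s'} + \frac{\partial^2}{\partial s^2}\delta_{s,s'}\right] \tag{5.75} $$

so, letting

$$ \rho(s) \equiv e^{\frac{E(s)}{2}}P(s) \tag{5.76} $$

we have

$$ \frac{\partial\rho(s)}{\partial t} = \sum_{s'}\Lambda(s,s')\,\rho(s') \tag{5.77} $$

where

$$ \Lambda(s,s') = \Lambda(s',s) \tag{5.78} $$

and

$$ \Lambda_{ME}(s,s') = D(s,s') - \left[\sum_{s''}D(s,s'')\,e^{-\frac{1}{2}(E(s'')-E(s))}\right]\delta_{s,s'} \tag{5.79} $$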


or if D(s, s′) = D_2 ∂^2/∂s^2 δ_{s,s′},

$$ \Lambda_{FP}(s,s') = D_2\left[\frac{1}{2}\frac{\partial^2E}{\partial s^2} - \frac{1}{4}\left(\frac{\partial E}{\partial s}\right)^2 + \frac{\partial^2}{\partial s^2}\right]\delta_{s,s'} \tag{5.80} $$

Note that detailed balance is satisfied by D(s, s′) = D(s′, s). Also, the equilibrium distribution of ρ(s) is

$$ \rho_{eq}(s) \propto e^{-\frac{E(s)}{2}} \tag{5.81} $$

Normalization can be fixed by a multiplicative constant in Eq. 5.76. The formal solution of Eq. 5.77 is

$$ \rho(s,t) = \sum_{s'}\left(e^{\Lambda t}\right)_{s,s'}\rho(s',0) \tag{5.82} $$

Since Λ(s, s′) is symmetric, it has real eigenvalues denoted by (−1/τ_s) say, i.e.

$$ \rho(s,t) = \sum_{s'} e^{-t/\tau_{s'}}\rho(s',0) \tag{5.83} $$

The largest of these, τ say, should often satisfy

$$ \tau(N) = N^{z/d} \tag{5.84} $$

where d is the dimension of space of a system with N constituents, and z is an exponent for slowing down.

Let us return now to the dependence of D(s, s′). As mentioned before, detailed balance is satisfied by requiring this to be a symmetric matrix. Furthermore, we obtained the Fokker–Planck equation by expanding it. That is

$$ D(s,s') = D(s',s) $$

and

$$ D(s,s') \approx D_o\,\delta_{s,s'} + D_2\frac{\partial^2}{\partial s^2}\delta_{s,s'} $$

are our only conditions on D, and neither fixes the temperature dependence, if any, of this quantity. In fact, we require no further dependence on temperature for consistency with equilibrium.

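But let us consider the Metropolis and Glauber choices for transition probabilities. Eq. 5.47

$$ W_{Metropolis} = \begin{cases} e^{-\Delta E(s,s')}, & \Delta E > 0 \\ 1, & \Delta E \le 0 \end{cases} $$

and

$$ W_{Glauber} = \frac{1}{2}\left(1 - \tanh\frac{\Delta E(s,s')}{2}\right) \tag{5.85} $$

which both satisfy detailed balance, and give, Eq. 5.50

$$ D_{Metropolis} = e^{-\frac{1}{2}|\Delta E|} $$

and

$$ D_{Glauber} = \frac{1}{2\cosh(\Delta E/2)} \tag{5.86} $$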
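A quick numerical check of these statements is sketched below, in units where T = 1 and with ∆E = E(s) − E(s′) as in Eq. 5.47. The helper `transport_factor` inverts Eq. 5.48, D = w e^{∆E/2}; the function names are hypothetical and chosen only for this illustration.

```python
import math

def w_metropolis(dE):
    """Metropolis rate, Eq. 5.47, for dE = E(s) - E(s') in units of T."""
    return math.exp(-dE) if dE > 0 else 1.0

def w_glauber(dE):
    """Glauber rate, Eq. 5.85, with the same convention for dE."""
    return 0.5 * (1.0 - math.tanh(0.5 * dE))

def transport_factor(w, dE):
    """Symmetric factor D = w * exp(dE / 2), obtained by inverting Eq. 5.48."""
    return w(dE) * math.exp(0.5 * dE)

def satisfies_detailed_balance(w, dE, tol=1e-12):
    """Eq. 5.46 rewritten as w(dE) = w(-dE) * exp(-dE)."""
    return abs(w(dE) - w(-dE) * math.exp(-dE)) < tol
```

For any ∆E, `transport_factor(w_metropolis, dE)` should reproduce e^{−|∆E|/2} and `transport_factor(w_glauber, dE)` should reproduce 1/(2 cosh(∆E/2)), and both are even in ∆E, which is the symmetry D(s, s′) = D(s′, s).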

Figure 5.7: D versus ∆E (maximum value 1): the Metropolis rule e^{−|∆E|/2}, the curve e^{−∆E/2}, and the Glauber rule.

which are symmetric, but with strong dependences on temperature and the Hamiltonian. Evidently they are of this form, at least partly, so that W ≤ 1. Note that, if W = 1, D = e^{−∆E/2}, so that the largest D can be is bounded by the Metropolis rule (Eq. 5.50), shown in Fig. 5.7. Hence any rule sitting “under” the Metropolis rule is valid, e.g. Fig. 5.8 or Fig. 5.9. The second of these, or perhaps something else, may be useful for high–temperature series expansions, since

$$ D_{Metropolis} \approx 1 - O(|\Delta E|) $$

while

$$ D_{Glauber} \approx \frac{1}{2} - O((\Delta E)^2) $$

as ∆E → 0.

But now let’s consider the Fokker–Planck equation with the operator

$$ D_{FP}(s,s') = D_o\,\delta_{s,s'} + D_2\frac{\partial^2}{\partial s^2}\delta_{s,s'} $$

Note D is now negative close to ∆E = 0. This seems at odds with interpreting

$$ w = D\,e^{\frac{1}{2}\Delta E} $$

as a probability on 0 → 1 (up to a trivial rate constant). Perhaps one should consider a coarse–grained D_FP, which will give a positive definite D_FP (coarse–grain over a narrow Gaussian, say).

In any case, it is interesting to note that if one chooses

$$ D(s,s') = D $$

a constant, and scales time in these units, one has the symmetric Master equation from Eq. 5.77

$$ \frac{\partial\rho(s)}{\partial t} = \sum_{s'}\Lambda(s,s')\,\rho(s') $$

with (Eq. 5.79):

$$ \Lambda_{ME}(s,s') = 1 - \delta_{s,s'}\sum_{s''}e^{-\frac{1}{2}(E(s'')-E(s))} $$

This is fine for Ising models above a temperature T_o where

$$ e^{\frac{1}{2}\Delta E(s,s',T_o)} \equiv D $$

This looks “not too bad” since $\sum_{s''}e^{-\frac{1}{2}E(s'')} = Z(2T)$, giving

$$ \Lambda_{ME}(s,s') = 1 - \delta_{s,s'}\,e^{\frac{1}{2}E(s)}Z $$

Figure 5.8: D versus ∆E for a rule lying under the Metropolis curve (maximum value 1).

Figure 5.9: D versus ∆E for another rule lying under the Metropolis curve (maximum value 1).

Figure 5.10: D_Fokker–Planck versus ∆E = (∂E/∂s)(s − s′).

5.5 Appendix 2: H theorem for Master Equation

For convenience let T = 1 (that is, measure energy in units of temperature). The free energy

$$ F = E - TS $$

becomes

$$ F = E - S \tag{5.87} $$

which, in thermodynamics, is minimized in equilibrium. We will show that

$$ \frac{\partial F}{\partial t} \le 0 \tag{5.88} $$

from the Master equation, where

$$ F = \langle E\rangle - \langle -\ln P\rangle $$

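Then

$$ F = \sum_s E_sP_s + \sum_s P_s\ln P_s \tag{5.89} $$

so,

$$ \frac{dF}{dt} = \sum_s E_s\frac{dP_s}{dt} + \sum_s\frac{dP_s}{dt}\ln P_s + \underbrace{\sum_s\frac{dP_s}{dt}}_{=\,0} \tag{5.90} $$

using $\sum_s P_s = 1$, so that

$$ \frac{d}{dt}\left(\sum_s P_s\right) = \frac{d}{dt}1 = 0 \tag{5.91} $$

Hence

$$ \frac{dF}{dt} = \sum_s (E_s + \ln P_s)\frac{dP_s}{dt} \tag{5.92} $$

But, from Eq. 5.51,

$$ \frac{dP_s}{dt} = \sum_{s'}D_{s,s'}\left\{e^{-\frac{1}{2}\Delta E_{s,s'}}P_{s'} - e^{\frac{1}{2}\Delta E_{s,s'}}P_s\right\} \tag{5.93} $$

is the Master equation, where

$$ \Delta E_{s,s'} \equiv E_s - E_{s'} \tag{5.94} $$

$$ D_{s,s'} = D_{s',s} \tag{5.95} $$

and

$$ D_{s,s'} \ge 0 \tag{5.96} $$

Dropping all the subscripts (a prime denotes the state s′) gives

$$ \frac{dF}{dt} = \sum_{s,s'}D\left\{(E + \ln P)\,e^{-\frac{1}{2}\Delta E}P' - (E + \ln P)\,e^{\frac{1}{2}\Delta E}P\right\} \tag{5.97} $$

Switching the (invisible) indices gives the equivalent equation,

$$ \frac{dF}{dt} = \sum_{s,s'}D\left\{(E' + \ln P')\,e^{\frac{1}{2}\Delta E}P - (E' + \ln P')\,e^{-\frac{1}{2}\Delta E}P'\right\} \tag{5.98} $$

Adding these together, dividing by 2, gives the handy symmetric form

$$ \frac{dF}{dt} = \sum_{s,s'}\frac{D}{2}\left\{(E - E' + \ln P - \ln P')\,e^{-\frac{1}{2}\Delta E}P' - (E - E' + \ln P - \ln P')\,e^{\frac{1}{2}\Delta E}P\right\} \tag{5.99} $$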


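or,

$$ \begin{aligned} \frac{dF}{dt} &= \sum_{s,s'}\frac{D}{2}\left\{(\Delta E + \ln P - \ln P')\left(e^{-\frac{1}{2}\Delta E}P' - e^{\frac{1}{2}\Delta E}P\right)\right\} \\ &= \sum_{s,s'}\frac{D}{2}\left\{\left[\ln P + \frac{\Delta E}{2} - \left(\ln P' - \frac{\Delta E}{2}\right)\right]\left[e^{-\frac{1}{2}\Delta E}P' - e^{\frac{1}{2}\Delta E}P\right]\right\} \end{aligned} $$

$$ \frac{dF}{dt} = -\sum_{s,s'}\frac{D}{2}\left\{\left[\ln\left(P'e^{-\frac{1}{2}\Delta E}\right) - \ln\left(Pe^{\frac{1}{2}\Delta E}\right)\right]\left[e^{-\frac{1}{2}\Delta E}P' - e^{\frac{1}{2}\Delta E}P\right]\right\} \tag{5.100} $$

Note that the term in the curly brackets is of the form

$$ \{\dots\} = (\ln x - \ln y)(x - y) \tag{5.101} $$

But if ln x > ln y, then x > y, so

$$ (\ln x - \ln y)(x - y) \ge 0 \tag{5.102} $$

where the equality only applies for x = y.

Figure 5.11: ln x versus x, monotonically increasing.

Hence, since D > 0 in Eq. 5.100 we have

$$ \frac{dF}{dt} \le 0 \tag{5.103} $$

which is the H theorem for the canonical ensemble. The equality holds if

$$ P'e^{-\frac{1}{2}\Delta E} = Pe^{\frac{1}{2}\Delta E} $$

i.e., for

$$ P \propto e^{-E} \tag{5.104} $$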
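The H theorem can be watched in action on a small example. The sketch below steps the Master equation 5.93 forward in time for a handful of states with D_{s,s′} = 1 for every pair (an illustrative choice), and records F from Eq. 5.89 at each step; F should never increase, and P should settle to e^{−E}/Z. The explicit Euler stepping, the function name and the example energies are assumptions made for this illustration.

```python
import math

def master_equation_free_energy(energies, probs, dt, n_steps):
    """Euler steps of Eq. 5.93 with D = 1 for all pairs (T = 1 units).

    Returns the final probabilities and the list of F values (Eq. 5.89)
    recorded before each step. All initial probabilities must be > 0.
    """
    n = len(energies)
    P = list(probs)
    history = []
    for _ in range(n_steps):
        F = sum(P[s] * (energies[s] + math.log(P[s])) for s in range(n))
        history.append(F)
        dP = [0.0] * n
        for s in range(n):
            for sp in range(n):
                dE = energies[s] - energies[sp]        # Eq. 5.94
                dP[s] += math.exp(-0.5 * dE) * P[sp] - math.exp(0.5 * dE) * P[s]
        P = [P[s] + dt * dP[s] for s in range(n)]
    return P, history
```

For instance, energies `[0.0, 1.0, 2.0]` with initial probabilities `[0.1, 0.2, 0.7]`, `dt = 0.01` and 2000 steps relax to the Boltzmann weights, with F decreasing toward −ln Z.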

5.6 Appendix 3: Derivation of Master Equation

Write down a reasonable starting point for the dynamics of the probability P of being in a state s ≡ {M_i}, where M_i is, say, an order parameter, and i denotes lattice positions.

As time goes on, the probability of being in state s increases because one makes transitions into this state from other states like s′ with probability W(s, s′). It can be handy to read this as W(s, s′) = W(s ← s′). Anyway,

$$ W(s,s')P(s') $$

are contributions increasing P(s).

Figure 5.12: Transition from S′ to S, with rate W(S, S′).

However, P(s) decreases because one makes transitions out of this state into states s′ with probability W(s′, s) = W(s′ ← s). Thus, W(s′, s)P(s) are contributions decreasing P(s).

The Master equation is therefore

$$ \frac{\partial P(s)}{\partial t} = \sum_{s'}\Big[\underbrace{W(s,s')P(s')}_{s'\to s} - \underbrace{W(s',s)P(s)}_{s\to s'}\Big] $$

which evidently breaks time reversal. Note that P(t) only depends on states infinitesimally close in time, P(t − dt). Thus, the Master equation describes a Markov process.

Figure 5.13: Transitions between S and S′, with rates W(S, S′) and W(S′, S).

5.7 Mori–Zwanzig Formalism

The fundamental equations of motion for particles of mass m interacting via a potential V are

$$ \frac{\partial r_i}{\partial t} = \frac{1}{m}\rho_i $$

and

$$ \frac{\partial\rho_i}{\partial t} = -\frac{\partial}{\partial r_i}\sum_{j=1}^{N}V(|r_i - r_j|) \tag{5.105} $$

in the classical limit, where i = 1, 2, ..., N are the N particles, with positions r_i and momenta ρ_i. A run–of–the–mill potential has the form shown in Fig. 5.14 where N ∼ 10^23. Hence there are 10^23 coupled equations of motion with 10^23 initial conditions.

Figure 3.7: Gaussian ρ(x) (width exaggerated), centered on ⟨x⟩ = O(1) with width O(1/N^{1/2})

Which is to say again that fluctuations are small, and average values are well defined for a system of many (N → ∞) independent parts.

Figure 3.8: Gaussian ρ(x) (width (sort of) to scale), centered on ⟨x⟩ = O(1) with width O(1/N^{1/2})

3.3 Correlations in Space

The results so far apply to a large system, and perhaps it is not surprising that the global fluctuations in a large system are small. These results for the global fluctuations have implications for the correlations of quantities, though. Say the intensive variable x has different values at points r in space. (Precisely, let dr, the volume element, be microscopically large, but macroscopically small.) Consider the correlation function

$$ \langle\Delta x(r)\,\Delta x(r')\rangle \equiv C(r,r') \tag{3.34} $$

We can say a few things about C(r, r′) from general considerations.

1. If space is translationally invariant, then the origin of the coordinate system for r and r′ is arbitrary. It can be placed at point r or r′. The physical length of interest is (r − r′) or (r′ − r). Hence

$$ C(r,r') = C(r - r') = C(r' - r) \tag{3.35} $$

16 CHAPTER 3. INDEPENDENCE OF PARTS

Figure 3.9: Translational invariance (only r − r′ is important)

and, of course,

$$ \langle\Delta x(r)\,\Delta x(r')\rangle = \langle\Delta x(r - r')\,\Delta x(0)\rangle \tag{3.36} $$

etc.

2. If space is isotropic, then the direction of ∆x(r) from ∆x(r′) is unimportant, and only the magnitude of the distance |r − r′| is physically relevant. Hence

$$ C(r,r') = C(|r - r'|) \tag{3.37} $$

So what was a function of 6 numbers (r, r′) is now a function of only 1, |r − r′|.

Figure 3.10: Isotropy (only |r − r′| is important)

3. Usually correlations fall off if points are arbitrarily far apart. That is,

$$ \langle\Delta x(r\to\infty)\,\Delta x(0)\rangle = \underbrace{\langle\Delta x(r\to\infty)\rangle}_{=\,0}\,\underbrace{\langle\Delta x(0)\rangle}_{=\,0} = 0 $$

or

$$ C(r\to\infty) = 0 \tag{3.38} $$

The scale of the correlation is set by the correlation length.

Now we know

$$ C(|r - r'|) = \langle\Delta x(r)\,\Delta x(r')\rangle \tag{3.39} $$

subject to these conditions. A constraint on C(|r − r′|) is provided by our previous result

$$ \langle(\Delta x)^2\rangle = O\!\left(\frac{1}{N}\right) $$

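for a global fluctuation. Clearly,

$$ \Delta x = \frac{\int dr\,\Delta x(r)}{\int dr} = \frac{1}{V}\int dr\,\Delta x(r) \tag{3.40} $$

Figure 3.11: Typical correlation function C(r) versus r, decaying over a distance O(ξ)

So we have

$$ \frac{1}{V^2}\int dr\int dr'\,\langle\Delta x(r)\,\Delta x(r')\rangle = \langle(\Delta x)^2\rangle = O\!\left(\frac{1}{N}\right) \tag{3.41} $$

or,

$$ \int dr\int dr'\,C(r - r') = O\!\left(\frac{V^2}{N}\right) $$

Let R = r − r′, R′ = (1/2)(r + r′). The Jacobian of the transformation is unity, and we obtain

$$ \int dR'\int dR\,C(R) = O\!\left(\frac{V^2}{N}\right) = V\int dR\,C(R) \tag{3.42} $$

So letting R → r,

$$ \int dr\,C(r) = O\!\left(\frac{V}{N}\right) $$

But N = V/ξ^3, or in d dimensions, N = L^d/ξ^d, so

$$ \int dr\,C(r) = O(\xi^3) $$

or

$$ \int dr\,\langle\Delta x(r)\,\Delta x(0)\rangle = O(\xi^3) \tag{3.43} $$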

for a system of N → ∞ independent parts. This provides a constraint on correlations. It is a neat result in Fourier space. Let

$$ C(k) \equiv \int dr\,e^{ik\cdot r}\,C(r) $$

and inversely

$$ C(r) = \int\frac{dk}{(2\pi)^d}\,e^{-ik\cdot r}\,C(k) \tag{3.44} $$

and,

$$ \langle|\Delta x(k)|^2\rangle \equiv \int dr\,e^{ik\cdot r}\,\langle\Delta x(r)\,\Delta x(0)\rangle $$

Hence the constraint of Eq. 3.43 is

$$ \lim_{k\to 0}C(k) = O(\xi^3) $$

or

$$ \lim_{k\to 0}\langle|\Delta x(k)|^2\rangle = O(\xi^3) \tag{3.45} $$

Many functions satisfy the constraint of Eq. 3.43 (see Fig. 3.11). It turns out that usually,

$$ \langle\Delta x(r)\,\Delta x(0)\rangle \sim e^{-r/\xi} \tag{3.46} $$

for large r, which satisfies Eq. 3.43. This is obtained from analyticity of (C(k))^−1 near k = 0. It can be argued,

$$ \frac{1}{C(k)} \approx (\text{const.}) + (\text{const.})\,k^2 + \dots $$

so that ⟨|∆x(k)|^2⟩ = const./(const. + k^2) near k = 0. Fourier transformation gives the result of Eq. 3.46 above.

(Note: In the previous notation with boxes, as in Fig. 3.4, we had

$$ \Delta X = \sum_i\Delta X_i $$

or

$$ \Delta x = \frac{1}{N}\sum_i\Delta X_i $$

This means

$$ \frac{1}{V}\int dr\,\Delta x(r) = \frac{1}{N}\sum_i\Delta X_i \tag{3.47} $$

This is satisfied by

$$ \Delta x(r) = \frac{V}{N}\sum_{i=1}^{N}\delta(r - r_i)\,\Delta X_i $$

where r_i is a vector pointing to box i, and ∆X_i = ∆X(r_i).)

Let me emphasize again that all these results follow from a system having N → ∞ independent parts. To recapitulate, for x = X/N, where X is extensive, N = V/ξ^3 is the number of independent parts, V is the volume, and ξ is the correlation length:

$$ \langle x\rangle = O(1) \tag{3.7} $$

$$ \langle(\Delta x)^2\rangle = O\!\left(\frac{1}{N}\right) \tag{3.15} $$

$$ \rho(x) \propto \exp\left(-\frac{(x - \langle x\rangle)^2}{2\langle(\Delta x)^2\rangle}\right) \tag{3.30} $$

$$ \int dr\,\langle\Delta x(r)\,\Delta x(0)\rangle = O(\xi^3) \tag{3.43} $$
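As a quick check that the exponential form of Eq. 3.46 is compatible with Eq. 3.43, one can do the integral explicitly in d = 3. The sketch assumes the prefactor of the exponential is of order one and that the exponential form holds for all r, which is only an idealization:

$$ \int d^3r\,e^{-r/\xi} = 4\pi\int_0^\infty r^2\,e^{-r/\xi}\,dr = 4\pi\cdot 2\xi^3 = 8\pi\xi^3 = O(\xi^3) $$

By contrast, a correlation function decaying as a power law, such as 1/r in d = 3, would make the same integral grow with the system size instead of staying O(ξ^3).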

The purpose of this course is to examine the cases where V/ξ^3 = O(1) and these results break down! It is worthwhile to give some toy examples now where, in particular, Eq. 3.43 breaks down.

72 CHAPTER 10. FLUCTUATIONS OF SURFACES

where n = 1 is the solid–on–solid model, and n = 2 is the discrete Gaussian model. With Eq. 10.9, the partition function is

$$ Z = \left(\prod_i\sum_{h_i}\right)\exp\left(-\frac{J}{k_BT}\sum_{i'=1}^{L}|h_{i'} - h_{i'+1}|^2\right) \tag{10.11} $$

This is for d = 2, but the generalization to d = 3 is easy. Note that

$$ Z = Z\!\left(\frac{k_BT}{J}, L\right) \tag{10.12} $$

One can straightforwardly solve Z numerically. Analytically it is a little tough. It turns out to be easier to solve for the continuum version of Eq. 10.11 which we shall consider next.
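As an illustration of what solving Z numerically can look like, the sketch below evaluates the sum over height profiles in two ways: by brute-force enumeration, and as the trace of a power of a transfer matrix. To make the sum finite it assumes heights restricted to the integers from −H to H and periodic boundary conditions in i; both are assumptions of this example, as are the function names. K stands for J/kBT, and n is the exponent (1 for solid–on–solid, 2 for discrete Gaussian).

```python
import itertools
import math

def z_brute_force(L, H, K, n):
    """Sum exp(-K * sum_i |h_i - h_{i+1}|**n) over all profiles, periodic in i."""
    heights = range(-H, H + 1)
    Z = 0.0
    for h in itertools.product(heights, repeat=L):
        E = sum(abs(h[i] - h[(i + 1) % L]) ** n for i in range(L))
        Z += math.exp(-K * E)
    return Z

def z_transfer_matrix(L, H, K, n):
    """The same sum written as Tr(T**L), with T[a][b] = exp(-K * |a - b|**n)."""
    size = 2 * H + 1
    T = [[math.exp(-K * abs(a - b) ** n) for b in range(size)]
         for a in range(size)]
    M = [[1.0 if i == j else 0.0 for j in range(size)] for i in range(size)]
    for _ in range(L):                      # M <- M @ T, repeated L times
        M = [[sum(M[i][k] * T[k][j] for k in range(size))
              for j in range(size)] for i in range(size)]
    return sum(M[i][i] for i in range(size))
```

The enumeration costs (2H + 1)^L terms and is only feasible for very small L, while the transfer-matrix form costs of order L (2H + 1)^3 operations, which is why it is the usual route for one-dimensional chains.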

10.2 Continuum Model of Surface

Instead of using a discrete mesh, let’s do everything in the continuum limit. Let h(x) be the height of the interface at any point x as shown below. This is a useful description if there are no overhangs or bubbles, which cannot be described by y = h(x). Of course, although it was not mentioned above, the “lattice model” (fig. 10.5) also could not have overhangs or bubbles.

Figure 10.7: The interface y = h(x) in the x–y plane.

Figure 10.8: Overhang and bubble. These give a multivalued h(x), as shown.

The states the system can have are any value of h(x) at any point x. The continuum analogue of Eq. 10.7 is

$$ \sum_{\{\text{states}\}} = \sum_{\{h_x\}} = \prod_x\int dh(x) \equiv \int\mathcal{D}h(\cdot) \tag{10.13} $$

where the last step just shows some common notation for $\prod_x\int dh(x)$. Things are a little subtle because x is a continuous variable, but we can work it through. One other weaselly point is that the sum over states is dimensionless, while $\prod_x\int dh(x)$ has dimensions of ($\prod_x L$). This constant factor of dimensionality does not affect our results, so we shall ignore it.

For the energy of a state, we shall simply make it proportional to the amount of area in a state h(x), as shown in fig. 10.7.

$$ E_{state}\{h(x)\} \propto (\text{Area of surface } y = h(x)) $$

or

$$ E_{state} = \sigma(L')^{d-1} \tag{10.14} $$

where (L′)^{d−1} is the area of the surface y = h(x), and σ is a positive constant. It is worth stopping for a second to realize that this is consistent with our definition of excess free energy ∆F = σL^{d−1}. In fact, it almost seems a little too consistent, being essentially equal to the excess free energy!

This blurring of the distinction between microscopic energies and macroscopic free energies is common in modern work. It means we are considering a “coarse–grained” or mesoscopic description. Consider a simple example

$$ e^{-F/k_BT} \equiv Z = \sum_{\{h\}}e^{-E_h/k_BT} $$

where h labels a state. Now say one has a large number of states which have the same energy E_h. Call these states, with the same E_h, H. Hence we can rewrite

$$ \sum_{\{h\}}e^{-E_h/k_BT} = \sum_{\{H\}}g_H\,e^{-E_H/k_BT} $$

where

$$ g_H = \#\text{ of states } h \text{ for state } H $$

Now let the local entropy be

$$ S_H = k_B\ln g_H $$

So we have

$$ e^{-F/k_BT} = \sum_{\{H\}}e^{-F_H/k_BT} $$

where F_H = E_H − TS_H. This example is particularly simple since we only partially summed states with the same energy. Nevertheless, the same logic applies if one does a partial sum over states which might have different energies. This long digression is essentially to rationalize the common notation (instead of Eq. 10.14) of

$$ E_{state} = F_{state} = \sigma(L')^{d-1} \tag{10.15} $$

Let us now calculate (L′)^{d−1} for a given surface y = h(x).

Figure 10.9: An element of the surface, of length $\sqrt{(dh)^2 + (dx)^2}$, with legs dh and dx along the y and x axes.

As shown above, each element (in d = 2) of the surface is

$$ dL' = \sqrt{(dh)^2 + (dx)^2} = dx\sqrt{1 + \left(\frac{dh}{dx}\right)^2} \tag{10.16} $$

In d dimensions one has

$$ d^{d-1}L' = d^{d-1}x\,\sqrt{1 + \left(\frac{\partial h}{\partial x}\right)^2} \tag{10.17} $$

Integrating over the entire surface gives

$$ (L')^{d-1} = \int d^{d-1}x\,\sqrt{1 + \left(\frac{\partial h}{\partial x}\right)^2} $$

so that

$$ E_{state} = \sigma\int d^{d-1}x\,\sqrt{1 + \left(\frac{\partial h}{\partial x}\right)^2} \tag{10.18} $$

Of course, we expect

$$ \left(\frac{\partial h}{\partial x}\right)^2 \ll 1 \tag{10.19} $$

(that is, that the surface is fairly flat), so expanding to lowest order gives

$$ E_{state} = \sigma L^{d-1} + \underbrace{\frac{\sigma}{2}\int d^{d-1}x\left(\frac{\partial h}{\partial x}\right)^2}_{\text{Extra surface area due to fluctuation}} \tag{10.20} $$

The partition function is therefore,

$$ Z = \sum_{\{\text{states}\}}e^{-E_{state}/k_BT} $$

or,

$$ Z = e^{-\frac{\sigma L^{d-1}}{k_BT}}\left(\prod_x\int dh(x)\right)\exp\left(-\frac{\sigma}{2k_BT}\int d^{d-1}x'\left(\frac{\partial h}{\partial x'}\right)^2\right) \tag{10.21} $$

This looks quite formidable now, but it is straightforward to evaluate. In particle physics it is called a free–field theory. Note the similarity to the discrete partition function of Eq. 10.11. The reason Eq. 10.21 is easy to evaluate is that it simply involves a large number of Gaussian integrals

$$ \int dh\,e^{-ah^2} $$

As we know, Gaussian integrals only have two important moments,

$$ \langle h(x)\rangle $$

and

$$ \langle h(x)h(x')\rangle $$

All properties can be determined from knowledge of these two moments! However, space is translationally invariant and isotropic in the x plane (the plane of the interface), so properties involving two points do not depend upon their exact positions, only the distance between them. That is

$$ \langle h(x)\rangle = \text{const.} $$

and

$$ \langle h(x_1)h(x_2)\rangle = G(|x_1 - x_2|) \tag{10.22} $$

Figure 10.10: x plane, showing two points x_1 and x_2 and their separation |x_1 − x_2|

For convenience, we can choose

$$ \langle h\rangle = 0 \tag{10.23} $$

then our only task is to evaluate the correlation function

$$ G(x) = \langle h(x)h(0)\rangle = \langle h(x + x')h(x')\rangle \tag{10.24} $$

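To get G(x), we use the partition function from Eq. 10.21, from which the probability of a state is

$$ \rho_{state} \propto \exp\left(-\frac{\sigma}{2k_BT}\int d^{d-1}x\left(\frac{\partial h}{\partial x}\right)^2\right) \tag{10.25} $$

The subtle thing here is the gradients, which mix the probability of h(x) with that of a very nearby h(x′). Eventually this means we will use Fourier transforms. To see this mixing, consider

$$ \begin{aligned} \int dx\left(\frac{\partial h}{\partial x}\right)^2 &= \int dx\left[\frac{\partial}{\partial x}\int dx'\,h(x')\,\delta(x - x')\right]^2 \\ &= \int dx\int dx'\int dx''\,h(x')h(x'')\left(\frac{\partial}{\partial x}\delta(x - x')\right)\cdot\left(\frac{\partial}{\partial x}\delta(x - x'')\right) \end{aligned} $$

using simple properties of the Dirac delta function. Now let

$$ x\to x'', \qquad x'\to x, \qquad x''\to x' $$

since they are just dummy indices, giving

$$ \begin{aligned} &= \int dx\int dx'\int dx''\,h(x)h(x')\left(\frac{\partial}{\partial x''}\delta(x'' - x)\right)\cdot\left(\frac{\partial}{\partial x''}\delta(x'' - x')\right) \\ &= \int dx\int dx'\int dx''\,h(x)h(x')\,\frac{\partial}{\partial x}\cdot\frac{\partial}{\partial x'}\left(\delta(x'' - x)\,\delta(x'' - x')\right) \\ &= \int dx\int dx'\,h(x)h(x')\,\frac{\partial}{\partial x}\cdot\frac{\partial}{\partial x'}\delta(x - x') \\ &= \int dx\int dx'\,h(x)h(x')\left[-\frac{\partial^2}{\partial x^2}\delta(x - x')\right] \end{aligned} $$

(you can do this by parts integration, and there will be terms involving surfaces at ∞, which all vanish.) Hence,

$$ \int d^{d-1}x\left(\frac{\partial h}{\partial x}\right)^2 = \int d^{d-1}x\int d^{d-1}x'\,h(x)\,M(x - x')\,h(x') \tag{10.26} $$

where the interaction matrix is

$$ M(x) = -\frac{\partial^2}{\partial x^2}\delta(x) \tag{10.27} $$

which has the form shown in fig. 10.11. This “Mexican hat” interaction matrix appears in many different contexts. It causes h(x) to interact with h(x′).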
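A lattice version makes Eq. 10.26 concrete. On a one-dimensional periodic lattice with unit spacing (an assumption of this sketch, along with the function names), the gradient energy is a sum of squared nearest-neighbour differences, and the discrete analogue of M(x) = −∂^2/∂x^2 δ(x) is a matrix with 2 on the diagonal and −1 on the neighbouring off-diagonals: a positive center with negative flanks.

```python
def gradient_energy(h):
    """Sum of (h[i+1] - h[i])**2 over a periodic lattice with unit spacing."""
    n = len(h)
    return sum((h[(i + 1) % n] - h[i]) ** 2 for i in range(n))

def interaction_matrix(n):
    """Discrete analogue of M(x): minus the lattice second derivative of delta."""
    M = [[0.0] * n for _ in range(n)]
    for i in range(n):
        M[i][i] += 2.0                  # positive center
        M[i][(i + 1) % n] -= 1.0        # negative flank on the right
        M[i][(i - 1) % n] -= 1.0        # negative flank on the left
    return M

def quadratic_form(h, M):
    """Double sum h[i] * M[i][j] * h[j], the lattice version of Eq. 10.26."""
    n = len(h)
    return sum(h[i] * M[i][j] * h[j] for i in range(n) for j in range(n))
```

For any height profile, `gradient_energy(h)` and `quadratic_form(h, interaction_matrix(len(h)))` agree, which is the statement that the gradient term couples each h only to its near neighbours.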

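In Fourier space, gradients ∂/∂x become numbers, or rather wave numbers k. We will use this to simplify our project. It is convenient to introduce continuous and discrete Fourier transforms. One or another is more convenient at a given time. We have,

$$ h(x) = \begin{cases} \displaystyle\int\frac{d^{d-1}k}{(2\pi)^{d-1}}\,e^{ik\cdot x}\,h(k) & \text{(Continuous)} \\[2ex] \displaystyle\frac{1}{L^{d-1}}\sum_k e^{ik\cdot x}\,h_k & \text{(Discrete)} \end{cases} \tag{10.28} $$

where

$$ h(k) = \int d^{d-1}x\,e^{-ik\cdot x}\,h(x) = h_k \tag{10.29} $$

Figure 10.11: M(x) = −∂^2/∂x^2 δ(x) versus x

Closure requires

$$ \delta(x) = \begin{cases} \displaystyle\int\frac{d^{d-1}k}{(2\pi)^{d-1}}\,e^{ik\cdot x} \\[2ex] \displaystyle\frac{1}{L^{d-1}}\sum_k e^{ik\cdot x} \end{cases} \tag{10.30} $$

and

$$ \delta(k) = \int\frac{d^{d-1}x}{(2\pi)^{d-1}}\,e^{ik\cdot x} $$

but

$$ \delta_{k,0} = \frac{1}{L^{d-1}}\int d^{d-1}x\,e^{ik\cdot x} \tag{10.31} $$

is the Kronecker delta

$$ \delta_{k,0} = \begin{cases} 1, & \text{if } k = 0 \\ 0, & \text{otherwise} \end{cases} \tag{10.32} $$

not the Dirac delta function. We have used the density of states in k space as

$$ \left(\frac{L}{2\pi}\right)^{d-1} $$

so that the discrete → continuum limit is

$$ \sum_k \to \left(\frac{L}{2\pi}\right)^{d-1}\int d^{d-1}k $$

and

$$ \delta_{k,0} \to \left(\frac{2\pi}{L}\right)^{d-1}\delta(k) \tag{10.33} $$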