Introduction to Machine Learning · Single State: K-armed Bandit

INTRODUCTION TO MACHINE LEARNING, 3RD EDITION

ETHEM ALPAYDIN

© The MIT Press, 2014

http://www.cmpe.boun.edu.tr/~ethem/i2ml3e

Lecture Slides for

CHAPTER 18: REINFORCEMENT LEARNING

Introduction

Game-playing: Sequence of moves to win a game

Robot in a maze: Sequence of actions to find a goal

Agent has a state in an environment, takes an action, sometimes receives a reward, and the state changes

Credit assignment

Learn a policy

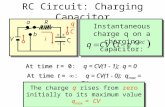

Single State: K-armed Bandit

Among K levers, choose the one that pays best

$Q(a)$: value of action $a$

Reward is $r_a$

Set $Q(a) = r_a$

Choose $a^*$ if $Q(a^*) = \max_a Q(a)$

Rewards stochastic (keep an expected reward):

$$Q_{t+1}(a) \leftarrow Q_t(a) + \eta \left[ r_{t+1}(a) - Q_t(a) \right]$$
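For stochastic rewards, the running estimate of each lever's value is nudged toward every new reward. The sketch below illustrates that incremental update on a simulated bandit. The Gaussian reward model, the ε-greedy lever choice, and the names `update_q` and `run_bandit` are assumptions made for illustration, not part of the slides.

```python
import random


def update_q(q, action, reward, eta=0.1):
    """Move Q(action) a fraction eta toward the observed reward."""
    q[action] += eta * (reward - q[action])
    return q


def run_bandit(true_means, steps=5000, eta=0.1, epsilon=0.1, seed=0):
    """Simulate a K-armed bandit (assumed: Gaussian rewards, unit variance)."""
    rng = random.Random(seed)
    q = [0.0] * len(true_means)
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: pick any lever uniformly at random.
            a = rng.randrange(len(q))
        else:
            # Exploit: pick the lever with the highest current estimate.
            a = max(range(len(q)), key=lambda i: q[i])
        r = rng.gauss(true_means[a], 1.0)
        update_q(q, a, r, eta)
    return q
```

With a constant `eta`, recent rewards weigh more than old ones, so the estimate keeps tracking the reward even if its mean drifts.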

Elements of RL (Markov Decision Processes)

$s_t$: State of agent at time $t$

$a_t$: Action taken at time $t$

In $s_t$, action $a_t$ is taken, clock ticks, reward $r_{t+1}$ is received, and state changes to $s_{t+1}$

Next state prob: $P(s_{t+1} \mid s_t, a_t)$

Reward prob: $p(r_{t+1} \mid s_t, a_t)$

Initial state(s), goal state(s)

Episode (trial) of actions from initial state to goal (Sutton and Barto, 1998; Kaelbling et al., 1996)

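Policy and Cumulative Reward

Policy, $\pi : S \to A$, $a_t = \pi(s_t)$

Value of a policy, $V^\pi(s_t)$

Finite-horizon:

$$V^\pi(s_t) = E\left[ r_{t+1} + r_{t+2} + \cdots + r_{t+T} \right] = E\left[ \sum_{i=1}^{T} r_{t+i} \right]$$

Infinite horizon:

$$V^\pi(s_t) = E\left[ r_{t+1} + \gamma r_{t+2} + \gamma^2 r_{t+3} + \cdots \right] = E\left[ \sum_{i=1}^{\infty} \gamma^{i-1} r_{t+i} \right]$$

$0 \le \gamma < 1$ is the discount rate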
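As a concrete reading of the cumulative reward, the following sketch computes the return of one observed reward sequence, where the reward received i steps ahead is weighted by the discount rate raised to the power i − 1. The function name `discounted_return` is hypothetical. Setting `gamma=1.0` gives the finite-horizon (undiscounted) sum.

```python
def discounted_return(rewards, gamma=0.9):
    """Return r_1 + gamma * r_2 + gamma**2 * r_3 + ... for a finite list."""
    total = 0.0
    # Accumulate from the last reward backwards: G = r + gamma * G_next.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total
```

The value of a policy is the expectation of this quantity over episodes, so averaging `discounted_return` over many sampled episodes would give a Monte Carlo estimate of it.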

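$$V^*(s_t) = \max_{\pi} V^\pi(s_t), \quad \forall s_t$$

$$\begin{aligned}
V^*(s_t) &= \max_{a_t} E\left[ \sum_{i=1}^{\infty} \gamma^{i-1} r_{t+i} \right] \\
&= \max_{a_t} E\left[ r_{t+1} + \gamma \sum_{i=1}^{\infty} \gamma^{i-1} r_{t+i+1} \right] \\
&= \max_{a_t} E\left[ r_{t+1} + \gamma V^*(s_{t+1}) \right]
\end{aligned}$$

Bellman's equation:

$$V^*(s_t) = \max_{a_t} \left( E[r_{t+1}] + \gamma \sum_{s_{t+1}} P(s_{t+1} \mid s_t, a_t) \, V^*(s_{t+1}) \right)$$

Value of $a_t$ in $s_t$:

$$V^*(s_t) = \max_{a_t} Q^*(s_t, a_t)$$

$$Q^*(s_t, a_t) = E[r_{t+1}] + \gamma \sum_{s_{t+1}} P(s_{t+1} \mid s_t, a_t) \max_{a_{t+1}} Q^*(s_{t+1}, a_{t+1})$$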

Model-Based Learning

Environment, $P(s_{t+1} \mid s_t, a_t)$, $p(r_{t+1} \mid s_t, a_t)$, known

There is no need for exploration

Can be solved using dynamic programming

Solve for

$$V^*(s_t) = \max_{a_t} \left( E[r_{t+1}] + \gamma \sum_{s_{t+1}} P(s_{t+1} \mid s_t, a_t) \, V^*(s_{t+1}) \right)$$

Optimal policy

$$\pi^*(s_t) = \arg\max_{a_t} \left( E[r_{t+1} \mid s_t, a_t] + \gamma \sum_{s_{t+1} \in S} P(s_{t+1} \mid s_t, a_t) \, V^*(s_{t+1}) \right)$$
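When the model is known, one standard dynamic programming approach is value iteration: repeatedly apply the Bellman backup to every state until the values stop changing, then read off the greedy policy. The sketch below assumes a small hand-made model. The dictionary layout `P[s][a] = [(prob, next_state, reward), ...]`, the three-state `CHAIN` example, and the function names are all assumptions for illustration.

```python
def value_iteration(P, gamma=0.9, tol=1e-8):
    """Iterate the Bellman backup until the largest change is below tol."""
    V = {s: 0.0 for s in P}
    while True:
        delta = 0.0
        for s in P:
            if not P[s]:  # terminal state: no actions, value stays 0
                continue
            # Expected reward plus discounted expected next-state value,
            # maximized over the actions available in s.
            best = max(
                sum(p * (r + gamma * V[s2]) for p, s2, r in outcomes)
                for outcomes in P[s].values()
            )
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            return V


def greedy_policy(P, V, gamma=0.9):
    """Pick, in each non-terminal state, the action with the best backup."""
    return {
        s: max(
            P[s],
            key=lambda a: sum(p * (r + gamma * V[s2]) for p, s2, r in P[s][a]),
        )
        for s in P
        if P[s]
    }


# Hypothetical deterministic chain: A -> B -> goal, reward 100 on reaching goal.
CHAIN = {
    "A": {"right": [(1.0, "B", 0.0)], "stay": [(1.0, "A", 0.0)]},
    "B": {"right": [(1.0, "goal", 100.0)], "left": [(1.0, "A", 0.0)]},
    "goal": {},
}
```

On `CHAIN` with `gamma=0.9`, state B is one step from the reward and state A is two steps away, so its value is the discounted value of B.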

Temporal Difference Learning

Environment, $P(s_{t+1} \mid s_t, a_t)$, $p(r_{t+1} \mid s_t, a_t)$, is not known; model-free learning

There is need for exploration to sample from $P(s_{t+1} \mid s_t, a_t)$ and $p(r_{t+1} \mid s_t, a_t)$

Use the reward received in the next time step to update the value of current state (action)

The temporal difference between the value of the current action and the value discounted from the next state

Exploration Strategies

ε-greedy: With probability ε, choose one action uniformly at random; with probability 1 − ε, choose the best action

Probabilistic:

$$P(a \mid s) = \frac{\exp Q(s, a)}{\sum_{b=1}^{\mathcal{A}} \exp Q(s, b)}$$

Move smoothly from exploration to exploitation.

Decrease ε

Annealing

$$P(a \mid s) = \frac{\exp\left[ Q(s, a) / T \right]}{\sum_{b=1}^{\mathcal{A}} \exp\left[ Q(s, b) / T \right]}$$
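The two exploration strategies can be sketched as follows: ε-greedy picks a random action with probability ε, and the probabilistic (softmax) rule turns the Q values of a state into action probabilities, with a temperature T that is lowered over time to move from exploration to exploitation. The function names and the list-of-Q-values interface are assumptions for illustration.

```python
import math
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """With probability epsilon pick uniformly at random, else pick the best."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])


def softmax_probs(q_values, temperature=1.0):
    """Action probabilities proportional to exp(Q / T)."""
    # Subtracting the maximum leaves the probabilities unchanged
    # and avoids overflow in exp for large Q / T.
    m = max(q_values)
    weights = [math.exp((q - m) / temperature) for q in q_values]
    total = sum(weights)
    return [w / total for w in weights]
```

A large `temperature` makes the probabilities nearly uniform (exploration). A small one concentrates almost all probability on the best action (exploitation).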

Deterministic Rewards and Actions

We had:

$$Q^*(s_t, a_t) = E[r_{t+1}] + \gamma \sum_{s_{t+1}} P(s_{t+1} \mid s_t, a_t) \max_{a_{t+1}} Q^*(s_{t+1}, a_{t+1})$$

Deterministic: single possible reward and next state

$$Q(s_t, a_t) = r_{t+1} + \gamma \max_{a_{t+1}} Q(s_{t+1}, a_{t+1})$$

used as an update rule (backup)

$$\hat{Q}(s_t, a_t) \leftarrow r_{t+1} + \gamma \max_{a_{t+1}} \hat{Q}(s_{t+1}, a_{t+1})$$

Starting at zero, Q values increase, never decrease

Consider the value of action marked by ‘*’ (γ = 0.9):

If path A is seen first, Q(*) = 0.9 × max(0, 81) = 72.9

Then B is seen, Q(*) = 0.9 × max(100, 81) = 90

Or,

If path B is seen first, Q(*) = 0.9 × max(100, 0) = 90

Then A is seen, Q(*) = 0.9 × max(100, 81) = 90

Q values increase but never decrease
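The arithmetic of this example can be checked with a short sketch of the deterministic backup. It assumes the starred action itself has an immediate reward of zero, which is what the products 0.9 × max(...) imply. The name `backup` is hypothetical.

```python
GAMMA = 0.9


def backup(reward, next_q_values, gamma=GAMMA):
    """Deterministic backup: reward plus discounted best next Q value."""
    return reward + gamma * max(next_q_values)


# Path A seen first: only the branch worth 81 is known at the next state.
q_after_a = backup(0.0, [0.0, 81.0])
# Then path B is seen: the branch worth 100 is now known as well.
q_after_a_then_b = backup(0.0, [100.0, 81.0])
# Path B seen first, then A: the estimate is already at its final value.
q_after_b = backup(0.0, [100.0, 0.0])
```

Whichever path is seen first, the estimate only moves upward and settles at the same value, because the maximum over next actions can only grow as more branches become known.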

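Nondeterministic Rewards and Actions

When next states and rewards are nondeterministic (there is an opponent or randomness in the environment), we keep averages (expected values) instead of direct assignments

$$Q(s_t, a_t) = E[r_{t+1}] + \gamma \sum_{s_{t+1}} P(s_{t+1} \mid s_t, a_t) \max_{a_{t+1}} Q(s_{t+1}, a_{t+1})$$

Q-learning (Watkins and Dayan, 1992):

$$\hat{Q}(s_t, a_t) \leftarrow \hat{Q}(s_t, a_t) + \eta \left( r_{t+1} + \gamma \max_{a_{t+1}} \hat{Q}(s_{t+1}, a_{t+1}) - \hat{Q}(s_t, a_t) \right)$$

The term $r_{t+1} + \gamma \max_{a_{t+1}} \hat{Q}(s_{t+1}, a_{t+1})$ is the backup

Off-policy vs on-policy (Sarsa)

Learning V (TD-learning: Sutton, 1988)

$$V(s_t) \leftarrow V(s_t) + \eta \left( r_{t+1} + \gamma V(s_{t+1}) - V(s_t) \right)$$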
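A minimal tabular sketch of the two updates named here: the Q-learning rule moves the current estimate a fraction of the way toward the reward plus the discounted best next Q value, and the TD rule does the same for state values. The table is assumed to be a dictionary that defaults to zero, and the function names are hypothetical.

```python
from collections import defaultdict


def q_learning_update(Q, s, a, r, s_next, actions, eta=0.1, gamma=0.9):
    """Off-policy update: back up from the best action in the next state."""
    best_next = max(Q[(s_next, b)] for b in actions)
    Q[(s, a)] += eta * (r + gamma * best_next - Q[(s, a)])


def td0_update(V, s, r, s_next, eta=0.1, gamma=0.9):
    """TD update for state values."""
    V[s] += eta * (r + gamma * V[s_next] - V[s])


# Usage: tables that return 0.0 for entries not seen yet.
Q = defaultdict(float)
V = defaultdict(float)
```

Sarsa would differ only in the backup: it uses the Q value of the action actually chosen in the next state rather than the maximum.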

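Eligibility Traces

Keep a record of previously visited states (actions)

$$e_t(s, a) = \begin{cases} 1 & \text{if } s = s_t \text{ and } a = a_t, \\ \gamma \lambda \, e_{t-1}(s, a) & \text{otherwise} \end{cases}$$

$$\delta_t = r_{t+1} + \gamma Q(s_{t+1}, a_{t+1}) - Q(s_t, a_t)$$

$$Q(s, a) \leftarrow Q(s, a) + \eta \, \delta_t \, e_t(s, a), \quad \forall s, a$$

Example of an eligibility trace for a value. Visits are marked by an asterisk.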
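One step of an on-policy update with eligibility traces can be sketched as below: every stored trace decays by the product of the discount rate and a trace-decay parameter, the pair just visited gets trace 1, and all pairs are then updated in proportion to their trace. The dictionary-based tables and the name `sarsa_lambda_step` are assumptions for illustration.

```python
from collections import defaultdict


def sarsa_lambda_step(Q, e, s, a, r, s_next, a_next,
                      eta=0.1, gamma=0.9, lam=0.8):
    """Apply one trace-weighted temporal difference update in place."""
    # Decay the traces of all previously visited pairs.
    for key in list(e):
        e[key] *= gamma * lam
    # The pair visited now is fully eligible.
    e[(s, a)] = 1.0
    # Temporal difference error for the current transition.
    delta = r + gamma * Q[(s_next, a_next)] - Q[(s, a)]
    # Credit every pair in proportion to its eligibility.
    for key, trace in e.items():
        Q[key] += eta * delta * trace
    return delta


# Usage: Q defaults to 0.0; traces start empty at the beginning of an episode.
Q = defaultdict(float)
e = {}
```

A reward received now therefore also updates pairs visited a few steps earlier, with a weight that shrinks the further back they are.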

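Generalization

Tabular: $Q(s, a)$ or $V(s)$ stored in a table

Regressor: Use a learner to estimate $Q(s, a)$ or $V(s)$

$$E^t(\boldsymbol{\theta}) = \left[ r_{t+1} + \gamma Q(s_{t+1}, a_{t+1}) - Q(s_t, a_t) \right]^2$$

$$\Delta \boldsymbol{\theta} = \eta \left[ r_{t+1} + \gamma Q(s_{t+1}, a_{t+1}) - Q(s_t, a_t) \right] \nabla_{\boldsymbol{\theta}} Q(s_t, a_t)$$

Eligibility

$$\delta_t = r_{t+1} + \gamma Q(s_{t+1}, a_{t+1}) - Q(s_t, a_t)$$

$$\Delta \boldsymbol{\theta} = \eta \, \delta_t \, \mathbf{e}_t$$

$$\mathbf{e}_t = \gamma \lambda \, \mathbf{e}_{t-1} + \nabla_{\boldsymbol{\theta}} Q(s_t, a_t), \quad \text{with } \mathbf{e}_0 \text{ all zeros}$$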
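For a regressor, the simplest case to sketch is a linear model, where Q is the dot product of a parameter vector with a feature vector of the state-action pair, so the gradient with respect to the parameters is just the feature vector. The linear form, the feature lists `phi` and `phi_next`, and the function names are assumptions for illustration; the slides do not fix a particular learner.

```python
def linear_q(theta, features):
    """Q estimate of a linear model: dot product of parameters and features."""
    return sum(t * x for t, x in zip(theta, features))


def td_gradient_step(theta, phi, r, phi_next, eta=0.1, gamma=0.9):
    """Gradient step on the squared temporal difference error."""
    delta = r + gamma * linear_q(theta, phi_next) - linear_q(theta, phi)
    # For a linear model the gradient of Q with respect to theta is phi.
    return [t + eta * delta * x for t, x in zip(theta, phi)]


def td_trace_step(theta, trace, phi, r, phi_next,
                  eta=0.1, gamma=0.9, lam=0.8):
    """Same update with an eligibility vector accumulating past gradients."""
    trace = [gamma * lam * e + x for e, x in zip(trace, phi)]
    delta = r + gamma * linear_q(theta, phi_next) - linear_q(theta, phi)
    theta = [t + eta * delta * e for t, e in zip(theta, trace)]
    return theta, trace
```

Both functions return new lists rather than modifying their arguments, and the trace should start as a list of zeros at the beginning of an episode.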

* Partially Observable States

The agent does not know its state but receives an observation $p(o_{t+1} \mid s_t, a_t)$ which can be used to infer a belief about states

Partially observable MDP

* The Tiger Problem

Two doors, behind one of which there is a tiger

$p$: prob that tiger is behind the left door

$R(a_L) = -100p + 80(1-p)$, $R(a_R) = 90p - 100(1-p)$

We can sense with a reward of $R(a_S) = -1$

We have unreliable sensors

If we sense $o_L$, our belief in tiger’s position changes

$$p' = P(z_L \mid o_L) = \frac{P(o_L \mid z_L) P(z_L)}{P(o_L)} = \frac{0.7p}{0.7p + 0.3(1-p)}$$

$$\begin{aligned}
R(a_L \mid o_L) &= r(a_L, z_L) P(z_L \mid o_L) + r(a_L, z_R) P(z_R \mid o_L) \\
&= -100p' + 80(1-p') \\
&= -100 \frac{0.7p}{P(o_L)} + 80 \frac{0.3(1-p)}{P(o_L)}
\end{aligned}$$

$$\begin{aligned}
R(a_R \mid o_L) &= r(a_R, z_L) P(z_L \mid o_L) + r(a_R, z_R) P(z_R \mid o_L) \\
&= 90p' - 100(1-p') \\
&= 90 \frac{0.7p}{P(o_L)} - 100 \frac{0.3(1-p)}{P(o_L)}
\end{aligned}$$

$$R(a_S \mid o_L) = -1$$
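The belief update after sensing is an application of Bayes' rule, and the expected reward of each action is then evaluated at the updated belief. A minimal sketch, using the sensor probabilities 0.7 and 0.3 and the rewards from the slides; the function names and the dictionary keys are hypothetical.

```python
def posterior_left(p, p_obs_given_left=0.7, p_obs_given_right=0.3):
    """Belief that the tiger is on the left after observing o_L."""
    evidence = p_obs_given_left * p + p_obs_given_right * (1 - p)
    return p_obs_given_left * p / evidence


def expected_rewards(p):
    """Expected reward of each action under belief p (tiger on the left)."""
    return {
        "open_left": -100 * p + 80 * (1 - p),
        "open_right": 90 * p - 100 * (1 - p),
        "sense": -1.0,
    }


# Usage: start undecided, sense o_L once, then evaluate the actions.
belief = posterior_left(0.5)
rewards = expected_rewards(belief)
```

Starting from an undecided belief, one observation of the tiger on the left makes opening the left door clearly worse and opening the right door attractive.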

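$$\begin{aligned}
V' &= \sum_j \left[ \max_i R(a_i \mid o_j) \right] P(o_j) \\
&= \max\left( R(a_L \mid o_L), R(a_R \mid o_L), R(a_S \mid o_L) \right) P(o_L) + \max\left( R(a_L \mid o_R), R(a_R \mid o_R), R(a_S \mid o_R) \right) P(o_R) \\
&= \max \begin{cases} -100p + 80(1-p) \\ -43p - 46(1-p) \\ 33p + 26(1-p) \\ 90p - 100(1-p) \end{cases}
\end{aligned}$$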
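The four linear pieces can be checked numerically by enumerating both observations and, for each, taking the better of the two door-opening actions. The sketch assumes a symmetric sensor (correct with probability 0.7 for either tiger position), which is what the coefficients 43, 46, 33, and 26 imply. Like the four-line expression, it leaves out the option of sensing again. The function names are hypothetical.

```python
def value_after_sensing(p):
    """Expected value of sensing once, then opening the better door."""
    reward = {"L": (-100.0, 80.0), "R": (90.0, -100.0)}  # r(a, z_L), r(a, z_R)
    likelihood = {"oL": (0.7, 0.3), "oR": (0.3, 0.7)}    # P(o | z_L), P(o | z_R)
    total = 0.0
    for p_o_left, p_o_right in likelihood.values():
        joint_left = p_o_left * p          # P(o, z_L)
        joint_right = p_o_right * (1 - p)  # P(o, z_R)
        # R(a | o) * P(o) for each door, keeping the better one.
        total += max(
            r_left * joint_left + r_right * joint_right
            for r_left, r_right in reward.values()
        )
    return total


def value_closed_form(p):
    """Maximum of the four linear pieces in p."""
    q = 1 - p
    return max(
        -100 * p + 80 * q,
        -43 * p - 46 * q,
        33 * p + 26 * q,
        90 * p - 100 * q,
    )
```

The two functions agree for any belief between 0 and 1, since each linear piece corresponds to one way of assigning a door to each observation.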

Let us say the tiger can move from one room to the other with prob 0.8

$$p' = 0.2p + 0.8(1-p)$$

$$V' = \max \begin{cases} -100p' + 80(1-p') \\ 33p' + 26(1-p') \\ 90p' - 100(1-p') \end{cases}$$
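When the tiger may change rooms, the belief is first pushed through the movement model and the value is then evaluated at the new belief. A small sketch of that two-step computation, with hypothetical function names and the three linear pieces taken from the slide:

```python
def belief_after_move(p, move_prob=0.8):
    """Belief that the tiger is on the left after it may have switched rooms."""
    return (1 - move_prob) * p + move_prob * (1 - p)


def value_with_moving_tiger(p, move_prob=0.8):
    """Maximum of the three linear pieces, evaluated at the moved belief."""
    p_new = belief_after_move(p, move_prob)
    q_new = 1 - p_new
    return max(
        -100 * p_new + 80 * q_new,
        33 * p_new + 26 * q_new,
        90 * p_new - 100 * q_new,
    )
```

A belief of 0.5 is unchanged by the move, while a confident belief is pulled most of the way toward the opposite door.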
