
Image-Based Question Answering with Visual-Semantic Embedding

Mengye Ren

Engineering Science, ECE Option, University of Toronto

Supervisor: Prof. Richard S. Zemel

March 31, 2015

Mengye Ren ImageQA March 31, 2015 1 / 27

Interactive output with natural language

Recent progress in object recognition and detection using deep learning.

Can we interpret images in more interactive ways?

Caption generation: describe an image with a sentence.

Question answering on the image.

Figure: Object recognition example [Krizhevsky et al., 2012]

Figure: Caption generation example [Kiros et al., 2014]

Question answering on images

See the world and interact with people (AI dream).

Retrieve and describe the relevant part of the image.

Q: What is on the table?
A: Books.

Mengye Ren ImageQA March 31, 2015 5 / 27

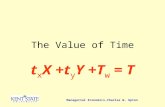

Problem formulation

The input is an image with a question (a sequence of words).

The output is an answer (a sequence of words).

Find a function that maps the input to the correct output.

We want to use a joint embedding vector space to represent both the image and the language.
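Because almost every answer in the datasets used here is a single word, one way to make this mapping concrete (an assumption that matches the softmax output of the models below, not the general sequence-output case) is to treat it as classification over a fixed answer vocabulary A, where I is the image and q1, …, qT are the question words:

```latex
\hat{a} = \arg\max_{a \in \mathcal{A}} \; p\left(a \mid I, q_1, \ldots, q_T\right)
```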


Long short-term memory (LSTM)

Recurrent neural networks (RNNs): “feedback” connections from the output back to the input.

Aim to capture sequential patterns.

Gradients tend to explode or vanish when training traditional RNNs.

LSTM [Hochreiter et al., 1997]: memory cells propagate linearly, with non-linear activations only at the input and output.

Training is much faster and more stable.

Recent success in natural language processing (NLP).

Figure: RNN (left) and long short-term memory (right), with input Xt, output Yt, previous output Yt−1, memory cell Ct−1 → Ct, and gates It, Ft, Ot.
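A minimal NumPy sketch of one LSTM time step, to make the “linear memory cell, non-linear input and output” point concrete. It uses the common variant with a forget gate (the Ft in the figure); the stacked weight layout, the sizes, and the helper name `lstm_step` are assumptions for illustration, not the implementation used in these experiments.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, y_prev, c_prev, W, b):
    """One LSTM time step (hypothetical helper).

    x_t:    input X_t, shape (d_in,)
    y_prev: previous output Y_{t-1}, shape (d_h,)
    c_prev: previous memory cell C_{t-1}, shape (d_h,)
    W, b:   stacked weights (4*d_h, d_in + d_h) and biases (4*d_h,)
            for the gates I, F, O and the candidate input
    """
    d_h = c_prev.shape[0]
    z = W @ np.concatenate([x_t, y_prev]) + b
    i_t = sigmoid(z[:d_h])               # input gate I_t
    f_t = sigmoid(z[d_h:2 * d_h])        # forget gate F_t
    o_t = sigmoid(z[2 * d_h:3 * d_h])    # output gate O_t
    g_t = np.tanh(z[3 * d_h:])           # squashed candidate input
    c_t = f_t * c_prev + i_t * g_t       # memory cell: gated linear update, no squashing
    y_t = o_t * np.tanh(c_t)             # squashing only on the way out
    return y_t, c_t

rng = np.random.default_rng(0)
d_in, d_h = 5, 3
W = rng.normal(scale=0.1, size=(4 * d_h, d_in + d_h))
b = np.zeros(4 * d_h)
y, c = np.zeros(d_h), np.zeros(d_h)
for x_t in rng.normal(size=(4, d_in)):   # a sequence of four inputs
    y, c = lstm_step(x_t, y, c, W, b)
print(y, c)
```

Because C_{t−1} reaches C_t only through an element-wise multiply by the forget gate, gradients along the cell path are not repeatedly squashed, which is the intuition behind the more stable training.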


Visual semantic embedding

Images can be represented in a feature space, and words can be represented as vectors (word embeddings).

“king” - “man” + “woman” ∼ “queen” [Mikolov et al., 2013]

Goal: project image and words into a common space.

Figure: DeViSE: A Deep Visual-Semantic Embedding Model [Frome et al., 2013]
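The “king” − “man” + “woman” ∼ “queen” analogy can be illustrated with vector arithmetic plus a nearest-neighbour search by cosine similarity. The three-dimensional vectors below are made up by hand so the arithmetic is easy to follow (an assumption); real embeddings such as those of [Mikolov et al., 2013] are learned from text and have hundreds of dimensions.

```python
import math

# Hand-made toy embeddings (assumption: not learned, chosen for illustration).
# The dimensions loosely encode: royalty, maleness, femaleness.
emb = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.3, 0.3],
}

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def analogy(a, b, c):
    """Return the word closest to emb[a] - emb[b] + emb[c], excluding the query words."""
    target = [x - y + z for x, y, z in zip(emb[a], emb[b], emb[c])]
    candidates = [w for w in emb if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(emb[w], target))

print(analogy("king", "man", "woman"))  # queen
```

A visual-semantic embedding extends the same idea: image features are projected into this word space so that an image can be compared with, or fed in alongside, word vectors.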


Previous attempt

A multi-world approach to question answering about real-world scenes based on uncertain input [Malinowski et al., 2014]

Use automatic image segmentation with uncertainty.

Parse the question into logical form.

Search for nearest neighbours in the training set to make inferences on different possible segmentations.

Does not scale to large datasets.

Its results leave a lot of room for improvement.


DAQUAR dataset

Around 1500 images, 7000 questions (37 classes).

Three types of questions: object type, object color, and number of objects.

98.3% of the answers consist of a single word.

Q7: what color is the ornamental plant in front of the fan coil but not close to sofa?
A: red


Image-word model

Idea: treat image as the first word of the question sequence.

Use the last hidden layer of the Oxford VGG conv net [Simonyan et al., 2014] as the feature extractor.

Map image features into the joint embedding space.

We can also feed random numbers in place of the image; we call this the “blind” model.

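Figure: image-word model. At t = 1 the CNN feature of the image (or a random vector, for the blind model) is mapped into the word embedding space; the question words (e.g. “how many books”) follow at t = 2, …, T. An LSTM with dropout p reads the sequence, and a softmax outputs answer probabilities (e.g. two .56, three .21, five .09).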
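A minimal NumPy sketch of the image-word forward pass described above: the image feature is linearly mapped into the word embedding space and used as the first “word”, an LSTM reads the sequence, and a softmax over single-word answers is applied to its last output. The 4096-dimensional feature size (that of a VGG fully connected layer), the tiny vocabularies, the random untrained weights, and all names (`W_img`, `word_emb`, `answer_vocab`, `image_word_forward`) are assumptions for illustration; dropout is omitted.

```python
import numpy as np

rng = np.random.default_rng(0)
D_IMG, D_EMB, D_H = 4096, 16, 32            # assumed sizes
vocab = {"how": 0, "many": 1, "books": 2}   # toy question vocabulary
answer_vocab = ["two", "three", "five"]     # toy answer classes

W_img = rng.normal(scale=0.01, size=(D_EMB, D_IMG))   # image -> word embedding space
word_emb = rng.normal(scale=0.1, size=(len(vocab), D_EMB))
W_lstm = rng.normal(scale=0.1, size=(4 * D_H, D_EMB + D_H))
b_lstm = np.zeros(4 * D_H)
W_out = rng.normal(scale=0.1, size=(len(answer_vocab), D_H))

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x, y, c):
    z = W_lstm @ np.concatenate([x, y]) + b_lstm
    i, f, o = (sigmoid(z[k * D_H:(k + 1) * D_H]) for k in range(3))
    c = f * c + i * np.tanh(z[3 * D_H:])
    return o * np.tanh(c), c

def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()

def image_word_forward(img_feat, question):
    # t = 1: the projected image feature acts as the first "word".
    seq = [W_img @ img_feat] + [word_emb[vocab[w]] for w in question.split()]
    y, c = np.zeros(D_H), np.zeros(D_H)
    for x in seq:
        y, c = lstm_step(x, y, c)
    return softmax(W_out @ y)   # distribution over single-word answers

cnn_feature = rng.random(D_IMG)   # stand-in for a real VGG feature
probs = image_word_forward(cnn_feature, "how many books")
blind_probs = image_word_forward(rng.random(D_IMG), "how many books")   # "blind" variant
print(answer_vocab[int(np.argmax(probs))], probs.round(3))
```

With untrained weights the predicted answer is arbitrary; the point is the data flow, which is identical for the image-word and blind models except for what is fed in at t = 1.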


Q193: what is the largest object?
Correct answer: table

Image-word: table (0.576)
Blind: bed (0.440)


Bidirectional image-word model

At the last timestep, the model needs to immediately come up with an answer.

Sometimes the main body of the question is at the end.

The 1st LSTM reads the sentence from beginning to end, and the 2nd one reads it from end to beginning.

Benefit: the 2nd LSTM “knows” the whole sentence at each timestep.

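Figure: bidirectional image-word model. The image (or a random vector, for the blind model) and the question words (e.g. “how many books”) are read over t = 1, …, T by a forward LSTM (dropout p1) and a backward LSTM (dropout p2, p3); a softmax outputs answer probabilities (e.g. two .56, three .21, five .09).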
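A minimal NumPy sketch of one way to wire the two LSTMs, assuming the 2nd LSTM runs backwards over the 1st LSTM's outputs and that its final output feeds the softmax. These wiring details, the sizes, the random weights, and the names (`make_lstm`, `run_lstm`, `bidirectional_forward`) are assumptions; inputs are taken as already embedded (image vector plus word vectors) and dropout is omitted.

```python
import numpy as np

rng = np.random.default_rng(1)
D_IN, D_H, N_ANS = 16, 32, 3   # assumed sizes; inputs are already embedded

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def make_lstm(d_in, d_h):
    """Random (untrained) LSTM parameters: stacked gate weights and biases."""
    return rng.normal(scale=0.1, size=(4 * d_h, d_in + d_h)), np.zeros(4 * d_h)

def run_lstm(params, inputs, d_h):
    """Run an LSTM over a list of vectors and return its output at every step."""
    W, b = params
    y, c, outputs = np.zeros(d_h), np.zeros(d_h), []
    for x in inputs:
        z = W @ np.concatenate([x, y]) + b
        i, f, o = (sigmoid(z[k * d_h:(k + 1) * d_h]) for k in range(3))
        c = f * c + i * np.tanh(z[3 * d_h:])
        y = o * np.tanh(c)
        outputs.append(y)
    return outputs

fwd = make_lstm(D_IN, D_H)   # 1st LSTM: beginning -> end
bwd = make_lstm(D_H, D_H)    # 2nd LSTM: end -> beginning, over the 1st LSTM's outputs
W_out = rng.normal(scale=0.1, size=(N_ANS, D_H))

def bidirectional_forward(seq):
    fwd_out = run_lstm(fwd, seq, D_H)
    # The first input the 2nd LSTM sees is fwd_out[-1], which already summarises the
    # whole question; its recurrent state carries that context through every later step.
    bwd_out = run_lstm(bwd, fwd_out[::-1], D_H)
    logits = W_out @ bwd_out[-1]
    e = np.exp(logits - logits.max())
    return e / e.sum()

seq = [rng.normal(size=D_IN) for _ in range(4)]   # image "word" + 3 question words
probs = bidirectional_forward(seq)
print(probs.round(3))
```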


Image-word model results

Table: DAQUAR results

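| Model | Accuracy | WUPS 0.9 | WUPS 0.0 |
|---|---|---|---|
| 2-IMGWD | 0.3276 | 0.3298 | 0.7272 |
| IMGWD | 0.3188 | 0.3211 | 0.7279 |
| BLIND | 0.3051 | 0.3069 | 0.7229 |
| RANDWD | 0.3036 | 0.3056 | 0.7206 |
| BOW | 0.2299 | 0.2340 | 0.6907 |
| GUESS | 0.1756 | | |
| [Malinowski et al., 2014] | 0.1273 | 0.1810 | 0.5147 |
| HUMAN | 0.6027 | 0.6104 | 0.7896 |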
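WUPS, from [Malinowski et al., 2014], gives partial credit using Wu-Palmer (WUP) similarity over the WordNet hierarchy, WUP(a, t) = 2·depth(lcs) / (depth(a) + depth(t)), where lcs is the lowest common ancestor of the two words; any similarity below the threshold is multiplied by 0.1. The sketch below covers single-word answers only and replaces WordNet with a tiny hand-made taxonomy (an assumption), so its numbers are purely illustrative.

```python
# Toy hypernym taxonomy standing in for WordNet (assumption, illustration only).
PARENT = {
    "entity": None,
    "object": "entity",
    "furniture": "object",
    "plant": "object",
    "table": "furniture",
    "bed": "furniture",
    "desk": "table",
    "flower": "plant",
}

def ancestors(word):
    """Path from word up to the root, word first."""
    path = []
    while word is not None:
        path.append(word)
        word = PARENT[word]
    return path

def depth(word):
    return len(ancestors(word))   # the root has depth 1

def wup(a, b):
    anc_b = set(ancestors(b))
    lcs = next(w for w in ancestors(a) if w in anc_b)   # lowest common subsumer
    return 2 * depth(lcs) / (depth(a) + depth(b))

def wups(predictions, truths, threshold):
    """Mean thresholded WUP score over single-word answers."""
    total = 0.0
    for p, t in zip(predictions, truths):
        s = wup(p, t)
        total += s if s >= threshold else 0.1 * s
    return total / len(truths)

preds = ["table", "desk", "bed"]
truths = ["table", "table", "flower"]
accuracy = sum(p == t for p, t in zip(preds, truths)) / len(truths)
print(accuracy, wups(preds, truths, 0.9), wups(preds, truths, 0.0))
```

At threshold 0 every similarity passes, so near misses such as “desk” for “table” earn most of the credit; this is why WUPS 0.0 sits far above accuracy in the table.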


Image-word model results summary

The results are strong, but the blind model does almost equally well!

Maybe we need a better dataset:

1. 1500 images is a very small dataset.
2. Many questions are not obvious to answer.

In other words, the image features from the CNN are not that useful here.


Synthetic question-answer pairs

A large dataset of image descriptions has recently been released.

Idea: transform descriptions into questions and answers.

Approach: use the Stanford parser [Klein et al., 2003] to parse descriptions into syntactic trees, and operate on the tree structure.

Example: A man is riding a horse => What is the man riding?
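A toy sketch of the description-to-question transformation in the example above. The slides operate on Stanford parser trees; this sketch swaps the parser for a single hand-written regular expression (an assumption) that only covers descriptions shaped like “A/An/The subject is verb-ing a/an/the object”. The name `object_question` is hypothetical.

```python
import re

# Toy pattern standing in for a real syntactic parse (assumption): it only covers
# descriptions of the form "A/An/The <subject> is <verb>ing a/an/the <object>".
PATTERN = re.compile(
    r"^(?:a|an|the)\s+(?P<subj>\w+)\s+is\s+(?P<verb>\w+ing)\s+(?:a|an|the)\s+(?P<obj>\w+)\.?$",
    re.IGNORECASE,
)

def object_question(description):
    """Turn a simple description into an (object question, answer) pair, or None."""
    m = PATTERN.match(description.strip())
    if m is None:
        return None
    question = f"What is the {m['subj'].lower()} {m['verb'].lower()}?"
    return question, m["obj"].lower()

print(object_question("A man is riding a horse"))   # ('What is the man riding?', 'horse')
```

A parse tree generalises far better than a flat pattern: it can locate the object noun phrase under arbitrary modifiers, which is why the approach above works on the tree structure.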


COCO-QA dataset

| Dataset | # Images | # Questions | # Answers | GUESS baseline |
|---|---|---|---|---|
| 6.6K | 3.6K+3K | 14K+11.6K | 298 | 0.1156 |
| Full | 80K+20K | 177K+83K | 794 | 0.0346 |
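The slides do not spell out the exact GUESS rule. A minimal sketch, assuming it always answers with the most frequent training answer for each question type; the (question type, answer) data format and the toy data are hypothetical.

```python
from collections import Counter

def guess_baseline(train, test):
    """Accuracy of always answering the most frequent training answer per question type.

    train, test: lists of (question_type, answer) pairs (hypothetical format).
    """
    by_type = {}
    for qtype, answer in train:
        by_type.setdefault(qtype, Counter())[answer] += 1
    mode = {qtype: counts.most_common(1)[0][0] for qtype, counts in by_type.items()}
    correct = sum(mode.get(qtype) == answer for qtype, answer in test)
    return correct / len(test)

train = [
    ("number", "two"), ("number", "two"), ("number", "three"),
    ("color", "white"), ("color", "white"),
    ("object", "cat"), ("object", "dog"), ("object", "cat"),
]
test = [("number", "two"), ("number", "four"), ("color", "white"), ("object", "dog")]
print(guess_baseline(train, test))  # 0.5
```

Under this rule, the baseline is only as strong as the most common answer is frequent.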

Built from the Microsoft COCO dataset [Lin et al., 2014]: 80K+20K images, 400K+100K descriptions.

Three types of questions: object, colour, number.

Reduced the frequency of the most common answers (e.g. “man”, “white”, and “two”): each time an answer appears, the probability that it appears again decreases.
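One way to implement a decreasing probability of reappearance is rejection sampling, where the chance of keeping a question decays with how often its answer has already been kept. The exponential decay, the constant `decay`, and the helper name `rebalance` are assumptions for illustration, not the parameters used to build COCO-QA.

```python
import math
import random

def rebalance(qa_pairs, decay=50.0, seed=0):
    """Keep each (question, answer) pair with probability exp(-count / decay),
    where count is how many pairs with the same answer were already kept."""
    rng = random.Random(seed)
    kept, counts = [], {}
    for question, answer in qa_pairs:
        n = counts.get(answer, 0)
        if rng.random() < math.exp(-n / decay):
            kept.append((question, answer))
            counts[answer] = n + 1
    return kept

pairs = ([("how many dogs are there?", "two")] * 1000
         + [("how many cats are there?", "five")] * 50)
kept = rebalance(pairs)
kept_counts = {a: sum(1 for _, b in kept if b == a) for a in ("two", "five")}
print(kept_counts)
```

A frequent answer such as “two” is cut back sharply, while a rare answer keeps most of its questions, so the answer distribution flattens.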


COCO-QA results

Q3082: how many separate benches are people sitting on on a sidewalk?
A: two


Learning results on COCO-QA

Table: COCO-QA results

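| Model | 6.6K Acc. | 6.6K WUPS 0.9 | 6.6K WUPS 0 | Full Acc. | Full WUPS 0.9 | Full WUPS 0 |
|---|---|---|---|---|---|---|
| 2-IMGWD | 0.3358 | 0.3454 | 0.7534 | 0.3208 | 0.3304 | 0.7393 |
| IMGWD | 0.3260 | 0.3357 | 0.7513 | 0.3153 | 0.3245 | 0.7359 |
| BOW | 0.1910 | 0.2018 | 0.6968 | 0.2365 | 0.2466 | 0.7058 |
| RANDWD | 0.1366 | 0.1545 | 0.6829 | 0.1971 | 0.2058 | 0.6862 |
| BLIND | 0.1321 | 0.1396 | 0.6676 | 0.2517 | 0.2611 | 0.7127 |
| GUESS | 0.1156 | | | 0.0346 | | |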


Bidirectional model comparison

Q2352: what is being pulled on a runway?

2-IMGWD: plane (0.3746), airplane (0.3583), jet (0.1609)

IMGWD: bus (0.1393), airplane (0.0888), truck (0.0867)


Summary

LSTMs are very well suited to language modelling.

Bidirectional model improves language understanding.

The model still doesn’t really know how to count; “two” is its best guess.

Very limited colour recognition ability; colour answers come mostly from knowledge of the question, e.g. a “flower” is always yellow.

Q: how many jet airplanes are flying in formation in a cloudless sky?
IMGWD: four (0.4215)


Future work

Visual attention model

Better dataset (human or machine generated)

Better image understanding

Longer answers


Acknowledgement

Ryan Kiros and Rich Zemel

Thanks Russ Salakhutdinov for helpful discussion

Thanks Nitish Srivastava for Toronto Conv Net

Thanks UofT CS department machine learning group for computing resources


References

Mateusz Malinowski and Mario Fritz (2014)

A multi-world approach to question answering about real-world scenes based on uncertain input

Neural Information Processing Systems (NIPS’14)

Ryan Kiros and Ruslan Salakhutdinov and Richard S. Zemel (2014)

Unifying Visual-Semantic Embeddings with Multimodal Neural Language Models

NIPS Deep Learning Workshop (2014)

Sepp Hochreiter and Jürgen Schmidhuber (1997)

Long Short-Term Memory

Neural Computation 9(8) 1735–1780


Andrea Frome and Gregory S. Corrado and Jonathon Shlens and Samy Bengio and Jeffrey Dean and Marc’Aurelio Ranzato and Tomas Mikolov (2013)

DeViSE: A Deep Visual-Semantic Embedding Model

Advances in Neural Information Processing Systems 26: 27th Annual Conference on Neural Information Processing Systems 2013, 2121–2129

Tomas Mikolov and Kai Chen and Greg Corrado and Jeffrey Dean (2013)

Efficient Estimation of Word Representations in Vector Space

http://arxiv.org/abs/1301.3781

Karen Simonyan and Andrew Zisserman (2014)

Very Deep Convolutional Networks for Large-Scale Image Recognition

http://arxiv.org/abs/1409.1556

Dan Klein and Christopher D. Manning (2003)

Accurate Unlexicalized Parsing

Proceedings of the 41st Annual Meeting of the Association for Computational Linguistics (2003), 423–430


Alex Krizhevsky and Ilya Sutskever and Geoffrey E. Hinton (2012)

ImageNet Classification with Deep Convolutional Neural Networks

Advances in Neural Information Processing Systems 25: 26th Annual Conference on Neural Information Processing Systems 2012, 1106–1114

Tsung-Yi Lin and Michael Maire and Serge Belongie and James Hays and Pietro Perona and Deva Ramanan and Piotr Dollár and C. Lawrence Zitnick (2014)

Microsoft COCO: Common Objects in Context

Computer Vision – ECCV 2014, 13th European Conference, Zurich, Switzerland, September 6–12, 2014, Proceedings, Part V, 740–755

Nathan Silberman and Derek Hoiem and Pushmeet Kohli and Rob Fergus (2012)

Indoor Segmentation and Support Inference from RGBD Images

European Conference on Computer Vision (ECCV 2012)


The End
