Health Care Claims System Prototype · Health Care Claims System Prototype ... Hadoop map‐reduce...

Transcript of Health Care Claims System Prototype · Health Care Claims System Prototype ... Hadoop map‐reduce...

7701 Greenbelt Road, Suite 400, Greenbelt, MD 20770 Tel: (301) 614-8600 Fax: (301) 614-8601 www.sgt-inc.com

©2015 SGT, Inc. All Rights Reserved

SGT WHITE PAPER

Health Care ClaimsSystem Prototype

MongoDB and Hadoop

Health Care Claims System Prototype

Purpose:

The prototype health care claims system database was built to explore the possibilities of using

MongoDB and Hadoop for big data processing across a cluster of servers in the cloud. The idea was to

use MongoDB to store hundreds of millions of records and then to use Hadoop’s map‐reduce

functionality to efficiently process the data. MongoDB is a NoSQL, document based, database that

provides easy scalability, thus it was a fitting choice for this project. Apache Hadoop is a framework that

enables the distributed processing of large data sets across clusters of computers. Hadoop is designed to

scale from use on a single server, to use on thousands of servers. Hadoop’s next generation map‐reduce,

YARN, is a framework used for job scheduling, managing cluster resources, and provides the ability for

parallel processing of large data sets.

Components:

1. The simulation data generator is a java program that generates all of the simulation data for the

health care providers, the beneficiaries, and the claims. This is the only part of the health care

claims system that is not required to live on the cloud because it only needs to be used once to

generate the simulation data, it doesn’t need to be continually running like the rest of the

system’s components.

2. MongoDB is a NoSQL database used for storing all of the data from the simulation data

generator and the map‐reduce jobs.

3. Hadoop is a tool which is designed for parallel processing of large data sets. Hadoop’s processing

power is completely scalable from one machine to a cluster built from any number of machines.

4. The Mongo‐Hadoop Connector is a program that converts BSON (Binary JSON) data from

MongoDB to a serialized format that Hadoop can use and then writes it back to a BSON data and

into MongoDB. The Mongo‐Hadoop Connector is essential for communication between

MongoDB and Apache Hadoop.

5. The Google Map Overlay is simply used for marking any locations of interest on a map. For

example, one of the map‐reduce programs finds all claims over $1,000,000, the program would

then read in the whole collection of claims over $1,000,000 and mark the beneficiary’s location

on the map. This way if there are a suspicious number of high claims in any one area it will be

easy to determine. This Google map overlay reads data from MongoDB and then marks

locations on the map.

6. Amazon Web Services (Amazon cloud) is used to run the MongoDB, Hadoop, the Mongo‐

Hadoop Connector, and the program for the Google map overlays on the cloud across a cluster

of multiple servers.

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

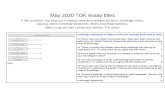

A high‐level model of the whole system looks like:

Figure 1: Component Diagram of the System

How Does MongoDB store data?

MongoDB stores all of its data in structured documents which each contain a collection of key‐

value pairs. For example, in this project a document for a Provider might look something like:

{ "_id" : ObjectId("53207ae0ac4fb2e887177162"), "Provider_NPI_Number" : NumberLong("3905304760"), "Name" : "AAAA 10", "Address" : "43499 5th Street ", "City" : "Houston", "State" : "TX", "Zip" : 77213, "Longitude" : -95.434241, "Latitude" : 29.83399, "Claim 1" : { "Therapy Code" : 71, "Covered Paid Amount" : 352248.0684500998, "HCPCS Code" : "0145T", "HCPCS Code Modifier" : "GP", "Facility Type Code" : 1, "Class Function Code" : 10, "Beneficiary ID" : 43, "Year" : 2014, "Month" : 1

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

}, "Claim 2" : { "Therapy Code" : 62, "Covered Paid Amount" : 707612.6592194028, "HCPCS Code" : "90207", "HCPCS Code Modifier" : "GW", "Facility Type Code" : 9, "Class Function Code" : 3, "Beneficiary ID" : 16, "Year" : 2014, "Month" : 12 }, "Claim 3" : { "Therapy Code" : 40, "Covered Paid Amount" : 1067684.5161930372, "HCPCS Code" : "G0455", "HCPCS Code Modifier" : "GO", "Facility Type Code" : 10, "Class Function Code" : 5, "Beneficiary ID" : 41, "Year" : 2014, "Month" : 12 } }

Everything in every single document in MongoDB is a key‐value pair. For example, in the line ‘"Address" : "43499 5th Street "’ the key is “Address” and the value is “43499 5th Street”. All subdocuments, such as Claim 1, Claim 2, and Claim 3, in MongoDB are also considered values in a key‐value pair. For example, in the above document, the key “Claim 3” is matched with the value of the subdocument: { "Therapy Code" : 40, "Covered Paid Amount" : 1067684.5161930372, "HCPCS Code" : "G0455", "HCPCS Code Modifier" : "GO", "Facility Type Code" : 10, "Class Function Code" : 5, "Beneficiary ID" : 41, "Year" : 2014, "Month" : 12 }

What does Hadoop map‐reduce do?

Hadoop map‐reduce accepts input parameters for a key, which is a key‐value pair from a

document, and a value, which is also a key‐value pair from a document, it then combines (maps) all of

the keys with an equal value into a single document and then allows the programmer to perform any

calculations necessary with the value data(reduce). The general data flow for a hadoop map‐reduce job

using MongoDB for input and output looks as follows:

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

For example, in this project there is a map reduce program called AVGperNPI (Appendices A, B,

& C), it takes in the key‐value pair “Provider_NPI_Number” for the key for the mapper, and it takes in

the key‐value pair “Covered Paid Amount” as the value. The mapper then combines all claims that have

the same NPI number and sends the NPI number and a list of all the “Covered Paid Amount” values as

doubles (or DoubleWritable) to the Reducer. The reducer then iterates through the list of doubles, sums

them together, and divides by the number of elements in the list to calculate the average claim amount

for all the claims with the matching NPI number. The reducer writes the NPI number and the calculated

average to a BSON object and writes this BSON object into a new collection in MongoDB. This new

document will look something like:

{ "_id" : ObjectId("5320855cac4f1874ff31ce7d"), "Provider NPI Number" : NumberLong("2389959670"), "Average Claim Amount" : 613212.3526743455 }

This map‐reduce program maps all claims with the same NPI number together, and reduces

them to one document with the average claim amount for the NPI number listed instead of the

individual claim amounts. It should be noted, that doubles, floats, ints, etc. cannot be directly used for a

mapper key or passed to the reducer. Instead classes that implement Writable must be used, such as

DoubleWritable, FloatWritable, IntWritable, etc. A complete list of these classes is in the

org.apache.hadoop.io package which can be viewed here. Hadoop cannot read or write any data that is

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

not contained in a class that implements Writable, because Hadoop needs the data in a serialized format

that Writable provides.

Custom Map‐Reduce Key Types:

Hadoop also provides the ability to write custom keys as well for when data is used for a key

that is not simply a primitive data type. For example, if the desired outcome was the average amount of

each claim for each NPI number for each Year, the only additional code that would be required would be

a custom key class definition as can be seen in Appendix F, and slight modifications to the mapper and

reducer class where LongWritable would be replaced with the custom key type as seen in Appendices D

and E.

To create a custom Key, a class must be created that implements Hadoop’s WritableComparable

interface and overrides the hashCode() and equals() methods. The custom key class must override the

methods equals(), hashcode(), write(), readFields(), and compareTo(). If the map‐reduce program is

being written in the Eclipse IDE, it can generate the hashCode() and the equals() methods, but the

methods for the WritableComparable interface must still be written manually.

After using Hadoop to run the Jar file containing the code in Appendices D‐G, the output in

MongoDB will be a collection of documents with the average claim amount per NPI number, per year:

{ "_id" : ObjectId("53209a94ac4f6eb2433b7012"), "Provider NPI Number" : NumberLong("8884844805"), "Year" : 2014, "Average Claim Amount" : 496265.4353595429 }

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

Appendix A: AvgPerNPIMapper

package avgPerNPI; import org.apache.hadoop.io.LongWritable; import org.apache.hadoop.io.DoubleWritable; import org.apache.hadoop.mapreduce.Mapper; import org.bson.BSONObject; import java.io.IOException; public class AvgPerNPIMapper extends Mapper<Object, BSONObject, LongWritable, DoubleWritable> { @Override public void map(final Object pKey, final BSONObject pValue, final Context pContext) throws IOException, InterruptedException { BSONObject temp; long npi = (long)pValue.get("Provider_NPI_Number"); int i = 0; while(true) { try { i++; temp = ((BSONObject)pValue.get("Claim " + Integer.toString(i))); pContext.write(new LongWritable(npi), new DoubleWritable((double)temp.get("Covered Paid Amount"))); } catch(Throwable t) { break; }//end try/catch }//end while(true) } }

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

Appendix B: AvgPerNPIReducer

package avgPerNPI; import com.mongodb.hadoop.io.BSONWritable; import org.apache.hadoop.mapreduce.Reducer; import org.bson.BasicBSONObject; import java.io.IOException; import org.apache.hadoop.io.LongWritable; import org.apache.hadoop.io.DoubleWritable; public class AvgPerNPIReducer extends Reducer<LongWritable, DoubleWritable, BSONWritable, BSONWritable> { @Override public void reduce(final LongWritable pKey, final Iterable<DoubleWritable> pValues, final Context pContext) throws IOException, InterruptedException { double avg = 0; int cnt = 0; for (final DoubleWritable value : pValues) { cnt++; avg += value.get(); } avg = avg/cnt; BasicBSONObject output = new BasicBSONObject(); output.put("Provider NPI Number", pKey.get()); output.put("Average Claim Amount", avg); pContext.write(null, new BSONWritable(output)); } }

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

Appendix C: TestXMLConfig

package avgPerNPI; import com.mongodb.hadoop.util.MongoTool; import org.apache.hadoop.conf.Configuration; import org.apache.hadoop.util.ToolRunner; public class TestXMLConfig extends MongoTool { static { Configuration.addDefaultResource("test.xml"); } public static void main(final String[] pArgs) throws Exception { System.exit(ToolRunner.run(new TestXMLConfig(), pArgs)); } }

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

Appendix D: AvgPerNPIMapper (Year)

package avgPerNPI.year; import org.apache.hadoop.io.DoubleWritable; import org.apache.hadoop.mapreduce.Mapper; import org.bson.BSONObject; import java.io.IOException; public class AvgPerNPIMapper extends Mapper<Object, BSONObject, YearNPI_Key, DoubleWritable> { @Override public void map(final Object pKey, final BSONObject pValue, final Context pContext) throws IOException, InterruptedException { BSONObject temp; long npi = (long)pValue.get("Provider_NPI_Number"); int i = 0; System.out.println("27"); while(true) { try { i++; temp = ((BSONObject)pValue.get("Claim " + Integer.toString(i))); pContext.write(new YearNPI_Key(npi, (int)temp.get("Year")), new DoubleWritable((double)temp.get("Covered Paid Amount"))); } catch(Throwable t) { break; }//end try/catch }//end while(true) } }

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

Appendix E: AvgPerNPIReducer (Year)

package avgPerNPI.year; import com.mongodb.hadoop.io.BSONWritable; import org.apache.hadoop.mapreduce.Reducer; import org.bson.BasicBSONObject; import java.io.IOException; import org.apache.hadoop.io.DoubleWritable; public class AvgPerNPIReducer extends Reducer<YearNPI_Key, DoubleWritable, BSONWritable, BSONWritable> { @Override public void reduce(final YearNPI_Key pKey, final Iterable<DoubleWritable> pValues, final Context pContext) throws IOException, InterruptedException { double avg = 0; int cnt = 0; for (final DoubleWritable value : pValues) { cnt++; avg += value.get(); } avg = avg/cnt; BasicBSONObject output = new BasicBSONObject(); output.put("Provider NPI Number", pKey.npi); output.put("Year", pKey.year); output.put("Average Claim Amount", avg); pContext.write(null, new BSONWritable(output)); } }

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

Appendix F: YearNPI_Key

package avgPerNPI.year; import java.io.DataInput; import java.io.DataOutput; import java.io.IOException; import org.apache.hadoop.io.WritableComparable; public class YearNPI_Key implements WritableComparable<YearNPI_Key> { public long npi; public int year; public YearNPI_Key() { npi = 0; year = 0; } public YearNPI_Key(long _npi, int _year) { npi = _npi; year = _year; } @Override public void readFields(DataInput in) throws IOException { npi = in.readLong(); year = in.readInt(); } @Override public void write(DataOutput out) throws IOException { out.writeLong(npi); out.writeInt(year); } @Override public int hashCode() { final int prime = 31; int result = 1; result = prime * result + (int) (npi ^ (npi >>> 32)); result = prime * result + year; return result; } @Override public boolean equals(Object obj)

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

{ if (this == obj) return true; if (obj == null) return false; if (getClass() != obj.getClass()) return false; YearNPI_Key other = (YearNPI_Key) obj; if (npi != other.npi) return false; if (year != other.year) return false; return true; } @Override public int compareTo(YearNPI_Key temp) { int ret_val = 1; if(npi == temp.npi && year == temp.year) { ret_val = 0; } return ret_val; } }

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

Appendix G: TestXMLConfig (Year)

package avgPerNPI.year; import com.mongodb.hadoop.util.MongoTool; import org.apache.hadoop.conf.Configuration; import org.apache.hadoop.util.ToolRunner; public class TestXMLConfig extends MongoTool { static { Configuration.addDefaultResource("test.xml"); Configuration.addDefaultResource("mongo‐defaults.xml"); } public static void main(final String[] pArgs) throws Exception { System.exit(ToolRunner.run(new TestXMLConfig(), pArgs)); } }

‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐

Some Screenshots that I might use

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved

SGT Innovation Center Health Care Claims System Prototype White Paper ©2015 SGT, Inc. All Rights Reserved