Gradient Descent - Stephan RobertGradient Descent Learning rate (intuitive) •Practicaltrick:...

Transcript of Gradient Descent - Stephan RobertGradient Descent Learning rate (intuitive) •Practicaltrick:...

Gradient Descent

Stephan Robert

Model

• Other inputs– # of bathrooms– Lot size– Year built– Location– Lake view

0

1000000

2000000

3000000

4000000

5000000

6000000

0 200 400 600 800 1000

Pric

e (C

HF)

Size (m2)

2

Model

• How it works…– Linear regression with one variable

0

1000000

2000000

3000000

4000000

5000000

6000000

0 200 400 600 800 1000

Pric

e (C

HF)

Size (m2)

y

x

3

Model

• How it works…– Linear regression with one variable

0

1000000

2000000

3000000

4000000

5000000

6000000

0 200 400 600 800 1000

Pric

e (C

HF)

Size (m2)

y

f✓(x) = f(x) = ✓0 + ✓1x

x

yi = ✓0 + ✓1xi + ✏i

4

Model

• Idea: – Choose so that is close to for our

training example

0

1000000

2000000

3000000

4000000

5000000

6000000

0 200 400 600 800 1000

Pric

e (C

HF)

Size (m2)

y

x

f(x) = ✓0 + ✓1x

✓0, ✓1 f(x) y

5

ModelCost function:

Aim:

min✓0,✓1

J(✓0, ✓1) = min✓0,✓1

1

2m

mX

i=1

(f(xi)� yi)2

J(✓0, ✓1) =1

2m

mX

i=1

(f(xi)� yi)2

6

Other functions

• Quadratic

• Higher order polynomial

f(x) = ✓0 + ✓1x+ ✓2x2

f(x) = ✓0 + ✓1x+ ✓2x2 + ✓3x

3 + . . .

7

A very simple example

01234567

0 1 2 3 4

1

6(1 + 4 + 9) =

14

6= 2.33

f(x3)

y3

y2

y1

✓0 = 0, ✓1 = 1

J(✓0, ✓1) =1

2m

mX

i=1

(f(xi)� yi)2 =

1

2m((1� 2)2 + (2� 4)2 + (3� 6)2 =

8

A very simple example

0

1

2

3

4

5

6

7

8

9

10

0 0.5 1 1.5 2 2.5 3 3.5 4 ✓1

J(0, ✓1) =1

2m

mX

i=1

(f(xi)� yi)2

9

A very simple example

0

1

2

3

4

5

6

7

8

9

10

0 0.5 1 1.5 2 2.5 3 3.5 4 ✓1

Minimum

J(0, ✓1) =1

2m

mX

i=1

(f(xi)� yi)2

10

A very simple example

From Andrew Ng, Stanford

J(✓0, ✓1) =1

2m

mX

i=1

(f(xi)� yi)2

11

3D-plots

12

Cost function

J(✓0, ✓1)

✓0

✓1

-1

1

3

5

7

0 2 4

13

Gradient descent (intuition)

Have some function

Goal:

Solution: we start with some and wekeep changing these parameters to reduce until we hopefully end up to a minimum

J(✓0, ✓1)

min✓0,✓1

J(✓0, ✓1)

J(✓0, ✓1)

(✓0, ✓1)

14

Gradient descent (intuition)

Have some function

Goal:

Proposition:

J(✓0, ✓1)

min✓0,✓1

J(✓0, ✓1)

✓j = ✓j � ↵@

@✓jJ(✓0, ✓1)

0

1

2

3

4

5

6

7

8

9

10

0 1 2 3 4

Negative slope

15

Gradient descent

Formallyis convex and differentiable

We want to solvei.e. find such that

J(⇥) : Rn ! R

min⇥2Rn

J(⇥)

⇥⇤ J(⇥⇤) = min⇥2Rn

J(⇥)

⇥ =

0

BBB@

✓0✓1...✓n

1

CCCA2 Rn+1

16

Gradient descent

Algorithm

• Choose an initial

• Repeat

• Stop at some pointk = 1, 2, 3, . . .

⇥[k] = ⇥[k � 1]� ↵[k � 1]rJ(⇥[k � 1])

⇥[0] 2 Rn+1

17

Example with 2 parameters

• Choose an initial

• Repeat

• Stop at some point

⇥[0] = (✓0[0], ✓1[0])

j = 0, 1

✓j [k] = ✓j [k � 1]� ↵[k � 1]@

@✓j [k � 1]J(✓0[k � 1], ✓1[k � 1])

18

Example with 2 parameters

Implementation detail

Repeat (simultaneous update)

✓0[k] = temp0

✓1[k] = temp1

k = 1, 2, 3, . . .

temp0 = ✓0[k � 1]� ↵[k � 1]@

@✓0[k � 1]J(✓0[k � 1], ✓1[k � 1])

temp1 = ✓1[k � 1]� ↵[k � 1]@

@✓1[k � 1]J(✓0[k � 1], ✓1[k � 1])

19

Learning rate

• Search of one parameter only (one issupposed to be set already)

✓1[k] = ✓1[k � 1]� ↵@

@✓1[k � 1]J(✓0[k � 1], ✓1[k � 1])

✓1[k] = ✓1[k � 1]� ↵@

@✓1[k � 1]J(✓0, ✓1[k � 1])

✓1[k] = ✓1[k � 1]� ↵[k � 1]@

@✓1[k � 1]J(✓0[k � 1], ✓1[k � 1])

20

↵k�1 = ↵k = ↵

Learning rate✓1[k] = ✓1[k � 1]� ↵

@

@✓1[k � 1]J(✓0, ✓1[k � 1])

-100

0

100

200

300

400

500

600

700

-10 -5 0 5 10 -100

0

100

200

300

400

500

600

700

-10 -5 0 5 10

J(✓1) J(✓1)

✓1✓1

↵ ↵too small too large

21

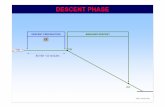

Gradient DescentLearning rate (intuitive)

• Practical trick:– Debug: J should decrease

after each iteration– Fix a stop-threshold

(0.001?)– Observe the cost function

Number of iterations

J(⇥⇤) = min⇥2Rn

J(⇥)

J(⇥)

22

Gradient descent

Wikimedia

Important: Weassume there are no local minimums for now!

23

Gradient DescentLearning rate (intuitive)

• Practical trick:– Debug: J should decrease

after each iteration– Fix a stop-threshold

(0.001?)– Observe the cost function

Number of iterations

J(⇥⇤) = min⇥2Rn

J(⇥)

J(⇥)

24

Gradient DescentBacktracking line search (Boyd)

• The porridge is

• Convergence analysis might help– Take an arbitrary parameter and fix it:– At each iteration start with and while

update Simple but works quite well in practice (check!)

↵ = 1

J(⇥� ↵rJ(⇥)) > J(⇥)� ↵

2||rJ(⇥)||2

↵ = �↵

0.1 � 0.8

25

Gradient DescentBacktracking line search (Boyd)

• Interpretation

J(⇥� ↵rJ(⇥))

J(⇥)� ↵

2||rJ(⇥)||2

J(⇥)� ↵||rJ(⇥)||2

↵ = 0 ↵↵0

26

Gradient DescentBacktracking line search (Boyd)

• Example (13 steps) – (Geoff Gordon & Ryan Tibshirani)– Convergence analysis

by GG&RT

� = 0.8

J =10(✓20) + ✓21

2

27

Gradient DescentExact line search (Boyd)

• At each iteration do the best along the gradient direction

– Often not much more efficient thanbacktracking, not really worth it…

↵ = argmaxs

J(⇥� srJ(⇥))

28

Gradient descentlinear regression

j = 0, 1

f(x) = ✓0 + ✓1x

Repeat

Stop at some point

J(✓0, ✓1) =1

2m

mX

i=1

(f(xi)� yi)2

=1

2m

mX

i=1

(✓0 + ✓1xi � yi)2

✓j [k] = ✓j [k � 1]� ↵[k � 1]@

@✓j [k � 1]J(✓0[k � 1], ✓1[k � 1])

29

Gradient descentlinear regression

J(✓0, ✓1) =1

2m

mX

i=1

(✓0 + ✓1xi � yi)2

@

@✓0J(✓0, ✓1) =

@

@✓0

1

2m

mX

i=1

(✓0 + ✓1xi � yi)2

!

=1

m

mX

i=1

(✓0 + ✓1xi � yi)

30

Gradient descentlinear regression

J(✓0, ✓1) =1

2m

mX

i=1

(✓0 + ✓1xi � yi)2

@

@✓1J(✓0, ✓1) =

@

@✓1

1

2m

mX

i=1

(✓0 + ✓1xi � yi)2

!

=1

m

mX

i=1

(✓0 + ✓1xi � yi)xi

31

Gradient descentlinear regression

• Choose an initial

• Repeat

• Stop at some point

↵k�1 = ↵k = ↵

✓0[k] = ✓0[k � 1]� ↵@

@✓0[k � 1]J(✓0[k � 1], ✓1[k � 1])

✓1[k] = ✓1[k � 1]� ↵@

@✓1[k � 1]J(✓0[k � 1], ✓1[k � 1])

⇥[0] = (✓0[0], ✓1[0])

32

Gradient descentlinear regression

• Repeat

• Stop at some point

✓0[k] = ✓0[k � 1]� ↵

m

mX

i=1

(✓0[k � 1] + ✓1[k � 1]xi � yi)

✓1[k] = ✓1[k � 1]� ↵

m

mX

i=1

(✓0[k � 1] + ✓1[k � 1]xi � yi)xi

WARNING: Update and simultaneously✓1✓0

33

Generalization• Higher order polynomial

• Multi-features

f(x) = ✓0 + ✓1x+ ✓2x2 + ✓3x

3 + . . .

WARNING: Notation is a scalaris a variable

is a scalarf(x1, x2, . . .) : x1

(x1)i

f(x) : (x)1 = x1

f(x1, x2, . . .) = f(x) = ✓0 + ✓1x1 + ✓2x2 + ✓3x3 + . . .

34

Gradient Descent, n=1Linear Regression

• Choose an initial

• Repeat

• Stop at some point

↵k�1 = ↵k = ↵

⇥[0] = (✓0[0], ✓1[0])

✓0[k] = ✓0[k � 1]� ↵

m

mX

i=1

(✓0[k � 1] + ✓1[k � 1]xi � yi)

✓1[k] = ✓1[k � 1]� ↵

m

mX

i=1

(✓0[k � 1] + ✓1[k � 1]xi � yi)xi

35

Gradient Descent, n>1Linear Regression

• Choose an initial • Repeat

• Stop at some point

⇥[0] = (✓0[0], ✓1[0], ✓2[0], . . . , ✓n[0])

✓0[k] = ✓0[k � 1]� ↵

m

mX

i=1

(f(xi)� yi)

...

✓1[k] = ✓1[k � 1]� ↵

m

mX

i=1

(f(xi)� yi) (x1)i

✓2[k] = ✓2[k � 1]� ↵

m

mX

i=1

(f(xi)� yi) (x2)i

36

Gradient DescentScaling

• Practical trick: – all features on the similar scale

–Mean normalizatione.g. size of the house = 300 m2, number of floors: 3

xi =Sizei �MeanSize

MaxSize-MinSize

37

Vector Notation

x =

0

BBB@

x0

x1...xn

1

CCCA2 Rn+1 ⇥ =

0

BBB@

✓0✓1...✓n

1

CCCA2 Rn+1

Often:

= ✓0 + ✓1x1 + ✓2x2 + . . .+ ✓nxn

(x0)i = 1, i 2 {0, 1, . . . , n}

f(x0, x1, x2, . . . , xn) = f(x) = ⇥tx

38

Vector Notation

⇥ =

0

BBB@

✓0✓1...✓n

1

CCCA2 Rn+1(x)i =

0

BBB@

(x0)i(x1)i...

(xn)i

1

CCCA

= ✓0 + ✓1(x1)i + ✓2(x2)i + . . .+ ✓n(xn)i

f((x0)i, (x1)i, (x2)i, . . . , (xn)i) = f((x)i) = ⇥txi

39

Vector Notation

X =

0

BBB@

(x)t1(x)t2...

(x)tm

1

CCCA=

0

BBB@

(x0)1 (x1)1 . . . (xn)1(x0)2 (x1)2 . . . (xn)2...

(x0)m (x1)m . . . (xn)m

1

CCCA2 Rm⇥(n+1)

(n+1)

m

40

Vector Notation

⇥ =

0

BBB@

✓0✓1...✓n

1

CCCA2 Rn+1

Cost function

J(⇥) =1

2m(X⇥� y)t (X⇥� y)

y =

0

BBB@

(y)1(y)2...

(y)m

1

CCCA=

0

BBB@

y1y2...ym

1

CCCA2 Rm

41

Examplem=4=#nb of samples

Size #bedrooms #floors Age Price (mios)

1 125 3 2 20 0.8

1 220 5 2 15 1.2

1 400 7 3 5 4

1 250 4 2 10 2

X =

0

BB@

1 125 3 2 201 220 5 2 151 400 7 3 51 250 4 2 10

1

CCA

x0 x1 x2 x3 x4 y

y =

0

BB@

0.81.242

1

CCA 2 Rm

42

Example

X =

0

BB@

1 125 3 2 201 220 5 2 151 400 7 3 51 250 4 2 10

1

CCA 2 Rm⇥(n+1)

Xt =

0

BBBB@

1 1 1 1125 220 400 2503 5 7 42 2 3 220 15 5 10

1

CCCCA2 R(n+1)⇥m

43

Normal equation

• Method to resolve analytically (Least squares) –Derivates = 0

⇥

⇥⇤ = (XtX)�1Xty

44

Comparison

• Gradient Descent– Choose a learning

parameter– Many iterations– Works even for large n

(in the order of 106)

• Normal Equation– No need to choose a

learning parameter– No iteration– Need to compute

– Slow when n is large (-> 10’000)

(XtX)�1

45