
Finite-Length Scaling and Error Floors



1

Finite-Length Scaling and Error Floors

Abdelaziz Amraoui, Andrea Montanari, Ruediger Urbanke

Tom Richardson

2

Approach to Asymptotic

3

Finite Length Scaling

4

Finite Length Scaling

5

Finite Length Scaling

6

Finite Length Scaling

7

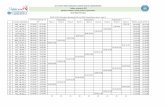

Analysis (BEC): Covariance evolution

Fraction of check nodes of degree greater than one and of degree equal to one.

Covariance terms.

As a function of residual graph fractional size.

8

Covariance evolution

9

Finite Length Curves

10

Analysis (BEC)

• Follow Luby et al.: remove a single variable at a time; the trajectory converges to the solution of a differential equation.

• The covariance of the state-space variables also follows a differential equation.

• The increments have the Markov property and satisfy regularity conditions.
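A minimal sketch of the one-variable-at-a-time peeling process on the BEC, assuming a (3,6)-regular graph drawn from a random socket permutation (multi-edges allowed). It records the fraction of degree-one checks against the fractional size of the residual graph, the quantity the differential-equation analysis tracks. The graph construction, the function names `random_regular_graph` and `peel`, and all parameters are illustrative assumptions, not the authors' code.

```python
import random

def random_regular_graph(n, dv=3, dc=6, seed=0):
    """Random (dv, dc)-regular bipartite graph from a random socket permutation
    (multi-edges possible). Returns (var_adj, m); var_adj[v] lists v's checks."""
    if (n * dv) % dc:
        raise ValueError("n * dv must be divisible by dc")
    m = n * dv // dc
    rng = random.Random(seed)
    sockets = [c for c in range(m) for _ in range(dc)]
    rng.shuffle(sockets)
    return [sockets[v * dv:(v + 1) * dv] for v in range(n)], m

def peel(var_adj, m, erased):
    """Peeling decoder for the BEC: repeatedly take a check of residual degree
    one, recover its unique erased neighbor, and delete that variable.
    Returns the trajectory [(residual size / n, degree-one checks / n), ...]
    and the set of variables left when no degree-one check remains."""
    n = len(var_adj)
    deg = [0] * m                         # residual degree of each check
    check_vars = [[] for _ in range(m)]
    for v in erased:
        for c in var_adj[v]:
            deg[c] += 1
            check_vars[c].append(v)
    residual = set(erased)
    ones = sum(1 for d in deg if d == 1)
    trajectory = [(len(residual) / n, ones / n)]
    queue = [c for c in range(m) if deg[c] == 1]
    while queue:
        c = queue.pop()
        if deg[c] != 1:                   # stale entry: degree already dropped to 0
            continue
        v = next(u for u in check_vars[c] if u in residual)
        residual.discard(v)               # v is determined by check c
        for c2 in var_adj[v]:             # delete every edge of v
            if deg[c2] == 1:
                ones -= 1
            deg[c2] -= 1
            if deg[c2] == 1:
                ones += 1
                queue.append(c2)
        trajectory.append((len(residual) / n, ones / n))
    return trajectory, residual

if __name__ == "__main__":
    n, eps = 3000, 0.40                   # (3,6) BEC threshold is about 0.4294
    var_adj, m = random_regular_graph(n)
    rng = random.Random(1)
    erased = [v for v in range(n) if rng.random() < eps]
    traj, residual = peel(var_adj, m, erased)
    for x, r1 in traj[::200]:
        print(f"residual {x:.3f}   degree-one checks {r1:.4f}")
    print("size of final residual:", len(residual))
```

Repeating this over many graphs and erasure patterns gives empirical means and covariances of the state variables at each residual size, which is what covariance evolution predicts analytically.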

11

Results

12

Finite Threshold Shift

13

Generalizing from the BEC?

• No obvious incremental form (differential equation).

• No state-space characterization of failure.

• No clear finite-dimensional state space.

• Not clear what the right coordinates are for the general case (capacity?).

Nevertheless, it is useful in practice to have this interpretation of iterative failure and to have the basic form of the scaling law.
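For the BEC, the refined scaling law is commonly quoted in the form P_B(n, ε) ≈ Q(√n (ε* − β n^(−2/3) − ε) / α), where ε* is the asymptotic threshold, the β n^(−2/3) term is the finite-length threshold shift, and α, β depend on the ensemble. The sketch below only evaluates that expression. ε* = 0.4294 is the (3,6) BEC threshold; `ALPHA` and `BETA` are hypothetical placeholders, not values from these slides.

```python
import math

def q_function(x):
    """Gaussian tail probability Q(x) = P(N(0, 1) > x)."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))

def scaling_law_fer(n, eps, eps_star, alpha, beta):
    """Scaling-law approximation of the block erasure probability at block
    length n and erasure probability eps, including the threshold shift."""
    shifted_threshold = eps_star - beta * n ** (-2.0 / 3.0)
    return q_function(math.sqrt(n) * (shifted_threshold - eps) / alpha)

# EPS_STAR: (3,6) BEC threshold. ALPHA and BETA: hypothetical placeholders.
EPS_STAR, ALPHA, BETA = 0.4294, 0.56, 0.6

if __name__ == "__main__":
    for n in (1024, 4096, 16384):
        row = [f"{scaling_law_fer(n, e, EPS_STAR, ALPHA, BETA):.2e}" for e in (0.36, 0.38, 0.40)]
        print(n, row)
```

At ε equal to the shifted threshold the argument of Q is zero, so the predicted error probability is 1/2; the curves steepen as √n grows.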

14

Empirical Evidence

15

Error Floors

16

17

18

Error Floors: Review of the BEC

19

Error floors on the erasure channel: Stopping sets.
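A stopping set is a set S of variable nodes such that no check node has exactly one neighbor in S; BP (peeling) on the BEC stops exactly at the largest stopping set contained in the set of erasures. A small sketch, using the (7,4) Hamming parity-check matrix as a toy example (our choice) and hypothetical helper names:

```python
# Parity checks of the (7,4) Hamming code; each row lists its variable indices.
H = [[0, 1, 2, 4], [0, 1, 3, 5], [0, 2, 3, 6]]

def is_stopping_set(checks, S):
    """S is a stopping set if no check node has exactly one neighbor in S."""
    S = set(S)
    return all(sum(1 for v in row if v in S) != 1 for row in checks)

def peeling_residual(checks, erased):
    """Resolve erasures through checks with exactly one erased neighbor.
    What remains is the largest stopping set contained in `erased`."""
    residual = set(erased)
    changed = True
    while changed:
        changed = False
        for row in checks:
            unknown = [v for v in row if v in residual]
            if len(unknown) == 1:
                residual.discard(unknown[0])
                changed = True
    return residual

if __name__ == "__main__":
    print(is_stopping_set(H, {0, 1, 6}))     # True: support of a codeword
    print(peeling_residual(H, {2, 4}))       # set(): decodes completely
    print(peeling_residual(H, {0, 2, 5}))    # {0, 2, 5}: stuck on a stopping set
```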

20

Error floors on the erasure channel: average performance.

21

Error floors on the erasure channel: decomposition

22

Error floors on the erasure channel: average and typical performance.

23

Error floors for general channels: Expurgated Ensemble Experiments.

AWGN channel, rate 51/64, block length 4k. Curves: random; girth (8) optimized; neighborhood optimized.

24

Error floors for general channels: Trapping set distribution.

AWGN channel, rate 51/64, block length 4k. Trapping set types: (3,1), (5,1), (7,1).

25

Observations.

• The error floor region is dominated by small-weight errors.

• The subset on which errors occur usually induces a subgraph with only degree-2 and degree-1 check nodes, where the number of degree-1 check nodes is relatively small.

• Optimized graphs exhibit a concentration of error types.
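The (a, b) labels on these slides, such as (3,1) and (9,3), are read here as a = number of variable nodes in the subset and b = number of odd-degree checks in the induced subgraph; that reading is our assumption. A sketch that computes the type and tests the "only degree-1 and degree-2 checks" property from the observations, on the (7,4) Hamming code as a toy example (helper names are hypothetical):

```python
from collections import Counter

H = [[0, 1, 2, 4], [0, 1, 3, 5], [0, 2, 3, 6]]   # toy code: (7,4) Hamming

def induced_check_degrees(checks, T):
    """Histogram {degree: count} of check degrees in the subgraph induced by T
    (checks with no neighbor in T are not part of that subgraph)."""
    T = set(T)
    degs = (sum(1 for v in row if v in T) for row in checks)
    return Counter(d for d in degs if d > 0)

def trapping_set_type(checks, T):
    """(a, b): a = |T|, b = number of odd-degree checks in the induced subgraph."""
    hist = induced_check_degrees(checks, T)
    return len(set(T)), sum(cnt for d, cnt in hist.items() if d % 2 == 1)

def only_degree_one_and_two(checks, T):
    """True if every check in the induced subgraph has degree 1 or 2."""
    return set(induced_check_degrees(checks, T)) <= {1, 2}

if __name__ == "__main__":
    print(trapping_set_type(H, {0, 1, 2}), only_degree_one_and_two(H, {0, 1, 2}))
```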

26

Intuition

In the error floor event, nodes in the trapping set receive 1s with some reliability. Other nodes receive typical inputs.

[Figure: trapping-set nodes (Reliable 1) and exterior nodes (Definite 0).]

After a few iterations, ‘exterior’ nodes and messages converge to highly reliable 0s. Internal messages are 1s.


Nevertheless, if the internal received values are 1s, internal messages reach highly reliable 1s and the message state gets trapped.

[Figure: a (9,3) trapping set; exterior nodes (Definite 0).]

27

Defining Failure: Trapping Sets

(Assume the all-0 codeword is the desired decoding. For the BEC, let 1 denote an erasure.)

A decoder on an input $y \in \mathcal{Y}$ is a sequence of maps:

$D_\ell : \mathcal{Y} \to \{0,1\}^n, \quad \ell = 1, 2, \ldots$

We say that bit $j$ is eventually correct if there exists $L$ such that $\ell > L$ implies $D_\ell(y)_j = 0$.

Assuming failure, the trapping set $T$ is the set of all bits that are not eventually correct.

28

Defining Failure for BP: Practice

Decode for 200 iterations. If decoding is not successful, decode for an additional 20 iterations and take the union of all bits that are not decoded to 0 during this time.
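The 200 + 20 iteration rule can be sketched as follows. To keep the example short, BP is replaced by a simple parallel bit-flipping decoder over the BSC (our substitution), the all-zero codeword is assumed as on the previous slide, and the (7,4) Hamming code serves as a toy example. `flip_iteration` and `empirical_trapping_set` are hypothetical names.

```python
H = [[0, 1, 2, 4], [0, 1, 3, 5], [0, 2, 3, 6]]   # toy code: (7,4) Hamming

def flip_iteration(checks, var_checks, x):
    """One parallel bit-flipping iteration (stand-in for one BP iteration):
    flip every bit for which more than half of its checks are unsatisfied."""
    unsat = [sum(x[v] for v in row) % 2 for row in checks]
    return [b ^ 1 if 2 * sum(unsat[c] for c in var_checks[v]) > len(var_checks[v]) else b
            for v, b in enumerate(x)]

def empirical_trapping_set(checks, n, y, warmup=200, window=20):
    """Run `warmup` iterations; if the hard decisions are not all zero, run
    `window` more and return the union of bits that are nonzero at any of
    those iterations. Returns set() when decoding succeeds."""
    var_checks = [[] for _ in range(n)]
    for c, row in enumerate(checks):
        for v in row:
            var_checks[v].append(c)
    x = list(y)
    for _ in range(warmup):
        x = flip_iteration(checks, var_checks, x)
    if not any(x):
        return set()
    trapped = set()
    for _ in range(window):
        x = flip_iteration(checks, var_checks, x)
        trapped |= {v for v, b in enumerate(x) if b}
    return trapped

if __name__ == "__main__":
    print(empirical_trapping_set(H, 7, [0, 0, 0, 0, 0, 0, 1]))  # set(): corrected
    print(empirical_trapping_set(H, 7, [1, 0, 0, 0, 0, 0, 0]))  # {0, 1, 2, 3, 4, 5, 6}
```

In the second case the decoder oscillates with period 2 between {0} and its complement, which is exactly why the union over a window of extra iterations is taken rather than a single snapshot.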

29

Trapping Sets: Examples

1. Let the decoder be the maximum likelihood decoder in one step. Then the trapping sets are the non-zero codewords.

2. Let the decoder be belief propagation over the BEC. Then the trapping sets are the stopping sets.

3. Let the decoder be serial (strict) flipping over the BSC. T is a trapping set if and only if, in the subgraph induced by T, each node has more even- than odd-degree check neighbors, and the same holds for the complement of T.
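A sketch of the criterion in example 3. We read "degree" as the number of neighbors a check has in T, which is what decides whether the check is unsatisfied when the errors sit on T; for nodes in the complement the same counts are used. The criterion is implemented literally (strictly more even than odd), which corresponds to a flipping rule that flips a bit whenever at least half of its checks are unsatisfied; that tie convention is our assumption. The toy code and the name `is_flipping_trapping_set` are hypothetical.

```python
H = [[0, 1, 2, 4], [0, 1, 3, 5], [0, 2, 3, 6]]   # toy code: (7,4) Hamming

def is_flipping_trapping_set(checks, n, T):
    """Every variable node, in T and in its complement, must have strictly more
    check neighbors of even T-degree (satisfied) than of odd T-degree
    (unsatisfied)."""
    T = set(T)
    if not T:
        return False                      # an empty error set is not a failure
    tdeg = [sum(1 for v in row if v in T) for row in checks]
    odd, even = [0] * n, [0] * n
    for c, row in enumerate(checks):
        for v in row:
            if tdeg[c] % 2:
                odd[v] += 1
            else:
                even[v] += 1
    return all(even[v] > odd[v] for v in range(n))

if __name__ == "__main__":
    print(is_flipping_trapping_set(H, 7, {0, 1, 6}), is_flipping_trapping_set(H, 7, {0}))
```

The codeword support {0, 1, 6} qualifies (every check is satisfied), consistent with example 1, while a single error {0} does not.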

30

Analysis with Trapping Sets: Decomposition of failure

$\mathrm{FER}(\epsilon) = \sum_{T} P(\mathcal{E}_T, \epsilon)$

$\mathcal{E}_T$: the set of all inputs giving rise to failure on trapping set $T$.

Error floors are dominated by “small” events.
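On the BEC each failed input leaves exactly one residual stopping set, so the events $\mathcal{E}_T$ are disjoint and the sum decomposes the FER exactly. A Monte Carlo sketch of that decomposition, assuming the (7,4) Hamming code as a toy example and hypothetical function names; it bins failures by their residual set and estimates each $P(\mathcal{E}_T, \epsilon)$.

```python
import random
from collections import Counter

H = [[0, 1, 2, 4], [0, 1, 3, 5], [0, 2, 3, 6]]   # toy code: (7,4) Hamming

def peeling_residual(checks, erased):
    """Largest stopping set inside `erased` (where BEC peeling gets stuck)."""
    residual = set(erased)
    changed = True
    while changed:
        changed = False
        for row in checks:
            unknown = [v for v in row if v in residual]
            if len(unknown) == 1:
                residual.discard(unknown[0])
                changed = True
    return frozenset(residual)

def decompose_fer(checks, n, eps, trials, seed=0):
    """Estimate FER(eps) and the per-trapping-set terms P(E_T, eps)."""
    rng = random.Random(seed)
    counts = Counter()
    for _ in range(trials):
        erased = [v for v in range(n) if rng.random() < eps]
        T = peeling_residual(checks, erased)
        if T:                              # non-empty residual = failure on T
            counts[T] += 1
    contributions = {T: c / trials for T, c in counts.items()}
    return sum(counts.values()) / trials, contributions

if __name__ == "__main__":
    fer, contrib = decompose_fer(H, 7, 0.2, 20000)
    print(f"FER estimate {fer:.4f}")
    for T, p in sorted(contrib.items(), key=lambda kv: -kv[1])[:3]:
        print(sorted(T), f"{p:.4f}")
```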

31

Predicting Error Floors: A two-pronged attack.

1. Find (cover) all trapping sets likely to have a significant contribution to the error floor region:

$T_1, T_2, T_3, \ldots, T_m$

2. Evaluate the contribution of each set to the error floor:

$P(\mathcal{E}_{T_1}, \epsilon), P(\mathcal{E}_{T_2}, \epsilon), \ldots$

Strictly speaking, we get a (tight) lower bound:

$\mathrm{FER}(\epsilon) \geq \sum_{i=1}^{m} P(\mathcal{E}_{T_i}, \epsilon)$

32

Finding Trapping Sets

Simulation of decoding can be viewed as a stochastic process for finding trapping sets.

It is very inefficient, however.

We could use (aided) flipping to get some speed-up.

It is still too inefficient.

33

Finding Trapping Sets (Flipping)

• Trapping sets can be viewed as “local” extrema of certain functions, e.g., the number of odd-degree checks in the induced subgraph.

• “Local” means, e.g., under single-element removal, addition, or swap.

Therefore, we can look for subsets that are “local” extrema.
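A minimal sketch of the “local extremum” view, using the number of odd-degree induced checks as the objective and single removal, addition, or swap as the neighborhood. Restricting to non-empty subsets is our assumption (the empty set trivially has no odd checks), and the greedy `descend` routine is an illustrative search, not the authors' procedure. Note that a set is a local minimum under single removals and additions exactly when no node has more odd- than even-degree neighbors, which ties this view to flipping stability.

```python
from itertools import product

H = [[0, 1, 2, 4], [0, 1, 3, 5], [0, 2, 3, 6]]   # toy code: (7,4) Hamming

def odd_check_count(checks, T):
    """Number of checks with an odd number of neighbors in T."""
    return sum(1 for row in checks if sum(1 for v in row if v in T) % 2)

def neighbors(T, n):
    """Subsets reachable from T by removing, adding, or swapping one element."""
    T = frozenset(T)
    outside = [u for u in range(n) if u not in T]
    for v in T:
        yield T - {v}
    for u in outside:
        yield T | {u}
    for v, u in product(T, outside):
        yield (T - {v}) | {u}

def is_local_min(checks, n, T):
    """True if no non-empty neighbor of T has fewer odd-degree checks."""
    f = odd_check_count(checks, frozenset(T))
    return all(odd_check_count(checks, S) >= f for S in neighbors(T, n) if S)

def descend(checks, n, T):
    """Greedy descent: move to the best non-empty neighbor while it strictly
    lowers the objective; the result is a local minimum."""
    T = frozenset(T)
    while True:
        best = min((S for S in neighbors(T, n) if S),
                   key=lambda S: odd_check_count(checks, S), default=None)
        if best is None or odd_check_count(checks, best) >= odd_check_count(checks, T):
            return T
        T = best

if __name__ == "__main__":
    print(sorted(descend(H, 7, {0})))
```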

34

Finding Trapping Sets (Flipping): Basic idea

• Build up a connected subset with a bias towards minimizing the number of induced odd-degree checks.

• Occasionally check, by applying flipping decoding, whether the subset contains an in-flipping stable set. Eventually such a set is contained.

• Then check for other types of variation:

• Out-flipping stability.

• Single aided flip stability (chains).

• …
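The first two steps can be sketched as follows. Several choices here are our assumptions: candidates are variables sharing a check with the subset (which keeps it connected), ties are broken at random, containment is tested every `check_every` additions, and we read "contains an in-flipping stable set" as: strict serial flipping started from errors on S ends on a non-empty set inside S. The toy code and all names are hypothetical.

```python
import random

H = [[0, 1, 2, 4], [0, 1, 3, 5], [0, 2, 3, 6]]   # toy code: (7,4) Hamming

def odd_check_count(checks, S):
    return sum(1 for row in checks if sum(1 for v in row if v in S) % 2)

def serial_flip(checks, var_checks, start):
    """Strict serial flipping from error set `start`: flip a bit while strictly
    more than half of its checks are unsatisfied. Each flip lowers the number
    of unsatisfied checks, so the loop terminates."""
    err = set(start)
    tdeg = [sum(1 for v in row if v in err) for row in checks]
    flipped = True
    while flipped:
        flipped = False
        for v, cs in enumerate(var_checks):
            if 2 * sum(tdeg[c] % 2 for c in cs) > len(cs):
                err ^= {v}
                step = 1 if v in err else -1
                for c in cs:
                    tdeg[c] += step
                flipped = True
    return err

def grow_and_trap(checks, n, seed=0, max_size=20, check_every=2):
    """Grow a connected subset S biased toward few odd-degree induced checks;
    stop once serial flipping from errors on S settles inside S."""
    var_checks = [[] for _ in range(n)]
    for c, row in enumerate(checks):
        for v in row:
            var_checks[v].append(c)
    rng = random.Random(seed)
    S = {rng.randrange(n)}
    while len(S) < max_size:
        cand = sorted({u for v in S for c in var_checks[v] for u in checks[c]} - S)
        if not cand:
            break
        scores = {u: odd_check_count(checks, S | {u}) for u in cand}
        best = min(scores.values())
        S.add(rng.choice([u for u in cand if scores[u] == best]))
        if len(S) % check_every == 0:
            T = serial_flip(checks, var_checks, S)
            if T and T <= S:
                return S, T
    return S, None

if __name__ == "__main__":
    print(grow_and_trap(H, 7, seed=1, max_size=7))
```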

35

Differences: BP and Flipping

36

Differences: BP and Flipping

[Figure labels: r1, r2, r3, r1+r2+r3.]

37

Differences: BP and Flipping

38

Differences: BP and Flipping

39

Evaluating Trapping Sets: Basic idea

• Find a random variable x, on which to condition the decoder input Y, that “mostly” determines membership in $\mathcal{E}_T$, i.e., $\Pr\{\mathcal{E}_T \mid x\}$ is nearly a step function in x.

• Perform in situ simulation of the trapping set while varying x to measure $\Pr\{\mathcal{E}_T \mid x\}$.

• Combine with the density $p(x)$ of x to get $\Pr\{\mathcal{E}_T\} = \int \Pr\{\mathcal{E}_T \mid x\}\, p(x)\, dx$.

40

Evaluating Trapping Sets

Condition input to trapping set

Otherwise simulate channel

41

Evaluating Trapping Sets: BEC

X is the number of erasures in T (= S).
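On the BEC, failure with residual exactly S requires every bit of S to be erased, so only the term X = |S| survives and $\Pr\{\mathcal{E}_S\} = \epsilon^{|S|} \Pr\{\mathcal{E}_S \mid X = |S|\}$. The sketch estimates the conditional term by in situ simulation (erase all of S, simulate the channel elsewhere), assuming the (7,4) Hamming code and the stopping set {0, 1, 6} as a toy example; function names are hypothetical.

```python
import random

H = [[0, 1, 2, 4], [0, 1, 3, 5], [0, 2, 3, 6]]   # toy code: (7,4) Hamming

def peeling_residual(checks, erased):
    """Largest stopping set inside `erased` (where BEC peeling gets stuck)."""
    residual = set(erased)
    changed = True
    while changed:
        changed = False
        for row in checks:
            unknown = [v for v in row if v in residual]
            if len(unknown) == 1:
                residual.discard(unknown[0])
                changed = True
    return residual

def conditional_failure(checks, n, S, eps, trials, seed=0):
    """In situ estimate of Pr{E_S | X = |S|}: erase all of S, erase every other
    bit with probability eps, count how often decoding stops on exactly S."""
    rng = random.Random(seed)
    S = set(S)
    hits = 0
    for _ in range(trials):
        erased = S | {v for v in range(n) if v not in S and rng.random() < eps}
        hits += peeling_residual(checks, erased) == S
    return hits / trials

def stopping_set_probability(checks, n, S, eps, trials=20000, seed=0):
    """Pr{E_S} = eps**|S| * Pr{E_S | X = |S|} (only X = |S| contributes)."""
    return eps ** len(S) * conditional_failure(checks, n, S, eps, trials, seed)

if __name__ == "__main__":
    for eps in (0.1, 0.2, 0.3):
        print(eps, f"{stopping_set_probability(H, 7, {0, 1, 6}, eps):.3e}")
```

For this toy code every extra erasure enlarges the stopping set, so the conditional term is exactly (1 − ε)^4, which gives a convenient check on the simulation.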

42

Evaluating Trapping Sets: AWGN

X is the mean noise input in T.
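With i.i.d. N(0, σ²) noise, the mean X of the |T| noise samples on T is N(0, σ²/|T|), so $\Pr\{\mathcal{E}_T\} = \int \Pr\{\mathcal{E}_T \mid x\}\, p(x)\, dx$ is a one-dimensional integral. The sketch below evaluates it with the trapezoidal rule. In practice the conditional curve comes from in situ simulation; here `logistic_step` is a hypothetical smooth step standing in for it, and BPSK with 0 mapped to +1 is assumed, so failures need a sufficiently negative mean noise.

```python
import math

def gaussian_pdf(x, var):
    return math.exp(-x * x / (2.0 * var)) / math.sqrt(2.0 * math.pi * var)

def trapping_set_probability(cond_prob, set_size, sigma, width=8.0, steps=4000):
    """Integrate Pr{E_T | x} p(x) dx with x ~ N(0, sigma^2 / set_size), using
    the trapezoidal rule over +/- `width` standard deviations of x."""
    var = sigma ** 2 / set_size
    sd = math.sqrt(var)
    a, h = -width * sd, 2.0 * width * sd / steps
    total = 0.0
    for i in range(steps + 1):
        x = a + i * h
        weight = 0.5 if i in (0, steps) else 1.0
        total += weight * cond_prob(x) * gaussian_pdf(x, var)
    return total * h

def logistic_step(x0, w):
    """Hypothetical stand-in for a measured curve: near 1 below x0, near 0 above."""
    return lambda x: 0.5 * (1.0 - math.tanh((x - x0) / (2.0 * w)))

if __name__ == "__main__":
    cond = logistic_step(x0=-0.5, w=0.02)          # illustrative values only
    for sigma in (0.5, 0.55, 0.6):
        print(sigma, f"{trapping_set_probability(cond, 12, sigma):.3e}")
```

For very deep floors the transition of the conditional curve may lie beyond `width` standard deviations of x; widen the integration range accordingly.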

43

Evaluating Trapping Sets: AWGN

44

Evaluating Trapping Sets: Margulis (12,4)

45

A tougher test case: G

46

Evaluating Trapping Sets: G

47

A Curve from a single point

48

Extrapolating a curve

49

Variation in Trapping Sets: (10,4) (10,2) (10,0)

50

Variation in Trapping Sets: (12,4) (12,2) (12,0)

51

Variation in Trapping Sets

52

Conclusions

• Error floor performance is predictable, with considerable computational effort. (It would be nice to have a scaling law for “best” codes.)

• The trade-off between error floor and threshold (waterfall) performance can be optimized even for very deep error floors.

53

Conclusions

“It is interesting to observe that the search for theoretical understanding of turbo codes has transformed coding theorists into experimental scientists [physicists].”