Finding Structure in Data: Bayesian Inference for Discrete Time Series

Ioannis Kontoyiannis, Athens U of Economics & Business

Stochastic Methods in Finance and Physics, Heraklion, Crete, July 2015

Acknowledgment

Small Trees & Long Memory: Bayesian Inference for Discrete Time Series

I. Kontoyiannis, M. Skoularidou, A. Panotopoulou

Athens U of Economics & Business

This research has been co-financed by the European Union (European Social Fund - ESF) and Greek national funds through the Operational Program “Education and Lifelong Learning” of the National Strategic Reference Framework (NSRF)

Research Funding Program: THALES: Investing in knowledge society through the European Social Fund

Outline

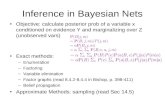

Background: Variable-memory Markov chains

Bayesian Modeling and Inference: new prior structure, the posterior

Efficient Algorithms: MMLA, MAPT, k-MAPT

Theory ❀ The algorithms work

Experimental Results ❀ How the algorithms work

Applications: sequential prediction, MCMC exploration of the posterior, causality detection, . . .

Variable-Memory Markov Chains

Markov chain $\{\ldots, X_0, X_1, \ldots\}$ with alphabet $A = \{0, 1, \ldots, m-1\}$ of size $m$

Memory length $d$: $P(X_n \mid X_{n-1}, X_{n-2}, \ldots) = P(X_n \mid X_{n-1}, X_{n-2}, \ldots, X_{n-d})$

Distribution: To fully describe it, we need to specify $m^d$ conditional distributions $P(X_n \mid X_{n-1}, \ldots, X_{n-d})$, one for each context $(X_{n-1}, \ldots, X_{n-d})$

Problem: $m^d$ grows very fast, e.g., with $m = 8$ symbols and memory length $d = 10$, we need $\approx 10^9$ distributions

Idea: Use variable length contexts described by a context tree $T$
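The growth of $m^d$ can be checked directly. The short sketch below is only an illustration; the function names are hypothetical and not from the talk. It also counts free parameters, using the fact that each conditional distribution on $m$ symbols has $m - 1$ free entries.

```python
def num_contexts(m: int, d: int) -> int:
    """Number of distinct length-d contexts over an alphabet of size m."""
    return m ** d


def num_free_parameters(m: int, d: int) -> int:
    """Each context carries one distribution on m symbols (m - 1 free entries)."""
    return num_contexts(m, d) * (m - 1)


# The slide's example: m = 8 symbols, memory length d = 10.
contexts = num_contexts(8, 10)
parameters = num_free_parameters(8, 10)
print(contexts, parameters)
```

For $m = 8$, $d = 10$ the context count is $8^{10} = 2^{30}$, which is the "$\approx 10^9$" quoted above.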

Variable-Memory Markov Chains: An Example

Alphabet: $m = 3$ symbols

Memory length: $d = 5$

Each past string $X_{n-1}, X_{n-2}, \ldots$ corresponds to a unique context on a leaf of the tree

The distribution of $X_n$ given the past is given by the distribution on that leaf

[Figure: context tree $T$ with branches labeled 0, 1, 2; the leaf parameters shown include $\theta_2$, $\theta_{022}$, $\theta_{02000}$, $\theta_{02001}$, $\theta_{02002}$]

E.g. $P(X_n = 1 \mid X_{n-1} = 0, X_{n-2} = 2, X_{n-3} = 2, X_{n-4} = 1, \ldots) = \theta_{022}(1)$
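The lookup "past string → unique leaf" can be sketched as a prefix search. The tree below is an assumption: it is one proper ternary tree that contains the leaf labels visible on the slide (2, 022, 02000, 02001, 02002), but it has 11 leaves and is not necessarily the tree drawn in the talk. The names `LEAVES` and `find_context` are hypothetical.

```python
# Hypothetical context tree over the alphabet {0, 1, 2}, stored as its set of
# leaves. Contexts are written most-recent-symbol first, so (0, 2, 2) means
# X_{n-1} = 0, X_{n-2} = 2, X_{n-3} = 2.
LEAVES = {
    (1,), (2,),
    (0, 0), (0, 1),
    (0, 2, 1), (0, 2, 2),
    (0, 2, 0, 1), (0, 2, 0, 2),
    (0, 2, 0, 0, 0), (0, 2, 0, 0, 1), (0, 2, 0, 0, 2),
}


def find_context(past, leaves):
    """Return the unique leaf that is a prefix of `past`.

    `past` lists the most recent symbols first: (x_{n-1}, x_{n-2}, ...).
    """
    for depth in range(len(past) + 1):
        prefix = tuple(past[:depth])
        if prefix in leaves:
            return prefix
    raise ValueError("past is too short to reach a leaf of the tree")


# The slide's example: the past 0, 2, 2, 1, ... lands on the leaf 022.
context = find_context((0, 2, 2, 1, 0), LEAVES)
print(context)
```

Because the tree is proper (every internal node has all $m$ children), exactly one leaf is a prefix of any sufficiently long past, so the search is unambiguous.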

Variable-Memory Representation: Advantages

❀ E.g., above with memory length 5, instead of $3^5 = 243$ conditional distributions, only need to specify 13 (!)

❀ For an alphabet of size $m$ and memory depth $D$ there are $m^D$ contexts ⇒ potentially huge savings

❀ Determining the underlying context tree of an empirical time series is of great scientific and engineering interest

[Figure: the context tree $T$ of the example; branches labeled 0, 1, 2]

Computing the Likelihood

Write $X_i^j$ for the block $(X_i, X_{i+1}, \ldots, X_j)$

The likelihood of $X = X_1^n$ is:

$$f(X) = P(X_1^n \mid X_{-d+1}^0) = \prod_{i=1}^{n} P(X_i \mid X_{i-d}^{i-1}) = \prod_{s \in T} \prod_{j \in A} \theta_s(j)^{a_s(j)}$$

where the count vectors $a_s$ are defined by:

$a_s(j)$ = # times letter $j$ follows context $s$ in $X_1^n$
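As a minimal sketch of the product formula, the code below collects the count vectors $a_s$ over the leaves of a given tree and evaluates the log-likelihood. The tiny binary tree, the parameter values and all function names are illustrative assumptions, not taken from the talk; the first $d$ symbols of `x` play the role of the initial past $X_{-d+1}^0$.

```python
from collections import defaultdict
from math import exp, log


def find_context(past, leaves):
    """Unique leaf that is a prefix of `past` (most recent symbol first)."""
    for depth in range(len(past) + 1):
        prefix = tuple(past[:depth])
        if prefix in leaves:
            return prefix
    raise ValueError("past is too short to reach a leaf of the tree")


def count_vectors(x, d, m, leaves):
    """a_s(j) = number of times letter j follows context s; x[:d] is the initial past."""
    counts = defaultdict(lambda: [0] * m)
    for i in range(d, len(x)):
        past = x[i - d:i][::-1]  # (x_{i-1}, ..., x_{i-d})
        counts[find_context(past, leaves)][x[i]] += 1
    return counts


def log_likelihood(counts, theta):
    """log of prod_s prod_j theta_s(j) ** a_s(j)."""
    return sum(a * log(theta[s][j])
               for s, vec in counts.items()
               for j, a in enumerate(vec) if a > 0)


# Hypothetical first-order binary model and a short sample path.
leaves = {(0,), (1,)}
theta = {(0,): [0.9, 0.1], (1,): [0.5, 0.5]}
x = [0, 0, 0, 1, 1, 0]
counts = count_vectors(x, d=1, m=2, leaves=leaves)
likelihood = exp(log_likelihood(counts, theta))
print(dict(counts), likelihood)
```

Working in logs avoids underflow for long series; the likelihood depends on the data only through the count vectors.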

Aside – Motivation and Earlier Results

△ Our results are primarily motivated by

❀ The basic results of Willems, Shtarkov, Tjalkens and co. on data compression via the CTW and related algorithms

❀ Basic questions of Bayesian inference for discrete time series

△ Several results can be seen as generalizations or extensions of results and algorithms in these earlier works

△ Here we ignore the compression connection entirely and present everything from the point of view of Bayesian statistics

Bayesian Modeling for VMMCs

Prior on models: Indexed family of priors on trees $T$. Given $m, D$, for each $\beta \in (0, 1)$:

$$\pi(T) = \pi_D(T; \beta) = \alpha^{|T|-1} \beta^{|T| - L_D(T)}$$

with $\alpha = (1-\beta)^{1/(m-1)}$; $|T|$ = # leaves of $T$; $L_D(T)$ = # leaves at depth $D$

Prior on parameters: Given a context tree $T$, the parameters $\theta = \{\theta_s; s \in T\}$ are taken to be independent, with each $\pi(\theta_s \mid T) \sim \mathrm{Dirichlet}(1/2, 1/2, \ldots, 1/2)$

Likelihood: Given a model $T$ and parameters $\theta = \{\theta_s; s \in T\}$, the likelihood of $X = X_1^n$ is as above:

$$f(X) = f(X_1^n \mid X_{-D+1}^0, \theta, T) = \prod_{s \in T} \prod_{j \in A} \theta_s(j)^{a_s(j)}$$
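A quick numerical check that $\pi_D(\cdot; \beta)$ is a probability distribution: the sketch below enumerates every proper $m$-ary tree of depth at most $D$ (an assumption about the model class, represented here by leaf lists) and sums the prior. The helper names `all_trees` and `prior` are hypothetical.

```python
from itertools import product


def all_trees(s, m, D):
    """Yield every proper m-ary tree rooted at context s, as a list of leaves."""
    yield [s]  # stop at s: s itself is a leaf
    if len(s) < D:
        subtrees = [list(all_trees(s + (j,), m, D)) for j in range(m)]
        for combo in product(*subtrees):
            yield [leaf for sub in combo for leaf in sub]


def prior(leaves, m, D, beta):
    """pi_D(T; beta) = alpha^(|T| - 1) * beta^(|T| - L_D(T))."""
    alpha = (1 - beta) ** (1 / (m - 1))
    n_leaves = len(leaves)
    n_at_max_depth = sum(1 for s in leaves if len(s) == D)
    return alpha ** (n_leaves - 1) * beta ** (n_leaves - n_at_max_depth)


m, D, beta = 3, 2, 0.5
trees = list(all_trees((), m, D))
total = sum(prior(t, m, D, beta) for t in trees)
print(len(trees), total)
```

With $m = 3$, $D = 2$, $\beta = 1/2$ there are 9 trees: the root-only tree has prior $1/2$ and each of the other eight has prior $1/16$.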

Bayesian Inference for VMMCs

Notation. $\theta = \{\theta_s; s \in T\}$ for all the parameters (given $T$)

$X = X_{-D+1}, \ldots, X_0, X_1, \ldots, X_n$ for all the observed data

Suppress dependence of the likelihood on the past $X_{-D+1}^0$

The one and only goal of Bayesian inference

Determination of the posterior distributions:

$$\pi(\theta, T \mid X) = \frac{\pi(T)\,\pi(\theta \mid T)\,f(X \mid \theta, T)}{f(X)} \quad \text{and} \quad \pi(T \mid X) = \frac{\int_\theta f(X \mid \theta, T)\,\pi(\theta \mid T)\,d\theta \;\pi(T)}{f(X)}$$

Main obstacle

Determination of the mean marginal likelihood:

$$f(X) = \sum_T \pi(T) \int_\theta f(X \mid \theta, T)\,\pi(\theta \mid T)\,d\theta$$

E.g. the number of models in the sum grows doubly exponentially in $D$
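The doubly exponential growth can be made concrete with a one-line recursion. Assuming the models are the proper $m$-ary trees of depth at most $D$, a tree is either the root alone or a root whose $m$ children each carry a tree of depth at most $D - 1$, so $N(0) = 1$ and $N(D) = 1 + N(D-1)^m$. The function name below is hypothetical.

```python
def num_trees(m: int, D: int) -> int:
    """Number of proper m-ary trees of depth at most D: N(D) = 1 + N(D-1)**m."""
    n = 1  # depth 0: only the root
    for _ in range(D):
        n = 1 + n ** m
    return n


# Ternary alphabet, depths 0 to 5.
for depth in range(6):
    print(depth, num_trees(3, depth))
```

For $m = 3$ the count already exceeds $10^{24}$ at $D = 5$, so summing over models one at a time is out of the question.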

Computation of the Marginal Likelihood

Given the structure of the model, it is perhaps not surprising that the marginal likelihoods $f(X \mid T)$ can be computed explicitly

Lemma. The marginal likelihood $f(X \mid T)$ can be computed as

$$f(X \mid T) = \prod_{s \in T} P_e(a_s), \quad \text{where} \quad P_e(a_s) = \frac{\prod_{j=0}^{m-1} \left[(1/2)(3/2) \cdots (a_s(j) - 1/2)\right]}{(m/2)(m/2 + 1) \cdots (m/2 + M_s - 1)}$$

with the count vectors $a_s$ as before and $M_s = a_s(0) + \cdots + a_s(m-1)$

What should be surprising is that the entire mean marginal likelihood $f(X) = \sum_T \pi(T) f(X \mid T)$ can also be computed effectively
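The products in the Lemma are ratios of Gamma functions: $(1/2)(3/2)\cdots(a - 1/2) = \Gamma(a + 1/2)/\Gamma(1/2)$ and $(m/2)\cdots(m/2 + M - 1) = \Gamma(m/2 + M)/\Gamma(m/2)$. A minimal sketch using `math.lgamma` for numerical stability follows; the function name `log_pe` is an assumption of this example.

```python
from math import exp, lgamma


def log_pe(a):
    """log P_e(a) for a count vector a = (a(0), ..., a(m-1))."""
    m, total = len(a), sum(a)
    numerator = sum(lgamma(c + 0.5) - lgamma(0.5) for c in a)
    denominator = lgamma(total + m / 2) - lgamma(m / 2)
    return numerator - denominator


# Binary alphabet: the four count vectors reachable after two symbols,
# weighted by the number of sequences producing them, must sum to 1.
p_20 = exp(log_pe([2, 0]))   # sequence 00
p_02 = exp(log_pe([0, 2]))   # sequence 11
p_11 = exp(log_pe([1, 1]))   # sequences 01 and 10
print(p_20, p_11, p_20 + p_02 + 2 * p_11)
```

By hand, $P_e(2,0) = (1/2)(3/2)/(1 \cdot 2) = 3/8$ and $P_e(1,1) = (1/2)(1/2)/(1 \cdot 2) = 1/8$, which matches the code.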

The Mean Marginal Likelihood Algorithm (MMLA)

Given. Data $X = X_{-D+1}, \ldots, X_0, X_1, X_2, \ldots, X_n$; alphabet size $m$; maximum depth $D$; prior parameter $\beta$ [The algorithm formerly known as CTW]

△ 1. [Tree.] Construct a tree with nodes corresponding to all contexts of length $1, 2, \ldots, D$ contained in $X$

△ 2. [Estimated probabilities.] At each node $s$ compute the vectors $a_s$ [$a_s(j)$ = # times letter $j$ follows context $s$ in $X_1^n$] and the probabilities $P_{e,s} = P_e(a_s)$ as in the Lemma

△ 3. [Weighted probabilities.] At each node $s$ compute

$$P_{w,s} = \begin{cases} P_{e,s}, & \text{if } s \text{ is a leaf} \\ \beta P_{e,s} + (1-\beta) \prod_{j \in A} P_{w,sj}, & \text{otherwise} \end{cases}$$
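The three steps can be sketched in a few dozen lines. This is an illustrative recursion in the log domain, not the speaker's implementation: the function names are hypothetical, the first $D$ entries of `x` are taken as the initial past, and a context that never occurs is assigned $P_w = 1$ (its counts are all zero, so $P_e = 1$ at that node and below).

```python
from collections import defaultdict
from math import exp, lgamma, log


def count_vectors(x, m, D):
    """a_s(j) for every context s of length 0..D; x[:D] is the initial past."""
    counts = defaultdict(lambda: [0] * m)
    for i in range(D, len(x)):
        for k in range(D + 1):
            s = tuple(x[i - 1 - t] for t in range(k))  # most recent symbol first
            counts[s][x[i]] += 1
    return counts


def log_pe(a):
    """log of the estimated probability P_e(a) from the Lemma."""
    m, total = len(a), sum(a)
    return (sum(lgamma(c + 0.5) - lgamma(0.5) for c in a)
            - (lgamma(total + m / 2) - lgamma(m / 2)))


def log_pw(s, counts, m, D, beta):
    """log of the weighted probability P_{w,s} (step 3)."""
    if s not in counts:
        return 0.0  # unseen context: P_e = 1 here and below, hence P_w = 1
    lpe = log_pe(counts[s])
    if len(s) == D:
        return lpe
    kids = sum(log_pw(s + (j,), counts, m, D, beta) for j in range(m))
    u, v = log(beta) + lpe, log(1 - beta) + kids
    top = max(u, v)
    return top + log(exp(u - top) + exp(v - top))  # log(beta*Pe + (1-beta)*prod)


def mean_marginal_likelihood(x, m, D, beta):
    """P_{w,lambda}: the weighted probability at the root (empty context)."""
    return exp(log_pw((), count_vectors(x, m, D), m, D, beta))


print(mean_marginal_likelihood([0, 1, 1, 0, 1], m=2, D=1, beta=0.5))
```

One sanity check: for a fixed initial past, the values $P_{w,\lambda}$ over all continuations of a given length should sum to 1, since each is a mixture of probability distributions.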

The MMLA Computes the Mean Marginal Likelihood

Theorem

The weighted probability $P_{w,\lambda}$ given by the MMLA at the root $\lambda$ is exactly equal to the mean marginal likelihood of the data $X$:

$$P_{w,\lambda} = f(X) = \sum_T \pi(T) \int_\theta f(X \mid \theta, T)\,\pi(\theta \mid T)\,d\theta$$

Note

The MMLA computes a “doubly exponentially hard” quantity in $O(n \cdot D^2)$ time

This is one of the very few examples of nontrivial Bayesian models for which the marginal likelihood is explicitly computable, and maybe even the most complex one!

Maximum A Posteriori Probability Tree Algorithm (MAPT)

Given. Data $X = X_{-D+1}, \ldots, X_0, X_1, X_2, \ldots, X_n$; alphabet size $m$; maximum depth $D$; prior parameter $\beta$ [The algorithm formerly known as CTM]

△ 1. [Tree.] and △ 2. [Estimated probabilities.] Construct the tree and compute $a_s$ and $P_{e,s}$ as before

△ 3. [Maximal probabilities.] At each node $s$ compute

$$P_{m,s} = \begin{cases} P_{e,s}, & \text{if } s \text{ is a leaf} \\ \max\left\{\beta P_{e,s},\; (1-\beta) \prod_{j \in A} P_{m,sj}\right\}, & \text{otherwise} \end{cases}$$

△ 4. [Pruning.] For each node $s$, if the above max is achieved by the first term, then prune all its descendants
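The maximization and pruning steps can be sketched as one recursion that returns both $\log P_{m,s}$ and the leaves of the best subtree below $s$. This is an illustrative version with hypothetical names; for simplicity it visits the full $m$-ary tree down to depth $D$ (fine for small $D$ only), and ties are resolved in favour of pruning, as in step 4.

```python
from collections import defaultdict
from math import lgamma, log


def count_vectors(x, m, D):
    """a_s(j) for every context s of length 0..D; x[:D] is the initial past."""
    counts = defaultdict(lambda: [0] * m)
    for i in range(D, len(x)):
        for k in range(D + 1):
            s = tuple(x[i - 1 - t] for t in range(k))  # most recent symbol first
            counts[s][x[i]] += 1
    return counts


def log_pe(a):
    """log of the estimated probability P_e(a) from the Lemma."""
    m, total = len(a), sum(a)
    return (sum(lgamma(c + 0.5) - lgamma(0.5) for c in a)
            - (lgamma(total + m / 2) - lgamma(m / 2)))


def mapt(s, counts, m, D, beta):
    """Return (log P_{m,s}, leaves of the maximizing subtree rooted at s)."""
    lpe = log_pe(counts[s]) if s in counts else 0.0  # unseen context: P_e = 1
    if len(s) == D:
        return lpe, [s]
    kids = [mapt(s + (j,), counts, m, D, beta) for j in range(m)]
    keep = log(beta) + lpe                               # first term: prune at s
    split = log(1 - beta) + sum(lp for lp, _ in kids)    # second term: branch
    if keep >= split:
        return keep, [s]
    return split, [leaf for _, sub in kids for leaf in sub]


# A strictly alternating binary sequence: the next symbol depends on the
# previous one only, so a depth-1 tree is the natural candidate.
x = [0, 1] * 20
log_pm, map_leaves = mapt((), count_vectors(x, m=2, D=2), m=2, D=2, beta=0.75)
print(map_leaves)
```

Returning the leaf list from the recursion folds step 4 into step 3: whenever the first term wins, the subtrees computed for the children are simply discarded.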

Theorem: The MAPT Computes the MAP Tree

Theorem

The (pruned) tree $T_1^*$ resulting from the MAPT procedure has maximal a posteriori probability among all trees:

$$\pi(T_1^* \mid X) = \max_T \pi(T \mid X) = \max_T \left\{ \frac{\int_\theta f(X \mid \theta, T)\,\pi(\theta \mid T)\,d\theta \;\pi(T)}{f(X)} \right\}$$

Note – as with the MMLA

The MAPT computes a “doubly exponentially hard” quantity in $O(n \cdot D^2)$ time

Again, one of the very few examples of nontrivial Bayesian models for which the mode of the posterior is explicitly identifiable, and maybe the most complex one

Finding the k A Posteriori Most Likely Trees (k-MAPT)

△ 1. [Construct full tree.] △ 2. [Compute $a_s$ and $P_{e,s}$.]

△ 3. [Matrix representation.] Each node $s$ contains a $k \times m$ matrix $B_s$

Line $i$ represents the $i$th best subtree starting at $s$

Either the entire line consists of ∗, meaning “prune at $s$”

Or the $j$th element describes which line of the $j$th child of $s$ to follow

Line $i$ also contains the “maximal probability” $P^{(i)}_{m,s}$ associated with the $i$th subtree

△ 4. [At each leaf $s$.] The entire matrix $B_s$ contains ∗’s and all $P^{(i)}_{m,s}$ are $= P_{e,s}$

△ 5. [At each internal node $s$.]

Consider all $k^m$ combinations of subtrees of the children of $s$

For each combination compute the associated maximal probability as in MAPT

Order the results by probability, keep the top $k$, describe them in the matrix $B_s$

△ 6. [Bottom-to-top-to-bottom.] Repeat (5.) recursively until the root

Starting at the root, read the top $k$ trees

k-MAPT Finds the k A Posteriori Most Likely Trees

Theorem

The $k$ trees $T_1^*, T_2^*, \ldots, T_k^*$ described recursively at the root after the k-MAPT procedure are the $k$ a posteriori most likely models w.r.t.:

$$\pi(T \mid X) = \frac{\int_\theta f(X \mid \theta, T)\,\pi(\theta \mid T)\,d\theta \;\pi(T)}{f(X)}$$

Note – as with MAPT

The k-MAPT computes a “doubly exponentially hard” quantity in $O(n \cdot D^2 \cdot k^m)$ time

This is one of the very few examples of nontrivial Bayesian models for which the area near the mode of the posterior is explicitly identifiable, and probably the most complex and interesting one!

Experimental results: MAP model for a 5th Order Chain

5th order VMMC data $X_{-D+1}, \ldots, X_0, X_1, X_2, \ldots, X_n$

Alphabet size $m = 3$

VMMC with $d = 5$ as in the example

Data length $n = 80000$ samples

❀ Space of more than $10^{24}$ models!

MAPT: Find MAP models with max depth $D = 1, 2, 3, \ldots$, $\beta = 1/2$

[Figure: MAP trees found for $D = 1$, $D = 2$, $D = 3$; branches labeled 0, 1, 2]

MAP model for a 5th Order Chain (cont’d)

5th order VMMC data $X_{-D+1}, \ldots, X_0, X_1, X_2, \ldots, X_n$

$m = 3$, $d = 5$, $n = 80000$

MAPT results with $\beta = 1/2$, cont’d

[Figure: MAP trees found for $D = 4$ and for $D \geq 5$; the $D \geq 5$ tree is also the TRUE model]

Additional Results

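(i) Model posterior probabilities

$$\pi(T \mid X) = \frac{\pi(T) \prod_{s \in T} P_e(a_s)}{P_{w,\lambda}}$$

for ANY model $T$, where $P_{w,\lambda}$ = mean marginal likelihood and $P_e(a_s) = P_{e,s}$ are the estimated probabilities in MMLA

(ii) Posterior odds

$$\frac{\pi(T \mid X)}{\pi(T' \mid X)} = \frac{\pi(T)}{\pi(T')} \cdot \frac{\prod_{s \in T,\, s \notin T'} P_e(a_s)}{\prod_{s \in T',\, s \notin T} P_e(a_s)}$$

for ANY pair of models $T, T'$

(iii) Full conditional density of $\theta$

$$\pi(\theta \mid T, X) \sim \prod_{s \in T} \mathrm{Dirichlet}\big(a_s(0) + 1/2,\; a_s(1) + 1/2,\; \ldots,\; a_s(m-1) + 1/2\big)$$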
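Formula (i) can be checked numerically on a toy problem: if $P_{w,\lambda}$ really is the mean marginal likelihood, the posterior probabilities of all trees must sum to 1. The sketch below does this by brute-force enumeration for a small binary example; the data, the helper names and the tree representation (leaf lists, proper trees of depth at most $D$) are assumptions of this illustration.

```python
from collections import defaultdict
from itertools import product
from math import exp, lgamma, log


def count_vectors(x, m, D):
    """a_s(j) for every context s of length 0..D; x[:D] is the initial past."""
    counts = defaultdict(lambda: [0] * m)
    for i in range(D, len(x)):
        for k in range(D + 1):
            s = tuple(x[i - 1 - t] for t in range(k))
            counts[s][x[i]] += 1
    return counts


def log_pe(a):
    m, total = len(a), sum(a)
    return (sum(lgamma(c + 0.5) - lgamma(0.5) for c in a)
            - (lgamma(total + m / 2) - lgamma(m / 2)))


def log_pw(s, counts, m, D, beta):
    if s not in counts:
        return 0.0  # unseen context: P_w = 1
    lpe = log_pe(counts[s])
    if len(s) == D:
        return lpe
    kids = sum(log_pw(s + (j,), counts, m, D, beta) for j in range(m))
    u, v = log(beta) + lpe, log(1 - beta) + kids
    top = max(u, v)
    return top + log(exp(u - top) + exp(v - top))


def all_trees(s, m, D):
    """Every proper m-ary tree rooted at s, as a list of leaves."""
    yield [s]
    if len(s) < D:
        subtrees = [list(all_trees(s + (j,), m, D)) for j in range(m)]
        for combo in product(*subtrees):
            yield [leaf for sub in combo for leaf in sub]


def log_prior(leaves, m, D, beta):
    alpha = (1 - beta) ** (1 / (m - 1))
    n, at_depth_d = len(leaves), sum(1 for s in leaves if len(s) == D)
    return (n - 1) * log(alpha) + (n - at_depth_d) * log(beta)


def tree_posterior(leaves, counts, m, D, beta):
    """pi(T | X) = pi(T) * prod_{s in T} P_e(a_s) / P_{w,lambda}."""
    log_marginal = sum(log_pe(counts[s]) for s in leaves if s in counts)
    return exp(log_prior(leaves, m, D, beta) + log_marginal
               - log_pw((), counts, m, D, beta))


x = [0, 1, 1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
m, D, beta = 2, 2, 0.5
counts = count_vectors(x, m, D)
posteriors = {tuple(t): tree_posterior(t, counts, m, D, beta)
              for t in all_trees((), m, D)}
print(sum(posteriors.values()))
```

The posterior odds in (ii) follow by dividing two such values: the normalizer $P_{w,\lambda}$ and the factors for leaves shared by both trees cancel.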

Experimental results: 5th Order Chain - Revisited

5th order VMMC data $X_{-D+1}, \ldots, X_0, X_1, X_2, \ldots, X_n$

Alphabet size $m = 3$

VMMC with $d = 5$ as before

Data length $n = 10000$ samples

MAPT: Find MAP models, with max depth $D = 1, 2, 3, \ldots$, $\beta = 1/2$

[Figure: MAP trees found for $D = 1$, $D = 2$, $D = 3$; branches labeled 0, 1, 2]

                       D = 1      D = 2      D = 3
Prior π(T*_1)          1/2        1/16       0.00781
Posterior π(T*_1|X)    ≈ 1        0.99998    0.99977

MAP model for a 5th Order Chain - Revisited

5th order VMMC data $X_{-D+1}, \ldots, X_0, X_1, X_2, \ldots, X_n$

$m = 3$, $d = 5$, $n = 10000$

MAPT results (cont’d)

[Figure: MAP trees found for $D = 4$ and for $D \geq 5$; the $D \geq 5$ tree is also the TRUE model]

                       D = 4      D ≥ 5
Prior π(T*_1)          0.00098    1.53 × 10^-5
Posterior π(T*_1|X)    0.9947     0.74

k-MAPT models for the same 5th Order Chain

$D = 10$ ❀ more than $10^{5900}$ models! $n = 10000$, $k = 3$, $\beta = 3/4$

[Figure: the top $k = 3$ trees $T_1^*, T_2^*, T_3^*$; branches labeled 0, 1, 2]

$\pi(T_1^* \mid X) \approx 0.368$

$\pi(T_1^* \mid X) / \pi(T_2^* \mid X) \approx 6.29$

$\pi(T_1^* \mid X) / \pi(T_3^* \mid X) \approx 8.82$

k-MAPT for a 2nd Order, 8-Symbol Chain

2nd order VMMC: alphabet $m = 8$, memory $d = 2$, $n = 40000$ samples

k-MAPT: $k = 3$ top models, with $D = 5$, $\beta = 1/2$ [first tree = true model]

[Figure: the three top trees; branches labeled 0 to 7]

Sequential Prediction

Bayesian predictive distribution

$$f(X_{n+1} \mid X_{-D+1}^{n}) = \sum_T \int_\theta \underbrace{f(X_{n+1} \mid X_{-D+1}^{n}, \theta, T)}_{\text{likelihood}} \; \underbrace{\pi(\theta, T \mid X_{-D+1}^{n})}_{\text{posterior}} \, d\theta = \frac{f(X_{-D+1}^{n+1})}{f(X_{-D+1}^{n})} = \frac{\text{mean marginal likelihood up to } n+1}{\text{mean marginal likelihood up to } n}$$

Example: Same 5th order chain, $\beta = 1/2$. Prediction rates:

                       PST      MMLA     Optimal
n = 1000,  D = 2       38.9%    42.7%    45.6%
n = 1000,  D = 50      38.9%    43.1%    45.6%
n = 10000, D = 2       40.6%    43.5%    44.6%
n = 10000, D = 50      39.5%    44.4%    44.6%
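The ratio form suggests a direct, if naive, way to compute the predictive distribution: run the weighting recursion once on the data and once more for each candidate next symbol. The sketch below does exactly that; it recomputes everything from scratch (no incremental updates), and all names are assumptions of this illustration.

```python
from collections import defaultdict
from math import exp, lgamma, log


def count_vectors(x, m, D):
    """a_s(j) for every context s of length 0..D; x[:D] is the initial past."""
    counts = defaultdict(lambda: [0] * m)
    for i in range(D, len(x)):
        for k in range(D + 1):
            s = tuple(x[i - 1 - t] for t in range(k))
            counts[s][x[i]] += 1
    return counts


def log_pe(a):
    m, total = len(a), sum(a)
    return (sum(lgamma(c + 0.5) - lgamma(0.5) for c in a)
            - (lgamma(total + m / 2) - lgamma(m / 2)))


def log_pw(s, counts, m, D, beta):
    if s not in counts:
        return 0.0  # unseen context: P_w = 1
    lpe = log_pe(counts[s])
    if len(s) == D:
        return lpe
    kids = sum(log_pw(s + (j,), counts, m, D, beta) for j in range(m))
    u, v = log(beta) + lpe, log(1 - beta) + kids
    top = max(u, v)
    return top + log(exp(u - top) + exp(v - top))


def predictive(x, m, D, beta):
    """f(X_{n+1} = j | data) = f(data followed by j) / f(data), for each j."""
    base = log_pw((), count_vectors(x, m, D), m, D, beta)
    return [exp(log_pw((), count_vectors(x + [j], m, D), m, D, beta) - base)
            for j in range(m)]


# After a long alternating run ending in 1, the next symbol should be 0.
probs = predictive([0, 1] * 20, m=2, D=2, beta=0.5)
print(probs)
```

Predicting the symbol with the largest predictive probability gives a simple sequential predictor of the kind compared in the table above; whether this matches the exact setup of the reported experiment is not stated in the slides.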

Metropolis-within-Gibbs Exploration of the Posterior

Given. Data $X = X_{-D+1}, \ldots, X_0, X_1, \ldots, X_n$; parameters $m, D, \beta$

❀ Run the MAPT algorithm

❀ Initialize $T(0) = T_1^*$, $\theta(0) \sim \prod_{s \in T(0)} \mathrm{Unif}$

❀ Iterate at each time $t \geq 1$:

△ [Metropolis proposal] Given $T(t-1)$ propose $T'$ by randomly adding or removing $m$ sibling leaves

△ [Metropolis step] Define $T(t)$ by accepting or rejecting $T'$ according to:

$$\frac{\pi(T' \mid X)}{\pi(T(t-1) \mid X)} = \frac{\pi(T')}{\pi(T(t-1))} \cdot \frac{\prod_{s \in T',\, s \notin T(t-1)} P_e(a_s)}{\prod_{s \in T(t-1),\, s \notin T'} P_e(a_s)}$$

△ [Gibbs step] Take $\theta(t)$ = sample from the full conditional density

$$\pi(\theta \mid T(t), X) \sim \prod_{s \in T(t)} \mathrm{Dirichlet}\big(a_s(0) + 1/2,\; a_s(1) + 1/2,\; \ldots,\; a_s(m-1) + 1/2\big)$$
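The Gibbs step is the simplest part to sketch, since it only needs independent Dirichlet draws, one per leaf. The code below uses the standard construction of a Dirichlet sample from normalized Gamma variates with the standard library's `random.gammavariate`; the count vectors and function names are made up for illustration, and the Metropolis proposal and acceptance steps are not covered.

```python
import random


def sample_dirichlet(alphas, rng):
    """One Dirichlet(alphas) draw via normalized Gamma(alpha, 1) variates."""
    gammas = [rng.gammavariate(a, 1.0) for a in alphas]
    total = sum(gammas)
    return [g / total for g in gammas]


def gibbs_step(leaves, counts, m, rng):
    """Draw theta_s ~ Dirichlet(a_s(0) + 1/2, ..., a_s(m-1) + 1/2) for each leaf s."""
    return {s: sample_dirichlet([c + 0.5 for c in counts.get(s, [0] * m)], rng)
            for s in leaves}


# Hypothetical current tree T(t) with two leaves and made-up count vectors.
rng = random.Random(0)
counts = {(0,): [2, 1], (1,): [1, 1]}
theta = gibbs_step([(0,), (1,)], counts, m=2, rng=rng)
print(theta)
```

Passing an explicit `random.Random` instance keeps the sketch reproducible without touching the global random state.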

Causality, Conditional Independence & Directed Information

Given data $X = \ldots, X_0, X_1, \ldots, X_n$ and $Y = \ldots, Y_0, Y_1, \ldots, Y_n$

Assume $\{(X_i, Y_i)\}$ is Markov of order (no greater than) $D$

❀ $X$ has no causal influence on $Y$

⇔ each $Y_i$ is conditionally independent of $X_{i-D}^{i}$ given $Y_{i-D}^{i-1}$

$\ldots, X_0, X_1, \ldots, X_{i-D-1}, X_{i-D}, \ldots, X_{i-1}, X_i, X_{i+1}, \ldots, X_n$

$\ldots, Y_0, Y_1, \ldots, Y_{i-D-1}, Y_{i-D}, \ldots, Y_{i-1}, Y_i, Y_{i+1}, \ldots, Y_n$

⇔ $\sum_i \mathrm{KL}\Big(P_{Y_i \mid X_{i-D}^{i},\, Y_{i-D}^{i-1}} \,\Big\|\, P_{Y_i \mid Y_{i-D}^{i-1}}\Big) = 0$

⇔ Directed Information Rate $I(\{X_i\} \to \{Y_i\}) = 0$

Jiao et al.’s Approach

Given data $X_{-D+1}^{n}, Y_{-D+1}^{n}$

Assume $\{(X_i, Y_i)\}$ is Markov of order $D$

Define an estimator $I_n(\{X_i\} \to \{Y_i\})$

Show $I_n(\{X_i\} \to \{Y_i\}) \to I(\{X_i\} \to \{Y_i\})$: a.s. consistency + bounds

❀ Questions.

Is there causal influence $\{X_i\} \to \{Y_i\}$?

Or $\{Y_i\} \to \{X_i\}$? Which is larger?

❀ Idea.

Estimate both $I(\{X_i\} \to \{Y_i\})$ and $I(\{Y_i\} \to \{X_i\})$ and compare . . .

A Different Statistic/Estimator

Given data $X_{-D+1}^{n}, Y_{-D+1}^{n}$, Markov of order $D$, alphabet sizes $m, \ell$

Define a new, likelihood-ratio-like test estimator

$$J_n(\{X_i\} \to \{Y_i\}) = 2 \log \left( \frac{f_{\mathrm{MMLA}}(X, Y)}{f_{\mathrm{MMLA}}(Y)\, f_{\mathrm{MMLA}}(X \mid Y)} \right)$$

Theorem

(a) $\frac{1}{2n} J_n(\{X_i\} \to \{Y_i\}) \to I(\{X_i\} \to \{Y_i\})$ a.s.

(b) In the absence of causal influence $\{X_i\} \to \{Y_i\}$:

$$J_n(\{X_i\} \to \{Y_i\}) \xrightarrow{\;D\;} \chi^2\big(\ell^{D}(\ell - 1)(m^{D+1} - 1)\big)$$

❀ A new hypothesis test!

Under the “null” hypothesis, the limiting distribution is completely specified

Compute $J_n$, and get a p-value!
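The degrees of freedom in part (b) grow quickly with $D$, which matters when reading the test's output. The small sketch below only evaluates the expression $\ell^{D}(\ell - 1)(m^{D+1} - 1)$ from the theorem; the function name and the example alphabet sizes are assumptions, since the slides do not say how the data were quantized.

```python
def chi2_degrees_of_freedom(m: int, ell: int, D: int) -> int:
    """Degrees of freedom ell^D * (ell - 1) * (m^(D+1) - 1) from part (b)."""
    return ell ** D * (ell - 1) * (m ** (D + 1) - 1)


# Illustrative values only: binary X and Y, a few memory depths.
for depth in (1, 3, 5):
    print(depth, chi2_degrees_of_freedom(m=2, ell=2, D=depth))
```

Given $J_n$ and these degrees of freedom, a p-value would follow from the chi-squared survival function, which is available in standard statistical libraries.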

Results on the Same Data

Given data $X_{-D+1}^{n}$ = HSI, $Y_{-D+1}^{n}$ = DJIA

Parameters: $n = 6159$ days, $D = 3, 5$

Results: $J_n(\{X_i\} \to \{Y_i\}) \approx 4111, 10050$; p-values ≈ 99.6%, 100%

$J_n(\{Y_{i-1}\} \to \{X_i\}) \approx 4111, 10050$; p-values ≈ 98.7%, 100%

❀ Conclusion:

NO significant evidence of causal influence!!

Extensions, Further Results, Applications

❀ Further applications

△ Online anomaly detection

△ Change-point detection

△ Hidden Markov trees

△ Markov order estimation

△ MLE computations

△ Entropy estimation

❀ Results on real data

✄ Financial data of different types

✄ Genetics

✄ Neuroscience

✄ Wind and rainfall measurements

✄ Whale/dolphin song data

✄ BIG DATA . . . !
