Dense image matching: comparisons and analyses

Fabio Remondino, Maria Grazia Spera, Erica

Nocerino, Fabio Menna, Francesco Nex

3D Optical Metrology (3DOM) unit

Bruno Kessler Foundation (FBK)

Trento, Italy

<remondino, spera, nocerino, fmenna, franex>@fbk.eu

Sara Gonizzi-Barsanti

Dept. Mechanics

Politecnico of Milano

Milano, Italy

Abstract—The paper presents a critical review and analysis of

dense image matching algorithms. The analyzed algorithms belong

to both the commercial and open-source domains. The employed

datasets include scenes pictured in terrestrial and aerial blocks,

acquired with convergent and parallel-axis images and different

scales. Geometric analyses are reported, comparing the dense

point clouds with ground truth data.

Index Terms—Image Matching, 3D, Comparison,

Photogrammetry

I. INTRODUCTION

Nowadays 3D modeling of scenes and objects at different

scales is generally performed using image or range data. Range

or active sensors (e.g. laser scanners, stripe projection systems,

etc.) are a quite common source of dense point

clouds for 3D modeling purposes due to their ease of use, speed

and ability to capture millions of points. The associated

processing pipeline is also quite straightforward and based on

reliable and powerful commercial software. Indeed since 2000

airborne and terrestrial active sensors have been used in

various applications, with continuous scientific investigations

and improvements in both hardware and software. So for more

than ten years range sensors were growing in popularity as a

means to produce dense point clouds for 3D documentation,

mapping and visualization purposes at various scales.

Photogrammetry, at that time, could not efficiently deliver

dense and detailed 3D results similar to those achieved with

range instruments and consequently they became the dominant

technology for dense 3D recording, replacing photogrammetry

in many application areas. Further, many photogrammetric

scientists shifted their research interests to laser scanning,

resulting in a further decline in advancements and development

of automated procedures in the photogrammetric technique.

Luckily, thanks to the great improvements in hardware and

algorithms, primarily from the computer vision community [1]-

[4], different automated procedures are nowadays available and

photogrammetry-based surveying and 3D modeling can deliver

comparable geometrical 3D results for many terrestrial and

aerial applications. In particular photogrammetric methods for

dense point clouds generation (“dense image matching”) are

increasingly available for professional and amateur

applications with performances that cover a wide variety of

applications. A key point, however, is the selection of the most

appropriate method and algorithm to achieve the desired

accuracy and completeness.

The paper presents a critical review and analysis of dense

image matching algorithms. The analyzed image matching

algorithms belong to both the commercial and open-source domains

(Fig. 1). The employed datasets (Fig. 2 and Table I) include

scenes pictured in terrestrial and aerial blocks, acquired with

convergent and parallel-axis images and at different scales.

With respect to other benchmarking datasets, the employed

images feature more real and complex scenarios. The

algorithms and results are evaluated according to their ability

to produce a dense, high-quality 3D point cloud. Geometric

analyses are therefore reported, comparing the dense point

clouds with ground truth data or with each other.

A. Range sensors vs photogrammetry

Several recent publications compared the dense 3D potential of

ranging and imaging techniques based on factors such as accuracy

and resolution [5]-[7]. In practice, the choice between the two

techniques depends primarily on project constraints,

requirements, budget and experience. Range sensors are still

relatively cumbersome and expensive compared to terrestrial

digital cameras (off-the-shelf or SLR-type) and their bulkiness

might be problematic in some field campaigns or research

projects. The point clouds recorded with range instruments are

already metrically correct, but they are not based on redundant

measurements which may be problematic for projects

concerned with absolute accuracy. Typical photogrammetric

measures derived in adjustment procedures (variance

estimations or other statistical matrices) are not available to

evaluate the quality of point clouds produced with range

sensors. Moreover few statistics (normally provided by

vendors) are given to describe errors for the entire dataset.

Such errors are normally treated as ‘black boxes’ as they lack

well-defined procedures to assess per-project quality. On the

other hand, the photogrammetric processing, although more

labor intensive, can be carried out so that calibration

procedures, systematic error corrections and error metrics are

explicitly stated. This is mainly valid for pure photogrammetric

processes, while Structure from Motion tools behave more like black

boxes, often leading to bundle divergences or geometric

deformations [8][9]. Photogrammetric processing algorithms

generally suffer from problems due to the initial image quality

(noise, low radiometric quality, shadows, etc.) or certain

surface materials (shiny or texture-less objects), resulting in

noisy point clouds or difficulties in feature extraction.

Furthermore, in order to derive metric 3D results from images,

a known distance or Ground Control Points (GCP) are

required. In the case of aerial acquisitions, the typical point density

of laser scanning datasets is around 1-25 points/m², while an

aerial photogrammetric image typically has a Ground Sampling

Distance (GSD) on the order of 10 cm, which could

theoretically be used to produce a dense point cloud with 100

points/m² (one point per 10 cm × 10 cm pixel footprint).
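The density figure quoted for image-based point clouds follows from simple arithmetic: if one 3D point is matched per pixel, the point spacing on the ground equals the GSD. A minimal sketch, assuming square pixel footprints; the helper name `points_per_square_metre` and the `step_px` parameter are hypothetical and not taken from any of the tested packages:

```python
def points_per_square_metre(gsd_m: float, step_px: int = 1) -> float:
    """Theoretical point density when one 3D point is matched every `step_px` pixels.

    Assumes square ground footprints, so the point spacing is gsd_m * step_px.
    """
    if gsd_m <= 0 or step_px < 1:
        raise ValueError("gsd_m must be positive and step_px at least 1")
    spacing_m = gsd_m * step_px
    return 1.0 / (spacing_m * spacing_m)


if __name__ == "__main__":
    # A 10 cm GSD matched at every pixel gives roughly 100 points/m².
    print(round(points_per_square_metre(0.10)))
```

Matching only every second pixel (`step_px=2`) would reduce the theoretical density by a factor of four.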

II. DENSE IMAGE MATCHING ALGORITHMS

A. Concepts and history

Matching can be defined as the establishment of the

correspondence between various data sets (e.g. images, maps,

3D shapes, etc.). In particular, image matching refers to

the establishment of correspondences between two or more

images [10]. In computer vision it is often called the stereo

correspondence problem [11][12]. Image matching comprises

the establishment of correspondences between primitives

extracted from two or more images and the estimation of the

corresponding 3D coordinates via a collinearity or projective

model. In image space this process produces a depth map (that

assigns a relative depth to each pixel of an image), while in

object space the result is normally called a point cloud. Considering a

simple image pair, the disparity (or parallax, i.e. the horizontal

shift of a point between the two images) is inversely proportional to the camera-object distance.

While the basic geometry relating

disparity and scene structure is well understood, the

automated measurement of such disparity by establishing dense

and accurate image correspondences is a challenging task.
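The inverse relation between disparity and distance can be illustrated for the simplest configuration. The sketch below assumes a rectified (normal-case) stereo pair with parallel optical axes, a focal length expressed in pixels and a known baseline; the function name and the numeric values are illustrative assumptions, not taken from the paper:

```python
def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Camera-object distance Z = f * B / d for a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Doubling the disparity halves the estimated distance.
    for d in (50.0, 100.0):
        print(d, depth_from_disparity(d, focal_px=2000.0, baseline_m=0.5))
```

Because the distance depends on 1/d, a fixed disparity error has a larger effect on distant points than on close ones.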

For historical reasons, the photogrammetric developments

in the field of image matching were mainly related to aerial

images. The earliest matching algorithms were developed by

the photogrammetry community in the 1950s [13]. With the

advent of digital imaging, researchers studied automated

procedures to replace the manual intervention of the operators.

In its oldest form, image matching involved 4 transformation

parameters (cross-correlation) and could already provide

successful results on single points [14]. Further extensions

considered 6- and 8-parameter transformations, leading to the

well-known non-linear Least Squares Matching (LSM)

estimation procedure [15][16]. Gruen [15] and Gruen &

Baltsavias [17][18] introduced the Multi-Photo Geometrical

Constraints (MPGC) concept into the image matching

procedure and integrated also the surface reconstruction into

the process. Then from image space, the matching procedure

was generalized to object space, introducing the concept of

‘groundel’ or ‘surfel’ [19][20].

In the computer vision community, stereo matching was

already investigated at the end of the 1970s [21] and continued

in the 1980s, in particular for terrestrial applications [22]-[25].

Then in the 1990s the focus started to move to multi-view

approaches [26]-[28].

B. Algorithms and classifications

It is quite complicated to summarize all the image matching

algorithms developed in the scientific community. Surveys and

comparisons of matching algorithms are presented in [29]-[33].

Matching can be solved using a stereo pair (stereo matching)

[34]-[39] or identifying correspondences in multiple images

(multi-view stereo - MVS) [1][40]-[47].

The most intuitive classification of image matching

algorithms is based on the used primitives - image intensity

patterns (windows composed of grey values around a point of

interest) or features (e.g. edges and regions) - which are then

transformed into 3D information through a mathematical

model (e.g. collinearity model or camera projection matrix).

According to these primitives, the resulting matching

algorithms are generally classified as area-based matching

(ABM) or feature-based matching (FBM) [45]. ABM,

especially the LSM method with its sub-pixel capability, has a

very high accuracy potential (up to 1/50 pixel) if well textured

image patches are used. Disadvantages of ABM are the need

for a small search range for successful matching, the large

data volume which must be handled and, normally, the

requirement of good initial values for the unknown parameters

- although this is not the case for other techniques such as

graph-cut [48]. Problems occur in areas with occlusions, lack

of or repetitive texture and if the surface does not correspond to

the assumed model (e.g. planarity of the matched local surface

patch). FBM is often used as an alternative to, or in combination with,

ABM [49]. FBM techniques are more flexible with respect to

surface discontinuities, less sensitive to image noise and

less dependent on approximate values. Because of the sparse and

irregularly distributed nature of the extracted features, the

matching results are in general sparse point clouds which are

then used as seeds to grow additional matches [50].

Another possible way to distinguish image matching

algorithms is based on the created point clouds, i.e. sparse or

dense reconstructions. Sparse correspondences characterized the initial

stages of matching development, due to computational

resource limitations but also to a desire to reconstruct scenes

using only a few sparse 3D points (e.g. corners). Nowadays all

the algorithms focus on dense reconstructions - using stereo or

multi-view approaches. To our knowledge, dense multi-view

reconstruction with multi-image radiometric consistency

measures and geometric constraints - as proposed in [18] -

was implemented only in [40][45]. The other methods apply

consistency measures only on single stereo pairs while

geometric constraints are applied during the fusion of the point

clouds derived by the stereo pairs or with some volumetric

approach.

According to [12], stereo methods can be local or global.

Local (or window-based) methods compute the disparity at a given point

using the intensity values within a finite region, with implicit

smoothing assumptions and a local “winner-take-all”

optimization at each pixel. On the other hand, global methods

make explicit smoothness assumptions and then solve a

global optimization problem using an energy minimization

approach, based on regularization (variational), Markov

Random Fields, graph-cut, dynamic programming or max-flow

methods.
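The distinction between local and global methods can be written compactly. The formulation below is the generic one found in stereo taxonomies such as [29]; the symbols ($C$, $C_W$, $V$, $\lambda$, $\mathcal{N}$) are notation assumed here for illustration and are not defined in the paper:

```latex
% Local, winner-take-all: each pixel p independently picks the disparity with the
% lowest cost C_W aggregated over a support window W around p.
d_p^{\mathrm{WTA}} = \arg\min_{d} \; C_W(p, d)

% Global: a single energy over the whole disparity map D = \{d_p\} is minimized,
% combining a data term and an explicit smoothness term over neighbouring pixels.
E(D) = \sum_{p} C(p, d_p) \;+\; \lambda \sum_{(p,q) \in \mathcal{N}} V(d_p, d_q)
```

Here $C(p, d)$ is the matching cost of pixel $p$ at disparity $d$, $V$ penalizes disparity differences between neighbouring pixels $(p, q)$ and $\lambda$ weights smoothness against data fidelity.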

Most of the proposed matching methods are based on

similarity or photo-consistency measures, i.e. they compare

pixel values between the images. These measures can be defined

in image or object space, according to the algorithms (stereo or

multi-view). The most common measures (or matching costs)

include squared and absolute intensity differences, normalized

cross-correlation, dense feature descriptors, census transform,

gradient-based, BRDFs, etc.
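A few of the listed matching costs can be sketched on small image patches. This is a minimal pure-Python illustration, assuming patches are given as flat lists of grey values of equal length; the function names are hypothetical and the code is not taken from any of the evaluated packages:

```python
from math import sqrt


def sad(a, b):
    """Sum of absolute intensity differences (lower is better)."""
    return sum(abs(x - y) for x, y in zip(a, b))


def ssd(a, b):
    """Sum of squared intensity differences (lower is better)."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def ncc(a, b):
    """Normalized cross-correlation in [-1, 1] (higher is better)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    da = [x - mean_a for x in a]
    db = [y - mean_b for y in b]
    denom = sqrt(sum(x * x for x in da) * sum(y * y for y in db))
    if denom == 0:
        return 0.0  # textureless patch: correlation is undefined, report no similarity
    return sum(x * y for x, y in zip(da, db)) / denom


def census(patch, centre_index):
    """Census transform: one bit per neighbour, set when it is darker than the centre."""
    centre = patch[centre_index]
    return tuple(1 if v < centre else 0
                 for i, v in enumerate(patch) if i != centre_index)


def hamming(c1, c2):
    """Census matching cost: number of differing bits."""
    return sum(b1 != b2 for b1, b2 in zip(c1, c2))


if __name__ == "__main__":
    patch = [10, 20, 30, 40, 50, 60, 70, 80, 90]   # 3x3 window, row by row
    brighter = [2 * v + 5 for v in patch]          # same texture, different gain/offset
    print(sad(patch, brighter), ncc(patch, brighter),
          hamming(census(patch, 4), census(brighter, 4)))
```

In this example the intensity differences are large although the two patches show the same texture, while NCC and the census cost are unaffected by the gain and offset change, which is one reason such measures are preferred under illumination differences.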

C. Innovations, characteristics and challenges

The real innovation that has been introduced in several

dense image matching methods during the last years regards

the integration of different basic correlation algorithms,

consistency measures and constraints into a multi-step

procedure, which in many cases works through a multi-

resolution approach. Indeed, local correlation algorithms

assume constant disparities within a correlation window. The

larger the size of this window, the greater the robustness of

matching. But this implicit assumption of constant disparity

inside the window is violated at geometric

discontinuities, which leads to blurred object boundaries and

over-smoothed results. Furthermore, the matching phase, as

commonly based on intensity differences, is very sensitive to

recording and illumination differences and is not reliable in

poorly textured or homogeneous regions.

A dense matching algorithm should be able to extract 3D

points with a sufficient resolution to describe the object’s

surface and its discontinuities. Two critical issues should be

considered for an optimal approach: (i) the point resolution

must be adaptively tuned to preserve edges and to avoid too

many points in flat areas; (ii) the reconstruction must be

guaranteed also in regions with poor textures or illumination

and scale changes.

A rough surface model of the object is often required by

some techniques in order to initialize the matching procedure.

Such models can be derived in different ways, e.g. by using a

point cloud interpolated on the basis of tie points obtained from

the orientation stage, from already existing 3D models, or from

low-resolution range data. Other methods are organized in a

hierarchical framework which generates first a rough surface

reconstruction, which is refined and made denser at a later

stage.

Many algorithms are based on normalized and distortion-

free images, whose adoption simplifies and speeds up the

search of correspondences. Possible outliers are generally

removed following two opposite strategies: (i) the use of multi-

image techniques to discard possible blunders by intersecting

the homologous rays of the matched point in object space; (ii)

the computation of a surface model as dense as possible, initially

ignoring outliers, followed by different filtering / smoothing

methods.

As dense matching is a task involving a large computing

effort, the use of advanced techniques like parallel computing

and implementation at GPU / FPGA level can reduce this effort

and allow real-time depth map production.

The accuracy and reliability of the derived 3D

measurements rely on the accuracy of the camera calibration

and image orientation, the accuracy and number of the image

observations and the imaging geometry (i.e. the effects of

camera optics, image overlap and the distance camera-object

[51]). Therefore a successful image matcher should (1) use

accurately calibrated cameras and images with strong

geometric configuration, (2) use local and global image

information, to extract all the possible matching candidates and

get global consistency among the matching candidates, (3) use

constraints to restrict the search space, (4) consider an

estimated shape of the object as a priori information and (5)

employ strategies to monitor the matching results and quality.

III. EVALUATED DENSE IMAGE MATCHING ALGORITHMS

A. SURE

SURE is an MVS method [38] where a reference image is

matched to a set of adjacent images using an SGM-like stereo

algorithm [34]. For each pair a disparity map is computed and

then all disparity maps sharing the same reference view are

merged into a unique final point cloud, capitalizing on the redundancy

across the stereo pairs. Within a preprocessing module, a

network analysis and selection of suitable image pairs for the

reconstruction process is performed. Epipolar images are then

generated and a time- and memory-efficient SGM algorithm is

applied to produce depth maps. All these maps are then

converted into 3D coordinates using a fusion method based on

geometric constraints, which helps in reducing the number of

outliers and increasing precision. With respect to the classical

SGM approach, SURE searches pixel correspondences using

dynamic disparity search ranges and uses a tube-shaped structure

to store the costs of potential correspondences. Moreover, it

implements a blunder removal approach and significantly

reduces the computational time.
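For readers unfamiliar with SGM, the core idea of the classical approach is to aggregate matching costs along image paths with a small penalty for disparity changes of one level and a larger penalty for bigger jumps. The sketch below shows this recurrence for a single left-to-right path only; it is a generic illustration, not SURE's implementation, and the names `aggregate_path`, `p1` and `p2` as well as the toy cost values are assumptions:

```python
def aggregate_path(costs, p1, p2):
    """Aggregate costs along one scanline path.

    costs[x][d] is the matching cost of pixel x at disparity d.
    p1 penalizes a disparity change of one level, p2 any larger change.
    """
    aggregated = [list(costs[0])]
    for x in range(1, len(costs)):
        prev = aggregated[-1]
        prev_min = min(prev)
        row = []
        for d, cost in enumerate(costs[x]):
            candidates = [prev[d], prev_min + p2]
            if d > 0:
                candidates.append(prev[d - 1] + p1)
            if d + 1 < len(prev):
                candidates.append(prev[d + 1] + p1)
            # Subtracting prev_min keeps the aggregated values bounded.
            row.append(cost + min(candidates) - prev_min)
        aggregated.append(row)
    return aggregated


def winner_take_all(rows):
    """Pick, for each pixel, the disparity index with the lowest cost."""
    return [row.index(min(row)) for row in rows]


if __name__ == "__main__":
    # Toy scanline with three pixels and three disparity levels.
    costs = [[0, 5, 5], [4, 3, 5], [0, 5, 5]]
    print(winner_take_all(costs))                         # raw costs
    print(winner_take_all(aggregate_path(costs, 2, 4)))   # after aggregation
```

In this toy example the middle pixel, whose raw cost weakly prefers a different disparity than its neighbours, is pulled back to the neighbouring disparity after aggregation. A full SGM implementation sums such aggregated costs over several path directions before the final disparity selection.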

B. Micmac

Micmac (http://www.micmac.ign.fr) is a multi-resolution and

multi-image method [42] which implements a coarse-to-fine

extension of the maximum flow image matching algorithm

presented in [52]. The surface measurement and reconstruction

is formulated as an energy function minimization problem i.e.

finding a minimal cut in a graph. Thus the problem is solved in

polynomial time with classical minimal cut and maximal flow

graph theory algorithms. The procedure was originally

developed to deal with large and high-resolution satellite

imagery but now it can also process large and complex

terrestrial sequences or aerial blocks. Micmac works according

to a pyramidal scheme: starting from a lower resolution, the

matching results achieved at each pyramid level guide the

next higher resolution level in order to improve the quality of the

matching up to the full resolution. The pyramidal approach

also speeds up the processing. The user selects a set of

master images for the correlation procedure. Then for each

hypothetical 3D point, a patch in the master image is identified and

projected into all the neighboring images, and a global

similarity is derived. Finally an energy minimization approach

is applied to enforce surface regularity and avoid undesirable

jumps. The global energy function takes into account both the

correlation and the smoothing terms. Micmac is open-source

and provides detailed and accurate 3D reconstructions,

preserving surface discontinuities thanks to its optimization

process. Micmac can be combined with the IGN orientation

module Apero and the orthoimage generator Porto.

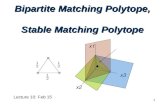

Figure 1. An example processed with all the tested algorithms (SURE, Micmac, PMVS, PhotoScan) and the achieved results.

C. PMVS

PMVS (http://www.di.ens.fr/pmvs/) is a Patch-based Multi-

View Stereo matching method [46]. The matching and

reconstruction procedure follows a multi-step approach that

does not need any initial approximation of the surface. A

‘patch’ p is a local tangent rectangle approximating a surface,

whose geometry is fully defined by the position of its centre

c(p) and the unit normal vector oriented towards a reference

image R(p) where it is viewed. After the initial matching step,

a propagation of reconstructed semi-dense patches is

performed with a final filtering to remove possible local

outliers. In its original implementation, the surface growing

method used simultaneously all the images of the processed

dataset, which implied a large memory demand. This issue was

later solved by clustering the input images and then reconstructing

sub-spaces of the scene. The PMVS software is open-source and

uses oriented and distortion-free images.

D. Agisoft PhotoScan

Agisoft PhotoScan (http://www.agisoft.ru) is a commercial

package able to automatically orient and match large datasets

of images. For commercial reasons, little information about

the used algorithms is available. Nevertheless, from our

experience and from the achievable 3D results, the

implemented image matching algorithm seems to be a stereo

SGM-like method. Normally the software delivers already

meshed results but for our evaluation we computed and

exported the “raw” point clouds.

IV. DATASETS AND EVALUATION RESULTS

In order to evaluate the matching performances and

potentialities in various situations, five datasets were selected.

Specifically, we consider four terrestrial photogrammetric

datasets (A: Fountain, B: Buergerhaus, C: Stele and D: Cube)

and one aerial case (E: Aerial) (Fig. 2). The main

characteristics of the employed photogrammetric datasets are

summarized in Table I. The case studies are characterized by

different image scales (ranging from 1/16000 for the Aerial

case to 1/10 for the Cube), image resolution, number of

images, camera network, and object texture and size. In order

to have a common starting point for testing the selected image-

matching algorithms, all the datasets were first oriented with

the same bundle adjustment and the images were undistorted. The

achieved orientation parameters and idealized images were

then used to run the matching tests. The matching tests were

performed at the second image pyramid level, i.e. at a quarter of the

original image resolution (half the width and height), which gives a sampling resolution of the

dense point cloud of 2 times the original image GSD. The image

matching results were evaluated through the following

procedures (see Table I):

1. for the Fountain and Buergerhaus case studies, the

photogrammetric point clouds (PH) were compared

against a meshed model from Terrestrial Laser Scanner

(TLS);

2. for the Cube dataset, a flatness measurement was

performed on the top and a side face, highlighted in red

and blue respectively in Fig. 2;

3. for the Stele and Aerial cases, some cross-sections were

derived and the obtained profiles were compared.
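The GSD values listed in Table I appear consistent with the usual relation GSD = pixel size × camera-object distance / focal length, and the sampling resolution used for the matching tests is twice that value. A minimal sketch, assuming this relation and a hypothetical `downsampling` factor of 2 for the pyramid level used in the tests:

```python
def gsd_mm(pixel_size_mm: float, focal_mm: float, distance_m: float,
           downsampling: int = 1) -> float:
    """Ground sampling distance in mm for a given camera-object distance.

    `downsampling` is the linear reduction factor of the image pyramid level
    (1 = full resolution, 2 = half width and height).
    """
    if focal_mm <= 0 or downsampling < 1:
        raise ValueError("focal_mm must be positive and downsampling at least 1")
    distance_mm = distance_m * 1000.0
    return pixel_size_mm * distance_mm / focal_mm * downsampling


if __name__ == "__main__":
    # Fountain dataset (A): 0.0074 mm pixels, 20 mm lens, 7.5-10.6 m distance.
    for dist in (7.5, 10.6):
        print(dist, round(gsd_mm(0.0074, 20.0, dist), 1),
              round(gsd_mm(0.0074, 20.0, dist, downsampling=2), 1))
```

The same computation can be repeated for the other datasets to estimate the expected point cloud sampling resolution before comparing it with the observed deviations.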

A. The Fountain dataset

The Fountain dataset was selected for its interesting shape,

with undercuts, relief details and a quite uniform texture. The

image matching results were compared with the reference TLS

mesh model and the deviations as Euclidean distances are

shown in Fig.3a. On the wall beside the fountain and on flat

fountain elements, the deviations for all the matching

algorithms were in the order of the image matching sampling

resolution (twice the original GSD), except for Micmac whose

result is noisier than the other point clouds. For all the matching

algorithms, the highest deviations were concentrated

along the edges. Globally, the results from

Micmac and PhotoScan were noisier than those from PMVS and SURE.

Figure 2: The datasets (a few images for each of them) employed for the evaluation analysis of dense matching algorithms: A) Fountain, B) Buergerhaus, C) Stele, D) Cube, E) Aerial.

TABLE I. MAIN CHARACTERISTICS OF THE EMPLOYED DATASETS FOR THE EVALUATION ANALYSIS OF DENSE MATCHING ALGORITHMS.

| Dataset | Size (W×L×H) | # img | Camera model | Sensor size (mm) | Pixel size (mm) | Nominal focal length (mm) | Min-Max dist. cam-obj (m) | Min-Max GSD (mm) | Ground truth | Evaluation |
|---|---|---|---|---|---|---|---|---|---|---|
| A | (5×6×2.5) m | 25 | Canon EOS D60 | 22.7 × 15.1 | 0.0074 | 20 | 7.5 - 10.6 | 2.8 - 3.9 | TLS | TLS vs PH |
| B | (9.5×9.5×1.5) m | 12 | Nikon D90 | 23.6 × 15.8 | 0.0110 | 20 | 7 - 11 | 3.8 - 6 | TLS | TLS vs PH |
| C | (0.7×2×0.35) m | 8 | Canon EOS 5D mark II | 36 × 24 | 0.0064 | 50 | 2.4 - 4 | 0.3 - 0.5 | - | Profiles |
| D | (100×100×100) mm | 24 | Nikon D3X | 35.9 × 24 | 0.0059 | 50 | 0.5 - 0.6 | 0.06 - 0.07 | Plane | Flatness error |
| E | (800×500×20) m | 9 | Nikon D3X | 35.9 × 24 | 0.0059 | 50 | 800 | 95 | - | Profiles |

B. The Buergerhaus dataset

The terrestrial dataset features convergent acquisitions and

highly variable baselines and camera-object distances. Possibly

due to the non-conventional camera network, the following

behaviors of the image matching algorithms were observed: (i)

SURE was not able to reconstruct the bottom left part of the

façade; (ii) a similar problem was observed with Micmac as the

software requires a reference image while the dataset lacks an

image depicting the whole façade; (iii) PMVS delivered the

whole façade but the bottom left part was highly noisy.

Fig.3b shows the differences between the photogrammetric

point clouds and the reference TLS mesh model. The highest

standard deviations, in the order of 10 mm, were observed for

the Micmac and PMVS point clouds, while the lowest, equal to

7 mm, was obtained with SURE. It is noteworthy that these

values are all in the order of the point cloud spatial resolution

(about 10 mm, i.e. the GSD of the image pyramid level used for

the matching). As expected, the highest deviations of ±40 mm

were observed in textureless and dark parts of the object. A

twist of the photogrammetric point clouds obtained with

Micmac and, especially, with PhotoScan was also observed.

C. The Cube dataset

The dataset features a very small GSD (i.e. a very high image resolution) and quite convergent

images for the lateral side of the cube. The top and a side face

of the cube were selected in each derived point cloud and for

each face a best fitting plane was computed, excluding the

photogrammetric targets placed on the object. The deviations

from the best fitting plane are shown in Fig.3c and 3d. For both

faces all the matching algorithms delivered an average flatness

error below the image matching sampling resolution. The

matching algorithms showed similar behavior, except for

PMVS that was characterized by an opposite systematic

deviation trend. Nevertheless, for the top face, the highest

deviations, in the order of 4 times the average flatness error,

were localized in the same position for all the matching results,

corresponding to true roughness of the object surface. Because

the images viewing the lateral faces were quite inclined, none of

the tested algorithms correctly matched / reconstructed the true

roughness of the object on the lateral faces.
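The flatness evaluation can be sketched as a plane fit followed by a statistic of the point-to-plane distances. The paper does not state which fitting procedure was used; the sketch below assumes an ordinary least-squares plane z = a·x + b·y + c (adequate when the face is roughly perpendicular to the z axis of a local frame, whereas an orthogonal regression would be the more general choice) and reports the standard deviation of the distances. All function names are hypothetical:

```python
from math import sqrt


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_plane(points):
    """Least-squares plane z = a*x + b*y + c through (x, y, z) points."""
    n = float(len(points))
    sx = sum(p[0] for p in points)
    sy = sum(p[1] for p in points)
    sz = sum(p[2] for p in points)
    sxx = sum(p[0] * p[0] for p in points)
    sxy = sum(p[0] * p[1] for p in points)
    syy = sum(p[1] * p[1] for p in points)
    sxz = sum(p[0] * p[2] for p in points)
    syz = sum(p[1] * p[2] for p in points)
    normal = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxz, syz, sz]
    d = det3(normal)
    if abs(d) < 1e-12:
        raise ValueError("degenerate point configuration")
    coeffs = []
    for col in range(3):  # Cramer's rule on the 3x3 normal equations
        m = [row[:] for row in normal]
        for r in range(3):
            m[r][col] = rhs[r]
        coeffs.append(det3(m) / d)
    return tuple(coeffs)


def flatness_std(points):
    """Standard deviation of the point-to-plane distances."""
    a, b, c = fit_plane(points)
    scale = sqrt(a * a + b * b + 1.0)
    dists = [(p[2] - (a * p[0] + b * p[1] + c)) / scale for p in points]
    mean = sum(dists) / len(dists)
    return sqrt(sum((v - mean) ** 2 for v in dists) / len(dists))


if __name__ == "__main__":
    flat = [(float(x), float(y), 0.0) for x in range(4) for y in range(4)]
    bumpy = list(flat)
    bumpy[5] = (1.0, 1.0, 0.5)  # one point lifted off the plane
    print(flatness_std(flat), flatness_std(bumpy))
```

In practice the points belonging to photogrammetric targets would be removed before the fit, as described for the Cube dataset.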

D. The Stele dataset

The Stele is an interesting case study since it is made of

marble with a quite homogeneous texture. To evaluate the

matching results, two profiles corresponding to two sets of letters

(see Fig. 4) were extracted from the derived point clouds. The

profiles refer to the letters “MA” and “BI”. In the first case, the

profiles from SURE and PhotoScan were smoother and more

regular than those from Micmac and PMVS. In the second case, the

differences among the profiles from all the algorithms were

less evident. The maximum difference was in the order of 1

mm, i.e. the mean GSD of the image pyramid level used for the

matching.

E. The Aerial dataset

The derived point clouds were sectioned across

three buildings (profile 1 of Fig.5) and across a large roof

(profile 2 of Fig.5). The SURE profiles reveal that the software

was able to reconstruct correctly the ground and the shape of

the roofs, with little noise and limited outliers. Similar results

were obtained with Micmac, while PMVS and PS show noisier

/ smoother profiles. In the profile from PhotoScan the vertical

walls of the buildings were more completely reconstructed,

clearly showing that the matching algorithm uses stereo pairs

that are then merged into a unique final point cloud.

V. CONCLUSIONS

The paper presented a review of the current dense image

matching methods with an evaluation of four state-of-the-art

algorithms available in the commercial and open-source

domains. Photogrammetry is definitely back, out of the

LiDAR’s shadow and able to provide precise and dense surface

measurements and 3D reconstructions of complex and detailed

objects at various scales. Stereo or multi-image approaches are

available, each one with advantages and disadvantages. The

tested algorithms have pros and cons highlighted in the

achieved results and most of them depend very much on the

input parameters. On the one hand, this is good, as the user can

control and adjust the performances according to the employed

dataset. On the other hand, too many and complex parameters

might affect the results achieved by non-experts who prefer

fully automated black-box tools. Assessing the accuracy and

performance of the algorithms is not an easy task. We have

reported different evaluations using various datasets and

imaging configurations, trying to cover all the possible real

applications. The results show that all the methods have great

potential, but extreme care must be taken in the image

acquisition and subsequent parameter selection, otherwise even

a great matching method cannot achieve good results.

REFERENCES

[1] Goesele, M., Snavely, N., Seitz, S. M., Curless, B., Hoppe, H.,

2007. Multi-view stereo for community photo collections. Proc.

ICCV, Vol. 2, pp. 265–270.

[2] Snavely, N., Seitz, S.M., Szeliski, R., 2008. Modeling the world

from Internet photo collections. Int. Journal of Computer Vision,

Vol. 80(2), pp. 189–210.

[3] Pollefeys, M., Nister, D., Frahm, J.-M., Akbarzadeh, A.,

Mordohai, P., Clipp, B., Engels, C., Gallup, D., Kim, S.-J.,

Merrell, P., Salmi, C., Sinha, S., Talton, B., Wang, L., Yang, Q.,

Stewenius, H., Yang, R., Welch, G., Towles, H., 2008. Detailed

Real-Time Urban 3D Reconstruction From Video, Int. Journal

of Computer Vision, Vol. 78(2), pp. 143-167.

[4] Furukawa, Y., Curless, B., Seitz, S.M., Szeliski, R., 2010.

Towards internet-scale multi-view stereo. Proc. CVPR.

[5] Opitz, R., Simon, K., Barnes, A., Fisher, K., Lippiello, L., 2012.

Close-range photogrammetry vs 3D scanning: comparing data

capture, processing and model generation in the field and the

lab. Proc. CAA.

[6] Nguyen, M.H., Wuensche, B., Delmas, P., Lutteroth, C., 2012.

3D models from the black box: investigating the current state of

image-based modelling. Proc. WSCG conference.

[7] Koutsoudis, A., Vidmar, B., Ioannakis, G., Arnaoutoglou, F.,

Pavlidis, G., Chamzas, C., 2013. Multi-image 3D reconstruction

data evaluation. Journal of Cultural Heritage.

Figure 3: The evaluation results for the Fountain, Buergerhaus and Cube (two faces) datasets. Most of the errors are in the order of the point cloud spatial resolution (set to 2 times the image GSD). Grey values in the figures represent no matching data. Standard deviations:

a) Fountain: SURE = 0.009 m, MM = 0.018 m, PMVS = 0.011 m, PS = 0.010 m

b) Buergerhaus: SURE = 0.007 m, MM = 0.010 m, PMVS = 0.010 m, PS = 0.009 m

c) Cube (1): SURE = 0.127 mm, MM = 0.114 mm, PMVS = 0.106 mm, PS = 0.097 mm

d) Cube (2): SURE = 0.113 mm, MM = 0.125 mm, PMVS = 0.059 mm, PS = 0.090 mm

Figure 4: Derived cross-sections for the Stele dataset (C).

Figure 5: Derived cross-sections for the Aerial dataset (E).

[8] Remondino, F., Del Pizzo, S., Kersten, T., Troisi, S., 2012.

Low-cost and open-source solutions for automated image

orientation – A critical overview. Proc. EuroMed 2012

Conference, LNCS 7616, pp. 40-54.

[9] Nocerino, E., Menna, F., Remondino, F., Salieri, R., 2013.

Accuracy and deformation analysis in automatic UAV and

terrestrial photogrammetry – lesson learnt. ISPRS Annals of

Photogrammetry, Remote Sensing and Spatial Information

Sciences, Vol. 2(5/W1): 203-208. Proc. 24th CIPA Symposium.

[10] Schenk, T. 1999. Digital photogrammetry. Volume I.

Terrascience, Laurelville OH, USA. 428 pages.

[11] Sonka, M., Hlavac, V. & Boyle, R. 1998. Image processing,

analysis and machine vision. 2nd ed. PWS Publishing. 770 pages.

[12] Szeliski, R., 2011. Computer Vision – Algorithms and

applications. Springer, 812 pages.

[13] Hobrough, G. 1959. Automatic stereoplotting. Photogrammetric

Engineering and Remote Sensing, Vol. 25(5), pp. 763-769.

[14] Foerstner, W., 1982. On the geometric precision of digital

correlation. International Archives of Photogrammetry, Vol.

24(3), pp.176-189.

[15] Gruen, A., 1985. Adaptive least square correlation: a powerful

image matching technique. South African Journal of PRS and

Cartography, Vol. 14(3), pp. 175-187.

[16] Foerstner, W., 1986. A feature based correspondence algorithm

for image matching. International Archives of Photogrammetry,

Vol. 26(3).

[17] Gruen, A. and Baltsavias, E., 1986. Adaptive least squares

correlations with geometrical constraints. Proc. of SPIE, Vol.

595, pp. 72-82.

[18] Gruen, A., Baltsavias, E., 1988. Geometrically constrained

multiphoto matching. Photogrammetric Engineering and

Remote Sensing, Vol. 54, pp. 633-641.

[19] Wrobel, B., 1987. Facet Stereo Vision (FAST Vision) – A new

approach to computer stereo vision and to digital

photogrammetry. Proc. ISPRS Conf. on ‘Fast Processing of

Photogrammetric Data’, Interlaken, Switzerland, pp. 231-258.

[20] Helava, U.V., 1988. Object-space least-squares correlation.

Photogr. Eng. & Remote Sensing, Vol. 54(6), pp. 711-714.

[21] Marr, D., Poggio, T., 1976. Cooperative computation of stereo

disparity. Science, Vol. 194, pp. 283-287.

[22] Baker, H. H. and Binford, T. O., 1981. Depth from edge and

intensity based stereo. Proc. IJCAI81, pp. 631–636.

[23] Marr, D., 1982. Vision: A Computational Investigation into the

Human Representation and Processing of Visual Information.

W. H. Freeman, San Francisco, USA.

[24] Ohta, Y., Kanade, T., 1985. Stereo by intra- and inter-scanline

search using dynamic programming. IEEE Trans. PAMI, Vol.

7(2), pp. 139-154.

[25] Dhond, U. R., Aggarwal, J. K., 1989. Structure from stereo - a

review. IEEE Transactions on Systems, Man, and Cybernetics,

Vol. 19(6), pp. 1489-1510.

[26] Okutomi, M. and Kanade, T., 1993. A multiple-baseline stereo.

IEEE Trans. PAMI, Vol. 15(4), pp. 353-363.

[27] Fua, P., Leclerc, Y. G., 1995. Object-centered surface

reconstruction: combining multi-image stereo and shading.

International Journal of Computer Vision, Vol. 16(1), pp. 35-56.

[28] Narayanan, P., Rander, P., Kanade, T., 1998. Constructing

virtual worlds using dense stereo. Proc. ICCV, pp. 3-10.

[29] Scharstein, D., Szeliski, R., 2002. A taxonomy and evaluation of

dense two-frame stereo correspondence algorithms. Int. Journal

of Computer Vision, Vol. 47(1/2/3), pp. 7-42.

[30] Brown, M. Z., Burschka, D., Hager, G. D., 2003. Advances in

computational stereo. IEEE Trans. PAMI, Vol. 25(8): 993-1008.

[31] Seitz, S.M., Curless, B., Diebel, J., Scharstein, D., Szeliski, R.,

2006. A Comparison and evaluation of multi-view stereo

reconstruction algorithms. CVPR 2006, Vol. 1, pp. 519-526.

[32] Hirschmueller, H., Scharstein, D., 2009. Evaluation of stereo

matching costs on images with radiometric differences. IEEE

Trans. PAMI, Vol. 31(9), pp. 1582-1599.

[33] Hosseininaveh, A., Robson, S., Boehm, J., Shortis, M., &

Wenzel, K., 2013. A Comparison of dense matching algorithms

for scaled surface reconstruction using stereo camera rigs.

ISPRS Journal of Photogrammetry and Remote Sensing, Vol.

78, pp. 157-167.

[34] Hirschmueller, H., 2008. Stereo processing by semi-global

matching and mutual information. IEEE Trans. PAMI, Vol. 30.

[35] Gehrig, S., Eberli, F. and Meyer, T., 2009. A real-time low-

power stereo vision engine using semi-global matching.

Computer Vision Systems, LNCS, Vol. 5815, pp. 134-143.

[36] Haala, N., Rothermel, M., 2012. Dense multi-stereo matching

for high quality digital elevation models. PFG Photogrammetrie,

Fernerkundung, Geoinformation. Vol. 4, pp. 331-343.

[37] Hirschmueller, H., Ernst, I., Buder, M., 2012. Memory efficient

semi-global matching. ISPRS Annals of Photogrammetry and

Remote Sensing, Vol. 1(3), pp. 371-376.

[38] Rothermel, M., Wenzel, K., Fritsch, D., Haala, N., 2012. SURE:

Photogrammetric surface reconstruction from imagery.

Proceedings LC3D Workshop, Berlin, Germany.

[39] Hermann, S., Klette, R., 2013. Iterative semi-global matching

for robust driver assistance systems. Proc. ACCV 2012, LNCS,

Vol. 7726, pp. 465-478.

[40] Zhang, L., 2005. Automatic Digital Surface Model (DSM)

generation from linear array images. Ph.D. Thesis, Institute of

Geodesy and Photogrammetry, ETH Zurich, Switzerland.

[41] Sinha, S. N., Pollefeys, M. 2005. Multi-view reconstruction

using photo-consistency and exact silhouette constraints: a

maximum-flow formulation. Proc. 10th ICCV, pp. 349-356.

[42] Pierrot-Deseilligny, M., Paparoditis, N., 2006. A multiresolution

and optimization-based image matching approach: an

application to surface reconstruction from SPOT5-HRS stereo

imagery. Int. Archives of Photogrammetry, Remote Sensing and

Spatial Information Sciences, Vol. 36(1/W41).

[43] Vogiatzis, G., Hernandez, C., Torr, P., Cipolla, R., 2007. Multi-

view stereo via volumetric graph-cuts and occlusion robust

photo-consistency. IEEE Trans. PAMI, Vol. 29(12): 2241-2246.

[44] Pons, J.-P., Keriven, R., Faugeras, O., 2007. Multi-view stereo

reconstruction and scene flow estimation with a global image-

based matching score. International Journal of Computer Vision,

Vol. 72(2), pp. 179-193.

[45] Remondino, F., El-Hakim, S., Gruen, A., Zhang, L.,

2008. Turning images into 3D models - Development and

performance analysis of image matching for detailed surface

reconstruction of heritage objects. IEEE Signal Processing

Magazine, Vol. 25(4), pp. 55-65.

[46] Furukawa, Y., Ponce, J., 2010. Accurate, dense and robust

multiview stereopsis. IEEE Trans. PAMI, Vol. 32: 1362-1376.

[47] Hoang-Hiep Vu, Labatut, P., Pons, J.-P., Keriven, R., 2012.

High accuracy and visibility-consistent dense multiview stereo.

IEEE Trans. PAMI, Vol. 34(5), pp. 889-901.

[48] Boykov, Y., Veksler, O., Zabih, R., 2001. Fast Approximate

Energy Minimization via Graph Cuts. IEEE Trans. PAMI, Vol.

23(11), pp. 1222-1239.

[49] Remondino, F., Zhang, L., 2006. Surface reconstruction

algorithms for detailed close-range object modeling. Int.

Archives of Photogrammetry, Remote Sensing and Spatial

Information Sciences, Vol. 36(3), pp. 117-123.

[50] Lhuillier, M., Quan, L., 2002. Match propagation for image-

based modeling and rendering. IEEE Trans. on PAMI, Vol.

24(8), pp. 1140-1146.

[51] Kraus, K. 1993. Photogrammetry. Volume 1. Fundamentals and

standard processes. Dümmler, Bonn, 397 pages.

[52] Roy, S., Cox, I. J., 1998. A maximum-flow formulation of the n-

camera stereo correspondence problem. Proc. ICCV.

ACKNOWLEDGMENTS

The authors are very thankful to Konrad Wenzel and Mathias Rothermel (IFP, Stuttgart University) for the useful discussions and support with the SURE matching, and to Thomas Kersten (HCU Hamburg) for the Buergerhaus dataset.