CS4 Parallel Architectures - Introduction


CS4/MSc Parallel Architectures - 2009-2010

Instructor: Marcelo Cintra ([email protected] – 1.03 IF)

Lectures: Tue and Fri in G0.9 WRB at 10am

Pre-requisites: CS3 Computer Architecture

Practicals:
– Practical 1 – out week 3 (26/1/10); due week 5 (09/2/10)
– Practical 2 – out week 5 (09/2/10); due week 7 (23/2/10)
– Practical 3 – out week 7 (23/2/10); due week 9 (09/3/10)
– (MSc only) Practical 4 – out week 7 (26/2/10); due week 9 (12/3/10)

Books:
– (**) Culler & Singh - Parallel Computer Architecture: A Hardware/Software Approach – Morgan Kaufmann
– (*) Hennessy & Patterson - Computer Architecture: A Quantitative Approach – Morgan Kaufmann – 3rd or 4th editions

Lecture slides (no lecture notes)

More info: www.inf.ed.ac.uk/teaching/courses/pa/

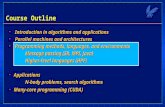

Topics

Fundamental concepts
– Performance issues
– Parallelism in software

Uniprocessor parallelism
– Pipelining, superscalar, and VLIW processors
– Vector, SIMD processors

Interconnection networks
– Routing, static and dynamic networks
– Combining networks

Multiprocessors, Multicomputers, and Multithreading
– Shared memory and message passing systems
– Cache coherence and memory consistency

Performance and scalability

Lect. 1: Performance Issues

Why parallel architectures?
– Performance of sequential architecture is limited (by technology and ultimately by the laws of physics)
– Relentless increase in computing resources (transistors for logic and memory) that can no longer be exploited for sequential processing
– At any point in time many important applications cannot be solved with the best existing sequential architecture

Uses of parallel architectures
– To solve a single problem faster (e.g., simulating protein folding: researchweb.watson.ibm.com/bleugene)
– To solve a larger version of a problem (e.g., weather forecast: www.jamstec.go.jp/esc)
– To solve many problems at the same time (e.g., transaction processing)

Limits to Sequential Execution

Speed of light limit
– Computation/data flow through logic gates, memory devices, and wires
– Each of these introduces a non-zero delay that is, at a minimum, the time a signal needs to cross it at the speed of light
– Thus, the speed of light and the minimum physical feature sizes impose a hard limit on the speed of any sequential computation

Von Neumann’s limit
– Programs consist of an ordered sequence of instructions
– Instructions are stored in memory and must be fetched in order (same for data)
– Thus, sequential computation is ultimately limited by the memory bandwidth

Examples of Parallel Architectures

An ARM processor in a common mobile phone has 10s of instructions in-flight in its pipeline

Pentium IV executes up to 6 microinstructions per cycle and has up to 126 microinstructions in-flight

Intel’s quad-core chips have four processors and are now in mainstream desktops and laptops

Japan’s Earth Simulator has 5120 vector processors, each with 8 vector pipelines

IBM’s largest BlueGene supercomputer has 131,072 processors

Google has about 100,000 Linux machines connected in several cluster farms


Comparing Execution Times

Example:
– system A: $T_A$ = execution time of program P on A
– system B: $T_B$ = execution time of program P’ on B

Speedup: $S = \frac{T_B}{T_A}$; we say: A is S times faster, or A is $\left(\frac{T_B}{T_A} \times 100 - 100\right)\%$ faster

Notes:
– For fairness P and P’ must be the “best possible implementation” on each system
– If multiple programs are run then report the weighted arithmetic mean
– Must report all details such as: input set, compiler flags, command line arguments, etc.

Amdahl’s Law

Let:
– $F$ = fraction of problem that can be optimized
– $S_{opt}$ = speedup obtained on optimized fraction

$$S_{overall} = \frac{1}{(1 - F) + \frac{F}{S_{opt}}}$$

e.g.: $F = 0.5$ (50%)
– $S_{opt} = 10$: $S_{overall} = \frac{1}{(1 - 0.5) + \frac{0.5}{10}} \approx 1.8$
– $S_{opt} = \infty$: $S_{overall} = \frac{1}{(1 - 0.5) + 0} = 2$

Bottom-line: performance improvements must be balanced
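The Amdahl's Law formula can be checked numerically with a small helper. This is only a sketch: the function name `amdahl_speedup` is made up for illustration, and passing `INFINITY` is one convenient way to model the limiting case.

```c
#include <math.h>   /* INFINITY */

/* Overall speedup according to Amdahl's Law.
 *   f     : fraction of the problem that can be optimized (0 <= f <= 1)
 *   s_opt : speedup obtained on that fraction; INFINITY models the limit
 * (The name amdahl_speedup is hypothetical, chosen for this sketch.) */
double amdahl_speedup(double f, double s_opt)
{
    /* f / INFINITY evaluates to 0.0, so the limit 1 / (1 - f) falls out. */
    return 1.0 / ((1.0 - f) + f / s_opt);
}
```

For example, `amdahl_speedup(0.5, 10.0)` gives roughly 1.82 and `amdahl_speedup(0.5, INFINITY)` gives 2, matching the two cases on the slide.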

Amdahl’s Law and Efficiency

Let:
– $F$ = fraction of problem that can be parallelized
– $S_{par}$ = speedup obtained on parallelized fraction
– $P$ = number of processors

$$S_{overall} = \frac{1}{(1 - F) + \frac{F}{S_{par}}} \qquad E = \frac{S_{overall}}{P}$$

e.g.: 16 processors ($S_{par} = 16$), $F = 0.9$ (90%):

$$S_{overall} = \frac{1}{(1 - 0.9) + \frac{0.9}{16}} = 6.4 \qquad E = \frac{6.4}{16} = 0.4 \ (40\%)$$

Bottom-line: for good scalability E>50%; when resources are “free” then lower efficiencies are acceptable
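A short sketch of the efficiency calculation, assuming (as in the slide's example) that the parallel fraction speeds up by exactly the number of processors. The function name `parallel_efficiency` is hypothetical.

```c
/* Parallel efficiency E = S_overall / P.
 * Assumption of this sketch: the parallelizable fraction f is sped up
 * by exactly p (i.e., S_par = P), as in the 16-processor example. */
double parallel_efficiency(double f, int p)
{
    double s_overall = 1.0 / ((1.0 - f) + f / (double)p);
    return s_overall / (double)p;
}
```

With `f = 0.9` and `p = 16` this yields 0.4, below the 50% guideline, even though 90% of the work is parallel.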

Performance Trends: Computer Families

[Figure: performance (log scale, 0.1 to 100) versus year for different computer families, including minicomputers and mainframes. Culler and Singh, Fig. 1.1]

Bottom-line: microprocessors have become the building blocks of most computer systems across the whole range of price-performance

Technological Trends: Moore’s Law

[Figure: transistors (x1000, log scale from 1 to 10,000,000) versus year (1970 to 2010) for Intel CPUs: 4004, 8086, 80286, 80486, Pentium, Pentium II, Pentium III, Pentium IV, Core Duo, Xeon Multi-Core]

Bottom-line: overwhelming number of transistors allow for incredibly complex and highly integrated systems

Tracking Technology: The role of CA

[Figure: SPECint rating (0 to 1800) versus year (1984 to 2000), with growth rates of 1.35x/year and 1.58x/year marked; labelled processors include MIPS R2000, IBM Power1, HP 9000, DEC Alpha, and Intel Pentium III. H&P, Fig. 1.1]

Bottom-line: architectural innovation complements technological improvements

The Memory Gap

[Figure: performance (log scale, 1 to 100,000) versus year (1980 to 2005) for Memory and CPU. H&P, Fig. 5.2]

Bottom-line: memory access is increasingly expensive and CA must devise new ways of hiding this cost

Software Trends

Ever larger applications: memory requirements double every year

More powerful compilers and increasing role of compilers on performance

Novel applications with different demands:
– e.g., multimedia
  – Streaming data
  – Simple fixed operations on regular and small data → MMX-like instructions
– e.g., web-based services
  – Huge data sets with little locality of access
  – Simple data lookups and processing → Transactional Memory(?) (www.cs.wisc.edu/trans-memory)

Bottom-line: architecture/compiler co-design

Current Trends in CA

Very complex processor design:
– Hybrid branch prediction (MIPS R14000)
– Out-of-order execution (Pentium IV)
– Multi-banked on-chip caches (Alpha 21364)
– EPIC (Explicitly Parallel Instruction Computer) (Intel Itanium)

Parallelism and integration at chip level:
– Chip-multiprocessors (CMP) (Sun T2, IBM Power6, Intel Itanium 2)
– Multithreading (Intel Hyperthreading, IBM Power6, Sun T2)
– Embedded Systems On a Chip (SOC)

Multiprocessors:
– Servers (Sun Fire, SGI Origin)
– Supercomputers (IBM BlueGene, SGI Origin, IBM HPCx)
– Clusters of workstations (Google server farm)

Power-conscious designs

Lect. 2: Types of Parallelism

Parallelism in Hardware (Uniprocessor)
– Parallel arithmetic
– Pipelining
– Superscalar, VLIW, SIMD, and vector execution

Parallelism in Hardware (Multiprocessor)
– Chip-multiprocessors a.k.a. Multi-cores
– Shared-memory multiprocessors
– Distributed-memory multiprocessors
– Multicomputers a.k.a. clusters

Parallelism in Software
– Tasks
– Data parallelism
– Data streams

(note: a “processor” must be capable of independent control and of operating on non-trivial data types)

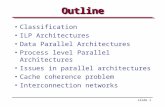

Taxonomy of Parallel Computers

According to instruction and data streams (Flynn):
– Single instruction, single data (SISD): this is the standard uniprocessor
– Single instruction, multiple data streams (SIMD):
  – Same instruction is executed in all processors with different data
  – E.g., graphics processing
– Multiple instruction, single data streams (MISD):
  – Different instructions on the same data
  – Never used in practice
– Multiple instruction, multiple data streams (MIMD): the “common” multiprocessor
  – Each processor uses its own data and executes its own program (or part of the program)
  – Most flexible approach
  – Easier/cheaper to build by putting together “off-the-shelf” processors

Taxonomy of Parallel Computers

According to physical organization of processors and memory:
– Physically centralized memory, uniform memory access (UMA)
  – All memory is allocated at same distance from all processors
  – Also called symmetric multiprocessors (SMP)
  – Memory bandwidth is fixed and must accommodate all processors → does not scale to large number of processors
  – Used in most CMPs today (e.g., IBM Power5, Intel Core Duo)

[Figure: four CPUs, each with its own cache, connected through an interconnection to a single main memory]

Taxonomy of Parallel Computers

According to physical organization of processors and memory:
– Physically distributed memory, non-uniform memory access (NUMA)
  – A portion of memory is allocated with each processor (node)
  – Accessing local memory is much faster than remote memory
  – If most accesses are to local memory then overall memory bandwidth increases linearly with the number of processors

[Figure: four nodes, each with a CPU, a cache, and its own memory (Mem.), connected through an interconnection]

Taxonomy of Parallel Computers

According to memory communication model
– Shared address or shared memory
  – Processes in different processors can use the same virtual address space
  – Any processor can directly access memory in another processor node
  – Communication is done through shared memory variables
  – Explicit synchronization with locks and critical sections
  – Arguably easier to program
– Distributed address or message passing
  – Processes in different processors use different virtual address spaces
  – Each processor can only directly access memory in its own node
  – Communication is done through explicit messages
  – Synchronization is implicit in the messages
  – Arguably harder to program
  – Some standard message passing libraries (e.g., MPI)

Shared Memory vs. Message Passing

Shared memory

Producer (p1):

    flag = 0;
    …
    a = 10;
    flag = 1;

Consumer (p2):

    flag = 0;
    …
    while (!flag) {}
    x = a * y;

Message passing

Producer (p1):

    …
    a = 10;
    send(p2, a, label);

Consumer (p2):

    …
    receive(p1, b, label);
    x = b * y;

Types of Parallelism in Applications

Instruction-level parallelism (ILP)
– Multiple instructions from the same instruction stream can be executed concurrently
– Generated and managed by hardware (superscalar) or by compiler (VLIW)
– Limited in practice by data and control dependences

Thread-level or task-level parallelism (TLP)
– Multiple threads or instruction sequences from the same application can be executed concurrently
– Generated by compiler/user and managed by compiler and hardware
– Limited in practice by communication/synchronization overheads and by algorithm characteristics

Types of Parallelism in Applications

Data-level parallelism (DLP)
– Instructions from a single stream operate concurrently (temporally or spatially) on several data
– Limited by non-regular data manipulation patterns and by memory bandwidth

Transaction-level parallelism
– Multiple threads/processes from different transactions can be executed concurrently
– Sometimes not really considered as parallelism
– Limited by access to metadata and by interconnection bandwidth

Example: Equation Solver Kernel

The problem:
– Operate on a (n+2)x(n+2) matrix
– Points on the rim have fixed value
– Inner points are updated as:

  A[i,j] = 0.2 x (A[i,j] + A[i,j-1] + A[i-1,j] + A[i,j+1] + A[i+1,j])

– Updates are in-place, so top and left are new values and bottom and right are old ones
– Updates occur at multiple sweeps
– Keep difference between old and new values and stop when difference for all points is small enough

Example: Equation Solver Kernel

Dependences:
– Computing the new value of a given point requires the new value of the point directly above and to the left
– By transitivity, it requires all points in the sub-matrix in the upper-left corner
– Points along the top-right to bottom-left diagonals can be computed independently
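The dependence pattern above can be illustrated by visiting the grid one anti-diagonal at a time. The sketch below is not from the slides: the flat row-major array layout and all function names are assumptions made for illustration. It performs the same sweep in row-major order and in diagonal ("wavefront") order; because every point on a diagonal only needs new values from the previous diagonal, both orders produce the same result.

```c
#include <stddef.h>

/* Index into a flat (n+2) x (n+2) grid stored row by row
 * (this layout is an assumption of the sketch). */
static size_t idx(int n, int i, int j)
{
    return (size_t)i * (size_t)(n + 2) + (size_t)j;
}

/* In-place update of one inner point, as in the equation solver kernel. */
static void update_point(double *a, int n, int i, int j)
{
    a[idx(n, i, j)] = 0.2 * (a[idx(n, i, j)] + a[idx(n, i, j - 1)]
                           + a[idx(n, i - 1, j)] + a[idx(n, i, j + 1)]
                           + a[idx(n, i + 1, j)]);
}

/* One sweep in the usual row-major order. */
void sweep_row_major(double *a, int n)
{
    for (int i = 1; i <= n; i++)
        for (int j = 1; j <= n; j++)
            update_point(a, n, i, j);
}

/* One sweep visiting the anti-diagonals i + j = d in turn. */
void sweep_wavefront(double *a, int n)
{
    for (int d = 2; d <= 2 * n; d++) {
        int lo = (d - n > 1) ? d - n : 1;   /* first valid row on diagonal d */
        int hi = (d - 1 < n) ? d - 1 : n;   /* last valid row on diagonal d  */
        /* Points on one diagonal do not depend on each other, so the
         * iterations of this inner loop could run in parallel. */
        for (int i = lo; i <= hi; i++)
            update_point(a, n, i, d - i);
    }
}
```

Note that the amount of parallelism varies per diagonal (from 1 point up to n points), which is one reason this decomposition is harder to balance than a row-wise split.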

Example: Equation Solver Kernel

ILP version (from sequential code):
– Machine instructions from each j iteration can occur in parallel
– Branch prediction allows overlap of multiple iterations of j loop
– Some of the instructions from multiple j iterations can occur in parallel

    while (!done) {
        diff = 0;
        for (i = 1; i <= n; i++) {
            for (j = 1; j <= n; j++) {
                temp = A[i][j];
                A[i][j] = 0.2 * (A[i][j] + A[i][j-1] + A[i-1][j]
                               + A[i][j+1] + A[i+1][j]);
                diff += fabs(A[i][j] - temp);   /* accumulate total change */
            }
        }
        if (diff / (n * n) < TOL) done = 1;     /* converged: average change is small */
    }

Example: Equation Solver Kernel

TLP version (shared-memory):

    int mymin = 1 + (pid * n / P);      /* first row owned by this processor */
    int mymax = mymin + n / P - 1;      /* last row owned by this processor */

    while (!done) {
        diff = 0; mydiff = 0;
        for (i = mymin; i <= mymax; i++) {
            for (j = 1; j <= n; j++) {
                temp = A[i][j];
                A[i][j] = 0.2 * (A[i][j] + A[i][j-1] + A[i-1][j]
                               + A[i][j+1] + A[i+1][j]);
                mydiff += fabs(A[i][j] - temp);
            }
        }
        lock(diff_lock);                /* add partial sums one processor at a time */
        diff += mydiff;
        unlock(diff_lock);
        barrier(bar, P);                /* wait until all partial sums are in diff */
        if (diff / (n * n) < TOL) done = 1;
        barrier(bar, P);                /* all have tested diff before it is reset */
    }

Example: Equation Solver Kernel

TLP version (shared-memory) (for 2 processors):
– Each processor gets a chunk of rows
  – E.g., with n = 4, processor 0 gets: mymin=1 and mymax=2 and processor 1 gets: mymin=3 and mymax=4

    int mymin = 1 + (pid * n / P);
    int mymax = mymin + n / P - 1;

    while (!done) {
        diff = 0; mydiff = 0;
        for (i = mymin; i <= mymax; i++) {
            for (j = 1; j <= n; j++) {
                temp = A[i][j];
                A[i][j] = 0.2 * (A[i][j] + A[i][j-1] + A[i-1][j]
                               + A[i][j+1] + A[i+1][j]);
                mydiff += fabs(A[i][j] - temp);
            }
        ...
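The row assignment can be isolated into a small helper to check the numbers in the example. This is a sketch under the same assumption the slide's formula makes implicitly, namely that the number of processors divides n evenly; the name `row_chunk` is hypothetical.

```c
/* Rows [*mymin, *mymax] handled by processor pid (0-based) out of p
 * processors, for n interior rows numbered 1..n.
 * Assumption: p divides n evenly; otherwise trailing rows are left out. */
void row_chunk(int pid, int n, int p, int *mymin, int *mymax)
{
    *mymin = 1 + pid * (n / p);
    *mymax = *mymin + n / p - 1;
}
```

With `n = 4` and `p = 2`, processor 0 gets rows 1 to 2 and processor 1 gets rows 3 to 4.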

Example: Equation Solver Kernel

TLP version (shared-memory):
– All processors can access freely the same data structure A
– Access to diff, however, must be in turns
– All processors update together their own done variable

        ...
        for (i = mymin; i <= mymax; i++) {
            for (j = 1; j <= n; j++) {
                temp = A[i][j];
                A[i][j] = 0.2 * (A[i][j] + A[i][j-1] + A[i-1][j]
                               + A[i][j+1] + A[i+1][j]);
                mydiff += fabs(A[i][j] - temp);
            }
        }
        lock(diff_lock);
        diff += mydiff;
        unlock(diff_lock);
        barrier(bar, P);
        if (diff / (n * n) < TOL) done = 1;
        barrier(bar, P);
    }

Types of Speedups and Scaling

Scalability: adding x times more resources to the machine yields close to x times better “performance”
– Usually resources are processors, but can also be memory size or interconnect bandwidth
– Usually means that with x times more processors we can get ~x times speedup for the same problem
– In other words: How does efficiency (see Lecture 1) hold as the number of processors increases?

In reality we have different scalability models:
– Problem constrained
– Time constrained
– Memory constrained

Most appropriate scalability model depends on the user interests

Types of Speedups and Scaling

Problem constrained (PC) scaling:
– Problem size is kept fixed
– Wall-clock execution time reduction is the goal
– Number of processors and memory size are increased
– “Speedup” is then defined as:

$$S_{PC} = \frac{\text{Time(1 processor)}}{\text{Time(p processors)}}$$

– Example: CAD tools that take days to run, weather simulation that does not complete in reasonable time

Types of Speedups and Scaling

Time constrained (TC) scaling:
– Maximum allowable execution time is kept fixed
– Problem size increase is the goal
– Number of processors and memory size are increased
– “Speedup” is then defined as:

$$S_{TC} = \frac{\text{Work(p processors)}}{\text{Work(1 processor)}}$$

– Example: weather simulation with refined grid

Types of Speedups and Scaling

Memory constrained (MC) scaling:
– Both problem size and execution time are allowed to increase
– Problem size increase with the available memory with smallest increase in execution time is the goal
– Number of processors and memory size are increased
– “Speedup” is then defined as:

$$S_{MC} = \frac{\text{Work(p processors)}}{\text{Time(p processors)}} \times \frac{\text{Time(1 processor)}}{\text{Work(1 processor)}} = \frac{\text{Increase in Work}}{\text{Increase in Time}}$$

– Example: astrophysics simulation with more planets and stars
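The three speedup definitions differ only in which quantities are compared. A minimal sketch, with hypothetical function names and with "work" and "time" in whatever units the user measures them:

```c
/* Problem constrained: same problem, compare execution times. */
double speedup_pc(double time_1, double time_p)
{
    return time_1 / time_p;
}

/* Time constrained: same execution time, compare work done. */
double speedup_tc(double work_1, double work_p)
{
    return work_p / work_1;
}

/* Memory constrained: both work and time grow;
 * speedup = (increase in work) / (increase in time). */
double speedup_mc(double work_1, double time_1, double work_p, double time_p)
{
    return (work_p / work_1) / (time_p / time_1);
}
```

For instance, if a machine with more processors handles 16 times the work in twice the time, `speedup_mc(1.0, 10.0, 16.0, 20.0)` evaluates to 8.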

Lect. 3: Superscalar Processors I/II

Pipelining: several instructions are simultaneously at different stages of their execution

Superscalar: several instructions are simultaneously at the same stages of their execution

(Superpipelining: very deep pipeline with very short stages to increase the amount of parallelism)

Out-of-order execution: instructions can be executed in an order different from that specified in the program

Dependences between instructions:
– Read after Write (RAW) (a.k.a. data dependence)
– Write after Read (WAR) (a.k.a. anti dependence)
– Write after Write (WAW) (a.k.a. output dependence)
– Control dependence

Speculative execution: tentative execution despite dependences

A 5-stage Pipeline

[Figure: pipeline stages IF → ID → EXE → MEM → WB; IF and MEM access memory, ID and WB access the general registers]

– IF = instruction fetch (includes PC increment)
– ID = instruction decode + fetching values from general purpose registers
– EXE = arithmetic/logic operations or address computation
– MEM = memory access or branch completion
– WB = write back results to general purpose registers

A Pipelining Diagram

Start one instruction per clock cycle

| stage \ cycle | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| IF | I1 | I2 | I3 | I4 | I5 | I6 |
| ID | | I1 | I2 | I3 | I4 | I5 |
| EXE | | | I1 | I2 | I3 | I4 |
| MEM | | | | I1 | I2 | I3 |
| WB | | | | | I1 | I2 |

Each instruction still takes 5 cycles, but instructions now complete every cycle: CPI → 1

Multiple-issue

Start two instructions per clock cycle

| stage \ cycle | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| IF | I1, I2 | I3, I4 | I5, I6 | I7, I8 | I9, I10 | I11, I12 |
| ID | | I1, I2 | I3, I4 | I5, I6 | I7, I8 | I9, I10 |
| EXE | | | I1, I2 | I3, I4 | I5, I6 | I7, I8 |
| MEM | | | | I1, I2 | I3, I4 | I5, I6 |
| WB | | | | | I1, I2 | I3, I4 |

CPI → 0.5; IPC → 2
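The ideal cycle counts behind these diagrams follow a simple formula: the first group of instructions needs one cycle per stage, and every further group completes one cycle later. The sketch below assumes no stalls of any kind; the function names are hypothetical.

```c
/* Cycles needed to run n instructions through a pipeline with `stages`
 * stages that starts `width` instructions per cycle.
 * Assumption: an ideal pipeline with no hazards or stalls. */
long ideal_cycles(long n, int stages, int width)
{
    if (n <= 0)
        return 0;
    long groups = (n + width - 1) / width;   /* ceil(n / width) issue groups */
    return stages + groups - 1;
}

/* Average cycles per instruction for the same ideal pipeline. */
double ideal_cpi(long n, int stages, int width)
{
    if (n <= 0)
        return 0.0;
    return (double)ideal_cycles(n, stages, width) / (double)n;
}
```

For long instruction streams the fill time of the pipeline becomes negligible, so `ideal_cpi` approaches 1 for single issue and 0.5 for dual issue.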

A Pipelined Processor (DLX)

[Figure: H&P, Fig. A.18]

Advanced Superscalar Execution

Ideally: in an n-issue superscalar, n instructions are fetched, decoded, executed, and committed per cycle

In practice:
– Control flow changes spoil fetch flow
– Data, control, and structural hazards spoil issue flow
– Multi-cycle arithmetic operations spoil execute flow

Buffers at issue (issue window or issue queue) and commit (reorder buffer) decouple these stages from the rest of the pipeline and, to some extent, smooth out breaks in the flow

[Figure: fetch engine → ID → EXE → MEM → WB, with instruction buffers between stages; the fetch engine and MEM access memory, ID and WB access the general registers]

Problems At Instruction Fetch

Crossing instruction cache line boundaries
– e.g., 32 bit instructions and 32 byte instruction cache lines → 8 instructions per cache line; 4-wide superscalar processor
– More than one cache lookup is required in the same cycle
– What if one of the line accesses is a cache miss?
– Words from different lines must be ordered and packed into instruction queue

Case 1: all instructions located in same cache line and no branch

Case 2: instructions spread in more lines and no branch
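Whether a fetch group crosses a cache line boundary depends only on where it starts within the line. The sketch below uses the slide's parameters (4-byte instructions, 32-byte lines, 4-wide fetch) as compile-time constants; the function name and the assumption of purely sequential fetch (no branches) are illustrative.

```c
#include <stdint.h>

enum {
    INSTR_BYTES = 4,    /* 32-bit instructions */
    LINE_BYTES  = 32,   /* 32-byte I-cache lines: 8 instructions per line */
    FETCH_WIDTH = 4     /* 4-wide superscalar */
};

/* Number of I-cache lines touched when fetching FETCH_WIDTH sequential
 * instructions starting at address pc (no branches assumed). */
int lines_touched(uint32_t pc)
{
    uint32_t first_line = pc / LINE_BYTES;
    uint32_t last_byte  = pc + FETCH_WIDTH * INSTR_BYTES - 1;
    uint32_t last_line  = last_byte / LINE_BYTES;
    return (int)(last_line - first_line) + 1;
}
```

A group starting in the first five instruction slots of a line stays within that line (Case 1); one starting in the last three slots spills into the next line (Case 2).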

Problems At Instruction Fetch

Control flow
– e.g., 32 bit instructions and 32 byte instruction cache lines → 8 instructions per cache line; 4-wide superscalar processor
– Branch prediction is required within the instruction fetch stage
– For wider issue processors multiple predictions are likely required
– In practice most fetch units only fetch up to the first predicted taken branch

Case 1: single not taken branch

Case 2: single taken branch outside fetch range and into other cache line

Example Frequencies of Control Flow

| benchmark | taken % | avg. BB size | # of inst. between taken branches |
|---|---|---|---|
| eqntott | 86.2 | 4.20 | 4.87 |
| espresso | 63.8 | 4.24 | 6.65 |
| xlisp | 64.7 | 4.34 | 6.70 |
| gcc | 67.6 | 4.65 | 6.88 |
| sc | 70.2 | 4.71 | 6.71 |
| compress | 60.9 | 5.39 | 8.85 |

Data from Rotenberg et al. for SPEC 92 Int

– One branch/jump about every 4 to 6 instructions
– One taken branch/jump about every 4 to 9 instructions

Solutions For Instruction Fetch

Advanced fetch engines that can perform multiple cache line lookups
– E.g., interleaved I-caches where consecutive program lines are stored in different banks that can be accessed in parallel

Very fast, albeit not very accurate branch predictors (e.g., next line predictor in the Alpha 21464)
– Note: usually used in conjunction with more accurate but slower predictors (see Lecture 4)

Restructuring instruction storage to keep commonly consecutive instructions together (e.g., Trace cache in Pentium 4)

Example Advanced Fetch Unit

[Figure from Rotenberg et al., showing:
– Control flow prediction units: i) Branch Target Buffer, ii) Return Address Stack, iii) Branch Predictor
– 2-way interleaved I-cache
– Mask to select instructions from each of the cache lines
– Final alignment unit]

Trace Caches

Traditional I-cache: instructions laid out in program order

Dynamic execution order does not always follow program order (e.g., taken branches) and the dynamic order also changes

Idea:
– Store instructions in execution order (traces)
– Traces can start with any static instruction and are identified by the starting instruction’s PC
– Traces are dynamically created as instructions are normally fetched and branches are resolved
– Traces also contain the outcomes of the implicitly predicted branches
– When the same trace is again encountered (i.e., same starting instruction and same branch predictions) instructions are obtained from trace cache
– Note that multiple traces can be stored with the same starting instruction

Pros/Cons of Trace Caches

+ Instructions come from a single trace cache line

+ Branches are implicitly predicted
– The instruction that follows the branch is fixed in the trace and implies the branch’s direction (taken or not taken)

+ I-cache still present, so no need to change cache hierarchy

+ In CISC instruction sets (e.g., x86) the trace cache can keep decoded instructions (e.g., Pentium 4)

- Wasted storage as instructions appear in both I-cache and trace cache, and possibly in multiple trace cache lines

- Not very good at handling indirect jumps and returns (which have multiple targets, instead of only taken/not taken) and even unconditional branches

- Not very good when there are traces with common sub-paths

Structure of a Trace Cache

[Figure from Rotenberg et al.]

Structure of a Trace Cache

Each line contains n instructions from up to m basic blocks

Control bits:
– Valid
– Tag
– Branch flags and mask: m-1 bits to specify the direction of the up to m branches
– Branch mask: the number of branches in the trace
– Trace target address and fall-through address: the address of the next instruction to be fetched after the trace is exhausted

Trace cache hit:
– Tag must match
– Branch predictions must match the branch flags for all branches in the trace
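The hit condition can be sketched as a comparison of the tag plus a masked comparison of branch directions. This is a simplified illustration, not the layout from the Rotenberg et al. design: the struct and field names are hypothetical, one flag bit is stored per branch in the trace, and the target and fall-through addresses are omitted.

```c
#include <stdbool.h>
#include <stdint.h>

/* Control bits of one trace cache line (simplified, names illustrative). */
struct trace_line {
    bool     valid;
    uint32_t tag;           /* PC of the first instruction in the trace      */
    uint8_t  branch_flags;  /* bit i: direction of i-th branch (1 = taken)   */
    uint8_t  num_branches;  /* how many low bits of branch_flags are in use  */
};

/* A hit requires a valid line, a matching tag, and predictions that agree
 * with the recorded direction of every branch inside the trace.
 * predictions: bit i is the current prediction for the i-th branch. */
bool trace_hit(const struct trace_line *line, uint32_t fetch_pc,
               uint8_t predictions)
{
    if (!line->valid || line->tag != fetch_pc)
        return false;
    uint8_t mask = (uint8_t)((1u << line->num_branches) - 1u);
    return ((line->branch_flags ^ predictions) & mask) == 0;
}
```

Because the predictions are part of the match, two traces that start at the same PC but follow different branch outcomes are distinguished at lookup time.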

Trace Creation

– Starts on a trace cache miss
– Instructions are fetched up to the first predicted taken branch
– Instructions are collected, possibly from multiple basic blocks (when branches are predicted taken)
– Trace is terminated when either n instructions or m branches have been added
– Trace target/fall-through address are computed at the end

Example

I-cache lines contain 8 32-bit instructions and Trace Cache lines contain up to 24 instructions and 3 branches

Processor can issue up to 4 instructions per cycle

Machine Code:

    L1: I1 [ALU] ... I5 [Cond. Br. to L3]
    L2: I6 [ALU] ... I12 [Jump to L4]
    L3: I13 [ALU] ... I18 [ALU]
    L4: I19 [ALU] ... I24 [Cond. Br. to L1]

Basic Blocks: B1 (I1-I5), B2 (I6-I12), B3 (I13-I18), B4 (I19-I24)

Layout in I-Cache:

    line 1: … I1 I2 I3
    line 2: I4 I5 I6 I7 I8 I9 I10 I11
    line 3: I12 I13 I14 I15 I16 I17 I18 I19
    line 4: I20 I21 I22 I23 I24 …

Example

– Step 1: fetch I1-I3 (stop at end of line) → Trace Cache miss → Start trace collection
– Step 2: fetch I4-I5 (possible I-cache miss) (stop at predicted taken branch)
– Step 3: fetch I13-I16 (possible I-cache miss)
– Step 4: fetch I17-I19 (I18 is predicted not taken branch, stop at end of line)
– Step 5: fetch I20-I23 (possible I-cache miss) (stop at predicted taken branch)
– Step 6: fetch I24-I27
– Step 7: fetch I1-I4 replaced by Trace Cache access

Basic Blocks: B1 (I1-I5), B2 (I6-I12), B3 (I13-I18), B4 (I19-I24)

Layout in I-Cache:

    line 1: … I1 I2 I3
    line 2: I4 I5 I6 I7 I8 I9 I10 I11
    line 3: I12 I13 I14 I15 I16 I17 I18 I19
    line 4: I20 I21 I22 I23 I24 …

Layout in Trace Cache (common path):

    I1 I2 I3 I4 I5 I13 I14 I15
    I16 I17 I18 I19 I20 I21 I22 I23
    I24

References and Further Reading

Original hardware trace cache:
“Trace Cache: a Low Latency Approach to High Bandwidth Instruction Fetching”, E. Rotenberg, S. Bennett, and J. Smith, Intl. Symp. on Microarchitecture, December 1996.

Next trace prediction for trace caches:
“Path-Based Next Trace Prediction”, Q. Jacobson, E. Rotenberg, and J. Smith, Intl. Symp. on Microarchitecture, December 1997.

A software trace cache:
“Software Trace Cache”, A. Ramirez, J.-L. Larriba-Pey, C. Navarro, J. Torrellas, and M. Valero, Intl. Conf. on Supercomputing, June 1999.

Lect. 4: Superscalar Processors II/II

n-wide instruction width + m-deep pipeline + d delay to resolve branches:
– Up to n*m instructions in-flight
– Up to n*d instructions must be re-executed on branch misprediction
– Current processors have 10 to 20 cycles of branch misprediction penalty

Current branch prediction accuracy is around 80%-90% for “difficult” applications and >95% for “easy” applications

Increasing prediction accuracy usually involves increasing the size of tables

Different predictor types are good at different types of branch behavior

Current processors have multiple branch predictors with different accuracy-delay tradeoffs

Quantifying Prediction Accuracy

Two measures:
– Coverage: the fraction of branches for which the predictor has a prediction (Note: usually, it is considered that coverage is 100% and no prediction equals predict not taken)
– Accuracy: the ratio of correctly predicted branches over the total number of branches predicted (Pitfall: higher accuracy is not necessarily better when coverage is lower)

Performance impact is proportional to (1-accuracy), penalty, and amount of branches in the application

Two ways of looking at accuracy improvements:
– E.g., accuracy improves from 95% to 97%:
  – $\frac{97 - 95}{95} = 0.021$: only 2% increase in accuracy
  – $\frac{5 - 3}{5} = 0.4$: 40% reduction in mispredictions
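Both views of an accuracy improvement, and the first-order performance impact, are easy to compute. The sketch below uses hypothetical function names; the cost model in `misprediction_cpi` is the simple product stated above (branch fraction times misprediction rate times penalty) and ignores any overlap of the penalty with other work.

```c
/* Relative increase in accuracy, e.g. 0.95 -> 0.97 gives about 0.021. */
double relative_accuracy_gain(double acc_old, double acc_new)
{
    return (acc_new - acc_old) / acc_old;
}

/* Relative reduction in mispredictions, e.g. 0.95 -> 0.97 gives 0.4. */
double misprediction_reduction(double acc_old, double acc_new)
{
    double miss_old = 1.0 - acc_old;
    double miss_new = 1.0 - acc_new;
    return (miss_old - miss_new) / miss_old;
}

/* Extra cycles per instruction lost to mispredictions (first-order model):
 * fraction of instructions that are branches x misprediction rate x penalty. */
double misprediction_cpi(double branch_fraction, double accuracy,
                         double penalty_cycles)
{
    return branch_fraction * (1.0 - accuracy) * penalty_cycles;
}
```

As an illustration with assumed numbers, 20% branches, 95% accuracy, and a 15-cycle penalty add about 0.15 cycles to every instruction on average.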

2-bit Branch Prediction

Branch prediction buffers:
– Match branch PC during IF or ID stages

2-bit saturating counter:
– 00: do not take
– 01: do not take
– 10: take
– 11: take

| Branch PC | Outcome |
|---|---|
| 0x135c8 | 00 |
| 0x147e0 | 01 |
| … | … |

    …
    0x135c4: add r1,r2,r3
    0x135c8: bne r1,r0,n
    …
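A 2-bit saturating counter can be sketched in a few lines. The function names are hypothetical; the counter value 0 to 3 stands for the states 00 to 11 above.

```c
#include <stdbool.h>
#include <stdint.h>

/* Prediction for a counter in state 0..3 (00, 01, 10, 11):
 * the upper two states predict taken. */
bool counter_predict(uint8_t c)
{
    return c >= 2;
}

/* Move the counter one step toward the actual outcome,
 * saturating at 0 (00) and 3 (11). */
uint8_t counter_update(uint8_t c, bool taken)
{
    if (taken)
        return (uint8_t)(c < 3 ? c + 1 : 3);
    return (uint8_t)(c > 0 ? c - 1 : 0);
}
```

Starting from 11, a single not-taken outcome moves the counter to 10, which still predicts taken; it takes two consecutive surprises to flip the prediction. This is what makes the scheme tolerant of, for example, the one not-taken outcome at a loop exit.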

(2,2) Correlating Predictor

[Figure: each table entry holds four 2-bit counters, one for each outcome of the last two branches (columns: If NT/NT, If T/NT, If NT/T, If T/T)]

| Prediction bits | Prediction |
|---|---|
| 00 | Do not take |
| 01 | Do not take |
| 10 | Take |
| 11 | Take |

For example: if the four counter values are 00 01 10 01 and the last two branches were, respectively, taken and not taken, then we will predict the branch as not taken (01)

Organized as a table of values indexed by the sequence of past branch outcomes and by the branch PC

This is an example of a context-based branch predictor
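A (2,2) predictor can be sketched as a table with four 2-bit counters per entry, where the outcomes of the last two branches pick the counter. Several details here are assumptions for illustration: the table size, the indexing by low PC bits, the names, and the reading of "T/NT" as "older branch taken, more recent branch not taken".

```c
#include <stdbool.h>
#include <stdint.h>

#define CORR_ENTRIES 1024   /* table size: an arbitrary choice for this sketch */

struct corr22 {
    /* Four 2-bit counters per entry, in column order NT/NT, T/NT, NT/T, T/T. */
    uint8_t counters[CORR_ENTRIES][4];
    /* Last two outcomes: bit 0 = older branch, bit 1 = most recent (1 = taken),
     * so the value is directly the column index. */
    uint8_t history;
};

static unsigned corr22_entry(uint32_t pc)
{
    return (pc >> 2) % CORR_ENTRIES;   /* drop byte offset, index by low PC bits */
}

bool corr22_predict(const struct corr22 *p, uint32_t pc)
{
    return p->counters[corr22_entry(pc)][p->history & 3u] >= 2;
}

void corr22_update(struct corr22 *p, uint32_t pc, bool taken)
{
    uint8_t *c = &p->counters[corr22_entry(pc)][p->history & 3u];
    if (taken) { if (*c < 3) (*c)++; }
    else       { if (*c > 0) (*c)--; }
    /* Shift history: the most recent outcome becomes the older one. */
    p->history = (uint8_t)(((p->history >> 1) & 1u) | (taken ? 2u : 0u));
}
```

With counters 00 01 10 01 and history T/NT (column 1), the selected counter is 01 and the prediction is not taken, as in the example above.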

Two Level Branch Predictors

Two types of arrangement/indexing:
– Global: Information is not particular to a branch and the table/information is not directly indexed by the branch’s PC
  – Good when branches are highly correlated
– Local (a.k.a. per address): Information is particular to a branch and the table/information is indexed by the branch’s PC
  – Good when branches are individually highly biased
– Partially local: Table/information is indexed by part of the branch’s PC (in order to save bits in the tags for the tables)
– Note: sometimes global information may be indexed by information that was local, and is then somewhat indexed by the branch’s PC

Two Level Branch Predictors

1st level: history of the last n branches
– If global: Single History Register (HR) (n-bit shift register) with the last outcomes of all branches
– If local: Multiple HRs in a History Register Table (HRT) that is indexed by the branch’s PC, where each HR contains the last outcomes of the corresponding branch only

2nd level: the branch behavior of the last s occurrences of the history pattern
– If global: Single Pattern History Table (PHT) indexed by the resulting HR contents
– If local: Multiple PHTs that are indexed by the branch’s PC, where each entry is indexed by the resulting HR contents
– Thus, $2^n$ entries for each HR

Two Level Branch Predictors

Example with global history and global pattern table (GAg)
– All branches use the same HR
– All branches use the same PHT
– 2-bit saturating counter is only an example and other schemes are possible
– Meaning: “When the outcome of the last any n branches is 11…10 then the prediction is P, regardless of what branch is being predicted”

[Figure: the Branch History Register (e.g., 11…10) indexes the Pattern History Table (entries 00…00 through 11…11); the selected entry P is a 2-bit saturating counter, e.g., P = 01 gives the prediction result Predict Not Taken]
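A GAg predictor needs only one shift register and one table of counters. The sketch below is illustrative: the history length of 4 bits is chosen small on purpose, the names are hypothetical, and 2-bit saturating counters are used as the second-level predictor.

```c
#include <stdbool.h>
#include <stdint.h>

#define HIST_BITS 4                     /* n: small value chosen for illustration */
#define PHT_SIZE  (1u << HIST_BITS)     /* 2^n pattern history entries */

struct gag {
    uint32_t hr;                /* global history register, newest outcome in bit 0 */
    uint8_t  pht[PHT_SIZE];     /* 2-bit saturating counters, indexed by hr */
};

/* The branch PC is not used at all: only the global history selects P. */
bool gag_predict(const struct gag *g)
{
    return g->pht[g->hr & (PHT_SIZE - 1u)] >= 2;
}

void gag_update(struct gag *g, bool taken)
{
    /* Train the counter selected by the history seen before this branch. */
    uint8_t *c = &g->pht[g->hr & (PHT_SIZE - 1u)];
    if (taken) { if (*c < 3) (*c)++; }
    else       { if (*c > 0) (*c)--; }
    /* Shift the outcome into the history register. */
    g->hr = ((g->hr << 1) | (taken ? 1u : 0u)) & (PHT_SIZE - 1u);
}
```

After a short warm-up, a repeating outcome pattern such as taken, taken, taken, not taken is predicted perfectly, because each 4-bit history uniquely determines the next outcome.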

Two Level Branch Predictors

Example with local history and global pattern table (PAg)
– Each branch uses its own HR
– All branches use the same PHT
– Meaning: “When the outcome of the last n instances of the branch being predicted is 11…10 then the prediction is P, regardless of what branch is being predicted”

[Figure: the branch PC is matched against tags to select one of the Branch History Registers (e.g., 11…10, 01…00); the contents of the selected register index the single Pattern History Table (entries 00…00 through 11…11) to obtain P]

Two Level Branch Predictors

Example with local history and local pattern table (PAp)
– Each branch uses its own HR
– Each branch uses its own PHT
– Meaning: “When the outcome of the last n instances of the branch being predicted is 11…10 then the prediction is P for this particular branch”

[Figure: the branch PC is matched against tags to select one of the Branch History Registers (e.g., 11…10, 01…00) and, also by tag, one of several Pattern History Tables; the contents of the selected register index that table (entries 00…00 through 11…11) to obtain P or P’]

Two Level Branch Predictors

10

Notes:– When only part of the branch’s PC is used for indexing

there is aliasing (i.e., multiple branches appear to be same)

– In practice there is a finite number of entries in the tables with local information, so

Either these only cache information for the most recently seen branches

Or the tables are indexed by hashing (usually with an XOR) the branch’s PC (this also leads to aliasing)

– Aliasing also happens with global information, as multiple branches appear to have the same behavior/prediction

– Accuracy of predictor depends on: Local versus Global information at each level Size of the tables in local schemes (number of different

branches that can be tracked) Depth of the history (n) Amount of aliasing

Two Level Branch Predictors

Updates:
– The HR’s are updated with the outcome of the branch being predicted (only the corresponding HR in case of local scheme)
– The predictor in the selected PHT entry is updated with the outcome of the branch (e.g., a 2-bit saturating counter is incremented/decremented if the outcome is taken/not taken)

Taxonomy:
– History Table type:
  Global: GA; Local (per address): PA
– Pattern Table type:
  Global: g; Local (per address): p
– Thus:
  GAg = global history table and global pattern table
  PAg = local history table and global pattern table
– GAp combination does not make much sense

Local vs. Global Predictors

Simple 2-bit predictor performs best for small predictor sizes, but its accuracy saturates quickly, at a level below that of the other predictors
Local outperforms global for all these predictor sizes

[Figure: predictor accuracy (%), on a scale from 84 to 98, versus predictor size (64B to 64KB) for GAg, PAg, and the simple 2-bit predictor. Data from McFarling for SPEC 1989 Int and FP.]

Combining Branch Predictors

Different predictors are good at different behaviors
Different predictors have different accuracy and latency
Combining predictors
– Can lead to schemes that are good at more behaviors
– Can quickly generate a reasonably accurate prediction and, with some more delay, a highly accurate prediction, which corrects the previous prediction if necessary
– Usually combine a simple and a complex predictor
Choosing between multiple predictors:
– “Meta-predictor” to choose the predictor that most likely has the correct prediction
– Augment predictors with confidence estimators

Combining Branch Predictors

Meta predictor
– Use 2-bit saturating counter to select predictor to use

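[Figure: meta predictor. The branch’s PC indexes a tagged table of selector counters. The selected 2-bit saturating counter S controls a 2:1 MUX that picks the final prediction from the predictions of predictors P1 and P2; for example, S = 01 means "use predictor P2".]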

Combining Branch Predictors

Meta predictor
– 2-bit saturating counter interpretation:
  00: Use P2
  01: Use P2
  10: Use P1
  11: Use P1
– Updating counter:
  P1 correct and P2 correct this time: no change to counter
  P1 correct and P2 incorrect this time: increment counter
  P1 incorrect and P2 correct this time: decrement counter
  P1 incorrect and P2 incorrect this time: no change to counter

Choosing among more than 2 predictors is more involved and rarely pays off
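The selector rules above translate almost directly into code. The following is a minimal sketch; the type and function names (`selector`, `selector_choose`, `selector_update`) are hypothetical and chosen only for illustration.

```c
#include <assert.h>

/* 2-bit saturating selector: 00/01 select P2, 10/11 select P1. */
typedef struct { unsigned char counter; } selector;

/* Pick the final prediction from the two component predictions. */
int selector_choose(const selector *s, int p1_prediction, int p2_prediction) {
    assert(s->counter <= 3);
    return (s->counter >= 2) ? p1_prediction : p2_prediction;
}

/* Move towards the predictor that was right when exactly one was right. */
void selector_update(selector *s, int p1_correct, int p2_correct) {
    if (p1_correct && !p2_correct) {
        if (s->counter < 3) s->counter++;      /* favour P1 */
    } else if (!p1_correct && p2_correct) {
        if (s->counter > 0) s->counter--;      /* favour P2 */
    }
    /* both correct or both incorrect: no change */
}
```

In a full predictor there would be one such counter per (hashed) branch PC, as in the selector table of the previous slide.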

Example: The Alpha 21464 Predictors

8-wide out-of-order superscalar processor with very deep pipeline and multithreading
Predictors take approximately 44KBytes of storage
Up to 16 branches predicted every cycle
Minimum misprediction penalty of 14 cycles (112 instructions) and most common is 20 or 25 cycles (160 or 200 instructions)
Based on global schemes; local schemes were ruled out because:
– They would require up to 16 parallel lookups of the tables
– Difficult to maintain per-branch information (e.g., the same branch may appear multiple times in such a deeply pipelined wide issue machine)
In addition to conditional branch prediction it has a jump predictor and a return address stack predictor

Example: The Alpha 21464 Predictors

Fetch unit:
– Can fetch up to 16 instructions from 2 dynamically consecutive I-cache lines
– Instruction fetch stops at the first taken branch (predicted not taken branches (up to 16) do not stop fetch)
1st Predictor: Next Line Predictor
– Operates within a single cycle
– Unacceptably high misprediction rate
2nd Predictor: 2Bc-gskew
– Operates over 2 cycles and is pipelined
– Actually consists of 2 different predictors (a 2-bit saturating counter and an e-gskew) combined and with a meta predictor selector
– Uses “de-aliasing” approach:
  Partition the tables into multiple sets and use special hashing functions
  Shown to reduce aliasing in global schemes

References and Further Reading

Seminal branch prediction work:
“Two-Level Adaptive Training Branch Prediction”, T.-Y. Yeh and Y. Patt, Intl. Symp. on Microarchitecture, December 1991.
“Alternative Implementations of Two-Level Adaptive Branch Prediction”, T.-Y. Yeh and Y. Patt, Intl. Symp. on Computer Architecture, June 1992.
“Improving the Accuracy of Dynamic Branch Prediction Using Branch Correlation”, S.-T. Pan, K. So, and J. T. Rahmeh, Intl. Conf. on Architectural Support for Programming Languages and Operating Systems, October 1992.
“Combining Branch Predictors”, S. McFarling, WRL Technical Note TN-36, June 1993.

Adding confidence estimation to predictors:
“Assigning Confidence to Conditional Branch Predictions”, E. Jacobsen, E. Rotenberg, and J. Smith, Intl. Symp. on Microarchitecture, December 1996.

References and Further Reading

Alpha 21464 predictor:
“Design Tradeoffs for the Alpha EV8 Conditional Branch Predictor”, A. Seznec, S. Felix, V. Krishnan, and Y. Sazeides, Intl. Symp. on Computer Architecture, June 2002.
“Next Cache Line and Set Prediction”, B. Calder and D. Grunwald, Intl. Symp. on Computer Architecture, June 1995.
“Trading Conflict and Capacity Aliasing in Conditional Branch Predictors”, P. Michaud, A. Seznec, and R. Uhlig, Intl. Symp. on Computer Architecture, June 1997.

Neural net based branch predictors:
“Fast Path-Based Neural Branch Prediction”, D. Jimenez, Intl. Symp. on Microarchitecture, December 2003.

Championship Branch Prediction
– www.jilp.org/cbp/
– camino.rutgers.edu/cbp2/

Probing Further

Advanced register allocation and de-allocation
“Late Allocation and Early Release of Physical Registers”, T. Monreal, V. Vinals, J. Gonzalez, A. Gonzalez, and M. Valero, IEEE Trans. on Computers, October 2004.

Value prediction
“Exceeding the Dataflow Limit Via Value Prediction”, M. H. Lipasti and J. P. Shen, Intl. Symp. on Microarchitecture, December 1996.

Limitations to wide issue processors
“Complexity-Effective Superscalar Processors”, S. Palacharla, N. P. Jouppi, and J. Smith, Intl. Symp. on Computer Architecture, June 1997.
“Clock Rate Versus IPC: the End of the Road for Conventional Microarchitectures”, V. Agarwal, M. S. Hrishikesh, S. W. Keckler, and D. Burger, Intl. Symp. on Computer Architecture, June 2000.

Recent alternatives to out-of-order execution
“‘Flea-flicker’ Multipass Pipelining: An Alternative to the High-Power Out-of-Order Offense”, R. D. Barnes, S. Ryoo, and W. Hwu, Intl. Symp. on Microarchitecture, November 2005.

Lect. 5: Vector Processors

Many real-world problems, especially in science and engineering, map well to computation on arrays
RISC approach is inefficient:
– Based on loops → require dynamic or static unrolling to overlap computations
– Indexing arrays based on arithmetic updates of induction variables
– Fetching of array elements from memory based on individual, and unrelated, loads and stores
– Small register files
– Instruction dependences must be identified for each individual instruction
Idea:
– Treat operands as whole vectors, not as individual integer or floating-point numbers
– Single machine instruction now operates on whole vectors (e.g., a vector add)
– Loads and stores to memory also operate on whole vectors
– Individual operations on vector elements are independent and only dependences between whole vector operations must be tracked

Execution Model

for (i=0; i<64; i++)
  a[i] = b[i] + s;

Straightforward RISC code:
– F2 contains the value of s
– R1 contains the address of the first element of a
– R2 contains the address of the first element of b
– R3 contains the address of the last element of a + 8

loop: L.D    F0,0(R2)    ;F0=array element of b
      ADD.D  F4,F0,F2    ;main computation
      S.D    F4,0(R1)    ;store result
      DADDUI R1,R1,8     ;increment index
      DADDUI R2,R2,8     ;increment index
      BNE    R1,R3,loop  ;next iteration

Execution Model

for (i=0; i<64; i++)
  a[i] = b[i] + s;

Straightforward vector code:
– F2 contains the value of s
– R1 contains the address of the first element of a
– R2 contains the address of the first element of b
– Assume vector registers have 64 double precision elements

LV      V1,R2      ;V1=array b
ADDVS.D V2,V1,F2   ;main computation
SV      V2,R1      ;store result

– Notes:
  Some vector operations require access to integer and FP register files as well
  In practice vector registers are not of the exact size of the arrays
  Refer to Figure G.3 of Hennessy&Patterson for a list of the most common types of vector instructions
  Only 3 instructions executed compared to 6*64=384 executed in the RISC code

Execution Model (Pipelined)

With multiple vector units, I2 can execute together with I1 (as we will see later)
In practice, the vector unit takes several cycles to operate on each element, but is pipelined

[Figure: pipeline diagram with stages IF, ID, EXE, MEM, and WB over cycles 1 to 8. Vector instruction I1 passes through IF and ID once; its element operations I1.1, I1.2, I1.3, … then flow through EXE, MEM, and WB on successive cycles. Instruction I2 also appears in the diagram.]

Pros of Vector Processors

Reduced pressure on instruction fetch
– Fewer instructions are necessary to specify the same amount of work
Reduced pressure on instruction issue
– Reduced number of branches alleviates branch prediction
– Much simpler hardware for checking dependences
Simpler register file
– No need for too many ports as only one element used per cycle (for pipeline approach)
More streamlined memory accesses
– Vector loads and stores specify a regular access pattern
– High latency of initiating memory access is amortized

Cons of Vector Processors

Requires a specialized, high-bandwidth, memory system
– Caches do not usually work well with vector processors
– Usually built around heavily banked memory with data interleaving
Still requires a traditional scalar unit (integer and FP) for the non-vector operations
Difficult to maintain precise interrupts (can’t rollback all the individual operations already completed)
Compiler or programmer has to vectorize programs
Not very efficient for small vector sizes
Not suitable/efficient for many different classes of applications

Performance Issues

Performance of a vector instruction depends on the length of the operand vectors
Initiation rate
– Rate at which individual operations can start in a functional unit
– For fully pipelined units this is 1 operation per cycle
– Usually more than 1 cycle per operation for load/store unit
Start-up time
– Time it takes to produce the first element of the result
– Depends on how deep the pipelines of the functional units are
– Especially large for load/store unit
With an initiation rate of 1, the time to complete a single vector instruction is equal to the vector size + the start-up time, which is approximately equal to the vector size for large vectors

Performance Issues

Common vector processor performance metrics:
– R∞: the rate of execution of the processor with vectors of infinite size (i.e., with no overheads due to smaller vectors)
– N1/2: the vector length required for the processor to reach half of R∞
– NV: the vector length required for the processor to match the performance of scalar execution (i.e., the point at which it pays off to execute in vector mode)
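As a worked illustration of these metrics, consider the simplest possible model: a single vector instruction with an initiation rate of 1, so that a vector of length n takes start-up + n cycles and R∞ is 1 element per cycle. The sketch below is built on that assumption only; the function names and the idea of a fixed scalar cost per element (`scalar_cpe`) are hypothetical simplifications, not part of the slides.

```c
#include <assert.h>

/* Cycles for one vector instruction: start-up, then one element per cycle. */
long vector_cycles(long startup, long n) {
    assert(startup >= 0 && n >= 0);
    return startup + n;
}

/* N1/2 in this model: the rate n / (startup + n) equals 1/2 when n = startup. */
long n_half(long startup) {
    return startup;
}

/* NV in this model: smallest n with startup + n <= n * scalar_cpe,
   where scalar_cpe (> 1) is the assumed scalar cost in cycles per element. */
long n_v(long startup, long scalar_cpe) {
    assert(scalar_cpe > 1);
    return (startup + scalar_cpe - 2) / (scalar_cpe - 1);   /* ceiling division */
}
```

For example, with an assumed start-up time of 12 cycles, a 64-element vector takes 76 cycles, N1/2 is 12 elements, and with 4 scalar cycles per element vector mode breaks even at 4 elements.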

Dealing with Vector Sizes

Two new registers are used:
– vector length register (VLR) specifies (to the hardware) what length is to be assumed for the next instruction to be issued
– maximum vector length (MVL) specifies (to the programmer/compiler) what the maximum length is (i.e., the size of the registers in the particular machine)
Use strip mining for user arrays larger than MVL

for (i=0; i<n; i++)
  a[i] = b[i] + s;

low = 0;
VL = n % MVL;                   /* set length to the remainder part of the array
                                   when the size is not divisible by MVL */
for (j=0; j<=n/MVL; j++) {
  for (i=low; i<low+VL; i++)    /* this is the loop that gets vectorized */
    a[i] = b[i] + s;
  low = low + VL;
  VL = MVL;                     /* set the length back to MVL */
}

For instance, with n=140 and MVL=64, we have 1 remainder chunk of 12 followed by 2 chunks of 64

Advanced Features: Masking

What if the operations involve only some elements of the array, depending on some run-time condition?

for (i=0; i<64; i++)
  if (b[i] != 0)
    a[i] = b[i] + s;

Solution: masking
– Add a new boolean vector register (the vector mask register)
– The vector instruction then only operates on elements of the vectors whose corresponding bit in the mask register is 1
– Add new vector instructions to set the mask register
  E.g., SNEVS.D V1,F0 sets to 1 the bits in the mask register whose corresponding elements in V1 are not equal to the value in F0
  CVM instruction sets all bits of the mask register to 1

Advanced Features: Masking

for (i=0; i<64; i++)
  if (b[i] != 0)
    a[i] = b[i] + s;

Vector code:
– F2 contains the value of s and F0 contains zero
– R1 contains the address of the first element of a
– R2 contains the address of the first element of b
– Assume vector registers have 64 double precision elements

LV      V1,R2      ;V1=array b
SNEVS.D V1,F0      ;mask bit is 1 if b != 0
ADDVS.D V2,V1,F2   ;main computation (under mask)
SV      V2,R1      ;store result (under mask, so a[i] is untouched where b[i] == 0)
CVM                ;set all mask bits back to 1
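The effect of the mask register can be emulated in scalar C to make the semantics concrete. This is a sketch only: the names `set_mask_ne` and `masked_add_store` are hypothetical, the mask is modelled as one byte per element, and real hardware applies the mask inside each vector instruction rather than in a loop.

```c
#include <assert.h>
#include <stddef.h>

#define VLEN 64   /* assumed vector register length (elements) */

/* Like SNEVS.D: mask[i] = 1 where v[i] differs from the scalar. */
void set_mask_ne(unsigned char mask[VLEN], const double v[VLEN], double scalar) {
    for (size_t i = 0; i < VLEN; i++) mask[i] = (v[i] != scalar);
}

/* Masked a[i] = b[i] + s: elements whose mask bit is 0 are left untouched. */
void masked_add_store(double a[VLEN], const double b[VLEN], double s,
                      const unsigned char mask[VLEN]) {
    assert(a != b);
    for (size_t i = 0; i < VLEN; i++)
        if (mask[i]) a[i] = b[i] + s;
}
```

Note that the masked-out elements still occupy a slot in the pipeline on a real vector machine, so masking saves no time when few mask bits are set.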

Advanced Features: Scatter-Gather

How can we handle sparse matrices?

for (i=0; i<64; i++)
  a[K[i]] = b[K[i]] + s;

Solution: scatter-gather
– Use the contents of an auxiliary vector to select which elements of the main vector are to be used
– This is done by pointing to the address in memory of the elements to be selected
– Add new vector instruction to load memory values based on this auxiliary vector
  E.g., LVI V1,(R1+V2) loads the elements of a user array from memory locations R1+V2(i)
  Also SVI store counterpart

Advanced Features: Scatter-Gather

for (i=0; i<64; i++)
  a[K[i]] = b[K[i]] + s;

Vector code:
– F2 contains the value of s
– R1 contains the address of the first element of a
– R2 contains the address of the first element of b
– V3 contains the indices of a and b that need to be used
– Assume vector registers have 64 double precision elements

LVI     V1,(R2+V3)   ;V1=array b indexed by V3
ADDVS.D V2,V1,F2     ;main computation
SVI     V2,(R1+V3)   ;store result
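In scalar C, the gather (LVI) and scatter (SVI) steps above amount to indexed loads and stores. The sketch below assumes the index vector holds element indices rather than byte offsets, and the helper names (`gather`, `scatter`, `sparse_add`) are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Like LVI: v[i] = base[index[i]]. */
void gather(double *v, const double *base, const size_t *index, size_t n) {
    assert(v && base && index);
    for (size_t i = 0; i < n; i++) v[i] = base[index[i]];
}

/* Like SVI: base[index[i]] = v[i]. */
void scatter(double *base, const double *v, const size_t *index, size_t n) {
    for (size_t i = 0; i < n; i++) base[index[i]] = v[i];
}

/* a[K[i]] = b[K[i]] + s for up to 64 indices (one assumed vector register). */
void sparse_add(double *a, const double *b, const size_t *k, size_t n, double s) {
    double v[64];
    assert(n <= 64);
    gather(v, b, k, n);                        /* LVI */
    for (size_t i = 0; i < n; i++) v[i] += s;  /* ADDVS.D */
    scatter(a, v, k, n);                       /* SVI */
}
```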

Advanced Features: Striding

for (i=0; i<64; i++)
  a[i] = b[i][j] + s;

Assume that the 2D array b is laid out by rows
– Iterations access non-contiguous elements of b
– Could use scatter-gather, but this would waste a vector register
– Access pattern is very regular and a single integer, the stride, fully defines it
– Add a new vector instruction to load values from memory based on the stride
  E.g., LVWS V1,(R1,R2) loads the elements of a user array from memory locations R1+i*R2
  Also SVWS store counterpart
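For a row-major 2D array, walking down one column touches elements that are exactly one row apart, so the stride is the row length. A minimal C sketch of a strided load follows; the names `load_strided` and `column_add` are hypothetical, and the stride is expressed in elements here, whereas the R2 operand of LVWS would hold it in bytes.

```c
#include <assert.h>
#include <stddef.h>

/* Like LVWS: v[i] = base[i * stride], with the stride counted in elements. */
void load_strided(double *v, const double *base, size_t stride, size_t n) {
    assert(stride > 0);
    for (size_t i = 0; i < n; i++) v[i] = base[i * stride];
}

/* a[i] = b[i][j] + s for a row-major b with `cols` columns. */
void column_add(double *a, const double *b, size_t rows, size_t cols,
                size_t j, double s) {
    assert(j < cols);
    load_strided(a, b + j, cols, rows);   /* start at b[0][j], step one row */
    for (size_t i = 0; i < rows; i++) a[i] += s;
}
```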

Advanced Features: Chaining

Forwarding in pipelined RISC processors allows dependent instructions to execute as soon as the result of the previous instruction is available

ADD.D R1,R2,R3   # R1=R2+R3
MUL.D R4,R5,R1   # R4=R5*R1

[Figure: pipeline diagram with stages IF, ID, EXE, MEM, and WB over cycles 1 to 6, showing the add followed by the mul and later instructions (I3 to I6); the value produced by the add is forwarded to the mul.]

Advanced Features: Chaining

Similar idea applies to vector instructions and is called chaining
– Difference is that chaining of vector instructions requires multiple functional units as the same unit cannot be used back-to-back

ADDV.D V1,V2,V3   # V1=V2+V3
MULV.D V4,V5,V1   # V4=V5*V1

[Figure: pipeline diagram over cycles 1 to 6 with separate EXE and MEM stages for the two vector instructions. The element results of the add (A.1, A.2, A.3, A.4) are forwarded, as each value becomes available, to the corresponding element operations of the mul (M.1, M.2, M.3), which run in a different functional unit.]

Example: The Earth Simulator

73rd fastest supercomputer as of Top500 list of November 2008 (was 1st from March 2002 to September 2004)
Multiprocessor vector architecture
– 640 nodes, 8 vector processors per node → 5120 processors
– 8 pipelines per vector processor
– 10 TBytes of main memory
– Vector units contain 72 vector registers, each with 256 elements
Performance and power consumption
– 35.9 TFLOPS on Top500 benchmark (closest RISC-based multiprocessor (#72) reaches 36.6 TFLOPS using 9216 processors)
– 12800 KWatts power consumption
Designed specifically to simulate nature (e.g., weather, ocean, earthquakes) at a global scale (i.e., the whole earth)

Further Reading

The first truly successful vector supercomputer:
“The CRAY-1 Computer System”, R. M. Russell, Communications of the ACM, January 1978.

A recent vector processor on a chip:
“Vector vs. Superscalar and VLIW Architectures for Embedded Multimedia Benchmarks”, C. Kozyrakis and D. Patterson, Intl. Symp. on Microarchitecture, December 2002.

Integrating a vector unit with a state-of-the-art superscalar:
“Tarantula: A Vector Extension to the Alpha Architecture”, R. Espasa, F. Ardanaz, J. Elmer, S. Felix, J. Galo, R. Gramunt, I. Hernandez, T. Ruan, G. Lowney, M. Mattina, and A. Seznec, Intl. Symp. on Computer Architecture, June 2002.

Lect. 6: SIMD Processors

Superscalar execution model:
– Mix of scalar ALUs
– n unrelated instructions per cycle
– 2n unrelated operands per cycle
– Results from any ALU can feed back to any ALU individually
– Operands are wide (32/64 bits)
Vector execution model:
– Vector ALU
– 1 vector instruction → multiple of the same operation
– Operands belong to an array
– Results are written back to reg. file
– Operands are wide (32/64 bits)

[Figure: for each of the two models, an instruction sequencer and a register file feeding the ALUs.]

Original SIMD Idea

Network of simple processing elements (PE)
– PEs operate in lockstep under the control of a master sequencer, the array control unit (ACU) (note: masking is possible)
– PEs can exchange results with a small number of neighbors via special data-routing instructions
– Each PE has its own local memory or (less common) accesses memory via an alignment network
– PEs operate on very narrow operands (1 bit in the extreme case of the CM-1)
– Very large (up to 64K) number of PEs
– Usually operated as co-processors with a host computer to perform I/O and to handle external memory
Suitable for some scientific, AI, and visualization applications
Intended for use as supercomputers
Programmed via custom extensions of common HLL

Original SIMD Idea

[Figure: an instruction sequencer driving a grid of processing elements, each with its own local memory (M).]

Example: Equation Solver Kernel

The problem:
– Operate on a (n+2)x(n+2) matrix

A[i,j] = 0.2 x (A[i,j] + A[i,j-1] + A[i-1,j] + A[i,j+1] + A[i+1,j])

SIMD implementation:
– Assign one node to each PE
– Step 1: all PE’s send their data to their east neighbors and simultaneously read the data sent by their west neighbors (nodes at the right, top, and bottom rim are masked out at this step)
– Steps 2 to 4: same as step 1 for west, south, and north (again, appropriate nodes are masked out)
– Step 5: all PE’s compute the new value using the equation above
– Note: strictly speaking we need some extra tricks to juggle new and old values
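A sequential C sketch of one sweep of this kernel makes the "old versus new values" issue concrete. It assumes the simplest way of juggling them, namely two separate grids (a Jacobi-style update), which is one possible choice and not necessarily the one intended in the lecture. The grid size and the names `GRID_N`, `GRID_DIM`, and `solver_step` are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

#define GRID_N 4                     /* assumed interior size n */
#define GRID_DIM (GRID_N + 2)        /* the matrix is (n+2) x (n+2) */

/* One sweep: every interior point is computed from the old grid only.
   Border points are copied unchanged (they play the role of masked-out PEs). */
void solver_step(double next[GRID_DIM][GRID_DIM], double cur[GRID_DIM][GRID_DIM]) {
    assert(next != cur);
    for (size_t i = 0; i < GRID_DIM; i++) {
        for (size_t j = 0; j < GRID_DIM; j++) {
            if (i == 0 || j == 0 || i == GRID_DIM - 1 || j == GRID_DIM - 1)
                next[i][j] = cur[i][j];
            else
                next[i][j] = 0.2 * (cur[i][j] + cur[i][j - 1] + cur[i - 1][j]
                                    + cur[i][j + 1] + cur[i + 1][j]);
        }
    }
}
```

On the SIMD machine the two loops disappear: each PE holds one (i, j) point, the four neighbor values arrive in steps 1 to 4, and step 5 is the single arithmetic expression in the inner branch.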

Example: MasPar MP-1

Key features
– First SIMD to use a traditional RISC IS
– ACU also performs non-SIMD operations/computation
– From 1K to 16K PE’s
– PE array interconnects
  2D mesh for 8-way (N, S, E, W, NE, SE, SW, NW) neighbor communication (X net)
  Circuit-switched 3 stage hierarchical crossbar for any-to-any communication
  Two global buses for ACU-PE lockstep control
– PE’s have local memory for data (16KB) (instructions are stored in the ACU)
– PE’s commonly operate on 32 bit words, but can also operate on individual bits, bytes, 16 bit words, and 64 bit words

Example: MasPar MP-1

[Figure from Blank: PE array with 2D mesh; ACU and Unix host; crossbar with routers.]

A Modern SIMD Co-processor

ClearSpeed CSX600
– Intended as an accelerator for high performance technical computing
– Current implementation has 96 PE’s plus a scalar unit for non-SIMD operations (including control flow)
– Each PE is in fact a VLIW core
– 1, 2, 4, and 8 byte operands
– PE’s can communicate directly with right and left neighbors
– Also supports multithreading to hide I/O latency (Lecture 12)
– Uses traditional instruction and data caches in addition to memory local to each PE
– Programmed with an extension of C
  Poly variables: replicated in each PE with different values
  Mono variables: only a single instance exists (either at the host, or replicated at the PE’s but with synchronized values)

A Modern SIMD Co-processor

[Figure from ClearSpeed: PE array with local memories (SRAM) and registers; RISC scalar processor and ACU; neighbor communication infrastructure (swazzle).]

Multimedia SIMD Extensions

Key ideas:
– No network of processing elements, but an array of (narrow) ALU’s
– No memories associated with ALU’s, but a pool of relatively wide (64 to 128 bits) registers that store several operands
– Still narrow operands (8 bits) and instructions that use operands of different sizes
– No direct communication between ALU’s, but via registers and with special shuffling/permutation instructions
– Not co-processors or supercomputers, but tightly integrated into CPU pipeline
– Still lockstep operation of ALU’s
– Special instructions to handle common media operations (e.g., saturated arithmetic)
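Saturated arithmetic on packed narrow operands can be illustrated in portable C by treating a 64-bit word as eight unsigned 8-bit lanes. This is a software emulation for illustration only (a real SIMD extension does all lanes in one instruction), and the function name `add_u8x8_saturate` is hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Add eight unsigned 8-bit lanes packed in 64-bit words, saturating at 255. */
uint64_t add_u8x8_saturate(uint64_t x, uint64_t y) {
    uint64_t result = 0;
    for (int lane = 0; lane < 8; lane++) {
        unsigned a = (unsigned)((x >> (8 * lane)) & 0xFF);
        unsigned b = (unsigned)((y >> (8 * lane)) & 0xFF);
        unsigned sum = a + b;
        if (sum > 255) sum = 255;          /* clamp instead of wrapping around */
        assert(sum <= 255);
        result |= (uint64_t)sum << (8 * lane);
    }
    return result;
}
```

Clamping is what media code usually wants: adding brightness to a nearly white pixel should leave it white, not wrap it around to black.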

Multimedia SIMD Extensions

SIMD ext. execution model:

[Figure: instruction sequencer, register file, and shuffling network. Inter-register operations add the corresponding sub-words of R1 and R2 and write the results to R3; intra-register operations combine sub-words within register R1 and write the results to R2.]

Example: Intel SSE

Streaming SIMD Extensions introduced in 1999 with Pentium III
Improved over earlier MMX (1997)
– MMX re-used the FP registers
– MMX only operated on integer operands
70 new machine instructions (SSE2 added 144 more in 2001) and 8 128-bit registers
– Registers are part of the architectural state
– Include instructions to move values between SSE and x86 registers
– Operands can be: single (32-bit) and double (64-bit) precision FP; 8, 16, and 32 bit integer
– Some instructions to support digital signal processing (DSP) and 3D
– SSE2 included instructions for handling the cache (recall that streaming data does not utilize caches efficiently)

A Modern SIMD Variation: Cell

IBM/Sony/Toshiba Cell Broadband Engine:
  Heterogeneous “multi-core” system with 1 PowerPC (PPE) + 8 SIMD engines (SPE – “Synergistic Processing Elements”)
  On-chip storage based on “scratch pads” (very, very hard to program)
  Used in the Playstation 3
SIMD support
  SPE’s are incapable of independent control and are “slaves” to PowerPC
  PPE already supports SIMD extensions (IBM’s VMX)
  SPE supports SIMD through specific IS
  128 128-bit registers and 128 bit datapath (note: no scalar registers in SPE)
  Accessible to programmer through HLL intrinsics (i.e., function calls, e.g., spu_add(a,b))
  Additional support for synchronization across SPE’s and PPE and for data transfer

References and Further Reading

Seminal SIMD work:
“A Model of SIMD Machines and a Comparison of Various Interconnection Networks”, H. Siegel, IEEE Trans. on Computers, December 1979.
“The Connection Machine”, D. Hillis, Ph.D. dissertation, MIT, 1985.

Two commercial SIMD supercomputers:
“The CM-2 Technical Summary”, Thinking Machines Corporation, 1990.
“The MasPar MP-1 Architecture”, T. Blank, Compcon, 1990.

A modern SIMD co-processor:
“CSX Processor Architecture”, ClearSpeed, Whitepaper, 2006.

Lect. 7: Shared Mem. Multiprocessors I/V

Obtained by connecting full processors together
– Processors contain normal width (32 or 64 bits) datapaths
– Processors are capable of independent execution and control
– Processors have their own connection to memory
(Thus, by this definition, Sony’s Playstation 3 is not a multiprocessor as the 8 SPE’s in the Cell are not full processors)
Have a single OS for the whole system, support both processes and threads, and appear as a common multiprogrammed system
(Thus, by this definition, Beowulf clusters are not multiprocessors)
Can be used to run multiple sequential programs concurrently or parallel programs
Suitable for parallel programs where threads can follow different code

Shared Memory Multiprocessors

Recall the communication model:
– Threads in different processors can use the same virtual address space
– Communication is done through shared memory variables
– Explicit synchronization with locks (e.g., variable flag below) and critical sections

Producer (p1)        Consumer (p2)
flag = 0;            flag = 0;
…                    …
a = 10;              while (!flag) {}
flag = 1;            x = a * y;

Shared Memory Multiprocessors

Recall the two common organizations:
– Physically centralized memory, uniform memory access (UMA) (a.k.a. SMP)
– Physically distributed memory, non-uniform memory access (NUMA)
(Note that both organizations have caches between processors and memory)

[Figure: UMA organization with four CPUs, each with its own cache, sharing one main memory; NUMA organization with four CPUs, each with its own cache and its own local memory (Mem.).]

The Cache Coherence Problem

[Figure: three CPUs, each with its own cache, sharing main memory; timeline without coherence.
T0: main memory holds A=1; A is not cached anywhere.
T1: one CPU loads A (A=1) and caches it.
T2: a second CPU loads A (A=1) and caches it.
T3: one of these CPUs stores A=2 in its cache; the other cached copy and main memory still hold A=1 (stale).
T4: the CPU with the stale cached copy loads A and gets A=1 (uses old value).
T5: the remaining CPU loads A from memory and gets A=1 (uses stale value!).]

Cache Coherence Protocols

Idea:
– Keep track of which processors have copies of which data
– Enforce that at any given time a single value of every datum exists:
  By getting rid of copies of the data with old values → invalidate protocols
  By updating everyone’s copy of the data → update protocols
In practice:
– Guarantee that old values are eventually invalidated/updated (write propagation)
  (recall that without synchronization there is no guarantee that a load will return the new value anyway)
– Guarantee that a single processor is allowed to modify a certain datum at any given time (write serialization)
– Must appear as if no caches were present
Note: must fit with cache’s operation at the granularity of lines

Write-invalidate Example

[Figure: three CPUs, each with its own cache, sharing main memory; timeline with a write-invalidate protocol.
T1: one CPU loads A (A=1) and caches it.
T2: a second CPU loads A (A=1) and caches it.
T3: one of these CPUs stores A=2 in its cache; the other cached copy is invalidated; main memory still holds A=1 (stale).
T4: the CPU whose copy was invalidated loads A and gets the new value A=2.
T5: the remaining CPU loads A and also gets the new value A=2.]

Write-update Example

[Figure: three CPUs, each with its own cache, sharing main memory; timeline with a write-update protocol.
T1: one CPU loads A (A=1) and caches it.
T2: a second CPU loads A (A=1) and caches it.
T3: one of these CPUs stores A=2; the other cached copy and main memory are updated to A=2.
T4: the other CPU with a cached copy loads A and gets the new value A=2.
T5: the remaining CPU loads A (A=2).]

Invalidate vs. Update Protocols

Invalidate:
+ Multiple writes by the same processor to the cache block only require one invalidation
+ No need to send the new value of the data (less bandwidth)
– Caches must be able to provide up-to-date data upon request
– Must write-back data to memory when evicting a modified block
Usually used with write-back caches (more popular)

Update:
+ New value can be re-used without the need to ask for it again
+ Data can always be read from memory
+ Modified blocks can be evicted from caches silently
– Possible multiple useless updates (more bandwidth)
Usually used with write-through caches (less popular)

Cache Coherence Protocols

Implementation
– Can be in either hardware or software, but software schemes are not very practical (and will not be discussed further in this course)
Add state bits to cache lines to track state of the line
– Most common: Invalid, Shared, Owned, Modified, Exclusive
– Protocols usually named after the states supported
Global state of a memory line corresponds to the collection of its state in all caches
Cache lines transition between states upon load/store operations from the local processor and by remote processors
These state transitions must guarantee the invariant: no two cache copies can be simultaneously modified

Example: MSI Protocol

States:
– Modified (M): block is cached only in this cache and has been modified
– Shared (S): block is cached in this cache and possibly in other caches (no cache can modify the block)
– Invalid (I): block is not cached

Example: MSI Protocol

Transactions originated at this CPU:

[Figure: state diagram with states Invalid, Shared, and Modified.
Invalid → Shared: CPU read miss
Shared → Shared: CPU read hit
Invalid → Modified: CPU write miss
Shared → Modified: CPU write
Modified → Modified: CPU write hit, CPU read hit]

Example: MSI Protocol

Transactions originated at other CPU:

[Figure: the same state diagram (Invalid, Shared, Modified, with the CPU read/write transitions of the previous slide) extended with the transitions caused by other CPUs.
Shared → Invalid: remote write miss
Modified → Invalid: remote write miss
Modified → Shared: remote read miss
Shared → Shared: remote read miss]
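The MSI transitions for a single cache line in a single cache can be written as a small state machine. The sketch below follows the textbook form of the protocol; the enum and function names (`msi_state`, `msi_event`, `msi_next`) are hypothetical, and bus actions such as write-backs are left out.

```c
#include <assert.h>

typedef enum { MSI_INVALID, MSI_SHARED, MSI_MODIFIED } msi_state;
typedef enum { MSI_CPU_READ, MSI_CPU_WRITE,
               MSI_REMOTE_READ_MISS, MSI_REMOTE_WRITE_MISS } msi_event;

/* Next state of one cache line in one cache under MSI. */
msi_state msi_next(msi_state s, msi_event e) {
    switch (e) {
    case MSI_CPU_READ:            /* read miss in I fetches the block as S; hits stay put */
        return (s == MSI_INVALID) ? MSI_SHARED : s;
    case MSI_CPU_WRITE:           /* write miss (I), write to S, or write hit in M */
        return MSI_MODIFIED;
    case MSI_REMOTE_READ_MISS:    /* an M copy supplies the data and drops to S */
        return (s == MSI_MODIFIED) ? MSI_SHARED : s;
    case MSI_REMOTE_WRITE_MISS:   /* any local copy is invalidated */
        return MSI_INVALID;
    }
    assert(0 && "unknown event");
    return s;
}
```

Driving two such state variables with matching local and remote events is a quick way to check that both copies are never Modified at the same time.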

Example: MESI Protocol

States:
– Modified (M): block is cached only in this cache and has been modified
– Exclusive (E): block is cached only in this cache, has not been modified, but can be modified at will
– Shared (S): block is cached in this cache and possibly in other caches
– Invalid (I): block is not cached
State E is obtained on reads when no other processor has a shared copy
– All processors must answer if they have copies or not
  Easily done in bus-based systems with a shared-OR line
– Or some device must know if processors have copies
Advantage over MSI
– Often variables are loaded, modified in register, and then stored
– The store on state E then does not require asking for permission to write

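Example: MESI Protocol

Transactions originated at this CPU:

[Figure: state diagram with states Invalid, Shared, Exclusive, and Modified.
Invalid → Shared: CPU read miss & sharing
Invalid → Exclusive: CPU read miss & no sharing
Invalid → Modified: CPU write miss
Shared → Shared: CPU read hit
Shared → Modified: CPU write (must inform everyone: upgrade)
Exclusive → Exclusive: CPU read hit
Exclusive → Modified: CPU write (can be done silently)
Modified → Modified: CPU write hit, CPU read hit]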

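Example: MESI Protocol

Transactions originated at other CPU:

[Figure: state diagram with states Invalid, Shared, Modified, and Exclusive, showing three transitions labelled "remote write miss" and three labelled "remote read miss". In the usual MESI formulation a remote write miss takes Shared, Exclusive, and Modified to Invalid, and a remote read miss takes Exclusive and Modified to Shared and leaves Shared in Shared.]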

CS4/MSc Parallel Architectures - 2009-2010

Possible Implementations

Three possible ways of implementing coherence protocols in hardware

– Snooping: all cache controllers monitor all other caches’ activities and maintain the state of their lines
   Commonly used with buses and in many CMP’s today

– Directory: a central control device directly handles all cache activities and tells the caches what transitions to make
   Can be of two types: centralized and distributed
   Commonly used with scalable interconnects and in many CMP’s today

– List: each cache controller keeps track of its own state and the identity and state of its neighbors in a linked list
   E.g., IEEE SCI protocol (ANSI/IEEE Std 1596-1992)
   Only used in a few machines in the late 90’s


Behavior of Cache Coherence Protocols

Uniprocessor cache misses (the 3 C’s):

– Cold (or compulsory) misses: when a block is accessed for the first time

– Capacity misses: when a block is not in the cache because it was evicted because the cache was full

– Conflict misses: when a block is not in the cache because it was evicted because the cache set was full

Coherence misses: when a block is not in the cache because it was invalidated by a write from another processor

– Hard to reduce: they relate to intrinsic communication and sharing of data in the parallel application

– False sharing coherence misses: processors modify different words of the cache block (no real communication or sharing) but end up invalidating the complete block


Behavior of Cache Coherence Protocols

False sharing increases with larger cache line size

– Only true sharing remains with single word/byte cache lines

False sharing can be reduced with better placement of data in memory

True sharing tends to decrease with larger cache line sizes (due to locality)

Classifying misses in a multiprocessor is not straightforward

– E.g., if P0 has line A in the cache and evicts it due to capacity limitation, and later P1 writes to the same line: is this a capacity or a coherence miss?
   It is both, as fixing one problem (e.g., increasing cache size) won’t fix the other (see Figure 5.20 of Culler&Singh for a complete decision chart)
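The effect of line size on false sharing can be illustrated with a toy model. The sketch below assumes a write-invalidate protocol, infinite caches (so there are no capacity or conflict misses), and write-only accesses; the function name `coherence_misses` and the access trace are made up for illustration.

```python
def coherence_misses(accesses, line_words):
    """Count misses caused by remote invalidations (cold misses excluded).

    accesses:   list of (processor, word_index) writes, in program order
    line_words: number of words per cache line
    Assumes write-invalidate coherence and infinite caches.
    """
    owner = {}     # line -> processor currently holding the valid copy
    seen = set()   # (processor, line) pairs that were cached at least once
    misses = 0
    for proc, word in accesses:
        line = word // line_words
        if owner.get(line) != proc:
            if (proc, line) in seen:
                misses += 1        # had the line, lost it to a remote write
            seen.add((proc, line))
            owner[line] = proc     # this write invalidates the other copy
    return misses


# P0 only ever writes word 0, P1 only ever writes word 1: no true sharing.
trace = [(0, 0), (1, 1)] * 4
```

With one-word lines the two words live in different lines and the trace produces no coherence misses; with two-word lines every access after the two cold misses is a false sharing miss.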


Behavior of Cache Coherence Protocols

Common types of data access patterns

– Private: data that is only accessed by a single processor

– Read-only shared: data that is accessed by multiple processors but only for reading (this includes instructions)

– Migratory: data that is used and modified by multiple processors, but in turns

– Producer-consumer: data that is updated by one processor and consumed by another

– Read-write: data that is used and modified by multiple processors simultaneously
   Falsely shared data
   Data used for synchronization (Lecture 10)

Bottom-line: threads don’t usually read and write the same data indiscriminately


Lect. 8: Shared Mem. Multiprocessors II/V

Snooping coherence on simple shared bus

– “Easy” as all processors and memory controller can observe all transactions

– Bus-side cache controller monitors the tags of the lines involved and reacts if necessary by checking the contents and state of the local cache

– Bus provides a serialization point (i.e., every transaction A is either before or after another transaction B)

More complex with split transaction buses

[Figure: processors P1 and P2, each with an L1 cache whose lines carry two state bits. Cache states: 00 = invalid, 01 = shared, 10 = modified.]

Snooping on Simple Bus

“The devil is in the details”, Classic Proverb

Problem: conflict when processor and bus-side controller must check the cache

Solutions:

– Use dual-ported modules for the tag and state array

– Or, duplicate tag and state array
   Both must be kept consistent when one is changed, which introduces some amount of conflicts

[Figure: processors P1 and P2, each with an L1 cache whose lines carry two state bits; label: Ld/St. Cache states: 00 = invalid, 01 = shared, 10 = modified.]

Snooping on Simple Bus

Problem: even if bus is atomic, transactions are not instantaneous and may require several steps → transactions are not atomic

– E.g., part of a transaction may be delayed by a memory response or by a bus-side controller that had to wait to access its tags

– E.g., out-of-order processors may issue cache requests that conflict with the current request being served

– E.g., an upgrade request may lose bus arbitration to another processor’s request and may have to be re-issued as a full write miss (due to the required invalidation)

Solution:

– Introduce transient states to cache lines and the protocol (the I, S, M, etc. states seen in Lecture 7 are then called the stable states)

Example: Extended MESI Protocol

Transactions originated at this CPU:

[Figure: state diagram over the stable states Invalid, Shared, Exclusive, and Modified plus the transient states I→S,E, I→M, and S→M. Edge labels: CPU read, CPU read hit, CPU read miss & shr., CPU read miss & no shr., CPU write, CPU write hit, CPU write miss, bus granted, bus granted & shr., bus granted & no shr., bus granted & no conflict, conflict.]
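One way to read the extended diagram is as a table in which a CPU request first moves the line into a transient state, and the stable state is only reached once the bus is granted. The sketch below encodes that reading in Python. The placement of each edge is an interpretation of the figure's labels, and the event names and the helper `run` are hypothetical.

```python
# Sketch of the CPU-originated part of an extended MESI protocol.
# Edge placement is an interpretation of the slide's labels, not a
# verified transcription; event names are illustrative.

NEXT = {
    # Read miss: wait for the bus, then learn whether others share the line
    ("I", "cpu_read"): "I->S,E",
    ("I->S,E", "bus_granted_shared"): "S",
    ("I->S,E", "bus_granted_not_shared"): "E",
    # Write miss: wait for the bus, then own the line
    ("I", "cpu_write"): "I->M",
    ("I->M", "bus_granted"): "M",
    # Upgrade from Shared: may lose arbitration to a remote write
    ("S", "cpu_write"): "S->M",
    ("S->M", "bus_granted_no_conflict"): "M",
    ("S->M", "conflict"): "I->M",   # copy was invalidated: full write miss
    # Exclusive can be written silently, no bus transaction needed
    ("E", "cpu_write"): "M",
}


def run(state, events):
    """Apply events in order and return the state the line ends in."""
    for event in events:
        state = NEXT[(state, event)]
    return state
```

The upgrade race from the previous slide then appears as `run("S", ["cpu_write", "conflict", "bus_granted"])`, which passes through S→M and I→M before settling in M.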


Snooping with Multi-Level Hierarchies

Problems:

– Processor interacts with L1 while bus snooping device interacts with L2, and propagating such operations up or down is not instantaneous

– L2 lines are usually bigger than L1 lines

[Figure: processors P1 and P2, each with an L1 and an L2 cache whose lines carry two state bits; label: Ld/St. Cache states: 00 = invalid, 01 = shared, 10 = modified.]

Snooping with Multi-Level Hierarchies

Solution:

1. Maintain inclusion property

– Lines in L1 must also be in L2 → no data is found solely in L1, so no risk of missing a relevant transaction when snooping at L2

– Lines in M state in L1 must also be in M state in L2 → snooping controller at L2 can identify all data that is modified locally

2. Propagate coherence transactions

Snooping with Multi-Level Hierarchies

Maintaining inclusion property

Assume:
   L1: associativity a1, number of sets n1, block size b1
   L2: associativity a2, number of sets n2, block size b2

– Difficulty: Replacement policy (e.g., LRU)
   Assume: a1=a2=2; b1=b2; n2=k*n1; lines m1, m2, and m3 map to same set in L1 and the same set in L2

[Figure: processor P with L1 and L2, both holding m1. Numbered sequence: (1) Ld m2, (2) miss, (3) miss, (4) fill, (5) fill, both caches now also hold m2; (6) Ld m1, hit; (7) Ld m3, (8) miss, (9) miss, (10) fill, (11) fill, m3 placed in both caches.]
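The sequence in the figure can be replayed with a small model of one 2-way LRU set per level. This is a minimal sketch assuming that L2 only observes accesses that miss in L1, so L1 hits do not update the L2 recency order; the class `LRUSet` and the function `load` are illustrative names.

```python
from collections import OrderedDict


class LRUSet:
    """One cache set with LRU replacement (2 ways by default)."""

    def __init__(self, ways=2):
        self.ways = ways
        self.lines = OrderedDict()   # least recently used line first

    def access(self, tag):
        """Return True on a hit; on a miss, fill and evict the LRU line if full."""
        if tag in self.lines:
            self.lines.move_to_end(tag)      # mark as most recently used
            return True
        if len(self.lines) == self.ways:
            self.lines.popitem(last=False)   # evict least recently used
        self.lines[tag] = None
        return False


def load(l1, l2, tag):
    """Processor load: L2 is only consulted when L1 misses."""
    if not l1.access(tag):
        l2.access(tag)


l1, l2 = LRUSet(), LRUSet()
for tag in ["m1", "m2", "m1", "m3"]:   # m1 is brought in first, as in the figure
    load(l1, l2, tag)
```

After the loop, L1 holds m1 and m3 while L2 holds m2 and m3: the hit on m1 refreshed its recency in L1 only, so L2 evicted m1 and inclusion no longer holds.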


Snooping with Multi-Level Hierarchies

Maintaining inclusion property

Assume:
   L1: associativity a1, number of sets n1, block size b1
   L2: associativity a2, number of sets n2, block size b2

– Difficulty: Different line sizes
   Assume: a1=a2=1; b1=1, b2=2; n1=4, n2=8
   Thus, words w0 and w17 can coexist in L1, but not in L2

[Figure: contents of L1 and L2, showing words w0, w1, w2, w3, w16, and w17.]
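The claim about w0 and w17 follows from the usual set-index computation. The short sketch below works it out, assuming block sizes are measured in words and that the set index is the block number modulo the number of sets; `set_index` is an illustrative helper.

```python
def set_index(word, block_words, num_sets):
    """Set that a word address maps to: block number modulo number of sets."""
    return (word // block_words) % num_sets


def block_number(word, block_words):
    """Block (line) that contains the given word address."""
    return word // block_words


# L1: direct mapped, 1-word lines, 4 sets. L2: direct mapped, 2-word lines, 8 sets.
L1 = {"block_words": 1, "num_sets": 4}
L2 = {"block_words": 2, "num_sets": 8}

l1_sets = [set_index(w, **L1) for w in (0, 17)]        # different sets in L1
l2_sets = [set_index(w, **L2) for w in (0, 17)]        # same set in L2
l2_blocks = [block_number(w, L2["block_words"]) for w in (0, 17)]
```

In L1 the two words fall into sets 0 and 1, so both fit. In L2 they belong to blocks 0 and 8, which both map to set 0 of a direct-mapped cache, so only one of them can be present at a time.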


Snooping with Multi-Level Hierarchies

Maintaining inclusion property

– Most combinations of L1/L2 size, associativity, and line size do not automatically lead to inclusion

– One solution is to have a1=1, a2≥1, b1=b2, and n1≤n2

– More common solution is to invalidate the L1 line (or lines, if b1<b2) upon replacing a line in L2

– Must also invalidate L1 line(s) when L2 line is invalidated due to coherence
   Propagate all invalidations from L2 to L1, whether relevant or not
   Keep extra state in the L2 lines to tell whether the line is also present in L1 or not (inclusion bits)

– Finally, add a new state to L2 (modified-but-stale) to keep track of lines that are in state M in L1
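The invalidate-on-replacement approach with inclusion bits can be sketched in a few lines. This is a simplified model that assumes b1=b2 (one L1 line per L2 line) and ignores line states; the function `remove_from_l2` and the data layout are hypothetical.

```python
def remove_from_l2(l2, l1, tag):
    """Drop a line from L2 (replacement or coherence invalidation).

    l2: dict mapping tag -> inclusion bit (True if the line is also in L1)
    l1: set of tags currently cached in L1
    Returns True if an invalidation had to be sent up to L1.
    """
    also_in_l1 = l2.pop(tag)
    if also_in_l1:
        l1.discard(tag)   # back-invalidate so L1 never holds a line L2 lacks
    return also_in_l1


l1 = {"m1"}
l2 = {"m1": True, "m2": False}

sent_for_m2 = remove_from_l2(l2, l1, "m2")   # not in L1: nothing to propagate
sent_for_m1 = remove_from_l2(l2, l1, "m1")   # in L1: must be invalidated there
```

The inclusion bit lets the L2 controller skip the L1 lookup for m2, whereas removing m1 forces a back-invalidation; in both cases every line left in L1 is still present in L2.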
