CPEG323 1

Virtual Memory

-

Upload

hannah-rich -

Category

Documents

-

view

223 -

download

1

Transcript of CPEG3231 Virtual Memory. CPEG3232 Review: The memory hierarchy Increasing distance from the...

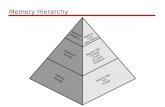

CPEG323 2

Review: The memory hierarchy

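Levels, in order of increasing distance from the processor (and increasing access time): Processor, L1$, L2$, Main Memory, Secondary Memory

Transfer unit between levels: 4-8 bytes (word) between the processor and L1$; 8-32 bytes (block) between L1$ and L2$; 1 to 4 blocks between L2$ and main memory; 1,024+ bytes (disk sector = page) between main memory and secondary memory

(Relative) size of the memory at each level grows with distance from the processor

Inclusive: what is in L1$ is a subset of what is in L2$, which is a subset of what is in MM, which is a subset of what is in SM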

Take advantage of the principle of locality to present the user with as much memory as is available in the cheapest technology at the speed offered by the fastest technology

CPEG323 3

Virtual memory

Use main memory as a “cache” for secondary memory

Allows efficient and safe sharing of memory among multiple programs

Provides the ability to easily run programs larger than the size of physical memory

Automatically manages the memory hierarchy (as “one-level”)

What makes it work? Again, the Principle of Locality

A program is likely to access a relatively small portion of its address space during any period of time

Each program is compiled into its own address space – a “virtual” address space

During run-time each virtual address must be translated to a physical address (an address in main memory)

CPEG323 5

VM simplifies loading and sharing

Simplifies loading a program for execution by avoiding code relocation

Address mapping allows programs to be loaded in any location in physical memory

Simplifies shared libraries, since all sharing programs can use the same virtual addresses

Relocation does not need special OS + hardware support as in the past

CPEG323 6

Virtual memory motivation

“Historically, there were two major motivations for virtual memory: to allow efficient and safe sharing of memory among multiple programs, and to remove the programming burden of a small, limited amount of main memory.” [Patt&Henn]

“…a system has been devised to make the core drum combination appear to the programmer as a single level store, the requisite transfers taking place automatically” Kilburn et al.

CPEG323 7

Terminology

Page: fixed-size block of memory, 512-4096 bytes

Segment: contiguous, variable-size block of memory

Page fault: a page is referenced, but not in memory

Virtual address: address seen by the program

Physical address: address seen by the cache or memory

Memory mapping or address translation: next slide

CPEG323 8

Memory management unit

Figure: the processor sends a virtual address to the memory management unit, which sends the physical address to memory

Page fault: handled using an elaborate software page-fault handling algorithm

CPEG323 9

Address translation

Virtual Address (VA): virtual page number in bits 31-12, page offset in bits 11-0

Translation maps the virtual page number to a physical page number; the page offset is unchanged

Physical Address (PA): physical page number in bits 29-12, page offset in bits 11-0

So each memory request first requires an address translation from the virtual space to the physical space

A virtual memory miss (i.e., when the page is not in physical memory) is called a page fault

A virtual address is translated to a physical address by a combination of hardware and software
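The bit split on this slide can be sketched in a few lines of Python. This is a minimal illustration, assuming 4 KB pages (a 12-bit offset, as in the slide) and a plain dictionary standing in for the page table; the names `translate`, `PageFault`, and `page_table` are hypothetical and not part of the course material.

```python
PAGE_OFFSET_BITS = 12              # bits 11..0 of the address are the page offset
PAGE_SIZE = 1 << PAGE_OFFSET_BITS  # 4096 bytes


class PageFault(Exception):
    """Raised when the virtual page has no mapping in main memory."""


def translate(va, page_table):
    """Translate a virtual address to a physical address.

    page_table is a hypothetical dict: virtual page number -> physical page number.
    """
    vpn = va >> PAGE_OFFSET_BITS        # upper bits: virtual page number
    offset = va & (PAGE_SIZE - 1)       # lower 12 bits pass through unchanged
    ppn = page_table.get(vpn)
    if ppn is None:
        raise PageFault(vpn)            # page is not in physical memory
    return (ppn << PAGE_OFFSET_BITS) | offset


# Virtual page 1 is mapped to physical page 7 in this made-up table.
page_table = {0: 2, 1: 7}
print(hex(translate(0x1ABC, page_table)))
```

Only the page number changes during translation; the offset within the page is copied directly into the physical address.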

CPEG323 10

Mapping virtual to physical space

Figure: (a) a 64K virtual address space divided into sixteen 4K pages, Page 0 through Page 15, starting at virtual addresses 0, 4096, 8192, ..., 61440; (b) a 32K main memory divided into eight 4K page frames, Page Frame 0 through Page Frame 7, starting at main memory addresses 0, 4096, 8192, ..., 28672

CPEG323 11

A paging system

Figure: the virtual page number indexes the page table; each entry holds a valid bit and a physical page or disk address, pointing either into physical memory or to disk storage

The page table maps each page in virtual memory to either a page in physical memory or a page stored on disk, which is the next level in the hierarchy.

CPEG323 12

A virtual address cache (TLB)

Figure: the virtual page number is looked up in the TLB (fields: valid, tag, physical page address), backed by the page table (fields: valid, physical page or disk address), which points into physical memory or disk storage

The TLB acts as a cache on the page table for the entries that map to physical pages only

CPEG323 13

Two Programs Sharing Physical Memory

Figure: Program 1 virtual address space and Program 2 virtual address space, both mapped into main memory

A program’s address space is divided into pages (all one fixed size) or segments (variable sizes)

The starting location of each page (either in main memory or in secondary memory) is contained in the program’s page table

CPEG323 14

Typical ranges of VM parameters

Block (page) size: 512-8192 bytes

Hit time: 1-10 clock cycles

Miss penalty: 100,000-600,000 clock cycles

(Access time: 100,000-500,000 clock cycles)

(Transfer time: 10,000-100,000 clock cycles)

Miss rate: 0.00001%-0.001%

Main memory size: 4 MB-2048 MB

These figures, contrasted with the values for caches, represent increases of 10 to 100,000 times.

CPEG323 15

Some virtual memory design parameters

Parameter: paged VM; TLBs

Total size: 16,000 to 250,000 words; 16 to 512 entries

Total size (KB): 250,000 to 1,000,000,000; 0.25 to 16

Block size (B): 4000 to 64,000; 4 to 32

Miss penalty (clocks): 10,000,000 to 100,000,000; 10 to 1000

Miss rates: 0.00001% to 0.0001%; 0.01% to 2%

CPEG323 16

Technology

Technology: access time; $ per GB in 2004

SRAM: 0.5-5 ns; $4,000-$10,000

DRAM: 50-70 ns; $100-$200

Magnetic disk: 5-20 x 10^6 ns; $0.5-$2

CPEG323 17

Address Translation Consideration

Direct mapping using register sets

Indirect mapping using tables

Associative mapping of frequently used pages

CPEG323 18

The Page Table (PT) must have one entry for each page in virtual memory!

How many Pages?

How large is PT?

Fundamental considerations

CPEG323 19

Pages should be large enough to amortize the high access time. From 4 KB to 16 KB are typical, and some designers are considering sizes as large as 64 KB.

Organizations that reduce the page fault rate are attractive. The primary technique used here is to allow flexible placement of pages (e.g., fully associative).

4 key design issues

CPEG323 20

Page faults (misses) in a virtual memory system can be handled in software, because the overhead will be small compared to the access time to disk. Furthermore, the software can afford to use clever algorithms for choosing how to place pages, because even small reductions in the miss rate will pay for the cost of such algorithms.

Using write-through to manage writes in virtual memory will not work since writes take too long. Instead, we need a scheme that reduces the number of disk writes.

4 key design issues (cont.)

CPEG323 21

Page Size Selection Constraints

Efficiency of secondary memory device (slotted disk/drum)

Page table size

Page fragmentation: last part of last page

Program logic structure: logic block size: < 1K ~ 4K

Table fragmentation: full PT can occupy large, sparse space

Uneven locality: text, globals, stack

Miss ratio

CPEG323 22

An Example

Case 1

VM page size 512

VM address space 64K

Total virtual pages = 64K / 512 = 128 pages

CPEG323 23

Case 2

VM page size 512 = 2^9

VM address space 4G = 2^32

Total virtual pages = 4G / 512 = 2^32 / 2^9 = 2^23 = 8M pages

Each PTE has 32 bits, so total PT size = 8M x 4 = 32M bytes

Note: assuming main memory has a working set of 4M bytes, or about 4M / 512 = 2^22 / 2^9 = 2^13 = 8192 pages

An Example (cont.)

CPEG323 24

How about

VM address space = 2^52 (R-6000) (4 Petabytes)

page size 4K bytes

so total number of virtual pages: 2^52 / 2^12 = 2^40 !

An Example (cont.)
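The arithmetic in the three example cases can be checked with a short Python sketch. The function name `page_table_size` is hypothetical, and the 4-byte page table entry is taken from Case 2; applying it to Case 1 and to the R-6000 case is an assumption made here for illustration only.

```python
def page_table_size(va_bits, page_bits, pte_bytes=4):
    """Return (number of virtual pages, flat page table size in bytes).

    A flat page table needs one entry per virtual page.
    pte_bytes=4 matches the 32-bit PTE of Case 2; elsewhere it is an assumption.
    """
    num_pages = 1 << (va_bits - page_bits)
    return num_pages, num_pages * pte_bytes


cases = {
    "Case 1 (64K space, 512-byte pages)": page_table_size(16, 9),
    "Case 2 (4G space, 512-byte pages)": page_table_size(32, 9),
    "R-6000 (2^52 space, 4K pages)": page_table_size(52, 12),
}
for name, (pages, size) in cases.items():
    print(f"{name}: {pages} pages, {size} bytes of page table")
```

With these assumptions, a flat table for the 2^52-byte space would itself need 2^42 bytes, which is why the next slide looks at ways to shrink the page table.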

CPEG323 25

Techniques for Reducing PT Size

Set a lower limit, and permit dynamic growth

Permit growth from both directions (text, stack)

Inverted page table (a hash table)

Multi-level page table (segments and pages)

PT itself can be paged, i.e., put the PT itself in virtual address space (Note: some small portion of pages should be in main memory and never paged out)
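One of the techniques listed above, the multi-level page table, can be sketched as follows. This is a minimal illustration, not the layout of any particular machine: the 10/10/12 bit split, the class name `TwoLevelPageTable`, and the use of dictionaries are all assumptions chosen for brevity.

```python
OFFSET_BITS = 12   # assumed 4 KB pages
L2_BITS = 10       # assumed width of the second-level index
L1_BITS = 10       # assumed width of the first-level index (32-bit VA in total)


def split(va):
    """Split a virtual address into (first-level index, second-level index, offset)."""
    offset = va & ((1 << OFFSET_BITS) - 1)
    l2 = (va >> OFFSET_BITS) & ((1 << L2_BITS) - 1)
    l1 = va >> (OFFSET_BITS + L2_BITS)
    return l1, l2, offset


class TwoLevelPageTable:
    def __init__(self):
        # First-level table: index -> second-level table, allocated on demand,
        # so unused regions of the address space cost no page table memory.
        self.root = {}

    def map(self, va, ppn):
        l1, l2, _ = split(va)
        self.root.setdefault(l1, {})[l2] = ppn

    def translate(self, va):
        l1, l2, offset = split(va)
        second = self.root.get(l1)
        if second is None or l2 not in second:
            return None                      # would be a page fault
        return (second[l2] << OFFSET_BITS) | offset


pt = TwoLevelPageTable()
pt.map(0x00403000, 5)
print(hex(pt.translate(0x00403ABC)), len(pt.root))
```

Only one second-level table exists after the single mapping, which is the space saving the technique is after.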

CPEG323 27

VM implementation issues

Page fault handling: hardware, software or both

Efficient input/output: slotted drum/disk

Queue management. Processes can be linked on:

CPU ready queue: waiting for the CPU

Page in queue: waiting for page transfer from disk

Page out queue: waiting for page transfer to disk

Protection issues: read/write/execute

Management bits: dirty, reference, valid.

Multiple program issues: context switch, timeslice end

CPEG323 28

Placement:

OS designers always pick lower miss rates over a simpler placement algorithm

So, “full associativity”: VM pages can go anywhere in main memory (compare with sector cache)

Question: why not use associative hardware? (# of PT entries too big!)

Where to place pages

CPEG323 29

How to handle protection and multiple users

Virtual to real address translation using page map

Figure: the virtual address (pid, ip, iw) is looked up in the TLB / page map; each page map entry PME(x) holds RWX, pid, M, C, P, and the page frame address in memory (PFA); the PFA and iw form the physical address. Operation validation takes the entry's RWX bits, the requested access type, and S/U, and can signal an access fault. A page fault involves the PFA in secondary memory (S.M.) and the replacement policy.

If s/u = 1: supervisor mode

PME(x) * C = 1: page PFA modified

PME(x) * P = 1: page is private to process

PME(x) * pid is process identification number

PME(x) * PFA is page frame address

CPEG323 30

Page fault handling

When a virtual page number is not in the TLB, the PT in main memory is accessed (through the PTBR) to find the PTE

Hopefully, the PTE is in the data cache

If the PTE indicates that the page is missing, a page fault occurs

If so, put the disk sector number and page number on the page-in queue and continue with the next process

If all page frames in main memory are occupied, find a suitable one and put it on the page-out queue
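The steps above can be sketched as a small Python model. It is a simplification under stated assumptions: the class `Pager` and its fields are hypothetical, the victim is chosen first-in first-out as a stand-in for "a suitable one", and the disk transfer is treated as completing immediately rather than letting another process run in the meantime.

```python
from collections import deque


class Pager:
    def __init__(self, num_frames):
        self.free_frames = deque(range(num_frames))
        self.resident = {}             # virtual page number -> page frame
        self.load_order = deque()      # FIFO order, used to pick a victim
        self.page_in_queue = deque()   # (disk sector, page number) requests
        self.page_out_queue = deque()  # victim pages to be written to disk

    def access(self, vpn, disk_sector):
        """Return the frame holding vpn, servicing a page fault if needed."""
        if vpn in self.resident:
            return self.resident[vpn]          # page is in main memory
        # Page fault: the PTE says the page is missing.
        if not self.free_frames:
            victim = self.load_order.popleft() # all frames occupied: pick one
            self.page_out_queue.append(victim)
            self.free_frames.append(self.resident.pop(victim))
        frame = self.free_frames.popleft()
        self.page_in_queue.append((disk_sector, vpn))
        self.resident[vpn] = frame             # transfer assumed instantaneous
        self.load_order.append(vpn)
        return frame


pager = Pager(num_frames=2)
for vpn, sector in [(0, 100), (1, 101), (0, 100), (2, 102)]:
    pager.access(vpn, sector)
print(list(pager.page_out_queue), pager.resident)
```

A real system would block the faulting process and schedule another one while the page-in request is outstanding; the queues here only record what would be requested.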

CPEG323 31

Fast address translation

Translating through the PT involves at least two memory accesses for each memory fetch or store

Improvement:

Store PT in fast registers: example: Xerox: 256 regs

Implement VM address cache (TLB)

Make maximal use of instruction/data cache

CPEG323 32

Some typical values for a TLB might be:

Block size: 1-2 page-table entries (typically 4-8 bytes each)

Hit time: 1/2 to 1 clock cycle

Miss penalty: 10-30 clock cycles

Miss rate: 0.01%-1%

TLB size: 32-1,024 entries

Miss penalty may sometimes be as high as 100 cycles. TLB size can be as small as 16 entries.

CPEG323 33

TLB design issues

Placement policy:

Small TLBs: fully associative can be used

Large TLBs: fully associative may be too slow

Replacement policy: random policy is used for speed/simplicity

TLB miss rate is low (Clark-Emer data [85]: 3~4 times smaller than usual cache miss rate)

TLB miss penalty is relatively low; it usually results in a cache fetch

CPEG323 34

TLB miss implies higher miss rate for the main cache

TLB translation is process-dependent

Strategies for context switching:

1. tagging by context

2. flushing: complete, or purge by context (shared)

No absolute answer

TLB design issues (cont.)

CPEG323 35

A Case Study: DECStation 3100

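Figure: the 32-bit virtual address is split into a 20-bit virtual page number (bits 31-12) and a 12-bit page offset (bits 11-0). The TLB (fields: Valid, Dirty, Tag, Physical page number) compares tags, signals TLB hit, and supplies a 20-bit physical page number. The resulting physical address is split into a 16-bit tag, a 14-bit index, and a 2-bit byte offset for the cache (fields: Valid, Tag, Data), which compares tags, signals Cache hit, and returns 32 bits of data.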

CPEG323 36

DECStation 3100 TLB and cache

Flowchart for a read or write:

1. Virtual address: TLB access

2. TLB hit? No: TLB miss exception. Yes: continue

3. Write? No: try to read data from cache. Yes: check protection

4. On a read: Cache hit? No: cache miss stall. Yes: the read completes

5. On a write: write data into cache, update the dirty bit, and put the data and the address into the write buffer

CPEG323 38

Virtual Address (32): Segment (12), Page (8), Offset (12)

Bus-out Address (from CPU): Page (12), Offset (12)

Dynamic Address Translation (DAT)

Bus-in Address (to memory): Page (12), Offset (12)

IBM System/360-67 address translation

CPEG323 39

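Figure: the associative registers sit between the bus-out address (from CPU: VM Page (12), Offset (12)) and the bus-in address (to memory: PH Page (12), Offset (12)). Values shown in the registers: 11522, 559, 3188, 4445, 9110, 13041, 777, 1227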

IBM System/360-67 associative registers

CPEG323 40

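Figure: the Virtual Address (24) is split into a segment field (4), Page (8), and Offset (12). The Segment Table Reg (32) plus the segment field selects an entry in the Segment Table, which points to a page table (Page Table 2 and Page Table 4 are shown). Each page table has entries 0 through 255, each with V, R, and W bits. Virtual pages (32 bit addr) are numbered 0 through 1,048,575; physical pages (24 bit addr) are numbered 0 through 4095.

V: Valid bit; R: Reference bit; W: Write (dirty) bit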

IBM System/360-67 segment/page mapping

CPEG323 41

Virtual addressing with a cache

Thus it takes an extra memory access to translate a VA to a PA

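Figure: CPU sends the VA to Translation, which sends the PA to the Cache; on a miss the access goes to Main Memory; on a hit the data returns to the CPU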

This makes memory (cache) accesses very expensive (if every access was really two accesses)

The hardware fix is to use a Translation Lookaside Buffer (TLB) – a small cache that keeps track of recently used address mappings to avoid having to do a page table lookup
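A TLB behaves like any small cache placed in front of the page table. The sketch below assumes a fully associative TLB with least-recently-used replacement and a dictionary page table whose pages are all resident; the class name `TLB` and its counters are hypothetical, and real TLBs often use random or approximate-LRU replacement instead.

```python
from collections import OrderedDict


class TLB:
    def __init__(self, entries):
        self.entries = entries
        self.map = OrderedDict()   # virtual page number -> physical page number
        self.hits = 0
        self.misses = 0

    def lookup(self, vpn, page_table):
        """Return the physical page number, consulting the page table on a miss."""
        if vpn in self.map:
            self.hits += 1
            self.map.move_to_end(vpn)          # mark as most recently used
            return self.map[vpn]
        self.misses += 1
        ppn = page_table[vpn]                  # the extra memory access
        if len(self.map) >= self.entries:
            self.map.popitem(last=False)       # evict the least recently used
        self.map[vpn] = ppn
        return ppn


tlb = TLB(entries=2)
page_table = {0: 5, 1: 6, 2: 7}
for vpn in [0, 1, 0, 2, 1]:
    tlb.lookup(vpn, page_table)
print(tlb.hits, tlb.misses)
```

Only the misses pay for a page table access; repeated references to the same page are served from the TLB.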

CPEG323 42

Making address translation fast

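Figure: the virtual page # is looked up in the TLB (fields: V, Tag, Physical page base addr), which caches entries of the Page Table (in physical memory; fields: V, Physical page base addr); page table entries point to main memory or to disk storage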

CPEG323 43

Translation lookaside buffers (TLBs)

Just like any other cache, the TLB can be organized as fully associative, set associative, or direct mapped

TLB entry fields: V, Virtual Page #, Physical Page #, Dirty, Ref, Access

TLB access time is typically smaller than cache access time (because TLBs are much smaller than caches)

TLBs are typically not more than 128 to 256 entries even on high end machines

CPEG323 44

A TLB in the memory hierarchy

A TLB miss: is it a page fault or merely a TLB miss?

If the page is loaded into main memory, then the TLB miss can be handled (in hardware or software) by loading the translation information from the page table into the TLB

- Takes 10’s of cycles to find and load the translation info into the TLB

If the page is not in main memory, then it’s a true page fault

- Takes 1,000,000’s of cycles to service a page fault

TLB misses are much more frequent than true page faults
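The two penalties can be combined into an average overhead per memory access. The sketch below is only a back-of-the-envelope model: the function name is hypothetical, and the rates and cycle counts are illustrative values picked from the typical ranges quoted earlier in these slides, not measurements.

```python
def avg_translation_overhead(tlb_miss_rate, tlb_miss_cycles,
                             page_fault_rate, page_fault_cycles):
    """Average extra cycles per access from TLB misses and true page faults."""
    return tlb_miss_rate * tlb_miss_cycles + page_fault_rate * page_fault_cycles


# Assumed values: 1% TLB miss rate at 30 cycles each,
# and one page fault per million accesses at 1,000,000 cycles each.
overhead = avg_translation_overhead(0.01, 30, 1e-6, 1_000_000)
print(f"{overhead:.2f} extra cycles per access")
```

Under these assumed numbers the rare page faults contribute more overhead than the far more frequent TLB misses, which is why the page fault rate matters so much.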

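Figure: CPU sends the VA to the TLB Lookup (¼ t); on a TLB hit the PA goes to the Cache (¾ t); on a TLB miss, Translation is performed first; a cache miss goes to Main Memory; on a cache hit the data returns to the CPU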

CPEG323 45

Two Machines’ Cache Parameters

Intel P4, TLB organization:

- 1 TLB for instructions and 1 TLB for data

- Both 4-way set associative

- Both use ~LRU replacement

- Both have 128 entries

- TLB misses handled in hardware

AMD Opteron, TLB organization:

- 2 TLBs for instructions and 2 TLBs for data

- Both L1 TLBs fully associative with ~LRU replacement

- Both L2 TLBs are 4-way set associative with round-robin LRU

- Both L1 TLBs have 40 entries

- Both L2 TLBs have 512 entries

- TLB misses handled in hardware

CPEG323 47

TLB Event Combinations

TLB / Page Table / Cache: Possible? Under what circumstances?

Hit / Hit / Hit: Yes, what we want!

Hit / Hit / Miss: Yes, although the page table is not checked if the TLB hits

Miss / Hit / Hit: Yes, TLB miss, PA in page table

Miss / Hit / Miss: Yes, TLB miss, PA in page table, but data not in cache

Miss / Miss / Miss: Yes, page fault

Hit / Miss / Miss or Hit: Impossible, TLB translation not possible if page is not present in memory

Miss / Miss / Hit: Impossible, data not allowed in cache if page is not in memory
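The table follows from two rules stated in its last two rows: a TLB hit requires the page to be in memory, and so does a cache hit. The sketch below enumerates all eight combinations under those two rules; the function name `possible` is hypothetical, and "page table hit" is read as "page present in main memory".

```python
from itertools import product


def possible(tlb_hit, page_in_memory, cache_hit):
    """Apply the two rules from the table to one combination of events."""
    if tlb_hit and not page_in_memory:
        return False   # TLB only holds translations for resident pages
    if cache_hit and not page_in_memory:
        return False   # data cannot be cached if its page is not in memory
    return True


label = {True: "Hit", False: "Miss"}
for tlb, pt, cache in product([True, False], repeat=3):
    verdict = "possible" if possible(tlb, pt, cache) else "impossible"
    print(f"{label[tlb]:4} / {label[pt]:4} / {label[cache]:4}: {verdict}")
```

Five of the eight combinations come out possible, matching the rows marked "Yes" in the table.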

CPEG323 48

Reducing Translation Time

Can overlap the cache access with the TLB access

Works when the high order bits of the VA are used to access the TLB while the low order bits are used as index into cache
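The overlap works cleanly when the cache index and block offset bits all lie inside the page offset, since those bits are the same in the virtual and physical address. That is equivalent to requiring that one way of the cache be no larger than a page. The check below is a sketch of that standard condition; the function name and the example sizes are assumptions, not values from the slides.

```python
def can_overlap(cache_bytes, ways, page_bytes):
    """True if the cache can be indexed using only page-offset bits.

    cache_bytes // ways is the size of one way, which is covered by the
    index bits plus the block offset bits.
    """
    return cache_bytes // ways <= page_bytes


print(can_overlap(8 * 1024, 2, 4096))    # 4 KB per way, 4 KB pages
print(can_overlap(32 * 1024, 2, 4096))   # 16 KB per way, 4 KB pages
print(can_overlap(32 * 1024, 8, 4096))   # 4 KB per way, 4 KB pages
```

Raising associativity is one way to grow the cache while keeping the index within the page offset.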

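Figure: the VA Tag is looked up in the TLB, producing the PA Tag and TLB Hit, while the Index and Block offset bits access a 2-way Associative Cache in parallel; the Tag from each way is compared with the PA Tag to produce Cache Hit and select the Desired word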

CPEG323 49

Why Not a Virtually Addressed Cache?

A virtually addressed cache would only require address translation on cache misses

Figure: CPU sends the VA directly to the Cache; data returns on a hit; on a miss, Translation produces the PA for Main Memory

but

Two different virtual addresses can map to the same physical address (when processes are sharing data), i.e., two different cache entries hold data for the same physical address: synonyms

- Must update all cache entries with the same physical address or the memory becomes inconsistent

CPEG323 50

The Hardware/Software Boundary

What parts of the virtual to physical address translation are done by or assisted by the hardware?

Translation Lookaside Buffer (TLB) that caches the recent translations

- TLB access time is part of the cache hit time

- May allot an extra stage in the pipeline for TLB access

Page table storage, fault detection and updating

- Page faults result in interrupts (precise) that are then handled by the OS

- Hardware must support (i.e., update appropriately) Dirty and Reference bits (e.g., ~LRU) in the Page Tables

Disk placement

- Bootstrap (e.g., out of disk sector 0) so the system can service a limited number of page faults before the OS is even loaded

CPEG323 51

Figure: the TLB, page table, physical memory, and disk storage, as in the earlier TLB figure, annotated "Very little hardware with software assist" and "Software"

The TLB acts as a cache on the page table for the entries that map to physical pages only

CPEG323 52

Summary

The Principle of Locality: a program is likely to access a relatively small portion of the address space at any instant of time.

- Temporal Locality: Locality in Time

- Spatial Locality: Locality in Space

Caches, TLBs, Virtual Memory all understood by examining how they deal with the four questions

1. Where can block be placed?

2. How is block found?

3. What block is replaced on miss?

4. How are writes handled?

Page tables map virtual address to physical address

TLBs are important for fast translation