Digital Economy P1 - Hardware P. Bakowski [email protected].

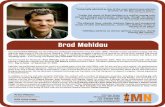

Copyright Brad Morantz 2003 Neural Networks An Overview Brad Morantz PhD Viral Immunology Center...

-

Upload

poppy-horn -

Category

Documents

-

view

214 -

download

0

Transcript of Copyright Brad Morantz 2003 Neural Networks An Overview Brad Morantz PhD Viral Immunology Center...

Copyright Brad Morantz 2003

Neural Networks: An Overview

Brad Morantz PhD

Viral Immunology Center

Georgia State University

[email protected]

www.geocities.com/bradscientist


Neural Networks

I think, therefore I am


Contents

1. Introduction
2. Expert Systems
3. Neural Networks
4. Prediction
5. Classification
6. Pattern Recognition
7. Overview
8. Comparisons
9. How a NN works
10. Examples
11. Applications
12. Future
13. Resources
14. Appendices
    1. Degrees of freedom
    2. Machine Learning
    3. Quantitative Methods


The flight to Dallas is cancelled

That’s Wonderful!


Why is it wonderful?

Either there is something wrong with:
– The airplane
– The pilot
– Dallas

If there is something wrong with any of these, I don’t want to be on the airplane

This example courtesy of Zig Ziglar


Has anyone been turned down on a credit card transaction for reasons other than late payments?


I was ID’d buying a VCR

This is what I normally do:
– I normally buy gas once a week
– I usually spend about $10
– I buy a book once a month

This is MY pattern


Scene at the Store

“I need to see your ID”

“That’s Wonderful!”


Why did the Salesperson ask for ID?

The credit card machine told her to
Why did the machine ask for it?
– They have a pattern of my normal activity
– Buying a VCR was not part of that pattern
– Expensive purchases were not normal for me
– It wanted to be sure that it was not a stolen card
– Criminals with stolen cards often buy expensive electronic items like VCRs
– It is a pattern for a stolen credit card


How did the credit card machine do this?

It used Artificial Intelligence
Computer program
– Expert System
– Neural Network
– Hybrid


Prediction

Last week, 4 apples cost $2.00
Yesterday, 6 apples cost $3.00
Today, 10 apples cost $5.00
How much will 5 apples cost tomorrow?

$2.50

This is linear, but an ANN can handle nonlinear relationships equally well
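The apple example can be checked with an ordinary least-squares line fit. The sketch below is plain Python, not a neural network; the function name `fit_line` is illustrative and not from the slides.

```python
# Observed (apples, total price) pairs from the slide.
observations = [(4, 2.00), (6, 3.00), (10, 5.00)]

def fit_line(points):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

slope, intercept = fit_line(observations)
predicted = slope * 5 + intercept
print(f"5 apples: ${predicted:.2f}")  # 5 apples: $2.50
```

The fitted slope is $0.50 per apple with an intercept of essentially zero, which reproduces the $2.50 answer.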


Classification Problem

| # wheels | Length | Noise | # seats | What is it? |
|---|---|---|---|---|
| 4 | short | quiet | 4 | car |
| 2 | short | loud | 1 | motorcycle |
| 6 | long | loud | lots | bus |
| 18 | very long | loud | 2 | semi |

With a few inputs, we classified the vehicle
Notice that the answer is a class, not a specific vehicle, e.g. a 300ZX
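To make the idea of sorting into classes concrete, here is a minimal sketch that picks the stored example sharing the most attribute values with a new vehicle. This is a simple attribute-matching stand-in, not a neural network, and the function name `classify` and the query vehicle are assumptions for illustration.

```python
# Training examples from the table: (wheels, length, noise, seats) -> class.
examples = [
    ((4, "short", "quiet", "4"), "car"),
    ((2, "short", "loud", "1"), "motorcycle"),
    ((6, "long", "loud", "lots"), "bus"),
    ((18, "very long", "loud", "2"), "semi"),
]

def classify(features):
    """Return the class of the stored example sharing the most attribute values."""
    def matches(example):
        stored, _ = example
        return sum(a == b for a, b in zip(stored, features))
    _, label = max(examples, key=matches)
    return label

# A quiet, short, four-wheeled vehicle with two seats (hypothetical query).
print(classify((4, "short", "quiet", "2")))  # car
```

The query matches no stored row exactly, yet it still lands in the "car" class because three of its four attributes agree with that row.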


Pattern Recognition

Your Friend Mary
George Washington
These are very specific answers


What do these examples have in common?

Learned
From experience
From historical data
By example
By organization


What is an Expert System?

Knowledge based system
Having an expert that you can ask questions
Computer program
– Has set of rules or heuristics
– From a human expert
– Behaves like human expert


Quick overview of expert system

Requires Expert
Requires Knowledge Engineer
Stores knowledge in set of rules
Must have rule for all possibilities
Contains bias, opinions, and emotions of expert
May not be current
Can ask it reason for its answer
Mycin, Teiresias, etc. (Gordon & Shortliffe)


What is a Neural Network?

A human brain
A porpoise brain
The brain in a living creature
A computer program
– Emulates biological brain
– Limited connections
Specialized computer chip


Three types of Functions

Prediction and Time Series Forecasting
– Like regression, but not constrained to linear
Classification
– Sort into a class, like cluster analysis
Pattern Recognition
– Fine-tuned classification
Not constrained to linear or Gaussian (normal) distribution


Advantages of Neural Network

No Expert needed
No Knowledge Engineer needed
Does not have bias of expert
Can interpolate for all cases
Learns from facts
Can resolve conflicts
Variables can be correlated (multicollinearity)


More Advantages

Learns relationships
Can make good model with noisy or incomplete data
Can handle nonlinear or discontinuous data
Can handle data of unknown or undefined distribution
Data driven


Disadvantages of Neural Net

Black Box
– Don’t know why or how
– Not sure of what it is looking at
Operator dependent
Don’t have knowledge in hand

* Many of these disadvantages are being overcome


Black Box

What happens inside the box is unknown
We can’t see into the box
We don’t know what it knows

[Figure: input → black box → output]


Key to Neural Networks

Learns input to output relationship


Biological Neural Network

Human brain has $4 \times 10^{10}$ to $10^{11}$ neurons
Each can have 10,000 connections*
Human baby makes 1 million connections per second until age 2
Speed of synapse is 1 kHz, much slower than computer (3.0 GHz)
Massively parallel structure

* Some estimates are much greater


How does a neuron work?

It sums the weighted inputs
– If it is enough, then neuron fires
– There can be as many as 10,000 or more inputs


Computer Neural Network

Von Neumann architecture
Serial machine with inherently parallel process
Series of mathematical equations
Simulates relatively small brain
Limited connectivity
Closely approximates complex nonlinear functions


Computer Emulation of NN

Called Artificial Neural Network (ANN)
Two uses
– Applications as shown
  • Forecasting/Prediction
  • Classification
  • Pattern recognition
– Use model to help understand biological neural network


Neuron Activation

Weights can be positive or negative
Negative weight inhibits neuron firing
$\text{Sum} = W_1 N_1 + W_2 N_2 + \dots + W_n N_n$
If sum is negative, neuron does not fire
If sum is positive, neuron fires
Fire means an output from neuron
Non-linear function
Some models include a threshold
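The firing rule on this slide can be sketched in a few lines. The function name `neuron_fires`, the default threshold of zero, and the example weights are assumptions chosen for illustration.

```python
def neuron_fires(inputs, weights, threshold=0.0):
    """Sum the weighted inputs and fire if the sum exceeds the threshold."""
    total = sum(w * n for w, n in zip(weights, inputs))
    return total > threshold

# Two excitatory inputs and one inhibitory (negative-weight) input.
print(neuron_fires([1.0, 1.0, 1.0], [0.6, 0.5, -0.4]))  # sum is positive: True
print(neuron_fires([1.0, 1.0, 1.0], [0.6, 0.5, -1.5]))  # sum is negative: False
```

Making the inhibitory weight more negative is enough to stop the neuron from firing, even though the inputs are unchanged.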


Neuron Activation

Linear
Sigmoidal
– $1 / (1 + e^{-s})$ where $s = \sum$ weighted inputs
– Result between 0 and +1
Hyperbolic Tangent
– $(e^{s} - e^{-s}) / (e^{s} + e^{-s})$ where $s = \sum$ weighted inputs
– Result between -1 and +1
Also called squashing or clamping function
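The two squashing functions can be written directly from their formulas. This is a minimal sketch in plain Python; the function names are illustrative.

```python
import math

def sigmoid(s):
    """Logistic function: squashes s into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-s))

def hyperbolic_tangent(s):
    """tanh written out from its definition: squashes s into (-1, 1)."""
    return (math.exp(s) - math.exp(-s)) / (math.exp(s) + math.exp(-s))

for s in (-5.0, 0.0, 5.0):
    print(f"s={s:+.1f}  sigmoid={sigmoid(s):.4f}  tanh={hyperbolic_tangent(s):.4f}")
```

Large negative sums are pushed toward the lower bound and large positive sums toward the upper bound, which is why these are called squashing functions. In practice `math.tanh` gives the same values as the hand-written version.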


What does the network look like?

This is a computer model, not biological
Left has 11 neurons, sea slug has 100

[Figure: two network diagrams labeled Feed Forward and Recurrent or Feedback]


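Small Neural Network

[Figure: feed-forward network with three input nodes (inputs I1, I2, I3; node functions F11, F21, F31), three hidden nodes (node functions F12, F22, F32), and two output nodes (node functions F13, F23; outputs Out1, Out2). Weights W111 through W313 connect the input nodes to the hidden nodes; weights W121, W122, W221, W222, W321, W322 connect the hidden nodes to the output nodes.]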


Mathematical Equations

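Input to Hidden$_{12}$ = $H_1$

$H_1 = [(I_1 \cdot F_{11}) \cdot W_{111}] + [(I_2 \cdot F_{21}) \cdot W_{211}] + [(I_3 \cdot F_{31}) \cdot W_{311}]$

$H_2 = \dots$

$H_3 = \dots$

$\text{Out}_1 = [(H_1 \cdot F_{12}) \cdot W_{121}] + [(H_2 \cdot F_{22}) \cdot W_{221}] + [(H_3 \cdot F_{32}) \cdot W_{321}]$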
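A forward pass through a network of this shape might look like the sketch below. It rests on several assumptions not stated on the slide: each F is read as a node function applied to that node's input, the layer-1 functions are taken as the identity, the layer-2 functions are sigmoids, the output functions F13 and F23 are left out (as in the Out1 equation), and the weight values are arbitrary.

```python
import math

def sigmoid(s):
    return 1.0 / (1.0 + math.exp(-s))

def forward(inputs, w_hidden, w_out, activation=sigmoid):
    """Forward pass for a small fully connected feed-forward network.

    w_hidden[i][k] plays the role of W(i+1)1(k+1): input node i -> hidden node k.
    w_out[i][k] plays the role of W(i+1)2(k+1): hidden node i -> output node k.
    """
    # Layer-1 node functions (F11, F21, F31) are taken as the identity here.
    hidden_in = [sum(inputs[i] * w_hidden[i][k] for i in range(len(inputs)))
                 for k in range(len(w_hidden[0]))]
    # Layer-2 node functions (F12, F22, F32) squash each hidden sum.
    hidden_out = [activation(h) for h in hidden_in]
    return [sum(hidden_out[i] * w_out[i][k] for i in range(len(hidden_out)))
            for k in range(len(w_out[0]))]

# Arbitrary illustrative weights: 3x3 + 3x2 = 15 in total.
w_hidden = [[0.2, -0.4, 0.1],
            [0.7, 0.3, -0.6],
            [-0.5, 0.8, 0.9]]
w_out = [[1.0, -1.0],
         [0.5, 0.5],
         [-0.3, 0.2]]

out1, out2 = forward([1.0, 0.0, 1.0], w_hidden, w_out)
n_weights = sum(len(row) for row in w_hidden) + sum(len(row) for row in w_out)
print(out1, out2, n_weights)
```

Counting the entries of the two weight tables gives 15, the number of weights in the 3-3-2 network.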


Comparison to Regression

OLS with 3 independent variables and 1 dependent variable would have a maximum of 3 variables, 3 coefficients, and 1 intercept

With 2 dependent variables, it would require Canonical Correlation (general linear model) and the same number of coefficients

ANN has 15 coefficients (weights) and activation functions can be nonlinear

Multicollinearity and VIF are not a problem in ANN


Macro View of Training

Setting all of the weights
To create optimal performance
Optimal adherence to training data
Really an optimization problem
– Optimal method depends on many variables
– See optimization lecture
Need objective function
Beware of local minima!


Optimization Methods

Back Propagation (most popular)
Gradient Descent
Generalized reduced gradient (GRG)
Simulated Annealing
Genetic Algorithm
Two or more output nodes
– Multi objective optimization
Many more methods
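As a minimal sketch of training as optimization, the code below runs plain gradient descent on a one-weight model, using the apple prices from the Prediction slide as training data. The mean-squared-error objective, the learning rate, and the iteration count are assumptions; a real network has many weights and an error surface that can contain local minima.

```python
# Training data: (apples, price) pairs; the model is price = w * apples.
data = [(4, 2.00), (6, 3.00), (10, 5.00)]

def objective(w):
    """Mean squared error of the one-weight model on the training data."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

def gradient(w):
    """Derivative of the objective with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in data) / len(data)

w = 0.0                # starting weight (arbitrary)
learning_rate = 0.005  # step size (assumed; too large a value diverges)
for _ in range(200):
    w -= learning_rate * gradient(w)

print(f"w = {w:.4f}, error = {objective(w):.6f}")
```

The weight settles at 0.5, the price per apple. This objective is a simple parabola with one minimum, so the starting point does not matter here.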


Training

Supervised
– Data given, along with answer
– Compare to prediction and go try again
– Historical data and/or rules
Unsupervised
– No teacher or feedback
– Self organizing


Training Data Set

Need more observations than weights
– Positive number of degrees of freedom
More observations is usually better
– Lower variance
– More knowledge
Watch aging of data
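The observations-versus-weights check can be sketched as follows. The helper names are illustrative, and the weight count assumes a fully connected feed-forward network with no bias terms.

```python
def count_weights(layer_sizes):
    """Weights in a fully connected feed-forward net (no bias terms)."""
    return sum(a * b for a, b in zip(layer_sizes, layer_sizes[1:]))

def degrees_of_freedom(n_observations, layer_sizes):
    """Observations minus weights; this should stay positive."""
    return n_observations - count_weights(layer_sizes)

print(count_weights([3, 3, 2]))            # 9 + 6 = 15
print(degrees_of_freedom(100, [3, 3, 2]))  # 85
print(degrees_of_freedom(10, [3, 3, 2]))   # -5: too few observations
```

Adding hidden nodes raises the weight count quickly, so a larger network needs a correspondingly larger training set.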


Data Window

Rolling window
– Keep all data
– Add new data as available
Moving window
– Train with fixed amount of data
– As new observation is added, oldest is deleted
– Necessary when underlying factors change
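The difference between the two windows can be sketched with generators that yield the training set after each new observation arrives. The function names and the sample stream are illustrative.

```python
from collections import deque

def rolling_window(stream):
    """Keep every observation; the training set grows over time."""
    window = []
    for obs in stream:
        window.append(obs)
        yield list(window)

def moving_window(stream, size):
    """Keep only the newest `size` observations; the oldest is dropped."""
    window = deque(maxlen=size)
    for obs in stream:
        window.append(obs)
        yield list(window)

stream = [10, 11, 12, 13, 14]
print(list(rolling_window(stream))[-1])         # [10, 11, 12, 13, 14]
print(list(moving_window(stream, size=3))[-1])  # [12, 13, 14]
```

A `deque` with `maxlen` discards the oldest item automatically when a new one is appended, which matches the moving-window rule.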


Data Window Continued

Weighted Window
– Morantz, Whalen, & Zhang
– Superset of rolling & moving window
– Oldest data is reduced in importance
– Has reduced residual by as much as 50%
– Multi factor ANOVA shows results significant in majority of applications with real world data
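One way to reduce the importance of older data is to weight each observation's error in the objective function. The slides do not give the weighting scheme that was used, so the linear ramp below is purely an assumption for illustration, as are the function names.

```python
def linear_ramp_weights(n_obs, oldest_weight=0.0):
    """Importance weights rising linearly from `oldest_weight` to 1.0 (newest)."""
    if n_obs == 1:
        return [1.0]
    step = (1.0 - oldest_weight) / (n_obs - 1)
    return [oldest_weight + i * step for i in range(n_obs)]

def weighted_squared_error(errors, weights):
    """Objective in which errors on older observations count for less."""
    return sum(w * e ** 2 for w, e in zip(weights, errors)) / sum(weights)

# Observations are ordered oldest first.
weights = linear_ramp_weights(5, oldest_weight=0.2)
print(weights)
```

Giving every observation a weight of 1 recovers the rolling window, and giving a weight of 0 to everything except the newest few recovers the moving window, which is the sense in which a weighted window contains both.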


Dynamic Learning

Continuous learning
– From mistakes and successes
– From new information
Shooting baskets example
– Too low. Learned: throw harder
– Too high. Learned: throw softer, but not as soft as before
– Basket! Learned: correct amount of “push”
Loaning $10 example


Inputs

One per input node
Ratio
Logical
Dummy
Categorical
Ordinal
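Non-numeric inputs have to be turned into numbers before they reach the input nodes. The sketch below shows one common way to code categorical and ordinal values; the function names, the category lists, and the scaling to the range 0 to 1 are assumptions for illustration.

```python
def dummy_code(value, categories):
    """Encode a categorical value as 0/1 dummy variables, one per category."""
    return [1.0 if value == c else 0.0 for c in categories]

def ordinal_code(value, ordered_levels):
    """Encode an ordinal value as its rank scaled to [0, 1]."""
    return ordered_levels.index(value) / (len(ordered_levels) - 1)

noise_levels = ["quiet", "loud"]
lengths = ["short", "long", "very long"]

# One network input per value in this list: a ratio input (number of wheels),
# two dummy variables for noise, and one ordinal input for length.
inputs = [4.0] + dummy_code("loud", noise_levels) + [ordinal_code("long", lengths)]
print(inputs)  # [4.0, 0.0, 1.0, 0.5]
```

Each entry of `inputs` feeds one input node, so adding a category adds a node.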


Outputs

One for single dependent variable
Multiple
– Prediction
– Classification
– Pattern recognition


Hidden Layer(s)

Increase complexity
Can increase accuracy
Can reduce degrees of freedom
– Need larger data set
Presently architecture up to programmer
Source for error
In future will be more automatic


Methodology

Split training data
– 80/20 is most common
– Other methods depending on # observations
Train on the 80%
Validate on the 20%
Never test on what you used for training
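An 80/20 split can be sketched as below. The function name, the fixed seed, and the random shuffle are assumptions; for time-ordered data a chronological split may be more appropriate than shuffling.

```python
import random

def split_train_validate(observations, train_fraction=0.8, seed=0):
    """Shuffle a copy of the data and split it into training and validation sets."""
    shuffled = list(observations)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

train, validate = split_train_validate(range(100))
print(len(train), len(validate))  # 80 20
```

Because the two lists are slices of one shuffled copy, no observation appears in both, which keeps validation data out of training.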


Hybrids

Use advantages of both Expert and ANN
Uses more knowledge than just one type
Can seed system with expert knowledge and then update with data
Sometimes hard to get all parts to work together
Harder to validate model


Example

You go some place that you have never been before, and get “bad vibes”
– Atmosphere, temperature, lighting, smell, coloring, numerous things
For some reason, brain associates these together, possibly some past experience
Gives you “bad feeling”


Applications

Credit card fraud
Military: submarine, tank, sniper detection, or target recognition
Security
Classify stars & planets
Data mining
Natural language recognition


Future

Rule extraction
Hybrids
Dynamic learning
Parallel processing
Dedicated chips
Bigger & more automatic
Machine Learning


Contacts

IEEE Transactions on Neural Networks
IEEE Intelligent Systems Journal
IEEE Neural Network Society
AAAI American Association for Artificial Intelligence
www.ieee.org
www.zsolutions.com
Internet
www.geocities.com/bradscientist


Appendix – degrees of freedom

Number of values free to vary
– Complex math definition
Must watch, not less than zero
e.g. 3X + 4Y = Z
– 3 unknowns, if know Z only one other can vary
– If have 3 equations, values can not vary
Large ANN needs big sample data


Appendix - Machine Learning

Machine learns from experience
From experience and data it improves its knowledge (rule base or weights)
Shooting baskets example


Appendix- Quantitative Methods

OLS Regression
Canonical Correlation - General Linear Model
Classification
Cluster Analysis