Chapter 12 Multiple Linear Regression Doing it with more variables! More is better. Chapter 12A.


Chapter 12 Multiple Linear Regression

Doing it with more variables!

More is better.

Chapter 12A

What are we doing?

12-1 Multiple Linear Regression Models

• Many applications of regression analysis involve situations in which there is more than one regressor variable.

• A regression model that contains more than one regressor variable is called a multiple regression model.

12-1.1 Introduction

• For example, suppose that the effective life of a cutting tool depends on the cutting speed and the tool angle. A possible multiple regression model could be

$$Y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \varepsilon$$

where

$Y$ – tool life

$x_1$ – cutting speed

$x_2$ – tool angle

The Model

$$Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \dots + \beta_k X_k + \varepsilon$$

More than one regressor or predictor variable.

Linear in the unknown parameters – the $\beta$’s.

$\beta_0$ – intercept, $\beta_i$ – partial regression coefficients, $\varepsilon$ – errors.

Can handle nonlinear functions as predictors, e.g. $X_3 = Z^2$.

Interactions can be present, e.g. X1X2.

The Data

The data collection step in a regression analysis

A Data Example

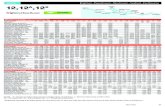

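| Team | Games Won | Passing Yds. | % Run Plays | Opp. Rushing Yds. |
|---|---|---|---|---|
| Oakland | 13 | 2285 | 45.3 | 1903 |
| Pittsburgh | 10 | 2971 | 53.8 | 1457 |
| Baltimore | 11 | 2309 | 74.1 | 1848 |
| Los Angeles | 10 | 2528 | 65.4 | 1564 |
| Dallas | 11 | 2147 | 78.3 | 1821 |
| Atlanta | 4 | 1689 | 47.6 | 2577 |
| Buffalo | 2 | 2566 | 54.2 | 2476 |
| Chicago | 7 | 2363 | 48 | 1984 |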

Example – Oakland games won:

$$13 = \beta_0 + \beta_1 \cdot 2285 + \beta_2 \cdot 45.3 + \beta_3 \cdot 1903 + \varepsilon_1$$

Similar equation for every data point.

More equations than beta’s
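To make the "more equations than betas" point concrete, here is a minimal sketch that stacks the eight team rows from the table into a design matrix with a leading column of ones. The variable names (`predictors`, `X`, `y`, `beta_hat`) are illustrative and not from the slides, and `numpy.linalg.lstsq` is just one convenient way to obtain a least squares fit.

```python
import numpy as np

# Eight teams from the table: passing yards, % run plays, opponent rushing yards.
predictors = np.array([
    [2285, 45.3, 1903], [2971, 53.8, 1457], [2309, 74.1, 1848], [2528, 65.4, 1564],
    [2147, 78.3, 1821], [1689, 47.6, 2577], [2566, 54.2, 2476], [2363, 48.0, 1984],
])
y = np.array([13, 10, 11, 10, 11, 4, 2, 7], dtype=float)  # games won

# Design matrix: a column of ones for the intercept, then one column per predictor.
X = np.column_stack([np.ones(len(y)), predictors])

n, p = X.shape  # n equations (one per team), p unknown coefficients
print(f"{n} equations, {p} unknown coefficients")

# With n > p the system X @ beta = y generally has no exact solution,
# so we take the beta that minimizes the sum of squared errors instead.
beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
residuals = y - X @ beta_hat
print("beta_hat:", beta_hat)
print("sum of squared residuals:", residuals @ residuals)
```

Only eight rows appear in the table, so the fitted numbers are for illustration only.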

Least Squares Estimation of the Parameters

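• The least squares function is given by

$$L = \sum_{i=1}^{n} \varepsilon_i^2 = \sum_{i=1}^{n} \Bigl( y_i - \beta_0 - \sum_{j=1}^{k} \beta_j x_{ij} \Bigr)^2$$

• The least squares estimates must satisfy

$$\frac{\partial L}{\partial \beta_0}\bigg|_{\hat{\beta}} = -2 \sum_{i=1}^{n} \Bigl( y_i - \hat{\beta}_0 - \sum_{j=1}^{k} \hat{\beta}_j x_{ij} \Bigr) = 0$$

$$\frac{\partial L}{\partial \beta_j}\bigg|_{\hat{\beta}} = -2 \sum_{i=1}^{n} \Bigl( y_i - \hat{\beta}_0 - \sum_{j'=1}^{k} \hat{\beta}_{j'} x_{ij'} \Bigr) x_{ij} = 0, \qquad j = 1, 2, \dots, k$$

The least squares normal equations

$$n\hat{\beta}_0 + \hat{\beta}_1 \sum_{i=1}^{n} x_{i1} + \dots + \hat{\beta}_k \sum_{i=1}^{n} x_{ik} = \sum_{i=1}^{n} y_i$$

$$\hat{\beta}_0 \sum_{i=1}^{n} x_{ij} + \hat{\beta}_1 \sum_{i=1}^{n} x_{ij} x_{i1} + \dots + \hat{\beta}_k \sum_{i=1}^{n} x_{ij} x_{ik} = \sum_{i=1}^{n} x_{ij} y_i, \qquad j = 1, 2, \dots, k$$

• The solution to the normal equations is the set of least squares estimators of the regression coefficients.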

The Matrix Approach

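$$\mathbf{y} = \mathbf{X}\boldsymbol{\beta} + \boldsymbol{\varepsilon}$$

where

$$\mathbf{y} = \begin{bmatrix} y_1 \\ y_2 \\ \vdots \\ y_n \end{bmatrix}, \quad
\mathbf{X} = \begin{bmatrix} 1 & x_{11} & x_{12} & \cdots & x_{1k} \\ 1 & x_{21} & x_{22} & \cdots & x_{2k} \\ \vdots & \vdots & \vdots & & \vdots \\ 1 & x_{n1} & x_{n2} & \cdots & x_{nk} \end{bmatrix}, \quad
\boldsymbol{\beta} = \begin{bmatrix} \beta_0 \\ \beta_1 \\ \vdots \\ \beta_k \end{bmatrix}, \quad
\boldsymbol{\varepsilon} = \begin{bmatrix} \varepsilon_1 \\ \varepsilon_2 \\ \vdots \\ \varepsilon_n \end{bmatrix}$$

• $\mathbf{y}$ – vector of observed responses (the values we are trying to predict)

• $\mathbf{X}$ – our observations of the predictor variables

• $\boldsymbol{\beta}$ – the vector of coefficients we must estimate

• $\boldsymbol{\varepsilon}$ – unknown vector of error terms, possibly normally distributed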

Solving those normal equations

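Here $\mathbf{X}'$ denotes the transpose of $\mathbf{X}$.

$$\begin{aligned}
\mathbf{Y} &= \mathbf{X}\mathbf{B} \\
\mathbf{X}'\mathbf{Y} &= \mathbf{X}'\mathbf{X}\mathbf{B} \\
(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{Y} &= (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{X}\mathbf{B} = \mathbf{B} \\
\hat{\mathbf{B}} &= (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{Y}
\end{aligned}$$

$$\mathbf{X}'\mathbf{X} =
\begin{bmatrix} 1 & 1 & \cdots & 1 \\ x_{11} & x_{21} & \cdots & x_{n1} \\ \vdots & \vdots & & \vdots \\ x_{1k} & x_{2k} & \cdots & x_{nk} \end{bmatrix}
\begin{bmatrix} 1 & x_{11} & \cdots & x_{1k} \\ 1 & x_{21} & \cdots & x_{2k} \\ \vdots & \vdots & & \vdots \\ 1 & x_{n1} & \cdots & x_{nk} \end{bmatrix}$$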
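The normal-equation solution can be checked numerically. The sketch below, using the eight team rows from the data example, forms X'X and X'y and solves the resulting system; the names `XtX`, `Xty`, and `beta_normal` are illustrative, not from the slides. It uses `numpy.linalg.solve` rather than an explicit inverse, which is a common numerical choice and not something the slides prescribe.

```python
import numpy as np

# Eight teams from the data example: passing yards, % run plays, opponent rushing yards.
predictors = np.array([
    [2285, 45.3, 1903], [2971, 53.8, 1457], [2309, 74.1, 1848], [2528, 65.4, 1564],
    [2147, 78.3, 1821], [1689, 47.6, 2577], [2566, 54.2, 2476], [2363, 48.0, 1984],
])
y = np.array([13, 10, 11, 10, 11, 4, 2, 7], dtype=float)  # games won
X = np.column_stack([np.ones(len(y)), predictors])

XtX = X.T @ X  # (k+1) x (k+1) symmetric matrix
Xty = X.T @ y  # (k+1)-vector

# Solve (X'X) beta = X'y, i.e. the normal equations.
beta_normal = np.linalg.solve(XtX, Xty)

# Cross-check against NumPy's least squares routine, which avoids forming X'X.
beta_lstsq, *_ = np.linalg.lstsq(X, y, rcond=None)

print("normal equations:", beta_normal)
print("lstsq           :", beta_lstsq)
```

Forming X'X squares the condition number of the problem, so for poorly scaled or nearly collinear predictors a QR- or SVD-based routine such as `lstsq` is usually the safer choice.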

Least-Squares in Matrix Form

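$$L = \sum_{i=1}^{n} \varepsilon_i^2 = \boldsymbol{\varepsilon}'\boldsymbol{\varepsilon} = (\mathbf{y} - \mathbf{X}\boldsymbol{\beta})'(\mathbf{y} - \mathbf{X}\boldsymbol{\beta})$$

$$L = (\mathbf{y}' - \boldsymbol{\beta}'\mathbf{X}')(\mathbf{y} - \mathbf{X}\boldsymbol{\beta}) = \mathbf{y}'\mathbf{y} - 2\boldsymbol{\beta}'\mathbf{X}'\mathbf{y} + \boldsymbol{\beta}'\mathbf{X}'\mathbf{X}\boldsymbol{\beta}$$

$$\frac{\partial L}{\partial \boldsymbol{\beta}}\bigg|_{\hat{\boldsymbol{\beta}}} = -2\mathbf{X}'\mathbf{y} + 2\mathbf{X}'\mathbf{X}\hat{\boldsymbol{\beta}} = \mathbf{0} \quad \text{implies} \quad \mathbf{X}'\mathbf{X}\hat{\boldsymbol{\beta}} = \mathbf{X}'\mathbf{y} \quad \text{(the normal equations)}$$

Solving for $\hat{\boldsymbol{\beta}}$, we get

$$\hat{\boldsymbol{\beta}} = (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{y}, \quad \text{analogous to } \hat{\beta}_1 = \frac{S_{xy}}{S_{xx}}$$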

More Matrix Approach

Example 12-2

Wire bonding is a method of making interconnections between a microchip and other electronics as part of semiconductor device fabrication.


Some Basic Terms and Concepts

Residuals are estimators of the error term in the regression model:

$$e_i = y_i - \hat{y}_i$$

We use an unbiased estimator of the variance of the error term.

$$\hat{\sigma}^2 = \frac{\sum_{i=1}^{n} e_i^2}{n - p} = \frac{SS_E}{n - p}$$

$SS_E$ is called the residual sum of squares and $n - p$ is the residual degrees of freedom, where $p = k + 1$ is the number of estimated coefficients. ‘Residual’ – what remains after the regression explains all of the variability in the data it can.
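As a rough illustration of residuals and the variance estimate, the sketch below fits the eight team rows from the data example and computes the residuals, the residual sum of squares, and the estimate SS_E/(n − p). Variable names such as `ss_e` and `sigma2_hat` are my own labels, not from the slides.

```python
import numpy as np

# Eight teams from the data example: passing yards, % run plays, opponent rushing yards.
predictors = np.array([
    [2285, 45.3, 1903], [2971, 53.8, 1457], [2309, 74.1, 1848], [2528, 65.4, 1564],
    [2147, 78.3, 1821], [1689, 47.6, 2577], [2566, 54.2, 2476], [2363, 48.0, 1984],
])
y = np.array([13, 10, 11, 10, 11, 4, 2, 7], dtype=float)  # games won
X = np.column_stack([np.ones(len(y)), predictors])

beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)

y_hat = X @ beta_hat   # fitted values
e = y - y_hat          # residuals e_i = y_i - y_hat_i

n, p = X.shape         # p counts the intercept plus the k regressors
dof = n - p            # residual degrees of freedom
ss_e = float(e @ e)    # residual sum of squares
sigma2_hat = ss_e / dof

print("residuals:", np.round(e, 3))
print(f"SS_E = {ss_e:.4f}, n - p = {dof}, sigma^2 estimate = {sigma2_hat:.4f}")
```

With an intercept in the model the residuals sum to zero up to rounding, which is a quick sanity check on any fit.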

Estimating $\sigma^2$

An unbiased estimator of $\sigma^2$ is

$$\hat{\sigma}^2 = \frac{SS_E}{n - p}$$

Properties of the Least Squares Estimators

Note that in this treatment, the elements of X are not random variables. They are the observed values of the $x_{ij}$. We treat them as constants, which often appear as coefficients of random variables like the $\varepsilon_i$.

$$E(\hat{\boldsymbol{\beta}}) = \boldsymbol{\beta}$$

$$V(\hat{\boldsymbol{\beta}}) = \sigma^2 (\mathbf{X}'\mathbf{X})^{-1}$$

• The first result says that the estimators are unbiased.

• The second result shows the covariance structure of the estimators – diagonal and off-diagonal elements.

• It is important to remember that in a typical multiple regression model the estimates of the coefficients are not independent of one another.

Properties of the Least Squares Estimators

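Unbiased estimators:

$$E(\hat{\boldsymbol{\beta}}) = E\bigl[(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{Y}\bigr] = \boldsymbol{\beta}$$

Covariance Matrix:

$$\operatorname{Cov}(\hat{\boldsymbol{\beta}}) = \sigma^2 (\mathbf{X}'\mathbf{X})^{-1}$$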

Covariance Matrix of the Regression Coefficients

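$$\mathbf{C} = (\mathbf{X}'\mathbf{X})^{-1} = \begin{bmatrix} C_{00} & C_{01} & C_{02} \\ C_{10} & C_{11} & C_{12} \\ C_{20} & C_{21} & C_{22} \end{bmatrix}$$

$\mathbf{C}$ is symmetric, so that $C_{ij} = C_{ji}$, and

$$V(\hat{\beta}_j) = \sigma^2 C_{jj}, \qquad \operatorname{cov}(\hat{\beta}_i, \hat{\beta}_j) = \sigma^2 C_{ij} \quad \text{for } i \neq j$$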

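• In general, we do not know $\sigma^2$. We estimate it by the mean square error of the residuals, $\hat{\sigma}^2 = SS_E/(n-p)$. Substituting it gives the estimated standard error $se(\hat{\beta}_j) = \sqrt{\hat{\sigma}^2 C_{jj}}$, where $C_{jj}$ is the $j$-th diagonal element of $(\mathbf{X}'\mathbf{X})^{-1}$.

• The quality of our estimates of the regression coefficients is very much related to $(\mathbf{X}'\mathbf{X})^{-1}$.

• The estimates of the coefficients are not independent.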
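A minimal sketch of the estimated covariance matrix of the coefficients, again using the eight team rows from the data example. It plugs the mean square error into σ²(X'X)⁻¹ and reads the standard errors off the diagonal; the correlation matrix at the end is an extra I have added to make the "not independent" point visible, and all variable names are illustrative.

```python
import numpy as np

# Eight teams from the data example: passing yards, % run plays, opponent rushing yards.
predictors = np.array([
    [2285, 45.3, 1903], [2971, 53.8, 1457], [2309, 74.1, 1848], [2528, 65.4, 1564],
    [2147, 78.3, 1821], [1689, 47.6, 2577], [2566, 54.2, 2476], [2363, 48.0, 1984],
])
y = np.array([13, 10, 11, 10, 11, 4, 2, 7], dtype=float)  # games won
X = np.column_stack([np.ones(len(y)), predictors])
n, p = X.shape

beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
e = y - X @ beta_hat
sigma2_hat = float(e @ e) / (n - p)   # mean square error

C = np.linalg.inv(X.T @ X)            # C = (X'X)^{-1}
cov_beta = sigma2_hat * C             # estimated covariance matrix of beta_hat
se = np.sqrt(np.diag(cov_beta))       # estimated standard errors

# Off-diagonal entries rescaled to correlations between coefficient estimates.
corr = cov_beta / np.outer(se, se)

print("standard errors:", se)
print("correlations between estimates:\n", np.round(corr, 3))
```

Nonzero off-diagonal correlations are what "the estimates are not independent" means in practice.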

Test for Significance of Regression

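The appropriate hypotheses are

$$H_0: \beta_1 = \beta_2 = \dots = \beta_k = 0 \qquad H_1: \beta_j \neq 0 \text{ for at least one } j$$

The test statistic is

$$F_0 = \frac{SS_R / k}{SS_E / (n - p)} = \frac{MS_R}{MS_E}$$

Reject $H_0$ if $f_0 > f_{\alpha,\,k,\,n-p}$.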
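The F statistic for significance of regression can be computed from the sums of squares. The sketch below does this for the eight team rows from the data example, using the decomposition SS_T = SS_R + SS_E. The critical value 6.59 is the tabulated upper 5% point of the F distribution with (3, 4) degrees of freedom; it is hard-coded here as an assumption to avoid a dependency on a statistics library.

```python
import numpy as np

# Eight teams from the data example: passing yards, % run plays, opponent rushing yards.
predictors = np.array([
    [2285, 45.3, 1903], [2971, 53.8, 1457], [2309, 74.1, 1848], [2528, 65.4, 1564],
    [2147, 78.3, 1821], [1689, 47.6, 2577], [2566, 54.2, 2476], [2363, 48.0, 1984],
])
y = np.array([13, 10, 11, 10, 11, 4, 2, 7], dtype=float)  # games won
X = np.column_stack([np.ones(len(y)), predictors])
n, p = X.shape
k = p - 1                                    # number of regressors

beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
e = y - X @ beta_hat

ss_t = float(((y - y.mean()) ** 2).sum())    # total variability in y
ss_e = float(e @ e)                          # left unexplained by the model
ss_r = ss_t - ss_e                           # explained by the regression

ms_r = ss_r / k
ms_e = ss_e / (n - p)
f0 = ms_r / ms_e

F_CRIT = 6.59  # tabulated f_{0.05, 3, 4}; valid only for k = 3 and n - p = 4
print(f"SS_R = {ss_r:.3f}, SS_E = {ss_e:.3f}, SS_T = {ss_t:.3f}")
print(f"F0 = {f0:.3f}, reject H0 at alpha = 0.05: {f0 > F_CRIT}")
```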

ANOVA

• The basic idea is that the data (the $y_i$ values) has some variability – if it didn’t there would be nothing to explain.

• A successful model explains most of the variability, leaving little to be carried by the error term.

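$R^2$

The coefficient of multiple determination

$$R^2 = \frac{SS_R}{SS_T} = 1 - \frac{SS_E}{SS_T}$$

The Adjusted $R^2$

$$R^2_{\text{adj}} = 1 - \frac{SS_E / (n - p)}{SS_T / (n - 1)}$$

• The adjusted $R^2$ statistic penalizes the analyst for adding terms to the model.

• It can help guard against overfitting (including regressors that are not really useful).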
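To see how the two statistics behave when a term is added, the sketch below compares a two-regressor model with the three-regressor model on the eight team rows from the data example. The helper `r2_stats` is a hypothetical function written for this illustration. R² can never decrease when a regressor is added, while the adjusted version may move either way.

```python
import numpy as np

def r2_stats(X, y):
    """Return (R^2, adjusted R^2) for a least squares fit of y on the columns of X."""
    n, p = X.shape
    beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
    e = y - X @ beta_hat
    ss_e = float(e @ e)
    ss_t = float(((y - y.mean()) ** 2).sum())
    r2 = 1.0 - ss_e / ss_t
    r2_adj = 1.0 - (ss_e / (n - p)) / (ss_t / (n - 1))
    return r2, r2_adj

# Eight teams from the data example: passing yards, % run plays, opponent rushing yards.
predictors = np.array([
    [2285, 45.3, 1903], [2971, 53.8, 1457], [2309, 74.1, 1848], [2528, 65.4, 1564],
    [2147, 78.3, 1821], [1689, 47.6, 2577], [2566, 54.2, 2476], [2363, 48.0, 1984],
])
y = np.array([13, 10, 11, 10, 11, 4, 2, 7], dtype=float)  # games won
ones = np.ones(len(y))

X_small = np.column_stack([ones, predictors[:, :2]])  # first two regressors only
X_full = np.column_stack([ones, predictors])          # all three regressors

r2_small, adj_small = r2_stats(X_small, y)
r2_full, adj_full = r2_stats(X_full, y)

print(f"two regressors:   R^2 = {r2_small:.4f}, adjusted R^2 = {adj_small:.4f}")
print(f"three regressors: R^2 = {r2_full:.4f}, adjusted R^2 = {adj_full:.4f}")
```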

Tests on Individual Regression Coefficients and Subsets of Coefficients

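The hypotheses are

$$H_0: \beta_j = \beta_{j0} \qquad H_1: \beta_j \neq \beta_{j0}$$

The test statistic is

$$T_0 = \frac{\hat{\beta}_j - \beta_{j0}}{\sqrt{\hat{\sigma}^2 C_{jj}}} = \frac{\hat{\beta}_j - \beta_{j0}}{se(\hat{\beta}_j)}$$

• Reject $H_0$ if $|t_0| > t_{\alpha/2,\,n-p}$.

• This is called a partial or marginal test, because $\hat{\beta}_j$ depends on all the other regressors that are in the model.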
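A sketch of the individual-coefficient t statistics for the eight team rows from the data example, testing each coefficient against zero. The function name `t_statistics` and the hard-coded critical value are assumptions for illustration: 2.776 is the tabulated value of t with α/2 = 0.025 and 4 degrees of freedom, so it applies only to this n and p.

```python
import numpy as np

def t_statistics(X, y, beta_null=0.0):
    """Return (beta_hat, standard errors, t statistics) for H0: beta_j = beta_null."""
    n, p = X.shape
    beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
    e = y - X @ beta_hat
    sigma2_hat = float(e @ e) / (n - p)
    C = np.linalg.inv(X.T @ X)
    se = np.sqrt(sigma2_hat * np.diag(C))
    return beta_hat, se, (beta_hat - beta_null) / se

# Eight teams from the data example: passing yards, % run plays, opponent rushing yards.
predictors = np.array([
    [2285, 45.3, 1903], [2971, 53.8, 1457], [2309, 74.1, 1848], [2528, 65.4, 1564],
    [2147, 78.3, 1821], [1689, 47.6, 2577], [2566, 54.2, 2476], [2363, 48.0, 1984],
])
y = np.array([13, 10, 11, 10, 11, 4, 2, 7], dtype=float)  # games won
X = np.column_stack([np.ones(len(y)), predictors])

beta_hat, se, t0 = t_statistics(X, y)

T_CRIT = 2.776  # tabulated t_{0.025, 4}; valid only for n - p = 4
reject = np.abs(t0) > T_CRIT

for name, b, s, t, r in zip(["intercept", "pass yds", "% run", "opp rush yds"],
                            beta_hat, se, t0, reject):
    print(f"{name:>12}: estimate = {b: .5f}, se = {s:.5f}, t0 = {t: .3f}, reject H0: {r}")
```

Each test is conditional on the other regressors being in the model, so dropping one regressor can change the conclusions for the rest.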

Linear Independence of the Predictors - some random thoughts

Instabilities in regression coefficients will occur where the values of one of the predictors are ‘nearly’ a linear combination of other predictors.

It would be incredibly unlikely that you would get an exact linear dependence. Coming close is bad enough.
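The effect of a near-linear dependence can be seen on synthetic data. In the sketch below (all data simulated, with an arbitrary seed and noise scale chosen for illustration), one design has two unrelated predictors and the other has a second predictor that is almost a copy of the first. The condition number of X and the diagonal of (X'X)⁻¹, which scales the coefficient variances, both grow sharply in the near-collinear case.

```python
import numpy as np

rng = np.random.default_rng(0)  # arbitrary seed, for reproducibility only
n = 50

x1 = rng.normal(size=n)
x2_indep = rng.normal(size=n)              # unrelated to x1
x2_near = x1 + 1e-4 * rng.normal(size=n)   # almost an exact copy of x1

def design(x1, x2):
    """Design matrix with an intercept column and two predictors."""
    return np.column_stack([np.ones(len(x1)), x1, x2])

X_indep = design(x1, x2_indep)
X_near = design(x1, x2_near)

cond_indep = np.linalg.cond(X_indep)
cond_near = np.linalg.cond(X_near)

# Diagonal of C = (X'X)^{-1}: V(beta_hat_j) = sigma^2 * C_jj.
C_indep = np.diag(np.linalg.inv(X_indep.T @ X_indep))
C_near = np.diag(np.linalg.inv(X_near.T @ X_near))

print(f"condition number, independent predictors:    {cond_indep:.2f}")
print(f"condition number, nearly collinear:          {cond_near:.2e}")
print("C_jj, independent predictors:", C_indep)
print("C_jj, nearly collinear:      ", C_near)
```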

What is the dimension of the space you are working in? It is n, where n is the number of data points in your sample. The prediction you are trying to match is an n-dimensional vector. You are trying to match it with a set of k (k << n) predictors. The predictors had better be related to the prediction if this is going to be successful!

Interactions and Higher Order Terms – still thinking randomly

Including interaction terms (products of two predictors), higher order terms, or functions of predictors does not make the model nonlinear.

Suppose you believe that the following relation may apply:

$$Y = \beta_0 + \beta_1 X_1 + \beta_{22} X_2^2 + \beta_{23} X_2 X_3 + \beta_4 \exp(X_4) + \varepsilon$$

This is still a linear regression model – linear in the beta’s.

After recording the values of $X_1$ through $X_4$, you simply calculate the values of the predictors and enter them into the columns of the worksheet for the regression software.

The model would become nonlinear if you were trying to estimate a parameter inside of the exponential function, e.g. $\beta_4 \exp(\beta_{4e} X_4)$.
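The "calculate the predictors into columns" step can be sketched as follows. The data are simulated and the coefficient values in `beta_true` are made up for the illustration. Because the response is generated without noise, ordinary least squares on the transformed columns recovers the coefficients, which shows that the squared, interaction, and exponential terms are handled like any other column.

```python
import numpy as np

rng = np.random.default_rng(1)  # arbitrary seed
n = 40

# Raw recorded variables X1..X4 (simulated here).
X1, X2, X3, X4 = rng.uniform(0.0, 2.0, size=(4, n))

# Hypothetical coefficients: beta_0, beta_1, beta_22, beta_23, beta_4.
beta_true = np.array([1.0, 2.0, -0.5, 0.75, 0.3])

# Each transformed predictor becomes one column of the design matrix.
D = np.column_stack([
    np.ones(n),    # intercept
    X1,            # X1
    X2 ** 2,       # X2 * X2 (higher order term)
    X2 * X3,       # interaction
    np.exp(X4),    # function of a predictor
])

y = D @ beta_true  # noise-free response, so the fit should be exact up to rounding

beta_hat, *_ = np.linalg.lstsq(D, y, rcond=None)
print("recovered coefficients:", np.round(beta_hat, 6))
```

A parameter inside the exponential could not be handled this way, since no fixed column of the design matrix could represent it.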

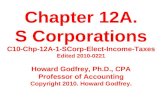

The NFL Again – problem 12-15

Predictor variables

• Att – pass attempts

• Comp – completed passes

• Pct Comp – percent completed passes

• Yds – yards gained passing

• Yds per Att – yards gained per pass attempt

• Pct TD – percent of attempts that are TDs

• Long – longest pass completion

• Int – number of interceptions

• Pct Int – percentage of attempts that are interceptions

Response Variable – quarterback rating

The NFL Again – problem 12-15

| Player | Att | Comp | Pct Comp | Yds | Yds per Att | TD | Pct TD | Long | Int | Pct Int | Rating |
|---|---|---|---|---|---|---|---|---|---|---|---|
| D.Culpepper, MIN | 548 | 379 | 69.2 | 4717 | 8.61 | 39 | 7.1 | 82 | 11 | 2 | 110.9 |
| D.McNabb, PHI | 469 | 300 | 64 | 3875 | 8.26 | 31 | 6.6 | 80 | 8 | 1.7 | 104.7 |
| B.Griese, TAM | 336 | 233 | 69.3 | 2632 | 7.83 | 20 | 6 | 68 | 12 | 3.6 | 97.5 |
| M.Bulger, STL | 485 | 321 | 66.2 | 3964 | 8.17 | 21 | 4.3 | 56 | 14 | 2.9 | 93.7 |
| B.Favre, GBP | 540 | 346 | 64.1 | 4088 | 7.57 | 30 | 5.6 | 79 | 17 | 3.1 | 92.4 |
| J.Delhomme, CAR | 533 | 310 | 58.2 | 3886 | 7.29 | 29 | 5.4 | 63 | 15 | 2.8 | 87.3 |
| K.Warner, NYG | 277 | 174 | 62.8 | 2054 | 7.42 | 6 | 2.2 | 62 | 4 | 1.4 | 86.5 |
| M.Hasselbeck, SEA | 474 | 279 | 58.9 | 3382 | 7.14 | 22 | 4.6 | 60 | 15 | 3.2 | 83.1 |
| A.Brooks, NOS | 542 | 309 | 57 | 3810 | 7.03 | 21 | 3.9 | 57 | 16 | 3 | 79.5 |
| T.Rattay, SFX | 325 | 198 | 60.9 | 2169 | 6.67 | 10 | 3.1 | 65 | 10 | 3.1 | 78.1 |
| M.Vick, ATL | 321 | 181 | 56.4 | 2313 | 7.21 | 14 | 4.4 | 62 | 12 | 3.7 | 78.1 |
| J.Harrington, DET | 489 | 274 | 56 | 3047 | 6.23 | 19 | 3.9 | 62 | 12 | 2.5 | 77.5 |
| V.Testaverde, DAL | 495 | 297 | 60 | 3532 | 7.14 | 17 | 3.4 | 53 | 20 | 4 | 76.4 |
| P.Ramsey, WAS | 272 | 169 | 62.1 | 1665 | 6.12 | 10 | 3.7 | 51 | 11 | 4 | 74.8 |
| J.McCown, ARI | 408 | 233 | 57.1 | 2511 | 6.15 | 11 | 2.7 | 48 | 10 | 2.5 | 74.1 |
| P.Manning, IND | 497 | 336 | 67.6 | 4557 | 9.17 | 49 | 9.9 | 80 | 10 | 2 | 121.1 |
| D.Brees, SDC | 400 | 262 | 65.5 | 3159 | 7.9 | 27 | 6.8 | 79 | 7 | 1.8 | 104.8 |
| B.Roethlisberger, PIT | 295 | 196 | 66.4 | 2621 | 8.88 | 17 | 5.8 | 58 | 11 | 3.7 | 98.1 |
| T.Green, KAN | 556 | 369 | 66.4 | 4591 | 8.26 | 27 | 4.9 | 70 | 17 | 3.1 | 95.2 |
| T.Brady, NEP | 474 | 288 | 60.8 | 3692 | 7.79 | 28 | 5.9 | 50 | 14 | 3 | 92.6 |
| C.Pennington, NYJ | 370 | 242 | 65.4 | 2673 | 7.22 | 16 | 4.3 | 48 | 9 | 2.4 | 91 |
| B.Volek, TEN | 357 | 218 | 61.1 | 2486 | 6.96 | 18 | 5 | 48 | 10 | 2.8 | 87.1 |
| J.Plummer, DEN | 521 | 303 | 58.2 | 4089 | 7.85 | 27 | 5.2 | 85 | 20 | 3.8 | 84.5 |
| D.Carr, HOU | 466 | 285 | 61.2 | 3531 | 7.58 | 16 | 3.4 | 69 | 14 | 3 | 83.5 |
| B.Leftwich, JAC | 441 | 267 | 60.5 | 2941 | 6.67 | 15 | 3.4 | 65 | 10 | 2.3 | 82.2 |
| C.Palmer, CIN | 432 | 263 | 60.9 | 2897 | 6.71 | 18 | 4.2 | 76 | 18 | 4.2 | 77.3 |
| J.Garcia, CLE | 252 | 144 | 57.1 | 1731 | 6.87 | 10 | 4 | 99 | 9 | 3.6 | 76.7 |
| D.Bledsoe, BUF | 450 | 256 | 56.9 | 2932 | 6.52 | 20 | 4.4 | 69 | 16 | 3.6 | 76.6 |
| K.Collins, OAK | 513 | 289 | 56.3 | 3495 | 6.81 | 21 | 4.1 | 63 | 20 | 3.9 | 74.8 |
| K.Boller, BAL | 464 | 258 | 55.6 | 2559 | 5.52 | 13 | 2.8 | 57 | 11 | 2.4 | 70.9 |

The NFL Again – problem 12-15

Fit a multiple regression model using Pct Comp, Pct TD, and the Pct Int

Estimate $\sigma^2$

Determine the standard errors of the regression coefficients

Predict the rating when Pct Comp = 60%, Pct TD is 4%, and the Pct Int = 3%
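One way to work these four parts numerically is sketched below. The rows are the Pct Comp, Pct TD, Pct Int, and Rating columns transcribed from the quarterback table; the variable names and the use of NumPy are my own choices, not part of the problem statement. No expected output is shown, since the numbers should come from running the code.

```python
import numpy as np

# Columns: Pct Comp, Pct TD, Pct Int, Rating (30 quarterbacks, in table order).
data = np.array([
    [69.2, 7.1, 2.0, 110.9], [64.0, 6.6, 1.7, 104.7], [69.3, 6.0, 3.6, 97.5],
    [66.2, 4.3, 2.9, 93.7],  [64.1, 5.6, 3.1, 92.4],  [58.2, 5.4, 2.8, 87.3],
    [62.8, 2.2, 1.4, 86.5],  [58.9, 4.6, 3.2, 83.1],  [57.0, 3.9, 3.0, 79.5],
    [60.9, 3.1, 3.1, 78.1],  [56.4, 4.4, 3.7, 78.1],  [56.0, 3.9, 2.5, 77.5],
    [60.0, 3.4, 4.0, 76.4],  [62.1, 3.7, 4.0, 74.8],  [57.1, 2.7, 2.5, 74.1],
    [67.6, 9.9, 2.0, 121.1], [65.5, 6.8, 1.8, 104.8], [66.4, 5.8, 3.7, 98.1],
    [66.4, 4.9, 3.1, 95.2],  [60.8, 5.9, 3.0, 92.6],  [65.4, 4.3, 2.4, 91.0],
    [61.1, 5.0, 2.8, 87.1],  [58.2, 5.2, 3.8, 84.5],  [61.2, 3.4, 3.0, 83.5],
    [60.5, 3.4, 2.3, 82.2],  [60.9, 4.2, 4.2, 77.3],  [57.1, 4.0, 3.6, 76.7],
    [56.9, 4.4, 3.6, 76.6],  [56.3, 4.1, 3.9, 74.8],  [55.6, 2.8, 2.4, 70.9],
])

y = data[:, 3]
X = np.column_stack([np.ones(len(y)), data[:, :3]])
n, p = X.shape

# Part 1: fit the model.
beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)

# Part 2: estimate sigma^2 by SS_E / (n - p).
e = y - X @ beta_hat
sigma2_hat = float(e @ e) / (n - p)

# Part 3: standard errors from sigma^2_hat * (X'X)^{-1}.
se = np.sqrt(sigma2_hat * np.diag(np.linalg.inv(X.T @ X)))

# Part 4: predict at Pct Comp = 60, Pct TD = 4, Pct Int = 3.
x0 = np.array([1.0, 60.0, 4.0, 3.0])
y_pred = float(x0 @ beta_hat)

print("coefficients (intercept, Pct Comp, Pct TD, Pct Int):", beta_hat)
print("sigma^2 estimate:", sigma2_hat)
print("standard errors:", se)
print("predicted rating:", y_pred)
```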

Now the solutions

More NFL – problem 12-31

Test the regression model for significance using $\alpha = 0.05$

Find the p-value

Conduct a t-test on each regression coefficient

These are very good problems to answer.

Again with the answers

Even more answers

Next Time

Confidence Intervals, again

Modeling and Model Adequacy

Also, Doing it with Computers

Computers are good.