Cache Memory


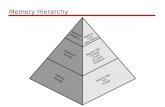

Big is Slow

• The more phone numbers stored, the slower the access

7.1

555-1212

Spatial Locality - You’re likely to call a lot of people you know

Temporal Locality - If you call somebody today, you’re more likely to call them tomorrow, too

• Consider looking up a telephone number

• In your memory

• In your personal organizer

• In the personal directory

• In the Phone book

And so it is with Computers

• Main memory

• Big

• Slow

• “Far” from CPU

7.1

[Figure: CPU registers connected to main memory. Load or I-Fetch moves data from main memory to the CPU; Store moves data from the registers to main memory.]

Assembly language programmers and compilers manage all transitions between registers and main memory

• Our system has two kinds of memory

• Registers

• Close to CPU

• Small number of them

• Fast

The problem...

7.1

[Figure: the LW instruction moving through the pipeline stages IF, RF, EX, M, WB. The Instruction Fetch (IF) and Memory Access (M) stages both go to memory.]

• Since every instruction has to be fetched from memory, we lose big time

• We lose double big time when executing a load or store

• DRAM Memory access takes around 5ns

• At 1 GHz, that’s 5 cycles

• At 2 GHz, that’s 10 cycles

• At 3 GHz, that’s way too many cycles…

Note: Access time is much faster in some memory modes, but basic access is around 50ns
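The cycle counts above are just access time multiplied by clock frequency. A minimal sketch of that arithmetic, using the 5 ns figure from the bullets and the 50 ns figure from the note; the function name `access_cycles` is made up for illustration:

```python
def access_cycles(access_time_ns, clock_ghz):
    """Clock cycles that elapse during one memory access."""
    # At 1 GHz there is exactly one cycle per nanosecond.
    return access_time_ns * clock_ghz

for ghz in (1, 2, 3):
    fast = access_cycles(5, ghz)    # 5 ns access, as in the bullets
    basic = access_cycles(50, ghz)  # 50 ns basic access, as in the note
    print(f"{ghz} GHz: {fast} cycles at 5 ns, {basic} cycles at 50 ns")
```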


A hopeful thought

7.1

• Static RAMs are much faster than DRAMs

• <1 ns possible (instead of 5ns)

• So, build memory out of SRAMs

• SRAMs cost about 20 times as much as DRAM

• Technology limitations cause the price difference

• Access time gets worse if larger SRAM systems are needed (small is fast...)

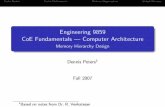

A more hopeful thought

7.1

• Remember the telephone directory?

• Do the same thing with computer memory

[Figure: CPU registers, then an SRAM cache, then main memory (DRAM). Load, I-Fetch, and Store go through the cache.]

The big question: What goes in the cache?

• Build a hierarchy of memories between the registers and main memory

• Closer to CPU: Small and fast (frequently used)

• Closer to Main Memory: Big and slow (more rarely used)

Locality

7.1

Temporal locality: The program is very likely to access the same data again and again over time

    i = i+1;
    if (i<20) { z = i*i + 3*i - 2; }
    q = A[i];

Spatial locality: The program is very likely to access data that is close together

    p = A[i];
    q = A[i+1];
    r = A[i] * A[i+3] - A[i+2];

    name = employee.name;
    rank = employee.rank;
    salary = employee.salary;

The Cache

7.2

Cache: 4 most recently accessed memory locations (exploits temporal locality)

Address  Data
1000     5600
1016     0
1048     2447
1028     43

Issues: How do we know what’s in the cache? What if the cache is full?

Main Memory Fragment

Address  Data
1000     5600
1004     3223
1008     23
1012     1122
1016     0
1020     32324
1024     845
1028     43
1032     976
1036     77554
1040     433
1044     7785
1048     2447
1052     775
1056     433

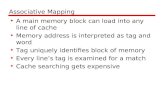

Goals for Cache Organization

• Complete

• Data may come from anywhere in main memory

• Fast lookup

• We have to look up data in the cache on every memory access

• Exploits temporal locality

• Stores only the most recently accessed data

• Exploits spatial locality

• Stores related data

Direct Mapping

7.2

6-bit Address: Tag (2 bits) | Index (2 bits) | Byte offset (2 bits, always zero for words)

Cache

Index  Valid  Tag  Data
00     Y      00   5600
01     Y      11   775
10     Y      01   845
11     N      00   33234

Main Memory

Address   Data
00 00 00  5600
00 01 00  3223
00 10 00  23
00 11 00  1122
01 00 00  0
01 01 00  32324
01 10 00  845
01 11 00  43
10 00 00  976
10 01 00  77554
10 10 00  433
10 11 00  7785
11 00 00  2447
11 01 00  775
11 10 00  433
11 11 00  3649

In a direct-mapped cache:

- Each memory address corresponds to one location in the cache

- There are many different memory locations for each cache entry (four in this case)
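The 6-bit address split on this slide can be sketched with shifts and masks. This is an illustration only; `split_address` is a hypothetical helper name, and the 2/2/2 field widths are the ones shown for this small example cache:

```python
def split_address(addr):
    """Split a 6-bit address into (tag, index, byte_offset), 2 bits each."""
    byte_offset = addr & 0b11      # lowest 2 bits, zero for word accesses
    index = (addr >> 2) & 0b11     # next 2 bits pick the cache entry
    tag = (addr >> 4) & 0b11       # top 2 bits identify which word is stored
    return tag, index, byte_offset

# Every word address whose index field is 01 competes for the same entry.
same_entry = [a for a in range(0, 64, 4) if split_address(a)[1] == 0b01]
print([format(a, "06b") for a in same_entry])
```

Four word addresses share each index, which is the "four in this case" on the slide.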

Hits and Misses

7.2

• The hit rate and miss rate are the fraction of memory accesses that are hits and misses

• Typically, hit rates are around 95%

• Many times instructions and data are considered separately when calculating hit/miss rates

• When the CPU reads from memory:

• Calculate the index and tag

• Is the data in the cache? Yes – a hit, you’re done!

• Data not in cache? This is a miss.

• Read the word from memory, give it to the CPU.

• Update the cache so we won’t miss again. Write the data and tag for this memory location to the cache. (Exploits temporal locality)
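The read procedure above can be sketched as a small simulation. This is a minimal model under stated assumptions, not a hardware description: the class name `DirectMappedCache`, the dictionary standing in for main memory, and the choice of one-word blocks are all illustrative.

```python
class DirectMappedCache:
    """Direct-mapped cache with one-word blocks (read path only)."""

    def __init__(self, index_bits, memory):
        self.index_bits = index_bits
        self.memory = memory                       # word-aligned byte address -> word
        self.entries = [None] * (1 << index_bits)  # None = invalid, else (tag, data)
        self.hits = 0
        self.misses = 0

    def read(self, addr):
        word_addr = addr & ~0b11                   # drop the byte offset
        index = (word_addr >> 2) & ((1 << self.index_bits) - 1)
        tag = word_addr >> (2 + self.index_bits)
        entry = self.entries[index]
        if entry is not None and entry[0] == tag:
            self.hits += 1                         # hit: done
            return entry[1]
        self.misses += 1                           # miss: read the word from memory
        data = self.memory[word_addr]
        self.entries[index] = (tag, data)          # write data and tag into the cache
        return data

memory = {0b000000: 5600, 0b010000: 0, 0b110100: 775}
cache = DirectMappedCache(index_bits=2, memory=memory)
cache.read(0b000000)   # miss: the cache starts empty
cache.read(0b000000)   # hit: temporal locality pays off
cache.read(0b010000)   # miss: same index 00, different tag, replaces 5600
cache.read(0b000000)   # miss again: the earlier entry was replaced
print(cache.hits, cache.misses)
```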

A 1024-entry Direct-mapped Cache

7.2

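[Figure: 1024-entry direct-mapped cache. The 32-bit memory address splits into Tag (20 bits, bits 31-12) | Index (10 bits, bits 11-2) | Byte offset (bits 1-0). The index selects one of entries 0 to 1023, each holding V, Tag, and 32 bits of Data (one block). A valid entry whose 20-bit tag matches produces Hit! and the data.]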

Example - 1024-entry Direct Mapped Cache

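[Figure: cache contents table with columns Index, V, Tag, Data for entries 0 to 1023. The entry at index 3 is valid, with tag 14 and data 34238829.]

Assume the cache has been used for a while, so it’s not empty...

Memory address fields: Tag - 20 bits (bits 31-12) | Index - 10 bits (bits 11-2) | Byte offset (bits 1-0)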

LW $t3, 0x0000E00C($0)

address = 0000 0000 0000 0000 1110 0000 0000 1100

tag = 14, index = 3, byte offset = 0

Hit: Data is 34238829

LB $t3, 0x00003005($0) (let’s assume the word at mem[0x00003004] = 8764)

address = 0000 0000 0000 0000 0011 0000 0000 0101

tag = 3, index = 1, byte offset = 1

Miss: load word from mem[0x00003004] and write into cache at index 1
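The tag, index, and byte offset for both accesses can be checked with a few shifts and masks. A small sketch for the 20/10/2 split used by this 1024-entry cache; the function name `decode` is hypothetical:

```python
def decode(addr):
    """Split a 32-bit address into (tag, index, byte_offset) for a
    1024-entry direct-mapped cache with one-word blocks."""
    byte_offset = addr & 0x3         # bits 1-0
    index = (addr >> 2) & 0x3FF      # bits 11-2, 10 bits
    tag = addr >> 12                 # bits 31-12, 20 bits
    return tag, index, byte_offset

print(decode(0x0000E00C))  # the LW example
print(decode(0x00003005))  # the LB example
```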

Cache entry at index 1 after the miss: tag 3, data 8764

7.2

byte address

So, how’d we do?

7.2

Miss rates for DEC 3100 (MIPS machine)

Separate 64KB Instruction/Data Caches (16K 1-word blocks)

Benchmark  Instruction miss rate  Data miss rate  Combined miss rate
spice      1.2%                   1.3%            1.2%
gcc        6.1%                   2.1%            5.4%

Note: The combined miss rate isn’t just the average

Direct Mapping Review

7.2

6-bit Address: Tag (2 bits) | Index (2 bits) | Byte offset (2 bits, always zero for words)

Each word has only one place it can be in the cache: Index must match exactly

Cache

Index  Valid  Tag  Data
00     Y      00   5600
01     Y      11   775
10     Y      01   845
11     N      00   33234

Main Memory

Address   Data
00 00 00  5600
00 01 00  3223
00 10 00  23
00 11 00  1122
01 00 00  0
01 01 00  32324
01 10 00  845
01 11 00  43
10 00 00  976
10 01 00  77554
10 10 00  433
10 11 00  7785
11 00 00  2447
11 01 00  775
11 10 00  433
11 11 00  3649

Memory Address: Tag (upper bits, through bit 31) | Index (starting at bit 2) | Byte offset (bits 1-0). Split depends on cache size

Total Memory Requirements

7.2

For a direct-mapped cache with 2^n slots and 32-bit addresses

One Slot: V (1 bit) | Tag (32 - n - 2 bits) | Data, 1 word (32 bits)

The tag is what remains of the address after the n index bits and the 2 byte offset bits.

Total size of a direct-mapped cache with 2^n blocks = 2^n x (32 + (32 - n - 2) + 1) = 2^n x (63 - n) bits

Note: Small caches take more space per entry!

Warning: Normally “cache size” refers only to the data portion and ignores the tags and valid bits.
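The size formula can be evaluated directly. A minimal sketch, assuming one-word blocks and 32-bit addresses as on the slide; `total_cache_bits` is an illustrative name:

```python
def total_cache_bits(n, address_bits=32, word_bits=32):
    """Total storage (data + tag + valid) for 2**n one-word slots."""
    tag_bits = address_bits - n - 2          # address minus index and byte offset
    bits_per_slot = word_bits + tag_bits + 1  # data + tag + valid bit
    return (1 << n) * bits_per_slot

for n in (4, 10, 14):
    total = total_cache_bits(n)
    data_only = (1 << n) * 32
    print(f"n={n}: {total} bits total, {total / data_only:.2f}x the data portion")
```

The ratio falls as n grows because the tag gets shorter, which is the point of the note about small caches.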

Missed me, Missed me...

7.2

• What to do on a hit:

• Carry on... (Hits should take one cycle or less)

• What to do on an instruction fetch miss:

• Undo PC increment (PC <-- PC-4)

• Do a memory read

• Stall until memory returns the data

• Update the cache (data, tag and valid) at index

• Un-stall

• What to do on a load miss

• Same thing, except don’t mess with the PC

Missed me, Missed me...

7.2

• What to do on a store (hit or miss)

• Won’t do to just write it to the cache

• The cache would have a different (newer) value than main memory

• Simple Write-Through

• Write both the cache and memory

• Works correctly, but slowly

• Buffered Write-Through

• Write the cache

• Buffer a write request to main memory

• 1 to 10 buffer slots are typical

Types of misses

• Cold miss: During initialization, when the cache is empty

• Capacity miss: When the cache is full

• Conflict miss: The cache is not full, but the data asks for a location that’s taken

Replacement Policy

• Which data should be replaced on a capacity/conflict miss?

• Random: simple, but not very useful

• Least Recently Used (LRU): exploits temporal locality

• Least Frequently Used (LFU): better, but harder to implement
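As an illustration of LRU, here is a minimal sketch of a single cache set that tracks recency with an ordered dictionary. The class name `LRUSet` and the use of letters as tags are assumptions made for the example; real hardware approximates this bookkeeping with a few status bits per set.

```python
from collections import OrderedDict

class LRUSet:
    """One cache set with a fixed number of ways and LRU replacement."""

    def __init__(self, ways):
        self.ways = ways
        self.blocks = OrderedDict()   # tag -> None, least recently used first

    def access(self, tag):
        """Return True on a hit, False on a miss (the block is then loaded)."""
        if tag in self.blocks:
            self.blocks.move_to_end(tag)      # mark as most recently used
            return True
        if len(self.blocks) >= self.ways:
            self.blocks.popitem(last=False)   # evict the least recently used
        self.blocks[tag] = None
        return False

lru = LRUSet(ways=2)
outcomes = [lru.access(tag) for tag in "ABACB"]
print(outcomes)
```

In this trace the access to C evicts B rather than A, because A was touched more recently.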

Splitting up

• It is common to use two separate caches for Instructions and for Data

• All Instruction fetches use the I-cache

• All data accesses (loads and stores) use the D-cache

• This allows the CPU to access the I-cache at the same time it is accessing the D-cache

• Still have to share a single memory

[Figure: pipeline stages IF, RF, EX, M, WB. IF accesses the I-cache while M accesses the D-cache.]

7.2

Note: The hit rate will probably be lower than for a combined cache of the same total size.

What about Spatial Locality?

7.2

• Spatial locality says that physically close data is likely to be accessed close together

• On a cache miss, don’t just grab the word needed, but also the words nearby

• The easiest way to do this is to increase the block size

[Figure: address fields for a cache with 4-word blocks: Tag (18 bits, bits 31-14) | Index (10 bits, bits 13-4) | Block offset (2 bits, bits 3-2) | Byte offset (2 bits, bits 1-0). Each cache entry holds V, Tag, and Data for one 4-word block (Word 3, Word 2, Word 1, Word 0).]

All words in the same block have the same index and tag

Note: 2^2 = 4

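32KByte/4-Word Block D.M. Cache

7.2

32 KB / 4 Words/Block / 4 Bytes/Word --> 2K blocks (2^11 = 2K)

[Figure: the 32-bit address splits into Tag (17 bits, bits 31-15) | Index (11 bits, bits 14-4) | Block offset (2 bits, bits 3-2) | Byte offset (2 bits, bits 1-0). The index selects one of entries 0 to 2047, each holding V, Tag, and Data (a 4-word block). A 17-bit tag compare produces Hit!, and the block offset drives a 4-to-1 mux (inputs 0 1 2 3) that selects the 32-bit data word.]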
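The field widths follow mechanically from the cache size, block size, and associativity. A hedged sketch, assuming all sizes are powers of two and treating block offset plus byte offset as one combined offset field; `field_widths` is a hypothetical helper:

```python
def field_widths(cache_bytes, block_bytes, ways=1, address_bits=32):
    """Return (tag_bits, index_bits, offset_bits) for a cache.

    offset_bits covers both the block offset and the byte offset.
    All sizes are assumed to be powers of two.
    """
    num_sets = cache_bytes // (block_bytes * ways)
    offset_bits = block_bytes.bit_length() - 1   # log2(block size in bytes)
    index_bits = num_sets.bit_length() - 1       # log2(number of sets)
    tag_bits = address_bits - index_bits - offset_bits
    return tag_bits, index_bits, offset_bits

# 32 KB direct-mapped cache with 4-word (16-byte) blocks
print(field_widths(32 * 1024, 16))
# 1024-entry direct-mapped cache with 1-word blocks (4 KB of data)
print(field_widths(4 * 1024, 4))
```

The first call reproduces the 17-bit tag and 11-bit index of this slide, with 4 offset bits split as 2 block offset bits and 2 byte offset bits.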

How Much Change?

7.2

Miss rates for DEC 3100 (MIPS machine)

Separate 64KB Instruction/Data Caches (16K 1-word blocks or 4K 4-word blocks)

Benchmark  Block size (words)  Instruction miss rate  Data miss rate  Combined miss rate
spice      1                   1.2%                   1.3%            1.2%
gcc        1                   6.1%                   2.1%            5.4%
spice      4                   0.3%                   0.6%            0.4%
gcc        4                   2.0%                   1.7%            1.9%

The issue of Writes

7.2

Perform a write to a location with index 1000, tag 2420, word 1 (value 4334)

On a read miss, we read the entire block from memory into the cache

On a write hit, we write one word into the block. The other words in theblock are unchanged.

On a write miss, we write one word into the block and update the tag.

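[Figure: block with index 1000, columns V, tag, word 3, word 2, word 1, word 0. Before the write the block is valid with tag 3000; after the write the tag is 2420 and word 1 holds 4334.]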

The other words are still the old data (for tag 3000). Bad news!

Solution 1: Don’t update the cache on a write miss. Write only to memory.

Solution 2: On a write miss, first read the referenced block in (including the old value of the word being written), then write the new word into the cache and write-through to memory.
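Solution 2 can be sketched as follows. This is only an illustration: the dictionaries standing in for the cache and for main memory, the `(tag, index)` key, and all data values other than the slide's index 1000, tags 3000 and 2420, and value 4334 are made up.

```python
def write_word(cache, memory, index, tag, word, value):
    """Write-through store that fetches the block first on a write miss."""
    entry = cache.get(index)
    if entry is None or entry["tag"] != tag:
        # Write miss: read the whole referenced block in, so the other
        # three words belong to the new tag rather than the old one.
        entry = {"tag": tag, "words": list(memory[(tag, index)])}
        cache[index] = entry
    entry["words"][word] = value            # write the new word into the cache
    memory[(tag, index)][word] = value      # and write through to memory

# Hypothetical contents; words are listed as [word 0, word 1, word 2, word 3].
memory = {(3000, 1000): [1, 2, 3, 4], (2420, 1000): [10, 20, 30, 40]}
cache = {1000: {"tag": 3000, "words": [1, 2, 3, 4]}}

write_word(cache, memory, index=1000, tag=2420, word=1, value=4334)
print(cache[1000])
```

After the call, every word in the block belongs to tag 2420, so no stale data from tag 3000 remains.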

Choosing a block size

7.2

• Large block sizes help with spatial locality, but...

• It takes time to read the memory in

• Larger block sizes increase the time for misses

• It reduces the number of blocks in the cache

• Number of blocks = cache size/block size

• Need to find a middle ground

• 16-64 bytes works nicely

Other Cache organizations

7.3

Direct Mapped

Address = Tag | Index | Block offset

Each address has only one possible location

[Figure: 16 entries indexed 0 to 15, each with V, Tag, Data]

Fully Associative

Address = Tag | Block offset

No Index

[Figure: entries with V, Tag, Data and no index column]

Fully Associative vs. Direct Mapped

7.3

• Fully associative caches provide much greater flexibility

• Nothing gets “thrown out” of the cache until it is completely full

• Direct-mapped caches are more rigid

• Any cached data goes directly where the index says to, even if the rest of the cache is empty

• A problem, though...

• Fully associative caches require a complete search through all the tags to see if there’s a hit

• Direct-mapped caches only need to look one place

A Compromise

7.3

2-Way set associative

Address = Tag | Index | Block offset

Each address has two possible locations with the same index

One fewer index bit: 1/2 the indexes

[Figure: 8 sets indexed 0 to 7, each with two entries of V, Tag, Data]

4-Way set associative

Address = Tag | Index | Block offset

Each address has four possible locations with the same index

Two fewer index bits: 1/4 the indexes

[Figure: 4 sets indexed 0 to 3, each with four entries of V, Tag, Data]

Example: ARM processor cache

• 4 Kbyte direct-mapped cache

• Source: ARM System Developer’s Guide

Example: ARM processor cache

• 4 Kbyte 4-way set associative cache

• Source: ARM System Developer’s Guide

Set Associative Example

7.3

Three caches with 8 blocks each: Direct-Mapped (indexes 000 to 111), 2-Way Set Assoc. (indexes 00 to 11), 4-Way Set Assoc. (indexes 0 to 1). All entries start out invalid (V = 0).

10-bit address fields: Tag (3-5 bits) | Index (1-3 bits) | Block offset (2 bits) | Byte offset (2 bits)

Address     Direct-Mapped  2-Way Set Assoc.  4-Way Set Assoc.
0100111000  Miss           Miss              Miss
1100110100  Miss           Miss              Miss
0100111100  Miss           Hit               Hit
0110110000  Miss           Miss              Miss
1100111000  Miss           Miss              Hit

[Figure: final contents of the three caches after the five accesses]
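The hit and miss pattern for the five 10-bit addresses can be reproduced with a short simulation. A minimal sketch, assuming LRU replacement within each set and 8 blocks total in every configuration; the function name `simulate` is illustrative:

```python
from collections import OrderedDict

def simulate(addresses, index_bits, ways, offset_bits=4):
    """Return 'Hit'/'Miss' for each access to a set-associative cache.

    offset_bits covers the 2-bit block offset plus the 2-bit byte offset.
    Each set uses LRU replacement.
    """
    sets = [OrderedDict() for _ in range(1 << index_bits)]
    results = []
    for addr in addresses:
        index = (addr >> offset_bits) & ((1 << index_bits) - 1)
        tag = addr >> (offset_bits + index_bits)
        blocks = sets[index]
        if tag in blocks:
            blocks.move_to_end(tag)            # refresh recency on a hit
            results.append("Hit")
        else:
            if len(blocks) >= ways:
                blocks.popitem(last=False)     # evict least recently used
            blocks[tag] = True
            results.append("Miss")
    return results

accesses = [0b0100111000, 0b1100110100, 0b0100111100,
            0b0110110000, 0b1100111000]
direct = simulate(accesses, index_bits=3, ways=1)
two_way = simulate(accesses, index_bits=2, ways=2)
four_way = simulate(accesses, index_bits=1, ways=4)
print(direct, two_way, four_way, sep="\n")
```

All five addresses map to the same set in every configuration, so the only thing that changes between runs is how many blocks that set can hold.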

New Performance Numbers

7.3

Miss rates for DEC 3100 (MIPS machine)

Separate 64KB Instruction/Data Caches (4K 4-word blocks)

Benchmark  Associativity  Instruction miss rate  Data miss rate  Combined miss rate
spice      Direct         0.3%                   0.6%            0.4%
gcc        Direct         2.0%                   1.7%            1.9%
spice      2-way          0.3%                   0.6%            0.4%
gcc        2-way          1.6%                   1.4%            1.5%
spice      4-way          0.3%                   0.6%            0.4%
gcc        4-way          1.6%                   1.4%            1.5%

Example

• Assuming a memory access time of 40 ns and a cache access time of 4 ns, estimate the average access time with a

• Hit rate of 90%

• Hit rate of 95%

• Hit rate of 99%

Example 2

• Assuming a memory access time of 40 ns and a cache access time of 4 ns, calculate the hit rate required for an average access time of 5 ns
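One way to set up both calculations is sketched below. It assumes the simplest model, in which a hit costs the cache access time and a miss costs the memory access time; the slides do not state which model is intended, and a model that charges the cache time on every access plus the memory time on a miss gives slightly different numbers. The function names are illustrative.

```python
def average_access_time(hit_rate, cache_ns=4.0, memory_ns=40.0):
    """Weighted average: hits cost cache_ns, misses cost memory_ns."""
    return hit_rate * cache_ns + (1.0 - hit_rate) * memory_ns

def required_hit_rate(target_ns, cache_ns=4.0, memory_ns=40.0):
    """Solve h*cache + (1 - h)*memory = target for h."""
    return (memory_ns - target_ns) / (memory_ns - cache_ns)

for h in (0.90, 0.95, 0.99):
    print(f"hit rate {h:.0%}: {average_access_time(h):.2f} ns")
print(f"hit rate needed for 5 ns: {required_hit_rate(5.0):.1%}")
```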

Example 3

(A) Calculate the cache size, block size and total RAM size for a cache in a 32-bit address bus system assuming:

• A 2-bit block offset and a 6-bit index and direct-mapped cache

• A 3-bit block offset and a 20-bit tag and a 4-way set associative cache

(B) Assuming a 32-bit memory address bus with a main memory access time of 40 ns, a direct-mapped cache access time of 4 ns, a cache size of 1 KB and a block size of 4 words:

i) Indicate whether each access in the following address access sequence is a hit or a miss, and what kind

ii) Calculate the access times. The replacement policy is Least Recently Used (LRU).

• Lw $4, 0x732A2120($0)

• Lb $5, 0x732A2130($0)

• Lb $6, 0xA32A232E($0)

• Lb $6, 0x732A2320($0)

• Lb $6, 0x923B2120($0)

• Lb $6, 0x923B2121($0)

• Lb $6, 0x532C2122($0)

• Lb $6, 0xA32A2123($0)

• Lw $4, 0x732A2120($0)

• Lb $5, 0x532C2130($0)

iii) Calculate the cache hit rate after the above accesses

(C) Repeat for a 2-way associative cache

(D) Again for a 4-way associative cache

Example 4

Calculate the tag and index fields for a cache in a 32-bit address bus system assuming:

• 1 MByte cache size, block size 16, direct mapped cache

• 8 KByte cache size, block size 4, 4-way set associative cache

Calculate the total RAM required for the implementation of the two caches

Example 5

• Assuming a main memory access time of 40 ns, an L1 cache access time of 4 ns and an L2 cache access time of 10 ns:

• Calculate the average access time assuming L1 hit rate 95% and combined L1-L2 hit rate 98%

• Calculate the L1-L2 combined hit rate required for an average access time of 4.5 ns assuming L1 hit rate 95%
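A possible setup for the two-level case is sketched below. It assumes each access is charged only the time of the level that finally supplies the data (L1, L2, or main memory); the slides do not spell out the model, so treat the formula as one reasonable reading rather than the intended answer. Function names are illustrative.

```python
def two_level_average(l1_hit, combined_hit, l1_ns=4.0, l2_ns=10.0, memory_ns=40.0):
    """Average access time when each access pays only for the level that hits."""
    l2_only = combined_hit - l1_hit      # fraction served by L2 after an L1 miss
    miss = 1.0 - combined_hit            # fraction that goes to main memory
    return l1_hit * l1_ns + l2_only * l2_ns + miss * memory_ns

def required_combined_hit(target_ns, l1_hit, l1_ns=4.0, l2_ns=10.0, memory_ns=40.0):
    """Solve l1_hit*l1 + (c - l1_hit)*l2 + (1 - c)*memory = target for c."""
    return (l1_hit * (l1_ns - l2_ns) + memory_ns - target_ns) / (memory_ns - l2_ns)

print(f"{two_level_average(0.95, 0.98):.2f} ns")
print(f"{required_combined_hit(4.5, 0.95):.2%}")
```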

Example

• For the 4KByte, direct-mapped cache with block size 4 shown, show the cache contents after the following accesses

• Lw $4, 0x52134120($0)

• Lb $5, 0x732A2130($0)

• Lb $6, 0xA32A232E($0)

• Lb $6, 0x245A2310($0)

• Lb $6, 0x923B2120($0)

Index V Tag Word 3 Word 2 Word 1 Word 0

0

1

2

3

Address Data

0x245A2310 0x00001234

0x245A2314 0x00012345

0x245A2318 0x00023456

0x245A231C 0x00034567

…

0x52134120 0xFFFFABCD

0x52134124 0xFFFFBCDE

0x52134128 0xFFFFCDEF

0x5213412C 0xFFFFDEF0

…

0x732A2130 0x45678901

0x732A2134 0x56789012

0x732A2138 0x67890123

0x732A213C 0x78901234

…

0x923B2120 0x0000ABCD

0x923B2124 0x0000BCDE

0x923B2128 0x0000CDEF

0x923B212C 0x0000DEF0

…

0xA32A2320 0x2222ABCD

0xA32A2324 0x2222BCDE

0xA32A2328 0x2222CDEF

0xA32A232C 0x2222DEF0

…