Benchmarking Domain-specific Expert Search using Workshop Program Committees


Georgeta Bordea (1), Toine Bogers (2) & Paul Buitelaar (1)

(1) Digital Enterprise Research Institute, National University of Ireland

(2) Royal School of Library & Information Science, University of Copenhagen

CSTA workshop @ CIKM 2013, October 28, 2013

Outline

• Introduction

• Domain-specific test collections for expert search

- Information retrieval

- Semantic web

- Computational linguistics

• Benchmarking our new collections

- Expert finding

- Expert profiling

• Discussion & conclusions


Introduction

• Knowledge workers spend around 25% of their time searching for information

- 99% report using other people as information sources

- 14.4% of their time is spent on this (56% depending on your definition)

- Why do people search for other people? (Hertzum & Pejtersen, 2005)

‣ Search documents to find relevant people

‣ Search people to find relevant documents

• Expert search engines support this need for people search

- Searching for people instead of documents


Introduction

- Example expert search queries: “machine learning”, “speech recognition”

Related work

• Historical solution (1980s and 1990s)

- Manually constructing a database of people’s expertise

• Automatic approaches to expert search since the 2000s

- Automatically retrieve expertise evidence and associate it with experts

- Expert finding (“Who is the expert on topic X?”)

‣ Find the experts on a specific topic

- Expert profiling (“What is the expertise of person Y?”)

‣ Find out what one expert knows about different topics
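The two tasks can be read as the same person-topic association queried from opposite directions. The sketch below is purely illustrative: the `scores` table, the people in it and the helper names `find_experts` and `profile_expert` are hypothetical, and a real system would estimate such scores from documents rather than hard-code them.

```python
# Hypothetical person-topic association scores (illustration only).
scores = {
    ("alice", "machine learning"): 0.9,
    ("alice", "speech recognition"): 0.2,
    ("bob", "machine learning"): 0.4,
    ("bob", "speech recognition"): 0.8,
    ("carol", "machine learning"): 0.6,
}


def find_experts(topic, scores):
    """Expert finding: rank people for one topic by association score."""
    ranked = [(person, s) for (person, t), s in scores.items() if t == topic]
    return [person for person, _ in sorted(ranked, key=lambda x: x[1], reverse=True)]


def profile_expert(person, scores):
    """Expert profiling: rank topics for one person by association score."""
    ranked = [(t, s) for (p, t), s in scores.items() if p == person]
    return [t for t, _ in sorted(ranked, key=lambda x: x[1], reverse=True)]


print(find_experts("machine learning", scores))  # ['alice', 'carol', 'bob']
print(profile_expert("bob", scores))  # ['speech recognition', 'machine learning']
```

The same table answers both questions; only the fixed dimension changes.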


Related work

• TREC Enterprise track (2005-2008)

- Focused on enterprise search → searching the data of an organization

- W3C collection (2005-2006)

- CSIRO collection (2007-2008)

• UvT Expert Collection (2007, updated in 2012)

- University-wide crawl of expertise evidence

‣ Publications, course descriptions, research descriptions, personal home pages

- Topics & relevance (self-)assessments from manual expertise database


Related work

Collection      W3C        CSIRO      UvT
# people        1,092      3,490      496
# documents     331,037    370,715    36,699
# topics        99         50         981

• Problems with these data sets

- Relevance assessments

‣ W3C → assessments by people outside the organization are inaccurate and incomplete

‣ CSIRO → assessments by co-workers are biased towards their social network

‣ UvT → self-assessments by experts are subjective and incomplete

- Focus on a single organization → relatively few experts per expertise area

Solution: Domain-specific test collections

• Documents

- Where? Collect publications from relevant journals and conferences in a specific domain

- Why? More challenging because expertise is distinguished at a finer level of granularity

• Topics

- Where? Collect topic descriptions from conference workshop websites

- Why? Rich descriptions with explicitly identified sub-topics (“areas of interest”)

• Relevance assessments

- Where? Program committees listed on workshop websites

- Why? Combines peer judgments with self-assessment

Collection 1: Information retrieval (IR)

• Research domain(s)

- Information retrieval, digital libraries, and recommender systems

• Topics

- Workshops held between 2001 and 2012 at conferences with a substantial portion dedicated to these domains

‣ CIKM

‣ SIGIR

‣ ECIR

‣ WWW

‣ WSDM

‣ IIiX

‣ RecSys

‣ ECDL

‣ JCDL

‣ TPDL

Collection 1: Information retrieval (IR)

• Documents

- Based on the DBLP Computer Science Bibliography

‣ Good coverage of the research domains

‣ ArnetMiner version available with (automatically extracted) citation information

- Selected publications from all relevant IR venues

‣ Core venues → conferences hosting the selected IR workshops (~9,000 docs)

‣ Curated venues → additional venues with substantial IR coverage (~16,000 docs)

‣ A venue must have at least 5 publications in the ArnetMiner DBLP data set

‣ Resulted in ~25,000 publications

- Collected full-text versions using Google Scholar for 54.1% of publications
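The venue-size rule can be sketched as a simple filter. This assumes each publication is a dict with a `venue` field (a hypothetical schema, not the actual ArnetMiner DBLP format) and that `candidate_venues` holds the core and curated venue names; `select_publications` is a hypothetical helper.

```python
from collections import Counter

MIN_PUBLICATIONS = 5  # venue-size threshold stated on the slide


def select_publications(publications, candidate_venues, min_pubs=MIN_PUBLICATIONS):
    """Keep publications from candidate venues that have at least `min_pubs` papers."""
    counts = Counter(pub["venue"] for pub in publications)
    # Counter returns 0 for venues that never occur, so they are dropped too.
    kept = {venue for venue in candidate_venues if counts[venue] >= min_pubs}
    return [pub for pub in publications if pub["venue"] in kept]


# Hypothetical records: a large candidate venue, a tiny candidate venue,
# and a venue that is not in the candidate list at all.
pubs = (
    [{"id": f"sigir-{i}", "venue": "SIGIR"} for i in range(6)]
    + [{"id": f"tiny-{i}", "venue": "TinyWS"} for i in range(3)]
    + [{"id": f"other-{i}", "venue": "OtherConf"} for i in range(8)]
)
selected = select_publications(pubs, candidate_venues={"SIGIR", "TinyWS"})
print(len(selected))  # 6
```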

Collection 2: Semantic Web (SW)

• Research domain(s)

- Semantic Web

• Topics

- Workshops held at conferences in the Semantic Web Dog Food data set

‣ ISWC

‣ EKAW

‣ ESWC

‣ WWW

‣ ASWC

‣ I-Semantics

• Documents

- Based on the Semantic Web Dog Food corpus (public SPARQL endpoint)

- Full-text PDF versions available for all publications

Collection 3: Computational linguistics (CL)

• Research domain(s)

- Computational linguistics, natural language processing

• Topics

- Workshops held at conferences in the ACL Anthology Reference Corpus

‣ ACL

‣ NAACL

‣ EACL

‣ SemEval

‣ ANLP

‣ EMNLP

‣ CoLing

‣ HLT

‣ LREC

• Documents

- Based on the ACL Anthology Reference Corpus

- Full-text PDF versions available for all publications

Topics & relevance assessments

• Topic representations

- Title

- Long description (complete workshop description)

- Short description (teaser description, typically the first paragraph)

- Areas of interest

<topic id="014">
  <title>Workshop on Information Retrieval in Context (IRiX)</title>
  <year>2004</year>
  <website>http://ir.dcs.gla.ac.uk/context/</website>
  <short_description>This workshop will explore a variety of theoretical frameworks, characteristics and approaches to future interactive IR research.</short_description>
  <long_description>There is a growing realisation that relevant information [...] for future interactive IR (IIR) research.</long_description>
  <areas_of_interest>
    <area>Contextual IR theory - modeling context</area>
    [...]
  </areas_of_interest>
  <organizers>
    <name>Peter Ingwersen</name>
    [...]
  </organizers>
  <program_committee>
    <name>Pia Borlund</name>
    [...]
  </program_committee>
</topic>
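A topic file in this format can be read with Python's standard `xml.etree.ElementTree`. The sketch assumes the element names shown above; the embedded topic is a trimmed copy of the example with the elided `[...]` parts and the long description left out, and `parse_topic` is a hypothetical helper name.

```python
import xml.etree.ElementTree as ET

# Trimmed copy of the example topic (elided parts omitted).
TOPIC_XML = """<topic id="014">
  <title>Workshop on Information Retrieval in Context (IRiX)</title>
  <year>2004</year>
  <website>http://ir.dcs.gla.ac.uk/context/</website>
  <short_description>This workshop will explore a variety of theoretical frameworks, characteristics and approaches to future interactive IR research.</short_description>
  <areas_of_interest>
    <area>Contextual IR theory - modeling context</area>
  </areas_of_interest>
  <organizers><name>Peter Ingwersen</name></organizers>
  <program_committee><name>Pia Borlund</name></program_committee>
</topic>"""


def parse_topic(xml_text):
    """Extract the topic representations and the assessor lists from one topic."""
    topic = ET.fromstring(xml_text)

    def names(group):
        # e.g. organizers/name or program_committee/name
        return [n.text.strip() for n in topic.findall(f"{group}/name")]

    return {
        "id": topic.get("id"),
        "title": topic.findtext("title", default="").strip(),
        "short_description": topic.findtext("short_description", default="").strip(),
        "areas": [a.text.strip() for a in topic.findall("areas_of_interest/area")],
        "organizers": names("organizers"),
        "program_committee": names("program_committee"),
    }


topic = parse_topic(TOPIC_XML)
print(topic["title"], topic["areas"])
```

Each representation (title, short description, areas of interest) can then be used as a separate query variant.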

Topics & relevance assessments

• Topic representations

- In addition to the four representations: topics manually annotated with fine-grained expertise topics

• Relevance assessments

- PC members and organizers typically have expertise in one or more areas of interest → combination of peer judgments and self-assessment

- Relevance value of ‘2’ for organizers and ‘1’ for PC members
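The grading rule maps directly onto TREC-style qrels lines (`topic iteration candidate grade`). In this sketch, `topic_qrels` is a hypothetical helper, candidate identifiers are assumed to contain no spaces, and a person listed both as organizer and as PC member is assumed to keep the higher grade (2); the slides do not say how such overlaps are handled.

```python
def topic_qrels(topic_id, organizers, pc_members):
    """Build TREC-style qrels lines for one workshop topic.

    Organizers get grade 2 and PC members grade 1. Assumption: anyone
    listed in both roles keeps the higher grade.
    """
    grades = {}
    for person in pc_members:
        grades[person] = 1
    for person in organizers:
        grades[person] = 2  # overrides a PC-member grade of 1
    return [f"{topic_id} 0 {person} {grade}" for person, grade in sorted(grades.items())]


for line in topic_qrels("014", ["Peter_Ingwersen"], ["Pia_Borlund"]):
    print(line)
```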

Collections by numbers

16

Information retrieval Semantic Web Computational

linguistics

# (unique) authors 26,098 9,983 4,480

# documents 24,690 10,921 2,311

% full-text documents 54.1% 100% 100%

# workshops (= topics) 60 340 190

# expertise topics 488 4,660 6,751

avg. # authors/document 2.7 2.2 3.3

avg. # experts/topic 14.9 25.8 24.9

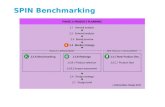

Benchmarking the collections

• Benchmark results on our collections for two tasks, using state-of-the-art approaches

- Profile-centric model (M1, “Model 1”): expert finding, expert profiling

‣ Aggregate all content for an expert into a single document representation, then produce a ranking

- Document-centric model (M2, “Model 2”): expert finding, expert profiling

‣ Retrieve relevant documents, then associate them with experts and produce a ranking

- Saffron (Bordea et al., 2012)

‣ Automatically extracts expertise terms from text, ranks them by term frequency, length, and ‘embeddedness’, and associates documents and experts with these terms

‣ Topic-centric extraction (TC): expert finding, expert profiling

‣ Document-count ranking (DC): expert finding
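The two language-modeling approaches can be sketched for expert finding as follows. This assumes the common generative formulations of Model 1 and Model 2, Jelinek-Mercer smoothing with an assumed λ = 0.5, and uniform document-candidate associations p(d|ca) over each candidate's authored documents; the parameter values, data layout and helper names are assumptions, not the settings used in these benchmarks.

```python
import math
from collections import Counter

LAMBDA = 0.5  # Jelinek-Mercer smoothing weight (assumed, not from the slides)


def build_stats(docs):
    """docs maps doc_id -> (non-empty list of terms, list of author ids)."""
    tf = {d: Counter(terms) for d, (terms, _) in docs.items()}
    dlen = {d: len(terms) for d, (terms, _) in docs.items()}
    coll = Counter()
    for counts in tf.values():
        coll.update(counts)
    by_author = {}
    for d, (_, authors) in docs.items():
        for a in authors:
            by_author.setdefault(a, []).append(d)
    return tf, dlen, coll, sum(coll.values()), by_author


def model1(query, stats, lam=LAMBDA):
    """Profile-centric: p(q|ca) = prod_t [(1-lam) sum_d p(t|d) p(d|ca) + lam p(t)]."""
    tf, dlen, coll, coll_len, by_author = stats
    query = [t for t in query if coll[t] > 0]  # drop terms unseen in the collection
    scores = {}
    for ca, ds in by_author.items():
        log_p = 0.0
        for t in query:
            # p(d|ca) is uniform over the candidate's documents
            p_t_ca = sum(tf[d][t] / dlen[d] for d in ds) / len(ds)
            log_p += math.log((1 - lam) * p_t_ca + lam * coll[t] / coll_len)
        scores[ca] = log_p
    return sorted(scores, key=scores.get, reverse=True)


def model2(query, stats, lam=LAMBDA):
    """Document-centric: p(q|ca) = sum_d p(d|ca) prod_t [(1-lam) p(t|d) + lam p(t)]."""
    tf, dlen, coll, coll_len, by_author = stats
    query = [t for t in query if coll[t] > 0]
    p_q_d = {}
    for d in tf:
        p = 1.0
        for t in query:
            p *= (1 - lam) * tf[d][t] / dlen[d] + lam * coll[t] / coll_len
        p_q_d[d] = p
    scores = {ca: sum(p_q_d[d] for d in ds) / len(ds) for ca, ds in by_author.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical toy collection.
docs = {
    "d1": (["expert", "search", "expert", "search"], ["alice"]),
    "d2": (["speech", "recognition", "model"], ["bob"]),
    "d3": (["expert", "finding", "search"], ["alice", "carol"]),
}
stats = build_stats(docs)
print(model1(["expert", "search"], stats))  # ['alice', 'carol', 'bob']
print(model2(["expert", "search"], stats))  # ['alice', 'carol', 'bob']
```

The difference is where aggregation happens: Model 1 merges a candidate's documents into one term model before scoring the query, while Model 2 scores each document first and then aggregates the document scores per candidate.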

Expert finding

[Figure: P@5 on the Information retrieval, Semantic Web and Computational linguistics collections for Profile-centric, Document-centric, Saffron - TC and Saffron - DC]
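P@5 is the fraction of relevant people among the top five candidates returned for a topic, averaged over topics. A minimal sketch, assuming the graded judgments are binarized (organizers and PC members both count as relevant) and that runs and qrels are plain dicts keyed by topic id (a hypothetical layout):

```python
def precision_at_k(ranking, relevant, k=5):
    """Fraction of the top-k ranked candidates that are relevant."""
    return sum(1 for c in ranking[:k] if c in relevant) / k


def mean_precision_at_k(runs, qrels, k=5):
    """Average P@k over all judged topics; a topic with no run scores 0."""
    scores = [precision_at_k(runs.get(t, []), rel, k) for t, rel in qrels.items()]
    return sum(scores) / len(scores)


# Hypothetical run and binarized judgments for two topics.
runs = {"014": ["a", "b", "c", "d", "e", "f"], "015": ["x", "y"]}
qrels = {"014": {"a", "c", "f"}, "015": {"y"}}
print(precision_at_k(runs["014"], qrels["014"]))  # 0.4
print(mean_precision_at_k(runs, qrels))
```

Dividing by k even when fewer than k candidates are returned penalizes short rankings, which is the usual convention.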

Expert profiling

[Figure: MAP on the Information retrieval, Semantic Web and Computational linguistics collections for Profile-centric, Document-centric and Saffron - TC]
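For expert profiling the query is a person and the ranked items are expertise topics. MAP averages, over people, the mean of the precision values at the rank of each relevant topic. A minimal sketch with hypothetical identifiers and binarized judgments:

```python
def average_precision(ranking, relevant):
    """Mean of the precision values at the rank of each relevant item."""
    if not relevant:
        return 0.0
    hits, total = 0, 0.0
    for rank, item in enumerate(ranking, start=1):
        if item in relevant:
            hits += 1
            total += hits / rank
    # Relevant items never retrieved contribute 0 to the sum.
    return total / len(relevant)


def mean_average_precision(runs, qrels):
    """Average AP over all judged queries (here: the people being profiled)."""
    aps = [average_precision(runs.get(q, []), rel) for q, rel in qrels.items()]
    return sum(aps) / len(aps)


runs = {
    "person_1": ["topic_a", "topic_b", "topic_c", "topic_d"],
    "person_2": ["topic_b", "topic_a"],
}
qrels = {"person_1": {"topic_a", "topic_c"}, "person_2": {"topic_a"}}
print(average_precision(runs["person_1"], qrels["person_1"]))
print(mean_average_precision(runs, qrels))
```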

Discussion & conclusions

• Contributions

- Three new domain-specific test collections for expert search

‣ Available at http://itlab.dbit.dk/~toine/?page_id=631

- Workshop websites for topic creation & relevance assessment

- Benchmarked performance for expert finding and expert profiling

• Findings

- Term extraction approaches outperform language modeling on domain-centered collections (as opposed to organization-centric collections)

• Caveats

- Incomplete assessments & social selection bias for PC members?

Future work

• Expansion

- Add more domains

‣ Requires an active workshop scene & access to documents

- Add more topics to the existing collections

‣ IR collection has 100+ workshops that need manual cleaning

‣ Conference tutorials could also be added (but with very incomplete relevance assessments!)

• Drilling down

- Incorporate social evidence in the form of citation networks

- Investigate the temporal aspect (topic drift?)


Questions? Comments? Suggestions?
