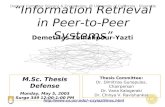

Availability in Global Peer-to-Peer Systems Qin (Chris) Xin, Ethan L. Miller Storage Systems...

-

date post

19-Dec-2015 -

Category

Documents

-

view

215 -

download

0

Transcript of Availability in Global Peer-to-Peer Systems Qin (Chris) Xin, Ethan L. Miller Storage Systems...

Availability in Global Peer-to-Peer Systems

Qin (Chris) Xin, Ethan L. Miller

Storage Systems Research Center

University of California, Santa Cruz

Thomas Schwarz, S.J.

Dept. of Computer Engineering

Santa Clara University

Availability in Global Peer-to-Peer Storage Systems, WDAS'04

Motivations

It is hard to achieve high availability in a peer-to-peer storage system:

Great heterogeneity exists among peers.

Very few peers fall into the high-availability category.

Node availability varies: nodes join and depart, and many show cyclic behavior.

Simple replication does not work for such a system: it is too expensive and requires too much bandwidth.


A History-Based Climbing Scheme

Goal: improve overall data availability.

Approach: adjust data location based on historical records of availability. Select nodes that improve overall group availability; these may not be the most reliable nodes.

Essentials: redundancy groups and history statistics.

Related work: random data swapping in the FARSITE system; the OceanStore project.


Redundancy Groups

Break data up into redundancy groups (thousands of redundancy groups).

Each redundancy group is stored using erasure coding in an m/n/k configuration: m-out-of-n erasure coding for reliability, plus k “alternate” locations that can take the place of any of the n locations. Goal: choose the “best” n out of the n+k locations.

Each redundancy group may use a different set of n+k nodes, so a way to choose n+k locations for data is needed. Use RUSH [Honicky & Miller]; other techniques exist. Preferably use low-overhead, algorithmic methods. Result: a list of locations of data fragments in a group.

Provide high redundancy with low storage overhead.
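To make the m-out-of-n idea and the "9's" unit used in the later plots concrete, here is a minimal sketch of how group availability could be computed. It assumes every node is up independently with the same probability `p`, which is a simplification the slides do not make (they model cyclic nodes); the function names and the value of `p` are illustrative only.

```python
from math import comb, log10


def group_availability(m: int, n: int, p: float) -> float:
    """Probability that at least m of n fragment holders are up.

    Assumption (not from the slides): each node is up independently
    with the same probability p.
    """
    return sum(comb(n, i) * p**i * (1.0 - p) ** (n - i) for i in range(m, n + 1))


def nines(availability: float) -> float:
    """Convert an availability such as 0.999 into a count of nines (3.0)."""
    if availability >= 1.0:
        return float("inf")
    return -log10(1.0 - availability)


if __name__ == "__main__":
    p = 0.5  # hypothetical per-node availability
    for m, n in [(16, 24), (16, 32), (32, 64)]:
        a = group_availability(m, n, p)
        print(f"{m}/{n}: availability={a:.6f}, nines={nines(a):.2f}")
```

Under this simplified model, widening n for a fixed m raises group availability, which is the sense in which erasure coding buys redundancy at lower storage cost than full replicas.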


History-Based Hill Climbing (HBHC)

Considers the cyclic behavior of nodes.

Keeps “scores” of nodes, based on statistics of nodes’ uptime.

Swaps data location from the current node to a node in the candidate list with a higher score.

Non-lazy option: always do this when any node is down.

Lazy option: climb only when the number of unavailable nodes reaches a threshold.
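The climbing step described above can be sketched as follows. This is an illustrative reading, not the authors' implementation: the lazy trigger is interpreted as "at least `threshold` nodes in the group are down" (inferred from the threshold values on the later lazy-option slides), replacement candidates are assumed to be up at swap time, and all names are hypothetical.

```python
from typing import Dict, List, Optional, Tuple


def should_climb(num_down: int, threshold: Optional[int]) -> bool:
    """Non-lazy (threshold=None): climb as soon as any node is down.
    Lazy: climb once at least `threshold` nodes are down (assumed reading)."""
    if threshold is None:
        return num_down > 0
    return num_down >= threshold


def climb_step(
    active: List[str],
    candidates: List[str],
    up: Dict[str, bool],
    score: Dict[str, float],
    threshold: Optional[int] = None,
) -> Tuple[List[str], List[str]]:
    """Swap each down node in `active` with the best-scoring live candidate.

    A swap happens only if the candidate's score is higher than the down
    node's score; the displaced node moves into the candidate list.
    """
    active, spares = list(active), list(candidates)
    down = [node for node in active if not up[node]]
    if not should_climb(len(down), threshold):
        return active, spares
    for node in down:
        better = [c for c in spares if up[c] and score[c] > score[node]]
        if not better:
            continue
        best = max(better, key=lambda c: score[c])
        active[active.index(node)] = best
        spares[spares.index(best)] = node
    return active, spares
```

With `threshold=None` the function behaves as the non-lazy option; passing an integer defers all swaps until that many of the group's nodes are down.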


Migrating data based on a node’s score

Currently, a node’s score is its recent uptime. Longer uptime ⇒ preferably move data to this node. A node doesn’t lose data only because of a low score.

Random selection will prefer nodes that are up longer. It is not deterministic: it can pick any node that’s currently up. It may not pick the best node, but will do so probabilistically.

HBHC will always pick the “best” node. It is deterministic and history-based, and avoids the problem of a node being down at the “wrong” time.
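The contrast between the two selection policies can be sketched as below. The slides only say that the score is a node's recent uptime; measuring it as the fraction of "up" samples in a sliding window is an assumption made here, as are the function names and the requirement that a chosen node be up at selection time.

```python
import random
from typing import Dict, List, Optional, Sequence


def recent_uptime_score(history: Sequence[bool], window: int = 24) -> float:
    """Fraction of the last `window` samples in which the node was up
    (one hypothetical way to quantify "recent uptime")."""
    recent = history[-window:]
    return sum(recent) / len(recent) if recent else 0.0


def pick_random(
    candidates: List[str], up: Dict[str, bool], rng: random.Random
) -> Optional[str]:
    """RANDOM: any candidate that is up right now, chosen uniformly."""
    live = [c for c in candidates if up[c]]
    return rng.choice(live) if live else None


def pick_hbhc(
    candidates: List[str], up: Dict[str, bool], score: Dict[str, float]
) -> Optional[str]:
    """HBHC: the live candidate with the highest history-based score."""
    live = [c for c in candidates if up[c]]
    return max(live, key=lambda c: score[c]) if live else None
```

`pick_random` favors long-lived nodes only indirectly, because they are more likely to be up when the choice is made, whereas `pick_hbhc` returns the same node for the same history.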


Simulation Evaluation

Based on a simple model of a global P2P storage system: 1,000,000 nodes; considers the time zone effect; node availability model is a mix of always-on and cyclic nodes [Douceur03].

Evaluations: comparison of HBHC and RANDOM with no climbing; different erasure coding configurations; different candidate list lengths; lazy climbing threshold.
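A node availability model of this kind, mixing always-on nodes with cyclic nodes shifted by time zone, might look like the sketch below. The slides give none of the parameters, so the daily up-window (`start_local`, `hours_up`), the uniform spread of UTC offsets, and the always-on fraction are placeholders chosen for illustration.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class Node:
    always_on: bool
    utc_offset: int = 0   # hours relative to UTC (hypothetical)
    start_local: int = 9  # local hour at which a cyclic node comes up
    hours_up: int = 8     # length of the daily up-period

    def is_up(self, hour_utc: int) -> bool:
        """Always-on nodes are always up; cyclic nodes follow local time."""
        if self.always_on:
            return True
        local = (hour_utc + self.utc_offset) % 24
        return (local - self.start_local) % 24 < self.hours_up


def make_nodes(count: int, always_on_fraction: float, rng: random.Random) -> List[Node]:
    """Draw a population with the given share of always-on nodes."""
    return [
        Node(always_on=rng.random() < always_on_fraction,
             utc_offset=rng.randrange(-12, 12))
        for _ in range(count)
    ]


def fraction_up(nodes: List[Node], hour_utc: int) -> float:
    """Share of the population that is up at a given UTC hour."""
    return sum(node.is_up(hour_utc) for node in nodes) / len(nodes)
```

Two cyclic nodes with different offsets are up during different UTC hours, which is the time zone effect that a placement scheme can exploit.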


Coding 16/24/32

[Figure: data availability (9's, axis 0 to 2.5) versus time in weeks (0 to 15). Curves: No climbing, Random, HBHC.]

Availability doesn’t improve without climbing

Availability gets a little better with Random

Move from an unavailable node to one that’s up

Selects nodes that are more likely to be up

Doesn’t select those that are likely to complement each other’s downtimes

Best improvement with HBHC

Selects nodes that work “well” together

May include cyclically available nodes from different time zones


Additional coding schemes

[Figure: coding scheme 16/32/32. Data availability (9's, axis 0 to 7) versus time in weeks (0 to 15). Curves: No climbing, Random, HBHC.]

[Figure: coding scheme 32/64/32. Data availability (9's, axis 0 to 8) versus time in weeks (0 to 15). Curves: No climbing, Random, HBHC.]


Candidate list length: RANDOM

Shorter candidate lists converge more quickly

Longer lists provide much better availability: 2+ orders of magnitude

Longer lists take much longer to converge

System uses 16/32 redundancy

[Figure: RANDOM, data availability (9's, axis 0 to 6) versus time in weeks (0 to 15). Curves: candidate list lengths 4, 8, 16, 32, 64.]


Candidate Length: HBHC

Short lists converge quickly

Not much better than RANDOM

Longer lists gain more improvement over RANDOM

May be possible to use shorter candidate lists than RANDOM

System uses 16/32 redundancy

[Figure: HBHC, data availability (9's, axis 0 to 7) versus time in weeks (0 to 15). Curves: candidate list lengths 4, 8, 16, 32, 64.]


Lazy option: RANDOM (16/32/32)

Good performance when migration done at 50% margin

This may be common!

Waiting until within 2–4 nodes of data loss performs much worse

Reason: RANDOM doesn’t pick nodes well

[Figure: RANDOM with 16/32/32 coding, data availability (9's, axis 0 to 7) versus time in weeks (0 to 15). Curves: Non-lazy, Threshold=8, Threshold=12, Threshold=14.]


Lazy option: HBHC (16/32/32)

Non-lazy performs much better

Don’t wait until it’s too late!

HBHC selects good nodes, if it has a chance

Curves are up to 1.5 orders of magnitude higher than for RANDOM

Biggest difference as the algorithm does more switching

[Figure: HBHC with 16/32/32 coding, data availability (9's, axis 0 to 7) versus time in weeks (0 to 15). Curves: Non-lazy, Threshold=8, Threshold=12, Threshold=14.]


Future Work

Our model needs to be refined

Better “scoring” strategy to measure node availability within a redundancy group

Overhead measurement of message dissemination


Future work: better scoring

Node score should reflect node’s effect on group availability

Higher score ⇒ could make the group more available

Important: availability is measured for the group, not an individual node

If a node is available when few others are, its score is increased a lot

If a node is available when most others are, its score is not increased by much

Similar approach for downtime

Result: nodes with high scores make a group more available

This approach finds nodes whose downtimes don’t coincide
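One possible shape for such a group-aware score is sketched below. The slides describe this only as future work, so the weighting is purely illustrative: a node that is up gains in proportion to the fraction of its peers that are down, and, as one reading of "similar approach for downtime", a node that is down loses by the same weight. The function name and the linear weighting are assumptions.

```python
from typing import Dict


def update_scores(scores: Dict[str, float], up: Dict[str, bool]) -> Dict[str, float]:
    """Return new scores after one observation of a redundancy group.

    `up` maps each group member to whether it is currently available.
    The weight is the fraction of the *other* members that are down, so
    being up while few others are up earns the largest reward.
    """
    total_up = sum(up.values())
    n = len(up)
    new_scores = dict(scores)
    for node, is_up in up.items():
        others_up = total_up - (1 if is_up else 0)
        frac_others_up = others_up / (n - 1) if n > 1 else 0.0
        weight = 1.0 - frac_others_up
        delta = weight if is_up else -weight
        new_scores[node] = scores.get(node, 0.0) + delta
    return new_scores
```

Repeated over many observations, nodes whose uptime covers the periods when the rest of the group is down accumulate the highest scores.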


Conclusions

History-based hill climbing scheme improves data availability

Erasure coding configuration is essential to high system availability

The candidate list doesn’t need to be very long; longer lists may help, though

Lazy hill climbing can boost availability with fewer swaps

Need to set the threshold sufficiently low