An Introduction to Parallel Algorithms

By: Arash J. Z. Fard

GRSC7770 – Graduate Seminar

11/9/2009

Topics

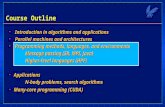

1. Definition of Parallel Algorithm
2. Task/Channel Model
3. Foster’s Design Methodology
4. An Example

Reference

Michael J. Quinn, "Parallel Programming in C with MPI and OpenMP", McGraw-Hill, 2004.

Definition of Parallel Algorithm

A parallel algorithm is an algorithm that can be executed a piece at a time on many different processing devices, with the pieces put back together at the end to get the correct result. (Wikipedia)

A parallel program usually contains some tasks which are executed concurrently on different processors, and may communicate with each other.

The main purpose of designing and using a parallel algorithm is to reduce the total running time of a process.
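As a minimal sketch of this definition, the Python snippet below splits a list into pieces, sums the pieces concurrently, and then combines the partial sums. The function name `parallel_sum` and the use of threads as stand-ins for processors are assumptions made for illustration; in CPython, threads will not actually speed up a CPU-bound sum, so this shows the structure rather than the performance gain.

```python
from concurrent.futures import ThreadPoolExecutor


def parallel_sum(values, workers=4):
    """Sum `values` by splitting them into pieces that are summed concurrently."""
    values = list(values)
    # Split the data into at most `workers` contiguous pieces of similar size.
    size = -(-len(values) // workers) or 1  # ceiling division, at least 1
    pieces = [values[i:i + size] for i in range(0, len(values), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Each piece is summed by its own task.
        partial_sums = list(pool.map(sum, pieces))
    # Put the pieces back together to get the final result.
    return sum(partial_sums)
```

Calling `parallel_sum(range(1, 101))` gives the same result as the sequential `sum(range(1, 101))`.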

Task/Channel Model

A task is a program, its local memory, and a collection of I/O ports.

A channel is a message queue that connects one task’s output port with another task’s input port.

Receive: Synchronous (the receiving task blocks until the value arrives)

Send: Asynchronous (the sending task continues without waiting)
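The task/channel model can be imitated in a few lines of Python. This is only a sketch under stated assumptions: two threads play the role of tasks, a `queue.Queue` plays the role of the channel, and the `DONE` marker is a hypothetical end-of-stream convention that is not part of the model itself.

```python
import queue
import threading

DONE = object()  # hypothetical end-of-stream marker, not part of the model


def producer(out_port):
    """Task 1: sends five values on its output port."""
    for value in range(5):
        out_port.put(value)  # asynchronous send: returns without waiting
    out_port.put(DONE)


def consumer(in_port, results):
    """Task 2: receives values on its input port and squares them."""
    while True:
        value = in_port.get()  # synchronous receive: blocks until data arrives
        if value is DONE:
            break
        results.append(value * value)


def run_pipeline():
    channel = queue.Queue()  # unbounded message queue linking the two tasks
    results = []  # local memory of the consumer task
    tasks = [
        threading.Thread(target=producer, args=(channel,)),
        threading.Thread(target=consumer, args=(channel, results)),
    ]
    for task in tasks:
        task.start()
    for task in tasks:
        task.join()
    return results
```

Because the queue preserves message order, `run_pipeline()` returns the squares in the order the producer sent the values.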

Foster’s Design Methodology

1. Partitioning

• Domain Decomposition: First divide the data into pieces, then determine how to associate computations with the data.

• Functional Decomposition: First divide the computation into pieces, then determine how to associate data items with the computations.
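For domain decomposition, one common way to divide `n` data items among tasks is a block decomposition. The sketch below is an illustration only; the helper names `block_range` and `decompose` are assumptions, and other decompositions (cyclic, two-dimensional) are equally valid.

```python
def block_range(task_id, num_tasks, n):
    """Return the half-open index range [low, high) owned by `task_id`."""
    low = task_id * n // num_tasks
    high = (task_id + 1) * n // num_tasks
    return low, high


def decompose(data, num_tasks):
    """Split `data` into `num_tasks` contiguous blocks of nearly equal size."""
    blocks = []
    for task_id in range(num_tasks):
        low, high = block_range(task_id, num_tasks, len(data))
        blocks.append(data[low:high])
    return blocks
```

With 10 items and 4 tasks, the blocks hold 2, 3, 2, and 3 items, so block sizes differ by at most one.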

1.1. Domain Decomposition

1.2. Functional Decomposition

• Acquire patient images

• Register images

• Determine image locations

• Display image

• Track position of instruments

Partitioning Considerations

• There are at least an order of magnitude more primitive tasks than processors

• Redundant computation and redundant data structure storage are minimized

• Primitive tasks are roughly the same size

• The number of tasks is an increasing function of the problem size

2. Communication

• Local Communication: A task needs values from a small number of other tasks in order to perform a computation.

• Global Communication: A significant number of the primitive tasks must contribute data in order to perform a computation.
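A global sum is a typical case of global communication: every task contributes a value. The sketch below simulates, sequentially, one well-known way to organize it, a tree-style reduction in which tasks pair up and the number of active tasks halves at each step. The function name `tree_reduce` is an assumption; the point is that the transfers within one step are independent of each other, so a real parallel machine could perform them concurrently.

```python
def tree_reduce(values):
    """Simulate a global sum across tasks; return (total, number_of_steps)."""
    values = list(values)  # values[i] is the value held by task i
    steps = 0
    stride = 1
    while stride < len(values):
        # In one step, task i receives from task i + stride and adds it in.
        # The transfers in this loop touch disjoint pairs of tasks.
        for i in range(0, len(values) - stride, 2 * stride):
            values[i] += values[i + stride]
        stride *= 2
        steps += 1
    total = values[0] if values else 0
    return total, steps
```

For 8 tasks the sum is complete after 3 steps instead of the 7 sequential additions a single task would need.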

Communication Considerations

• The communication operations are balanced among the tasks

• Each task communicates with only a small number of neighbors

• Tasks can perform their communications concurrently

3. Agglomeration

Agglomeration is the process of grouping tasks into larger tasks in order to improve performance or simplify programming.

Agglomeration Considerations

• The agglomeration has increased the locality of the parallel algorithm

• Replicated computations take less time than the communications they replace

• The amount of replicated data is small enough to allow the algorithm to scale

• Agglomerated tasks have similar computational and communication costs

• The number of tasks is as small as possible, but greater than the number of processors

• The trade-off between the chosen agglomeration and the cost of modifying existing sequential code is reasonable
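To see why agglomeration increases locality, consider a hypothetical one-dimensional chain of primitive tasks in which each task exchanges one value with each neighbor per step. The sketch below (the names `agglomerate` and `channel_messages` are assumptions) groups consecutive primitive tasks and counts only the messages that still have to cross a group boundary.

```python
def agglomerate(num_primitive, num_groups):
    """Assign each primitive task in a 1-D chain to a group of consecutive tasks."""
    return [i * num_groups // num_primitive for i in range(num_primitive)]


def channel_messages(group_of):
    """Count messages per step that cross a group boundary in a 1-D chain."""
    crossings = sum(1 for a, b in zip(group_of, group_of[1:]) if a != b)
    return 2 * crossings  # one message in each direction per boundary


# 16 primitive tasks, each in its own group: every neighbor exchange is a message.
before = channel_messages(list(range(16)))
# The same 16 tasks agglomerated into 4 groups of 4 consecutive tasks.
after = channel_messages(agglomerate(16, 4))
```

Here `before` is 30 and `after` is 6: exchanges inside a group become local memory accesses, and only the group boundaries still need channels.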

4. Mapping

Goals: Maximize Processor Utilization, Minimize Interprocessor Communication

Note: Increasing processor utilization and minimizing interprocessor communication are often conflicting goals

Mapping Considerations

• Designs based on one task per processor and multiple tasks per processor have been considered

• Both static and dynamic allocation of tasks to processors have been evaluated

• If a dynamic allocation of tasks to processors has been chosen, the manager is not a bottleneck to performance
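The tension between the two mapping goals can be illustrated with two simple static mappings. This is a sketch under assumptions: the task costs are made up, and the helper names are hypothetical. A block mapping keeps neighboring tasks on the same processor (less interprocessor communication), while a cyclic mapping spreads uneven work more evenly (better utilization).

```python
def block_mapping(num_tasks, num_procs):
    """Map consecutive tasks to the same processor."""
    return [t * num_procs // num_tasks for t in range(num_tasks)]


def cyclic_mapping(num_tasks, num_procs):
    """Deal tasks out to processors round-robin."""
    return [t % num_procs for t in range(num_tasks)]


def processor_loads(costs, mapping, num_procs):
    """Total cost assigned to each processor under a given mapping."""
    loads = [0] * num_procs
    for cost, proc in zip(costs, mapping):
        loads[proc] += cost
    return loads


costs = [1, 2, 3, 4, 5, 6, 7, 8]  # made-up costs: later tasks are more expensive
block_loads = processor_loads(costs, block_mapping(8, 4), 4)
cyclic_loads = processor_loads(costs, cyclic_mapping(8, 4), 4)
```

With these costs the block mapping gives loads `[3, 7, 11, 15]` and the cyclic mapping gives `[6, 8, 10, 12]`. The cyclic mapping is better balanced, but it places neighboring tasks on different processors.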

An Example: Multiply Two Matrices

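$$
A_{n \times n} =
\begin{pmatrix}
a_{1,1} & a_{1,2} & \cdots & a_{1,n} \\
a_{2,1} & a_{2,2} & \cdots & a_{2,n} \\
\vdots & \vdots & \ddots & \vdots \\
a_{n,1} & a_{n,2} & \cdots & a_{n,n}
\end{pmatrix},
\qquad
B_{n \times n} =
\begin{pmatrix}
b_{1,1} & b_{1,2} & \cdots & b_{1,n} \\
b_{2,1} & b_{2,2} & \cdots & b_{2,n} \\
\vdots & \vdots & \ddots & \vdots \\
b_{n,1} & b_{n,2} & \cdots & b_{n,n}
\end{pmatrix}
$$

$$
A_{n \times n} \times B_{n \times n} = C_{n \times n},
\qquad
c_{i,j} = a_{i,1} b_{1,j} + a_{i,2} b_{2,j} + \cdots + a_{i,n} b_{n,j}
$$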

Multiply Two Matrices (cont.)

[Figure: the n × n matrices A and B, shown again]

Multiply Two Matrices (cont.)

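$$
A =
\begin{pmatrix}
a_{11} & a_{12} & a_{13} & a_{14} \\
a_{21} & a_{22} & a_{23} & a_{24} \\
a_{31} & a_{32} & a_{33} & a_{34} \\
a_{41} & a_{42} & a_{43} & a_{44}
\end{pmatrix},
\qquad
B =
\begin{pmatrix}
b_{11} & b_{12} & b_{13} & b_{14} \\
b_{21} & b_{22} & b_{23} & b_{24} \\
b_{31} & b_{32} & b_{33} & b_{34} \\
b_{41} & b_{42} & b_{43} & b_{44}
\end{pmatrix}
$$

[Figure: this pair of 4 × 4 matrices appears twice on the slide]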
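One possible way to carry the four design steps through this example is sketched below in Python. It is an illustration under assumptions, not the method shown on the slides: the rows of the result are grouped into contiguous blocks (agglomeration), each block is handed to one of four workers (mapping), and threads stand in for processors. The function names `multiply_rows` and `parallel_matmul` are hypothetical, and both matrices are assumed to be non-empty lists of rows.

```python
from concurrent.futures import ThreadPoolExecutor


def multiply_rows(a, b, low, high):
    """Compute rows low..high-1 of the product a * b."""
    n_inner, n_cols = len(b), len(b[0])
    return [
        [sum(a[i][k] * b[k][j] for k in range(n_inner)) for j in range(n_cols)]
        for i in range(low, high)
    ]


def parallel_matmul(a, b, workers=4):
    """Multiply matrices a and b using `workers` concurrent tasks."""
    n = len(a)
    # Agglomeration: group the result rows into `workers` contiguous blocks.
    bounds = [(w * n // workers, (w + 1) * n // workers) for w in range(workers)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Mapping: one block of rows per worker.
        futures = [pool.submit(multiply_rows, a, b, lo, hi) for lo, hi in bounds]
        blocks = [f.result() for f in futures]
    # Collect the row blocks, in order, into the full result matrix.
    return [row for block in blocks for row in block]
```

Each worker reads all of `b` but only its own rows of `a`, and no worker writes data that another worker reads, so the tasks need no communication until the final collection step.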

Conclusion

Now you should be able to:

1. Define Parallel Algorithm
2. Describe Task/Channel Model
3. Use Foster’s Design Methodology
   I. Partitioning
   II. Communication
   III. Agglomeration
   IV. Mapping

Questions?

Homework

Write pseudocode for a parallel algorithm that multiplies two matrices, and map it onto a quad-processor system (SMP).

Due: 11/16/2009, 5:00 pm