
Using Concept Inventories to Assess Student Learning in a Core MLS Course

Overview

All Emporia State University School of Library and Information Management (SLIM) graduate core courses are delivered through a hybrid model. Multiple systems of asynchronous online modules and face-to-face instruction are combined to accomplish learning outcomes through the best practices and delivery models most conducive to particular concepts. However, this goal leads to questions. What are these core concepts? To what extent are these identified concepts supported by academics and practitioners?

Objectives

To develop and validate a concept inventory that will: • inform iterative course improvement, and • supply measurable evidence of student learning.

Guiding Framework

This work is guided in part by the following frameworks: • core competencies (American Library Association), • concept inventories, and • Delphi studies.

Emporia State University School of Library & Information Management, 1200 Commercial Street, PO Box 4025, Emporia, KS 66801

Methodology

Concept inventories appear similar to a common assessment in format; however, the primary goal is to inform educators of core knowledge levels. The process consists of six general tasks: 1. define the content, 2. develop and select the instrument items, 3. hold informal informant sessions, 4. hold interview sessions with LIS educators and library practitioners, 5. administer the instrument, and 6. evaluate and make final refinements.

The content is informed by subject matter concepts from: • textbooks, • practitioner and scholarly journal articles, and • LIS course syllabi and learning outcomes.

Tasks Progress

Developed items are tested with students enrolled in a core MLS course to measure variability. In a pre-test and a post-test, students were asked to rate their degree of confidence in understanding each of the concepts using a scale

Task 3 affords an opportunity to collect feedback prior to moving into the more formal instrument development tasks.

ASK US ABOUT HOW TO PARTICIPATE IN STEP 4!

Tasks 5 and 6 are iterative activities that are to be repeated as needed to produce an instrument that reflects reliability in detecting and describing distributions of the items.

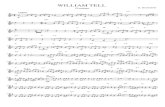

Figure 2. Comparison of pre-test and post-test results of the students’ answers to a single sub-domain item: return on investments in libraries.

Figure 3. Comparison of a pre-test and post-test domain level item, assessment of print resources.

Sarah W. Sutton, Ph.D. & Janet L. Capps, Ph.D.

where 5 is high. The instrument moves into Task 3 with 10 domain categories, e.g., acquisitions, selection, assessment of print resources. Each domain has a varying numbers of sub-domain items. For example, the acquisitions domain has 15 sub-domain items such as approval plans and blanket orders.

Figure 1. Student interface of the instrument during Task 2.

Key: pre-test count, post-test count
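
The pre-test and post-test comparison described above, with counts of students at each confidence rating, could be tallied along the following lines. This is only a minimal sketch, not the authors' procedure: the function names are hypothetical, the ratings are made-up illustrative values rather than study data, and the 1 to 5 range is an assumption, since the poster states only that 5 is high.

```python
from collections import Counter

# Assumption: ratings run from 1 to 5. The poster only says that 5 is high.
SCALE = range(1, 6)


def rating_counts(ratings):
    """Count how many respondents chose each point on the scale."""
    tally = Counter(ratings)
    return {point: tally.get(point, 0) for point in SCALE}


def compare_counts(pre, post):
    """Pair the pre-test and post-test counts for each scale point."""
    pre_counts = rating_counts(pre)
    post_counts = rating_counts(post)
    return {point: (pre_counts[point], post_counts[point]) for point in SCALE}


# Hypothetical confidence ratings for one item; NOT data from the study.
pre_test = [1, 2, 2, 3, 1, 2]
post_test = [3, 4, 4, 5, 3, 4]

for point, (pre_n, post_n) in compare_counts(pre_test, post_test).items():
    print(f"rating {point}: pre-test count={pre_n}, post-test count={post_n}")
```

Keeping every scale point in the result, even those with a count of zero, makes the pre-test and post-test distributions directly comparable for each item.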