Advanced Baseband Processing Circuits and Systems for 5G ... · Advanced Baseband Processing...

Transcript of Advanced Baseband Processing Circuits and Systems for 5G ... · Advanced Baseband Processing...

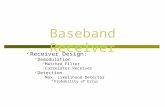

Advanced Baseband Processing Circuits and Systems for 5G Communications
IEEE SiPS 2018 Tutorial – Half Day

Chuan Zhang¹,² and Xiaosi Tan¹,²

November 7, 2018

¹ Lab of Efficient Architectures for Digital-communication and Signal-processing (LEADS)
² National Mobile Communications Research Laboratory, Southeast University, Nanjing, China

Tutorial Speakers

Chuan Zhang is an associate professor at the National Mobile Communications Research Laboratory, School of Information Science and Engineering, Southeast University, Nanjing, China.

Xiaosi Tan is a research fellow at the National Mobile Communications Research Laboratory, School of Information Science and Engineering, Southeast University, Nanjing, China.

Outline

1. Introduction

2. Polar Code Decoder

3. Algorithms and Implementations for MIMO Detection

4. Deep Learning Based Baseband Signal Processing


Introduction.

Introduction

• Baseband signal processing in the 5G era
  - Massive MIMO;
  - Advanced coding and modulation;
  - Cognitive and cooperative radio baseband platform;
  - Configurable radio air-interface.

• What is challenging?
  - Advanced algorithms;
  - Implementation of circuits, architectures, and platforms;
  - Using AI techniques.

Introduction

[Figure: block diagram. Transmitter side: bit streams 1 to n pass through channel encoders, NOMA encoders, the massive MIMO encoder/precoder, iFFT blocks, digital filters, and RF chains. The signals traverse the massive MIMO channel H. Receiver side: RF chains, time-domain pre-processing, FFT blocks, the massive MIMO detector/combiner, the NOMA decoder, and channel decoders deliver output streams 1 to n, supported by channel/noise estimation.]

Figure 1: System-level diagram of 5G wireless baseband architecture.

Introduction

• Targets
  - High spectral efficiency;
  - Low power and low area;
  - High throughput and low latency.

• This tutorial
  - Polar code decoder (Chuan Zhang);
  - Massive MIMO detection (Xiaosi Tan);
  - Uniform belief propagation processing (Chuan Zhang);
  - Deep learning based baseband signal processing (Xiaosi Tan).

Polar Code Decoder.

Polar Codes

Brief introduction of polar codes:

• A class of capacity-achieving codes proposed by Erdal Arıkan in 2009¹.
• The forward error correction (FEC) code for the eMBB control channel.
• Linear block code:

$$x_1^N = u_1^N G_N,$$

where $G_N$ denotes the generator matrix.

¹ E. Arıkan, "Channel polarization: a method for constructing capacity-achieving codes for symmetric binary-input memoryless channels," IEEE Transactions on Information Theory, vol. 55, no. 7, pp. 3051–3073, 2009.
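The encoding relation $x_1^N = u_1^N G_N$ can be sketched in a few lines. The sketch assumes $G_N = F^{\otimes n}$ with $F = [[1, 0], [1, 1]]$ and $N = 2^n$, without the bit-reversal permutation that some formulations include; the slide only states that $G_N$ is the generator matrix, and the name `polar_encode` is illustrative.

```python
def polar_encode(u):
    """Compute x = u * G_N over GF(2) using in-place butterflies.

    Assumption: G_N is the n-fold Kronecker power of F = [[1, 0], [1, 1]],
    with no bit-reversal permutation.
    """
    n = len(u)
    if n == 0 or n & (n - 1):
        raise ValueError("block length must be a power of two")
    x = list(u)
    step = 1
    while step < n:
        for start in range(0, n, 2 * step):
            for i in range(start, start + step):
                # Upper branch of the butterfly becomes the XOR of the pair.
                x[i] ^= x[i + step]
        step *= 2
    return x
```

The butterfly form needs about (N/2)·log2(N) XOR operations, which is why the encoder and the decoding trellis both look FFT-like.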

Polar Codes

The biggest asset of polar coding compared with the state of the art (SoA) is its universal, flexible, and versatile nature:

• Universal: the same hardware can be used with different code lengths, rates, and channels.

• Flexible: the code rate can be adjusted readily to any number between 0 and 1.

• Versatile: can be used in multiple coding scenarios.

Figure 2: Channel polarization.

Polar Code’s Performance

With list decoding and CRC, polar codes deliver even better performance than the LDPC and Turbo codes used in present wireless standards².

[Plot: FER (10^0 down to 10^-5) versus Es/N0 (0 to 4 dB). Curves: P(1024,512), 4-QAM, L-1, CRC-0, SNR = 2; P(1024,512), 4-QAM, L-32, CRC-0, SNR = 2; P(1024,512), 4-QAM, L-32, CRC-16, SNR = 2; dispersion bound for (1024,512); WiMAX CTC (960,480).]

Figure 3: Comparison with Turbo codes.

² E. Arıkan, "Polar coding for 5G wireless?," International Workshop on Polar Code, 2015.

Polar Code’s Performance

[Plot: FER (10^0 down to 10^-5) versus Es/N0 (0 to 4 dB). Curves: P(2048,1024), 4-QAM, L-1, CRC-0, SNR = 2; P(2048,1024), 4-QAM, L-32, CRC-0, SNR = 2; P(2048,1024), 4-QAM, L-32, CRC-16, SNR = 2; dispersion bound for (2048,1024); WiMAX LDPC (2304,1152), Max Iter = 100.]

Figure 4: Comparison with LDPC codes².

Contents

Polar Code Decoder

Successive Cancelation (SC) Decoding for Polar Codes

SC-based Decoding of Polar Codes

Stochastic Polar BP decoding

Approximate BP Decoding Implementation


Decoding Trellis of SC Decoder

[Figure: decoding trellis of the SC decoder for N = 8. The channel inputs $L_1^{(1)}(y_1), \ldots, L_1^{(1)}(y_8)$ pass through Stage 1, Stage 2, and Stage 3, which delivers $L_8^{(1)}(y_1^8)$, $L_8^{(2)}(y_1^8, \hat{u}_1)$, $\ldots$, $L_8^{(8)}(y_1^8, \hat{u}_1^7)$ and the decisions $\hat{u}_1, \ldots, \hat{u}_8$. Each node is a Type I PE or a Type II PE and is labeled with the clock cycle (1 to 14) in which it is activated.]

• The trellis is FFT-like.

• The SC decoder is a serial decoder.

Type I PE:

$$L_N^{(2i)}(y_1^N, \hat{u}_1^{2i-1}) = (-1)^{\hat{u}_{2i-1}} L_{N/2}^{(i)}(y_1^{N/2}, \hat{u}_{1,o}^{2i-2} \oplus \hat{u}_{1,e}^{2i-2}) + L_{N/2}^{(i)}(y_{N/2+1}^{N}, \hat{u}_{1,e}^{2i-2})$$

Type II PE:

$$L_N^{(2i-1)}(y_1^N, \hat{u}_1^{2i-2}) = \mathrm{sgn}\big[L_{N/2}^{(i)}(y_1^{N/2}, \hat{u}_{1,o}^{2i-2} \oplus \hat{u}_{1,e}^{2i-2})\big] \, \mathrm{sgn}\big[L_{N/2}^{(i)}(y_{N/2+1}^{N}, \hat{u}_{1,e}^{2i-2})\big] \cdot \min\Big[\big|L_{N/2}^{(i)}(y_1^{N/2}, \hat{u}_{1,o}^{2i-2} \oplus \hat{u}_{1,e}^{2i-2})\big|, \big|L_{N/2}^{(i)}(y_{N/2+1}^{N}, \hat{u}_{1,e}^{2i-2})\big|\Big]$$
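The two PE equations can be exercised with a small recursive SC decoder. This is a minimal sketch under stated assumptions: LLRs are defined so that a non-negative value favors bit 0, frozen bits are 0, and the recursion uses natural-order indexing (no bit-reversal), so the pairing of inputs may differ from the trellis wiring by a permutation. The function names are illustrative.

```python
import math


def type_ii_pe(a, b):
    """Type II PE (min-sum): sgn(a) * sgn(b) * min(|a|, |b|)."""
    return math.copysign(1.0, a) * math.copysign(1.0, b) * min(abs(a), abs(b))


def type_i_pe(a, b, partial_sum):
    """Type I PE: (-1)^partial_sum * a + b, with a known partial-sum bit."""
    return (1 - 2 * partial_sum) * a + b


def sc_decode(llr, frozen):
    """Return (u_hat, x_hat): decoded bits and their re-encoded partial sums.

    llr    -- channel LLRs, length N = 2^n (assumed: llr >= 0 favors bit 0)
    frozen -- list of booleans, True where the bit is frozen to 0
    """
    n = len(llr)
    if n == 1:
        bit = 0 if (frozen[0] or llr[0] >= 0) else 1
        return [bit], [bit]
    half = n // 2
    a, b = llr[:half], llr[half:]
    # First half of the bits only needs Type II PEs.
    left_llr = [type_ii_pe(p, q) for p, q in zip(a, b)]
    u_left, x_left = sc_decode(left_llr, frozen[:half])
    # Second half needs Type I PEs, which wait for the partial sums x_left.
    right_llr = [type_i_pe(p, q, s) for p, q, s in zip(a, b, x_left)]
    u_right, x_right = sc_decode(right_llr, frozen[half:])
    x_hat = [l ^ r for l, r in zip(x_left, x_right)] + x_right
    return u_left + u_right, x_hat
```

The dependence of `right_llr` on `x_left` is the serial bottleneck noted in the bullets: the Type I PEs of a stage cannot start until the earlier decisions are available.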

Decoding Trellis of SC Decoder

[Figure: the same N = 8 decoding trellis, with the processing elements labeled A1–A4 and B1–B4 (Stage 1), C1–C4 and D1–D4 (Stage 2), and E1–E4 and F1–F4 (Stage 3).]

• Each processing element (PE) is marked with a red label.

• The data flow graph (DFG) is obtained.

The Type I and Type II PE equations are the same as on the previous slide.

DFG of SC Decoder

• First, this is a feed-forward DFG.
• The critical path of the DFG equals the processing time of a single PE.
• The entire DFG is composed of two identical parts.

[Figure: DFG of the N = 8 SC decoder, built from the labeled PEs (A1–A4, B1–B4, C1, C2, D1, D2, E1, F1) connected through delay elements (D) and producing $\hat{u}_1, \ldots, \hat{u}_8$ in pairs.]

DFG of SC Decoder – One More Step

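• Since the PEs A1, A2, A3, and A4 are functionally identical, we can merge them and represent the merged PE with A.

• Similar approaches can be applied to the other PEs.

[Figure: merged DFG from start to end with nodes A and B (Stage 1), C and D (Stage 2), and E and F (Stage 3), connected through delay elements (D). The nodes are annotated with their active clock cycles: 1; 8; 2, 9; 5, 12; 3, 6, 10, 13; and 4, 7, 11, 14.]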

Arch. and Latency of SC Decoder

The decoding latency of an N-bit SC polar decoder equals 2(N − 1) clock cycles:

$$2 \times \sum_{i=1}^{\log_2 N} 2^{i-1} = 2 \times \frac{2^{\log_2 N} - 1}{2 - 1} = 2(N - 1).$$

[Figure: SC decoder for N = 8 with inputs $L_1^{(1)}(y_1), \ldots, L_1^{(1)}(y_8)$. Stages 1 to 3 are built from Type I PEs, Type II PEs, multiplexers, delay elements (D), and sign-bit extraction, organized into a main frame and a feedback part that supplies the partial sums of the decided bits.]

Figure 5: Arch. for SC decoder.

Figure 6: Scheduling of SC decoder.
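The 2(N − 1) count can be reproduced by generating the activation schedule itself. The sketch below assumes that each stage runs its Type II PEs, waits for the later stages, then runs its Type I PEs and waits again; `sc_schedule` is an illustrative name. For three stages it reproduces the cycle labels of the N = 8 trellis (Stage 1 in cycles 1 and 8, Stage 2 in cycles 2, 5, 9, and 12).

```python
def sc_schedule(num_stages):
    """List the active stage for every clock cycle of a serial SC decoder.

    Assumption: within a stage, Type II PEs fire first, then (after the
    later stages have produced the needed decisions) the Type I PEs fire.
    """
    cycles = []

    def visit(stage):
        if stage > num_stages:
            return
        for _pe_type in ("Type II", "Type I"):
            cycles.append(stage)   # one clock cycle for this PE type
            visit(stage + 1)       # later stages run before the next PE type

    visit(1)
    return cycles


def sc_latency(n_bits):
    """Latency in clock cycles for N = n_bits (a power of two)."""
    return len(sc_schedule(n_bits.bit_length() - 1))
```

Stage i is activated 2^i times in this schedule, so the total is the geometric sum shown in the formula.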

Lower Latency Consideration

There are only two possible outputs for every Type I PE, depending on the value that $\hat{u}_{2i-1}$ takes.

[Figure: SC decoder for N = 8 with Stages 1 to 3 built from Type I PEs, Type II PEs, multiplexers, delay elements (D), and sign-bit extraction.]

Figure 7: Arch. for SC decoder.

[Figure: the same architecture, shown at clock cycle 1.]

Figure 8: Scheduling of SC decoder.

Pre-Computation DFG

According to the pre-computation look-ahead approach, the Type I and Type II PEs in the same stage are activated within the same clock cycle. The DFG can then be modified as follows:

[Figure: pre-computation DFG from start to end with merged nodes A/B (Stage 1), C/D (Stage 2), and E/F (Stage 3), connected through delay elements (D) and annotated with their active clock cycles.]

Figure 9: Arch. for SC decoder.

Figure 10: Scheduling of SC decoder.

Pre-Comp. SC Decoder

The decoding latency reduces to (N − 1) clock cycles:

$$\sum_{i=1}^{\log_2 N} 2^{i-1} = \frac{2^{\log_2 N} - 1}{2 - 1} = N - 1.$$

[Figure: pre-computation SC decoder for N = 8 with inputs $L_1^{(1)}(y_1), \ldots, L_1^{(1)}(y_8)$. Stages 1 to 3 are built from merged PEs with inputs I1, I2 and outputs O1, O2, O3, followed by multiplexers and delay elements (D).]

Figure 11: Pre-comp. SC decoder.
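The look-ahead idea can be sketched as a merged PE that produces the Type II output together with both candidate Type I outputs in one step, so that a multiplexer only has to select once the bit is known. The mapping of the three return values to the ports O1, O2, O3 is an assumption for illustration, as are the function names.

```python
import math


def merged_pe(a, b):
    """Return (type_ii, type_i_if_bit_0, type_i_if_bit_1) for inputs a, b."""
    type_ii = math.copysign(1.0, a) * math.copysign(1.0, b) * min(abs(a), abs(b))
    type_i_if_0 = a + b    # (-1)^0 * a + b
    type_i_if_1 = b - a    # (-1)^1 * a + b
    return type_ii, type_i_if_0, type_i_if_1


def select_type_i(outputs, bit):
    """Multiplexer: pick the pre-computed Type I result once the bit is decided."""
    return outputs[2] if bit else outputs[1]


def latency(n_bits, precomputed):
    """Clock cycles for N = n_bits: N - 1 with look-ahead, 2(N - 1) without."""
    stages = n_bits.bit_length() - 1
    total = sum(2 ** (i - 1) for i in range(1, stages + 1))
    return total if precomputed else 2 * total
```

The trade-off is one extra adder per PE in exchange for removing the wait between the Type II and Type I activations of a stage.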


SC Decoding

[Figure: code tree of an SC decoding example, with the path probability written at each node and visited and non-visited nodes marked.]

SC Decoding - Greedy Algorithm

• Each frozen bit is set to 0.
• Each information bit is extended to both 0 and 1, and the transmission probability of each branch is calculated.
• The most likely code bit is picked at each step, and path extension continues from it.
• Decoding ends at the last level; the decoding result is "0000 0010".

SC List Decoding & SC Stack Decoding

[Figure, two panels on the same code tree with visited and non-visited nodes marked: SC List (SCL) Decoding Algorithm for L = 2; SC Stack (SCS) Decoding Algorithm for L = 2.]
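The difference between greedy SC decoding and list decoding can be illustrated on a toy code tree. This is a sketch, not the decoder from the slides: `branch_prob` is a hypothetical callback returning the conditional probability of extending a path with a given bit, frozen levels leave the path probability unchanged, and the numbers in `TOY_TREE` are made up for the example.

```python
def list_decode(num_bits, frozen, branch_prob, list_size):
    """Keep the list_size most likely paths at every level of the code tree.

    frozen      -- set of bit indices frozen to 0
    branch_prob -- hypothetical callback (prefix, bit) -> conditional probability
    list_size   -- L; L = 1 gives the greedy SC decoder
    """
    paths = [((), 1.0)]
    for index in range(num_bits):
        candidates = []
        for bits, prob in paths:
            if index in frozen:
                candidates.append((bits + (0,), prob))
            else:
                for bit in (0, 1):
                    candidates.append((bits + (bit,), prob * branch_prob(bits, bit)))
        # Sorting the 2L candidates is the O(L log L) search step of SCL.
        candidates.sort(key=lambda item: item[1], reverse=True)
        paths = candidates[:list_size]
    return paths


# Made-up toy tree with two information bits.
TOY_TREE = {(): (0.6, 0.4), (0,): (0.55, 0.45), (1,): (0.9, 0.1)}


def toy_branch_prob(prefix, bit):
    return TOY_TREE[prefix][bit]
```

With `list_size=1` the decoder commits to the first bit 0 and ends on path (0, 0); with `list_size=2` it keeps the initially less likely branch alive and ends on the more probable path (1, 0).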

SC Heap Decoding

[Figure: Steps 1 to 3 of inserting an expanded path into a heap of path metrics that is not yet full.]

• Expansion in a non-full heap

[Figure: Steps 1 to 3 of inserting an expanded path into a full heap; the new node is compared with a random node in the last layer.]

• Expansion in a full heap

Connection between Data Structure and SC Family

| Data Structure | Access | Search | Insertion | Deletion | Space Complexity |
|---|---|---|---|---|---|
| Array | O(1) | O(n) | O(n) | O(n) | O(n) |
| Stack | O(n) | O(n) | O(1) | O(1) | O(n) |
| Queue | O(n) | O(n) | O(1) | O(1) | O(n) |
| Linked List | O(n) | O(n) | O(1) | O(1) | O(n) |
| Binary Search Tree | O(log n) | O(log n) | O(log n) | O(log n) | O(n) |
| Heap | O(log n) | O(log n) | O(log n) | O(log n) | O(n) |

• Access: Access each record exactly once.
• Search: Find the location of the record with a given key value.
• Insertion: Add a new record to the structure.
• Deletion: Remove a record from the structure.

Complexity Analysis

| SC-based decoders | Insertion | Deletion | Searching | Computational Complexity | Space Complexity |
|---|---|---|---|---|---|
| SCL Decoder | O(1) | O(1) | O(L log L) | O(LN(log N + log L)) | O(LN) |
| SCS Decoder | O(D) | O(1) | O(1) | O(LN log N + mD) | O(DN) |
| SCH Decoder | O(log D) | O(log D) | O(1) | O(LN log N + m log D) | O(DN) |
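The O(log D) insertion and deletion of the heap-based decoder can be illustrated with a best-first search over a toy code tree using Python's `heapq`. This is a sketch under assumptions: the heap capacity D and the comparison with a random last-layer node are not modeled, `branch_prob` is a hypothetical callback, and the probabilities in `TOY_TREE` are made up.

```python
import heapq


def best_first_decode(num_bits, frozen, branch_prob):
    """Always extend the most likely partial path until one reaches full length.

    heapq is a min-heap, so probabilities are stored negated.
    """
    heap = [(-1.0, ())]
    while heap:
        neg_prob, bits = heapq.heappop(heap)       # deletion: O(log D)
        index = len(bits)
        if index == num_bits:
            return bits, -neg_prob
        if index in frozen:
            heapq.heappush(heap, (neg_prob, bits + (0,)))
        else:
            for bit in (0, 1):                     # insertion: O(log D) each
                metric = neg_prob * branch_prob(bits, bit)
                heapq.heappush(heap, (metric, bits + (bit,)))
    raise ValueError("empty search tree")


# Made-up toy tree with two information bits.
TOY_TREE = {(): (0.6, 0.4), (0,): (0.55, 0.45), (1,): (0.9, 0.1)}


def toy_branch_prob(prefix, bit):
    return TOY_TREE[prefix][bit]
```

Because path probabilities never increase along a path, the first full-length path popped from the heap is the most likely one in the tree; here that is (1, 0).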

Predicted SC-AVL Decoding Algorithm

[Figure: step-by-step evolution of an AVL tree of path metrics and their bit sequences during searching, deletion, and insertion, including the rotations that rebalance the tree.]

• Searching: Find the best candidate path as well as the path indicated by the pointer in the tree. O(1)

• Deletion: Delete the pointed path from the tree and reconstruct the AVL tree⁴. O(log D)

• Insertion: Insert the two expanded paths at the appropriate places and check the balance factor of the AVL tree. If the AVL tree is unbalanced, rotate to rebalance it. The pointer points to the right leaf node. O(log D)

⁴ The AVL tree is named after its inventors, G. M. Adelson-Velsky and Evgenii Landis.

Comparisons over SC-Based Decoding Algorithms

[Plot: FER (10^0 down to 10^-3) versus Eb/N0 (0.5 to 2.5 dB). Curves: conventional SC, SC List (4), SC Stack (4), SC Heap (4), SC AVL Tree (4), SC List (2). Curve groups annotated "SCH, SCT, SCL (2)" and "SCL (4), SCS (4)".]

FER performance comparison.

[Plot: computational complexity (×10^6, 0 to 4.5) versus Eb/N0 (0.5 to 2.5 dB). Curves: conventional SC, SC List (4), SC Stack (4), SC Heap (2), SC AVL Tree (2), SC List (2).]

Computational complexity comparison.

Memory-Aware Architecture

[Figure: memory-aware architecture. A data structure block holds memory blocks that store the path, length, and metric of each candidate; a decoder core contains the SC cores; a control unit handles stage control and the instruction set; a pointer memory, memory addresses, and a metrics sorter connect the blocks.]

| SC-based decoders | Latency (µs) | Throughput (Mbps) |
|---|---|---|
| SCL Decoder | 490.92 | 2.09 |
| SCS Decoder | 3628.53 | 0.28 |
| SCH Decoder | 3247.45 | 0.32 |
| SCT Decoder | 3315.28 | 0.31 |

Conclusion

• The connection between data structures and the SC family is revealed.
• The complexity of existing algorithms can be analyzed and proved from a data-structure perspective.
• New algorithms can be proposed and predicted based on the methodology.
• A formal architecture of SC-based decoding is discussed.


Deterministic BP Decoding

[Figure: factor graph with nodes (1,1) to (4,8), connected through Stage 1, Stage 2, and Stage 3; each stage is built from F nodes and G nodes.]

Figure 12: Factor Graph of polar BP decoding with N = 8.

Deterministic BP Decoding

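• Left-to-right and right-to-left messages
• Initialization for the 2 types of messages:

$$\text{from left to right: } R_{1,j} = \begin{cases} 1, & \text{if } j \in \mathcal{A}, \\ 0, & \text{if } j \in \mathcal{A}^c, \end{cases} \qquad \text{from right to left: } L_{n+1,j} = \frac{P(y_j \mid x_j = 0)}{P(y_j \mid x_j = 1)} \tag{1}$$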

Deterministic BP Decoding

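• The iterative updating rules associated with $L_{i,j}^{(t)}$ and $R_{i,j}^{(t)}$:

$$\begin{aligned}
L_{i,j}^{(t)} &= g\big(L_{i+1,2j-1}^{(t)},\; L_{i+1,2j}^{(t)} + R_{i,j+N/2}^{(t)}\big), \\
L_{i,j+N/2}^{(t)} &= g\big(R_{i,j}^{(t)},\; L_{i+1,2j-1}^{(t)}\big) + L_{i+1,2j}^{(t)}, \\
R_{i+1,2j-1}^{(t)} &= g\big(R_{i,j}^{(t)},\; L_{i+1,2j}^{(t-1)} + R_{i,j+N/2}^{(t)}\big), \\
R_{i+1,2j}^{(t)} &= g\big(R_{i,j}^{(t)},\; L_{i+1,2j-1}^{(t-1)}\big) + R_{i,j+N/2}^{(t)},
\end{aligned} \tag{2}$$

where $g(x, y) \approx \mathrm{sign}(x)\,\mathrm{sign}(y)\min(|x|, |y|)$.

• After I iterations, we have

$$\hat{u}_j = \begin{cases} 0, & \text{if } R_{1,j} \ge 1, \\ 1, & \text{else.} \end{cases} \tag{3}$$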
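The four updating rules in (2) map directly onto one processing unit. The sketch below is a literal transcription with the min-sum approximation of g; the argument names (upper/lower) are illustrative and stand for the index pairs (i+1, 2j−1)/(i+1, 2j) on the right and (i, j)/(i, j+N/2) on the left.

```python
import math


def g(x, y):
    """Min-sum approximation: sign(x) * sign(y) * min(|x|, |y|)."""
    return math.copysign(1.0, x) * math.copysign(1.0, y) * min(abs(x), abs(y))


def update_left(l_upper, l_lower, r_upper, r_lower):
    """Right-to-left messages (L[i][j], L[i][j + N/2]) from rule (2).

    l_upper, l_lower -- L[i+1][2j-1], L[i+1][2j] of the current iteration
    r_upper, r_lower -- R[i][j], R[i][j + N/2] of the current iteration
    """
    return g(l_upper, l_lower + r_lower), g(r_upper, l_upper) + l_lower


def update_right(l_prev_upper, l_prev_lower, r_upper, r_lower):
    """Left-to-right messages (R[i+1][2j-1], R[i+1][2j]) from rule (2).

    l_prev_upper, l_prev_lower -- L[i+1][2j-1], L[i+1][2j] of iteration t - 1
    """
    return g(r_upper, l_prev_lower + r_lower), g(r_upper, l_prev_upper) + r_lower
```

Each call uses two evaluations of g and two additions, which is the per-unit cost that the stochastic and approximate designs later try to reduce.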

Deterministic BP Decoding

[Figure: (a) BCB module exchanging the messages $L_{i,j}^{(t)}$, $R_{i,j}^{(t)}$, $L_{i,j+N/2}^{(t)}$, and $R_{i,j+N/2}^{(t)}$ with stage i and the corresponding L and R messages with stage i + 1; (b) BCB logic structure built from F1 and G1 blocks, with inputs A, B, C, D and outputs out1, out2.]

Figure 13: BCB and its logic structure.

Stochastic Computing

• Requiring low complexity
• High fault tolerance
• Lack of accuracy

[Figure: bit streams A, B, and C, representing the values a, b, and c, connected by a bit-wise operation.]

Figure 14: Basic module for stochastic computing.
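A minimal sketch of the idea, assuming the common unipolar encoding in which a value in [0, 1] is the probability of a 1 in the stream and the bit-wise operation is an AND gate, which multiplies the values of two independent streams. The helper names are illustrative.

```python
import random


def to_stream(value, length, rng):
    """Encode value in [0, 1] as a random bit stream with P(bit = 1) = value."""
    return [1 if rng.random() < value else 0 for _ in range(length)]


def estimate(stream):
    """Decode a stream back to a value: the fraction of ones."""
    return sum(stream) / len(stream)


def stochastic_multiply(stream_a, stream_b):
    """Bit-wise AND: for independent streams the output encodes a * b."""
    return [x & y for x, y in zip(stream_a, stream_b)]
```

A single gate replaces a multiplier, but the result is only an estimate whose accuracy improves with the stream length, which matches the three bullets above.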

Stochastic BP Decoding

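• Stochastic channel message

$$\Pr(y_i = 1) \triangleq P(y_i \mid x_i = 1) = \frac{1}{e^{-LR(y_i)} + 1} \tag{4}$$

• New initialization rules:

$$\text{from left to right: } R_{1,j} = \begin{cases} 0.5, & \text{if } j \in \mathcal{A}, \\ 0, & \text{if } j \in \mathcal{A}^c, \end{cases} \qquad \text{from right to left: } L_{n+1,j} = \Pr(y_j = 1) \tag{5}$$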

Stochastic BP Decoding

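• Reformulation for F node:

$$P_z \triangleq \Pr(z = 1) = P_x(1 - P_y) + (1 - P_x)P_y \tag{6}$$

• Reformulation for G node:

$$P_z = \frac{P_x P_y}{P_x P_y + (1 - P_x)(1 - P_y)} \tag{7}$$

[Figure: basic computation block built from two JK flip-flops (inputs J, K; output Q), with inputs A, B, C, D and outputs out1, out2.]

Figure 15: Architecture of the Basic Computation Block (BCB).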
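Equations (6) and (7) can be checked with bit-level models. The sketch assumes that the F node is an XOR gate and that the G node is a JK flip-flop wired with J = x AND y and K = (NOT x) AND (NOT y); this wiring is a common stochastic-computing construction and is an assumption here, since the figure only shows that JK flip-flops are used.

```python
import random


def to_stream(value, length, rng):
    """Encode value in [0, 1] as a random bit stream with P(bit = 1) = value."""
    return [1 if rng.random() < value else 0 for _ in range(length)]


def f_node(x_bits, y_bits):
    """XOR gate: P(z = 1) = Px(1 - Py) + (1 - Px)Py for independent inputs."""
    return [x ^ y for x, y in zip(x_bits, y_bits)]


def g_node(x_bits, y_bits, initial_state=0):
    """JK flip-flop with J = x & y and K = ~x & ~y (assumed wiring).

    The state is set when both inputs are 1, reset when both are 0, and held
    otherwise, so in steady state P(Q = 1) = PxPy / (PxPy + (1 - Px)(1 - Py)).
    """
    state = initial_state
    out = []
    for x, y in zip(x_bits, y_bits):
        if x & y:
            state = 1
        elif (1 - x) & (1 - y):
            state = 0
        out.append(state)
    return out
```

The G node needs memory because (7) contains a division, which a purely combinational gate cannot realize on bit streams.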

Stochastic BP Decoding

[Plot: BER (10^0 down to 10^-3) versus Eb/N0 (1 to 3 dB). Curves: N = 64, deterministic decoding; N = 64, stochastic decoding; N = 256, deterministic decoding; N = 256, stochastic decoding.]

Figure 16: Numerical results for straightforward stochastic BP decoder.

Approaches for Improvement

• The stochastic computing correlation SCC(A, B) of two unary bit streams A and B is given by

$$\mathrm{SCC}(A,B) = \begin{cases} \dfrac{P_{A\&B} - P_A P_B}{\min(P_A, P_B) - P_A P_B}, & \text{if } P_{A\&B} > P_A P_B, \\[2ex] \dfrac{P_{A\&B} - P_A P_B}{P_A P_B - \max(P_A + P_B - 1, 0)}, & \text{otherwise.} \end{cases} \tag{8}$$

• For two maximally similar (or different) unary bit streams A and B, we get SCC(A, B) = +1 (or −1).
• SCC(A, B) = 0 indicates that the bit streams A and B are uncorrelated and suitable for stochastic computing.
• If the length of the unary bit streams A and B is infinite, we have

$$\lim_{L \to \infty} \frac{1}{L} \sum_{i=1}^{L} A_i B_i = \lim_{L \to \infty} P_{A\&B} = ab = P_a P_b$$
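Equation (8) is straightforward to evaluate on finite streams. In the sketch below the probabilities are estimated as fractions of ones; returning 0 when the denominator vanishes (for example for an all-zero stream) is a convention chosen for the example, not part of the definition.

```python
def scc(stream_a, stream_b):
    """Stochastic computing correlation of two equal-length bit streams."""
    length = len(stream_a)
    p_a = sum(stream_a) / length
    p_b = sum(stream_b) / length
    p_ab = sum(x & y for x, y in zip(stream_a, stream_b)) / length
    delta = p_ab - p_a * p_b
    if delta > 0:
        denominator = min(p_a, p_b) - p_a * p_b
    else:
        denominator = p_a * p_b - max(p_a + p_b - 1, 0)
    # Convention for this sketch: degenerate streams count as uncorrelated.
    return 0.0 if denominator == 0 else delta / denominator
```

Identical streams give +1, complementary streams give −1, and streams whose AND rate equals the product of their individual rates give 0, which are the three cases listed in the bullets.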

Bit Length Increasing

[Plot: BER (10^-1 down to 10^-3) versus Eb/N0 (1.5 to 3 dB). Curves: N = 64 with m = 1024, 512, 128; N = 256 with m = 1024, 512, 128.]

Figure 17: Comparison of stochastic decoders with different stream lengths.

Permutation Change in Stochastic BP Decoding

[Plot: value (0 to 0.5) versus number of bits (1 to 9), with each point labeled by the corresponding bit.]

Figure 18: Permutation graph of bit stream 00101101.

[Plot: value (0 to 0.6) versus number of bits (0 to 250) for the bit streams in the 1st, 2nd, 3rd, and 4th stages.]

Figure 19: Permutation change of unary bit streams in different stages.

Stage-wise Re-randomization

[Figure: factor graph of polar BP decoding with nodes (1,1) to (4,8), annotated with the re-randomization schedule.]

Figure 20: Schedule of the Stage-wise Re-Randomization with N = 8

[Figure: the same factor graph with the nodes (2,2), (2,4), (2,6), (2,8), (3,2), (3,4), (3,6), and (3,8) highlighted.]

Figure 21: Schedule of the Stage-wise Re-Randomization with N = 8

Stage-wise Re-randomization

[Plot: BER (10^0 down to 10^-3) versus Eb/N0 (1 to 3 dB). Curves for N = 64 and N = 256: deterministic decoding, original re-randomization, modified re-randomization.]

Figure 22: Numerical results for different stochastic decoders.

Early Termination

[Plot: BER (10^0 down to 10^-3) versus Eb/N0 (1 to 3 dB). Curves: Imax = 20, Imax = 30, Imax = 40, Imax = 50.]

Figure 23: Performance comparison of different number of iterations.

Early Termination

• Let thr be an empirically chosen threshold. We have

$$\mathrm{cov}_t = \Big| \sum_{i=1}^{m} \big( L_{2,j}^{t} - L_{2,j}^{t-1} \big) \Big| < \mathrm{thr}, \qquad \mathrm{cov}_{t+1} = \Big| \sum_{i=1}^{m} \big( L_{2,j}^{t+1} - L_{2,j}^{t} \big) \Big| < \mathrm{thr} \tag{9}$$

• If $\mathrm{cov}_t$ is less than the predetermined threshold, the number of iterations is considered sufficient and the decoding process is terminated.
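The stopping rule can be sketched as a check on successive message vectors. The sketch assumes that the sum in (9) runs over the m messages of one stage and stops at the first iteration whose change falls below the threshold; the names `cov` and `first_stop_iteration` are illustrative.

```python
def cov(current, previous):
    """Absolute value of the summed message change between two iterations."""
    return abs(sum(c - p for c, p in zip(current, previous)))


def first_stop_iteration(history, thr):
    """Return the first iteration t with cov_t < thr, or None if none exists.

    history[t] is the list of monitored messages after iteration t.
    """
    for t in range(1, len(history)):
        if cov(history[t], history[t - 1]) < thr:
            return t
    return None
```

Because the rule takes the absolute value of the sum rather than the sum of absolute values, changes of opposite sign can cancel; a stricter variant would sum `abs(c - p)` instead.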

Hardware Architecture

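[Figure: a switch feeds a chain of stages, each with N/2 BCBs and RE blocks, exchanging left-going signals L_S1, L_S2, ..., L_Sn-1 and right-going signals R_S2, R_S3, ..., R_Sn between the inputs p1 and Pn+1; a comparator, a reorder block, and an early-termination (ET) block produce the output û.]

Figure 24: Hardware architecture of fully parallel N-bit stochastic decoder.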

Comparison of Different BP Decoders

Table 1: Polar BP Decoders for N = 8.

| Implementation | Logic Gate | Register | Processing Delay |
|---|---|---|---|
| Det. BP | 5,680 | 1,632 | 4 clks |
| Sto. BP | 120 | 6,144 | 1,024 clks |
| Stage-wise sto. BP | 120 | 2,048 | 512 clks |
| Early Termination | 120 | 2,048 | less than 512 clks |


Motivation for Approximate Computing

• Applications of approximate computing

• Exact results are NOT necessary: a few erroneous pixels do not prevent a human from recognizing the image.

(a) Accurate Result. (b) Inexact Result.

Figure 25: Approximate computing in image processing.

• Numerical values do NOT matter: handwritten digit recognition

[Figure: handwritten digit recognition example showing the similarity scores of an input against candidate digits.]

• NO golden standard answer

Motivation for Approximate Computing

• Design a circuit that may not be 100% correct
• Targeting error-tolerant applications
• Trade accuracy for area/delay/power

| A1A0 \ B1B0 | 00 | 01 | 11 | 10 |
|---|---|---|---|---|
| 00 | 000 | 000 | 000 | 000 |
| 01 | 000 | 001 | 011 | 010 |
| 11 | 000 | 011 | 1001 | 110 |
| 10 | 000 | 010 | 110 | 100 |

Figure 26: K-Map and digital circuit for 2-bit multiplier.

Motivation for Approximate Computing

In the approximate version, the entry for 11 × 11 becomes 111 instead of 1001, so the output needs only three bits.

| A1A0 \ B1B0 | 00 | 01 | 11 | 10 |
|---|---|---|---|---|
| 00 | 000 | 000 | 000 | 000 |
| 01 | 000 | 001 | 011 | 010 |
| 11 | 000 | 011 | 111 | 110 |
| 10 | 000 | 010 | 110 | 100 |

Figure 27: K-Map and digital circuit for approximate 2-bit multiplier.
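The effect of the modified K-map entry can be quantified by exhaustive comparison. The sketch models only the truth table (3 × 3 returns 7 instead of 9), not the gate-level circuit; the function names are illustrative.

```python
def exact_mul2(a, b):
    """Exact product of two 2-bit operands (0..3); needs 4 output bits."""
    return a * b


def approx_mul2(a, b):
    """Approximate product: 3 * 3 is replaced by 7, so 3 output bits suffice."""
    return 7 if (a == 3 and b == 3) else a * b


def error_statistics():
    """Return (error_rate, max_error) over all 16 input pairs."""
    errors = [abs(exact_mul2(a, b) - approx_mul2(a, b))
              for a in range(4) for b in range(4)]
    wrong = sum(1 for e in errors if e != 0)
    return wrong / len(errors), max(errors)
```

Only one of the sixteen input pairs is wrong, with an error magnitude of 2, which is the kind of accuracy-for-area trade that the bullets describe.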

Approximate BP Decoder

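[Figure: the upper bits $a_{n-1} \ldots a_3 a_2$ and $b_{n-1} \ldots b_3 b_2$ feed a comparator, while the lower bits $a_1 a_0$ and $b_1 b_0$ are left out of the comparison; multiplexers controlled by the comparator and the signs $s_a$, $s_b$ produce the output bits $s_{n-1} \ldots s_0$.]

Figure 28: Proposed approximate architecture for G node.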

Approximate BP Decoder

Error analysis of the proposed G node:

• Suppose that a and b are random input numbers with uniform distribution.

• The probability of $a_{[n-1:k]} = b_{[n-1:k]}$ and the probability of $a_{[k-1:0]} < b_{[k-1:0]}$ are derived as:

$$P(a_{[n-1:k]} = b_{[n-1:k]}) = \left(\frac{1}{2}\right)^{n-k}, \qquad P(a_{[k-1:0]} < b_{[k-1:0]}) = \frac{2^k - 1}{2^{k+1}}.$$

• The Error Rate (ER) of the proposed approximate G node is:

$$ER = \left(\frac{1}{2}\right)^{n-k} \cdot \frac{2^k - 1}{2^{k+1}} = \frac{2^k - 1}{2^{n+1}}. \tag{10}$$
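Equation (10) can be verified by enumerating all pairs of n-bit inputs. The sketch counts the error event used in the derivation (equal upper n − k bits and a smaller lower part of a); it does not model the circuit itself, and the function names are illustrative.

```python
from fractions import Fraction


def error_rate_formula(n, k):
    """ER = (2^k - 1) / 2^(n + 1) from equation (10)."""
    return Fraction(2 ** k - 1, 2 ** (n + 1))


def error_rate_enumerated(n, k):
    """Count pairs with equal upper n - k bits and a[k-1:0] < b[k-1:0]."""
    mask = (1 << k) - 1
    hits = 0
    for a in range(1 << n):
        for b in range(1 << n):
            if (a >> k) == (b >> k) and (a & mask) < (b & mask):
                hits += 1
    return Fraction(hits, 1 << (2 * n))
```

For a fixed word length n, the error rate roughly doubles with every additional ignored bit k, which is the trade-off explored in the BER simulations.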

Approximate BP Decoder

[Plot: BER (10^0 down to 10^-3) versus Eb/N0 (0.5 to 3.5 dB). Curves: floating point; ignored bit k = 1, 2, 3, 4.]

Figure 29: Simulation results of BP decoders with different ignored bit k.

[Figure: the magnitude and sign inputs (Ma, Sa) and (Mb, Sb) each pass through an inverter, an AOU, and a multiplexer before an adder; a third inverter, AOU, and multiplexer produce the outputs Ms and Ss.]

Figure 30: Conventional architecture for F node.

[Figure: the inputs Ma, Sa, Mb, Sb feed an adder, a subtracter, and a comparator in parallel; multiplexers and a single inverter and AOU produce the outputs Ms and Ss.]

Figure 31: Proposed approximate architecture for F node.

[Figure: operand bits $a_{n-1}, \ldots, a_4, a_3$ and $a_2, a_1, a_0$ at the input and output of the unit.]

Figure 32: Proposed 3-bit approximate AOU.

[Plot: BER (10^-1 down to 10^-3) versus Eb/N0 (0.5 to 3.5 dB). Curves: floating point, fixed point, 3 bits of AOU, 1 bit of AOU.]

Figure 33: Simulation results of BP decoders with different bits of AOU.

Table 2: Implementation of Different Arch.s for (64, 32) Code.

| Hardware overheads | Conventional | Approximate | Reduction |
|---|---|---|---|
| ALUT | 97,283 | 61,958 | 36.3% |
| Registers | 16,214 | 14,751 | 9.0% |

Conclusion

• A general methodology to implement approximate and stochastic BP decoders for polar codes

• Alleviating the conflict between higher throughput and hardware consumption

• Significant hardware reduction with negligible performance degradation

• Future work will focus on the implementation of data-based approximate and stochastic BP decoders

Algorithms and Implementations for MIMO Detection.

Introduction of Large-Scale MIMO System

• Advantages
  - Increased spectral efficiency
  - Enhanced link reliability
  - Improved coverage

• Disadvantages
  - Hindering the application of conventional data detection methods

Limitations of Conventional Detection Methods

• Maximum likelihood (ML)
  - Computational complexity grows exponentially with the number of transmitting antennas

• K-Best and sphere decoding (SD)
  - Only favorable for small-scale MIMO
  - Suffering from excessive complexity for large-scale MIMO

• Minimum mean-squared error (MMSE)
  - Relying on Neumann approximation
  - The approximation error is proportional to the ratio $M^2/N$

Belief Propagation (BP) Algorithm

• Great advantages of BP
  - Providing better performance
  - Robust and insensitive to the selection of the initial solution
  - Matrix-inversion free

• Proposed BP
  - Symbol-based: suitable for high-order modulation
  - Real domain: reduces the constellation size
  - Balancing performance and complexity

System Model

For a complex MIMO model with M transmitting (Tx) and N receiving (Rx) antennas, the received vector $\mathbf{r}$ is given by

$$\mathbf{r} = \mathbf{H}\mathbf{x} + \mathbf{n}$$

• $\mathbf{r} = [r_1, r_2, \ldots, r_N]^T$, $\mathbf{x} = [x_1, x_2, \ldots, x_M]^T \in \Theta^M$.
• $\mathbf{H} = [h_{i,j}]_{1 \le i \le N,\, 1 \le j \le M}$ is a complex-valued channel matrix.
• $\mathbf{n} = [n_1, n_2, \ldots, n_N]^T$ is the additive white Gaussian noise (AWGN) with $n_i \sim \mathcal{CN}(0, \sigma^2)$, $1 \le i \le N$.
• The complex constellation $\Theta$ is composed of $Q = \|\Theta\| = 2^{M_c}$ distinct points.
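The model $\mathbf{r} = \mathbf{H}\mathbf{x} + \mathbf{n}$ and its real-domain equivalent can be sketched with plain Python complex numbers. The real-valued decomposition shown here (stacking real and imaginary parts, which doubles the dimensions to 2N × 2M) is the standard construction and is assumed to be what the real-domain factor graph uses; the i.i.d. CN(0, 1) channel, the QPSK alphabet, and all function names are assumptions for the example.

```python
import math
import random


def mat_vec(h, x):
    """Matrix-vector product for a list of rows (real or complex entries)."""
    return [sum(h_ij * x_j for h_ij, x_j in zip(row, x)) for row in h]


def simulate_complex(n_rx, n_tx, sigma, rng):
    """Draw H, x, n and form r = Hx + n (assumed: CN(0, 1) channel, QPSK)."""
    s = math.sqrt(0.5)  # per-component standard deviation of CN(0, 1)
    qpsk = [complex(a, b) for a in (-1, 1) for b in (-1, 1)]
    h = [[complex(rng.gauss(0, s), rng.gauss(0, s)) for _ in range(n_tx)]
         for _ in range(n_rx)]
    x = [rng.choice(qpsk) for _ in range(n_tx)]
    noise = [complex(rng.gauss(0, sigma * s), rng.gauss(0, sigma * s))
             for _ in range(n_rx)]
    r = [hx + n for hx, n in zip(mat_vec(h, x), noise)]
    return h, x, noise, r


def stack(v):
    """Stack real parts on top of imaginary parts."""
    return [c.real for c in v] + [c.imag for c in v]


def real_channel(h):
    """Real-valued channel [[Re H, -Im H], [Im H, Re H]] of size 2N x 2M."""
    top = [[c.real for c in row] + [-c.imag for c in row] for row in h]
    bottom = [[c.imag for c in row] + [c.real for c in row] for row in h]
    return top + bottom
```

In the real domain each QPSK symbol splits into two binary-valued entries, which is one way to read the remark that the real-domain formulation reduces the constellation size.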

Channel Model

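A correlated model is expressed by

$$\mathbf{H} = \mathbf{R}_r^{1/2} \mathbf{H}_w \mathbf{R}_t^{1/2}$$

• $\mathbf{R}_r^{1/2} \in \mathbb{C}^{N \times N}$: correlation between Rx antennas
• $\mathbf{R}_t^{1/2} \in \mathbb{C}^{M \times M}$: correlation between Tx antennas
• $\mathbf{H}_w$: i.i.d. real Gaussian distributed

Three kinds of correlated channels

• Only correlation among Rx antennas considered:

$$\mathbf{H} = \mathbf{R}_r^{1/2} \mathbf{H}_w \boldsymbol{\Sigma}_t^{1/2}$$

• Only correlation among Tx antennas considered:

$$\mathbf{H} = \boldsymbol{\Sigma}_r^{1/2} \mathbf{H}_w \mathbf{R}_t^{1/2}$$

• Correlation among both Tx and Rx antennas considered:

$$\mathbf{H} = \mathbf{R}_r^{1/2} \mathbf{H}_w \mathbf{R}_t^{1/2}$$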

A FG for MIMO Channels

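[Figure: factor graph with symbol nodes $x_1, x_2, \ldots, x_{2M}$ and observation nodes $r_1, r_2, \ldots, r_{2N}$; a priori information is passed along $x_i \to r_j$ and a posteriori information along $r_j \to x_i$.]

Figure 34: Factor graph of a real domain large-scale MIMO.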

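A Priori Information at Symbol Nodes

Soft information: $F_{x_i \to r_j}$, $\mathbf{p}_{x_i \to r_j}$, $\boldsymbol{\alpha}_{x_i \to r_j}$

• a priori probability vector:

$$\mathbf{p}_{x_i \to r_j}^{(l)} = \big[p_{i,j}^{(l)}(s_1), \ldots, p_{i,j}^{(l)}(s_{\sqrt{Q}-1})\big]$$

• a priori LLR vector:

$$\boldsymbol{\alpha}_{x_i \to r_j}^{(l)} = \big[\alpha_{i,j}^{(l)}(s_1), \ldots, \alpha_{i,j}^{(l)}(s_{\sqrt{Q}-1})\big]$$

where

$$\alpha_{i,j}^{(l)}(s_k) = \ln \frac{p^{(l)}(x_i = s_k)}{p^{(l)}(x_i = s_0)}, \quad k = 1, \ldots, \sqrt{Q} - 1$$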
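The relation between the probability vector and the LLR vector can be sketched as a pair of conversions. The sketch assumes the probabilities over $s_0, \ldots, s_{\sqrt{Q}-1}$ are given as a list with $s_0$ first and strictly positive; the function names are illustrative.

```python
import math


def probs_to_llrs(probs):
    """alpha(s_k) = ln(p(s_k) / p(s_0)) for k = 1, ..., len(probs) - 1."""
    return [math.log(p_k / probs[0]) for p_k in probs[1:]]


def llrs_to_probs(llrs):
    """Invert the mapping, using alpha(s_0) = 0 as the implicit reference."""
    weights = [1.0] + [math.exp(a) for a in llrs]
    total = sum(weights)
    return [w / total for w in weights]
```

Using $s_0$ as the reference means a symbol with $\sqrt{Q}$ levels per real dimension needs only $\sqrt{Q} - 1$ LLR values per message, which is why the vectors above start at $s_1$.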

A Posteriori Information at Observation Nodes

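A posteriori information is defined as:

$$\boldsymbol{\beta}_{r_j \to x_i}^{(l)} = \big[\beta_{j,i}^{(l)}(s_1), \ldots, \beta_{j,i}^{(l)}(s_{\sqrt{Q}-1})\big]$$

where

$$\beta_{j,i}^{(l)}(s_k) = \ln \frac{p^{(l)}(x_i = s_k \mid r_j, \mathbf{H})}{p^{(l)}(x_i = s_0 \mid r_j, \mathbf{H})}$$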

Symbol-Based BP Detection in Real Domain

[Figure: factor graph with symbol nodes $x_1, x_2, \ldots, x_{2M}$ and observation nodes $r_1, r_2, \ldots, r_{2N}$; a priori information is passed along $x_i \to r_j$ and a posteriori information along $r_j \to x_i$.]

• Step 1: Message updating of observation nodes
  - Receive the a priori information from the neighbouring symbol nodes
  - Update the a posteriori information
  - Pass it back to all symbol nodes

• Step 2: Message updating of symbol nodes
  - Receive the a posteriori information from the neighbouring observation nodes
  - Update the a priori information
  - Pass it back to all the connected observation nodes

Numerical Results with QPSK Modulation

[Plot: BER (10^0 down to 10^-4) versus Eb/N0 (0 to 10 dB). Curves: SISO, AWGN; M = N = 16, MMSE; M = N = 16, General SE-BP; M = N = 16, Proposed BP.]

Figure 35: Performance comparison of different detection methods for i.i.d. Rayleigh fading channel (QPSK)

Numerical Results with 16-QAM Modulation

[Plot: BER (10^0 down to 10^-5) versus Eb/N0 (0 to 15 dB). Curves: SISO, AWGN; M = 8, N = 128, MMSE; M = 8, N = 128, Approximate method, k = 3; M = 8, N = 32, MMSE; M = 8, N = 32, Approximate method, k = 4; M = 8, N = 32, Proposed BP; M = 32, N = 64, MMSE; M = 32, N = 64, Approximate method, k = 4; M = 32, N = 64, Proposed BP.]

Figure 36: Performance comparison of approximate MMSE and BP detections in i.i.d. Rayleigh channel (16-QAM)

[Plot: BER (10^0 down to 10^-5) versus Eb/N0 (0 to 15 dB). Curves: SISO, AWGN; and, for M = 8, N = 32: i.i.d. Rayleigh with MMSE and BP; Rx correlation with MMSE and BP; Tx correlation with MMSE, BP, and damped BP; Rx-Tx correlation with MMSE, BP, and damped BP.]

Figure 37: Performance comparison of BP detections for all kinds of MIMO channels with M = 8, N = 32 (16-QAM)

[Plot: BER (10^0 down to 10^-5) versus Eb/N0 (0 to 15 dB). Curves: i.i.d. Rayleigh with General SE-BP and Proposed BP; Rx correlation with General SE-BP and Proposed BP; Tx correlation with General damped SE-BP and Proposed damped BP; Rx-Tx correlation with General damped SE-BP and Proposed damped BP.]

Figure 38: Comparison of general and proposed BP detections for MIMO channels with M = 8, N = 32 (16-QAM)

Half Time Break

83

Deep Learning Based Baseband Signal Processing.

Applications of Deep Learning

84

Deep Learning in communication systems

• Satellite communications
• Vehicle to everything
• Smart devices
• Internet of things
• 5G networks

85

Deep Learning in communication systems

• Motivation
- Problems hard to model mathematically
- Advanced deep learning techniques
- Near optimal performance
- Uniform architecture for various modules

• Challenges
- Joint optimization for multiple modules
- High training complexity
- Unfriendly hardware architecture

86

Our work

Deep learning in the baseband

• Uniform baseband accelerator based on BP
• Massive MIMO detection with DNN
• DNN-based polar codes decoder
• A CNN channel equalizer

87

Deep Learning techniques

• A model of machine learning
• Use multiple layers of neural networks
• Learn data representations with multiple levels of abstraction using a training set

88

Deep Neural Network (DNN)

• Deep neural network model:

$$y = f(x_0; \theta)$$

• Mapping function in the l-th layer:

$$x_l = f^{(l)}(x_{l-1}; \theta_l), \quad l = 1, \ldots, L$$

[Diagram: input layer, hidden layers with weights $W^1_{i,j}$, $W^2_{i,j}$, $W^3_{i,j}$, and output layer. Input data $X_n$, output data $Y_n$, and desired data $D_n$, $n = 1, 2, \ldots, N$. The weights are chosen as $\arg\min_{W^k_{i,j}} \sum_{n=1}^{N} \|D_n - Y_n\|^2$.]

Figure 39: Multi-layer architecture of a feedforward DNN.
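The layer-by-layer mapping $x_l = f^{(l)}(x_{l-1}; \theta_l)$ can be sketched in a few lines of plain Python. This is only an illustration: the dense-plus-ReLU layer, the helper names `dense_relu` and `forward`, and the hand-picked weights are assumptions, not taken from the slides.

```python
def dense_relu(x, weights, bias):
    """One layer mapping: x_l = ReLU(W x_{l-1} + b) (assumed layer type)."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, bias)]

def forward(x0, layers):
    """Compose the layer mappings f^(1), ..., f^(L) on the input x0."""
    x = x0
    for weights, bias in layers:  # theta_l = (W_l, b_l)
        x = dense_relu(x, weights, bias)
    return x

# Hypothetical 2-layer network with hand-picked parameters.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),  # layer 1: 2 inputs -> 2 hidden units
    ([[1.0, 1.0]], [-0.5]),                   # layer 2: 2 hidden units -> 1 output
]
y = forward([2.0, 1.0], layers)
print(y)
```

Training would then adjust every `(W_l, b_l)` to reduce the squared error between the outputs and the desired data.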

89

Convolutional Neural Network (CNN)

• A class of feed-forward DNN
• Convolutional layers to build feature maps

$$c_{i,j} = \mathrm{ReLU}(h_{i,j} \star x + b_{i,j})$$

• Reduces the number of free parameters

Figure 40: The typical architecture of a CNN.

90

Contents

Deep Learning Based Baseband Signal Processing

Improving BP MIMO Detector with Deep Learning

Polar BP Decoder Based on Deep Learning

Convolutional Neural Network Channel Equalizer

91

Belief Propagation MIMO Detector

• Belief propagation (BP) Detector
- Pros: Good BER performance, robustness, lower complexity
- Cons: loopy factor graph, convergence rate

[Diagram: symbol nodes x1, x2, ..., xn connected to observation nodes y1, y2, y3, ..., yn, exchanging a priori information and a posteriori information]

Figure 41: Factor Graph of a large MIMO system.

92

Modified BP Algorithms

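• Damped BP: Mitigate loopiness by message damping

Iterative updating rules:

$$\beta_{ji}^{(l)}(s_k) = \log \frac{p^{(l-1)}(x_i = s_k \mid y_j, \mathbf{H})}{p^{(l-1)}(x_i = s_1 \mid y_j, \mathbf{H})}$$

$$\alpha_{ij}^{(l)}(s_k) = \sum_{t=1,\, t \neq j}^{N} \beta_{ti}^{(l)}(s_k)$$

$$p_{ij}^{(l)}(x_i = s_k) = \frac{\exp(\alpha_{ij}^{(l)}(s_k))}{\sum_{m=1}^{K} \exp(\alpha_{ij}^{(l)}(s_m))}$$

$$p_{ij}^{(l)} \Leftarrow (1 - \delta)\, p_{ij}^{(l)} + \delta\, p_{ij}^{(l-1)}$$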
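A minimal sketch of the symbol-node side of these rules (sum of incoming messages, normalisation, damping) is given here in plain Python. The function names, the toy messages, and the choice δ = 0.3 are illustrative assumptions; computing the messages β from the channel observation is not shown.

```python
import math

def softmax(alpha):
    """p(x_i = s_k) = exp(alpha_k) / sum_m exp(alpha_m), computed stably."""
    peak = max(alpha)
    exps = [math.exp(a - peak) for a in alpha]
    total = sum(exps)
    return [e / total for e in exps]

def damp(p_new, p_old, delta):
    """Damping step: p <= (1 - delta) * p_new + delta * p_old."""
    return [(1.0 - delta) * n + delta * o for n, o in zip(p_new, p_old)]

def update_prior(betas, exclude_j, p_old, delta):
    """betas[t][k] holds beta_{ti}(s_k) from observation node t to symbol node i.
    Sum every message except the one from node j, normalise, then damp."""
    num_symbols = len(p_old)
    alpha = [sum(b[k] for t, b in enumerate(betas) if t != exclude_j)
             for k in range(num_symbols)]
    return damp(softmax(alpha), p_old, delta)

# Made-up messages from 3 observation nodes for a 2-symbol constellation.
betas = [[0.0, 1.0], [0.0, -0.5], [0.0, 2.0]]
p = update_prior(betas, exclude_j=1, p_old=[0.5, 0.5], delta=0.3)
print(p)
```

Because both the new and the previous message are probability vectors, the damped result still sums to one.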

93

Multiscale Modified BP Algorithms

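• Damped BP: Mitigate loopiness by message damping

Iterative updating rules:

$$\beta_{ji}^{(l)}(s_k) = \log \frac{p^{(l-1)}(x_i = s_k \mid y_j, \mathbf{H})}{p^{(l-1)}(x_i = s_1 \mid y_j, \mathbf{H})}$$

$$\alpha_{ij}^{(l)}(s_k) = \sum_{t=1,\, t \neq j}^{N} \beta_{ti}^{(l)}(s_k)$$

$$p_{ij}^{(l)}(x_i = s_k) = \frac{\exp(\alpha_{ij}^{(l)}(s_k))}{\sum_{m=1}^{K} \exp(\alpha_{ij}^{(l)}(s_m))}$$

$$p_{ij}^{(l)} \Leftarrow (1 - \delta_{ij}^{(l)})\, p_{ij}^{(l)} + \delta_{ij}^{(l)}\, p_{ij}^{(l-1)}$$

• Multiscaled factors lead to better performance?
• How to find the optimal damping factors $\delta_{ij}^{(l)}$?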

94

Multiscale Modified BP Algorithms

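• Max-Sum (MS) BP: Further reduced complexity by eliminating the division

Iterative updating rules:

$$\beta_{ji}^{(l)}(s_k) = \log \frac{p^{(l-1)}(x_i = s_k \mid y_j, \mathbf{H})}{p^{(l-1)}(x_i = s_1 \mid y_j, \mathbf{H})}$$

$$\alpha_{ij}^{(l)}(s_k) = \sum_{t=1,\, t \neq j}^{N} \beta_{ti}^{(l)}(s_k)$$

$$p_{ij}^{(l)}(x_i = s_k) = \exp\Big(\alpha_{ij}^{(l)}(s_k) - \max_{s_m \in \Omega} \alpha_{ij}^{(l)}(s_m)\Big)$$

$$p_{ij}^{(l)} \Leftarrow (1 - \delta)\,\lambda\, p_{ij}^{(l)} - \omega + \delta\, p_{ij}^{(l-1)}$$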

95

Multiscale Modified BP Algorithms

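• Max-Sum (MS) BP: Further reduced complexity by eliminating the division

Iterative updating rules:

$$\beta_{ji}^{(l)}(s_k) = \log \frac{p^{(l-1)}(x_i = s_k \mid y_j, \mathbf{H})}{p^{(l-1)}(x_i = s_1 \mid y_j, \mathbf{H})}$$

$$\alpha_{ij}^{(l)}(s_k) = \sum_{t=1,\, t \neq j}^{N} \beta_{ti}^{(l)}(s_k)$$

$$p_{ij}^{(l)}(x_i = s_k) = \exp\Big(\alpha_{ij}^{(l)}(s_k) - \max_{s_m \in \Omega} \alpha_{ij}^{(l)}(s_m)\Big)$$

$$p_{ij}^{(l)} \Leftarrow (1 - \delta_{ij}^{(l)})\,\lambda_{ij}^{(l)}\, p_{ij}^{(l)} - \omega_{ij}^{(l)} + \delta_{ij}^{(l)}\, p_{ij}^{(l-1)}$$

• Multiscaled factors lead to better performance?
• How to find the optimal $\delta_{ij}^{(l)}$, $\lambda_{ij}^{(l)}$ and $\omega_{ij}^{(l)}$?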
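The max-sum variant replaces the normalising division by a subtraction of the largest α and then applies a scaled, offset, and damped update. The sketch below follows the rule as written on the slide; the function names and the numeric values of δ, λ, ω are placeholders chosen only for illustration.

```python
import math

def max_sum_belief(alpha):
    """p(x_i = s_k) = exp(alpha_k - max_m alpha_m); no division is needed."""
    peak = max(alpha)
    return [math.exp(a - peak) for a in alpha]

def ms_update(p_new, p_old, delta, lam, omega):
    """p <= (1 - delta) * lam * p_new - omega + delta * p_old."""
    return [(1.0 - delta) * lam * n - omega + delta * o
            for n, o in zip(p_new, p_old)]

alpha = [0.0, 3.0]                      # made-up summed messages for 2 symbols
p_raw = max_sum_belief(alpha)           # the largest entry is exactly 1.0
p = ms_update(p_raw, [0.5, 0.5], delta=0.3, lam=0.9, omega=0.05)
print(p_raw, p)
```

In the per-edge, per-layer setting, `delta`, `lam`, and `omega` would each become one value for every (i, j, l) triple.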

96

The Framework to Build DNN from BP

[Diagram: the BP factor graph (symbol nodes x1, x2, ..., xn; observation nodes y1, y2, y3, ..., yn; a priori and a posteriori information) unfolded into a DNN with input layer, hidden layers, and output layer]

97

Converting BP to DNN

Table 3: Mapping BP factor graph (FG) to DNN

| BP FG | DNN |
|---|---|
| Nodes | Neurons |
| Received signals | Input data $x$ |
| Transmitted signals | Output data $y$ |
| l-th iteration | l-th hidden layer |
| Belief messages $\alpha^{(l)}$, $\beta^{(l)}$, $p^{(l)}$ | Hidden signals $x_l$ |
| Message updating rules | Mapping functions of layers |
| Modification factors $\delta$, $\lambda$, $\omega$ | Parameters $\theta$ |

98

Architecture of the DNN MIMO Detector

[Diagram: input layer, hidden layers, and output layer; each group of hidden layers corresponds to one BP iteration step]

Figure 42: The architecture of the DNN detector with 3 BP iterations.

99

Unfolded one full BP iteration in the DNN

Figure 43: One full iteration in the DNN with Tx = Rx = 4 and BPSK modulation.

100

Proposed DNN MIMO Detectors

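Table 4: Summary of the proposed DNN MIMO Detectors: DNN-dBP and DNN-MS

| Method | DNN-dBP | DNN-MS |
|---|---|---|
| The iterative algorithm | Damped BP | Max-Sum BP |
| Training parameters $\Delta$ | $\delta$ | $\delta, \lambda, \omega$ |
| Inputs | $\mathbf{y}, \delta^{(0)}, p_{ij}^{(0)}$ | $\mathbf{y}, \delta^{(0)}, \lambda^{(0)}, \omega^{(0)}, p_{ij}^{(0)}$ |

Mapping functions $f^{(l)}$ (both detectors): The updating rules

Loss function (both detectors):

$$L(\mathbf{x}, \mathbf{O}) = -\frac{1}{M} \sum_{i=1}^{M} \sum_{k=1}^{K} x_i(s_k) \log(O_i(s_k))$$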
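As a quick check of the loss above, here is a small plain-Python sketch. It assumes the transmitted symbols are given as one-hot vectors `x_onehot[i][k]` and the detector outputs as probability vectors `outputs[i][k]`; the function name and the `eps` clamp that guards `log(0)` are additions for illustration.

```python
import math

def detector_loss(x_onehot, outputs, eps=1e-12):
    """L(x, O) = -(1/M) * sum_i sum_k x_i(s_k) * log(O_i(s_k))."""
    num_tx = len(x_onehot)  # M transmitted symbols
    total = 0.0
    for x_i, o_i in zip(x_onehot, outputs):
        for x_ik, o_ik in zip(x_i, o_i):
            total += x_ik * math.log(max(o_ik, eps))  # clamp avoids log(0)
    return -total / num_tx

x_true = [[1, 0], [0, 1]]                  # two symbols, 2-point constellation
uniform = [[0.5, 0.5], [0.5, 0.5]]         # uninformative detector output
confident = [[0.9, 0.1], [0.2, 0.8]]       # mostly correct detector output
print(detector_loss(x_true, uniform), detector_loss(x_true, confident))
```

An uninformative output over two symbols gives a loss of ln 2, and the loss shrinks as the output concentrates on the transmitted symbols.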

101

Training Details

• Configuration: Tx × Rx = 8 × 32
• SNR: 0, 5, 10, 15, 20 dB
• Optimization method: Mini-batch stochastic gradient descent (SGD)
• Number of layers: selected by pre-simulation
• Platform: TensorFlow [5]
• Learning rate = 0.01 with Adam optimization [6]
• All parameters initialized to 0.5

[5] M. Abadi et al., TensorFlow: large-scale machine learning on heterogeneous systems, software available from tensorflow.org, 2015.
[6] D. P. Kingma and J. Ba, "Adam: a method for stochastic optimization," arXiv preprint arXiv:1412.6980, 2014.

102

Experiment: DNN-dBP and DNN-MS in i.i.d. channels

[Plot: BER versus average received SNR (dB), 0 to 20 dB. Curves: MMSE; BP; DNN-dBP; HAD; MS; DNN-MS; AMP; SDR; SISO, AWGN]

Figure 44: Performance comparison of SD, MMSE, BP, DNN-dBP, HAD, MS and DNN-MS in i.i.d. Rayleigh channels with asymmetric antenna configuration (M = 8; N = 32).

103

Experiment: DNN-dBP in correlated channels

[Plot: BER versus average received SNR (dB), 0 to 20 dB. Curves: BP, HAD, DNN-dBP, and MMSE, each under Rx correlation, Tx correlation, and Rx-Tx correlation]

Figure 45: Performance comparison of MMSE, BP, DNN-dBP and HAD in different correlated channels with asymmetric antenna configuration (M = 8; N = 32).

104

Experiment: DNN-MS in correlated channels

[Plot: BER versus average received SNR (dB), 0 to 20 dB. Curves: BP, MS, and DNN-MS, each under Rx correlation, Tx correlation, and Rx-Tx correlation]

Figure 46: Performance comparison of MMSE, BP, MS and DNN-MS in different correlated channels with asymmetric antenna configuration (M = 8; N = 32).

105

Contents

Deep Learning Based Baseband Signal Processing

Improving BP MIMO Detector with Deep Learning

Polar BP Decoder Based on Deep Learning

Convolutional Neural Network Channel Equalizer

106

Polar BP Decoding

[Diagram: three-stage factor graph (Stage 1, Stage 2, Stage 3) built from "+" and "g" nodes, with u1, u5, u3, u7, u2, u6, u4, u8 on the left and x1 to x8 on the right]

Figure 47: Factor graph of polar codes with N = 8.

107

Polar BP Decoding

• Left to right and right to left propagations
• $L_{i,j}^{(t)}$ denotes left to right propagation in i-th stage j-th node during t-th iteration.
• The iterative updating rules associated with $L_{i,j}^{(t)}$ and $R_{i,j}^{(t)}$:

$$L_{i,j}^{(t)} = g\big(L_{i+1,2j-1}^{(t)},\; L_{i+1,2j}^{(t)} + R_{i,j+N/2}^{(t)}\big),$$

$$L_{i,j+N/2}^{(t)} = g\big(R_{i,j}^{(t)},\; L_{i+1,2j-1}^{(t)}\big) + L_{i+1,2j}^{(t)},$$

$$R_{i+1,2j-1}^{(t)} = g\big(R_{i,j}^{(t)},\; L_{i+1,2j}^{(t-1)} + R_{i,j+N/2}^{(t)}\big),$$

$$R_{i+1,2j}^{(t)} = g\big(R_{i,j}^{(t)},\; L_{i+1,2j-1}^{(t-1)}\big) + R_{i,j+N/2}^{(t)},$$

where $g(x, y) = \ln \dfrac{1 + e^{x+y}}{e^{x} + e^{y}}$.

108

Min-sum Decoding

• The computational complexity of the exponential and logarithm functions is prohibitive.

• Low-complexity min-sum approximation is introduced:

$$g(x, y) \approx \mathrm{sign}(x)\,\mathrm{sign}(y)\,\min(|x|, |y|)$$

• About 0.4 dB degradation from the min-sum approximation for long codes.
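To make the approximation concrete, the following plain-Python sketch evaluates the exact function g(x, y) = ln((1 + e^(x+y)) / (e^x + e^y)) next to its min-sum approximation. The function names and the sample inputs are illustrative assumptions.

```python
import math

def g_exact(x, y):
    """Exact rule: g(x, y) = ln((1 + e^(x+y)) / (e^x + e^y))."""
    return math.log((1.0 + math.exp(x + y)) / (math.exp(x) + math.exp(y)))

def g_min_sum(x, y):
    """Min-sum approximation: sign(x) * sign(y) * min(|x|, |y|)."""
    sign = math.copysign(1.0, x) * math.copysign(1.0, y)
    return sign * min(abs(x), abs(y))

for x, y in [(2.0, 3.0), (2.0, -3.0), (0.5, 4.0)]:
    print(x, y, round(g_exact(x, y), 4), g_min_sum(x, y))
```

The approximation keeps the sign of the exact value but overestimates its magnitude, which is the gap that scaling or offset factors aim to close.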

109

Scaled/Offset Min-sum Decoding

• To compensate for the degradation of MS decoding, scaled/offset min-sum is proposed:

$$g(x, y) \approx \alpha \cdot \mathrm{sgn}(x)\,\mathrm{sgn}(y)\,\min(|x|, |y|),$$

$$g(x, y) \approx \mathrm{sgn}(x)\,\mathrm{sgn}(y)\,\max\big(\min(|x|, |y|) - \beta,\; 0\big).$$

• Scaling and offset factors play key roles in performance.
• How to obtain optimal parameters?
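Both corrections are one-line changes to the min-sum rule, as the plain-Python sketch below shows. The function names are hypothetical, and the values α = 0.875 and β = 0.25 are used only as example inputs.

```python
import math

def _sign_and_min(x, y):
    """Shared part of both rules: sgn(x) * sgn(y) and min(|x|, |y|)."""
    sign = math.copysign(1.0, x) * math.copysign(1.0, y)
    return sign, min(abs(x), abs(y))

def g_scaled(x, y, alpha):
    """Scaled min-sum: alpha * sgn(x) * sgn(y) * min(|x|, |y|)."""
    sign, magnitude = _sign_and_min(x, y)
    return alpha * sign * magnitude

def g_offset(x, y, beta):
    """Offset min-sum: sgn(x) * sgn(y) * max(min(|x|, |y|) - beta, 0)."""
    sign, magnitude = _sign_and_min(x, y)
    return sign * max(magnitude - beta, 0.0)

print(g_scaled(2.0, -3.0, alpha=0.875))  # multiplication by alpha
print(g_offset(2.0, -3.0, beta=0.25))    # one extra subtraction and a clamp
```

The offset form needs only a subtraction and a comparison against zero, whereas the scaled form needs a multiplication.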

110

Network Architectures

• Unfolding iterative polar decoder into recurrent structure.

Figure 48: Recurrent architecture of neural network decoder.

111

Training Details

• Optimization method: Mini-batch stochastic gradient descent (SGD)

• Learning rate = 0.01 with Adam optimization [7]

• Cross entropy loss function:

$$L(\mathbf{u}, \mathbf{o}) = -\frac{1}{N} \sum_{i=1}^{N} \big[ u_i \log(o_i) + (1 - u_i) \log(1 - o_i) \big].$$

• All-zero codewords with AWGN at a single SNR (SNR = 1 dB).

[7] D. P. Kingma and J. Ba, "Adam: a method for stochastic optimization," arXiv preprint arXiv:1412.6980, 2014.

112

Results

[Plot: normalized loss and parameter value (0 to 1) versus epoch (10 to 100). Curves: Normalized loss; L2R factor; R2L factor]

Figure 49: Evolution of scaling factors for (1024, 512) polar code.

113

Results

[Plot: normalized loss and parameter value (-0.2 to 1) versus epoch (10 to 100). Curves: Normalized loss; L2R offset; R2L offset]

Figure 50: Evolution of offset factors for (1024, 512) polar code.

114

Results

[Plot: BER versus Eb/N0 (dB), 1 to 4 dB. Curves: BP; Approximate MS; 2D Normalized MS, α = [0.875, 0.9375]; 2D Offset MS, β = [0.0, 0.25]]

Figure 51: Performance comparison of BP decoding for (1024, 512) polar codes.

115

VLSI Architecture

• Offset MS requires only one extra subtraction → more hardware-friendly design

• Bi-directional updating → reduces decoding latency by 50%

[Diagram (a): processing element with q-bit inputs X[q-1:0] and Y[q-1:0], a comparator (COMP), an offset subtraction, and q-bit output Z[q-1:0]]

(a) Modified Offset PE.

[Diagram (b): channel output, routing networks, two PE arrays, and a memory holding the R messages and L messages]

(b) Diagram of polar BP decoder.

Figure 52: Hardware architecture of polar BP decoder.

116

Contents

Deep Learning Based Baseband Signal Processing

Improving BP MIMO Detector with Deep Learning

Polar BP Decoder Based on Deep Learning

Convolutional Neural Network Channel Equalizer

117

Channel Equalization

• Inter-symbol Interference (ISI): The channel with ISI is modeled as a finite impulse response (FIR) filter $\mathbf{h}$. The signal with ISI is equivalent to the convolution of the channel input with the FIR filter:

$$\mathbf{v} = \mathbf{s} \otimes \mathbf{h}.$$

• Nonlinear Distortion: The nonlinearities in the communication system are mainly caused by amplifiers and mixers:

$$r_i = g[v_i] + n_i.$$

118

Maximum Likelihood Equalizer

• Estimation: Use the training sequence $\mathbf{s}$ to estimate the channel coefficients $\mathbf{h}$ that maximize the likelihood:

$$\mathbf{h}_{ML} = \arg\max_{\mathbf{h}}\; p(\mathbf{r} \mid \mathbf{s}, \mathbf{h}).$$

• BCJR: Use the BCJR algorithm to find the codeword that maximizes the a posteriori probability:

$$p(s_i = s \mid \mathbf{r}, \mathbf{h}_{ML}), \quad i = 1, 2, \ldots, N.$$

• Drawbacks
- Good performance for ISI, but bad for nonlinear distortion
- Requires accurate a priori information about the channel
- Prohibitive $O(n^2)$ complexity

119

Proposed CNN Equalizer

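[Diagram: channel encoder, channel consisting of H(z) followed by the nonlinearity g(v), CNN equalizer (Conv + ReLU, Conv + ReLU, ..., Conv), and NN decoder; v is the output of H(z) and r is the input to the equalizer]

Figure 53: System model.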

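• Fully convolutional neural network with 1-D convolution:

$$y_{i,j} = \sigma\Big( \sum_{c=1}^{C} \sum_{k=1}^{K} W_{i,c,k}\, x_{c,k+j} + b_i \Big),$$

• Train parameters to maximize likelihood:

$$\hat{\boldsymbol{\theta}} = \arg\max_{\boldsymbol{\theta}}\; p(\hat{\mathbf{s}} = \mathbf{s} \mid \mathbf{r}, \boldsymbol{\theta}).$$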
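The 1-D convolution layer can be written out directly from its double sum. The plain-Python sketch below assumes zero-based indices, no padding (so the output is shorter than the input), and ReLU as the activation σ; the function name and the toy filter are hypothetical.

```python
def conv1d_relu(x, W, b):
    """y[i][j] = ReLU(sum_c sum_k W[i][c][k] * x[c][k + j] + b[i]).

    x[c][n]: C input channels, W[i][c][k]: filters of length K, b[i]: biases.
    """
    kernel_len = len(W[0][0])
    out_len = len(x[0]) - kernel_len + 1  # "valid" convolution, no padding
    y = []
    for W_i, b_i in zip(W, b):            # one output channel per filter
        row = []
        for j in range(out_len):
            acc = b_i
            for x_c, w_c in zip(x, W_i):  # sum over input channels
                acc += sum(w_c[k] * x_c[k + j] for k in range(kernel_len))
            row.append(max(0.0, acc))     # ReLU activation
        y.append(row)
    return y

x = [[1.0, 2.0, 3.0, 4.0]]                # one input channel, 4 samples
W = [[[-1.0, 0.0, 1.0]]]                  # one 1 x 3 filter (made-up weights)
b = [0.5]
y = conv1d_relu(x, W, b)
print(y)
```

The same filter weights are reused at every position j, so the parameter count depends on the filter length and channel counts rather than on the sequence length.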

120

Training Details

• CNN structure: 6 layers with 1 × 3 filter
• Learning rate = 0.001
• Mean squared error (MSE) loss function:

$$L(\hat{\mathbf{s}}, \mathbf{s}) = \frac{1}{N} \sum_{i} |s_i - \hat{s}_i|^2,$$

• Random codewords from SNR 0 dB to 11 dB.
• Weight initialization: $\mathcal{N}(\mu = 0, \sigma = 1)$

121

Results on Linear Channel with ISI

• Channel coefficients:

$$H(z) = 0.3482 + 0.8704 z^{-1} + 0.3482 z^{-2}.$$

122

Results on Nonlinear Channel with ISI

• Channel coefficients:

$$H(z) = 0.3482 + 0.8704 z^{-1} + 0.3482 z^{-2}.$$

• Nonlinear distortion:

$$|g(v)| = |v| + 0.2|v|^2 - 0.1|v|^3 + 0.5\cos(\pi |v|).$$
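A rough simulation of this channel model (ISI, then nonlinear distortion, then additive noise) might look as follows in plain Python. Several choices here are assumptions rather than statements from the slides: the middle tap is taken as 0.8704, the input is real-valued BPSK, the distortion is applied to the magnitude while keeping the sign of v, and the noise level `sigma` is arbitrary.

```python
import math
import random

H = [0.3482, 0.8704, 0.3482]  # FIR taps; middle tap assumed to be 0.8704

def isi(s, h):
    """v = s (*) h: full linear convolution of the input with the FIR taps."""
    v = [0.0] * (len(s) + len(h) - 1)
    for n, s_n in enumerate(s):
        for k, h_k in enumerate(h):
            v[n + k] += s_n * h_k
    return v

def distort(v):
    """|g(v)| = |v| + 0.2|v|^2 - 0.1|v|^3 + 0.5 cos(pi |v|), sign of v kept."""
    a = abs(v)
    magnitude = a + 0.2 * a**2 - 0.1 * a**3 + 0.5 * math.cos(math.pi * a)
    return math.copysign(magnitude, v)

def channel(s, h, sigma, rng):
    """r_i = g[v_i] + n_i with Gaussian noise of standard deviation sigma."""
    return [distort(v_i) + rng.gauss(0.0, sigma) for v_i in isi(s, h)]

rng = random.Random(0)                             # fixed seed for repeatability
s = [rng.choice([-1.0, 1.0]) for _ in range(8)]    # BPSK symbols (assumption)
r = channel(s, H, sigma=0.1, rng=rng)
print(r)
```

Pairs of `(r, s)` generated this way are the kind of data an equalizer would be trained on.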

123

Results on Joint DNN-CNN Detector

[Plot: BER (10^-4 to 10^-1) versus Eb/N0 (dB), 1 to 11 dB. Curves: GPC+SC; DL; CNN+NND; CNN+NND-Joint]

• Outperforms GPC+SC.

• Requires only about 30% of the parameters of the DL method.

124

Conclusion

With deep learning techniques we achieve:

• A framework to design DNN by unfolding BP
• A CNN channel equalizer
• Performance improvements
• Uniform architecture for baseband modules
• Efficient hardware implementations

125

Q & A

125