
International Journal of Information Technology & Management Information System (IJITMIS), ISSN

0976 – 6405(Print), ISSN 0976 – 6413(Online) Volume 4, Issue 3, September - December (2013), © IAEME


MONITORING AND VISUALIZING STUDENTS TRACKING DATA

ONLINE LEARNING ACTIVITIES (TRACKING IN E-LEARNING

PLATFORMS) MVSA

YAHYA AL-ASHMOERY*, ROCHDI MESSOUSSI*, YOUNESS CHAABI *,

RAJA TOUAHNI *

* Laboratory of Systems of Telecommunications and Engineering of the Decision (LSTED)

University Ibn Tofail, Faculty of Sciences, Kenitra, Morocco.

ABSTRACT

Most commercial and open source Course Management System (CMS) software does not include comprehensive access tracking and log analysis capabilities, and lacks support for many aspects specific to evaluating participation levels and analyzing interactions. CMSs do not provide tools for visually representing ongoing interactions. It is difficult and time consuming for teachers and educationalists to ascertain the number of participants, non-participants and lurkers in an ongoing discussion. This paper presents MVSA, a system for tracking student activity in online course management systems. It takes a novel approach of using Web log data generated by the CMS to help instructors become aware of what is happening in distance learning classes. Specifically, techniques from information visualization are employed to graphically render complex multidimensional student tracking data provided by the CMS. The MVSA system provides accurate tracking information through an easy-to-use interface, and offers a wide range of activity information to instructors and educational researchers.

Keywords: Web log, Log analysis, Collaborative learning, Asynchronous discussions, CSCL.

INTRODUCTION

CMSs accumulate large logs of data of student activities in on-line courses and

usually have built-in monitoring features that enable the instructors to view some statistical

data, such as a student’s first and last login, the history of pages visited, the number of

messages the student has read and posted in discussions, marks achieved in quizzes, etc.

INTERNATIONAL JOURNAL OF INFORMATION TECHNOLOGY &

MANAGEMENT INFORMATION SYSTEM (IJITMIS)

ISSN 0976 – 6405(Print) ISSN 0976 – 6413(Online)

Volume 4, Issue 3, September - December (2013), pp. 121-135

© IAEME: http://www.iaeme.com/IJITMIS.asp

Journal Impact Factor (2013): 5.2372 (Calculated by GISI)

www.jifactor.com


Instructors may use this information to monitor the students’ progress and to identify potential problems. However, tracking data is usually provided in a tabular format that is often incomprehensible, poorly organized, and difficult to follow. As a result, only a few skilled and technically advanced distance-learning instructors use Web log data. Moreover, CMSs do not provide information about the actual learning that is taking place (e.g. the level of understanding achieved by a student on a particular concept, or the concepts from the course material that students have difficulties with), although such information is very important for instructors in distance learning (Mazza, 2002) and is required to be tracked by recognized accreditors for college and university programs, such as ABET in the United States.

Many university instructors have started to use Course Management Systems (CMSs) to manage or distribute course-related materials and conduct online learning activities. Students access course materials via the CMS, and also participate in other learning activities, such as submitting homework assignments and posting discussion messages in online forums. Preliminary studies indicate that CMSs have the potential both to increase interaction beyond the classroom (Knight, Almeroth & Bimber, 2006) and to positively affect student engagement with the course materials (Harley, Henke & Maher, 2004). However, most CMSs lack comprehensive functions to track, analyze, and report students’ online learning activities within the CMS. As reported in a previous study (Hijon & Carlos, 2006), the built-in student tracking functionality is far from satisfactory. The common problem is that a CMS only provides low-level activity records and lacks higher-level aggregation capabilities. Without reports on students’ learning activity, an instructor has difficulty tailoring instruction plans to dynamically fit students’ needs.

Our research focuses on exploiting available CMS logs to provide learning activity

tracking. One possible way in which CMS log data can be used is to visualize learning

activity data via a more human-friendly graphical interface. This interface is updated in real-

time to reflect all activities, and is integrated into the original CMS. Using this graphical

interface, instructors can gain an understanding about the activities of the whole class or can

focus on a particular student. Students can use the interface to understand how their progress

compares to the whole class. Our hypothesis is that the availability of this graphical interface

can provide important insight into how students are accessing online course materials, and

this insight will be useful to both the instructor and to students.
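As a rough illustration of the higher-level aggregation discussed above, the following minimal sketch collapses low-level log rows into per-student counts and compares each student with the class average. The record layout, the action labels and the function names are assumptions made for this example only; they are not Moodle's log schema or the MVSA implementation.

```python
from collections import Counter, defaultdict

# Hypothetical low-level log records: (student_id, action) pairs.
# The action labels are illustrative and do not follow Moodle's schema.
LOG = [
    ("s1", "resource view"),
    ("s1", "forum view"),
    ("s1", "forum post"),
    ("s2", "resource view"),
    ("s2", "resource view"),
    ("s3", "forum view"),
]


def aggregate(log):
    """Collapse raw log rows into per-student counts per action type."""
    summary = defaultdict(Counter)
    for student, action in log:
        summary[student][action] += 1
    return summary


def class_average(summary, action):
    """Mean count of one action over all students that appear in the log."""
    if not summary:
        return 0.0
    return sum(counts[action] for counts in summary.values()) / len(summary)


summary = aggregate(LOG)
avg_views = class_average(summary, "resource view")
for student in sorted(summary):
    views = summary[student]["resource view"]
    print(f"{student}: {views} resource views (class average {avg_views:.2f})")
```

The same per-student table can feed either the instructor view (the whole class) or the student view (one row set against the class average).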

Our log analysis tool has been developed for the Moodle CMS (Moodle, 2013). MVSA is superior to the original Moodle log facility in several respects:

1. It connects online to e-learning platforms, i.e. Learning Management Systems (LMS) such as Moodle, and is integrated into the LMS as a plugin.

2. It maps asynchronous discussions.

3. It implements an intelligent agent that assists teachers in a collaborative work environment.

4. It builds a smart student profile.

5. It advises teachers to help them manage distance classes, reasoning about the students’ knowledge status on both an individual and a group basis.

6. It visualizes interactions in Web-based learning using complex graphs.

7. It provides aggregated and meaningful statistical reports.

8. It visualizes the results, which makes comparisons between multiple students much easier.


9. It displays the activities that a student has performed and identifies the materials the student has not yet viewed.

10. It can remind students to view materials that they have not yet downloaded.

11. It implements a visualization tool that enhances the means by which asynchronous online communications in discussion forums can be analyzed.

12. It supports evaluation procedures that can be integrated with the visualization tool and that allow comparisons between groups participating in online discussion environments.

13. It observes any sort of user, including lurkers, and finely tracks all of their communication activities on the forum, on both the server side and the client side.

An important aspect of online teaching and learning is the monitoring of student progress and tool utilization in online courses. Educational research shows that monitoring the students’ learning is an essential component of high quality education. Using the log files of learning management systems can help to determine who has been active in the course, what they did, and when they did it (Romero, Ventura & Garcia, 2007). Feedback about the status and the history of the activities in online courses can be useful to teachers, students, study program managers and administrators. For example, it can help in better understanding whether the courses provide a sound learning environment (availability and use of discussion forums, etc.) or show to what extent best practices in online learning are implemented (students provide timely responses, teachers are visible and active, etc.). Learning Management Systems provide some reporting tools that aim to monitor the usage of students and tools, but these are seldom used, mainly because it is difficult to interpret and exploit them. The obstacles to interpretation and exploitation are the following:

• Data are not aggregated following a didactical perspective;

• Certain types of usage data are not logged;

• The data that are logged may seem incomplete;

• Users are afraid that they could draw unsound inferences from some of the data.

In an attempt to overcome these difficulties, new reporting functions have been added to LMSs; for instance, Moodle now provides reporting tools which enable teachers to evaluate the activity patterns of individual students. Moreover, in the last few years researchers have begun to investigate various data mining methods which allow exploring, visualising, interpreting and analysing eLearning data, thus helping teachers to better understand and improve their eLearning practice (Romero et al., 2010; Mazza & Botturi, 2007).

USE CASE

Recently, research has focused on finding methods for developing and supporting critical thinking through the interactions that take place within asynchronous discussions, in order to achieve high quality learning. Such a goal requires tools, frameworks and methods that facilitate monitoring and/or self-reflection, and therefore self-regulation, which could be supported by the automated analysis of the complex interactions that occur:


Use case: Monitor collaboration among students.
Objective: to monitor how much the student collaborates with other students.
Indicators (two different indicators, observations and contributions):
- Observations: reading of messages (in forum) or content (in wiki and chat).
- Contributions: creation of new messages (in forum) or content (in wiki and chat).
- Contributions: sum of contributions on forum, wiki and chats over time.

Use case: Monitor teacher-student interaction.
Objective: to monitor the interactions between the teacher and individual students.
Indicators:
- Teacher view of forum
- Teacher posting to forum
- Teacher posting feedback to assignments
- Teacher grading assignments

Use case: Monitor knowledge testing.
Objective: to monitor the student’s use of their available knowledge in tests.
Indicators:
- Quiz view
- Quiz submission
- Assignment view
- Assignment submitted

Use case: Monitor information access.
Objective: to monitor the student's access to resources (file, HTML page, IMS package), assignments and quizzes.
Indicators:
- View of resource, also over time
- Submission of assignment, also over time
- Submission of quizzes, also over time

Use case: Monitor organization of learning.
Objective: to monitor how the student organizes her own learning process by planning, preparing for f2f meetings, preparing exams, reviewing learning goals, looking at and comparing performances, and reflecting about the learning statistics and outcomes as well as about the process itself.
Indicators:
- Pending resources: resources not viewed out of existing resources.
- Pending assignments: assignments not viewed (or not submitted) out of existing assignments.
- Pending quizzes: quizzes not submitted out of existing quizzes.

Use case: Monitor course activity level (for administrators only).
Objective: the focus is on the course. The administrator wants to see the level of usage of courses.
Indicators:
- Course observations: activities of users aimed at observing course content (view of resources, reading discussions, etc.).
- Course contributions: activities of users aimed at creating new course contents (adding or updating new materials, creation of quizzes, creating a new message in discussions, etc.).

Use case: Monitor teacher facilitation level (for study program managers only).
Objective: the focus is on the teacher. The study program manager wants to identify the level of activity of teachers in facilitating learning with the LMS.
Indicators:
- Teacher’s facilitation of collaboration: observations and contributions by a teacher
- Teacher’s facilitation of interaction: usage of assignments and quizzes by a teacher
- Teacher’s facilitation of information: usage of assignments and resources by a teacher

Use case: Monitor student learning level (for study program managers only).
Objective: the focus is on students (similar to the previous one).
Indicators:
- Students learning by collaboration: observations and contributions by a student
- Students learning by interaction: usage of assignments and quizzes by a student
- Students learning by information: usage of assignments and resources by a student

Use case: Monitor course learning level (for study program managers only).
Objective: in this use case the focus is on the course and on identifying the level of facilitation by teachers and the level of learning by students.
Indicators:
- Sum of Teacher’s facilitation of collaboration and Students learning by collaboration
- Sum of Teacher’s facilitation of interaction and Students learning by interaction
- Sum of Teacher’s facilitation of information and Students learning by information
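The "pending" indicators of the organization-of-learning use case are set differences between what exists in the course and what the student has already viewed or submitted. The sketch below shows that computation; the item names and the `pending` helper are hypothetical and only illustrate the idea.

```python
def pending(existing, done):
    """Items that exist in the course but that the student has not completed."""
    return sorted(set(existing) - set(done))


# Hypothetical course content and one student's tracked activity.
resources = ["syllabus.pdf", "week1.html", "week2.html"]
quizzes = ["quiz1", "quiz2"]
viewed_resources = ["syllabus.pdf", "week1.html"]
submitted_quizzes = ["quiz1", "quiz2"]

pending_resources = pending(resources, viewed_resources)
pending_quizzes = pending(quizzes, submitted_quizzes)

print(f"Pending resources: {len(pending_resources)} of {len(resources)}", pending_resources)
print(f"Pending quizzes: {len(pending_quizzes)} of {len(quizzes)}", pending_quizzes)
```

A non-empty pending list is also the natural trigger for the reminder feature, since it names exactly the materials the student has not yet opened.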


THE COLLABORATIVE ENVIRONMENT

Our system is based on some basic principles related to the CSCL (Computer

Supported Collaborative Learning) paradigm (Koschmann, 1996). These principles are:

(1) joint construction of a problem solution

(2) coordination of group members for planning the tasks,

(3) semi-structuration of the interaction mechanisms

(4) focus on both the learning process and the learning result, and therefore, explicit

representation of the production and interaction processes

Figure: The four levels of the architecture of MVSA

• Configuration level: Once the teacher(s) have planned a collaborative learning experience, on this level they can automatically configure and install the environment needed to support the activities of groups of students working together. The environment will provide the resources needed for carrying out joint tasks. In the configuration level, teachers specify tasks, resources and groups, either by starting from scratch or by reusing generic components.

• Performance level: This is the level where a group of students can carry out collaborative activities with the support of the system. Activities may involve a variety of tasks with associated shared workspaces. Collaboration is conversation-based. The system manages the users’ interventions, named contributions, supporting the co-construction of a solution in a collaborative argumentative discussion process. All the events related to each group and experience are recorded. They can be analyzed and reused for different purposes in the analysis and organization levels.


• Analysis level: In this level we analyze the users’ interactions and make interventions in order to improve them. We offer tools for quantitative and qualitative analysis for observing and analyzing the process of solving a task in the performance level. In the analysis level we propose a way of observing and valuing the users’ attitudes when they are working together. We offer the possibility of intervention by sending messages to the group or to individuals explaining how to improve different points of their work. Finally, we record the messages and the moment when we make each intervention, and analyze the improvements.

• Organization level: Here we gather, select and store the results and the processes of collaborative learning experiences. The information is structured and valued for searching and reusing purposes. This information is stored as cases forming an Organizational Learning Memory. We offer functionalities for defining, searching and collecting different cases, and for defining links to work material in the configuration level for related tasks. For more information about this level.

MVSA ARCHITECTURE


MVSA INTERFACE

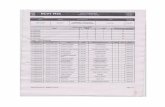

User Activity

The user activity view provides a rich set of information about how often and how intensely students interacted with Moodle. Figure 3 shows the student activity statistics for the MS110A course. The statistics show six fields of data: total number of views, total number of sessions, total online time, number of viewed resources, number of initial threads posted by the user, and the number of follow-up messages posted. Normally, student names are provided in the instructor view; however, to protect student privacy, student names have been removed from Figure 3. The first line of the table shows the average value for all students for each variable. The average view count is 357, and the maximum is 776. The average number of sessions is 60, and the maximum is 130. The average online time is 7 hours 15 minutes, while the maximum online time is 16 hours 32 minutes. In terms of the number of visited resources, only two students had viewed all 52 resources posted on the course page; the average number of viewed resources is 36. Our preliminary analysis shows that there is a positive correlation between a student’s forum activity and the overall course grade: students who frequently viewed the forums performed better on course assignments and exams. In future work, we plan to identify which online activities predict academic performance.
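The text does not say how sessions and online time are derived from the log. One common approach, shown here purely as an assumption, is to start a new session whenever the gap between two consecutive requests exceeds an inactivity threshold. The 30-minute threshold and all names below are illustrative choices, not values taken from MVSA.

```python
from datetime import datetime, timedelta

# Assumed inactivity threshold; the paper does not state the value it uses.
SESSION_GAP = timedelta(minutes=30)


def sessions(timestamps, gap=SESSION_GAP):
    """Split one student's request times into (start, end) sessions."""
    result = []
    for ts in sorted(timestamps):
        if result and ts - result[-1][1] <= gap:
            # Still within the same session: extend its end time.
            result[-1] = (result[-1][0], ts)
        else:
            # Gap too large (or first hit): open a new session.
            result.append((ts, ts))
    return result


def online_time(session_list):
    """Total time between the first and last hit of every session."""
    return sum((end - start for start, end in session_list), timedelta())


# Hypothetical hits of one student on one day.
hits = [
    datetime(2013, 10, 1, 9, 0),
    datetime(2013, 10, 1, 9, 10),
    datetime(2013, 10, 1, 9, 25),
    datetime(2013, 10, 1, 14, 0),
    datetime(2013, 10, 1, 14, 5),
]

student_sessions = sessions(hits)
print("views:", len(hits))
print("sessions:", len(student_sessions))
print("online time:", online_time(student_sessions))
```

With this rule a session made of a single hit contributes zero online time, so the totals are a lower bound on the time a student actually spent on the platform.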


Discussion in forum

Discussion plot example: visualization of discussion threads focusing on the students who have initiated the threads.


Figure 2: User activity


Summary of a user's activities

This indicator features the statistical data related to four different activities of a user on a discussion forum. The main objective of such an indicator is to provide an overview of the following activities:

• Reading messages

• Viewing course materials or assignments that are posted in the forum

• Posting new messages (or starting new discussion threads)

• Replying to messages

Data indicators for an overview of user activity on a discussion forum
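The four-activity overview can be sketched as a simple counter over a user's forum events. The event labels and the rule that separates participants, lurkers and non-participants are assumptions introduced for this example; the paper does not define them formally.

```python
from collections import Counter

# The four forum activities covered by the indicator (labels are illustrative).
ACTIVITIES = ("read", "view_material", "post_thread", "reply")


def forum_summary(events):
    """Count how often a user performed each of the four forum activities."""
    counts = Counter(events)
    return {name: counts[name] for name in ACTIVITIES}


def role(summary):
    """Assumed rule: anyone who posts is a participant, anyone who only
    reads or views is a lurker, and everyone else is a non-participant."""
    if summary["post_thread"] + summary["reply"] > 0:
        return "participant"
    if summary["read"] + summary["view_material"] > 0:
        return "lurker"
    return "non-participant"


# Hypothetical forum events per user.
events_by_user = {
    "alice": ["read", "read", "post_thread", "reply"],
    "bob": ["read", "view_material", "read"],
    "carol": [],
}

for user, events in events_by_user.items():
    summary = forum_summary(events)
    print(user, summary, role(summary))
```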

MAPPING ASYNCHRONOUS DISCUSSIONS SYSTEM

• Online Participation and Interaction

• Visualization mapping, which could help them understand the flow of conversation


• Visualization of Interaction, Learning or lurking?
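Mapping an asynchronous discussion usually starts from a graph of who replied to whom. The sketch below derives such a graph, and the threads grouped by their initiator, from a flat list of posts. The `(post_id, author, parent_id)` layout and the function names are assumptions for illustration, not the MVSA data model.

```python
from collections import Counter, defaultdict

# Hypothetical forum posts: (post_id, author, parent_id); parent_id is None
# for a post that starts a new thread.
POSTS = [
    (1, "alice", None),
    (2, "bob", 1),
    (3, "carol", 1),
    (4, "alice", 2),
    (5, "bob", None),
    (6, "carol", 5),
]


def reply_edges(posts):
    """Count directed edges (replier, author replied to)."""
    author_of = {post_id: author for post_id, author, _ in posts}
    edges = Counter()
    for _, author, parent in posts:
        if parent is not None:
            edges[(author, author_of[parent])] += 1
    return edges


def threads_by_initiator(posts):
    """Group thread-starting posts by the student who initiated them."""
    started = defaultdict(list)
    for post_id, author, parent in posts:
        if parent is None:
            started[author].append(post_id)
    return dict(started)


edges = reply_edges(POSTS)
initiators = threads_by_initiator(POSTS)
for (source, target), weight in sorted(edges.items()):
    print(f"{source} -> {target}: {weight}")
print(initiators)
```

The weighted edges can be handed to any graph drawing tool; a user who appears in the read logs but in no edge and no thread is a candidate lurker.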


ADVICE GENERATION

We have discussed the need for a computational framework for advising teachers to help them manage distance classes. Such a framework is required to reason about the students’ knowledge status, on both an individual and a group basis, and to decide on appropriate advice:

• Advice concerning individual students performance

• Advice concerning each group performance

• Advice concerning the whole class performance

1. Generating Type-1 advice

Type 1-1 Advice is used to inform a facilitator about the problems related to the student’s knowledge status. As mentioned earlier, the system measures the student's knowledge status regarding each domain concept as “Completely Learned”, “Learned”, or “Unlearned”. Type 1-1 advice will be generated when the student knowledge model shows concepts with “Unlearned” or “Learned” understanding levels. In this case, the Advice Generator (AG) should investigate the reason(s) that led to this problem, which may include:

• The student has not completely read and worked on the learning objects and assessment quizzes related to the concept.

• The student has not completely mastered the related prerequisite concepts.

• The student has not participated in the communication activities related to the concept.

To specifically investigate the possible reason(s), it is necessary to perform a more detailed analysis using the information available in the student behavior model and the student knowledge model.
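The Type 1-1 rule described above can be sketched as follows. The record fields standing in for the student knowledge model and the student behavior model are hypothetical, as is the `type_1_1_advice` function; the sketch only shows the trigger condition and the three candidate reasons listed in the text.

```python
def type_1_1_advice(student, knowledge_model):
    """Return one advice entry per concept that is not 'Completely Learned'."""
    advice = []
    for concept, record in knowledge_model.items():
        if record["status"] == "Completely Learned":
            continue
        reasons = []
        if not record["materials_completed"]:
            reasons.append("learning objects or quizzes not completed")
        if not record["prerequisites_mastered"]:
            reasons.append("prerequisite concepts not mastered")
        if not record["participated_in_communication"]:
            reasons.append("no participation in related communication activities")
        advice.append({
            "student": student,
            "concept": concept,
            "status": record["status"],
            "possible_reasons": reasons,
        })
    return advice


def record(status, materials, prerequisites, communication):
    """Helper building one hypothetical per-concept record."""
    return {
        "status": status,
        "materials_completed": materials,
        "prerequisites_mastered": prerequisites,
        "participated_in_communication": communication,
    }


# Hypothetical knowledge and behavior data for one student.
model = {
    "variables": record("Completely Learned", True, True, True),
    "loops": record("Learned", True, True, False),
    "recursion": record("Unlearned", False, False, False),
}

advice = type_1_1_advice("student-17", model)
for item in advice:
    print(item)
```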

Type 1-2 Advice is used to inform a facilitator about the student’s progress with course material related to a certain concept. The Advice Generator (AG) will use the course calendar and the student behaviour model (which is part of the student model) to determine whether the student is lagging behind the course-studying plan. The AG will deliver advice to the facilitator with information such as the student name, the concepts with which the student is delayed, and the delay time (time lag) for each concept. The facilitator may send this information to the student or take the necessary actions, depending on the delay times and the student's case. Besides helping facilitators to be more knowledgeable about their distant students, this type of advice is useful in making students feel that they are being supervised by their distant teachers.
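A minimal sketch of the Type 1-2 delay computation is given below, assuming that the course calendar maps each concept to a planned completion date and that the behaviour model yields the set of concepts the student has already completed. Both structures, the function name and the sample dates are illustrative assumptions.

```python
from datetime import date


def type_1_2_advice(student, calendar, completed, today):
    """Report concepts whose planned date has passed without completion,
    together with the time lag in days."""
    delays = {}
    for concept, planned in calendar.items():
        if concept not in completed and planned < today:
            delays[concept] = (today - planned).days
    return {"student": student, "delays_in_days": delays}


# Hypothetical course calendar and student progress.
calendar = {
    "loops": date(2013, 10, 1),
    "functions": date(2013, 10, 8),
    "recursion": date(2013, 10, 15),
}
completed = {"loops"}

report = type_1_2_advice("student-17", calendar, completed, today=date(2013, 10, 12))
print(report)
```

Here "functions" is four days overdue, while "recursion" is not reported because its planned date has not yet passed.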

Type 1-3 Advice directs more attention to the students who are at unsatisfactory learning levels. It is used to focus on the students evaluated as “Weak”. The AG will classify those “Weak” students according to their communication levels (weak and uncommunicative, weak and normally communicative, weak and highly communicative). The facilitator could take some action, e.g. talking directly to the students, creating special online chat sessions to discuss the reasons for their lagging behind their peers and to encourage them, or directing the students to contact their more knowledgeable peers.

Type 1-4 Advice is used, in contrast to Type 1-3 advice, to inform the facilitator about the students with advanced learning levels. In this case, the AG looks for students evaluated as “Excellent”. As in Type 1-3 advice, the AG will classify the “Excellent” students according to their communication levels. The facilitator may use this information to encourage those students to maintain their general learning levels and/or to direct them to help their “Weak” peers by talking to them through mail or discussion forums.

Type 1-5 Advice is generated to inform the facilitator about the students who had not started working with the course by the time of the advice generation session. If this type of advice is generated for a student, then all other Type-1 advice for that student will be suppressed.
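The interplay of Type 1-3, Type 1-4 and Type 1-5 advice can be summarized in a few lines: students who have not started receive only Type 1-5 advice, and otherwise "Weak" and "Excellent" students are labelled with their communication level. The field names and labels below are assumptions made for this sketch.

```python
def type_1_level_advice(student):
    """Return the level-related Type-1 advice for one student record."""
    if not student["started"]:
        # Type 1-5 suppresses every other Type-1 advice for this student.
        return ["Type 1-5: has not started working with the course"]
    advice = []
    if student["level"] == "Weak":
        advice.append(f"Type 1-3: Weak and {student['communication']}")
    elif student["level"] == "Excellent":
        advice.append(f"Type 1-4: Excellent and {student['communication']}")
    return advice


# Hypothetical student records.
students = [
    {"name": "s1", "started": True, "level": "Weak", "communication": "uncommunicative"},
    {"name": "s2", "started": True, "level": "Excellent", "communication": "highly communicative"},
    {"name": "s3", "started": False, "level": "Weak", "communication": "uncommunicative"},
    {"name": "s4", "started": True, "level": "Average", "communication": "normally communicative"},
]

for s in students:
    print(s["name"], type_1_level_advice(s))
```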

2. Generating Type-2 advice

Type-2 advice is concerned with a group of students. The learning level of each concept and the general learning level of a set of concepts will be monitored to identify problematic situations concerning the group’s learning. This type of advice enables the facilitators to know about common problems that face a group and to correlate these problems with the group characteristics. In addition, the facilitator could try to solve the highlighted problems by providing the students with appropriate feedback and guiding information. The following subtypes are considered:

Type 2-1 Advice is used to inform a facilitator about problems related to the group’s knowledge status. This advice subtype will be generated when the group knowledge model shows concepts with “Unlearned” or “Learned” levels. Similar to the actions performed in Type 1-1 advice, the AG searches for reason(s) that may lead to this situation and presents this information to the facilitator together with a recommendation of some actions that may be taken.

Type 2-2 Advice is used to inform the facilitators about problematic situations related to the groups' learning levels. The facilitator’s attention is directed to groups that have unsatisfactory learning levels. Type 2-2 advice, which concerns groups, is similar to Type 1-3 advice, which concerns individual students. It is used to highlight the “Weak” groups to the facilitator. The AG will classify those “Weak” groups according to their communication levels (weak and uncommunicative, weak and normally communicative, and weak and highly communicative). The facilitator can take some actions, such as talking directly to the group members, creating a special discussion forum or chat sessions for the group, or guiding group members to “Excellent” peer students, especially from the same group.

Type 2-3 Advice is used to inform the facilitator about groups with satisfactory learning levels. In this type of advice, the AG should look for the “Excellent” groups. As in Type 2-2 advice, the AG will classify “Excellent” groups according to their communication levels. This information will be highlighted to the facilitator, who may decide to encourage students in these groups to maintain their general learning levels and/or to give help to their “Weak” peers via e-mail, chat, or by posting on the discussion forums.

3. Generating Type-3 advice

Type-3 advice is concerned with the status and behaviour of the whole class. Advice of this type does not automatically result in subsequent recommended advice or feedback to individual students; instead, it is primarily used to advise and guide course facilitators while they are managing their distance classes. The overall class learning level will be monitored according to each concept's learning level. This type of advice is important to the facilitator because it gives an overview of the class and highlights the common problems. The facilitator may try to solve these problems during the course period by taking appropriate educational actions. Furthermore, by analyzing the generated information and the reasons behind common class problems, the facilitator may consider how to avoid the occurrence of these problems in the following courses.

Type 3-1 Advice is used to inform the facilitator about problems related to the knowledge status of the whole class. This advice will be generated when the AG detects concepts with “Unlearned” or “Learned” levels in the class knowledge model. The AG will search for possible reason(s) that might have led to this situation and will notify the facilitator about it. The course facilitator may then make, according to the situation, appropriate decisions and pass them to all students in the class. For example, if the class knowledge model indicates that a concept c is “Unlearned” by the class because most students have not studied the learning objects related to c, the AG will highlight this situation to the facilitator. In this case the facilitator may encourage the students to start studying the learning objects related to c.

Type 3-2 Advice is generated to inform the facilitator about excellent students (for example, the top three) and weak students (for example, the bottom three) relative to the whole class during each of the advice generating sessions.

Type 3-3 Advice is generated to inform the facilitator about the most and least communicative students relative to the whole class during each of the advice generating sessions.

Type 3-4 Advice is generated to inform the facilitator about the most and least active students relative to the whole class during each of the advice generating sessions. Students’ activity is measured by the aggregate number of interactions (hits) made by the student in different sessions. Information from this advice can be compared with information from Type 3-2 advice to correlate the students’ activity with their general learning levels.
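Type 3-2 and Type 3-4 advice both reduce to ranking the class on one measure and reporting the extremes. The sketch below does this for hypothetical grades and hit counts, and then intersects the two top lists as a crude way of relating activity to learning level. All data values and the `top_and_bottom` helper are invented for illustration.

```python
def top_and_bottom(scores, n=3):
    """Return (top n, bottom n) student ids; the bottom list starts with
    the lowest score. Assumes n is positive and the class has at least
    2 * n students, otherwise the two lists overlap."""
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:n], ranked[-n:][::-1]


# Hypothetical general learning levels (grades) and aggregate hit counts.
grades = {"a": 91, "b": 78, "c": 55, "d": 83, "e": 40, "f": 67, "g": 72}
hits = {"a": 310, "b": 120, "c": 95, "d": 400, "e": 20, "f": 150, "g": 260}

excellent, weak = top_and_bottom(grades)          # Type 3-2
most_active, least_active = top_and_bottom(hits)  # Type 3-4

print("excellent:", excellent, "weak:", weak)
print("most active:", most_active, "least active:", least_active)
print("excellent and most active:", sorted(set(excellent) & set(most_active)))
```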

MOODLE IN A LEARNING ENVIRONMENT

Moodle is an open source course management system (Moodle, 2013) designed for managing flexible communities of learners (Lengyel et al., 2007), based on the principles of social pedagogy (Moodle, 2011). It is a software tool that creates communication and collaboration channels. Moodle has two attractive aspects. First, it is extensible, so a developer, researcher or educationalist can contribute to its development and add the modules they require. Second, Moodle is customizable: there are many options that can be adjusted to suit the needs of the user.

Blocks in Moodle

Blocks are “boxes” that appear on both sides of a Moodle page when it is displayed in a browser (Alier et al., 2007). Moodle is highly adaptable, driven by the use of these blocks, which can be chosen and arranged as desired. The Moodle community has created many different add-on blocks to choose from. All courses in Moodle contain blocks, with the center block displaying the course content. Blocks can be added or removed to customize the look and feel of the site.

CONCLUSION

This research designed and implemented the MVSA application to track students’ online learning activities based on CMS logs. We then visualize the results with a simple graphical interface. MVSA is also able to automatically send feedback and reminder emails based on students’ activity histories. We have integrated the MVSA module into the Moodle CMS and made some small interface changes to Moodle. Intuitively, the presence of the popularity bars should encourage students to check the course materials more frequently and promptly if they see that most of their classmates have already done so. Our hypothesis is that the availability of MVSA statistics will positively affect both how an instructor adapts the course and how students learn. Monitoring student learning activity is an essential component of high quality education, and is one of the major predictors of effective teaching (Cotton, 1998). Research has shown that letting a learner know his or her ranking in the group results in more effective learning. MVSA provides a possible means for students and the instructor to receive this feedback. In future work, we plan to evaluate more comprehensively the impact of MVSA statistics on student academic performance.

REFERENCES

[1] SujanaJyothi, Claire McAvinia*, John Keating (2012). "A visualisation tool to aid

exploration of students’ interactions in asynchronous online communication",

Computers & Education, Volume 58, Issue 1, January 2012, Pages 30-42.

[2] Abik, M. & Ajhoun, R. (2009). “Normalization and Personalization of Learning

Situation: NPLS”, International Journal of Emerging Technologies in Learning

(iJET), vol. 4(2), pp. 4-10.

[3] Alvaro R.F. & Joanne B.L. (2007). “Interaction Visualization in Web-based

Learning using iGraphs”. Proceedings of the Eighteenth ACM Conference on

Hypertext and Hypermedia, Hypertext 2007. pp. 45-46.

[4] Bratitsis T. & Dimitracopoulou A. (2006). “Monitoring and Analyzing Group

Interactions in Asynchronous Discussions with the DIAS System”. CRIWG 2006:

pp. 54-61.

[5] Mazza, R. & Dimitrova, V. (2007). “CourseVis: A Graphical Student Monitoring Tool

for Facilitating Instructors in Web-Based Distance Courses”. International Journal of

Human-Computer Studies (IJHCS), vol. 65(2), pp. 125-139. Elsevier Ltd.

[6] Riccardo M. & Dimitrova V. (2003). “CourseVis: Externalising student information to

facilitate instructors in distance learning”. Proceedings of Artificial Intelligence in

Education (AIED), pp. 279-286.

[8] Mazza, R., & Botturi, L. (2007). “Monitoring an online course with the GISMO tool: a

case study”. Journal of Interactive Learning Research, vol. 18(2), pp. 251-265.

[10] May M., George S. & Prevot P. (2007). “Tracking, Analyzing and Visualizing

Learners’ Activities on Discussions Forums”. Proceedings of the 6th IASTED

International Conference on Web-based Education (WBE). France, pp. 649-656.

[11] Bratitsis, T., & Dimitracopoulou, A. (2008). Interpretation issues in monitoring and

analyzing group interactions in asynchronous discussions. International Journal of

e-Collaboration (IJeC), 4(1), 20–40.

[12] Mazza, R. & Dimitrova, V. (2004). “Visualizing Student Tracking Data to Support

Instructors in Web-Based Distance Education”. In Proceedings of the 13th International

Conference on World Wide Web, pp. 154-161.

[13] Moodle - A Free, Open Source Course Management System for Online Learning

(2011). [Online: Available at: http://moodle.org]

[14] Moore, M. G. (1989). “Editorial: Three types of interaction”. The American Journal

of Distance Education, vol.3 (2), pp. 1-6.

[15] Schellens, T. & Valcke, M. (2005). “Collaborative learning in asynchronous

discussion groups: What about the impact on cognitive processing?” Computers in

Human Behavior, vol.21, pp. 957-975.

[16] Scheuermann, Larsson & Toto (2003). “Learning in virtual environments –

facilitating interaction and moderation”. CSCL 2003 – Proceedings of International

Conference of Computer-supported Collaborative Learning, Bergen.

[17] Schrire, S. (2004). “Interaction and cognition in asynchronous computer

conferencing”. Instructional Science. vol. 32. pp. 475–502.

[18] Schrire, S. (2006). “Knowledge building in asynchronous discussion groups: going

beyond quantitative analysis”. Computers & Education, vol.46, pp.49-70.

[19] Schults, N., & Beach, B. (2004). “From Lurkers to Posters”. Australian National

Training Authority.

[20] Senin P. (2008). “Dynamic Time Warping Algorithm Review”, Information and

Computer Science Department, University of Hawaii, Honolulu.

[21] Beaudoin, M. F. (2002). “Learning or lurking? Tracking the “invisible” online

student”. Internet and Higher Education, vol 5, pp. 147-155.

[22] Günes, I., Akçayb, M., & Dinçera, G. (2010). "Log analyser programs for distance

education systems". Procedia Social and Behavioral Sciences, Volume: 9, Elsevier,

108-1213.

[23] Jong, B., Chan, T. & Wu, Y. (2007). "Learning Log Explorer in E-Learning

Diagnosis". IEEE Transactions on Education. Volume: 50, Issue: 3, IEEE, 216-228.

[24] Zhang, H., Almeroth, K., Knight, A., Bulger, M. & Mayer, R. (2007). "Moodog:

Tracking students' Online Learning Activities". In C. Montgomerie & J. Seale (Eds.),

Proceedings of World Conference on Educational Multimedia, Hypermedia and

Telecommunications 2007, 4415-4422. Chesapeake, VA: AACE.

[25] Vibha B. Lahane and Prof. Santoshkumar V. Chobe, “Multimode Network Based

Efficient and Scalable Learning of Collective Behavior: A Survey”, International

Journal of Computer Engineering & Technology (IJCET), Volume 4, Issue 1, 2013,

pp. 313 - 317, ISSN Print: 0976 – 6367, ISSN Online: 0976 – 6375.

[26] Maria Dominic, Anthony Philomen Raj, Sagayaraj Francis and Saul Nicholas,

“A Study on Users of Moodle Through Sarasin Model”, International Journal of

Computer Engineering & Technology (IJCET), Volume 4, Issue 1, 2013, pp. 71 - 79,

ISSN Print: 0976 – 6367, ISSN Online: 0976 – 6375.

[27] Nathan D’lima, Anirudh Prabhu, Jaison Joseph and Shamsuddin S. Khan, “Novel

Approach in E-Learning To Imbibe Environmental Awareness”, International Journal

of Computer Engineering & Technology (IJCET), Volume 4, Issue 2, 2013,

pp. 166 - 171, ISSN Print: 0976 – 6367, ISSN Online: 0976 – 6375.
