2016 L26 MEA716 4 19 DA1 - Nc State University · 2016. 5. 2. · (Panasonic Weather Services) GL:...

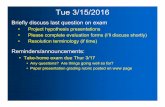

Tue 4/19/2016

Data Assimilation

• Notes and optional practice assignment

Reminders/announcements:

• MP experiment assignment (due Thursday)

• Final presentations: 28 April, 1-4 pm (final exam period)

• Schedule optional meetings with me if feedback or assistance is needed

• Be sure to emphasize the analysis aspect!

• Handout/assignment provides additional guidance for content and evaluation

• Extra credit option: YouTube presentation of your project! CamStudio (free)

https://www.youtube.com/watch?v=7k9rE3PfT0k&nohtml5=False

• Read short “scenario” for Thursday (will discuss in class)

Data Assimilation

Objectives:

- Outline basic approach building from simple example

- Introduce central concepts and terminology

- Clarify differences between main methods: 3DVAR, 4DVAR, Kalman filter, ensemble Kalman filter

- Increase appreciation for our reliance upon DA in modeling and observational work

Why study DA?

• Everyone using gridded analyses for any study or for model IC/LBC should know from where it came

• Quality of (re)analyses is determined by quality of DA system used to create them (Reanalysis 1 and 2, CFSR, MERRA, ERA, NARR, GFS, NAM, etc.)

• Those who have DA skills will have no problem finding a job (see next slides)!

• Offerings in MEAS are too limited in this area

Recent DA job opportunity

Job perspective from colleague Neil Jacobs (Panasonic Weather Services)

GL: My sense is that there is a shortage of expertise in this area…

An extreme shortage. The problems are 1) even people with met backgrounds are lacking some of the more intensive math knowledge, and 2) you just can’t find people who know Fortran. We are always looking…

Anyway, there are literally 6-7 people I know in the US who know this stuff well… Kleist, Derber, Whitaker, Hamill, etc. They are all largely self-taught. There are those who are great at the theory like Eugenia, but you need someone with the complete package, and that includes both math theory, as well as a software engineer’s level of Fortran knowledge.

Starting salaries for someone with the complete package just out of grad school (Ph.D.) would easily go 150k in NC and 300k+ on the west coast. Granted, there are probably only 15 or so job opportunities out there, but 60% are unfilled.

Job perspective from colleague Neil Jacobs (Panasonic Weather Services)

GL: Also, what advice would you offer to a group of MS and PhD atmospheric scientists who will be entering the job market with regard to modeling, programming, and DA skills?

Learn as much Fortran as possible from a software engineering side versus science side. Science based Fortran does solve problems, but running operational code needs someone who can write very logical *efficient* code, and not just code that gets the right answer.

Having experience with HPC is a big deal. Parallel processing will always be used over single threaded Fortran jobs.

Don’t be afraid to look outside of just meteorology/DA positions. Those types of math and programming skills are needed everywhere from landing probes on Mars to power trading in spot markets. My point is if you know the math and can write efficient code, you’ll be assured a very well-paying job. There is a general lack of qualified people in many different markets, so having a good foundation can set you up perfectly to go in many directions.

Job perspective from colleague Peter Neilley (IBM/The Weather Company)

Also, what advice would you offer…

I share your intuition that there is not a proper balance in qualified DA talent vs. NWP talent in our field to meet the needs of our science. It's hard to quantify that imbalance, but something that indirectly speaks to it is the percentage of computer time that major centers spend on DA vs the forward models. I believe ECMWF is roughly 50-50 on DA vs Model while I think NOAA is closer to 30-70, as is the UKMO. These numbers speak to the importance of DA, which indirectly speaks to the need for scientific talent to support the system.

That said, there are two general classes of NWP jobs, but generally just 1 type of DA job. NWP jobs are generally either model development or model use (e.g. for research/diagnostic/phenomena study purposes). The latter doesn't need to know or in some cases well understand the model. But on the DA side, there generally is just the development kind of job. There isn't (as much of) an equivalent of the diagnostic NWP position for DA.

Job perspective from colleague Peter Neilley (IBM/The Weather Company)

Also, what advice would you offer…

Maybe if I'm in Raleigh one day I could give the students a brown bag on my perspective of success in the enterprise, and in particular the private sector. But my fundamental recommendation to students these days is to develop a hybrid of talents. The quote I like to use is "find your second passion", the first passion being meteorology of course. A second passion is what will make a student stand out in a subdiscipline and if they are strong there it will make them very employable.

Examples of second passions are software development, societal applications (e.g. financial markets, risk management, communication, renewables, etc.), teaching, etc. Perhaps NWP/DA development could be considered a second passion too. The point is being just a meteorologist is generally not sufficient anymore.

Updated graph: Courtesy Dr. Adrian Simmons, ECMWF (July 2010)

ECMWF 500-mb anomaly correlation

Data Assimilation

1.) Introduction

2.) Older Empirical Techniques

a.) Successive correction methods (SCM)

b.) Nudging

3.) Least Squares methods

• Optimum Interpolation (OI)

• Variational (cost function) approaches

– 3DVar

– 4DVar

• Kalman Filtering

4.) Dynamical balancing of initial conditions

5.) Observational data QC

Data Assimilation (DA)

• Manual gridding of data used in initial NWP efforts

• Richardson (1922) and Charney et al. (1950) undertook time-consuming manual interpolation procedures

• Immediately recognized as not acceptable

Data Assimilation (DA)

• Talagrand (1997) defined DA as

– “Using all the available information to determine as accurately as possible the state of the atmospheric (or oceanic) flow.”

• “Available information” includes much more than observations: dynamical relations between variables, error statistics, climatology, etc.

• Consider also the value of information at different times and places from analysis domain

• Info from one field is related to other fields (e.g., wind, geopotential height)

Data Assimilation

• There are not enough observations at a given time to initialize a primitive equation model

• Data density is non-uniform

• Many observed variables are not dependent variables in PE model (e.g., satellite radiance, radar reflectivity)

• Clear from start that “first guess” needed in model initialization (aka “background” or “prior”)

• Initially, climatology, or combination of climo and short-term forecast used. Now, short-term forecast used

Data Assimilation

• Even in Richardson’s first NWP forecast, raw observations alone could not be used to initialize a successful numerical forecast (as Lynch demonstrated)

• Must modify data in dynamically consistent manner to provide a valid initial condition

• Traditionally, “Data Assimilation” involves two facets:

– (i) Objective analysis (OA) – transfer of irregular observations to a grid, with quality control (use 1st guess or background field)

– (ii) Data initialization – objective analysis contains noise that would result in large, spurious gravity waves, must filter, balance

• Modern DA essentially combines these steps

DA flow diagram (after Kalnay Fig. 5.1.2a)

Observations (+/- 3 h) and background or first guess → Global analysis (statistical interpolation and balancing) → Initial conditions → Global forecast model → Operational forecasts

Global forecast model → 6-h forecast → Background or first guess for the next analysis

Model is mechanism that propagates info in time & space

E.g., model can transmit info from data rich to data poor areas

4DDA

Over oceans, analysis consists largely of

Asynoptic data (ship/buoy reports from all hours, satellite data, aircraft data)

Earlier model short-term forecasts

These sources are difficult to build into objective analyses

Major operational centers have combined OA and initialization into a continuous cycle of data assimilation (e.g., 4DVAR at ECMWF)

3.) Least Squares Methods

Results from the least squares method carry over to more complex methods, so introduce first

Start with simplest example possible:

Two independent temperature observations, T1 & T2; assume instruments unbiased (errors are random, not systematic)

(whiteboard, starting with equation #1 thru #11)

3.) Least Squares - variational

For optimum interpolation method, minimized least-square error with respect to weights

For variational methods: All information, weighted by their statistical error characteristics, used to derive cost function

Cost function is minimized to yield analysis that is most likely estimate of the true atmospheric state at a given time

3. Least Squares: variational

Cost function (from Kalnay 2003): 2 temperature obs, T1 & T2, from different, independent data sources with normally distributed errors & standard deviations σ1 and σ2

We can define the cost function J as

$$J(T) = \frac{1}{2}\left[\frac{(T - T_1)^2}{\sigma_1^2} + \frac{(T - T_2)^2}{\sigma_2^2}\right] \qquad (12)$$

The cost function is clearly related to the square of difference between the actual temperature T and the 2 observations

How can we minimize J(T)?

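Variational approach

Gaussian statistics for normal distributions: Minimizing J(T) yields an expression for maximum likelihood of T in terms of observations and their error statistics

If we take $\partial J / \partial T = 0$, let $T_a$ be the “maximum likelihood” value, and solve:

$$\frac{\partial J}{\partial T} = \frac{\partial}{\partial T}\left\{\frac{1}{2}\left[\frac{(T - T_1)^2}{\sigma_1^2} + \frac{(T - T_2)^2}{\sigma_2^2}\right]\right\} = 0$$

$$T_a = \frac{\sigma_2^2}{\sigma_1^2 + \sigma_2^2}\,T_1 + \frac{\sigma_1^2}{\sigma_1^2 + \sigma_2^2}\,T_2$$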
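The intermediate algebra between the derivative condition and the expression for the maximum likelihood value can be written out as follows. This is a worked step added for clarity, using the same symbols as the cost function (12), with σ1 and σ2 the observation error standard deviations:

$$\frac{T_a - T_1}{\sigma_1^2} + \frac{T_a - T_2}{\sigma_2^2} = 0
\;\Rightarrow\;
T_a\left(\frac{1}{\sigma_1^2} + \frac{1}{\sigma_2^2}\right) = \frac{T_1}{\sigma_1^2} + \frac{T_2}{\sigma_2^2}
\;\Rightarrow\;
T_a = \frac{\sigma_2^2\,T_1 + \sigma_1^2\,T_2}{\sigma_1^2 + \sigma_2^2}$$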

Least squares: Variational

Does this make sense, physically?

$$T_a = \frac{\sigma_2^2}{\sigma_1^2 + \sigma_2^2}\,T_1 + \frac{\sigma_1^2}{\sigma_1^2 + \sigma_2^2}\,T_2$$

Yes, an observation with a small error variance (reliable) is weighted more heavily than one with a large error variance

In this way, construct the best possible analysis using all available data and knowledge of data error characteristics
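A minimal numerical sketch of the two-observation case may help here. The function names and the temperature and error values are illustrative assumptions, not taken from the lecture; the code only evaluates the weighted average and checks that it gives a lower cost than nearby values.

```python
def combine_two_obs(t1, sigma1, t2, sigma2):
    """Maximum likelihood estimate from two unbiased, independent observations."""
    var1, var2 = sigma1**2, sigma2**2
    w1 = var2 / (var1 + var2)   # weight on T1 grows as observation 2 gets less reliable
    w2 = var1 / (var1 + var2)   # weight on T2 grows as observation 1 gets less reliable
    return w1 * t1 + w2 * t2


def cost(t, t1, sigma1, t2, sigma2):
    """Cost function J(T) of eq. (12)."""
    return 0.5 * ((t - t1)**2 / sigma1**2 + (t - t2)**2 / sigma2**2)


# Illustrative values only: observation 1 is more reliable than observation 2
t1, sigma1 = 20.0, 1.0
t2, sigma2 = 22.0, 2.0

t_a = combine_two_obs(t1, sigma1, t2, sigma2)
print(f"T_a = {t_a:.2f}")
print(f"J(T_a) = {cost(t_a, t1, sigma1, t2, sigma2):.3f}")
```

With these values the estimate sits much closer to T1 than to T2, because T1 has the smaller error variance.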

3.) Least Squares Methods

Operational systems use a “background” or “first guess” field, in addition to observations

Analogous development using 1 observation and 1 background (first guess) value yields:

$$T_a = T_b + W\,(T_{obs} - T_b) \qquad (13)$$

3.) Least Squares Methods

$$T_a = T_b + W\,(T_{obs} - T_b) \qquad (13)$$

“The analysis is derived by adding innovation to 1st guess, weighted by optimal weight”

$$W = \frac{\sigma_b^2}{\sigma_b^2 + \sigma_{obs}^2} \qquad (14)$$

“The optimal weight is the background error variance divided by the total error variance”

$$\frac{1}{\sigma_a^2} = \frac{1}{\sigma_b^2} + \frac{1}{\sigma_{obs}^2} \qquad (15)$$

“The analysis precision is the sum of the background and observational precision”

3.) Least Squares Methods

$$\sigma_a^2 = (1 - W)\,\sigma_b^2 \qquad (16)$$

“The analysis error variance is equal to the background error variance weighted by (1 – optimal weight)”

These equations were developed here for an extremely simple example, but they have exactly the same form as in OI, 3DVar, Kalman Filtering
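As a rough sketch, eqs. (13), (14) and (16) for one background value and one observation can be coded in a few lines. The function name and the numbers are hypothetical, chosen only to illustrate the arithmetic.

```python
def analysis_update(t_b, var_b, t_obs, var_obs):
    """Scalar analysis step for one background value and one observation."""
    w = var_b / (var_b + var_obs)      # optimal weight, eq. (14)
    t_a = t_b + w * (t_obs - t_b)      # background plus weighted innovation, eq. (13)
    var_a = (1.0 - w) * var_b          # analysis error variance, eq. (16)
    return t_a, var_a, w


# Illustrative values: the background is less reliable than the observation
t_a, var_a, w = analysis_update(t_b=15.0, var_b=4.0, t_obs=17.0, var_obs=1.0)
print(f"W = {w:.2f}, T_a = {t_a:.2f}, var_a = {var_a:.2f}")

# Precision form, eq. (15): 1/var_a should equal 1/var_b + 1/var_obs
print(f"1/var_a = {1.0 / var_a:.3f}, 1/var_b + 1/var_obs = {1.0 / 4.0 + 1.0 / 1.0:.3f}")
```

The analysis error variance comes out smaller than both the background and the observation error variances, which is the point of combining the two sources.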

Analysis cycling

We can “cycle” eq. (13) – (16) if the background field is a model forecast

Suppose we have analysis at time t = ti (e.g., 00 UTC), and we want subsequent analysis for t = ti+1 (e.g. 03 UTC)

Two phases in cycle:

1.) Forecast phase to update $T_b$ and $\sigma_b^2$

2.) Analysis phase to update $T_a$ and $\sigma_a^2$ (using $T_{obs}$, $\sigma_{obs}^2$)

Extension to DA

In forecast phase of analysis cycle, we compute a background field:

$$T_b(t_{i+1}) = M[T_a(t_i)] \qquad (17)$$

In (17), M is a “Model”, which does not have to be a dynamical model but is, in practice

Must also estimate $\sigma_b^2$ for next analysis time

Analysis cycling methods

We must also estimate $\sigma_b^2$ for the future time:

(a) Optimum Interpolation approach:

$$\sigma_b^2(t_{i+1}) = a\,\sigma_a^2(t_i) \qquad (18)$$

In (18), “a” is > 1 but < 2, a simple assumption about error growth assumed in model M

Then, compute new weight W using (14)

(b) Kalman Filtering approach:

Still use (17), but instead of a simple assumption as (18), compute background error variance from model

Kalman Filter method

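$$T_t(t_{i+1}) = M[T_t(t_i)] - \varepsilon_m \qquad (19)$$

In (19): $T_t(t_{i+1})$ is the future “true” temperature, $M[T_t(t_i)]$ is the model forecast from a “perfect” analysis, and $\varepsilon_m$ is the model error

E.g., Use linearized version of model, “tangent linear model”, or TLM, to isolate error growth (several methods)

$$\sigma_b^2(t_{i+1}) = M^2\,\sigma_{a,i}^2 + \sigma_m^2 \qquad (20)$$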

Analysis cycling

1.) Get observations for new time, model forecast for background

2.) Determine $\sigma_b^2$ at new time from (18 - OI) or (20 - KF)

3.) Compute analysis, determine analysis error variance for use in next cycle ($\sigma_a^2$)
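The three steps above can be sketched for a single scalar temperature. Everything specific here is an assumption made for illustration: the toy model (relaxation toward 10 degrees, so its tangent linear derivative is 0.9), the growth factor a = 1.5, the model error variance 0.2, and the observation values.

```python
def background_variance_oi(var_a, a=1.5):
    """Eq. (18): inflate the previous analysis error variance by a constant factor a."""
    return a * var_a


def background_variance_kf(var_a, tlm, var_model):
    """Eq. (20): propagate the variance with the tangent linear model, add model error."""
    return tlm**2 * var_a + var_model


def run_cycles(t_a, var_a, observations, var_obs, model, forecast_variance):
    """Repeat the forecast phase and the analysis phase once per observation time."""
    history = []
    for t_obs in observations:
        t_b = model(t_a)                    # forecast phase, eq. (17)
        var_b = forecast_variance(var_a)    # eq. (18) or eq. (20)
        w = var_b / (var_b + var_obs)       # eq. (14)
        t_a = t_b + w * (t_obs - t_b)       # eq. (13)
        var_a = (1.0 - w) * var_b           # eq. (16)
        history.append((t_a, var_a))
    return history


def toy_model(t):
    """Hypothetical scalar model: relax toward 10 degrees; its derivative is 0.9."""
    return 10.0 + 0.9 * (t - 10.0)


def oi_variance(var_a):
    return background_variance_oi(var_a, a=1.5)


def kf_variance(var_a):
    return background_variance_kf(var_a, tlm=0.9, var_model=0.2)


observations = [14.0, 13.5, 13.2]   # illustrative values
oi_history = run_cycles(15.0, 1.0, observations, 0.5, toy_model, oi_variance)
kf_history = run_cycles(15.0, 1.0, observations, 0.5, toy_model, kf_variance)

for (t_oi, v_oi), (t_kf, v_kf) in zip(oi_history, kf_history):
    print(f"OI: T_a={t_oi:.2f} var_a={v_oi:.3f} | KF: T_a={t_kf:.2f} var_a={v_kf:.3f}")
```

The only difference between the two runs is how the background error variance is obtained, which mirrors the distinction between (18) and (20).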

Comments

In general, we do not and can not directly observe the variables we are analyzing

- Variables, times, or locations can differ between obs, analysis

- E.g., radars measure reflectivity or radial velocity, satellites measure radiance; these are not dependent variables in model

- These quantities are related to what we are analyzing, however

Must use an “observation operator” or “observation forward operator” H to project background information onto observation space, $H(T_b)$, in order to compute statistics

H includes vertical and horizontal interpolations, and transformations based on physical laws (e.g., radiation laws to convert background T or q to radiances)
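A minimal sketch of the interpolation part of an observation operator, assuming a 1-D grid and observations of the same variable as the background (no physical transformation such as a radiative transfer calculation). The grid spacing, field values, and function name are hypothetical.

```python
import numpy as np


def obs_operator_interp(background, grid_x, obs_x):
    """H: linearly interpolate a 1-D background field to the observation locations."""
    return np.interp(obs_x, grid_x, background)


grid_x = np.array([0.0, 100.0, 200.0, 300.0])    # grid-point positions (km), illustrative
t_b = np.array([280.0, 282.0, 286.0, 284.0])     # background temperature at grid points (K)

obs_x = np.array([50.0, 250.0])                  # observation locations between grid points
t_obs = np.array([281.5, 284.0])                 # observed temperatures (K)

h_tb = obs_operator_interp(t_b, grid_x, obs_x)   # background projected to observation space
innovation = t_obs - h_tb                        # observation minus H(T_b)
print(h_tb, innovation)
```

The difference is taken in observation space, so observations never have to be moved onto the grid before they are compared with the background.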

Comments

In 1980s and early 1990s, DA systems used “retrieved” analysis variables

e.g., Satellite sounder data were processed to produce profiles of T, q, that “looked like” rawinsonde data

This proved to be inferior relative to utilizing the raw measurements directly in observational forward model

Why?

Retrieval technique doesn’t have all the other data or background available; the other data help to make the retrieval more accurate and maximize usefulness of data

It is extremely difficult to know the error covariance of the retrieved profiles; e.g., the radiance error covariance is much better known because it is due to instrument error (more likely to be unbiased)

Extension to 4-D: Notation and Terminology

Generalize to complete NWP model problem:

(i) Analysis for a field of model variables $\mathbf{x}_a$

(ii) A background field $\mathbf{x}_b$ available at grid points

(iii) A set of “p” observations $\mathbf{y}_o$ at irregular points $r_i$

Can be 2D or 3D analysis

Combine all model variables into large arrays of length n, where $n = i \times j \times k \times$ (# of model variables)

H is observation operator that transforms background field to observation space; $H^T$, transpose of H, converts back to model space

Extension to 4-D: Notation and Terminology

Also:

(i) “error variance” becomes “error covariance matrix”

(ii) “optimal weight” becomes “optimal gain matrix”

Note that $\mathbf{y}_o$ is used to denote the observations:

- The observations are in different spatial (and temporal) locations from the model grid points

- Observed quantities are often not same physical parameters that are analyzed (as discussed before)

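Multivariate OI Problem

First, we tackled the OI problem, which is similar in form to 3DVar and Kalman Filtering, but with more approximations and other practical disadvantages

For OI, (13) becomes:

$$\mathbf{x}_t - \mathbf{x}_b = \mathbf{W}\,[\mathbf{y}_{obs} - H(\mathbf{x}_b)] - \boldsymbol{\varepsilon}_a \qquad (21)$$

In (21), $\boldsymbol{\varepsilon}_a = \mathbf{x}_a - \mathbf{x}_t$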

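Multivariate OI Problem

Equivalently, we could write

$$\mathbf{x}_a = \mathbf{x}_b + \mathbf{W}\,[\mathbf{y}_{obs} - H(\mathbf{x}_b)]$$

We can also write (21) as:

$$\mathbf{x}_a = \mathbf{x}_b + \mathbf{W}\,\mathbf{d}, \quad \text{where} \quad \mathbf{d} = \mathbf{y}_{obs} - H(\mathbf{x}_b) \qquad (22)$$

d is the “innovation” or “observational increments” vector

“B” is the “background error covariance” matrix

“R” is the observation error covariance matrix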

Multivariate OI Problem

$$\mathbf{x}_a = \mathbf{x}_b + \mathbf{W}\,[\mathbf{y}_{obs} - H(\mathbf{x}_b)] = \mathbf{x}_b + \mathbf{W}\,\mathbf{d} \qquad (23)$$

“Analysis obtained by adding background to the innovation, weighted by optimal weight matrix. The 1st guess of the observations is obtained by applying H to background”

$$\mathbf{W} = \mathbf{B}\mathbf{H}^T(\mathbf{R} + \mathbf{H}\mathbf{B}\mathbf{H}^T)^{-1} \qquad (24)$$

“The optimal weight is the background error covariance in observation space (BHT) divided by total error variance”

(24) is obtained by minimizing the least squares equation with respect to the weights to find optimum (not shown)

Multivariate OI Problem

$$\mathbf{P}_a = (\mathbf{I} - \mathbf{W}\mathbf{H})\,\mathbf{B} \qquad (25)$$

“The analysis error variance is equal to the background error covariance weighted by the identity matrix I minus the optimal weight matrix”

If you understood the earlier expressions for the simple problem, then you can understand these, because the meanings are exactly analogous

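OI and simple example

We can see that the following are analogous:

$$\mathbf{x}_a = \mathbf{x}_b + \mathbf{W}\,[\mathbf{y}_{obs} - H(\mathbf{x}_b)] \qquad (23)$$

$$T_a = T_b + W\,(T_{obs} - T_b) \qquad (13)$$

And the optimal weight equations are as well:

$$\mathbf{W} = \mathbf{B}\mathbf{H}^T(\mathbf{H}\mathbf{B}\mathbf{H}^T + \mathbf{R})^{-1} \qquad (24)$$

$$W = \frac{\sigma_b^2}{\sigma_b^2 + \sigma_{obs}^2} \qquad (14)$$

B = background error covariance, R = observation error covariance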

Multivariate OI Problem

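$$\mathbf{P}_a = (\mathbf{I} - \mathbf{W}\mathbf{H})\,\mathbf{B} \qquad (25)$$

$$\sigma_a^2 = (1 - W)\,\sigma_b^2 \qquad (16)$$

“The analysis error variance is equal to the background error covariance weighted by the identity matrix I minus the optimal weight matrix”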
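A small matrix sketch of eqs. (22) to (25), assuming a linear observation operator that can be written as a matrix H. The three-point grid, the covariance values, and the single observation are made up for illustration.

```python
import numpy as np


def oi_analysis(x_b, B, y_obs, H, R):
    """Multivariate analysis step with a linear observation operator (matrix H)."""
    d = y_obs - H @ x_b                             # innovation, eq. (22)
    W = B @ H.T @ np.linalg.inv(R + H @ B @ H.T)    # optimal weight matrix, eq. (24)
    x_a = x_b + W @ d                               # analysis, eq. (23)
    P_a = (np.eye(len(x_b)) - W @ H) @ B            # analysis error covariance, eq. (25)
    return x_a, P_a, W


# Three grid points, background error variance 4 with correlations decaying with distance
x_b = np.array([280.0, 282.0, 286.0])
B = 4.0 * np.array([[1.00, 0.50, 0.25],
                    [0.50, 1.00, 0.50],
                    [0.25, 0.50, 1.00]])

# One observation halfway between grid points 0 and 1, observation error variance 1
H = np.array([[0.5, 0.5, 0.0]])
R = np.array([[1.0]])
y_obs = np.array([282.0])

x_a, P_a, W = oi_analysis(x_b, B, y_obs, H, R)
print(x_a)
print(np.diag(P_a))
```

Because B has off-diagonal terms, the single observation also adjusts the third grid point, which it does not directly sample. This is how the background error covariance spreads information in space.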

Comments on OI Method

Observation errors are due to several sources:

(i) Instrument error (random)

(ii) Error of representativeness

(iii) Errors in transformation between obs, model space (H)

$$\mathbf{R} = \mathbf{R}_{inst} + \mathbf{R}_{rep} + \mathbf{R}_{H} \qquad (26)$$

In practice, high-density observation clusters merged into “superobservations” that combine information from individual observations before assimilation

Most critical aspect of problem is determining B, because R is generally diagonal (or can be made so) if observations are independent

As before, form of Kalman Filtering equations is the same as for OI, except for determination of background error covariance (forecasted from model)

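3DVar

3DVar case:

Parallel to earlier example, we can arrive at (23) by minimizing a cost function with respect to analysis variables:

$$J(\mathbf{x}) = \frac{1}{2}\left\{(\mathbf{x} - \mathbf{x}_b)^T\mathbf{B}^{-1}(\mathbf{x} - \mathbf{x}_b) + [\mathbf{y}_o - H(\mathbf{x})]^T\mathbf{R}^{-1}[\mathbf{y}_o - H(\mathbf{x})]\right\} \qquad (27)$$

$$\nabla_{\mathbf{x}} J(\mathbf{x}_a) = 0$$

$$\mathbf{x}_a = \mathbf{x}_b + (\mathbf{B}^{-1} + \mathbf{H}^T\mathbf{R}^{-1}\mathbf{H})^{-1}\mathbf{H}^T\mathbf{R}^{-1}[\mathbf{y}_o - H(\mathbf{x}_b)] \qquad (28)$$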
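As a check on the statement that minimizing (27) leads back to (23), the sketch below evaluates the 3DVar solution (28) and the OI solution (23)/(24) on the same small problem. It assumes a linear observation operator written as a matrix H; all numbers and function names are illustrative.

```python
import numpy as np


def cost_3dvar(x, x_b, B_inv, y_o, H, R_inv):
    """Cost function of eq. (27) for a linear observation operator."""
    dx = x - x_b
    dy = y_o - H @ x
    return 0.5 * (dx @ B_inv @ dx + dy @ R_inv @ dy)


def grad_3dvar(x, x_b, B_inv, y_o, H, R_inv):
    """Gradient of eq. (27) with respect to x."""
    return B_inv @ (x - x_b) - H.T @ R_inv @ (y_o - H @ x)


def analysis_3dvar(x_b, B_inv, y_o, H, R_inv):
    """Closed-form minimizer, eq. (28)."""
    A = B_inv + H.T @ R_inv @ H
    return x_b + np.linalg.solve(A, H.T @ R_inv @ (y_o - H @ x_b))


x_b = np.array([280.0, 282.0, 286.0])
B = 4.0 * np.array([[1.00, 0.50, 0.25],
                    [0.50, 1.00, 0.50],
                    [0.25, 0.50, 1.00]])
H = np.array([[0.5, 0.5, 0.0]])
R = np.array([[1.0]])
y_o = np.array([282.0])

B_inv = np.linalg.inv(B)
R_inv = np.linalg.inv(R)

x_3dvar = analysis_3dvar(x_b, B_inv, y_o, H, R_inv)

# OI form, eqs. (23) and (24), for comparison
W = B @ H.T @ np.linalg.inv(R + H @ B @ H.T)
x_oi = x_b + W @ (y_o - H @ x_b)

print(x_3dvar, x_oi)
print(grad_3dvar(x_3dvar, x_b, B_inv, y_o, H, R_inv))
```

Operational 3DVar systems generally minimize the cost function iteratively rather than forming these inverses; the closed form is used here only because the example is tiny.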

How does 3DVar Differ from OI?

3DVar and OI are similar in form, but in practice 3DVar has several major advantages

OI makes several approximations that are absent in variational methods:

Method of solution is local (grid point by grid point) and sequential over variables

The background error covariance is also locally approximated

In 3DVar, the cost function can be minimized globally (simultaneously everywhere) with all data used simultaneously

In 3DVar, can easily add new constraints to cost function (e.g., a balance condition, or direct QC procedures)

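3DVar

From our notes:

$$J(\mathbf{x}) = \frac{1}{2}\left\{(\mathbf{x} - \mathbf{x}_b)^T\mathbf{B}^{-1}(\mathbf{x} - \mathbf{x}_b) + [\mathbf{y}_o - H(\mathbf{x})]^T\mathbf{R}^{-1}[\mathbf{y}_o - H(\mathbf{x})]\right\} \qquad (27)$$

Zapotocny et al. (2000), eq. (3):

Difference is use of balance constraint in operational cost function, as discussed in article on page 609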

4DVar

4DVar is used at ECMWF, available in MM5 (Zou et al. 1997) and now WRF

$$J[\mathbf{x}(t_0)] = \frac{1}{2}[\mathbf{x}(t_0) - \mathbf{x}_b(t_0)]^T\mathbf{B}_0^{-1}[\mathbf{x}(t_0) - \mathbf{x}_b(t_0)] + \frac{1}{2}\sum_{i=0}^{N}[H(\mathbf{x}_i) - \mathbf{y}_i^o]^T\mathbf{R}_i^{-1}[H(\mathbf{x}_i) - \mathbf{y}_i^o] \qquad (29)$$

Similar in form to 3DVar, but better incorporation of observations from times that differ from ta

Find analysis that minimizes difference between model solution, observations over some time interval

Model assumed perfect in this process

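4DVar

3DVar and 4DVar:

$$J[\mathbf{x}(t_0)] = \frac{1}{2}[\mathbf{x}(t_0) - \mathbf{x}_b(t_0)]^T\mathbf{B}_0^{-1}[\mathbf{x}(t_0) - \mathbf{x}_b(t_0)] + \frac{1}{2}\sum_{i=0}^{N}[H(\mathbf{x}_i) - \mathbf{y}_i^o]^T\mathbf{R}_i^{-1}[H(\mathbf{x}_i) - \mathbf{y}_i^o] \qquad (29)$$

4DVar cost function includes summation over time of each observational increment computed with respect to the model integrated to the time of the observation

Cost function minimized wrt initial state of model, but analysis at end of time interval is given by model integration – so analysis must be a solution of model equations

$$J(\mathbf{x}) = \frac{1}{2}\left\{(\mathbf{x} - \mathbf{x}_b)^T\mathbf{B}^{-1}(\mathbf{x} - \mathbf{x}_b) + [\mathbf{y}_o - H(\mathbf{x})]^T\mathbf{R}^{-1}[\mathbf{y}_o - H(\mathbf{x})]\right\} \qquad (27)$$

Background integrated to same time as observations using model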
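A toy sketch of the 4DVar cost function (29), assuming a linear two-variable model M, a linear observation operator H, error-free synthetic observations, and the same R at every time. All of these are simplifications made for illustration. With linear M and H the cost function is quadratic in the initial state, so the minimum is found here by solving the gradient equation directly instead of iterating with an adjoint model.

```python
import numpy as np


def model_trajectory(x0, M, n_steps):
    """Integrate the linear model (assumed perfect): x_i = M x_{i-1}."""
    states = [x0]
    for _ in range(n_steps):
        states.append(M @ states[-1])
    return states


def cost_4dvar(x0, x_b0, B0_inv, M, H, R_inv, obs):
    """Eq. (29): background term at t0 plus observation terms along the trajectory."""
    dx = x0 - x_b0
    J = 0.5 * dx @ B0_inv @ dx
    for x_i, y_i in zip(model_trajectory(x0, M, len(obs) - 1), obs):
        dy = H @ x_i - y_i
        J += 0.5 * dy @ R_inv @ dy
    return J


def minimize_4dvar_linear(x_b0, B0_inv, M, H, R_inv, obs):
    """Solve grad J = 0 for the initial state when M and H are linear."""
    A = B0_inv.copy()
    b = B0_inv @ x_b0
    G = H.copy()                      # G_i = H M^i maps x(t0) to observation space at t_i
    for y_i in obs:
        A += G.T @ R_inv @ G
        b += G.T @ R_inv @ y_i
        G = G @ M
    return np.linalg.solve(A, b)


M = np.array([[1.0, 0.1], [0.0, 0.95]])   # hypothetical linear model
H = np.array([[1.0, 0.0]])                # only the first variable is observed
B0_inv = np.eye(2)
R_inv = np.array([[4.0]])                 # observation error variance 0.25

x_true0 = np.array([1.5, 1.0])            # "truth" used to generate synthetic observations
x_b0 = np.array([1.0, 0.0])               # background at t0
obs = [H @ x for x in model_trajectory(x_true0, M, 4)]

x_a0 = minimize_4dvar_linear(x_b0, B0_inv, M, H, R_inv, obs)
print(x_a0)
print(cost_4dvar(x_b0, x_b0, B0_inv, M, H, R_inv, obs),
      cost_4dvar(x_a0, x_b0, B0_inv, M, H, R_inv, obs))
```

The second model variable is never observed, yet its initial value is corrected because the model couples it to the observed variable over the assimilation window.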

4DVar

Used at ECMWF, available in MM5, WRF (Zou et al. 1997)

Some centers have skipped straight from earlier techniques to 4DVar (e.g., ECMWF)

Why has NCEP invested so heavily in 3DVar development? Insights from MM5/WRF Team:

Stronger reliance on model itself in 4DVar, can be a disadvantage

4DVar is computationally very expensive, and many systems cannot utilize all observations in time to get operational IC to model

Not clear whether benefit from 4DVar greater than could be derived from better use of high-density obs in 3DVar (Kalnay 2003)