
1

Bayesian data analysis (1) using Bugs (2) and R (3)

(1) Fit complicated models
(2) clearly
(3) display and understand results

2

Bayesian data analysis using BUGS and R

Prof. Andrew Gelman, Dept. of Statistics and Political Science, Columbia University

Joint Program in Survey Methodology, University of Maryland, College Park, January 17-18, 2008

3

Introduction to the course

4

Goals
- Should be able to…
  - Fit multilevel models and display and understand the results
  - Use Bugs from R
- Theoretical
  - Connection between classical linear models and GLM, multilevel models, and Bayesian inference
- Practical
  - Dealing with data and graphing in R
  - Reformatting models in Bugs to get them to work

5

Realistic goals

You won’t be able to go out and fit Bayesian models

But . . . you’ll have a sense of what Bayesian data analysis “feels like”:
- Model building
- Model fitting
- Model checking

6

Overview
- What is Bayesian data analysis? Why Bayes? Why R and Bugs [schools example]
- Hierarchical linear regression [radon example]
- Bayesian data analysis: what it is and what it is not [several examples]
- Model checking [several examples]
- Hierarchical Poisson regression [police stops example]
- Understanding the Gibbs sampler and Metropolis algorithm
- Troubleshooting: simulations don’t work, run too slowly, make no sense
- Advanced computation [social networks example]
- Accounting for design of surveys and experiments [polling example]
- Prior distributions [logistic-regression separation example]
- If there is time: hierarchical logistic regression with varying intercepts and slopes [red/blue states example]
- Loose ends

7

Structure of this course
- Main narrative on PowerPoint
- Formulas on blackboard
- On my computer and yours:
  - Bugs, R, and a text editor
  - Data, Bugs models, and R scripts for the examples
- We do computer demos together
- Pause to work on your own to play with the models; we then discuss together
- Lecturing for motivating examples
- Interrupt to ask questions

8

Structure of this course
- Several examples. For each:
  - Set up the Bugs model
  - Use R to set up the data and initial values, and to run Bugs
  - Use R to understand the inferences
  - Sometimes fit the model directly in R
  - Play with variations on your own computer
- Understanding how Bugs works and how to work with Bugs
- More examples
  - Working around problems in Bugs
  - Using the inferences to answer real questions
- Not much theory. If you want more theory, let me know!
- Emphasis on general methods for model construction, expansion, patching, and understanding

9

Introduction to Bayes

10

What is Bayesian data analysis? Why Bayes?
- Effective and flexible
  - Modular (“Tinkertoy”) approach
  - Combines information from different sources
  - Produces inferences without overfitting, even for models with a large number of parameters
- Examples from sample surveys and public health include:
  - Small-area estimation, longitudinal data analysis, and multilevel regression

11

What are BUGS and R?
- Bugs
  - Fits Bayesian statistical models
  - No programming required
- R
  - Open-source language for statistical computing and graphics
- Bugs from R
  - Offers flexibility in data manipulation before the analysis and display of inferences after
  - Avoids tedious issues of working with Bugs directly

[Open R: schools example]

12

Begin 8-schools example

13

Open R: schools example
- Open R:
  - setwd("c:/bugs.course/schools")
- Open RWinEdt
  - Open file “schools/schools.R”
  - Open file “schools.bug”
  - Click on Window, Tile Horizontally
- Copy the commands from schools.R and paste them into the R window

14

8-schools example
- Motivates hierarchical (multilevel) modeling
- Easy to do using Bugs
- Background: educational testing experiments
- Classical analyses (load data into R)
  - No pooling
  - Complete pooling
- Hierarchical analysis using Bugs
  - Talk through the 8-schools model
  - Fit the model from R
  - Look at the inferences, compare to classical
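A minimal R sketch of the two classical analyses and of partial pooling for the 8 schools. It assumes the standard 8-schools estimates and standard errors as published in Gelman et al., Bayesian Data Analysis. The helper `partial_pool()` is hypothetical: it holds the group-level sd tau fixed, whereas the hierarchical model in Bugs estimates tau from the data.

```r
# 8-schools data: estimated treatment effects y and their standard errors,
# as published in Gelman et al., Bayesian Data Analysis
y     <- c(28, 8, -3, 7, -1, 1, 18, 12)
sigma <- c(15, 10, 16, 11, 9, 11, 10, 18)

# No pooling: each school's estimate is simply its own y_j
no_pool <- y

# Complete pooling: precision-weighted average of all eight schools
w <- 1 / sigma^2
complete_pool    <- sum(w * y) / sum(w)
complete_pool_se <- sqrt(1 / sum(w))

# Partial pooling for a FIXED group-level sd tau (hypothetical helper;
# the hierarchical model in Bugs estimates tau instead of fixing it)
partial_pool <- function(tau) {
  v      <- sigma^2 + tau^2
  mu_hat <- sum(y / v) / sum(1 / v)   # estimate of the common mean given tau
  (y / sigma^2 + mu_hat / tau^2) / (1 / sigma^2 + 1 / tau^2)
}

round(c(complete_pool, complete_pool_se), 1)   # 7.7 4.1
round(partial_pool(5), 1)                       # each school shrunk toward the common mean
```

As tau grows, `partial_pool(tau)` approaches the no-pooling estimates. As tau shrinks toward zero, it approaches the complete-pooling estimate.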

15

Playing with the 8 schools

Try it with:
- Different n.chains, n.iter
- Different starting values
- Changing the data values y
- Changing the data variances sigma.y
- Changing J = #schools
- Changing the model

16

End 8-schools example

17

A brief prehistory of Bayesian data analysis
- Bayes (1763)
  - Links statistics to probability
- Laplace (1800)
  - Normal distribution
  - Many applications, including census [sampling models]
- Gauss (1800)
  - Least squares
  - Applications to astronomy [measurement error models]
- Keynes, von Neumann, Savage (1920’s-1950’s)
  - Link Bayesian statistics to decision theory
- Applied statisticians (1950’s-1970’s)
  - Hierarchical linear models
  - Applications to animal breeding, education [data in groups]

18

A brief history of Bayesian data analysis, Bugs, and R
- “Empirical Bayes” (1950’s-1970’s)
  - Estimate prior distributions from data
- Hierarchical Bayes (from 1970)
  - Include hyperparameters as part of the full model
- Markov chain simulation (from 1940’s [physics] and 1980’s [statistics])
  - Computation with general probability models
  - Iterative algorithms that give posterior simulations (not point estimates)
- Bugs (from 1994)
  - Bayesian inference Using Gibbs Sampling (David Spiegelhalter et al.)
  - Can fit general models with modular structure
- S (from 1980’s)
  - Statistical language with modeling, graphics, and programming
  - S-Plus (commercial version)
  - R (open-source)
  - lme() and lmer() functions by Doug Bates for fitting hierarchical linear and generalized linear models

19

My own experiences with Bayes, Bugs, and R
- Bayesian methods really work, especially for models with lots of parameters
- Use R for data manipulations and various preprogrammed models
- Use Bugs (from R) as first try in fitting complex models
- Use R to make graphs to check that the fitted model makes sense
- When Bugs doesn’t work, program in R directly

20

Bayesian regression using Bugs

21

R and Bugs for classical inference

- Radon example
- Fitting a linear regression in Bugs
- Displaying the results in R
- Complete-pooling and no-pooling models
- Model extensions

[open R: radon example]

22

Begin radon example

23

Open R: radon example
- In RWinEdt
  - Open file “radon/radon_setup.R”
  - We’ll talk through the code
- In R:
  - setwd("c:/bugs.course/radon")
  - source("radon_setup.R")
  - Regression output will appear on the R console and graphics windows

24

Fitting a linear regression in R and Bugs

- The Bugs model
- Setting up data and initial values in R
- Running Bugs and checking convergence
- Displaying the fitted line and residuals in R

25

Radon example in R (complete pooling)
- In RWinEdt
  - Open file “radon/radon.classical1.R”
  - In other window, open file “radon.classical.bug”
- Copy the commands (one paragraph at a time) from radon.classical1.R and paste them into the R window
  - Fit the model
  - Plot the data and estimates
  - Plot the residuals (two versions)
  - Label the plot

26

Simple extensions of the radon model

- Separate variances for houses with and without basements
- t instead of normal errors
- Fitting each model, then understanding it

27

Radon regression with 2 variance parameters

- Separate variances for houses with and without basements
  - Bugs model
  - Setting it up in R
  - Using the posterior inferences to compare the two variance parameters

28

Radon example in R (regression with 2 variance parameters)

- In RWinEdt
  - Open file “radon/radon.extend.1.R”
  - Other window, open file “radon.classical.2vars.bug”
- Copy from radon.extend.1.R and paste into the R window
  - Fit the model
  - Display a posterior inference
  - Compute a posterior probability
  - STOP
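Once Bugs returns simulations, a posterior probability is just the proportion of simulation draws in which the event holds. In this minimal sketch, the vectors `sigma_base` and `sigma_nobase` are hypothetical stand-ins (random numbers, not radon results) for the saved simulations of the two data-level standard deviations.

```r
# Hypothetical stand-ins for the Bugs simulations of the two data-level sds;
# in practice these would be extracted from the fitted Bugs object
set.seed(1)
n_sims       <- 1000
sigma_base   <- rlnorm(n_sims, meanlog = log(0.70), sdlog = 0.05)
sigma_nobase <- rlnorm(n_sims, meanlog = log(0.90), sdlog = 0.10)

# Posterior probability that houses without basements have the larger sd:
# the share of simulation draws in which that is true
prob_nobase_larger <- mean(sigma_nobase > sigma_base)

# Posterior median and 95% interval for the ratio of the two sds
ratio_summary <- quantile(sigma_nobase / sigma_base, c(0.025, 0.5, 0.975))
```

The same recipe works for any event or derived quantity. You compute it draw by draw, then summarize across draws.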

29

Robust regression for radon data

- t instead of normal errors
- Issues with the degrees-of-freedom parameter
- Looking at the posterior simulations and comparing to the prior distribution
- Interpreting the scale parameter

30

Radon example in R (robust regression using the t model)
- In RWinEdt
  - Stay with “radon/radon.extend.1.R”
  - Other window: “radon.classical.t.bug”
- Copy the rest of radon.extend.1.R and paste into the R window
  - Fit the t model
  - Run again with n.iter=1000 iterations
  - Make some plots

31

Fit your own model to the radon data

- Alter the Bugs model
- Change the setup in R
- Run the model and check convergence
- Display the posterior inferences in R

[Suggestions of alternative models?]

32

Fitting several regressions in R and Bugs
- Back to the radon example
  - Complete pooling [we just did]
  - No pooling [do now: see next slide]
- Bugs model is unchanged
- Fit separate model in each county and save them as a list
- Displaying data and fitted lines from 11 counties
- Next step: hierarchical regression
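The complete-pooling and no-pooling fits can also be sketched with classical `lm()` in R. The data frame below is simulated fake data, not the course's radon file. Its column names are hypothetical: `y` for log radon, `x` for the floor indicator, and `county` for the county index.

```r
# Fake data standing in for the radon file (hypothetical names and values):
# 11 counties, 20 houses each; x = 0 for basement, 1 for first floor
set.seed(2)
n_county <- 11
county   <- rep(1:n_county, each = 20)
x        <- rep(c(0, 0, 0, 1), length.out = length(county))
a_true   <- rnorm(n_county, mean = 1.5, sd = 0.4)   # county intercepts
y        <- a_true[county] - 0.7 * x + rnorm(length(county), 0, 0.8)
radon_fake <- data.frame(y, x, county)

# Complete pooling: a single regression that ignores county
fit_pooled <- lm(y ~ x, data = radon_fake)

# No pooling: a separate regression in each county, saved as a list
fits_nopool <- lapply(split(radon_fake, radon_fake$county),
                      function(d) lm(y ~ x, data = d))
coef_nopool <- t(sapply(fits_nopool, coef))   # 11 x 2 matrix of (intercept, slope)
```

The floor indicator is set deterministically here so that every fake county has both basement and first-floor houses. That way each separate regression has an estimable slope.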

33

Radon example in R (no pooling)
- In RWinEdt
  - Open file “radon/radon.classical2.R”
  - In other window, open file “radon.classical.bug”
- Copy from radon.classical2.R and paste into the R window
  - Fit the model (looping through n.county=11 counties)
  - Plot the data and estimates
  - Plot the residuals (two versions)
  - Label the plot

34

Hierarchical linear regression: models

- [Show the models on blackboard]
- Generalizing the Bugs model
  - Varying intercepts
  - Varying intercepts, varying slopes
  - Adding predictors at the group level
- Also write each model using algebra
- More than one way to write (or program) each model

35

Hierarchical linear regression: fitting and understanding

- [working on blackboard]
- Displaying results in R
- Comparing models
- Interpreting coefficients and variance parameters
- Going beyond R-squared

36

Hierarchical linear regression: varying intercepts
- Example: county-level variation for radon
- Recall classical models
  - Complete pooling
  - No pooling (separate regressions)
- Including county effects hierarchically
  - Bugs model
  - Written version
  - More than one way to write the model
- Fitting and understanding
  - Interpreting the parameters
  - Displaying results in R
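In the varying-intercept model, the estimate for a county is approximately a precision-weighted average of that county's own mean and the overall mean. Gelman and Hill (2006) discuss this approximation for the radon data. This minimal sketch treats the variance parameters as known. The helper `partial_pool_county()` and all input values are hypothetical.

```r
# Approximate partial-pooling estimate of county j's intercept, treating
# sigma_y (data-level sd), sigma_a (county-level sd), and mu_a as known
partial_pool_county <- function(ybar_j, n_j, mu_a, sigma_y, sigma_a) {
  w_data  <- n_j / sigma_y^2   # precision of the county's own sample mean
  w_group <- 1 / sigma_a^2     # precision from the county-level model
  (w_data * ybar_j + w_group * mu_a) / (w_data + w_group)
}

# Hypothetical inputs: same county mean, very different sample sizes
small_county <- partial_pool_county(ybar_j = 2.5, n_j = 2,   mu_a = 1.3, sigma_y = 0.8, sigma_a = 0.3)
large_county <- partial_pool_county(ybar_j = 2.5, n_j = 100, mu_a = 1.3, sigma_y = 0.8, sigma_a = 0.3)
round(c(small_county, large_county), 2)   # 1.56 2.42
```

The county with 2 houses is pulled most of the way toward the overall mean. The county with 100 houses stays close to its own mean.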

37

Radon example in Bugs and R (varying intercepts)
- In RWinEdt
  - “radon/radon.multilevel.1.R”
  - Other window: “radon.multilevel.1.bug”
- Copy from radon.multilevel.1.R and paste into the R window
  - Fit the model (debug=TRUE)
  - Close the Bugs window
  - Run for 100, then 1000 iterations
  - Plot the data and estimates for 11 counties
  - Plot all 11 regression lines at once

38

Fitting the radon model using lmer()

- lmer(): an R function for multilevel (hierarchical) regression and glm
- Not as flexible as Bugs
- Has problems when group-level variance parameters are near zero (as in the 8-schools example)
- But . . . it is easy to use and pretty fast
- (Similar routines available in Stata and SAS)

39

Radon example using lmer() (varying intercepts)
- In RWinEdt
  - “radon/radon.multilevel.lmer.1.R”
- Copy from radon.multilevel.lmer.1.R and paste into the R window
  - Fit the model using lmer()
  - Display the results
  - Pull out the intercepts and slopes
  - Plot the data and estimates for 11 counties
  - Get posterior simulations and compare to Bugs

40

Hierarchical linear regression: varying intercepts, varying slopes

- The model
  - Bugs model
  - Written version
- Setup in R
- Running Bugs, checking convergence
- Displaying the fitted model in R
- Understanding the new parameters

41

Radon example in R (varying intercepts and slopes)

- In RWinEdt
  - “radon/radon.multilevel.2.R”
  - Other window: “radon.multilevel.2.bug”
- Copy from radon.multilevel.2.R and paste into the R window
  - Fit the model (debug=TRUE)
  - Close the Bugs window
  - Run for 500 iterations
  - Plot the data and estimates for 11 counties
  - Plot all 11 regression lines at once
  - Plot slopes vs. intercepts

42

Radon example using lmer() (varying intercepts and slopes)
- In RWinEdt
  - “radon/radon.multilevel.lmer.2.R”
- Copy from radon.multilevel.lmer.2.R and paste into the R window
  - Fit the model using lmer()
  - Display the results
  - Play around with centering and rescaling of x
  - Pull out the intercepts and slopes
  - Plot the data and estimates for 11 counties
  - Get posterior simulations and compare to Bugs

43

Structure of lmer()
- Data are grouped, can have nonnested groups (see book for examples)
- Coefficients can be “fixed” (not varying by group) or “random” (varying by group)
- lmer(y ~ fixed + (random | group))
- Also glm version: lmer(y ~ fixed + (random | group), family=binomial(link="logit")) (in current versions of lme4 this is glmer())

44

Simple lmer() examples
- From R window:
  - ?mcsamp
  - Scroll to the bottom of the help window and paste the example into the R window
- Simulate some fake multilevel data
- Fit and display a varying-intercept model
- Varying-intercept, varying-slope model
- Logistic model: varying intercept
- Logistic: varying-intercept, varying-slope
- Non-nested varying-intercept, varying-slope model

45

Playing with hierarchical modeling for the radon example

- Uranium level as a county-level predictor
- Changing the sample sizes
- Perturbing the data
- Fitting nonlinear models

46

Radon example in R (adding a county-level predictor)

- In RWinEdt
  - “radon/radon.multilevel.3.R”
  - Other window: “radon.multilevel.3.bug”
- Copy from radon.multilevel.3.R and paste into the R window
  - Fit the model
  - Plot the data and estimates for 11 counties
  - Plot all 11 regression lines at once
  - Plot county parameters vs. county uranium level

47

Varying intercepts and slopes with correlated group-level errors

- The model
  - Statistical notation
  - Bugs model
- Show on blackboard
- Correlation and group-level predictors
- More in chapters 13 and 17 of Gelman and Hill (2006)

48

End radon example

49

Bayesian data analysis: what it is and what it is not

50

Bayesian data analysis: what it is and what it is not
- Popular view of Bayesian statistics
  - Subjective probability
  - Elicited prior distributions
- Bayesian data analysis as we do it
  - Hierarchical modeling
  - Many applications
- Conceptual framework
  - Fit a probability model to data
  - Check fit, ride the model as far as it will take you
  - Improve/expand/extend model

51

Overview of Bayesian data analysis
- Decision analysis for home radon
- Where did the “prior distribution” come from?
- Quotes illustrating misconceptions of Bayesian inference
- State-level opinions from national polls (hierarchical modeling and validation)
- Serial dilution assay (handling uncertainty in a nonlinear model)
- Simulation-based model checking
- Some open problems in BDA

52

Begin radon decision example

53

Decision analysis for home radon
- Radon gas
  - Causes 15,000 lung cancers per year in U.S.
  - Comes from underground; home exposure
- Radon webpage http://www.stat.columbia.edu/~radon/
  - Click for recommended decision
- Bayesian inference
  - Prior + data = posterior
  - Where did the prior distribution come from?

54

Prior distribution for your home’s radon level
- Example of Bayesian data analysis
- Radon model
  - theta_i = log of radon level in house i in county j(i)
  - linear regression model: theta_i = a_j(i) + b_1*base_i + b_2*air_i + … + e_i
  - linear regression model for the county levels a_j, given geology and uranium level in the county, with county-level errors
- Data model
  - y_i = log of radon measurement in house i
  - y_i = theta_i + Bias + error_i
  - Bias depends on the measurement protocol
  - error_i is not the same as e_i in the radon model above

55

Radon data sources
- National radon survey
  - Accurate unbiased data, but sparse
  - 5000 homes in 125 counties
- State radon surveys
  - Noisy biased data, but dense
  - 80,000 homes in 3000 counties
- Other info
  - House level (basement status, etc.)
  - County level (geologic type, uranium level)

56

Bayesian inference for home radon
- Set up and compute model
  - 3000 + 19 + 50 parameters
  - Inference using iterative simulation (Gibbs sampler)
- Inference for quantities of interest
  - Uncertainty distribution for any particular house (use as prior distribution in the webpage)
  - County-level estimates and national averages
  - Potential $7 billion savings
- Model checking
  - Do inferences make sense?
  - Compare replicated to actual data, cross-validation
  - Dispersed model validation (“beta-testing”)

57

Bayesian inference for home radon

- Allows estimation of over 3000 parameters
- Summarizes uncertainties using probability
- Combines data sources
- Model is testable (falsifiable)

58

End radon decision example

59

Pro-Bayesian quotes
- Hox (2002): “In classical statistics, the population parameter has only one specific value, only we happen not to know it. In Bayesian statistics, we consider a probability distribution of possible values for the unknown population distribution.”
- Somebody’s webpage: “To a true subjective Bayesian statistician, the prior distribution represents the degree of belief that the statistician or client has in the value of the unknown parameter . . . it is the responsibility of the statistician to elicit the true beliefs of the client.”

60

Why these views of Bayesian statistics are wrong!

- Hox quote (distribution of parameter values)
  - Our response: parameter values are “fixed but unknown” in Bayesian inference also!
  - Confusion between quantities of interest and inferential summaries
- Anonymous quote
  - Our response: the statistical model is an assumption to be held if useful
  - Confusion between statistical analysis and decision making

61

Anti-Bayesian quotes
- Efron (1986): “Bayesian theory requires a great deal of thought about the given situation to apply sensibly.”
- Ehrenberg (1986): “Bayesianism assumes: (a) Either a weak or uniform prior, in which case why bother?, (b) Or a strong prior, in which case why collect new data?, (c) Or more realistically, something in between, in which case Bayesianism always seems to duck the issue.”

62

Why these views of Bayesian statistics are wrong!

- Efron quote (difficulty of Bayes)
  - Our response: demonstration that Bayes solves many problems more easily than other methods
  - Mistaken focus on the simplest problems
- Ehrenberg quote (arbitrariness of prior dist)
  - Our response: the “prior dist” represents the information provided by a group-level analysis
  - One “prior dist” serves many analyses

63

Begin pre-election polling example

64

State-level opinions from national polls
- Goal: state-level opinions
  - State polls: infrequent and low quality
  - National polls: N is too small for individual states
  - Also must adjust for survey nonresponse
- Try solving a harder problem
  - Estimate opinion in each of 3264 categories: 51 states × 64 demographic categories (sex, ethnicity, age, education)
  - Logistic regression model
  - Sum over 64 categories within each state
  - Validate using pre-election polls

65

State-level opinions from national polls

- Logistic regression model
  - y_i = 1 if person i supports Bush, 0 otherwise
  - logit(Pr(y_i=1)) = linear predictor given demographics and state of person i
- Hierarchical model for 64 demographic predictors and 51 state predictors (including region and previous Republican vote share in the state)
- State polls could be included also if we want
- Sum over 64 categories within each state
  - “Post-stratification”
  - In state s, the estimated proportion of Bush supporters is sum(j=1 to 64) N_j Pr(y=1 in category j) / sum(j=1 to 64) N_j, where N_j is the number of people in category j in state s
  - Also simple to adjust for turnout of likely voters
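The post-stratification sum is a population-weighted average of the cell estimates. This minimal sketch uses a hypothetical helper `poststratify()` and made-up counts and probabilities for a state with three cells. The model in the course uses 64 cells per state.

```r
# Post-stratification: weight each cell's estimated Pr(y = 1) by the number
# of people N_j in that cell, then divide by the state total
poststratify <- function(N, p) {
  stopifnot(length(N) == length(p))
  sum(N * p) / sum(N)
}

# Hypothetical state with 3 demographic cells
N <- c(1000, 3000, 6000)   # population counts
p <- c(0.35, 0.50, 0.60)   # model estimates of Pr(supports Bush) in each cell
poststratify(N, p)         # 0.545
```

Within this sketch, one way to adjust for turnout is to pass expected numbers of voters per cell as `N` instead of population counts.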

66

Compare to “no pooling” and “complete pooling”
- “No pooling”
  - Separate estimate within each state
  - Treat the survey as 49 state polls
  - Expect “overfitting”: too many parameters
- “Complete pooling”
  - Include demographics only
  - Give up on estimating 51 state parameters
- Competition
  - Use pre-election polls and compare to election outcome
  - Estimated Bush support in U.S.
  - Estimates in individual states

67

Election poll analysis and Bayesian inference

- Where was the “prior distribution”?
  - In logistic regression model, 51 state effects, a_k
  - a_k = b*presvote_k + c_region(k) + e_k
  - Errors e_k have Normal(0, sigma^2) distribution; sigma estimated from data
- Where was the “subjectivity”?

68

Election poll analysis: validation

| | No pooling | Complete pooling | Bayes |
|---|---|---|---|
| Avg std errors of state estimates | 5.1% | 0.9% | 3.1% |
| Avg of actual absolute state errors | 5.1% | 5.9% | 3.2% |

69

70

End pre-election polling example

71

Begin assays example

72

Serial dilution assays

73

Dots are data, curves are fitted

74

Serial dilution assays: motivation for Bayesian inference
- Classical approach: read the estimate off the calibration curve
- Difficulties
  - “above detection limit”: curve is too flat
  - “below detection limit”: signal/noise ratio is too low
  - For some samples, all the data are above or below detection limits!
- Goal: downweight, but don’t discard, weak data
  - Maximum likelihood (weighted least squares)
  - Bayes handles uncertainty in the parameters of the calibration curve

75

Serial dilution: validation

76

End assays example

77

Summary
- Bayesian data analysis is about modeling
  - Not an optimization problem; no “loss function”
- Make a (necessarily) false set of assumptions, then work them hard
- Complex models --> complex inferences --> lots of places to check model fit
- Prior distributions are usually not “subjective” and do not represent “belief”
- Models are applied repeatedly (“beta-testing”)

78

Model checking

79

Model checking
- Basic idea:
  - Display observed data (always a good idea anyway)
  - Simulate several replicated datasets from the estimated model
  - Display the replicated datasets and compare to the observed data
  - Comparison can be graphical or numerical
- Generalization of classical methods:
  - Hypothesis testing
  - Exploratory data analysis
- Crucial “safety valve” in Bayesian data analysis

80

Model checking: simple example

A normal distribution is fit to the following data:

81

20 replicated datasets under the model

82

Comparison using a numerical test statistic

83

Model checking: another example

Logistic regression model fit to data from psychology: a 15 x 23 array of responses for each of 6 persons

Next slide shows observed data (at left) and 7 replicated datasets (at right)

84

Observed and replicated datasets

85

Another example: checking the fit of prior distributions

Curves show priors for 2 sets of parameters; histograms show estimates for each set

86

Improve the model, try again!

Curves show new priors; histograms show new parameter estimates

87

Model checking and model comparison
- Generalizing classical methods
  - t tests, chi-squared tests, F-tests
  - R-squared, deviance, AIC
- Use estimation rather than testing where possible
- Posterior predictive checks of model fit
- DIC for predictive model comparison

88

Model checking: posterior predictive tests
- Test statistic T(y)
  - Replicated datasets y.rep_k, k=1,…,n.sim
  - Compare T(y) to the posterior predictive distribution of T(y.rep_k)
- Discrepancy measure T(y, theta_k)
  - Look at n.sim values of the difference, T(y, theta_k) - T(y.rep_k, theta_k)
  - Compare this distribution to 0
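A minimal R sketch of a posterior predictive check. It assumes a normal model with the standard noninformative prior, fit to fake data that contain a few outliers. The test statistic T(y) is the maximum, which is only one of many possible choices.

```r
set.seed(3)
y <- c(rnorm(95), rnorm(5, mean = 6))   # fake data: 95 typical values plus 5 outliers
n <- length(y)
n_sims <- 1000

# Posterior simulations under p(mu, log sigma) proportional to 1:
# sigma^2 | y is scaled inverse-chi^2, and mu | sigma, y is normal
sigma_sims <- sqrt((n - 1) * var(y) / rchisq(n_sims, n - 1))
mu_sims    <- rnorm(n_sims, mean(y), sigma_sims / sqrt(n))

# Test statistic T(y): the largest observation
T_obs <- max(y)
T_rep <- numeric(n_sims)
for (k in 1:n_sims) {
  y_rep    <- rnorm(n, mu_sims[k], sigma_sims[k])   # replicated dataset y.rep_k
  T_rep[k] <- max(y_rep)
}

# Posterior predictive p-value: share of replications with T(y.rep) >= T(y)
p_value <- mean(T_rep >= T_obs)
```

A p-value near 0 or 1 shows that the replicated datasets rarely look like the observed data in this respect. Here the outliers make the observed maximum hard for the normal model to reproduce.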

89

Model comparison: DIC (deviance information criterion)
- Generalization of “deviance” in classical GLM
- p_D is effective number of parameters
  - 8-schools example
  - Radon example
- DIC is estimated error of out-of-sample predictions
  - DIC = posterior mean of deviance + p_D
  - Radon example
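For intuition about p_D, here is a minimal sketch for a single normal mean with known sd and a flat prior, using simulated data. It uses the standard definition p_D = (posterior mean of the deviance) minus (deviance at the posterior mean of the parameters). With one free parameter, p_D should come out close to 1.

```r
# DIC for a normal mean with known sd and a flat prior (simulated data)
set.seed(4)
sigma <- 2
y <- rnorm(50, mean = 1, sd = sigma)
n <- length(y)

# Posterior simulations: theta | y ~ N(ybar, sigma^2 / n)
theta_sims <- rnorm(4000, mean(y), sigma / sqrt(n))

# Deviance D(theta) = -2 log p(y | theta)
deviance_at <- function(theta) -2 * sum(dnorm(y, theta, sigma, log = TRUE))

D_sims <- sapply(theta_sims, deviance_at)
D_bar  <- mean(D_sims)                            # posterior mean of the deviance
p_D    <- D_bar - deviance_at(mean(theta_sims))   # effective number of parameters
DIC    <- D_bar + p_D
```

In a hierarchical model, p_D typically falls between the counts of parameters implied by complete pooling and by no pooling, depending on how much pooling the data support.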

90

More on multilevel modeling

91

Including linear predictors in a hierarchical model
- Radon example
  - Airflow as a data-level predictor
  - Avg airflow as a county-level predictor
  - Uranium as a county-level predictor
- How can we include group indicators and a group-level predictor?
  - Example from Advanced Placement study [blackboard]

92

Hierarchical generalized linear models
- Poisson
  - Example: Police stops
- Overdispersed
  - Example: Police stops
- Logistic
  - Example: pre-election polls
  - Other details in this example
- Other issues
  - Multinomial logit, robust model

93

Begin stop-and-frisk example

94

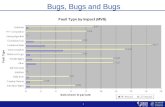

Police stop/frisks: example of Poisson regression
- Background on NYPD stops and frisks
- Data on stops for violent crimes
  - #stops, population, #previous arrests
  - Blacks (African-Americans), Hispanics (Latinos), Whites (European-Americans)
  - Confidentiality: you’re getting fake data
- In R, compute averages for each group
- Variation among precincts?
- Poisson regression (count data) [open R: frisk example]

95

Open R: frisk example
- In R:
  - setwd("c:/bugs.course/frisk")
- In RWinEdt
  - Open file “frisk/frisk.0.R”
  - Other window: “frisk.0.bug”
- Copy from frisk.0.R and paste into the R window
  - Read in and look at the data
  - Work with a subset of the data
  - Pre-process
  - Fit the classical Poisson regression in Bugs
  - Fit using “glm” in R, check that results are approx the same

96

Hierarchical Poisson regression for police stops
- Poisson regression
  - Previous arrests as offset
  - Logarithmic link
  - Include precinct predictors
- Set up model in Bugs
  - Shift batches of parameters to sum to zero
- Compute the model
  - Slow convergence
  - Compare to aggregate results
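A classical, non-hierarchical version of this Poisson regression can be fit with `glm()` for comparison with Bugs. In the sketch below, the data frame is simulated. The column names `stops`, `arrests`, `eth`, and `precinct` and the true coefficient values are hypothetical. Previous arrests enter as an offset on the log scale, so the coefficients describe stops per previous arrest.

```r
# Simulated precinct-by-ethnicity counts (hypothetical, for illustration only)
set.seed(8)
d <- expand.grid(precinct = 1:75, eth = 1:3)
d$arrests  <- rpois(nrow(d), 50) + 1           # previous arrests, kept positive
eth_effect <- c(0.3, 0.1, -0.2)                # hypothetical true log-rate effects
d$stops    <- rpois(nrow(d), d$arrests * exp(-1 + eth_effect[d$eth]))

# Poisson regression with log link and log(previous arrests) as an offset
fit_glm <- glm(stops ~ factor(eth) + offset(log(arrests)),
               family = poisson(link = "log"), data = d)

# True values for comparison: intercept -0.7, eth 2 vs 1: -0.2, eth 3 vs 1: -0.5
round(coef(fit_glm), 2)
```

Adding `factor(precinct)` to the formula gives the classical analogue of precinct effects. The multilevel model in Bugs instead gives those effects a common distribution.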

97

Frisk example in R (multilevel Poisson regression)
- In RWinEdt
  - Open file “frisk/frisk.1.R”
  - Other window: “frisk.1.bug”
  - Discuss the parts of the model
- Copy from frisk.1.R and paste into the R window
  - Fit the model
  - Compare to classical Poisson regression

98

Shifting of multilevel regression coefficients

- Frisk example: 3 ethnicity coefficients, 75 precinct coefficients
- Subtract mean of each batch
  - See Bugs model “frisk1.dat”
  - Define new variables g.eth, g.precinct
  - Add the means back to g.0
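The shift can be checked numerically. Subtracting each batch mean and adding the means back to the constant leaves every linear predictor unchanged. This minimal sketch works on one hypothetical simulation draw. In a Bugs model the shift would be written inside the model, so it applies to every saved draw.

```r
# One hypothetical simulation draw of the unshifted coefficients
set.seed(9)
b.0        <- 0.5
b.eth      <- rnorm(3, 0.2, 0.3)     # 3 ethnicity coefficients
b.precinct <- rnorm(75, -0.1, 0.5)   # 75 precinct coefficients

# Shift each batch to sum to zero, then add the means back to the constant
g.eth      <- b.eth - mean(b.eth)
g.precinct <- b.precinct - mean(b.precinct)
g.0        <- b.0 + mean(b.eth) + mean(b.precinct)

# Linear predictor for every (ethnicity, precinct) pair, before and after
eta_old <- b.0 + outer(b.eth, b.precinct, "+")
eta_new <- g.0 + outer(g.eth, g.precinct, "+")
all.equal(eta_old, eta_new)   # TRUE
```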

99

Overdispersed hierarchical Poisson regression
- Use simulations from previous model to test for overdispersion
  - Chi-squared discrepancy measure
  - Posterior predictive check in R: compare observed discrepancy to simulations from replicated data
- Poisson regression with offset and log link
  - Set up model in Bugs
  - Faster convergence!
  - Display the results in R
  - Compare to non-overdispersed model
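A simplified sketch of the chi-squared discrepancy check for overdispersion. For brevity it plugs in the classical `glm()` fitted values rather than looping over posterior simulations from the Bugs fit. It is therefore only an approximation to the posterior predictive check. The counts are simulated from a negative binomial, so they are overdispersed by construction.

```r
# Simulated overdispersed counts with an exposure (hypothetical data)
set.seed(10)
n        <- 200
exposure <- rpois(n, 40) + 1
y        <- rnbinom(n, mu = 0.5 * exposure, size = 2)

# Classical Poisson fit with log(exposure) as an offset
fit   <- glm(y ~ 1 + offset(log(exposure)), family = poisson)
y_hat <- fitted(fit)

# Chi-squared discrepancy: sum of squared standardized residuals
chisq_obs <- sum((y - y_hat)^2 / y_hat)
overdispersion_ratio <- chisq_obs / df.residual(fit)   # about 1 if the Poisson model fits

# Compare to the same discrepancy in datasets replicated under the Poisson model
chisq_rep <- replicate(1000, {
  y_rep <- rpois(n, y_hat)
  sum((y_rep - y_hat)^2 / y_hat)
})
p_value <- mean(chisq_rep >= chisq_obs)
```

A ratio far above 1, together with a p-value near 0, signals that the model needs an extra variance component.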

100

Frisk example in R (overdispersed model)
- In RWinEdt
  - Open file “frisk/frisk.2.R”
  - Other window: “frisk.2.bug”
  - New variance component for overdispersion
- Copy from frisk.2.R and paste into the R window
  - Fit the model
  - Compare to non-overdispersed model: coefficient estimates, overdispersion parameter sigma.y

101

Playing with the frisk example

- Using %black as a precinct-level predictor
- Looking at other crime types
- Including previous arrests as a predictor rather than as an offset

102

2-stage regression for the frisk example
- #previous arrests is a noisy measure of crime
- Model 1 of stops given “crime rate”
- Model 2 of arrests given “crime rate”
- Each of the 2 models is multilevel, overdispersed
- “Crime rate” is implicitly estimated by the 2 models simultaneously
- Set up Bugs model, fit in R

103

Frisk example in R (2-stage model)
- In RWinEdt
  - Open file “frisk/frisk.3.R”
  - Other window: “frisk.3.bug”
  - Entire model is duplicated: once for stops, once for DCJS arrests
- Copy from frisk.3.R and paste into the R window
  - Fit the model
  - Compare to previous models

104

End stop-and-frisk example

105

Computation

106

Understanding the computational method used by Bugs
- Key advantages
  - Gives posterior simulations, not a point estimate
  - Works with (almost) any model
- Connections to earlier methods
  - Maximum likelihood
  - Normal approximation for std errors
  - Multiple imputation
- Gibbs sampler
- Metropolis algorithm
- Difficulties

107

Understanding computation: relation to earlier methods

- Maximum likelihood
  - Hill-climbing algorithm
- +/- std errors
  - Normal approximation
- Multiple imputation
  - Consider parameters as “missing data”
- [More on blackboard]

108

Understanding computation: relation to earlier methods

Bayes’ theorem

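$$p(\theta, y) = p(\theta)\, p(y \mid \theta)$$

$$p(\theta \mid y) = \frac{p(\theta, y)}{p(y)} = \frac{p(\theta)\, p(y \mid \theta)}{p(y)}$$

$$p(\theta \mid y) \propto p(\theta)\, p(y \mid \theta)$$

where $p(\theta)$ is the prior (population) distribution and $p(y \mid \theta)$ is the sampling (data) distribution.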
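Bayes’ theorem can be applied numerically. Evaluate prior times likelihood on a grid, then normalize; the sum over the grid plays the role of p(y). This minimal sketch estimates a binomial probability with a uniform prior and hypothetical data (7 successes in 10 trials). The exact posterior in this case is Beta(8, 4), with mean 8/12.

```r
# Grid of candidate values for theta
theta <- seq(0, 1, length.out = 1001)

prior      <- rep(1, length(theta))   # uniform prior p(theta)
likelihood <- dbinom(7, 10, theta)    # p(y | theta) for y = 7 successes in n = 10 trials

# p(theta | y) is proportional to p(theta) p(y | theta); normalizing over
# the grid stands in for dividing by p(y)
unnormalized <- prior * likelihood
posterior    <- unnormalized / sum(unnormalized)

post_mean <- sum(theta * posterior)   # close to the exact value 8/12
```

Grid computation only works for one or two parameters. Models with many parameters need the simulation methods that Bugs uses.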

109

Understanding the Gibbs sampler and Metropolis algorithm

Examples of Bugs chains: Run mcmc.display.R

Interpretation as random walks (generalizing maximum likelihood)

Interpretation as iterative imputations (generalizing multiple imputation)

[open R: mcmc displays]

110

Open R: mcmc displays
- Open R:
  - setwd("c:/bugs.course/schools")
- Open RWinEdt
  - Open file “schools/mcmc.display.R”
- Copy from mcmc.display.R and paste into the R window
  - Read in the function sim.display()
  - Apply it to the schools example
  - In a few minutes, apply it to a previous run of the radon model

111

Understanding the Gibbs sampler and Metropolis algorithm

- Gibbs sampler as iterative imputations
  - Demonstration on the blackboard for the 8-schools example
  - Can program directly in R [Appendix C of Bayesian Data Analysis, 2nd edition]
  - Radon example (graph in R)
- Conditional updating and the modular structure of Bugs models
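A minimal Gibbs sampler for the 8-schools model, programmed directly in R. It assumes the standard 8-schools data, a flat prior on mu, and a uniform prior on tau. Each step draws one block of parameters from its conditional distribution given the current values of the others, which is the iterative-imputation view. The function name `gibbs_schools()` is hypothetical, and this is a sketch rather than the book's appendix code.

```r
# 8-schools data (standard published values)
y     <- c(28, 8, -3, 7, -1, 1, 18, 12)
sigma <- c(15, 10, 16, 11, 9, 11, 10, 18)
J     <- length(y)

gibbs_schools <- function(n_iter, mu, tau) {
  sims <- matrix(NA_real_, n_iter, J + 2,
                 dimnames = list(NULL, c(paste0("theta", 1:J), "mu", "tau")))
  for (iter in 1:n_iter) {
    # theta_j | mu, tau, y: combine y_j and mu, weighted by their precisions
    V_theta   <- 1 / (1 / sigma^2 + 1 / tau^2)
    theta_hat <- V_theta * (y / sigma^2 + mu / tau^2)
    theta     <- rnorm(J, theta_hat, sqrt(V_theta))
    # mu | theta, tau (flat prior on mu): normal around the mean of the theta_j
    mu <- rnorm(1, mean(theta), tau / sqrt(J))
    # tau | theta, mu (uniform prior on tau): scaled inverse-chi^2 draw for tau^2
    tau <- sqrt(sum((theta - mu)^2) / rchisq(1, J - 1))
    sims[iter, ] <- c(theta, mu, tau)
  }
  sims
}

# Three chains from dispersed starting points
set.seed(5)
chains <- lapply(1:3, function(i)
  gibbs_schools(1000, mu = rnorm(1, 0, 15), tau = runif(1, 1, 15)))
```

Running several chains from dispersed starting points is what makes convergence checks such as R-hat possible.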

112

Understanding the Gibbs sampler and Metropolis algorithm

- Monitoring convergence
  - Pictures of good and bad convergence [blackboard]
  - n.chains must be at least 2
  - Role of starting points
- R-hat
  - Less than 1.1 is good
- Effective sample size
  - At least 100 is good
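A minimal sketch of the R-hat calculation for one parameter. It follows the basic Gelman-Rubin comparison of between-chain and within-chain variance; later refinements, such as splitting each chain in half, are not included. The function name `rhat()` is hypothetical, and the two sets of chains are fake.

```r
# Basic potential scale reduction factor for one parameter;
# sims is an n_iter x n_chains matrix of simulation draws
rhat <- function(sims) {
  n <- nrow(sims)
  B <- n * var(colMeans(sims))         # between-chain variance
  W <- mean(apply(sims, 2, var))       # average within-chain variance
  var_plus <- (n - 1) / n * W + B / n  # pooled estimate of posterior variance
  sqrt(var_plus / W)
}

set.seed(6)
mixed <- matrix(rnorm(3000), ncol = 3)                            # 3 chains, same target
stuck <- cbind(rnorm(1000), rnorm(1000), rnorm(1000, mean = 3))   # one chain elsewhere
c(rhat(mixed), rhat(stuck))   # first close to 1, second well above 1.1
```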

113

Understanding the Gibbs sampler and Metropolis algorithm

- More efficient Gibbs samplers
- More efficient Metropolis jumping
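A minimal random-walk Metropolis sketch showing why the jumping scale matters. The target is a hypothetical N(2, 1) density. For one-dimensional targets, a jump sd of about 2.4 times the posterior sd is a common rule of thumb. Very small jumps are accepted almost every time but move slowly.

```r
# Log of the target density (hypothetical N(2, 1) target)
log_target <- function(theta) dnorm(theta, mean = 2, sd = 1, log = TRUE)

metropolis <- function(n_iter, init, jump_sd) {
  draws    <- numeric(n_iter)
  theta    <- init
  n_accept <- 0
  for (iter in 1:n_iter) {
    proposal <- rnorm(1, theta, jump_sd)              # symmetric jumping rule
    log_r    <- log_target(proposal) - log_target(theta)
    if (log(runif(1)) < log_r) {                      # accept with probability min(1, r)
      theta    <- proposal
      n_accept <- n_accept + 1
    }
    draws[iter] <- theta
  }
  list(draws = draws, accept_rate = n_accept / n_iter)
}

set.seed(7)
small_jumps <- metropolis(5000, init = -5, jump_sd = 0.1)   # accepts often, moves slowly
tuned_jumps <- metropolis(5000, init = -5, jump_sd = 2.4)   # rule-of-thumb scale
```

Comparing `small_jumps$accept_rate` with `tuned_jumps$accept_rate` and plotting both chains shows the trade-off. A higher acceptance rate does not mean faster exploration.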

114

Special lecture on computation [social networks example]

115

Troubleshooting

116

Troubleshooting

- Problems in Bugs
- Simple Bugs and R solutions
- Reparameterizing
- Changing the model slightly
- Stripping the model down and rebuilding it
- Expanding the model

117

Troubleshooting: problems and quick solutions
- Problem: model does not compile
- Solutions:
  - bugs(…, debug=TRUE)
  - Look at where cursor stops in Bugs model
  - Typical problems: typo, syntax error, illegal use of a distribution or function
- Go into Bugs user manual
  - In Bugs, click on Help, then User manual
  - Check Model Specification and Distributions
- Go into Bugs examples
  - In Bugs, click on Help, then Examples Vol I and II
  - Find an example similar to your problem

118

Troubleshooting: problems and quick solutions
- Problem: model does not run
- Solutions:
  - bugs(…, debug=TRUE)
  - Look at where cursor stops in Bugs
- Typical problems:
  - Variable in data/inits/params but not in model
  - Variable in model but not in data or params
  - Error in subscripting
- May need to fix in Bugs model or R script

119

Troubleshooting: problems and quick solutions

- Problem: Trapping in Bugs
- Solutions:
  - Give reasonable starting values for all parameters in the model
  - Make prior distributions more restrictive, e.g., dnorm(0, .01) instead of dnorm(0, .0001) (Bugs parameterizes dnorm by its precision, so these priors have standard deviations 10 and 100)
  - Change the model slightly
- We will discuss some examples

120

Troubleshooting: slow convergence
- Problem: R-hat is still more than 1.1 for some parameters
- Solutions:
  - Look at the separate sequences to see what is moving slowly
  - Run Bugs a little longer, possibly using last.value as initial values
  - Reparameterize
  - Change the model slightly
  - Run model on a subset of the data (it’ll go faster)

121

Troubleshooting: reparameterizing
- Nesting predictors
- Adding redundant additive parameters
- Adding redundant multiplicative parameters
- Rescaling
- Example: item response models (hierarchical logistic regressions) for test scores or court votes
- There is no unique way to write a model

122

Troubleshooting: changing the model slightly

- Normal/t, logit/probit, etc.
- Bounds on predictions
  - Logistic regression example
  - Use of robust models
- Approximations to functions
  - Golf putting

[open R: logistic regression example]

123

Begin logistic regression example

124

Open R: logistic regression example
- Open R
  - setwd("c:/bugs.course/logit")
- Open RWinEdt
  - Open file “logit/logit.R”
  - Other window: “logit.bug”
- Copy from logit.R and paste into the R window
  - Simulate fake dataset and display it
  - Fit the logistic regression model in R, then Bugs
  - Compare the classical and Bayes estimates
  - Superimpose fitted models on data

125

Logistic regression in R and Bugs: latent-variable parameterization
- In RWinEdt
  - Continue looking at “logit.R”
  - Other window: “logit.latent.bug”
- Copy from logit.R and paste into R
  - Fit logistic regression in Bugs using the latent-variable parameterization
  - Compare the Bugs models, “logit.bug” and “logit.latent.bug”
  - Create an “outlier” in the data and fit logistic regression again
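The latent-variable parameterization rests on one fact. Suppose z_i = a + b x_i + e_i with standard logistic errors e_i, and y_i = 1 when z_i > 0. Then Pr(y_i = 1) is the inverse logit of a + b x_i. Here is a quick simulation check in R with hypothetical coefficients.

```r
# Latent-variable form of logistic regression (hypothetical coefficients)
set.seed(11)
a <- -1
b <- 0.8
x <- rep(c(0, 2), each = 50000)
z <- a + b * x + rlogis(length(x))   # latent outcome with standard logistic errors
y <- as.integer(z > 0)               # observed binary outcome

# Simulated Pr(y = 1) at each x versus the inverse logit of the linear predictor
observed  <- tapply(y, x, mean)
predicted <- plogis(a + b * c(0, 2))
round(rbind(observed, predicted), 3)
```

Swapping `rlogis()` for another error distribution changes the link. Normal errors give probit regression, and t errors give the robust models used in the Bugs examples.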

126

Robust logistic regression in Bugs using the t distribution
- In RWinEdt
  - Continue looking at “logit.R”
  - Other window: “logit.t.bug”, then “logit.t.df.bug”, then “logit.t.df.adj.bug”
- Copy from logit.R and paste into R
  - Fit latent-variable model with t_4 distribution instead of logistic
  - Fit latent-variable model with t_df distribution (df estimated from data)
  - Fix model to adjust for variance of t distribution

127

End logistic regression example

128

Troubleshooting: stripping the model down and rebuilding it

Remove interactions, predictors, hierarchical structures

Set hyperparameters to fixed values

Assign strong prior distributions to hyperparameters
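When the hyperparameters are set to fixed values, a normal hierarchical model needs no simulation at all. Each group parameter has a closed-form normal posterior: the precision-weighted average of its data estimate and the fixed population mean. The Python sketch below assumes a normal data model y_j ~ N(theta_j, se_j^2) with theta_j ~ N(mu, tau^2), where mu and tau are fixed. The function name and numbers are hypothetical. Comparing these values with the Bugs output is one way to check the model as it is rebuilt.

```python
def partial_pool(y, se, mu, tau):
    """Posterior (mean, sd) of each theta_j with the hyperparameters fixed.

    Model (assumed): y_j ~ N(theta_j, se_j^2), theta_j ~ N(mu, tau^2),
    with mu and tau treated as known constants."""
    out = []
    for yj, sj in zip(y, se):
        prec = 1 / sj**2 + 1 / tau**2                 # posterior precision
        mean = (yj / sj**2 + mu / tau**2) / prec      # precision-weighted average
        out.append((mean, prec ** -0.5))
    return out

# Hypothetical group estimates and standard errors
print(partial_pool([10.0, -2.0], [5.0, 1.0], mu=0.0, tau=2.0))
```

Letting tau grow large reproduces the separate (no-pooling) estimates y_j. Letting tau shrink toward 0 pulls every estimate to mu.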

129

Troubleshooting: expanding the model

Computational problems often indicate statistical problems

Adding a variance component

Example: recall the Poisson model for the frisk data before and after we put in overdispersion

Adding a hierarchical structure

Example: analysis of serial dilution data

Adding a predictor

Example: including previous election results in an analysis of state-level public opinion
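Before adding a variance component to a Poisson model, a common check for overdispersion is the sum of squared Pearson residuals, (y_i − mu_i)^2 / mu_i, divided by n − k. Under the Poisson model this ratio should be near 1; it can be compared with a chi-squared distribution with n − k degrees of freedom. The Python sketch below uses hypothetical counts and names.

```python
def overdispersion_ratio(y, mu, k):
    """Sum of squared Pearson residuals divided by (n - k).

    y  : observed counts
    mu : fitted Poisson means from a model with k coefficients
    A ratio well above 1 suggests adding an overdispersion (variance) component."""
    n = len(y)
    if n <= k:
        raise ValueError("need more observations than coefficients")
    z2 = sum((yi - mi) ** 2 / mi for yi, mi in zip(y, mu))
    return z2 / (n - k)

# Hypothetical counts; an intercept-only Poisson fit has constant mean 10 (k = 1)
y = [2, 25, 4, 18, 1, 20, 6, 4]
ratio = overdispersion_ratio(y, [10.0] * len(y), k=1)
print(round(ratio, 2))  # far above 1: these counts are overdispersed
```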

130

Accounting for design of surveys, experiments, etc.

131

Relevance of data collection to statistical analysis

General principle: include all design information in the analysis

Poststratification: state-level opinions from national polls

Other topics: experiments, cluster sampling, censored data

132

Begin pre-election polling example

133

State-level opinions from national polls

Background on pre-election polls: political science, survey methods, survey weighting

Classical analysis of a pre-election poll from 1988

Hierarchical models [open R: election88 example]

134

Open R: election88 example

Open RWinEdt

Open file “election88/election88_setup.R”

In R:

setwd("c:/bugs.course/election88")
source("election88_setup.R")

The data are loaded in and cleaned

135

Pre-election polls: demographic models

Demographic models are politically interesting and adjust for nonresponse bias

Bugs model

Run from R, check convergence

Get predictions and sum over states

Compare to classical estimates

Play with the model yourself

136

Election88 example in R (demographics only)

Open RWinEdt

Open file “election88/demographics.R”; other window: “demographics.1.bug”, then “demographics.2.bug”

Copy from demographics.R and paste into the R window:

Set up and run the Bugs model with debug=TRUE

Close the Bugs window

Run Bugs for 100 iterations

Run Bugs using model 2

Compute predicted values for each survey respondent

Use survey weights to get a weighted average for each state

Compare to raw data and election outcomes

137

Pre-election polls: state models

Hierarchical regressions for states: like the 8-schools model but with a binomial data model

Bugs model

Run from R, check convergence

Get predictions and sum over states

Model with states within regions: fit the nested model, summarize results

Compare all the models fit so far
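Before fitting the states-within-regions model, it can help to simulate fake data from it, as in the logistic regression example, and check that Bugs recovers the parameters. The Python sketch below assumes a binomial logit model with normal region effects and normal state effects centered at their region's effect. All names and parameter values are hypothetical.

```python
import math
import random

def invlogit(x):
    return 1.0 / (1.0 + math.exp(-x))

def simulate_nested(region_of, n_per_state, mu, sigma_region, sigma_state, seed=0):
    """Fake data from an assumed states-within-regions binomial model:
        gamma_r ~ N(mu, sigma_region^2)               region effects
        alpha_s ~ N(gamma[region_of[s]], sigma_state^2) state effects
        y_s ~ Binomial(n_s, invlogit(alpha_s))"""
    rng = random.Random(seed)
    n_regions = max(region_of) + 1
    gamma = [rng.gauss(mu, sigma_region) for _ in range(n_regions)]
    alpha = [rng.gauss(gamma[r], sigma_state) for r in region_of]
    # binomial draw as a count of Bernoulli successes
    y = [sum(rng.random() < invlogit(a) for _ in range(n))
         for a, n in zip(alpha, n_per_state)]
    return gamma, alpha, y

# Hypothetical setup: 5 states in 3 regions, 100 respondents per state
gamma, alpha, y = simulate_nested([0, 0, 1, 1, 2], [100] * 5,
                                  mu=0.0, sigma_region=0.5, sigma_state=0.3, seed=3)
print(y)
```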

138

Election88 example in R (states only)

Open RWinEdt

Open file “election88/states.R”; other window: “states.bug”

Copy from states.R and paste into the R window:

Set up and run the Bugs model

Compute the estimate for each state

Compare to raw data, demographic estimates, and election outcomes

139

Pre-election polls: models with demographics and states

Crossed multilevel model with demographic and state coefficients

Set up model in Bugs

Alternative parameterizations

Compare all the models fit so far

We’ve used the computational strategy of “subsetting” or “data sampling”
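In a crossed model, each respondent's linear predictor picks up one coefficient from each batch, for example an age category and a state, and the batches are not nested in each other. The Python sketch below shows the prediction step. It assumes a logit link and an illustrative set of predictors (female, black, age category, state) that may differ from the course's model. Coefficient values are hypothetical.

```python
import math

def invlogit(x):
    return 1.0 / (1.0 + math.exp(-x))

def predict_crossed(b0, b_female, b_black, a_age, a_state, female, black, age, state):
    """P(y=1) for one respondent in a crossed varying-intercept logit model
    (illustrative predictors):
        logit P = b0 + b_female*female + b_black*black + a_age[age] + a_state[state]
    age and state are integer indices into two separate, non-nested batches."""
    eta = b0 + b_female * female + b_black * black + a_age[age] + a_state[state]
    return invlogit(eta)

# Hypothetical coefficient values, e.g. from one posterior draw
a_age = [-0.1, 0.0, 0.1, 0.2]   # 4 age categories
a_state = [0.3, -0.2, 0.05]     # 3 states (toy example)
p = predict_crossed(0.1, -0.1, -1.5, a_age, a_state, female=1, black=0, age=2, state=1)
print(round(p, 3))
```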

140

Election88 example in R (demographics and states)

Open RWinEdt

Open file “election88/all.1.R”; other window: “all.1.bug”

Copy from all.1.R and paste into the R window:

Set up and run the Bugs model

Read in Census data (demographics × state)

Compute the estimate for each state

Compare to raw data, demographic estimates, state estimates, and election outcomes
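Poststratification can be sketched in Python as follows. Suppose we have the model's predicted probability p_j for each demographic-by-state cell and the Census count N_j for that cell. Each state estimate is then the population-weighted average of its cells, sum_j N_j p_j / sum_j N_j. The function name and toy numbers are hypothetical. With Bugs output, the calculation would typically be repeated for each simulation draw so that the uncertainty carries through.

```python
def poststratify(cell_prob, cell_count, cell_state):
    """State estimates as Census-weighted averages of cell predictions.

    cell_prob[j]  : model prediction P(y=1) for demographic-by-state cell j
    cell_count[j] : Census population N_j of cell j
    cell_state[j] : state label of cell j
    Returns {state: sum_j N_j p_j / sum_j N_j} over the cells in each state."""
    num, den = {}, {}
    for p, n, s in zip(cell_prob, cell_count, cell_state):
        num[s] = num.get(s, 0.0) + n * p
        den[s] = den.get(s, 0.0) + n
    return {s: num[s] / den[s] for s in num}

# Hypothetical cells: two in one state, one in another
est = poststratify([0.4, 0.6, 0.5], [100, 300, 50], ["MD", "MD", "AK"])
print(est)
```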

141

Playing with the pre-election polls

Including presvote as a state-level predictor

Putting Maryland in the northeast

Other model alterations?

142

End pre-election polling example

143

Other design and data collection topics

Cluster sampling: similar to poststratification, but some clusters are empty (as with Alaska and Hawaii in the pre-election polls)

Experiments and observational studies: the model should be conditional on all design factors; blocking can be treated like clustering

Censored data: include in the likelihood (see chapter 18 of Gelman and Hill, 2007)
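Including censored data in the likelihood means each uncensored value contributes its density, while each censored value contributes the probability of lying beyond the censoring point. The Python sketch below assumes a normal data model with right censoring, where a censored unit's recorded value is its censoring point. The function names are hypothetical.

```python
import math

def normal_logpdf(y, mu, sigma):
    """Log density of N(mu, sigma^2) at y."""
    return -0.5 * math.log(2 * math.pi * sigma**2) - (y - mu) ** 2 / (2 * sigma**2)

def normal_logsf(c, mu, sigma):
    """log P(Y > c) for Y ~ N(mu, sigma^2)."""
    return math.log(0.5 * math.erfc((c - mu) / (sigma * math.sqrt(2))))

def censored_loglik(y, censored, mu, sigma):
    """Log-likelihood with right censoring (assumed setup):
    observed units contribute log density; censored units contribute log P(Y > y_i)."""
    total = 0.0
    for yi, ci in zip(y, censored):
        total += normal_logsf(yi, mu, sigma) if ci else normal_logpdf(yi, mu, sigma)
    return total

# Hypothetical data: the last two values were censored at 10
print(censored_loglik([4.0, 7.5, 10.0, 10.0], [False, False, True, True], mu=6.0, sigma=3.0))
```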

144

Prior distributions

145

Prior distributions

Two traditional extremes: noninformative priors and subjective priors

Problems with each approach

New idea: weakly informative priors

Illustration with a logistic regression example

146

Logistic regression for voting

Using the National Election Study: for each year, predict vote choice from sex, race, and income

Estimates can be unstable

Bayes to the rescue

Where does the prior distribution come from?

It really works!

147

Begin logistic-regression separation example

148

Logistic regression for voting

149

Separation in logit regression

In 1964, no Black respondents in the sample voted for Goldwater

So the maximum likelihood estimate of the coefficient for black is –infinity

It’s a sparse-data problem
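Here is why the estimate runs off to –infinity. When every Black respondent has y = 0, the likelihood keeps increasing as their coefficient decreases, so there is no finite maximum. The simplified Python sketch below assumes the intercept and other predictors are held at 0, with 20 such respondents (hypothetical numbers).

```python
import math

def loglik_black_coef(beta, n_black=20):
    """Log-likelihood from n_black respondents who all have y = 0, as a function
    of their coefficient beta (simplified: intercept and other terms fixed at 0).
    log(1 - invlogit(beta)) = -log(1 + exp(beta)), computed with log1p for accuracy."""
    return -n_black * math.log1p(math.exp(beta))

vals = [loglik_black_coef(b) for b in (0.0, -2.0, -5.0, -10.0, -40.0)]
print(vals)  # increases toward 0 but never reaches a maximum
```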

150

Weakly informative priors for logistic regression coefficients

Some prior info: logistic regression coefficients are almost always between -5 and 5

5 on the logit scale takes you from .01 to .50 or from .50 to .99

Smoking and lung cancer

Independent Cauchy prior distributions with center 0 and scale 2.5

Rescale each predictor so that a change of 1 unit is meaningful

bayesglm() function in R (arm package)
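Continuing the simplified separation setup (intercept fixed at 0, n cases that all have y = 0), a Cauchy(0, 2.5) prior on the coefficient is enough to give a finite posterior mode. This Python sketch finds the mode by bisection on the derivative of the log posterior. It only illustrates the idea; it is not the algorithm bayesglm() uses, and the function name is hypothetical.

```python
import math

def posterior_mode_separated(n, scale=2.5, lo=-50.0, hi=0.0, tol=1e-10):
    """Posterior mode of beta when all n cases have y = 0 (simplified setup):
    likelihood prod (1 - invlogit(beta)), prior beta ~ Cauchy(0, scale).
    Solves d/dbeta [log likelihood + log prior] = 0 by bisection on [lo, hi]."""
    def score(b):
        p = 1.0 / (1.0 + math.exp(-b))
        # derivative of n*log(1 - p) is -n*p; of the log Cauchy density is -2b/(s^2 + b^2)
        return -n * p - 2.0 * b / (scale**2 + b**2)
    # with these defaults, score(lo) > 0 and score(hi) < 0, so a root is bracketed
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if score(mid) > 0:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

mode = posterior_mode_separated(20)
print(round(mode, 2))
```

With 20 such cases the mode lands near −4. With more cases it moves further out but stays finite.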

151

Regularization in action!

152

End logistic-regression separation example

153

Weakly informative priors

Scale predictors so that coefficients are almost always less than 5 in absolute value (and typically less than 1)

Now we know why dnorm(0, .0001) prior distributions in Bugs (that is, normal(0, 100^2), since Bugs parameterizes the normal by its precision) were OK

Actually, dnorm(0, .01) (that is, normal(0, 10^2)) is probably better

How much prior information to include???
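Bugs parameterizes dnorm(mu, tau) by the precision tau = 1/sd^2, which is where the conversions above come from. A quick Python check (function name hypothetical):

```python
import math

def bugs_precision_to_sd(tau):
    """Standard deviation implied by a Bugs precision tau = 1 / sd^2."""
    return 1.0 / math.sqrt(tau)

for tau in (0.0001, 0.01):
    print(f"dnorm(0, {tau}) -> normal(0, {bugs_precision_to_sd(tau):g}^2)")
```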

154

Varying-intercept, varying-slope logistic regression

Red/blue states example [if there is time]

155

Commencement

156

Loose ends

Slow convergence

More complicated models

Multivariate models, including imputation

Huge datasets

Suggested improvements in Bugs

Indirect nature of Bugs modeling

Programming in R [see Appendix C from “Bayesian Data Analysis,” 2nd edition]

157

Some open problems in Bayesian data analysis

Complex, highly structured models (polling example): interactions between states and demographic predictors, modeling changes over time

Need reasonable classes of hierarchical models (with “just enough” structure)

Computation

Algorithms walk through high-dimensional parameter space

Key idea: link to computations of simpler models (“multiscale computation”, “simulated tempering”)

Model checking

Automatic graphical displays

Estimation of out-of-sample predictive error

158

Concluding discussion

What should you be able to do?

Set up hierarchical models in Bugs

Fit them and display/understand the results using R

Compare to estimates from simpler models

Use Bugs flexibly to explore models

What questions do you have?

159

Software resources

Bugs

User manual (in Help menu)

Examples volumes 1 and 2 (in Help menu)

Webpage (http://www.mrc-bsu.cam.ac.uk/bugs) has pointers to many more examples and applications

R

?command for quick help from the console

HTML help (in Help menu) has a search function

Complete manuals (in Help menu)

Webpage (http://www.r-project.org) has pointers to more

Appendix C from “Bayesian Data Analysis,” 2nd edition, has more examples of Bugs and R programming for the 8-schools example

“Data Analysis Using Regression and Multilevel/Hierarchical Models” has lots of examples of Bugs and R.

160

References

General books on Bayesian data analysis

Bayesian Data Analysis, 2nd ed., Gelman, Carlin, Stern, and Rubin (2004)

Bayes and Empirical Bayes Methods for Data Analysis, Carlin and Louis (2000)

General books on multilevel modeling

Data Analysis Using Regression and Multilevel/Hierarchical Models, Gelman and Hill (2007)

Hierarchical Linear Models, Bryk and Raudenbush (2001)

Multilevel Analysis, Snijders and Bosker (1999)

Books on R

An R and S-Plus Companion to Applied Regression, Fox (2002)

An Introduction to R, Venables and Smith (2002)

161

Collaborators

The examples were done in collaboration with Donald Rubin, Phillip Price, Jeffrey Fagan, Alex Kiss, Thomas Little, David Park, Joseph Bafumi, Boris Shor, Howard Wainer, Zaiying Huang, Ginger Chew, Aleks Jakulin, Masanao Yajima, Yu-Sung Su, Matt Salganik, and Tian Zheng
