arXiv:2004.12276v1 [cs.CV] 26 Apr 2020 · Fashionpedia: Ontology, Segmentation, and an Attribute...


Fashionpedia: Ontology, Segmentation, and an Attribute Localization Dataset

Menglin Jia⋆1, Mengyun Shi⋆1,4, Mikhail Sirotenko⋆3, Yin Cui⋆3, Claire Cardie1, Bharath Hariharan1, Hartwig Adam3, Serge Belongie1,2

1 Cornell University, 2 Cornell Tech, 3 Google Research, 4 Hearst Magazines

Abstract. In this work we explore the task of instance segmentation with attribute localization, which unifies instance segmentation (detect and segment each object instance) and fine-grained visual attribute categorization (recognize one or multiple attributes). The proposed task requires both localizing an object and describing its properties. To illustrate the various aspects of this task, we focus on the domain of fashion and introduce Fashionpedia as a step toward mapping out the visual aspects of the fashion world. Fashionpedia consists of two parts: (1) an ontology built by fashion experts containing 27 main apparel categories, 19 apparel parts, 294 fine-grained attributes and their relationships; (2) a dataset with everyday and celebrity event fashion images annotated with segmentation masks and their associated per-mask fine-grained attributes, built upon the Fashionpedia ontology. In order to solve this challenging task, we propose a novel Attribute-Mask R-CNN model to jointly perform instance segmentation and localized attribute recognition, and provide a novel evaluation metric for the task. We also demonstrate that instance segmentation models pre-trained on Fashionpedia achieve better transfer learning performance on other fashion datasets than ImageNet pre-training. Fashionpedia is available at: https://fashionpedia.github.io/home/index.html.

Keywords: Dataset, Ontology, Instance Segmentation, Fine-Grained, Attribute, Fashion
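To make the task described in the abstract concrete, the following is a minimal sketch of what a per-mask annotation with localized attributes might look like, together with a check against a toy ontology. The class names (`Ontology`, `Instance`), the helper `invalid_attributes`, the pixel-grid mask encoding, and the category-to-attribute mapping are all illustrative assumptions. They are not the Fashionpedia annotation format or the authors' code.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Ontology:
    """Toy ontology (hypothetical layout): category name -> attributes it may carry."""
    allowed: dict

    def permits(self, category: str, attribute: str) -> bool:
        # An unknown category permits nothing.
        return attribute in self.allowed.get(category, set())


@dataclass
class Instance:
    """One segmented object: a category, a binary mask, and its localized attributes."""
    category: str
    mask: list  # rows of 0/1 pixels; an assumption made for brevity
    attributes: set = field(default_factory=set)

    def area(self) -> int:
        # Number of pixels covered by the mask.
        return sum(sum(row) for row in self.mask)


def invalid_attributes(instance: Instance, ontology: Ontology) -> list:
    """Return, sorted, the attributes the ontology does not permit for this category."""
    return sorted(
        a for a in instance.attributes
        if not ontology.permits(instance.category, a)
    )


# Illustrative mapping only; which attribute belongs to which category is assumed here.
ontology = Ontology(allowed={
    "jacket": {"single-breasted", "dropped shoulder", "regular (fit)", "plain"},
    "pants": {"ankle length", "slim (fit)", "fly (opening)", "washed", "distressed"},
})

jacket = Instance("jacket", [[0, 1, 1], [0, 1, 1]], {"single-breasted", "plain"})
pants = Instance("pants", [[1, 1, 0]], {"slim (fit)", "single-breasted"})

print(jacket.area(), invalid_attributes(jacket, ontology))
print(pants.area(), invalid_attributes(pants, ontology))
```

The point of the sketch is that each attribute is attached to one mask rather than to the whole image, which is what distinguishes localized attribute recognition from image-level attribute tagging.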

1 Introduction

Recent progress in the field of computer vision has advanced machines’ ability to recognize and understand our visual world, showing significant impacts in fields including autonomous driving [52], cancer detection [29], product recognition [33,14], etc. These real-world applications are fueled by various visual understanding tasks with the goals of naming, describing (attribute recognition), or localizing objects within an image.

Naming and localizing objects is formulated as an object detection task (Figure 1(a-c)). As a hallmark for computer recognition, this task is to identify and

⋆ equal contribution.


[Figure, panels (a) to (d). Annotations: Instance Segmentation + Localized Attribute. Relationships in the legend: Part of, Textile Finishing, Textile Pattern, Silhouette, Opening Type, Length, Nickname, Waistline. Labels shown in the panels: Jacket, Tops, Pants, Shoe, Glasses, Bag, Ensemble, Neckline, Collar, Sleeve, Pocket; Ankle length, Above-the-hip length, Symmetrical, Single-breasted, Dropped shoulder, Regular (fit), Slim (fit), Fly (Opening), Plain, Washed, Distressed, Normal Waist. Every edge of the accompanying graph is labeled HAS_ATTRIBUTE.]

Node labels recovered from the ontology graph in the figure (truncated labels are kept as extracted):

Asymmetrical; Symmetrical; Peplum; Circle; Flare; Fit and Flare; Trumpet; Mermaid; Balloon / Bubble; Bell; Bell Bottom; Bootcut / Boot cut; Peg; Pencil; Straight; A-Line / ALine; Tent / Trapeze; Baggy; Wide Leg; High Low; Curved (fit); Tight (fit) / Slim (fit) / Skinny (fit); Regular (fit); Loose (fit); Oversized / Oversize

Empire Waistline; Dropped Waistline /; High waist /; Normal Waist / Normal-w…; Low waist / Low Rise; Basque (waistline); No waistline

Above-the-…; Hip (length); Micro (length); Mini (length) / Mid-thigh (length); Above-the-k…; Knee (Length) / Knee High (length); Below The Knee (length) / Below-th…; Midi / Mid-calf / mid calf / Tea-length / tea len…; Maxi (length) / Ankle (length); Floor (length) / Full (length); Sleeveless; Short (length); Elbow-length; Three quarter (length) /; Wrist length

Single breasted; Double breasted; Lace Up / Lace-up; Wrapping / Wrap; Zip-up; Fly (Opening); Chained (Opening); Buckled (Opening); Toggled (Opening); no opening

Plastic; Rubber; Metal; Straw; Feather; Gem / Gemstone; Bone; Ivory; Fur / Faux-fur; Leather / Faux-leather; Suede; Shearling; Crocodile; Snakeskin; Wood; no non-textile material

Burnout; Distressed / Ripped; Washed; Embossed; Frayed; Printed; Ruched / Ruching; Quilted; Pleat / pleated; Gathering / gathered; Smocking / Shirring; Tiered / layered; Cutout; Slit; Perforated; Lining / lined; Applique / Embroidery / Patch; Bead; Rivet / Stud / Spike; Sequin; no special manufactur…

Plain (pattern); Abstract; Cartoon; Letters & Numbers; Camouflage; Check / Plaid / Tartan; Dot; Fair Isle; Floral; Geometric; Paisley; Stripe; Houndstooth (pattern); Herringbone (pattern); Chevron; Argyle; Animal; Leopard; Snakeskin (pattern); Cheetah; Peacock; Zebra; Giraffe; Toile de Jouy; Plant

Classic (T-shirt); Polo (Shirt); Undershirt; Henley (Shirt); Ringer (T-shirt); Raglan (T-shirt); Rugby (Shirt); Sailor (Shirt); Crop (Top) / Midriff (Top); Halter (Top); Camisole; Tank (Top); Peasant (Top); Tube (Top) / bandeau (Top); Tunic (Top); Smock (Top); Hoodie

Blazer; Pea (Jacket); Puffer (Jacket) / Down (Jacket); Biker (Jacket) / Moto (Jacket); Trucker (Jacket) / Denim (Jacket); Bomber (Jacket); Anorak; Safari (Jacket) / Utility (Jacket) / Cargo; Mao (Jacket); Nehru (Jacket); Norfolk (Jacket); Classic military (Jacket); Track (Jacket); Windbreaker; Chanel (Jacket); Bolero; Tuxedo (Jacket); Varsity (Jacket); Crop (Jacket)

Jeans; Sweatpants / Jogger (Pants); Leggings; Hip-huggers (Pants) / Hip huggers (…; Cargo (Pants) / Military (Pants); Culottes; Capri (Pants); Harem (Pants); Sailor (Pants); Jodhpur / Breeches (Pants); Peg (Pants); Camo (Pants); Track (Pants); Crop (Pants)

Short (Shorts) / Hot (Pants); Booty (Shorts); Bermuda (Shorts); Cargo (Shorts); Trunks; Boardshorts / Board (Shorts); Skort; Roll-Up (Shorts) / Boyfriend (Shorts); Tie-up (Shorts); Culotte (Shorts); Lounge (Shorts); Bloomers

Tutu (Skirt) / Ballerina (Skirt); Kilt; Wrap (Skirt); Skater (Skirt); Cargo (Skirt); Hobble (Skirt) / Wiggle (Skirt); Sheath (Skirt); Ball Gown (Skirt); Gypsy (Skirt) / Broomstick (Skirt); Rah-rah (Skirt); Prairie (Skirt); Flamenco (Skirt); Accordion (Skirt); Sarong (Skirt); Tulip (Skirt); Dirndl (Skirt); Godet (Skirt)

Blanket (Coat); Parka; Trench (Coat); Pea (Coat) / Reefer (Co…; Shearling (Coat); Teddy Bear (Coat) / Teddy (Coat) / Fur (Coat); Puffer (Coat); Duster (Coat); Raincoat; Kimono; Robe; Dress (Coat); Duffle (Coat) / Duffel (Co…; Wrap (Coat); Military (Coat); Swing (Coat)

Halter (Dress); Wrap (Dress); Chemise (Dress); Slip (Dress); Cheongsams / Qipao; Jumper (Dress); Shift (Dress); Sheath (Dress); Shirt (Dress); Sundress / Sun (Dress); Kaftan / Caftan; Bodycon (Dress); Nightgown; Gown; Sweater (Dress); Tea (Dress); Blouson (Dress); Tunic (Dress); Skater (Dress)

Asymmetric (Collar); Regular (Collar); Shirt (Collar); Polo (Collar) / Flat Knit (Collar); Chelsea (Collar); Banded (Collar); Mandarin (Collar) / Nehru (Collar); Peter Pan (Collar); Bow (Collar) / Tie (Collar) / Ascot (Collar); Stand-away (Collar); Jabot (Collar); Sailor (Collar); Oversized (Collar)

Notched (Lapel); Peak (Lapel); Shawl (Lapel); Napoleon (Lapel); Oversized (Lapel)

Set-in sleeve; Dropped-sh…; Raglan (Sleeve); Cap (Sleeve); Tulip (Sleeve) / Petal (Sleeve); Puff (Sleeve); Bell (Sleeve) / Circular flounce (Sleeve) / Ruffle (Sleeve); Poet (Sleeve); Dolman (Sleeve) &

Batwing(Sleeve)

Bishop(Sleeve)

Leg ofmutton

(Sleeve)

Kimono(Sleeve)

Cargo(Pocket) /

Gusset(Pocket) /

Bellow

Patch(Pocket)

Welt (Pocket)

Kang-aroo(Pocket)

Seam(Pocket)

Slash(Pocket) /Slashed

(Pocket) /slant (…

Curved(Pocket)

Flap (Pocket)

Collarless

Asymmetric(Neckline)

Crew (Neck)

Round(Neck) /

Roundneck

V-neck / V(Neck)

Surplice(Neck)

Oval (Neck)

U-neck / U(Neck)

Sweetheart(Neckline)

Queen anne(Neck)

Boat (Neck)

Scoop (Neck)

Square(Neckline)

Plunging(Neckline) /

Plunge(Neckline)

Keyhole(Neck)

Halter (Neck)

Crossover(Neck)

Choker(Neck)

High (Neck) /

Bottle (Neck)

Turtle(Neck) /

Mock (Neck)/ Polo(Neck)

Cowl (Neck)

Straightacross(Neck)

Illusion(Neck)

Off-the-sho…

One shoulder

Jacket

Shirt / Blouse

Tops

Sweater(pullover, without

opening)

Cardigan (withopening)

Vest / Gilet Pants

ShortsSkirt

CoatDress

Jumpsuit

CapeCollar

Sleeve

Neckline

Lapel

Scarf

Umbrella

Epaulette

Buckle

Zipper

Applique

Bead

Bow

Ribbon

Rivet

Ruffle

Sequin

Tassel

Shoe

Bag, wallet

Flower

Fringe

Headband,

Hair Accessory Tie

Glove

Watch

Belt

Leg warmer

Tights, stockings

Sock

Glasses

Hat

ModaNetDeepFashion2

Apparel CategoriesFine-grained Attributes

(f)

JacketTops

Sweater

Cardigan (withopening)

Vest / Gilet Pants

ShortsSkirt

CoatDress

Jumpsuit

CapeCollar

Sleeve

Neckline

Lapel

Scarf

Umbrella

Epaulette

Buckle

Zipper

Applique

Bead

Bow

Ribbon

Rivet

Ruffle

Sequin

Tassel

Shoe

Bag, wallet

Flower

Fringe

Tie

Glove

Watch

Belt

Leg warmer

Sock

Glasses

Hat

ModaNetDeepFashion2

Apparel CategoriesFine-grained Attributes

(e)

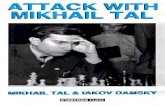

Fig. 1. An illustration of the Fashionpedia dataset and ontology: (a) main garment masks; (b) garment part masks; (c) both main garment and garment part masks; (d) fine-grained apparel attributes; (e) an exploded view of the annotation diagram: the image is annotated with both instance segmentation masks (white boxes) and per-mask fine-grained attributes (black boxes); (f) visualization of the Fashionpedia ontology: we created the Fashionpedia ontology and separate the concepts of categories (yellow nodes) and attributes [39] (blue nodes) in fashion. It covers the pre-defined garment categories used by both DeepFashion2 [10] and ModaNet [54]. The mapping to DeepFashion2 also shows the versatility of using attributes and categories: we are able to represent all 13 garment classes in DeepFashion2 with 11 main garment categories, 1 garment part, and 7 attributes. Best viewed digitally.

indicate the boundaries of objects in the form of bounding boxes or segmentation masks [11,38,17]. Attribute recognition [5,30,8,37] (Figure 1(d)) instead focuses on describing and comparing objects, since an object also has many other properties or attributes in addition to its category. Attributes not only provide a compact and scalable way to represent objects in the world; as pointed out by Ferrari and Zisserman [8], attribute learning also enables transfer of existing knowledge to novel classes. This is particularly useful for fine-grained visual recognition, with the goal of distinguishing subordinate visual categories such as birds [47] or natural species [44].

In the spirit of mapping the visual world, we propose a new task, instance segmentation with attribute localization, which unifies object detection and fine-grained attribute recognition. As illustrated in Figure 1(e), this task offers a structured representation of an image. Automatic recognition of a rich set of attributes for each segmented object instance complements category-level object detection and therefore advances the degree of complexity of images and scenes we can make understandable to machines. In this work, we focus on the fashion domain as an example to illustrate this task. Fashion comes with rich and complex apparel with attributes, influences many aspects of modern societies, and has a strong financial and cultural impact. We anticipate that the proposed task is also suitable for other man-made product domains such as automobiles and home interiors.

Structured representations of images often rely on structured vocabularies [28]. With this in mind, we construct the Fashionpedia ontology (Figure 1(f)) and image dataset (Figure 1(a-e)), annotating fashion images with detailed segmentation masks for apparel categories, parts, and their attributes. Our proposed ontology provides a rich schema for interpretation and organization of individuals’ garments, styles, or fashion collections [24]. For example, we can create a knowledge graph (see supplementary material for more details) by aggregating structured information within each image and exploiting relationships between garments and garment parts, categories, and attributes in the Fashionpedia ontology. Our insight is that a large-scale fashion segmentation and attribute localization dataset built with a fashion ontology can help computer vision models achieve better performance on fine-grained image understanding and reasoning tasks.

The contributions of this work are as follows:

- A novel task of fine-grained instance segmentation with attribute localization. The proposed task unifies instance segmentation and visual attribute recognition, which is an important step toward structural understanding of visual content in real-world applications.

- A unified fashion ontology informed by product descriptions from the internet and built by fashion experts. Our ontology captures the complex structure of fashion objects and the ambiguity in descriptions obtained from the web, containing 46 apparel objects (27 main apparel categories and 19 apparel parts) and 294 fine-grained attributes (spanning 9 super-categories) in total. To facilitate the development of related efforts, we also provide a mapping with categories from existing fashion segmentation datasets; see Figure 1(f).

- A dataset with a total of 48,825 clothing images from daily life, street style, celebrity events, runways, and online shopping, annotated both by crowd workers for segmentation masks and by fashion experts for localized attributes, with the goal of developing and benchmarking computer vision models for comprehensive understanding of fashion.

- A new model, Attribute-Mask R-CNN, proposed with the aim of jointly performing instance segmentation and localized attribute recognition, together with a novel evaluation metric for this task. We further demonstrate that instance segmentation models pre-trained on Fashionpedia achieve better transfer learning performance on DeepFashion2 and ModaNet than ImageNet pre-training.

2 Related Work

The combined task of fine-grained instance segmentation and attribute localization has not received a great deal of attention in the literature. On one hand, COCO [32] and LVIS [15] represent the benchmarks of object detection for common objects. Panoptic segmentation is proposed to unify both semantic and instance segmentation, addressing both stuff and thing classes [27]. In spite of the domain differences, Fashionpedia has mask quality comparable to LVIS and a similar total number of segmentation masks to COCO. On the other hand, we have also observed an increasing effort to curate datasets for fine-grained visual recognition, evolved from CUB-200 Birds [47] to the recent iNaturalist dataset [44]. The goal of this line of work is to advance the state-of-the-art in automatic image classification for large numbers of real-world, fine-grained categories. A rather unexplored area of these datasets, however, is to provide a structured representation of an image. Visual Genome [28] provides dense annotations of object bounding boxes, attributes, and relationships in the general domain, enabling a structured representation of the image. In our work, we instead focus on fine-grained attributes and provide segmentation masks in the fashion domain to advance the clothing recognition task.

Table 1. Comparison of fashion-related datasets (Cls. = Classification, Segm. = Segmentation, MG = Main Garment, GP = Garment Part, A = Accessory, S = Style, FGC = Fine-Grained Categorization). To the best of our knowledge, we include all fashion-related datasets focusing on visual recognition.

| Name | Category: Cls. | Category: BBox | Category: Segm. | Attribute: Unlocalized | Attribute: Localized | Attribute: FGC |
|---|---|---|---|---|---|---|
| Clothing Parsing [50] | MG, A | - | - | - | - | - |
| Chic or Social [49] | MG, A | - | - | - | - | - |
| Hipster [26] | MG, A, S | - | - | - | - | - |
| Ups and Downs [19] | MG | - | - | - | - | - |
| Fashion550k [23] | MG, A | - | - | - | - | - |
| Fashion-MNIST [48] | MG | - | - | - | - | - |
| Runway2Realway [45] | - | - | MG, A | - | - | - |
| ModaNet [54] | - | MG, A | MG, A | - | - | - |
| Deepfashion2 [10] | - | MG | MG | - | - | - |
| Fashion144k [41] | MG, A | - | - | X | - | - |
| Fashion Style-128 Floats [42] | S | - | - | X | - | - |
| UT Zappos50K [51] | A | - | - | X | - | - |
| Fashion200K [16] | MG | - | - | X | - | - |
| FashionStyle14 [43] | S | - | - | X | - | - |
| Main Product Detection [40] | - | MG | - | X | - | - |
| StreetStyle-27K [36] | - | - | - | X | - | X |
| UT-latent look [21] | MG, S | - | - | X | - | X |
| FashionAI [6] | MG, GP, A | - | - | X | - | X |
| iMat-Fashion Attribute [14] | MG, GP, A, S | - | - | X | - | X |
| Apparel classification-Style [4] | - | MG | - | X | - | X |
| DARN [22] | - | MG | - | X | - | X |
| WTBI [25] | - | MG, A | - | X | - | X |
| Deepfashion [33] | S | MG | - | X | - | X |
| Fashionpedia | - | MG, GP, A | MG, GP, A | - | X | X |

Clothing recognition has received increasing attention in the computer vision community recently. A number of works provide valuable apparel-related datasets [50,4,49,26,45,41,22,25,19,42,33,23,48,51,16,43,40,36,21,54,6,10]. These pioneering works enabled several recent advances in clothing-related recognition and knowledge discovery [9,35]. Table 1 summarizes the comparison among different fashion datasets regarding annotation types of clothing categories and attributes. Our dataset distinguishes itself in the following three aspects.

Exhaustive annotation of segmentation masks: Existing fashion datasets [45,54,10] offer segmentation masks for the main garment (e.g., jacket, coat, dress) and the accessory categories (e.g., bag, shoe). Smaller garment objects such as collars and pockets are not annotated. However, these small objects could be valuable for real-world applications (e.g., searching for a specific collar shape during online shopping). Our dataset is annotated with segmentation masks not only for a total of 27 main garment and accessory categories but also for 19 garment parts (e.g., collar, sleeve, pocket, zipper, embroidery).

Localized attributes: The fine-grained attributes from existing datasets [22,33,40,14] tend to be noisy, mainly because most of the annotations are collected by crawling fashion product attribute-level descriptions directly from large online shopping websites. Unlike these datasets, fine-grained attributes in our dataset are annotated manually by fashion experts. To the best of our knowledge, ours is the only dataset to annotate localized attributes: fashion experts are asked to annotate attributes associated with the segmentation masks labeled by crowd workers. Localized attributes could potentially help computational models detect and understand attributes more accurately.

Fine-grained categorization: Previous studies on fine-grained attribute categorization suffer from several issues, including: (1) repeated attributes belonging to the same category (e.g., zip, zipped, and zipper) [33,21]; (2) basic-level categorization only (object recognition) and lack of fine-grained categorization [50,4,49,26,25,45,42,43,23,16,48,54,10]; (3) lack of fashion taxonomies that meet the needs of real-world applications for the fashion industry, possibly due to the research gap between fashion design and computer vision; (4) diverse taxonomy structures from different sources in the fashion domain. To facilitate research in the areas of fashion and computer vision, our proposed ontology is built and verified by fashion experts based on their own design experiences and informed by the following four sources: (1) world-leading e-commerce fashion websites (e.g., ZARA, H&M, Gap, Uniqlo, Forever21); (2) luxury fashion brands (e.g., Prada, Chanel, Gucci); (3) trend forecasting companies (e.g., WGSN); (4) academic resources [7,3].

3 Dataset Specification and Collection

3.1 Ontology specification

We propose a unified fashion ontology (Figure 1(f)), a structured vocabulary that utilizes the basic level categories and fine-grained attributes [39]. The Fashionpedia ontology relies on similar definitions of objects and attributes as previous well-known image datasets. For example, a Fashionpedia object is similar to “item” in Wikidata [46], or “object” in COCO [32] and Visual Genome [28]. In the context of Fashionpedia, objects represent common items in apparel (e.g., jacket, shirt, dress). In this section, we break down each component of the Fashionpedia ontology and illustrate the construction process. With this ontology and our image dataset, a large-scale fashion knowledge graph can be built as an extended application of our dataset (more details can be found in the supplementary material).

Fig. 2. Image examples with annotated segmentation masks (a-f) and fine-grained attributes (g-i).

Apparel categories. In the Fashionpedia dataset, all images are annotated with one or multiple main garments. Each main garment is also annotated with its garment parts. For example, general garment types such as jacket, dress, and pants are considered main garments. These garments also consist of several garment parts such as collars, sleeves, pockets, buttons, and embroideries. Main garments are divided into three main categories: outerwear, intimate, and accessories. Garment parts also have different types: garment main parts (e.g., collars, sleeves), bra parts, closures (e.g., button, zipper), and decorations (e.g., embroidery, ruffle). On average, each image consists of 1 person, 3 main garments, 3 accessories, and 12 garment parts, each delineated by a tight segmentation mask (Figure 1(a-c)). Furthermore, each object is assigned to a synset ID in our Fashionpedia ontology.

Fine-grained attributes. Main garments and garment parts can be associated with apparel attributes (Figure 1(e)). For example, “button” is part of the main garment “jacket”; “jacket” can be linked with the silhouette attribute “symmetrical”; the garment part “button” could contain the attribute “metal” with a relationship of material. The Fashionpedia ontology provides attributes for 13 main outerwear garment categories and 5 out of 19 garment parts (“sleeve”, “neckline”, “pocket”, “lapel”, and “collar”). Each image has 16.7 attributes on average (max 57 attributes). As with the main garments and garment parts, we canonicalize all attributes to our Fashionpedia ontology.

Relationships. Relationships can be formed between categories and attributes. There are three main types of relationships (Figure 1(e)): (1) outfits to main garments, main garments to garment parts: meronymy (part-of) relationship; (2) main garments to attributes or garment parts to attributes: these relationship types can be garment silhouette (e.g., peplum), collar nickname (e.g., peter pan collars), garment length (e.g., knee-length), textile finishing (e.g., distressed), or textile-fabric patterns (e.g., paisley), etc.; (3) within garments, garment parts, or attributes: there is a maximum of four levels of hyponymy (is-an-instance-of) relationships. For example, weft knit is an instance of knit fabric, and fleece is an instance of weft knit.
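As a rough illustration of the three relationship types, the ontology can be sketched as a small graph. The class and field names below (`Ontology`, `part_of`, `has_attribute`, `is_a`) are hypothetical and are not taken from the Fashionpedia release; the entries reuse the examples given in the text.

```python
from dataclasses import dataclass, field


@dataclass
class Ontology:
    """Toy container for the three relationship types (hypothetical schema)."""
    part_of: dict = field(default_factory=dict)        # part -> whole (meronymy)
    has_attribute: list = field(default_factory=list)  # (object, relationship, attribute)
    is_a: dict = field(default_factory=dict)           # child -> parent (hyponymy)

    def ancestors(self, node):
        """Follow is-an-instance-of links upward and return the chain of parents."""
        chain = []
        while node in self.is_a:
            node = self.is_a[node]
            chain.append(node)
        return chain


onto = Ontology()
# (1) meronymy: garment part -> main garment
onto.part_of["button"] = "jacket"
# (2) typed relationships from objects to attributes
onto.has_attribute.append(("jacket", "silhouette", "symmetrical"))
onto.has_attribute.append(("button", "material", "metal"))
# (3) hyponymy: fleece -> weft knit -> knit fabric
onto.is_a["fleece"] = "weft knit"
onto.is_a["weft knit"] = "knit fabric"

print(onto.ancestors("fleece"))  # ['weft knit', 'knit fabric']
```

Keeping the three relationship types in separate structures mirrors the distinction drawn in the text; a real knowledge graph would more likely store them as typed edges in a single graph.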

3.2 Image Collection and Annotation Pipeline

Image collection. A total of 50,527 images were harvested from Flickr and free-license photo websites, which included Unsplash, Burst by Shopify, Freestocks, Kaboompics, and Pexels. Two fashion experts verified the quality of the collected images manually. Specifically, the experts checked scene diversity and made sure clothing items were visible in the images. Fdupes [34] was used to remove duplicated images. After filtering, 48,825 images were left and used to build our Fashionpedia dataset.
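Fdupes finds byte-identical files by comparing file sizes and content hashes. The following is a minimal Python sketch of the same idea, not the tool or script the authors ran; the function name `find_duplicates` and the demo file names are assumptions made for illustration. Like Fdupes, it only catches exact copies, not resized or re-encoded versions of an image.

```python
import hashlib
import os
import tempfile
from collections import defaultdict


def find_duplicates(paths):
    """Group files with identical content; return one list of paths per duplicate group."""
    by_digest = defaultdict(list)
    for path in paths:
        with open(path, "rb") as f:
            digest = hashlib.sha256(f.read()).hexdigest()
        by_digest[digest].append(path)
    return [group for group in by_digest.values() if len(group) > 1]


# Self-contained demo: three temporary files, two of them identical.
with tempfile.TemporaryDirectory() as tmp:
    contents = {"a.jpg": b"image-bytes-1", "b.jpg": b"image-bytes-1", "c.jpg": b"image-bytes-2"}
    paths = []
    for name, data in contents.items():
        path = os.path.join(tmp, name)
        with open(path, "wb") as f:
            f.write(data)
        paths.append(path)
    groups = find_duplicates(paths)
    duplicate_names = [sorted(os.path.basename(p) for p in g) for g in groups]

print(duplicate_names)  # [['a.jpg', 'b.jpg']]
```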

Annotation Pipeline. Expert annotation is often a time-consuming process. In order to accelerate the annotation process, we decoupled the work between crowd workers and experts. We divided the annotation process into the following two phases.

First, segmentation masks with apparel objects are annotated by 28 crowd workers, who were trained for 10 days before the annotation process (with prepared annotation tutorials of each apparel object; see supplementary material for details). We collected high-quality annotations by having the annotators follow the contours of garments in the image as closely as possible (see Section 4.2 for annotation analysis). This polygon annotation process was monitored daily and verified weekly by a supervisor and by the authors.

Second, 15 fashion experts (graduate students in the apparel domain) were recruited to annotate the fine-grained attributes for the annotated segmentation masks. Annotators were given one mask and one attribute super-category (“textile pattern” or “garment silhouette”, for example) at a time. Two additional options, “not sure” and “not on the list”, were added during the annotation. The option “not on the list” indicates that the expert found an attribute that is not in the proposed ontology. If “not sure” is selected, it means the expert cannot identify the attribute of a mask. Common reasons for this selection include occlusion of the masks and viewing angles of the image (for example, a top underneath a closed jacket). More details can be found in Figure 2. Each attribute super-category is assigned to one or two fashion experts, depending on the number of masks. The annotations are also checked by another expert annotator before delivery.
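The second phase can be pictured as generating one annotation task per (mask, super-category) pair, each offering the ontology's attributes plus the two extra options. The sketch below is only an illustration under that reading; the task format, the function name `build_tasks`, the mask IDs, and the short attribute lists are hypothetical and do not reflect the authors' annotation tool.

```python
from itertools import product

# The two extra options offered to the expert annotators.
SPECIAL_OPTIONS = ["not sure", "not on the list"]

# Hypothetical excerpt of the ontology: super-category -> attribute choices.
supercategories = {
    "textile pattern": ["plain (pattern)", "floral", "stripe"],
    "garment silhouette": ["symmetrical", "asymmetrical"],
}


def build_tasks(mask_ids, supercategories):
    """Create one annotation task per (mask, super-category) pair."""
    return [
        {"mask_id": m, "supercategory": s, "options": choices + SPECIAL_OPTIONS}
        for m, (s, choices) in product(mask_ids, supercategories.items())
    ]


tasks = build_tasks(["mask_001", "mask_002"], supercategories)
print(len(tasks))           # 4 tasks: 2 masks x 2 super-categories
print(tasks[0]["options"])  # pattern attributes followed by the two special options
```

Showing a single super-category per task keeps each decision small, which matches the one-mask, one-super-category setup described above.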

We split the data into training, validation, and test sets, with 45,623, 1,158, and 2,044 images respectively. The dataset will be publicly available by the time of paper publication. More details of the dataset creation can be found in the supplementary material.
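As a quick consistency check on the numbers reported in this section, the three splits should add up to the 48,825 images kept after filtering. The snippet below is plain arithmetic on figures from the text; the variable names are our own.

```python
# Image counts reported in the text.
collected = 50_527
splits = {"train": 45_623, "val": 1_158, "test": 2_044}

total = sum(splits.values())
removed = collected - total  # images dropped by expert filtering and de-duplication

print(total)    # 48825
print(removed)  # 1702
for name, count in splits.items():
    print(f"{name}: {count / total:.1%}")
```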


4 Dataset Analysis

This section presents a detailed analysis of our dataset using the training images. We begin by discussing general image statistics, followed by an analysis of segmentation masks, categories, and attributes. We compare Fashionpedia with four other segmentation datasets, including two recent fashion datasets, DeepFashion2 [10] and ModaNet [54], and two general-domain datasets, COCO [32] and LVIS [15].

4.1 Image Analysis

We chose high-resolution images during the curation process, since the Fashionpedia ontology includes diverse fine-grained attributes for both garments and garment parts. The Fashionpedia training images have an average dimension of 1710 (width) × 2151 (height). High-resolution images are able to show apparel objects in detail, leading to more accurate and faster annotations for both segmentation masks and attributes. These high-resolution images can also benefit downstream tasks such as detection and image generation. Examples of detailed annotations can be found in Figure 2.

4.2 Mask Analysis

We define a “mask” as one apparel instance that may have more than one separate component (the jacket in Figure 1, for example), and a “polygon” as a disjoint area.